More on Marketing

Dung Claire Tran

3 years ago

Is the future of brand marketing with virtual influencers?

Digital influences that mimic humans are rising.

Lil Miquela has 3M Instagram followers, 3.6M TikTok followers, and 30K Twitter followers. She's been on the covers of Prada, Dior, and Calvin Klein magazines. Miquela released Not Mine in 2017 and launched Hard Feelings at Lollapazoolas this year. This isn't surprising, given the rise of influencer marketing.

This may be unexpected. Miquela's fake. Brud, a Los Angeles startup, produced her in 2016.

Lil Miquela is one of many rising virtual influencers in the new era of social media marketing. She acts like a real person and performs the same tasks as sports stars and models.

The emergence of online influencers

Before 2018, computer-generated characters were rare. Since the virtual human industry boomed, they've appeared in marketing efforts worldwide.

In 2020, the WHO partnered up with Atlanta-based virtual influencer Knox Frost (@knoxfrost) to gather contributions for the COVID-19 Solidarity Response Fund.

Lu do Magalu (@magazineluiza) has been the virtual spokeswoman for Magalu since 2009, using social media to promote reviews, product recommendations, unboxing videos, and brand updates. Magalu's 10-year profit was $552M.

In 2020, PUMA partnered with Southeast Asia's first virtual model, Maya (@mayaaa.gram). She joined Singaporean actor Tosh Zhang in the PUMA campaign. Local virtual influencer Ava Lee-Graham (@avagram.ai) partnered with retail firm BHG to promote their in-house labels.

In Japan, Imma (@imma.gram) is the face of Nike, PUMA, Dior, Salvatore Ferragamo SpA, and Valentino. Imma's bubblegum pink bob and ultra-fine fashion landed her on the cover of Grazia magazine.

Lotte Home Shopping created Lucy (@here.me.lucy) in September 2020. She made her TV debut as a Christmas show host in 2021. Since then, she has 100K Instagram followers and 13K TikTok followers.

Liu Yiexi gained 3 million fans in five days on Douyin, China's TikTok, in 2021. Her two-minute video went viral overnight. She's posted 6 videos and has 830 million Douyin followers.

China's virtual human industry was worth $487 million in 2020, up 70% year over year, and is expected to reach $875.9 million in 2021.

Investors worldwide are interested. Immas creator Aww Inc. raised $1 million from Coral Capital in September 2020, according to Bloomberg. Superplastic Inc., the Vermont-based startup behind influencers Janky and Guggimon, raised $16 million by 2020. Craft Ventures, SV Angels, and Scooter Braun invested. Crunchbase shows the company has raised $47 million.

The industries they represent, including Augmented and Virtual reality, were worth $14.84 billion in 2020 and are projected to reach $454.73 billion by 2030, a CAGR of 40.7%, according to PR Newswire.

Advantages for brands

Forbes suggests brands embrace computer-generated influencers. Examples:

Unlimited creative opportunities: Because brands can personalize everything—from a person's look and activities to the style of their content—virtual influencers may be suited to a brand's needs and personalities.

100% brand control: Brand managers now have more influence over virtual influencers, so they no longer have to give up and rely on content creators to include brands into their storytelling and style. Virtual influencers can constantly produce social media content to promote a brand's identity and ideals because they are completely scandal-free.

Long-term cost savings: Because virtual influencers are made of pixels, they may be reused endlessly and never lose their beauty. Additionally, they can move anywhere around the world and even into space to fit a brand notion. They are also always available. Additionally, the expense of creating their content will not rise in step with their expanding fan base.

Introduction to the metaverse: Statista reports that 75% of American consumers between the ages of 18 and 25 follow at least one virtual influencer. As a result, marketers that support virtual celebrities may now interact with younger audiences that are more tech-savvy and accustomed to the digital world. Virtual influencers can be included into any digital space, including the metaverse, as they are entirely computer-generated 3D personas. Virtual influencers can provide brands with a smooth transition into this new digital universe to increase brand trust and develop emotional ties, in addition to the young generations' rapid adoption of the metaverse.

Better engagement than in-person influencers: A Hype Auditor study found that online influencers have roughly three times the engagement of their conventional counterparts. Virtual influencers should be used to boost brand engagement even though the data might not accurately reflect the entire sector.

Concerns about influencers created by computers

Virtual influencers could encourage excessive beauty standards in South Korea, which has a $10.7 billion plastic surgery industry.

A classic Korean beauty has a small face, huge eyes, and pale, immaculate skin. Virtual influencers like Lucy have these traits. According to Lee Eun-hee, a professor at Inha University's Department of Consumer Science, this could make national beauty standards more unrealistic, increasing demand for plastic surgery or cosmetic items.

Other parts of the world raise issues regarding selling items to consumers who don't recognize the models aren't human and the potential of cultural appropriation when generating influencers of other ethnicities, called digital blackface by some.

Meta, Facebook and Instagram's parent corporation, acknowledges this risk.

“Like any disruptive technology, synthetic media has the potential for both good and harm. Issues of representation, cultural appropriation and expressive liberty are already a growing concern,” the company stated in a blog post. “To help brands navigate the ethical quandaries of this emerging medium and avoid potential hazards, (Meta) is working with partners to develop an ethical framework to guide the use of (virtual influencers).”

Despite theoretical controversies, the industry will likely survive. Companies think virtual influencers are the next frontier in the digital world, which includes the metaverse, virtual reality, and digital currency.

In conclusion

Virtual influencers may garner millions of followers online and help marketers reach youthful audiences. According to a YouGov survey, the real impact of computer-generated influencers is yet unknown because people prefer genuine connections. Virtual characters can supplement brand marketing methods. When brands are metaverse-ready, the author predicts virtual influencer endorsement will continue to expand.

Joseph Mavericks

3 years ago

You Don't Have to Spend $250 on TikTok Ads Because I Did

900K impressions, 8K clicks, and $$$ orders…

I recently started dropshipping. Now that I own my business and can charge it as a business expense, it feels less like money wasted if it doesn't work. I also made t-shirts to sell. I intended to open a t-shirt store and had many designs on a hard drive. I read that Tiktok advertising had a high conversion rate and low cost because they were new. According to many, the advertising' cost/efficiency ratio would plummet and become as bad as Google or Facebook Ads. Now felt like the moment to try Tiktok marketing and dropshipping. I work in marketing for a SaaS firm and have seen how poorly ads perform. I wanted to try it alone.

I set up $250 and ran advertising for a week. Before that, I made my own products, store, and marketing. In this post, I'll show you my process and results.

Setting up the store

Dropshipping is a sort of retail business in which the manufacturer ships the product directly to the client through an online platform maintained by a seller. The seller takes orders but has no stock. The manufacturer handles all orders. This no-stock concept increases profitability and flexibility.

In my situation, I used previous t-shirt designs to make my own product. I didn't want to handle order fulfillment logistics, so I looked for a way to print my designs on demand, ship them, and handle order tracking/returns automatically. So I found Printful.

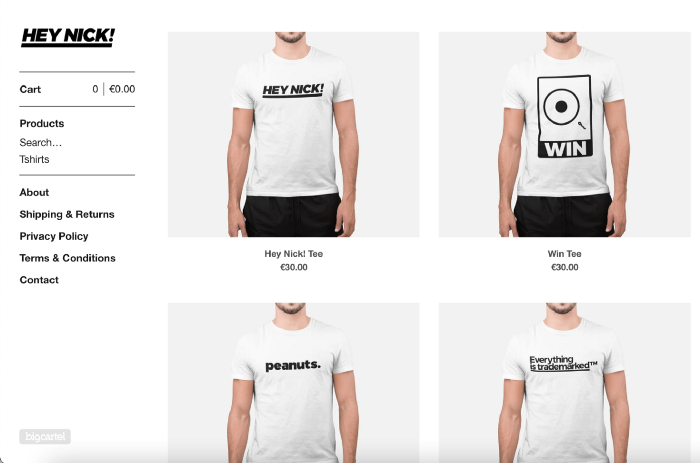

I needed to connect my backend and supplier to a storefront so visitors could buy. 99% of dropshippers use Shopify, but I didn't want to master the difficult application. I wanted a one-day project. I'd previously worked with Big Cartel, so I chose them.

Big Cartel doesn't collect commissions on sales, simply a monthly flat price ($9.99 to $19.99 depending on your plan).

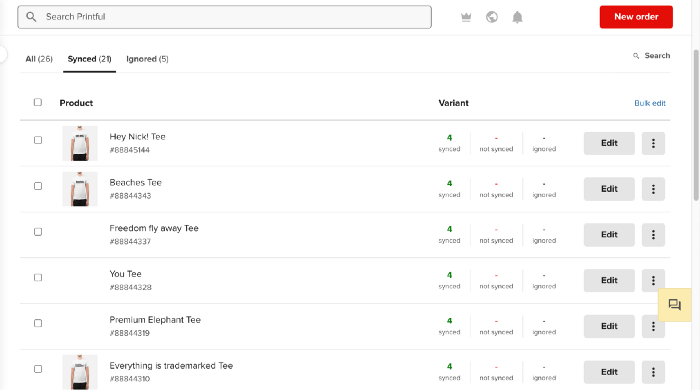

After opening a Big Cartel account, I uploaded 21 designs and product shots, then synced each product with Printful.

Developing the ads

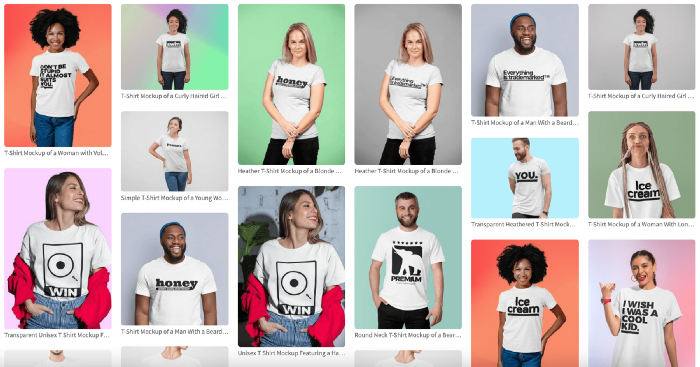

I mocked up my designs on cool people photographs from placeit.net, a great tool for creating product visuals when you don't have a studio, camera gear, or models to wear your t-shirts.

I opened an account on the website and had advertising visuals within 2 hours.

Because my designs are simple (black design on white t-shirt), I chose happy, stylish people on plain-colored backdrops. After that, I had to develop an animated slideshow.

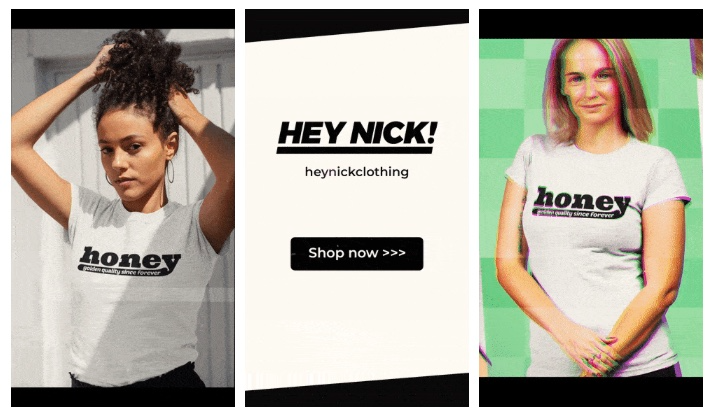

Because I'm a graphic designer, I chose to use Adobe Premiere to create animated Tiktok advertising.

Premiere is a fancy video editing application used for more than advertisements. Premiere is used to edit movies, not social media marketing. I wanted this experiment to be quick, so I got 3 social media ad templates from motionarray.com and threw my visuals in. All the transitions and animations were pre-made in the files, so it only took a few hours to compile. The result:

I downloaded 3 different soundtracks for the videos to determine which would convert best.

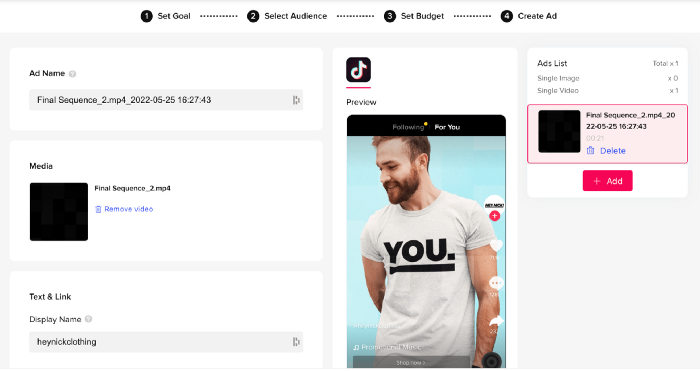

After that, I opened a Tiktok business account, uploaded my films, and inserted ad info. They went live within one hour.

The (poor) outcomes

As a European company, I couldn't deliver ads in the US. All of my advertisements' material (title, description, and call to action) was in English, hence they continued getting rejected in Europe for countries that didn't speak English. There are a lot of them:

I lost a lot of quality traffic, but I felt that if the images were engaging, people would check out the store and buy my t-shirts. I was wrong.

51,071 impressions on Day 1. 0 orders after 411 clicks

114,053 impressions on Day 2. 1.004 clicks and no orders

Day 3: 987 clicks, 103,685 impressions, and 0 orders

101,437 impressions on Day 4. 0 orders after 963 clicks

115,053 impressions on Day 5. 1,050 clicks and no purchases

125,799 impressions on day 6. 1,184 clicks, no purchases

115,547 impressions on Day 7. 1,050 clicks and no purchases

121,456 impressions on day 8. 1,083 clicks, no purchases

47,586 impressions on Day 9. 419 Clicks. No orders

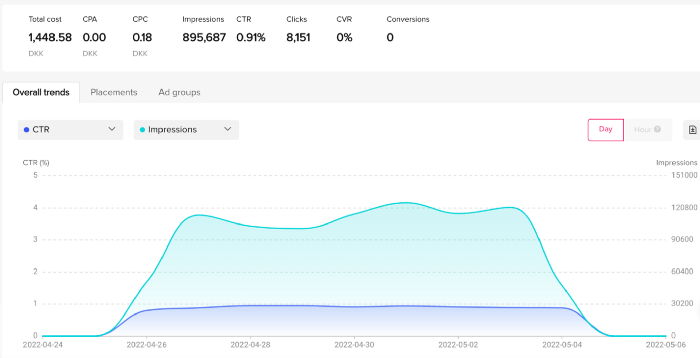

My overall conversion rate for video advertisements was 0.9%. TikTok's paid ad formats all result in strong engagement rates (ads average 3% to 12% CTR to site), therefore a 1 to 2% CTR should have been doable.

My one-week experiment yielded 8,151 ad clicks but no sales. Even if 0.1% of those clicks converted, I should have made 8 sales. Even companies with horrible web marketing would get one download or trial sign-up for every 8,151 clicks. I knew that because my advertising were in English, I had no impressions in the main EU markets (France, Spain, Italy, Germany), and that this impacted my conversion potential. I still couldn't believe my numbers.

I dug into the statistics and found that Tiktok's stats didn't match my store traffic data.

Looking more closely at the numbers

My ads were approved on April 26 but didn't appear until April 27. My store dashboard showed 440 visitors but 1,004 clicks on Tiktok. This happens often while tracking campaign results since different platforms handle comparable user activities (click, view) differently. In online marketing, residual data won't always match across tools.

My data gap was too large. Even if half of the 1,004 persons who clicked closed their browser or left before the store site loaded, I would have gained 502 visitors. The significant difference between Tiktok clicks and Big Cartel store visits made me suspicious. It happened all week:

Day 1: 440 store visits and 1004 ad clicks

Day 2: 482 store visits, 987 ad clicks

3rd day: 963 hits on ads, 452 store visits

443 store visits and 1,050 ad clicks on day 4.

Day 5: 459 store visits and 1,184 ad clicks

Day 6: 430 store visits and 1,050 ad clicks

Day 7: 409 store visits and 1,031 ad clicks

Day 8: 166 store visits and 418 ad clicks

The disparity wasn't related to residual data or data processing. The disparity between visits and clicks looked regular, but I couldn't explain it.

After the campaign concluded, I discovered all my creative assets (the videos) had a 0% CTR and a $0 expenditure in a separate dashboard. Whether it's a dashboard reporting issue or a budget allocation bug, online marketers shouldn't see this.

Tiktok can present any stats they want on their dashboard, just like any other platform that runs advertisements to promote content to its users. I can't verify that 895,687 individuals saw and clicked on my ad. I invested $200 for what appears to be around 900K impressions, which is an excellent ROI. No one bought a t-shirt, even an unattractive one, out of 900K people?

Would I do it again?

Nope. Whether I didn't make sales because Tiktok inflated the dashboard numbers or because I'm horrible at producing advertising and items that sell, I’ll stick to writing content and making videos. If setting up a business and ads in a few days was all it took to make money online, everyone would do it.

Video advertisements and dropshipping aren't dead. As long as the internet exists, people will click ads and buy stuff. Converting ads and selling stuff takes a lot of work, and I want to focus on other things.

I had always wanted to try dropshipping and I’m happy I did, I just won’t stick to it because that’s not something I’m interested in getting better at.

If I want to sell t-shirts again, I'll avoid Tiktok advertisements and find another route.

Jon Brosio

3 years ago

This Landing Page is a (Legal) Money-Printing Machine

and it’s easy to build.

A landing page with good copy is a money-maker.

Let's be honest, page-builder templates are garbage.

They can help you create a nice-looking landing page, but not persuasive writing.

Over the previous 90 days, I've examined 200+ landing pages.

What's crazy?

Top digital entrepreneurs use a 7-part strategy to bring in email subscribers, generate prospects, and (passively) sell their digital courses.

Steal this 7-part landing page architecture to maximize digital product sales.

The offer

Landing pages require offers.

Newsletter, cohort, or course offer.

Your reader should see this offer first. Includind:

Headline

Imagery

Call-to-action

Clear, persuasive, and simplicity are key. Example: the Linkedin OS course home page of digital entrepreneur Justin Welsh offers:

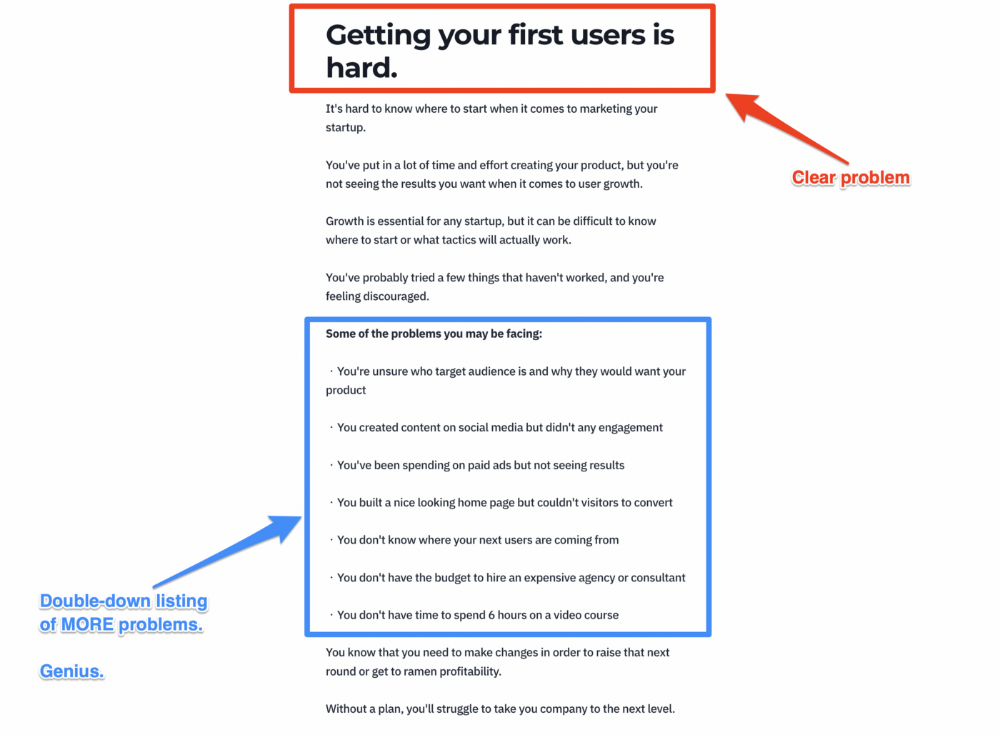

A distinctly defined problem

Everyone needs an enemy.

You need an opponent on your landing page. Problematic.

Next, employ psychology to create a struggle in your visitor's thoughts.

Don't be clever here; label your customer's problem. The more particular you are, the bigger the situation will seem.

When you build a clear monster, you invite defeat. I appreciate Theo Ohene's Growth Roadmaps landing page.

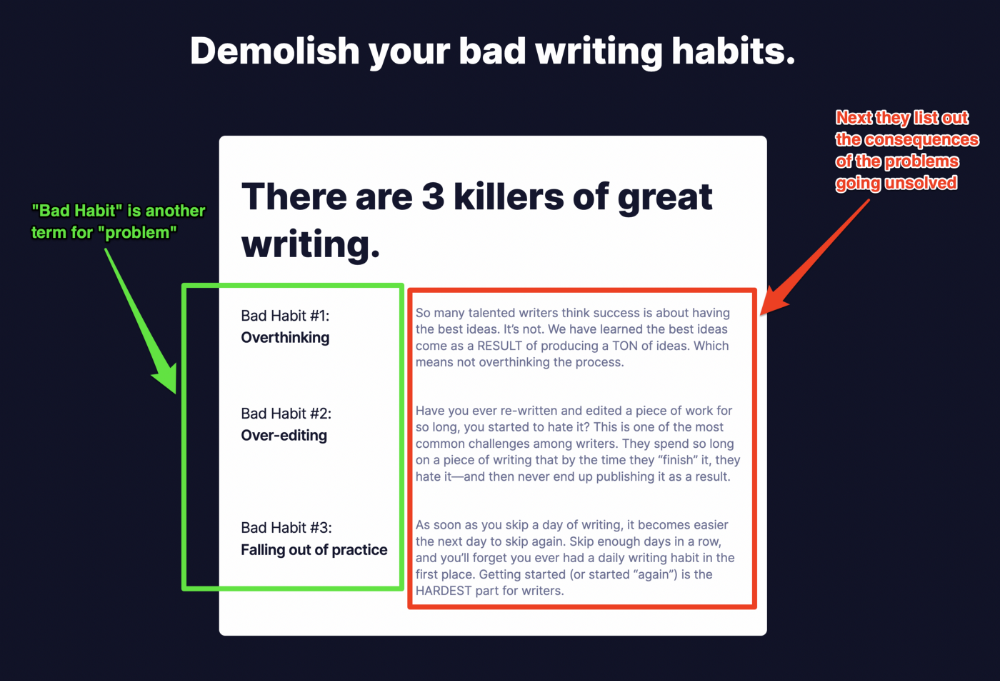

Exacerbation of the effects

Problem identification doesn't motivate action.

What would an unresolved problem mean?

This is landing page copy. When you describe the unsolved problem's repercussions, you accomplish several things:

You write a narrative (and stories are remembered better than stats)

You cause the reader to feel something.

You help the reader relate to the issue

Important!

My favorite script is:

"Sure, you can let [problem] go untreated. But what will happen if you do? Soon, you'll begin to notice [new problem 1] will start to arise. That might bring up [problem 2], etc."

Take the copywriting course, digital writer and entrepreneur Dickie Bush illustrates below when he labels the problem (see: "poor habit") and then illustrates the repercussions.

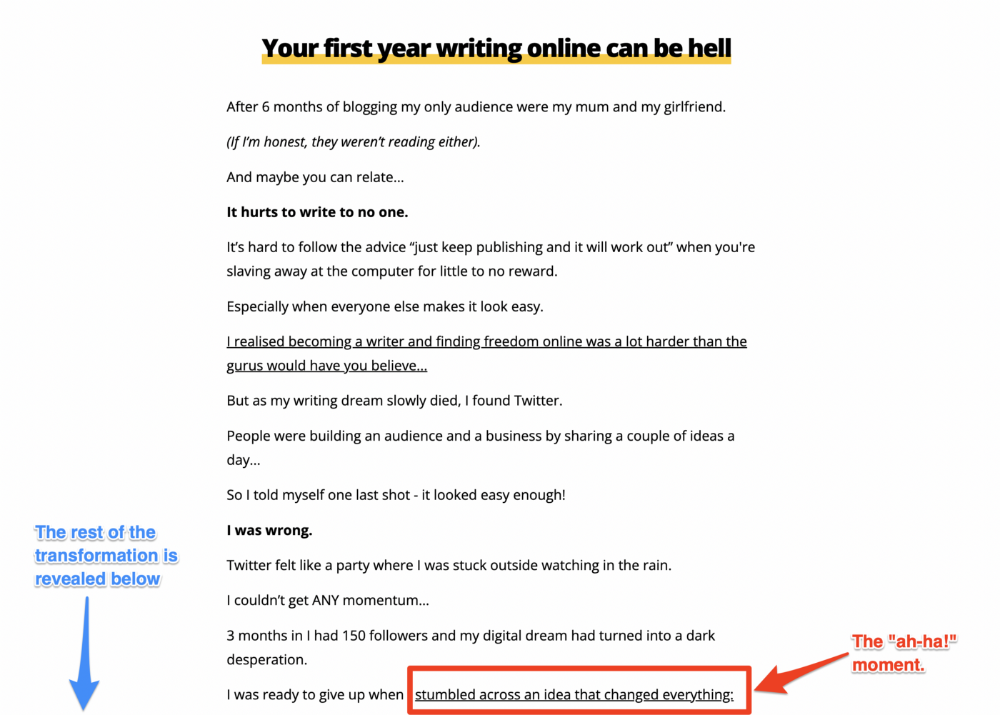

The tale of transformation

Every landing page needs that "ah-ha!" moment.

Transformation stories do this.

Did you find a solution? Someone else made the discovery? Have you tested your theory?

Next, describe your (or your subject's) metamorphosis.

Kieran Drew nails his narrative (and revelation) here. Right before the disclosure, he introduces his "ah-ha!" moment:

Testimonials

Social proof completes any landing page.

Social proof tells the reader, "If others do it, it must be worthwhile."

This is your argument.

Positive social proof helps (obviously).

Offer "free" training in exchange for a testimonial if you need social evidence. This builds social proof.

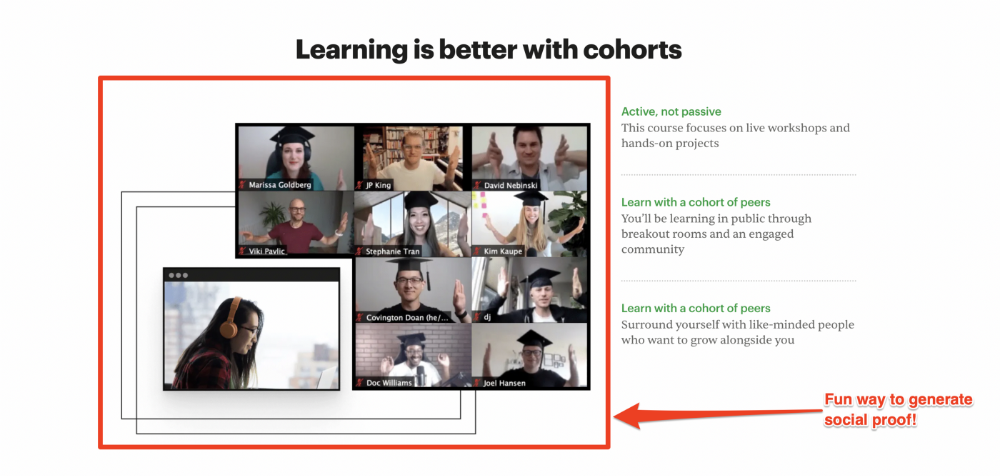

Most social proof is testimonies (recommended). Kurtis Hanni's creative take on social proof (using a screenshot of his colleague) is entertaining.

Bravo.

Reveal your offer

Now's the moment to act.

Describe the "bundle" that provides the transformation.

Here's:

Course

Cohort

Ebook

Whatever you're selling.

Include a product or service image, what the consumer is getting ("how it works"), the price, any "free" bonuses (preferred), and a CTA ("buy now").

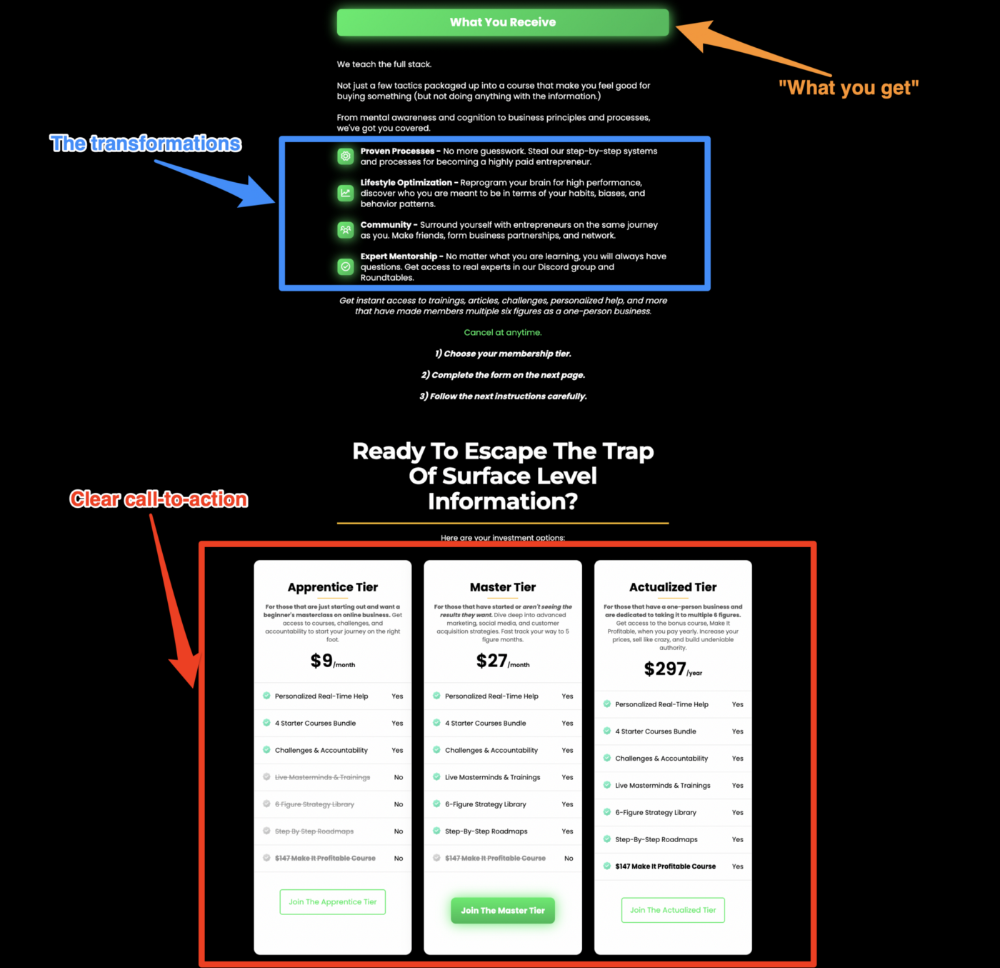

Clarity is key. Don't make a cunning offer. Make sure your presentation emphasizes customer change (benefits). Dan Koe's Modern Mastery landing page makes an offer. Consider:

An ultimatum

Offering isn't enough.

You must give your prospect an ultimatum.

They can buy your merchandise from you.

They may exit the webpage.

That’s it.

It's crucial to show what happens if the reader does either. Stress the consequences of not buying (again, a little consequence amplification). Remind them of the benefits of buying.

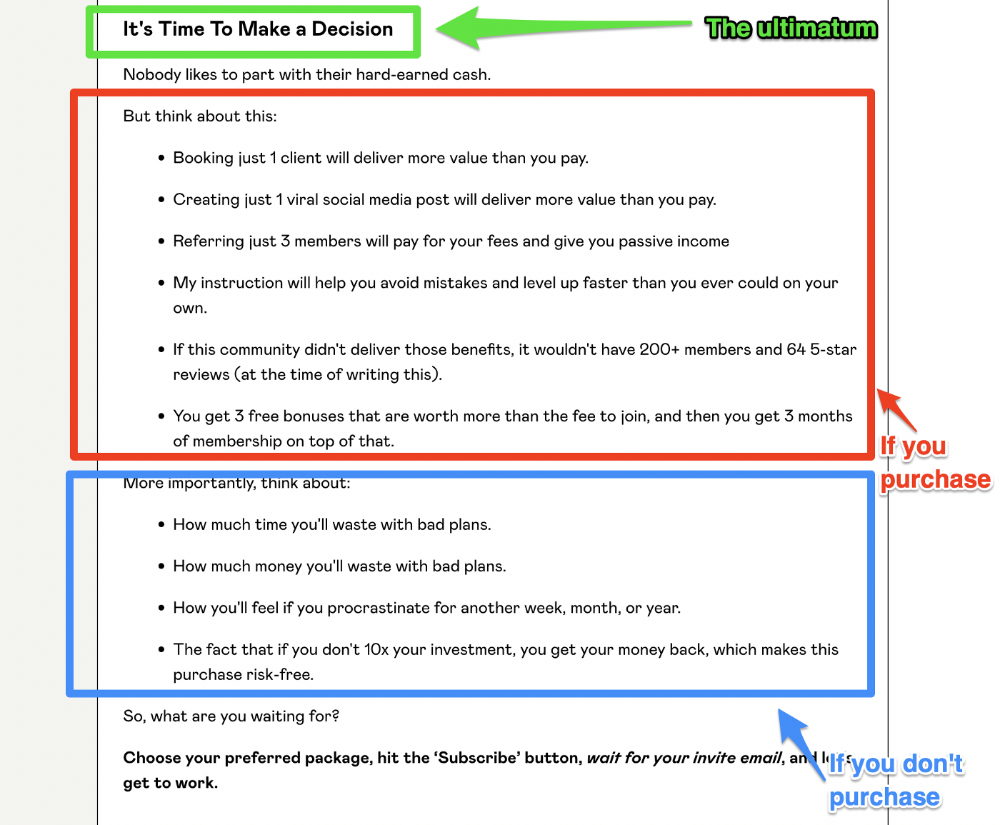

I appreciate Charles Miller's product offer ending:

The top online creators use a 7-part landing page structure:

Offer the service

Describe the problem

Amplify the consequences

Tell the transformational story

Include testimonials and social proof.

Reveal the offer (with any bonuses if applicable)

Finally, give the reader a deadline to encourage them to take action.

Sequence these sections to develop a landing page that (essentially) prints money.

You might also like

Andy Raskin

3 years ago

I've Never Seen a Sales Deck This Good

It’s Zuora’s, and it’s brilliant. Here’s why.

My friend Tim got a sales position at a Series-C software company that garnered $60 million from A-list investors. He's one of the best salespeople I know, yet he emailed me after starting to struggle.

Tim has a few modest clients. “Big companies ignore my pitch”. Tim said.

I love helping teams write the strategic story that drives sales, marketing, and fundraising. Tim and I had lunch at Amber India on Market Street to evaluate his deck.

After a feast, I asked Tim when prospects tune out.

He said, “several slides in”.

Intent on maximizing dining ROI, Tim went back to the buffet for seconds. When he returned, I pulled out my laptop and launched into a Powerpoint presentation.

“What’s this?” Tim asked.

“This,” I said, “is the greatest sales deck I have ever seen.”

Five Essentials of a Great Sales Narrative

I showed Tim a sales slide from IPO-bound Zuora, which sells a SaaS platform for subscription billing. Zuora supports recurring payments (e.g. enterprise software).

Ex-Zuora salesman gave me the deck, saying it helped him close his largest business. (I don't know anyone who works at Zuora.) After reading this, a few Zuora employees contacted me.)

Tim abandoned his naan in a pool of goat curry and took notes while we discussed the Zuora deck.

We remarked how well the deck led prospects through five elements:

(The ex-Zuora salesperson begged me not to release the Zuora deck publicly.) All of the images below originate from Zuora's website and SlideShare channel.)

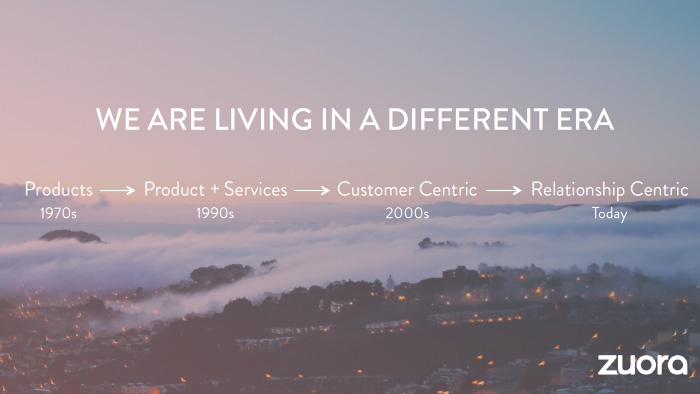

#1. Name a Significant Change in the World

Don't start a sales presentation with mentioning your product, headquarters, investors, clients, or yourself.

Name the world shift that raises enormous stakes and urgency for your prospect.

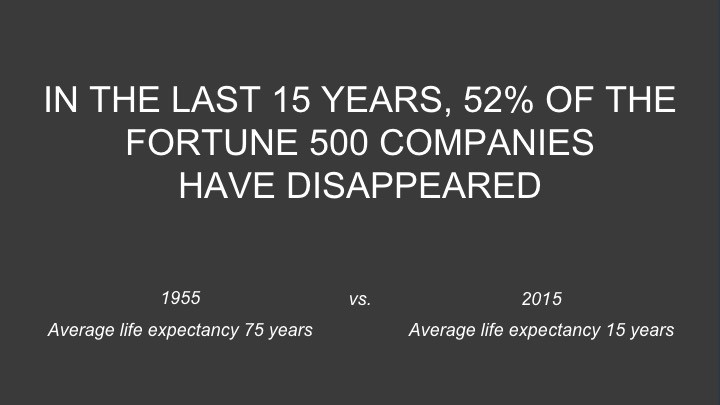

Every Zuora sales deck begins with this slide:

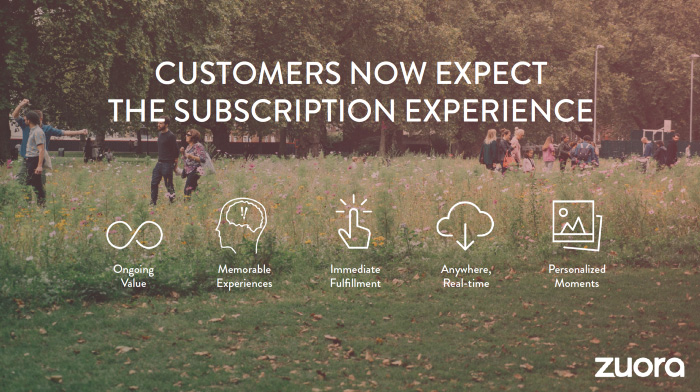

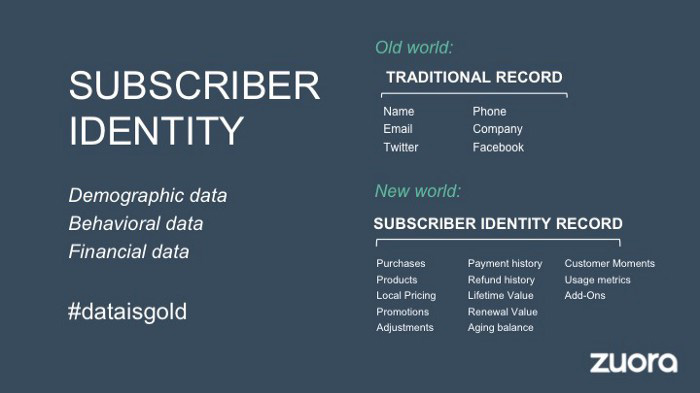

Zuora coined the term subscription economy to describe a new market where purchasers prefer regular service payments over outright purchases. Zuora then shows a slide with the change's history.

Most pitch recommendation advises starting with the problem. When you claim a problem, you put prospects on the defensive. They may be unaware of or uncomfortable admitting the situation.

When you highlight a global trend, prospects open up about how it affects them, worries them, and where they see opportunity. You capture their interest. Robert McKee says:

…what attracts human attention is change. …if the temperature around you changes, if the phone rings — that gets your attention. The way in which a story begins is a starting event that creates a moment of change.

#2. Show There’ll Be Winners and Losers

Loss aversion affects all prospects. They avoid a loss by sticking with the status quo rather than risking a gain by changing.

To fight loss aversion, show how the change will create winners and losers. You must show both

that if the prospect can adjust to the modification you mentioned, the outcome will probably be quite favorable; and

That failing to do so is likely to have an unacceptable negative impact on the prospect's future

Zuora shows a mass extinction among Fortune 500 firms.

…and then showing how the “winners” have shifted from product ownership to subscription services. Those include upstarts…

…as well as rejuvenated incumbents:

To illustrate, Zuora asks:

Winners utilize Zuora's subscription service models.

#3. Tease the Promised Land

It's tempting to get into product or service details now. Resist that urge.

Prospects won't understand why product/service details are crucial if you introduce them too soon, therefore they'll tune out.

Instead, providing a teaser image of the happily-ever-after your product/service will assist the prospect reach.

Your Promised Land should be appealing and hard to achieve without support. Otherwise, why does your company exist?

Zuora shows this Promised Land slide after explaining that the subscription economy will have winners and losers.

Not your product or service, but a new future state.

(I asked my friend Tim to describe his Promised Land, and he answered, "You’ll have the most innovative platform for ____." Nope: the Promised Land isn't possessing your technology, but living with it.)

Your Promised Land helps prospects market your solution to coworkers after your sales meeting. Your coworkers will wonder what you do without you. Your prospects are more likely to provide a persuasive answer with a captivating Promised Land.

#4. Present Features as “Mystic Gifts” for Overcoming Difficulties on the Road to the Promised Land

Successful sales decks follow the same format as epic films and fairy tales. Obi Wan gives Luke a lightsaber to help him destroy the Empire. You're Gandalf, helping Frodo destroy the ring. Your prospect is Cinderella, and you're her fairy godmother.

Position your product or service's skills as mystical gifts to aid your main character (prospect) achieve the Promised Land.

Zuora's client record slide is shown above. Without context, even the most technical prospect would be bored.

Positioned in the context of shifting from an “old” to a “new world”, it's the foundation for a compelling conversation with prospects—technical and otherwise—about why traditional solutions can't reach the Promised Land.

#5. Show Proof That You Can Make the Story True.

In this sense, you're promising possibilities that if they follow you, they'll reach the Promised Land.

The journey to the Promised Land is by definition rocky, so prospects are right to be cautious. The final part of the pitch is proof that you can make the story come true.

The most convincing proof is a success story about how you assisted someone comparable to the prospect. Zuora's sales people use a deck of customer success stories, but this one gets the essence.

I particularly appreciate this one from an NCR exec (a Zuora customer), which relates more strongly to Zuora's Promised Land:

Not enough successful customers? Product demos are the next best evidence, but features should always be presented in the context of helping a prospect achieve the Promised Land.

The best sales narrative is one that is told by everyone.

Success rarely comes from a fantastic deck alone. To be effective, salespeople need an organization-wide story about change, Promised Land, and Magic Gifts.

Zuora exemplifies this. If you hear a Zuora executive, including CEO Tien Tzuo, talk, you'll likely hear about the subscription economy and its winners and losers. This is the theme of the company's marketing communications, campaigns, and vision statement.

According to the ex-Zuora salesperson, company-wide story alignment made him successful.

The Zuora marketing folks ran campaigns and branding around this shift to the subscription economy, and [CEO] Tien [Tzuo] talked it up all the time. All of that was like air cover for my in-person sales ground attack. By the time I arrived, prospects were already convinced they had to act. It was the closest thing I’ve ever experienced to sales nirvana.

The largest deal ever

Tim contacted me three weeks after our lunch to tell me that prospects at large organizations were responding well to his new deck, which we modeled on Zuora's framework. First, prospects revealed their obstacles more quickly. The new pitch engages CFOs and other top gatekeepers better, he said.

A week later, Tim emailed that he'd signed his company's biggest agreement.

Next week, we’re headed back to Amber India to celebrate.

DC Palter

3 years ago

Is Venture Capital a Good Fit for Your Startup?

5 VC investment criteria

I reviewed 200 startup business concepts last week. Brainache.

The enterprises sold various goods and services. The concepts were achingly similar: give us money, we'll produce a product, then get more to expand. No different from daily plans and pitches.

Most of those 200 plans sounded plausible. But 10% looked venture-worthy. 90% of startups need alternatives to venture finance.

With the success of VC-backed businesses and the growth of venture funds, a common misperception is that investors would fund any decent company idea. Finding investors that believe in the firm and founders is the key to funding.

Incorrect. Venture capital needs investing in certain enterprises. If your startup doesn't match the model, as most early-stage startups don't, you can revise your business plan or locate another source of capital.

Before spending six months pitching angels and VCs, make sure your startup fits these criteria.

Likely to generate $100 million in sales

First, I check the income predictions in a pitch deck. If it doesn't display $100M, don't bother.

The math doesn't work for venture financing in smaller businesses.

Say a fund invests $1 million in a startup valued at $5 million that is later acquired for $20 million. That's a win everyone should celebrate. Most VCs don't care.

Consider a $100M fund. The fund must reach $360M in 7 years with a 20% return. Only 20-30 investments are possible. 90% of the investments will fail, hence the 23 winners must return $100M-$200M apiece. $15M isn't worth the work.

Angel investors and tiny funds use the same ideas as venture funds, but their smaller scale affects the calculations. If a company can support its growth through exit on less than $2M in angel financing, it must have $25M in revenues before large companies will consider acquiring it.

Aiming for Hypergrowth

A startup's size isn't enough. It must expand fast.

Developing a great business takes time. Complex technology must be constructed and tested, a nationwide expansion must be built, or production procedures must go from lab to pilot to factories. These can be enormous, world-changing corporations, but venture investment is difficult.

The normal 10-year venture fund life. Investments are made during first 3–4 years.. 610 years pass between investment and fund dissolution. Funds need their investments to exit within 5 years, 7 at the most, therefore add a safety margin.

Longer exit times reduce ROI. A 2-fold return in a year is excellent. Loss at 2x in 7 years.

Lastly, VCs must prove success to raise their next capital. The 2nd fund is raised from 1st fund portfolio increases. Third fund is raised using 1st fund's cash return. Fund managers must raise new money quickly to keep their jobs.

Branding or technology that is protected

No big firm will buy a startup at a high price if they can produce a competing product for less. Their development teams, consumer base, and sales and marketing channels are large. Who needs you?

Patents, specialist knowledge, or brand name are the only answers. The acquirer buys this, not the thing.

I've heard of several promising startups. It's not a decent investment if there's no exit strategy.

A company that installs EV charging stations in apartments and shopping areas is an example. It's profitable, repeatable, and big. A terrific company. Not a startup.

This building company's operations aren't secret. No technology to protect, no special information competitors can't figure out, no go-to brand name. Despite the immense possibilities, a large construction company would be better off starting their own.

Most venture businesses build products, not services. Services can be profitable but hard to safeguard.

Probable purchase at high multiple

Once a software business proves its value, acquiring it is easy. Pharma and medtech firms have given up on their own research and instead acquire startups after regulatory permission. Many startups, especially in specialized areas, have this weakness.

That doesn't mean any lucrative $25M-plus business won't be acquired. In many businesses, the venture model requires a high exit premium.

A startup invents a new glue. 3M, BASF, Henkel, and others may buy them. Adding more adhesive to their catalogs won't boost commerce. They won't compete to buy the business. They'll only buy a startup at a profitable price. The acquisition price represents a moderate EBITDA multiple.

The company's $100M revenue presumably yields $10m in profits (assuming they’ve reached profitability at all). A $30M-$50M transaction is likely. Not terrible, but not what venture investors want after investing $25M to create a plant and develop the business.

Private equity buys profitable companies for a moderate profit multiple. It's a good exit for entrepreneurs, but not for investors seeking 10x or more what PE firms pay. If a startup offers private equity as an exit, the conversation is over.

Constructed for purchase

The startup wants a high-multiple exit. Unless the company targets $1B in revenue and does an IPO, exit means acquisition.

If they're constructing the business for acquisition or themselves, founders must decide.

If you want an indefinitely-running business, I applaud you. We need more long-term founders. Most successful organizations are founded around consumer demands, not venture capital's urge to grow fast and exit. Not venture funding.

if you don't match the venture model, what to do

VC funds moonshots. The 10% that succeed are extraordinary. Not every firm is a rocketship, and launching the wrong startup into space, even with money, will explode.

But just because your startup won't make $100M in 5 years doesn't mean it's a bad business. Most successful companies don't follow this model. It's not venture capital-friendly.

Although venture capital gets the most attention due to a few spectacular triumphs (and disasters), it's not the only or even most typical option to fund a firm.

Other ways to support your startup:

Personal and family resources, such as credit cards, second mortgages, and lines of credit

bootstrapping off of sales

government funding and honors

Private equity & project financing

collaborating with a big business

Including a business partner

Before pitching angels and VCs, be sure your startup qualifies. If so, include them in your pitch.

Michelle Teheux

3 years ago

Get Real, All You Grateful Laid-Off LinkedIn Users

WTF is wrong with you people?

When I was laid off as editor of my town's daily newspaper, I went silent on social media. I knew it was coming and had been quietly removing personal items each day, but the pain was intense.

I posted a day later. I didn't bad-mouth GateHouse Media but expressed my sadness at leaving the newspaper industry, pride in my accomplishments, and hope for success in another industry.

Normal job-loss response.

What do you recognize as abnormal?

The bullshit I’ve been reading from laid-off folks on LinkedIn.

If you're there, you know. Many Twitter or Facebook/Meta employees recently lost their jobs.

Well, many of them did not “lose their job,” actually. They were “impacted by the layoffs” at their former employer. I keep seeing that phrase.

Why don’t they want to actually say it? Why the euphemism?

Many are excited about the opportunities ahead. The jobless deny being sad.

They're ecstatic! They have big plans.

Hope so. Sincerely! Being laid off stinks, especially if, like me, your skills are obsolete. It's worse if, like me, you're too old to start a new career. Ageism exists despite denials.

Nowadays, professionalism seems to demand psychotic levels of fake optimism.

Why? Life is unpredictable. That's indisputable. You shouldn't constantly complain or cry in public, but you also shouldn't pretend everything's great.

It makes you look psychotic, not positive. It's like saying at work:

“I was impacted by the death of my spouse of 20 years this week, and many of you have reached out to me, expressing your sympathy. However, I’m choosing to remember the amazing things we shared. I feel confident that there is another marriage out there for me, and after taking a quiet weekend trip to reset myself, I’ll be out there looking for the next great marital adventure! #staypositive #available #opentolove

Also:

“Now looking for our next #dreamhome after our entire neighborhood was demolished by a wildfire last night. We feel so lucky to have lived near so many amazing and inspirational neighbors, all of whom we will miss as we go on our next housing adventure. The best house for us is yet to come! If you have a great neighborhood you’d recommend, please feel free to reach out and touch base with us! #newhouse #newneighborhood #newlife

Admit it. That’s creepy.

The constant optimism makes me feel sick to my stomach.

Viscerally.

I hate fakes.

Imagine a fake wood grain desk. Wouldn't it be better if the designer accepted that it's plastic and went with that?

Real is better but not always nice. When something isn't nice, you don't have to go into detail, but you also shouldn't pretend it's great.

How to announce your job loss to the world.

Do not pretend to be happy, but don't cry and drink vodka all afternoon.

Say you loved your job, and that you're looking for new opportunities.

Yes, if you'll miss your coworkers. Otherwise, don't badmouth. No bridge-burning!

Please specify the job you want. You may want to pivot.

Alternatively, try this.

You could always flame out.

If you've pushed yourself too far into toxic positivity, you may be ready to burn it all down. If so, make it worthwhile by writing something like this:

Well, I was shitcanned by the losers at #Acme today. That bitch Linda in HR threw me under the bus just because she saw that one of my “friends” tagged me in some beach pics on social media after I called in sick with Covid. The good thing is I will no longer have to watch my ass around that #asspincher Ron in accounting, but I’m sad that I will no longer have a cushy job with high pay or access to the primo office supplies I’ve been sneaking home for the last five years. (Those gel pens were the best!) I am going to be taking some time off to enjoy my unemployment and hammer down shots of Jägermeister but in about five months I’ll be looking for anything easy with high pay and great benefits. Reach out if you can help! #officesupplies #unemploymentrocks #drinkinglikeagirlboss #acmesucks

It beats the fake positivity.