More on Technology

Mark Schaefer

3 years ago

20 Fun Uses for ChatGPT

Our RISE community is stoked on ChatGPT. ChatGPT has countless uses.

It's early days. Companies are still figuring out the legal and ethical implications of AI's content revolution. In the meantime, using AI for everyday tasks is cool.

So I challenged RISE friends... Let's have fun and share non-obvious uses.

Onward!

1. Tweet

Jim MacLeod requested a design-themed Twitter thread from ChatGPT.

2. Novelize

Giuseppe Fratoni writes novels.

"This is amazing!" Impotent. I asked about writing, mystery novels, and jazz.

"Develop a mystery novel plot in which a disillusioned homicide detective hunts a serial killer who targets jazz musicians who play Autumn Leaves."

"I have:

Detective James Thompson is a seasoned veteran of the Homicide department, but lately he’s been feeling burnt out and disillusioned. His latest case, the murder of a young jazz pianist who was found brutally killed in his home, only serves to deepen his sense of despair … (much more provided)

3. Question-and-answer sessions

Bliss recommends:

You have a guest speaker and want to ask audience questions.

Pre-generate questions with ChatGPT. "And here's a question from our AI?"

The conference is about Western water scarcity, and the speaker is a water management expert. My AI question was...

Which desalination-related questions should I ask an expert?

ChatGPT said...

How is desalination currently being used around the world, and in what types of locations is it most effective?

What are the potential advantages and disadvantages of using desalination to provide fresh water?

What are some of the key challenges and limitations of desalination technology, and how are they being addressed?

4. Game-making

Richard's a board game expert. He suggests using ChatGPT to plan games. Note: Some people have started getting help solving crossword puzzle clues.

5. Matchmaking

Tanya Thompson thinks it helps dating. ChatGPT:

Here are some flirty first-message ideas for dating apps:

I noticed you from afar. I had to say hi and see if we clicked.

Your profile picture is gorgeous. I'm obsessed with your [Feature] and want to know you better.

I don't usually message first, but I had to. You're [Attractive Quality] and I can't wait to talk.

Hello. Your profile intimidates me. I'm starstruck by your [Attractiveness]. (Many instances)

5. Solitude/anxiety

ChatGPT excels at one-on-one conversations.

PepperBrooks: "I'm sad and lonely."

ChatGPT: I’m sorry to hear that you’re feeling sad and lonely. It’s natural to feel down sometimes, but it’s important to remember that you’re not alone. There are many people who care about you and want to help you. It can be helpful to talk to someone about your feelings, whether it’s a close friend, family member, or mental health professional.

Sometimes, just expressing your thoughts and emotions to someone else can help to alleviate your sadness and loneliness. It’s also important to take care of yourself and do things that make you happy, such as spending time with loved ones, pursuing a hobby, or exercising. These things can help to improve your mood and make you feel more connected to others. Remember that you are strong and capable, and that you can get through this difficult time.

Daniel Nestle asked ChatGPT to generate journal prompts to help him deal with anxiety.

Write down the things that are causing you anxiety at the moment.

Describe a situation in which you felt anxious and how you coped with it.

Reflect on how your thoughts and beliefs contribute to your anxiety.

Write a letter to your anxiety, expressing your feelings and concerns.

Imagine yourself in a calm and peaceful place, and describe it in detail.

(many more items followed)

6. Names

Continued Daniel:

Also, naming ideas.

Me: Name this drink. Japanese gin, unsweetened tonic, lime, Szechuan pepper, grapefruit.

ChatGPT: How about “Sakura Sunrise?” The name incorporates the Japanese gin and the citrus flavors of grapefruit and lime, and the word “sunrise” suggests a fresh and invigorating start to the day. Alternatively, you could go with something like “Peppered Grapefruit Gin Fizz” to emphasize the unique flavor combination of Szechuan pepper and grapefruit.

7. Translation on the go

Monks recommends: ChatGPT is a perfect mobile translation tool. Say you need help in Italy.

ME: Translate to Italian

My car is about to run out of fuel. Can you tell me where the nearest gas station is?

ChatGPT: “La mia auto sta per esaurire il carburante, mi puoi dire dove si trova la stazione di servizio più vicina?”

8. Fitness

Monks added:

ME: In 3 months, I want to run 5km. Plan out how I can do this.

ChatGPT: Here's a 3-month plan to run 5km.

Start by walking for 15–20 minutes each day to build up your endurance and get your body used to regular exercise.

After a week of walking, begin incorporating short jogs into your routine. Start by jogging for 1–2 minutes at a time, with walking breaks in between. Gradually increase the length of your jogs and reduce the amount of time you spend walking.

Once you can jog for 10 minutes without stopping, start increasing the distance of your runs. Aim to run 1km without stopping and then gradually increase the distance over time.

In addition to your regular runs, incorporate strength training and stretching into your routine to improve your overall fitness and reduce the risk of injury.

(more details continued on)

9. Integrations and coding

10. Teacher lesson plans

Spencer Crandall saved time.

This morning, I wondered who has the hardest job. Teachers.

Many students, little time. ChatGPT could handle lesson planning. I tried it and got this.

Lesson Title: The History and Impact of Artificial Intelligence

Students will understand the history and development of AI and the potential positive and negative impacts on society.

Materials: AI timeline; examples of AI technologies and applications

Overview:

Introduce the topic of artificial intelligence, and explain that it refers to the development of computer systems and algorithms that can simulate human intelligence and behavior.

Show the timeline of significant events in the history of AI, and discuss the key milestones and developments in the field.

Show examples of AI technologies and applications, and explain how they are used in various industries and fields.

Discuss the potential positive and negative impacts of AI on society, including its potential to improve productivity, efficiency, and safety, as well as its potential to create new challenges and concerns, such as job displacement and ethical issues.

(many more details followed)

11. Find podcast guests.

Joeri Billast suggested:

Who should be on my Web3 podcast?

ChatGPT: Web3 marketing experts, influencers, and thought leaders make great podcast guests. These include Web3 marketers, content creators, business owners, entrepreneurs, and technologists. You could invite Web3 startups and organizations to your podcast.

Please give examples.

ChatGPT: Ideal Web3 marketing podcast guests include:

Tim Berners-Lee, inventor of the World Wide Web

Chris Dixon, general partner at Andreessen Horowitz

Vitalik Buterin, founder of Ethereum

Joe Lubin, founder of ConsenSys

Susan Fowler, author of Whistleblower

(many other suggestions)

12. Comments and reviews on social media

Lisa Monk thinks ChatGPT can help retail staff with social media.

She asked ChatGPT to reply to customer reviews of a restaurant.

POSITIVE CUSTOMER REVIEW: I absolutely love this place. We eat out a lot however this place has topped it all. Perfect meals, amazing staff and the timing for it all was great! Special thanks to Sophie B (you rock) From your guests all the way from the Gold Coast, THANK YOU

ChatGPT REPLY: Thank you so much for your kind words and for taking the time to write a review. We are delighted to hear that you had such a great experience at our restaurant. We are thrilled that you enjoyed your meal and the service provided by Sophie. It’s always a pleasure to serve guests visiting from the Gold Coast and we are grateful for your support. We look forward to welcoming you back again soon. Thank you again for your positive review.

CUSTOMER REVIEW: Most average food, there is a nice view, But that’s about it

ChatGPT: Thank you for your review. We apologize if your dining experience was not up to your expectations. We strive to provide top-quality food and service to all of our customers, and we will take your feedback into consideration as we continue to improve. Thank you for letting us know about your experience.

13. Crime-fighting

Zack Seipert said, "I researched some famous unsolved murders, and ChatGPT laid out the crime, suspects, clues, and more." I bet experienced detectives could solve some of these cold cases.

14. Reduce bias

Joanne Taylor struggles to remove biases from her writing. She asked ChatGPT for ideas.

I asked ChatGPT to check a text for racial bias, and it did well.

15. Furnishings

I designed a small, unique table using ChatGPT and MidJourney. I edited ChatGPT's idea for clarity and brevity before entering it in MidJourney.

Results:

16. Serialize a novel

Scott Scowcoft led ChatGPT through iterations of a story text to create serialized novel frames. You can view this here.

17. Interview preparation

I was on a sales podcast. Although this is marketing-related, it's not my forte. I asked ChatGPT for help with a sales interview. Response:

Here are some interview questions about the future of sales and how to answer them.

How will technology affect sales in the future?

Technology will impact sales in the coming years. Automation and AI could be used to handle routine tasks, and data analysis and CRM could become more important. E-commerce and online sales channels are also reshaping the sales landscape.

How will the salesperson's role change?

Future sales roles may change in response to changing customer needs and expectations. Salespeople must be knowledgeable and consultative to help customers navigate complex products and services. Increasing importance of data analysis and CRM tools will require salespeople to understand and use technology effectively.

Future sales professionals will need what skills?

Future salespeople will need many skills. Sales professionals must have strong communication and interpersonal skills, as well as product and service knowledge and the ability to use data analysis and CRM tools effectively. Adapting to changing customer needs and staying current on industry trends will also be important for sales success.

18. Gift ideas

I need Christmas gift ideas for my wife. I asked:

Suggest a gift idea under $200 for a middle-aged woman who loves The Eagles.

A middle-aged woman who loves The Eagles might like a vinyl record of "Hotel California" or "The Long Run." Many music fans enjoy the unique sound and experience of vinyl records.

Pair the record with a high-quality record player for a complete gift. The vinyl record and record player should fit within your $200 budget for an Eagles fan.

19. Explaining complex concepts

I entered: Explain quantum physics to me as if I were 10 years old.

Quantum physics is a branch of science that studies how tiny particles, like atoms and subatomic particles, behave. These particles are so small that they don’t always follow the same rules as the things we can see and touch every day.

For example, sometimes they can be in two places at the same time, or they can be friends with each other even if they are far apart. This might sound strange, but it’s what scientists have learned from studying these tiny particles. Quantum physics helps us understand how the world works at a very small scale, and it can also help us make new technologies, like computers that are much faster than the ones we have today.

20. AP-style editing

Blogs, journals, and books follow AP Style. I asked ChatGPT to "edit using AP Style" and it did a great job.

And then... "Write a catchy headline for a blog post about 16 ChatGPT uses"

Here are some possible blog post titles for 20 creative ChatGPT uses:

“20 Fun and Creative Ways to Use ChatGPT: From Games to Quizzes and More”

“The Ultimate Guide to Using ChatGPT for Entertainment and Creativity”

“20 Reasons to Use ChatGPT: How it Can Enhance Your Gaming, Storytelling, and More”

“20 Entertaining Uses for ChatGPT You Never Knew Were Possible”

“Unleash Your Imagination with ChatGPT: 16 Creative and Fun Ways to Use it”

In a previous post, I said ChatGPT will change marketing careers forever. I have never made a statement like that in my life. Even in the early days of the internet, people were cautious.

This technology was just released two weeks ago, and over a million people are already using it. This is the fastest technology adoption in history.

Today's post offers inventive and entertaining ideas, but it's just the beginning. ChatGPT writes code, music, and papers.

Tim Soulo

3 years ago

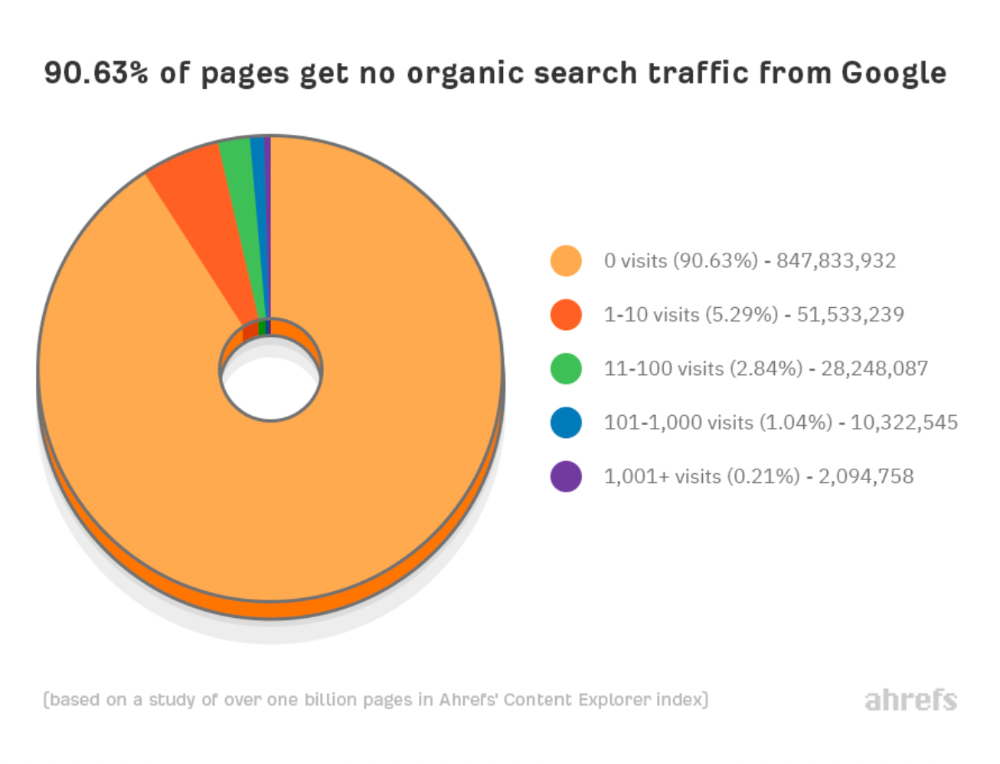

Here is why 90.63% of Pages Get No Traffic From Google.

The web adds millions or billions of pages per day.

How much Google traffic does this content get?

In 2017, we studied 2 million randomly selected, newly published pages to answer this question. Only 5.7% of them ranked in Google's top 10 search results within a year of being published.

94.3 percent of roughly two million pages got no Google traffic.

Two million pages is a small sample compared to the entire web. We did another study.

We analyzed over a billion pages to see how many get organic search traffic and why.

How many pages get search traffic?

90.63% of pages in our index get no Google traffic, and 5.2% get ten visits or less.

90% of google pages get no organic traffic

How can you join the minority that gets Google organic search traffic?

There are hundreds of SEO problems that can hurt your Google rankings. If we only consider common scenarios, there are only four.

Reason #1: No backlinks

I hate to repeat what most SEO articles say, but it's true:

Backlinks boost Google rankings.

Google's "top 3 ranking factors" include them.

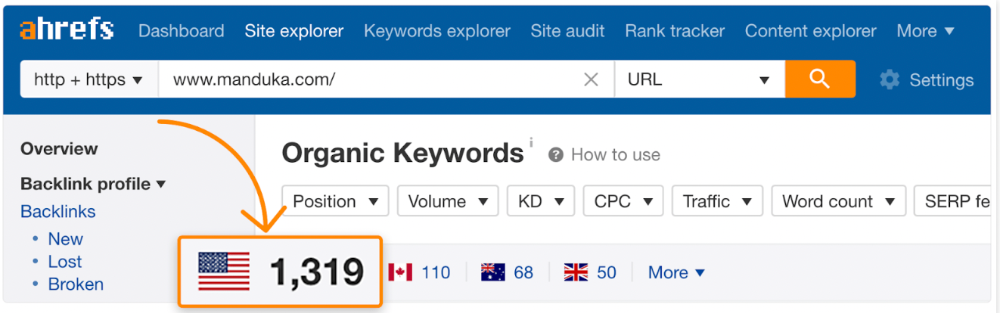

Why don't we divide our studied pages by the number of referring domains?

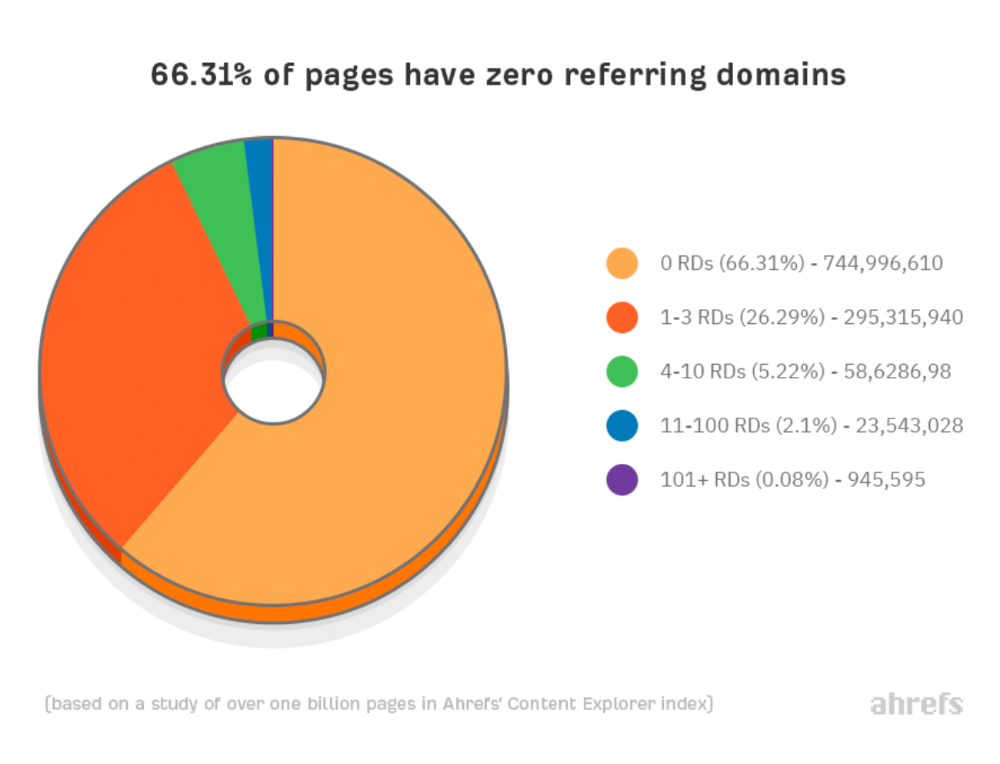

66.31 percent of pages have no backlinks, and 26.29 percent have three or fewer.

Did you notice the trend already?

Most pages lack search traffic and backlinks.

But are these the same pages?

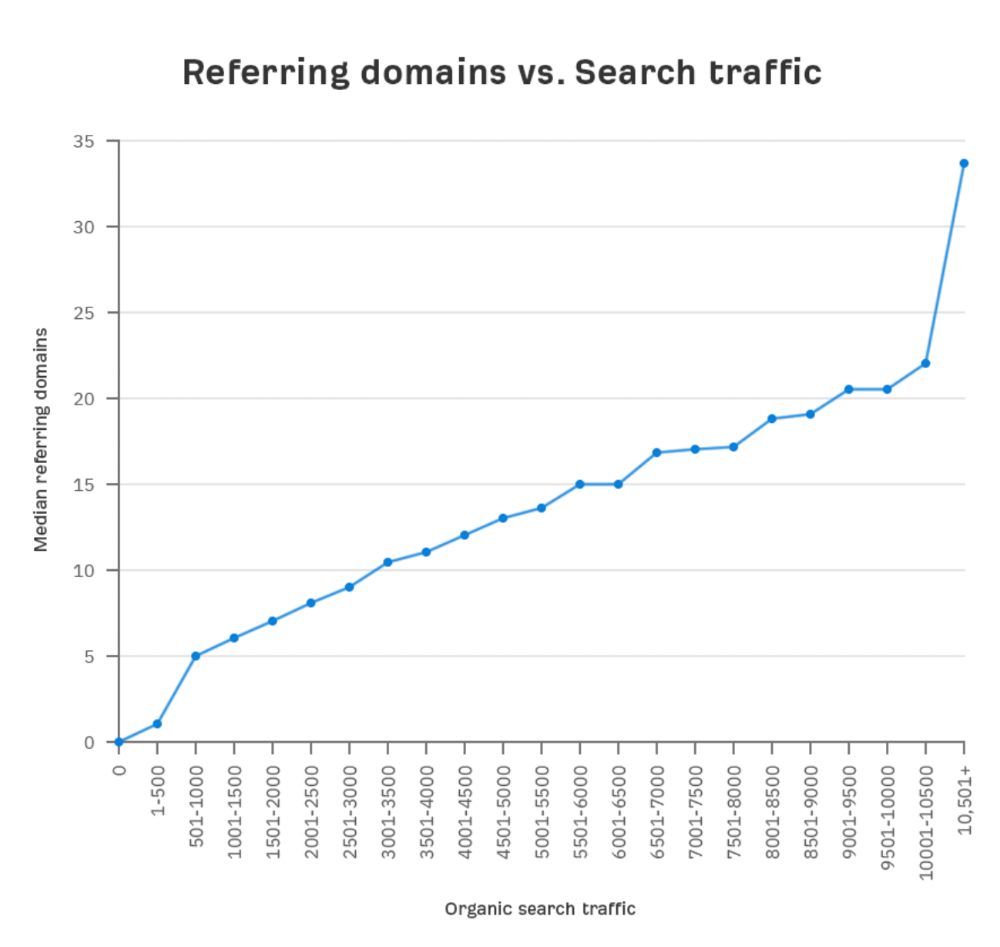

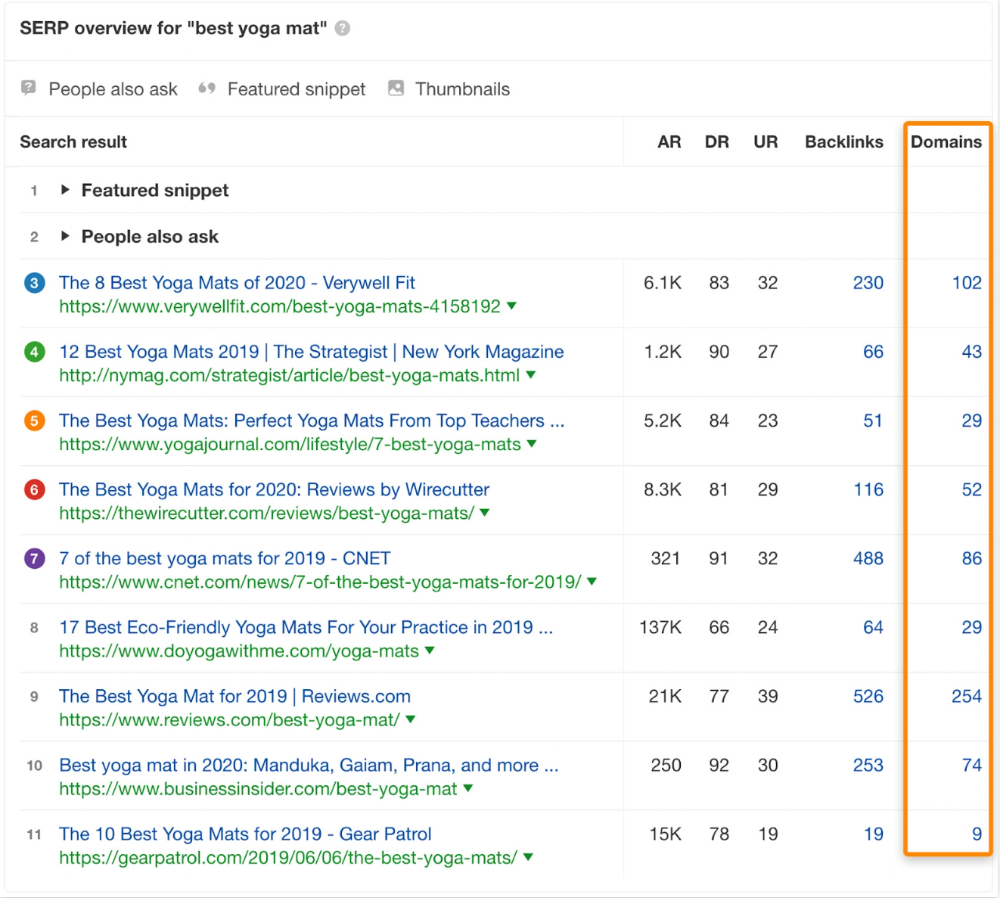

Let's compare monthly organic search traffic to backlinks from unique websites (referring domains):

More backlinks equals more Google organic traffic.

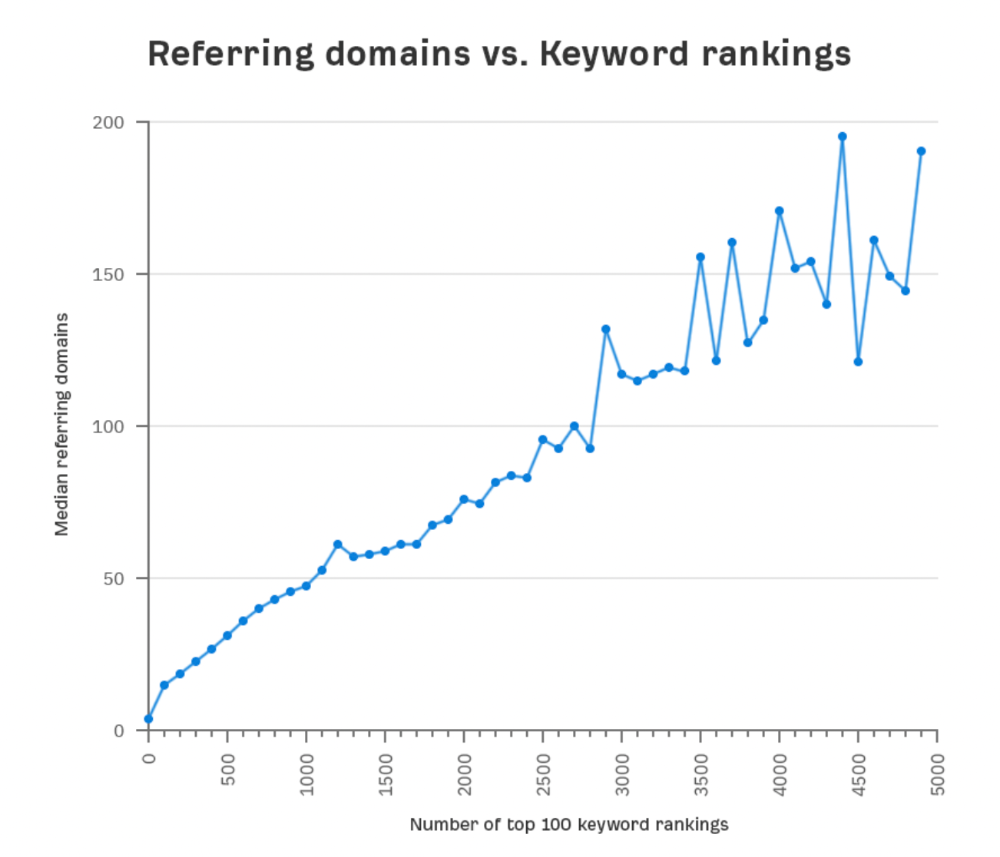

Referring domains and keyword rankings are correlated.

It's important to note that correlation does not imply causation, and none of these graphs prove backlinks boost Google rankings. Most SEO professionals agree that it's nearly impossible to rank on the first page without backlinks.

You'll need high-quality backlinks to rank in Google and get search traffic.

Is organic traffic possible without links?

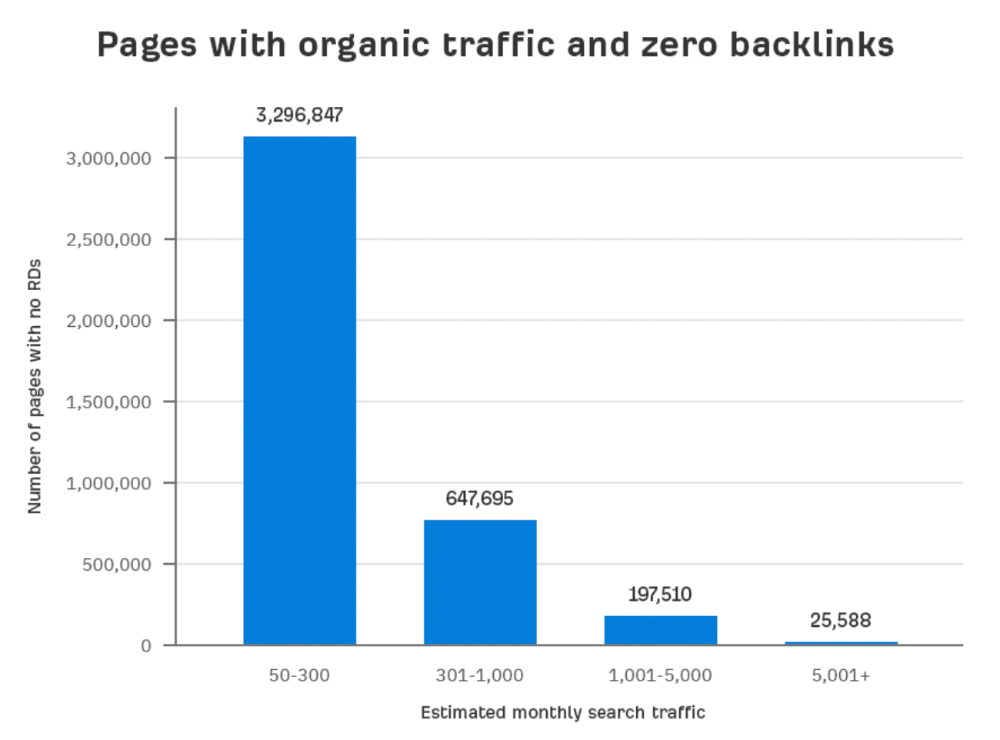

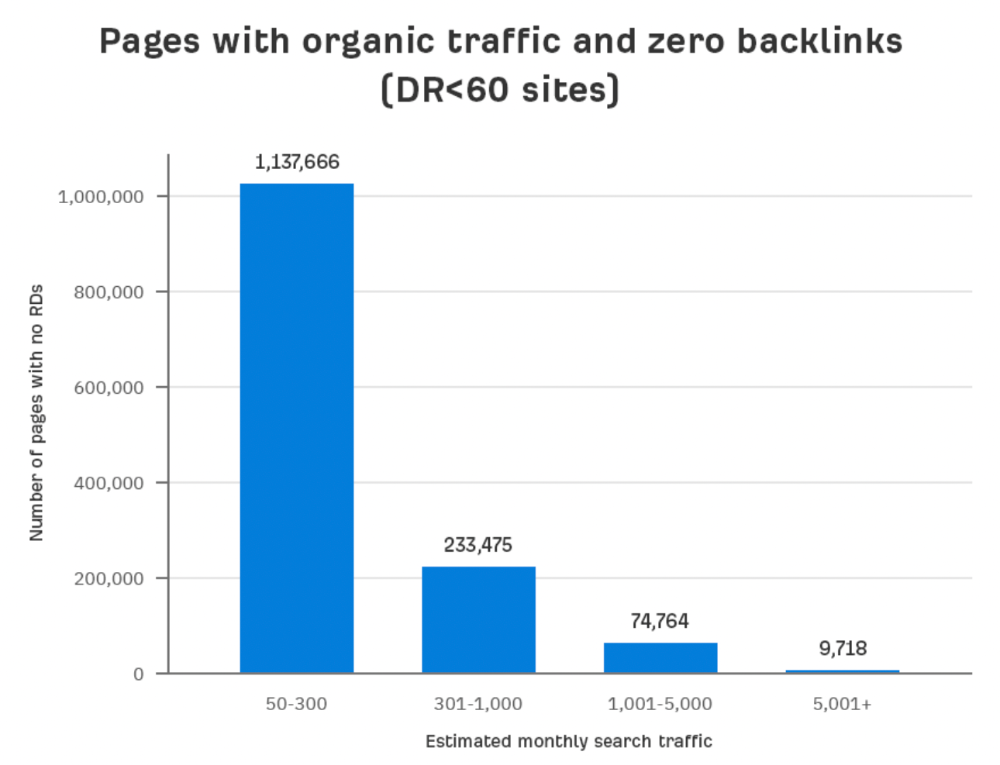

Here are the numbers:

Four million pages get organic search traffic without backlinks. Only one in 20 pages without backlinks has traffic, which is 5% of our sample.

Most get 300 or fewer organic visits per month.

What happens if we exclude high-Domain-Rating pages?

The numbers worsen. Less than 4% of our sample (1.4 million pages) receive organic traffic. Only 320,000 get over 300 monthly organic visits, or 0.1% of our sample.

This suggests high-authority pages without backlinks are more likely to get organic traffic than low-authority pages.

Internal links likely pass PageRank to new pages.

Two other reasons:

Our crawler's blocked. Most shady SEOs block backlinks from us. This prevents competitors from seeing (and reporting) PBNs.

They choose low-competition subjects. Low-volume queries are less competitive, requiring fewer backlinks to rank.

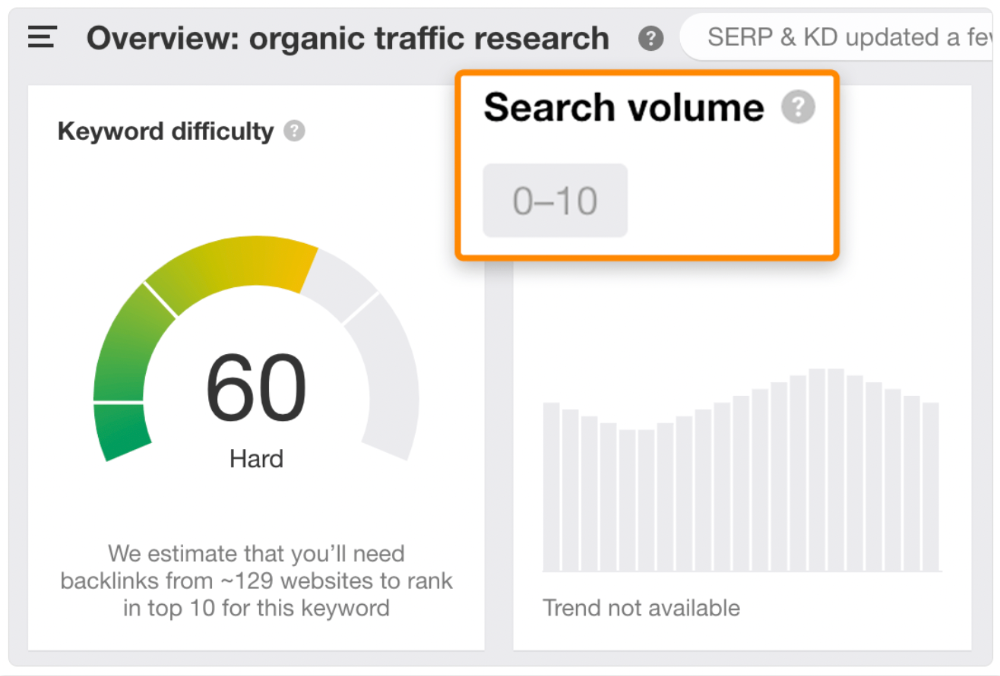

If the idea of getting search traffic without building backlinks excites you, learn about Keyword Difficulty and how to find keywords/topics with decent traffic potential and low competition.

Reason #2: The page has no long-term traffic potential.

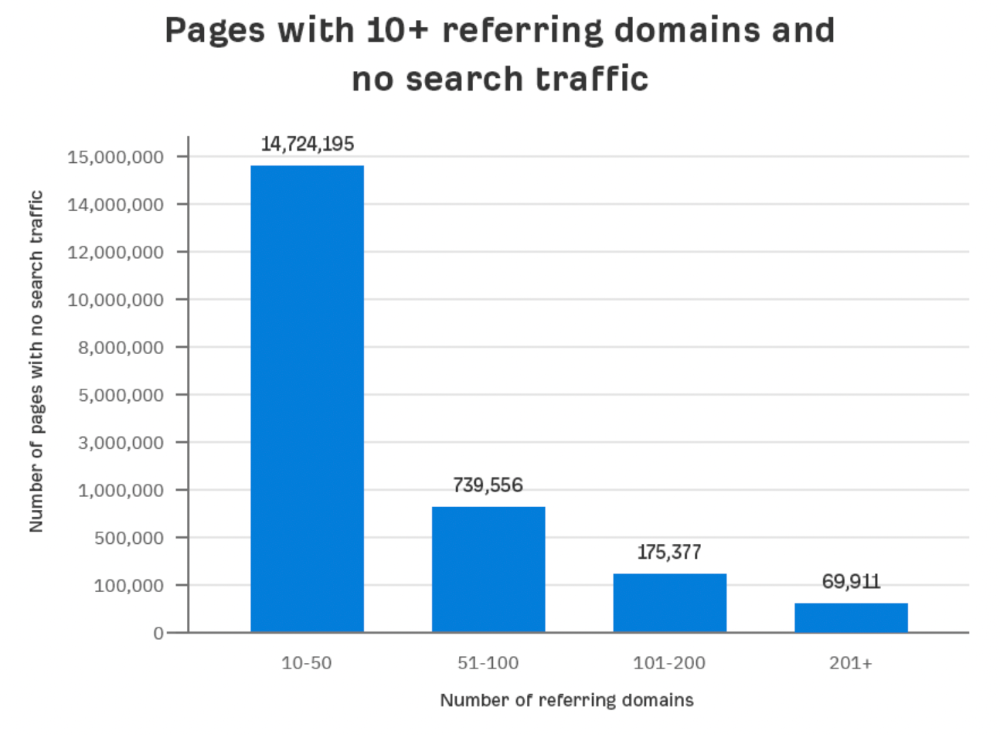

Some pages with many backlinks get no Google traffic.

Why? I filtered Content Explorer for pages with no organic search traffic and divided them into four buckets by linking domains.

Almost 70k pages have backlinks from over 200 domains, but no search traffic.

By manually reviewing these (and other) pages, I noticed two general trends that explain why they get no traffic:

They overdid "shady link building" and got penalized by Google;

They're not targeting a Google-searched topic.

I won't elaborate on point one because I hope you don't engage in "shady link building."

#2 is self-explanatory:

If nobody searches for what you write, you won't get search traffic.

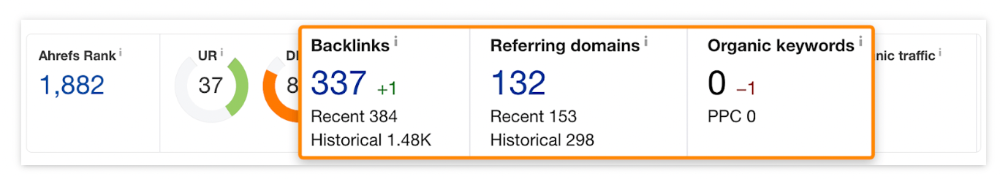

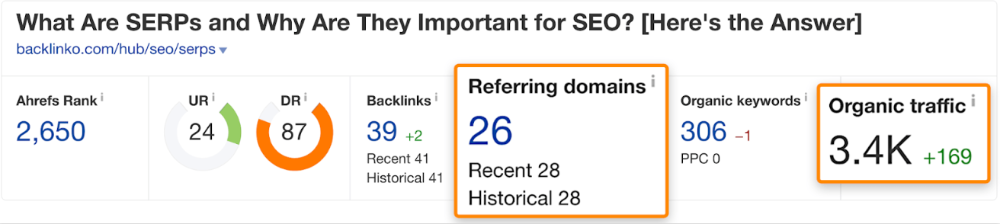

Consider one of our blog posts' metrics:

No organic traffic despite 337 backlinks from 132 sites.

The page is about "organic traffic research," which nobody searches for.

News articles often have this. They get many links from around the web but little Google traffic.

People can't search for things they don't know about, and most don't care about old events and don't search for them.

Note:

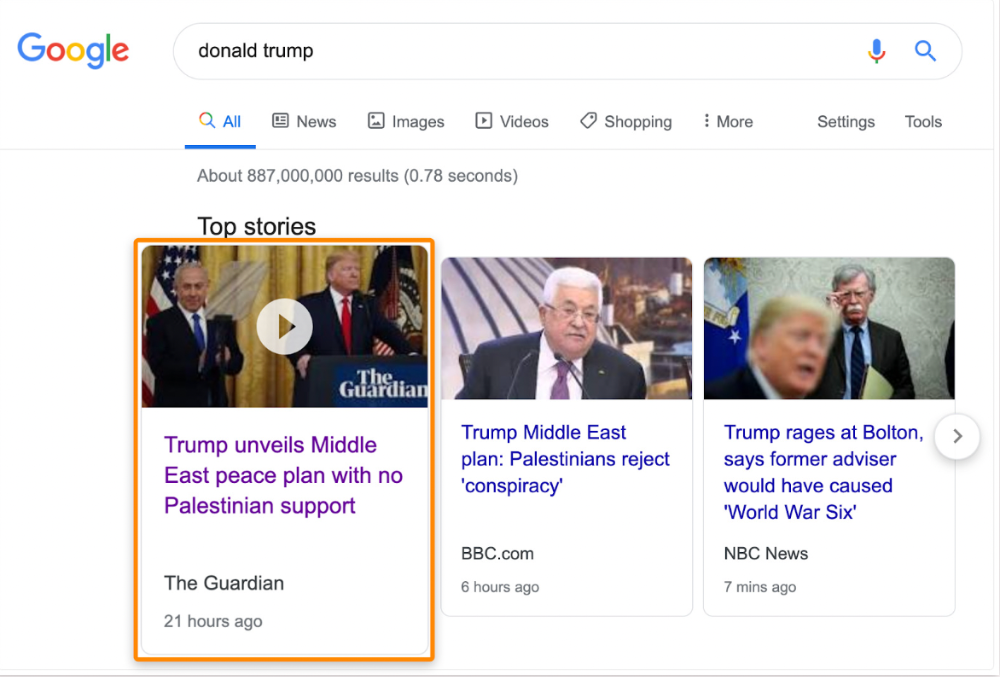

Some news articles rank in the "Top stories" block for relevant, high-volume search queries, generating short-term organic search traffic.

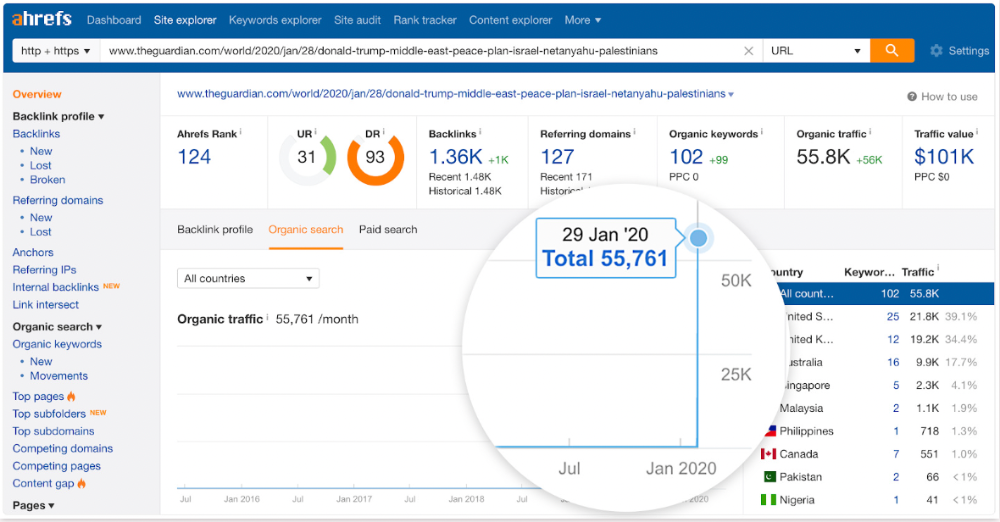

The Guardian's top "Donald Trump" story:

Ahrefs caught on quickly:

"Donald Trump" gets 5.6M monthly searches, so this page got a lot of "Top stories" traffic.

I bet traffic has dropped if you check now.

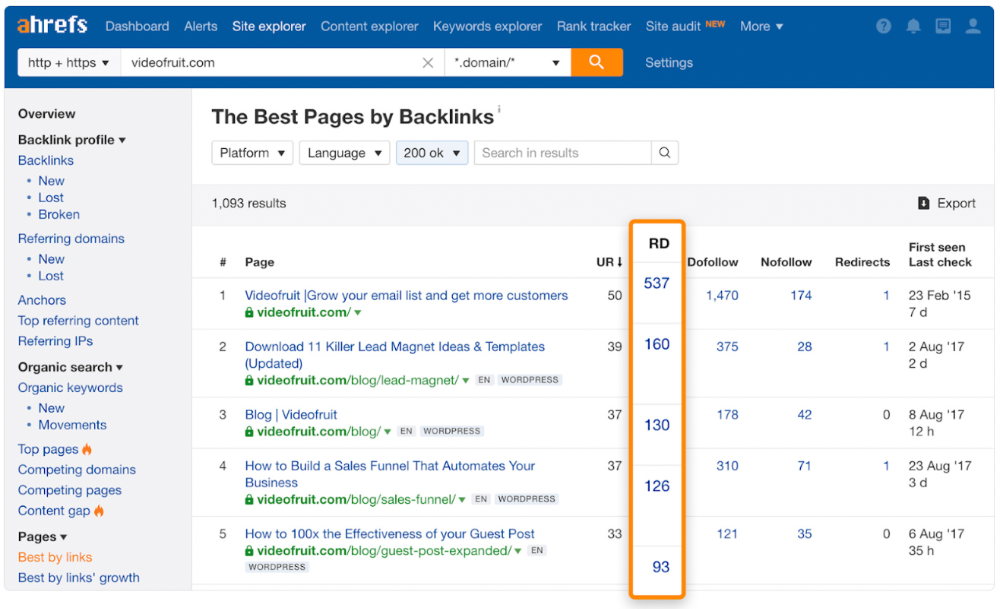

One of the quickest and most effective SEO wins is:

Find your website's pages with the most referring domains;

Do keyword research to re-optimize them for relevant topics with good search traffic potential.

Bryan Harris shared this "quick SEO win" during a course interview:

He suggested using Ahrefs' Site Explorer's "Best by links" report to find your site's most-linked pages and analyzing their search traffic. This finds pages with lots of links but little organic search traffic.
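The filtering step behind this quick win can be sketched in a few lines of Python. This is only an illustration: the page records, the field names, and the two thresholds are hypothetical, and in practice the data would come from whatever export your SEO tool provides.

```python
# Hypothetical rows: one dict per page with its referring domains
# and monthly organic visits. The values are made up for illustration.
pages = [
    {"url": "/guide-a", "referring_domains": 70, "organic_visits": 0},
    {"url": "/tool-page", "referring_domains": 120, "organic_visits": 5400},
    {"url": "/news-item", "referring_domains": 45, "organic_visits": 12},
    {"url": "/thin-post", "referring_domains": 2, "organic_visits": 0},
]


def find_quick_wins(pages, min_domains=20, max_visits=50):
    """Return well-linked pages with little search traffic, most-linked first.

    The thresholds are assumptions; tune them to the size of your site.
    """
    candidates = [
        page
        for page in pages
        if page["referring_domains"] >= min_domains
        and page["organic_visits"] <= max_visits
    ]
    return sorted(candidates, key=lambda page: page["referring_domains"], reverse=True)


quick_wins = find_quick_wins(pages)
for page in quick_wins:
    print(page["url"], page["referring_domains"], page["organic_visits"])
```

Each page this returns is a candidate for keyword research and re-optimization, not an automatic to-do item.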

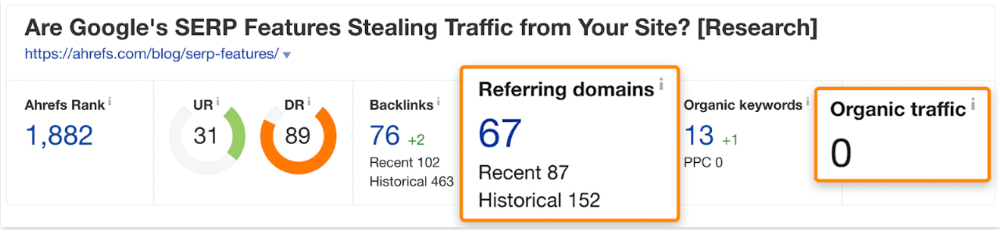

We see:

The guide has 67 backlinks but no organic traffic.

We could fix this by re-optimizing the page for "SERP."

A similar guide with 26 backlinks gets 3,400 monthly organic visits, so we should easily increase our traffic.

Don't do this with all low-traffic pages with backlinks. Choose your battles wisely; some pages shouldn't be ranked.

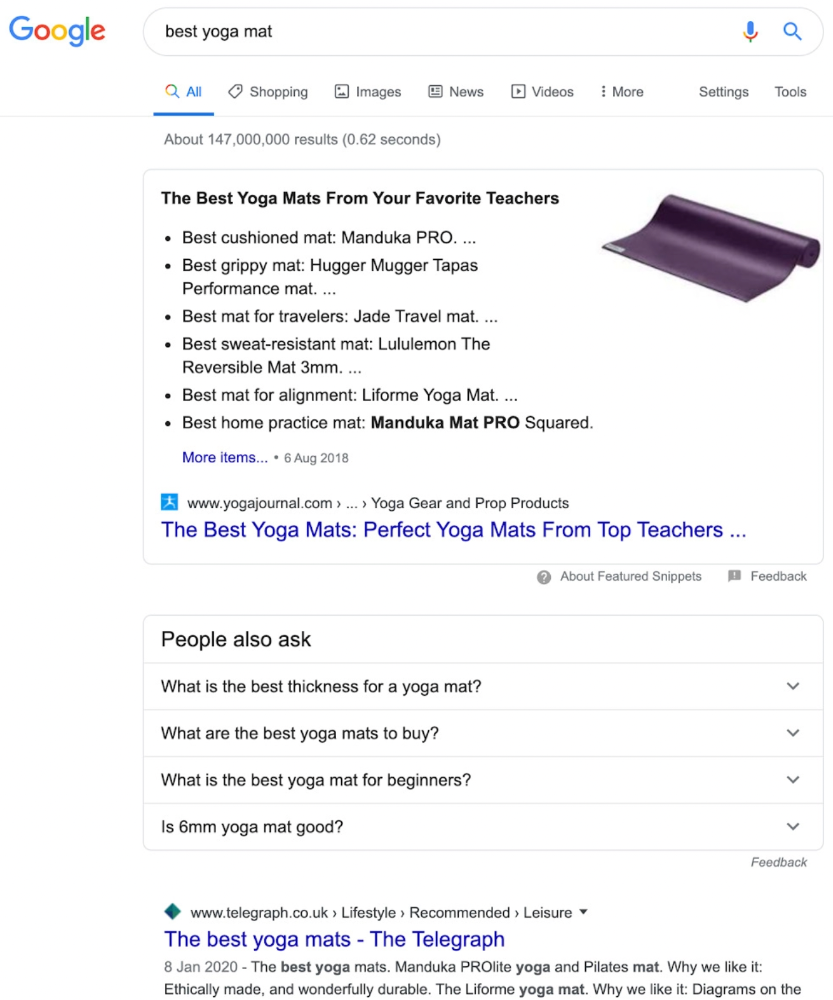

Reason #3: Search intent isn't met

Google returns the most relevant search results.

That's why blog posts with recommendations rank highest for "best yoga mat."

Google knows that most searchers aren't buying.

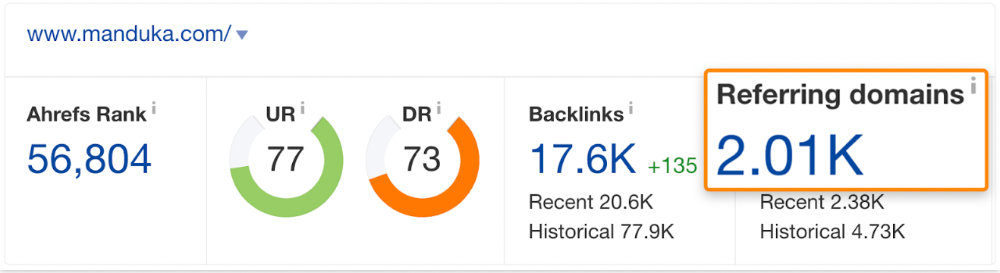

It's also why this yoga mats page doesn't rank, despite having seven times more backlinks than the top 10 pages:

The page ranks for thousands of other keywords and gets tens of thousands of monthly organic visits. Not ranking for "best yoga mat" isn't a big deal.

If you have pages with lots of backlinks but no organic traffic, re-optimizing them for search intent can be a quick SEO win.

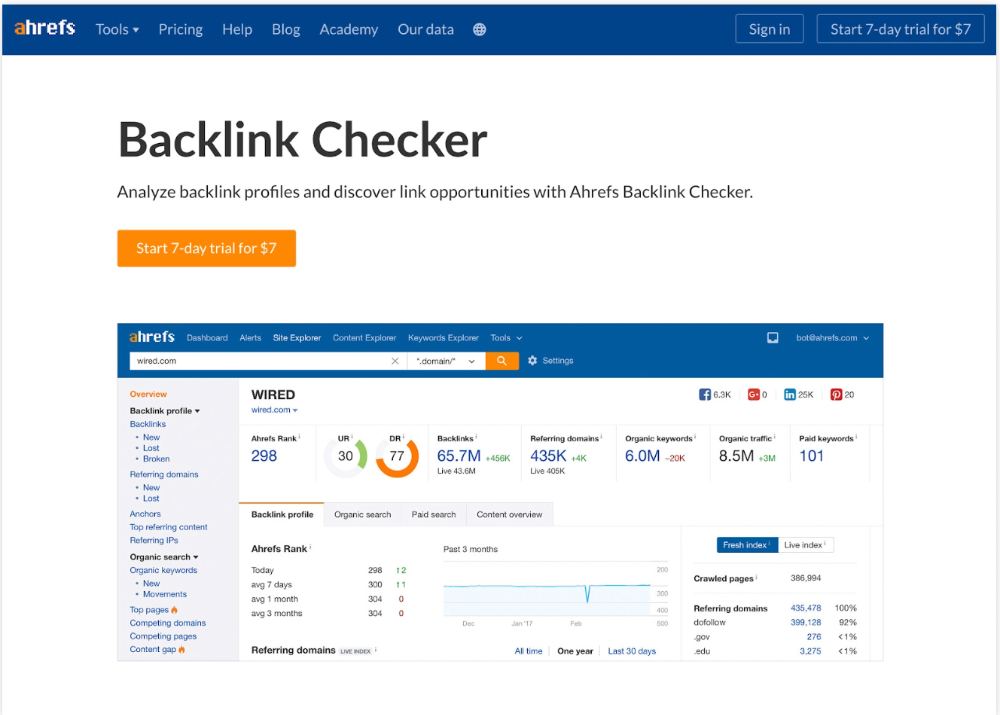

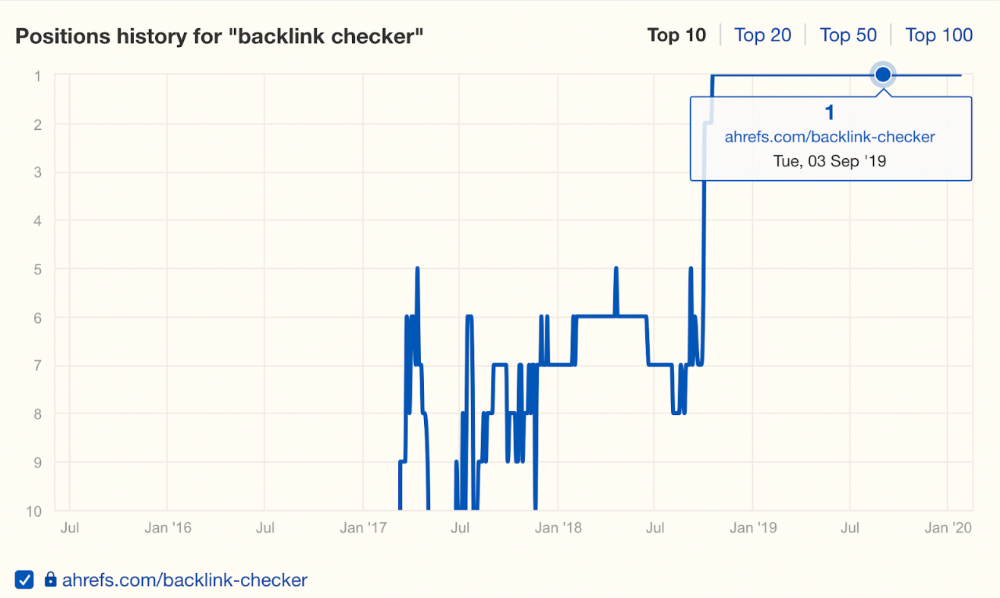

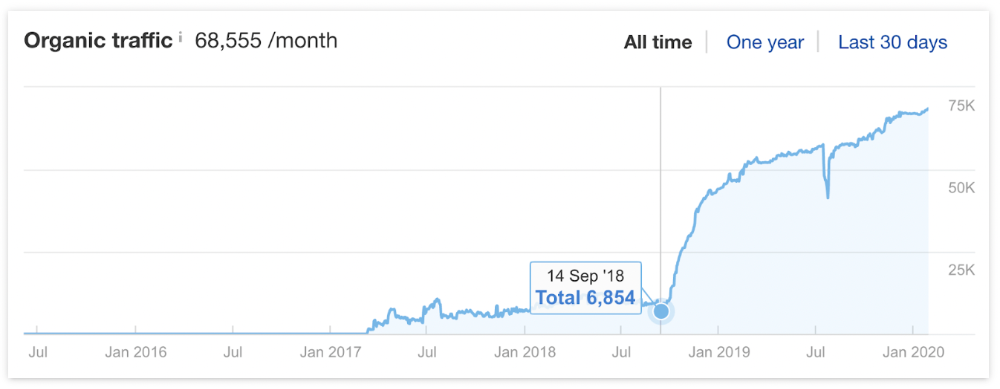

It was originally a boring landing page describing our product's benefits and offering a 7-day trial.

We realized the problem after analyzing search intent.

People wanted a free tool, not a landing page.

In September 2018, we published a free tool at the same URL. Organic traffic and rankings skyrocketed.

Reason #4: Unindexed page

Google can’t rank pages that aren’t indexed.

If you think this is the case, search Google for site:[url]. You should see at least one result; otherwise, it’s not indexed.

A rogue noindex meta tag is usually to blame. This tells search engines not to index a URL.

Rogue canonicals, redirects, and robots.txt blocks can also prevent indexing.
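A rough way to spot a rogue noindex tag is to scan a page's HTML for a robots meta tag. The sketch below uses only Python's standard library. The helper names are made up for illustration, and it checks the meta tag only, not the `X-Robots-Tag` HTTP header, canonicals, redirects, or robots.txt.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html):
    """True if the HTML asks search engines not to index the page."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return any(d in ("noindex", "none") for d in parser.directives)


sample = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(sample))
```

Running this over the HTML of your most important URLs is a cheap first check before opening Search Console.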

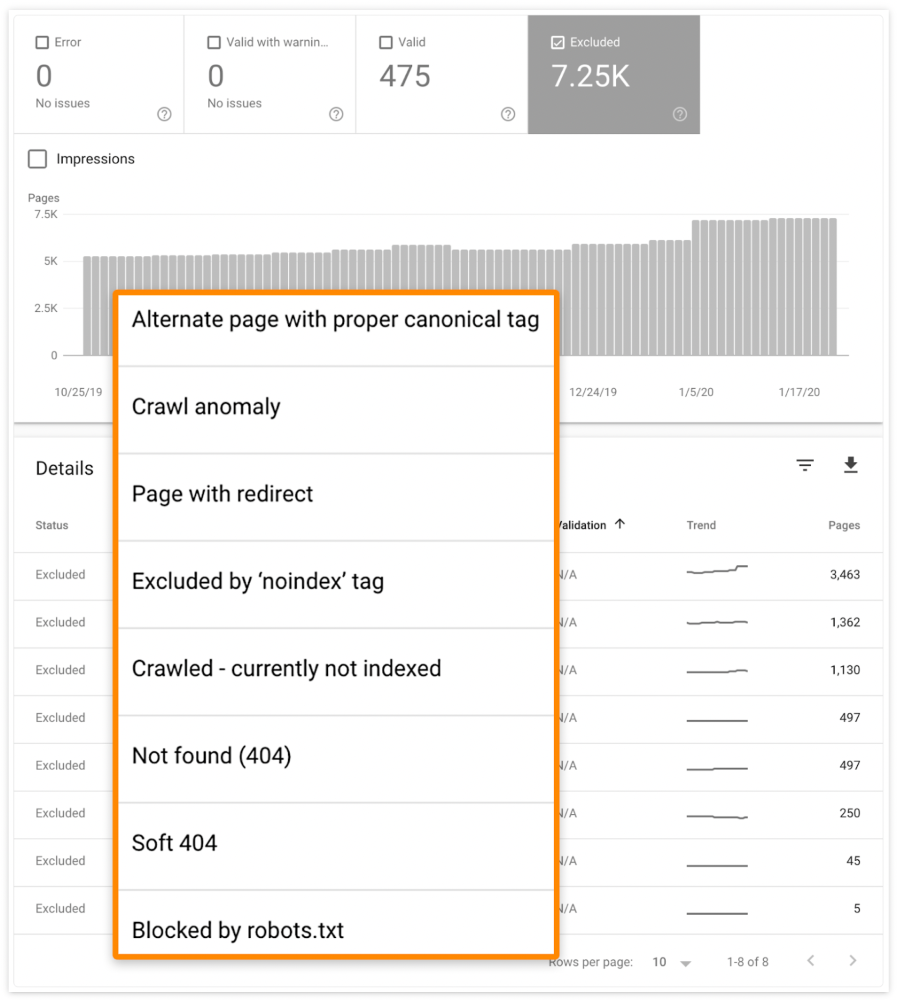

Check the "Excluded" tab in Google Search Console's "Coverage" report to see excluded pages.

Google doesn't index broken pages, even with backlinks.

Broken pages with backlinks are surprisingly common.

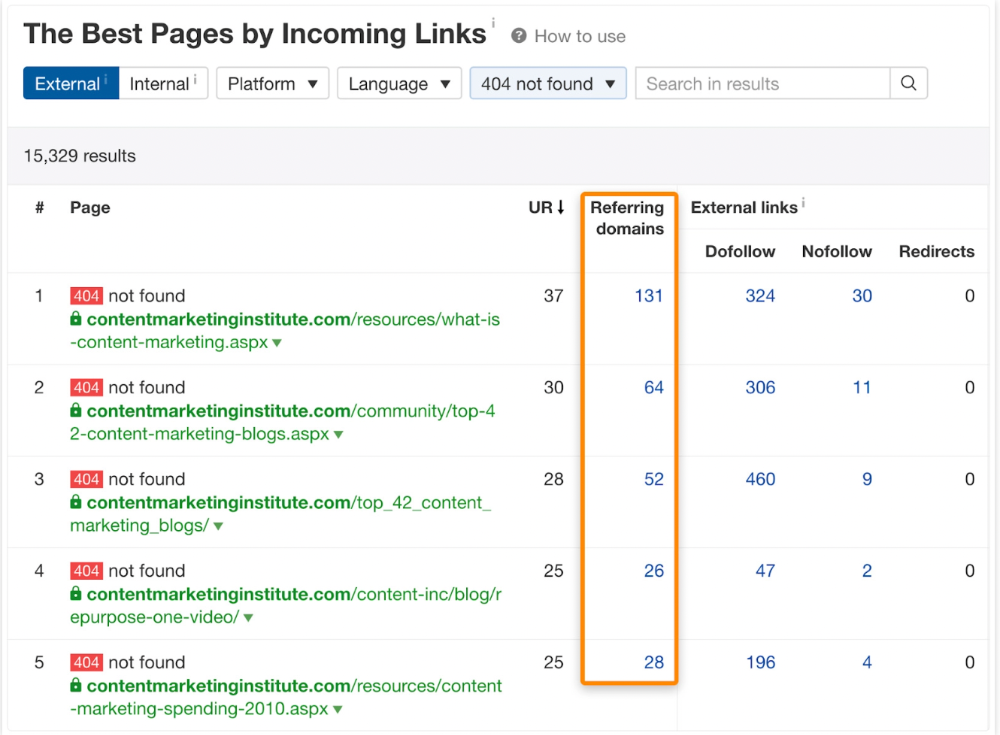

In Ahrefs' Site Explorer, the Best by Links report for a popular content marketing blog shows many broken pages.

One dead page has 131 backlinks:

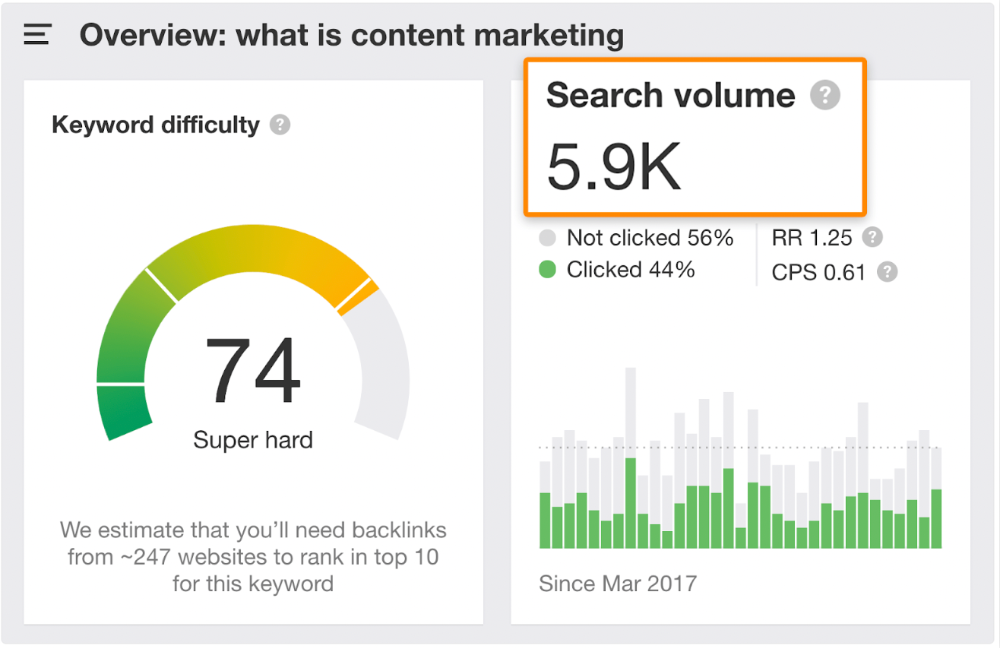

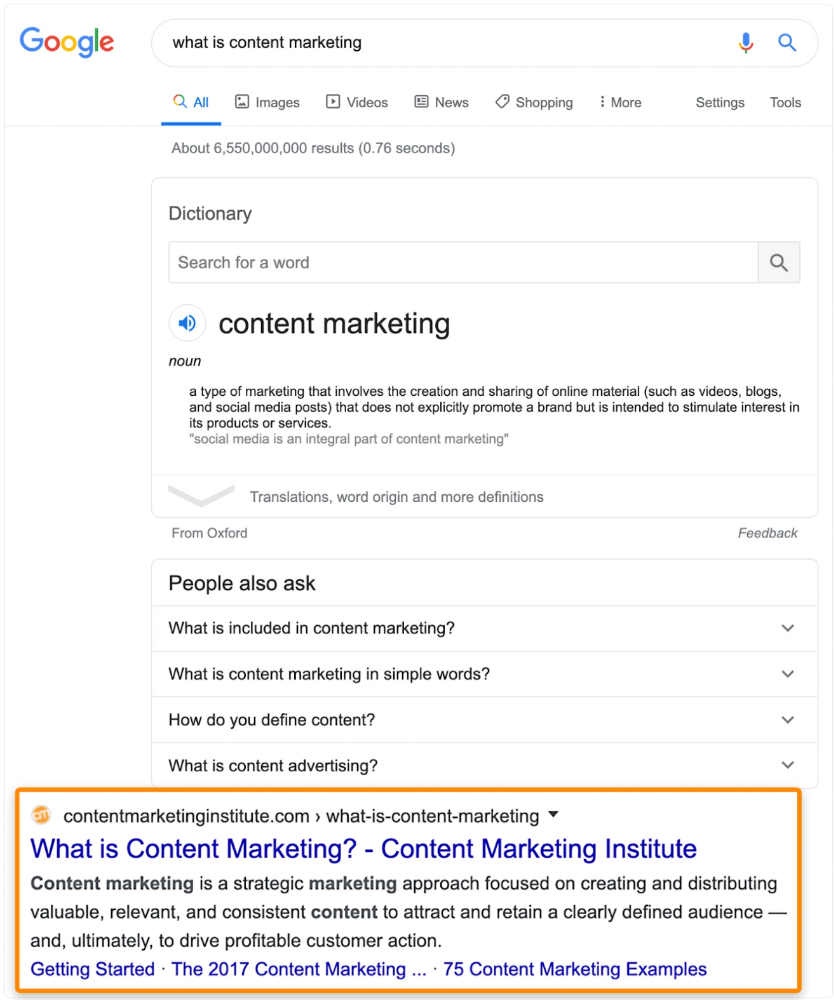

According to the URL, the page defined "content marketing," a keyword with a monthly search volume of 5,900 in the US.

Luckily, another page ranks for this keyword. Not a huge loss.

At least redirect the dead page's backlinks to a working page on the same topic. This may increase long-tail keyword traffic.

This post is a summary. See the original post here

Will Lockett

3 years ago

The World Will Change With MIT's New Battery

It's cheaper, faster charging, longer lasting, safer, and better for the environment.

Batteries are the future. Next-gen and planet-saving technology, including solar power and EVs, require batteries. As these smart technologies become more popular, we find that our batteries can't keep up. Lithium-ion batteries are expensive, slow to charge, big, fast to decay, flammable, and not environmentally friendly. MIT just created a new battery that eliminates all of these problems. So, is this the battery of the future? Or is there a catch?

When I say entirely new, I mean it. This battery uses none of the materials found in today's batteries. Its electrodes are made of aluminum and pure sulfur instead of lithium-ion's complicated metals and graphite. Its electrolyte is formed of molten chloro-aluminate salts, not an organic solution with lithium salts like lithium-ion batteries.

How does this change in materials help?

Aluminum, sulfur, and chloro-aluminate salts are abundant, easy to acquire, and cheap. This battery might be six times cheaper than a lithium-ion battery and use less hazardous mining. The world and our wallets will benefit.

But don’t go thinking this means it lacks performance.

This battery charged in under a minute in tests. At 25 degrees Celsius, the battery will charge 25 times slower than at 110 degrees Celsius. This is because the salt, which has a very low melting point, is in an ideal state at 110 degrees and can carry a charge incredibly quickly. Unlike lithium-ion, this battery self-heats when charging and discharging, therefore no external heating is needed.

Anyone who's seen a lithium-ion battery burst might be surprised. Unlike lithium-ion batteries, none of the components in this new battery can catch fire. Thus, high-temperature charging and discharging speeds pose no concern.

These batteries are long-lasting. Lithium-ion batteries don't last long, as any iPhone owner can attest. During charging, metal forms a dendrite on the electrode. This metal spike will keep growing until it reaches the other end of the battery, short-circuiting it. This is why phone batteries only last a few years and why electric car range decreases over time. This new battery's molten salt slows deposition, extending its life. This helps the environment and our wallets.

These batteries are also energy dense by both volume and mass. Some lithium-ion batteries reach an energy density of 270 Wh/kg. Aluminum-sulfur batteries could reach 1392 Wh/kg, according to calculations, making them roughly 5x more energy dense by mass. Tesla's Model 3 battery would weigh 96 kg instead of 480 kg if this battery were used. This would improve the car's efficiency and handling.

These calculations were for batteries without molten salt electrolyte. Because they don't reflect the exact battery chemistry, they aren't a surefire prediction.
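The weight comparison is simple arithmetic, sketched below with the article's own figures. The variable names are illustrative. The 96 kg figure comes from rounding the density ratio down to 5x; the unrounded ratio gives a slightly lighter pack.

```python
# Figures quoted in the article (theoretical for aluminum-sulfur).
LI_ION_WH_PER_KG = 270
AL_S_WH_PER_KG = 1392
MODEL_3_PACK_KG = 480

# How many times more energy per kilogram the new chemistry could store.
density_ratio = AL_S_WH_PER_KG / LI_ION_WH_PER_KG

# Mass of a pack storing the same energy, using the rounded 5x ratio
# from the article and then the unrounded ratio.
pack_kg_rounded = MODEL_3_PACK_KG / 5
pack_kg_exact = MODEL_3_PACK_KG / density_ratio

print(f"density ratio: {density_ratio:.2f}x")
print(f"pack mass at 5x: {pack_kg_rounded:.0f} kg")
print(f"pack mass at the unrounded ratio: {pack_kg_exact:.1f} kg")
```

Because the 1392 Wh/kg figure is a calculation rather than a measurement, both results are best read as upper bounds on the possible weight savings.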

This battery seems great. It will take years, maybe decades, before it reaches the market and makes a difference. Right?

Nope. The project's scientists founded Avanti to develop and market this technology.

So we'll soon be driving cheap, durable, eco-friendly, lightweight, and ultra-safe EVs? Nope.

This battery must be kept hot to keep the salt molten; otherwise, it won't work and will expand and contract, causing damage. This issue could be solved by packs that can rapidly pre-heat, but that project is far off.

Rapid and constant charge-discharge cycles make these batteries ideal for solar farms, homes, and EV charging stations. The battery is constantly being charged or discharged, allowing it to self-heat and maintain an ideal temperature.

These batteries aren't as sexy as those making EVs faster, more efficient, and cheaper. Grid batteries are crucial to our net-zero transition because they allow us to use more low-carbon energy. As we move away from fossil fuels, we'll need millions of these batteries, so the fact that they're cheap, safe, long-lasting, and environmentally friendly will be huge. Who knows, maybe EVs will use this technology one day. MIT has created another world-changing technology.

You might also like

Scott Hickmann

4 years ago

YouTube

This is a YouTube video:

Enrique Dans

3 years ago

What happens when those without morals enter the economic world?

I apologize if this sounds basic, but throughout my career, I've always been clear that a company's activities are shaped by its founder(s)' morality.

I consider Palantir, owned by PayPal founder Peter Thiel, evil. He got $5 billion tax-free by exploiting a statute meant to help the middle class save. That may appear clever, but I think it demonstrates a shocking lack of solidarity with society. As a result of this and other things he has said and done, I early on dismissed Peter Thiel as someone who could contribute anything positive to society, and events soon proved me right: we are talking about someone who clearly considers himself above everyone else and who does not hesitate to set up a company, Palantir, to exploit the data of the little people and sell it to the highest bidder, whoever that is and whatever the consequences.

The German courts have confirmed my warnings concerning Palantir. The problem is that politicians love its surveillance tools because they think knowing more about their constituents gives them power. These are ideal for dictatorships who want to snoop on their populace. Hence, Silicon Valley's triumphalist dialectic has seduced many governments at many levels and collected massive volumes of data to hold forever.

Dangerous company. There are many more. My analysis of the moral principles that disclose company management changed my opinion of Facebook, now Meta, and anyone with a modicum of interest might deduce when that happened, a discovery that leaves you dumbfounded. TikTok was easy because its lack of morality was revealed early when I saw the videos it encouraged minors to post and the repercussions of sharing them through its content recommendation algorithm. When you see something like this, nothing can convince you that the firm can change its morals and become good. Nothing. You know the company is awful and will fail. Speak it, announce it, and change it. It's like a fingerprint—unchangeable.

Some of you who read me frequently joke that "today it's Facebook's turn" when I write about these firms, and that's fine: they're my moral standards, those of an elderly professor with thirty-five years of experience studying corporations and discussing their cases in class, but you don't have to share them. Since I'm writing this and don't have to submit to any editorial review, that's what it is: when you continuously read a person, you have to assume that they have moral standards and that sometimes you'll agree with them and sometimes you won't. Morality accepts hierarchies, nuances, and even obsessions. I know not everyone shares my opinions, but at least I can voice them. One day, one of those firms may sue me (as record companies did some years ago).

Palantir is incredibly harmful. Limit its operations. Like Meta and TikTok, its business strategy is shaped by its founders' immorality. Such a procedure can never be beneficial.

Owolabi Judah

3 years ago

How much did YouTube pay for 10 million views?

Ali's $1,054,053.74 YouTube Adsense haul.

Ali Abdaal is a YouTuber, entrepreneur, and former doctor. He began filming productivity and financial videos in 2017. He has 3 million YouTube subscribers and has crossed $1 million in AdSense revenue. Crazy, no?

Ali will share the revenue of his top 5 YouTube videos, things he's learned that you can apply to your side hustle, and how many views it takes to make a living from YouTube.

First, "The Long Game."

All good things take time to bear fruit. Compounding improves everything. Long-term work yields better returns. Ali made his first dollar after nine months and 85 videos.

Second, "One piece of content can transform your life, but you never know which one."

Had he abandoned YouTube at 84 videos without making any money, he wouldn't have filmed the 85th video that altered everything.

Third Lesson: Your Industry Choice Can Multiply.

The industry or niche you target as a business owner or side hustler can have a major impact on how much money you make.

Here are the top 5 videos.

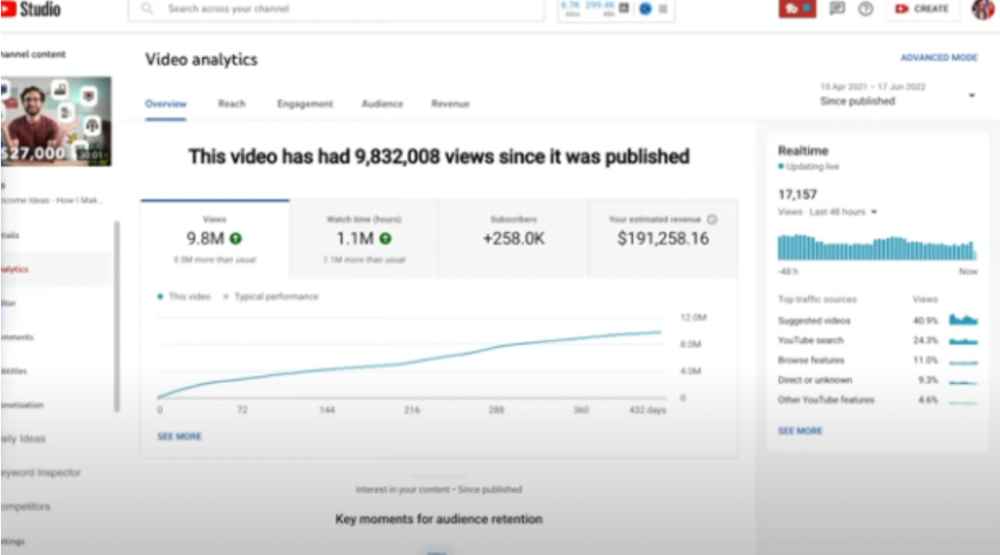

1) 9.8m views: $191,258.16 for 9 passive income ideas

Ali made 2 points.

We should consider YouTube videos digital assets. They're investments, which make us money. His investments are yielding passive income.

Investing extra time and effort in your videos can pay off.

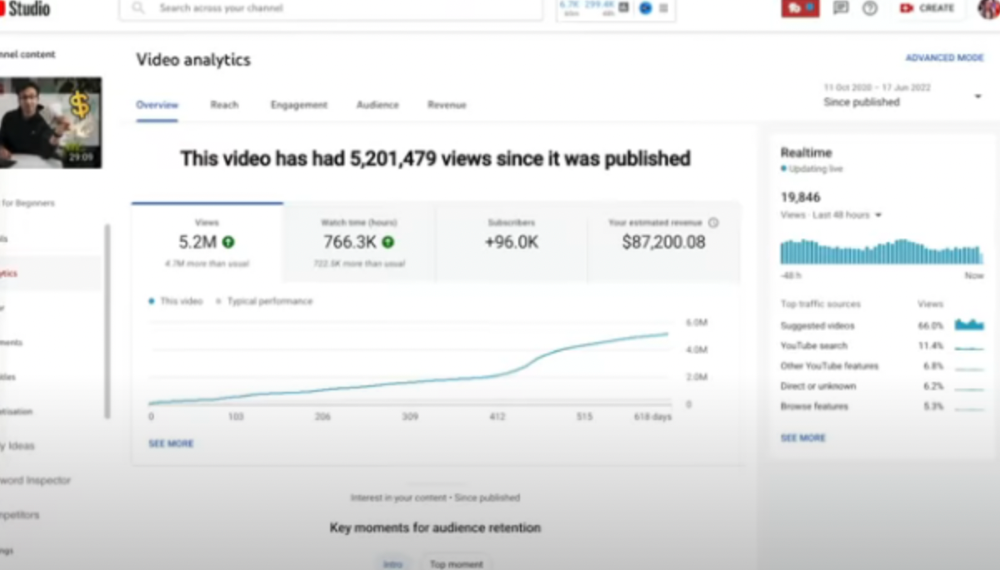

2) How to Invest for Beginners — 5.2m Views: $87,200.08.

This video did poorly in the first several weeks after it was published; it was his tenth poorest performer. Don't worry about things you can't control. This applies to life, not just YouTube videos.

He stated we constantly have anxieties, fears, and concerns about things outside our control, but if we can find that line, life is easier and more pleasurable.

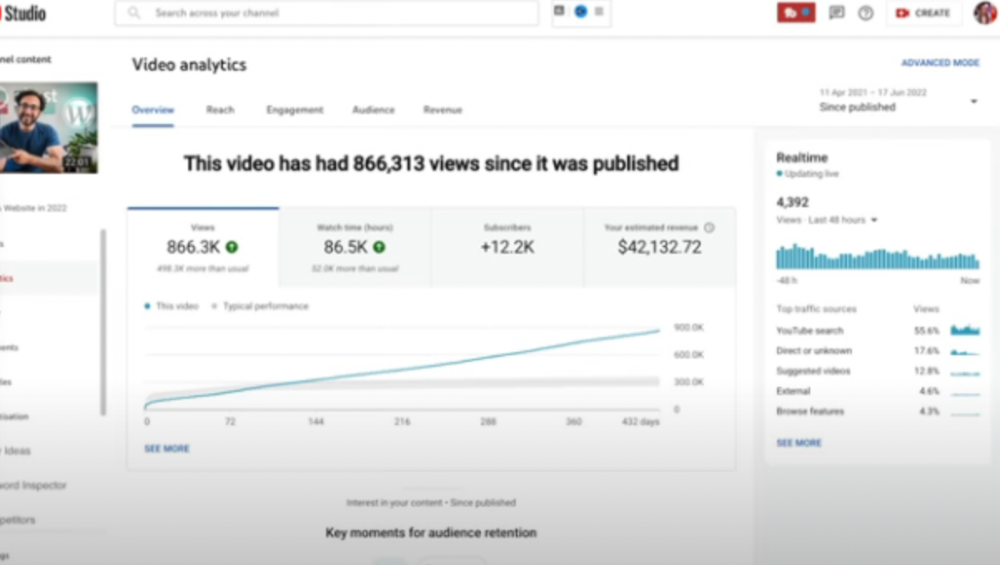

3) How to Build a Website in 2022— 866.3k views: $42,132.72.

The RPM (revenue per thousand views) was $48.86, making it his highest-earning video per view. Squarespace, Wix, and other website builders compete against one another to place ads on it, so ad rates go up.

Because it was beyond his niche, Ali almost didn't make the video. He made the video because he wanted to help at least one person.

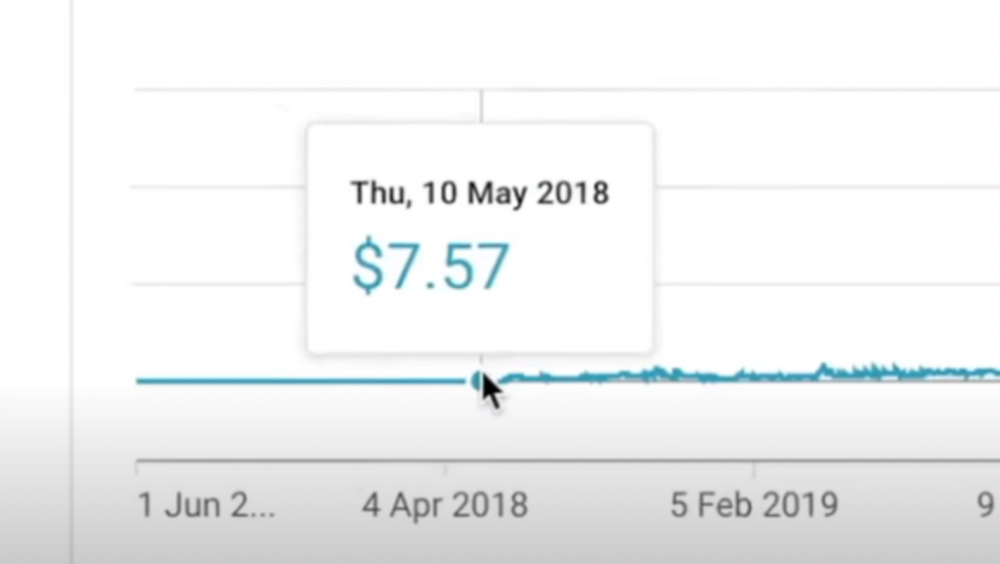

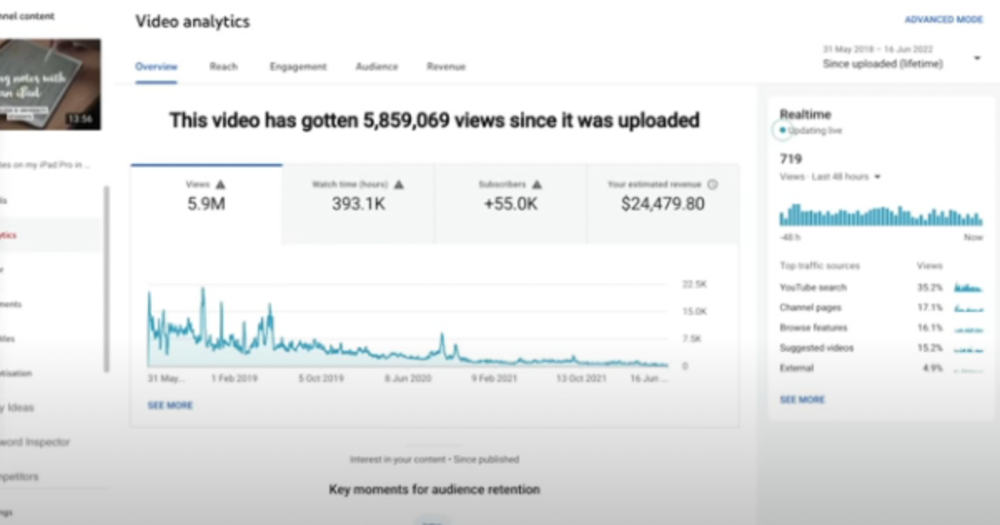

4) How I take notes on my iPad in medical school — 5.9m views: $24,479.80

This was his 85th video. It's the video that affected Ali's YouTube channel and his life the most. The video's success wasn't certain.

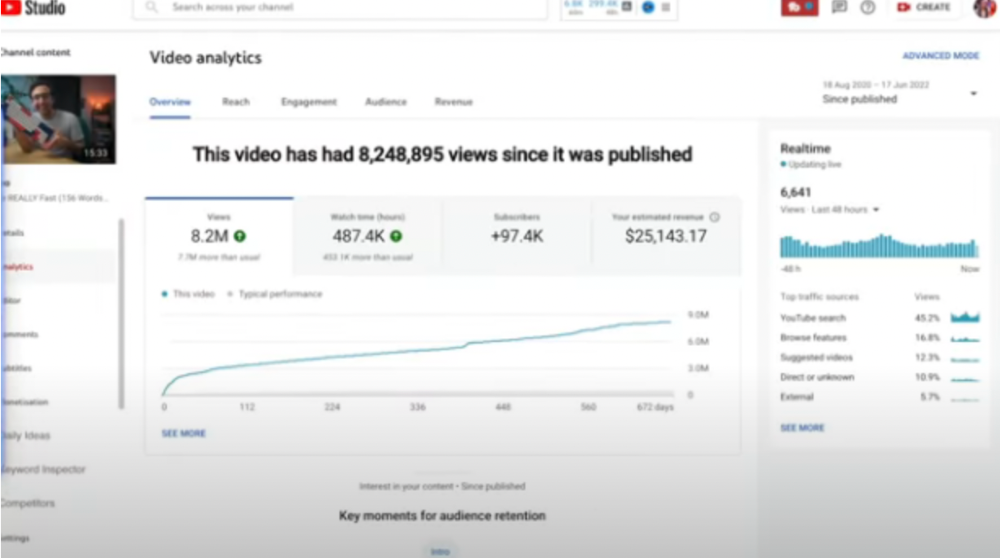

5) How I Type Fast 156 Words Per Minute — 8.2M views: $25,143.17

Ali didn't know this video would perform well; he made it because he can type fast and has been practicing for 10 years. So he made a video with his best advice.
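To compare the five videos on equal terms, you can compute each one's RPM (revenue per thousand views) from the figures above. This is a rough sketch: the view counts are the rounded numbers quoted in this post, so the website video comes out at about $48.6 rather than the $48.86 reported.

```python
# (title, views, AdSense revenue in USD), as quoted in the list above.
videos = [
    ("9 passive income ideas", 9_800_000, 191_258.16),
    ("How to Invest for Beginners", 5_200_000, 87_200.08),
    ("How to Build a Website in 2022", 866_300, 42_132.72),
    ("How I take notes on my iPad in medical school", 5_900_000, 24_479.80),
    ("How I Type Fast 156 Words Per Minute", 8_200_000, 25_143.17),
]


def rpm(views, revenue):
    """Revenue per thousand views."""
    return revenue / views * 1000


rpms = {title: round(rpm(views, revenue), 2) for title, views, revenue in videos}

# Print the videos from highest to lowest RPM.
for title, value in sorted(rpms.items(), key=lambda item: item[1], reverse=True):
    print(f"${value:>6.2f}  {title}")
```

Sorting by RPM instead of total revenue puts the website video first even though it has by far the fewest views, which is the point of the third lesson about niche choice.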

How many views to different wealth levels?

It depends on geography, niche, and other monetization sources. To keep things simple, he considers AdSense revenue only.

How many views to generate money?

To generate money on YouTube, you need 1,000 subscribers and 4,000 hours of watch time. How much work do you need to make pocket money?

Ali's first 1,000 subscribers took 52 videos and 6 months. The typical channel with 1,000 subscribers contains 152 videos, according to TubeBuddy. It's time-consuming.

After monetizing, you'll need 15,000 views/month to make $5-$10/day.

How many views to go part-time?

Say you make $35,000/year at your day job. If you work 5 days/week, each weekday is worth $7,000/year. If you want to drop from 5 days to 4 days/week, you need to make an extra $7,000/year from YouTube, or roughly $600/month.
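The same calculation works for any salary. Here is a minimal sketch, with a hypothetical function name and the simplifying assumption that every weekday earns an equal share of the salary:

```python
def youtube_income_needed(annual_salary, days_per_week=5, days_to_drop=1):
    """Yearly and monthly income needed to replace the dropped workdays."""
    value_per_weekday = annual_salary / days_per_week
    yearly = value_per_weekday * days_to_drop
    return yearly, yearly / 12


yearly, monthly = youtube_income_needed(35_000)
print(f"${yearly:,.0f}/year, about ${monthly:,.0f}/month")
```

For the $35,000 example this gives $7,000/year, or a little over $580/month, which the post rounds up to $600.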

What's the quit-your-job budget?

Silicon Valley Girl is in a highly lucrative niche targeting tech-focused viewers in the West. When her channel had 500k views/month, she made roughly $3,000/month or $47,000/year, enough to quit a job.

Marina, the creator behind Silicon Valley Girl, has another 1.5m subscriber channel in Russia, which has a lower RPM because fewer corporations advertise there than in the West. There, 2.3 million views/month brings in $4,000/month or $50,000/year, enough to quit a job.

Marina is an intriguing example because she has three YouTube channels with the same skills, but one is 16x more profitable due to the niche she chose.

In Ali's case, he had made 100+ videos by the time his channel was earning enough to quit his job, roughly $4,000/month.

How many views make you rich?

It depends on how you define rich. Ali felt prosperous with over $100,000/year and 3–5m views/month.

Conclusion

YouTubers and artists don't treat their work like a company, which is a mistake. Businesses have been attempting to figure this out for decades, if not centuries.

We can learn from the business world how to monetize YouTube, Instagram, and TikTok and make them into sustainable enterprises where we can hire people and delegate tasks.

Bonus

Watch Ali's video explaining all this:

This post is a summary. Read the full article here