More on Personal Growth

Matthew Royse

3 years ago

Ten words and phrases to avoid in presentations

Don't say this in public!

Want to wow your audience? Want to deliver a successful presentation? Do you want practical takeaways from your presentation?

Then avoid these phrases.

Public speaking is difficult. People fear public speaking, according to research.

"Public speaking is people's biggest fear, according to studies. Number two is death. Sounds right?" — Comedian Jerry Seinfeld

Yes, public speaking is scary. These words and phrases will make your presentation harder.

Using unnecessary words can weaken your message.

You may have prepared well for your presentation and feel confident. During your presentation, you may freeze up. You may blank or forget.

Effective delivery is even more important than skillful public speaking.

Here are 10 presentation pitfalls.

1. I or Me

Presentations are about the audience, not you. Replace "I" or "me" with "you," "we," or "us." Focus on your audience. Reward them with expertise and intriguing views on your topic.

Serve your audience actionable items during your presentation, and you'll do well. Your audience will have a harder time listening and engaging if you're self-centered.

2. Sorry if/for

Your presentation is fine. These phrases make you sound insecure and unprepared. Don't pressure the audience to tell you not to apologize. Your audience should focus on your presentation and essential messages.

3. Excuse the Eye Chart, or This slide's busy

Why include the slide if you have to say these phrases? If you don't like a slide, change it before presenting. Extra data can be provided after the presentation.

Don't apologize for unclear slides. Hide or delete a slide that doesn't work. If a slide is too busy, divide its message across multiple slides or remove the "busy" slide.

4. Sorry I'm Nervous

Some think admitting nerves will win over the audience. Nerves are horrible, and even experienced public speakers get nervous.

But nerves often aren't noticeable, so what's the point of announcing them? Let the audience judge for themselves. Please don't point it out.

5. I'm not a speaker or I've never done this before.

These phrases destroy credibility. People won't listen and will check their phones or computers.

Why present if you use these phrases?

Good speakers aren't necessarily professional public speakers. Be confident in what you say. When you're confident, many people will like your presentation.

6. Our Key Differentiators Are

This term is overused. It sounds "salesy," and your "key differentiators" are probably much like a competitor's.

This statement has been diluted; say, "what makes us different is..."

7. Next Slide

Many slides or stories? Your presentation needs transitions. They help your viewers understand your argument.

You didn't transition well when you said "next slide." Think about organic transitions.

8. I Didn’t Have Enough Time, or I’m Running Out of Time

The phrase "I didn't have enough time" implies that you didn't care about your presentation. This shows the viewers you rushed and didn't care.

Saying "I'm out of time" shows poor time management. It means you didn't rehearse enough and plan your time well.

9. I've been asked to speak on

This phrase is used to emphasize your importance. This phrase conveys conceit.

When you say this sentence, you tell others you're intelligent, skilled, and appealing. Don't utilize this term; focus on your topic.

10. Moving On, or All I Have

These phrases show that you haven't thought through your transitions or your presentation's ending. People recall a presentation's beginning and end.

How you end your discussion affects how people remember it. You must end your presentation strongly and use natural transitions.

Conclusion

Here are the 10 phrases to avoid in a presentation: I or me; sorry if or sorry for; excuse the eye chart or this slide's busy; sorry I'm nervous; and I'm not a speaker or I've never done this before.

Also avoid these: our key differentiators are; next slide; I didn't have enough time or I'm running out of time; I've been asked to speak on; and moving on or that's all I have.

We shouldn't make public speaking more difficult than it is. We shouldn't exacerbate a difficult issue. Better public speakers avoid these words and phrases.

“Remember not only to say the right thing in the right place, but far more difficult still, to leave unsaid the wrong thing at the tempting moment.” — Benjamin Franklin, Founding Father

This is a summary. See the original post here.

Katrine Tjoelsen

3 years ago

8 Communication Hacks I Use as a Young Employee

Learn these subtle cues to gain influence.

Hate being ignored?

As a 24-year-old, I struggled to get attention at work. How could I avoid being judged by my size, gender, and lack of wrinkles or gray hair?

I've learned hacks for seeming senior and gaining influence. Within two years as a product manager, I led a team. I'm now a Stanford MBA student.

These communication hacks can make you look senior and influential.

1. Speak slowly

We speak quickly because we're afraid of being interrupted.

When I doubt my ideas, I speak quickly. How can we slow down? Jamie Chapman says speaking slowly saps our energy.

Chapman suggests emphasizing certain words and pausing.

2. Interrupted? Stop the stopper

Did someone interrupt you?

Don't wait. Say "May I finish?" without pausing, and stop the interruption. I first tried this in Leadership Laboratory at Stanford. How quickly I gained influence amazed me.

Next time, try “May I finish?” If that’s not enough, try these other tips from Wendy R.S. O’Connor.

3. Context

Others don't always see what's obvious to you.

By explaining the context, you help others see the big picture. Even if a senior colleague already knows it, you help them see where your work fits.

4. Don't phrase statements as questions

“Your statement lost its effect when you ended it on a high pitch,” a group member told me. Upspeak, it’s called. I do it when I feel uncertain.

Upspeak costs you influence and credibility, and it's unnecessary. When unsure, we can say "I think." We can even ask a proper question.

Someone else's upspeak is no reason to be dismissive. As leaders and colleagues, we should listen to our colleagues even if they use this speech pattern.

Give your words impact.

5. Signpost structure

Signposts improve clarity by providing structure and transitions.

Communication coach Alexander Lyon explains how to use "first," "second," and "third." He also explains classic and summary transitions that help the listener switch topics.

Signposts clarify. Clarity matters.

6. Eliminate email fluff

“Fine. When will the report be ready? — Jeff.”

Notice how senior leaders write short, direct emails? I often use formalities like "dear," "hope you're well," and "kind regards."

Formality is (usually) unnecessary.

7. Replace exclamation marks with periods

See how junior an exclamation-filled email looks:

Hi, all!

Hope you’re as excited as I am for tomorrow! We’re celebrating our accomplishments with cake! Join us tomorrow at 2 pm!

See you soon!

Why the exclamation points? Why not just one?

Hi, all.

Hope you’re as excited as I am for tomorrow. We’re celebrating our accomplishments with cake. Join us tomorrow at 2 pm!

See you soon.

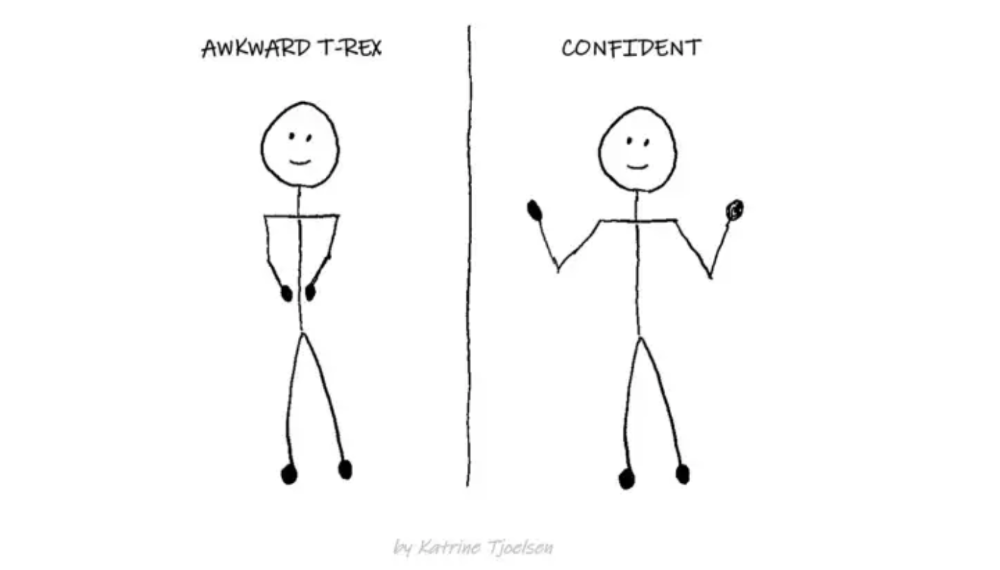

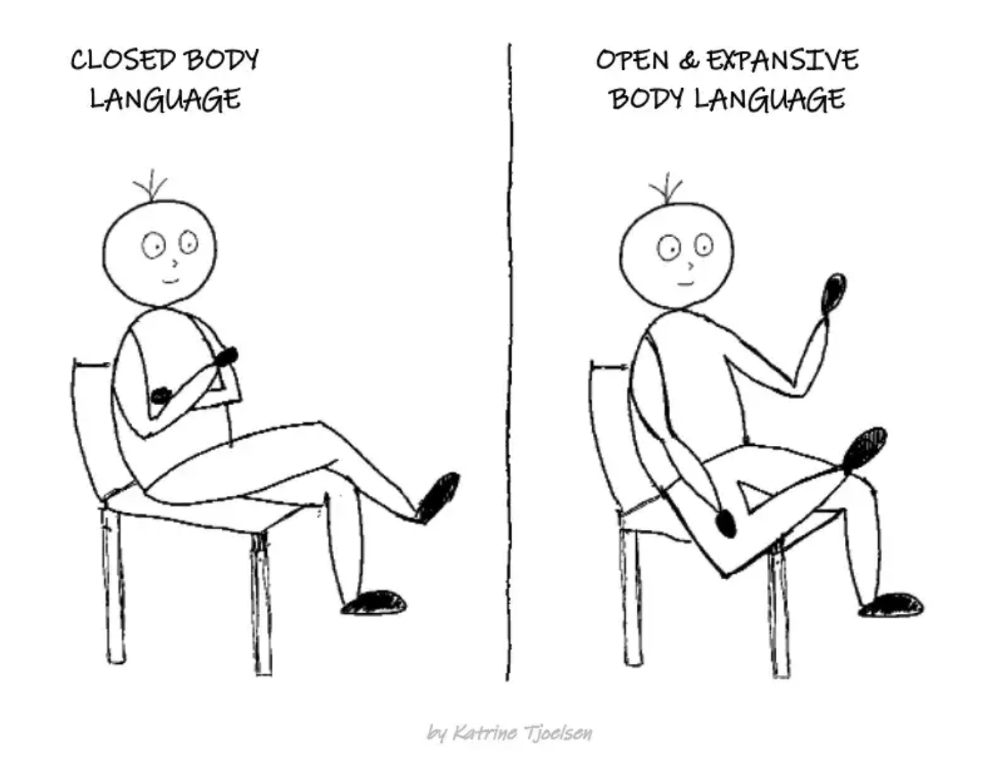

8. Take space

"Playing high" means having an open, relaxed body, says Stanford professor and author Deborah Gruenfeld.

Crossed legs or looking small? Relax. Get bigger.

Daniel Vassallo

3 years ago

Why I quit a $500K job at Amazon to work for myself

I quit my 8-year Amazon job last week. I wasn't motivated to do another year despite promotions, pay, recognition, and praise.

In AWS, I built developer tools. I could have worked in that field forever.

I became an Amazon developer. Within 3.5 years, I was promoted twice to senior engineer and would have been promoted to principal engineer if I stayed. The company said I had great potential.

Over time, I became a reputed expert and leader within the company. I was respected.

First year I made $75K, last year $511K. If I stayed another two years, I could have made $1M.

Despite Amazon's reputation, my work–life balance was good. I no longer needed to prove myself and could do everything in 40 hours a week. My team worked from home once a week, and I rarely opened my laptop nights or weekends.

My coworkers were great. I had three generous, empathetic managers. I’m very grateful to everyone I worked with.

Everything was going well and getting better. My motivation to go to work each morning was declining despite my career and income growth.

Another promotion, pay raise, or big project wouldn't have boosted my motivation. Something else was waning along with it: my freedom.

Demotivation

My motivation was high in the beginning. I worked with one other person on an internal tool with little scrutiny. I had more freedom to choose how and what to work on than in recent years. The two of us improved it, talked to users, released updates, and tested it. Whatever we wanted, we did. We did our best and were mostly self-directed.

In recent years, things have changed. My department's most important project had many stakeholders and complex goals. What I could do depended on my ability to convince others it was the best way to achieve our goals.

At Amazon, I was always working on someone else's terms. The terms started out simple (keep fixing it), but became more complex over time (maximize all goals; satisfy all stakeholders). Working in a large organization imposed restrictions on how to do the work, what to do, what goals to set, and what business to pursue. This situation forced me to do things I didn't want to do.

Finding New Motivation

What would I do forever? Not something I did until I reached a milestone (an exit), but something I'd do until I'm 80. What could I do for the next 45 years that would make me excited to wake up and pay my bills? Is that too unambitious? Nope. Because I'm motivated by two things.

One is an external carrot or stick. I don't enjoy filing my taxes every April, but I do it because I don't want to go to jail. Or I may not like something but do it anyway because I need to pay the bills or want a nice car. That is extrinsic motivation.

The other is internal. When there's no carrot or stick, this is what motivates me. It fuels hobbies. I wanted a job that was intrinsically motivating.

Is this too low-key? Extrinsic motivation isn't sustainable. Getting promoted felt good for a week, then it was over. When I hit $100K, I admired my W2 for a few days, but then it wore off. Same thing happened at $200K, $300K, $400K, and $500K. Earning $1M or $10M wouldn't change anything. I feel the same about every material reward or possession. Getting them feels good at first, but quickly fades.

Things I've done since I was a kid, when no one forced me to, don't wear off. Coding, selling my creations, charting my own path, and being honest. Why not always use my strengths and motivation? I'm lucky to live in a time when I can work independently in my field without large investments. So that’s what I’m doing.

What’s Next?

I'm going all-in on independence and will make a living from scratch. I won't do only what I like, but on my terms. My goal is to cover my family's expenses before my savings run out while doing something I enjoy. What more could I want from my work?

You can now follow me on Twitter as I continue to document my journey.

This post is a summary. Read full article here

You might also like

Alex Carter

3 years ago

Metaverse, Web 3, and NFTs are BS

Most crypto is probably too.

The goals of Web 3 and the metaverse are admirable and attractive. Who doesn't want an internet owned by users? Who wouldn't want a digital realm where anything is possible? A better way to collaborate and visit pals.

Companies pursue profits endlessly. Infinite growth and revenue are expected, and if a corporation needs to sacrifice profits to safeguard users, the CEO, board of directors, and any executives will lose to the system of incentives that (1) retains workers with shares and (2) makes a company answerable to all of its shareholders. Only the government can guarantee user protections, but we know how successful that is. This is nothing new, just a problem with modern capitalism and tech platforms that a user-owned internet might remedy. Moxie, the founder of Signal, has a good articulation of some of these current Web 2 tech platform problems (but I forget the timestamp); thoughts on JRE aside, this episode is worth listening to (it’s about a bunch of other stuff too).

Moxie Marlinspike, founder of Signal, on the Joe Rogan Experience podcast.

Source: https://open.spotify.com/episode/2uVHiMqqJxy8iR2YB63aeP?si=4962b5ecb1854288

Web 3 champions are premature. There was so much spectacular growth during Web 2 that the next wave of founders want to make an even bigger impact, while investors old and new want a chance to get a piece of the moonshot action. Worse, crypto enthusiasts believe — and financially need — the fact of its success to be true, whether or not it is.

I’m doubtful that it will play out like current proponents say. Crypto has been the white-hot focus of SV’s best and brightest for a long time yet still struggles to come up with any mainstream use case other than ‘buy, HODL, and believe’: a store of value for your financial goals and wishes. Some kind of metaverse is likely, but will it be decentralized, mostly in VR, or will Meta (previously FB) play a big role? Unlikely.

METAVERSE

The metaverse exists already. Our digital lives span apps, platforms, and games. I can design a 3D house, invite people, use Discord, and hang around in an artificial environment. Millions of gamers do this in Rust, Minecraft, Valheim, and Animal Crossing, among other games. Discord's voice chat and Slack-like servers/channels are the present social anchor, but the interface, integrations, and data portability will improve. Soon you can stream YouTube videos on digital house walls. You can doodle, create art, play Jackbox, and walk through a door to play Apex Legends, Fortnite, etc. Not just gaming. Digital whiteboards and screen sharing enable real-time collaboration. They’ll review code and operate enterprises. Music is played and made. In digital living rooms, they'll watch movies, sports, comedy, and Twitch. They'll tweet, laugh, learn, and shittalk.

The metaverse is the evolution of our digital life at home, the third place. The closest analog would be Discord and the integration of Facebook, Slack, YouTube, etc. into a single, 3D, customizable hangout space.

I'm not certain this experience can be hugely decentralized and smoothly choreographed, managed, and run, or that VR — a luxury, cumbersome, and questionably relevant technology — must be part of it. Eventually, VR will be pragmatic, achievable, and superior to real life in many ways. A total sensory experience like the Matrix or Sword Art Online, where we're physically hooked into the Internet yet in our imaginations we're jumping, flying, and achieving athletic feats we never could in reality; exploring realms far grander than our own (as grand as it is). That VR is different from today's.

Ben Thompson released an episode of Exponent after Facebook changed its name to Meta. Ben was suspicious about many metaverse champion claims, but he made a good analogy between Oculus and the PC. The PC was initially far too pricey for the ordinary family to afford. It began as a business tool. It became so powerful and pervasive that it changed our personal lives. Prices kept falling and so much consumer software was produced that it's now impossible to envision life without a computer at home (or in our pockets). If Facebook shows product market fit with VR in business, through use cases like remote work and collaboration, maybe VR will become practical in our personal lives at home.

Before PCs, we relied on Blockbuster, the Yellow Pages, cabs to get to the airport, handwritten taxes, landline phones to schedule social events, and other archaic methods. It is impossible for me to conceive what VR, in the form of headsets and hand controllers, stands to give both professional and especially personal digital experiences that is an order of magnitude better than what we have today. Is looking around better than using a mouse to examine a 3D landscape? Do the hand controllers make work or gaming 10x or 100x more fun or efficient? Will VR replace scalable Web 2 methods and applications like Web 1 and Web 2 did for analog? I don't know.

My guess is that the metaverse will arrive slowly, initially on displays we presently use, with more app interoperability. I doubt that it will be controlled by the people or by Facebook, a corporation that struggles to properly innovate internally, as practically every large digital company does. Large tech organizations are lousy at hiring product-savvy employees, and if they do, they rarely let them explore new things.

These companies act like business schools when they seek founders' results, with bureaucracy and dependency. Which company launched the last popular consumer software product that wasn't a clone or acquisition? Recent examples are scarce.

Web 3

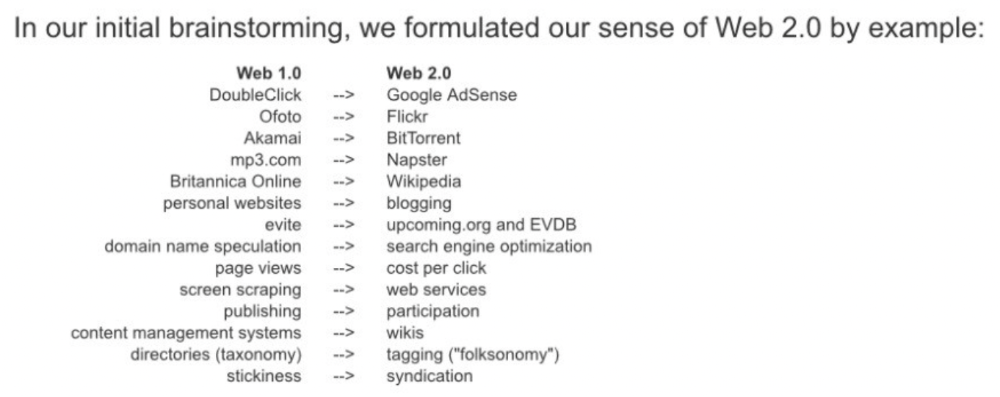

Investors and entrepreneurs of Web 3 firms are declaring victory: 'Web 3 is here!' 'Web 3 is the future!' But many profitable Web 2 enterprises already existed when Web 2 was defined. The term was created to explain shifts in user behavior, not a personal pipe dream.

Origins of Web 2: http://www.oreilly.com/pub/a/web2/archive/what-is-web-20.html

One of these Web 3 startups may provide the connecting tissue to link all these experiences or become one of the major new digital locations. Even so, successful players will likely use centralized power arrangements, as Web 2 businesses do now. Some Web 2 startups integrated our digital lives. Rockmelt (2010–2013) was a customizable browser with bespoke connectors to every program a user wanted; imagine seeing Facebook, Twitter, Discord, Netflix, YouTube, etc. all in one location. Failure. Who knows what Opera's doing?

Silicon Valley and tech Twitter in general have a history of jumping on dumb bandwagons that go nowhere. Dot-com crash in 2000? The huge deployment of capital into bad ideas and businesses is well-documented. And live video. It was the future until it became a niche sector for gamers. Live audio will play out a similar reality as CEOs with little comprehension of audio and no awareness of lasting new user behavior deceive each other into making more and bigger investments on fool's gold. Twitter trying to buy Clubhouse for $4B, Spotify buying Greenroom, Facebook exploring live audio and 'Tiktok for audio,' and now Amazon developing a live audio platform. This live audio frenzy won't be worth their time or energy. Blind guides blind. Instead of learning from prior failures like Twitter buying Periscope for $100M pre-launch and pre-product market fit, they're betting on unproven and uncompelling experiences.

NFTs

NFTs are also nonsense. Take Loot, a time-limited bag drop of "things" (text on the blockchain) for a game that didn't exist, bought by rich techies too busy to play video games and foolish enough to think they're getting in early on something with a big reward. What gaming studio is incentivized to use these items? Who's encouraged to join? No one cares besides Loot owners. For gamers, rare items should reward skill, merit, and effort. Even if a small minority of gamers can make a living playing, the average game's major appeal has never been to make actual money - that's a profession.

No game stays popular forever, so how is this objective sustainable? Once popularity and usage drop, exclusive crypto or NFTs will fall. And if NFTs are designed to have cross-game appeal, incentives apart, 30 years from now any new game will need millions of pre-existing objects to build around before they start. It doesn’t work.

Many games already feature item economies based on real in-game scarcity, generally for cosmetic things to avoid pay-to-win, which undermines scaled gaming incentives for huge player bases. Items from Counter-Strike, Rust, etc. can be bought and sold on Steam with real money. Since the 1990s, unofficial cross-game marketplaces have sold in-game objects and currencies. NFTs aren't needed. Making a popular, enjoyable, durable game is already difficult.

With NFTs, certain JPEGs on the internet went from useless to selling for $69 million. Why? Crypto, Web 3, early Internet collectibles. NFTs are digital Beanie Babies (unlike NFTs, Beanie Babies were a popular children's toy; their destinies are the same). NFTs are worthless and scarce. They appeal to crypto enthusiasts seeking a practical use case to support their theory and boost their own fortune. They also appeal to SV insiders desperate not to miss the next big thing, not knowing what it will be. NFTs aren't about paying artists and creators who don't get credit for their work.

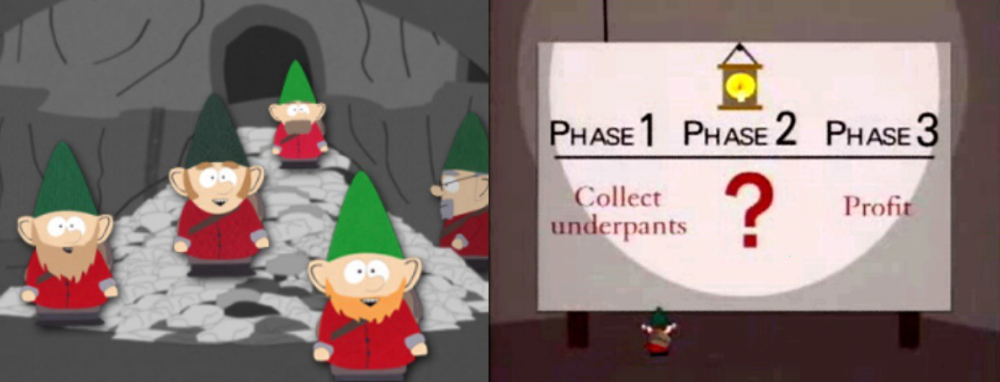

South Park's Underpants Gnomes

At best, NFTs are a benign, foolish plan to earn money on par with South Park's underpants gnomes. At worst, they're the world of hucksterism and poor performers. Or those with money and enormous followings who, like everyone, don't completely grasp cryptocurrencies but are motivated by greed and status and believe Gary Vee's claim that CryptoPunks are the next Facebook. Gary's watertight logic: if NFT prices dip, they're on the same path as the most successful corporation in human history; buy the dip! NFTs aren't businesses or museum-worthy art. They're bs.

Gary Vee compares NFTs to Amazon.com. vm.tiktok.com/TTPdA9TyH2

We grew up collecting: Magic: The Gathering (MTG) cards printed in the 90s are now worth over $30,000. Imagine buying a digital Magic card with no underlying foundation. No one plays the game because it doesn't exist. An NFT is a contextless image someone conned you into buying a certificate for, but anyone may copy, paste, and use. Replace MTG with Pokemon for younger readers.

When Gary Vee strongarms 30 tech billionaires and YouTube influencers into buying CryptoPunks, they'll talk about it on Twitch, YouTube, podcasts, Twitter, etc. That will convince average folks that the product has value: "These guys are smart and/or rich, so I'll get in early like them." Crypto is similar. No solid, scaled, mainstream use case exists, and no one knows where it's headed, but since the global crypto financial bubble hasn't burst and many people have made insane fortunes, regular people are putting real money into something that is highly speculative and could be nothing because they want a piece of the action. Who doesn’t want free money? Rich techies and influencers won't be affected; normal folks will.

Imagine removing every $1 invested in Bitcoin instantly. What would happen? How far would Bitcoin fall? Over 90%, maybe even 95%, and Bitcoin would be dead. Bitcoin as an investment is the only scalable widespread use case: it's confidence that a better use case will arise and that being early pays handsomely. It's like pouring a trillion dollars into a company with no business strategy or users and a CEO who makes vague future references.

New tech and efforts may provoke a 'get off my lawn' mentality as you approach 40, but I've always prided myself on having a decent bullshit detector, and it's going off at this foolishness. If we can accomplish a functional, responsible, equitable, and ethical user-owned internet, I'm for it.

Postscript:

I wanted to summarize my opinions because I've been angry about this for a while but only sporadically tweeted about it. A friend sent me a Dan Olson YouTube video just before publication. He's more knowledgeable, articulate, and convincing about crypto. It's worth seeing.

This post is a summary. See the original one here.

VIP Graphics

3 years ago

Leaked pitch deck for Meta's new influencer-focused live-streaming service

As part of Meta's endeavor to establish an interactive live-streaming platform, the company is testing it with influencers.

The NPE (new product experimentation team) has been testing Super since late 2020.

Bloomberg described Super in 2020 as a Cameo-inspired, FaceTime-like tool. It has since evolved into a Twitch-like live-streaming application.

Fewer than 100 creators have used Super. Creators can request access on Meta's website. Super isn't an Instagram, Facebook, or Meta extension.

“It’s a standalone project,” the spokesperson said about Super. “Right now, it’s web only. They have been testing it very quietly for about two years. The end goal [of NPE projects] is ultimately creating the next standalone project that could be part of the Meta family of products.” The spokesperson said the outreach this week was part of a drive to get more creators to test Super.

A 2021 pitch deck from Super reveals the inner workings of Meta.

The deck gathered feedback on possible sponsorship models, with mockups of brand deals & features. Meta reportedly paid creators $200 to $3,000 to test Super for 30 minutes.

Meta's pitch deck for Super live streaming was leaked.

What were the slides in the pitch deck for Meta's Super?

View examples of Meta's pitch deck for Super:

1. Product Slides

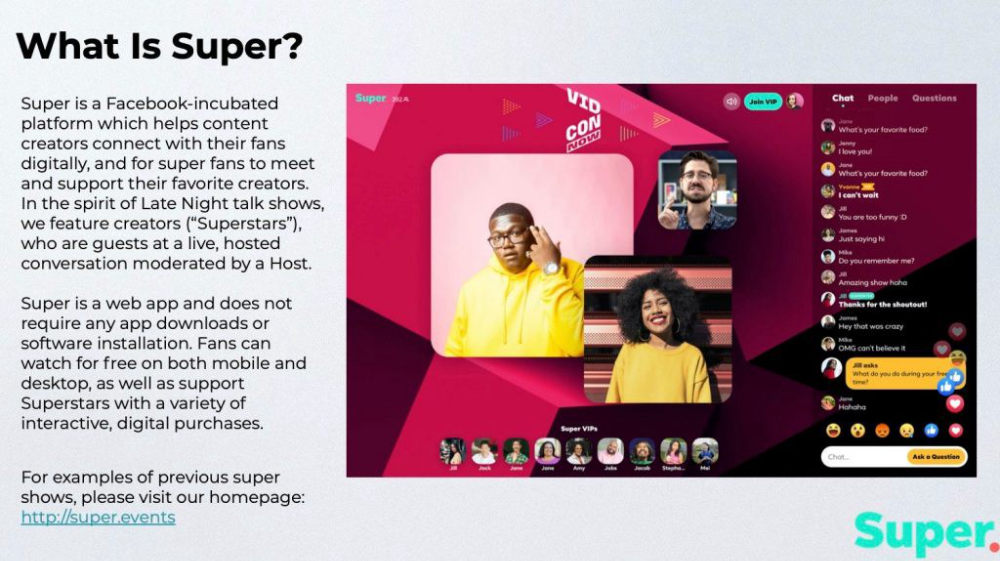

The pitch deck begins with Super's mission:

Super is a Facebook-incubated platform which helps content creators connect with their fans digitally, and for super fans to meet and support their favorite creators. In the spirit of Late Night talk shows, we feature creators (“Superstars”), who are guests at a live, hosted conversation moderated by a Host.

This slide (and most of the deck) is text-heavy, with few icons, bullets, or illustrations to break up the content. The fact that Super is a web app (requiring no download or installation) could be presented as a callout rather than in paragraph form.

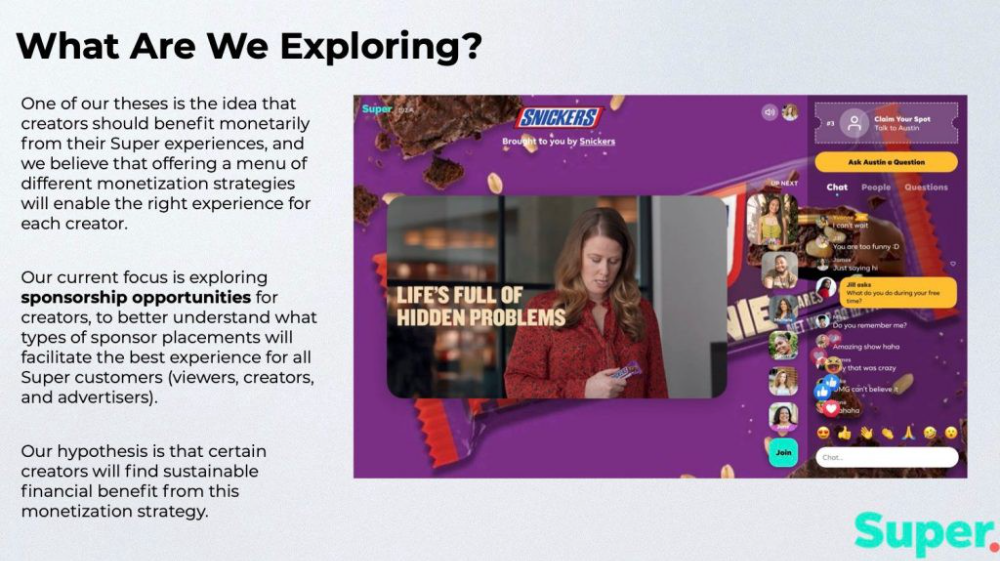

Meta's Super platform focuses on brand sponsorships and native placements, as shown in the slide above.

One of our theses is the idea that creators should benefit monetarily from their Super experiences, and we believe that offering a menu of different monetization strategies will enable the right experience for each creator. Our current focus is exploring sponsorship opportunities for creators, to better understand what types of sponsor placements will facilitate the best experience for all Super customers (viewers, creators, and advertisers).

Colorful mockups help bring Meta's vision for Super to life.

2. Feature Slides

Super's pitch deck focuses on the platform's features. The deck covers pre-show, pre-roll, and post-event for a Sponsored Experience.

Pre-show: active 30 minutes before the show's start

Pre-roll: Play a 15-minute commercial for the sponsor before the event (auto-plays once)

Meet and Greet: This event can be branded, such as Meet & Greet presented by [Snickers]

Super Selfies: Creators and fans get a digital souvenir to post on social media.

Post-Event: Possibility to draw viewers' attention to sponsored content/links during the after-show

Almost every screen displays the Sponsor logo, link, and/or branded background. Viewers can watch sponsor video while waiting for the event to start.

3. Business Model Slide

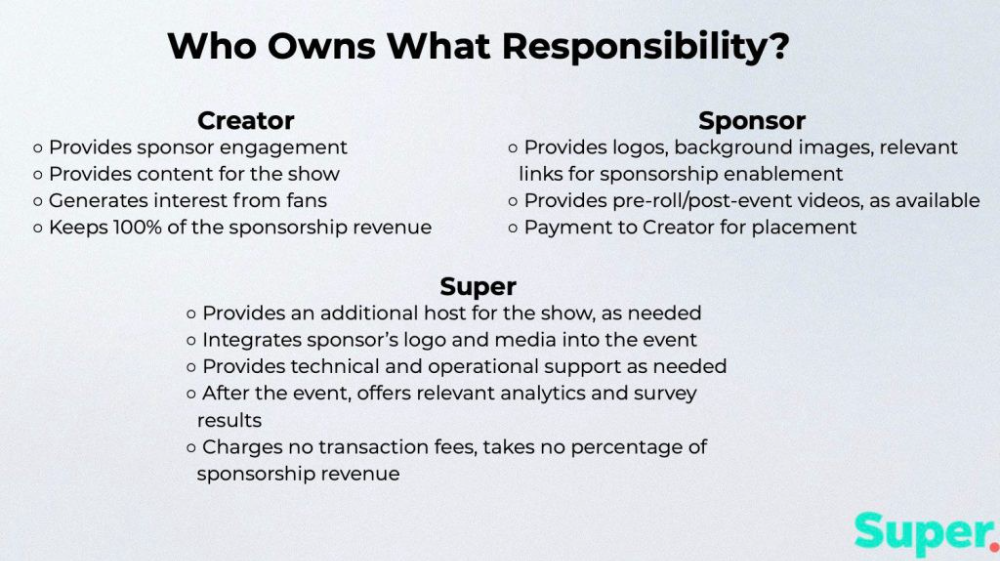

Meta's presentation for Super is incomplete without numbers. Super's first slide outlines the creator, sponsor, and Super's obligations. Super does not charge creators any fees or commissions on sponsorship earnings.

How to make a great pitch deck

We hope you can use the Super pitch deck to improve your business. Bestpitchdeck.com/super-meta is a bookmarkable link.

You can also use one of our expert-designed templates to generate a pitch deck.

Our team has helped close $100M+ in agreements and funding for premier companies and VC firms. Use our presentation templates, one-pagers, or financial models to launch your pitch.

Every pitch must be audience-specific. Our team has prepared pitch decks for various sectors and fundraising phases.

Pitch Deck Software: VIP.graphics produced a popular SaaS & Software Pitch Deck based on decks that closed millions in transactions & investments for orgs of all sizes, from high-growth startups to Fortune 100 enterprises. This easy-to-customize PowerPoint template includes ready-made features and key slides for your software firm.

Accelerator Pitch Deck: The Accelerator Pitch Deck template is for early-stage founders seeking funding from pitch contests, accelerators, incubators, angels, or VC companies. Winning a pitch contest or getting into a top accelerator demands a strategic investor pitch.

Pitch Deck Template Series: A startup and founder pitch deck template with workable, smart slides. This pitch deck template is for companies, entrepreneurs, and founders raising seed or Series A financing.

M&A Pitch Deck: Perfect Pitch Deck is a template for later-stage enterprises engaging in more sophisticated conversations like M&A, late-stage investment (Series C+), or partnerships & funding. Our team prepared this presentation to help creators confidently pitch to investment banks, PE firms, and hedge funds (and vice versa).

Browse our growing variety of industry-specific pitch decks.

Aldric Chen

3 years ago

I Tried Jack Dorsey's Meeting Best Practice. It Performs Exceptionally Well in Consulting Engagements.

Yes, client meetings are difficult. Especially when I'm alone.

Clients must tell us their problems so we can help.

In-meeting challenges contribute nothing to our work. Consider this:

Clients are unprepared.

Clients are distracted.

Clients are confused.

Introducing Jack Dorsey's Google Doc approach

I endorse his approach to meetings.

It isn't about Google Docs itself. It's about how Jack uses it for meetings.

This is what his meetings look like.

Prior to the meeting, the Chair creates the agenda, structure, and information in a Google Doc.

Participants in the meeting have 5-10 minutes to read the Google Doc.

They have 5-10 minutes to type their comments on the document.

In-depth discussion begins.

There is elegance in simplicity. Here's why Jack's approach is fantastic.

Unprepared clients are given time to read.

During the meeting, they think and work on it.

They can see real-time remarks from others.

Discussion ensues.

Three months ago, I fell for this strategy. After trying it with a client, I got good results.

I ran controlled experiments in a few client workshops.

Context matters.

I am sure Jack Dorsey’s method works well in meetings. What about client workshops?

So, I tested it in an Enterprise of the Future workshop with a consulting client.

I sent multiple emails to client stakeholders describing the new approach.

No PowerPoints that day. I spent the night setting up the Google Doc with conversation topics, critical thinking questions, and a Before and After section.

The client was shocked. The first surprise was that a Google Doc was projected. The second surprise came as verbal feedback.

“No pre-meeting materials?”

“Don’t worry. I know you are not reading it before our meeting, anyway.”

We laughed. The experiment started.

Observations throughout a 90-minute engagement workshop from beginning to end

For 10 minutes, the workshop was silent.

People read the Google Doc. For some, the silence was unnerving.

“Are you not going to present anything to us?”

I said everything was in the Google Doc. I asked them to read, comment, and add relevant paragraphs.

As they unlocked their laptops, they were annoyed.

Ten client stakeholders were typing in the Google Doc. My laptop displayed comment bubbles, red lines, new paragraphs, and strikethroughs.

The first 10 minutes were productive. Everyone had seen and contributed to the document.

I was silent.

The move to a classical workshop was smooth. I didn't stimulate dialogue. They did.

Stephanie asked Joe why a blended workforce hinders company productivity. She questioned his comments and additional paragraphs.

That is when a light bulb went on in my head. Yes, you want to speak to the right person to resolve issues!

That was not the only discussion. Others discussed their comment bubbles with neighbors. Debate circles sprang up one after the other.

The best part? I asked everyone to add their post-discussion thoughts to the Google Doc.

After the workshop, I have:

An agreement-based working document

Post-discussion minutes that are ready for publication

A record of the discussion points that were brought up, argued, and evaluated critically

It showed me how stakeholders viewed their Enterprise of the Future. It allowed me to align with them.

Final Notes

Client meetings are hit-or-miss. I know that.

Jack Dorsey's meeting strategy works for consulting. It promotes session alignment.

It relieves clients of preparation.

I get the necessary information to advance this consulting engagement.

It is brilliant.