More on Leadership

Alexander Nguyen

3 years ago

A Comparison of Amazon, Microsoft, and Google's Compensation

Learn or earn

I started working as a software engineer in 2020. My base salary has progressed as follows:

Amazon (2020): $112,000

Microsoft (2021): $123,000

Google (2022): $169,000

I didn't major in math, but those jumps look like more than a 7% raise. Here's a deeper look at the three.

The Three Categories of Compensation

Most software engineering compensation packages at tech companies follow this format.

Base Salary

Base salary is your fixed pre-tax income. Most companies offer one, paid biweekly, twice monthly, or monthly.

Sign-On Bonus

Sign-on bonuses are one-time payments to new hires. Companies offer them as an incentive to switch jobs. If you leave early, you must pay back either the whole amount or a pro-rated share.

Equity

Equity is complex and deserves its own post. A company promises to give you a certain amount of company stock, but when you actually receive it depends on your offer. A common schedule is 25% per year for 4 years, at which point the grant is fully paid out.

If a company grants you $100,000 in stock and distributes 25% every year for 4 years, expect $25,000 worth of company stock in your brokerage account on your 1-year work anniversary.

Performance Bonus

Tech offers may include yearly performance bonuses. The payout depends on your performance and the company's budget. I've only seen 0-20%.

Engineers' overall compensation usually includes:

Base Salary + Sign-On + (Total Equity)/4 + Average Performance Bonus
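The formula above can be sketched in a few lines of Python. This is only an illustration: the `Offer` class, the function name, and the example numbers are hypothetical, and treating the performance bonus as a fraction of base salary is an assumption, not something the offers spell out here.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    base_salary: float       # annual base pay, pre-tax
    sign_on_bonus: float     # one-time sign-on bonus
    total_equity: float      # value of the full 4-year stock grant
    bonus_rate: float = 0.0  # average performance bonus as a fraction of base (assumption)


def first_year_total_comp(offer: Offer) -> float:
    """Base Salary + Sign-On + (Total Equity)/4 + Average Performance Bonus."""
    equity_per_year = offer.total_equity / 4
    performance_bonus = offer.base_salary * offer.bonus_rate
    return offer.base_salary + offer.sign_on_bonus + equity_per_year + performance_bonus


# Hypothetical offer, not one of the three offers described in this post.
example = Offer(base_salary=120_000, sign_on_bonus=10_000,
                total_equity=100_000, bonus_rate=0.10)
print(first_year_total_comp(example))  # 167000.0
```

Dividing equity by 4 assumes an even vesting schedule, so the estimate will be off in any single year for companies that vest unevenly.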

Amazon: (TC: 150k)

Base Pay System

Amazon pays Seattle employees monthly, on the first working day of the month. Monthly pay may save on processing and pay statements, but I'd rather have my money sooner.

The company upped its base pay cap from $160,000 to $350,000 to compete with other tech companies.

Performance Bonus

Amazon has no performance bonus, so you can work as little or as much as you like and get paid the same. Amazon is savvy to avoid promising benefits it can't deliver.

Sign-On Bonus

Amazon splits its sign-on bonus across two years. For example, you might receive $20,000 in the first year and $15,000 in the second. It's probably to make up for the company's strange equity structure.

If you leave during the first year, you'll owe the entire first-year bonus back, and if you leave during the second year you'll owe a prorated amount of the second-year bonus.

Equity

Most organizations prefer a 25%, 25%, 25%, 25% equity structure. Amazon takes a different approach with end-heavy equity:

5% after the first year

15% after the second year

20% every six months after that

My guess is that it's structured this way to keep staff longer.

Microsoft (TC: 185k)

Base Pay System

Microsoft paid biweekly.

Performance Bonus

My offer letter listed a 0%-20% performance bonus. Most people would be satisfied with a 10% bonus at year's end.

Misleading press reports that the bonus budget has been doubled can upset employees, because they won't actually earn double their expected bonus. The 2022 average was still barely 10%.

Sign-On Bonus

Microsoft's sign-on bonus is a one-time payout. The contract can require two years of employment, and you have to negotiate to get that down to one year. The payback is pro-rated, so that's fair.

Equity

Microsoft is one of those companies with a standard 25%-per-year equity structure, unless you're a new graduate.

In that case it'll be

25% after six months

25% each year following that

New grads fully vest in 3.5 years, not 4. I'm guessing it's to keep new grads around longer.

Google (TC: 300k)

Base Pay Structure

Google pays biweekly.

Performance Bonus

Google's offer letter specifies a 15% target bonus. It's great that there's no cap, but I could still get 0%. The target is a little more than Microsoft's 10% and a lot more than Amazon's 0%.

Sign-On Bonus

Google gave me a sign-on bonus with a one-year commitment. Once that year is up, I can leave without any extra obligations.

It's not as generous as Amazon's sign-on bonuses, but the rest of the package might compensate.

Equity

We covered Amazon's tail-heavy compensation structure, so Google's front-heavy equity structure may surprise you.

Annual structure breakdown

33% Year 1

33% Year 2

22% Year 3

12% Year 4

The goal is to get engineers to join Google and keep them there.
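To see how much the vesting schedule matters, here is a small sketch comparing cumulative vested stock for the same grant under an even schedule, Amazon's, and Google's. The grant size is hypothetical, the stock price is assumed to stay flat, and Amazon's schedule is read as 5% in year 1, 15% in year 2, and 20% every six months (40% per year) in years 3 and 4.

```python
# Yearly vesting percentages as described in this post.
SCHEDULES = {
    "Even (25/25/25/25)": [25, 25, 25, 25],
    "Amazon": [5, 15, 40, 40],  # assumes 20% every six months in years 3 and 4
    "Google": [33, 33, 22, 12],
}


def cumulative_vested(grant: float, yearly_percents: list[int]) -> list[float]:
    """Return the cumulative dollar value vested at the end of each year."""
    vested = []
    total_percent = 0
    for percent in yearly_percents:
        total_percent += percent
        vested.append(grant * total_percent / 100)
    return vested


GRANT = 100_000  # hypothetical grant size; ignores any change in stock price

for name, schedule in SCHEDULES.items():
    print(name, cumulative_vested(GRANT, schedule))
# Even (25/25/25/25) [25000.0, 50000.0, 75000.0, 100000.0]
# Amazon [5000.0, 20000.0, 60000.0, 100000.0]
# Google [33000.0, 66000.0, 88000.0, 100000.0]
```

After two years, the same grant has paid out $20,000 on the Amazon schedule and $66,000 on the Google schedule, which is why leaving early costs so much more under an end-heavy structure.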

Final Thoughts

This post hopefully helped you understand the 3 firms' compensation arrangements.

There's always more to discuss, such as refreshers, 401k benefits, and business discounts, but I hope this shows a distinction between these 3 firms.

Christian Soschner

3 years ago

Steve Jobs' Secrets Revealed

From 1984 until 2011, he presented Apple using the same template.

What is a founder CEO's most crucial skill?

Presentation, communication, and sales

As a Business Angel Investor, I saw many pitch presentations and met with investors one-on-one to promote my companies.

There is a persistent misconception that “investors have to invest,” so there is no need to care about the presentation.

It's false. Nobody must invest. Many investors believe that entrepreneurs must convince them to invest in their business.

Sometimes — like in 2018–2022 — too much money enters the market, and everyone makes good money.

Do you recall the Buy Now, Pay Later movement? It was an amazing narrative with no return potential. Only buyers who couldn't get financing elsewhere shopped at these companies.

Even so, Klarna's flawed business concept attracted high valuations.

Investors become more cautious when the economy falters. 2022 sees rising inflation, interest rates, wars, and civil instability. It's like the apocalypse's four horsemen have arrived.

Storytelling is important in rough economies.

When investors draw back, how can entrepreneurs stand out?

In Q2/2022, every study I've read said:

Investors cease investing

Deals are down in almost all IT industries from previous quarters.

What do founders need to do?

Differentiate yourself.

Storytelling talents help.

The Steve Jobs Way

Every time I watch a Steve Jobs presentation, I'm enthralled.

I'm a techie. Everything technical interests me. But, I skim most presentations.

What's Steve Jobs's secret?

Steve Jobs co-founded Apple in 1976 and helped make it a profitable software and hardware company in the 1980s. But Macintosh products couldn't beat IBM's, and that failure got him pushed out in 1985.

Before rejoining Apple in 1997, Steve Jobs founded NeXT Inc. and bought the company that became Pixar.

From then on, Apple grew into America's most valuable company.

Steve Jobs understood people's needs. He said:

“People don’t know what they want until you show it to them. That’s why I never rely on market research. Our task is to read things that are not yet on the page.”

In his opinion, people talk about problems. A lot. Entrepreneurs must learn what the population's pressing problems are and create a solution.

Steve Jobs showed people what they needed before they realized it.

I'll explain:

Present a Big Vision

Steve Jobs starts every presentation by describing his long-term goals for Apple.

The 1984 Macintosh presentation set up a David vs. Goliath story. IBM was cast as the villain of a George Orwell-style dystopia. It was 1984, after all.

Apple would save the world, like the Jedi.

Why do customers and investors like Big Vision?

People want a wider perspective, I think. Humans love improving the planet.

Apple users often cite emotional reasons for buying the brand.

Revolutionizing several industries with breakthrough inventions

Establish Authority

Everyone knows Apple in 2022. It's hard to find anyone, anywhere in the world, who would confuse Apple with an apple.

Apple wasn't as famous as it is today until Steve Jobs left in 2011.

Most entrepreneurs lack experience. They may market their company or items to folks who haven't heard of it.

Steve Jobs presented the company's historical accomplishments to overcome opposition.

In his presentation of the first iPhone, he talked about the Apple Macintosh, which altered the computing sector, and the iPod, which changed the music industry.

People who have never heard of Apple feel like they're seeing a winner. It raises expectations that the new product will be game-changing and must-have.

The Big Reveal

A pitch or product presentation always has something new.

Steve Jobs doesn't only demonstrate the product. I don't think he'd skip the major point of a company presentation.

He consistently discusses the solutions currently on the market, their faults, and what a better solution for consumers would look like.

That better solution does not exist yet.

It's a multi-faceted play:

It's comparing the new product to something familiar. This makes novelty and the product more relatable.

Describe a desirable solution.

He's funny. He demonstrated an iPod with an 80s phone dial in his iPhone presentation.

Then he reveals the new product. In 1984, the Macintosh introduced itself.

Show the benefits

He outlines what Apple is doing differently after demonstrating the product.

How do you distinguish from others? The Big Breakthrough Presentation.

A few hundred slides might list all benefits.

Everyone would fall asleep. Have you ever sat through presentations like that?

When the brain is overloaded with information, the limbic system switches to other tasks, like lunch planning.

What should a speaker do? There's a classic proverb:

“Tell me and I forget, teach me and I may remember, involve me and I learn” (— Not Benjamin Franklin).

Steve Jobs showcased the product live.

Again, using ordinary scenarios to highlight the product's benefits makes it relatable.

The 2010 iPad Presentation uses this technique.

Invite the Team and Let Them Run the Presentation

CEOs spend most time outside the organization. Many companies elect to have only one presenter.

It sends the incorrect message to investors. Product presentations should always include the whole team.

Let me explain why.

Companies needing investment money frequently have shaky business strategies or no product-market fit or robust corporate structure.

Investors solely bet on a team's ability to implement ideas and make a profit.

Early team involvement helps investors understand the company's drivers. Travel costs are worthwhile.

But why for product presentations?

Presenters of varied ages, genders, social backgrounds, and skillsets are relatable. CEOs want relatable products.

Some customers may not believe a white man's message. A black woman's message may be more accepted.

Make the story relatable when you have the best product that solves people's concerns.

Best example: 1984 Macintosh presentation with development team panel.

What is the largest error people make when companies fail?

Saving money on the corporate and product presentation.

Invite your team to five partner meetings when five investors are shortlisted.

Rehearse the presentation till it's natural. Let the team speak.

Successful presentations require structure, rehearsal, and a team. Steve Jobs nailed it.

Sammy Abdullah

3 years ago

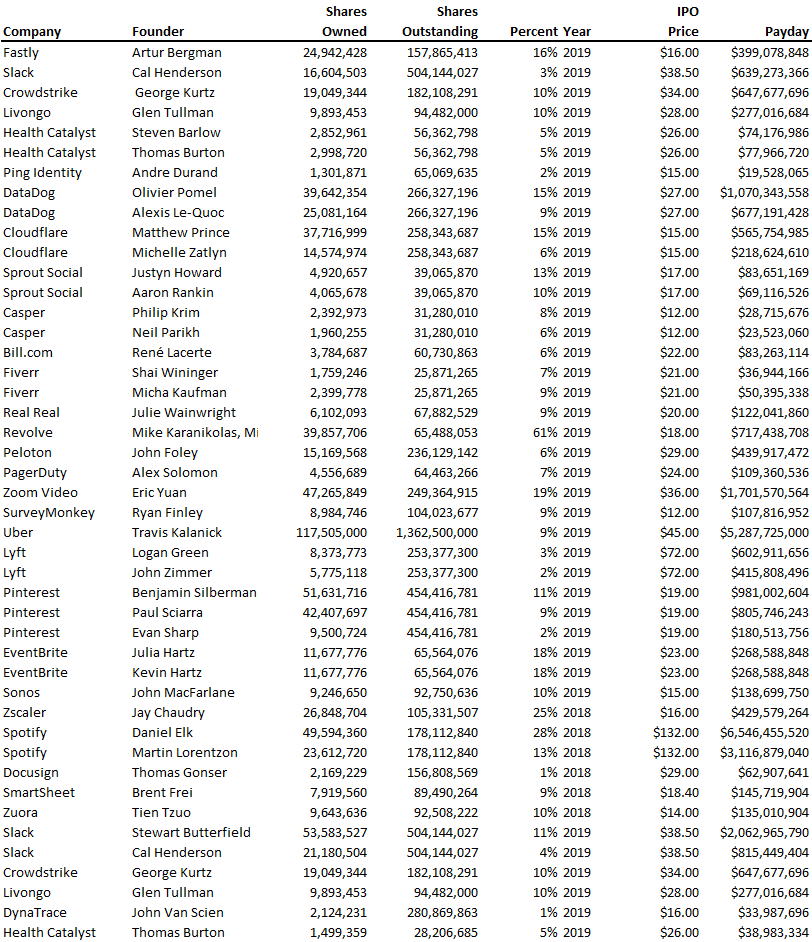

Payouts to founders at IPO

How much do startup founders make after an IPO? We looked at 2018's major tech IPOs. Paydays aren't what founders took home at the IPO (shares are normally locked up for 6 months), but what they were worth at the IPO price on the day the firm went public. It's not cash, but it's nice. Here's the data.

Several points are noteworthy.

Huge payoffs. Median and average payouts were $399m and $918m. Average and median ownership were 9% and 12%.

Coinbase, Uber, UiPath. The founders of Uber, Zoom, Spotify, UiPath, and Coinbase made billions. Zoom's founder owned 19%, and Spotify's founders owned 28% and 13%. Brian Armstrong controlled 20% of Coinbase at IPO and was worth $15bn. Preserving as much equity as possible, by staying cash-efficient or raising at high valuations, also helps.

The smallest was Ping. Andre Durand owned 2% and was worth $20m at IPO. That's far less than the billion-dollar paydays, but still good.

IPOs can be lucrative, as you can see. Preserving equity could be the difference between a $20m and a $15bn (Coinbase) payday.
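The arithmetic behind these paydays is simply ownership times company value. As a rough sketch using the rounded figures quoted above (the function names are illustrative, and the implied valuations are back-of-the-envelope numbers, not reported IPO valuations):

```python
def implied_valuation(stake_value: float, ownership_percent: float) -> float:
    """Company value implied by a founder's stake value and ownership percentage."""
    return stake_value * 100 / ownership_percent


def stake_value(valuation: float, ownership_percent: float) -> float:
    """Value of a stake at a given company valuation."""
    return valuation * ownership_percent / 100


# Rounded figures from the post: Ping (2%, $20m) and Coinbase (20%, $15bn).
ping = implied_valuation(20_000_000, 2)
coinbase = implied_valuation(15_000_000_000, 20)
print(ping, coinbase)  # 1000000000.0 75000000000.0

# The same implied Ping valuation with a Coinbase-sized 20% stake.
print(stake_value(ping, 20))  # 200000000.0
```

On these numbers, a founder who had kept 20% of a company Ping's size would have been worth ten times as much at IPO, which is the point about preserving equity.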

You might also like

1eth1da

3 years ago

6 Rules to build a successful NFT Community in 2022

Too much NFT, Discord, and shitposting.

How do you choose?

How do you recruit more members to join your NFT project?

In 2021, a successful NFT project required:

Monkey/ape artwork

Bot-filled Twitter and Discord

Roadmap overpromise

Goal was quick cash.

2022 and the years after will change that.

These are 6 Rules for a Strong NFT Community in 2022:

THINK LONG TERM

This relates to roadmap planning. Hype and dumb luck may drive NFT projects (ahem, goblins) but rarely will your project soar.

Instead, consider sustainability.

Plan your roadmap based on your team's abilities.

Do what you're already doing, but with NFTs, make it bigger and better.

You shouldn't copy a project's roadmap just because it was profitable.

This will lead to over-promising, team burnout, and a rug-pull NFT project.

OFFER VALUE

Building a great community starts with giving.

Why are musicians popular?

Because they offer entertainment for everyone, a random person becomes a fan, and more fans become a cult.

That's how you should approach your community.

TEAM UP

A great team helps.

An NFT project could have just 2 or 3 people.

Credibility trumps team size.

Make sure your team can answer community questions, resolve issues, and constantly attend to them.

Don't overwork and burn out.

Your community will be able to recognize that you are trying too hard and give up on the project.

BUILD A GREAT PRODUCT

Bored Ape Yacht Club altered the NFT space.

Cryptopunks transformed NFTs.

Many others did, including Okay Bears.

What made them that way?

Because they answered a key question.

What is my NFT supposed to be?

Before planning art, this question must be answered.

NFTs can't be just jpegs.

What does it represent?

Is it a Metaverse-ready project?

What blockchain are you going to be using and why?

Set some ground rules for yourself. This helps your project's direction.

These questions will help you and your team set a direction for blockchain, NFT, and Web3 technology.

EDUCATE ON WEB3

The more the team learns about Web3 technology, the more they can offer their community.

Think tokens, metaverse, cross-chain interoperability and more.

BUILD A GREAT COMMUNITY

Several projects mistreat their communities.

They treat their community like "customers" and try to sell them NFTs.

Whitelists and giveaways aren't your only community-building options.

Think bigger.

Consider them family and friends, not wallets.

Consider them fans.

These are some tips to start your NFT project.

Nick Nolan

3 years ago

In five years, starting a business won't be hip.

People are slowly recognizing entrepreneurship's downside.

Growing up, entrepreneurship wasn't common. My high school class of 2012 had no entrepreneurs.

Businesses were different.

They had staff and a lengthy history of achievement.

I never wanted a business. It felt unattainable. My friends didn't care.

Weird.

People desired degrees to attain good jobs at big companies.

When I graduated high school, people would:

Attend college (9 out of 10)

Earn minimum wage working in a restaurant or retail establishment (7%)

Or join the military (3%)

Later, entrepreneurship became a thing.

2014-ish

I was in the military and most of my high school friends were in college, so I didn't hear anything.

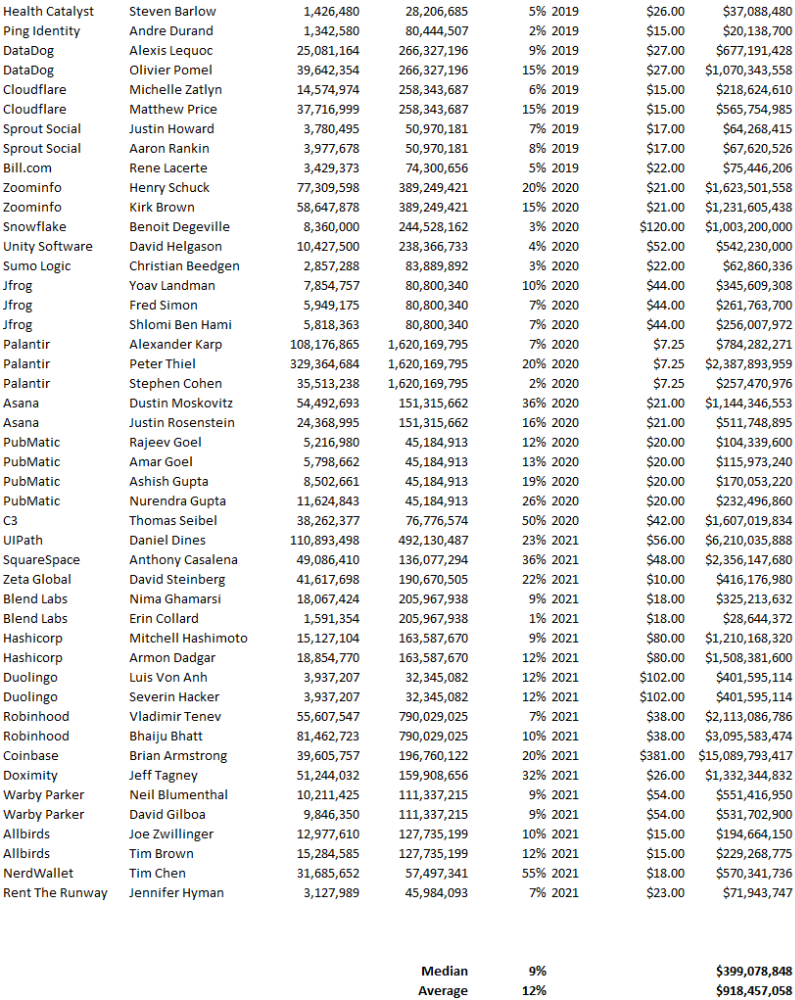

Entrepreneurship soared in 2015, according to Google Trends.

Then more individuals were interested. Entrepreneurship went from unusual to cool.

In 2015, it was easier than ever to build a website, run Facebook advertisements, and achieve organic social media reach.

There were several online business tools.

You didn't need to spend years or money figuring it out. Most entry barriers were gone.

Everyone wanted a side gig to escape the 9-5.

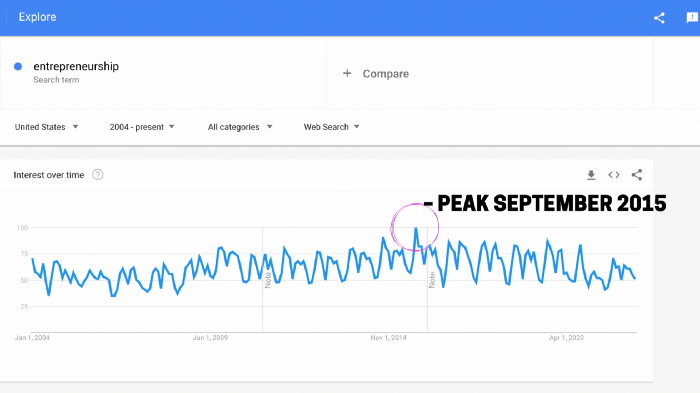

New business applications have increased over the past 10 years.

2011-2014 trend continues.

2015 added 150,000 applications, 2016 added 200,000, and 2017 added 300,000.

The graph makes it look small, but that's a considerable annual spike with no sign of stopping.

By 2021, new business applications had doubled.

Entrepreneurship will return to its early 2010s level.

I think we'll go backward in 5 years.

Entrepreneurship is half as popular as it was in 2015.

In the late 2020s and 30s, entrepreneurship will again be obscure.

Entrepreneurship's decade-long splendor is fading. People will stop trying to escape the 9-5 and will launch fewer companies.

That’s not a bad thing.

I think people have a rose-colored vision of entrepreneurship. It's fashionable. People feel that they're missing out if they're not entrepreneurial.

Reality is showing up.

People say on social media, "I knew starting a business would be hard, but not this hard."

More negative posts on entrepreneurship:

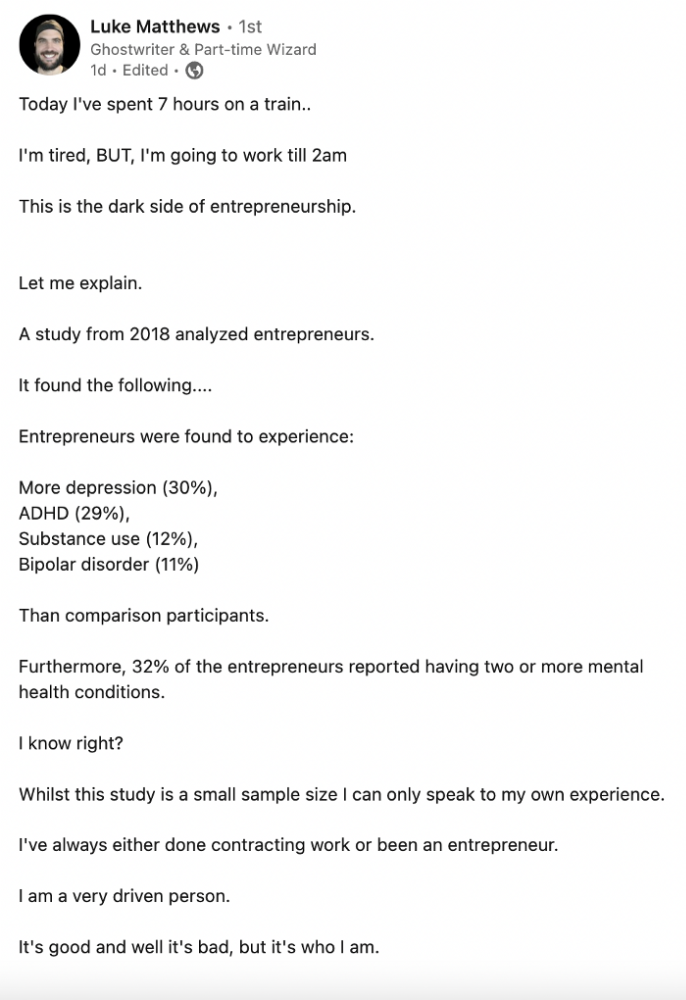

Luke adds:

Is being an entrepreneur ‘healthy’? I don’t really think so. Many like Gary V, are not role models for a well-balanced life. Despite what feel-good LinkedIn tells you the odds are against you as an entrepreneur. You have to work your face off. It’s a tough but rewarding lifestyle. So maybe let’s stop glorifying it because it takes a lot of (bleepin) work to survive a pandemic, mental health battles, and a competitive market.

Entrepreneurship is no longer a pipe dream.

It’s hard.

I went full-time in March 2020. I was done by April 2021. I had lined up a good-paying job with perks.

When that fell through (on my start date), I had to continue my entrepreneurial path. I needed money by May 1 to pay rent.

Entrepreneurship isn't as great as many think.

Entrepreneurship is a serious business.

If you have a 9-5, the grass isn't greener here. Most people aren't telling the whole story when they post on social media or quote successful entrepreneurs.

People prefer sharing their victories to sharing their defeats.

Is this a bad thing?

I don’t think so.

Over the previous decade, entrepreneurship went from impossible to the finest thing ever.

It peaked in 2020-21 and is returning to reality.

Startups aren't for everyone.

If you like your job, don't quit.

Entrepreneurship won't amaze people if you quit your job.

It's irrelevant.

You're doomed.

And you'll probably make less money.

If you hate your job, quit. Change jobs and bosses. Changing jobs could get you higher pay or better perks.

When you go solo, your paycheck and perks vanish. Did I mention you'll fail, sleep less, and stress more?

Nobody will stop you from pursuing entrepreneurship. You'll face several challenges.

Possibly.

Entrepreneurship may be romanticized for years.

Based on what I see from entrepreneurs on social media and trends, entrepreneurship is challenging and few will succeed.

John Rampton

3 years ago

Ideas for Samples of Retirement Letters

Ready to quit full-time? No worries.

Baby Boomer retirement has accelerated since COVID-19 began. By 2020, 29 million boomers had retired, over 3 million more than in 2019. 75 million Baby Boomers will retire by 2030.

First, quit your work to enjoy retirement. Leave a professional legacy. Your retirement will start well. It all starts with a retirement letter.

Retirement Letter

Retirement letters are formal resignation letters. Unlike other resignation letters, they don't tell your employer you're leaving for another job. Instead, you're leaving the workforce.

Since you're not departing over grievances or for a better position or higher income, you may usually terminate the relationship amicably. Consulting opportunities are possible.

Thank your employer for their support and give them transition information.

Resignation letters aren't merely a formality. They start the process that settles your wages, insurance, and retirement benefits.

Retirement letters often accompany verbal notices to managers. Schedule a meeting before submitting your retirement letter to discuss your plans. The letter will be stored alongside your start date, salary, and benefits in your employee file.

Retirement is typically well-planned. Employers want 6-12 months' notice.

Summary

Guidelines for Giving Retirement Notice

Components of a Successful Retirement Letter

Template for Retirement Letter

Ideas for Samples of Retirement Letters

First Example of Retirement Letter

Second Example of Retirement Letter

Third Example of Retirement Letter

Fourth Example of Retirement Letter

Fifth Example of Retirement Letter

Sixth Example of Retirement Letter

Seventh Example of Retirement Letter

Eighth Example of Retirement Letter

Ninth Example of Retirement Letter

Tenth Example of Retirement Letter

Frequently Asked Questions

1. What is a letter of retirement?

2. Why should you include a letter of retirement?

3. What information ought to be in your retirement letter?

4. Must I provide notice?

5. What is the ideal retirement age?

Guidelines for Giving Retirement Notice

While starting a new phase, you're also leaving a job you were qualified for. You have years of experience. So, it may not be easy to fill a retirement-related vacancy.

Talk to your boss in person before sending a letter. Notice is always appreciated. Properly announcing your retirement helps you and your organization transition.

How to announce retirement:

Learn about the retirement perks and policies offered by the company. The first step in figuring out whether you're eligible for retirement benefits is to research your company's retirement policy.

Don't depart without providing adequate notice. You should give the business plenty of time to replace you if you want to retire in a few months.

Help the transition by offering aid. You could be a useful resource if your replacement needs training.

Contact the appropriate parties. The original copy should go to your boss. Give a copy to HR because they will manage your 401(k), pension, and health insurance.

Investigate the option of working as a consultant or part-time. If you desire, you can continue doing some limited work for the business.

Be nice to others. Describe your achievements and appreciation. Additionally, express your gratitude for the chance to work with such excellent coworkers.

Make a plan for your future move. Simply updating your employer on your goals will help you maintain a good working relationship.

Use a formal letter or email to formalize your plans. The initial step is to speak with your supervisor and HR in person, but you must also give written notice.

Components of a Successful Retirement Letter

To write a good retirement letter, keep in mind the following:

A formal salutation. Here, the voice should be deliberate, succinct, and authoritative.

Be specific about your intentions. The key idea of your retirement letter is resignation. Your decision to depart at this time should be reflected in your letter. Remember that your intention must be clear-cut.

Your deadline. This information must be in resignation letters. Laws and corporate policies may both stipulate a minimum amount of notice.

A kind voice. Your retirement letter shouldn't contain any resentments, insults, or other unpleasantness. Your letter should be a model of professionalism and grace. A straightforward thank you is a terrific approach to accomplish that.

Your ultimate goal. Chaos may start to happen as soon as you turn in your resignation letter. Your position will need to be filled. Additionally, you will have to perform your obligations up until a successor is found. Your availability during the interim period should be stated in your resignation letter.

Give us a way to reach you. Even if you aren't consulting, your company will probably get in touch with you at some point. They might send you tax documents and details on perks. By giving your contact information, you can make this process easier.

Template for Retirement Letter

Your name

Title you held

Address

Supervisor's name

Supervisor’s position

Company name

HQ address

Date

[SUPERVISOR],

1.

Inform that you're retiring. Include your last day worked.

2.

Thank your employer. Mention what you're thankful for. Describe your accomplishments and successes.

3.

Offer to help with the transition. Outline your retirement plans. Mention your interest in consulting.

Sincerely,

[Signature]

First and last name

Phone number

Personal Email

Ideas for Samples of Retirement Letters

First Example of Retirement Letter

Martin D. Carey

123 Fleming St

Bloomfield, New Jersey 07003

(555) 555-1234

June 6th, 2022

Willie E. Coyote

President

Acme Co

321 Anvil Ave

Fairfield, New Jersey 07004

Dear Mr. Coyote,

This letter notifies Acme Co. of my retirement on August 31, 2022.

There has been no other organization that has given me that sense of belonging and purpose.

My fifteen years at the helm of the Structural Design Division have given me a strong sense of purpose. I’ve been fortunate to have your support, and I’ll always be grateful for the opportunity you offered me.

I had a difficult time making this decision. As a result of finding a small property in Arizona where we will be able to spend our remaining days together, my wife and I have decided to officially retire.

In spite of my regret at being unable to contribute to the firm we’ve built, I believe it is wise to move on.

My heart will always belong to Acme Co. Thank you for the opportunity and best of luck in the years to come.

Sincerely,

Martin D. Carey

Second Example of Retirement Letter

Gustavo Fring

Los Pollos Hermanos

12000–12100 Coors Rd SW,

Albuquerque, New Mexico 87045

Dear Mr. Fring,

I write this letter to announce my formal retirement from Los Pollos Hermanos as manager, effective October 15.

As an employee at Los Pollos Hermanos, I appreciate all the great opportunities you have given me. It has been a pleasure to work with and learn from my colleagues for the past 10 years, and I am looking forward to my next challenge.

If there is anything I can do to assist during this time, please let me know.

Sincerely,

Linda T. Crespo

Third Example of Retirement Letter

William M. Arviso

4387 Parkview Drive

Tustin, CA 92680

May 2, 2023

Tony Stark

Owner

Stark Industries

200 Industrial Avenue

Long Beach, CA 90803

Dear Tony:

I’m writing to inform you that my final day of work at Stark Industries will be May 14, 2023. When that time comes, I intend to retire.

As I embark on this new chapter in my life, I would like to thank you and the entire Stark Industries team for providing me with so many opportunities. You have all been a pleasure to work with and I will miss you all when I retire.

I am glad to assist you with the transition in any way I can to ensure your new hire has a seamless experience. All ongoing projects will be completed until my retirement date, and all key information will be handed over to the team.

Once again, thank you for the opportunity to be part of the Stark Industries team. All the best to you and the team in the days to come.

Please do not hesitate to contact me if you require any additional information. In order to finalize my retirement plans, I’ll meet with HR and can provide any details that may be necessary.

Sincerely,

(Signature)

William M. Arviso

Fourth Example of Retirement Letter

Garcia, Barbara

First Street, 5432

New York City, NY 10001

(1234) (555) 123–1234

1 October 2022

Gunther

Owner

Central Perk

199 Lafayette St.

New York City, NY 10001

Mr. Gunther,

The day has finally arrived. As I never imagined, I will be formally retiring from Central Perk on November 1st, 2022.

Considering how satisfied I am with my current position, this may surprise you. It would be best if I retired now since my health has deteriorated, so I think this is a good time to do so.

There is no doubt that the past two decades have been wonderful. Over the years, I have seen a small coffee shop grow into one of the city’s top destinations.

It will be hard for me to leave this firm without wondering what more success we could have achieved. But I’m confident that you and the rest of the Central Perk team will achieve great things.

My family and I will never forget what you’ve done for us, and I am grateful for the chance you’ve given me. My house is always open to you.

Sincerely Yours

Garcia, Barbara

Fifth Example of Retirement Letter

Pat Williams

618 Spooky Place

Monstropolis, 23221

123–555–0031

pwilliams@email.com

Feb. 16, 2022

Mike Wazowski

Co-CEO

Monsters, Inc.

324 Scare Road

Monstropolis

Dear Mr. Wazowski,

As a formal notice of my upcoming retirement, I am submitting this letter. I will be leaving Monsters, Inc. on April 13.

These past 10 years as a marketing associate have provided me with many opportunities. Since we started our company a decade ago, we have seen the face of harnessing screams change dramatically into harnessing laughter. During my time working with this dynamic marketing team, I learned a lot about customer behavior and marketing strategies. Working closely with some of our long-standing clients, such as Boo, was a particular pleasure.

I would be happy to assist with the transition following my retirement. It would be my pleasure to assist in the hiring or training of my replacement. In order to spend more time with my family, I will also be able to offer part-time consulting services.

After I retire, I plan to cash out the eight unused vacation days I’ve accumulated and take my pension as a lump sum.

Thank you for the opportunity to work with Monsters, Inc. In the years to come, I wish you all the best!

Sincerely,

Pat Williams

Sixth Example of Retirement Letter

Dear Michael,

In my tenure at Dunder Mifflin Paper Company, I have given everything I had. It has been an honor to work here. But I have decided to retire from my position and move on to new challenges (mainly bears, beets, and Battlestar Galactica).

I appreciate the opportunity to work here and learn so much. I will always remember the good times and memories we shared during my time at this company. Wishing you all the best in the future.

Sincerely,

Dwight K. Schrute

Your signature

May 16

Seventh Example of Retirement Letter

Greetings, Bill

I am announcing my retirement from Initech, effective March 15, 2023.

Over the course of my career here, I’ve had the privilege of working with so many talented and inspiring people.

In 1999, when I began working as a customer service representative, we were a small organization located in a remote office park.

The fact that we now occupy a floor of the Main Street office building with over 150 employees continues to amaze me.

I am looking forward to spending more time with family and traveling the country in our RV, although I will be sad to leave.

Please let me know if there are any extra steps I can take to facilitate this transfer.

Sincerely,

Frankin, Renita

Eighth Example of Retirement Letter

Bruce,

Please accept my resignation from Wayne Enterprises as Marketing Communications Director. My last day will be August 1, 2022.

The decision to retire has been made after much deliberation. Now that I have worked in the field for forty years, I believe it is a good time to begin completing my bucket list.

It was not easy for me to decide to leave the company. Having worked at Wayne Enterprises has been rewarding both professionally and personally. There are still a lot of memories associated with my first day as a college intern.

My intention was not to remain with such an innovative company, as you know. I was able to see the big picture with your help, however. Today, we are a force that is recognized both nationally and internationally.

In addition to your guidance, the bold, visionary leadership of our company contributed to the growth of our company.

My departure from the company coincides with a particularly hectic time. Despite my best efforts, I am unable to postpone my exit.

My position would be well served by an internal solution. I have a more than qualified marketing manager in Caroline Crown. It would be a pleasure to speak with you about this.

In case I can be of assistance during the switchover, please let me know. You can reach me at (555)555–5555. As part of my responsibilities, I will make sure all work is completed to Wayne Enterprises’ stringent requirements. Having the opportunity to work with you has been a pleasure. I wish you continued success with your thriving business.

Sincerely,

Cash, Cole

Marketing/Communications

Ninth Example of Retirement Letter

Norman, Jamie

2366 Hanover Street

Whitestone, NY 11357

555–555–5555

15 October 2022

Mr. Lippman

Head of Pendant Publishing

600 Madison Ave.

New York, New York

Dear Mr. Lippman,

Please accept my resignation effective November 1, 2022.

Over the course of my ten years at Pendant Publishing, I’ve had a great deal of fun and I’m quite grateful for all the assistance I’ve received.

It was a pleasure to wake up and go to work every day because of our outstanding corporate culture and the opportunities for promotion and professional advancement available to me.

While I am excited about retiring, I am going to miss being part of our team. It’s my hope that I’ll be able to maintain the friendships I’ve formed here for a long time to come.

In case I can be of assistance prior to or following my departure, please let me know. If I can assist in any way to ensure a smooth transfer to my successor, I would be delighted to do so.

Sincerely,

Signed (hard copy letter)

Norman, Jamie

Tenth Example of Retirement Letter

17 January 2023

Greg S. Jackson

Cyberdyne Systems

18144 El Camino Real,

Sunnyvale, CA

Dear Mrs. Duncan,

I am writing to inform you that I will be resigning from Cyberdyne Systems as of March 1, 2023. I’m grateful to have had this opportunity, and it was a difficult decision to make.

Your coaching has greatly helped my development as a programmer and as a more seasoned member of the organization.

I have been proud of Cyberdyne Systems’ ethics and success throughout my 25 years at the company, during which I rose from mailroom clerk to head programmer.

The portfolios of our clients have always been handled with the greatest care by my colleagues. It is our employees and services that have made Cyberdyne Systems the success it is today.

During my tenure as head of my division, I’ve increased our overall productivity by 800 percent, and I expect that trend to continue after I retire.

Because replacing me may take some time, I would like to offer my assistance in any way I can.

The strongest candidate for this job is Troy Ledford, my current assistant.

Also, before I leave, I would be willing to teach any partners how to use the program I developed to track and manage the development of Skynet.

Over the next few months, I’ll be enjoying vacations with my wife and helping my granddaughter move to college.

If Cyberdyne Systems has any openings for consultants, please let me know. It has been a pleasure working with you over the last 25 years. I appreciate your concern and care.

Sincerely,

Greg S. Jackson

Questions and Answers

1. What is a letter of retirement?

A retirement letter tells your supervisor that you're retiring. Like a resignation letter, it informs your employer that you're departing. A retirement letter may also request retirement benefits.

Supervisors often receive both a retirement letter and a verbal notice. Before submitting your retirement letter, meet with your supervisor to discuss your plans. The letter will be filed alongside records of your start date, salary, and benefits.

2. Why should you write a letter of retirement?

Your retirement letter should explain why you're leaving. By the time you retire, your manager and HR department usually already know. Regardless, a retirement letter can help you leave on a positive note. It also ensures they have the necessary paperwork.

In your retirement letter, you tell the firm your plans so they can find your replacement. You may need to stay in touch with your company after sending your retirement letter until a successor is identified.

3. What information ought to be in your retirement letter?

Format it like an official letter. Include your retirement plans and retirement-specific details. The date may be the most essential.

In some circumstances, benefits depend on when you resign and retire. A date on the letter helps HR or senior management verify when you gave notice and how much notice you gave.

Address your letter to your manager or supervisor, using your usual salutation.

The letter's body should include your retirement date and transition arrangements. Tell them whether you plan to help with the transition or train a new employee. You may want to set a time limit on this help, such as three months.

Tell your employer your job title, how long you've worked there, and your biggest successes. Personalize your letter by expressing gratitude for your career and outlining your retirement intentions. Finally, include your contact info.

4. Must I provide notice?

Two weeks' notice generally isn't required by law. Your company may require it, however, and some state laws contain exceptions.

Check your contract, your company handbook, or HR to determine how much notice you must give when retiring. The policy may differ from the one for resigning.

Regardless of your company's policy, giving notice is standard. Entry-level or junior positions can usually be refilled quickly.

Middle managers, high-level personnel, and specialists may take months to replace. Two weeks' notice is a courtesy. Start planning months ahead.

You can finish all outstanding work during that period. Prepare transition documents for coworkers and your replacement.

5. What is the ideal retirement age?

It depends on your finances, state, and retirement plan. By some estimates, the average American retires at 62; Gallup's 2021 Economy and Personal Finance Survey puts the average retirement age at 66.

Remember:

Before the age of 59 1/2, withdrawals from pre-tax retirement accounts, such as 401(k)s and IRAs, are subject to a penalty.

Benefits from Social Security can be accessed as early as age 62.

Medicare isn't available to you until you're 65.

Depending on the year of your birth, your Full Retirement Age (FRA) will be between 66 and 67 years old.

If you haven't taken them already, your Social Security benefits increase by 8% annually between your Full Retirement Age and age 70.
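To see what the 8% annual increase can mean in practice, here is a rough Python sketch. It rests on several assumptions that are not spelled out above: the 8% is treated as a flat credit per year of delay (not compounded), credits stop accruing at age 70, and early-claiming reductions and cost-of-living adjustments are ignored. The function name and parameters are made up for illustration only.

```python
def delayed_credit_multiplier(full_retirement_age: float, claim_age: float) -> float:
    """Return the benefit multiplier relative to the Full Retirement Age amount.

    Assumptions (illustrative only): a flat 8% credit per year of delay,
    not compounded; no further credit after age 70; no adjustment for
    claiming before Full Retirement Age or for cost-of-living increases.
    """
    capped_age = min(claim_age, 70.0)                      # credits stop at 70
    years_delayed = max(0.0, capped_age - full_retirement_age)
    return 1.0 + 0.08 * years_delayed


if __name__ == "__main__":
    # Compare claiming at FRA, at 68, and at 70 for both common FRA values.
    for fra in (66.0, 67.0):
        for age in (fra, 68.0, 70.0):
            multiplier = delayed_credit_multiplier(fra, age)
            print(f"FRA {fra:.0f}, claim at {age:.0f}: {multiplier:.2f}x")
```

Under these assumptions, someone with a Full Retirement Age of 67 who waits until 70 would receive roughly 124% of their full benefit, and someone with a Full Retirement Age of 66 roughly 132%. Treat the numbers as a planning aid and confirm your own figures with the Social Security Administration.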