
Cammi Pham

3 years ago

7 Scientifically Proven Things You Must Stop Doing To Be More Productive

Smarter work yields better results.

17-year-old me worked and studied 20 hours a day. During school breaks, I did coursework and ran a nonprofit at night. Long hours earned me national campaigns, A-list opportunities, and a great career. As I aged, my thoughts changed. Working harder isn't necessarily the key to success.

In some cases, doing less work might lead to better outcomes.

Consider a hard-working small business owner. He can't beat his corporate rivals by working harder, because time is limited. An entrepreneur can work 24 hours a day, 7 days a week, but a rival can invest more money, build a team, and put in more man-hours. So why have small startups achieved what larger companies couldn't? Facebook paid $1 billion for 13-person Instagram. Snapchat, a 30-person startup, rejected bids from Facebook and Google. Luck and efficiency each contributed to their success.

The key to success is not working hard. It’s working smart.

Being busy and being productive are different. Busy doesn't always equal productive. Productivity is less about time management and more about energy management. It's the business of life: using less energy to obtain more rewards. I cut my work week from 80 to 40 hours and got more done. I value simplicity.

Here are seven activities I gave up in order to be more productive.

1. Give up working extra hours and boost productivity instead.

When did the five-day, 40-hour work week start? Henry Ford, Ford Motor Company founder, experimented with his workers in 1926.

He decreased their daily hours from 10 to 8, and shortened the work week from 6 days to 5. As a result, he saw his workers’ productivity increase.

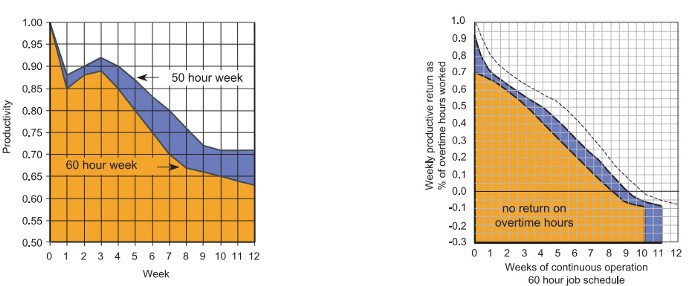

According to a 1980 Business Roundtable report, “Scheduled Overtime Effect on Construction Projects,” the more you work, the less effective and productive you become.

“Where a work schedule of 60 or more hours per week is continued longer than about two months, the cumulative effect of decreased productivity will cause a delay in the completion date beyond that which could have been realized with the same crew size on a 40-hour week.” Source: Calculating Loss of Productivity Due to Overtime Using Published Charts — Fact or Fiction

AlterNet editor Sara Robinson cited US military research showing that losing one hour of sleep per night for a week causes cognitive impairment equivalent to a 0.10 blood alcohol level. You can get fired for showing up drunk, but pulling an all-nighter is considered fine.

Irrespective of how well you were able to get on with your day after that most recent night without sleep, it is unlikely that you felt especially upbeat and joyous about the world. Your more-negative-than-usual perspective will have resulted from a generalized low mood, which is a normal consequence of being overtired. More important than just the mood, this mind-set is often accompanied by decreases in willingness to think and act proactively, control impulses, feel positive about yourself, empathize with others, and generally use emotional intelligence. Source: The Secret World of Sleep: The Surprising Science of the Mind at Rest

To be productive, don't overwork, and get enough sleep. If you're not productive, lack of sleep may be to blame. James Maas, a sleep researcher, said that seven out of ten Americans don't get enough sleep.

Did you know?

Leonardo da Vinci slept little at night and frequently took naps.

Napoleon, the French emperor, had no qualms about napping. He indulged every day.

Even though Thomas Edison felt self-conscious about his napping behavior, he regularly engaged in this ritual.

President Franklin D. Roosevelt's wife Eleanor used to take naps before speeches to increase her energy.

The Singing Cowboy, Gene Autry, was known for taking regular naps in his dressing area in between shows.

President John F. Kennedy ate his lunch in bed every day and then took a nap.

Every afternoon, oil businessman and philanthropist John D. Rockefeller took a nap in his office.

Winston Churchill treated his afternoon nap as non-negotiable. He believed it helped him accomplish twice as much each day.

Every afternoon around 3:30, President Lyndon B. Johnson took a nap to divide his day into two segments.

Ronald Reagan, the 40th president, was well known for taking naps as well.

Source: 5 Reasons Why You Should Take a Nap Every Day — Michael Hyatt

Since I started getting 7 to 8 hours of sleep a night, I've been more productive and completed more work than when I worked 16 hours a day. Who knew sleep could be a marketer's tool?

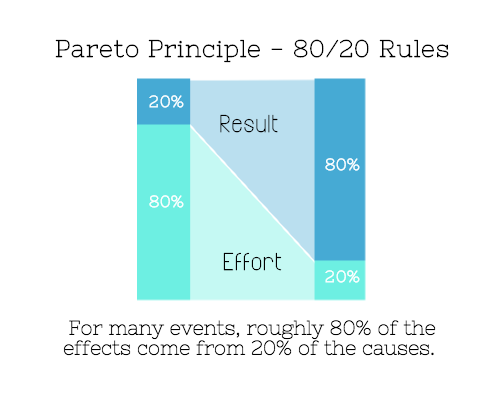

2. Stop saying yes too often

Pareto's principle states that 20% of the effort produces 80% of the results, while the remaining 20% of the results consumes 80% of the effort. Instead of working harder, we should prioritize the efforts that produce the most results so we can focus on the tasks that matter. Stop saying yes to unproductive tasks.

“The difference between successful people and very successful people is that very successful people say “no” to almost everything.” — Warren Buffett

What should you say yes to, and what should you say no to? If you can't tell whether something is worth your attention, run a simple split test. Track what you do, how long it takes, and what results it produces. Then review your list to see what worked (and what didn't) so you can optimize future tasks.
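As a rough illustration of the tracking described above, the sketch below totals hours and results per activity and ranks activities by result per hour. The log entries and the 0 to 10 result score are hypothetical; any measure of outcome you trust would work in its place.

```python
from collections import defaultdict

# Hypothetical log entries: (activity, hours spent, result score from 0 to 10).
log = [
    ("email newsletter", 2.0, 8),
    ("status meeting", 3.0, 1),
    ("email newsletter", 1.0, 5),
    ("guest post", 4.0, 6),
]


def result_per_hour(entries):
    """Return {activity: total result / total hours}, best ratio first."""
    hours = defaultdict(float)
    results = defaultdict(float)
    for activity, spent, result in entries:
        hours[activity] += spent
        results[activity] += result
    ratios = {name: results[name] / hours[name] for name in hours}
    return dict(sorted(ratios.items(), key=lambda item: item[1], reverse=True))


ranking = result_per_hour(log)
for activity, ratio in ranking.items():
    print(f"{activity}: {ratio:.2f} result points per hour")
```

Activities at the bottom of the ranking are the first candidates for a no.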

Most of us say yes more often than we should, out of guilt, because we overextend ourselves, or because it's simpler than saying no. Nobody likes being the bad guy.

In a 2012 study published in the Journal of Consumer Research, researchers split 120 students into two groups. One group was trained to say “I can't” while discussing choices, while the other used “I don't”.

The students who told themselves “I can’t eat X” chose to eat the chocolate candy bar 61% of the time. Meanwhile, the students who told themselves “I don’t eat X” chose to eat the chocolate candy bars only 36% of the time. This simple change in terminology significantly improved the odds that each person would make a more healthy food choice.

Next time you need to turn something down, use “I don't” to reinforce the habit of saying no to unimportant things.

The 20-second rule is another wonderful way to avoid pursuits with little value. Add a 20-second roadblock to things you shouldn't do or bad habits you want to break. Delete social media apps from your phone so it takes you 20 seconds to find your laptop to access them. You'll be less likely to engage in a draining hobby or habit if you add an inconvenience.

Lower the activation energy for habits you want to adopt and raise it for habits you want to avoid. The more we can lower or even eliminate the activation energy for our desired actions, the more we enhance our ability to jump-start positive change. Source: The Happiness Advantage: The Seven Principles of Positive Psychology That Fuel Success and Performance at Work

3. Stop doing everything yourself and start letting people help you

I once managed a large community and couldn't do it alone. The community took over once I burned out. Members did better than I could have alone. I learned about community and user-generated content.

Consumers know what they want better than marketers do. Octoly says user-generated videos on YouTube are viewed 10 times more than brand-generated videos. 51% of Americans trust user-generated content more than a brand's official website (16%) or media coverage of the brand (14%). Marketers should seek help from the brand community.

Being a successful content marketer isn't about generating the best content, but cultivating a wonderful community.

We should seek aid when needed. We can't do everything. It's best to delegate work so you may focus on the most critical things. Instead of overworking or doing things alone, let others help.

Having friends or coworkers around can boost your productivity even if they can't help.

Just having friends nearby can push you toward productivity. “There’s a concept in ADHD treatment called the ‘body double,’ ” says David Nowell, Ph.D., a clinical neuropsychologist from Worcester, Massachusetts. “Distractable people get more done when there is someone else there, even if he isn’t coaching or assisting them.” If you’re facing a task that is dull or difficult, such as cleaning out your closets or pulling together your receipts for tax time, get a friend to be your body double. Source: Friendfluence: The Surprising Ways Friends Make Us Who We Are

4. Give up striving for perfection

Perfectionism hinders professors' research output. Dr. Simon Sherry, a psychology professor at Dalhousie University, studied perfectionism and productivity and found that higher perfectionism was linked to lower productivity.

Perfectionists run into several problems:

They work on a task longer than necessary.

They delay and wait for the ideal moment. In business, if the timing feels perfect, you are probably already too late.

They pay too much attention to the details and miss the big picture.

Marketers often wait for the right moment and miss out as a result.

The perfect moment is NOW.

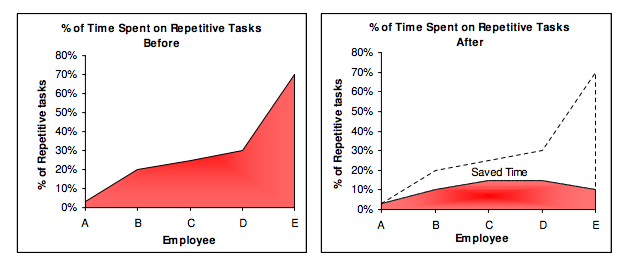

5. Automate monotonous chores instead of continuing to do them.

A team of five workers who spent 3%, 20%, 25%, 30%, and 70% of their time on repetitive tasks reduced their time spent to 3%, 10%, 15%, 15%, and 10% after two months of working to improve their productivity.

Last week, I spent 15 minutes writing a Python program. I wanted to generate content from Twitter API data and bulk schedule it with Hootsuite. Automation cut this task from a full day to five minutes. Whenever I do something more than five times, I try to automate it.
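The author's script isn't shown, so here is a minimal sketch of the general idea only: take a list of messages, space them at a fixed interval, and write them out as a CSV that a scheduling tool could import. The function names and the two-column layout (`send_at`, `message`) are assumptions for illustration, not Hootsuite's actual import format, and the messages are hard-coded rather than pulled from the Twitter API.

```python
import csv
import io
from datetime import datetime, timedelta


def build_schedule(messages, start, interval_hours):
    """Pair each message with a send time, spaced interval_hours apart."""
    step = timedelta(hours=interval_hours)
    return [(start + i * step, text) for i, text in enumerate(messages)]


def to_csv(schedule):
    """Serialize the schedule as CSV text using a hypothetical two-column layout."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["send_at", "message"])
    for send_at, text in schedule:
        writer.writerow([send_at.strftime("%Y-%m-%d %H:%M"), text])
    return buffer.getvalue()


# Hypothetical messages; a real script would generate these from its data source.
messages = [
    "New post: working smarter, not harder",
    "Why sleep matters for productivity",
    "Automate anything you do more than five times",
]
schedule = build_schedule(messages, datetime(2024, 1, 1, 9, 0), interval_hours=4)
csv_text = to_csv(schedule)
print(csv_text)
```

Check the import format your scheduling tool actually expects before relying on a layout like this one.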

You don't need to code to automate monotonous chores. Skills and resources are nice to have, but not required. If you cannot build it, buy it.

People forget that time is money. We tend to do things manually because it's easy and requires little research. You can moderate 30 Instagram photos for your UGC campaign by hand. To manage 30,000 photos and videos from five platforms, you need digital asset management software. Filemobile, for example, helps brands collect more user-generated content. You can buy software to manage rich media and solve most of the problems you run into online.

Hire an expert if you can't find a solution. You have to spend money to make money, and time is your most precious asset.

Marketers, take a look at GitHub or the Google Apps Script library. You can often find free, easy-to-use open source code there.

6. Stop relying on intuition and start supporting your choices with data.

Just as you can optimize web pages for search engines, you can optimize your life.

Numerous studies might help you boost your productivity. Did you know individuals are most distracted from midday to 4 p.m.? This is what a Penn State psychology professor found. Even if you can't find data on a particular question, it's easy to run a split test and review your own results.

7. Stop working and spend some time doing absolutely nothing.

Most people don't realize that being too focused can hurt our work and achievements. The Boston Globe article “The Power of Lonely” says time alone is excellent for the brain and the spirit.

One ongoing Harvard study indicates that people form more lasting and accurate memories if they believe they’re experiencing something alone. Another indicates that a certain amount of solitude can make a person more capable of empathy towards others. And while no one would dispute that too much isolation early in life can be unhealthy, a certain amount of solitude has been shown to help teenagers improve their moods and earn good grades in school. Source: The Power of Lonely

Reflection is vital. We find solutions when we're not looking.

We don't become more productive overnight. It demands effort and practice. Waiting for change doesn't work. Instead, learn about your body and identify ways to optimize your energy and time for a happy existence.

Simon Egersand

3 years ago

Working from home for more than two years has taught me a lot.

I've worked from home since the start of the pandemic. It’s been 2+ years now (wow, time flies!), and during this time I’ve learned a lot. Here are my 4 remote work lessons.

I work in a remote, distributed team. This team setting shaped my experience and the lessons I took from it.

Isolation ("I miss my coworkers")

The most obvious point. I miss going out with my coworkers for coffee, chatting about the weekend, or just having company while I work. I miss being able to go to someone's desk and ask for help. In a remote world, I must schedule a meeting, share my screen, and try not to talk over everyone else in Zoom - sigh!

Social interaction is more vital for my health than I believed.

Online socializing stinks

My company used to come together every Friday to play Exploding Kittens, have food and beer, and bond over non-work things.

It's different today. Every Friday afternoon is still set aside for fun, but it's not the same. People with screen fatigue skip these sessions, which makes sense. Sometimes you're too busy on Slack to enjoy yourself.

We laugh in meetings, but it's not the same as face-to-face.

Digital social activities can't replace real-world ones

Improved Work-Life Balance, if You Let It

At the outset of the pandemic, I recognized I needed to take better care of myself to survive. After not leaving my apartment for a few days and feeling miserable, I decided to walk before work every day. This turned into a passion for exercise, and today I run or go to the gym before work. I use my commute time for healthful activities.

Working from home makes it easier to keep working after hours. I sometimes lose track of time and find myself still writing code at dinnertime, telling myself, "One more test." This is a disadvantage, so I stick to my office schedule.

Spend your commute time properly and keep to your office schedule.

Remote Pair Programming Is Hard

As a software developer, I regularly write code. My team sometimes uses pair programming to write code collaboratively. One person writes code while another watches, comments, and asks questions. The practice has many benefits, and I won't list them all here.

Pairing remotely is difficult. My team struggles with this. Even with Tuple, it's challenging. I lose focus when I get a notification or check something else on my computer.

I miss having a pen and paper to quickly sketch my thoughts for a colleague, or a whiteboard for spirited discussions with others. Best answers are found through experience.

Real-life pair programming beats the best remote pair programming tools.

Lessons Learned

Here are 4 lessons I've learned working remotely for 2 years.

- Socializing is more vital to my health than I anticipated.
- Digital social activities can't replace in-person ones.
- Spend your commute time properly and keep your office schedule.
- Real-life pair programming beats the best remote tools.

Conclusion

Our era is fascinating. Remote work has existed for years, but software companies have only recently had to adapt to it. Companies that don't offer remote work will lose talent, in my opinion.

We've been working on software development approaches, programming language features, and communication methods since the 1960s, and we're still figuring out the best ones. I can't wait to see which advancements will help us get better at remote work.

I'll certainly be working remotely in the coming years, so I'm curious how the lessons in this post will hold up then.

This post is a summary of this one.

David G Chen

3 years ago

If you want to earn money, stop writing for entertainment.

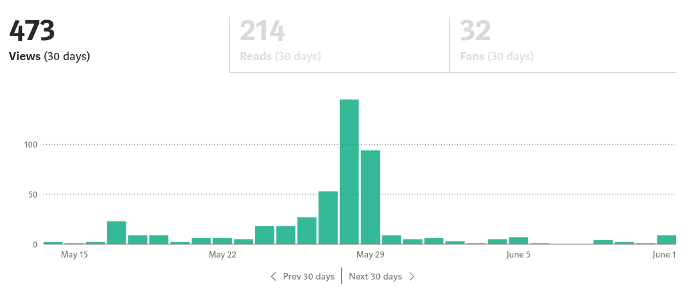

When you stop blogging for a few weeks, your views and profits plummet.

That's because you're writing entertaining posts for others. Everyone's done that…

If I keep writing, the graph should maintain velocity, you could say. If I wrote more, it could rise.

However, entertaining pieces still tend to roller coaster and jump.

This type of writing is like a candle. Candles burn out and must be replaced. You must continuously light new ones to keep the room lit.

When you quit writing, your income stops.

An alternative

Instead of producing amusing articles, try solving people's issues. You should answer their search questions.

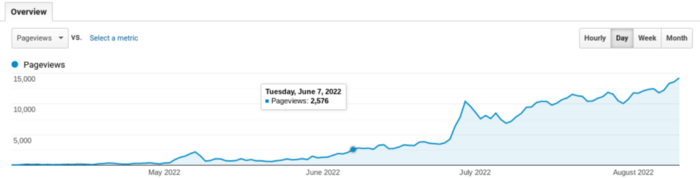

Here's what happens when you answer their searches.

This is my website's Google Analytics. As a dentist, I answer oral health questions.

The contrast between this chart and the Medium one is pretty glaring, right?

As of yesterday, it was averaging 15k page views each day.

How much would you make on Medium with 15k daily views?

Evergreen content

In SEO, this is called evergreen content.

Your content is like a lush, evergreen forest, and by green I mean Benjamins.

Do you have knowledge that you can leverage? Why not help your neighbors and the world?

Answer search inquiries and help others. You'll be well rewarded.

This is better than crafting candle-like content that fizzles out quickly.

Is beauty really ephemeral like how flowers bloom? Nah, I prefer watching forests grow instead (:


Sammy Abdullah

3 years ago

SaaS payback period data

It's OK, and even desirable, to be unprofitable if you're gaining revenue at a reasonable cost and have 100%+ net dollar retention, meaning you keep your customers and grow their spend. To estimate the acceptable cost of new SaaS revenue, we compare new revenue to operating loss and look at the payback period. If you pay back the customer acquisition cost in 1.5 years and never lose the customer (100%+ NDR), you're doing well.

To evaluate payback period, we looked at the last 73 SaaS companies to IPO since October 2017 and compared new revenue to net operating loss for the 55 of them that were unprofitable at IPO. Here's the data. 1/(new revenue/operating loss) equals payback period. New revenue/operating loss equals cost of new revenue.

The median payback period is one year. The 55 SaaS companies that weren't profitable at IPO had a median payback of 1 year, which is outstanding. If you pay back a customer's acquisition cost in a year and never lose them (100%+ NDR), you're building a valuable business. The average was 1.3 years, still within the 1.5-year threshold.

New revenue costs $0.96 on average. These SaaS companies lost $0.96 for every $1 of new revenue over the last year. Again, impressive. Average new revenue per operating loss was $1.59.

Definition of operating loss. Operating loss = revenue - COGS - S&M - R&D - G&A (technical point: be sure to use the absolute value of operating loss). It's wrong to only consider S&M costs and ignore other business costs. Operating loss and new revenue are measured over one year to eliminate seasonality.
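To make the arithmetic concrete, here is a small sketch of the payback calculation, assuming operating loss is revenue minus COGS, S&M, R&D, and G&A, taken as an absolute value. The function names and the dollar figures are hypothetical and are not drawn from the IPO data set.

```python
def operating_loss(revenue, cogs, s_and_m, r_and_d, g_and_a):
    """Absolute operating loss for the year; 0.0 if the company is profitable."""
    operating_income = revenue - cogs - s_and_m - r_and_d - g_and_a
    return max(0.0, -operating_income)


def payback_period_years(new_revenue, op_loss):
    """Payback period = 1 / (new revenue / operating loss), both over one year."""
    if new_revenue <= 0 or op_loss <= 0:
        raise ValueError("payback needs positive new revenue and a positive operating loss")
    return 1 / (new_revenue / op_loss)


# Hypothetical company, figures in millions of dollars.
loss = operating_loss(revenue=100.0, cogs=25.0, s_and_m=60.0, r_and_d=30.0, g_and_a=15.0)
payback = payback_period_years(new_revenue=40.0, op_loss=loss)
print(f"Operating loss: ${loss:.0f}M, payback period: {payback:.2f} years")
```

In this made-up case the company loses $30M while adding $40M of new revenue, so the payback period is 0.75 years, inside the 1.5-year guideline.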

Operating losses are acceptable if you never lose a customer and have a quick payback period, especially when SaaS businesses are valued on ARR. The payback period should be under 1.5 years, the cost of new revenue under $1, and net dollar retention at least 100%.

Trevor Stark

3 years ago

Economics is complete nonsense.

Mainstream economics hasn't noticed.

What comes to mind when I say the word "economics"?

Probably GDP, unemployment, and inflation.

If you've ever watched the news or listened to an economist, you've heard statistics like these used to defend a political goal.

The issue is that these statistics are total bunk.

I'm being provocative, but I mean it:

The economy is not measured by GDP.

The unemployment rate does not count how many people are unemployed.

Inflation is not measured by the CPI.

All of the major statistics that orthodox economists rely on are either wrong or falsified.

Government institutions create all these stats. The administration wants to reassure citizens the economy is doing well.

GDP does not reflect economic expansion.

GDP measures a country's economic size and growth. It’s calculated by the BEA, a government agency.

The US has the world's largest (self-reported) GDP, growing 2-3% annually.

If GDP rises, the economy is healthy, say economists.

Why is the GDP flawed?

GDP measures a country's yearly spending.

The government may adjust this to make the economy look good.

GDP = C + G + I + NX

C = Consumer Spending

G = Government Spending

I = Investments (Equipment, inventories, housing, etc.)

NX = Exports minus Imports

GDP is a country's annual spending.
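The identity above is simple enough to check by hand, but a short sketch shows how each component feeds the total. The figures are hypothetical (trillions of dollars), and holding every other component fixed while government spending changes is a simplifying assumption for illustration.

```python
def gdp(consumption, government, investment, exports, imports):
    """Expenditure identity: GDP = C + G + I + NX, where NX = exports - imports."""
    net_exports = exports - imports
    return consumption + government + investment + net_exports


# Hypothetical figures in trillions of dollars.
baseline = gdp(consumption=14.0, government=4.0, investment=4.0, exports=2.5, imports=3.5)

# Same economy with 1.0 more government spending and nothing else changed.
with_more_spending = gdp(consumption=14.0, government=5.0, investment=4.0, exports=2.5, imports=3.5)

print(baseline, with_more_spending)
```

Because G enters the sum directly, the measured total rises by exactly the extra spending in this simplified setup.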

The government can print money to boost GDP. The government has a motive to increase and manage GDP.

Because government expenditure is part of GDP, printing money and spending it on anything will raise GDP.

They've done this. Since 1950, US government spending has grown 8% annually, faster than GDP.

In 2022, government spending accounted for 44% of GDP. It's the highest since WWII. In 1790-1910, it was 3% of GDP.

Who cares?

The economy isn't only spending. Focus on citizens' purchasing power or quality of life.

Since GDP just measures spending, the government can print money to boost GDP.

Even if Americans are poorer than last year, economists can say GDP is up and everything is fine.

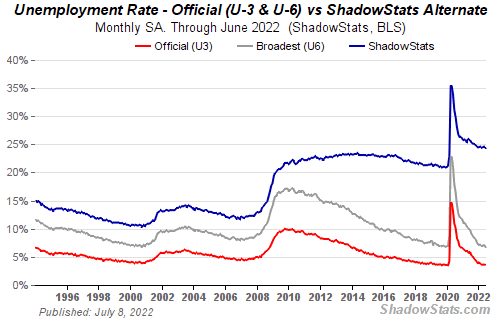

The unemployment rate does not count how many people are unemployed.

The unemployment rate measures a country's labor market. If unemployment is high, people aren't doing well economically.

The BLS estimates the (self-reported) unemployment rate as 3-4%.

Why is the unemployment rate flawed?

The US government surveys 100k people to measure unemployment. It extrapolates this data to the whole country.

Respondents fall into 3 categories:

Employed

People with jobs are employed … duh.

Unemployed

People who are “jobless, looking for a job, and available for work” are unemployed

Not in the labor force

The “labor force” is the employed plus the unemployed. Everyone else is not in the labor force.

The unemployment rate is the percentage of the labor force that is unemployed.

The problem is the definition of unemployed. You must be actively seeking work to be counted as unemployed.

You're no longer unemployed if you haven't interviewed in 4 weeks.

This shit makes no goddamn sense.

Why does this matter?

You can't interview if there are no positions available. You're no longer unemployed after 4 weeks.

In 1994, the BLS redefined "unemployed" to exclude discouraged workers.

If you haven't interviewed in 4 weeks, you're no longer counted in the unemployment rate.
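A small sketch shows how the definition drives the headline number. It assumes the rate is unemployed divided by labor force (employed plus unemployed), and it moves people who stop searching into the "not in the labor force" bucket. The head counts are hypothetical.

```python
def unemployment_rate(employed, unemployed):
    """Unemployed as a percentage of the labor force (employed + unemployed)."""
    labor_force = employed + unemployed
    if labor_force == 0:
        raise ValueError("the rate is undefined when the labor force is empty")
    return 100 * unemployed / labor_force


def reclassify(unemployed, not_in_labor_force, stopped_searching):
    """Move people who stopped searching from 'unemployed' to 'not in the labor force'."""
    return unemployed - stopped_searching, not_in_labor_force + stopped_searching


# Hypothetical survey of 130 people: 90 employed, 10 unemployed, 30 outside the labor force.
employed, unemployed, outside = 90, 10, 30
rate_before = unemployment_rate(employed, unemployed)

# Five of the unemployed give up searching; nobody found a job.
unemployed, outside = reclassify(unemployed, outside, stopped_searching=5)
rate_after = unemployment_rate(employed, unemployed)

print(f"before: {rate_before:.1f}%  after: {rate_after:.1f}%")
```

The same 40 people are without work in both cases, yet the reported rate falls from 10.0% to about 5.3%.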

If unemployment were measured by total unemployed, it would be 25%.

Because the government wants to keep the unemployment rate low, they modify the definition.

If every US resident was unemployed and had no job interviews, economists would declare 0% unemployment. Excellent!

Inflation is not measured by the CPI.

The BLS measures CPI. This month's reading was the highest since 1981.

CPI measures the cost of a basket of products across time. Food, energy, shelter, and clothes are included.

A 9.1% CPI means the basket of items is 9.1% more expensive.

What is the CPI problem?

Here's a more detailed explanation of CPI's flaws.

In summary, CPI is manipulated to be understated.

Housing costs are understated to manipulate CPI. Housing accounts for 33% of the CPI because it's the biggest expense for most people.

That makes it the largest weight in the CPI.

Rather than using actual house prices, the Bureau of Labor Statistics essentially makes shit up. You can read more about the process here.

Surprise! It’s bullshit

The BLS stated Shelter's price rose 5.5% this month.

House prices are up 11-21%. (Source 1, Source 2, Source 3)

Rents are up 14-26%. (Source 1, Source 2)

Why is this important?

If CPI included housing prices, it would be 12-15 percent this month, not 9.1 percent.

9% inflation is nuts. At 9% inflation, your money's value halves roughly every 8 years.

15% inflation is worse. At 15% inflation, your money's value halves about every 5 years.
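The halving time follows from compound growth: purchasing power halves when prices double, that is, when (1 + rate)^years = 2. The sketch below solves for years, assuming a constant annual inflation rate; the function name is illustrative.

```python
import math


def halving_time_years(inflation_rate):
    """Years until prices double (and money's value halves) at a constant annual rate."""
    if inflation_rate <= 0:
        raise ValueError("inflation_rate must be positive, e.g. 0.09 for 9%")
    return math.log(2) / math.log(1 + inflation_rate)


for rate in (0.09, 0.15):
    print(f"{rate:.0%} inflation: value halves in {halving_time_years(rate):.1f} years")
```

The familiar rule of 72 (72 divided by the percentage rate) gives a quick mental approximation of the same quantity.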

If everyone realized they needed to double their wage every 5 years or so just to keep their purchasing power, there would be riots.

Inflation drains our money's value so the government can keep printing it.

The Solution

Most individuals know the existing system doesn't work, but can't explain why.

People work hard yet lag behind. The government lies about the economy's data.

In reality:

GDP has been down since 2008

25% of Americans are unemployed

Inflation is actually 15%

People might join together to vote out kleptocratic politicians if they knew the reality.

Having reliable economic data is the first step.

People can't understand the situation without sufficient information. Instead of immigrants or billionaires, people would blame liar politicians.

Here’s the vision:

A decentralized, transparent, and global dashboard that tracks economic data like GDP, unemployment, and inflation for every country on Earth.

Government incentives influence economic statistics.

ShadowStats has already started this effort, but the calculations must be transparent, decentralized, and global to be effective.

If interested, email me at trevorstark02@gmail.com.


Leon Ho

3 years ago

Digital Brainbuilding (Your Second Brain)

The human brain is amazing. As more scientists examine the brain, we learn how much it can store.

The human brain has 1 billion neurons, according to Scientific American. Each neuron creates 1,000 connections, totaling over a trillion. If each neuron could store one memory, we'd run out of room. [1]

What if you could store and access more info, freeing up brain space for problem-solving and creativity?

Build a second brain (what I refer to as a Digital Brain) to keep up with growing knowledge. Managing information effectively starts with accepting that you can't remember everything.

Every action requires information. You need the correct information to learn a new skill, complete a project at work, or establish a business. You must manage information properly to advance your career and improve your life.

Here's how to build a second brain to organize information and achieve your goals.

What Is a Second Brain?

How often do you forget an article or book's key point? Have you ever wasted hours looking for a saved file?

If so, you're not alone. Information overload affects millions of individuals worldwide. Information overload drains mental resources and causes anxiety.

This is where the second brain comes in.

Building a second brain doesn't involve duplicating the human brain. It means building a system that captures, organizes, retrieves, and archives ideas and thoughts. The second brain improves memory, organization, and recall.

Digital tools are preferable to analog for building a second brain.

Digital tools are portable and accessible. Due to these benefits, we'll focus on digital second-brain building.

Brainware

A Digital Brain is like an external hard drive. It stores, organizes, and retrieves information. This means improving your memory won't be difficult.

Memory has three components in computing:

Recording — storing the information

Organization — archiving it in a logical manner

Recall — retrieving it again when you need it

For example:

Due to rigorous security settings, many websites need you to create complicated passwords with special characters.

You must now memorize the new password (Recording), file it away (Organization), and enter it the next time you log in (Recall).

Even in this simple example, there are many pieces to remember. We can't recognize this new password with our usual patterns. If we don't use the password every day, we'll forget it. You'll type the wrong password when you try to remember it.

It's common. Is it because the information is complicated? Nope. Passwords are basically letters, numbers, and symbols.

It happens because our brains aren't meant to memorize these. A Digital Brain can do the heavy lifting.
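The three components can be sketched as a tiny in-memory note store. This is an illustration of the Recording, Organization, and Recall steps only, not a recommendation for any particular app; the class and method names are hypothetical, and real passwords belong in a dedicated password manager rather than in plain-text notes.

```python
from collections import defaultdict


class DigitalBrain:
    """Toy note store illustrating Recording, Organization, and Recall."""

    def __init__(self):
        self._notes = []                  # Recording: notes in the order they arrive
        self._by_tag = defaultdict(list)  # Organization: tag -> positions in _notes

    def record(self, text, tags=()):
        """Store a note and file it under each tag."""
        position = len(self._notes)
        self._notes.append(text)
        for tag in tags:
            self._by_tag[tag.lower()].append(position)
        return position

    def recall(self, tag=None, keyword=None):
        """Retrieve notes by tag, by keyword, or both."""
        if tag is not None:
            positions = self._by_tag.get(tag.lower(), [])
        else:
            positions = range(len(self._notes))
        notes = [self._notes[p] for p in positions]
        if keyword is not None:
            notes = [n for n in notes if keyword.lower() in n.lower()]
        return notes


brain = DigitalBrain()
brain.record("Dentist appointment on Friday", tags=["calendar"])
brain.record("Buy oat milk", tags=["groceries"])
brain.record("Buy dentist-approved floss", tags=["groceries"])

print(brain.recall(tag="groceries"))
print(brain.recall(keyword="dentist"))
```

Filing each note under tags at recording time is what makes recall cheap later, which is the point of organizing before you need the information.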

Why You Need a Digital Brain

Two brains are better than one. The brain you were born with has limits.

The cerebral cortex has 125 trillion synapses, according to a Stanford study. The human brain can hold about 2.5 million gigabytes of digital data. [2]

Building a second brain improves learning and memory.

Learn and store information effectively

Faster information recall

Organize information to see connections and patterns

Build a Digital Brain to learn more and reach your goals faster. Building a second brain requires time and work, but you'll have more time for vital undertakings.

Why you need a Digital Brain:

1. Use Brainpower Effectively

Your brain has limits, like any organ. You notice them most when you're working through a complex question or task. If you can't focus on a work project, you won't finish it on time.

A second brain reduces distractions. A robust structure helps you handle complicated challenges quickly and stay on track. Without distractions, it's easy to focus on vital activities.

2. Staying Organized

Professional and personal duties must be balanced. With so much to do, it's easy to neglect crucial duties. This is especially true for skill-building. A Digital Brain will keep you organized and stress-free.

Life success requires action. Organized people get things done. Organizing your information will give you time for crucial tasks.

You'll finish projects faster with good materials and methods. As you succeed, you'll gain creative confidence. You can then tackle greater jobs.

3. Creativity Process

Creativity drives today's world. Creativity is mysterious and surprising for millions worldwide. Immersing yourself in others' associations, triggers, thoughts, and ideas can generate inspiration and creativity.

Building a second brain is crucial to establishing your creative process and building habits that will help you reach your goals. Creativity doesn't require perfection or overthinking.

4. Transforming Your Knowledge Into Opportunities

This is the age of entrepreneurship. Today, you can publish online, build an audience, and make money.

Whether it's a business or a hobby, you'll have several career options. Your knowledge, turned into ideas and insights, can boost your income.

5. Improving Thinking and Uncovering Connections

Modern career success depends on how you think. Instead of overthinking or perfecting, collect the best images, stories, metaphors, anecdotes, and observations.

This will increase your creativity and reveal connections. Increasing your imagination can help you achieve your goals, according to research. [3]

Your ability to recognize trends will help you stay ahead of the pack.

6. Credibility for a New Job or Business

Your main asset is experience-based expertise. Others won't be able to learn without your help. Technology makes knowledge tangible.

This lets you use your time as you choose while helping others. Changing professions or establishing a new business become learning opportunities when you have a Digital Brain.

7. Using Learning Resources

Millions of people use internet learning materials to improve their lives. Online resources abound. These include books, forums, podcasts, articles, and webinars.

These resources are mostly free or inexpensive. Organizing your knowledge can save you time and money. Building a Digital Brain helps you learn faster. You'll make rapid progress by enjoying learning.

What does having a second brain feel like?

A Digital Brain has helped me organize my work and family life for years.

No need to remember 1001 passwords. I never forget anything on my wife's grocery lists. Never miss a meeting. I can access essential information and papers anytime, anywhere.

Delegating memory to a second brain reduces tension and anxiety because you'll know what to do with every piece of information.

No information will be forgotten, boosting your confidence. Better manage your fears and concerns by writing them down and establishing a strategy. You'll understand the plethora of daily information and have a clear head.

How to Develop Your Digital Brain (Your Second Brain)

It's cheap but requires work.

Digital Brain development requires:

Recording — storing the information

Organization — archiving it in a logical manner

Recall — retrieving it again when you need it

1. Decide what information matters before recording.

To succeed in today's environment, you must manage massive amounts of data. Articles, books, webinars, podcasts, emails, and texts provide value. Remembering everything is impossible and overwhelming.

What information do you need to achieve your goals?

You must consolidate ideas and create a strategy to reach your aims. With a Digital Brain handling storage, your biological brain is free to imagine and create.

2. Use the Right Tool

We usually record information without any preparation - we brainstorm in a word processor, email ourselves a message, or take notes while reading.

Information recorded this way often goes unused. You must store information in a central location.

Different kinds of information call for different tools.

Evernote is a top note-taking program. Audio clips, Slack chats, PDFs, text notes, photos, scanned handwritten pages, emails, and webpages can be added.

Pocket is a great app for saving and organizing content. Images, videos, and text can be sorted, and its design is optimized for the web.

Calendar apps help you manage your time and enhance your productivity by reminding you of your most important tasks. Calendar apps abound. The best ones are easy to use, have many features, and work across devices. Good options include Google Calendar, Apple Calendar, and Outlook.

To-do list and checklist apps are useful for managing tasks. Ease of use, versatility, price, and cross-platform compatibility are important when picking one. Google Keep, Google Tasks, and Apple Notes are good to-do apps.

3. Organize data for easy retrieval

How should you organize collected data?

When you collect and organize data, you'll see connections. An article about networking can help you understand web marketing. Saved business cards can help you find new clients.

Choosing the correct tools helps you organize data. Here are some criteria for selecting tools:

Can the tool sync across devices?

Is it for personal or team use?

Does it have a search function for easy information retrieval?

Does it provide easy data categorization?

Can users create lists or collections?

Does it make it easy to connect ideas and information?

Does it support mind mapping and visual organization of thoughts?

Conclusion

Building a Digital Brain (second brain) helps us save information, think creatively, and implement ideas. Your second brain is an extension of your biological brain. It keeps you from forgetting, freeing you to tackle bigger creative challenges.

People who love learning often consume information without using it. Every day, they postpone life-improving experiences until they're forgotten. Information becomes strength only when it is put to use.

Reference

[1] Scientific American: What Is the Memory Capacity of the Human Brain?

[2] Clinical Neurology Specialists: What is the Memory Capacity of a Human Brain?

[3] National Library of Medicine: Imagining Success: Multiple Achievement Goals and the Effectiveness of Imagery