More on Marketing

Karo Wanner

3 years ago

This is how I started my Twitter account.

My 12-day results look good.

Twitter seemed to be for old people and politicians.

I thought the platform would die soon like Facebook.

The platform's growth stalled around 300m users between 2015 and 2019.

In 2020, Twitter grew and now has almost 400m users.

Niharikaa Kaur Sodhi built a business on Twitter while I was away, despite its low popularity.

When I read about the success of Twitter users in the past 2 years, I created an account and a 3-month strategy.

I'll see if it's worth starting Twitter in 2022.

Late or perfect? I'll update you. Track my Twitter growth. You can find me here.

My Twitter Strategy

My Twitter goal is to build a community and recruit members for Mindful Monday.

I believe mindfulness is the only way to solve problems like poverty, inequality, and the climate crisis.

The power of mindfulness is my mission.

Mindful Monday is your weekly reminder to live in the present moment. I send mindfulness tips every Monday.

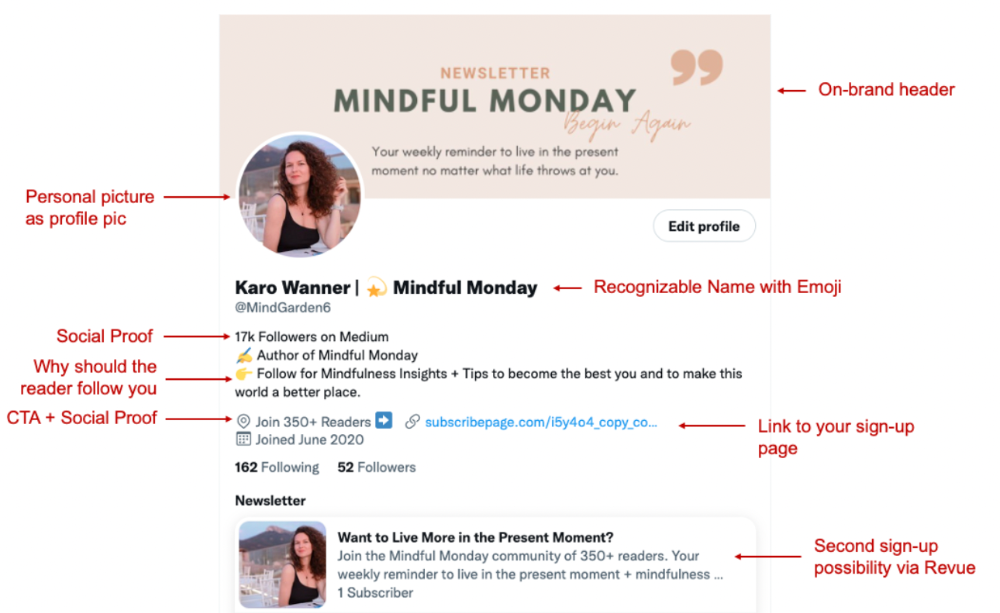

My Twitter profile promotes Mindful Monday and encourages people to join.

What I paid attention to:

I designed a brand-appropriate header to promote Mindful Monday.

Choose a profile picture. People want to know who you are.

I added my name as I do on Medium, Instagram, and emails. To stand out and be easily recognized, add an emoji if appropriate. Add what you want to be known for, such as Health Coach, Writer, or Newsletter.

People follow successful, trustworthy people. Describe any results you have. This could be views, followers, subscribers, or mentions in major news outlets. Be creative!

Tell readers what they'll get by following you. Can you help?

Add a CTA to your profile. State your Twitter account's purpose and give clear instructions. I placed my sign-up link next to the CTA to promote Mindful Monday. Josh Spector recommended this. (Thanks!) Bonus tip: If you don't want the category to show in your profile, e.g. Entrepreneur, go to edit profile, edit professional profile, and choose 'Other'.

Here's my Twitter:

I'm no expert, but I tried. Please share any additional Twitter tips and suggestions in the comments.

To hide your Revue newsletter subscriber count:

Join Revue. Select 'Hide Subscriber Count' in Account settings > Settings > Subscriber Count. Voila!

How frequently should you tweet?

1 to 20 Tweets per day, but consistency is key.

Stick to a daily tweet limit. It's better to start with fewer tweets and be consistent than the opposite.

I tweet 3 times per day. That's my comfort zone. Larger accounts tweet 5–7 times daily.

Do what works for you and that is the right amount.

Twitter is a long-term game, so plan your tweets for a year.

How to Batch Your Tweets?

I batch on Sundays.

On Sunday evenings, it takes me 1.5 hours to create all my tweets for the week.

Use a word document and write down your posts. Podcasts, books, my own articles inspire me.

When I have a good idea or see a catchy Tweet, I take a screenshot.

Not to copy, but to adapt.

Two pillars support my content:

(90% ~ 19 tweets per week) Inspirational quotes, mindfulness tips, zen stories, mistakes, myths, book recommendations, etc.

(10% ~ 2 tweets per week) I share how I grow Mindful Monday with readers. This pillar promotes MM and behind-the-scenes content.

Second, I schedule all my Tweets using TweetDeck. I tweet at 7 a.m., 5 p.m., and 6 p.m.

Include Twitter Threads in your content strategy

Threads are like blog posts. In your first tweet, you include a headline, then you tweet your content in the tweets that follow.

That’s how you create a series of connected Tweets.

What’s the point? You have more room to convince your reader you're an expert.

Add a call-to-action to your thread.

Follow for more like this

Newsletter signup (share your link)

Ask for retweet

One thread per week is my goal.

I'll schedule threads with Typefully. In the free version, you can schedule one Tweet, but that's fine.

Pin a thread to the top of your profile if it leads to your newsletter. So new readers see your highest-converting content first.

Tweet Medium posts

I also tweet Medium articles.

I schedule 1 weekly repost for 5 weeks after each publication, so I share the same article once a week for 5 weeks.

Every time I tweet, I include a different article quote, so even if the link is the same, the quote adds value.

Engage Other Experts

When you first create your account, few people will see it. Normal.

If you comment on other industry accounts, you can reach their large audience.

First, you need 50 to 100 followers. Here's my beginner tip.

For 15 minutes a day, or whenever I have downtime, I comment on bigger accounts in my niche.

My 12-Day Results

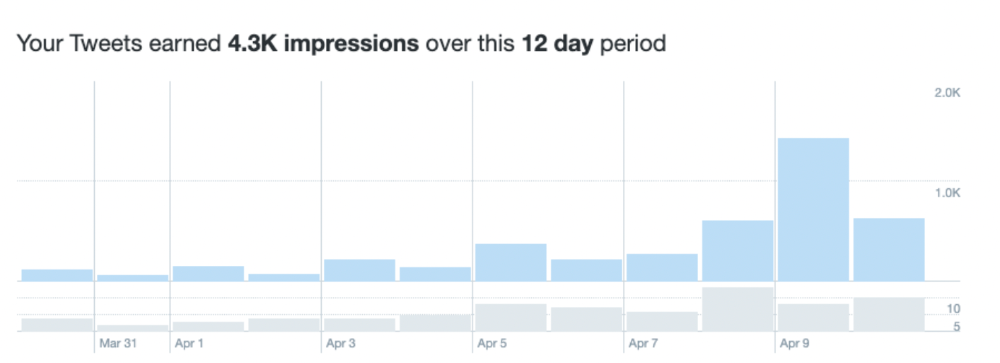

Now let's look at the first data.

I had 32 followers on March 29. 12 followers in 11 days. I have 52 now.

Not huge, but growing rapidly.

Let's examine impressions/views.

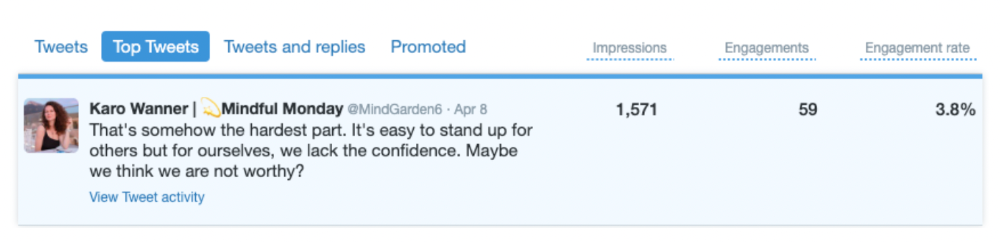

As a newbie, I gained 4,300 impressions/views in 12 days. On Medium, I got fewer views.

The spike of 1.6k impressions in one day comes from a larger account I mentioned the day before. At first, I was shocked to see the spike and unsure of its origin.

These results are promising given the effort required to be consistent on Twitter.

Let's see how my journey progresses. I'll keep you posted.

Tweeters, does this content strategy make sense? What's wrong? Comment below.

Let's support each other on Twitter. Here's me.

Which Twitter strategy works for you in 2022?

This post is a summary. Read the full article here

Rachel Greenberg

3 years ago

6 Causes Your Sales Pitch Is Unintentionally Repulsing Customers

Skip this if you don't want to discover why your lively, no-brainer pitch isn't making $10k a month.

You don't want to be repulsive as an entrepreneur or anyone else. Making friends, influencing people, and converting strangers into customers will be difficult if your words evoke disgust, distrust, or disrespect. You may be one of many entrepreneurs who do this obliviously and involuntarily.

I've had to master selling my skills to recruiters (to land 6-figure jobs on Wall Street), selling companies to buyers in M&A transactions, and selling my own companies' products to strangers-turned-customers. I probably committed every cardinal sin of sales repulsion before realizing it was me or my poor salesmanship strategy.

If you're launching a new business, frustrated by low conversion rates, or just curious if you're repelling customers, read on to identify (and avoid) the 6 fatal errors that can kill any sales pitch.

1. The first indication

So many people fumble before they even speak because they assume their role is to convince the buyer. In other words, they expect to pressure, arm-twist, and combat objections until they convert the buyer. In actuality, that approach stinks, and emotionally aware buyers feel "gross" immediately.

Instead of trying to persuade a customer to buy, ask questions that will lead them to do so on their own. When a customer discovers your product or service on their own, they need less outside persuasion. Why not position your offer in a way that leads customers to sell themselves on it?

2. A flawless performance

Are you memorizing a sales script, tweaking video testimonials, and expunging historical blemishes before hitting "publish" on your new campaign? If so, you may be hurting your conversion rate.

Perfection may be a step too far and cause prospects to mistrust your sincerity. Become a great conversationalist to boost your sales. Seriously. Being charismatic is hard without being genuine and showing a little vulnerability.

People like vulnerability, even if it dents your perfect facade. Show the customer's stuttering testimonial. Open up about your or your company's past mistakes (and how you've since improved). Make your sales pitch a two-way conversation. Let the customer talk about themselves to build rapport. Real people sell, not canned scripts and movie-trailer testimonials.

If marketing or sales calls feel like a performance, you may be doing something wrong or leaving money on the table.

3. Your greatest phobia

Three minutes into prospect talks, I'd start sweating. I was talking 100 miles per hour, covering as many bases as possible to avoid the ones I feared. I knew my then-offering was inadequate and my firm had fears I hadn't addressed. So I word-vomited facts, features, and everything else to avoid the customer's concerns.

Did my prospects know I was insecure? Maybe not, but it added an unnecessary and unhelpful layer of paranoia that kept me stressed, rushed, and on edge instead of connecting with the prospect. Skirting around a company, product, or service's flaws or objections is a poor, temporary, lazy (and cowardly) decision.

How can you project confidence and trust if you're afraid? Before you make another sales call, face your shortcomings, weak points, and objections. Your company won't be everyone's cup of tea, but you should have answers to every question or objection. You should be your business's top spokesperson and defender.

4. The unintentional apologies

Have you ever begged for a sale? I'm going to guess no; however, you may be unknowingly emitting sorry, inferior, insecure energy.

Young founders, first-time entrepreneurs, and those with severe imposter syndrome may elevate their target customer. This is common when trying to get first customers for obvious reasons.

Since you're truly new at this, you naturally lack experience.

You don't have the self-confidence boost of thousands or hundreds of closed deals or satisfied client results to remind you that your good or service is worthwhile.

Getting those initial few clients seems like the most difficult task, as if doing so will decide the fate of your company as a whole (it probably won't, and you shouldn't actually place that much emphasis on any one transaction).

Customers can smell fear, insecurity, and anxiety just like they can smell B.S. If you believe your product or service improves clients' lives, selling it should feel like a benevolent act of service, not a sleazy money-grab. If you're a sincere entrepreneur, prospects will believe your proposition; if you're apprehensive, they'll notice.

Approach every sale as if you're fine with or without it. This has improved my salesmanship, marketing skills, and mental health. When you put pressure on yourself to close a sale or convince a difficult prospect "or else" (your company will fail, your rent will be late, your electricity will be cut), you emit desperation and lower the quality of your pitch. There's no point.

5. The endless promises

We've all read a million times how to answer or disprove prospects' arguments and add extra incentives to speed or secure the close. Some objections shouldn't be refuted. What if I told you not to offer certain incentives, bonuses, and promises? What if I told you to walk away from some prospects, even if it means losing your sales goal?

If you market to enough people, make enough sales calls, or grow enough companies, you'll encounter prospects who can't be satisfied. These prospects have endless questions, concerns, and requests for more, more, more that you'll never satisfy. These people are a distraction, a resource drain, and a test of your ability to cut losses before they erode your sanity and profit margin.

To appease or convert these insatiably needy, greedy Nellies into customers, you may agree with or acquiesce to every request and demand — even if you can't follow through. Once you overpromise and answer every hole they poke, their trust in you may wane quickly.

Telling a prospect what you can't do takes courage and integrity. If you're honest, upfront, and willing to admit when a product or service isn't right for the customer, you'll gain respect and positive customer experiences. Sometimes honesty is the most refreshing pitch and the deal-closer.

6. No matter what

Have you ever said, "I'll do anything to close this sale"? If so, you've probably already been disqualified. If a prospective customer haggles over a price, requests a discount, or continues to wear you down after you've made three concessions too many, you have a metal hook in your mouth, not them, and it may not end well. Why?

If you're so willing to cut a deal that you cut prices, comp services, extend payment plans, waive fees, etc., you betray your own confidence that your product or service was worth the stated price. They wonder if anyone is paying those prices, if you've ever had a customer (who wasn't a blood relative), and if you're legitimate or worth your rates.

Once a prospect senses that you'll do whatever it takes to get them to buy, their suspicions rise and they wonder why.

Why are you cutting prices? Is something wrong with you or your service?

Why are you so desperate for their sale?

Why aren't more customers waiting in line to pay your prices, and if they aren't, what on earth is the prospect doing there?

That's what a prospect thinks when you reveal your lack of conviction, desperation, and willingness to give up control. Some prospects will exploit it to drain you dry, while others will be too frightened to buy from you even if you paid them.

Walking down a two-way street. Be casual.

If we track each act of repulsion to an uneasiness, fear, misperception, or impulse, it's evident that these sales and marketing disasters were forced communications. Stiff, imbalanced, divisive, combative, bravado-filled, and desperate. They were unnatural and accepted a power struggle between two sparring, suspicious, unequal warriors, rather than a harmonious oneness of two natural, but opposite parties shaking hands.

Sales should be natural, harmonious. Sales should feel good for both parties, not like one party is having their arm twisted.

You may be doing sales wrong if it feels repulsive, icky, or degrading. If you're thinking cringe-worthy thoughts about yourself, your product, service, or sales pitch, imagine what you're projecting to prospects. Don't make it unpleasant, repulsive, or cringeworthy.

Francesca Furchtgott

3 years ago

Giving customers what they want or betraying the values of the brand?

A J.Crew collaboration for fashion label Eveliina Vintage is not a paradox; it is a solution.

Eveliina Vintage's capsule collection debuted yesterday at J.Crew. This J.Crew partnership stopped me in my tracks.

Eveliina Vintage sells vintage goods. Eeva Musacchia founded the shop in Finland in the 1970s. It's recognized for its one-of-a-kind slip dresses from the 1930s and 1940s.

I wondered why a vintage brand would partner with a mass-market retailer. Fast fashion against vintage shopping? Will Eveliina Vintage's customers be turned off?

But Eveliina Vintage's customers don't care about sustainability. They want Eveliina's Instagram look. Eveliina Vintage collaborated with J.Crew to give customers what they wanted: more Eveliina at a lower price.

Vintage: A Fashion Option That Is Eco-Conscious

Secondhand shopping is a trendy response to fast fashion. J.Crew releases hundreds of styles annually. The resulting waste and environmental damage have been criticized. A pair of jeans requires 1,800 gallons of water. J.Crew's limited-time deals promote more purchases. J.Crew items are likely among those Americans wear 7 times before discarding.

Consumers and designers have emphasized sustainability in recent years. Stella McCartney and Eileen Fisher are popular eco-friendly brands. Shoppers have also flocked to ThredUp and similar sites.

Gap, Levis, and Allbirds have listened to consumer requests. They promote recycling, ethical sourcing, and secondhand shopping.

Secondhand shoppers feel good about reusing and recycling clothing that might have ended up in a landfill.

Eco-conscious fashionistas shop vintage. These shoppers enjoy the thrill of the hunt (that limited-edition Chanel bag!) and showing off a unique piece (nobody will have my look!). They also reduce their environmental impact.

Is Eveliina Vintage capitalizing on an aesthetic or is it a sustainable brand?

Eveliina Vintage emphasizes environmental responsibility. Speaking to Vogue, Amanda Musacchia emphasized sustainability. Amanda, founder Eeva's daughter, is a company leader.

But Eveliina's press message doesn't address sustainability, unlike Instagram. Scarcity and fame rule.

Eveliina Vintage's Instagram, which has 53,000+ followers, features see-through dresses and lace-trimmed slip dresses. Celebrities and influencers are often photographed in Eveliina's apparel. Vogue appreciates Eveliina's style. Multiple publications discuss Alexa Chung's Eveliina dress.

Eveliina Vintage markets its one-of-a-kind goods. It teases future content, encouraging visitors to return. Scarcity drives demand and raises clothing prices. One dress is $1,600+, but most are $500-$1,000.

The catch: Eveliina can't monetize its expanding popularity due to exorbitant prices and limited quantity. Why?

Most people can't afford the clothing. Unlike other luxury labels that sell accessories or perfume, Eveliina Vintage lacks more affordable entry-level products.

Many people can't fit into the clothing. Vintage clothing was designed for bodies that differ from those of most women today. Each Eveliina dress's specific measurements are listed alongside it. Be careful: you can fall in love with an ill-fitting dress.

No matter how many people can afford it and fit into it, there is only one item to sell. To get the item before someone else does, those people must be on the Eveliina Vintage website as soon as it becomes available.

A Way for Eveliina Vintage to Make Money (and Expand Its Following) with J.Crew

Eveliina Vintage's cooperation with J.Crew makes commercial sense.

This partnership spreads Eveliina's style at slightly better prices. The $390 outfits include multicolored slips and gauzy cotton gowns. Sizes range from 00 to 24, a wider range than vintage racks offer.

Eveliina Vintage customers like the combination. Excited comments flood the brand's Instagram launch post. Nobody is mocking the 50-year-old vintage brand's fast-fashion partnership.

Vintage may be a sustainable fashion trend, but that's not why Eveliina's clients love the brand. They only care about the old look.

And that is a tale as old as fashion.

You might also like

Alexander Nguyen

3 years ago

A Comparison of Amazon, Microsoft, and Google's Compensation

Learn or earn

In 2020, I started software engineering. My base wage has progressed as follows:

Amazon (2020): $112,000

Microsoft (2021): $123,000

Google (2022): $169,000

I didn't major in math, but those jumps look like more than a 7% raise. Here's a deeper look at the three.
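To put numbers on those jumps, here is a minimal sketch that computes the year-over-year increase from the three base salaries quoted above. The function and variable names are my own, chosen for illustration.

```python
# Base salaries quoted in the article, in US dollars.
base_salaries = {
    "Amazon (2020)": 112_000,
    "Microsoft (2021)": 123_000,
    "Google (2022)": 169_000,
}


def percent_increase(old: float, new: float) -> float:
    """Return the increase from old to new as a percentage of old."""
    return (new - old) / old * 100


# Compare each salary with the one that came before it.
labels = list(base_salaries)
raises = {}
for prev, curr in zip(labels, labels[1:]):
    raises[f"{prev} -> {curr}"] = percent_increase(
        base_salaries[prev], base_salaries[curr]
    )

for step, pct in raises.items():
    print(f"{step}: {pct:.1f}%")
```

Under these figures, the first move works out to roughly a 9.8% raise and the second to roughly 37.4%.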

The Three Categories of Compensation

Most software engineering compensation packages at IT organizations follow this format.

Base Salary

Base salary is pre-tax income. Most organizations give a base pay. This is paid biweekly, twice monthly, or monthly.

Sign-On Bonus

Sign-on bonuses are one-time rewards for new hires. Companies offer them as an incentive to switch. If you leave early, you must pay back the whole amount or a pro-rated amount.

Equity

Equity is complex and requires its own post. A company will promise to give you a certain amount of company stock, but when you get it depends on your offer. A typical schedule pays out 25% per year for 4 years, after which the grant is used up.

If a company gives you $100,000 in equity and distributes 25% every year for 4 years, expect $25,000 worth of company stock in your stock brokerage account on your 1-year work anniversary.

Performance Bonus

Tech offers may include yearly performance bonuses. Depends on performance and funding. I've only seen 0-20%.

Engineers' overall compensation usually includes:

Base Salary + Sign-On + (Total Equity)/4 + Average Performance Bonus
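The formula above can be sketched as a small function. This is only an illustration: the numbers in the example are hypothetical, not from any offer in this post, and treating the performance bonus as a percentage of base salary is my assumption.

```python
def annual_total_compensation(
    base_salary: float,
    sign_on_bonus: float,
    total_equity: float,
    average_bonus_rate: float,
    vesting_years: int = 4,
) -> float:
    """Base Salary + Sign-On + (Total Equity)/4 + Average Performance Bonus.

    Assumption: the performance bonus is a fraction of base salary
    (for example 0.10 for 10%).
    """
    equity_per_year = total_equity / vesting_years
    performance_bonus = base_salary * average_bonus_rate
    return base_salary + sign_on_bonus + equity_per_year + performance_bonus


# Hypothetical offer, for illustration only.
example_tc = annual_total_compensation(
    base_salary=120_000,
    sign_on_bonus=10_000,
    total_equity=100_000,
    average_bonus_rate=0.10,
)
print(f"Estimated first-year total compensation: ${example_tc:,.0f}")
```

Dividing the equity evenly by four is a simplification. As the sections below show, not every company vests evenly, so the real figure for a given year can differ.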

Amazon (TC: 150k)

Base Pay System

Amazon pays Seattle employees monthly on the first work day. I'd rather have my money sooner than later, even if it saves processing and pay statements.

The company upped its base pay cap from $160,000 to $350,000 to compete with other tech companies.

Performance Bonus

Amazon has no performance bonus, so you can work as little or as much as you like and get paid the same. Amazon is savvy to avoid promising benefits it can't deliver.

Sign-On Bonus

Amazon splits its sign-on bonus across two years. For example, you could receive $20,000 in the first year and $15,000 in the second. It's probably to make up for the company's strange equity structure.

If you leave during the first year, you'll owe the entire amount, plus a prorated amount of the second-year bonus.

Equity

Most organizations prefer a 25%, 25%, 25%, 25% equity structure. Amazon takes a different approach with end-heavy equity:

5% after the first year

15% after the second year

20% every six months after that

We thought it was constructed this way to keep staff longer.
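To see how end-heavy that is, here is a rough sketch that turns the schedule into dollar amounts. It assumes the list means 5% at the end of year one, 15% at the end of year two, and 20% every six months for the remaining two years, and it uses a hypothetical $100,000 grant.

```python
# (month of vesting, percent of the grant) under the assumed reading:
# 5% after year one, 15% after year two, then 20% every six months.
AMAZON_SCHEDULE = [(12, 5), (24, 15), (30, 20), (36, 20), (42, 20), (48, 20)]


def vesting_events(total_grant: int, schedule: list) -> list:
    """Return (month, amount vested, cumulative amount) for each event."""
    events = []
    cumulative = 0
    for month, percent in schedule:
        amount = total_grant * percent // 100
        cumulative += amount
        events.append((month, amount, cumulative))
    return events


# Hypothetical grant size, for illustration only.
events = vesting_events(100_000, AMAZON_SCHEDULE)
for month, amount, cumulative in events:
    print(f"Month {month:2d}: +${amount:,} (total ${cumulative:,})")
```

With these assumptions, only $20,000 of the hypothetical $100,000 has vested after two full years, compared with $50,000 under an even 25% split.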

Microsoft (TC: 185k)

Base Pay System

Microsoft paid biweekly.

Performance Bonus

My offer letter suggested a 0%-20% performance bonus. Most people would be satisfied with a 10% bonus at year's end.

But misleading press about the bonus budget being doubled can upset some employees, because they won't earn double their expected bonus. The 2022 average was still barely 10%.

Sign-On Bonus

Microsoft's sign-on bonus is a one-time payout. The contract may require 2 years of employment, so you have to negotiate it down to 1 year. It's pro-rated, so that's fair.

Equity

Microsoft is one of those companies that has a standard 25% equity structure, unless you're a new graduate.

In that case it'll be

25% after six months

25% each year following that

New grads will acquire equity in 3.5 years, not 4. I'm guessing it's to keep new grads around longer.

Google (TC: 300k)

Base Pay Structure

Google pays biweekly.

Performance Bonus

Google's offer letter specifies a 15% bonus. It's wonderful there's no cap, but I might still get 0%. A little more than Microsoft’s 10% and a lot more than Amazon’s 0%.

Sign-On Bonus

Google gave a sign-on bonus with a 1-year commitment. Since the contract is only 1 year, I can move after that without any extra obligations.

Not as fantastic as Amazon's sign-up bonuses, but the remainder of the package might compensate.

Equity

We covered Amazon's tail-heavy compensation structure, so Google's front-heavy equity structure may surprise you.

Annual structure breakdown

33% Year 1

33% Year 2

22% Year 3

12% Year 4

The goal is to get people to Google and keep them there.
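A quick way to compare the three approaches is to look at how much of a grant has vested by the end of each year. The sketch below uses the percentages from this post. The Amazon row assumes the six-monthly 20% tranches add up to 40% in each of years three and four, and the labels are my own.

```python
from itertools import accumulate

# Percent of the grant vesting in each of the four years.
YEARLY_VESTING_PERCENT = {
    "Even split (25% a year)": [25, 25, 25, 25],
    "Amazon (end-heavy)": [5, 15, 40, 40],
    "Google (front-heavy)": [33, 33, 22, 12],
}


def cumulative_vested(yearly_percents: list) -> list:
    """Return the running total of vested percent after each year."""
    return list(accumulate(yearly_percents))


cumulative = {
    name: cumulative_vested(percents)
    for name, percents in YEARLY_VESTING_PERCENT.items()
}

for name, totals in cumulative.items():
    by_year = ", ".join(f"Y{year}: {pct}%" for year, pct in enumerate(totals, 1))
    print(f"{name:<26} {by_year}")
```

After two years, the front-heavy schedule has paid out 66% of the grant, the even split 50%, and the end-heavy schedule 20%.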

Final Thoughts

This post hopefully helped you understand the 3 firms' compensation arrangements.

There's always more to discuss, such as refreshers, 401k benefits, and business discounts, but I hope this shows a distinction between these 3 firms.

Katrine Tjoelsen

3 years ago

8 Communication Hacks I Use as a Young Employee

Learn these subtle cues to gain influence.

Hate being ignored?

As a 24-year-old, I struggled at work. How could I get attention? How could I avoid being judged by my size, gender, and lack of wrinkles or gray hair?

I've since learned hacks for projecting seniority and influence. Within two years as a product manager, I led a team. I'm a Stanford MBA student.

These communication hacks can make you look senior and influential.

1. Speak slowly

We speak quickly because we're afraid of being interrupted.

When I doubt my ideas, I speak quickly. How can we slow down? Jamie Chapman says speaking slowly saps our energy.

Chapman suggests emphasizing certain words and pausing.

2. Interrupted? Stop the stopper

Did someone interrupt you while you were speaking?

Don't wait. Say "May I finish?" with no pause, and the interruption stops. I first tried this in Leadership Laboratory at Stanford. How quickly I gained influence amazed me.

Next time, try “May I finish?” If that’s not enough, try these other tips from Wendy R.S. O’Connor.

3. Context

Others don't always see what's obvious to you.

Through explanation, you help others see the big picture. Even if a senior colleague already knows it, you help them see where your work fits.

4. Don't ask questions in statements

“Your statement lost its effect when you ended it on a high pitch,” a group member told me. Upspeak, it’s called. I do it when I feel uncertain.

Upspeak costs you influence and credibility, and it's unnecessary. When unsure, we can say "I think." We can even ask a proper question.

Someone else's upspeak is no reason to be dismissive. As leaders and colleagues, we should listen to our colleagues even if they use this speech pattern.

Give your words impact.

5. Signpost structure

Signposts improve clarity by providing structure and transitions.

Communication coach Alexander Lyon explains how to use "first," "second," and "third." He explains classic and summary transitions to help the listener switch topics.

Signposts clarify. Clarity matters.

6. Eliminate email fluff

“Fine. When will the report be ready? — Jeff.”

Notice how senior leaders write short, direct emails? I often use formalities like "dear," "hope you're well," and "kind regards."

Formality is (usually) unnecessary.

7. Replace exclamation marks with periods

See how junior an exclamation-filled email looks:

Hi, all!

Hope you’re as excited as I am for tomorrow! We’re celebrating our accomplishments with cake! Join us tomorrow at 2 pm!

See you soon!

Why the exclamation points? Why not just one?

Hi, all.

Hope you’re as excited as I am for tomorrow. We’re celebrating our accomplishments with cake. Join us tomorrow at 2 pm!

See you soon.

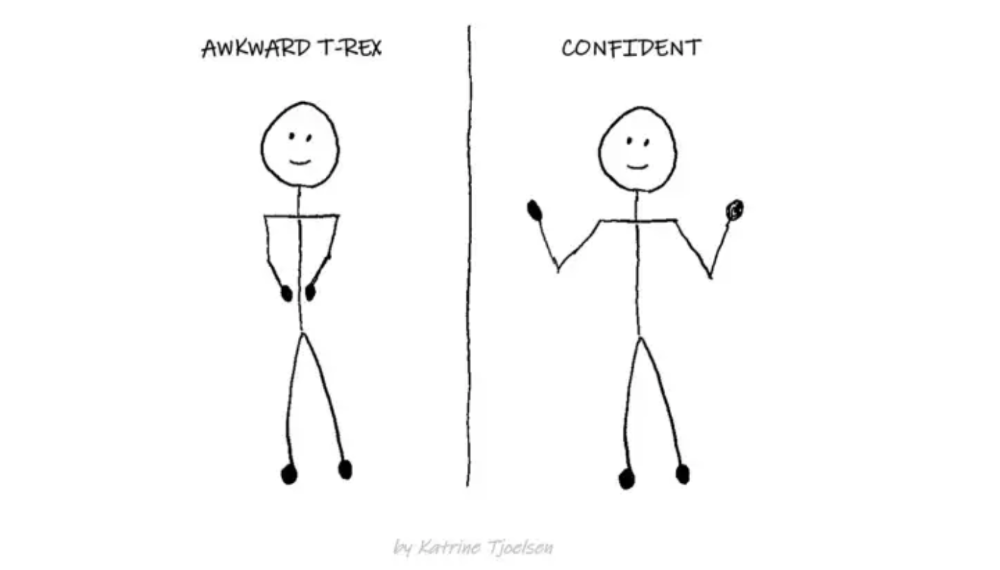

8. Take space

"Playing high" means having an open, relaxed body, says Stanford professor and author Deborah Gruenfeld.

Crossed legs or looking small? Relax. Get bigger.

Mia Gradelski

3 years ago

Six Things Best-With-Money People Do Follow

I shouldn't generalize, yet this is true.

Spending is simpler than earning.

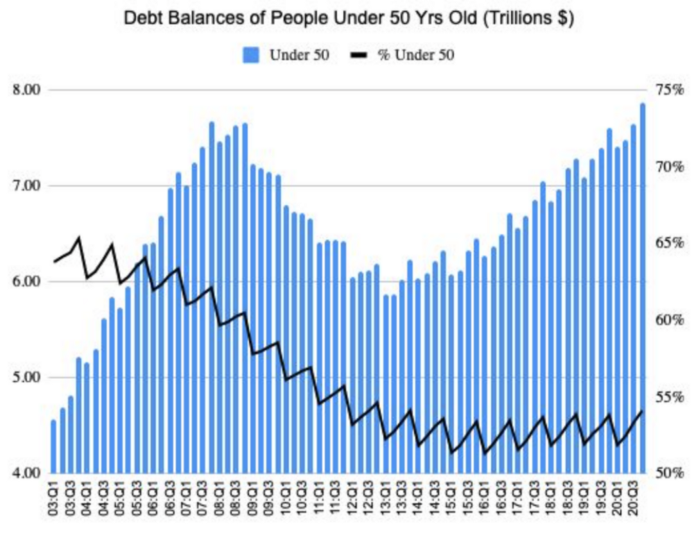

Prove me wrong, but with home debt at $145k in 2020 and individual debt at $67k, people don't have their priorities straight.

Where does this debt come from?

Under-50 Americans owed $7.86 trillion in Q4 2020. That's more than the US's 3-trillion-dollar deficit.

Here’s a breakdown:

🏡 Mortgages/Home Equity Loans = $5.28 trillion (67%)

🎓 Student Loans = $1.20 trillion (15%)

🚗 Auto Loans = $0.80 trillion (10%)

💳 Credit Cards = $0.37 trillion (5%)

🏥 Other/Medical = $0.20 trillion (3%)

Images.google.com
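As a sanity check on the breakdown above, this short sketch recomputes each category's share from the dollar figures listed. The category labels are copied from the list, and the variable names are mine.

```python
# Debt owed by under-50 Americans, in trillions of US dollars,
# as listed in the breakdown above.
debt_trillions = {
    "Mortgages/Home Equity Loans": 5.28,
    "Student Loans": 1.20,
    "Auto Loans": 0.80,
    "Credit Cards": 0.37,
    "Other/Medical": 0.20,
}

total = sum(debt_trillions.values())

# Each category's share of the total, rounded to a whole percent.
shares = {
    category: round(amount / total * 100)
    for category, amount in debt_trillions.items()
}

print(f"Sum of listed categories: ${total:.2f} trillion")
for category, share in shares.items():
    print(f"{category}: {share}%")
```

The listed categories add up to $7.85 trillion, just under the $7.86 trillion headline figure, which is presumably a rounding difference. The rounded shares match the percentages in the list.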

At least the Fed and government can explain themselves with their debt balance which includes:

-Providing stimulus packages 2x for Covid relief

-Stabilizing the economy

-Reducing inflation and unemployment

-Providing for the military, education and farmers

No American should have this much debt.

Don’t get me wrong. Debt isn’t all the same. Yes, it’s a negative number but it carries different purposes which may not be all bad.

Good debt: Use those funds in hopes of them appreciating as an investment in the future

-Student loans

-Business loan

-Mortgage, home equity loan

-Experiences

Paying cash for a home is wasteful. You should only do so if the home is exceptionally rare, one in a million on the market, and an incredible bargain with numerous bidders pushing the price higher.

In that case, pay cash to impress the seller, because they can close quickly. Most people can't afford most properties outright. Only 15% of U.S. homebuyers can afford their home. Zillow reports that only 37% of homes are mortgage-free.

People have clearly overreached.

Ignore appearances.

5% down can buy a 10-bedroom mansion.

Not paying in cash isn't necessarily a negative thing, given that property prices have increased by 30% since 2008. Throughout the pandemic, we've also seen work-from-homers relocate to the Midwest, avoiding pricey coastal cities like NYC and San Francisco.

By no means do I think NYC is dead. Nothing will replace this beautiful city that never sleeps, and now is the perfect time to rent or buy, with everything below average value, for people who always wanted to come but never could. Once social distancing ends, cities will recover. 24/7 sardine-packed subways prove New York isn't designed for isolation.

When buying a home, pay 20% in cash and finance the balance with a mortgage. Budget for the mortgage alongside other costs such as maintenance, brokerage fees, and property taxes. If you're stuck on why a home isn't right for you, read here. A mortgage must be paid until the end of its term. Whether it's a 10-year or 30-year fixed mortgage, it's better to refinance and pay down your debt while interest rates are low, especially now that the 10-year yield is inching towards 1.25%. The Fed will eventually inch interest rates up, following the 10-year, to stabilize the economy, but I believe that won't happen until after Covid, a period in which businesses like luxury, air travel, and tourism will get bashed.

Bad debt: I guess the contrary must be true. There is no way to profit from the loan in the future, therefore it is just money down the drain.

-Luxury goods

-Credit card debt

-Fancy junk

-Vacations, weddings, parties, etc.

Credit cards and student loans are the two largest risks to the financial security of those under 50. Banks love to compound interest, which hurts your credit score and makes it tougher to take out more loans (not that you should with that much debt anyhow). With a low credit score and heavy debt, banks take advantage of you: because you need their help, you pay more for their services. Paying back debt is the challenge for most.

Choose Not Chosen

As a financial literacy advocate and blogger, I prefer not to brag, but I will now. I know what to buy and what to avoid. My parents educated me to live a frugal, minimalist stealth wealth lifestyle by choice, not because we had to.

That's the lesson.

The poorest person who shows off with bling is trying to seem rich.

Rich people know garbage is a bad investment. Investing in education is one of the best long-term investments. With information, you can do anything.

People who are good with money shun some items out of respect and appreciation for what they have.

Less is more.

Instead of keeping up with the Joneses, use what you have. They may look cheerful and stylish in their 20k sq ft home, yet they may be as broke as OJ Simpson in his 20-bedroom mansion.

Let's look at what is good to follow if you want to maintain your wealth.

#1: Quality comes before quantity

Being frugal doesn't entail being cheap and cruel. Rich individuals care about relationships and treating others correctly, not impressing them. You don't have to be rich to be good with money, although most are since they don't live the fantasy lifestyle.

Underspending is appreciating what you have.

Many people believe washing chemical-laden produce makes it the same as organic food. Hopefully. Organic, vegan, fresh vegetables from upstate may be more expensive in the short term, but they will help you live longer and save you money in the long run.

Consider this. You'll save thousands a month eating McDonald's 3x a day instead of fresh seafood, veggies, and organic fruit, but your life will be shortened. If you want to save money and die early, go ahead, but I assume we all want to break the world record for longest-living person and would rather spend less. Plus, elderly people get tax breaks, Medicare, pensions, 401(k)s, etc. You're living for free, therefore eating fast food forever is a terrible decision.

With a few extra years, you may make hundreds or millions more in the stock market, spend more time with family, and just live.

Folks, health is wealth.

Consider the future benefit, not simply the cash sign. Cheapness is useless.

Same with stuff. Don't stock your closet with fast-fashion you can't wear for years. Buying inexpensive goods that will fail tomorrow is stupid.

Investing isn't only in stocks. You're living. Consume less.

#2: If you cannot afford it twice, you cannot afford it once

I learned this from my dad in 6th grade. I've been lucky to travel, experience things, go to a great university, and have many experiences that others without a stable, decent lifestyle can't afford.

I didn't live this way because of my parents' paycheck or financial knowledge.

It came from saving and making deliberate choices.

I always bring cash when I shop. I skip Apple Pay and credit cards because they let me spend all I want, even when my account is overdrawn.

Banks are nasty. When you lose money, they profit.

Cash cuts into banks' profits. Carrying a big, hefty wallet full of cash is lame and annoying, but it's the best way to spend only what you need. Not for vacations, but for small daily expenses.

Physical currency lets you know how much you have for lunch or a taxi.

Because it's physical, once it's gone you stop spending, which keeps you out of debt.

If you can't afford it, it will do more harm than good.

#3: You really can purchase happiness with money.

If used correctly, yes.

Happiness and satisfaction differ.

Money won't bring you fulfillment, because that comes from working hard on your own to help others, but it does let you travel and meet people you wouldn't otherwise meet.

You can meet your future co-worker or strike a deal while waiting an hour in first class for takeoff, or you can meet renowned people at a networking brunch.

See a pattern here?

Your time and money are best spent on connections. Not automobiles or firearms. That’s just stuff. It doesn’t make you a better person.

If you earn less, take a different approach. Instead of trying to win the lottery or become an NFL star for your first big paycheck, network online for free.

Be resourceful. Sign up for LinkedIn, post regularly, and leave up the posts that get little engagement, because that shows confidence.

Consistency is beneficial.

I did that for a few months and met amazing people who helped me get jobs. Money doesn't create jobs; it creates opportunities.

Resist social media hype and scammers who peddle false hope.

Choose wisely.

#4: Avoid gushing over labels and buying junk.

As Insider’s Hillary Hoffower reports, “Showing off wealth is no longer the way to signify having wealth. In the US particularly, the top 1% have been spending less on material goods since 2007.”

I checked my closet. No brand comes to mind. I've never worn a brand's logo, and I rotate among six white shirts. I have my priorities and don't waste money or effort on clothing that won't fit me in a year.

Unless it's your full-time job, picking out clothes shouldn't take up your mornings.

That's the stealth-wealth lifestyle. You're so fulfilled that looking homeless won't hurt your self-esteem.

That's self-assurance.

You don't have to be an extrovert.

That's irrelevant.

Showing off won't win you friends.

People will like you for your personality.

#5: Time is the most valuable commodity.

Being rich doesn't mean working around the clock, Monday through Friday.

Rich people work when they are ready to work.

Waking up at 5 a.m. won't make you a millionaire, but it will build diligence and tenacity.

Say you have a busy day but still want to exercise. You can skip the workout, or you can wake up at 4 a.m. instead of 6 a.m. to fit it in.

Emotion-driven lazy bums stay in bed.

Those who are accountable keep their promises because they know breaking one will derail their week.

Since 7th grade, I've worked out at 5 a.m. for myself, not to impress others. It gives me more energy to contribute to others, especially on weekends and holidays.

It's a habit that I have in my life.

Find something you take seriously that makes you a better person.

As someone who is close to becoming a millionaire and has met many millionaires throughout my life, I can share a few important things they do differently on the weekends:

- Read
- Sleep
- Best time to work with no distractions
- Eat together
- Take walks and be in nature
- Gratitude
- Major family time
- Plan out weeks
- Go grocery shopping because health = wealth

#6: Perspective is important

Trying to time the markets will slow down your career. Professors preach scarcity, not abundance. Why expect school to teach success? It gives us bad advice.

If you trust in abundance and make your own luck by trying and experimenting, growth will come more easily. Passion doesn't just appear. Mistakes and new people help you find it. You can make money, but if you don't think it's worth it, you won't.

You don't have to be wealthy to be good with money, but most people who are good with it become wealthy for these reasons. Rich is a mindset; wealth is power. Prioritize your resources. Invest in yourself, knowing the toughest part is starting.

Thanks for reading!