More on NFTs & Art

Nate Kostar

3 years ago

# Deadmau5’s Pixelynx and Beatport Launch Festival NFTs

Pixelynx, a music metaverse gaming platform, has teamed up with Beatport, an online music retailer focusing on electronic music, to launch a Synth Heads non-fungible token (NFT) collection.

Joel Zimmerman, aka Deadmau5, and Richie Hawtin, aka Plastikman, co-founded Pixelynx, a new music metaverse gaming platform. In January 2022, they released their first Beatport NFT drop, which saw 3,030 generative NFTs sell out in seconds.

The limited edition Synth Heads NFTs will be released in collaboration with Junction 2, the largest UK techno festival, and having one will grant fans special access tickets and experiences at the London-based festival.

Membership in the Synth Head community, day passes to the Junction 2 Festival 2022, Junction 2 and Beatport apparel, special vinyl releases, and continued access to future ticket drops are just a few of the experiences available.

Five lucky NFT holders will also receive a Golden Ticket, which includes access to a backstage artist bar and tickets to Junction 2's next large-scale London event this summer, in addition to full festival entrance for both days.

The Junction 2 festival will take place at Trent Park in London on June 18th and 19th, and will feature performances from Four Tet, Dixon, Amelie Lens, Robert Hood, and a slew of other artists. Holders of the original Synth Head NFT will be granted admission to the festival's guestlist as well as line-jumping privileges.

The new Synth Heads NFT collection contains 300 NFTs.

NFTs that provide IRL utility are in high demand.

The benefits of NFT drops related to In Real Life (IRL) utility aren't limited to Beatport and Pixelynx.

Coachella, a well-known music event, recently partnered with cryptocurrency exchange FTX to offer free NFTs to 2022 pass holders. Access to a dedicated entry lane, a meal and beverage pass, and limited-edition merchandise were all included with the NFTs.

Coachella also has its own NFT store on the Solana blockchain, where fans can buy Coachella NFTs and digital treasures that unlock exclusive on-site experiences, physical objects, lifetime festival passes, and "future adventures."

Individual artists and performers have begun taking advantage of NFT technology outside of large music festivals like Coachella.

DJ Tiësto has revealed that he will release a VIP NFT for his upcoming "Eagle" collection during the EDC festival in Las Vegas in 2022. This NFT, dubbed "All Access Eagle," gives collectors the best chance to get NFTs from his first drop, as well as exclusive access to the song "Repeat It."

NFTs are one-of-a-kind digital assets that can be verified, purchased, sold, and traded on blockchains, opening up new possibilities for artists and businesses alike. Time will tell whether Beatport and Pixelynx's Synth Head NFT collection will be successful, but if it's anything like the first release, it's a safe bet.

Vishal Chawla

3 years ago

5 Bored Apes borrowed to claim $1.1 million in APE tokens

Takeaway

An unknown user took advantage of the ApeCoin airdrop to earn $1.1 million.

They used a flash loan to borrow five BAYC NFTs, claim the airdrop, and return the NFTs.

Yesterday, Yuga Labs, the creators of BAYC, airdropped ApeCoin (APE) to anyone who owns one of their NFTs.

For the Bored Ape Yacht Club and Mutant Ape Yacht Club collections, the team allocated 150 million tokens, or 15% of the total ApeCoin supply, worth over $800 million. Each BAYC holder received 10,094 tokens worth $80,000 to $200,000.

But someone managed to claim the airdrop using NFTs they didn't own. They used the airdrop's specific features to carry it out. And it worked, earning them $1.1 million in ApeCoin.

The trick was that the ApeCoin airdrop wasn't based on who owned which Bored Ape at a given time. Instead, anyone with a Bored Ape at the time of the airdrop could claim it. So if you gave someone your Bored Ape and you hadn't claimed your tokens, they could claim them.

The person only needed to get hold of some Bored Apes that hadn't had their tokens claimed to claim the airdrop. They could be returned immediately.

So, what happened?

The person found a vault with five Bored Ape NFTs that hadn't been used to claim the airdrop.

A vault tokenizes an NFT or a group of NFTs. You put a bunch of NFTs in a vault and make a token. This token can then be staked for rewards or sold (representing part of the value of the collection of NFTs). Anyone with enough tokens can exchange them for NFTs.
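As a rough illustration of the vault idea described above, here is a toy Python model. It assumes one fungible vault token per deposited NFT and ignores fees; the class and method names are hypothetical and are not taken from any real protocol's contracts.

```python
class Vault:
    """Toy NFT vault: depositing an NFT mints one token, burning one token redeems an NFT."""

    def __init__(self):
        self.nfts = set()      # token IDs currently held by the vault
        self.balances = {}     # holder -> number of vault tokens

    def deposit(self, owner, token_id):
        """Lock an NFT in the vault and mint one vault token to the depositor."""
        self.nfts.add(token_id)
        self.balances[owner] = self.balances.get(owner, 0) + 1

    def transfer(self, sender, recipient, amount):
        """Move vault tokens between holders (for example, after a sale)."""
        if self.balances.get(sender, 0) < amount:
            raise ValueError("insufficient vault tokens")
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount

    def redeem(self, holder, token_id):
        """Burn one vault token and release the chosen NFT to the holder."""
        if self.balances.get(holder, 0) < 1:
            raise ValueError("insufficient vault tokens")
        if token_id not in self.nfts:
            raise KeyError("NFT not in vault")
        self.balances[holder] -= 1
        self.nfts.remove(token_id)
        return token_id


vault = Vault()
vault.deposit("alice", 7594)          # alice locks an NFT and receives one token
vault.transfer("alice", "bob", 1)     # bob buys the token
redeemed = vault.redeem("bob", 7594)  # bob exchanges the token for the NFT
```

The point of the sketch is that whoever holds enough vault tokens can pull an NFT out, regardless of who originally deposited it.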

This vault uses the NFTX protocol. In total, it contained five Bored Apes: #7594, #8214, #9915, #8167, and #4755. Nobody had claimed the airdrop because the NFTs were locked up in the vault and not controlled by anyone.

The person wanted to unlock the NFTs to claim the airdrop but didn't want to buy them outright, so they used a flash loan, a common tool for large DeFi hacks. Flash loans are a low-cost way to borrow large amounts of crypto that are repaid in the same transaction and block (meaning that the funds are never at risk of not being repaid).

With a flash loan of under $300,000 they bought a Bored Ape on NFT marketplace OpenSea. A large amount of the vault's token was then purchased, allowing them to redeem the five NFTs. The NFTs were used to claim the airdrop, before being returned, the tokens sold back, and the loan repaid.

During this process, they claimed 60,564 ApeCoin tokens from the airdrop. They then sold them on Uniswap for 399 ETH ($1.1 million). Then they returned the Bored Ape NFT used as collateral to the same NFTX vault.
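The 60,564 figure is consistent with six Bored Apes claiming at 10,094 tokens each: the one bought on OpenSea plus the five redeemed from the vault. That is an inference from the article's numbers, not something it states outright. The sketch below replays the sequence in simplified Python; the `flash_loan` helper, the loan amount, and the zero-fee assumption are all placeholders for illustration, not the actual on-chain code.

```python
APE_PER_BAYC = 10_094   # ApeCoin claimable per Bored Ape, per the article
PURCHASED_APES = 1      # bought on OpenSea with the borrowed funds
VAULT_APES = 5          # redeemed from the NFTX vault


class LoanNotRepaid(Exception):
    """Models a reverted transaction: the loan was not returned in full."""


def flash_loan(amount, use_funds):
    """Lend `amount`, run `use_funds(amount)`, and require full repayment.

    `use_funds` returns a (repaid, result) pair. If `repaid` is less than
    `amount`, the call fails, standing in for the whole transaction reverting.
    """
    repaid, result = use_funds(amount)
    if repaid < amount:
        raise LoanNotRepaid(f"repaid {repaid}, owed {amount}")
    return result


def claim_with_borrowed_apes(borrowed):
    """Toy version of the steps above; amounts are placeholders, not real prices."""
    apes_held = PURCHASED_APES + VAULT_APES   # buy one ape, redeem five from the vault
    ape_claimed = apes_held * APE_PER_BAYC    # claim the airdrop for every ape held
    # The apes go back into the vault and the vault tokens are sold, so the
    # borrowed funds are available again for repayment (fees ignored here).
    return borrowed, ape_claimed


total_claimed = flash_loan(300_000, claim_with_borrowed_apes)
print(total_claimed)  # 60564
```

Because the loan is opened and repaid inside one call, the lender is never exposed, while the borrower walks away with the claimed tokens.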

Attack or arbitrage?

However, security firm BlockSecTeam disagreed with the many social media commentators who saw the move as arbitrage. A flaw in the airdrop-claiming mechanism was exploited, it said.

According to BlockSecTeam's analysis, the user took advantage of a "vulnerability" in the airdrop.

"We suspect a hack due to a flaw in the airdrop mechanism. The attacker exploited this vulnerability to profit from the airdrop claim," said BlockSecTeam.

For example, the airdrop could have taken into account how long a person owned the NFT before claiming the reward.

Because Yuga Labs didn't take a snapshot, anyone could buy the NFT in real time and claim it. This is probably why BAYC sales exploded so soon after the airdrop announcement.
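To make the difference concrete, here is a minimal Python sketch contrasting a claim checked against live ownership with one checked against a snapshot. The `Airdrop` class and the holder names are hypothetical; this models the idea only and is not Yuga Labs' contract.

```python
class Airdrop:
    """Toy airdrop: a token ID can be claimed once, by whoever the eligibility map names."""

    def __init__(self, eligibility):
        self.eligibility = eligibility   # token_id -> address allowed to claim
        self.claimed = set()

    def claim(self, caller, token_id):
        """Return True if the claim succeeds, False otherwise."""
        if token_id in self.claimed:
            return False
        if self.eligibility.get(token_id) != caller:
            return False
        self.claimed.add(token_id)
        return True


owners = {7594: "vault"}                 # live ownership registry

live_airdrop = Airdrop(owners)           # checks the live registry at claim time
snapshot_airdrop = Airdrop(dict(owners)) # checks a frozen copy taken beforehand

owners[7594] = "borrower"                # the NFT changes hands after the snapshot

live_ok = live_airdrop.claim("borrower", 7594)
snapshot_ok = snapshot_airdrop.claim("borrower", 7594)
print(live_ok, snapshot_ok)  # True False
```

With the live check, a temporary holder can claim; with the snapshot, only the address recorded at snapshot time can.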

Jim Clyde Monge

2 years ago

Can You Sell Images Created by AI?

Some AI-generated artworks sell for enormous sums of money.

But can you sell AI-Generated Artwork?

Simple answer: yes.

However, not all AI services allow usage and redistribution of images.

Let's check some of my favorite AI text-to-image generators:

Dall-E2 by OpenAI

The AI art generator Dall-E2 is powerful. Since it’s still in beta, you can join the waitlist here.

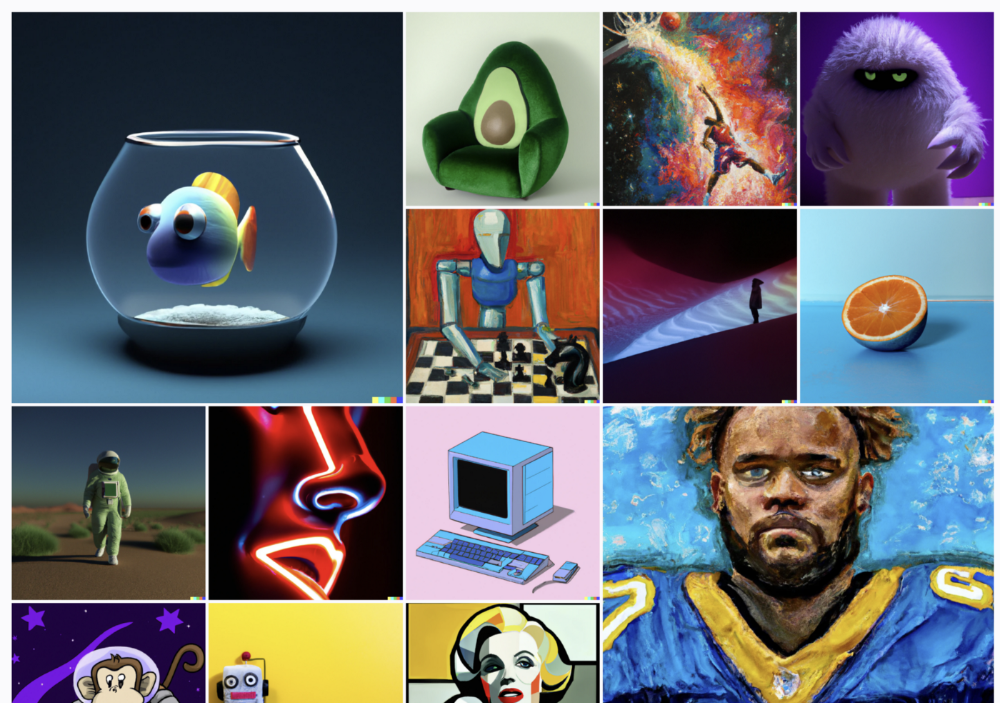

OpenAI DOES NOT allow the use and redistribution of any image for commercial purposes.

Here's the policy as of April 6, 2022.

Here are some images from Dall-E2’s webpage to show its art quality.

Several Reddit users reported receiving pricing surveys from OpenAI.

This suggests the company may bring out a subscription-based tier and a commercial license to sell images soon.

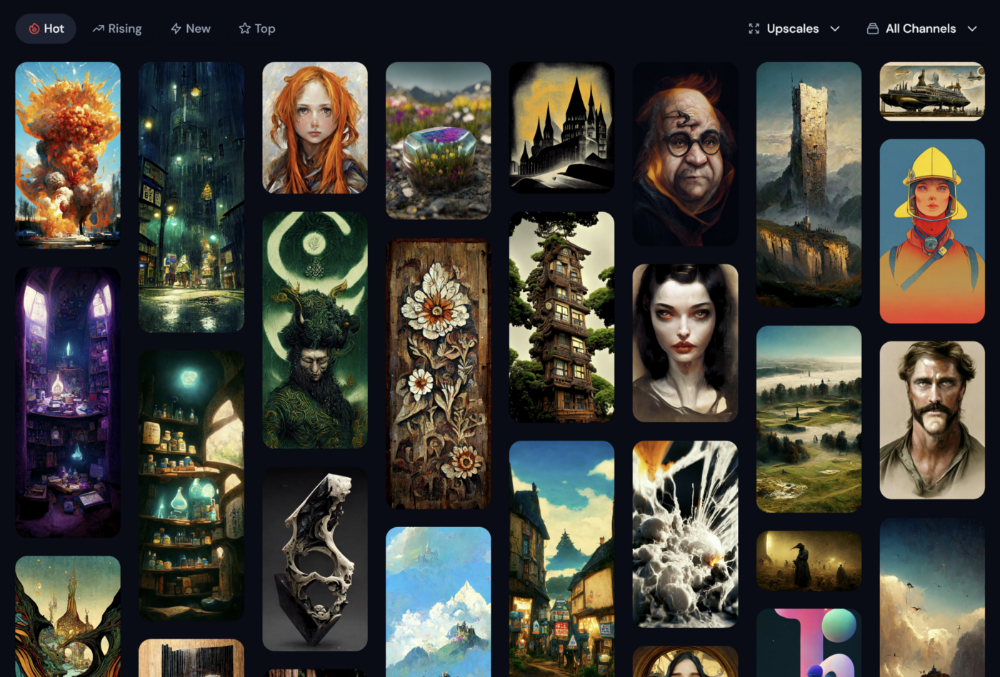

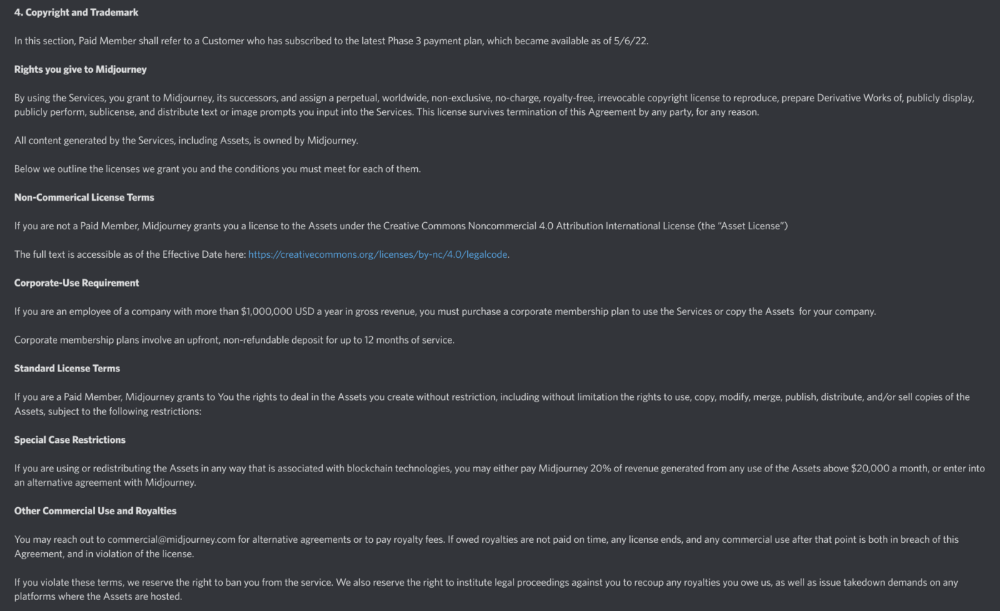

MidJourney

I like Midjourney's art generator. It makes great AI images. Here are some samples:

Standard Licenses are available for $10 per month.

Standard License allows you to use, copy, modify, merge, publish, distribute, and/or sell copies of the images, except for blockchain technologies.

If you utilize or distribute the Assets using blockchain technology, you must pay MidJourney 20% of revenue above $20,000 a month or engage in an alternative agreement.
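As a quick worked example of that clause, the sketch below assumes the 20% applies only to the portion of monthly revenue above $20,000. That reading is an assumption; the actual terms may be interpreted differently, and the function name is hypothetical.

```python
def blockchain_royalty(monthly_revenue, threshold=20_000.0, rate=0.20):
    """Royalty owed if the rate applies only to revenue above the threshold (assumed reading)."""
    return max(0.0, monthly_revenue - threshold) * rate


below = blockchain_royalty(15_000)   # under the threshold, nothing owed
above = blockchain_royalty(25_000)   # 20% of the 5,000 above the threshold
print(below, above)
```

Under this reading, a month with $25,000 in blockchain-related revenue would owe about $1,000.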

Here's their copyright and trademark page.

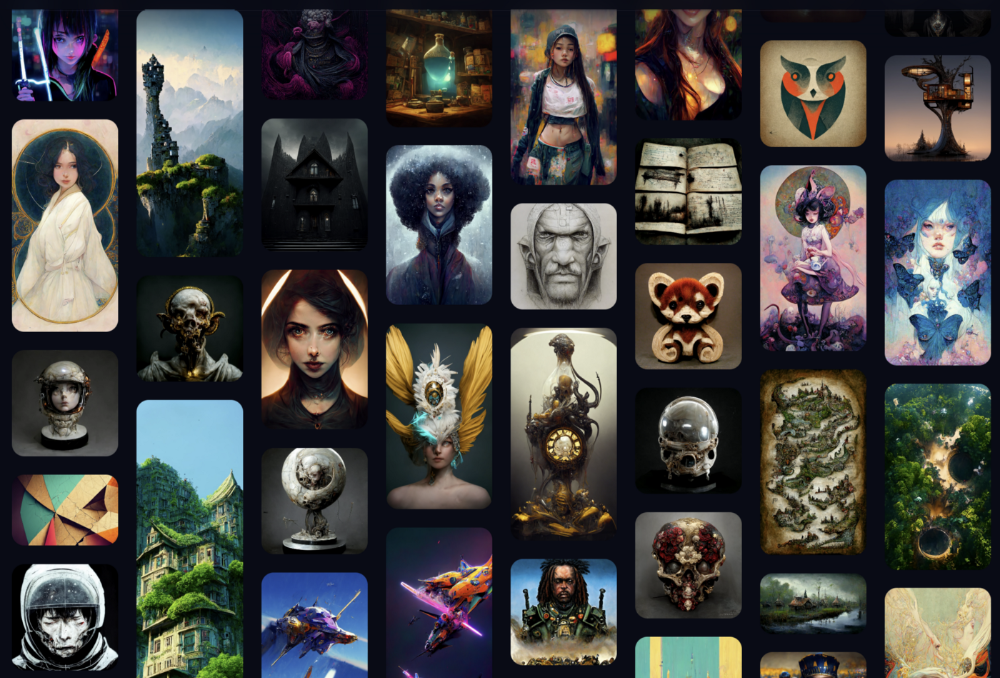

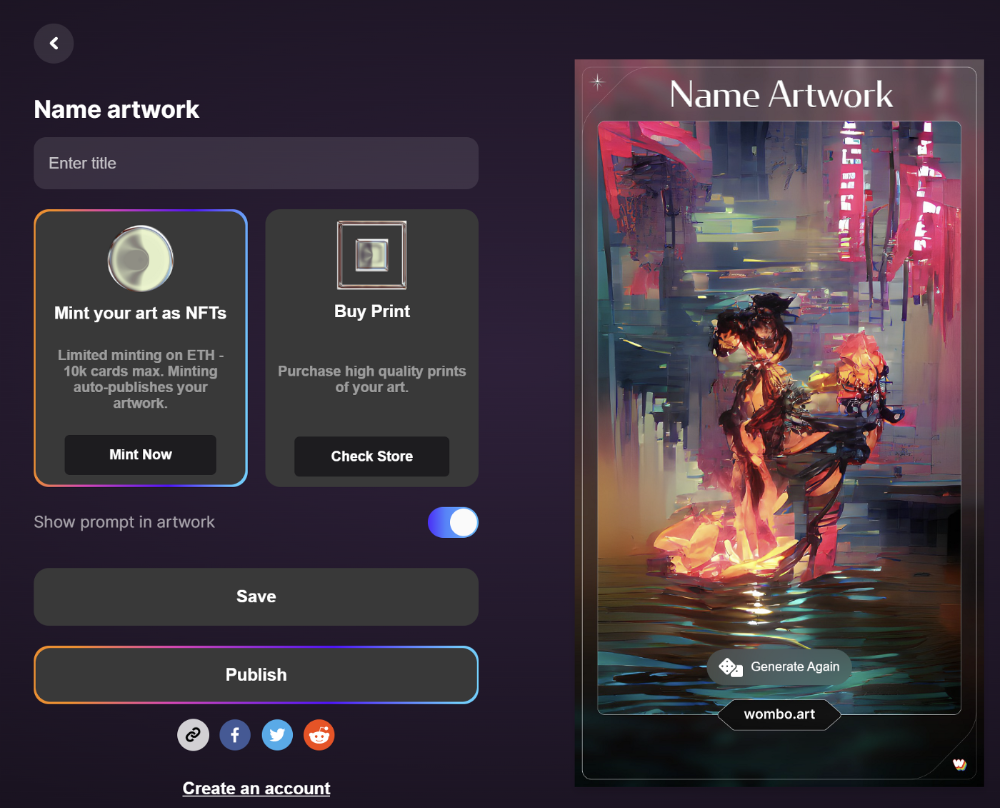

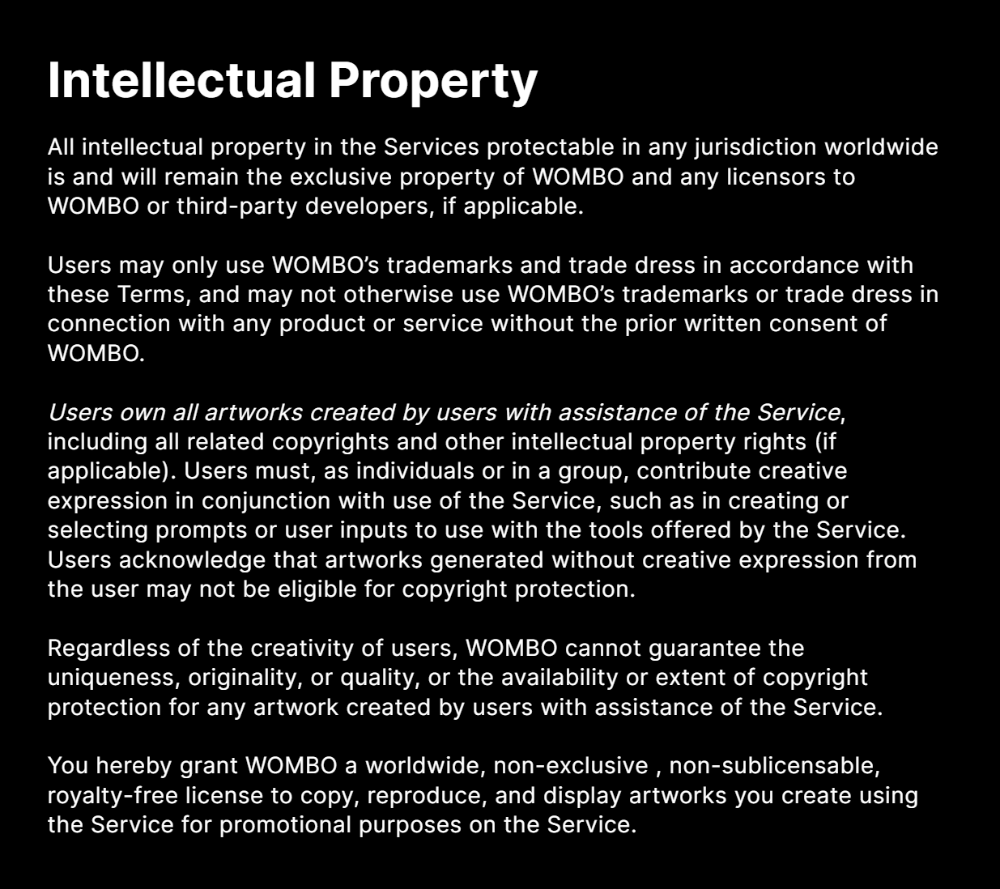

Dream by Wombo

Dream is one of the first public AI art generators.

This AI program is free, easy to use, and Wombo gives a royalty-free license to copy or share artworks.

Users own all artworks generated by the tool, including all related copyrights or intellectual property rights.

Here’s Wombo's intellectual property policy.

Final Reflections

AI is creating a new sort of art that's selling well. It’s becoming popular and valued, despite some skepticism.

Now that you know MidJourney and Wombo let you sell AI-generated art, you need to locate buyers. There are several ways to achieve this, but that’s for another story.

You might also like

Niharikaa Kaur Sodhi

2 years ago

The Only Paid Resources I Turn to as a Solopreneur

4 Pricey Tools That Are Valuable

I pay based on ROI (return on investment).

If a $20/month tool or $500 online course doubles my return, I'm in.

Investing helps me build wealth.

Canva Pro

I initially refused to pay.

My course content needed updating a few months ago. My Google Docs text looked cleaner and more professional in Canva.

I've used it to create:

Product cover pages

eBook covers

Product page infographics

See my Google Sheets vs. Canva product page graph.

Google Sheets vs Canva

Yesterday, I used it to make a LinkedIn video thumbnail. It took less than 5 minutes and improved my video.

In 30 hours, the video had 39,000 views.

Here's more.

HypeFury

Hypefury rocks!

It builds my brand as I sleep. What else?

Because I'm traveling this weekend, I planned tweets for 10 days. It took me 80 minutes.

So while I travel or am absent, my content mill keeps producing.

Also I like:

Auto-DMs: I can reach hundreds of people with them. I use them to advertise freebies; for instance, leave an emoji comment to receive my checklist, and you automatically receive a message in your DMs.

Scheduled Retweets: By appearing in a different time zone, they give my tweet a second chance.

It helps me save time and expand my following, so that's my favorite part.

It’s also super neat:

Zoom Pro

My course involves weekly and monthly calls for alumni.

Google Meet isn't great for group calls. The interface isn't great.

Zoom Pro is expensive, and the monthly payments suck, but it's necessary.

It gives my students a smooth experience.

Previously, we'd do 40-minute meetings and then reconvene.

Zoom's free edition limits group calls to 40 minutes.

If I had paid hundreds of dollars for an online course, that wouldn't feel like a good experience.

So I felt obligated to help.

YouTube Premium

My laptop has an ad blocker.

I bought an iPad recently.

When you're self-employed and work from home, the line between the two blurs. My bed is only 5 steps away!

When I read or watched videos on my laptop, I'd slide into work mode. The only option was to watch on my phone, which is awkward.

YouTube Premium handles it. No more advertisements, and I can listen on the move.

3 Expensive Tools That Aren't Valuable

Marketing strategies are sometimes designed to make you feel you need 38474 cool features when you don’t.

Certain tools are useless.

At least, I found them useless.

It depends on your needs. As a writer and creator, I get no return from them.

They could be useful for other jobs.

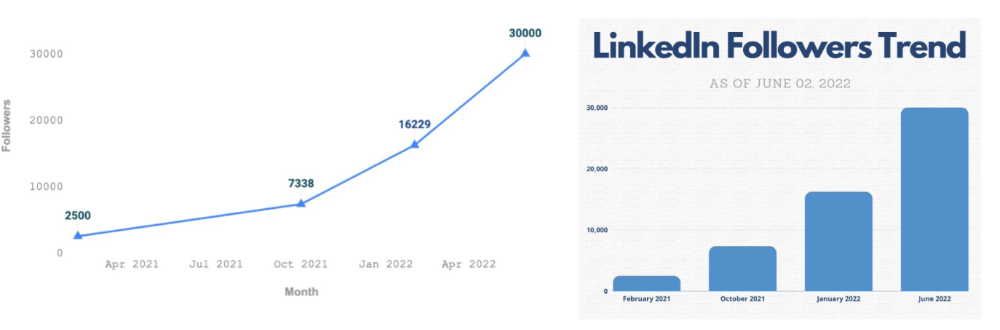

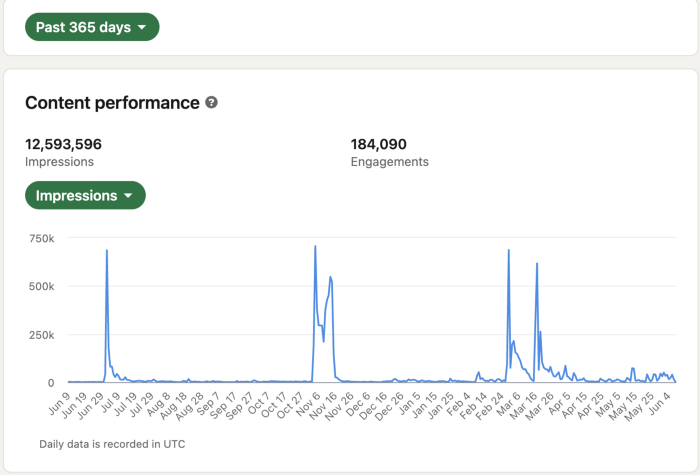

Shield Analytics

It tracks LinkedIn stats, like:

follower growth

trend chart for impressions

Engagement, views, and comment stats for posts

and much more.

The middle-tier Creator plan costs $12/month.

I got a 25% off coupon but canceled my free trial before writing this. It's not worth the discount.

Why?

LinkedIn provides free analytics. See:

They're not as thorough and won't show top posts.

I don't need to see my top posts because I love experimenting with writing.

Slack Premium

Slack was my classroom. Slack provided me a premium trial during the prior cohort.

I skipped it.

Sure, voice notes are better than a big paragraph. I didn't require pro features.

Marketing methods sometimes make you think you need 38474 amazing features. Don’t fall for it.

Calendly Pro

This may be worth it if you get many calls.

I avoid calls. During my 9-5, I had too many pointless calls.

I don't need:

ability to schedule calls for 15, 30, or 60 minutes: I just distribute each link separately.

I have a Gumroad consultation page with a payment option.

follow-up emails: I hardly ever make calls, so I don't need them.

the ability to link multiple calendars: I just use one calendar.

I'll admit, the integrations are cool. Not for me.

If you're a coach or consultant, or you book many meetings, the features may be helpful.

Conclusion

Investing is spending to make money.

Use my technique: put money in tools that help you make money. That is what makes it an investment instead of an expense.

Try the free versions of these tools before buying them, rather than paying just because everyone else is.

Jari Roomer

2 years ago

10 Alternatives to Smartphone Scrolling

"Don't let technology control you; manage your phone."

"Don't become a slave to technology," said Richard Branson. "Manage your phone, don't let it manage you."

Unfortunately, most people are addicted to smartphones.

Worrying smartphone statistics:

46% of smartphone users spend 5–6 hours daily on their device.

The average adult spends 3 hours 54 minutes per day on mobile devices.

We check our phones 150–344 times per day (every 4 minutes).

During the pandemic, children's daily smartphone use doubled.

Having a list of productive, healthy, and fulfilling replacement activities is an effective way to reduce smartphone use.

The more you practice these smartphone replacements, the less time you'll waste.

Skills Development

Most people say they 'don't have time' to learn new skills or read more. Lazy justification. The issue isn't time, but time management. Distractions and low-quality entertainment waste hours every day.

The majority of time is spent in low-quality ways, according to Richard Koch, author of The 80/20 Principle.

What if you swapped daily phone scrolling for skill-building?

There are dozens of skills to learn, from high-value skills to make more money to new languages and party tricks.

Learning a new skill will last for years, if not a lifetime, compared to scrolling through your phone.

Watch Docs

I love documentaries. They're educational and relaxing. A good documentary helps you understand the world, broadens your mind, and inspires you to change.

Recent documentaries I liked include:

14 Peaks: Nothing Is Impossible

The Social Dilemma

Jim & Andy: The Great Beyond

Fantastic Fungi

Make money online

If you've ever complained about not earning enough money, put away your phone and get to work.

Instead of passively consuming mobile content, start creating it. Create something worthwhile. Freelance.

The internet makes starting a business or earning extra money easier than ever.

Our (grand)parents didn't have this. Someone made them work 40+ hours. Few alternatives existed.

Today, all you need is internet and a monetizable skill. Use the internet instead of letting it distract you. Profit from it.

Bookworm

Jack Canfield, author of Chicken Soup For The Soul, said, "Everyone spends 2–3 hours a day watching TV. If you read that much, you'll be in the top 1% of your field."

Few people have more than two hours per day to read.

If you read 15 pages daily, you'd finish 27 books a year (as the average non-fiction book is about 200 pages).

Jack Canfield's quote remains relevant even though 15 pages can be read in 20–30 minutes per day. Most spend this time watching TV or on their phones.
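The 27-books figure is easy to check. Here is a tiny Python sketch using the numbers from the text (15 pages a day, roughly 200 pages per book); the variable names are just for illustration.

```python
PAGES_PER_DAY = 15
PAGES_PER_BOOK = 200    # rough average for a non-fiction book, per the text
DAYS_PER_YEAR = 365

pages_per_year = PAGES_PER_DAY * DAYS_PER_YEAR
books_per_year = pages_per_year // PAGES_PER_BOOK   # whole books finished
print(pages_per_year, books_per_year)  # 5475 27
```

Change `PAGES_PER_DAY` to see how quickly a slightly bigger habit adds up.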

What if you swapped 20 minutes of mindless scrolling for reading? You'd gain knowledge and skills.

Favorite books include:

The 7 Habits of Highly Effective People — Stephen R. Covey

The War of Art — Steven Pressfield

The Psychology of Money — Morgan Housel

A New Earth — Eckhart Tolle

Get Organized

All that screen time could've been spent organizing. It could have been used to clean, cook, or plan your week.

If you're always 'behind,' spend 15 minutes less on your phone to get organized.

"Give me six hours to chop down a tree, and I'll spend the first four sharpening the ax," said Abraham Lincoln. Getting organized is like sharpening an ax, making each day more efficient.

Creativity

Why not be creative instead of consuming others' creations? Do something creative, like:

Painting

Music

Photography

Writing

Do-it-yourself

Construction/repair

Creative projects boost happiness, cognitive functioning, and reduce stress and anxiety. Creative pursuits induce a flow state, a powerful mental state.

This contrasts with smartphones' effects. Heavy smartphone use correlates with stress, depression, and anxiety.

Hike

People spend 90% of their time indoors, according to research. This generation is the 'Indoor Generation.'

Because of our indoor lifestyle, we lack physical activity, fresh air, and vitamin D3 (generated through direct sunlight exposure). Mental and physical health issues result.

Put away your phone and get outside. Go on nature walks. Explore your city on foot (or by bike, as we do in Amsterdam) if you live in a city. Move around! Outdoors!

You can't spend your whole life staring at screens.

Podcasting

Okay, a smartphone is needed to listen to podcasts. When you use your phone to get smarter, you're more productive than 95% of people.

Favorite podcasts:

The Pomp Podcast (about cryptocurrencies)

The Joe Rogan Experience

Kwik Brain (by Jim Kwik)

Podcasts can be enjoyed while walking, cleaning, or doing laundry. Win-win.

Journal

I find journaling helpful for mental clarity. Writing helps organize thoughts.

Instead of reading internet opinions, comments, and discussions on Twitter or TikTok, look inward.

“It never ceases to amaze me: we all love ourselves more than other people, but care more about their opinion than our own.” — Marcus Aurelius

Give your mind free rein with pen and paper. It will highlight important thoughts, emotions, or ideas.

Never write for another person. You want unfiltered writing. So you get the best ideas.

Find your best hobbies

List your best hobbies. I guarantee 95% of people won't list smartphone scrolling.

It's often low-quality entertainment. The dopamine spike is short-lived, and it leaves us feeling emotionally 'empty.'

High-quality leisure activities spark happiness. They make us feel happy and alive. Everyone has different interests, so these activities vary.

My favorite quality hobbies are:

Nature walks (especially the mountains)

Video game party

Watching a film with my girlfriend

Gym weightlifting

Complexity learning (such as the blockchain and the universe)

These bring me joy. They make me feel more fulfilled and 'rich' than social media scrolling.

Make a list of your best hobbies to refer to when you're spending too much time on your phone.

umair haque

2 years ago

The reasons why our civilization is deteriorating

The Industrial Revolution's Curse: Why One Age's Power Prevents the Next Ones

A surprising fact. It recently emerged that Big Oil's 1970s climate change projections were disturbingly accurate. Of course, we now know that it worked tirelessly to deny climate change, polluting our societies to this day. That's a small example of the Industrial Revolution's curse.

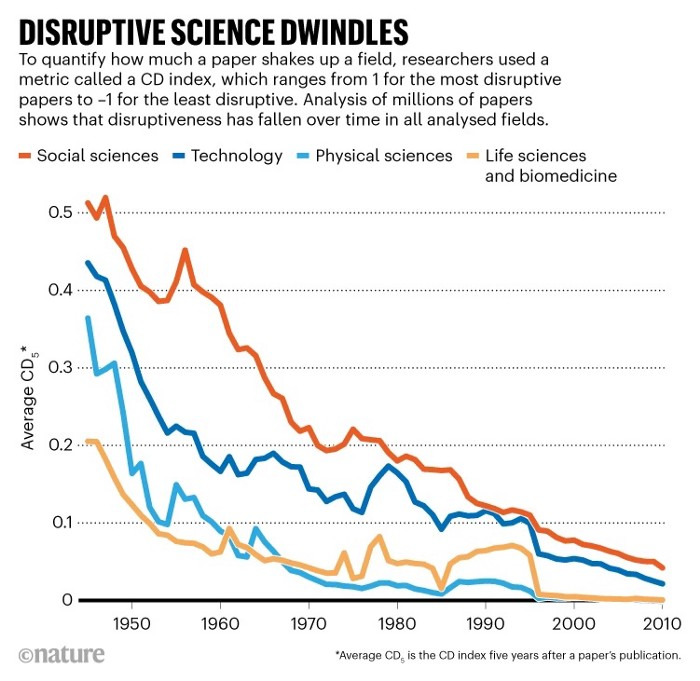

Let me rephrase this nuanced and possibly weird thought. The chart above? Disruptive science is declining. The kind that produces major discoveries, new paradigms, and shattering prejudices.

Not alone. Our civilisation reached a turning point suddenly. Progress stopped and reversed for the first time in centuries.

The Industrial Revolution's Big Bang started it all. At least some humans had riches for the first time, if not all, and with that wealth came many things. Longer, healthier lives since now health may be publicly and privately invested in. For the first time in history, wealthy civilizations could invest their gains in pure research, a good that would have sounded frivolous to cultures struggling to squeeze out the next crop, which required every shoulder to the till.

So. Don't misunderstand what I mean by the Industrial Revolution's curse. I'm not saying industry didn't bring progress. On the contrary. I'm claiming that the Big Bang of Progress is slowing, plateauing, and ultimately reversing. All social indicators show that. From progress itself to disruptive, breakthrough research, everything is slowing down.

It's troubling. Because progress slows and plateaus, pre-modern social problems like fascism, extremism, and fundamentalism return. People crave nostalgic utopias when they lose faith in modernity. That strongman may shield me from this hazardous life. If I accept my place in a blood-and-soil hierarchy, I have a stable, secure position and someone to punch and detest. It's no coincidence that as our civilization hits a plateau of progress, there is a tsunami pulling the world backwards, with people viscerally, openly longing for everything from theocracy to fascism to fundamentalism, an authoritarian strongman to soothe their fears and tell them what to do, whether in Britain, heartland America, India, China, and beyond.

However, one aspect remains unknown. Technology. Let me clarify.

How do most people picture tech? Say that without thinking. Most people think of social media or AI. Well, small correlation engines called artificial neurons are a far cry from biological intelligence, which functions in far more obscure and intricate ways, down to the subatomic level. But let's try it.

Today, tech means AI. But. Do you foresee it?

Consider why civilisation is plateauing and regressing. Because we can no longer provide the most basic necessities at the same rate. On our track, clean air, water, food, energy, medicine, and healthcare will become inaccessible to huge numbers within a decade or three. Not enough. There isn't, therefore prices for food, medicine, and energy keep rising, with occasional relief.

Why our civilizations are encountering what economists like me term a budget constraint—a hard wall of what we can supply—should be evident. Global warming and extinction. Megafires, megadroughts, megafloods, and failed crops. On a civilizational scale, good luck supplying the fundamentals that way. Industrial food production cannot feed a planet warming past two degrees. Crop failures, droughts, floods. Another example: glaciers melt, rivers dry up, and the planet's fresh water supply contracts like a heart attack.

Now. Let's talk tech again. Mostly AI, maybe phone apps. The unsettling reality is that current technology cannot save humanity. Not much.

AI can do things that have become cliches to titillate the masses. It may talk to you and act like a person. It can generate art, which means reproduce it, but nonetheless, AI art! Despite doubts, it promises to self-drive cars. Unimportant.

We need different technology now. AI won't grow crops in ash-covered fields, cleanse water, halt glaciers from melting, or stop the clear-cutting of the planet's few remaining forests. It's not useless, but on a civilizational scale, it's much less beneficial than its proponents claim. By the time it matures, AI can help deliver therapy, keep old people company, and even drive cars more efficiently. None of it can save our culture.

Expand that scenario. AI's most likely use? Replacing call-center workers. Support. It may help doctors diagnose, surgeons orient, or engineers create more fuel-efficient motors. This is civilizationally marginal.

Non-disruptive. Do you see the connection with the paper that indicated disruptive science is declining? AI exemplifies that. It's called disruptive, yet it's a textbook incremental technology. Oh, cool, I can communicate with a bot instead of a poor human in an underdeveloped country and have the same or more trouble being understood. This bot is making more people unemployed. I can now view a million AI artworks.

AI illustrates our civilization's trap. Its innovative technologies will supposedly change our lives. But as you can see, it's incremental, delivering small benefits at most, and certainly not enough to balance, let alone solve, the broader problem of steadily dropping living standards as our society meets a wall of being able to feed itself with fundamentals.

Contrast AI with disruptive innovations we need. What do we need to avoid a post-Roman Dark Age and preserve our civilization in the coming decades? We must be able to post-industrially produce all our basic needs. We need post-industrial solutions for clean water, electricity, cement, glass, steel, manufacture for garments and shoes, starting with the fossil fuel-intensive plastic, cotton, and nylon they're made of, and even food.

Consider. We have no post-industrial food system. What happens when crop failures, already dangerously accelerating, reach a critical point? Our civilization is vulnerable. Think of ancient civilizations that couldn't survive the drying up of their water sources, the failure of their primary fields, which they assumed the gods would preserve forever, or an earthquake or sickness that killed most of their animals. Bang. Lost. They failed. They splintered, fragmented, and abandoned vast capitals and cities, and suddenly, in history's sight, poof, they were gone.

We're getting close. Decline equals civilizational peril.

We believe dumb notions about AI becoming disruptive when it's incremental. Most of us don't realize our civilization's risk because we believe these falsehoods. Everyone should know that we cannot create anything at civilizational scale without fossil fuels. Most of us don't know it, thus we don't realize that the breakthrough technologies and systems we need don't manipulate information anymore. Instead, they are biotechnologies, largely but not only genetic, that generate food without fossil fuels.

We need another Industrial Revolution. AI, apps, bots, and whatnot won't matter unless you think you can eat and drink them while the world dies and fascists, lunatics, and zealots take democracy's strongholds. That's dramatic, but only because it's already happening. Maybe AI can entertain you in that bunker while society collapses with smart jokes or a million Mondrian-like artworks. But it cannot create the new Industrial Revolution that civilization needs to survive.

The revolution has begun, but only in small ways. Post-industrial fundamental systems leaders are developing worldwide. The Netherlands is leading post-industrial agriculture. That's amazing because it's a tiny country performing well. Correct? Discover how large-scale agriculture can function, not just you and me, aged hippies, cultivating lettuce in our backyards.

Iceland is leading bioplastics, which, if done well, will be a major advance. Of course, microplastics are drowning the oceans. What should we do since we can't live without plastic? We need algae-based bioplastics for green plastic.

That's still young. Any of the above may not function on a civilizational scale. Bioplastics use algae, which can cause problems if overused. None of the aforementioned indicate the next Industrial Revolution is here. Contrary. Slowly.

We have three decades until everything fails. Before life ends. Curtain down. No more fields, rivers, or weather. Freshwater and life stocks have plummeted. Again, we've peaked and declined in our ability to live at today's relatively rich standards. Game over. No more. On a dying planet, producing the fundamentals for a civilisation that left it too late to construct post-industrial systems becomes next to impossible, with output dropping faster and faster each year, quarter, and day.

Too slow. That's because it's not really happening. Most people think AI when I say tech. I get a politicized response if I say Green New Deal or Clean Industrial Revolution. Half the individuals I talk to have been politicized into believing that climate change isn't real and that any breakthrough technical progress isn't required, desirable, possible, or genuine. They'll suffer.

The Industrial Revolution curse. Every revolution creates new authorities, which ossify and refuse to relinquish their privileges. For fifty years, Big Oil has denied climate change, even though their scientists predicted it. We also have a software industry and its venture capital power centers that are happy for the average person to think tech means chatbots, not being able to produce basics for a civilization without destroying the planet, and billionaires who buy comms platforms for the same eye-watering amount of money it would take to save life on Earth.

The entire world's vested interests are against the next industrial revolution, which is understandable since they were established from fossil money. From finance to energy to corporate profits to entertainment, power in our world is the result of the last industrial revolution, which means it has no motivation or purpose to give up fossil money, as we are witnessing more brutally out in the open.

Thus, the Industrial Revolution's curse—fossil power—rules our globe. Big Agriculture, Big Pharma, Wall St., Silicon Valley, and many others—including politics, which they buy and sell—are basically fossil power, and they have no interest in generating or letting the next industrial revolution happen. That's why tiny enterprises like those creating bioplastics in Iceland or nations savvy enough to shun fossil power, like the Netherlands, which has a precarious relationship with nature, do it. However, fossil power dominates politics, economics, food, clothes, energy, and medicine, and it has no motivation to change.

Allow disruptive innovations again. As they occur, its position becomes increasingly vulnerable. If you were fossil power, would you allow another industrial revolution to destroy its privilege and wealth?

You might, since power and money haven't corrupted you. However, fossil power prevents us from building, creating, and growing what we need to survive as a society. I mean the entire economic, financial, and political power structure from the last industrial revolution, not simply Big Oil. My friends, fossil power's chokehold over our society is likely to continue suffocating the advances that could have spared our civilization from a decline that's now here and spiraling closer to oblivion.