More on Entrepreneurship/Creators

Sarah Bird

3 years ago

Memes Help This YouTube Channel Earn Over $12k Per Month

Take a look at a YouTube channel that makes up to $12k a month or more from very simple videos.

And the best part? It's replicable by anyone. Basic videos can be generated for free without design abilities.

Join me as I deconstruct the channel to estimate how much they make, how they do it, and how you can too.

What Do They Do Exactly?

Happy Land posts memes with a simple caption they wrote themselves, so the content counts as new. The videos are slideshows of meme images set to stock music.

The site posts 12 times a day.

Each video runs 8-10 minutes and shows each image for 10 seconds. Thus, each video needs 48-60 memes.
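
As a quick check on that arithmetic, here is a minimal sketch. The function names are made up for illustration; the 10 seconds per image and 12 posts a day are the figures quoted in this article.

```python
def memes_per_video(video_minutes: float, seconds_per_image: float = 10) -> int:
    """Number of images needed to fill a slideshow of the given length."""
    return int(video_minutes * 60 // seconds_per_image)


def memes_per_day(video_minutes: float, videos_per_day: int = 12) -> int:
    """Images needed per day at the posting rate quoted in the article."""
    return memes_per_video(video_minutes) * videos_per_day


for minutes in (8, 10):
    print(f"{minutes}-minute video: {memes_per_video(minutes)} memes, "
          f"{memes_per_day(minutes)} memes per day at 12 videos a day")
```

At 12 videos a day, that is several hundred memes daily, which is why sourcing the material is the hard part.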

Video titles name the meme theme (e.g. times a boyfriend was hilarious, back to school fails, funny restaurant signs).

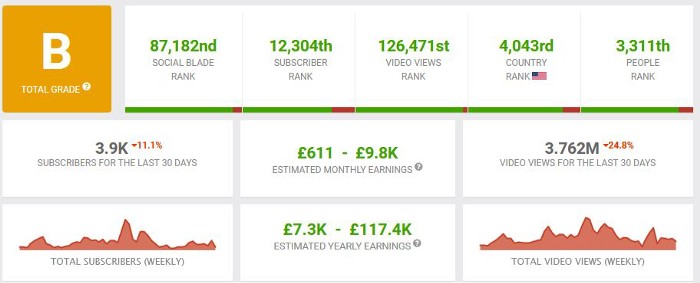

Some stats about the channel:

Founded on October 30, 2020

873 videos were added.

81.8k subscribers

67,244,196 video views

What Value Are They Adding?

Everyone can find free memes online. This channel collects similar memes into a single video so you don't have to scroll or click for more. It’s right there, you just keep watching and more will come.

By theming it, the audience is prepared for the video's content.

If you want hilarious animal memes or restaurant signs, choose the video and you'll get up to 60 memes without having to look for them. Genius!

How much money do they make?

According to www.socialblade.com, the channel earns between $800 and $12.8k a month (image shown in my home currency of GBP).

That's a crazy estimate, but it highlights the unbelievable potential of a channel that presents memes.

This channel thrives on quantity, thus putting out videos is necessary to keep the flow continuing and capture its audience's attention.

How Are the Videos Made?

Straightforward. Memes are added to a presentation without editing (so you could make this in PowerPoint or Keynote).

Each slide should include a unique image and caption. Set 10 seconds per slide.

Add music and post the video.

Finding enough memes for the material and theming is difficult, but if you enjoy memes, this is a fun job.

This case study should have shown you that you don't need expensive software or design expertise to make entertaining videos. Why not try fresh, easy-to-do ideas and see where they lead?

Sammy Abdullah

3 years ago

SaaS payback period data

It's ok and even desired to be unprofitable if you're gaining revenue at a reasonable cost and have 100%+ net dollar retention, meaning you never lose customers and expand them. To estimate the acceptable cost of new SaaS revenue, we compare new revenue to operating loss and payback period. If you pay back the customer acquisition cost in 1.5 years and never lose them (100%+ NDR), you're doing well.

To evaluate payback period, we looked at the last 73 SaaS companies to IPO since October 2017 and compared new revenue to net operating loss for the 55 of them that were unprofitable at IPO. Here's the data. 1/(new revenue/operating loss) equals payback period. Operating loss/new revenue equals cost of new revenue.

Payback is typically about a year. The 55 SaaS companies that weren't profitable at IPO had a payback of roughly 1 year. Outstanding. If you pay for a customer in a year and never lose them (100%+ NDR), you're establishing a valuable business. The average was 1.3 years, which is still within the 1.5-year range.

New revenue costs $0.96 on average. These SaaS companies lost $0.96 for every $1 of new revenue last year. Again, impressive. Average new revenue per operating loss was $1.59.

Definition of operating loss: Operating loss = revenue - COGS - S&M - R&D - G&A (technical point: be sure to use the absolute value of operating loss). It's wrong to only consider S&M costs and ignore other business costs. Operating loss and new revenue are measured over one year to eliminate seasonality.
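
The ratios above can be sketched in a few lines of Python. This is only an illustration: the function names are invented here, and the example company figures are hypothetical, not taken from the IPO data set.

```python
def payback_period_years(new_revenue: float, operating_loss: float) -> float:
    """Payback period = 1 / (new revenue / |operating loss|), both measured over one year."""
    return 1 / (new_revenue / abs(operating_loss))


def loss_per_dollar_of_new_revenue(new_revenue: float, operating_loss: float) -> float:
    """Dollars of operating loss incurred for each $1 of new revenue."""
    return abs(operating_loss) / new_revenue


# Hypothetical company: $50M of new revenue in a year, $48M operating loss.
new_revenue = 50_000_000
operating_loss = -48_000_000  # losses are often reported as negatives, hence abs()

payback = payback_period_years(new_revenue, operating_loss)
loss_per_dollar = loss_per_dollar_of_new_revenue(new_revenue, operating_loss)

print(f"Payback period: {payback:.2f} years")
print(f"Loss per $1 of new revenue: ${loss_per_dollar:.2f}")
print("Under the 1.5-year benchmark:", payback < 1.5)
```

Taking the absolute value inside the functions keeps the result positive whether the loss is entered as -48M or 48M.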

Operating losses are desirable if you never lose a customer and have a quick payback period, especially when SaaS enterprises are valued on ARR. The payback period should be under 1.5 years, the cost of new revenue under $1, and net dollar retention at least 100%.

DC Palter

3 years ago

How Will You Generate $100 Million in Revenue? The Startup Business Plan

A top-down company plan facilitates decision-making and impresses investors.

A startup business plan usually starts bottom-up: with the product, the target customers, how to reach them, and how to grow the business.

A bottom-up plan is terrific unless you want venture investors to fund the company.

If a company can show how it will exceed $100M in sales, investors will invest. If not, the business may be wonderful, but it's not venture capital-investable.

As a rule, venture investors only fund firms that expect to reach $100M within 5 years.

Investors get nothing until an acquisition or IPO. To make up for 90% of failed investments and still generate 20% annual returns, portfolio successes must exit with a 25x return. A $20M-valued company must be acquired for $500M or more.

This requires $100M in sales (or being on a nearly vertical trajectory to get there). The company has 5 years to attain that milestone and create the requisite ROI.
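
The arithmetic behind those figures can be checked with a short sketch. It assumes, for illustration only, equal-sized investments, failed investments that return nothing, and a 5-year holding period; the function names are made up here.

```python
def required_exit_value(entry_valuation: float, return_multiple: float) -> float:
    """Exit value needed for an investor to earn the given multiple."""
    return entry_valuation * return_multiple


def portfolio_annual_return(success_rate: float, success_multiple: float, years: int) -> float:
    """Annualized return of a portfolio in which failures are assumed to return zero."""
    portfolio_multiple = success_rate * success_multiple
    return portfolio_multiple ** (1 / years) - 1


exit_value = required_exit_value(20_000_000, 25)
annual = portfolio_annual_return(success_rate=0.10, success_multiple=25, years=5)

print(f"Required exit: ${exit_value / 1e6:.0f}M")
print(f"Portfolio annual return: {annual:.1%}")
```

With 1 in 10 investments returning 25x, the portfolio as a whole returns 2.5x, which works out to roughly 20% a year over 5 years.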

This motivates venture investors (venture funds and angel investors) to hunt for $100M firms within 5 years. When you pitch investors, you outline how you'll achieve that aim.

I'm wary of pitches after seeing a million hockey sticks predicting $5M to $100M in year 5 that never materialized. Doubtful.

Startups fail because they don't have enough clients, not because they don't produce a great product. That jump from $5M to $100M never happens. The company reaches $5M or $10M, growing at 10% or 20% per year. That's great, but not enough for a $500 million deal.

Once it becomes clear the company won’t reach orbit, investors write it off as a loss. When a corporation runs out of money, it's shut down or sold in a fire sale. The company can survive if expenses are trimmed to match revenues, but investors lose everything.

When I hear a pitch, I'm not looking for bright income projections but a viable plan to achieve them. Answer these questions in your pitch.

Is the market size sufficient to generate $100 million in revenue?

Will the initial beachhead market serve as a springboard to the larger market or as quicksand that hinders progress?

What marketing plan will bring in $100 million in revenue? Is the market diffuse and will cost millions of dollars in advertising, or is it one, focused market that can be tackled with a team of salespeople?

Will the business be able to bridge the gap from a small but fervent set of early adopters to a larger user base and avoid lock-in with their current solution?

Will the team be able to manage a $100 million company with hundreds of people, or will hypergrowth force the organization to collapse into chaos?

Once the company starts stealing market share from the industry giants, how will it deter copycats?

The requirement to reach $100M may be onerous, but it provides a context for difficult decisions: What should the product be? Where should we concentrate? Who should we hire? Every strategic choice must consider how to reach $100M in 5 years.

Focusing on $100M streamlines investor pitches. Instead of explaining everything, focus on how you'll attain $100M.

As an investor, I know I'll lose my money if the startup doesn't reach this milestone, so the revenue prediction is the first thing I look at in a pitch deck.

Reaching the $100M goal needs to be the first thing the entrepreneur thinks about when putting together the business plan, the central story of the pitch, and the criteria for every important decision the company makes.

You might also like

Bloomberg

4 years ago

Ten million Ukrainians displaced

According to recent data from two UN agencies, ten million Ukrainians have been displaced.

The International Organization for Migration (IOM) estimates nearly 6.5 million Ukrainians are internally displaced persons (IDPs). Most have fled the war zones around Kyiv and eastern Ukraine, including Dnipro, Zaporizhzhia, and Kharkiv. Most IDPs have fled to western and central Ukraine.

Since Russia invaded on Feb. 24, 3.6 million people have crossed the border to seek refuge in neighboring countries, according to the latest UN data. While most refugees have fled to Poland and Romania, many have entered Russia.

Internally displaced figures are IOM estimates as of March 19, based on 2,000 telephone interviews with Ukrainians aged 18 and older conducted between March 9 and 16. The UNHCR compiled the figures for refugees to neighboring countries on March 21 based on official border crossing data and its own estimates. The UNHCR's top-line total is lower than the sum of the country totals because the Romania and Moldova totals include people crossing between the two countries.

Sources: IOM, UNHCR

According to IOM estimates based on telephone interviews with a representative sample of internally displaced Ukrainians, over 53% of those displaced are women, and over 60% of displaced households have children.

shivsak

3 years ago

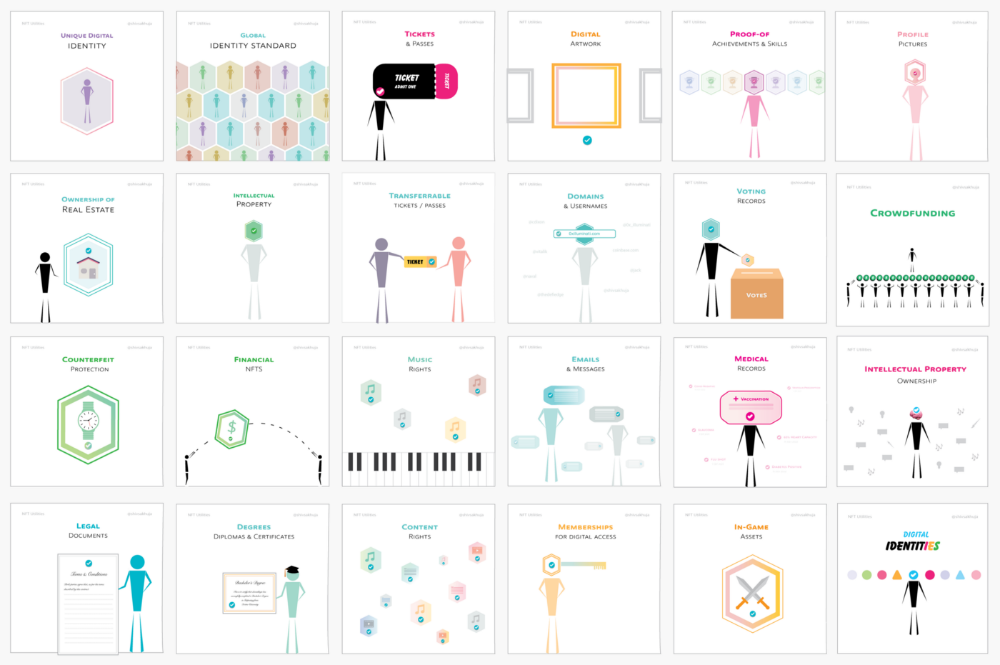

A visual exploration of the REAL use cases for NFTs in the Future

In this essay, I explore REAL use cases for NFTs and their potential.

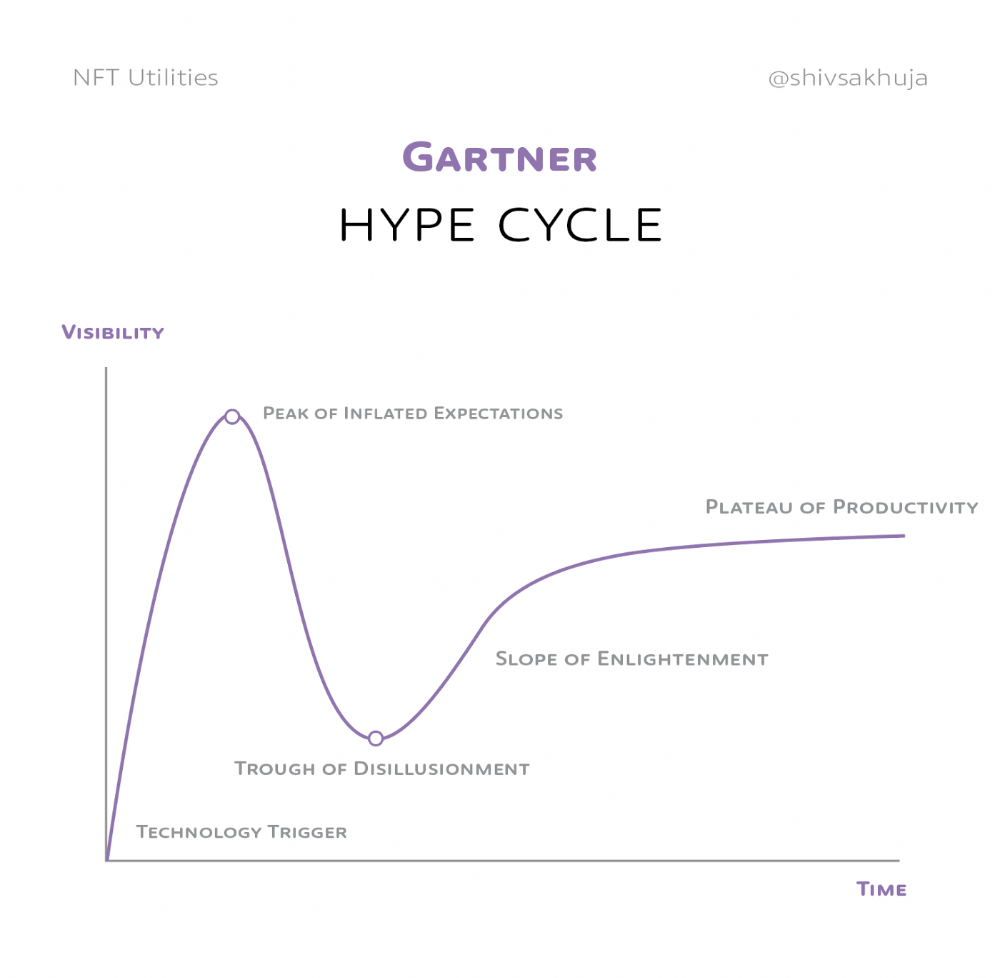

Understanding the Hype Cycle

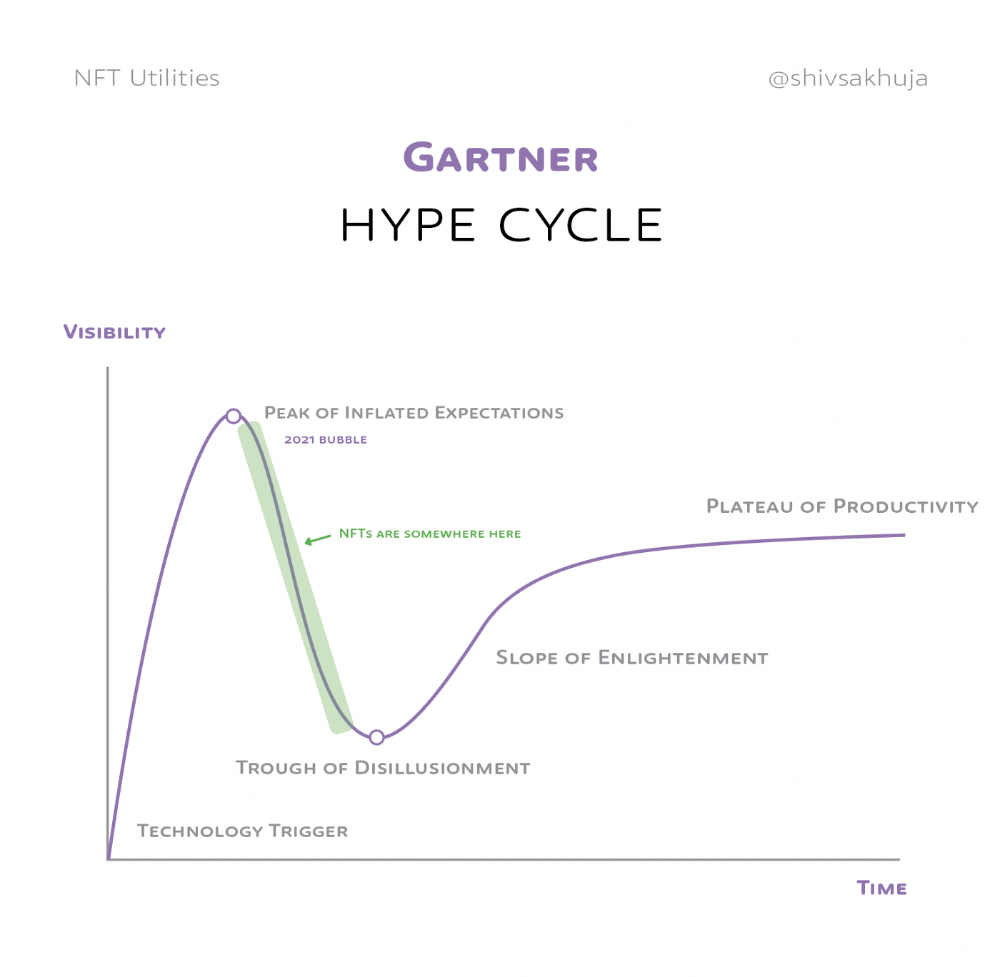

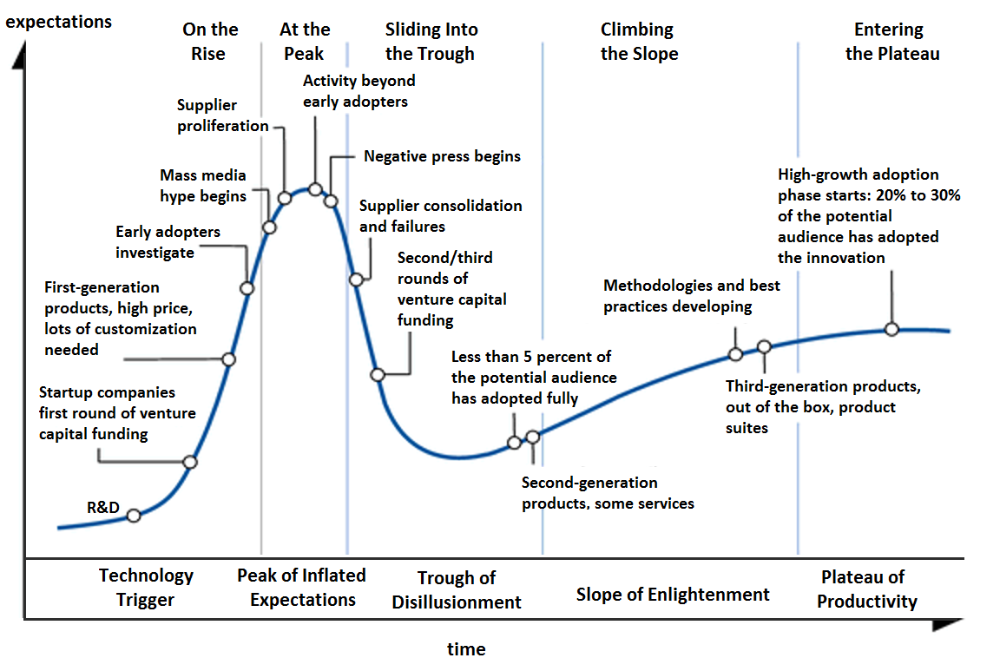

Gartner's Hype Cycle.

It proposes 5 phases for disruptive technology.

1. Technology Trigger: the emergence of potentially disruptive technology.

2. Peak of Inflated Expectations: Early publicity creates hype. (Ex: 2021 Bubble)

3. Trough of Disillusionment: Early projects fail to deliver on promises and the public loses interest. I suspect NFTs are somewhere around this trough of disillusionment now.

4. Slope of Enlightenment: The tech shows successful use cases.

5. Plateau of Productivity: Mainstream adoption has arrived and broader market applications have proven themselves.

Here’s a more detailed visual of the Gartner Hype Cycle from Wikipedia.

In the speculative NFT bubble of 2021, @beeple sold Everydays: the First 5000 Days for $69 MILLION.

@nbatopshot sold millions in video collectibles.

This is when expectations peaked.

Let's examine NFTs' real-world applications.

Watch this video if you're unfamiliar with NFTs.

Online Art

Most people think NFTs are rich people buying worthless JPEGs and MP4s.

Digital artwork and collectibles are revolutionary for creators and enthusiasts.

NFT Profile Pictures

You might also have seen NFT profile pictures on Twitter.

My profile picture is an NFT I minted with @skogard's factoria app, which helps me guard against bogus accounts.

Profile pictures are a good beginning point because they're unique and clearly yours.

NFTs are a way to represent proof-of-ownership. It’s easier to prove ownership of digital assets than physical assets, which is why artwork and pfps are the first use cases.

They can do much more.

NFTs can represent anything with a unique owner and a digital ownership certificate, such as domains and usernames.

Usernames & Domains

@unstoppableweb, @ensdomains, @rarible sell NFT domains.

NFT domains are transferable, which is a benefit.

Domains from GoDaddy and other web2 providers are difficult to transfer. Domains are often leased instead of purchased.

Tickets

NFTs can also represent concert tickets and event passes.

There's a limited number, and entry requires proof.

NFTs can eliminate the problem of forgery and make it easy to verify authenticity and ownership.

NFT tickets can be traded on the secondary market, which allows for:

1. Marketplaces that are uniform and offer the seller and buyer security (currently, tickets are traded on inefficient markets like FB & craigslist)

2. Unbiased pricing

3. Payment of royalties to the creator

4. Historical ticket ownership data means performers can airdrop future passes, discounts, etc.

5. NFT passes can be a fandom badge.

The $30B+ online ticketing business is growing fast.

NFT-based ticketing projects:

Gaming Assets

NFTs are also a good fit for in-game assets.

Imagine someone spending five years collecting a rare in-game blade, then outgrowing or quitting the game. Gamers value that collectible.

The gaming industry is expected to make $200 BILLION in revenue this year, a significant portion of which comes from in-game purchases.

Royalties on secondary market trading of gaming assets encourage gaming businesses to develop NFT-based ecosystems.

Digital assets are the start. On-chain NFTs can represent real-world assets effectively.

Real estate has a unique owner and requires ownership confirmation.

Real Estate

Tokenizing property has many benefits.

1. Can be fractionalized to increase access, liquidity

2. Can be collateralized to increase capital efficiency and access to loans backed by an on-chain asset

3. Allows investors to diversify or make bets on specific neighborhoods, towns or cities, and more

I've written about this thought exercise before.

I made an animated video explaining this.

We've just explored NFTs for transferable assets. But what about non-transferable NFTs?

SBTs are Soul-Bound Tokens. Vitalik Buterin (Ethereum co-founder) blogged about this.

NFTs are basically verifiable digital certificates.

Diplomas & Degrees

That fits degrees and diplomas. These shouldn't be tradable, so they can be non-transferable SBTs.

Anyone can verify the legitimacy of on-chain credentials, degrees, abilities, and achievements.

The same goes for other awards.

For example, LinkedIn could give you a verified checkmark for your degree or skills.

Authenticity Protection

NFTs can also safeguard against counterfeiting.

Counterfeiting is the largest criminal enterprise in the world, estimated to be $2 TRILLION a year and growing.

Anti-counterfeit tech is valuable.

This is one of @ORIGYNTech's projects.

Identity

Identity theft/verification is another real-world problem NFTs can handle.

In the US, 15 million+ citizens face identity theft every year, suffering damages of over $50 billion a year.

This isn't surprising considering all you need for US identity theft is a 9-digit number handed around in emails, documents, on the phone, etc.

Identity NFTs can fix this.

NFTs are one-of-a-kind and unforgeable.

NFTs offer a universal standard.

NFTs are simple to verify.

SBTs, or non-transferable NFTs, are tied to a particular wallet.

In the event of wallet loss or theft, NFTs may be revoked.

This could be one of the biggest use cases for NFTs.

Imagine a global identity standard that is standardized across countries, cannot be forged or stolen, is digital, easy to verify, and protects your private details.

Since your identity is more than your government ID, you may have many NFTs.

@0xPolygon and @civickey are developing on-chain identity.

Memberships

NFTs can authenticate digital and physical memberships.

Voting

NFT IDs can verify votes.

If you remember 2020, you'll know why this is an issue.

Online voting's ease can boost turnout.

Intellectual Property

NFTs can protect IP.

This can earn creators royalties.

NFTs have 2 important properties:

1. Verifiability: IP ownership is unambiguously stated and publicly verifiable.

2. Standardization: Platforms that enable authors to receive royalties on their IP can enter the market.

Content Rights

Monetization without copyrighting = more opportunities for everyone.

This works well with music.

Spotify and Apple Music pay creators very little.

Crowdfunding

Creators can crowdfund with NFTs.

NFTs can represent future royalties for investors.

This is particularly useful for fields where people who are not in the top 1% can’t make money. (Example: Professional sports players)

Mirror.xyz allows blog-based crowdfunding.

Financial NFTs

This introduces Financial NFTs (fNFTs). Unique financial contracts abound.

Examples:

a person's collection of assets (unique portfolio)

A loan contract that has been partially repaid with a lender

temporal tokens (ex: veCRV)

Legal Agreements

Not just financial contracts.

NFTs can represent any legal contract or document.

Messages & Emails

What about other agreements? Informal agreements made through emails and messages are likewise unique, but they're easily lost or fabricated.

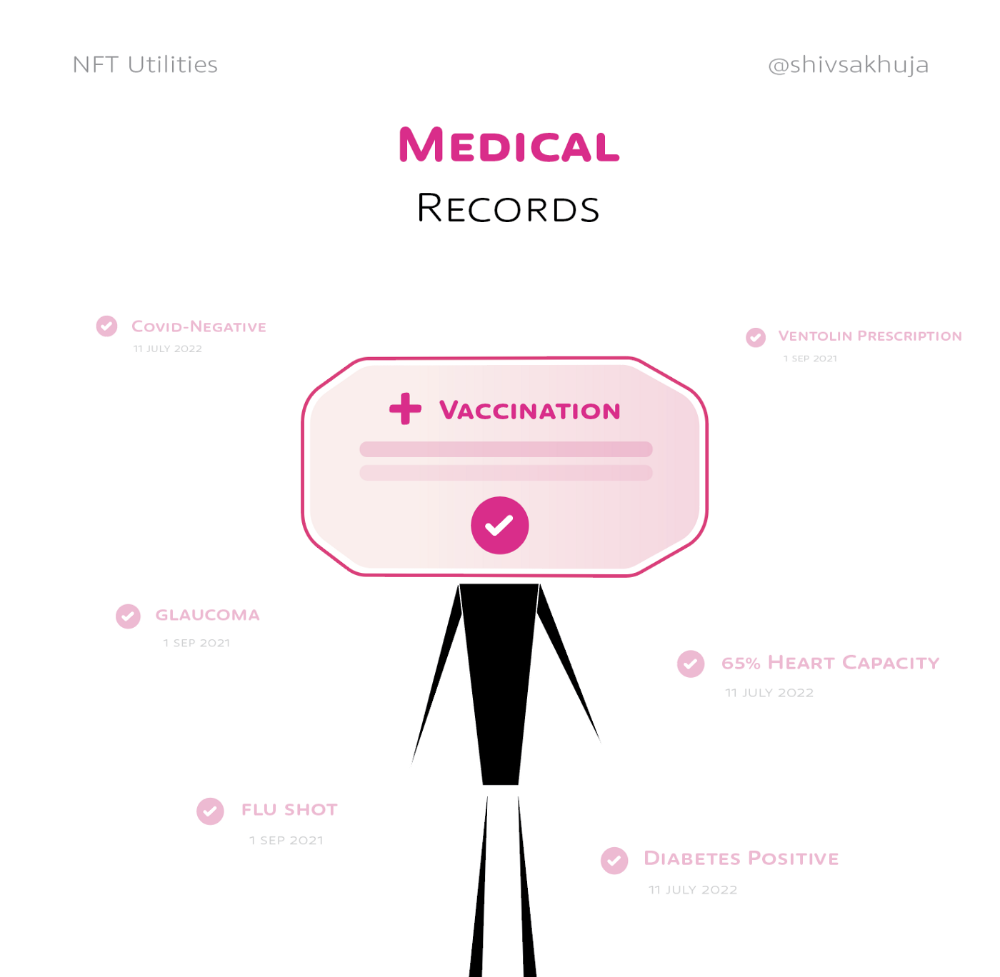

Health Records

Medical records and prescriptions are another type of documentation that needs to be verifiable but often isn't.

Medical NFT examples:

Immunization records

Covid test outcomes

Prescriptions

health issues that may affect one's identity

Observations made via health sensors

Existing systems of proof by paper or PDF are at risk of being photoshopped.

I tried to include most use cases, but this is just the beginning.

NFTs have many innovative uses.

For example: @ShaanVP minted an NFT called “5 Minutes of Fame” 👇

Here are 2 Twitter threads about NFTs:

1. This piece of gold by @chriscantino

2. This conversation between @punk6529 and @RaoulGMI on @RealVision: “The World According to @punk6529”

If you're wondering why NFTs are better than web2 databases for these use cases, see this Twitter thread I wrote:

If you liked this, please share it.

Isobel Asher Hamilton

3 years ago

$181 million in bitcoin buried in a dump. $11 million to get them back

James Howells lost 8,000 bitcoins. He has an $11 million plan to get them back.

His life changed when he threw out an iPhone-sized hard drive.

Howells, from the city of Newport in southern Wales, had two identical laptop hard drives squirreled away in a drawer in 2013. One was blank; the other had 8,000 bitcoins, currently worth around $181 million.

He wanted to toss out the blank one, but the drive containing the Bitcoin went to the dump.

He's determined to reclaim his 2009 stash.

Howells, 36, wants to arrange a high-tech treasure hunt for bitcoins. He can't enter the landfill.

Newport's city council has rebuffed Howells' requests to dig for his hard drive for almost a decade, stating it would be expensive and environmentally destructive.

I got an early look at his $11 million plan to search 110,000 tons of trash. He hopes that submitting it to the council will convince it to let him recover the hard drive.

110,000 tons of trash, 1 hard drive

Finding a hard disk among heaps of trash may seem Herculean.

Howells, a former IT worker, claims it's possible with human sorters, robot dogs, and an AI-powered machine trained to spot hard drives on a conveyor belt.

His idea has two versions, depending on how much of the landfill he can search.

His most elaborate solution would take three years and cost $11 million to sort 100,000 metric tons of waste. A scaled-down version would cost $6 million and take 18 months.

He's assembled a team of eight experts in AI-powered sorting, landfill excavation, waste management, and data extraction, including one who recovered data from the space shuttle Columbia's black box.

The specialists and their companies would be paid a bonus if they successfully recovered the bitcoin stash.

Howells: "We're trying to commercialize this project."

Howells said the rubbish would be dug up by machines and sorted near the landfill.

Human pickers and a Max-AI machine would sort it. The machine resembles a scanner on a conveyor belt.

Remi Le Grand of Max-AI told us the company would train its AI to recognize hard drives like Howells'. A robot arm would then pick out candidates.

Howells has added security costs to his plan because he fears people might try to steal the hard drive.

He's budgeted for 24-hour CCTV cameras and two robotic "Spot" canines from Boston Dynamics that would patrol at night and look for his hard drive by day.

Howells said his crew met in May at the Celtic Manor Resort outside Newport for a pitch rehearsal.

Richard Hammond's narrative swings from banal to epic.

Richard Hammond filmed the meeting and created a YouTube documentary on Howells.

Hammond said of Howells' squad, "They're committed and believe in him and the idea."

Hammond: "It goes from banal to gigantic." "If I were in his position, I wouldn't have the strength to answer the door."

Howells said trash would be cleaned and recycled where possible after excavation. The rest would be reburied.

"We won't pollute," he declared. "We aim to make everything better."

After the project is finished, he hopes to develop a solar or wind farm on the dump site. The council is unlikely to accept his vision soon.

A council representative told us, "Mr. Howells can't convince us of anything." "His suggestions constitute a significant ecological danger, which we can't tolerate and are forbidden by our permit."

Will the recovered hard drive work?

The "platter" is a glass or metal disc that holds the hard drive's data. Howells estimates 80% to 90% of the data will be recoverable if the platter isn't damaged.

Phil Bridge, a data-recovery expert who advised Howells, confirmed these numbers.

If the platter is broken, Bridge adds, data recovery is unlikely.

Bridge says he was intrigued by the proposal. "It's an intriguing case," he added. "Helping him get it back and proving everyone wrong would be a great success story."

Who'd pay?

Swiss and German venture investors Hanspeter Jaberg and Karl Wendeborn told us they would fund the project if Howells received council permission.

Jaberg: "It's a needle in a haystack and a high-risk investment."

Howells said he had no contract with potential backers but had discussed the proposal in Zoom meetings. "Until Newport City Council gives me something in writing, I can't commit," he added.

Suppose he finds the bitcoins.

Howells said he would keep 30% of the bitcoin, worth about $54 million, if he could retrieve it.

A third would go to the recovery team, 30% to investors, and the remainder to local causes, including gifting £50 ($61) in bitcoin to each of Newport's 150,000 citizens.
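
A rough sketch of how that split works out, using the $181 million valuation quoted earlier. The variable and function names are illustrative, "a third" is read as exactly 1/3, and the dollar value of the stash would of course move with the bitcoin price.

```python
STASH_USD = 181_000_000      # value of the 8,000 bitcoins quoted in this article
RESIDENTS = 150_000          # Newport's population, as quoted
GIFT_PER_RESIDENT_USD = 61   # £50 converted at the article's rate


def split_stash(total_usd: float) -> dict:
    """Divide the stash using the shares Howells described."""
    shares = {
        "Howells": 0.30,
        "recovery team": 1 / 3,   # "a third", assumed to mean exactly 1/3
        "investors": 0.30,
    }
    # Whatever is left over goes to local causes.
    shares["local causes"] = 1 - sum(shares.values())
    return {name: share * total_usd for name, share in shares.items()}


amounts = split_stash(STASH_USD)
gift_total = RESIDENTS * GIFT_PER_RESIDENT_USD

for name, usd in amounts.items():
    print(f"{name}: ${usd / 1e6:.1f}M")
print(f"Cost of gifting every resident: ${gift_total / 1e6:.2f}M")
```

On these numbers the remainder for local causes is a little under 7% of the stash, enough to cover the gift to every resident.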

Howells said he opted to spend extra money on "professional firms" to help convince the council.

What if the council doesn't approve?

If Howells can't win the council's support, he'll sue, claiming its actions constitute an "illegal embargo" on the hard drive. "I've avoided that path because I didn't want to cause complications," he stated. "I wanted to cooperate with Newport's council."

Howells has never met with the council face-to-face. He said he had a 20-minute Zoom meeting in May 2021 and thinks his new business plan will help his case.

He met with Jessica Morden on June 24. Morden's office confirmed the meeting.

After telling the council about his proposal, he can only wait. "I've never been happier," he said. "This is our most professional operation, with the best employees."

The "crypto proponent" buys bitcoin every month and sells it for cash.

Howells tries not to think about what he'd do with his part of the money if the hard disk is found functional. "Otherwise, you'll go mad," he added.

This post is a summary. Read the full article here.