More on Technology

Mark Schaefer

3 years ago

20 Fun Uses for ChatGPT

Our RISE community is excited about ChatGPT, which has countless uses.

It's still early days. Companies are figuring out the legal and ethical implications of AI's content revolution, but using AI for everyday tasks is cool.

So I challenged my RISE friends: let's have fun and share some non-obvious uses.

Onward!

1. Tweet

Jim MacLeod requested a design-themed Twitter thread from ChatGPT.

2. Novelize

Giuseppe Fratoni writes novels.

"This is amazing!" he says. "I asked it to combine writing, mystery novels, and jazz."

"Develop a mystery novel plot in which a disillusioned homicide detective hunts a serial killer who targets jazz musicians who play Autumn Leaves."

ChatGPT's reply:

Detective James Thompson is a seasoned veteran of the Homicide department, but lately he’s been feeling burnt out and disillusioned. His latest case, the murder of a young jazz pianist who was found brutally killed in his home, only serves to deepen his sense of despair … (much more provided)

3. Question-and-answer sessions

Bliss recommends:

Say you have a guest speaker and want questions ready for the audience Q&A.

Generate the questions ahead of time with ChatGPT, then introduce one with: "And here's a question from our AI."

Suppose the conference is about water scarcity in the western United States, and the speaker is a water management expert. My question for the AI was:

Which desalination-related questions should I ask an expert?

ChatGPT said...

How is desalination currently being used around the world, and in what types of locations is it most effective?

What are the potential advantages and disadvantages of using desalination to provide fresh water?

What are some of the key challenges and limitations of desalination technology, and how are they being addressed?

4. Game-making

Richard is a board game expert. He suggests using ChatGPT to plan games. Some people have also started using it to help solve crossword puzzle clues.

5. Matchmaking

Tanya Thompson thinks it can help with dating. Here's what ChatGPT suggested:

Here are some flirty first-message ideas for dating apps:

I noticed you from afar. I had to say hi and see if we clicked.

Your profile picture is gorgeous. I'm obsessed with your [Feature] and want to know you better.

I don't usually message first, but I had to. You're [Attractive Quality] and I can't wait to talk.

Hello. Your profile intimidates me. I'm starstruck by your [Attractiveness]. (many more examples followed)

5. Solitude/anxiety

ChatGPT excels at one-on-one conversations.

PepperBrooks: "I'm sad and lonely."

ChatGPT: I’m sorry to hear that you’re feeling sad and lonely. It’s natural to feel down sometimes, but it’s important to remember that you’re not alone. There are many people who care about you and want to help you. It can be helpful to talk to someone about your feelings, whether it’s a close friend, family member, or mental health professional.

Sometimes, just expressing your thoughts and emotions to someone else can help to alleviate your sadness and loneliness. It’s also important to take care of yourself and do things that make you happy, such as spending time with loved ones, pursuing a hobby, or exercising. These things can help to improve your mood and make you feel more connected to others. Remember that you are strong and capable, and that you can get through this difficult time.

Daniel Nestle asked ChatGPT to generate journal prompts to help him deal with anxiety.

Write down the things that are causing you anxiety at the moment.

Describe a situation in which you felt anxious and how you coped with it.

Reflect on how your thoughts and beliefs contribute to your anxiety.

Write a letter to your anxiety, expressing your feelings and concerns.

Imagine yourself in a calm and peaceful place, and describe it in detail.

(many more items followed)

6. Names

Daniel continued:

It's also useful for naming ideas.

Me: Name this drink. Japanese gin, unsweetened tonic, lime, Szechuan pepper, grapefruit.

ChatGPT: How about “Sakura Sunrise?” The name incorporates the Japanese gin and the citrus flavors of grapefruit and lime, and the word “sunrise” suggests a fresh and invigorating start to the day. Alternatively, you could go with something like “Peppered Grapefruit Gin Fizz” to emphasize the unique flavor combination of Szechuan pepper and grapefruit.

7. Translation on the go

Monks recommends: ChatGPT is a perfect mobile translation tool. Say you need help in Italy.

ME: Translate to Italian:

My car is about to run out of fuel. Can you tell me where the nearest gas station is?

ChatGPT: “La mia auto sta per esaurire il carburante, mi puoi dire dove si trova la stazione di servizio più vicina?”

8. Fitness

Monks added:

ME: In 3 months, I want to run 5km. Plan out how I can do this.

ChatGPT: Here's a 3-month plan to run 5km.

Start by walking for 15–20 minutes each day to build up your endurance and get your body used to regular exercise.

After a week of walking, begin incorporating short jogs into your routine. Start by jogging for 1–2 minutes at a time, with walking breaks in between. Gradually increase the length of your jogs and reduce the amount of time you spend walking.

Once you can jog for 10 minutes without stopping, start increasing the distance of your runs. Aim to run 1km without stopping and then gradually increase the distance over time.

In addition to your regular runs, incorporate strength training and stretching into your routine to improve your overall fitness and reduce the risk of injury.

(more details continued on)

9. Integrations and coding

10. Teacher lesson plans

Spencer Crandall used it to save time:

This morning, I wondered who has the hardest job. My answer: teachers.

They have many students and little time. Could ChatGPT handle lesson planning? I tried it and got this:

Lesson Title: The History and Impact of Artificial Intelligence

Students will understand the history and development of AI and the potential positive and negative impacts on society.

Materials: AI timeline; examples of AI technologies and applications

Overview:

Introduce the topic of artificial intelligence, and explain that it refers to the development of computer systems and algorithms that can simulate human intelligence and behavior.

Show the timeline of significant events in the history of AI, and discuss the key milestones and developments in the field.

Show examples of AI technologies and applications, and explain how they are used in various industries and fields.

Discuss the potential positive and negative impacts of AI on society, including its potential to improve productivity, efficiency, and safety, as well as its potential to create new challenges and concerns, such as job displacement and ethical issues.

(many more details followed)

11. Find podcast guests

Joeri Billast suggested:

Who should be on my Web3 podcast?

ChatGPT: Web3 marketing experts, influencers, and thought leaders make great podcast guests. These include Web3 marketers, content creators, business owners, entrepreneurs, and technologists. You could invite Web3 startups and organizations to your podcast.

Please give examples.

ChatGPT: Ideal Web3 marketing podcast guests include:

Tim Berners-Lee, inventor of the World Wide Web

Chris Dixon, general partner at Andreessen Horowitz

Vitalik Buterin, founder of Ethereum

Joe Lubin, founder of ConsenSys

Susan Fowler, author of Whistleblower

(many other suggestions)

12. Comments and reviews on social media

Lisa Monk thinks ChatGPT can help retail staff with social media.

She asked ChatGPT to respond to restaurant reviews:

POSITIVE CUSTOMER REVIEW: I absolutely love this place. We eat out a lot however this place has topped it all. Perfect meals, amazing staff and the timing for it all was great! Special thanks to Sophie B (you rock) From your guests all the way from the Gold Coast, THANK YOU

ChatGPT REPLY: Thank you so much for your kind words and for taking the time to write a review. We are delighted to hear that you had such a great experience at our restaurant. We are thrilled that you enjoyed your meal and the service provided by Sophie. It’s always a pleasure to serve guests visiting from the Gold Coast and we are grateful for your support. We look forward to welcoming you back again soon. Thank you again for your positive review.

CUSTOMER REVIEW: Most average food, there is a nice view, But that’s about it

ChatGPT: Thank you for your review. We apologize if your dining experience was not up to your expectations. We strive to provide top-quality food and service to all of our customers, and we will take your feedback into consideration as we continue to improve. Thank you for letting us know about your experience.

13. Crime-fighting

Zack Seipert said, "I researched some famous unsolved murders, and ChatGPT laid out the crime, suspects, clues, and more." I bet experienced detectives could solve some of these cold cases.

14. Reduce bias

Joanne Taylor struggles to remove biases from her writing. She asked ChatGPT for ideas.

I asked ChatGPT to check a text for racial bias, and it did well.

15. Furnishings

I designed a small, unique table using ChatGPT and MidJourney. I edited ChatGPT's idea for clarity and brevity before entering it in MidJourney.

Results:

16. Serialize a novel

Scott Scowcoft led ChatGPT through iterations of a story text to create serialized novel frames. You can view it here.

17. Interview preparation

I was a guest on a sales podcast. Sales is related to marketing, but it's not my forte, so I asked ChatGPT to help me prepare for the interview. Its response:

Here are some interview questions about the future of sales and how to answer them.

How will technology affect sales in the future?

Technology will impact sales in the coming years. Automation and AI could be used to handle routine tasks, and data analysis and CRM could become more important. E-commerce and online sales channels are also reshaping the sales landscape.

How will the salesperson's role change?

Future sales roles may change in response to changing customer needs and expectations. Salespeople must be knowledgeable and consultative to help customers navigate complex products and services. Increasing importance of data analysis and CRM tools will require salespeople to understand and use technology effectively.

What skills will future sales professionals need?

Future salespeople will need many skills. Sales professionals must have strong communication and interpersonal skills, as well as product and service knowledge and the ability to use data analysis and CRM tools effectively. Adapting to changing customer needs and staying current on industry trends will also be important for sales success.

18. Gift ideas

I need Christmas gift ideas for my wife. I asked:

Suggest a gift idea under $200 for a middle-aged woman who loves The Eagles.

A middle-aged woman who loves The Eagles might like a vinyl record of "Hotel California" or "The Long Run." Many music fans enjoy the unique sound and experience of vinyl records.

Pair the record with a high-quality record player for a complete gift. The vinyl record and record player should fit within your $200 budget for an Eagles fan.

19. Explaining complex concepts

I entered: Explain quantum physics to me as if I were 10 years old.

Quantum physics is a branch of science that studies how tiny particles, like atoms and subatomic particles, behave. These particles are so small that they don’t always follow the same rules as the things we can see and touch every day.

For example, sometimes they can be in two places at the same time, or they can be friends with each other even if they are far apart. This might sound strange, but it’s what scientists have learned from studying these tiny particles. Quantum physics helps us understand how the world works at a very small scale, and it can also help us make new technologies, like computers that are much faster than the ones we have today.

20. AP-style editing

Many blogs and news publications follow AP Style. I asked ChatGPT to "edit using AP Style," and it did a great job.

And then... "Write a catchy headline for a blog post about 16 ChatGPT uses"

Here are some possible blog post titles for 20 creative ChatGPT uses:

“20 Fun and Creative Ways to Use ChatGPT: From Games to Quizzes and More”

“The Ultimate Guide to Using ChatGPT for Entertainment and Creativity”

“20 Reasons to Use ChatGPT: How it Can Enhance Your Gaming, Storytelling, and More”

“20 Entertaining Uses for ChatGPT You Never Knew Were Possible”

“Unleash Your Imagination with ChatGPT: 16 Creative and Fun Ways to Use it”

In a previous post, I said ChatGPT will change marketing careers forever. I have never made a statement like that in my life. Even in the early days of the internet, people were cautious.

This technology was just released two weeks ago, and over a million people are already using it. This is the fastest technology adoption in history.

Today's post offers inventive and entertaining ideas, but it's just the beginning. ChatGPT writes code, music, and papers.

Frank Andrade

3 years ago

I discovered a trick that lets me use ChatGPT to scrape websites successfully. Here's how it works.

This method scrapes websites with ChatGPT (demo with Amazon and Twitter)

In a recent article, I demonstrated how to scrape websites using ChatGPT prompts like “scrape website X using Python.”

But that doesn’t always work.

After scraping dozens of websites with ChatGPT, I realized that simple prompts rarely work for web scraping.

Using ChatGPT and basic HTML, we can scrape any website.

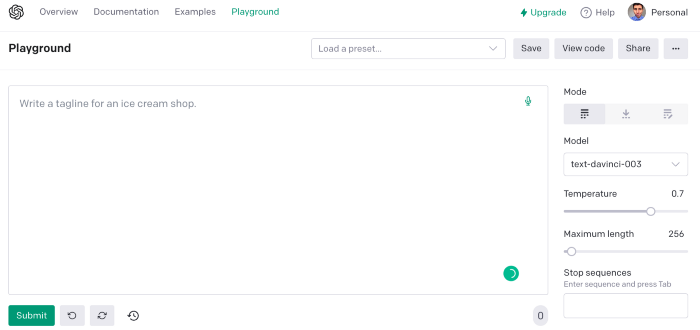

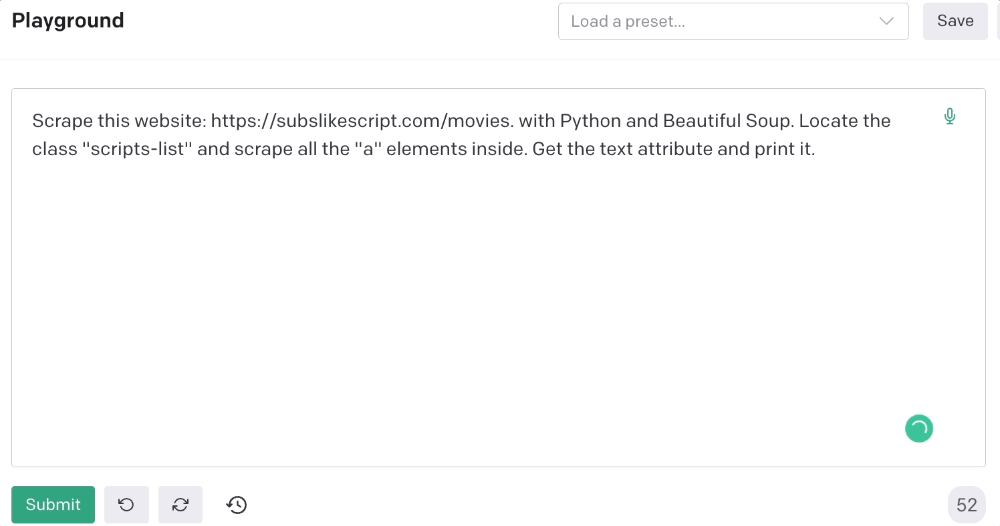

First things first: use ChatGPT's advanced version (Playground)

To scrape websites quickly, we need the enhanced version, ChatGPT Playground. It generates code faster and has fewer constraints.

Here’s how it looks.

The classic ChatGPT interface only lets you type a prompt. Playground is more customizable and generates code faster than the basic version.

No more delays or restrictions.

In this tutorial, we'll write our prompts in Playground.

How to Use ChatGPT to Scrape Any Website

To show you how to utilize ChatGPT to scrape any website, we'll use subslikescript, which lists movies.

Later in this post, I'll show you how to scrape Amazon and Twitter, but let's start easy.

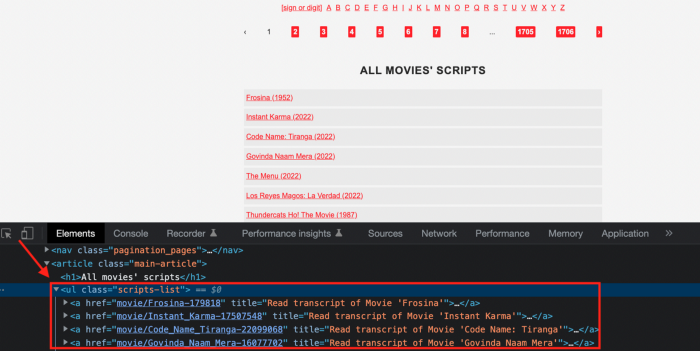

If we try a basic prompt like “scrape the movie titles on this website: https://subslikescript.com/movies”, it won’t scrape anything. This is where we need some HTML knowledge to build the right prompt for ChatGPT.

Let's scrape the movie titles shown above. First, inspect the webpage: right-click any element and choose Inspect.

You'll then see the page's HTML, as shown below.

Next, we identify the elements to scrape (those in the red box). The relevant pieces are the ul tag, the a tags inside it, and the class scripts-list.

Here's the prompt for movie titles.

Scrape this website: https://subslikescript.com/movies with Python and Beautiful Soup. Locate the element with tag “ul” and class “scripts-list”. Scrape all the “a” elements inside. Get the text attribute and print it.

Code generated:

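```python
import requests
from bs4 import BeautifulSoup

# Download the page that lists the movies
page = requests.get('https://subslikescript.com/movies/')

# Parse the HTML
soup = BeautifulSoup(page.content, 'html.parser')

# Locate the element with class "scripts-list" (the <ul> holding the titles)
scripts_list = soup.find(class_="scripts-list")

# Collect every <a> element inside it
all_a_elements = scripts_list.find_all('a')

# Print the text of each link, i.e. the movie title
for element in all_a_elements:
    print(element.get_text())
```

It extracts movie titles successfully.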
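The prompt's three instructions (find the ul with class scripts-list, collect the a elements inside it, print their text) map directly onto the generated code. To see that same logic without installing requests or Beautiful Soup, here is a minimal sketch that uses only Python's standard-library html.parser. The sample HTML and movie names are hypothetical, shaped like the structure described above, and the parser deliberately ignores edge cases such as nested lists.

```python
from html.parser import HTMLParser


class ScriptsListParser(HTMLParser):
    """Collect the text of every <a> inside <ul class="scripts-list">.

    Hypothetical helper mirroring the prompt: find the "ul" tag with class
    "scripts-list", then take the text of all "a" elements inside it.
    """

    def __init__(self):
        super().__init__()
        self.in_list = False  # inside the target <ul>?
        self.in_link = False  # inside an <a> within that <ul>?
        self.titles = []
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "ul" and "scripts-list" in classes:
            self.in_list = True
        elif tag == "a" and self.in_list:
            self.in_link = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag == "a" and self.in_link:
            self.titles.append("".join(self._buffer).strip())
            self.in_link = False
        elif tag == "ul" and self.in_list:
            self.in_list = False

    def handle_data(self, data):
        # Only keep text that sits inside a link in the target list
        if self.in_link:
            self._buffer.append(data)


def extract_titles(html):
    parser = ScriptsListParser()
    parser.feed(html)
    parser.close()
    return parser.titles


# Hypothetical markup: one matching list and one list that should be ignored
SAMPLE_HTML = """
<ul class="scripts-list">
  <li><a href="/movie/A">Movie A (2001)</a></li>
  <li><a href="/movie/B">Movie B (2002)</a></li>
</ul>
<ul class="other"><li><a href="/x">Not a title</a></li></ul>
"""

for title in extract_titles(SAMPLE_HTML):
    print(title)
```

The link in the second list is skipped because its ul lacks the scripts-list class, which is exactly why the prompt names both the tag and the class.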

Let's scrape Amazon and Twitter.

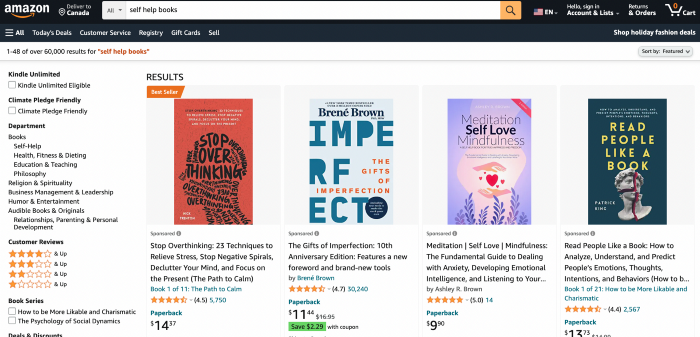

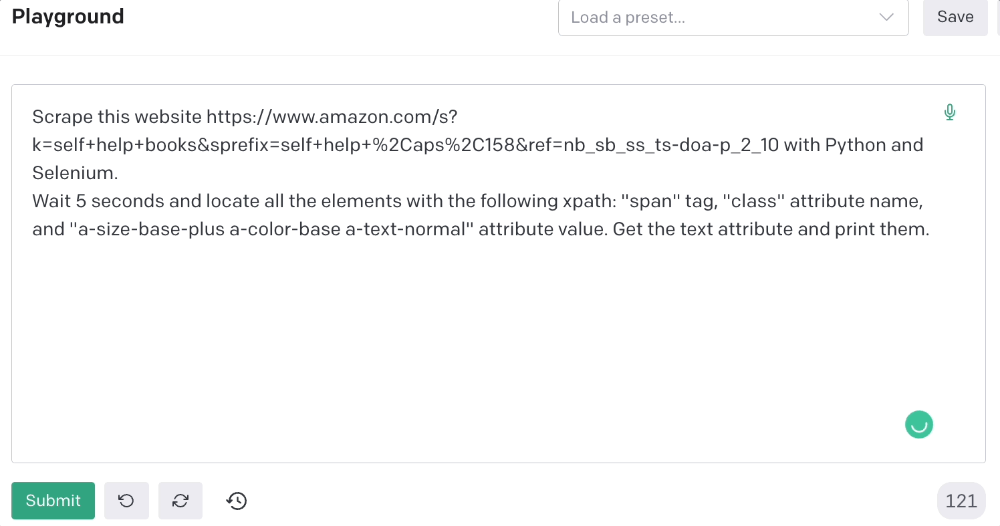

Using ChatGPT to scrape Amazon

Consider scraping Amazon for self-help books. First, copy the Amazon link for self-help books.

Here’s the link I got. The link depends on your location, so use mine if you want to replicate my results.

Now let's inspect a book title. Here's our element:

To extract the book titles, we need the tag name span, the attribute name class, and the attribute value a-size-base-plus a-color-base a-text-normal.

This time I'll use Selenium, so I'll add Selenium-specific instructions to the prompt, such as waiting 5 seconds and locating elements with an XPath.

Scrape this website https://www.amazon.com/s?k=self+help+books&sprefix=self+help+%2Caps%2C158&ref=nb_sb_ss_ts-doa-p_2_10 with Python and Selenium.

Wait 5 seconds and locate all the elements with the following xpath: “span” tag, “class” attribute name, and “a-size-base-plus a-color-base a-text-normal” attribute value. Get the text attribute and print them.

Code generated: (I only had to manually add the path where my chromedriver is located).

from selenium import webdriver

from selenium.webdriver.common.by import By

from time import sleep

#initialize webdriver

driver = webdriver.Chrome('<add path of your chromedriver>')

#navigate to the website

driver.get("https://www.amazon.com/s?k=self+help+books&sprefix=self+help+%2Caps%2C158&ref=nb_sb_ss_ts-doa-p_2_10")

#wait 5 seconds to let the page load

sleep(5)

#locate all the elements with the following xpath

elements = driver.find_elements(By.XPATH, '//span[@class="a-size-base-plus a-color-base a-text-normal"]')

#get the text attribute of each element and print it

for element in elements:

print(element.text)

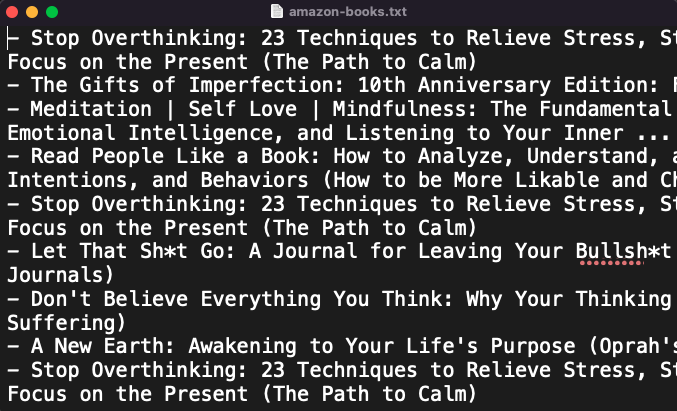

#close the webdriver

driver.close()It pulls Amazon book titles.

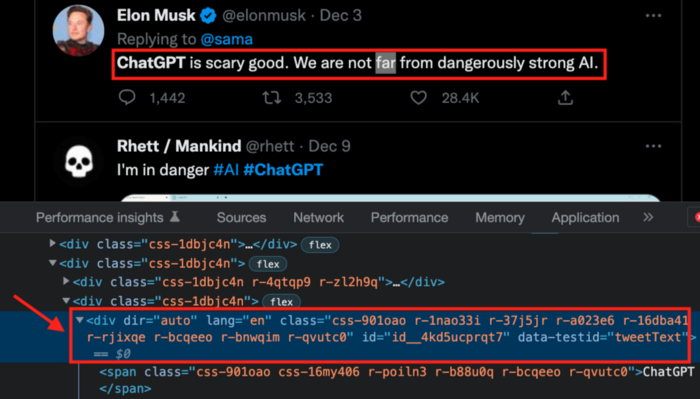
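The XPath in the Amazon prompt is assembled from three pieces: a tag (span), an attribute name (class), and an attribute value. The hypothetical build_xpath helper below builds such expressions and, purely for illustration, runs one against a tiny made-up snippet with Python's built-in xml.etree.ElementTree, which understands only a limited XPath subset (real pages are messy HTML, which is why the scripts drive a browser through Selenium). One behavior worth knowing: @class="…" compares the entire attribute string, so an element whose class list contains one extra class is not matched.

```python
import xml.etree.ElementTree as ET


def build_xpath(tag, attribute, value=None):
    """Assemble an XPath from a tag, an attribute name and an optional value.

    Hypothetical helper. With a value, it matches elements whose attribute
    equals that exact string; without one, any element that has the attribute.
    """
    if value is None:
        return f"//{tag}[@{attribute}]"
    return f'//{tag}[@{attribute}="{value}"]'


AMAZON_XPATH = build_xpath("span", "class", "a-size-base-plus a-color-base a-text-normal")
print(AMAZON_XPATH)
# //span[@class="a-size-base-plus a-color-base a-text-normal"]

# Hypothetical, well-formed snippet used only to show how the expression matches
SNIPPET = """
<div>
  <span class="a-size-base-plus a-color-base a-text-normal">Book One</span>
  <span class="a-size-base-plus a-color-base a-text-normal extra">Book Two</span>
  <span class="a-price">$9.99</span>
</div>
""".strip()
root = ET.fromstring(SNIPPET)

# ElementTree supports only a subset of XPath and needs a relative path,
# so the expression is prefixed with "." before searching.
matches = root.findall("." + AMAZON_XPATH)
print([m.text for m in matches])  # ['Book One']

# The attribute-presence form matches every <span> that has a class at all
print(len(root.findall("." + build_xpath("span", "class"))))  # 3
```

If the exact match is too strict on a real page, browser XPath engines (including the one Selenium uses) support contains(@class, "…"); ElementTree does not.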

Utilizing ChatGPT to scrape Twitter

Say you want to scrape tweets about ChatGPT. Search Twitter for ChatGPT and copy the URL.

Here’s the link I got. Next, inspect a tweet. Here's our element:

To extract a tweet, we use the div tag and the lang attribute.

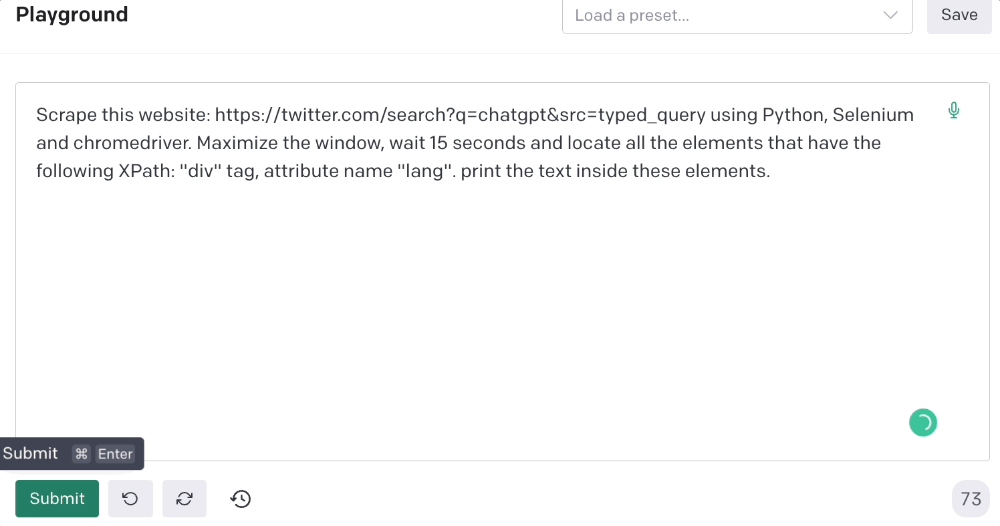

Again, we'll use Selenium.

Scrape this website: https://twitter.com/search?q=chatgpt&src=typed_query using Python, Selenium and chromedriver.

Maximize the window, wait 15 seconds and locate all the elements that have the following XPath: “div” tag, attribute name “lang”. Print the text inside these elements.

Code generated: (again, I had to add the path where my chromedriver is located)

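```python
import time

from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By

# Start Chrome with the local chromedriver (Selenium 4 style)
driver = webdriver.Chrome(service=Service("/Users/frankandrade/Downloads/chromedriver"))
driver.maximize_window()

driver.get("https://twitter.com/search?q=chatgpt&src=typed_query")

# Give the page 15 seconds to load the tweets
time.sleep(15)

# find_elements_by_xpath was removed in recent Selenium 4 releases,
# so use find_elements(By.XPATH, ...) instead
elements = driver.find_elements(By.XPATH, "//div[@lang]")

for element in elements:
    print(element.text)

driver.quit()
```

You'll get the first 2 or 3 tweets from a search. To scrape more tweets, you'd have to make the page load more results first (for example, by scrolling down several times).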
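Both Selenium scripts wait a fixed number of seconds (5 and 15) before reading the page, which either wastes time or turns out to be too short. Selenium itself offers explicit waits (WebDriverWait combined with expected_conditions such as presence_of_all_elements_located). The sketch below shows the underlying idea in plain Python: poll a condition until it succeeds or a timeout expires. The wait_until helper and the simulated page are hypothetical; in the Twitter script, the condition would be something like lambda: driver.find_elements(By.XPATH, "//div[@lang]"), which returns an empty (falsy) list until tweets appear.

```python
import time


def wait_until(condition, timeout=15.0, interval=0.5):
    """Call `condition` repeatedly until it returns something truthy.

    Returns that value, or raises TimeoutError once `timeout` seconds pass.
    Hypothetical helper illustrating an explicit wait; it is not Selenium's API.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(interval)


# Simulated page (hypothetical): the "tweets" appear on the third check
checks = {"count": 0}


def fake_find_tweets():
    checks["count"] += 1
    return ["tweet 1", "tweet 2"] if checks["count"] >= 3 else []


tweets = wait_until(fake_find_tweets, timeout=5, interval=0.01)
print(tweets)  # ['tweet 1', 'tweet 2']
```

The function returns as soon as the elements exist, so a fast page costs a fraction of a second instead of the full 15.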

Congratulations! You scraped websites without coding by using ChatGPT.

Duane Michael

3 years ago

Don't Fall Behind: 7 Subjects You Must Understand to Keep Up with Technology

As technology develops, you should stay up to date

You don't want to fall behind, do you? This post covers 7 tech-related things you should know.

You'll learn how to operate your computer (and other electronic devices) like an expert and how to leverage the Internet and social media to create your brand and business. Read on to stay relevant in today's tech-driven environment.

You must learn how to code.

Coding is the language of the future: it's how we talk to computers. Learn to code to stay ahead.

Try Codecademy or Code School. There are also numerous free courses on platforms like Coursera or Udacity, but they take a long time and aren't always self-paced, so it can be challenging to find the time.

Artificial intelligence (AI) will transform all jobs.

Our skill sets must adapt along with technology, and AI is a must-know topic: thanks to advances in machine learning, it will transform every kind of job.

Here are seven AI subjects you must know.

What is artificial intelligence?

How does artificial intelligence work?

What are some examples of AI applications?

How can I use artificial intelligence in my day-to-day life?

What jobs have a high chance of being replaced by artificial intelligence and how can I prepare for this?

Can machines replace humans? What would happen if they did?

How can we manage the social impact of artificial intelligence and automation on human society and individual people?

Blockchain Is Changing the Future

Few of us know how Bitcoin and blockchain technology function or what impact they will have on our lives. Blockchain offers safe, transparent, tamper-proof transactions.

It may alter everything from business to voting. Here are seven must-know blockchain topics:

What is blockchain?

How does the blockchain function?

What advantages does blockchain offer?

What possible uses for blockchain are there?

What are the dangers of blockchain technology?

What are my options for using blockchain technology?

What does blockchain technology's future hold?
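To make the "tamper-proof" claim above concrete, here is a toy sketch, assuming a simplified model in which each block stores only some data plus the SHA-256 hash of the previous block. Real blockchains add consensus rules (such as Bitcoin's proof of work), Merkle trees, and digital signatures, none of which appear here; the function names and transactions are hypothetical.

```python
import hashlib
import json


def block_hash(block):
    """SHA-256 of a block's contents, serialized deterministically."""
    payload = json.dumps(block, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def make_chain(records):
    """Link each record to the hash of the block before it (toy model)."""
    chain = []
    prev = "0" * 64  # placeholder "previous hash" for the first block
    for i, data in enumerate(records):
        block = {"index": i, "data": data, "prev_hash": prev}
        chain.append(block)
        prev = block_hash(block)
    return chain


def is_valid(chain):
    """Every block must store the current hash of its predecessor."""
    for prev_block, block in zip(chain, chain[1:]):
        if block["prev_hash"] != block_hash(prev_block):
            return False
    return True


chain = make_chain(["Alice pays Bob 5", "Bob pays Carol 2", "Carol pays Dan 1"])
print(is_valid(chain))  # True

chain[0]["data"] = "Alice pays Bob 500"  # tamper with history
print(is_valid(chain))  # False
```

Strictly speaking, hash linking makes history tamper-evident rather than tamper-proof: editing a block breaks the link stored in the next one, but an attacker could recompute every later hash. Agreement among many independent copies of the chain is what makes such rewrites impractical. This toy check also cannot catch an edit to the last block, which has no successor.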

Cryptocurrencies are here to stay

Cryptocurrencies use cryptography to secure transactions and control the creation of new units. Decentralized cryptocurrencies aren't controlled by governments or financial institutions.

Bitcoin, the first cryptocurrency, was launched in 2009. Cryptocurrencies can be bought and sold on exchanges, including decentralized ones.

Bitcoin is here to stay.

Bitcoin isn't a fad, despite what some say. Its popularity has grown steadily since its launch in 2009, and it's worth learning about now.

With other cryptocurrencies emerging, many people wonder whether Bitcoin still has a bright future. That curiosity is natural: Bitcoin is popular now, and millions of people hope their investments in it will pay off.

Thankfully, they will. Bitcoin is still running strong a decade after its birth. Here's why.

The Internet of Things (IoT) is no longer just a trendy term.

The IoT consists of physical objects that are connected to the internet and can share data. It is young but developing fast.

Some forecasts put the number of IoT-connected devices at 20 billion by 2023. That's a lot of data! IT teams must keep up with these fast-expanding technologies. Here are four must-know IoT topics:

Know the fundamentals: First things first! Before diving into more technical jargon, you should have a basic understanding of what an IoT system is. Before exploring how something works, it's crucial to understand what you're working with.

Know security: Security threats don't stand still, and technology advances at a dizzying pace. As IT professionals, it's our duty to know how our systems are vulnerable to intrusion and to make sure the necessary precautions are taken to protect them.

Be able to discuss cloud computing: The cloud has changed considerably over the past several years, and so has the way organizations use it. Knowing what kind of cloud computing your firm or clients use will help you make the right recommendations.

Understand Bring Your Own Device (BYOD) and Mobile Device Management (MDM): BYOD and MDM policies keep growing in popularity, largely because they can lower costs while boosting the productivity of employees who use these services responsibly.

IoT Security is key

As more gadgets connect, they must be secure. IoT security includes securing devices and encrypting data. Seven IoT security must-knows:

Fundamental security concepts

Authorization and identification

Cryptography

Digital certificates

Digital signatures

Private key encryption

Public key encryption
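Several items on this list (identification, signatures, cryptography) come down to one device proving that a message really came from it and wasn't altered on the way. Python's standard library has no public-key signature support, so this minimal sketch swaps in a related but different technique: an HMAC (hash-based message authentication code) computed with a shared secret key. The device name, key handling, and message format are hypothetical. Unlike a true digital signature, an HMAC can be produced by anyone who holds the shared key, and it does not encrypt the data.

```python
import hashlib
import hmac
import secrets

# Hypothetical shared secret, provisioned on both the device and the server
DEVICE_KEY = secrets.token_bytes(32)


def sign_reading(key, message):
    """Return an HMAC-SHA256 tag the server can use to check the message."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_reading(key, message, tag):
    """Recompute the tag and compare in constant time."""
    expected = sign_reading(key, message)
    return hmac.compare_digest(expected, tag)


reading = b'{"device": "thermostat-42", "temp_c": 21.5}'
tag = sign_reading(DEVICE_KEY, reading)

print(verify_reading(DEVICE_KEY, reading, tag))  # True
tampered = b'{"device": "thermostat-42", "temp_c": 35.0}'
print(verify_reading(DEVICE_KEY, tampered, tag))  # False
```

hmac.compare_digest is used instead of == so that the comparison time does not leak how many characters of a forged tag were correct.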

Final Thoughts

With so much going on in the world, it can be hard to keep up with technology. That's why we've put together this list of seven tech must-knows.

You might also like

Hunter Walk

2 years ago

Is it bad of me to want our portfolio companies to generate greater returns for outside investors than they did for us as venture capitalists?

Wishing for Lasting Companies, Not Penny Stocks or Goodwill Write-Downs

Get me a NASCAR-style cremation urn covered in company logos (notice to the executor of my will: there's gonna be a lot of weird requests). I believe in working on projects that would be on your tombstone. Since the Homebrew logo is already tattooed on my shoulder, extending the portfolio to my posthumous memorial is easy. But this isn't an IRR victory lap; it's a hope that the companies we worked with will last beyond my lifetime.

![a little boy planting a dollar bill in the ground and pouring a watering can out on it, digital art [DALL-E]](https://storage.googleapis.com/int3grity/posts/V62hkReDx56S/images/vMwzqrYeXaYnUIMXAdTY9)

Venture investors too often take credit for startups, or distance themselves from them, depending on how things turn out. With successful companies, we tell stories of crucial introductions, strategy conversations, and other value we added. With failures, even when our role involved Board service or the company committed systematic ethical violations, it's "I was just a small investor, so there wasn't much I could do." I'm guilty of this too, so I'm throwing stones from inside a glass house (although we try to own our decisions through the whole lifecycle).

After an exit, a company's trajectory usually stops being our concern. We're off the cap table, no longer shareholders (or shrinking ones as we sell off or distribute our shares), and eventually we leave the Board. You can cheer for the team or forget about it, but you've let the company go and it's back to portfolio work.

As I look at the downward trajectory of most SPACs and other tarnished IPOs from the last few years, I wonder how I would feel if those were my legacy. Is my job done? Yes. When you invest in a business, the odds are against it surviving, let alone thriving and finding its place in the sun. SPAC sponsors, institutional buyers, retail investors: trading freely in an open market is their right. Risking and losing capital is the system working! But

We were lead or co-lead investors in our first three funds, but as more VCs invested in our companies, we were pushed down the cap table. Voting your shares rarely matters; supporting the company when it needs it does. Being valuable, consistent, and helping the company improve builds trust with the founders.

I hope every startup we back becomes a successful public company, before, during, and after we benefit. Call it my view of American capitalism. Sure, there's a lot of junk trading under stock tickers, and I support all kinds of regulatory simplification (I'm also a personal investor in the Long-Term Stock Exchange). Yet being owned by a broad group of investors and generating real gains for them is great. So is seeing someone you met when they were just starting out become a public-company CEO without losing their voice, leadership, or values.

I'm just thinking about what we can do from the start to realize value from our investments and build companies with bright futures. Maybe seed-stage venture investors have little influence on those outcomes, but I'm not comfortable giving up that responsibility.

Daniel Clery

3 years ago

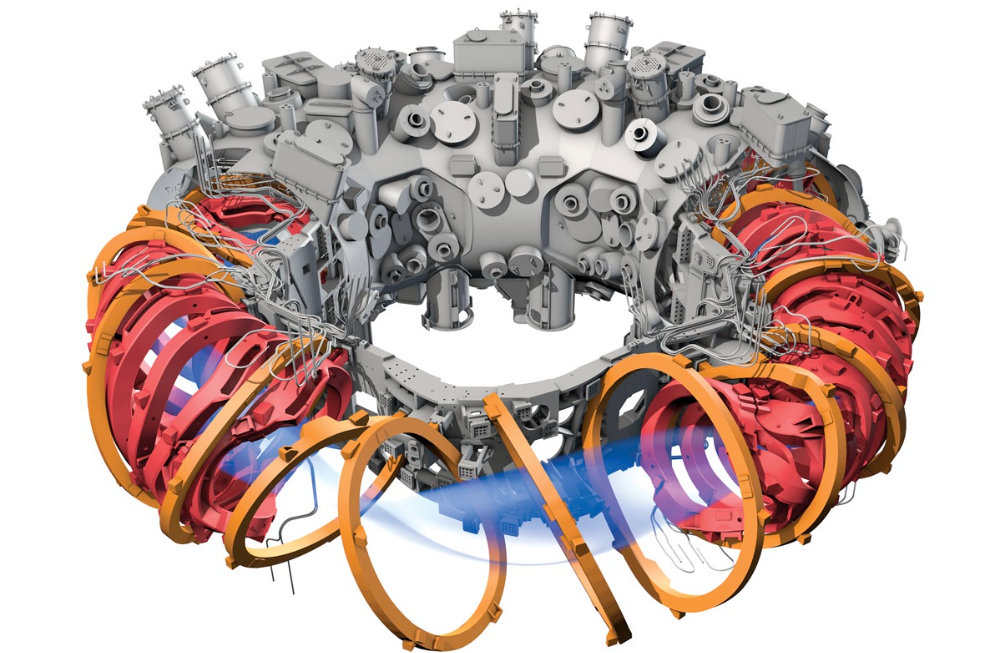

Twisted device investigates fusion alternatives

German stellarator revamped to run longer, hotter, compete with tokamaks

Tokamaks have dominated the search for fusion energy for decades. Just as ITER, the world's largest and most expensive tokamak, nears completion in southern France, a smaller, twistier testbed will start up in Germany.

If the 16-meter-wide stellarator can match or outperform similar-size tokamaks, fusion experts may rethink the field's future. Stellarators can keep their superhot gases stable enough to fuse nuclei and produce energy, and in theory they can run indefinitely, whereas tokamaks must pause to reset their magnet coils.

The €1 billion German machine, Wendelstein 7-X (W7-X), is already achieving "tokamak-like performance" in short runs at keeping particles and heat from escaping the superhot gas, says plasma physicist David Gates. If W7-X can sustain that over long runs, "it will be ahead," he says. "Stellarators are back in the game," says Eindhoven University of Technology theorist Josefine Proll. A few startup companies, including one that Gates is leaving the Princeton Plasma Physics Laboratory to join, are developing their own stellarators.

W7-X has been running at the Max Planck Institute for Plasma Physics (IPP) in Greifswald, Germany, since 2015, albeit only at low power and for brief runs. W7-X's developers took it down and replaced all inner walls and fittings with water-cooled equivalents, allowing for longer, hotter runs. The team reported at a W7-X board meeting last week that the revised plasma vessel has no leaks. It's expected to restart later this month to show if it can get plasma to fusion-igniting conditions.

Wendelstein 7-X's water-cooled inner surface allows for longer runs.

HOSAN/IPP

Both stellarators and tokamaks create magnetic cages that hold gases hot enough to melt any metal. The gas is heated with microwaves or particle beams. The extreme temperatures turn it into a plasma, a seething mix of separated nuclei and electrons, and cause the nuclei to fuse, releasing energy. A fusion power plant would use deuterium and tritium, which react readily. Research machines like W7-X, which aren't meant to generate energy, avoid tritium and use hydrogen or deuterium instead.

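Both tokamaks and stellarators use electromagnetic coils wound around a doughnut-shaped vessel to create plasma-confining magnetic fields. But the field is stronger near the doughnut's central hole than at its outer edge, and this imbalance makes the plasma drift toward the reactor's wall.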
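A short application of Ampère's law shows why the field is stronger near the hole. For an idealized torus wound with N coil turns carrying current I, integrating around a circle at distance R from the central axis gives:

$$
\oint \mathbf{B}\cdot d\boldsymbol{\ell} = 2\pi R\,B_\phi = \mu_0 N I
\quad\Longrightarrow\quad
B_\phi(R) = \frac{\mu_0 N I}{2\pi R}
$$

Because the field falls off as 1/R, it is strongest on the inner side of the ring. A charged particle of mass m, charge q, and perpendicular speed v⊥ gyrating in such a nonuniform field experiences the gradient drift

$$
\mathbf{v}_{\nabla B} = \frac{m v_\perp^{2}}{2 q B^{3}}\,\mathbf{B}\times\nabla B,
$$

whose direction depends on the sign of q, so ions and electrons drift apart vertically. The resulting electric field then pushes the whole plasma outward toward the wall. This is the textbook idealization of a simple toroidal field, not a model of W7-X's actual coils.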

Tokamaks counter the drift by driving an electric current around the ring through the plasma itself. This current creates a magnetic field that twists the field lines and stabilizes the ionized gas. Stellarators instead twist the field with the shape of their magnetic coils, not with the plasma. Once plasma physicists had powerful enough supercomputers, they could optimize stellarator magnets to improve plasma confinement.

W7-X is the first large, optimized stellarator, with 50 six-ton superconducting coils. Its construction began in the mid-1990s and cost roughly twice the €550 million originally budgeted.

The wait hasn't disappointed researchers. "The machine operated immediately," says W7-X director Thomas Klinger. "It's a friendly machine. It did everything we asked." Tokamaks are prone to "instabilities" (the plasma bulging or wobbling) or violent "disruptions," sometimes linked to an abrupt halt of the plasma current. IPP theorist Sophia Henneberg notes that stellarators don't use a plasma current, which "removes an entire branch" of instabilities.

In early stellarators, the magnetic field geometry drove slower particles to follow banana-shaped orbits until they collided with other particles and leaked energy. Gates believes W7-X's ability to suppress this effect implies its optimization works.

W7-X loses heat through different forms of turbulence, which push particles toward the wall. Theorists have only lately mastered simulating turbulence. W7-X's forthcoming campaign will test simulations and turbulence-fighting techniques.

A stellarator can run continuously, unlike a tokamak, which operates in pulses. W7-X has already run for 100 seconds, long by tokamak standards, but only at low power. The device's uncooled microwave and particle heating systems produced just 11.5 megawatts; the upgrade doubles the heating power. High temperatures, high plasma density, and long runs will test stellarators' potential for fusion power. Klinger wants to heat ions to 50 million degrees Celsius for 100 seconds. That would make W7-X "a world-class machine," he argues. The team will then push for 30-minute runs. "We'll move step-by-step," he says.

W7-X's success has inspired VCs to finance entrepreneurs creating commercial stellarators. Startups must simplify magnet production.

Princeton Stellarators, created by Gates and colleagues this year, has $3 million to build a prototype reactor without W7-X's twisted magnet coils. Instead, it will use a mosaic of 1000 square high-temperature superconducting (HTS) coils on the outside of the plasma vessel. By adjusting each coil's magnetic field, operators can change the shape of the overall applied field. "It moves coil complexity to the control system," Gates says. The company first intends to build a reactor that fuses cheap, abundant deuterium to produce neutrons for making radioisotopes. If that succeeds, it plans to go on to build a power-producing reactor.

Renaissance Fusion, situated in Grenoble, France, raised €16 million and wants to coat plasma vessel segments in HTS. Using a laser, engineers will burn off superconductor tracks to carve magnet coils. They want to build a meter-long test segment in 2 years and a full prototype by 2027.

Type One Energy in Madison, Wisconsin, won Department of Energy (DOE) funding to bend HTS cables into stellarator magnets. The company used computer-controlled etching equipment to carve twisting grooves in metal that guide the coiled cables. David Anderson of the University of Wisconsin, Madison, says advanced manufacturing technology is what makes the stellarator feasible.

Anderson says W7-X's next phase will give stellarator research a boost. "Half-hour discharges are steady-state," he says. "This is a big deal."

ʟ ᴜ ᴄ ʏ

3 years ago

The Untapped Gold Mine of Inspiration and Startup Ideas

I joined the 1000 Digital Startups Movement (Gerakan 1000 Startup Digital) in 2017 and learned a lot about the startup sector. My previous essay outlined what a startup is and what founders must prepare. In this one, I'll offer raw ideas that could lead to better products.

Intro

A good startup solves a problem. Problems can come from many fields: the environment, economics, energy, transportation, logistics, maritime affairs, forestry, livestock, education, tourism, law, arts and culture, communication, and information. Everything below is only a basic idea, offered as inspiration, and needs further mapping and validation. Learn how to build a startup properly to maximize your chances of a successful launch.

Adrian Gunadi (Investree Co-Founder) taught me that a Founder or Co-Founder must be willing to be a CEO: a Chief Everything Officer. You have to do everything yourself, from drafting proposals to managing finances and scheduling appointments. If you are the best, the best people will come to you. It's easier than consulting Andy Zain (Kejora Capital Founder).

Description

To understand your idea better, try to answer the following questions:

- Describe your idea/application

Maximum 1000 characters.

- Background

Explain the reasons that prompted you to realize the idea/application.

- Objective

Explain the expected goals of the creation of the idea/application.

- Solution

Explain why your idea is the right solution to the problem at hand.

- Uniqueness

What makes your idea/app unique?

- Market share

Who needs your idea, and who is looking for it?

- Marketing Ways and Business Models

What is the best way to sell your idea and what is the business model?

Not everything here is a startup idea. It's meant to inspire creativity and new perspectives.

Ideas

#Application

1. Can medical students practice operating on real patients? Usually not. Applications that train prospective doctors to identify body organs and their placement are useful. At an advanced stage, the app could include many scenarios so future doctors can practice operating on virtual patients with specific ailments. If they make a mistake, they start over. This way, future doctors gain confidence and make fewer mistakes.

2. VR (virtual reality) technology lets people view a 3D space from afar. Similar technology was later used to sell properties digitally, so buyers could see the interior and the contents of each room. Every technology has drawbacks, though: a virtual tour that shows a home's contents can be a gold mine for burglars. VR can also let prospective students see a campus's facilities, and hotels can use it to promote their rooms.

3. How can retail entrepreneurs maximize sales? Start with sales data on the most popular goods. By tracking sales figures by product and by brand or type, entrepreneurs can avoid overstocking. Walmart computerized its processes to track products from the manufacturer to the store, and through its Retail Link system, suppliers can step in immediately as products sell out.

4. A called-off wedding is something everyone hopes to avoid. When it does happen, the financial loss "rubs salt into the wound." On the I Do Now I Don't website, Americans whose weddings were called off can resell their jewelry to other brides-to-be. Some couples who cancel want their down payment (DP) and dress money back, while others are looking for a wedding that meets particular criteria, such as an early date and a specific venue. Create a DP takeover marketplace that serves both sides.
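The retail idea in item 3 boils down to turning sales history into order quantities. The Python sketch below is not how Walmart's Retail Link works; it is a minimal illustration, assuming a simple average-of-recent-weeks forecast. The function `reorder_quantity`, its parameters (`weeks_of_cover`, `safety_stock`), and the sample products and figures are all hypothetical.

```python
import math
from statistics import mean


def reorder_quantity(weekly_sales, on_hand, weeks_of_cover=2, safety_stock=0):
    """Suggest how many units to order (hypothetical rule of thumb).

    Forecast weekly demand as the average of recent weekly sales, aim to
    hold enough stock for `weeks_of_cover` weeks plus a safety buffer, and
    order the gap. Round up so we never fall short; never order a
    negative amount (that would mean the item is already overstocked).
    """
    forecast = mean(weekly_sales)
    target = forecast * weeks_of_cover + safety_stock
    return max(0, math.ceil(target - on_hand))


# Hypothetical weekly unit sales, tracked per product and brand.
sales = {
    ("instant noodles", "Brand A"): [120, 135, 128, 140],
    ("instant noodles", "Brand B"): [30, 25, 28, 22],
}
stock = {
    ("instant noodles", "Brand A"): 90,
    ("instant noodles", "Brand B"): 80,
}

for (product, brand), history in sales.items():
    qty = reorder_quantity(history, stock[(product, brand)])
    print(f"{product} ({brand}): order {qty}")
# Brand A: order 172 (fast seller, low stock)
# Brand B: order 0 (already covered, so no overstocking)
```

A plain average reacts slowly to trends and seasons, so a real shop would likely want a better forecast, but the shape of the decision (forecast demand, set a target level, order the gap) stays the same.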

#Games

1. As in the movie, players must exit the maze within 3 minutes of entering, or its layout will change, forcing them to rethink their strategy. After a few stages, the time between transformations gets shorter.

2. Treasure hunts involve following clues to uncover hidden goods. Here, many sponsors take part in a single game, and participants choose which hunt to play based on the prizes. Say X-mart is a sponsor and provides riddles or puzzles that lead to a prize in its store. Using GPS and AR (augmented reality), players collect points and, once they have enough, trade them for a gift. Players can team up to improve their chances of success.

3. Where's Wally? Where's Wally shows a dense picture packed with objects and many Wally-like characters. We must find the real Wally, his companions, and a target object. Make a game with a map where players must find objects to reach the next level. For example, the player must find 5 artifacts placed at random in an Egyptian-style mansion. The room also contains standard tickets, pass tickets, and gold tickets that can be picked up and kept, as well as a wall-mounted carpet that can be stored but not searched; it turns out to be a flying carpet that can carry the player across obstacles or to a different place. Regular tickets are scattered widely because they can buy extra lives or items. At higher levels, a black ticket can reduce your regular tickets. Objects can explode, scattering items already collected. If a player runs out of time, they can exchange a ticket for more.
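The maze game in item 1 needs a rule for how the 3-minute transformation timer shrinks as players advance. A minimal Python sketch, assuming the timer drops by a fixed step every few stages and never falls below a floor; `transform_interval` and its default values (20 seconds every 3 stages, 60-second minimum) are hypothetical tuning choices, not part of the original idea.

```python
def transform_interval(stage, base_seconds=180, shrink_every=3,
                       shrink_by=20, minimum=60):
    """Seconds before the maze reshuffles at a given stage (stages start at 1).

    Hypothetical tuning: start at 3 minutes (180 s), cut `shrink_by`
    seconds after every `shrink_every` stages, never go below `minimum`.
    """
    if stage < 1:
        raise ValueError("stage must be >= 1")
    reductions = (stage - 1) // shrink_every
    return max(minimum, base_seconds - reductions * shrink_by)


for stage in (1, 3, 4, 7, 20):
    print(f"stage {stage}: maze changes every {transform_interval(stage)} s")
```

The floor keeps late stages playable; without it, the interval would eventually reach zero or go negative.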

#TVprogram

1. Airports are full of visitors arriving for different purposes. A show that invites tourists to live in the city for 1 or 2 days would be intriguing to watch.

2. Many professions exist. Most people know roughly what carpenters, cooks, and lawyers do. But does HRD (Human Resource Development) only recruit new employees? Many people don't know what it takes to become a CEO, CMO, COO, CFO, or CTO. Showing young people what, say, a Program Officer at an NGO does can help them choose a career.

#StampsCreations

Philatelists know that only governments can issue stamps. I hope stamps become more creative, so they are worth more.

1. Thermochromic pigments (leuco dyes) are well known for changing color with temperature. If these pigments are applied to a black-and-white batik stamp, for example, the black turns transparent and reveals the underlying color when warmed by a touch.

2. In 2012, Liechtenstein Post issued a laser-cut Chinese zodiac stamp. Belgium (Bruges Market Square, 2012) and Taiwan (Swallowtail Butterfly, 2009), among others, have done the same. Why not make a laser-cut stencil portrait of the president or king/queen?

3. Each country needs its own identity, like Taiwan's silk and bamboo stamps. Draw on your country's history. Using traditional paper such as washi (Japan), hanji (Korea), or daluang/saeh (Indonesia) can introduce a country's culture.

4. Garbage has long been a problem. Bagasse, banana fronds, or corn husks can be used as stamp material.

5. Austrian Post issued a stamp containing meteorite dust in 2006; the dust came from a meteorite found in Morocco in 2004. The Rock of Gibraltar appeared on Gibraltar's stamps in 2002. What is special about your country? East Java has the Lapindo mud flow, and stamps containing Lapindo mud would be popular. Alternatively, the reddish sand of Pink Beach in East Nusa Tenggara could be used instead of mud.

#PostcardCreations

1. Map postcards are popular because they make finding places easier. Try a laser-cut road map pattern in 200 gsm paper glued onto 400 gsm paper, which serves as the writing surface. Vision-impaired people can read laser-cut maps by touch.

2. Regional art can be promoted by tucking traditional textiles into postcards.

3. A thin canvas or plain paper on the card's front allows the giver to be creative.

4. What crop residues are available locally? Cork stoppers, corn husks, and rice husks can be recycled into postcard material.

5. Have you seen a dried-flower bookmark? Place dried flowers on the postcard and cover them with mica. If you're worried about losing the flowers, you can glue them down or make an envelope for the postcard.

6. Wooden postcards may be common by now; instead, try a 0.2 mm copper plate engraved with an image and attached to a postcard that serves as the writing surface.

7. Molded paper pulp is used to hold eggs, smartphones, and food. Press smooth paper pulp into a mold of the desired image, such as the Golden Gate Bridge, and paste it on your card.

8. Postcards can promote perfume. When customers rub the perfume image on the card, they smell the scent.

#Tour #Travel

Tourism activities can be tailored to tourists' interests or needs, and each type of tour serves a different purpose.

Let's define the main types of tours by purpose.

A holiday tour is planned and taken by its participants to relax, have fun, and enjoy themselves.

A familiarization tour is designed to help travelers learn more about (survey) places related to their line of work.

An educational tour aims to give visitors knowledge of, or an overview of, the field of work at the places they visit.

The main goal of a scientific tour is to investigate a scientific field and gain knowledge.

A pilgrimage tour is one designed to engage in acts of worship.

A special mission tour has a specific goal, such as a trade mission or an artistic project.

A hunting tour offers organized recreational animal hunting, only where permitted by local authorities.

Every part of life has tourism potential. Activities include:

1. People who want to volunteer can join humanitarian-themed trips run in collaboration with NGOs. The profit from this activity isn't huge, but consider its environmental impact.

2. Want to escape the city? Meditation travel can help. Beautiful spots around the globe can help people forget their worries, and a certified yoga or meditation teacher can help travelers release negative energy.

3. Ever visited a prison? Some prisons, such as those for minors under 17, are open to visitors. This type of tourism can support inmates mentally as they work toward a brighter future.

4. Have you ever taken a factory tour or study tour? Consider study tours outside of school, for adults who have already finished their education. Not everyone had the chance in school to tour industries, workplaces, or embassies to learn and be inspired. In Japan, Shoyeido (an incense maker) and Royce (a chocolate maker) offer factory tours.

5. Develop educational tourism around fields like astronomy and archaeology. So far, only a few astronomy enthusiasts have promoted astronomy tourism. In Indonesia, archaeological activities focus on site preservation, and to take part, members of the public such as office workers must complete a series of training sessions (not everyone can take time off from their routine). Archaeological tourism remains limited, whether organized by history and culture enthusiasts or offered as regional tours.

6. Have you ever longed to observe a film being made or your favorite musician rehearsing? Such tours can motivate young people to pursue entertainment careers.

7. Pamper pets to reduce their stress. Many pet owners don't have time to take their pets on walks or outings. Such premium services would target wealthy owners.

8. A quirky idea: tours for people with unconventional partners. Some people marry inanimate objects or animals and want to make their partner happy; others keep a late partner's ashes and cherish them.

#MISCideas

1. Fashion is a lifestyle, so people are always looking for new materials. Chicken feet, geckos, shed snakeskins, mice, bats, and fish skins have also been used. These materials definitely still need improvement.

2. As fuel supplies become scarcer, people look for other energy sources. Sound is an underused renewable energy source. According to one study, Batechsant (Battery Technology of Sound Power Plant) converts environmental noise into electrical energy. South Korean researchers have used sound-driven piezoelectric nanowire nanogenerators to recharge cell phone batteries. The Batechsant system uses existing ambient noise to supply electricity for street lamps, aviation, and ships. The sound of waterfalls could also power hard-to-reach locations.

3. A New York Times reporter said IQ doesn't guarantee success. Our school system prioritizes IQ over EQ (Emotional Quotient). EQ is a form of human intelligence that allows a person to perceive and analyze the dynamics of their emotions when interacting with others (and with themselves). Some believe EQ is a bigger driver of success than IQ, so EQ training deserves more attention. School stakeholders, teachers, and parents should act as role models to improve children's EQ.

4. Teaching focuses more on theory than practice, so students are less eager to explore and easily forget what they learned if they weren't paying attention. Has an engineer ever made bricks from arid red soil? In Morocco, builders without college degrees make weatherproof bricks from red soil without special equipment. Could mechanical engineering graduates build a water pump to solve water shortages in remote areas? Art graduates can innovate beyond painting; through extensive experimentation, artists can create kinetic sculptures. Young people should learn these applied skills so they can use their potential more creatively. These could be extracurricular activities in high school and university.

5. People have been trying to recycle agricultural waste for a long time. Mycelium (a network of hyphae, like the one that binds tempeh) can be used to make lightweight, though easily crushed, replacements for tiles and bricks. The waste used must contain lignocellulose. Along the same lines, unconventional painting canvases can be made: irregular, with an amoeba-like outline, rather than square or round. The resulting canvas is lightweight and needs no frame. Then what? Open-source your idea, as Precious Plastic did, to build a community. By spreading the idea, you let many knowledgeable people help improve your product's quality and impact.

6. As technology advances and people adapt, fraud increases: fake doctor's notes to fool superiors, fake credentials to get hired, fraudulent land certificates to make money, and fake news (hoaxes). A wiki-style platform, like the Wikimedia projects, could help the community by comparing fake information with the originals.

7. Do you often hit an impasse when solving problems? Since Doraemon's magic pocket hasn't been invented yet, build an Idea Bank, where everyone can contribute to solving problems. How do you recruit volunteers? A reward is obviously needed: contributors could become moderators or receive complimentary tickets to TIA (Tech in Asia) conferences. A related point: many startups founded without a solid foundation are short-lived (in the Indonesian idiom, they last only "as long as corn"). People with startup ideas should describe them in the Idea Bank so other users can validate them. If someone posts a similar idea, others can give feedback to improve the product or merge the ideas. Like-minded users can become Co-Founders.

8. Inspired by digital farming, why not invest in fruits and vegetables? The landowner can grow fruit without spending much of their own money on maintenance. Investors can get fruits and vegetables in larger quantities, fresher, and cheaper at harvest time; produce often spoils if delivery is slow. Wealthy investors without much land can invest in teak, agarwood, and other trees. At harvest, investors could choose between taking the raw timber or the proceeds from selling it. Teak takes at least 7 years to harvest, so long-term timber investments carry the risk of crop failure.

9. Many teenagers in remote areas can't count, read, or write. Many factors hinder their success; the demands of daily life force them to work instead of study. A learning playground may attract young people to learning. For example, build a skatepark at a school, with the rule that skateboarders must attend classes. Donations can pay for the skateboards.

10. Online motorcycle taxis are known worldwide. By booking a motorcycle or car online, people without a vehicle can get around easily. But what if you want to cross to another island or visit a remote area? Could boats or helicopters be rented online the same way? So far, such rentals have been arranged individually and can't be done quickly.

11. What do startups need now? Consultants for startups and investors. Many startups fail to become unicorns. Many founders don't know how to manage investor money, so they waste it on promotions and other things. Many investors know only how to invest and can't guide a struggling company.

“In times of crisis, the wise build bridges, while the foolish build barriers.” — T’Challa [Black Panther]

Don't chase money; money is a byproduct. Chasing profit is stressful. Market needs are where the opportunities lie. If you have something to say, please comment.

This article is for information only. Do further research before implementing any of these ideas.