More on Personal Growth

Datt Panchal

3 years ago

The Learning Habit

The learning habit means constantly learning something new. Build this one daily habit, and it will help you succeed.

Most successful people learn continually. Success requires daily learning.

Success loves books. Books offer expert advice. Everything is online today, including most books, so you can skip the library. Download a book and study it for 15-30 minutes daily. This habit changes your thinking.

Typical Successful People

Warren Buffett reads 500 pages of corporate reports and five newspapers for five to six hours each day.

Each year, Bill Gates reads 50 books.

Every two weeks, Mark Zuckerberg reads at least one book.

According to his brother, Elon Musk studied two books a day as a child and taught himself engineering and rocket design.

Learning & Making Money Online

No worries if you can't afford books. Everything is online. YouTube, free online courses, etc.

How can you create this behavior in yourself?

1) Consider what you want to know

Before you start learning, decide what matters most to you, then proceed accordingly.

2) Set a goal and schedule learning

After deciding what you want to study, create a goal and plan learning time.

3) Gather resources

Get the most out of your learning resources, whether online or offline.

Katrine Tjoelsen

3 years ago

8 Communication Hacks I Use as a Young Employee

Learn these subtle cues to gain influence.

Hate being ignored?

As a 24-year-old, I struggled at work. How could I get attention? How could I avoid being judged by my size, gender, and lack of wrinkles or gray hair?

I've learned hacks for signaling seniority and gaining influence. Within two years as a product manager, I led a team. I'm a Stanford MBA student.

These communication hacks can make you look senior and influential.

1. Speak slowly

We speak quickly because we're afraid of being interrupted.

When I doubt my ideas, I speak quickly. How can we slow down? Jamie Chapman says speaking slowly saps our energy.

Chapman suggests emphasizing certain words and pausing.

2. Interrupted? Stop the stopper

Did someone interrupt you?

Don't wait. Say "May I finish?" right away, without pausing, and stop the interruption. I first tried this in Leadership Laboratory at Stanford. How quickly I gained influence amazed me.

Next time, try “May I finish?” If that’s not enough, try these other tips from Wendy R.S. O’Connor.

3. Context

Others don't always see what's obvious to you.

By explaining the context, you help others see the big picture. Even if a senior colleague already knows it, you help them see where your work fits.

4. Don't ask questions in statements

“Your statement lost its effect when you ended it on a high pitch,” a group member told me. Upspeak, it’s called. I do it when I feel uncertain.

Upspeak costs you influence and credibility, and it's unnecessary. When unsure, we can say "I think." We can even ask a proper question.

Someone else's upspeak is no reason to be dismissive. As leaders and colleagues, we should listen to our colleagues even if they use this speech pattern.

Give your words impact.

5. Signpost structure

Signposts improve clarity by providing structure and transitions.

Communication coach Alexander Lyon explains how to use "first," "second," and "third." He also explains classic and summary transitions that help the listener switch topics.

Signposts clarify. Clarity matters.

6. Eliminate email fluff

“Fine. When will the report be ready? — Jeff.”

Notice how senior leaders write short, direct emails? I often use formalities like "dear," "hope you're well," and "kind regards."

Formality is (usually) unnecessary.

7. Replace exclamation marks with periods

See how junior an exclamation-filled email looks:

Hi, all!

Hope you’re as excited as I am for tomorrow! We’re celebrating our accomplishments with cake! Join us tomorrow at 2 pm!

See you soon!

Why the exclamation points? Why not just one?

Hi, all.

Hope you’re as excited as I am for tomorrow. We’re celebrating our accomplishments with cake. Join us tomorrow at 2 pm!

See you soon.

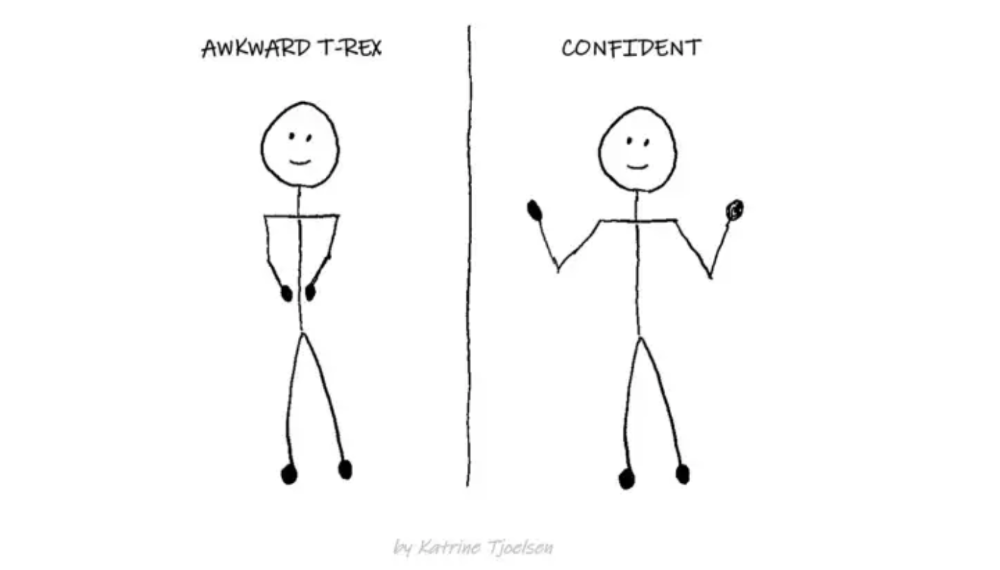

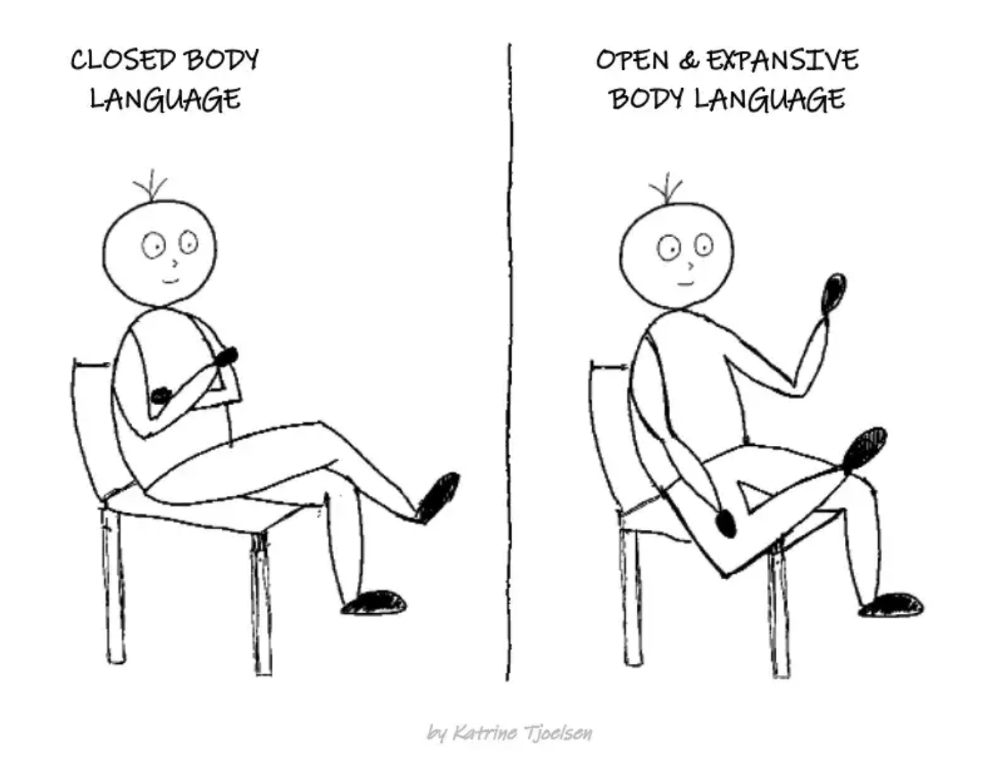

8. Take space

"Playing high" means having an open, relaxed body, says Stanford professor and author Deborah Gruenfeld.

Crossed legs or looking small? Relax. Get bigger.

Alex Mathers

3 years ago

12 habits of the zenith individuals I know

Calmness is a vital life skill.

It aids communication. It boosts creativity and performance.

I've studied calm people's habits for years. Commonalities:

Have mastered the art of self-humor.

Protectors take their job seriously, draining the room's energy.

They are fixated on positive pursuits like making cool things, building a strong physique, and having fun with others rather than on depressing influences like the news and gossip.

Every day, spend at least 20 minutes moving, whether it's walking, yoga, or lifting weights.

Discover ways to take pleasure in life's challenges.

Since perspective is malleable, they change their view.

Set your own needs first.

Stressed people neglect themselves and wonder why they struggle.

Prioritize self-care.

Don't ruin your life to please others.

Make something.

Calm people create more than react.

They love creating beautiful things—paintings, children, relationships, and projects.

Don’t hold their breath.

If you're stressed or angry, you may be surprised how much time you spend holding your breath and tightening your belly.

Release, breathe, and relax to find calm.

Stopped rushing.

Rushing is disadvantageous.

Calm people handle life better.

Are aware of their own dietary requirements.

They avoid junk food and eat foods that keep them healthy, happy, and calm.

Don’t take anything personally.

Stressed people try to control everything and are self-conscious.

Calm people put others and their work first.

Keep their surroundings neat.

Maintaining an uplifting and clutter-free environment daily calms the mind.

Minimise negative people.

Calm people are ruthless with their boundaries and avoid negative and drama-prone people.

You might also like

Sneaker News

3 years ago

This Month Will See The Release Of Travis Scott x Nike Footwear

Following the catastrophe at Astroworld, Travis Scott was swiftly vilified by media outlets and fans alike, and the brands that had previously supported him quickly abandoned him. Nike, on the other hand, remained silent, only delaying the release of La Flame's planned collaborations, such as the Air Max 1 and Air Trainer 1, indefinitely. While some may believe it is too soon for the artist to return to the spotlight, the Swoosh has other ideas, as Nice Kicks reveals that these exact sneakers will be released in May.

Both the Travis Scott x Nike Air Max 1 and the Travis Scott x Nike Air Trainer 1 are set to come in two colorways this month. Tinker Hatfield's renowned runner will meet La Flame's "Baroque Brown" and "Saturn Gold" make-ups, which have been altered with backwards Swooshes and outdoors-themed webbing. The high-top trainer is being customized with Hatfield's "Wheat" and "Grey Haze" palettes, both of which include zippers across the heel, co-branded patches, and other details.

See below for a closer look at the four shoes. TravisScott.com is expected to release the shoes on May 20th, according to Nice Kicks. Following that, on May 27th, Nike SNKRS will release them.

Travis Scott x Nike Air Max 1 "Baroque Brown"

Release Date: 2022

Color: Baroque Brown/Lemon Drop/Wheat/Chile Red

Mens: $160

Style Code: DO9392-200

Pre-School: $85

Style Code: DN4169-200

Infant & Toddler: $70

Style Code: DN4170-200

Travis Scott x Nike Air Max 1 "Saturn Gold"

Release Date: 2022

Color: N/A

Mens: $160

Style Code: DO9392-700

Travis Scott x Nike Air Trainer 1 "Wheat"

Restock Date: May 27th, 2022 (Friday)

Original Release Date: May 20th, 2022 (Friday)

Color: N/A

Mens: $140

Style Code: DR7515-200

Travis Scott x Nike Air Trainer 1 "Grey Haze"

Restock Date: May 27th, 2022 (Friday)

Original Release Date: May 20th, 2022 (Friday)

Color: N/A

Mens: $140

Style Code: DR7515-001

Sam Hickmann

3 years ago

Nomad.xyz got exploited for $190M

Key Takeaways:

Another hack. This time was different. This is a doozy.

Why? Nomad got exploited for $190m. It was crypto's 5th-biggest hack. Ouch.

It wasn't hackers, but random folks. What happened:

A Nomad smart contract flaw was discovered. They couldn't drain the funds at once, so they tried numerous transactions. Rookie!

People noticed and copied the attack.

They just needed to discover a working transaction, substitute the other person's address with theirs, and run it.

In a two-and-a-half-hour attack, $190M was siphoned from Nomad Bridge.

Nomad is a novel approach to blockchain interoperability that leverages an optimistic mechanism to increase the security of cross-chain communication. — nomad.xyz

This hack was permissionless, therefore anyone could participate.

After the fatal blow, people fought over the scraps.

Cross-chain bridges remain a DeFi weakness and exploit target. When they collapse, it's typically total.

$190M...gobbled.

Unbacked assets are hurting Nomad-dependent chains. Moonbeam, EVMOS, and Milkomeda's TVLs dropped.

This incident is every-man-for-himself, although numerous whitehats exploited the issue...

But what triggered the feeding frenzy?

How did so many pick the bones?

After a normal upgrade in June, the bridge's Replica contract was initialized with a severe security issue. The 0x00 address was a trusted root, therefore all messages were valid by default.

After a botched first attempt (costing $350k in gas), the original attacker's exploit tx called process() without first 'proving' its validity.

The process() function executes all cross-chain messages and checks the merkle root of all messages (line 185).

The upgrade caused transactions with a 'messages' value of 0 (invalid, according to the old logic) to be read by default as 0x00, a trusted root, so they passed validation as 'proven'.

As a result, any process() call was valid. In fact, a more sophisticated exploiter could have designed a contract to drain the whole bridge.

Copycat attackers simply copied/pasted the same process() function call using Etherscan, substituting their address.
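The flaw described above can be illustrated with a toy model. This is only a sketch in Python, not the Nomad contract: the class `Replica`, its fields, the use of SHA-256 as a stand-in hash, and the message strings are all assumptions made for illustration. It shows how an unset lookup that defaults to zero, combined with a trusted zero root, lets unproven messages pass, and why a copycat only had to swap in a new address.

```python
import hashlib

ZERO_ROOT = b"\x00" * 32  # default value of an unset entry


class Replica:
    """Toy model of the flawed check; hypothetical, not the real contract."""

    def __init__(self, trusted_root: bytes):
        # Roots the bridge accepts as confirmed.
        self.confirmed_roots = {trusted_root}
        # Message hash -> root the message was proven against.
        self.messages: dict[bytes, bytes] = {}
        self.processed: set[bytes] = set()

    def prove(self, message: bytes, root: bytes) -> None:
        # A real bridge would verify a Merkle proof here; omitted in this sketch.
        self.messages[hashlib.sha256(message).digest()] = root

    def process(self, message: bytes) -> bool:
        msg_hash = hashlib.sha256(message).digest()
        if msg_hash in self.processed:
            return False  # each message can only be processed once
        # An unproven message falls back to the zero root, like an unset mapping.
        root = self.messages.get(msg_hash, ZERO_ROOT)
        if root not in self.confirmed_roots:
            return False
        self.processed.add(msg_hash)
        return True


# Buggy initialization: the zero root is trusted.
buggy = Replica(trusted_root=ZERO_ROOT)
# Safer initialization: only a non-zero root is trusted.
safe = Replica(trusted_root=hashlib.sha256(b"genuine root").digest())

forged = b"send 100 WBTC to 0xAttacker"
copycat = forged.replace(b"0xAttacker", b"0xCopycat")

print(buggy.process(forged))   # True: never proven, yet accepted
print(buggy.process(copycat))  # True: same call, different address
print(safe.process(forged))    # False: the zero root is not trusted
```

In this model the only difference between the drained bridge and the safe one is the root passed at initialization, which matches the article's point that the upgrade, not the process() logic alone, opened the door.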

The incident was a wild combination of crowdhacking, whitehat activities, and MEV-bot (Maximal Extractable Value) mayhem.

For example, 🍉🍉🍉.eth took $4M from the bridge, but claims to be a whitehat.

Others stood out for the wrong reasons. A repeat offender, the Rari Capital (Arbitrum) exploiter, took over $3M in stablecoins, which were moved to Tornado Cash.

The top three exploiters (with $95M between them) are:

$47M: 0x56D8B635A7C88Fd1104D23d632AF40c1C3Aac4e3

$40M: 0xBF293D5138a2a1BA407B43672643434C43827179

$8M: 0xB5C55f76f90Cc528B2609109Ca14d8d84593590E

Here's a list of all the exploiters:

The project conducted a Quantstamp audit in June; QSP-19 foreshadowed a similar problem.

The auditor's comments that "We feel the Nomad team misinterpreted the issue" speak to a troubling attitude towards security that the project's "Long-Term Security" plan appears to confirm:

Concerns were raised about the team's response time to a live, public exploit; the team's official acknowledgement came three hours later.

"Removing the Replica contract as owner" stopped the exploit, but it was too late to save the funds.

Closed blockchain systems are only as strong as their weakest link.

The Harmony network is in turmoil after its bridge was attacked and lost $100M in late June.

What's next for Nomad's ecosystems?

Moonbeam's TVL is now $135M, EVMOS's is $3M, and Milkomeda's is $20M.

Loss of confidence may do more damage than $190M.

Cross-chain infrastructure is difficult to secure in a new, experimental sector. Bridge attacks can pollute an entire ecosystem or more.

Nomadic liquidity has no permanent home, so consumers will always migrate in pursuit of the "next big thing" and get stung when attentiveness wanes.

DeFi still has easy prey...

Sources: rekt.news & The Milk Road.

Kaitlin Fritz

3 years ago

The Entrepreneurial Chicken and Egg

University entrepreneurship is like a Willy Wonka Factory of ideas. Classes, roommates, discussions, and the cafeteria all inspire new ideas. I've seen people establish a business without knowing its roots.

Chicken or egg? With that question on my mind, I've asked university founders around the world whether the problem or the solution came first.

The Problem

One African team I met started with the “instant noodles” problem in their academic ecosystem. Many of us have had money issues in college, which may have led to poor nutritional choices.

Many university students in a war-torn country ate quick noodles or pasta for dinner.

Noodles required heat, water, and preparation in the boarding house. Power was unreliable, and the single hot plate worked once in a blue moon. What's healthier, easier, and tastier than sodium-filled instant pots?

BOOM. They were fixing that. East African kids need affordable, nutritious food.

This is a real difficulty the founders faced every day with hundreds of comrades.

This sparked their serendipitous entrepreneurial journey and became their business's cornerstone.

The Solution

I asked a UK team about their company idea. They said the solution fascinated them.

The crew was fiddling with social media algorithms. Why are some people more popular? They were studying platforms and social networks, which opened a path for them.

Were they solving a problem? Yes. Long nights of university research led them to it. Is it on the scale of world hunger? No, but social media influencers confront this difficulty regularly.

It made me ponder something. Is there a correct response?

In my heart, yes, but in my head…maybe?

I believe you should lead with empathy and embrace the problem, not the solution. Big or small, businesses should solve problems. This should be your focus. This is especially true when building a social company with an audience in mind.

Philosophically, invention and innovation are occasionally accidental. That shouldn't be penalized either. Think about burrs and the creation of Velcro, or the inception of Teflon. They tackle difficulties we overlook. The route to the problem may look different, but there is a path there.

There's no golden ticket to the Chicken-Egg debate, but I'll keep looking this summer.