More on Entrepreneurship/Creators

Keagan Stokoe

3 years ago

Generalists Create Startups; Specialists Scale Them

There’s a funny part of ‘Steve Jobs’ by Walter Isaacson where Jobs says that Bill Gates was more a copier than an innovator:

“Bill is basically unimaginative and has never invented anything, which is why I think he’s more comfortable now in philanthropy than technology. He just shamelessly ripped off other people’s ideas….He’d be a broader guy if he had dropped acid once or gone off to an ashram when he was younger.”

Gates lacked flavor. Nobody ever got excited about a Microsoft launch, despite their good products. Jobs had the world's best product taste. Apple vs. Microsoft.

A CEO's core job functions are all driven by taste: recruiting, vision, and company culture all require good taste. Depending on the type of company you want to build, know where you stand between Microsoft and Apple.

How can you improve your product judgment? How to acquire taste?

Test and refine

Product development follows two parallel paths: the ‘customer obsession’ path and the ‘taste and iterate’ path.

The customer obsession path involves solving customer problems. Lean Startup frameworks show you what to build at each step.

Taste-and-iterate doesn't involve the customer. You iterate internally and rely on product leaders' taste and judgment.

Creative Selection by Ken Kocienda explains this method. In Creative Selection, demos are iterated and presented to product leaders. Your boss presents to their boss, and so on up to Steve Jobs. If you have good product taste, you can be a panelist.

The iPhone follows this path. Before seeing an iPhone, consumers couldn't want one. Customer obsession wouldn't have gotten you far because iPhone buyers didn't know they wanted one.

In The Hard Thing About Hard Things, Ben Horowitz writes:

“It turns out that is exactly what product strategy is all about — figuring out the right product is the innovator’s job, not the customer’s job. The customer only knows what she thinks she wants based on her experience with the current product. The innovator can take into account everything that’s possible, but often must go against what she knows to be true. As a result, innovation requires a combination of knowledge, skill, and courage.“

One path solves a problem the customer knows they have, and the other doesn't. Instead of asking a person what they want, observe them and give them something they didn't know they needed.

It's much harder. Apple is the world's most valuable company because it's more valuable. It changes industries permanently.

If you want to build superior products, use the iPhone of your industry.

How to Improve Your Taste

I. Work for a company that has taste.

People with the best taste in products, markets, and people are rewarded for building great companies. Tasteful people know quality even when they can't describe it. Taste isn't writable. It's feel-based.

Moving into a community that's already doing what you want to do may be the best way to develop entrepreneurial taste. Most company-building knowledge is tacit.

Joining a company you want to emulate allows you to learn its inner workings. It reveals internal patterns intuitively. Many successful founders come from successful companies.

Consumption determines taste. Excellence will refine you. This is why restauranteurs visit the world's best restaurants and serious painters visit Paris or New York. Joining a company with good taste is beneficial.

2. Possess a wide range of interests

“Edwin Land of Polaroid talked about the intersection of the humanities and science. I like that intersection. There’s something magical about that place… The reason Apple resonates with people is that there’s a deep current of humanity in our innovation. I think great artists and great engineers are similar, in that they both have a desire to express themselves.” — Steve Jobs

I recently discovered Edwin Land. Jobs modeled much of his career after Land's. It makes sense that Apple was inspired by Land.

A Triumph of Genius: Edwin Land, Polaroid, and the Kodak Patent War notes:

“Land was introverted in person, but supremely confident when he came to his ideas… Alongside his scientific passions, lay knowledge of art, music, and literature. He was a cultured person growing even more so as he got older, and his interests filtered into the ethos of Polaroid.”

Founders' philosophies shape companies. Jobs and Land were invested. It showed in the products their companies made. Different. His obsession was spreading Microsoft software worldwide. Microsoft's success is why their products are bland and boring.

Experience is important. It's probably why startups are built by generalists and scaled by specialists.

Jobs combined design, typography, storytelling, and product taste at Apple. Some of the best original Mac developers were poets and musicians. Edwin Land liked broad-minded people, according to his biography. Physicist-musicians or physicist-photographers.

Da Vinci was a master of art, engineering, architecture, anatomy, and more. He wrote and drew at the same desk. His genius is remembered centuries after his death. Da Vinci's statue would stand at the intersection of humanities and science.

We find incredibly creative people here. Superhumans. Designers, creators, and world-improvers. These are the people we need to navigate technology and lead world-changing companies. Generalists lead.

Thomas Tcheudjio

3 years ago

If you don't crush these 3 metrics, skip the Series A.

I recently wrote about getting VCs excited about Marketplace start-ups. SaaS founders became envious!

Understanding how people wire tens of millions is the only Series A hack I recommend.

Few people understand the intellectual process behind investing.

VC is risk management.

Series A-focused VCs must cover two risks.

1. Market risk

You need a large market to cross a threshold beyond which you can build defensibilities. Series A VCs underwrite market risk.

They must see you have reached product-market fit (PMF) in a large total addressable market (TAM).

2. Execution risk

When evaluating your growth engine's blitzscaling ability, execution risk arises.

When investors remove operational uncertainty, they profit.

Series A VCs like businesses with derisked revenue streams. Don't raise unless you have a predictable model, pipeline, and growth.

Please beat these 3 metrics before Series A:

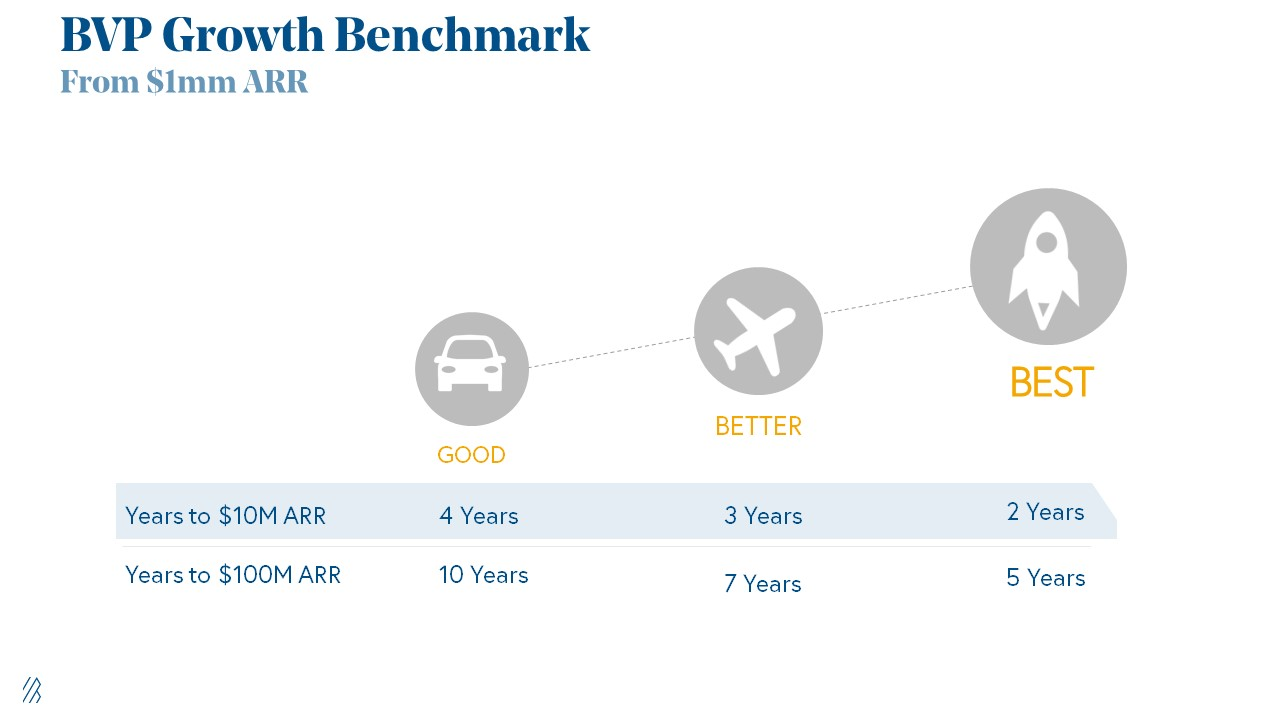

Achieve $1.5m ARR in 12-24 months (Market risk)

Above 100% Net Dollar Retention. (Market danger)

Lead Velocity Rate supporting $10m ARR in 2–4 years (Execution risk)

Hit the 3 and you'll raise $10M in 4 months. Discussing 2/3 may take 6–7 months.

If none, don't bother raising and focus on becoming a capital-efficient business (Topics for other posts).

Let's examine these 3 metrics for the brave ones.

1. Lead Velocity Rate supporting €$10m ARR in 2 to 4 years

Last because it's the least discussed. LVR is the most reliable data when evaluating a growth engine, in my opinion.

SaaS allows you to see the future.

Monthly Sales and Sales Pipelines, two predictive KPIs, have poor data quality. Both are lagging indicators, and minor changes can cause huge modeling differences.

Analysts and Associates will trash your forecasts if they're based only on Monthly Sales and Sales Pipeline.

LVR, defined as month-over-month growth in qualified leads, is rock-solid. There's no lag. You can See The Future if you use Qualified Leads and a consistent formula and process to qualify them.

With this metric in your hand, scaling your company turns into an execution play on which VCs are able to perform calculations risk.

2. Above-100% Net Dollar Retention.

Net Dollar Retention is a better-known SaaS health metric than LVR.

Net Dollar Retention measures a SaaS company's ability to retain and upsell customers. Ask what $1 of net new customer spend will be worth in years n+1, n+2, etc.

Depending on the business model, SaaS businesses can increase their share of customers' wallets by increasing users, selling them more products in SaaS-enabled marketplaces, other add-ons, and renewing them at higher price tiers.

If a SaaS company's annualized Net Dollar Retention is less than 75%, there's a problem with the business.

Slack's ARR chart (below) shows how powerful Net Retention is. Layer chart shows how existing customer revenue grows. Slack's S1 shows 171% Net Dollar Retention for 2017–2019.

Slack S-1

3. $1.5m ARR in the last 12-24 months.

According to Point 9, $0.5m-4m in ARR is needed to raise a $5–12m Series A round.

Target at least what you raised in Pre-Seed/Seed. If you've raised $1.5m since launch, don't raise before $1.5m ARR.

Capital efficiency has returned since Covid19. After raising $2m since inception, it's harder to raise $1m in ARR.

P9's 2016-2021 SaaS Funding Napkin

In summary, less than 1% of companies VCs meet get funded. These metrics can help you win.

If there’s demand for it, I’ll do one on direct-to-consumer.

Cheers!

Antonio Neto

3 years ago

Should you skip the minimum viable product?

Are MVPs outdated and have no place in modern product culture?

Frank Robinson coined "MVP" in 2001. In the same year as the Agile Manifesto, the first Scrum experiment began. MVPs are old.

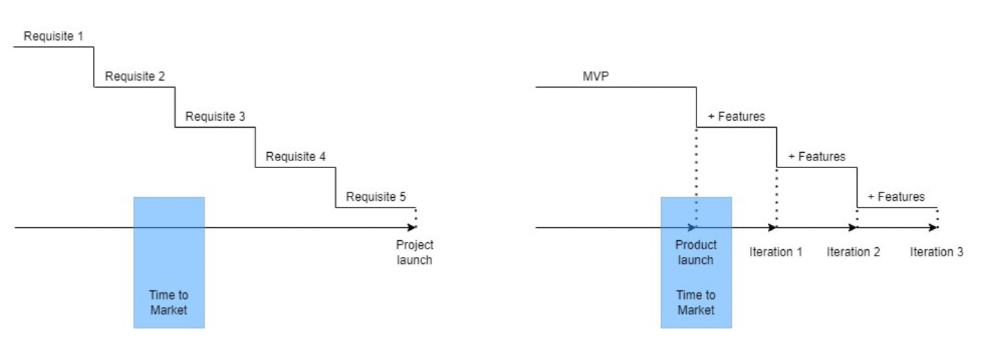

The concept was created to solve the waterfall problem at the time.

The market was still sour from the .com bubble. The tech industry needed a new approach. Product and Agile gained popularity because they weren't waterfall.

More than 20 years later, waterfall is dead as dead can be, but we are still talking about MVPs. Does that make sense?

What is an MVP?

Minimum viable product. You probably know that, so I'll be brief:

[…] The MVP fits your company and customer. It's big enough to cause adoption, satisfaction, and sales, but not bloated and risky. It's the product with the highest ROI/risk. […] — Frank Robinson, SyncDev

MVP is a complete product. It's not a prototype. It's your product's first iteration, which you'll improve. It must drive sales and be user-friendly.

At the MVP stage, you should know your product's core value, audience, and price. We are way deep into early adoption territory.

What about all the things that come before?

Modern product discovery

Eric Ries popularized the term with The Lean Startup in 2011. (Ries would work with the concept since 2008, but wide adoption came after the book was released).

Ries' definition of MVP was similar to Robinson's: "Test the market" before releasing anything. Ries never mentioned money, unlike Jobs. His MVP's goal was learning.

“Remove any feature, process, or effort that doesn't directly contribute to learning” — Eric Ries, The Lean Startup

Product has since become more about "what" to build than building it. What started as a learning tool is now a discovery discipline: fake doors, prototyping, lean inception, value proposition canvas, continuous interview, opportunity tree... These are cheap, effective learning tools.

Over time, companies realized that "maximum ROI divided by risk" started with discovery, not the MVP. MVPs are still considered discovery tools. What is the problem with that?

Time to Market vs Product Market Fit

Waterfall's Time to Market is its biggest flaw. Since projects are sliced horizontally rather than vertically, when there is nothing else to be done, it’s not because the product is ready, it’s because no one cares to buy it anymore.

MVPs were originally conceived as a way to cut corners and speed Time to Market by delivering more customer requests after they paid.

Original product development was waterfall-like.

Time to Market defines an optimal, specific window in which value should be delivered. It's impossible to predict how long or how often this window will be open.

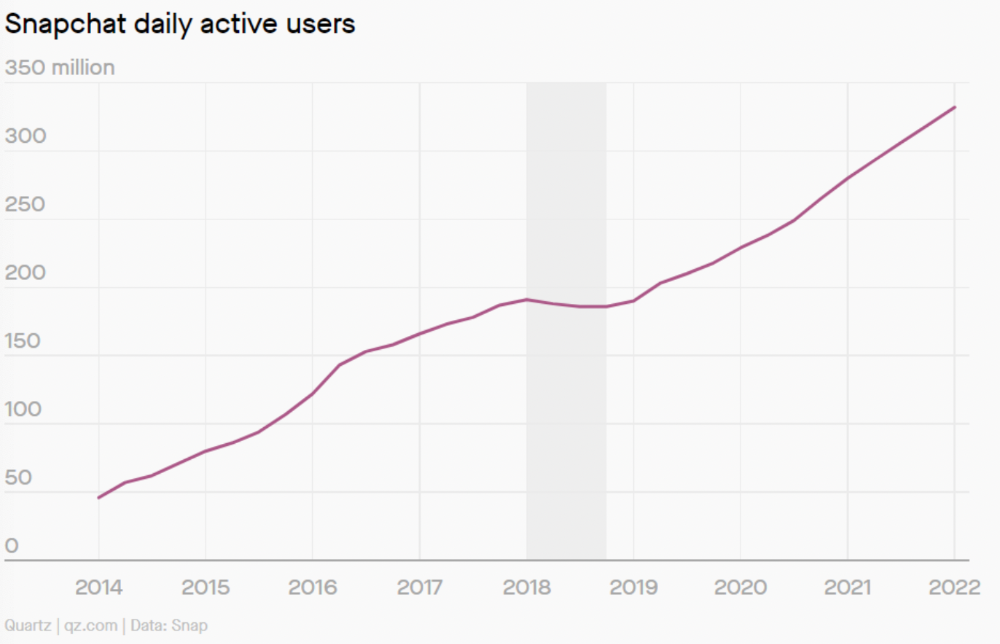

Product Market Fit makes this window a "state." You don’t achieve Product Market Fit, you have it… and you may lose it.

Take, for example, Snapchat. They had a great time to market, but lost product-market fit later. They regained product-market fit in 2018 and have grown since.

An MVP couldn't handle this. What should Snapchat do? Launch Snapchat 2 and see what the market was expecting differently from the last time? MVPs are a snapshot in time that may be wrong in two weeks.

MVPs are mini-projects. Instead of spending a lot of time and money on waterfall, you spend less but are still unsure of the results.

MVPs aren't always wrong. When releasing your first product version, consider an MVP.

Minimum viable product became less of a thing on its own and more interchangeable with Alpha Release or V.1 release over time.

Modern discovery technics are more assertive and predictable than the MVP, but clarity comes only when you reach the market.

MVPs aren't the starting point, but they're the best way to validate your product concept.

You might also like

Aniket

3 years ago

Yahoo could have purchased Google for $1 billion

Let's see this once-dominant IT corporation crumble.

What's the capital of Kazakhstan? If you don't know the answer, you can probably find it by Googling. Google Search returned results for Nur-Sultan in 0.66 seconds.

Google is the best search engine I've ever used. Did you know another search engine ruled the Internet? I'm sure you guessed Yahoo!

Google's friendly UI and wide selection of services make it my top choice. Let's explore Yahoo's decline.

Yahoo!

YAHOO stands for Yet Another Hierarchically Organized Oracle. Jerry Yang and David Filo established Yahoo.

Yahoo is primarily a search engine and email provider. It offers News and an advertising platform. It was a popular website in 1995 that let people search the Internet directly. Yahoo began offering free email in 1997 by acquiring RocketMail.

According to a study, Yahoo used Google Search Engine technology until 2000 and then developed its own in 2004.

Yahoo! rejected buying Google for $1 billion

Larry Page and Sergey Brin, Google's founders, approached Yahoo in 1998 to sell Google for $1 billion so they could focus on their studies. Yahoo denied the offer, thinking it was overvalued at the time.

Yahoo realized its error and offered Google $3 billion in 2002, but Google demanded $5 billion since it was more valuable. Yahoo thought $5 billion was overpriced for the existing market.

In 2022, Google is worth $1.56 Trillion.

What happened to Yahoo!

Yahoo refused to buy Google, and Google's valuation rose, making a purchase unfeasible.

Yahoo started losing users when Google launched Gmail. Google's UI was far cleaner than Yahoo's.

Yahoo offered $1 billion to buy Facebook in July 2006, but Zuckerberg and the board sought $1.1 billion. Yahoo rejected, and Facebook's valuation rose, making it difficult to buy.

Yahoo was losing users daily while Google and Facebook gained many. Google and Facebook's popularity soared. Yahoo lost value daily.

Microsoft offered $45 billion to buy Yahoo in February 2008, but Yahoo declined. Microsoft increased its bid to $47 billion after Yahoo said it was too low, but Yahoo rejected it. Then Microsoft rejected Yahoo’s 10% bid increase in May 2008.

In 2015, Verizon bought Yahoo for $4.5 billion, and Apollo Global Management bought 90% of Yahoo's shares for $5 billion in May 2021. Verizon kept 10%.

Yahoo's opportunity to acquire Google and Facebook could have been a turning moment. It declined Microsoft's $45 billion deal in 2008 and was sold to Verizon for $4.5 billion in 2015. Poor decisions and lack of vision caused its downfall. Yahoo's aim wasn't obvious and it didn't stick to a single domain.

Hence, a corporation needs a clear vision and a leader who can see its future.

Liked this article? Join my tech and programming newsletter here.

Yusuf Ibrahim

4 years ago

How to sell 10,000 NFTs on OpenSea for FREE (Puppeteer/NodeJS)

So you've finished your NFT collection and are ready to sell it. Except you can't figure out how to mint them! Not sure about smart contracts or want to avoid rising gas prices. You've tried and failed with apps like Mini mouse macro, and you're not familiar with Selenium/Python. Worry no more, NodeJS and Puppeteer have arrived!

Learn how to automatically post and sell all 1000 of my AI-generated word NFTs (Nakahana) on OpenSea for FREE!

My NFT project — Nakahana |

NOTE: Only NFTs on the Polygon blockchain can be sold for free; Ethereum requires an initiation charge. NFTs can still be bought with (wrapped) ETH.

If you want to go right into the code, here's the GitHub link: https://github.com/Yusu-f/nftuploader

Let's start with the knowledge and tools you'll need.

What you should know

You must be able to write and run simple NodeJS programs. You must also know how to utilize a Metamask wallet.

Tools needed

- NodeJS. You'll need NodeJs to run the script and NPM to install the dependencies.

- Puppeteer – Use Puppeteer to automate your browser and go to sleep while your computer works.

- Metamask – Create a crypto wallet and sign transactions using Metamask (free). You may learn how to utilize Metamask here.

- Chrome – Puppeteer supports Chrome.

Let's get started now!

Starting Out

Clone Github Repo to your local machine. Make sure that NodeJS, Chrome, and Metamask are all installed and working. Navigate to the project folder and execute npm install. This installs all requirements.

Replace the “extension path” variable with the Metamask chrome extension path. Read this tutorial to find the path.

Substitute an array containing your NFT names and metadata for the “arr” variable and the “collection_name” variable with your collection’s name.

Run the script.

After that, run node nftuploader.js.

Open a new chrome instance (not chromium) and Metamask in it. Import your Opensea wallet using your Secret Recovery Phrase or create a new one and link it. The script will be unable to continue after this but don’t worry, it’s all part of the plan.

Next steps

Open your terminal again and copy the route that starts with “ws”, e.g. “ws:/localhost:53634/devtools/browser/c07cb303-c84d-430d-af06-dd599cf2a94f”. Replace the path in the connect function of the nftuploader.js script.

const browser = await puppeteer.connect({ browserWSEndpoint: "ws://localhost:58533/devtools/browser/d09307b4-7a75-40f6-8dff-07a71bfff9b3", defaultViewport: null });

Rerun node nftuploader.js. A second tab should open in THE SAME chrome instance, navigating to your Opensea collection. Your NFTs should now start uploading one after the other! If any errors occur, the NFTs and errors are logged in an errors.log file.

Error Handling

The errors.log file should show the name of the NFTs and the error type. The script has been changed to allow you to simply check if an NFT has already been posted. Simply set the “searchBeforeUpload” setting to true.

We're done!

If you liked it, you can buy one of my NFTs! If you have any concerns or would need a feature added, please let me know.

Thank you to everyone who has read and liked. I never expected it to be so popular.

Nikhil Vemu

3 years ago

7 Mac Tips You Never Knew You Needed

Unleash the power of the Option key ⌥

#1 Open a link in the Private tab first.

Previously, if I needed to open a Safari link in a private window, I would:

copied the URL with the right click command,

choose File > New Private Window to open a private window, and

clicked return after pasting the URL.

I've found a more straightforward way.

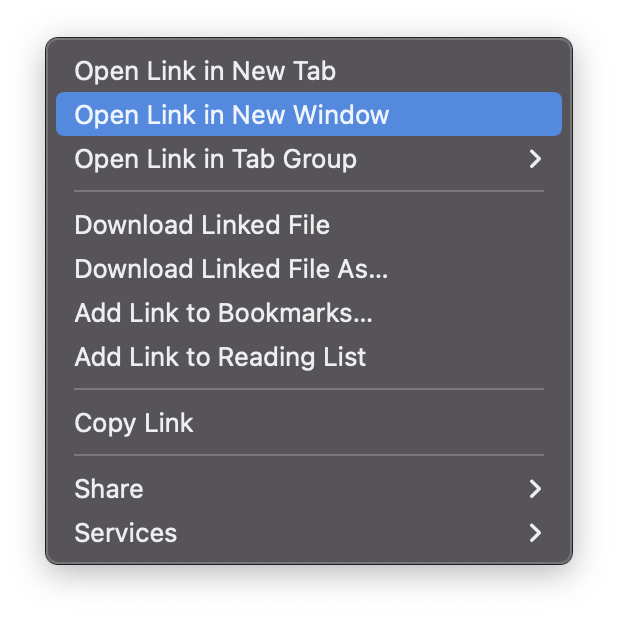

Right-clicking a link shows this, right?

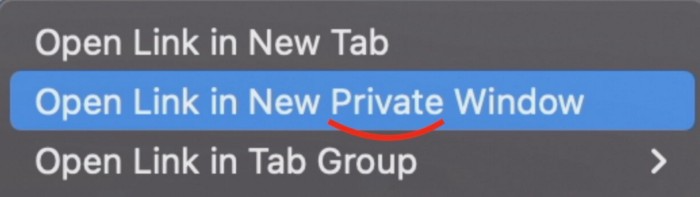

Hold option (⌥) for:

Click Open Link in New Private Window while holding.

Finished!

#2. Instead of searching for specific characters, try this

You may use unicode for business or school. Most people Google them when they need them.

That is lengthy!

You can type some special characters just by pressing ⌥ and a key.

For instance

• ⌥+2 -> ™ (Trademark)

• ⌥+0 -> ° (Degree)

• ⌥+G -> © (Copyright)

• ⌥+= -> ≠ (Not equal to)

• ⌥+< -> ≤ (Less than or equal to)

• ⌥+> -> ≥ (Greater then or equal to)

• ⌥+/ -> ÷ (Different symbol for division)#3 Activate Do Not Disturb silently.

Do Not Disturb when sharing my screen is awkward for me (because people may think Im trying to hide some secret notifications).

Here's another method.

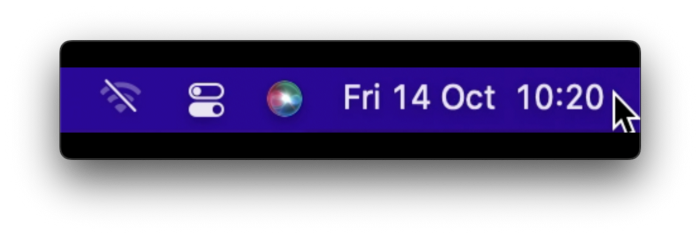

Hold ⌥ and click on Time (at the extreme right on the menu-bar).

Now, DND is activated (secretly!). To turn it off, do it again.

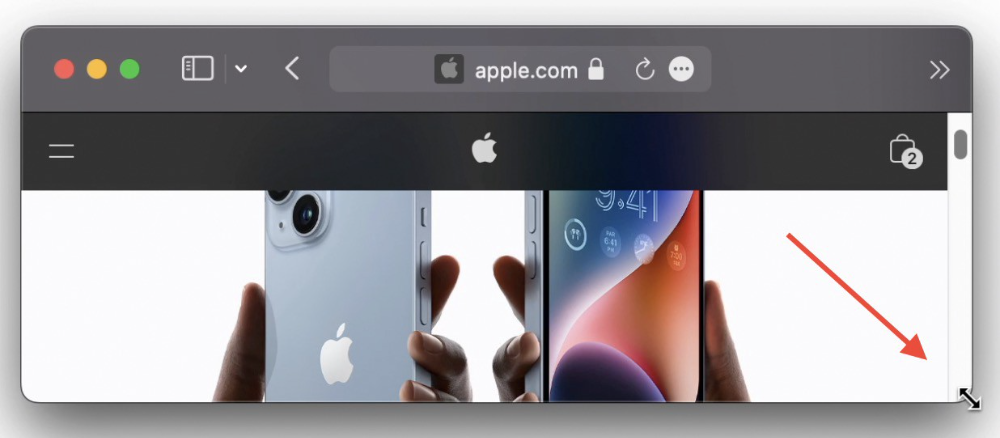

Note: This works only for DND focus.#4. Resize a window starting from its center

Although this is rarely useful, it is still a hidden trick.

When you resize a window, the opposite edge or corner is used as the pivot, right?

However, if you want to resize it with its center as the pivot, hold while doing so.

#5. Yes, Cut-Paste is available on Macs as well (though it is slightly different).

I call it copy-move rather than cut-paste. This is how it works.

Carry it out.

Choose a file (by clicking on it), then copy it (⌘+C).

Go to a new location on your Mac. Do you use ⌘+V to paste it? However, to move it, press ⌘+⌥+V.

This removes the file from its original location and copies it here. And it works exactly like cut-and-paste on Windows.

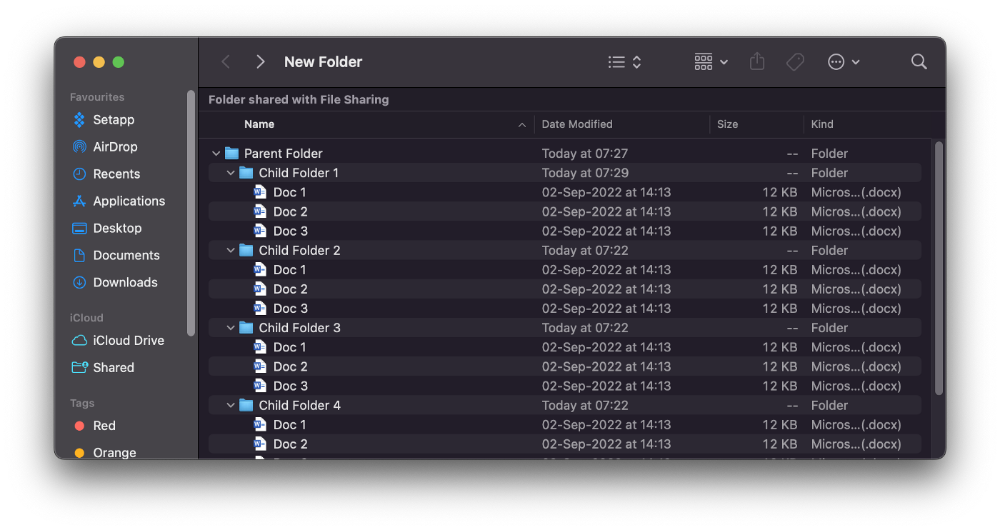

#6. Instantly expand all folders

Set your Mac's folders to List view.

Assume you have one folder with multiple subfolders, each of which contains multiple files. And you wanted to look at every single file that was over there.

How would you do?

You're used to clicking the ⌄ glyph near the folder and each subfolder to expand them all, right? Instead, hold down ⌥ while clicking ⌄ on the parent folder.

This is what happens next.

Everything expands.

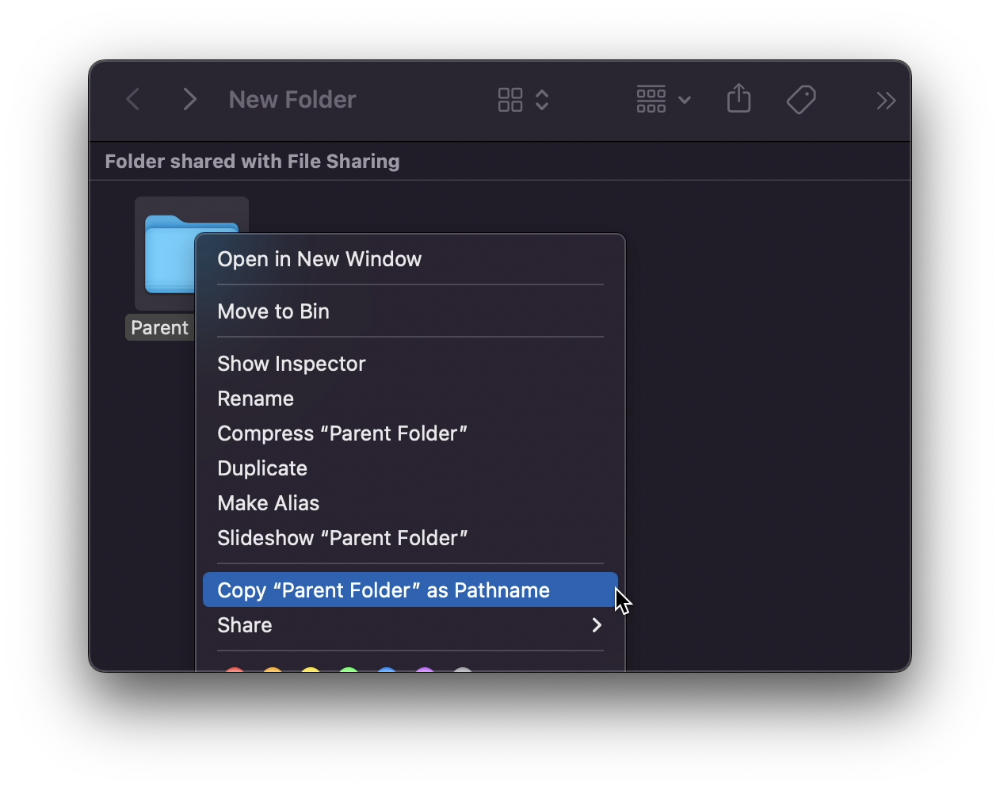

View/Copy a file's path as an added bonus

If you want to see the path of a file in Finder, select it and hold ⌥, and you'll see it at the bottom for a moment.

To copy its path, right-click on the folder and hold down ⌥ to see this

Click on Copy <"folder name"> as Pathname to do it.

#7 "Save As"

I was irritated by the lack of "Save As" in Pages when I first got a Mac (after 15 years of being a Windows guy).

It was necessary for me to save the file as a new file, in a different location, with a different name, or both.

Unfortunately, I couldn't do it on a Mac.

However, I recently discovered that it appears when you hold ⌥ when in the File menu.

Yay!