More on Web3 & Crypto

Jayden Levitt

3 years ago

The country of El Salvador's Bitcoin-obsessed president lost $61.6 million.

It’s only a loss if you sell, right?

Nayib Bukele proclaimed himself “the world’s coolest dictator”.

It isn't clear whether he's joking.

El Salvador's 43rd president, the self-proclaimed “CEO of El Salvador”, couldn't be less presidential.

In his skinny jeans, aviator sunglasses, and baseball caps, he looks like a cartel lord.

He's popular, though.

Bukele won 53% of the vote by fighting violent crime and opposition party corruption.

El Salvador's 6.4 million inhabitants are riding the cryptocurrency volatility wave.

They were powerless.

Their autocratic leader, a former Yamaha Motors salesperson and Bitcoin believer, wants to help the 70% of locals who are unbanked.

He intended to give citizens a way to save money and to cut the country's $200 million in remittance costs, meaning transfer and deposit fees.

This makes logical sense when the president’s theatrics don’t blind you.

El Salvador's Bukele revealed plans to make bitcoin legal tender.

Remittances total $5.9 billion (23%) of the country's expenses.

Anything that reduces costs could boost the economy.

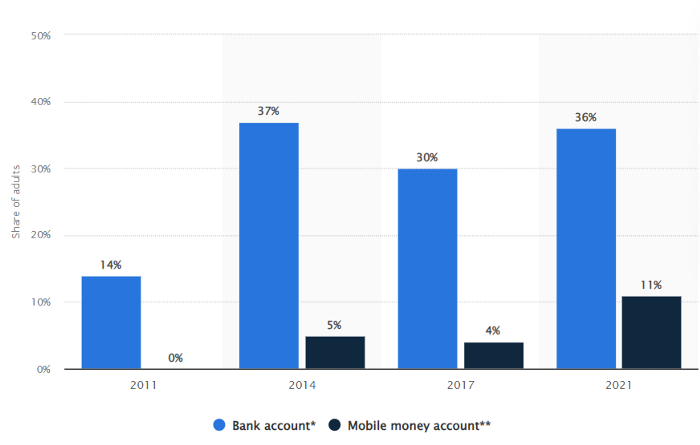

The country’s unbanked population is staggering. Here’s the data by % of people who either have a bank account (Blue) or a mobile money account (Black).

According to Bukele, 46% of the population has downloaded the Chivo Bitcoin Wallet.

In 2021, 36% of Salvadorans had bank accounts.

Large rural countries like Kenya seem to have resolved their unbanked dilemma.

An economy emerged in which villagers would sell, trade, and hold network minutes and data as a store of value.

Kenyan phone networks realized unbanked people needed a safe way to accumulate wealth and have an emergency fund.

96% of Kenyans utilize M-PESA, which doesn't require a bank account.

The service relies on human agents who carry cash and a phone.

These people are like ATMs.

You hand them cash to deposit into your mobile money account, or you withdraw cash from them.

In a country with a faulty banking system, cash availability and a safe place to deposit it are important.

William Jack and Tavneet Suri found that M-PESA brought 194,000 Kenyan households out of poverty by making transactions cheaper and creating a safe store of value.

Mobile money, a service that allows monetary value to be stored on a mobile phone and sent to other users via text messages, has been adopted by most Kenyan households. We estimate that access to the Kenyan mobile money system M-PESA increased per capita consumption levels and lifted 194,000 households, or 2% of Kenyan households, out of poverty.

The impacts, which are more pronounced for female-headed households, appear to be driven by changes in financial behaviour — in particular, increased financial resilience and saving. Mobile money has therefore increased the efficiency of the allocation of consumption over time while allowing a more efficient allocation of labour, resulting in a meaningful reduction of poverty in Kenya.

Currently, El Salvador has 2,301 Bitcoin.

At publication, that is worth $44 million, about 41.7% of the $105.6 million Bukele originally spent.
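The headline loss can be checked from the figures quoted above. The snippet below is a minimal sketch that uses only those three numbers; the per-coin prices it prints are derived from them and are not reported in the article.

```python
# Figures quoted in the article (USD). Per-coin prices are derived, not reported.
BTC_HELD = 2_301
COST_BASIS_USD = 105_600_000   # total originally spent
MARKET_VALUE_USD = 44_000_000  # value at publication


def unrealized_loss(cost_basis: float, market_value: float) -> float:
    """Paper loss: what was paid minus what the holding is worth now."""
    return cost_basis - market_value


def fraction_remaining(cost_basis: float, market_value: float) -> float:
    """Share of the original outlay that the holding is still worth."""
    return market_value / cost_basis


loss = unrealized_loss(COST_BASIS_USD, MARKET_VALUE_USD)
remaining = fraction_remaining(COST_BASIS_USD, MARKET_VALUE_USD)
implied_price = MARKET_VALUE_USD / BTC_HELD
average_cost = COST_BASIS_USD / BTC_HELD

print(f"Unrealized loss: ${loss / 1e6:.1f} million")
print(f"Value remaining: {remaining:.1%}")
print(f"Implied price per BTC: ${implied_price:,.0f}")
print(f"Average cost per BTC: ${average_cost:,.0f}")
```

The loss stays on paper until coins are actually sold, which is the point of the "only a loss if you sell" line.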

It's unknown whether the country has sold any Bitcoin, but Bukele keeps buying the dip.

It's still falling.

This might be a fantastic move for the impoverished country over the next five years, if it can stay afloat economically until Bitcoin's price recovers.

The evidence demonstrates that a store of value pulls individuals out of poverty, but others say Bitcoin is premature.

You may regard it as an aggressive endeavor to front run the next wave of adoption, offering El Salvador a financial upside.

Faisal Khan

3 years ago

4 typical methods of crypto market manipulation

Market fraud

Due to its decentralized and fragmented character, the crypto market has integrity difficulties.

Cryptocurrencies are an immature sector, so market manipulation is a bigger issue. Many studies have attempted to uncover these abuses. CryptoCompare's newest one highlights some of the industry's most typical scams.

Why are these concerns so common in the crypto market? First, even the largest centralized exchanges remain unregulated due to industry immaturity. Second, low liquidity in a market segment makes an attack more harmful. Finally, the absence of market surveillance solutions reduces transparency.

In CryptoCompare's latest exchange benchmark, 62.4% of assessed exchanges had a market surveillance system, although only 18.1% utilised an external solution. To address market integrity, this measure must improve dramatically. Before discussing the report's malpractices, note that this is not a full list of attacks and hacks.

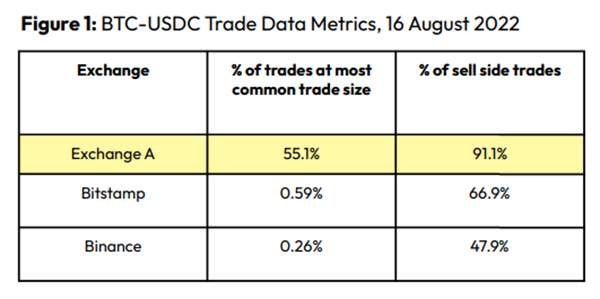

Wash Trading

An investor buys and sells the same asset concurrently to create the appearance of activity and push up the asset's price. This misconduct shows up on both centralized and decentralized exchanges. According to CryptoCompare, 23 exchanges had a volume-volatility correlation below 0.1 during the previous 100 days. In August 2022, Exchange A reported $2.5 trillion in artificial and/or erroneous volume, up from $33.8 billion the month before.
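The idea behind the volume-volatility check is that genuine volume tends to rise when prices move, so volume that ignores volatility looks manufactured. The report's exact method is not described here, so the following is only a sketch under stated assumptions: daily absolute returns stand in for volatility, the correlation is plain Pearson, and the sample prices and volumes are made up. Only the 0.1 threshold comes from the text.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no co-movement information
    return cov / sqrt(var_x * var_y)


def abs_returns(prices: list[float]) -> list[float]:
    """Absolute daily returns, used here as a simple volatility proxy."""
    return [abs(b / a - 1) for a, b in zip(prices, prices[1:])]


def looks_suspicious(prices: list[float], volumes: list[float],
                     threshold: float = 0.1) -> bool:
    """Flag an exchange whose volume barely tracks volatility."""
    # Drop the first volume so both series cover the same days.
    return pearson(volumes[1:], abs_returns(prices)) < threshold


# Hypothetical data: six daily closes and two volume profiles.
prices = [100, 102, 101, 105, 104, 110]
organic_volumes = [150, 200, 100, 400, 95, 580]   # rises with price moves
washed_volumes = [900, 900, 950, 880, 940, 870]   # high but unrelated to moves

print(looks_suspicious(prices, organic_volumes))
print(looks_suspicious(prices, washed_volumes))
```

A real screen would use a longer window (the text mentions 100 days) and a better volatility estimate, but the comparison has the same shape.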

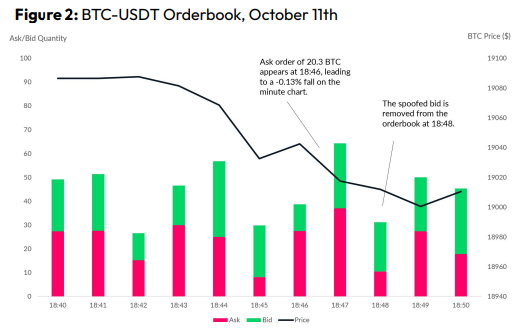

Spoofing

Manipulators place fake orders and cancel them before they can be filled. Since manipulators can hide in larger trading volumes, larger exchanges see more spoofing. In one example, a trader placed a 20.8 BTC ask order at $19,036 when BTC was trading at $19,043. BTC declined 0.13% to $19,018 within a minute. At 18:48, the trader canceled the ask order without it being filled.
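The pattern in that example (a large order that is cancelled, unfilled, shortly after it is placed) can be expressed as a simple screening rule. This is a hypothetical heuristic, not CryptoCompare's method: the `Order` fields, the size and lifetime thresholds, the calendar date, and the 18:47 placement time are all assumptions made for illustration. Only the order size, the prices, and the 18:48 cancellation come from the text.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Order:
    side: str                               # "bid" or "ask"
    size_btc: float
    price_usd: float
    placed_at: datetime
    cancelled_at: Optional[datetime] = None
    filled_btc: float = 0.0


def is_spoof_candidate(order: Order,
                       min_size_btc: float = 10.0,
                       max_lifetime: timedelta = timedelta(minutes=5)) -> bool:
    """Large order, cancelled quickly, with nothing filled (assumed thresholds)."""
    if order.cancelled_at is None or order.filled_btc > 0:
        return False
    lifetime = order.cancelled_at - order.placed_at
    return order.size_btc >= min_size_btc and lifetime <= max_lifetime


# The date and the 18:47 placement time are placeholders; the text gives neither.
ask = Order(side="ask", size_btc=20.8, price_usd=19_036,
            placed_at=datetime(2022, 1, 1, 18, 47),
            cancelled_at=datetime(2022, 1, 1, 18, 48))

price_move = 19_018 / 19_043 - 1  # move quoted in the text

print(is_spoof_candidate(ask))
print(f"{price_move:.2%}")
```

A flag like this only marks an order for review. Legitimate traders also cancel orders, so intent cannot be read from a single cancellation.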

Front-Running

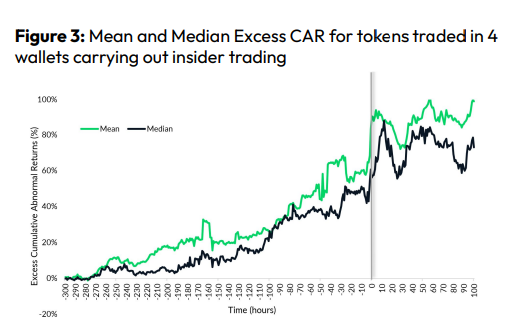

Most cryptocurrency front-running involves insider trading. Traditional stock markets forbid this. Since most digital asset information is public, this is harder. Retail traders could use bots to front-run.

CryptoCompare found digital wallets belonging to people who traded like insiders ahead of exchange listings. The figure below shows excess cumulative abnormal returns (CAR) before a coin listing on an exchange.
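For readers unfamiliar with the metric: an abnormal return is the asset's return minus what a benchmark would have predicted for that period, and CAR is the running total over the event window. The sketch below assumes the simplest possible benchmark (the market's own return on the same day) and uses invented numbers; the report may well use a different expected-return model.

```python
from itertools import accumulate


def abnormal_returns(asset: list[float], benchmark: list[float]) -> list[float]:
    """Per-period return in excess of the benchmark's return."""
    if len(asset) != len(benchmark):
        raise ValueError("series must cover the same periods")
    return [a - b for a, b in zip(asset, benchmark)]


def cumulative_abnormal_returns(asset: list[float],
                                benchmark: list[float]) -> list[float]:
    """Running sum of abnormal returns across the event window."""
    return list(accumulate(abnormal_returns(asset, benchmark)))


# Hypothetical daily returns for the four days before a listing announcement.
token_returns = [0.01, 0.03, 0.05, 0.08]
market_returns = [0.01, 0.01, 0.00, 0.01]

car = cumulative_abnormal_returns(token_returns, market_returns)
print([f"{value:.2%}" for value in car])
```

A CAR that climbs steadily before the listing becomes public is what suggests that someone was trading on advance knowledge.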

Layering

Finally, layering is a sequence of spoofs in which successive orders are placed along a ladder of higher (layering offers) or lower (layering bids) prices.

The paper concludes with recommendations to mitigate market manipulation. Exchange data transparency, market surveillance, and regulatory oversight could reduce manipulative tactics.

Onchain Wizard

3 years ago

Three Arrows Capital & Celsius Updates

I read the 1,000+ pages of 3AC liquidation documents so you don't have to. I'm also sharing the revised Celsius recovery plans.

3AC's liquidation documents:

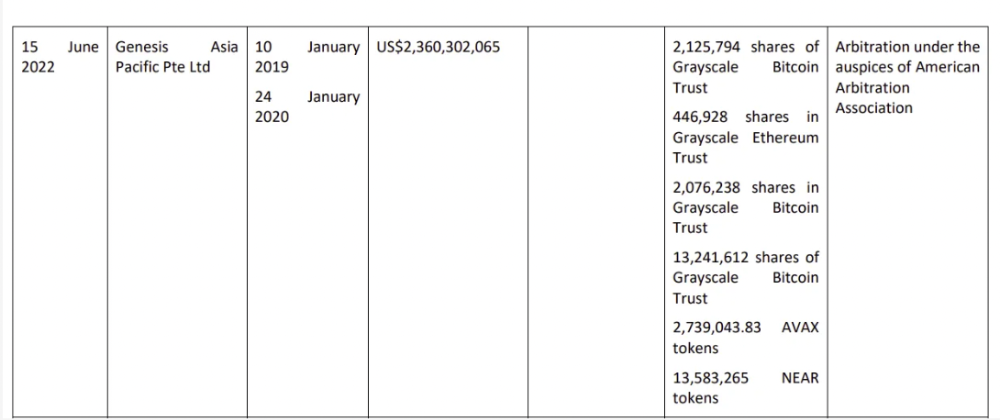

Someone disclosed 3AC liquidation records in the BVI courts recently. I'll discuss the leak's timeline and other highlights.

Three Arrows Capital began trading traditional currencies in emerging markets in 2012. They switched to equities and crypto, then purely crypto in 2018.

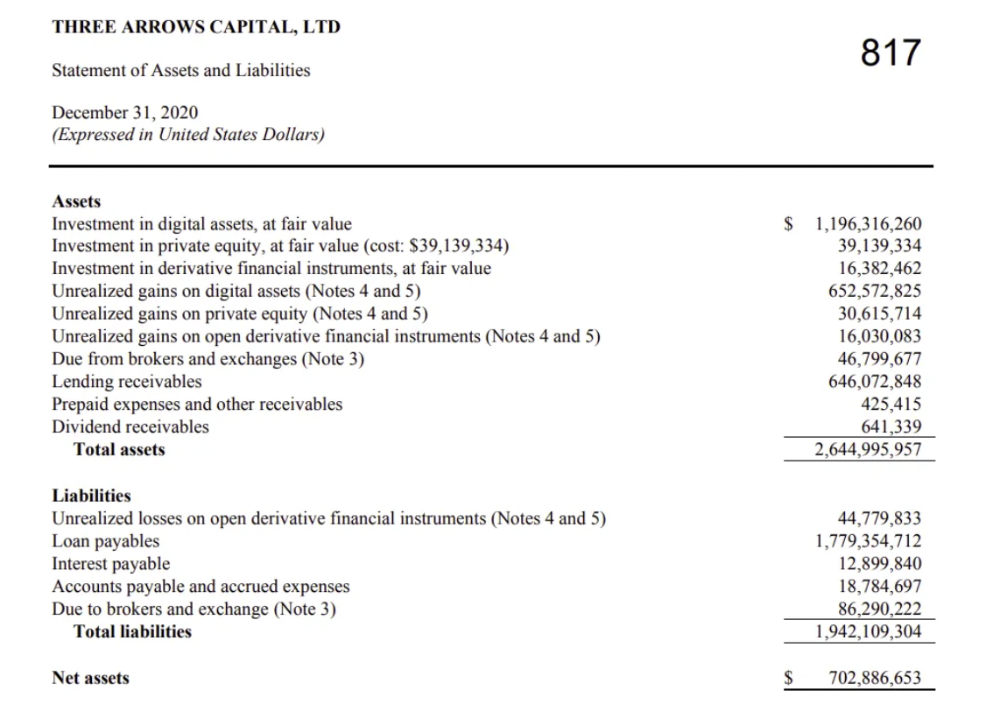

By 2020, the firm had $703mm in net assets and $1.8bn in loans (these guys really like debt).

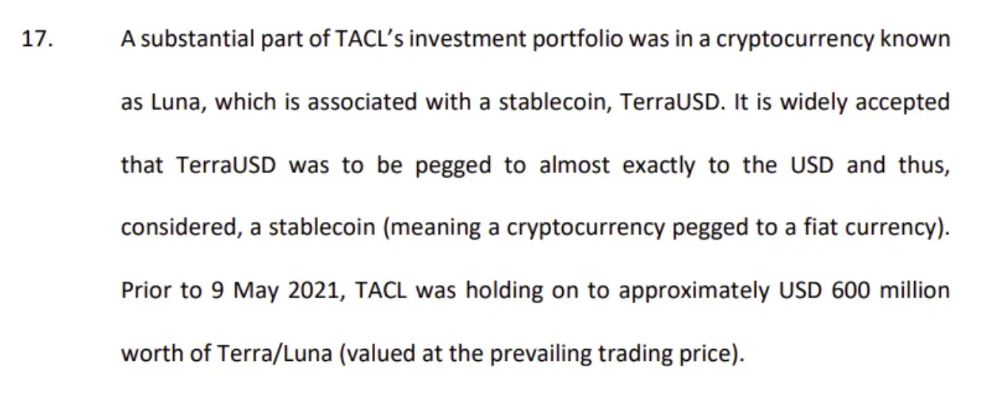

The firm's net assets under control reached $3bn in April 2022, according to the filings. 3AC had $600mm of LUNA/UST exposure before May 9th 2022, which pushed them over the edge.

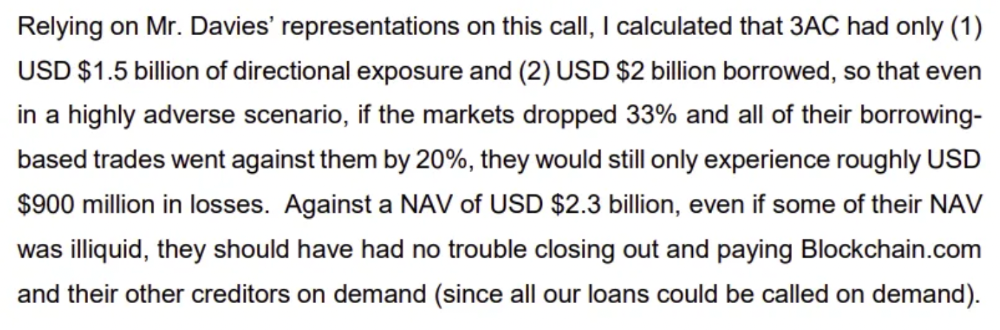

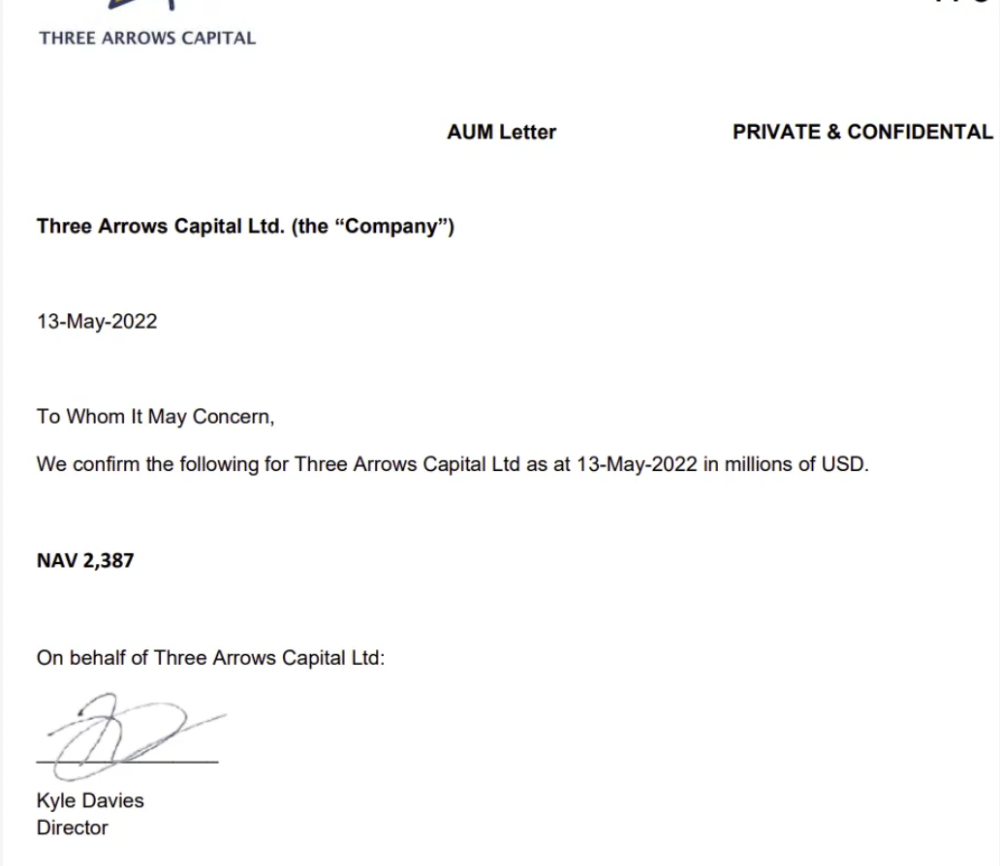

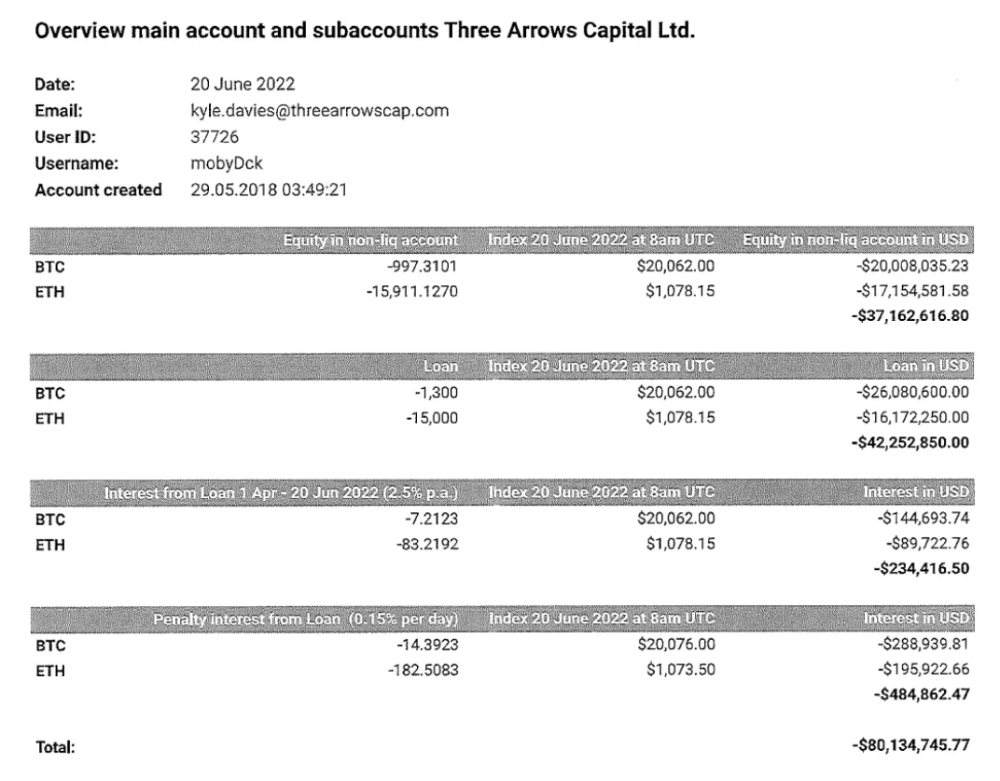

LUNA and UST went to zero quickly (I wrote about the mechanics of the blowup here). Kyle Davies, 3AC co-founder, told Blockchain.com on May 13 that they had $2.4bn in assets and $2.3bn NAV vs. $2bn in borrowings. As BTC and ETH plunged 33% and 50%, the company became insolvent by mid-2022.

3AC sent $32mm to Tai Ping Shen, a Cayman Islands business owned by Su Zhu and Davies' partner, Kelly Kaili Chen (who knows what is going on here).

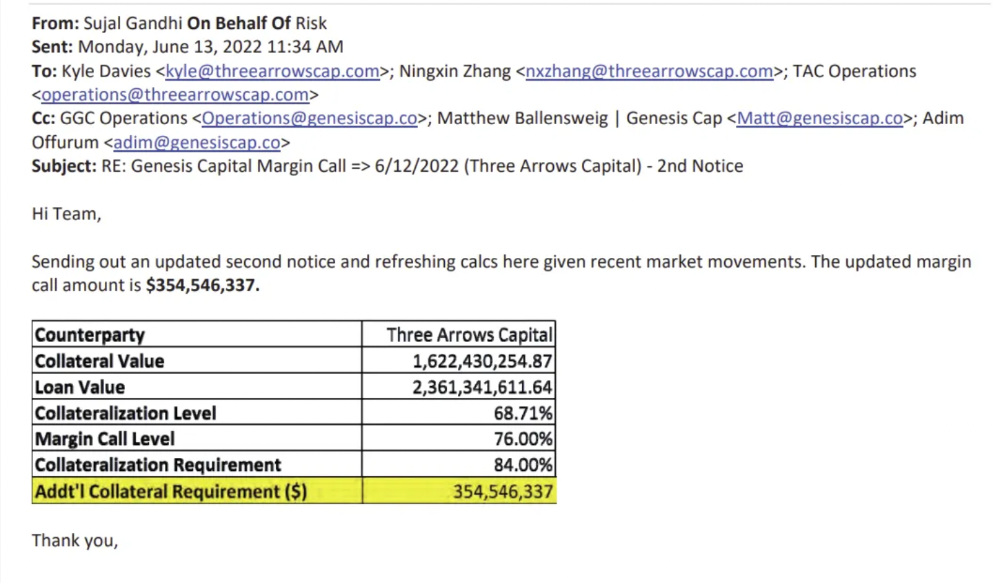

3AC had borrowed over $3.5bn in notional principal, with Genesis ($2.4bn) and Voyager ($650mm) having the most exposure.

Genesis demanded $355mm in further collateral in June.

Deribit (another 3AC investment) called for $80 million in mid-June.

Even in mid-June, the corporation was trying to borrow more money to stay afloat. They approached Genesis for another $125mm loan (to pay another lender) and HODLnauts for BTC & ETH loans.

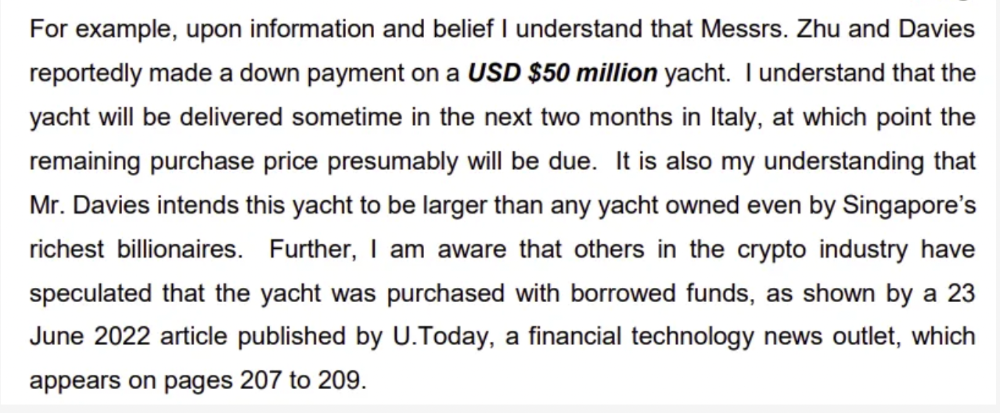

Pretty crazy. 3AC founders used borrowed money to buy a $50 million boat, according to the leak.

Su, who is claiming $5mm, and Kelly Kaili Chen, who asserts she made a $65mm unsecured loan to 3AC, are both identified as creditors.

Celsius:

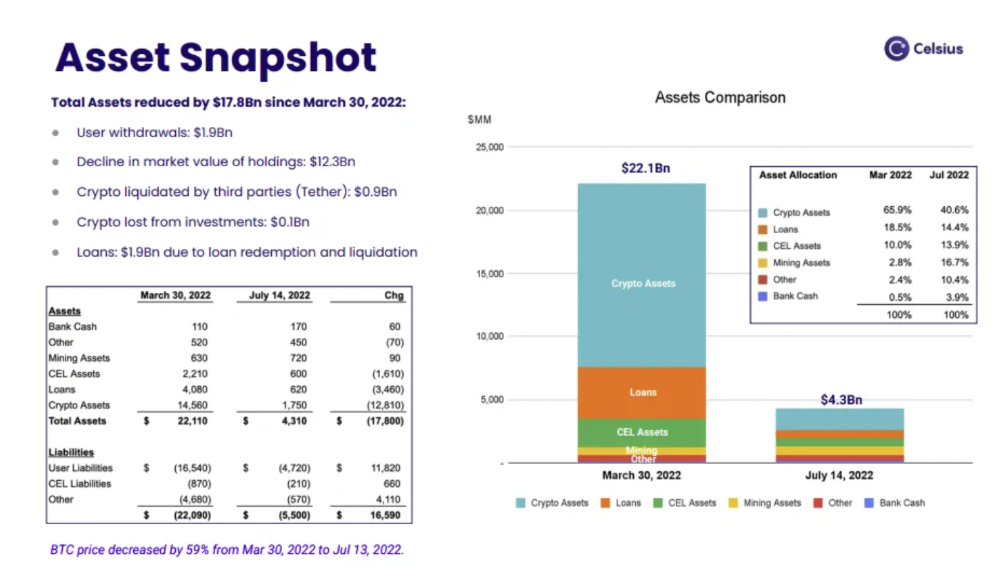

This bankruptcy presentation shows the Celsius breakdown from March to July 14, 2022. Assets fell from $22bn to $4bn, with crypto assets plummeting from $14.6bn to $1.8bn (ouch). User liabilities dropped from $16.5bn to $4.72bn.

In my recent post, I examined whether "forced selling" is over, with Celsius' crypto assets being a major overhang. In this presentation, it looks like Chapter 11 will give clients the option to accept cash at a discount or remain long crypto. Given that a fresh source of money is unlikely to enter the Celsius situation, either option will likely remain a near-term market risk: cash at a discount will likely come from selling crypto assets, while customers who receive crypto could sell at any time. I'll share any Celsius updates I find.

Conclusion

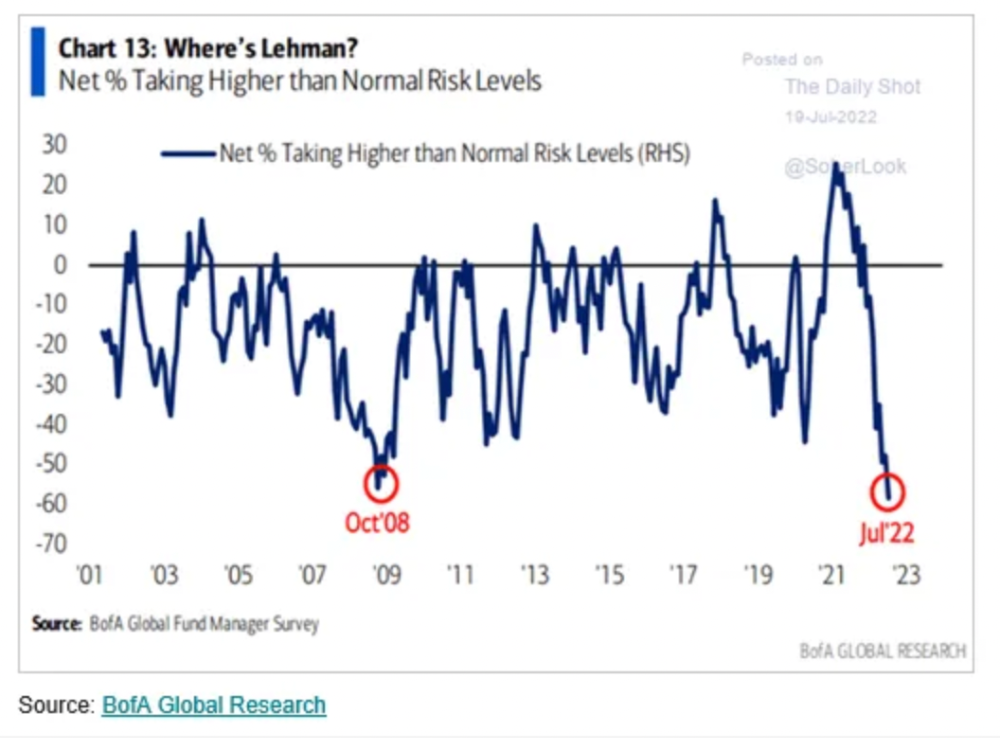

Only Celsius and the Mt Gox BTC unlock remain as forced selling catalysts. While everything went through a "relief" pump, with ETH up 75% from the bottom and numerous alts multiples higher, there are still macro risks to equities and risk assets. There's a lot of wealth waiting to be deployed in crypto ($153bn in stables), but fund managers are risk-averse (risk appetite is below 2008 levels).

We're hopefully over crypto's "bottom," with peak anxiety and forced selling behind us, but we may chop around.


You might also like

Luke Plunkett

4 years ago

Gran Turismo 7 Update Eases Up On The Grind After Fan Outrage

Polyphony Digital has changed the game after apologizing in March.

To make amends for some disastrous downtime, Gran Turismo 7 director Kazunori Yamauchi announced a credits handout and promised to “dramatically change GT7's car economy to help make amends” last month. The first of these has arrived.

The game's 1.11 update includes the following concessions to players frustrated by the economy and its subsequent grind:

- The last half of the World Circuits events have increased in-game credit rewards.

- Modified Arcade and Custom Race rewards

- Clearing all circuit layouts with Gold or Bronze now rewards In-game Credits. Exiting the Sector selection screen with the Exit button will award Credits if an event has already been cleared.

- Increased Credits Rewards in Lobby and Daily Races

- Increased the free in-game Credits cap from 20,000,000 to 100,000,000.

Additionally, “The Human Comedy” missions are one-hour endurance races that award “up to 1,200,000” credits per event.

This isn't everything Yamauchi promised last month; he said it would take several patches and updates to fully implement the changes. Here's a list of everything he said would happen, some of which has already happened (like the World Circuit rewards and credit cap):

- Increase rewards in the latter half of the World Circuits by roughly 100%.

- Added high rewards for all Gold/Bronze results clearing the Circuit Experience.

- Online Races rewards increase.

- Add 8 new 1-hour Endurance Race events to Missions. So expect higher rewards.

- Increase the non-paid credit limit in player wallets from 20M to 100M.

- Expand the number of Used and Legend cars available at any time.

- With time, we will increase the payout value of limited time rewards.

- New World Circuit events.

- Missions now include 24-hour endurance races.

- Online Time Trials added, with rewards based on the player's time difference from the leader.

- Make cars sellable.

The full list of updates and changes can be found here.


Farhad Malik

3 years ago

How This Python Script Makes Me Money Every Day

Starting a passive income stream with data science and programming

My website is fresh. But how do I monetize it?

Creating a passive-income website is difficult. Advertising comes first. But what use are ads without traffic?

Let’s Generate Traffic And Put Our Programming Skills To Use

SEO (Search Engine Optimisation) boosts traffic. Traffic generation is complex. Keywords matter more than text, URL, photos, etc.

My Python skills helped here. I wanted to find relevant, Google-trending keywords (tags) for my topic.

First The Code

I wrote the script below.

    import re
    from string import punctuation

    import nltk
    from nltk import TreebankWordTokenizer, sent_tokenize
    from nltk.corpus import stopwords


    class KeywordsGenerator:
        def __init__(self, pytrends):
            self._pytrends = pytrends

        def generate_tags(self, file_path, top_words=30):
            file_text = self._get_file_contents(file_path)
            clean_text = self._remove_noise(file_text)
            top_words = self._get_top_words(clean_text, top_words)
            suggestions = []
            for top_word in top_words:
                suggestions.extend(self.get_suggestions(top_word))
            suggestions.extend(top_words)
            tags = self._clean_tokens(suggestions)
            # De-duplicate and return the tags as a comma-separated string
            return ",".join(list(set(tags)))

        def _remove_noise(self, text):
            # 1. Convert text to lowercase and remove numbers
            lower_case_text = text.lower()
            just_text = re.sub(r'\d+', '', lower_case_text)
            # 2. Tokenise paragraphs into sentences, then sentences into words
            sentences = sent_tokenize(just_text)
            tokenizer = TreebankWordTokenizer()
            tokens = [token for sentence in sentences
                      for token in tokenizer.tokenize(sentence)]
            # 3. Clean text
            return self._clean_tokens(tokens)

        def _clean_tokens(self, tokens):
            # Drop punctuation, English stop words and purely numeric tokens
            clean_words = [w for w in tokens if w not in punctuation]
            stopwords_to_remove = stopwords.words('english')
            return [w for w in clean_words
                    if w not in stopwords_to_remove and not w.isnumeric()]

        def get_suggestions(self, keyword):
            print(f'Searching pytrends for {keyword}')
            result = []
            self._pytrends.build_payload([keyword], cat=0, timeframe='today 12-m')
            data = self._pytrends.related_queries()[keyword]['top']
            if data is None or data.values is None:
                return result
            # Keep the two top related queries for this keyword
            result.extend([x[0] for x in data.values.tolist()][:2])
            return result

        def _get_file_contents(self, file_path):
            # Use a context manager so the file handle is always closed
            with open(file_path, "r", encoding='utf-8', errors='ignore') as file:
                return file.read()

        def _get_top_words(self, words, top):
            counts = dict()
            for word in words:
                if word in counts:
                    counts[word] += 1
                else:
                    counts[word] = 1
            # Sort by count, most frequent first, and keep the top entries
            return sorted(counts, key=counts.get, reverse=True)[:top]


    if __name__ == "__main__":
        from pytrends.request import TrendReq

        nltk.download('punkt')
        nltk.download('stopwords')
        pytrends = TrendReq(hl='en-GB', tz=360)
        tags = KeywordsGenerator(pytrends)\
            .generate_tags('text_file.txt')
        print(tags)

Then The Dependencies

This script requires:

nltk==3.7

pytrends==4.8.0

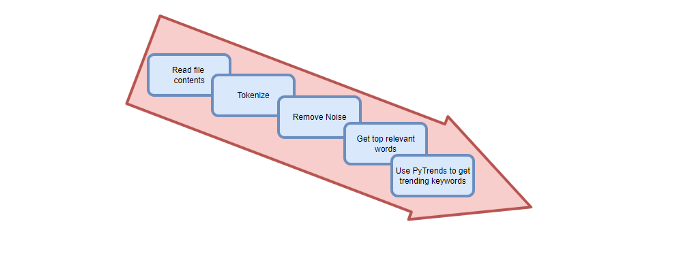

Analysis of the Script

I copy and paste my article into text_file.txt, and the code returns the keywords as a comma-separated string.

To achieve this:

1. I created a class called KeywordsGenerator.

2. This class has a function: generate_tags.

3. The function generate_tags performs the following tasks:

- retrieves the text file contents
- uses NLP to clean the text by tokenizing sentences into words and removing punctuation and other noise
- identifies the most frequent relevant words
- uses the pytrends API to retrieve related phrases that are trending on Google for each word
- finally, joins the resulting word list into a comma-separated string

4. I then paste the keywords into the SEO area of my website.
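The cleaning and counting steps can be tried offline, without NLTK or a Google Trends connection. The sketch below is a simplified stand-in, not the script above: it swaps the Treebank tokenizer for a regular expression, uses a tiny hand-picked stop-word set instead of NLTK's list, and skips the pytrends lookup entirely. The function name and sample text are made up for illustration.

```python
import re
from collections import Counter

# Tiny illustrative stop-word set; NLTK's English list is far longer.
STOP_WORDS = {"a", "an", "and", "the", "is", "of", "to", "in", "for", "with"}


def top_words(text: str, top: int = 5) -> list[str]:
    """Return the most frequent non-stop-words, most frequent first."""
    # Lowercase, then keep runs of letters and apostrophes (drops digits).
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(word for word in words if word not in STOP_WORDS)
    return [word for word, _ in counts.most_common(top)]


sample = ("Python is great for SEO. SEO keywords and Python scripts "
          "drive traffic to the site.")
print(",".join(top_words(sample, top=3)))
```

Counter.most_common sorts by count in descending order, which is the ordering the "most frequent words" step relies on.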

These terms are trending on Google and relevant to my topic. My site's rankings and traffic have improved since I added new keywords. This little script puts our knowledge to work. I shared the script in case anyone faces similar issues.

I hope it helps readers sell their work.

Scott Galloway

3 years ago

Text-ure

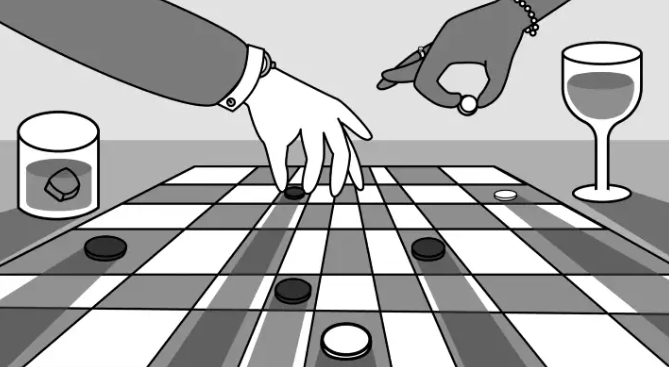

While we played checkers, we thought billionaires played 3D chess. They're playing the same game on a fancier board.

Every medium has nuances and norms. Texting is authentic and casual. A smaller circle has access, creating intimacy and immediacy. Most people read all their texts, but not all their email and mail. Many of us no longer listen to our voicemails, and calling your kids ages you.

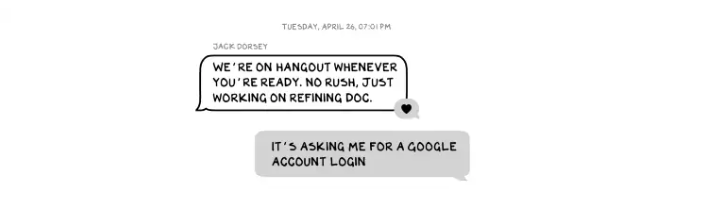

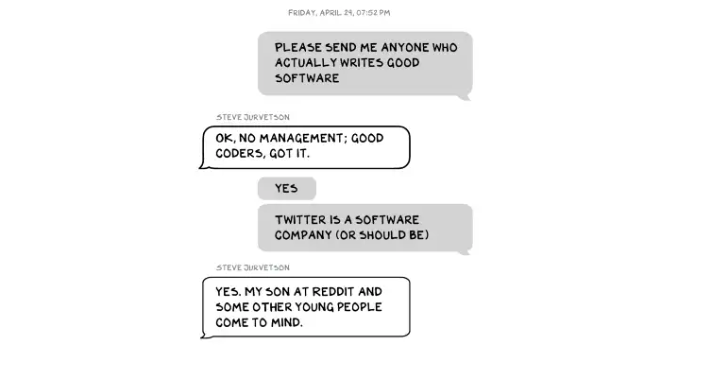

Live interviews and testimony under oath inspire real moments, rare in a world where communications departments sanitize everything powerful people say. When (some of) Elon's text messages became public in Twitter v. Musk, we got a glimpse into the bowels of tech power.

These texts illuminate the tech community's upper caste.

Checkers, Not Chess

Elon texts with Larry Ellison, Joe Rogan, Sam Bankman-Fried, Satya Nadella, and Jack Dorsey. Do the texts reveal astounding logic, prose, and discourse? No. The world's richest man and his followers come across as unsophisticated, obtuse, and petty. Possibly. While we played checkers, we thought billionaires played 3D chess. They're playing the same game on a fancier board.

They fumble with their computers.

They lean on others to get jobs for their kids (no surprise).

No matter how rich, they always could use more (money).

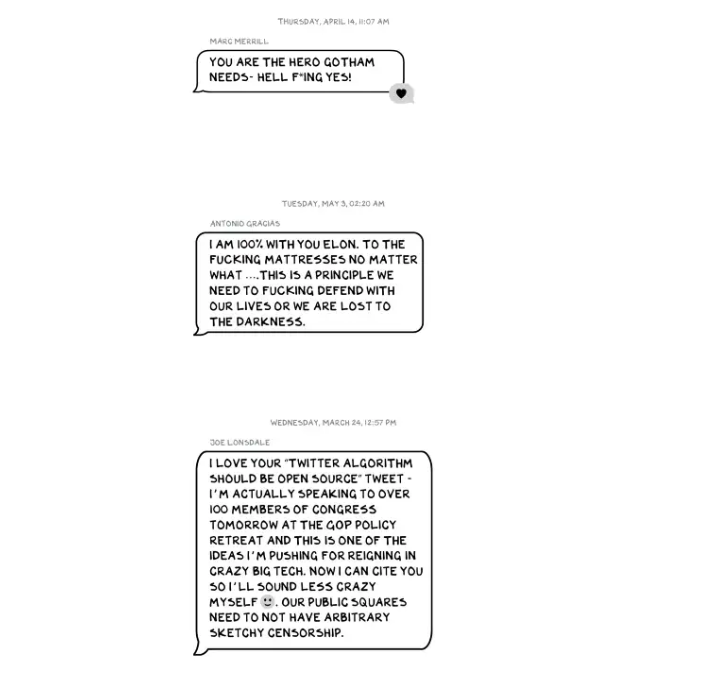

There are differences, though. A social hierarchy exists. Among this circle, the currency of deference is... currency. Money increases sycophancy. Texts from Oculus and Elon's "friends" induce nausea.

Autocorrect frustrates everyone.

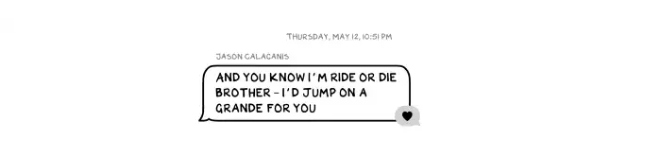

Elon doesn't stand out to me in these texts; he comes off mostly OK in my view. It’s the people around him. It seems our idolatry of innovators has infected the uber-wealthy, giving them an uncontrollable urge to fawn over the cool kid for a seat at his cafeteria table. "I'd jump on a grenade for you." If someone says this and they're not fighting alongside you, they're a fan, not a friend.

Many powerful people are undone by their fake friends. Facilitators, not well-wishers. When Elon-Twitter started, I wrote about power. Unchecked power is intoxicating. This is a scientific fact, not a thesis. Power causes us to downplay risk, magnify rewards, and act on instincts more quickly. You lose self-control and must rely on others.

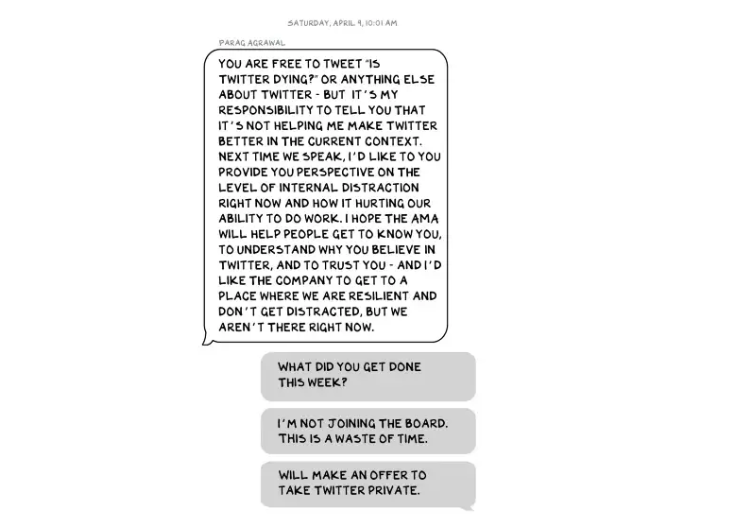

You'd hope the world's richest person has advisers who push back when necessary (i.e., not yes men). Elon's reckless, childish behavior and these texts show there is no truth-teller. I found just one pushback in the 151-page document. It came from Twitter CEO Parag Agrawal, who, in response to Elon’s unhelpful “Is Twitter dying?” tweet, let Elon know what he thought: It was unhelpful. Elon’s response? A childish, terse insult.

Scale

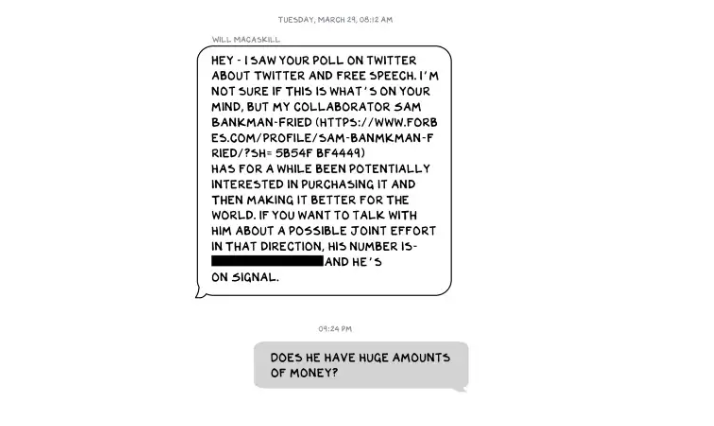

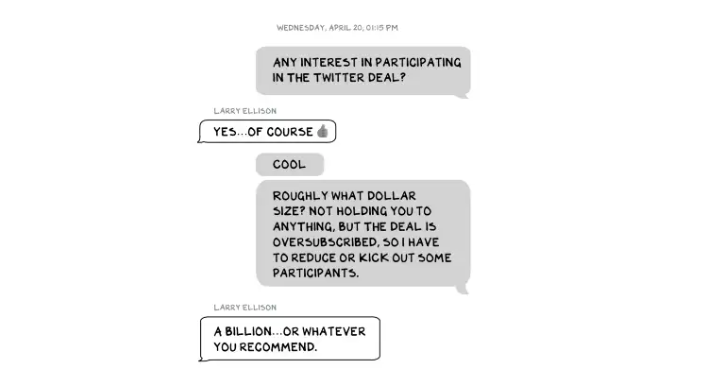

The texts are mostly unremarkable. There are some, however, that do remind us the (super-)rich are different. Specifically, the discussions of possible equity investments from crypto-billionaire Sam Bankman-Fried (“Does he have huge amounts of money?”) and this exchange with Larry Ellison:

Ellison, who co-founded $175 billion Oracle, is wealthy. Less clear is whether he can text a billion dollars. Who hasn't been texted $1 billion? Ellison offered 8,000 times the median American's net worth, enough to buy 3,000 Ferraris or the Chicago Blackhawks. It's a bedrock principle of capitalism to have incredibly successful people who are exponentially wealthier than the rest of us. It creates an incentive structure that inspires productivity and prosperity. When people offer billions over text to help a billionaire's vanity project in a country where 1 in 5 children are food insecure, isn't America messed up?

Elon's Morgan Stanley banker, Michael Grimes, tells him that Web3 ventures investor Bankman-Fried can invest $5 billion in the deal: “could do $5bn if everything vision lock... Believes in your mission." The message bothers Elon. In Elon's world, $5 billion doesn't warrant a worded response. $5 billion is more than many small nations' GDP, twice the SEC budget, and five times the NRC budget.

If income inequality worries you after reading this, trust your gut.

Billionaires aren't like the rich.

As an entrepreneur, academic, and investor, I've met modest-income people, rich people, and billionaires. Rich people seem different to me. They're smarter and harder working than most Americans. Monty Burns from The Simpsons is a cartoon caricature of rich people. Real rich people tend to have character and know how to make friends. Success requires supporters.

I've never noticed a talent or intelligence gap between wealthy and ultra-wealthy people. Conflating talent and luck infects the tech elite. Timing is more important than incremental intelligence when going from millions to hundreds of millions or billions. Proof? Elon's texting. Any man who electrifies the auto industry and lands two rockets on barges is a genius. His mega-billions come from a well-regulated capital market, enforceable contracts, thousands of workers, and billions of dollars in government subsidies, including a $465 million DOE loan that allowed Tesla to produce the Model S. So, is Mr. Musk a genius or an impressive man in a unique time and place?

The Point

What else did Elon's texts teach us? He can't "fix" Twitter. For two weeks in April, he was all in on blockchain Twitter, brainstorming Dogecoin payments for tweets (i.e., paid speech) with his brother while telling Twitter's board he was going to make a hostile tender offer. Kimbal approved. By May, he was over crypto and "laborious blockchain debates." (Mood.)

Elon asked the Twitter CEO for "an update from the Twitter engineering team." No record shows whether he got the meeting. It doesn't "fix" Twitter either. And this is Elon's problem. He's a grown-up child with all the toys and no boundaries. His yes-men encourage his most facile thoughts, and his shitposts and errant behavior diminish his genius and ours.

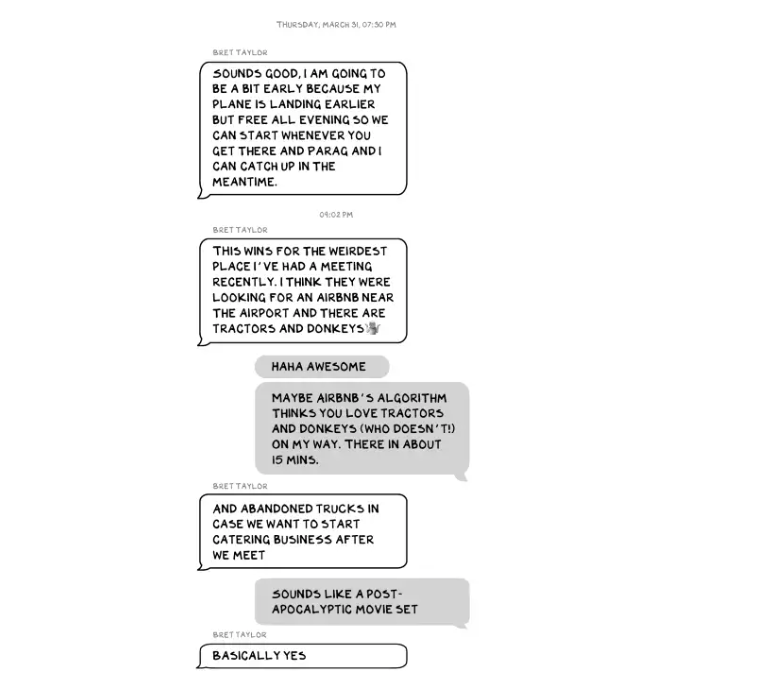

Post-Apocalyptic

The universe's titans have a sense of humor.

Every day, we must ask: Who keeps me real? Who will disagree with me? Who will save me from my psychosis, which has brought down so many successful people? Elon Musk doesn't need anyone to jump on a grenade for him; he needs to stop throwing them because one will explode in his hand.