More on NFTs & Art

Tora Northman

4 years ago

Pixelmon NFTs are so bad, they are almost good!

Bored Apes prices continue to rise, HAPEBEAST launches, Invisible Friends hype continues to grow. Sadly, not all projects are as successful.

Of course, there are many factors to consider when buying an NFT. Is the project a scam? Will the reveal derail the project? Possibly, but when Pixelmon first teased its launch, it generated a lot of buzz.

With a primary sale mint price of 3 ETH ($8,100 USD), it started as an expensive project, with plenty of fans willing to invest in what was sold as a game. After it was revealed, it fell rapidly.

Why? It was overpromised and under delivered.

According to the project's creator[^1], the funds generated will be used to develop the artwork. "The Pixelmon reveal was wrong. This is what our Pixelmon look like in-game. "Despite the fud, I will not go anywhere," he wrote on Twitter. The goal remains. The funds will still be used to build our game. I will finish this project."

The project raised $70 million USD, but the NFTs buyers received were not the project's original teasers. Some call it "the worst NFT project ever," while others call it a complete scam.

But there's hope for some buyers. Kevin emerged from the ashes as the project was roasted over the fire.

A Minecraft character meets Salad Fingers - that's Kevin. He's a frog-like creature whose reveal was such a terrible NFT that it became part of history – and a meme.

If you're laughing at people paying $8K for a silly pixelated image, you might need to take it back. Precisely because of this, lucky holders who minted Kevin have been able to sell the now-memed NFT for over 8 ETH (around $24,000 USD), with some currently listed for 100 ETH.

Of course, Twitter has been awash in memes mocking those who invested in the project, because what else can you do when so many people lose money?

It's still unclear if the NFT project is a scam, but the team behind it was hired on Upwork. There's still hope for redemption, but Kevin's rise to fame appears to be the only positive outcome so far.

[^1] This is not the first time the creator (A 20-yo New Zealanders) has sought money via an online platform and had people claiming he under-delivered. He raised $74,000 on Kickstarter for a card game called Psycho Chicken. There are hundreds of comments on the Kickstarter project saying they haven't received the product and pleading for a refund or an update.

Jennifer Tieu

4 years ago

Why I Love Azuki

Azuki Banner (www.azuki.com)

Disclaimer: This is my personal viewpoint. I'm not on the Azuki team. Please keep in mind that I am merely a fan, community member, and holder. Please do your own research and pardon my grammar. Thanks!

Azuki has changed my view of NFTs.

When I first entered the NFT world, I had no idea what to expect. I liked the idea. So I invested in some projects, fought for whitelists, and discovered some cool NFTs projects (shout-out to CATC). I lost more money than I earned at one point, but I hadn't invested excessively (only put in what you can afford to lose). Despite my losses, I kept looking. I almost waited for the “ah-ha” moment. A NFT project that changed my perspective on NFTs. What makes an NFT project more than a work of art?

Answer: Azuki.

The Art

The Azuki art drew me in as an anime fan. It looked like something out of an anime, and I'd never seen it before in NFT.

The project was still new. The first two animated teasers were released with little fanfare, but I was impressed with their quality. You can find them on Instagram or in their earlier Tweets.

The teasers hinted that this project could be big and that the team could deliver. It was amazing to see Shao cut the Azuki posters with her katana. Especially at the end when she sheaths her sword and the music cues. Then the live action video of the young boy arranging the Azuki posters seemed movie-like. I felt like I was entering the Azuki story, brand, and dope theme.

The team did not disappoint with the Azuki NFTs. The level of detail in the art is stunning. There were Azukis of all genders, skin and hair types, and more. These 10,000 Azukis have so much representation that almost anyone can find something that resonates. Rather than me rambling on, I suggest you visit the Azuki gallery

The Team

If the art is meant to draw you in and be the project's face, the team makes it more. The NFT would be a JPEG without a good team leader. Not that community isn't important, but no community would rally around a bad team.

Because I've been rugged before, I'm very focused on the team when considering a project. While many project teams are anonymous, I try to find ones that are doxxed (public) or at least appear to be established. Unlike Azuki, where most of the Azuki team is anonymous, Steamboy is public. He is (or was) Overwatch's character art director and co-creator of Azuki. I felt reassured and could trust the project after seeing someone from a major game series on the team.

Then I tried to learn as much as I could about the team. Following everyone on Twitter, reading their tweets, and listening to recorded AMAs. I was impressed by the team's professionalism and dedication to their vision for Azuki, led by ZZZAGABOND.

I believe the phrase “actions speak louder than words” applies to Azuki. I can think of a few examples of what the Azuki team has done, but my favorite is ERC721A.

With ERC721A, Azuki has created a new algorithm that allows minting multiple NFTs for essentially the same cost as minting one NFT.

I was ecstatic when the dev team announced it. This fascinates me as a self-taught developer. Azuki released a product that saves people money, improves the NFT space, and is open source. It showed their love for Azuki and the NFT community.

The Community

Community, community, community. It's almost a chant in the NFT space now. A community, like a team, can make or break a project. We are the project's consumers, shareholders, core, and lifeblood. The team builds the house, and we fill it. We stay for the community.

When I first entered the Azuki Discord, I was surprised by the calm atmosphere. There was no news about the project. No release date, no whitelisting requirements. No grinding or spamming either. People just wanted to hangout, get to know each other, and talk. It was nice. So the team could pick genuine people for their mintlist (aka whitelist).

But nothing fundamental has changed since the release. It has remained an authentic, fun, and helpful community. I'm constantly logging into Discord to chat with others or follow conversations. I see the community's openness to newcomers. Everyone respects each other (barring a few bad apples) and the variety of people passing through is fascinating. This human connection and interaction is what I enjoy about this place. Being a part of a group that supports a cause.

Finally, I want to thank the amazing Azuki mod team and the kissaten channel for their contributions.

The Brand

So, what sets Azuki apart from other projects? They are shaping a brand or identity. The Azuki website, I believe, best captures their vision. (This is me gushing over the site.)

If you go to the website, turn on the dope playlist in the bottom left. The playlist features a mix of Asian and non-Asian hip-hop and rap artists, with some lo-fi thrown in. The songs on the playlist change, but I think you get the vibe Azuki embodies just by turning on the music.

The Garden is our next stop where we are introduced to Azuki.

A brand.

We're creating a new brand together.

A metaverse brand. By the people.

A collection of 10,000 avatars that grant Garden membership. It starts with exclusive streetwear collabs, NFT drops, live events, and more. Azuki allows for a new media genre that the world has yet to discover. Let's build together an Azuki, your metaverse identity.

The Garden is a magical internet corner where art, community, and culture collide. The boundaries between the physical and digital worlds are blurring.

Try a Red Bean.

The text begins with Azuki's intention in the space. It's a community-made metaverse brand. Then it goes into more detail about Azuki's plans. Initiation of a story or journey. "Would you like to take the red bean and jump down the rabbit hole with us?" I love the Matrix red pill or blue pill play they used. (Azuki in Japanese means red bean.)

Morpheus, the rebel leader, offers Neo the choice of a red or blue pill in The Matrix. “You take the blue pill... After the story, you go back to bed and believe whatever you want. Your red pill... Let me show you how deep the rabbit hole goes.” Aware that the red pill will free him from the enslaving control of the machine-generated dream world and allow him to escape into the real world, he takes it. However, living the “truth of reality” is harsher and more difficult.

It's intriguing and draws you in. Taking the red bean causes what? Where am I going? I think they did well in piqueing a newcomer's interest.

Not convinced by the Garden? Read the Manifesto. It reinforces Azuki's role.

Here comes a new wave…

And surfing here is different.

Breaking down barriers.

Building open communities.

Creating magic internet money with our friends.

To those who don’t get it, we tell them: gm.

They’ll come around eventually.

Here’s to the ones with the courage to jump down a peculiar rabbit hole.

One that pulls you away from a world that’s created by many and owned by few…

To a world that’s created by more and owned by all.

From The Garden come the human beans that sprout into your family.

We rise together.

We build together.

We grow together.

Ready to take the red bean?

Not to mention the Mindmap, it sets Azuki apart from other projects and overused Roadmaps. I like how the team recognizes that the NFT space is not linear. So many of us are still trying to figure it out. It is Azuki's vision to adapt to changing environments while maintaining their values. I admire their commitment to long-term growth.

Conclusion

To be honest, I have no idea what the future holds. Azuki is still new and could fail. But I'm a long-term Azuki fan. I don't care about quick gains. The future looks bright for Azuki. I believe in the team's output. I love being an Azuki.

Thank you! IKUZO!

Full post here

Protos

3 years ago

Plagiarism on OpenSea: humans and computers

OpenSea, a non-fungible token (NFT) marketplace, is fighting plagiarism. A new “two-pronged” approach will aim to root out and remove copies of authentic NFTs and changes to its blue tick verified badge system will seek to enhance customer confidence.

According to a blog post, the anti-plagiarism system will use algorithmic detection of “copymints” with human reviewers to keep it in check.

Last year, NFT collectors were duped into buying flipped images of the popular BAYC collection, according to The Verge. The largest NFT marketplace had to remove its delay pay minting service due to an influx of copymints.

80% of NFTs removed by the platform were minted using its lazy minting service, which kept the digital asset off-chain until the first purchase.

NFTs copied from popular collections are opportunistic money-grabs. Right-click, save, and mint the jacked JPEGs that are then flogged as an authentic NFT.

The anti-plagiarism system will scour OpenSea's collections for flipped and rotated images, as well as other undescribed permutations. The lack of detail here may be a deterrent to scammers, or it may reflect the new system's current rudimentary nature.

Thus, human detectors will be needed to verify images flagged by the detection system and help train it to work independently.

“Our long-term goal with this system is two-fold: first, to eliminate all existing copymints on OpenSea, and second, to help prevent new copymints from appearing,” it said.

“We've already started delisting identified copymint collections, and we'll continue to do so over the coming weeks.”

It works for Twitter, why not OpenSea

OpenSea is also changing account verification. Early adopters will be invited to apply for verification if their NFT stack is worth $100 or more. OpenSea plans to give the blue checkmark to people who are active on Twitter and Discord.

This is just the beginning. We are committed to a future where authentic creators can be verified, keeping scammers out.

Also, collections with a lot of hype and sales will get a blue checkmark. For example, a new NFT collection sold by the verified BAYC account will have a blue badge to verify its legitimacy.

New requests will be responded to within seven days, according to OpenSea.

These programs and products help protect creators and collectors while ensuring our community can confidently navigate the world of NFTs.

By elevating authentic content and removing plagiarism, these changes improve trust in the NFT ecosystem, according to OpenSea.

OpenSea is indeed catching up with the digital art economy. Last August, DevianArt upgraded its AI image recognition system to find stolen tokenized art on marketplaces like OpenSea.

It scans all uploaded art and compares it to “public blockchain events” like Ethereum NFTs to detect stolen art.

You might also like

Sam Hickmann

3 years ago

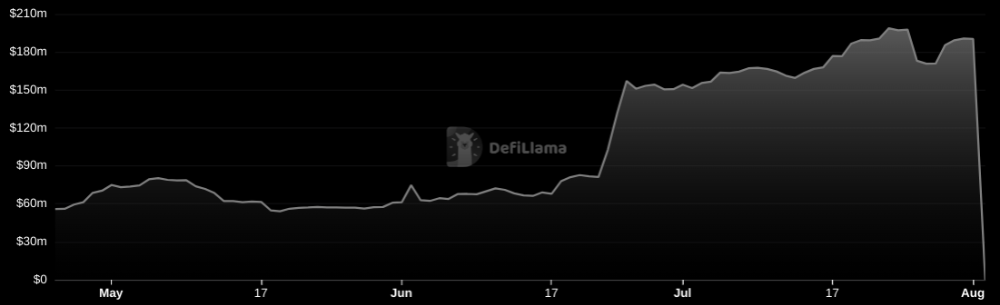

Nomad.xyz got exploited for $190M

Key Takeaways:

Another hack. This time was different. This is a doozy.

Why? Nomad got exploited for $190m. It was crypto's 5th-biggest hack. Ouch.

It wasn't hackers, but random folks. What happened:

A Nomad smart contract flaw was discovered. They couldn't drain the funds at once, so they tried numerous transactions. Rookie!

People noticed and copied the attack.

They just needed to discover a working transaction, substitute the other person's address with theirs, and run it.

In a two-and-a-half-hour attack, $190M was siphoned from Nomad Bridge.

Nomad is a novel approach to blockchain interoperability that leverages an optimistic mechanism to increase the security of cross-chain communication. — nomad.xyz

This hack was permissionless, therefore anyone could participate.

After the fatal blow, people fought over the scraps.

Cross-chain bridges remain a DeFi weakness and exploit target. When they collapse, it's typically total.

$190M...gobbled.

Unbacked assets are hurting Nomad-dependent chains. Moonbeam, EVMOS, and Milkomeda's TVLs dropped.

This incident is every-man-for-himself, although numerous whitehats exploited the issue...

But what triggered the feeding frenzy?

How did so many pick the bones?

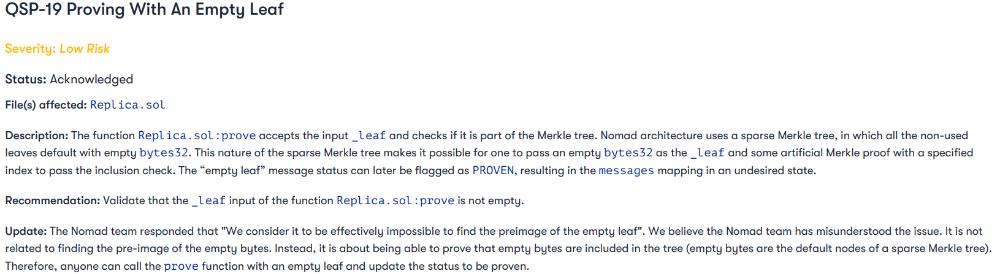

After a normal upgrade in June, the bridge's Replica contract was initialized with a severe security issue. The 0x00 address was a trusted root, therefore all messages were valid by default.

After a botched first attempt (costing $350k in gas), the original attacker's exploit tx called process() without first 'proving' its validity.

The process() function executes all cross-chain messages and checks the merkle root of all messages (line 185).

The upgrade caused transactions with a'messages' value of 0 (invalid, according to old logic) to be read by default as 0x00, a trusted root, passing validation as 'proven'

Any process() calls were valid. In reality, a more sophisticated exploiter may have designed a contract to drain the whole bridge.

Copycat attackers simply copied/pasted the same process() function call using Etherscan, substituting their address.

The incident was a wild combination of crowdhacking, whitehat activities, and MEV-bot (Maximal Extractable Value) mayhem.

For example, 🍉🍉🍉. eth stole $4M from the bridge, but claims to be whitehat.

Others stood out for the wrong reasons. Repeat criminal Rari Capital (Artibrum) exploited over $3M in stablecoins, which moved to Tornado Cash.

The top three exploiters (with 95M between them) are:

$47M: 0x56D8B635A7C88Fd1104D23d632AF40c1C3Aac4e3

$40M: 0xBF293D5138a2a1BA407B43672643434C43827179

$8M: 0xB5C55f76f90Cc528B2609109Ca14d8d84593590E

Here's a list of all the exploiters:

The project conducted a Quantstamp audit in June; QSP-19 foreshadowed a similar problem.

The auditor's comments that "We feel the Nomad team misinterpreted the issue" speak to a troubling attitude towards security that the project's "Long-Term Security" plan appears to confirm:

Concerns were raised about the team's response time to a live, public exploit; the team's official acknowledgement came three hours later.

"Removing the Replica contract as owner" stopped the exploit, but it was too late to preserve the cash.

Closed blockchain systems are only as strong as their weakest link.

The Harmony network is in turmoil after its bridge was attacked and lost $100M in late June.

What's next for Nomad's ecosystems?

Moonbeam's TVL is now $135M, EVMOS's is $3M, and Milkomeda's is $20M.

Loss of confidence may do more damage than $190M.

Cross-chain infrastructure is difficult to secure in a new, experimental sector. Bridge attacks can pollute an entire ecosystem or more.

Nomadic liquidity has no permanent home, so consumers will always migrate in pursuit of the "next big thing" and get stung when attentiveness wanes.

DeFi still has easy prey...

Sources: rekt.news & The Milk Road.

Mark Shpuntov

3 years ago

How to Produce a Month's Worth of Content for Social Media in a Day

New social media producers' biggest error

The Treadmill of Social Media Content

New creators focus on the wrong platforms.

They post to Instagram, Twitter, TikTok, etc.

They create daily material, but it's never enough for social media algorithms.

Creators recognize they're on a content creation treadmill.

They have to keep publishing content daily just to stay on the algorithm’s good side and avoid losing the audience they’ve built on the platform.

This is exhausting and unsustainable, causing creator burnout.

They focus on short-lived platforms, which is an issue.

Comparing low- and high-return social media platforms

Social media networks are great for reaching new audiences.

Their algorithm is meant to viralize material.

Social media can use you for their aims if you're not careful.

To master social media, focus on the right platforms.

To do this, we must differentiate low-ROI and high-ROI platforms:

Low ROI platforms are ones where content has a short lifespan. High ROI platforms are ones where content has a longer lifespan.

A tweet may be shown for 12 days. If you write an article or blog post, it could get visitors for 23 years.

ROI is drastically different.

New creators have limited time and high learning curves.

Nothing is possible.

First create content for high-return platforms.

ROI for social media platforms

Here are high-return platforms:

Your Blog - A single blog article can rank and attract a ton of targeted traffic for a very long time thanks to the power of SEO.

YouTube - YouTube has a reputation for showing search results or sidebar recommendations for videos uploaded 23 years ago. A superb video you make may receive views for a number of years.

Medium - A platform dedicated to excellent writing is called Medium. When you write an article about a subject that never goes out of style, you're building a digital asset that can drive visitors indefinitely.

These high ROI platforms let you generate content once and get visitors for years.

This contrasts with low ROI platforms:

Twitter

Instagram

TikTok

LinkedIn

Facebook

The posts you publish on these networks have a 23-day lifetime. Instagram Reels and TikToks are exceptions since viral content can last months.

If you want to make content creation sustainable and enjoyable, you must focus the majority of your efforts on creating high ROI content first. You can then use the magic of repurposing content to publish content to the lower ROI platforms to increase your reach and exposure.

How To Use Your Content Again

So, you’ve decided to focus on the high ROI platforms.

Great!

You've published an article or a YouTube video.

You worked hard on it.

Now you have fresh stuff.

What now?

If you are not repurposing each piece of content for multiple platforms, you are throwing away your time and efforts.

You've created fantastic material, so why not distribute it across platforms?

Repurposing Content Step-by-Step

For me, it's writing a blog article, but you might start with a video or podcast.

The premise is the same regardless of the medium.

Start by creating content for a high ROI platform (YouTube, Blog Post, Medium). Then, repurpose, edit, and repost it to the lower ROI platforms.

Here's how to repurpose pillar material for other platforms:

Post the article on your blog.

Put your piece on Medium (use the canonical link to point to your blog as the source for SEO)

Create a video and upload it to YouTube using the talking points from the article.

Rewrite the piece a little, then post it to LinkedIn.

Change the article's format to a Thread and share it on Twitter.

Find a few quick quotes throughout the article, then use them in tweets or Instagram quote posts.

Create a carousel for Instagram and LinkedIn using screenshots from the Twitter Thread.

Go through your film and select a few valuable 30-second segments. Share them on LinkedIn, Facebook, Twitter, TikTok, YouTube Shorts, and Instagram Reels.

Your video's audio can be taken out and uploaded as a podcast episode.

If you (or your team) achieve all this, you'll have 20-30 pieces of social media content.

If you're just starting, I wouldn't advocate doing all of this at once.

Instead, focus on a few platforms with this method.

You can outsource this as your company expands. (If you'd want to learn more about content repurposing, contact me.)

You may focus on relevant work while someone else grows your social media on autopilot.

You develop high-ROI pillar content, and it's automatically chopped up and posted on social media.

This lets you use social media algorithms without getting sucked in.

Thanks for reading!

Duane Michael

3 years ago

Don't Fall Behind: 7 Subjects You Must Understand to Keep Up with Technology

As technology develops, you should stay up to date

You don't want to fall behind, do you? This post covers 7 tech-related things you should know.

You'll learn how to operate your computer (and other electronic devices) like an expert and how to leverage the Internet and social media to create your brand and business. Read on to stay relevant in today's tech-driven environment.

You must learn how to code.

Future-language is coding. It's how we and computers talk. Learn coding to keep ahead.

Try Codecademy or Code School. There are also numerous free courses like Coursera or Udacity, but they take a long time and aren't necessarily self-paced, so it can be challenging to find the time.

Artificial intelligence (AI) will transform all jobs.

Our skillsets must adapt with technology. AI is a must-know topic. AI will revolutionize every employment due to advances in machine learning.

Here are seven AI subjects you must know.

What is artificial intelligence?

How does artificial intelligence work?

What are some examples of AI applications?

How can I use artificial intelligence in my day-to-day life?

What jobs have a high chance of being replaced by artificial intelligence and how can I prepare for this?

Can machines replace humans? What would happen if they did?

How can we manage the social impact of artificial intelligence and automation on human society and individual people?

Blockchain Is Changing the Future

Few of us know how Bitcoin and blockchain technology function or what impact they will have on our lives. Blockchain offers safe, transparent, tamper-proof transactions.

It may alter everything from business to voting. Seven must-know blockchain topics:

Describe blockchain.

How does the blockchain function?

What advantages does blockchain offer?

What possible uses for blockchain are there?

What are the dangers of blockchain technology?

What are my options for using blockchain technology?

What does blockchain technology's future hold?

Cryptocurrencies are here to stay

Cryptocurrencies employ cryptography to safeguard transactions and manage unit creation. Decentralized cryptocurrencies aren't controlled by governments or financial institutions.

Bitcoin, the first cryptocurrency, was launched in 2009. Cryptocurrencies can be bought and sold on decentralized exchanges.

Bitcoin is here to stay.

Bitcoin isn't a fad, despite what some say. Since 2009, Bitcoin's popularity has grown. Bitcoin is worth learning about now. Since 2009, Bitcoin has developed steadily.

With other cryptocurrencies emerging, many people are wondering if Bitcoin still has a bright future. Curiosity is natural. Millions of individuals hope their Bitcoin investments will pay off since they're popular now.

Thankfully, they will. Bitcoin is still running strong a decade after its birth. Here's why.

The Internet of Things (IoT) is no longer just a trendy term.

IoT consists of internet-connected physical items. These items can share data. IoT is young but developing fast.

20 billion IoT-connected devices are expected by 2023. So much data! All IT teams must keep up with quickly expanding technologies. Four must-know IoT topics:

Recognize the fundamentals: Priorities first! Before diving into more technical lingo, you should have a fundamental understanding of what an IoT system is. Before exploring how something works, it's crucial to understand what you're working with.

Recognize Security: Security does not stand still, even as technology advances at a dizzying pace. As IT professionals, it is our duty to be aware of the ways in which our systems are susceptible to intrusion and to ensure that the necessary precautions are taken to protect them.

Be able to discuss cloud computing: The cloud has seen various modifications over the past several years once again. The use of cloud computing is also continually changing. Knowing what kind of cloud computing your firm or clients utilize will enable you to make the appropriate recommendations.

Bring Your Own Device (BYOD)/Mobile Device Management (MDM) is a topic worth discussing (MDM). The ability of BYOD and MDM rules to lower expenses while boosting productivity among employees who use these services responsibly is a major factor in their continued growth in popularity.

IoT Security is key

As more gadgets connect, they must be secure. IoT security includes securing devices and encrypting data. Seven IoT security must-knows:

fundamental security ideas

Authorization and identification

Cryptography

electronic certificates

electronic signatures

Private key encryption

Public key encryption

Final Thoughts

With so much going on in the globe, it can be hard to stay up with technology. We've produced a list of seven tech must-knows.