An approximate introduction to how zk-SNARKs are possible (part 1)

You can make a proof for the statement "I know a secret number such that if you take the word ‘cow', add the number to the end, and SHA256 hash it 100 million times, the output starts with 0x57d00485aa". The verifier can verify the proof far more quickly than it would take for them to run 100 million hashes themselves, and the proof would also not reveal what the secret number is.

In the context of blockchains, this has 2 very powerful applications: Perhaps the most powerful cryptographic technology to come out of the last decade is general-purpose succinct zero knowledge proofs, usually called zk-SNARKs ("zero knowledge succinct arguments of knowledge"). A zk-SNARK allows you to generate a proof that some computation has some particular output, in such a way that the proof can be verified extremely quickly even if the underlying computation takes a very long time to run. The "ZK" part adds an additional feature: the proof can keep some of the inputs to the computation hidden.

You can make a proof for the statement "I know a secret number such that if you take the word ‘cow', add the number to the end, and SHA256 hash it 100 million times, the output starts with 0x57d00485aa". The verifier can verify the proof far more quickly than it would take for them to run 100 million hashes themselves, and the proof would also not reveal what the secret number is.

In the context of blockchains, this has two very powerful applications:

- Scalability: if a block takes a long time to verify, one person can verify it and generate a proof, and everyone else can just quickly verify the proof instead

- Privacy: you can prove that you have the right to transfer some asset (you received it, and you didn't already transfer it) without revealing the link to which asset you received. This ensures security without unduly leaking information about who is transacting with whom to the public.

But zk-SNARKs are quite complex; indeed, as recently as in 2014-17 they were still frequently called "moon math". The good news is that since then, the protocols have become simpler and our understanding of them has become much better. This post will try to explain how ZK-SNARKs work, in a way that should be understandable to someone with a medium level of understanding of mathematics.

Why ZK-SNARKs "should" be hard

Let us take the example that we started with: we have a number (we can encode "cow" followed by the secret input as an integer), we take the SHA256 hash of that number, then we do that again another 99,999,999 times, we get the output, and we check what its starting digits are. This is a huge computation.

A "succinct" proof is one where both the size of the proof and the time required to verify it grow much more slowly than the computation to be verified. If we want a "succinct" proof, we cannot require the verifier to do some work per round of hashing (because then the verification time would be proportional to the computation). Instead, the verifier must somehow check the whole computation without peeking into each individual piece of the computation.

One natural technique is random sampling: how about we just have the verifier peek into the computation in 500 different places, check that those parts are correct, and if all 500 checks pass then assume that the rest of the computation must with high probability be fine, too?

Such a procedure could even be turned into a non-interactive proof using the Fiat-Shamir heuristic: the prover computes a Merkle root of the computation, uses the Merkle root to pseudorandomly choose 500 indices, and provides the 500 corresponding Merkle branches of the data. The key idea is that the prover does not know which branches they will need to reveal until they have already "committed to" the data. If a malicious prover tries to fudge the data after learning which indices are going to be checked, that would change the Merkle root, which would result in a new set of random indices, which would require fudging the data again... trapping the malicious prover in an endless cycle.

But unfortunately there is a fatal flaw in naively applying random sampling to spot-check a computation in this way: computation is inherently fragile. If a malicious prover flips one bit somewhere in the middle of a computation, they can make it give a completely different result, and a random sampling verifier would almost never find out.

It only takes one deliberately inserted error, that a random check would almost never catch, to make a computation give a completely incorrect result.

If tasked with the problem of coming up with a zk-SNARK protocol, many people would make their way to this point and then get stuck and give up. How can a verifier possibly check every single piece of the computation, without looking at each piece of the computation individually? There is a clever solution.

see part 2

(Edited)

More on Web3 & Crypto

Henrique Centieiro

4 years ago

DAO 101: Everything you need to know

Maybe you'll work for a DAO next! Over $1 Billion in NFTs in the Flamingo DAO Another DAO tried to buy the NFL team Denver Broncos. The UkraineDAO raised over $7 Million for Ukraine. The PleasrDAO paid $4m for a Wu-Tang Clan album that belonged to the “pharma bro.”

DAOs move billions and employ thousands. So learn what a DAO is, how it works, and how to create one!

DAO? So, what? Why is it better?

A Decentralized Autonomous Organization (DAO). Some people like to also refer to it as Digital Autonomous Organization, but I prefer the former.

They are virtual organizations. In the real world, you have organizations or companies right? These firms have shareholders and a board. Usually, anyone with authority makes decisions. It could be the CEO, the Board, or the HIPPO. If you own stock in that company, you may also be able to influence decisions. It's now possible to do something similar but much better and more equitable in the cryptocurrency world.

This article informs you:

DAOs- What are the most common DAOs, their advantages and disadvantages over traditional companies? What are they if any?

Is a DAO legally recognized?

How secure is a DAO?

I’m ready whenever you are!

A DAO is a type of company that is operated by smart contracts on the blockchain. Smart contracts are computer code that self-executes our commands. Those contracts can be any. Most second-generation blockchains support smart contracts. Examples are Ethereum, Solana, Polygon, Binance Smart Chain, EOS, etc. I think I've gone off topic. Back on track. Now let's go!

Unlike traditional corporations, DAOs are governed by smart contracts. Unlike traditional company governance, DAO governance is fully transparent and auditable. That's one of the things that sets it apart. The clarity!

A DAO, like a traditional company, has one major difference. In other words, it is decentralized. DAOs are more ‘democratic' than traditional companies because anyone can vote on decisions. Anyone! In a DAO, we (you and I) make the decisions, not the top-shots. We are the CEO and investors. A DAO gives its community members power. We get to decide.

As long as you are a stakeholder, i.e. own a portion of the DAO tokens, you can participate in the DAO. Tokens are open to all. It's just a matter of exchanging it. Ownership of DAO tokens entitles you to exclusive benefits such as governance, voting, and so on. You can vote for a move, a plan, or the DAO's next investment. You can even pitch for funding. Any ‘big' decision in a DAO requires a vote from all stakeholders. In this case, ‘token-holders'! In other words, they function like stock.

What are the 5 DAO types?

Different DAOs exist. We will categorize decentralized autonomous organizations based on their mode of operation, structure, and even technology. Here are a few. You've probably heard of them:

1. DeFi DAO

These DAOs offer DeFi (decentralized financial) services via smart contract protocols. They use tokens to vote protocol and financial changes. Uniswap, Aave, Maker DAO, and Olympus DAO are some examples. Most DAOs manage billions.

Maker DAO was one of the first protocols ever created. It is a decentralized organization on the Ethereum blockchain that allows cryptocurrency lending and borrowing without a middleman.

Maker DAO issues DAI, a stable coin. DAI is a top-rated USD-pegged stable coin.

Maker DAO has an MKR token. These token holders are in charge of adjusting the Dai stable coin policy. Simply put, MKR tokens represent DAO “shares”.

2. Investment DAO

Investors pool their funds and make investment decisions. Investing in new businesses or art is one example. Investment DAOs help DeFi operations pool capital. The Meta Cartel DAO is a community of people who want to invest in new projects built on the Ethereum blockchain. Instead of investing one by one, they want to pool their resources and share ideas on how to make better financial decisions.

Other investment DAOs include the LAO and Friends with Benefits.

3. DAO Grant/Launchpad

In a grant DAO, community members contribute funds to a grant pool and vote on how to allocate and distribute them. These DAOs fund new DeFi projects. Those in need only need to apply. The Moloch DAO is a great Grant DAO. The tokens are used to allocate capital. Also see Gitcoin and Seedify.

4. DAO Collector

I debated whether to put it under ‘Investment DAO' or leave it alone. It's a subset of investment DAOs. This group buys non-fungible tokens, artwork, and collectibles. The market for NFTs has recently exploded, and it's time to investigate. The Pleasr DAO is a collector DAO. One copy of Wu-Tang Clan's "Once Upon a Time in Shaolin" cost the Pleasr DAO $4 million. Pleasr DAO is known for buying Doge meme NFT. Collector DAOs include the Flamingo, Mutant Cats DAO, and Constitution DAOs. Don't underestimate their websites' "childish" style. They have millions.

5. Social DAO

These are social networking and interaction platforms. For example, Decentraland DAO and Friends With Benefits DAO.

What are the DAO Benefits?

Here are some of the benefits of a decentralized autonomous organization:

- They are trustless. You don’t need to trust a CEO or management team

- It can’t be shut down unless a majority of the token holders agree. The government can't shut - It down because it isn't centralized.

- It's fully democratic

- It is open-source and fully transparent.

What about DAO drawbacks?

We've been saying DAOs are the bomb? But are they really the shit? What could go wrong with DAO?

DAOs may contain bugs. If they are hacked, the results can be catastrophic.

No trade secrets exist. Because the smart contract is transparent and coded on the blockchain, it can be copied. It may be used by another organization without credit. Maybe DAOs should use Secret, Oasis, or Horizen blockchain networks.

Are DAOs legally recognized??

In most counties, DAO regulation is inexistent. It's unclear. Most DAOs don’t have a legal personality. The Howey Test and the Securities Act of 1933 determine whether DAO tokens are securities. Although most countries follow the US, this is only considered for the US. Wyoming became the first state to recognize DAOs as legal entities in July 2021 after passing a DAO bill. DAOs registered in Wyoming are thus legally recognized as business entities in the US and thus receive the same legal protections as a Limited Liability Company.

In terms of cyber-security, how secure is a DAO?

Blockchains are secure. However, smart contracts may have security flaws or bugs. This can be avoided by third-party smart contract reviews, testing, and auditing

Finally, Decentralized Autonomous Organizations are timeless. Let us examine the current situation: Ukraine's invasion. A DAO was formed to help Ukrainian troops fighting the Russians. It was named Ukraine DAO. Pleasr DAO, NFT studio Trippy Labs, and Russian art collective Pussy Riot organized this fundraiser. Coindesk reports that over $3 million has been raised in Ethereum-based tokens. AidForUkraine, a DAO aimed at supporting Ukraine's defense efforts, has launched. Accepting Solana token donations. They are fully transparent, uncensorable, and can’t be shut down or sanctioned.

DAOs are undeniably the future of blockchain. Everyone is paying attention. Personally, I believe traditional companies will soon have to choose between adapting or being left behind.

Long version of this post: https://medium.datadriveninvestor.com/dao-101-all-you-need-to-know-about-daos-275060016663

Modern Eremite

3 years ago

The complete, easy-to-understand guide to bitcoin

Introduction

Markets rely on knowledge.

The internet provided practically endless knowledge and wisdom. Humanity has never seen such leverage. Technology's progress drives us to adapt to a changing world, changing our routines and behaviors.

In a digital age, people may struggle to live in the analogue world of their upbringing. Can those who can't adapt change their lives? I won't answer. We should teach those who are willing to learn, nevertheless. Unravel the modern world's riddles and give them wisdom.

Adapt or die . Accept the future or remain behind.

This essay will help you comprehend Bitcoin better than most market participants and the general public. Let's dig into Bitcoin.

Join me.

Ascension

Bitcoin.org was registered in August 2008. Bitcoin whitepaper was published on 31 October 2008. The document intrigued and motivated people around the world, including technical engineers and sovereignty seekers. Since then, Bitcoin's whitepaper has been read and researched to comprehend its essential concept.

I recommend reading the whitepaper yourself. You'll be able to say you read the Bitcoin whitepaper instead of simply Googling "what is Bitcoin" and reading the fundamental definition without knowing the revolution's scope. The article links to Bitcoin's whitepaper. To avoid being overwhelmed by the whitepaper, read the following article first.

Bitcoin isn't the first peer-to-peer digital currency. Hashcash or Bit Gold were once popular cryptocurrencies. These two Bitcoin precursors failed to gain traction and produce the network effect needed for general adoption. After many struggles, Bitcoin emerged as the most successful cryptocurrency, leading the way for others.

Satoshi Nakamoto, an active bitcointalk.org user, created Bitcoin. Satoshi's identity remains unknown. Satoshi's last bitcointalk.org login was 12 December 2010. Since then, he's officially disappeared. Thus, conspiracies and riddles surround Bitcoin's creators. I've heard many various theories, some insane and others well-thought-out.

It's not about who created it; it's about knowing its potential. Since its start, Satoshi's legacy has changed the world and will continue to.

Block-by-block blockchain

Bitcoin is a distributed ledger. What's the meaning?

Everyone can view all blockchain transactions, but no one can undo or delete them.

Imagine you and your friends routinely eat out, but only one pays. You're careful with money and what others owe you. How can everyone access the info without it being changed?

You'll keep a notebook of your evening's transactions. Everyone will take a page home. If one of you changed the page's data, the group would notice and reject it. The majority will establish consensus and offer official facts.

Miners add a new Bitcoin block to the main blockchain every 10 minutes. The appended block contains miner-verified transactions. Now that the next block has been added, the network will receive the next set of user transactions.

Bitcoin Proof of Work—prove you earned it

Any firm needs hardworking personnel to expand and serve clients. Bitcoin isn't that different.

Bitcoin's Proof of Work consensus system needs individuals to validate and create new blocks and check for malicious actors. I'll discuss Bitcoin's blockchain consensus method.

Proof of Work helps Bitcoin reach network consensus. The network is checked and safeguarded by CPU, GPU, or ASIC Bitcoin-mining machines (Application-Specific Integrated Circuit).

Every 10 minutes, miners are rewarded in Bitcoin for securing and verifying the network. It's unlikely you'll finish the block. Miners build pools to increase their chances of winning by combining their processing power.

In the early days of Bitcoin, individual mining systems were more popular due to high maintenance costs and larger earnings prospects. Over time, people created larger and larger Bitcoin mining facilities that required a lot of space and sophisticated cooling systems to keep machines from overheating.

Proof of Work is a vital part of the Bitcoin network, as network security requires the processing power of devices purchased with fiat currency. Miners must invest in mining facilities, which creates a new business branch, mining facilities ownership. Bitcoin mining is a topic for a future article.

More mining, less reward

Bitcoin is usually scarce.

Why is it rare? It all comes down to 21,000,000 Bitcoins.

Were all Bitcoins mined? Nope. Bitcoin's supply grows until it hits 21 million coins. Initially, 50BTC each block was mined, and each block took 10 minutes. Around 2140, the last Bitcoin will be mined.

But 50BTC every 10 minutes does not give me the year 2140. Indeed careful reader. So important is Bitcoin's halving process.

What is halving?

The block reward is halved every 210,000 blocks, which takes around 4 years. The initial payout was 50BTC per block and has been decreased to 25BTC after 210,000 blocks. First halving occurred on November 28, 2012, when 10,500,000 BTC (50%) had been mined. As of April 2022, the block reward is 6.25BTC and will be lowered to 3.125BTC by 19 March 2024.

The halving method is tied to Bitcoin's hashrate. Here's what "hashrate" means.

What if we increased the number of miners and hashrate they provide to produce a block every 10 minutes? Wouldn't we manufacture blocks faster?

Every 10 minutes, blocks are generated with little asymmetry. Due to the built-in adaptive difficulty algorithm, the overall hashrate does not affect block production time. With increased hashrate, it's harder to construct a block. We can estimate when the next halving will occur because 10 minutes per block is fixed.

Building with nodes and blocks

For someone new to crypto, the unusual terms and words may be overwhelming. You'll also find everyday words that are easy to guess or have a vague idea of what they mean, how they work, and what they do. Consider blockchain technology.

Nodes and blocks: Think about that for a moment. What is your first idea?

The blockchain is a chain of validated blocks added to the main chain. What's a "block"? What's inside?

The block is another page in the blockchain book that has been filled with transaction information and accepted by the majority.

We won't go into detail about what each block includes and how it's built, as long as you understand its purpose.

What about nodes?

Nodes, along with miners, verify the blockchain's state independently. But why?

To create a full blockchain node, you must download the whole Bitcoin blockchain and check every transaction against Bitcoin's consensus criteria.

What's Bitcoin's size?

In April 2022, the Bitcoin blockchain was 389.72GB.

Bitcoin's blockchain has miners and node runners.

Let's revisit the US gold rush. Miners mine gold with their own power (physical and monetary resources) and are rewarded with gold (Bitcoin). All become richer with more gold, and so does the country.

Nodes are like sheriffs, ensuring everything is done according to consensus rules and that there are no rogue miners or network users.

Lost and held bitcoin

Does the Bitcoin exchange price match each coin's price? How many coins remain after 21,000,000? 21 million or less?

Common reason suggests a 21 million-coin supply.

What if I lost 1BTC from a cold wallet?

What if I saved 1000BTC on paper in 2010 and it was damaged?

What if I mined Bitcoin in 2010 and lost the keys?

Satoshi Nakamoto's coins? Since then, those coins haven't moved.

How many BTC are truly in circulation?

Many people are trying to answer this question, and you may discover a variety of studies and individual research on the topic. Be cautious of the findings because they can't be evaluated and the statistics are hazy guesses.

On the other hand, we have long-term investors who won't sell their Bitcoin or will sell little amounts to cover mining or living needs.

The price of Bitcoin is determined by supply and demand on exchanges using liquid BTC. How many BTC are left after subtracting lost and non-custodial BTC?

We have significantly less Bitcoin in circulation than you think, thus the price may not reflect demand if we knew the exact quantity of coins available.

True HODLers and diamond-hand investors won't sell you their coins, no matter the market.

What's UTXO?

Unspent (U) Transaction (TX) Output (O)

Imagine taking a $100 bill to a store. After choosing a drink and munchies, you walk to the checkout to pay. The cashier takes your $100 bill and gives you $25.50 in change. It's in your wallet.

Is it simply 100$? No way.

The $25.50 in your wallet is unrelated to the $100 bill you used. Your wallet's $25.50 is just bills and coins. Your wallet may contain these coins and bills:

2x 10$ 1x 10$

1x 5$ or 3x 5$

1x 0.50$ 2x 0.25$

Any combination of coins and bills can equal $25.50. You don't care, and I'd wager you've never ever considered it.

That is UTXO. Now, I'll detail the Bitcoin blockchain and how UTXO works, as it's crucial to know what coins you have in your (hopefully) cold wallet.

You purchased 1BTC. Is it all? No. UTXOs equal 1BTC. Then send BTC to a cold wallet. Say you pay 0.001BTC and send 0.999BTC to your cold wallet. Is it the 1BTC you got before? Well, yes and no. The UTXOs are the same or comparable as before, but the blockchain address has changed. It's like if you handed someone a wallet, they removed the coins needed for a network charge, then returned the rest of the coins and notes.

UTXO is a simple concept, but it's crucial to grasp how it works to comprehend dangers like dust attacks and how coins may be tracked.

Lightning Network: fast cash

You've probably heard of "Layer 2 blockchain" projects.

What does it mean?

Layer 2 on a blockchain is an additional layer that increases the speed and quantity of transactions per minute and reduces transaction fees.

Imagine going to an obsolete bank to transfer money to another account and having to pay a charge and wait. You can transfer funds via your bank account or a mobile app without paying a fee, or the fee is low, and the cash appear nearly quickly. Layer 1 and 2 payment systems are different.

Layer 1 is not obsolete; it merely has more essential things to focus on, including providing the blockchain with new, validated blocks, whereas Layer 2 solutions strive to offer Layer 1 with previously processed and verified transactions. The primary blockchain, Bitcoin, will only receive the wallets' final state. All channel transactions until shutting and balancing are irrelevant to the main chain.

Layer 2 and the Lightning Network's goal are now clear. Most Layer 2 solutions on multiple blockchains are created as blockchains, however Lightning Network is not. Remember the following remark, as it best describes Lightning.

Lightning Network connects public and private Bitcoin wallets.

Opening a private channel with another wallet notifies just two parties. The creation and opening of a public channel tells the network that anyone can use it.

Why create a public Lightning Network channel?

Every transaction through your channel generates fees.

Money, if you don't know.

See who benefits when in doubt.

Anonymity, huh?

Bitcoin anonymity? Bitcoin's anonymity was utilized to launder money.

Well… You've heard similar stories. When you ask why or how it permits people to remain anonymous, the conversation ends as if it were just a story someone heard.

Bitcoin isn't private. Pseudonymous.

What if someone tracks your transactions and discovers your wallet address? Where is your anonymity then?

Bitcoin is like bulletproof glass storage; you can't take or change the money. If you dig and analyze the data, you can see what's inside.

Every online action leaves a trace, and traces may be tracked. People often forget this guideline.

A tool like that can help you observe what the major players, or whales, are doing with their coins when the market is uncertain. Many people spend time analyzing on-chain data. Worth it?

Ask yourself a question. What are the big players' options? Do you think they're letting you see their wallets for a small on-chain data fee?

Instead of short-term behaviors, focus on long-term trends.

More wallet transactions leave traces. Having nothing to conceal isn't a defect. Can it lead to regulating Bitcoin so every transaction is tracked like in banks today?

But wait. How can criminals pay out Bitcoin? They're doing it, aren't they?

Mixers can anonymize your coins, letting you to utilize them freely. This is not a guide on how to make your coins anonymous; it could do more harm than good if you don't know what you're doing.

Remember, being anonymous attracts greater attention.

Bitcoin isn't the only cryptocurrency we can use to buy things. Using cryptocurrency appropriately can provide usability and anonymity. Monero (XMR), Zcash (ZEC), and Litecoin (LTC) following the Mimblewimble upgrade are examples.

Summary

Congratulations! You've reached the conclusion of the article and learned about Bitcoin and cryptocurrency. You've entered the future.

You know what Bitcoin is, how its blockchain works, and why it's not anonymous. I bet you can explain Lightning Network and UTXO to your buddies.

Markets rely on knowledge. Prepare yourself for success before taking the first step. Let your expertise be your edge.

This article is a summary of this one.

Yusuf Ibrahim

4 years ago

How to sell 10,000 NFTs on OpenSea for FREE (Puppeteer/NodeJS)

So you've finished your NFT collection and are ready to sell it. Except you can't figure out how to mint them! Not sure about smart contracts or want to avoid rising gas prices. You've tried and failed with apps like Mini mouse macro, and you're not familiar with Selenium/Python. Worry no more, NodeJS and Puppeteer have arrived!

Learn how to automatically post and sell all 1000 of my AI-generated word NFTs (Nakahana) on OpenSea for FREE!

My NFT project — Nakahana |

NOTE: Only NFTs on the Polygon blockchain can be sold for free; Ethereum requires an initiation charge. NFTs can still be bought with (wrapped) ETH.

If you want to go right into the code, here's the GitHub link: https://github.com/Yusu-f/nftuploader

Let's start with the knowledge and tools you'll need.

What you should know

You must be able to write and run simple NodeJS programs. You must also know how to utilize a Metamask wallet.

Tools needed

- NodeJS. You'll need NodeJs to run the script and NPM to install the dependencies.

- Puppeteer – Use Puppeteer to automate your browser and go to sleep while your computer works.

- Metamask – Create a crypto wallet and sign transactions using Metamask (free). You may learn how to utilize Metamask here.

- Chrome – Puppeteer supports Chrome.

Let's get started now!

Starting Out

Clone Github Repo to your local machine. Make sure that NodeJS, Chrome, and Metamask are all installed and working. Navigate to the project folder and execute npm install. This installs all requirements.

Replace the “extension path” variable with the Metamask chrome extension path. Read this tutorial to find the path.

Substitute an array containing your NFT names and metadata for the “arr” variable and the “collection_name” variable with your collection’s name.

Run the script.

After that, run node nftuploader.js.

Open a new chrome instance (not chromium) and Metamask in it. Import your Opensea wallet using your Secret Recovery Phrase or create a new one and link it. The script will be unable to continue after this but don’t worry, it’s all part of the plan.

Next steps

Open your terminal again and copy the route that starts with “ws”, e.g. “ws:/localhost:53634/devtools/browser/c07cb303-c84d-430d-af06-dd599cf2a94f”. Replace the path in the connect function of the nftuploader.js script.

const browser = await puppeteer.connect({ browserWSEndpoint: "ws://localhost:58533/devtools/browser/d09307b4-7a75-40f6-8dff-07a71bfff9b3", defaultViewport: null });

Rerun node nftuploader.js. A second tab should open in THE SAME chrome instance, navigating to your Opensea collection. Your NFTs should now start uploading one after the other! If any errors occur, the NFTs and errors are logged in an errors.log file.

Error Handling

The errors.log file should show the name of the NFTs and the error type. The script has been changed to allow you to simply check if an NFT has already been posted. Simply set the “searchBeforeUpload” setting to true.

We're done!

If you liked it, you can buy one of my NFTs! If you have any concerns or would need a feature added, please let me know.

Thank you to everyone who has read and liked. I never expected it to be so popular.

You might also like

Will Lockett

3 years ago

The world will be changed by this molten salt battery.

Four times the energy density and a fraction of lithium-cost ion's

As the globe abandons fossil fuels, batteries become more important. EVs, solar, wind, tidal, wave, and even local energy grids will use them. We need a battery revolution since our present batteries are big, expensive, and detrimental to the environment. A recent publication describes a battery that solves these problems. But will it be enough?

Sodium-sulfur molten salt battery. It has existed for a long time and uses molten salt as an electrolyte (read more about molten salt batteries here). These batteries are cheaper, safer, and more environmentally friendly because they use less eco-damaging materials, are non-toxic, and are non-flammable.

Previous molten salt batteries used aluminium-sulphur chemistries, which had a low energy density and required high temperatures to keep the salt liquid. This one uses a revolutionary sodium-sulphur chemistry and a room-temperature-melting salt, making it more useful, affordable, and eco-friendly. To investigate this, researchers constructed a button-cell prototype and tested it.

First, the battery was 1,017 mAh/g. This battery is four times as energy dense as high-density lithium-ion batteries (250 mAh/g).

No one knows how much this battery would cost. A more expensive molten-salt battery costs $15 per kWh. Current lithium-ion batteries cost $132/kWh. If this new molten salt battery costs the same as present cells, it will be 90% cheaper.

This room-temperature molten salt battery could be utilized in an EV. Cold-weather heaters just need a modest backup battery.

The ultimate EV battery? If used in a Tesla Model S, you could install four times the capacity with no weight gain, offering a 1,620-mile range. This huge battery pack would cost less than Tesla's. This battery would nearly perfect EVs.

Or would it?

The battery's capacity declined by 50% after 1,000 charge cycles. This means that our hypothetical Model S would suffer this decline after 1.6 million miles, but for more cheap vehicles that use smaller packs, this would be too short. This test cell wasn't supposed to last long, so this is shocking. Future versions of this cell could be modified to live longer.

This affordable and eco-friendly cell is best employed as a grid-storage battery for renewable energy. Its safety and affordable price outweigh its short lifespan. Because this battery is made of easily accessible materials, it may be utilized to boost grid-storage capacity without causing supply chain concerns or EV battery prices to skyrocket.

Researchers are designing a bigger pouch cell (like those in phones and laptops) for this purpose. The battery revolution we need could be near. Let’s just hope it isn’t too late.

Hector de Isidro

3 years ago

Why can't you speak English fluently even though you understand it?

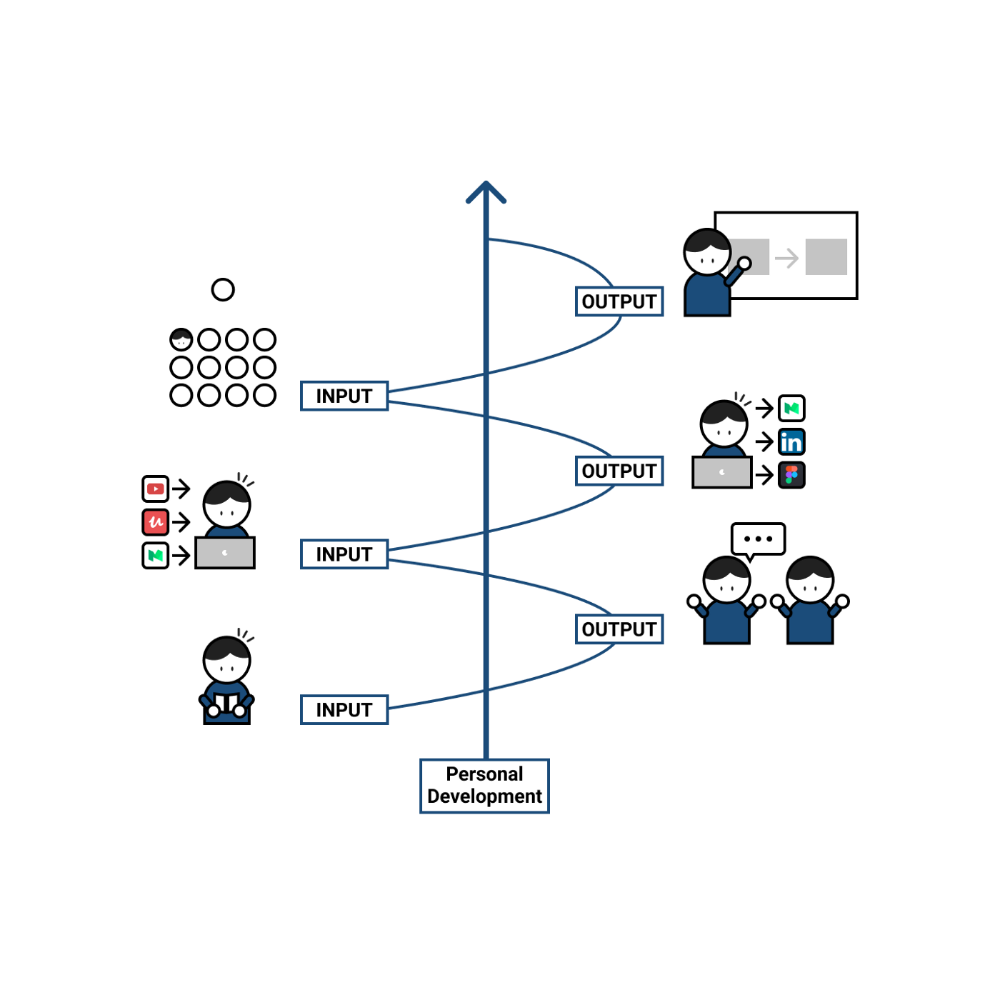

Many of us have struggled for years to master a second language (in my case, English). Because (at least in my situation) we've always used an input-based system or method.

I'll explain in detail, but briefly: We can understand some conversations or sentences (since we've trained), but we can't give sophisticated answers or speak fluently (because we have NOT trained at all).

What exactly is input-based learning?

Reading, listening, writing, and speaking are key language abilities (if you look closely at that list, it seems that people tend to order them in this way: inadvertently giving more priority to the first ones than to the last ones).

These talents fall under two learning styles:

Reading and listening are input-based activities (sometimes referred to as receptive skills or passive learning).

Writing and speaking are output-based tasks (also known as the productive skills and/or active learning).

What's the best learning style? To learn a language, we must master four interconnected skills. The difficulty is how much time and effort we give each.

According to Shion Kabasawa's books The Power of Input: How to Maximize Learning and The Power of Output: How to Change Learning to Outcome (available only in Japanese), we spend 7:3 more time on Input Based skills than Output Based skills when we should be doing the opposite, leaning more towards Output (Input: Output->3:7).

I can't tell you how he got those numbers, but I think he's not far off because, for example, think of how many people say they're learning a second language and are satisfied bragging about it by only watching TV, series, or movies in VO (and/or reading a book or whatever) their Input is: 7:0 output!

You can't be good at a sport by watching TikTok videos about it; you must play.

“being pushed to produce language puts learners in a better position to notice the ‘gaps’ in their language knowledge”, encouraging them to ‘upgrade’ their existing interlanguage system. And, as they are pushed to produce language in real time and thereby forced to automate low-level operations by incorporating them into higher-level routines, it may also contribute to the development of fluency. — Scott Thornbury (P is for Push)

How may I practice output-based learning more?

I know that listening or reading is easy and convenient because we can do it on our own in a wide range of situations, even during another activity (although, as you know, it's not ideal), writing can be tedious/boring (it's funny that we almost always excuse ourselves in the lack of ideas), and speaking requires an interlocutor. But we must leave our comfort zone and modify our thinking to go from 3:7 to 7:3. (or at least balance it better to something closer). Gradually.

“You don’t have to do a lot every day, but you have to do something. Something. Every day.” — Callie Oettinger (Do this every day)

We can practice speaking like boxers shadow box.

Speaking out loud strengthens the mind-mouth link (otherwise, you will still speak fluently in your mind but you will choke when speaking out loud). This doesn't mean we should talk to ourselves on the way to work, while strolling, or on public transportation. We should try to do it without disturbing others, such as explaining what we've heard, read, or seen (the list is endless: you can TALK about what happened yesterday, your bedtime book, stories you heard at the office, that new kitten video you saw on Instagram, an experience you had, some new fact, that new boring episode you watched on Netflix, what you ate, what you're going to do next, your upcoming vacation, what’s trending, the news of the day)

Who will correct my grammar, vocabulary, or pronunciation with an imagined friend? We can't have everything, but tools and services can help [1].

Lack of bravery

Fear of speaking a language different than one's mother tongue in front of native speakers is global. It's easier said than done, because strangers, not your friends, will always make fun of your accent or faults. Accept it and try again. Karma will prevail.

Perfectionism is a trap. Stop self-sabotaging. Communication is key (and for that you have to practice the Output too ).

“Don’t forget to have fun and enjoy the process.” — Ruri Ohama

[1] Grammarly, Deepl, Google Translate, etc.

Thomas Huault

3 years ago

A Mean Reversion Trading Indicator Inspired by Classical Mechanics Is The Kinetic Detrender

DATA MINING WITH SUPERALGORES

Old pots produce the best soup.

Science has always inspired indicator design. From physics to signal processing, many indicators use concepts from mechanical engineering, electronics, and probability. In Superalgos' Data Mining section, we've explored using thermodynamics and information theory to construct indicators and using statistical and probabilistic techniques like reduced normal law to take advantage of low probability events.

An asset's price is like a mechanical object revolving around its moving average. Using this approach, we could design an indicator using the oscillator's Total Energy. An oscillator's energy is finite and constant. Since we don't expect the price to follow the harmonic oscillator, this energy should deviate from the perfect situation, and the maximum of divergence may provide us valuable information on the price's moving average.

Definition of the Harmonic Oscillator in Few Words

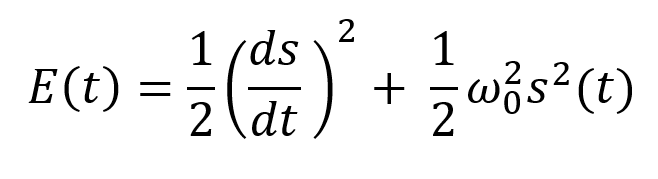

Sinusoidal function describes a harmonic oscillator. The time-constant energy equation for a harmonic oscillator is:

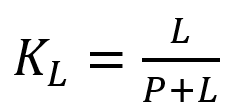

With

Time saves energy.

In a mechanical harmonic oscillator, total energy equals kinetic energy plus potential energy. The formula for energy is the same for every kind of harmonic oscillator; only the terms of total energy must be adapted to fit the relevant units. Each oscillator has a velocity component (kinetic energy) and a position to equilibrium component (potential energy).

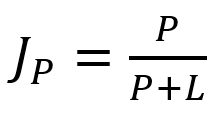

The Price Oscillator and the Energy Formula

Considering the harmonic oscillator definition, we must specify kinetic and potential components for our price oscillator. We define oscillator velocity as the rate of change and equilibrium position as the price's distance from its moving average.

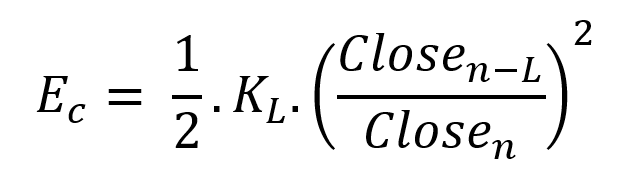

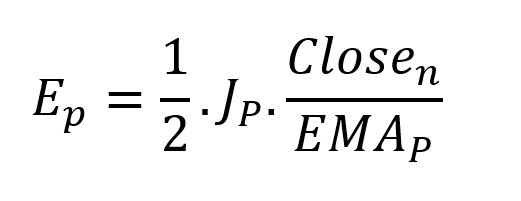

Price kinetic energy:

It's like:

With

and

L is the number of periods for the rate of change calculation and P for the close price EMA calculation.

Total price oscillator energy =

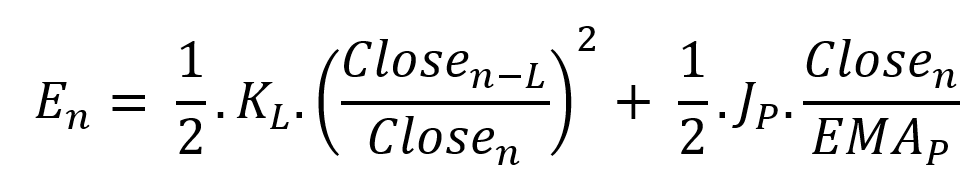

Given that an asset's price can theoretically vary at a limitless speed and be endlessly far from its moving average, we don't expect this formula's outcome to be constrained. We'll normalize it using Z-Score for convenience of usage and readability, which also allows probabilistic interpretation.

Over 20 periods, we'll calculate E's moving average and standard deviation.

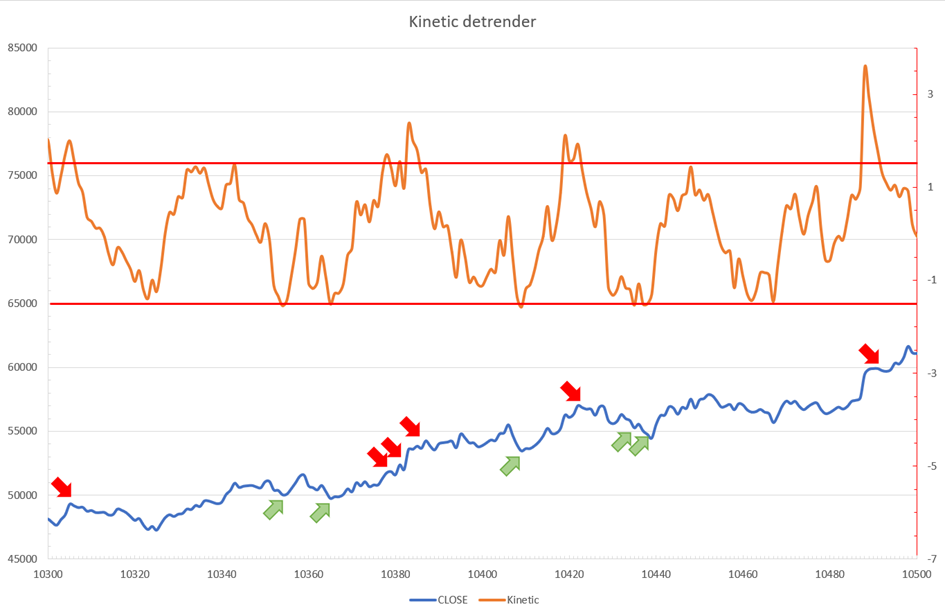

We calculated Z on BTC/USDT with L = 10 and P = 21 using Knime Analytics.

The graph is detrended. We added two horizontal lines at +/- 1.6 to construct a 94.5% probability zone based on reduced normal law tables. Price cycles to its moving average oscillate clearly. Red and green arrows illustrate where the oscillator crosses the top and lower limits, corresponding to the maximum/minimum price oscillation. Since the results seem noisy, we may apply a non-lagging low-pass or multipole filter like Butterworth or Laguerre filters and employ dynamic bands at a multiple of Z's standard deviation instead of fixed levels.

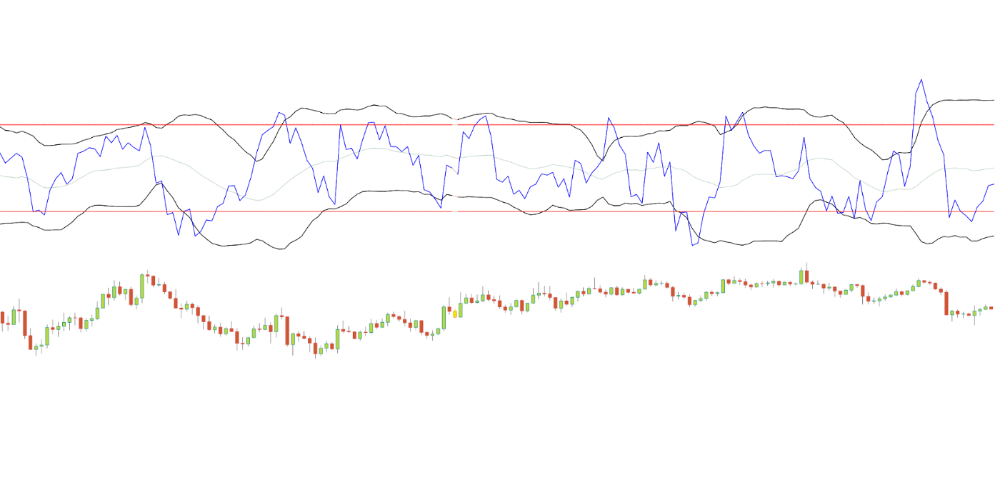

Kinetic Detrender Implementation in Superalgos

The Superalgos Kinetic detrender features fixed upper and lower levels and dynamic volatility bands.

The code is pretty basic and does not require a huge amount of code lines.

It starts with the standard definitions of the candle pointer and the constant declaration :

let candle = record.current

let len = 10

let P = 21

let T = 20

let up = 1.6

let low = 1.6Upper and lower dynamic volatility band constants are up and low.

We proceed to the initialization of the previous value for EMA :

if (variable.prevEMA === undefined) {

variable.prevEMA = candle.close

}And the calculation of EMA with a function (it is worth noticing the function is declared at the end of the code snippet in Superalgos) :

variable.ema = calculateEMA(P, candle.close, variable.prevEMA)

//EMA calculation

function calculateEMA(periods, price, previousEMA) {

let k = 2 / (periods + 1)

return price * k + previousEMA * (1 - k)

}The rate of change is calculated by first storing the right amount of close price values and proceeding to the calculation by dividing the current close price by the first member of the close price array:

variable.allClose.push(candle.close)

if (variable.allClose.length > len) {

variable.allClose.splice(0, 1)

}

if (variable.allClose.length === len) {

variable.roc = candle.close / variable.allClose[0]

} else {

variable.roc = 1

}Finally, we get energy with a single line:

variable.E = 1 / 2 * len * variable.roc + 1 / 2 * P * candle.close / variable.emaThe Z calculation reuses code from Z-Normalization-based indicators:

variable.allE.push(variable.E)

if (variable.allE.length > T) {

variable.allE.splice(0, 1)

}

variable.sum = 0

variable.SQ = 0

if (variable.allE.length === T) {

for (var i = 0; i < T; i++) {

variable.sum += variable.allE[i]

}

variable.MA = variable.sum / T

for (var i = 0; i < T; i++) {

variable.SQ += Math.pow(variable.allE[i] - variable.MA, 2)

}

variable.sigma = Math.sqrt(variable.SQ / T)

variable.Z = (variable.E - variable.MA) / variable.sigma

} else {

variable.Z = 0

}

variable.allZ.push(variable.Z)

if (variable.allZ.length > T) {

variable.allZ.splice(0, 1)

}

variable.sum = 0

variable.SQ = 0

if (variable.allZ.length === T) {

for (var i = 0; i < T; i++) {

variable.sum += variable.allZ[i]

}

variable.MAZ = variable.sum / T

for (var i = 0; i < T; i++) {

variable.SQ += Math.pow(variable.allZ[i] - variable.MAZ, 2)

}

variable.sigZ = Math.sqrt(variable.SQ / T)

} else {

variable.MAZ = variable.Z

variable.sigZ = variable.MAZ * 0.02

}

variable.upper = variable.MAZ + up * variable.sigZ

variable.lower = variable.MAZ - low * variable.sigZWe also update the EMA value.

variable.prevEMA = variable.EMA

Conclusion

We showed how to build a detrended oscillator using simple harmonic oscillator theory. Kinetic detrender's main line oscillates between 2 fixed levels framing 95% of the values and 2 dynamic levels, leading to auto-adaptive mean reversion zones.

Superalgos' Normalized Momentum data mine has the Kinetic detrender indication.

All the material here can be reused and integrated freely by linking to this article and Superalgos.

This post is informative and not financial advice. Seek expert counsel before trading. Risk using this material.