More on Entrepreneurship/Creators

Antonio Neto

3 years ago

Should you skip the minimum viable product?

Are MVPs outdated, with no place in modern product culture?

Frank Robinson coined "MVP" in 2001. In the same year as the Agile Manifesto, the first Scrum experiment began. MVPs are old.

The concept was created to solve the waterfall problem at the time.

The market was still sour from the .com bubble. The tech industry needed a new approach. Product and Agile gained popularity because they weren't waterfall.

More than 20 years later, waterfall is dead as dead can be, but we are still talking about MVPs. Does that make sense?

What is an MVP?

Minimum viable product. You probably know that, so I'll be brief:

[…] The MVP fits your company and customer. It's big enough to cause adoption, satisfaction, and sales, but not bloated and risky. It's the product with the highest ROI/risk. […] — Frank Robinson, SyncDev

MVP is a complete product. It's not a prototype. It's your product's first iteration, which you'll improve. It must drive sales and be user-friendly.

At the MVP stage, you should know your product's core value, audience, and price. We are way deep into early adoption territory.

What about all the things that come before?

Modern product discovery

Eric Ries popularized the term with The Lean Startup in 2011. (Ries had been working with the concept since 2008, but wide adoption came after the book was released.)

Ries' definition of MVP was similar to Robinson's: "Test the market" before releasing anything. Unlike Robinson, Ries never mentioned money. His MVP's goal was learning.

“Remove any feature, process, or effort that doesn't directly contribute to learning” — Eric Ries, The Lean Startup

Product has since become more about "what" to build than building it. What started as a learning tool is now a discovery discipline: fake doors, prototyping, lean inception, value proposition canvas, continuous interview, opportunity tree... These are cheap, effective learning tools.

Over time, companies realized that "maximum ROI divided by risk" started with discovery, not the MVP. MVPs are still considered discovery tools. What is the problem with that?

Time to Market vs Product Market Fit

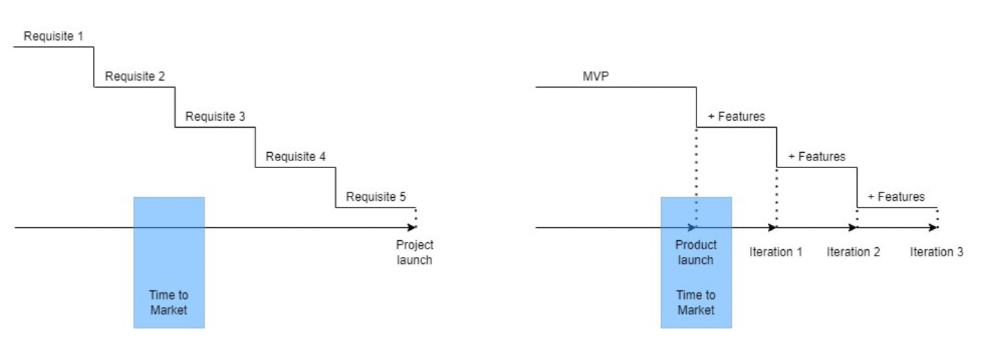

Waterfall's Time to Market is its biggest flaw. Since projects are sliced horizontally rather than vertically, when there is nothing else to be done, it’s not because the product is ready, it’s because no one cares to buy it anymore.

MVPs were originally conceived as a way to cut corners and speed Time to Market by delivering more customer requests after they paid.

Original product development was waterfall-like.

Time to Market defines an optimal, specific window in which value should be delivered. It's impossible to predict how long or how often this window will be open.

Product Market Fit makes this window a "state." You don’t achieve Product Market Fit, you have it… and you may lose it.

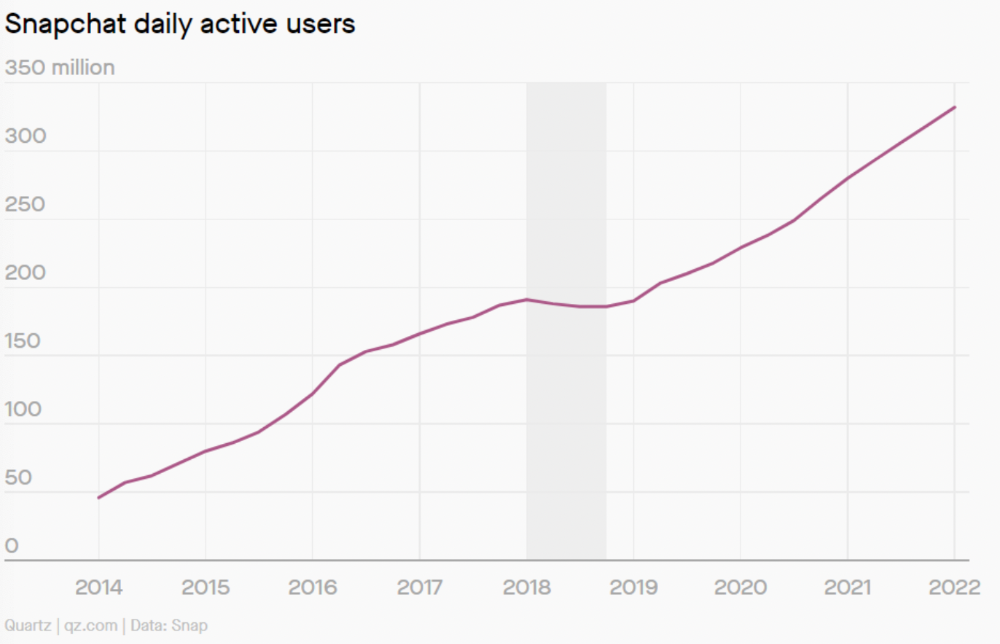

Take, for example, Snapchat. They had a great time to market, but lost product-market fit later. They regained product-market fit in 2018 and have grown since.

An MVP couldn't handle this. What should Snapchat do? Launch Snapchat 2 and see what the market was expecting differently from the last time? MVPs are a snapshot in time that may be wrong in two weeks.

MVPs are mini-projects. Instead of spending a lot of time and money on waterfall, you spend less but are still unsure of the results.

MVPs aren't always wrong. When releasing your first product version, consider an MVP.

Minimum viable product became less of a thing on its own and more interchangeable with Alpha Release or V.1 release over time.

Modern discovery techniques are more assertive and predictable than the MVP, but clarity comes only when you reach the market.

MVPs aren't the starting point, but they're the best way to validate your product concept.

Tim Denning

3 years ago

Elon Musk’s Rich Life Is a Nightmare

I'm sure you haven't read about Elon's other side.

Elon divorced badly.

Nobody's surprised.

Imagine you're a parent. Someone isn't home year-round. What's next?

That’s what happened to YOLO Elon.

He can do anything. He can intervene in wars, shoot his mouth off, bang anyone he wants, avoid tax, make cool tech, buy anything his ego desires, and live anywhere exotic.

Few know his billionaire backstory. I'll tell you so you don't worship his lifestyle. It’s a cult.

Only his career succeeds. His life is a nightmare otherwise.

Psychopaths' schedule

Elon has said he works 120-hour weeks.

As he told the reporter about his job, he choked up, which was unusual for him.

His crazy workload and lack of sleep forced him to scold innocent Wall Street analysts. Later, he apologized.

In the same interview, he admits he hadn't taken more than a week off since 2001, when he was bedridden with malaria. Elon stays home after a near-death experience.

He's rarely outside.

Elon says he sometimes works 3 or 4 days straight.

He admits his crazy work schedule has cost him time with his kids and friends.

Elon's a slave

Elon's birthday description made him emotional.

Elon worked his entire birthday.

"No friends, nothing," he said, stuttering.

His brother's wedding in Catalonia was 48 hours after his birthday. That meant flying there from Tesla's factory prison.

He arrived two hours before the big moment, barely enough time to eat and change, let alone see his brother.

Elon had to leave after the bouquet was tossed to a crowd of billionaire lovers. He missed his brother's first dance with his wife.

Shocking.

He went straight to Tesla's prison.

The looming health crisis

Elon was asked if overworking affected his health.

Not great. Friends are worried.

Now you know why Elon tweets dumb things. Working so hard has probably caused him mental health issues.

Mental illness removed my reality filter. You do stupid things because you're tired.

Astronauts pelted Elon

Elon's overwork isn't the first time his life has made him emotional.

When asked about Neil Armstrong and Gene Cernan criticizing his SpaceX missions, he got emotional. They are Elon's heroes.

They're why he started the company, and they mocked his work. In another interview, we see how Elon’s business obsession has knifed him in the heart.

Once you have a company, you must feed, nurse, and care for it, even if it destroys you.

"Yep," Elon says, tearing up.

In the same interview, he's asked how Tesla survived the 2008 recession. Elon stopped the interview because he was crying. When Tesla and SpaceX came close to bankruptcy in 2008, he nearly had a nervous breakdown. He called them his "children."

All the time, he's risking everything.

Jack Raines explains best:

Too much money makes you a slave to your net worth.

Elon's emotions are admirable. It's one of the few times he seems human, not like an alien Cyborg.

Stop idealizing Elon's lifestyle

Building a side business that becomes a billion-dollar unicorn startup is a nightmare.

"Billionaire" means financially wealthy but otherwise broke. A rich life includes more than business and money.

This post is a summary. Read full article here

Antonio Neto

3 years ago

What's up with tech?

Massive Layoffs, record low VC investment, debate over crash... why is it happening and what’s the endgame?

This article generalizes a diverse industry. For objectivity, specific tech company challenges like growing competition within named segments won't be considered. Please comment on the posts.

According to Layoffs.fyi, nearly 120,000 people have been fired from startups since March 2020. More than 700 startups have fired 1% to 100% of their workforce. "The tech market is crashing."

Venture capital investment dropped 19% QoQ in the first four months of 2022, a 2018 low. Since January 2022, Nasdaq has dropped 27%. Some believe the tech market is collapsing.

It's bad, but nothing has crashed yet. We're about to get super technical, so buckle up!

I've written a follow-up article about what's next. For a more optimistic view of the crisis' aftermath, see: Tech Diaspora and Silicon Valley crisis

What happened?

Insanity reigned. Last decade, everyone became a unicorn. Seed investments can be made without a product or team. While the "real world" economy suffered from the pandemic for three years, tech companies enjoyed the "new normal."

COVID sped up technology adoption on several fronts, but this "new normal" wasn't so new after many restrictions were lifted. Worse, it lived with disrupted logistics chains, high oil prices, and WW3. The consumer market has felt the industry's boom for almost 3 years. Inflation, unemployment, mental distress...what looked like a fast economic recovery now looks like unfulfilled promises.

People rethink everything they eat. Paying a Netflix subscription instead of buying beef is moronic if you can watch it for free on your cousin’s account. No matter how great your real estate app's UI is, buying a house can wait until mortgage rates drop. Product Led Growth (PLG) isn't the go-to strategy when consumers have more basic expense priorities.

Exponential growth and investment

Until recently, tech companies believed that non-exponential revenue growth was fatal. Exponential growth entails doing more with less. In Salim Ismail's words:

An Exponential Organization (ExO) has 10x the impact of its peers.

Many tech companies' theories are far from reality.

Investors have funded (sometimes non-exponential) growth. Scale-driven companies throw people at problems until they're solved. Need an entire closing team because you’ve just bought a prime-time TV ad? Sure. Want gold-weight engineers to colorize buttons? Why not?

Tech companies don't need cash flow to do it; they can just show revenue growth and get funding. Even though it's hard to get funding, this was the market's momentum until recently.

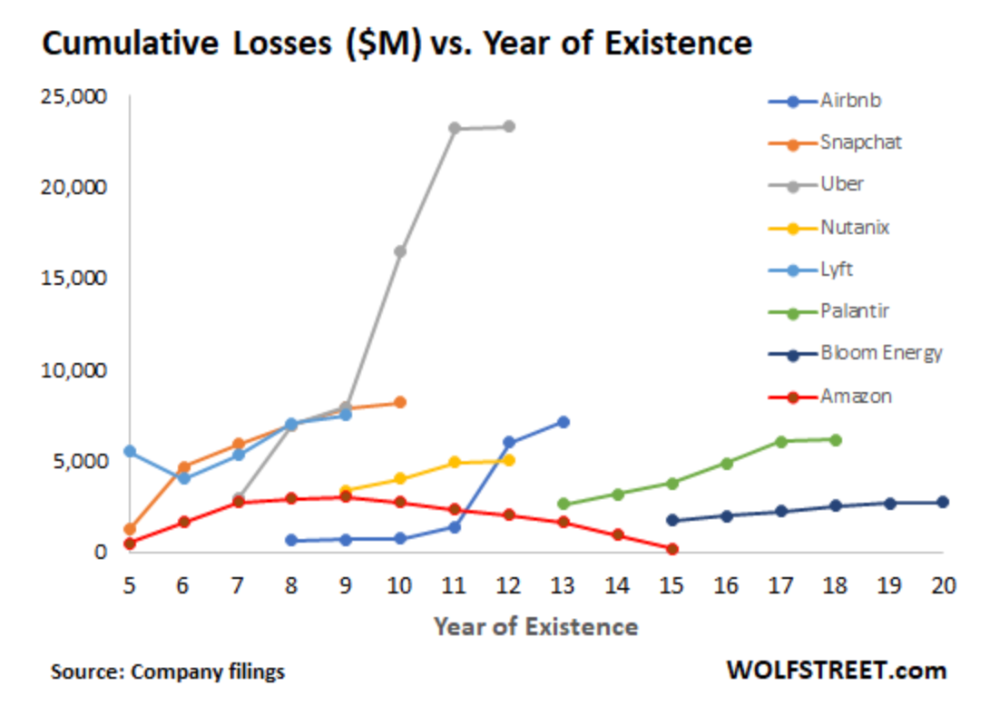

The graph at the beginning of this section shows how industry heavyweights burned money until 2020, despite being far from their market-share seed stage. Being big and being sturdy are different things, and a lot of the tech startups out there are paper tigers. Without investor money, they have no foundation.

A little bit about interest rates

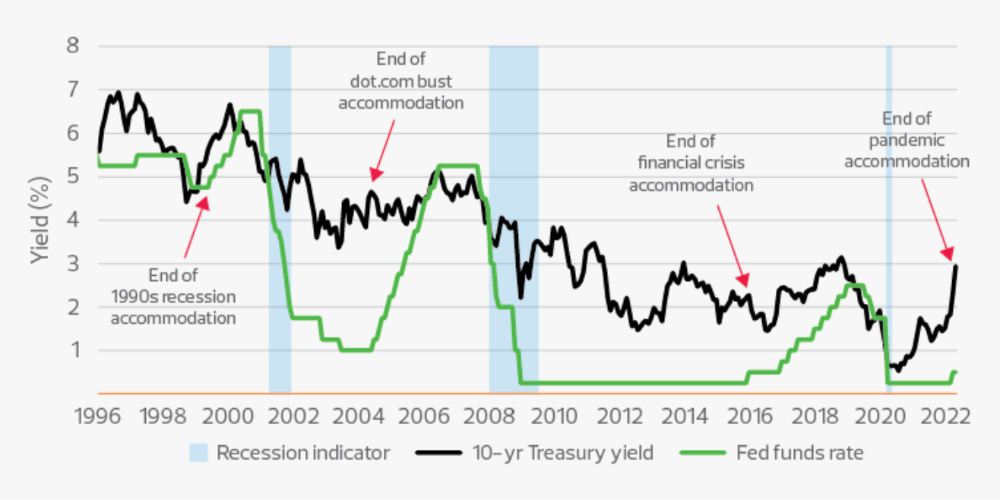

Inflation-driven high interest rates are said to be causing tough times. Investors would rather leave money in the bank than spend it (I myself said it some days ago). It’s not wrong, but it’s also not that simple.

The U.S. central bank (the Fed) is a good proxy for global economics. Dollar treasury bonds are the safest investment in the world. Buying the debt of the U.S., the only country that can print dollars, all but guarantees payment.

The graph above shows that Fed interest rates are low and 10+ year bond yields are near 2018 levels. Nobody was firing in 2018. What’s with that then?

The full explanation is too technical for this article, so I'll just summarize: bond yields rise due to lack of demand or market expectations of longer-lasting inflation. Safe assets aren't an "easy money" tactic for investors. If they were, we'd have seen the current scenario before.

Long-term investors are protecting their capital from inflation.

Not a crash, a landing

I bombarded you with info... Let's review:

Consumption is down, hurting revenue.

Tech companies of all ages have been hiring to grow revenue at the expense of profit.

Investors expect inflation to last longer, reducing future investment gains.

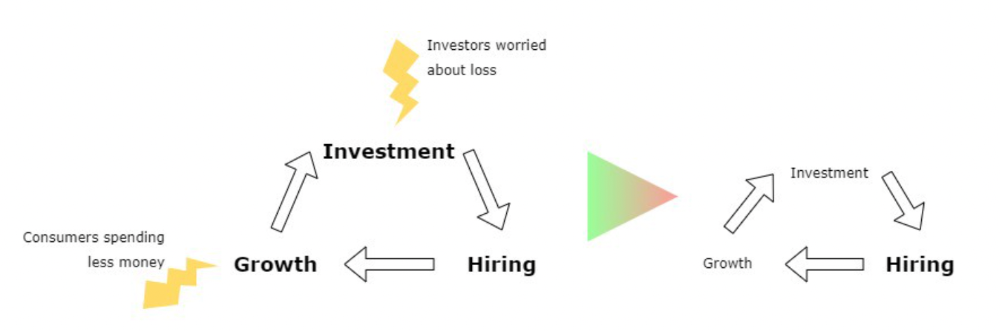

Inflation puts pressure on a wheel that was rolling full speed not long ago. Investment spurs hiring, growth, and more investment. Worried investors and consumers reduce the cycle, and hiring follows.

Long-term investors back startups. When the invested company goes public or is sold, it's ok to burn money. What happens when the payoff gets further away? What if all that money sinks? Investors want immediate returns.

Why isn't the market crashing? Technology is not losing capital. It’s expecting change. The market realizes it threw moderation out the window and is reversing course. Profitability is back on the menu.

People solve problems and make money, but they also cost money. Huge cost for the tech industry. Engineers, Product Managers, and Designers earn up to 100% more than similar roles. Businesses must be careful about who they keep and in what positions to avoid wasting money.

What the future holds

From here on, it's all speculation. I found many great articles while researching this piece. Some are cited, others aren't (like this and this). We're in an adjustment period that may or may not last long.

Big companies aren't laying off many workers. Netflix firing 100 people makes headlines, but it's only 1% of their workforce. The biggest seem to prefer not hiring over firing.

Smaller startups beyond the seeding stage may be hardest hit. Without structure or product maturity, many will die.

I expect layoffs to continue for some time, even at Meta or Amazon. I don't see any industry names falling like they did during the .com crisis, but the market will shrink.

If you are currently employed, think twice before moving out and where to.

If you've been fired, hurry, there are still many opportunities.

If you're considering a tech career, wait.

If you're starting a business, I respect you. Good luck.

You might also like

Pen Magnet

3 years ago

Why Google Staff Doesn't Work

Sundar Pichai unveiled Simplicity Sprint at Google's latest all-hands conference.

To boost employee efficiency.

Not surprising. Few envisioned Google declaring a productivity drive.

Sundar Pichai's speech:

“There are real concerns that our productivity as a whole is not where it needs to be for the head count we have. Help me create a culture that is more mission-focused, more focused on our products, more customer focused. We should think about how we can minimize distractions and really raise the bar on both product excellence and productivity.”

The primary driver of Google's efficiency push:

Google's efficiency push follows a 13% quarterly revenue increase. In the same quarter last year, it was 62%.

Market newcomers may argue that the previous year's figure was fuelled by post-Covid reopening and growing consumer spending. Investors aren't convinced. A promising company like Google can't afford to drop so quickly.

Google’s quarterly revenue growth stood at 13%, against 62% in the same quarter last year.

Google isn't alone. In my recent essay regarding 2025 programmers, I warned about the economic downturn's effects on FAAMG's workforce. Facebook had suspended hiring, and Microsoft had promised hefty bonuses for loyal staff.

In the same article, I predicted Google's troubles. Online advertising, especially the way Google and Facebook sell it using user data, is over.

FAAMG and 2nd rung IT companies could be the first to fall without Post-COVID revival and uncertain global geopolitics.

Google has hardly ever discussed effectiveness, at least not openly.

Amazon treats its employees like robots, even in software positions. It has significant turnover and a terrible reputation as a result. Because of this, it rarely loses money due to staff productivity.

Amazon trumps Google. In reality, it treats its employees poorly.

Google was the founding father of the modern-day open culture.

At Google, Larry and Sergey founded the IT industry's open culture. Silicon Valley called Google's internal democracy and transparency near anarchy. Management rarely slammed decisions on employees. Surveys and internal polls ensured everyone knew the company's direction and had a vote.

20% project allotment (weekly free time to build own project) was Google's open-secret innovation component.

After Larry and Sergey's exit in 2019, this is Google's first profitability hurdle. Only Google insiders can answer these questions.

Would Google's investors compel the company's management to adopt an Amazon-style culture where the developers are treated like circus performers?

If so, would Google follow suit?

If so, how does Google go about doing it?

Before discussing Google's likely plan, let's examine programming productivity.

What determines a programmer's productivity is simple:

How would we answer Google's questions?

As a programmer, I'm more concerned about Simplicity Sprint's aftermath than its economic catalysts.

Large organizations don't care much about quarterly and annual productivity metrics. They have 10-year product-launch plans. If something seems horrible today, it's likely due to someone's lousy judgment 5 years ago who is no longer in the blame game.

Deconstruct our main question.

How exactly do you change the culture of the firm so that productivity increases?

How can you accomplish that without affecting your capacity to profit? There are countless ways to increase output without decreasing profit.

How can you accomplish this with little to no effect on employee motivation? (While not all employers care about it, in this case we are discussing the father of the open company culture.)

How do you do it for a 10-developer IT firm that is losing money versus a 170,000-developer organization with a trillion-dollar valuation?

When implementing a large-scale organizational change, success must be carefully measured.

The fastest way to do something is to do it right, no matter how long it takes.

You require clearly defined group/team/role segregation and solid pass/fail metrics so that:

Performers can be rewarded.

Average ones can be inspired to improve.

Underachievers can receive assistance or, in the worst-case scenario, rehabilitation.

As a 20-year programmer, I associate productivity with greatness.

Doing something well, no matter how long it takes, is the fastest way to do it.

Let's discuss a programmer's productivity.

Why productivity is a strange term in programming:

Productivity is work per unit of time.

Time = money. This is an economic proverb. More hours worked, more pay. Longer projects cost more.

As a buyer, you desire a quick supply. As a business owner, you want employees who perform at full capacity, creating more products to transport and boosting your profits.

All economic metrics encourage production because of our obsession with it. Productivity is the only organic way a nation may increase its GDP.

"Time is money" is not just a proverb, but an economic fact.

Applying the same productivity theory to programming gets problematic. A computer is an automating machine. Its capacity depends on the software its master writes.

Today, a sophisticated program can process a billion records in a few hours. Creating one takes a competent coder and the necessary infrastructure. Learning, designing, coding, testing, and iterations take time.

Programming productivity isn't linear, unlike manufacturing and maintenance.

Average programmers produce code every day yet miss deadlines. Expert programmers go days without coding. End of sprint, they often surprise themselves by delivering fully working solutions.

Reversing the programming duties has no effect. Experts aren't needed for productivity.

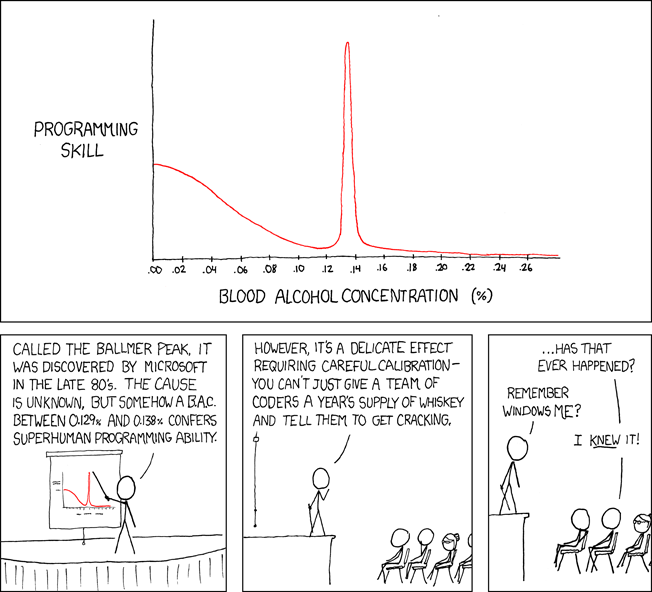

These patterns remind me of an XKCD comic.

Programming productivity depends on two factors:

The capacity of the programmer and his or her command of the principles of computer science

His or her productive bursts, how often they occur, and how long they last as they engineer the answer

At some point, productivity measurement becomes Schrödinger’s cat.

Product companies measure productivity using use cases, classes, functions, or LOCs (lines of code). In days of data-rich source control systems, programmers' merge requests and/or commits are the most preferred yardstick. Companies assess productivity by tickets closed.

Every organization eventually has trouble measuring productivity. Finer measurements create more chaos. Every measure compares apples to oranges (or worse, apples with aircraft.) On top of the measuring overhead, the endeavor causes tremendous and unnecessary stress on teams, lowering their productivity and defeating its purpose.

Macro productivity measurements make sense. Amazon's factory-era management has done it, but at great cost.

Google can pull it off if it wants to.

What Google meant in reality when it said that employee productivity has decreased:

When Google considers its employees unproductive, it doesn't mean they don't complete enough work in the allotted period.

They can't multiply their work's influence over time.

Programmers who produce excellent modules or products are unsure how to use them.

The best data scientists are unable to add the proper parameters in their models.

Despite having a great product backlog, managers struggle to recruit resources with the necessary skills.

Product designers who frequently develop and A/B test newer designs are unaware of why measures are inaccurate or whether they have already reached the saturation point.

Most ignorant: All of the aforementioned positions are aware of what to do with their deliverables, but neither their supervisors nor Google itself have given them sufficient authority.

So, Google employees aren't productive.

How to fix it?

Business analysis: White suits introducing novel items can interact with customers from all regions. Track analytics events proactively, especially the infrequent ones.

SOLID, DRY, TEST, and AUTOMATION: Do less + reuse. Use boilerplate code creation. If something already exists, don't implement it yourself.

Build features-building capabilities: N features are created by average programmers in N hours. An endless number of features can be built by average programmers thanks to the fact that expert programmers can produce 1 capability in N hours.

Work on projects that will have a positive impact: Use the same algorithm to search for images on YouTube rather than the Mars surface.

Avoid tasks that can only be measured in terms of time linearity at all costs (if a task can be completed in N minutes, then M copies of the same task would cost M*N minutes).

In conclusion:

Software development isn't linear. Why should the makers be measured?

Big O notation

I'm discussing a new way to quantify programmer productivity. (It applies to other professions, but that's another subject)

Big O notation expresses the paradigm (it is the algorithmic performance concept programmers learn by rote to ace their Google interview).

Google (or any large corporation) can do this.

Sort organizational roles into categories and specify their impact vs. time objectives. A CXO role's time vs. effect function, for instance, has a complexity of O(log N): the result grows only with the logarithm of the time invested, so 8 units of a CEO's work time yield roughly 3 units of result (log2 8 = 3).

Plot each employee's time on the X axis and influence on the Y axis.

Add a multiplier for Y-axis values to the productivity equation to make business objectives matter. (Example values: Support = 5, Utility = 7, and Innovation = 10).

Compare employee scores in comparable categories (developers vs. developers, CXOs vs. CXOs, etc.) and reward or help employees based on whether they are ahead of or behind the pack.

After measuring every employee's inventiveness, it's straightforward to help underachievers and praise achievers.
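The four steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the author's actual method: the employee names, hours, and the helper names `impact_score` and `compare_to_peers` are hypothetical. Only the multipliers (Support = 5, Utility = 7, Innovation = 10), the O(log N) curve for CXOs, and the O(N) curve for testers come from the text.

```python
import math
from statistics import mean

# Time-vs-impact curve per role category.
# O(log N) for CXOs and O(N) for testers follow the text; base 2 is an assumption.
IMPACT_CURVES = {
    "cxo": lambda hours: math.log2(hours),
    "tester": lambda hours: hours,
}

# Business-objective multipliers for Y-axis values (example values from the text).
MULTIPLIERS = {"support": 5, "utility": 7, "innovation": 10}

# Hypothetical sample data, for illustration only.
EMPLOYEES = [
    {"name": "tester_a", "role": "tester", "hours": 40, "objective": "utility"},
    {"name": "tester_b", "role": "tester", "hours": 40, "objective": "innovation"},
    {"name": "cxo_a", "role": "cxo", "hours": 8, "objective": "innovation"},
    {"name": "cxo_b", "role": "cxo", "hours": 64, "objective": "innovation"},
]


def impact_score(role, hours, objective):
    """Impact (Y) for time worked (X), weighted by the business objective."""
    return IMPACT_CURVES[role](hours) * MULTIPLIERS[objective]


def compare_to_peers(employees):
    """Return each employee's score minus the mean score of their own role category."""
    scores = {
        e["name"]: impact_score(e["role"], e["hours"], e["objective"])
        for e in employees
    }
    by_role = {}
    for e in employees:
        by_role.setdefault(e["role"], []).append(scores[e["name"]])
    return {
        e["name"]: scores[e["name"]] - mean(by_role[e["role"]])
        for e in employees
    }
```

A positive value from `compare_to_peers(EMPLOYEES)` means the employee is ahead of the pack in their category, a negative one means behind. Because scores are only compared within a category, a CXO's logarithmic curve is never held against a tester's linear one.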

Example of a Big(O) Category:

If I ran Google (God forbid, its worst days are far off), here's how I'd classify it. You can categorize Google employees whichever way you choose.

The Google interview truth:

O(1) < O(log n) < O(n) < O(n log n) < O(n^x) where all logarithmic bases are < n.
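To make the ordering concrete, a small sketch can evaluate each growth function at one sample size. The sample size n = 1024, the exponent x = 2, the base-2 logarithm, and the helper name `growth_table` are assumptions picked for illustration; they are not part of the original classification.

```python
import math


def growth_table(n, x=2):
    """Evaluate the common complexity classes at input size n."""
    return [
        ("O(1)", 1),
        ("O(log n)", math.log2(n)),
        ("O(n)", n),
        ("O(n log n)", n * math.log2(n)),
        (f"O(n^{x})", n ** x),
    ]


# Print one row per complexity class for a sample input size.
for label, value in growth_table(1024):
    print(f"{label:<12}{value:>12,.0f}")
```

Read as time vs. impact, the further right a role sits in the ordering, the more each extra hour of work is amplified.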

O(1): Customer service workers' hours have no impact on firm profitability or customer pleasure.

CXOs: Most of their time is spent on travel, strategic meetings, parties, and/or meetings with minimal floor-level influence. They're good at launching new products but bad at pivoting without disaster. Their directions are being followed.

DevOps, UX designers, testers: Agile projects revolve around deployment. DevOps controls the levers. Their automation secures results in subsequent cycles.

UX/UI Designers must still prototype UI elements despite improved design tools.

All test cases are proportional to use cases/functional units, hence testers' work is O(N).

Architects: Their effort improves code quality. Their right/wrong interference affects product quality and rollout decisions even after the design is set.

Core developers: Only core developers can write code and own requirements. When people understand and own their labor, the output improves dramatically. A single character error can spread undetected throughout the SDLC and cost millions.

Core devs introduce/eliminate 1000x bugs, refactoring attempts, and regression. Following our earlier hypothesis.

The fastest way to do something is to do it right, no matter how long it takes.

Conclusion:

Google is at the liberal extreme of the employee-handling spectrum

Microsoft faced an existential crisis after 2000. It didn't choose Amazon's data-driven people management to revitalize itself.

Instead, it trusted its developers. It welcomed emerging technologies and opened up to open source, something it previously opposed.

Google is too lax in its employee-handling practices. With that foundation, it can only follow Amazon, no matter how carefully.

Any attempt to redefine people's measurements will affect the organization emotionally.

The more Google compares apples to apples, the higher its chances for future rebirth.

Simon Egersand

3 years ago

Working from home for more than two years has taught me a lot.

Since the pandemic, I've worked from home. It’s been +2 years (wow, time flies!) now, and during this time I’ve learned a lot. My 4 remote work lessons.

I work in a remote distributed team. This team setting shaped my experience and teachings.

Isolation ("I miss my coworkers")

The most obvious point. I miss going out with my coworkers for coffee, weekend chats, or just company while I work. I miss being able to go to someone's desk and ask for help. In a remote world, I must organize a meeting, share my screen, and avoid talking over each other in Zoom - sigh!

Social interaction is more vital for my health than I believed.

Online socializing stinks

My company used to come together every Friday to play Exploding Kittens, have food and beer, and bond over non-work things.

Different today. Every Friday afternoon is for fun, but it's not the same. People with screen weariness miss meetings, which makes sense. Sometimes you're too busy on Slack to enjoy yourself.

We laugh in meetings, but it's not the same as face-to-face.

Digital social activities can't replace real-world ones

Improved Work-Life Balance, if You Let It

At the outset of the pandemic, I recognized I needed to take better care of myself to survive. After not leaving my apartment for a few days and feeling miserable, I decided to walk before work every day. This turned into a passion for exercise, and today I run or go to the gym before work. I use my commute time for healthful activities.

Working from home makes it easier to keep working after hours. I sometimes forget the time and find myself writing code at dinnertime, telling myself, "One more test." This is a disadvantage, so I keep my office schedule.

Spend your commute time properly and keep to your office schedule.

Remote Pair Programming Is Hard

As a software developer, I regularly write code. My team sometimes uses pair programming to write code collaboratively. One person writes code while another watches, comments, and asks questions. It has many benefits; I won't list them all here.

Internet pairing is difficult. My team struggles with this. Even with Tuple, it's challenging. I lose attention when I get a notification or check my computer.

I miss a pen and paper to rapidly sketch down my thoughts for a colleague or a whiteboard for spirited talks with others. Best answers are found through experience.

Real-life pair programming beats the best remote pair programming tools.

Lessons Learned

Here are 4 lessons I've learned working remotely for 2 years.

- Socializing is more vital to my health than I anticipated.

- Digital social activities can't replace in-person ones.

- Spend your commute time properly and keep your office schedule.

- Real-life pair programming beats the best remote tools.

Conclusion

Our era is fascinating. Remote labor has existed for years, but software companies have just recently had to adapt. Companies who don't offer remote work will lose talent, in my opinion.

We've been figuring out the finest software development approaches, programming language features, and communication methods since the 1960s, and we still are. I can't wait to see what advancements help us move further into remote work.

I'll certainly work remotely in the next years, so I'm interested to see what I've learnt from this post then.

This post is a summary of this one.

Jamie Ducharme

3 years ago

How monkeypox spreads (and doesn't spread)

Monkeypox was rare until recently. In 2005, a research report called a cluster of six monkeypox cases in the Republic of Congo "the longest reported chain to date."

That's changed. This year, over 25,000 monkeypox cases have been reported in 83 countries, indicating widespread human-to-human transmission.

What spreads monkeypox? Monkeypox transmission research is ongoing; findings may change. But science says...

Most cases were formerly animal-related.

According to the WHO, monkeypox was first diagnosed in an infant in the DRC in 1970. After that, cases were infrequent and often tied to animals. In 2003, 47 Americans contracted monkeypox from pet prairie dogs.

In 2017, Nigeria saw a significant outbreak. NPR reported that doctors diagnosed young men without animal exposure who had genital sores. Nigerian researchers raised the idea of sexual transmission in a 2019 study, but the theory didn't catch on. “People tend to cling on to tradition, and the idea is that monkeypox is transmitted from animals to humans,” explains study co-author Dr. Dimie Ogoina.

Most monkeypox cases are sex-related.

Human-to-human transmission of monkeypox occurs, and sexual activity plays a role.

Joseph Osmundson, a clinical assistant professor of biology at NYU, says most transmission occurs in queer and gay sexual networks through sexual or personal contact.

Monkeypox spreads by skin-to-skin contact, especially with its blister-like rash, explains Ogoina. Researchers are exploring whether people can be asymptomatically contagious, but they are infectious until their rash heals and fresh skin forms, according to the CDC.

A July study in the New England Journal of Medicine reported that of more than 500 monkeypox cases in 16 countries as of June, 95% were linked to sexual activity and 98% were among men who have sex with men. In July, WHO Director-General Tedros Adhanom Ghebreyesus encouraged men to temporarily restrict their number of male partners.

Is monkeypox a sexually transmitted infection (STI)?

Any skin-to-skin contact can spread monkeypox, not only sexual activity. Dr. Roy Gulick, infectious disease chief at Weill Cornell Medicine and NewYork-Presbyterian, said monkeypox is not a "typical" STI. Monkeypox isn't an STI, according to the CDC.

Most cases in the current outbreak are tied to male sexual behavior, but Osmundson thinks the virus might also spread on sports teams, in spas, or in college dorms.

Can you get monkeypox from surfaces?

Monkeypox can be spread by touching infected clothing or bedding. According to a study, a U.K. health care worker caught monkeypox in 2018 after handling an ill patient's bedding.

Angela Rasmussen, a virologist at the University of Saskatchewan in Canada, believes "incidental" contact seldom spreads the virus. “You need enough virus exposure to get infected,” she says. It's conceivable after sharing a bed or towel with an infectious person, but less likely after touching a doorknob, she says.

Dr. Müge Çevik, a clinical lecturer in infectious diseases at the University of St. Andrews in Scotland, says there is a "spectrum" of risk connected with monkeypox. "Every exposure isn't equal," she explains. "People must know where to be cautious. Reducing [sexual] partners may be more useful than cleaning coffee shop seats."

Is monkeypox airborne?

Exposure to an infectious person's respiratory fluids can cause monkeypox, but the WHO says it needs close, continuous face-to-face contact. CDC researchers are still examining how often this happens.

Under precise laboratory conditions, scientists have shown that monkeypox can spread via aerosols, or tiny airborne particles. But there's no clear evidence that this is happening in the real world, Rasmussen adds. “This is expanding predominantly in communities of men who have sex with men, which suggests skin-to-skin contact,” she explains. If airborne transmission were frequent, she argues, we'd find more cases in other demographics.

In the shadow of COVID-19, people are worried about aerosolized monkeypox. Rasmussen believes the epidemiology is different. Different viruses.

Can kids get monkeypox?

More than 80 youngsters have contracted the virus thus far, mainly through household transmission. CDC says pregnant women can spread the illness to their fetus.

In the 1970s, monkeypox predominantly affected children, but by the 2010s, it was more common in adults, according to a February study. The study's authors say routine smallpox immunization (which protects against monkeypox) halted when smallpox was eradicated. At first, only young children had been born after vaccination stopped; decades later, more people are vulnerable.

Pediatric cases suggest schools and daycares could become monkeypox hotspots. Ogoina adds this hasn't happened in Nigeria's outbreaks, which is encouraging. He says, "I'm not sure if we should worry." We must be careful and seek evidence.