More on Marketing

Jano le Roux

3 years ago

Here's What I Learned After 30 Days Analyzing Apple's Microcopy

Move people with tiny words.

Apple fanboy here.

Macs are awesome.

Their iPhones rock.

$19 cloths are great.

$999 stands are amazing.

I love Apple's microcopy even more.

It's like the marketing goddess bit into the Apple logo and blessed the world with microcopy.

I took on a 30-day micro-stalking mission.

Every time I caught myself wasting time on YouTube, I had to visit Apple’s website to learn the secrets of the marketing goddess herself.

We've learned. Golden apples are calling.

Cut the friction

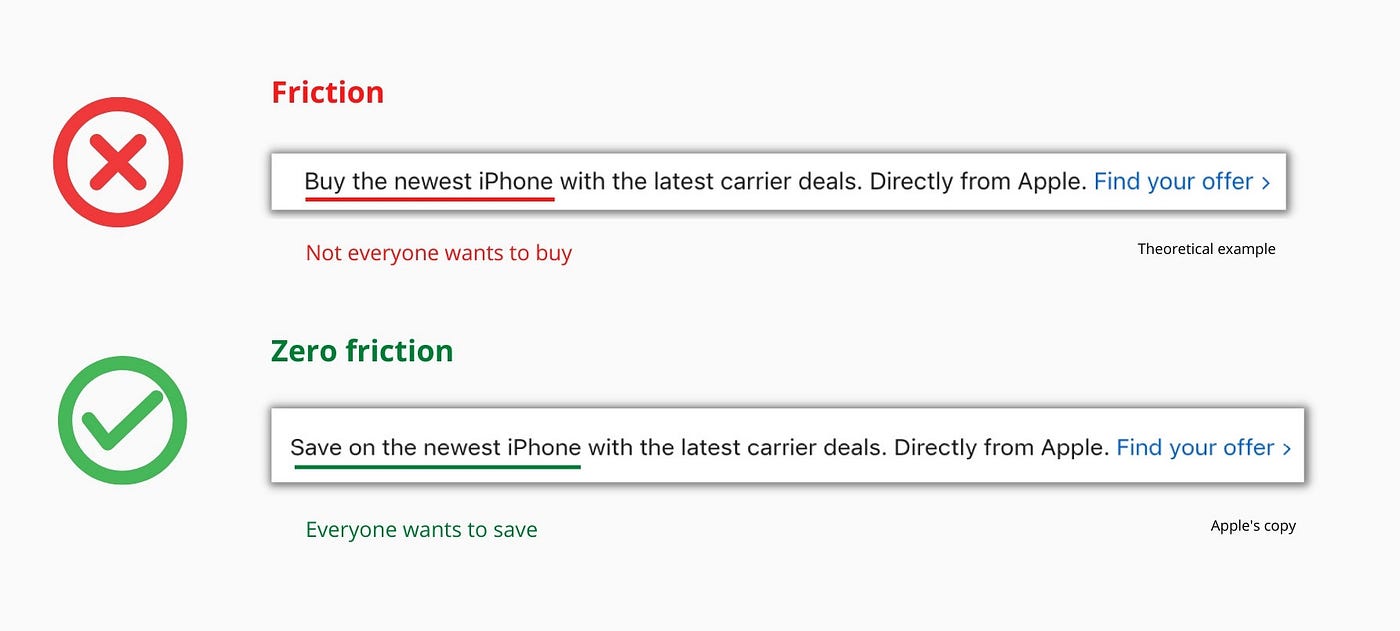

Benefit-first, not commitment-first.

Brands lose customers through friction.

Most brands don't think like customers.

Brands want sales.

Brands want newsletter signups.

Here's their microcopy:

“Buy it now.”

“Sign up for our newsletter.”

Both are difficult. They ask for big commitments.

People are simple creatures. They want pleasure without commitment.

Apple nails this.

So, instead of highlighting the commitment, they highlight the benefit of the commitment.

Saving on the latest iPhone sounds easier than buying it. Everyone saves, but not everyone buys.

A subtle change in framing reduces friction.

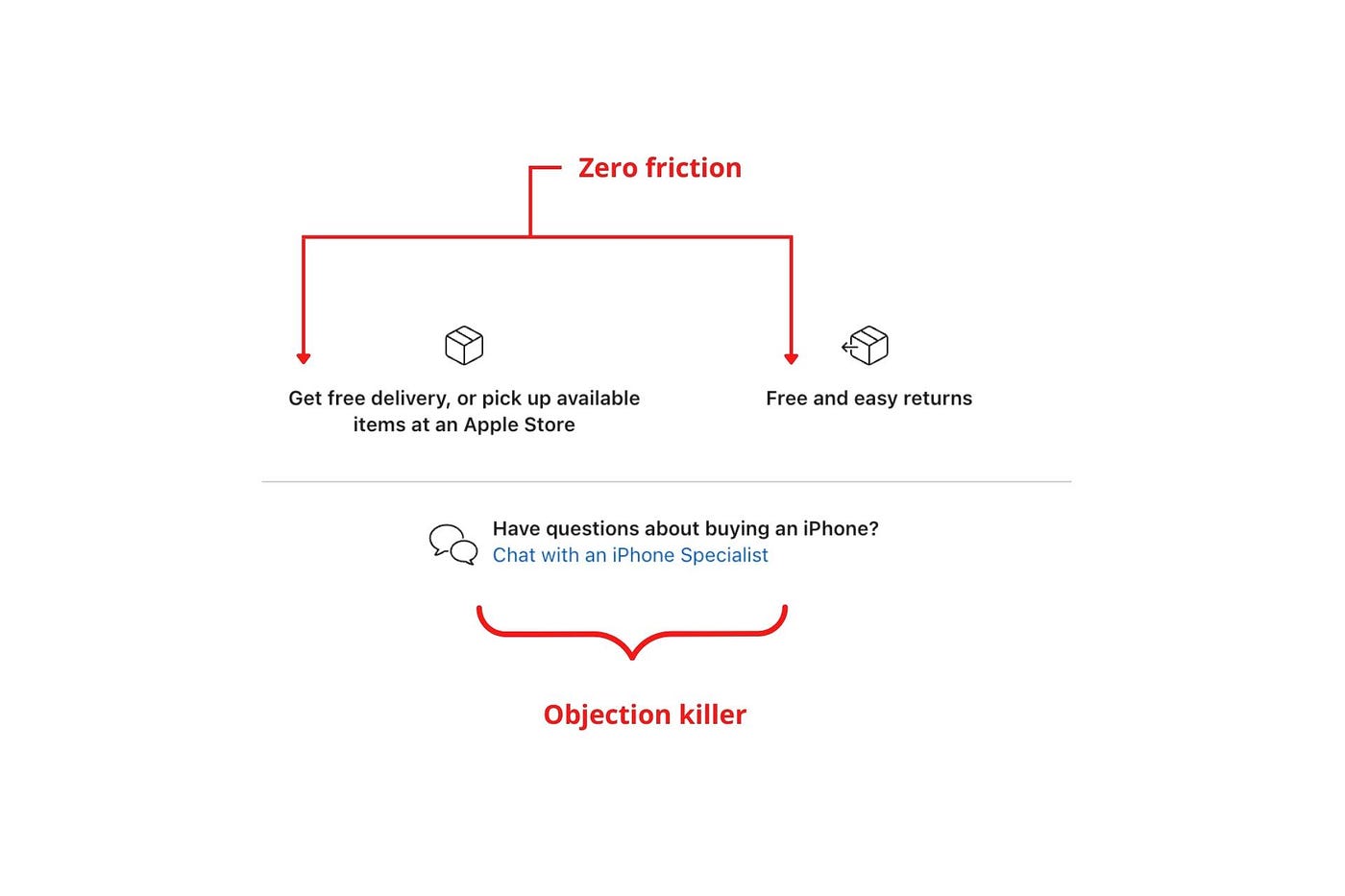

Apple eliminates customer objections to reduce friction.

Less customer friction means simpler processes.

Apple's copy expertly reassures customers about shipping fees and not being home. Apple assures customers that returning faulty products is easy.

Apple knows that talking to a real person is the best way to reduce friction and improve their copy.

Always rhyme

Learn about fine rhyme.

Poets make things beautiful with rhyme.

Copywriters use rhyme to stand out.

Apple’s copywriters have mastered the art of corporate rhyme.

Two techniques are used.

1. Perfect rhyme

Here, rhymes are identical.

2. Imperfect rhyme

Here, rhyming sounds vary.

Apple prioritizes meaning over rhyme.

Apple never forces rhymes that don't fit.

It fits so well that the copy seems accidental.

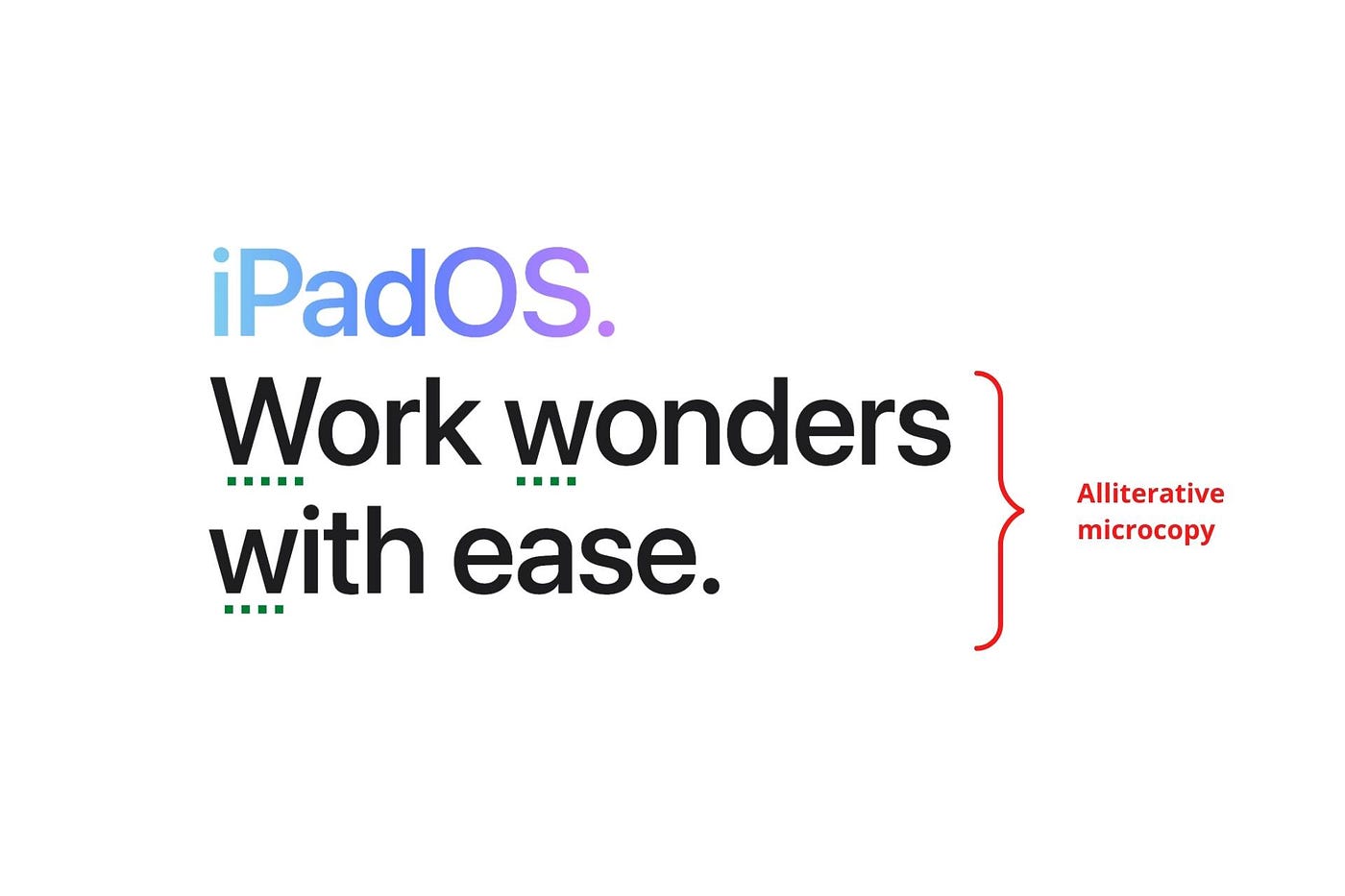

Add alliteration

Alliteration always entertains.

Alliteration repeats initial sounds in nearby words.

Apple's copy uses alliteration like no other brand I've seen to create a rhyming effect or make the text more fun to read.

For example, in the sentence "Sam saw seven swans swimming," the initial "s" sound is repeated five times. This creates a pleasing rhythm.

Overusing alliteration in microcopy is like pouring ketchup on a Michelin-star meal.

Alliteration creates a memorable phrase in copywriting. It's subtler than rhyme, and most people wouldn't notice; it simply resonates.

I love how Apple uses alliteration and contrast between "wonders" and "ease".

Assonance, or repeating vowels, isn't Apple's thing.

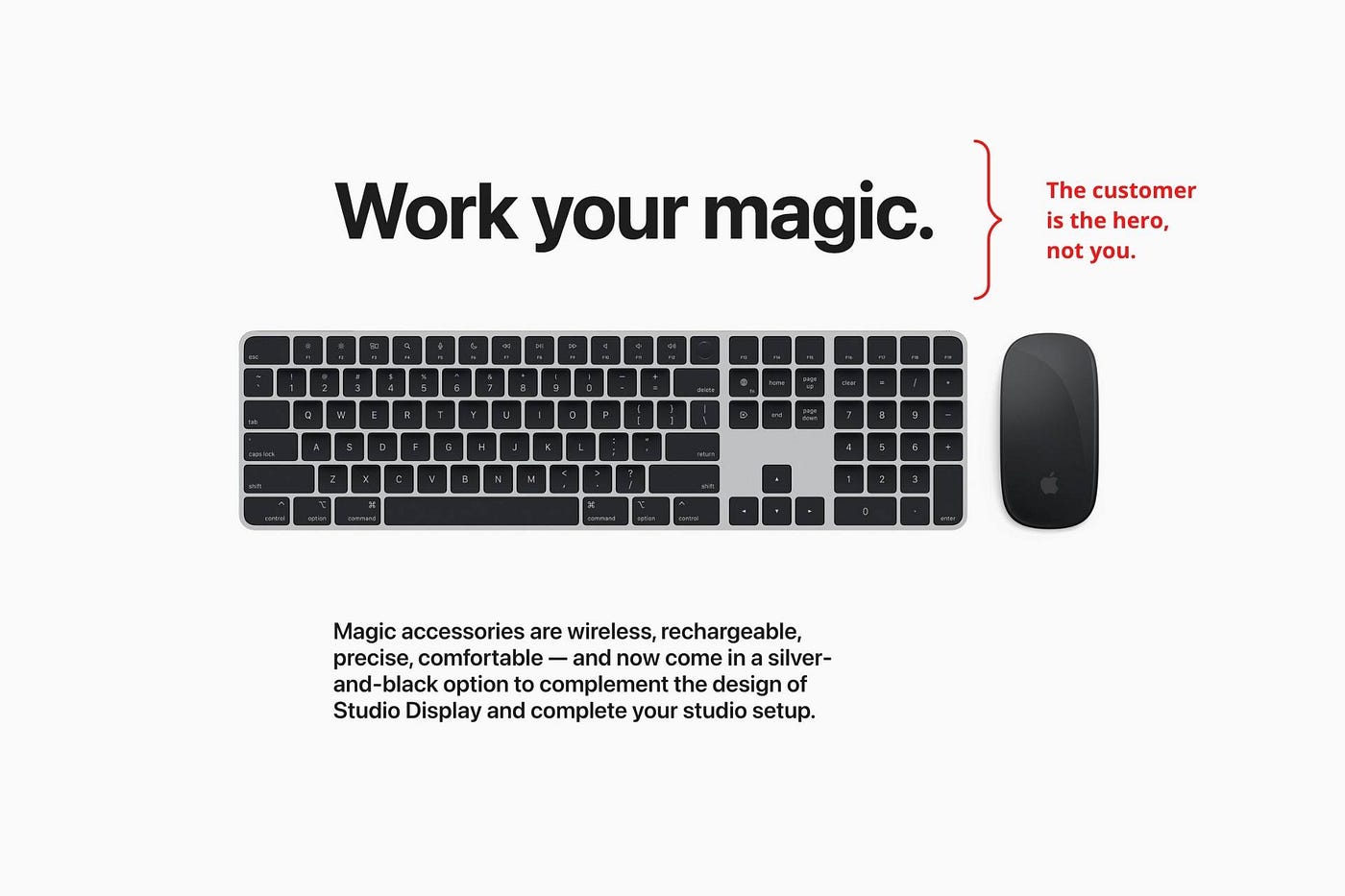

You ≠ Hero, Customer = Hero

Your brand shouldn't be the hero.

Because they'll be using your product or service, your customer should be the hero of your copywriting. With your help, they should feel like they can achieve their goals.

I love how Apple emphasizes what you can do with the machine in this microcopy.

It's divine how they position their tools as sidekicks to help below.

This one takes the cake:

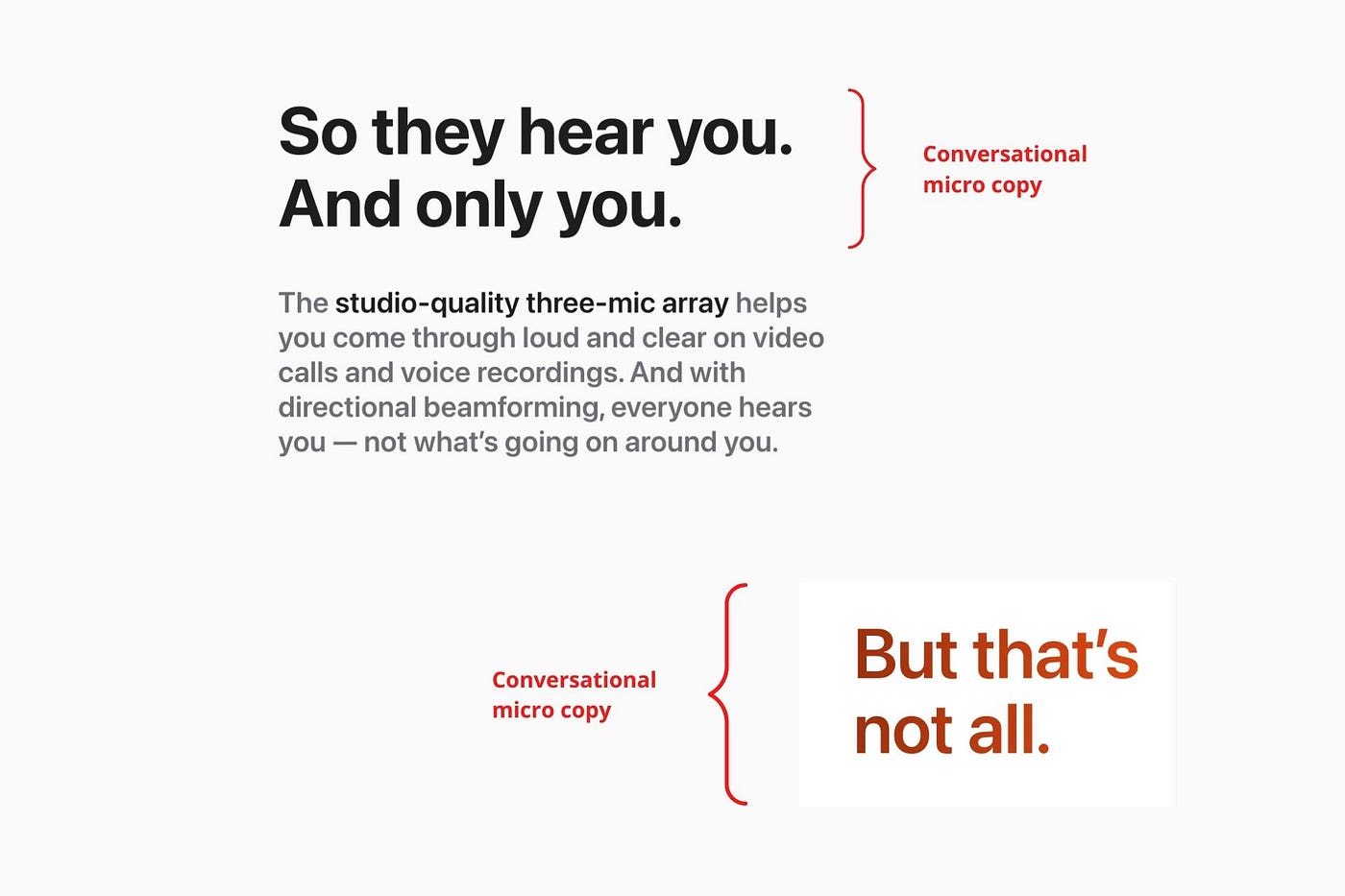

Dialogue-style writing

Conversational copy engages.

Excellent copy is like sharing gum with a friend.

This helps build audience trust.

Apple does this by using natural connecting words like "so" and phrases like "But that's not all."

Snowclone-proof

The mother of all microcopy techniques.

A snowclone uses an existing phrase or sentence to create a new one. The new phrase or sentence uses the same structure but different words.

It’s usually a well-known saying like:

To be or not to be.

This becomes a formula:

To _ or not to _.

Copywriters fill in the blanks with cause-related words. Example:

To click or not to click.
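The fill-in-the-blank formula above can be sketched in a few lines of Python. This is only an illustration: the function name `fill_snowclone` and the use of `_` as the blank marker are assumptions made for this example, not anything Apple or the original post describes.

```python
def fill_snowclone(template: str, word: str) -> str:
    """Replace every blank ("_") in the template with the same word."""
    return template.replace("_", word)


# The template is the familiar phrase with its key word blanked out.
template = "To _ or not to _."

for word in ["be", "click"]:
    print(fill_snowclone(template, word))
```

The structure of the saying stays fixed while the word changes, which is what lets the reader recognize the original behind the new phrase.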

Apple turns "survival of the fittest" into "arrival of the fittest."

It's unexpected and surprises the reader.

So this was fun.

But my fun has just begun.

Microcopy is 21st-century poetry.

I came as an Apple fanboy.

I leave as an Apple fanatic.

Now I’m off to find an apple tree.

Cause you know how it goes.

(Apples, trees, etc.)

This post is a summary. Original post available here.

Guillaume Dumortier

2 years ago

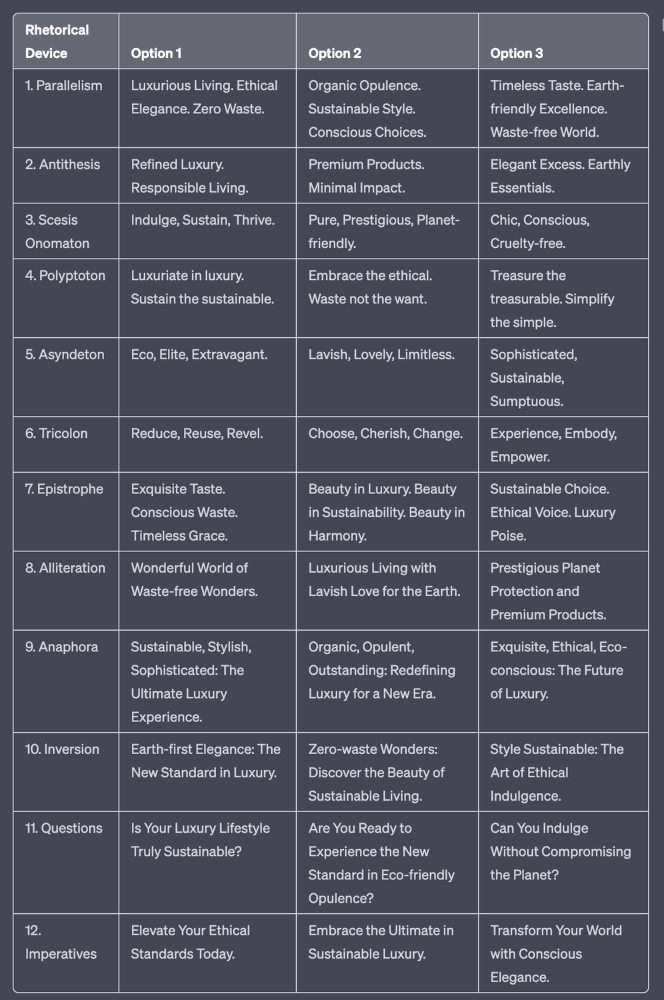

Mastering the Art of Rhetoric: A Guide to Rhetorical Devices in Successful Headlines and Titles

Unleash the power of persuasion and captivate your audience with compelling headlines.

As the old adage goes, "You never get a second chance to make a first impression."

In the world of content creation and social ads, headlines and titles play a critical role in making that first impression.

A well-crafted headline can make the difference between an article being read or ignored, a video being clicked on or bypassed, or a product being purchased or passed over.

To make an impact with your headlines, mastering the art of rhetoric is essential. In this post, we'll explore various rhetorical devices and techniques that can help you create headlines that captivate your audience and drive engagement.

tl;dr: Headline Magician will help you craft the ultimate headlines and titles, powered by rhetorical devices

Example with a high-end luxury organic zero-waste skincare brand

✍️ The Power of Alliteration

Alliteration is the repetition of the same consonant sound at the beginning of words in close proximity. This rhetorical device lends itself well to headlines, as it creates a memorable, rhythmic quality that can catch a reader's attention.

By using alliteration, you can make your headlines more engaging and easier to remember.

Examples:

"Crafting Compelling Content: A Comprehensive Course"

"Mastering the Art of Memorable Marketing"

🔁 The Appeal of Anaphora

Anaphora is the repetition of a word or phrase at the beginning of successive clauses. This rhetorical device emphasizes a particular idea or theme, making it more memorable and persuasive.

In headlines, anaphora can be used to create a sense of unity and coherence, which can draw readers in and pique their interest.

Examples:

"Create, Curate, Captivate: Your Guide to Social Media Success"

"Innovation, Inspiration, and Insight: The Future of AI"

🔄 The Intrigue of Inversion

Inversion is a rhetorical device where the normal order of words is reversed, often to create an emphasis or achieve a specific effect.

In headlines, inversion can generate curiosity and surprise, compelling readers to explore further.

Examples:

"Beneath the Surface: A Deep Dive into Ocean Conservation"

"Beyond the Stars: The Quest for Extraterrestrial Life"

⚖️ The Persuasive Power of Parallelism

Parallelism is a rhetorical device that involves using similar grammatical structures or patterns to create a sense of balance and symmetry.

In headlines, parallelism can make your message more memorable and impactful, as it creates a pleasing rhythm and flow that can resonate with readers.

Examples:

"Eat Well, Live Well, Be Well: The Ultimate Guide to Wellness"

"Learn, Lead, and Launch: A Blueprint for Entrepreneurial Success"

⏭️ The Emphasis of Ellipsis

Ellipsis is the omission of words, typically indicated by three periods (...), which suggests that there is more to the story.

In headlines, ellipses can create a sense of mystery and intrigue, enticing readers to click and discover what lies behind the headline.

Examples:

"The Secret to Success... Revealed"

"Unlocking the Power of Your Mind... A Step-by-Step Guide"

🎭 The Drama of Hyperbole

Hyperbole is a rhetorical device that involves exaggeration for emphasis or effect.

In headlines, hyperbole can grab the reader's attention by making bold, provocative claims that stand out from the competition. Be cautious with hyperbole, however, as overuse or excessive exaggeration can damage your credibility.

Examples:

"The Ultimate Guide to Mastering Any Skill in Record Time"

"Discover the Revolutionary Technique That Will Transform Your Life"

❓ The Curiosity of Questions

Posing questions in your headlines can be an effective way to pique the reader's curiosity and encourage engagement.

Questions compel the reader to seek answers, making them more likely to click on your content. Additionally, questions can create a sense of connection between the content creator and the audience, fostering a sense of dialogue and discussion.

Examples:

"Are You Making These Common Mistakes in Your Marketing Strategy?"

"What's the Secret to Unlocking Your Creative Potential?"

💥 The Impact of Imperatives

Imperatives are commands or instructions that urge the reader to take action. By using imperatives in your headlines, you can create a sense of urgency and importance, making your content more compelling and actionable.

Examples:

"Master Your Time Management Skills Today"

"Transform Your Business with These Innovative Strategies"

💢 The Emotion of Exclamations

Exclamations are powerful rhetorical devices that can evoke strong emotions and convey a sense of excitement or urgency.

Including exclamations in your headlines can make them more attention-grabbing and shareable, increasing the chances of your content being read and circulated.

Examples:

"Unlock Your True Potential: Find Your Passion and Thrive!"

"Experience the Adventure of a Lifetime: Travel the World on a Budget!"

🎀 The Effectiveness of Euphemisms

Euphemisms are polite or indirect expressions used in place of harsher, more direct language.

In headlines, euphemisms can make your message more appealing and relatable, helping to soften potentially controversial or sensitive topics.

Examples:

"Navigating the Challenges of Modern Parenting"

"Redefining Success in a Fast-Paced World"

⚡ Antithesis: The Power of Opposites

Antithesis involves placing two opposite words side-by-side, emphasizing their contrasts. This device can create a sense of tension and intrigue in headlines.

Examples:

"Once a day. Every day"

"Soft on skin. Kill germs"

"Mega power. Mini size."

To utilize antithesis, identify two opposing concepts related to your content and present them in a balanced manner.

🎨 Scesis Onomaton: The Art of Verbless Copy

Scesis onomaton is a rhetorical device that involves writing verbless copy, which quickens the pace and adds emphasis.

Example:

"7 days. 7 dollars. Full access."

To use scesis onomaton, remove verbs and focus on the essential elements of your headline.

🌟 Polyptoton: The Charm of Shared Roots

Polyptoton is the repeated use of words that share the same root, bewitching words into memorable phrases.

Examples:

"Real bread isn't made in factories. It's baked in bakeries"

"Lose your knack for losing things."

To employ polyptoton, identify words with shared roots that are relevant to your content.

✨ Asyndeton: The Elegance of Omission

Asyndeton involves the intentional omission of conjunctions, adding crispness, conviction, and elegance to your headlines.

Examples:

"You, Me, Sushi?"

"All the latte art, none of the environmental impact."

To use asyndeton, eliminate conjunctions and focus on the core message of your headline.

🔮 Tricolon: The Magic of Threes

Tricolon is a rhetorical device that uses the power of three, creating memorable and impactful headlines.

Examples:

"Show it, say it, send it"

"Eat Well, Live Well, Be Well."

To use tricolon, craft a headline with three key elements that emphasize your content's main message.

🔔 Epistrophe: The Chime of Repetition

Epistrophe involves the repetition of words or phrases at the end of successive clauses, adding a chime to your headlines.

Examples:

"Catch it. Bin it. Kill it."

"Joint friendly. Climate friendly. Family friendly."

To employ epistrophe, repeat a key phrase or word at the end of each clause.

Sammy Abdullah

3 years ago

How to properly price SaaS

Price Intelligently put out amazing content on pricing your SaaS product. The full report, linked from their blog, is worth reading. Our key takeaways are below.

Don't base prices on the competition. Competitor-based pricing has clear drawbacks. It makes their pricing approach yours, even though your company offers customers something unique. Otherwise, you wouldn't have created it. The strategy is also static, so you can't add value by raising prices without outpricing competitors. "Look, but don't touch" is the moral of competitor-based pricing. You want to know your competitors' prices so you're in the same ballpark, but they shouldn't guide your decisions. Competitor-based pricing also drives down prices.

Value-based pricing wins. This is customer-based pricing. Value-based pricing looks outward, not inward or laterally at competitors. Your clients are the best source of pricing information. By valuing customer comments, you're focusing on buyers. They'll decide if your pricing and packaging are right. In addition to asking consumers about cost savings or revenue increases, look at data like number of users, usage per user, etc.

Value-based pricing increases prices. As you learn more about the client and your worth, you'll know when and how much to boost rates. Every 6 months, examine pricing.

Cloning top customers. You clone your consumers by learning as much as you can about them and then reaching out to comparable people or organizations. You can't accomplish this without knowing your customers. Segmenting and reproducing them requires as much detail as feasible. Offer pricing plans and feature packages for 4 personas. The top plan should state Contact Us. Your highest-value customers want more advice and support.

Question your 4 personas. What's the one item you can't live without? Which integrations matter most? Do you do analytics? Is support important or does your company self-solve? What's too cheap? What's too expensive?

Not everyone likes per-user pricing. SaaS organizations often default to per-user pricing. About 80% of companies using per-user pricing should use an alternative value metric: their products don't deliver more value with more users, so charging per user doesn't make sense.

At least 3:1 LTV/CAC. Aim to break even on the customer within 2 years and to keep LTV to CAC above 3:1. Because customer acquisition costs are paid upfront but SaaS revenues accrue over time, SaaS companies face an early financial shortfall while paying back the CAC.
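The two benchmarks above (payback within 2 years, LTV to CAC of at least 3:1) can be checked with a little arithmetic. The sketch below is hedged: the report's own LTV definition is not given here, so the simple formula used (margin-adjusted monthly revenue divided by monthly churn), the function names, and all the sample numbers are assumptions for illustration.

```python
def ltv(monthly_revenue: float, gross_margin: float, monthly_churn: float) -> float:
    """Assumed simple LTV: monthly gross profit divided by monthly churn rate."""
    return monthly_revenue * gross_margin / monthly_churn


def payback_months(cac: float, monthly_revenue: float, gross_margin: float) -> float:
    """Months of gross profit needed to recover the upfront acquisition cost."""
    return cac / (monthly_revenue * gross_margin)


def meets_benchmarks(ltv_value: float, cac: float, payback: float) -> bool:
    """True if LTV/CAC is at least 3 and payback takes at most 24 months."""
    return ltv_value / cac >= 3 and payback <= 24


# Hypothetical customer: $100/month, 80% gross margin, 2% monthly churn, $1,200 CAC.
customer_ltv = ltv(100, 0.8, 0.02)
months = payback_months(1200, 100, 0.8)
print(f"LTV/CAC: {customer_ltv / 1200:.2f}, payback: {months:.0f} months")
print("Meets benchmarks:", meets_benchmarks(customer_ltv, 1200, months))
```

The payback figure makes the early shortfall concrete: for this hypothetical customer, the first 15 months of gross profit only repay the acquisition cost.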

ROI should be >20:1. Ensure the customer's ROI is 20x the product's cost. Microsoft Office costs $80 a year, but consumers would pay much more to keep it.

A/B Testing. A/B testing your prices is guessing. When your pricing page varies based on assumptions, you'll upset customers. You don't have enough customers anyway. A/B testing is good for optimizing landing pages, design decisions, and other site features, where you know the problem, but not for pricing.

Don't discount. It cheapens the product, makes the lower price feel permanent, and increases churn. By discounting, you're ruining your pricing analysis.
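As a quick worked check of the 20x rule, the sketch below computes how much value a product must deliver at a given price. The function names and the default target of 20 are illustrative assumptions; only the $80 price comes from the text.

```python
def customer_roi(value_to_customer: float, price: float) -> float:
    """Ratio of the value the customer receives to the price they pay."""
    return value_to_customer / price


def minimum_value_for_target(price: float, target_roi: float = 20) -> float:
    """Value the product must deliver for the customer's ROI to hit the target."""
    return price * target_roi


# At $80 a year, a 20:1 ROI implies the customer gets at least this much value.
print(minimum_value_for_target(80))
```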

You might also like

Alex Mathers

3 years ago

12 habits of the zenith individuals I know

Calmness is a vital life skill.

It aids communication. It boosts creativity and performance.

I've studied calm people's habits for years. Commonalities:

Have mastered the art of self-humor.

Protectors take their job seriously, draining the room's energy.

They are fixated on positive pursuits like making cool things, building a strong physique, and having fun with others rather than on depressing influences like the news and gossip.

Every day, spend at least 20 minutes moving, whether it's walking, yoga, or lifting weights.

Discover ways to take pleasure in life's challenges.

Since perspective is malleable, they change their view.

Set your own needs first.

Stressed people neglect themselves and wonder why they struggle.

Prioritize self-care.

Don't ruin your life to please others.

Make something.

Calm people create more than react.

They love creating beautiful things—paintings, children, relationships, and projects.

Don’t hold their breath.

If you're stressed or angry, you may be surprised how much time you spend holding your breath and tightening your belly.

Release, breathe, and relax to find calm.

Stopped rushing.

Rushing is disadvantageous.

Calm people handle life better.

Are aware of their own dietary requirements.

They avoid junk food and eat foods that keep them healthy, happy, and calm.

Don’t take anything personally.

Stressed people control everything.

Self-conscious.

Calm people put others and their work first.

Keep their surroundings neat.

Maintaining an uplifting and clutter-free environment daily calms the mind.

Minimise negative people.

Calm people are ruthless with their boundaries and avoid negative and drama-prone people.

Chris Moyse

4 years ago

Sony and LEGO raise $2 billion for Epic Games' metaverse

‘Kid-friendly’ project holds $32 billion valuation

Epic Games announced today that it has raised $2 billion USD from Sony Group Corporation and KIRKBI (holding company of The LEGO Group). Both companies contributed $1 billion to Epic Games' upcoming ‘metaverse’ project.

“We need partners who share our vision as we reimagine entertainment and play. Our partnership with Sony and KIRKBI has found this,” said Epic Games CEO Tim Sweeney. The new metaverse will be a place where players can have fun with friends, brands can build creative and immersive experiences, and creators can thrive.

Last week, LEGO and Epic Games announced their plans to create a family-friendly metaverse where kids can play, interact, and create in digital environments. The service's users' safety and security will be prioritized.

With this new round of funding, Epic Games' project is now valued at $32 billion.

“Epic Games is known for empowering creators large and small,” said KIRKBI CEO Søren Thorup Sørensen. “We invest in trends that we believe will impact the world we and our children will live in. We are pleased to invest in Epic Games to support their continued growth journey, with a long-term focus on the future metaverse.”

Epic Games is expected to unveil its metaverse plans later this year, including its name, details, services, and release date.

Cory Doctorow

3 years ago

The current inflation is unique.

New Stiglitz just dropped.

Here's the inflation story everyone believes (warning: it's false): America gave the poor too much money during the recession, and now the economy is awash with free money, which made them so rich they're refusing to work, meaning the economy isn't making anything. Prices are soaring due to increased cash and missing labor.

Lawrence Summers says there's only one answer. We must impoverish the poor: raise interest rates, cause a recession, and eliminate millions of jobs, until the poor are stripped of their undeserved fortunes and return to work.

https://pluralistic.net/2021/11/20/quiet-part-out-loud/#profiteering

This is nonsense. Countries around the world suffered inflation during and after lockdowns, whether or not they gave out humanitarian money to keep people from starvation. America has slightly higher inflation than other OECD countries, but it's not due to big relief packages.

The Causes of and Responses to Today's Inflation, a Roosevelt Institute report by Nobel-winning economist Joseph Stiglitz and macroeconomist Ira Regmi, debunks this bogus inflation story and offers a more credible explanation for inflation.

https://rooseveltinstitute.org/wp-content/uploads/2022/12/RI_CausesofandResponsestoTodaysInflation_Report_202212.pdf

Sharp interest rate hikes exacerbate the slump and increase inflation, the authors argue. They compare monetary policy inflation cures to medieval bloodletting, where doctors repeated the same treatment until the patient recovered (for which they received credit) or died (which was more likely).

Let's discuss bloodletting. Inflation hawks warn of the wage price spiral, when inflation rises and powerful workers bargain for higher pay, driving up expenses, prices, and wages. This is the fairy-tale narrative of the 1970s, and it's true except that OPEC's embargo drove up oil prices, which produced inflation. Oh well.

Let's be generous to seventies-haunted inflation hawks and say we're worried about a wage-price spiral. Fantastic! No. Real wages are 2.3% lower than they were in Oct 2021 after peaking in June at 4.8%.

Why did America's powerful workers take a paycut rather than demand inflation-based pay? Weak unions, globalization, economic developments.

Workers don't expect inflation to rise, so they're not requesting inflationary hikes. Inflationary expectations have remained moderate, consistent with our data interpretation.

https://www.newyorkfed.org/microeconomics/sce#/

Nor are workers spending down their pandemic savings. Working people see surplus savings as wealth and spend it gradually over their lives, despite rising demand. People may have saved money by staying in during the lockdown, but they don't eat out every night to make up for it. Instead, they keep those savings as precautionary balances. This is why the economy is lagging.

What people do buy with pandemic savings is mostly traded goods (basically, imports), not non-traded goods. Imports don't multiply like domestic purchases. If you buy a loaf of bread from the corner baker for $1 and they spend it at the tavern across the street, that dollar generates $3 in economic activity. A dollar spent on foreign goods leaves the country, and any multiplier effect happens there, not in the US.
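The multiplier idea can be sketched as a dollar being re-spent round after round, with some fraction leaking out each time. This is a simplified textbook-style illustration, not the report's model: the function name and the re-spend rate of 2/3 are assumptions, with the rate chosen only so that the result matches the $3 figure in the bakery example.

```python
def total_domestic_activity(initial_spend: float, respend_rate: float,
                            rounds: int = 1000) -> float:
    """Sum the spending generated as each recipient re-spends a fixed share locally."""
    total = 0.0
    amount = initial_spend
    for _ in range(rounds):
        total += amount
        amount *= respend_rate  # the share that stays in local circulation
    return total


# Domestic purchase: assume two thirds of every dollar is re-spent locally.
print(round(total_domestic_activity(1.0, 2 / 3), 2))

# Import: in this simplification the first dollar leaves the country,
# so nothing circulates domestically.
print(total_domestic_activity(0.0, 2 / 3))
```

The sum converges to `initial_spend / (1 - respend_rate)`, so the closer the re-spend rate is to 1, the larger the effect of each domestic dollar.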

Only marginally higher wages. The ECI is up 1.6% from 2019. Almost all gains went to the 25% lowest-paid Americans. Contrary to the inflation worry about too much savings, these workers don't make enough to save, even post-pandemic.

Recreation and transit spending are at or below pre-pandemic levels. Spending on food and hotels is higher (which doesn’t mean we’re buying more food than we were in 2019, just that it costs more).

What causes inflation if not greedy workers, free money, and high demand? The most expensive domestic goods produce the biggest revenues for their manufacturers. They charge you more without paying their workers or suppliers more.

The largest price-gougers are funneling their earnings to rich people who store it offshore through stock buybacks and dividends. A $1 billion stock buyback doesn't buy $1 billion in bread.

Five factors influence US inflation today:

I. Price rises for energy and food;

II. Shifts in consumer tastes;

III. Supply interruptions (mainly autos);

IV. Increased rents (due to telecommuting);

V. Monopoly (AKA price-gouging).

None can be remedied by raising interest rates or laying off workers.

Russia's invasion of Ukraine, omicron, and China's Zero Covid policy all disrupted the flow of food, energy, and production inputs. The price went higher because we made less.

After Russia invaded Ukraine, oil prices spiked, and sanctions made it worse. But that was February. By October, oil prices had returned to pre-pandemic, 2015 levels, thanks to global economic adjustments, including a shift to renewables. Every new renewable installation reduces oil consumption and affects oil prices.

High food prices have a simple solution. The US and EU have bribed farmers not to produce for 50 years. If the war continues, this program may end, and food prices may decline.

Demand changes. We want different things than in 2019, not more. During the lockdown, people substituted goods. Half of the US toilet-paper supply in 2019 was on commercial-sized rolls. These are made in different mills, from different stock, than household toilet paper.

Lockdown pushed toilet paper demand to residential rolls, causing shortages (the TP hoarding story was just another pandemic urban legend). Because supermarkets don't have accounts with commercial paper distributors, ordering from the languishing commercial stock was difficult. Kleenex and paper towel substitutions caused greater shortages.

All that drove increased costs in numerous product categories, and there were more cases. These increases are transient, caused by supply chain inefficiencies that are resolving.

Demand for frontline staff saw a one-time repricing of pay, which is being recouped as we speak.

Illnesses. Brittle, hollowed-out global supply chains aggravated this. The constant pursuit of cheap labor and minimal regulation by monopolies that dominate most sectors means things are manufactured in far-flung locations. Financialization means any surplus capital assets were sold off years ago, leaving firms with little production slack. After the epidemic, several of these systems took years to restart.

Automobiles are to blame. Financialization and monopolization consolidated microchip and auto production in Taiwan and China. When the lockdowns came, these worldwide corporations cancelled their chip orders, and when they placed fresh orders, they were at the back of the line.

That drove up car prices, which is why the US has slightly higher inflation than other wealthy countries: the economy is car-centric. Automobile prices account for 9% of the CPI. France: 3.6%

Rent shocks and telecommuting. After the epidemic, many professionals moved to exurbs, small towns, and the countryside to work from home. As commercial properties were vacated, it was impractical to adapt them for residential use due to planning restrictions. Addressing these restrictions will cut rent prices more than raising interest rates, which halts housing construction.

Statistical mirages cause some rent inflation. The CPI estimates what homeowners would pay to rent their properties. When rents rise in your neighborhood, the CPI believes you're spending more on rent even if you have a 30-year fixed-rate mortgage.

Market dominance. Almost every area of the US economy is dominated by monopolies, whose CEOs disclose on investor calls that they use inflation scares to jack up prices and make record profits.

https://pluralistic.net/2022/02/02/its-the-economy-stupid/#overinflated

Long-term profit margins are rising. Markups averaged 26% from 1960-1980. 2021: 72%. Market concentration (i.e., monopolization) explains 81% of markup increases. Profit margins reached a 70-year high in 2022. These elements interact. Monopolies thin out their sectors, making them brittle and sensitive to shocks.

If we're worried about a shrinking workforce, there are more humanitarian and sensible solutions than causing a recession and mass unemployment. Instead, we may boost US production capacity by easing workers' entry into the workforce.

https://pluralistic.net/2022/06/01/factories-to-condos-pipeline/#stuff-not-money

US female workforce participation ranks towards the bottom of developed countries. Many women can't afford to work due to America's lack of daycare, low earnings, and bad working conditions in female-dominated fields. If America doesn't have enough workers, childcare subsidies and minimum wages can help.

By contrast, driving the country into recession with interest-rate hikes will reduce employment, and the last recruited (women, minorities) are the first fired and the last to be rehired. Forcing America into recession won't enhance its capacity to create what its people want; it will degrade it permanently.

Nothing the Fed does can stop price hikes from international markets, lack of supply chain investment, COVID-19 disruptions, climate change, the Ukraine war, or market power. They can worsen it. When supply problems generate inflation, raising interest rates decreases investments that can remedy shortages.

Increasing interest rates won't cut rents since landlords pass on the expenses and high rates restrict investment in new dwellings where tenants could escape the costs.

Fixing the supply fixes supply-side inflation. Increase renewables investment (as the Inflation Reduction Act does). Monopolies can be busted (as the IRA does). Reshore key goods (as the CHIPS Act does). Better pay and child care attract employees.

Windfall taxes can claw back price-gouging corporations' monopoly earnings.

https://pluralistic.net/2022/03/15/sanctions-financing/#soak-the-rich

In 2008, we ruled out fiscal solutions (bailouts for debtors) and turned to monetary policy (bank bailouts). This preserved the economy but increased inequality and eroded public trust.

Monetary policy won't help. Even monetary policy enthusiasts recognize an 18-month lag between action and result. That suggests monetary tightening is unnecessary. Like the medieval bloodletter, central bankers whose interest rate hikes don't work swiftly may do more of the same, bringing the economy to its knees.

Interest rates must rise somewhat. Zero-percent interest fueled foolish speculation and financialization, and increasing rates will stop this. But increasing interest rates far enough to dampen inflation will destroy the economy.

Then what? All recent evidence points to inflation decreasing on its own, as the authors argue. Supply-side difficulties are finally being overcome, evidence shows. Energy and food prices are showing considerable mean reversion, which is disinflationary.

The authors don't recommend doing nothing. Best case scenario, they argue, is that the Fed won't keep raising interest rates until morale improves.