Elon Musk Bets $44 Billion on Free Speech's Future

Musk’s purchase of Twitter has sealed his bond with the American right—whether the platform’s left-leaning employees and users like it or not.

Elon Musk's pursuit of Twitter Inc. began earlier this month as a joke. It started slowly, then spiraled out of control, culminating on April 25 with the world's richest man agreeing to spend $44 billion on one of the most politically significant technology companies ever. There have been bigger financial acquisitions, but Twitter's significance has always outpaced its balance sheet. This is a unique Silicon Valley deal.

To recap: Musk announced in early April that he had bought a stake in Twitter, citing the company's alleged suppression of free speech. His complaints were vague, relying heavily on the dog whistles of the ultra-right. A week later, he announced he'd buy the company for $54.20 per share, four days after initially pledging to join Twitter's board. Twitter's directors noticed the 420 reference as well, and responded with a “shareholder rights” plan (i.e., a poison pill) that included a 420 joke.

Musk - Patrick Pleul/Getty Images

No one knew if the bid was genuine. Musk's Twitter plans seemed implausible or insincere. In a tweet, he referred to automated accounts that use his name to promote cryptocurrency. He enraged his prospective employees by suggesting that Twitter's San Francisco headquarters be turned into a homeless shelter, renaming the company Titter, and expressing solidarity with his growing conservative fan base. “The woke mind virus is making Netflix unwatchable,” he tweeted on April 19.

But Musk got funding, and after a frantic weekend of negotiations, Twitter said yes. Unlike in most buyouts, Musk will personally fund the deal, putting up as much as $21 billion in cash and borrowing another $12.5 billion against his Tesla stock.

Free Speech and Partisanship

Percentage of respondents who agree with the following

The deal is expected to replatform accounts that were banned by Twitter for harassing others, spreading misinformation, or inciting violence, such as former President Donald Trump's account. As a result, Musk is at odds with his own left-leaning employees, users, and advertisers, who would prefer more content moderation rather than less.

Dorsey - Photographer: Joe Raedle/Getty Images

Previously, the company's leadership had similar issues. Founder Jack Dorsey stepped down last year amid concerns about slowing growth and product development, as well as his dual role as CEO of payments processor Block Inc. Compared to Musk, a father of seven who already runs four companies (besides Tesla and SpaceX), Dorsey is laser-focused.

Musk's motivation to buy Twitter may be political. Spending $44 billion on “free speech” affirms the American far right. Right-wing activists have promoted a series of upstart Twitter competitors (Parler, Gettr, and Trump's own effort, Truth Social) since Trump was banned from major social media platforms for encouraging rioters at the US Capitol on Jan. 6, 2021. But Musk can give them a social network with lax content moderation and a real user base. Trump said he wouldn't return to Twitter after the deal was announced, but he wouldn't be the first to do so.

Trump - Eli Hiller/Bloomberg

Conservative activists and lawmakers are already ecstatic. “A great day for free speech in America,” said Missouri Republican Josh Hawley. The day the deal was announced, Tucker Carlson opened his nightly Fox show with a 10-minute laudatory monologue. “The single biggest political development since Donald Trump's election in 2016,” he gushed over Musk.

But Musk's supporters and detractors misunderstand how much his business interests influence his political ideology. During George W. Bush's presidency, he marketed Tesla's cars as carbon-saving machines that were faster and cooler than gas-powered luxury cars. During Barack Obama's presidency, Musk gained a huge following among wealthy environmentalists who reserved hundreds of thousands of Tesla sedans years before they were made. In the Trump era, Musk advocated for a carbon tax, but he also fought local officials (and his own workers) over Covid rules that slowed the reopening of his Bay Area factory.

Teslas at the Las Vegas Convention Center Loop Central Station in April 2021. The Las Vegas Convention Center Loop was Musk's first commercial project. Ethan Miller/Getty Images

Musk's rightward shift matched the rise of the nationalist-populist right and the desire to serve a growing EV market. In 2019, he unveiled the Cybertruck, a Tesla pickup, and in 2020, he announced plans to manufacture it at a new plant outside Austin. In 2021, he decided to move Tesla's headquarters there, calling California the "land of over-regulation." After Ford and General Motors beat him to the electric truck market, Musk reframed Tesla as a company for pickup-driving dudes.

Similarly, his purchase of Twitter will be entwined with his other business interests. Tesla has a factory in China and is friendly with Beijing. This could be seen as a conflict of interest when Musk's Twitter decides how to treat Chinese-backed disinformation, as Amazon.com Inc. founder Jeff Bezos noted.

Musk has focused on Twitter's product and social impact, but the company's biggest challenges are financial: Either increase cash flow or cut costs to comfortably service his new debt. Even if Musk can't do that, he can still benefit from the deal. He has recently used the increased attention to promote other business interests: Boring has hyperloops and Neuralink brain implants on the way, Musk tweeted. Remember Tesla's long-promised robotaxis!

Musk may be comfortable saying he has no expectation of profit because it benefits his other businesses. At the TED conference on April 14, Musk insisted that his interest in Twitter was solely charitable. “I don't care about money.”

The rockets and weed jokes make it easy to see Musk as unique—and his crazy buyout will undoubtedly add to that narrative. However, he is a megabillionaire who is risking a small amount of money (approximately 13% of his net worth) to gain potentially enormous influence. Musk makes everything seem new, but this is a rehash of an old media story.

More on Society & Culture

Tim Smedley

2 years ago

When Investment in New Energy Surpassed That in Fossil Fuels (Forever)

A worldwide energy crisis might have hampered renewable energy and clean tech investment. Nope.

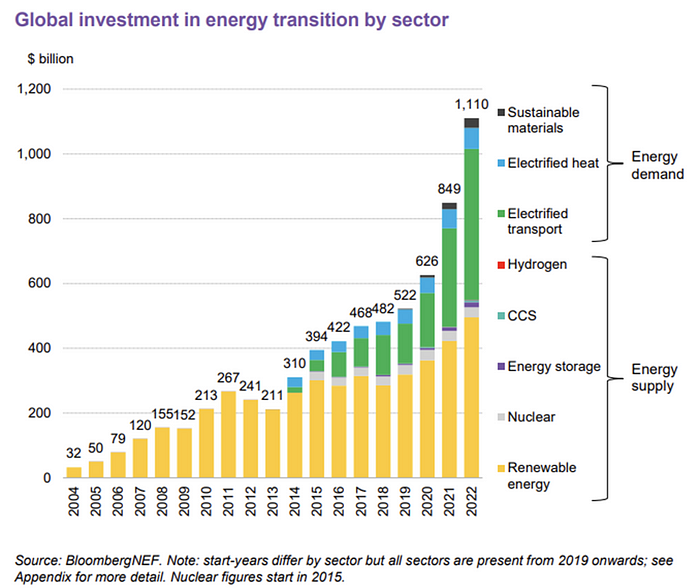

BNEF's 2023 Energy Transition Investment Trends study surprised and encouraged. Global energy transition investment reached $1 trillion for the first time ($1.11t), up 31% from 2021. From 2013, the clean energy transition has come and cannot be reversed.

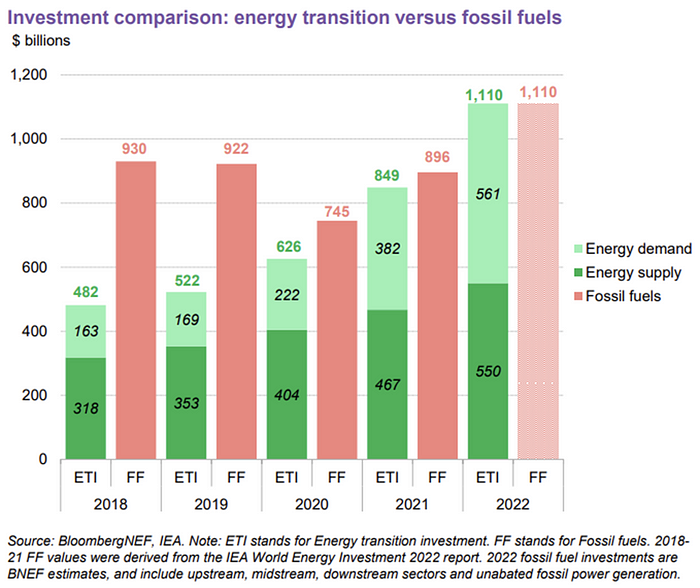

BNEF Head of Global Analysis Albert Cheung said the findings put to rest concerns that the energy crisis would hold back renewable energy deployment. Energy transition investment has reached a record as countries and corporations implement transition strategies. Clean energy investments will soon surpass fossil fuel investments.

The table below indicates the tipping point, which means the energy shift is occurring today.

BNEF uses "energy transition investment" to mean money invested in clean technology, including electric vehicles, heat pumps, hydrogen, and carbon capture. In 2022, electrified heat received $64b and energy storage $15.7b.

Nonetheless, $495b in renewables (up 17%) and $466b in electrified transport (up 54%) account for most of the investment. Hydrogen and carbon capture are tiny despite the fanfare. Hydrogen received the least funding in 2022 at $1.1 billion (0.1%).

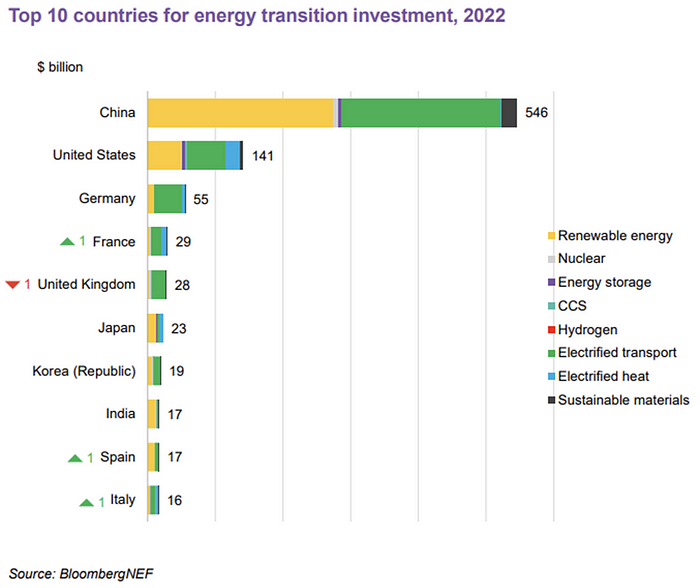

China dominates investment. It spent $546 billion on energy transition in 2022, about half the global amount. The US was second with $141 billion, up 11% from 2021. Counted as a bloc, the EU's $180 billion would unofficially place it second. China also accounted for 91% of investment in battery technologies.

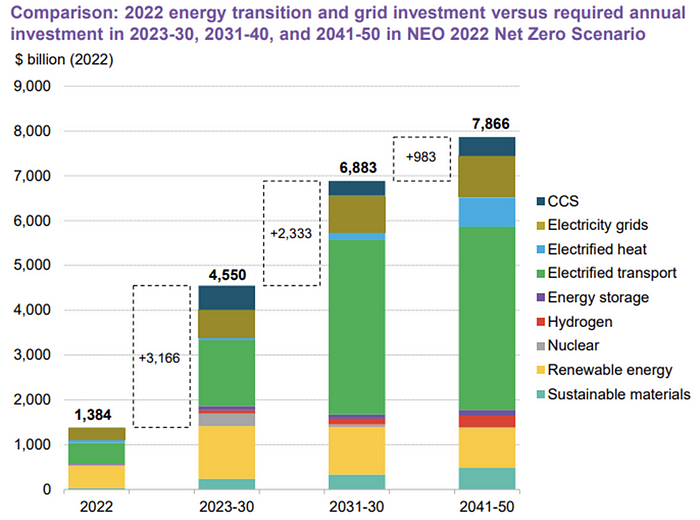

The 2022 transition tipping point is encouraging, but the BNEF research shows how far we must go to get to Net Zero. Energy transition investment must average $4.55 trillion a year between 2023 and 2030 (more than three times the amount spent in 2022) to get on track for global Net Zero. Investment must be seven times today's record to reach Net Zero by 2050.
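The shares and multiples behind these figures can be checked with a few lines of arithmetic. The sketch below uses only the rounded numbers quoted in this article; the constant and function names are illustrative, not BNEF's.

```python
# Figures quoted in the article, in billions of US dollars (rounded).
GLOBAL_2022 = 1110                  # $1.11 trillion total energy transition investment
CHINA_2022 = 546
RENEWABLES_2022 = 495
ELECTRIFIED_TRANSPORT_2022 = 466
REQUIRED_ANNUAL_2023_2030 = 4550    # $4.55 trillion average needed for Net Zero


def share(part: float, whole: float) -> float:
    """Return part as a fraction of whole."""
    return part / whole


china_share = share(CHINA_2022, GLOBAL_2022)
top_two_share = share(RENEWABLES_2022 + ELECTRIFIED_TRANSPORT_2022, GLOBAL_2022)
required_multiple = REQUIRED_ANNUAL_2023_2030 / GLOBAL_2022

print(f"China's share of 2022 investment: {china_share:.0%}")
print(f"Renewables + electrified transport: {top_two_share:.0%}")
print(f"Required annual average vs. 2022: {required_multiple:.1f}x")
```

On these rounded inputs, China's share comes out just under half, and the required annual average is roughly four times the 2022 total.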

BNEF 2023 Energy Transition Investment Trends.

As shown in the graph above, BNEF experts have been using their crystal balls to determine where that investment should go. CCS and hydrogen are still modest components of the picture. Interestingly, they see nuclear almost fading. Active transport advocates like me may have something to say about the massive $4b in electrified transport. If we focus on walkable 15-minute cities, we may need fewer electric automobiles, though we will still need more electric trains and buses.

Albert Cheung of BNEF emphasizes the challenge. This week's figures promise short-term job creation and medium-term energy security, but more investment is needed to reach net zero in the long run.

I expect the BNEF Energy Transition Investment Trends report to show clean tech investment outpacing fossil fuels investment every year from now on. Finally being able to say that is amazing. It's also insufficient. The planet must keep its foot on the electric (not gas) pedal. In response to the research, Christina Karapataki, VC at Breakthrough Energy Ventures, a clean tech investment firm, tweeted: “Clean energy investment needs to average more than 3x this level, for the remainder of this decade, to get on track for BNEFs Net Zero Scenario. Go!”

Andy Walker

3 years ago

Why personal ambition and poor leadership caused Google layoffs

Google recently announced it was laying off 6% of its workforce (about 12,000 people). This puts it in line with most tech companies. A publicly contrite CEO explained that they had overhired during the COVID-19 pandemic boom and had to address it, but they were sorry and took full responsibility. I thought this was "bullshit" too. Meta, Amazon, Microsoft, and others must feel similarly. I spent 10 years at Google, and these things don't reflect well on the company's leaders.

All publicly listed companies have a fiduciary duty to act in the best interests of their shareholders. Dodge v. Ford Motor Company (1919) established this. Henry Ford wanted to reduce shareholder payments to offer cheaper cars and better wages. Ford stated:

My ambition is to employ still more men, to spread the benefits of this industrial system to the greatest possible number, to help them build up their lives and their homes. To do this we are putting the greatest share of our profits back in the business.

The Dodge brothers, who owned 10% of Ford, opposed this and sued Ford for the payments so they could start their own company. They won, preventing Ford from cutting prices or raising salaries. If you have a vocal group of shareholders with the resources to sue you, you must prove you are acting in their best interests. Companies prioritize shareholders. Giving activist investors a stick to threaten you with almost enshrines short-term profit over long-term thinking.

This underpins Google's current issues. Institutional investors who can sue Google see it as a wasteful company they can exploit. That doesn't mean you have to maximize profits (thanks to those who pointed out my ignorance of US corporate law in the comments and on HN), but it allows pressure. I feel for those navigating this. This is about unrestrained capitalism.

When Google went public, Larry Page and Sergey Brin knew the risks and worked hard to keep control. In their Founders' Letter to investors, they tried to set expectations for the company's operations.

Our long-term focus as a private company has paid off. As a public company, we will do the same. We believe outside pressures lead companies to sacrifice long-term opportunities to meet quarterly market expectations.

The company has transformed since that letter. The company has nearly 200,000 full-time employees and a trillion-dollar market cap. Large investors have bought company stock because it has been a good long-term bet. Why are they restless now?

Other big tech companies emerged and fought for top talent. This has pushed compensation packages up. Google has also grown rapidly (roughly 22,000 people hired to the end of 2022). At $300,000 median compensation, those 22,000 people added $6.6 billion in salary overheads in 2022. Exorbitant? If the company still makes $16 billion every quarter, maybe not. Investors wonder whether that spending has paid for itself.
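The overhead estimate above is simple multiplication, and it helps to see it next to the quarterly figure. This is a rough sketch using only the numbers in the paragraph; it treats the "$16 billion every quarter" as profit, which is an assumption, and the variable names are illustrative.

```python
# Inputs taken from the paragraph above (all approximate).
NEW_HIRES = 22_000
MEDIAN_COMPENSATION = 300_000          # US dollars per person per year
QUARTERLY_PROFIT = 16_000_000_000      # assumed to be profit, not revenue

# Added yearly salary overhead from the new hires.
added_overhead = NEW_HIRES * MEDIAN_COMPENSATION

# Compare against a full year at the quoted quarterly figure.
annual_profit = QUARTERLY_PROFIT * 4
overhead_share = added_overhead / annual_profit

print(f"Added overhead: ${added_overhead / 1e9:.1f} billion")
print(f"Share of annual profit: {overhead_share:.1%}")
```

Under these assumptions the new hires cost about a tenth of a year's profit, which is why the number reads as both large and affordable.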

Investors are right. Google uses people wastefully. However, by bluntly reducing headcount, its leaders are not addressing the root causes and are hurting themselves. No studies show that downsizing this way boosts productivity. There is plenty of evidence that they'll lose out because people will become risk-averse and distrust their leadership.

The company's approach also stinks. Finding out that you no longer have a job because you can’t log in anymore (sometimes in cases where someone is on call for protecting your production systems) is no way to fire anyone. It's like being in an abusive relationship with a narcissistic sociopath. First, you receive praise and fancy perks for making the cut. Then you're fired by text and ghosted. You're told to appreciate the generous severance package. This firing will devastate managers and teams. It will take people years to recover their self-esteem after this type of firing. Senior management contributed to this. They chose the expedient answer, possibly by convincing themselves they were managing risk and taking the Macbeth approach of “If it were done when ’tis done, then ’twere well It were done quickly”.

Recap. Google's leadership did a stupid thing (mass firing) in a stupid way. They jumped straight from “How do we get rid of enough people to make investors happier?” to "have 6% less people." Empathetic leaders should not emulate Elon Musk. There is no humane way to fire 12,000 people, but there are better ways. Why is Google so wasteful?

Ambition answers this. There aren't enough VP positions for a group of highly motivated, ambitious, and (increasingly) ruthless people. I’ve loitered around the edges of this world and a large part of my value was to insulate my teams from ever having to experience it. It’s like Game of Thrones played out through email and calendar and over video call.

Your organization must look a certain way for you to be promoted to director or higher. You need the right people at the right levels under you. Long-term, growing your people will naturally happen if you're working on important things. This takes time, and you're never more than 6–18 months from a reorg that could start you over. Ambitious people also tend to be impatient. So, what do you do?

Hiring and vanity projects. To shape your organization, you hire at the right levels. You value vanity metrics like active users over product utility. You get your promo candidates through by subverting the promotion process. In your quest for growth, you avoid performance managing people out. You avoid confronting toxic peers because you need their support for promotion. Your cargo cult gets you there.

Ease is what makes Google wasteful. Since they don't face market forces, employees don't see it as a business. Why would you, when the ads business is so profitable? Complacency lets senior leaders prioritize their own interests. This is how empires collapse. Personal ambition often trumped doing the right thing for users, the business, or employees. Leadership putting ambition above the business is the root cause. Vanity metrics, mass hiring, and vague promises have promoted people to VP. Google goes above and beyond to protect senior leaders.

The decision-makers and beneficiaries are not the ones being laid off. The beneficiaries are those who gain from the stock price increase: the people who will post on LinkedIn about how they misjudged the market and how they’re so sorry and take full responsibility; the people in dark rooms who decide who stays and who goes while accumulating wealth; the billionaire investors. Google should start by addressing its bloated senior management but, as they say, turkeys don't vote for Christmas. It should examine its wastefulness and make tough choices to fix it. A 6% cut is a blunt tool that admits you're not running your business properly. Why aren’t the people running the business the ones shortly to be entering the job market?

This won't fix Google's wastefulness. The executives may never regain trust after their approach. Creativity will be suppressed. Business won't improve. Google will have lost its founding vision, and we all lose with it. Large investors know they can force Google's CEO to yield. The rich will get richer and rationalize leaving 12,000 people behind. The cycle repeats.

It doesn’t have to be this way. In 2013, Nintendo's CEO said he wouldn't fire anyone for shareholders. Switch debuted in 2017. Nintendo's stock has increased by nearly five times, or 19% a year (including the drop most of the stock market experienced last year). Google wasted 12,000 talented people. To please rich people.

Charlie Brown

3 years ago

What Happens When You Sell Your House, Never Buy Again, and Reverse the American Dream

Homeownership isn't the only life pattern.

Want to irritate people?

My party trick is to say I used to own a house but no longer do.

I no longer wish to own a home, not because I lost it or because I'm moving.

It was a long-term plan. It was more deliberate than buying a home. Many people think I should be committed for it.

Poppycock.

Anyone who told me that owning a house (or striving to do so) is a must is wrong.

Because, URGH.

One pattern for life is to own a home, but there are millions of others.

You can afford to buy a home? Go, buddy.

You think you need 1,000 square feet (or more)? You think it's non-negotiable in life?

Nope.

It's insane that society forces everyone to own real estate, regardless of income, wants, requirements, or situation. As if this trade brings happiness, stability, and contentment.

Take it from someone who thought this for years: drywall isn't happiness. Living your way brings contentment.

For some, that's in real estate. It may also be renting a small apartment in a city that makes your soul sing, where you can't afford the down payment or mortgage payments.

Living or traveling abroad is difficult when your life savings are connected to something that eats your money the moment you sign.

#vanlife, which seems like torment to me, makes some people feel alive.

I've seen co-living, hopping from one vacation rental to the next, living with family, and more all work.

Insisting that home ownership is the only path in life is foolish and reduces alternative options.

How little we question homeownership is a disgrace.

No one challenges a homebuyer's motives. We congratulate them, then that's it.

When you offload one, you must answer every question, as if you have a screw loose.

Why do you want to sell?

Do you have any concerns about leaving the market?

Why would you want to renounce what everyone strives for?

Why would you want to abandon a beautiful place like that?

Why would you mismanage your cash in such a way?

But surely it's only temporary? RIGHT??

Those are the wrong questions. It is buying a property that should prompt several inquiries.

The typical American has $4500 saved up. When something goes wrong with the house (not if, it’s never if), can you actually afford the repairs?

Are you certain that you can examine a home in less than 15 minutes before committing to buying it outright and promising to pay more than twice the asking price on a 30-year 7% mortgage?

Are you certain you're ready to leave behind friends, family, and the services you depend on in order to acquire something?

Have you thought about the connotation that moving to a suburb, which more than half of Americans do, means you will be dependent on a car for the rest of your life?

Plus:

Are you sure you want to prioritize home ownership over debt, employment, travel, raising kids, and daily routines?
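The "more than twice the asking price" figure in the mortgage question above can be checked with the standard fixed-rate amortization formula. This is only a sketch: it assumes the entire price is financed at a fixed 7% over 30 years with monthly payments, and it ignores down payments, taxes, insurance, and fees. The function name is illustrative.

```python
def total_repaid_multiple(annual_rate: float, years: int) -> float:
    """Total repaid on a fixed-rate loan, as a multiple of the amount borrowed."""
    monthly_rate = annual_rate / 12
    n_payments = years * 12
    growth = (1 + monthly_rate) ** n_payments
    # Standard amortization formula: monthly payment per dollar borrowed.
    monthly_payment_per_dollar = monthly_rate * growth / (growth - 1)
    return monthly_payment_per_dollar * n_payments


multiple = total_repaid_multiple(annual_rate=0.07, years=30)
print(f"Total repaid is about {multiple:.2f}x the amount borrowed")
```

Under those assumptions the total repaid lands between two and two and a half times the loan, consistent with the claim in the question.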

Homeownership entails that. This ex-homeowner says it will rule your life from the time you put the key in the door.

This isn't questioned. We don't question enough. The holy home-ownership grail was set long ago, and we don't challenge it.

Many people only start questioning after signing the deeds. 70% of homeowners had at least one regret about buying a property, including the expense.

Exactly. Tragic.

Homes are different from houses

We've been fooled into thinking home ownership will make us happy.

For some, it may. Not for everyone.

Bricks and mortar hindered me from living the version of my life that made me most comfortable, happy, and steady.

I'm spending the next month in a modest apartment in southern Spain. Even though it's late November, today will be 68 degrees. My spouse and I will soon meet his visiting parents. We'll visit a Sherry store. We'll eat, nap, walk, and drink Sherry. I'll write. Jerez means flamenco.

That's my home. This is such a privilege. Living a fulfilling life brings me the contentment that buying a home never did.

I'm happy and comfortable knowing I can make almost all of my days good. Rejecting home ownership is partly to thank.

I'm broke like most folks. I had to choose between home ownership and comfort. As I said, I didn't find them together.

Feeling at home trumps owning brick-and-mortar every day.

The following is the reality of what it's like to turn the American Dream around.

Leaving the housing market.

Sometimes I wish I owned a home.

I miss having my own yard and bed. I miss my kitchen, cookbooks, and pizza oven.

But I rarely do.

Someone else's life plan pushed home ownership on me. I'm grateful I figured it out at 35. Many take much longer, and some never understand homeownership stinks (for them).

It's confusing. People will think you're dumb or suicidal.

If you read what I write, you'll know. You'll realize that all you've done is choose to live intentionally. Find a home beyond four walls and a picket fence.

The things I miss? As I said, they're not home. If they were, a pizza oven, a good mattress, and a well-stocked kitchen would bring happiness.

No.

If you can afford a house and desire one, more power to you.

There are other ways to discover home. To find calm and happiness. To have fun.

To find it, look deeper than your home's foundation.

You might also like

Jeff Scallop

3 years ago

The Age of Decentralized Capitalism and DeFi

DeCap is DeFi's killer app.

“Software is eating the world.” Marc Andreessen, venture capitalist

DeFi. Imagine a blockchain-based alternative financial system that offers the same products and services as traditional finance, but with more variety, faster, more secure, lower cost, and simpler access.

Decentralised finance (DeFi) is a marketplace without gatekeepers or central authority managing the flow of money, where customers engage directly with smart contracts running on a blockchain.

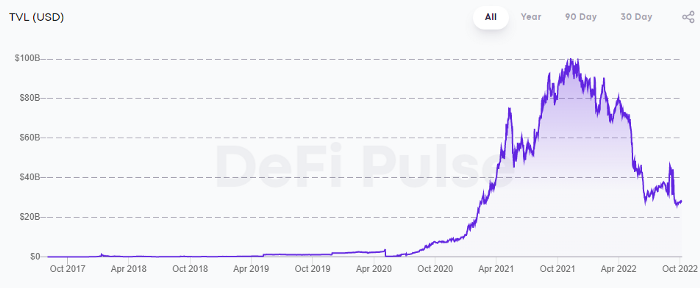

DeFi grew exponentially in 2020/21, with Total Value Locked (an imperfect estimate of market size) topping out at $100 billion. After that, it crashed.

The accumulation of funds by individuals with high discretionary income during the pandemic, the novelty of crypto trading, and the high yields on offer (from 5% APY for stablecoins on established platforms to 100%+ for risky assets) are among the primary factors explaining this exponential increase.

No longer your older brother's DeFi

Since transactions are anonymous, borrowers in DeFi 1.0 had to overcollateralize. To borrow $100 in stablecoins, you had to deposit $150 in ETH. DeFi 1.0's business strategy raises two questions:

Why does DeFi offer interest rates that are higher than those of the conventional financial system?

Why would somebody put down more cash than they intended to borrow?

Maxed out on their own resources, investors took loans to acquire more crypto; the demand for those loans raised DeFi yields, which kept crypto prices increasing; as crypto prices rose, investors made a return on their positions, allowing them to deposit more money and borrow more crypto.
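The loop described above (deposit, borrow against the deposit, buy more crypto, deposit again) can be sketched as a short simulation. It is a simplified illustration: it assumes a fixed 150% collateral ratio, constant prices, and no fees or interest, and the function name is hypothetical.

```python
def leveraged_exposure(initial_deposit: float, collateral_ratio: float, rounds: int) -> float:
    """Total crypto deposited after repeatedly borrowing and redepositing.

    Each round, the investor deposits collateral, borrows the maximum allowed
    (deposit / collateral_ratio), and uses the loan as the next deposit.
    """
    total_deposited = 0.0
    deposit = initial_deposit
    for _ in range(rounds):
        total_deposited += deposit
        deposit = deposit / collateral_ratio  # new loan becomes the next deposit
    return total_deposited


# One round: $150 in ETH deposited, $100 borrowed, as in the example above.
single_round = leveraged_exposure(150.0, 1.5, rounds=1)

# Many rounds approach the geometric-series limit deposit / (1 - 1/ratio).
many_rounds = leveraged_exposure(150.0, 1.5, rounds=200)
limit = 150.0 / (1 - 1 / 1.5)

print(f"Exposure after 1 round: ${single_round:.0f}")
print(f"Exposure after 200 rounds: ${many_rounds:.0f} (limit ${limit:.0f})")
```

With a 150% ratio, repeated looping can roughly triple exposure relative to the initial deposit, which is why the strategy only works while prices keep rising.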

This is a bull market game. DeFi 1.0's speculation by overcollateralization is dead. The crypto crash sank it.

The “speculation by overcollateralisation” world of DeFi 1.0 is dead

At a JP Morgan digital assets conference, institutional investors were more interested in DeFi than crypto or fintech. To me, that shows DeFi 2.0's institutional future.

DeFi 2.0 protocols must handle KYC/AML, tax compliance, market abuse, and cybersecurity problems to be institutional-ready.

Stablecoins gaining market share under benign regulation and more CBDCs coming online in the next couple of years could help DeFi 2.0 separate from crypto volatility.

DeFi 2.0 will have a better footing to finally decouple from crypto volatility

Then we can transition from speculation through overcollateralization to DeFi's genuine comparative advantages: cheaper transaction costs, near-instant settlement, more efficient price discovery, faster time-to-market for financial innovation, and a superior audit trail.

Akin to Amazon for financial goods

Amazon decimated brick-and-mortar shops by offering millions of things online, warehouses by keeping just-in-time inventory, and back-offices by automating invoicing and payments. Software devoured retail. DeFi will eat banking with software.

DeFi is the Amazon for financial products that will replace fintech. Even the most advanced internet brokers offer only 100 currency pairings and limited bonds, equities, and ETFs.

Legacy banks are hampered by old settlement systems and inefficient, hard-to-upgrade software. For the most sophisticated players, it's like driving an F1 car on a dirt road.

It is like driving an F1 car on a dirt road, for the most sophisticated players

Central bankers throughout the world know how expensive and difficult it is to handle cross-border payments using the US dollar as the reserve currency, which is vulnerable to the economic cycle and geopolitical tensions.

Decentralization is the only way to deliver 24-hour global financial markets. DeFi 2.0 lets you buy and sell startup shares as easily as shares of Google or Tesla. VC funds will trade like mutual funds. Or you could create bundled insurance coverage for your car, house, and NFTs. DeFi 2.0 consumes banking and creates the global Wall Street.

Defi 2.0 is how software eats banking and delivers the global Wall Street

Decentralized Capitalism is Emerging

90% of financial markets are already digital. The remaining 10% is the hardest to digitalize: money creation, ID, and asset tokenization.

90% of financial markets are already digital. The only problem is that the 10% left is the hardest to digitalize

Debt helped Athens construct a powerful navy that secured trade routes. Bonds financed the Renaissance's wars and supply chains. Equity fueled industrial growth. FX drove globalization's payments system. DeFi's plans:

If the 20th century was a conflict between governments and markets over economic drivers, the 21st century will be between centralized and decentralized corporate structures.

Offices vs. telecommuting. China vs. onshoring/friendshoring. Oil & gas vs. diverse energy matrix. National vs. multilateral policymaking. DAOs vs. corporations. Fiat vs. crypto. TradFi vs. DeFi.

An age where the network effects of the sharing economy will overtake the gains of scale of the monopolistic competition economy

This is the dawn of Decentralized Capitalism (or DeCap), an age where the network effects of the sharing economy will reach a tipping point and surpass the scale gains of the monopolistic competition economy, further eliminating inefficiencies and creating a more robust economy through better data and automation. DeFi 2.0 enables this.

DeFi needs to pay the piper now.

DeCap won't be Web3.0's Shangri-La, though. That's too much for an ailing Atlas. When push comes to shove, DeFi folks want to survive and fight another day for the revolution and, if feasible, make a tidy profit.

Decentralization wasn't meant to circumvent regulation. It circumvents censorship. On-ramp, off-ramp measures (control DeFi's entry and exit points, not what happens in between) sound like a good compromise for DeFi 2.0.

The sooner authorities realize that DeFi regulation is made ex-ante by writing code and constructing smart contracts with rules, the faster DeFi 2.0 will become the more efficient and safe financial marketplace.

More crucially, we must boost system liquidity. DeFi's financial stability risks are downplayed. DeFi must improve its liquidity management if it's to become mainstream, just as banks rely on capital constraints.

This reveals the complex and, frankly, inadequate governance arrangements for DeFi protocols. They redistribute control from tokenholders to developers, which is bad governance regardless of the economic model.

But crypto can only ride the existing banking system for so long before forming its own economy. DeFi will upgrade web2.0's financial rails till then.

Al Anany

2 years ago

Because of this covert investment that Bezos made, Amazon became what it is today.

He kept it under wraps for years until he legally couldn’t.

His shirt is incomplete. I can’t stop thinking about this…

Actually, ignore the article. Look at it. JUST LOOK at it… It’s quite disturbing, isn’t it?

Ughh…

Me: “Hey, what up?” Friend: “All good, watching lord of the rings on amazon prime video.” Me: “Oh, do you know how Amazon grew and became famous?” Friend: “Geek alert…Can I just watch in peace?” Me: “But… Bezos?” Friend: “Let it go, just let it go…”

At least I can question you, the reader, and start answering right away without his consent.

Reader, how did Amazon succeed? You'll say, “Of course, it was an internet bookstore, then it sold everything.”

Not quite. That took them from zero to one. How did they get from one to a thousand? AWS-some. Get it? It's geeky and lame. If not, I'll explain my geekiness.

Over an extended period of time, Amazon was not profitable.

Business basics. You want customers if you own a bakery, right?

Well, 100 clients per day order $5 cheesecakes (because cheesecakes are awesome.)

$5 × 100 customers × 30 days = $15,000 in monthly revenue. You're proud of your bakery.

Now you have to pay the barista (unless ChatGPT is doing it haha? Nope..)

The barista is requesting $5000 a month.

Each cheesecake costs $2.50 to make ($2.50 × 100 × 30 = $7,500).

The monthly cost of running your bakery, including power, is about $5000.

Assume no extra charges. Your operating costs are $17,500.

And revenue is just $15,000? You have revenue but no profit. You might make money selling coffee with your cheesecake next month.
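The bakery example adds up as follows. This is a minimal sketch using only the numbers given above; the variable names are illustrative.

```python
# Monthly revenue: 100 customers a day, one $5 cheesecake each, 30 days.
PRICE_PER_CHEESECAKE = 5.00
CUSTOMERS_PER_DAY = 100
DAYS_PER_MONTH = 30
revenue = PRICE_PER_CHEESECAKE * CUSTOMERS_PER_DAY * DAYS_PER_MONTH

# Monthly costs: barista salary, ingredients, and running costs (including power).
BARISTA_SALARY = 5_000.00
COST_PER_CHEESECAKE = 2.50
RUNNING_COSTS = 5_000.00
ingredient_cost = COST_PER_CHEESECAKE * CUSTOMERS_PER_DAY * DAYS_PER_MONTH
operating_costs = BARISTA_SALARY + ingredient_cost + RUNNING_COSTS

# Profit is what is left after costs; a negative value is a loss.
profit = revenue - operating_costs

print(f"Revenue: ${revenue:,.0f}")
print(f"Operating costs: ${operating_costs:,.0f}")
print(f"Profit: ${profit:,.0f}")
```

The bakery brings in $15,000 but spends $17,500, so it loses $2,500 a month despite having plenty of customers.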

Is losing money bad? You're broke. Losing money. It's bad for financial statements.

It's almost a business ultimatum. Most startups fail. Amazon took nine years to turn a profit.

I'm reading Amazon Unbound: Jeff Bezos and the Invention of a Global Empire to comprehend how a company reaches a $1 trillion market cap.

Many things made Amazon big. The book claims that Bezos and Amazon kept a specific product secret for a long period.

Clouds above the bald head.

In 2006, Bezos started a cloud computing initiative. They believed many firms like Snapchat would pay for reliable servers.

In 2006, cloud computing was not what it is today. I'll simplify. 2006 had no iPhone.

Bezos invested in Amazon Web Services (AWS) without disclosing its revenue. That's permitted up to a point.

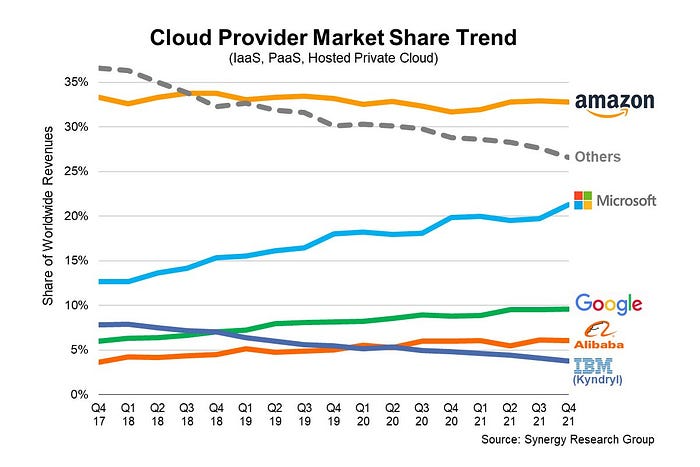

Otherwise, Google and Microsoft would have realized Amazon was investing heavily in this market and started to worry.

Bezos anticipated high demand for this product. Microsoft built its cloud in 2010, and Google in 2008.

If you managed Google or Microsoft, you wouldn't know how much Amazon makes from their cloud computing service. It's enough. Yet, Amazon is an internet store, so they'll focus on that.

All but Bezos were wrong.

Time to come clean now.

They revealed AWS revenue in 2015. Two things were apparent:

Bezos made the proper decision to bet on the cloud and keep it a secret.

In this race, Amazon is in the lead.

They continued. Let me list some AWS users today.

Netflix

Airbnb

Twitch

And more. Amazon was unprofitable for nine years, remember? Look at this article's main graph.

AWS accounted for 74% of Amazon's profit in 2021. This 74% might not exist if they hadn't invested in AWS.

Take this home with you.

Amazon predated AWS. Yet AWS helped the giant reach $1 trillion. Was it down to Bezos' secrecy? We can't know until a time machine is invented (they might host the time machine software on AWS, though).

Without AWS, Amazon would have been profitable but unimpressive. They might have invested in something else that would have returned more (like crypto? No? Ok.)

Bezos has had business failures alongside his successes. His failures include:

Introducing the Fire Phone and suffering a $170 million loss.

Amazon's failure in China. In 2011, Amazon had about a 15% market share in China. By 2019, that had fallen to about 1%.

Not offering a higher price to persuade the founders of Netflix to sell the company to him. He offered a rather reasonable $15 million. But what if he had offered $30 million instead (Amazon had over $100 million in revenue at the time)? He might have owned Netflix, which has a $156 billion market valuation (and saved the billions he later invested in Amazon Prime Video).

Some of these he could control. Some he couldn't. Nonetheless, every decision he made in those circumstances led him toward investing in AWS.

Duane Michael

3 years ago

Don't Fall Behind: 7 Subjects You Must Understand to Keep Up with Technology

As technology develops, you should stay up to date

You don't want to fall behind, do you? This post covers 7 tech-related things you should know.

You'll learn how to operate your computer (and other electronic devices) like an expert and how to leverage the Internet and social media to create your brand and business. Read on to stay relevant in today's tech-driven environment.

You must learn how to code.

Coding is the language of the future. It's how we talk to computers. Learn to code to stay ahead.

Try Codecademy or Code School. There are also numerous free courses on platforms like Coursera or Udacity, but they take a long time and aren't always self-paced, so it can be challenging to find the time.

Artificial intelligence (AI) will transform all jobs.

Our skill sets must adapt as technology changes. AI is a must-know topic. Advances in machine learning mean AI will reshape every job.

Here are seven AI subjects you must know.

What is artificial intelligence?

How does artificial intelligence work?

What are some examples of AI applications?

How can I use artificial intelligence in my day-to-day life?

What jobs have a high chance of being replaced by artificial intelligence and how can I prepare for this?

Can machines replace humans? What would happen if they did?

How can we manage the social impact of artificial intelligence and automation on human society and individual people?

Blockchain Is Changing the Future

Few of us know how Bitcoin and blockchain technology function or what impact they will have on our lives. Blockchain offers safe, transparent, tamper-proof transactions.

It may alter everything from business to voting. Seven must-know blockchain topics:

What is blockchain?

How does the blockchain function?

What advantages does blockchain offer?

What possible uses for blockchain are there?

What are the dangers of blockchain technology?

What are my options for using blockchain technology?

What does blockchain technology's future hold?
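To make the "tamper-proof" idea concrete, here is a minimal sketch of a hash chain, the data structure at the core of a blockchain. It is a toy under stated assumptions: the function names and sample records are invented for illustration, and real blockchains add consensus, digital signatures, and networking on top of this.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, data, prev_hash):
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records):
    """Link each record to the one before it through its hash."""
    chain = []
    prev_hash = GENESIS_HASH
    for index, data in enumerate(records):
        current = block_hash(index, data, prev_hash)
        chain.append(
            {"index": index, "data": data, "prev_hash": prev_hash, "hash": current}
        )
        prev_hash = current
    return chain


def is_valid(chain):
    """Recompute every hash and check that the links still match."""
    prev_hash = GENESIS_HASH
    for block in chain:
        if block["prev_hash"] != prev_hash:
            return False
        recomputed = block_hash(block["index"], block["data"], block["prev_hash"])
        if recomputed != block["hash"]:
            return False
        prev_hash = block["hash"]
    return True


chain = build_chain(["Alice pays Bob 5", "Bob pays Carol 2"])
print(is_valid(chain))  # True

chain[0]["data"] = "Alice pays Bob 500"  # tamper with an old record
print(is_valid(chain))  # False
```

Because each block's hash covers the previous block's hash, changing an old record breaks every link after it, which is why tampering is easy to detect.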

Cryptocurrencies are here to stay

Cryptocurrencies employ cryptography to safeguard transactions and manage unit creation. Decentralized cryptocurrencies aren't controlled by governments or financial institutions.

Bitcoin, the first cryptocurrency, was launched in 2009. Cryptocurrencies can be bought and sold on decentralized exchanges.

Bitcoin is here to stay.

Bitcoin isn't a fad, despite what some say. Its popularity has grown steadily since 2009, and it is worth learning about now.

With other cryptocurrencies emerging, many people are wondering if Bitcoin still has a bright future. Curiosity is natural. Millions of individuals hope their Bitcoin investments will pay off since they're popular now.

Thankfully, they will. Bitcoin is still running strong a decade after its birth. Here's why.

The Internet of Things (IoT) is no longer just a trendy term.

IoT consists of internet-connected physical items. These items can share data. IoT is young but developing fast.

20 billion IoT-connected devices are expected by 2023. So much data! All IT teams must keep up with quickly expanding technologies. Four must-know IoT topics:

Recognize the fundamentals: First things first! Before diving into more technical lingo, you should have a fundamental understanding of what an IoT system is. Before exploring how something works, it's crucial to understand what you're working with.

Recognize Security: Security does not stand still, even as technology advances at a dizzying pace. As IT professionals, it is our duty to be aware of the ways in which our systems are susceptible to intrusion and to ensure that the necessary precautions are taken to protect them.

Be able to discuss cloud computing: The cloud has changed a great deal over the past several years, and the ways it is used keep changing too. Knowing what kind of cloud computing your firm or clients use will enable you to make the appropriate recommendations.

Bring Your Own Device (BYOD) and Mobile Device Management (MDM) are topics worth discussing. BYOD and MDM policies can lower expenses while boosting productivity among employees who use these services responsibly, which is a major factor in their continued growth in popularity.

IoT Security is key

As more gadgets connect, they must be secure. IoT security includes securing devices and encrypting data. Seven IoT security must-knows:

Fundamental security concepts

Authorization and identification

Cryptography

Digital certificates

Digital signatures

Private key encryption

Public key encryption
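As one small illustration of these ideas, the sketch below shows how a device and a server that share a secret key could detect a tampered message using an HMAC from Python's standard library. The key, device name, and reading are hypothetical, and note that an HMAC only authenticates a message; it does not encrypt it.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; a real device would load it from secure storage.
SHARED_KEY = b"example-device-key"


def sign_reading(reading, key=SHARED_KEY):
    """Serialize a sensor reading and compute an HMAC-SHA256 tag for it."""
    body = json.dumps(reading, sort_keys=True).encode("utf-8")
    tag = hmac.new(key, body, hashlib.sha256).hexdigest()
    return body, tag


def verify_reading(body, tag, key=SHARED_KEY):
    """Return True only if the tag matches the body under the shared key."""
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, tag)


body, tag = sign_reading({"device_id": "thermostat-1", "temp_c": 21.5})
print(verify_reading(body, tag))  # True

tampered = body.replace(b"21.5", b"99.9")  # someone alters the reading in transit
print(verify_reading(tampered, tag))  # False
```

Public key schemes follow the same sign-then-verify pattern but use a key pair, so the verifier never needs to hold the signing secret.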

Final Thoughts

With so much going on in the world, it can be hard to keep up with technology. We've put together a list of seven tech must-knows.