More on Personal Growth

Tom Connor

3 years ago

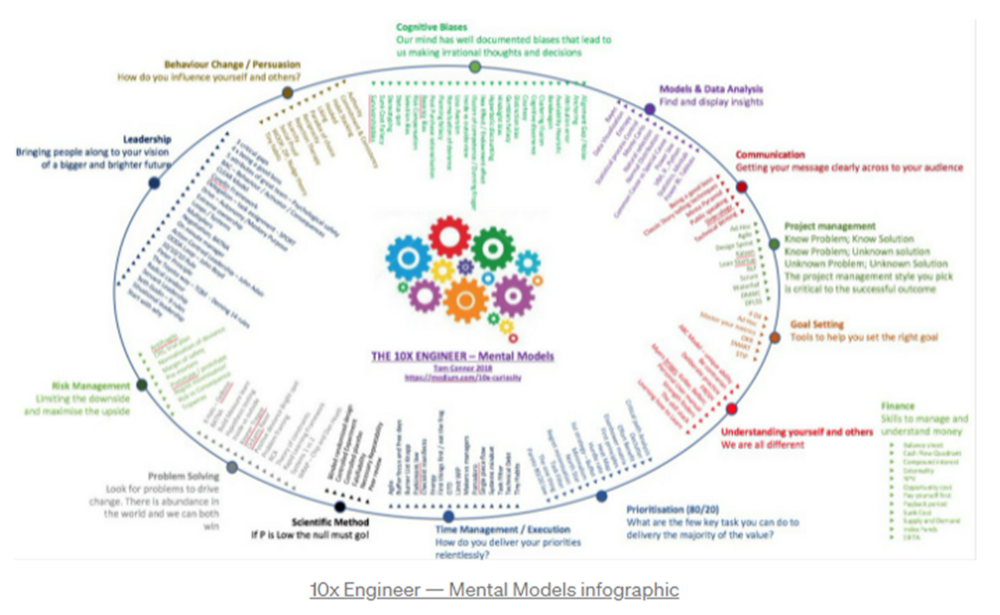

12 mental models that I use frequently

https://tomconnor.me/wp-content/uploads/2021/08/10x-Engineer-Mental-Models.pdf

I keep returning to the same mental models and tricks after writing and reading about a wide range of topics.

Top 12 mental models

12.

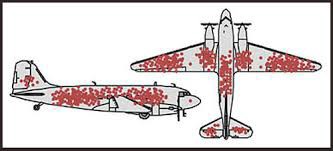

Survivorship bias - We perceive the surviving population as remarkable, yet they may have gotten there through sheer luck.

Survivorship bias affects us in many situations. Our retirement fund; the unicorn business; the winning team. We often study and imitate the last one standing. This can lead to genuine insights and performance improvements, but it can also lead us astray because the leader may just be lucky.

11.

The Helsinki Bus Theory - How to persevere. Bus up!

New work will always be compared to the work of others. What to do? Simple. Keep riding. Stay on the fucking bus.

10.

Until it sticks… Turning up every day… — Artists teach engineers plenty. Quality work over a career comes from showing up every day and starting.

9.

WRAP decision making process (Heath Brothers)

Decision-making WRAP Model:

W — Widen your Options

R — Reality test your assumptions

A — Attain Distance

P — Prepare to be wrong or Right

8.

Systems for knowledge worker excellence - Todd Henry and Cal Newport write about techniques knowledge workers can employ to build a creative rhythm and do better work.

Todd Henry's FRESH framework:

Focus: Begin with the end in mind.

Relationships: close a loop that's open.

Energy: Pruning.

Stimuli: Set aside time to be inspired.

Hours: Spend time thinking.

7.

Black Box Thinking (BBT) is learning from mistakes. Science has transformed the world because it constantly updates its theories in light of failures. Complexity guarantees failure. Do we learn or self-justify?

6.

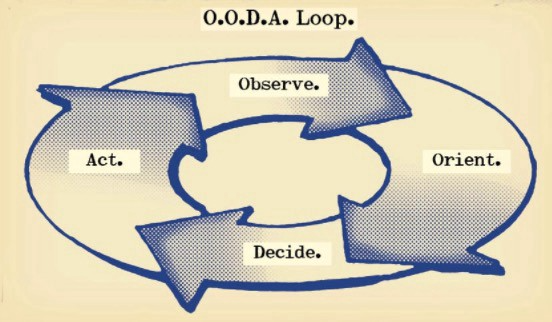

The OODA Loop - Competitive advantage

O: Observe: collect the data. Figure out exactly where you are, what’s happening.

O: Orient: analyze/synthesize the data to form an accurate picture.

D: Decide: select an action from possible options

A: Act: execute the action, and return to step 1

Boyd's approach indicates that speed and agility are about information processing, not physical reactions. The four steps form a feedback loop, and the more loops you complete in a given time, the faster you adapt.
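The loop can be sketched in a few lines of Python. This is only a toy illustration under stated assumptions: the `World` class, the one-dimensional "position" and the `max_step` limit are all hypothetical and do not come from Boyd or from the original article. It shows that completing more cycles in the same window closes more of the gap.

```python
from dataclasses import dataclass


@dataclass
class World:
    """Toy environment (hypothetical): we want position to reach target."""
    position: float
    target: float


def observe(world: World) -> dict:
    # Observe: collect raw data about where we are.
    return {"position": world.position, "target": world.target}


def orient(data: dict) -> float:
    # Orient: turn the raw data into a picture of the situation (signed gap).
    return data["target"] - data["position"]


def decide(gap: float, max_step: float) -> float:
    # Decide: select one action, here a move of at most max_step toward the target.
    return max(-max_step, min(max_step, gap))


def act(world: World, move: float) -> None:
    # Act: execute the action, which changes what the next Observe will see.
    world.position += move


def run_ooda(world: World, cycles: int, max_step: float = 1.0) -> float:
    """Run a number of OODA cycles and return the remaining absolute gap."""
    for _ in range(cycles):
        act(world, decide(orient(observe(world)), max_step))
    return abs(world.target - world.position)


few = run_ooda(World(position=0.0, target=10.0), cycles=3)
many = run_ooda(World(position=0.0, target=10.0), cycles=10)
print(few, many)  # 7.0 0.0
```

With the same step size, three cycles leave a gap of 7.0 while ten cycles close it completely.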

5.

Leaders who try to impose order in a complex situation fail; those who set the stage, step back, and allow patterns to develop win.

https://vimeo.com/640941172?embedded=true&source=vimeo_logo&owner=11999906

4.

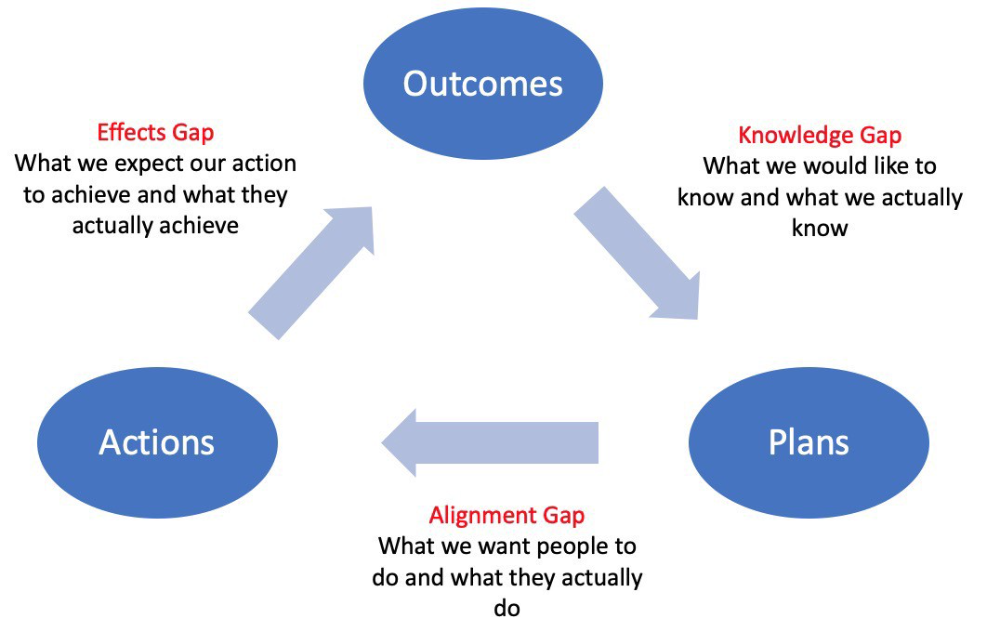

Information Gap - The discrepancy between what we know and what we would like to know

Alignment Gap - The discrepancy between what we wish individuals to do and what they actually do

Effects Gap - the discrepancy between our expectations and the results of our actions

3.

Theory of Constraints — The Goal - To maximize system production, maximize bottleneck throughput.

Goldratt creates a five-step procedure:

Identify the constraint.

Exploit the constraint: get the most out of it as it is.

Subordinate everything else to the constraint.

Elevate the constraint: increase its capacity.

Go back to step 1. Avoid letting inertia become the constraint.

Any non-constraint improvement is an illusion.
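A small Python sketch makes the claim concrete. It assumes a simple serial pipeline whose output is capped by its slowest stage; the stage names and capacities are made up for illustration and are not from Goldratt or the original article.

```python
from typing import Mapping


def system_throughput(capacities: Mapping[str, int]) -> int:
    # In a serial pipeline, output is capped by the slowest stage.
    return min(capacities.values())


def bottleneck(capacities: Mapping[str, int]) -> str:
    # Step 1: identify the constraint (the stage with the lowest capacity).
    return min(capacities, key=capacities.get)


# Hypothetical capacities, in units of work per week.
line = {"design": 12, "build": 5, "test": 9, "deploy": 20}

base = system_throughput(line)

# Doubling a non-constraint ("test") changes nothing.
improved_non_constraint = system_throughput({**line, "test": 18})

# Elevating the constraint ("build") raises output, and the constraint moves.
after = {**line, "build": 10}
elevated = system_throughput(after)

print(bottleneck(line), base)        # build 5
print(improved_non_constraint)       # 5
print(bottleneck(after), elevated)   # test 9
```

Once "build" is elevated, "test" becomes the new constraint, which is why the last step sends you back to step 1.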

2.

Serendipity and the Adjacent Possible - Why do several amazing ideas emerge at once? How can you foster serendipity in your work?

You need specialized abilities to reach the edge of what is possible, where you can pursue exciting tasks that will change the world. Few people do it since it takes a lot of hard work. You'll stand out if you do.

Most people simply lack the comfort with discomfort required to tackle really hard things. At some point, in other words, there’s no way getting around the necessity to clear your calendar, shut down your phone, and spend several hard days trying to make sense of the damn proof.

1.

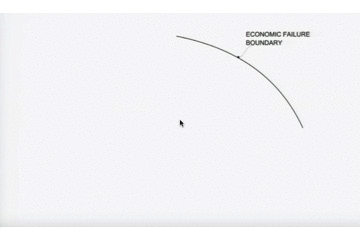

Boundaries of failure - Rasmussen's accident model.

Rasmussen modeled this. It has economic, workload, and performance boundaries.

The economic boundary is a company's profit zone. If the lights are on, you're within the economic boundaries, but there's pressure to cut costs and do more.

The workload boundary reflects system capacity. Taking shortcuts is a human desire to minimize work. This is often necessary to survive because there's always more work to do.

Both pressures push the operating point toward the boundary of acceptable performance, whether that means personal safety, process safety, or equipment performance.

If you cross the boundary of acceptable performance, the system pushes back, typically forcefully.

Entreprogrammer

3 years ago

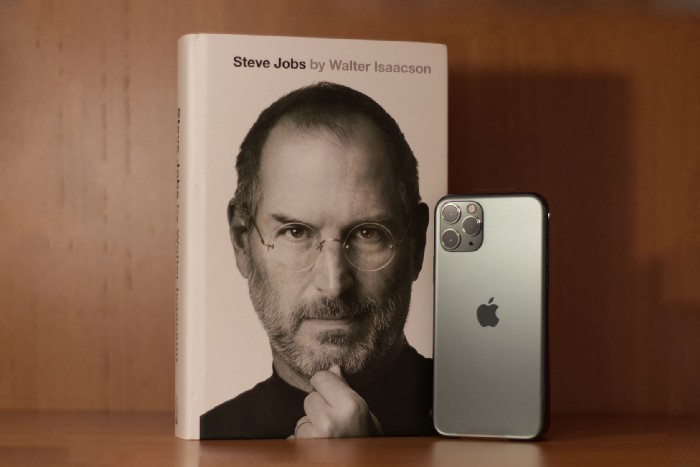

The Steve Jobs Formula: A Guide to Everything

A must-read for everyone

Jobs is well-known. You probably know the tall, thin guy who wore the same clothing every day. His influence is unavoidable. In fewer than 40 years, Jobs' innovations have impacted computers, movies, cellphones, music, and communication.

Steve Jobs may be more imaginative than the typical person, but if we can use some of his ingenuity, ambition, and good traits, we'll be successful. This essay explains how to follow his guidance and success secrets.

1. Repetition is necessary for success.

Be patient and diligent to master something. Practice makes perfect. This is why older workers are often more skilled.

When should you stop repeating a task? When you're confident and excited to share your product. That's when to stop tweaking and repeating.

Jobs stated he'd make the crowd sh** their pants with an iChat demo.

Use this in your daily life.

Start with the end in mind. You can put it in writing and be as detailed as you like with your plan's schedule and metrics. For instance, you have a goal of selling three coffee makers in a week.

Break it down. Split the goal into the specific tasks you must complete, and then repeat those tasks. To sell your coffee makers, you might need to make 50 phone calls.

Be mindful of the amount of work necessary to produce the desired results. Continue doing this until you are happy with your product.

2. Acquire the ability to add and subtract.

How did Picasso invent cubism? Pablo Picasso was influenced by stylised, non-naturalistic African masks that depict a human figure.

Artists create, and they constantly seek inspiration. They think creatively about random objects. Jobs said creativity is just connecting things. Creative people feel a little guilty when asked how they achieved something unique, because they didn't really do it all; they just saw something. They had mastered connecting and synthesizing experiences.

Use this in your daily life.

Use the note-taking app on your phone to write down what you want to learn. It may be a new language, calligraphy, or anything else that inspires or intrigues you.

Note any ideas you have, quotations, or any information that strikes you as important.

Most importantly, spend time with smart people. Jim Rohn, a well-known motivational speaker, has observed that we are the average of the five people with whom we spend the most time.

Learning alone won't get you very far. You need to put what you've learnt into practice. If you don't use your knowledge and skills, they are useless.

3. Develop the ability to refuse.

Steve Jobs deleted thousands of items when he created Apple's design ethic. Saying no to distractions meant upsetting customers and partners.

John Sculley, the former CEO of Apple, said something like this. According to Sculley, Steve’s methodology differs from others as he always believed that the most critical decisions are things you choose not to do.

Use this in your daily life.

Never be afraid to say "no," "I won't," or "I don't want to." Keep it simple. This method works well in some situations.

Offer an alternative. For instance: "I won't be able to do it, but X might be interested."

Control your top priority. Before saying yes to anything, make sure your work schedule and priority list are up to date.

4. Follow your passion

“Follow your passion” is the worst advice people can give you. Steve Jobs didn't start Apple because he suddenly loved computers. He wanted to help others attain their maximum potential.

Great things take a lot of work, so quitting makes sense if you're not passionate. Jobs learned from history that successful people were passionate about their work and persisted through challenges.

Use this in your daily life.

Don't dive into your passion all at once. Allow it to develop daily. Keep working at your 9-to-5 job while carefully gauging your level of desire and endurance. This approach carries less risk.

The truth is that if you decide to work on a project by yourself rather than in a group, it will take you years to complete it instead of a week. Instead, network with others who have interests in common.

Prepare a fallback strategy in case things go wrong.

Success: this small two-syllable word eventually gives your life meaning and perspective. What is success? For most, it's achieving their ambitions. However, there's a catch. Successful people aren't always happy.

Furthermore, where do people’s goals and achievements end? It’s a never-ending process. Success is a journey, not a destination. We wish you not to lose your way on this journey.

James White

3 years ago

Three Books That Can Change Your Life in a Day

I've summarized each.

Anne Lamott said books are important. Books help us understand ourselves and our behavior. They teach us about community, friendship, and death.

I read. One of my few life-changing habits. 100+ books a year improve my life. I'll list life-changing books you can read in a day. I hope you like them too.

Let's get started!

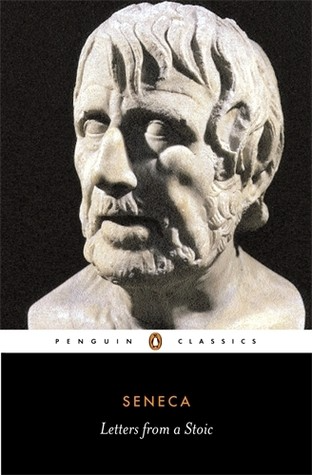

1) Seneca's Letters from a Stoic

One of my favorite philosophy books. Ryan Holiday, Naval Ravikant, and other prolific readers recommend it.

Seneca wrote 124 letters at the end of his life after working for Nero. Death, friendship, and virtue are discussed.

It's worth rereading. When I'm in trouble, I consult Seneca.

It's brief. The book could be read in one day. However, use it for guidance during difficult times.

My favorite book quotes:

Many men find that becoming wealthy only alters their problems rather than solving them.

You will never be poor if you live in harmony with nature; you will never be wealthy if you live according to what other people think.

We suffer more frequently in our imagination than in reality; there are more things that are likely to frighten us than to crush us.

2) Steven Pressfield's book The War of Art

I’ve read this book twice. I'll likely reread it before 2022 is over.

The War Of Art is the best productivity book. Steven offers procrastination-fighting tips.

Writers, musicians, and creative types will love The War of Art. Workplace procrastinators should also read this book.

My favorite book quotes:

The act of creation is what matters most in art. Other than sitting down and making an effort every day, nothing else matters.

Working creatively is not a selfish endeavor or an attempt by the actor to gain attention. It serves as a gift for all living things in the world. Don't steal your contribution from us. Give us everything you have.

Fear is healthy. Fear is a signal, just like self-doubt. Fear instructs us on what to do. The more terrified we are of a task or calling, the more certain we can be that we must complete it.

3) Darren Hardy's The Compound Effect

The Compound Effect offers practical tips to boost productivity by 10x.

The author believes each choice shapes your future. A slice of pizza may seem harmless. However, eating it daily increases heart disease risk.

Positive outcomes too. Daily gym visits improve fitness. Reading an hour each night can help you learn. Writing 1,000 words per day would allow you to write a novel in under a year.

Your daily choices compound like interest and shape your future. Thus, better habits can improve your life.
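The writing example is easy to check with a few lines of Python. The 1,000 words per day comes from the text; the 80,000-word novel length is an assumption added here for illustration, not a figure from the book.

```python
import math

WORDS_PER_DAY = 1_000   # from the text
NOVEL_WORDS = 80_000    # assumption: a rough novel length, not from the source

# Days needed to reach the assumed novel length at the daily pace.
days_to_draft = math.ceil(NOVEL_WORDS / WORDS_PER_DAY)

# Total output if the habit is kept up for a full year.
words_per_year = WORDS_PER_DAY * 365

print(days_to_draft, words_per_year)  # 80 365000
```

Under that assumption, a first draft takes about 80 days, comfortably under a year.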

My favorite book quotes:

Until you alter a daily habit, you cannot change your life. The key to your success can be found in the actions you take each day.

The hundreds, thousands, or millions of little things are what distinguish the ordinary from the extraordinary; it is not the big things that add up in the end.

Don't worry about willpower. Time to use why-power. Only when you relate your decisions to your aspirations and dreams will they have any real meaning. The decisions that are in line with what you define as your purpose, your core self, and your highest values are the wisest and most inspiring ones. To avoid giving up too easily, you must want something and understand why you want it.

You might also like

Enrique Dans

2 years ago

What happens when those without morals enter the economic world?

I apologize if this sounds basic, but throughout my career, I've always been clear that a company's activities are shaped by its founder(s)' morality.

I consider Palantir, owned by PayPal founder Peter Thiel, evil. He got $5 billion tax-free by exploiting a statute meant to help middle-class savers. That may appear clever, but I think it demonstrates a shocking lack of solidarity with society. As a result of this and other things he has said and done, I early on dismissed Peter Thiel as someone who could contribute anything positive to society, and events soon proved me right: we are talking about someone who clearly considers himself above everyone else and who does not hesitate to set up a company, Palantir, to exploit the data of the little people and sell it to the highest bidder, whoever that is and whatever the consequences.

The German courts have confirmed my warnings concerning Palantir. The problem is that politicians love its surveillance tools because they think knowing more about their constituents gives them power. These are ideal for dictatorships who want to snoop on their populace. Hence, Silicon Valley's triumphalist dialectic has seduced many governments at many levels and collected massive volumes of data to hold forever.

Dangerous company. There are many more. My analysis of the moral principles that disclose company management changed my opinion of Facebook, now Meta, and anyone with a modicum of interest might deduce when that happened, a discovery that leaves you dumbfounded. TikTok was easy because its lack of morality was revealed early when I saw the videos it encouraged minors to post and the repercussions of sharing them through its content recommendation algorithm. When you see something like this, nothing can convince you that the firm can change its morals and become good. Nothing. You know the company is awful and will fail. Speak it, announce it, and change it. It's like a fingerprint—unchangeable.

Some of you who read me frequently make "it's Facebook today" jokes when I write about these firms, and that's fine: they're my moral standards, those of an elderly professor with thirty-five years of experience studying corporations and discussing their cases in class, but you don't have to share them. Since I'm writing this and don't have to submit to any editorial review, that's what it is: when you continuously read a person, you have to assume that they have moral standards and that sometimes you'll agree with them and sometimes you won't. Morality accepts hierarchies, nuances, and even obsessions. I know not everyone shares my opinions, but at least I can voice them. One day, one of those firms may sue me (as record companies did some years ago).

Palantir is incredibly harmful. Limit its operations. Like Meta and TikTok, its business strategy is shaped by its founders' immorality. Such a procedure can never be beneficial.

Andy Raskin

3 years ago

I've Never Seen a Sales Deck This Good

It’s Zuora’s, and it’s brilliant. Here’s why.

My friend Tim got a sales position at a Series-C software company that garnered $60 million from A-list investors. He's one of the best salespeople I know, yet soon after starting he emailed me to say he was struggling.

Tim had landed a few modest clients. “Big companies ignore my pitch,” Tim said.

I love helping teams write the strategic story that drives sales, marketing, and fundraising. Tim and I had lunch at Amber India on Market Street to evaluate his deck.

After a feast, I asked Tim when prospects tune out.

“Several slides in,” he said.

Intent on maximizing dining ROI, Tim went back to the buffet for seconds. When he returned, I pulled out my laptop and launched into a Powerpoint presentation.

“What’s this?” Tim asked.

“This,” I said, “is the greatest sales deck I have ever seen.”

Five Essentials of a Great Sales Narrative

I showed Tim a sales deck from IPO-bound Zuora, which sells a SaaS platform for subscription billing. Zuora supports recurring payments (e.g. for enterprise software).

An ex-Zuora salesperson gave me the deck, saying it helped him close his largest deal. (I don't know anyone who works at Zuora. After reading this, a few Zuora employees contacted me.)

Tim abandoned his naan in a pool of goat curry and took notes while we discussed the Zuora deck.

We remarked how well the deck led prospects through five elements:

(The ex-Zuora salesperson begged me not to release the Zuora deck publicly. All of the images below originate from Zuora's website and SlideShare channel.)

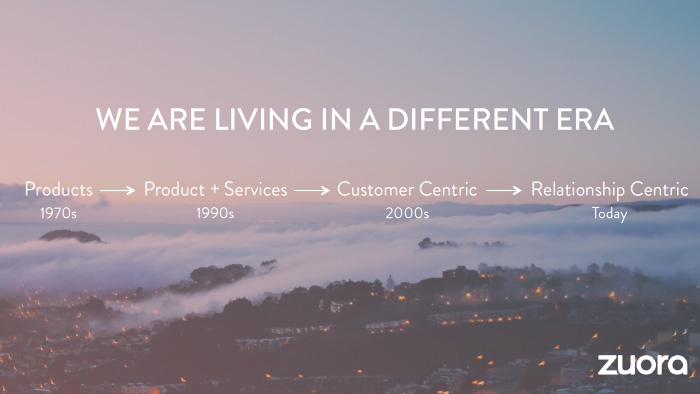

#1. Name a Significant Change in the World

Don't start a sales presentation by mentioning your product, headquarters, investors, clients, or yourself.

Name the world shift that raises enormous stakes and urgency for your prospect.

Every Zuora sales deck begins with this slide:

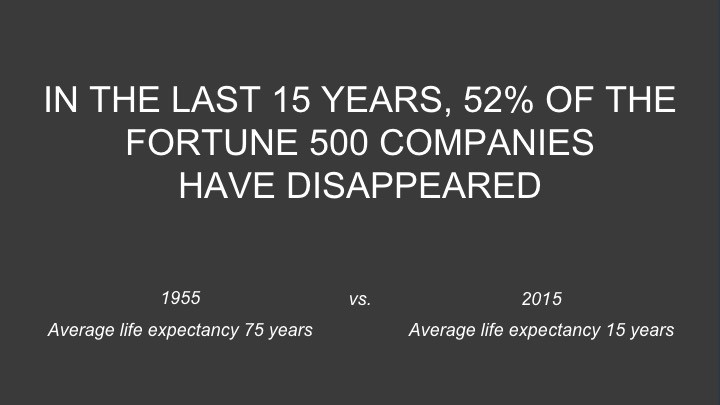

Zuora coined the term subscription economy to describe a new market where purchasers prefer regular service payments over outright purchases. Zuora then shows a slide with the change's history.

Most pitch advice recommends starting with the problem. When you assert a problem, you put prospects on the defensive. They may be unaware of the problem or uncomfortable admitting it.

When you highlight a global trend, prospects open up about how it affects them, worries them, and where they see opportunity. You capture their interest. Robert McKee says:

…what attracts human attention is change. …if the temperature around you changes, if the phone rings — that gets your attention. The way in which a story begins is a starting event that creates a moment of change.

#2. Show There’ll Be Winners and Losers

Loss aversion affects all prospects. They avoid a loss by sticking with the status quo rather than risking a gain by changing.

To fight loss aversion, show how the change will create winners and losers. You must show both:

that if the prospect can adapt to the change you named, the outcome will probably be quite favorable; and

that failing to do so is likely to have an unacceptable negative impact on the prospect's future.

Zuora shows a mass extinction among Fortune 500 firms.

…and then shows how the “winners” have shifted from product ownership to subscription services. Those include upstarts…

…as well as rejuvenated incumbents:

To illustrate, Zuora asks:

Winners utilize Zuora's subscription service models.

#3. Tease the Promised Land

It's tempting to get into product or service details now. Resist that urge.

Prospects won't understand why product/service details are crucial if you introduce them too soon, therefore they'll tune out.

Instead, offer a teaser vision of the happily-ever-after that your product/service will help the prospect reach.

Your Promised Land should be appealing and hard to achieve without support. Otherwise, why does your company exist?

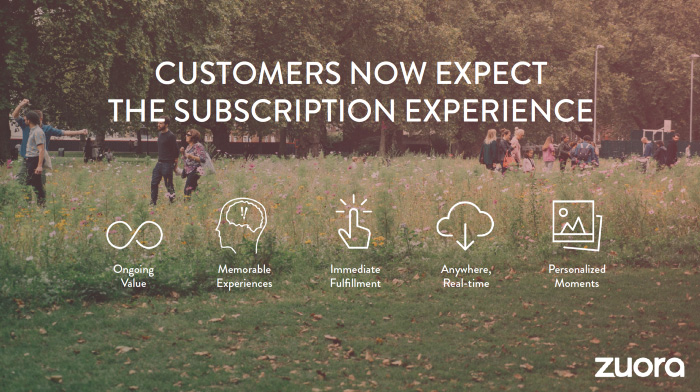

Zuora shows this Promised Land slide after explaining that the subscription economy will have winners and losers.

Not your product or service, but a new future state.

(I asked my friend Tim to describe his Promised Land, and he answered, "You’ll have the most innovative platform for ____." Nope: the Promised Land isn't possessing your technology, but living with it.)

Your Promised Land also helps prospects sell your solution to coworkers after your sales meeting. Their coworkers will ask what your company does, and you won't be in the room to answer. With a captivating Promised Land, your prospects are more likely to give a persuasive answer.

#4. Present Features as “Magic Gifts” for Overcoming Difficulties on the Road to the Promised Land

Successful sales decks follow the same format as epic films and fairy tales. Obi Wan gives Luke a lightsaber to help him destroy the Empire. You're Gandalf, helping Frodo destroy the ring. Your prospect is Cinderella, and you're her fairy godmother.

Position your product or service's capabilities as magic gifts that help your main character (the prospect) reach the Promised Land.

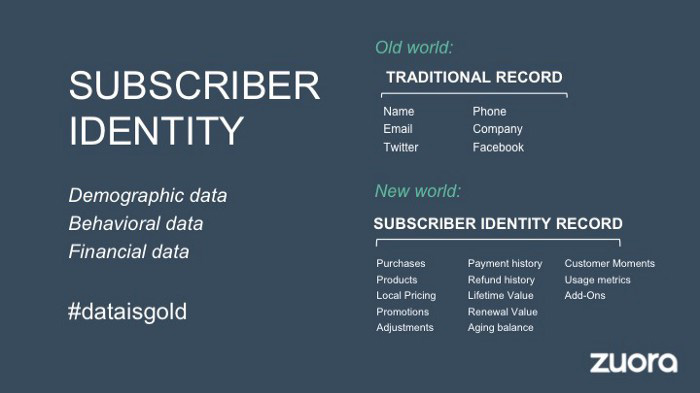

Zuora's client record slide is shown above. Without context, even the most technical prospect would be bored.

Positioned in the context of shifting from an “old” to a “new world”, it's the foundation for a compelling conversation with prospects—technical and otherwise—about why traditional solutions can't reach the Promised Land.

#5. Show Proof That You Can Make the Story True.

In this sense, you're promising prospects that if they follow you, they'll reach the Promised Land.

The journey to the Promised Land is by definition rocky, so prospects are right to be cautious. The final part of the pitch is proof that you can make the story come true.

The most convincing proof is a success story about how you assisted someone comparable to the prospect. Zuora's sales people use a deck of customer success stories, but this one gets the essence.

I particularly appreciate this one from an NCR exec (a Zuora customer), which relates more strongly to Zuora's Promised Land:

Not enough successful customers? Product demos are the next best evidence, but features should always be presented in the context of helping a prospect achieve the Promised Land.

The best sales narrative is one that is told by everyone.

Success rarely comes from a fantastic deck alone. To be effective, salespeople need an organization-wide story about change, Promised Land, and Magic Gifts.

Zuora exemplifies this. If you hear a Zuora executive, including CEO Tien Tzuo, talk, you'll likely hear about the subscription economy and its winners and losers. This is the theme of the company's marketing communications, campaigns, and vision statement.

According to the ex-Zuora salesperson, company-wide story alignment made him successful.

The Zuora marketing folks ran campaigns and branding around this shift to the subscription economy, and [CEO] Tien [Tzuo] talked it up all the time. All of that was like air cover for my in-person sales ground attack. By the time I arrived, prospects were already convinced they had to act. It was the closest thing I’ve ever experienced to sales nirvana.

The largest deal ever

Tim contacted me three weeks after our lunch to tell me that prospects at large organizations were responding well to his new deck, which we modeled on Zuora's framework. First, prospects revealed their obstacles more quickly. The new pitch engages CFOs and other top gatekeepers better, he said.

A week later, Tim emailed that he'd signed his company's biggest agreement.

Next week, we’re headed back to Amber India to celebrate.

Jenn Leach

3 years ago

I created a faceless TikTok account. Six months later.

Follower count, earnings, and more

I created my 7th TikTok account six months ago. TikTok's great. I've developed accounts for Amazon products, content creators/brand deals education, website flipping, and more.

Introverted or shy people use faceless TikTok accounts.

Maybe they don't want millions of people to see their face online, or they want to remain anonymous so relatives and friends can't locate them.

Going faceless on TikTok can help you grow a following, communicate your message, and make money online.

Here are 6 steps I took to turn my TikTok account into a $60,000/year side gig.

From nothing to $60K in 6 months

It's clickbait, but it’s true. Here’s what I did to get here.

Quick context:

I've used social media before. I've spent years as a social creator and brand.

I've built Instagram, TikTok, and YouTube accounts to nearly 100K.

How I did it

Step 1: Select a niche

If you can focus on one genre on TikTok, you'll have a better chance of success, although lifestyle creators do well too.

Niching down is easier, in my opinion.

Examples:

Travel

Food

Kids

Earning cash

Finance

You can narrow these niches if you like.

During the pandemic, a travel blogger focused on Texas-only tourism and gained 1 million subscribers.

Couponing might be a finance specialization.

One of my finance TikTok accounts gives credit tips and grants and has 23K followers.

Tons of ways you can get more specific.

Consider how you'll monetize your TikTok account. I've seen many enormous TikTok accounts that lose money.

Why?

They can't monetize their niche. Not impossible to commercialize, but tough enough to inhibit action.

First, determine your goal.

In this first step, consider what your end goal is.

Are you trying to promote your digital products or social media management services?

Do you want brand deals or e-commerce sales?

This will affect your TikTok specialty.

This is the first step to a TikTok side gig.

Step 2: Pick a content style

Next, you want to decide on your content style.

Do you do voiceover and screenshots?

Will you demonstrate a product?

Will you faceless vlog?

Step 3: Look at the competition

Find anonymous accounts and analyze what content works, where they thrive, what their audience wants, etc.

This can help you make better content.

Like the skyscraper method for TikTok.

Step 4: Create a content strategy.

Your content plan is where you sit down and decide:

How many videos will you produce each day or each week?

Which links will you highlight in your biography?

What amount of time can you commit to this project?

You can schedule when to post videos on a calendar.

Step 5: Create videos

No video gear needed.

Using a phone is OK, and I think it's preferable to posting drafts from a computer.

TikTok prefers genuine material.

Use their app, tools, filters, and music to make videos.

And imperfection is preferable. TikTokers like to see videos made in a bedroom, not a film studio.

Make sense?

When making videos, remember this.

I personally use my phone and tablet.

Step 6: Monetize

Lastly, it’s time to monetize. How will you make money? You decided this in step 1.

Time to act!

For brand deals

Include your email in the bio.

Use a Beacons link in your bio to share several sites.

Make cold calls to your favorite companies to get them to join you in a TikTok campaign.

For e-commerce

Include a link to your store's or a product's page in your bio.

For client work

Include your email in the bio.

Use a Beacons link to showcase your personal website, portfolio, and other resources.

For affiliate marketing

Include affiliate product links in your bio.

Join the Amazon Influencer program and provide a link to your storefront in your bio.

$60,000 per year from TikTok?

Yes, and some creators make much more.

Tori Dunlap (herfirst100K) makes $100,000/month on TikTok.

My TikTok adventure took 6 months, but by month 2 I was making $1,000/month (or $12K/year).

By year's end, I want this account to earn $100K/year.

Imagine if my 7 TikTok accounts made $100K/year.

7 TikTok accounts × $100K/year = $700,000/year
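The figures above are simple annualizations, which a short Python sketch can verify. The dollar amounts come from the text; the helper name `annualize` is made up for illustration, and the even split across 12 months is an assumption.

```python
def annualize(monthly_income: int) -> int:
    # Project a monthly figure over 12 months, assuming it stays flat.
    return monthly_income * 12


month_two_run_rate = annualize(1_000)   # $1,000/month by month 2
monthly_at_60k = 60_000 / 12            # what $60K/year means per month
seven_accounts = 7 * 100_000            # the 7-account scenario

print(month_two_run_rate, monthly_at_60k, seven_accounts)  # 12000 5000.0 700000
```

So the $60K/year figure corresponds to roughly $5,000 per month, five times the month-2 run rate.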