More on Personal Growth

Alexander Nguyen

3 years ago

How can you bargain for $300,000 at Google?

Don’t give a number

Google pays its software engineers generously. While many of its employees are competent, they neglect a skill that is critical to maximizing their pay.

Negotiation.

If Google employees have never negotiated, they're as helpless as anyone else.

In this piece, I'll reveal a compensation negotiation tip that will set you apart.

The Fallacy of Negotiating

How do you negotiate your salary? “Just give them a number twice the amount you really want”. - Someplace on the internet

Above is typical negotiation advice. If you ask for more than you want, the recruiter may meet you halfway.

It seems logical and great, but here's why you shouldn't follow that advice.

Haitian hostage rescue

In 1977, an official's aunt was kidnapped in Haiti. The kidnappers demanded $150,000 for the aunt's life. It seems reasonable until you realize why kidnappers want $150,000.

FBI agent and hostage negotiator Chris Voss looked into why they demanded so much.

“So they could party through the weekend”

When he realized the ransom was for partying, he offered $4,751 and a CD stereo. The kidnappers freed the aunt.

These kidnappers demanded 31.57x the amount they eventually accepted, so they walked away with a fraction of their ask. You shouldn't trust them to negotiate your compensation.
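The 31.57x figure follows directly from the two amounts quoted in the story. A quick check in Python (the variable names are illustrative, and the CD stereo is left out because the story gives no value for it):

```python
# Amounts taken from the story above; variable names are illustrative.
ransom_demand = 150_000   # what the kidnappers asked for, in dollars
cash_settlement = 4_751   # what they accepted in cash (CD stereo not counted)

# How many times larger the demand was than the settlement.
demand_multiple = ransom_demand / cash_settlement

# The settlement expressed as a share of the original demand.
settlement_share = cash_settlement / ransom_demand

print(f"Demand was {demand_multiple:.2f}x the settlement")
print(f"Settlement was {settlement_share:.1%} of the demand")
```

Seen from the other side, the cash settlement was only about 3% of the opening demand.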

What happened?

Negotiating your offer and Haiti

This story shows what happens when you open a negotiation with a large number.

You can and will be talked down.

If a recruiter asks your wage expectation and you offer double, be ready to explain why.

If you can't justify your request, you may be offered less. The recruiter will notice and talk you down.

A reasonable request, by contrast, is one of the following:

a tiny bit more than the present amount you earn

a small premium over an alternative offer

a little less than the role's allotted amount

Real-World Illustration

Recruiter: What’s your expected salary?

Candidate: (I know the role is usually $100,000) $200,000

Recruiter: How much are you compensated in your current role?

Candidate: $90,000

Recruiter: We’d be excited to offer you $95,000 for your experience in the role.

So Why Do They Even Ask?

Recruiters ask for a number so they can negotiate a lower one. Naming a number yourself limits you.

You'll rarely get more than you asked for, and your request can be lowered.

The takeaway from all of this is to never give an expected compensation.

Tell them you hadn't thought about it when you applied.

John Rampton

3 years ago

Ideas for Samples of Retirement Letters

Ready to quit full-time? No worries.

Baby Boomer retirement has accelerated since COVID-19 began. In 2020, 29 million boomers had retired, over 3 million more than in 2019. 75 million Baby Boomers will retire by 2030.

To enjoy retirement, you first have to leave your job. Leave a professional legacy, and your retirement will start well. It all starts with a retirement letter.

Retirement Letter

Retirement letters are formal resignation letters. Unlike other resignation letters, they don't tell your employer you're leaving for another job. Instead, you're leaving the workforce.

Since you're not departing over grievances or for a better position or higher income, you may usually terminate the relationship amicably. Consulting opportunities are possible.

Thank your employer for their support and give them transition information.

Retirement letters aren't merely a formality. They also set in motion the handling of your final wages, insurance, and retirement benefits.

Retirement letters often accompany verbal notices to managers. Schedule a meeting before submitting your retirement letter to discuss your plans. The letter will be stored alongside your start date, salary, and benefits in your employee file.

Retirement is typically well-planned. Employers want 6-12 months' notice.

Summary

Guidelines for Giving Retirement Notice

Components of a Successful Retirement Letter

Template for Retirement Letter

Ideas for Samples of Retirement Letters

First Example of Retirement Letter

Second Example of Retirement Letter

Third Example of Retirement Letter

Fourth Example of Retirement Letter

Fifth Example of Retirement Letter

Sixth Example of Retirement Letter

Seventh Example of Retirement Letter

Eighth Example of Retirement Letter

Ninth Example of Retirement Letter

Tenth Example of Retirement Letter

Frequently Asked Questions

1. What is a letter of retirement?

2. Why should you include a letter of retirement?

3. What information ought to be in your retirement letter?

4. Must I provide notice?

5. What is the ideal retirement age?

Guidelines for Giving Retirement Notice

While starting a new phase, you're also leaving a job you were qualified for. You have years of experience. So, it may not be easy to fill a retirement-related vacancy.

Talk to your boss in person before sending a letter. Notice is always appreciated. Properly announcing your retirement helps you and your organization transition.

How to announce retirement:

Learn about the retirement perks and policies offered by the company. The first step in figuring out whether you're eligible for retirement benefits is to research your company's retirement policy.

Don't depart without providing adequate notice. You should give the business plenty of time to replace you if you want to retire in a few months.

Help the transition by offering aid. You could be a useful resource if your replacement needs training.

Contact the appropriate parties. The original copy should go to your boss. Give a copy to HR because they will manage your 401(k), pension, and health insurance.

Investigate the option of working as a consultant or part-time. If you desire, you can continue doing some limited work for the business.

Be nice to others. Describe your achievements and appreciation. Additionally, express your gratitude for the chance to work with such excellent coworkers.

Make a plan for your future move. Simply updating your employer on your goals will help you maintain a good working relationship.

Use a formal letter or email to formalize your plans. The initial step is to speak with your supervisor and HR in person, but you must also give written notice.

Components of a Successful Retirement Letter

To write a good retirement letter, keep in mind the following:

A formal salutation. Here, the voice should be deliberate, succinct, and authoritative.

Be specific about your intentions. The key idea of your retirement letter is resignation. Your decision to depart at this time should be reflected in your letter. Remember that your intention must be clear-cut.

Your last day. This information must be in resignation letters. Laws and corporate policies may both stipulate a minimum amount of notice.

A kind voice. Your retirement letter shouldn't contain any resentments, insults, or other unpleasantness. Your letter should be a model of professionalism and grace. A straightforward thank you is a terrific approach to accomplish that.

Your ultimate goal. Chaos may start to happen as soon as you turn in your resignation letter. Your position will need to be filled. Additionally, you will have to perform your obligations up until a successor is found. Your availability during the interim period should be stated in your resignation letter.

A way to reach you. Even if you aren't consulting, your company will probably get in touch with you at some point. They might send you tax documents and details on benefits. By giving your contact information, you can make this process easier.

Template for Retirement Letter

Your name

Title you held

Address

Supervisor's name

Supervisor’s position

Company name

HQ address

Date

[SUPERVISOR],

1. State that you're retiring. Include your last day of work.

2. Thank your employer. Mention what you're thankful for. Describe your accomplishments and successes.

3. Offer to help with the transition. Outline your retirement plans. Mention any interest in consulting.

Sincerely,

[Signature]

First and last name

Phone number

Personal Email

Ideas for Samples of Retirement Letters

First Example of Retirement Letter

Martin D. Carey

123 Fleming St

Bloomfield, New Jersey 07003

(555) 555-1234

June 6th, 2022

Willie E. Coyote

President

Acme Co

321 Anvil Ave

Fairfield, New Jersey 07004

Dear Mr. Coyote,

This letter notifies Acme Co. of my retirement on August 31, 2022.

There has been no other organization that has given me that sense of belonging and purpose.

My fifteen years at the helm of the Structural Design Division have given me a strong sense of purpose. I’ve been fortunate to have your support, and I’ll always be grateful for the opportunity you offered me.

I had a difficult time making this decision. As a result of finding a small property in Arizona where we will be able to spend our remaining days together, my wife and I have decided to officially retire.

In spite of my regret at no longer being able to contribute to the firm we’ve built, I believe it is wise to move on.

My heart will always belong to Acme Co. Thank you for the opportunity and best of luck in the years to come.

Sincerely,

Martin D. Carey

Second Example of Retirement Letter

Gustavo Fring

Los Pollos Hermanos

12000–12100 Coors Rd SW,

Albuquerque, New Mexico 87045

Dear Mr. Fring,

I write this letter to announce my formal retirement from Los Pollos Hermanos as manager, effective October 15.

As an employee at Los Pollos Hermanos, I appreciate all the great opportunities you have given me. It has been a pleasure to work with and learn from my colleagues for the past 10 years, and I am looking forward to my next challenge.

If there is anything I can do to assist during this time, please let me know.

Sincerely,

Linda T. Crespo

Third Example of Retirement Letter

William M. Arviso

4387 Parkview Drive

Tustin, CA 92680

May 2, 2023

Tony Stark

Owner

Stark Industries

200 Industrial Avenue

Long Beach, CA 90803

Dear Tony:

I’m writing to inform you that my final day of work at Stark Industries will be May 14, 2023. When that time comes, I intend to retire.

As I embark on this new chapter in my life, I would like to thank you and the entire Stark Industries team for providing me with so many opportunities. You have all been a pleasure to work with and I will miss you all when I retire.

I am glad to assist you with the transition in any way I can to ensure your new hire has a seamless experience. All ongoing projects will be completed until my retirement date, and all key information will be handed over to the team.

Once again, thank you for the opportunity to be part of the Stark Industries team. All the best to you and the team in the days to come.

Please do not hesitate to contact me if you require any additional information. In order to finalize my retirement plans, I’ll meet with HR and can provide any details that may be necessary.

Sincerely,

(Signature)

William M. Arviso

Fourth Example of Retirement Letter

Garcia, Barbara

5432 First Street

New York City, NY 10001

(1234) (555) 123–1234

1 October 2022

Gunther

Owner

Central Perk

199 Lafayette St.

New York City, NY 10001

Mr. Gunther,

The day has finally arrived. Although I never imagined this day would come, I will be formally retiring from Central Perk on November 1st, 2022.

Considering how satisfied I am with my current position, this may surprise you. My health has deteriorated, however, so I think this is the right time to retire.

There is no doubt that the past two decades have been wonderful. Over the years, I have seen a small coffee shop grow into one of the city’s top destinations.

It will be hard for me to leave this firm without wondering what more success we could have achieved. But I’m confident that you and the rest of the Central Perk team will achieve great things.

My family and I will never forget what you’ve done for us, and I am grateful for the chance you’ve given me. My house is always open to you.

Sincerely yours,

Garcia, Barbara

Fifth Example of Retirement Letter

Pat Williams

618 Spooky Place

Monstropolis, 23221

123–555–0031

pwilliams@email.com

Feb. 16, 2022

Mike Wazowski

Co-CEO

Monsters, Inc.

324 Scare Road

Monstropolis

Dear Mr. Wazowski,

As a formal notice of my upcoming retirement, I am submitting this letter. I will be leaving Monsters, Inc. on April 13.

These past 10 years as a marketing associate have provided me with many opportunities. Since we started our company a decade ago, we have seen the face of harnessing screams change dramatically into harnessing laughter. During my time working with this dynamic marketing team, I learned a lot about customer behavior and marketing strategies. Working closely with some of our long-standing clients, such as Boo, was a particular pleasure.

I would be happy to assist with the transition following my retirement. It would be my pleasure to assist in the hiring or training of my replacement. Although I plan to spend more time with my family, I will also be available for part-time consulting.

After I retire, I plan to cash out the eight unused vacation days I’ve accumulated and take my pension as a lump sum.

Thank you for the opportunity to work with Monsters, Inc. In the years to come, I wish you all the best!

Sincerely,

Pat Williams

Sixth Example of Retirement Letter

Dear Michael,

In my tenure at Dunder Mifflin Paper Company, I have given everything I had. It has been an honor to work here. But I have decided to retire from my position and move on to new challenges: mainly bears, beets, and Battlestar Galactica.

I appreciate the opportunity to work here and learn so much. I will always remember the good times and memories we shared during my time at this company. Wishing you all the best in the future.

Sincerely,

Dwight K. Schrute

Your signature

May 16

Seventh Example of Retirement Letter

Greetings, Bill

I am announcing my retirement from Initech, effective March 15, 2023.

Over the course of my career here, I’ve had the privilege of working with so many talented and inspiring people.

In 1999, when I began working as a customer service representative, we were a small organization located in a remote office park.

The fact that we now occupy a floor of the Main Street office building with over 150 employees continues to amaze me.

Although I will be sad to leave, I am looking forward to spending more time with family and traveling the country in our RV.

Please let me know if there are any extra steps I can take to facilitate this transfer.

Sincerely,

Frankin, Renita

Eighth Example of Retirement Letter

Bruce,

Please accept my resignation from Wayne Enterprises as Marketing Communications Director. My last day will be August 1, 2022.

The decision to retire has been made after much deliberation. Now that I have worked in the field for forty years, I believe it is a good time to begin completing my bucket list.

It was not easy for me to decide to leave the company. Having worked at Wayne Enterprises has been rewarding both professionally and personally. There are still a lot of memories associated with my first day as a college intern.

My intention was not to remain with such an innovative company, as you know. I was able to see the big picture with your help, however. Today, we are a force that is recognized both nationally and internationally.

In addition to your guidance, the company's bold, visionary leadership contributed to our growth.

My departure from the company coincides with a particularly hectic time. Despite my best efforts, I am unable to postpone my exit.

My position would be well served by an internal solution. I have a more than qualified marketing manager in Caroline Crown. It would be a pleasure to speak with you about this.

In case I can be of assistance during the transition, please let me know. You can reach me at (555)555–5555. Until my last day, I will make sure all work is completed to Wayne Enterprises’ stringent requirements. Having the opportunity to work with you has been a pleasure. I wish you continued success with your thriving business.

Sincerely,

Cash, Cole

Marketing/Communications

Ninth Example of Retirement Letter

Norman, Jamie

2366 Hanover Street

Whitestone, NY 11357

555–555–5555

15 October 2022

Mr. Lippman

Head of Pendant Publishing

600 Madison Ave.

New York, New York

Respected Mr. Lippman,

Please accept my resignation effective November 1, 2022.

Over the course of my ten years at Pendant Publishing, I’ve had a great deal of fun and I’m quite grateful for all the assistance I’ve received.

It was a pleasure to wake up and go to work every day because of our outstanding corporate culture and the opportunities for promotion and professional advancement available to me.

While I am excited about retiring, I am going to miss being part of our team. It’s my hope that I’ll be able to maintain the friendships I’ve formed here for a long time to come.

In case I can be of assistance prior to or following my departure, please let me know. If I can assist in any way to ensure a smooth transfer to my successor, I would be delighted to do so.

Sincerely,

Signed (hard copy letter)

Norman, Jamie

Tenth Example of Retirement Letter

17 January 2023

Greg S. Jackson

Cyberdyne Systems

18144 El Camino Real,

Sunnyvale, CA

Respected Mrs. Duncan,

I am writing to inform you that I will be resigning from Cyberdyne Systems as of March 1, 2023. I’m grateful to have had this opportunity, and it was a difficult decision to make.

My development as a programmer and as a more seasoned member of the organization has been greatly assisted by your coaching.

I have been proud of Cyberdyne Systems’ ethics and success throughout my 25 years at the company, starting as a mailroom clerk and now serving as head programmer.

The portfolios of our clients have always been handled with the greatest care by my colleagues. It is our employees and services that have made Cyberdyne Systems the success it is today.

During my tenure as head of my division, I’ve increased our overall productivity by 800 percent, and I expect that trend to continue after I retire.

In light of the fact that the process of replacing me may take some time, I would like to offer my assistance in any way I can.

The greatest contender for this job is Troy Ledford, my current assistant.

Also, before I leave, I would be willing to teach any partners how to use the program I developed to track and manage the development of Skynet.

Over the next few months, I’ll be enjoying vacations with my wife as well as helping my granddaughter move to college.

If Cyberdyne Systems has any openings for consultants, please let me know. It has been a pleasure working with you over the last 25 years. I appreciate your concern and care.

Sincerely,

Greg S. Jackson

Questions and Answers

1. What is a letter of retirement?

Retirement letters tell your supervisor you're retiring. Like a resignation letter, a retirement letter informs your employer that you're departing. It also requests your retirement benefits.

Retirement letters usually accompany verbal notice to a supervisor. Before submitting your retirement letter, meet to discuss your plans. This letter will be filed with your start date, salary, and benefits.

2. Why should you include a letter of retirement?

Your retirement letter should explain why you're leaving. When you quit, your manager and HR department usually know. Regardless, a retirement letter can help you leave on a positive note. It also ensures they have the necessary paperwork.

In your retirement letter, you tell the firm your plans so they can find your replacement. You may need to stay in touch with your company after sending your retirement letter until a successor is identified.

3. What information ought to be in your retirement letter?

Format it like an official letter. Include your retirement plans and retirement-specific details. The date may be the most essential.

In some circumstances, benefits depend on when you resign and retire. A date on the letter helps HR or senior management verify when you gave notice and how long.

In addition to your usual salutation, address your letter to your manager or supervisor.

The letter's body should include your retirement date and transition arrangements. Tell them whether you plan to help with the transition or train a new employee. You may have a three-month time limit.

Tell your employer your job title, how long you've worked there, and your biggest successes. Personalize your letter by expressing gratitude for your career and outlining your retirement intentions. Finally, include your contact info.

4. Must I provide notice?

Two weeks' notice isn't legally required, although your company may require it. Some state laws contain exceptions.

Check your contract, company handbook, or HR to determine your retirement notice. Resigning may change the policy.

Regardless of your company's policy, giving notice is standard. Entry-level or junior positions can usually be refilled quickly.

Middle managers, high-level personnel, and specialists may take months to replace. Two weeks' notice is a courtesy. Start planning months ahead.

You can finish all outstanding tasks during that period. Prepare transition documents for coworkers and your replacement.

5. What is the ideal retirement age?

It depends on your finances, state, and retirement plan. The average American retires at 62. The average retirement age is 66, according to Gallup's 2021 Economy and Personal Finance Survey.

Remember:

Before the age of 59 1/2, withdrawals from pre-tax retirement accounts, such as 401(k)s and IRAs, are subject to a penalty.

Benefits from Social Security can be accessed as early as age 62.

Medicare isn't available to you until you're 65.

Depending on the year of your birth, your Full Retirement Age (FRA) will be between 66 and 67 years old.

If you haven't claimed them already, your Social Security benefits increase by 8% annually between your FRA and age 70.
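As a rough illustration of the delayed-claiming point, the sketch below assumes a simple (non-compounding) 8% credit for each full year you wait past your FRA, with credits stopping at age 70, which is how the credit works for people born in 1943 or later. The function name and the $2,000 monthly benefit are hypothetical; check your own figures with the Social Security Administration.

```python
def delayed_benefit(monthly_at_fra: float, fra: int, claim_age: int,
                    yearly_credit: float = 0.08, max_age: int = 70) -> float:
    """Estimate the monthly benefit when claiming after Full Retirement Age."""
    # Credits stop accruing at max_age, and none apply before FRA.
    years_delayed = max(0, min(claim_age, max_age) - fra)
    # Simple (not compounded) credit per full year of delay.
    return monthly_at_fra * (1 + yearly_credit * years_delayed)


# Hypothetical example: $2,000 per month at an FRA of 67.
for age in (67, 68, 70, 72):
    print(age, round(delayed_benefit(2000, fra=67, claim_age=age), 2))
```

In this example, waiting from 67 to 70 raises the monthly amount by 24%, and waiting past 70 adds nothing further.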

Leon Ho

3 years ago

Digital Brainbuilding (Your Second Brain)

The human brain is amazing. As more scientists examine the brain, we learn how much it can store.

The human brain has about 1 billion neurons, according to Scientific American. Each neuron forms about 1,000 connections, totaling over a trillion. If each neuron could store only one memory, we'd run out of room. [1]

What if you could store and access more info, freeing up brain space for problem-solving and creativity?

Build a second brain (what I refer to as a Digital Brain) to keep up with growing knowledge. Managing information effectively starts with realizing you can't recall everything.

Every action requires information. You need the correct information to learn a new skill, complete a project at work, or establish a business. You must manage information properly to advance your profession and improve your life.

Here is how to construct a second brain to organize information and achieve your goals.

What Is a Second Brain?

How often do you forget an article or book's key point? Have you ever wasted hours looking for a saved file?

If so, you're not alone. Information overload affects millions of individuals worldwide. Information overload drains mental resources and causes anxiety.

This is when the second brain comes in.

Building a second brain doesn't involve duplicating the human brain. It means building a system that captures, organizes, retrieves, and archives ideas and thoughts. The second brain improves memory, organization, and recall.

Digital tools are preferable to analog for building a second brain.

Digital tools are portable and accessible. Due to these benefits, we'll focus on digital second-brain building.

Brainware

A Digital Brain is like an external hard drive: it stores, organizes, and retrieves information. This means improving your memory won't be difficult.

Memory has three components in computing:

Recording — storing the information

Organization — archiving it in a logical manner

Recall — retrieving it again when you need it

For example:

Due to rigorous security settings, many websites need you to create complicated passwords with special characters.

You must now memorize (Record), organize (Organize), and input this new password the next time you log in (Recall).

Even in this simple example, there are many pieces to remember. We can't recognize this new password with our usual patterns. If we don't use the password every day, we'll forget it. You'll type the wrong password when you try to remember it.

It's common. Is it because the information is complicated? Nope. Passwords are basically letters, numbers, and symbols.

It happens because our brains aren't meant to memorize these. Digital Brains can do heavy lifting.

Why You Need a Digital Brain

Two brains are better than one. The brain you were born with is limited.

The cerebral cortex has 125 trillion synapses, according to a Stanford study. The human brain can hold the equivalent of 2.5 million gigabytes of digital data. [2]

Building a second brain improves learning and memory.

Learn and store information effectively

Faster information recall

Organize information to see connections and patterns

Build a Digital Brain to learn more and reach your goals faster. Building a second brain requires time and work, but you'll have more time for vital undertakings.

Why you need a Digital Brain:

1. Use Brainpower Effectively

Your brain has boundaries, like any organ. This is true while solving a complex question or activity. If you can't focus on a work project, you won't finish it on time.

A second brain reduces distractions. A robust structure helps you handle complicated challenges quickly and stay on track. Without distractions, it's easy to focus on vital activities.

2. Staying Organized

Professional and personal duties must be balanced. With so much to do, it's easy to neglect crucial duties. This is especially true for skill-building. A Digital Brain will keep you organized and stress-free.

Life success requires action. Organized people get things done. Organizing your information will give you time for crucial tasks.

You'll finish projects faster with good materials and methods. As you succeed, you'll gain creative confidence. You can then tackle greater jobs.

3. Creativity Process

Creativity drives today's world. Creativity is mysterious and surprising for millions worldwide. Immersing yourself in others' associations, triggers, thoughts, and ideas can generate inspiration and creativity.

Building a second brain is crucial to establishing your creative process and building habits that will help you reach your goals. Creativity doesn't require perfection or overthinking.

4. Transforming Your Knowledge Into Opportunities

This is the age of entrepreneurship. Today, you can publish online, build an audience, and make money.

Whether it's a business or a hobby, you'll have several career options. Your knowledge can boost your income through ideas and insights.

5. Improving Thinking and Uncovering Connections

Modern career success depends on how you think. Instead of overthinking or perfecting, collect the best images, stories, metaphors, anecdotes, and observations.

This will increase your creativity and reveal connections. Increasing your imagination can help you achieve your goals, according to research. [3]

Your ability to recognize trends will help you stay ahead of the pack.

6. Credibility for a New Job or Business

Your main asset is experience-based expertise. Others won't be able to learn without your help. Technology makes knowledge tangible.

This lets you use your time as you choose while helping others. Changing professions or establishing a new business become learning opportunities when you have a Digital Brain.

7. Using Learning Resources

Millions of people use internet learning materials to improve their lives. Online resources abound. These include books, forums, podcasts, articles, and webinars.

These resources are mostly free or inexpensive. Organizing your knowledge can save you time and money. Building a Digital Brain helps you learn faster. You'll make rapid progress by enjoying learning.

How does a second brain feel?

A Digital Brain has helped me organize my work and family life for years.

No need to remember 1001 passwords. I never forget anything on my wife's grocery lists. Never miss a meeting. I can access essential information and papers anytime, anywhere.

Delegating memory to a second brain reduces tension and anxiety because you'll know what to do with every piece of information.

No information will be forgotten, boosting your confidence. Better manage your fears and concerns by writing them down and establishing a strategy. You'll understand the plethora of daily information and have a clear head.

How to Develop Your Digital Brain (Your Second Brain)

It's cheap but requires work.

Digital Brain development requires:

Recording — storing the information

Organization — archiving it in a logical manner

Recall — retrieving it again when you need it
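The three requirements above can be sketched as a tiny in-memory note store. This is only an illustration of the record, organize, and recall steps, not a replacement for a real note-taking tool; the `DigitalBrain` class, its method names, and the sample notes are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class DigitalBrain:
    """Hypothetical minimal note store: record, organize, recall."""
    notes: list = field(default_factory=list)
    by_tag: dict = field(default_factory=lambda: defaultdict(list))

    def record(self, text: str, tags=()) -> int:
        """Recording: store the note and return its id."""
        note_id = len(self.notes)
        self.notes.append(text)
        self.organize(note_id, tags)
        return note_id

    def organize(self, note_id: int, tags) -> None:
        """Organization: file the note under each (lowercased) tag."""
        for tag in tags:
            self.by_tag[tag.lower()].append(note_id)

    def recall(self, query: str) -> list:
        """Recall: return notes whose tag or text matches the query."""
        query = query.lower()
        ids = set(self.by_tag.get(query, []))
        ids.update(i for i, text in enumerate(self.notes)
                   if query in text.lower())
        return [self.notes[i] for i in sorted(ids)]


brain = DigitalBrain()
brain.record("Renew passport before June", tags=["todo"])
brain.record("Article: networking tips for web marketing", tags=["marketing"])
print(brain.recall("marketing"))
```

Recall here checks both the tags and the note text, so a note can be found even if it was never filed under the word you search for.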

1. Decide what information matters before recording.

To succeed in today's environment, you must manage massive amounts of data. Articles, books, webinars, podcasts, emails, and texts provide value. Remembering everything is impossible and overwhelming.

What information do you need to achieve your goals?

You must consolidate ideas and create a strategy to reach your aims. Your biological brain can imagine and create with a Digital Brain.

2. Use the Right Tool

We usually record information without any preparation - we brainstorm in a word processor, email ourselves a message, or take notes while reading.

This information isn't used. You must store information in a central location.

Different information needs different instruments.

Evernote is a top note-taking program. Audio clips, Slack chats, PDFs, text notes, photos, scanned handwritten pages, emails, and webpages can be added.

Pocket is a great app for saving and organizing content. Images, videos, and text can be sorted, and its design is optimized for the web.

Calendar apps help you manage your time and enhance your productivity by reminding you of your most important tasks. Calendar apps abound. The best ones are easy to use, have many features, and work across devices. Examples include Google Calendar, Apple Calendar, and Outlook.

To-do list/checklist apps are useful for managing tasks. Ease of use, versatility, budget, and cross-platform compatibility are important when picking to-do list apps. Google Keep, Google Tasks, and Apple Notes are good to-do apps.

3. Organize data for easy retrieval

How should you organize collected data?

When you collect and organize data, you'll see connections. An article about networking can help you understand web marketing. Saved business cards can help you find new clients.

Choosing the correct tools helps organize data. Here are some tool selection criteria:

Can the tool sync across devices?

Is it for personal or team use?

Does it have a search function for easy information retrieval?

Does it provide easy data categorization?

Can users create lists or collections?

Does it offer easy idea-information connections?

Does it support mind mapping and visual organization of thoughts?

Conclusion

Building a Digital Brain (second brain) helps us save information, think creatively, and implement ideas. Your second brain is an extension of your biological brain. It guards against forgetting, allowing you to tackle bigger creative challenges.

People who love learning often consume information without using it. Every day, they postpone life-improving experiences until they're forgotten. Useful information becomes strength.

Reference

[1] ^ Scientific American: What Is the Memory Capacity of the Human Brain?

[2] ^ Clinical Neurology Specialists: What is the Memory Capacity of a Human Brain?

[3] ^ National Library of Medicine: Imagining Success: Multiple Achievement Goals and the Effectiveness of Imagery

You might also like

Antonio Neto

3 years ago

Should you skip the minimum viable product?

Are MVPs outdated and have no place in modern product culture?

Frank Robinson coined "MVP" in 2001, the same year as the Agile Manifesto and the first Scrum experiment. MVPs are old.

The concept was created to solve the waterfall problem at the time.

The market was still sour from the dot-com bubble. The tech industry needed a new approach. Product and Agile gained popularity because they weren't waterfall.

More than 20 years later, waterfall is dead as dead can be, but we are still talking about MVPs. Does that make sense?

What is an MVP?

Minimum viable product. You probably know that, so I'll be brief:

[…] The MVP fits your company and customer. It's big enough to cause adoption, satisfaction, and sales, but not bloated and risky. It's the product with the highest ROI/risk. […] — Frank Robinson, SyncDev

MVP is a complete product. It's not a prototype. It's your product's first iteration, which you'll improve. It must drive sales and be user-friendly.

At the MVP stage, you should know your product's core value, audience, and price. We are way deep into early adoption territory.

What about all the things that come before?

Modern product discovery

Eric Ries popularized the term with The Lean Startup in 2011. (Ries had been working with the concept since 2008, but wide adoption came only after the book was released.)

Ries' definition of MVP was similar to Robinson's: "test the market" before releasing anything. Unlike Robinson, Ries never mentioned money. His MVP's goal was learning.

“Remove any feature, process, or effort that doesn't directly contribute to learning” — Eric Ries, The Lean Startup

Product has since become more about "what" to build than building it. What started as a learning tool is now a discovery discipline: fake doors, prototyping, lean inception, value proposition canvas, continuous interview, opportunity tree... These are cheap, effective learning tools.

Over time, companies realized that "maximum ROI divided by risk" started with discovery, not the MVP. MVPs are still considered discovery tools. What is the problem with that?

Time to Market vs Product Market Fit

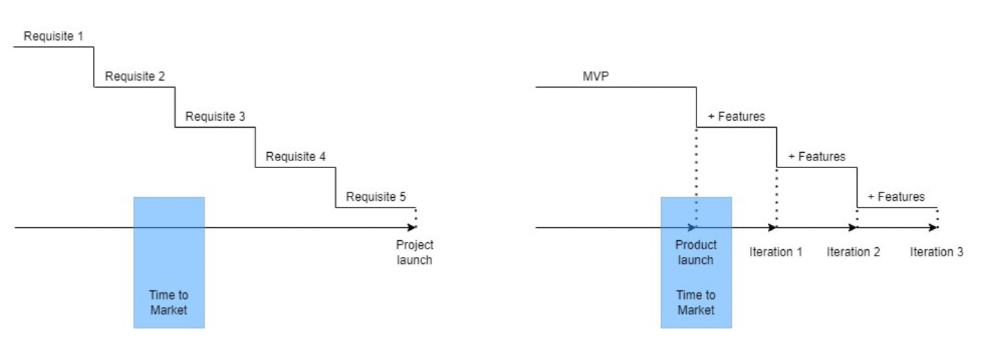

Waterfall's Time to Market is its biggest flaw. Since projects are sliced horizontally rather than vertically, when there is nothing else to be done, it’s not because the product is ready, it’s because no one cares to buy it anymore.

MVPs were originally conceived as a way to cut corners and speed up Time to Market: ship early, then deliver further customer requests after customers had paid.

Original product development was waterfall-like.

Time to Market defines an optimal, specific window in which value should be delivered. It's impossible to predict how long or how often this window will be open.

Product Market Fit makes this window a "state." You don’t achieve Product Market Fit, you have it… and you may lose it.

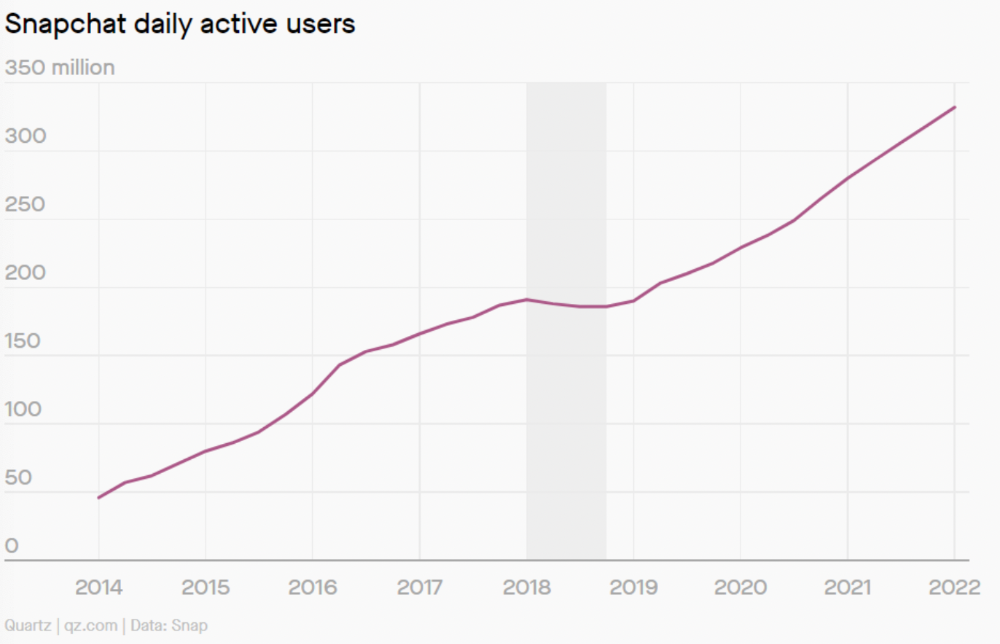

Take, for example, Snapchat. They had a great time to market, but lost product-market fit later. They regained product-market fit in 2018 and have grown since.

An MVP couldn't handle this. What should Snapchat do? Launch Snapchat 2 and see what the market was expecting differently from the last time? MVPs are a snapshot in time that may be wrong in two weeks.

MVPs are mini-projects. Instead of spending a lot of time and money on waterfall, you spend less but are still unsure of the results.

MVPs aren't always wrong. When releasing your first product version, consider an MVP.

Minimum viable product became less of a thing on its own and more interchangeable with Alpha Release or V.1 release over time.

Modern discovery techniques are more assertive and predictable than the MVP, but clarity comes only when you reach the market.

MVPs aren't the starting point, but they're the best way to validate your product concept.

Sylvain Saurel

3 years ago

A student trader from the United States made $110 million in one month and rose to prominence on Wall Street.

Genius or lucky?

From the title, you might think I'm advertising a financial influencer, a dubious trading site, or a training organization trying to attract clients. You'd be right to be suspicious. Better safe than sorry.

But not here.

Jake Freeman, 20, made $110 million in a month, according to the Financial Times. At 18, he ran for president. He made his name in markets, not politics. Two years later, he's Wall Street's prince. Interview requests flood the prodigy.

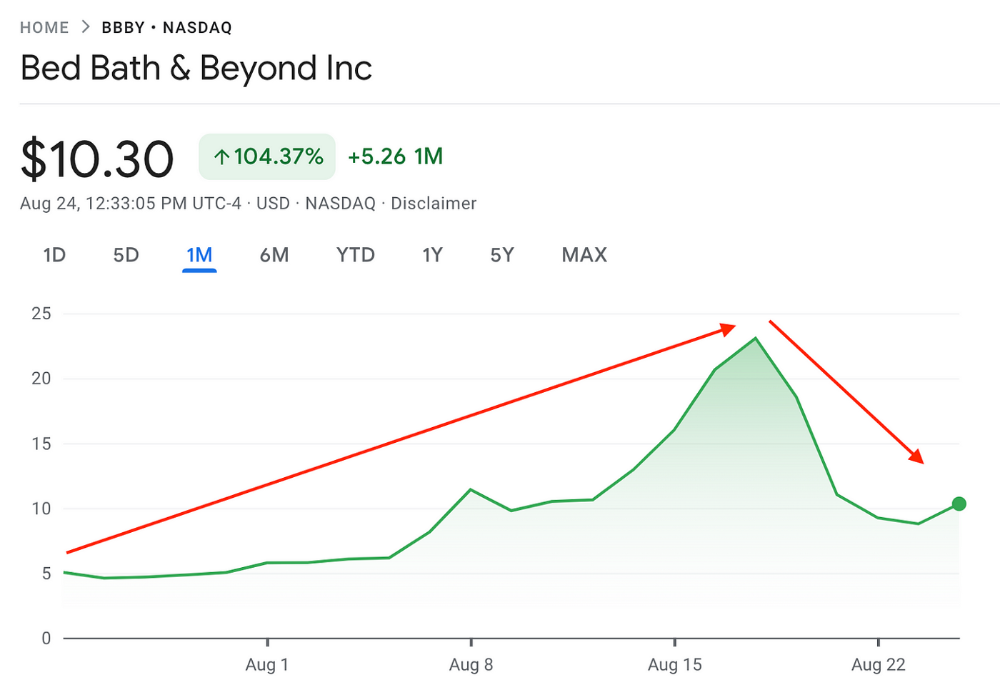

Jake Freeman bought 5 million Bed Bath & Beyond shares at $5.50 each in July 2022 and sold them for $27 a month later. He thought the stock might double. He timed his sale well, as speculation has since died down. The stock fell 40.5% to $11 on Friday, August 19, 2022. On August 22, 2022, it fell another 16% to $9.
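To see how those numbers add up to the headline figure, here is a minimal sketch of the arithmetic. It uses only the rounded per-share prices quoted above, so the result is approximate; the function names `trade_summary` and `price_before_drop` are made up for this illustration.

```python
# Rough check of the trade, using the rounded figures quoted in the article.
SHARES = 5_000_000
BUY_PRICE = 5.50    # USD per share, July 2022
SELL_PRICE = 27.00  # USD per share, about a month later


def trade_summary(shares, buy_price, sell_price):
    """Return cost, proceeds, profit and price multiple for a simple buy/sell."""
    cost = shares * buy_price
    proceeds = shares * sell_price
    return {
        "cost": cost,
        "proceeds": proceeds,
        "profit": proceeds - cost,
        "multiple": sell_price / buy_price,
    }


def price_before_drop(price_after, pct_drop):
    """Price implied before a percentage drop, given the price after it."""
    return price_after / (1 - pct_drop / 100)


if __name__ == "__main__":
    summary = trade_summary(SHARES, BUY_PRICE, SELL_PRICE)
    print(f"Cost:     ${summary['cost']:,.0f}")
    print(f"Proceeds: ${summary['proceeds']:,.0f}")
    print(f"Profit:   ${summary['profit']:,.0f}")
    print(f"Multiple: {summary['multiple']:.2f}x")
    # Prices implied just before the two drops mentioned above
    print(f"Before the 40.5% drop to $11: ${price_before_drop(11, 40.5):.2f}")
    print(f"Before the 16% drop to $9:    ${price_before_drop(9, 16):.2f}")
```

With these rounded inputs the profit comes out at $107.5 million, in line with the roughly $110 million reported. The stock did far better than the doubling he expected: it went up almost fivefold.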

Small shareholders have been buying the stock for weeks and will lose heavily if it falls further. Bed Bath & Beyond is the second most popular stock after Foot Locker, ahead of GameStop and Apple.

Jake Freeman earned $110 million thanks to a significant stock market flurry.

Online broker customers aren't the only ones with jitters. By June 2022, Ken Griffin's Citadel and Stephen Mandel's Lone Pine Capital held nearly a third of the company's capital. Did big managers sell before the stock plummeted?

Recent stock movements (derivatives) and rumors could prompt an SEC investigation.

Jake Freeman wrote to the board of directors after his investment to call for a turnaround, given the company's persistent problems and short sellers. The bathroom and kitchen products distribution group's stock soared in July 2022 due to renewed buying by private speculators, who made it one of their meme stocks with AMC and GameStop.

Meanwhile, the company's second-quarter 2022 results and financial health worsened. Freeman didn't celebrate his miraculous trade in a nightclub. He told a British newspaper, "I'm shocked." He dined with his parents in New York, then returned to Los Angeles to study math and economics.

Jake Freeman founded Freeman Capital Management with his savings and $25 million from family, friends, and acquaintances. They are the ones entitled to the $110 million he made in one month. Will his investors withdraw all or part of their profits, or will they trust the young prodigy with new stunts on Wall Street?

His operation should attract new clients. Well-known hedge funds may hire him.

Jake Freeman didn't listen to gurus or former traders. At 13, he began investing with his uncle, a pharmaceutical executive. At 17, he interned at Volaris, a hedge fund specializing in quantitative finance and derivatives. Google searches for the young trader have risen worldwide in the last week.

Naturally, his success has inspired resentment.

His success stirs jealousy, and he's attacked on social media. On Reddit, people who lost money on Bed Bath & Beyond, the source of Jake Freeman's fortune, are lamenting their losses.

Several conspiracy theories circulate about him, including that he doesn't exist or is working for a Taiwanese amusement park.

If all 20 million American students had the same trading skills, they would have generated $1.46 trillion. Jake Freeman is unique. Apprentice traders' careers are often short, disillusioning, and tragic.

Two years ago, 20-year-old Robinhood client Alexander Kearns committed suicide after losing $750,000 trading options. Great traders start young. Michael Platt of BlueCrest invested in British stocks at age 12 under his grandmother's supervision and made a £30,000 fortune. Paul Tudor Jones started trading before he turned 18 with his uncle. Warren Buffett, at age 10, was discussing investments with Goldman Sachs' head. Oracle of Omaha tells all.

Wayne Duggan

4 years ago

What An Inverted Yield Curve Means For Investors

The yield spread between 10-year and 2-year US Treasury bonds has fallen below 0.2 percent, its lowest level since March 2020. A flattening or negative yield curve can be a bad sign for the economy.

What Is An Inverted Yield Curve?

In the yield curve, bonds of equal credit quality but different maturities are plotted. The most commonly used yield curve for US investors is a plot of 2-year and 10-year Treasury yields, which have yet to invert.

A typical yield curve has higher interest rates for longer maturities. In a flat yield curve, short-term and long-term yields are similar. Inverted yield curves occur when short-term yields exceed long-term yields. Inversions of the yield curve have historically occurred ahead of recessions.
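The three shapes described above come down to the sign and size of the spread between the long-term and short-term yields. Here is a minimal sketch of that idea. The function names, the sample yields, and the 0.25-point band used to call a curve "flat" are all assumptions made for illustration, not an official definition.

```python
def yield_spread(short_yield, long_yield):
    """Spread in percentage points: long-term yield minus short-term yield."""
    return long_yield - short_yield


def classify_curve(short_yield, long_yield, flat_band=0.25):
    """Label a two-point yield curve as 'normal', 'flat' or 'inverted'.

    flat_band is an assumed threshold (in percentage points) below which
    a positive spread is treated as flat; pick whatever suits your analysis.
    """
    spread = yield_spread(short_yield, long_yield)
    if spread < 0:
        return "inverted"   # short-term yields exceed long-term yields
    if spread <= flat_band:
        return "flat"       # short-term and long-term yields are similar
    return "normal"         # longer maturities pay noticeably more


if __name__ == "__main__":
    # Hypothetical (2-year, 10-year) yields in percent, for illustration only
    examples = [(1.00, 3.00), (2.00, 2.18), (1.50, 1.40)]
    for two_year, ten_year in examples:
        spread = yield_spread(two_year, ten_year)
        label = classify_curve(two_year, ten_year)
        print(f"2y={two_year:.2f}% 10y={ten_year:.2f}% spread={spread:+.2f} -> {label}")
```

Under that assumed band, a spread below 0.2 percentage points would count as flat, and any negative spread as inverted.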

Inverted yield curves have preceded each of the past eight US recessions. The good news is they're far leading indicators, meaning a recession is likely not imminent.

Every US recession since 1955 has occurred between six and 24 months after an inversion of the two-year/10-year Treasury yield curve, according to the San Francisco Fed. Most recently, the yield curve inverted in August 2019, about six months before COVID-19.

Looking Ahead

The spread between two-year and 10-year Treasury yields was 0.18 percent on Tuesday, the smallest since before the last US recession. If the graph above continues, a two-year/10-year yield curve inversion could occur within the next few months.

According to Bank of America analyst Stephen Suttmeier, the S&P 500 typically peaks six to seven months after the 2s-10s yield curve inverts, and the US economy enters recession six to seven months later.

Investors appear unconcerned about the flattening yield curve. This is in contrast to the iShares 20+ Year Treasury Bond ETF (TLT), which was down 1% on Tuesday.

Inversion of the yield curve and rising interest rates have historically harmed stocks. Recessions in the US have historically coincided with or followed the end of a Federal Reserve rate hike cycle, not the start.