More on Web3 & Crypto

Scott Hickmann

4 years ago

YouTube

This is a YouTube video:

Onchain Wizard

3 years ago

Three Arrows Capital & Celsius Updates

I read 1,000+ pages of 3AC liquidation documents so you don't have to. I'm also sharing Celsius's revised recovery plans.

3AC's liquidation documents:

Someone disclosed 3AC liquidation records in the BVI courts recently. I'll discuss the leak's timeline and other highlights.

Three Arrows Capital began trading traditional currencies in emerging markets in 2012. They switched to equities and crypto, then purely crypto in 2018.

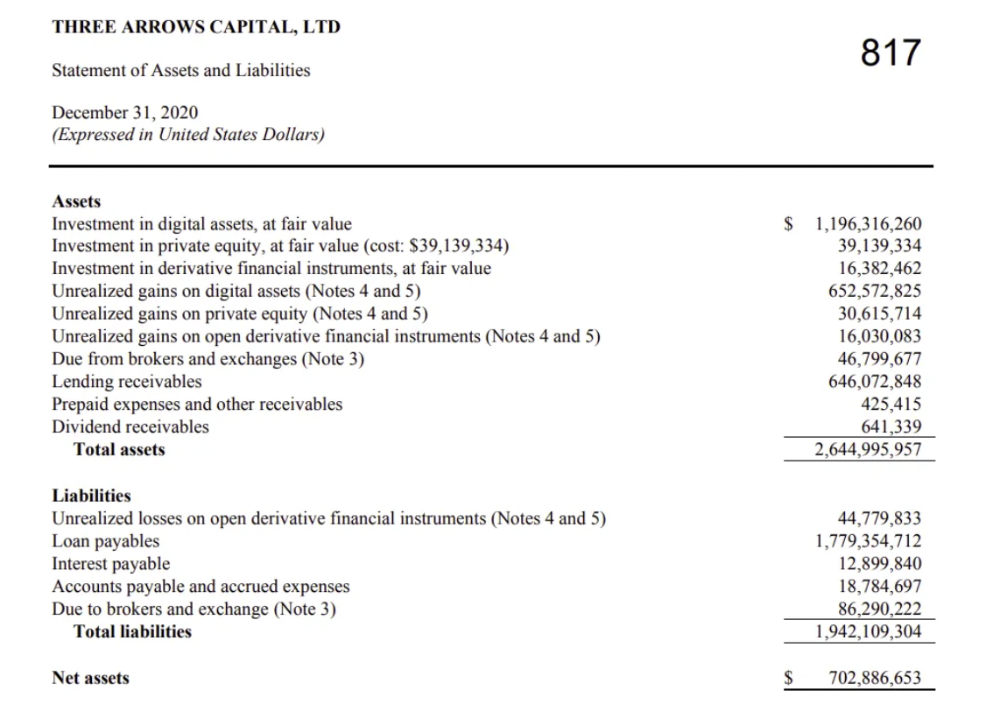

By 2020, the firm had $703mm in net assets and $1.8bn in loans (these guys really like debt).

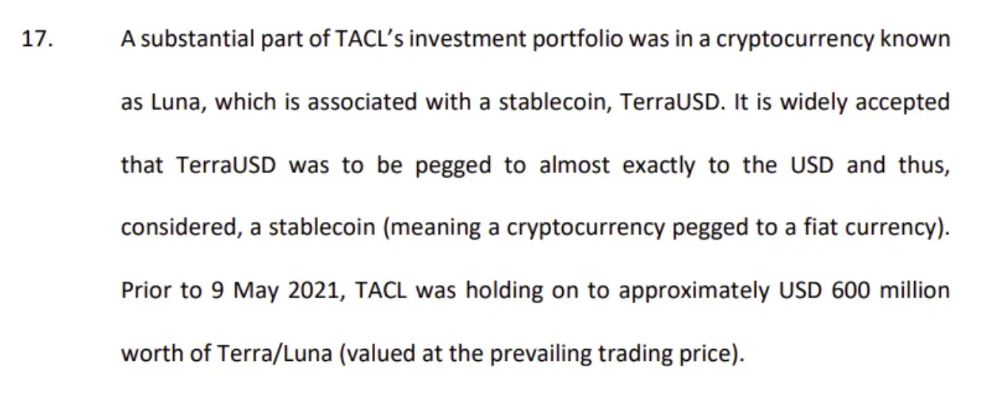

According to the filings, the firm's net assets reached $3bn in April 2022. 3AC had $600mm of LUNA/UST exposure before May 9th, 2022, which pushed them over the edge.

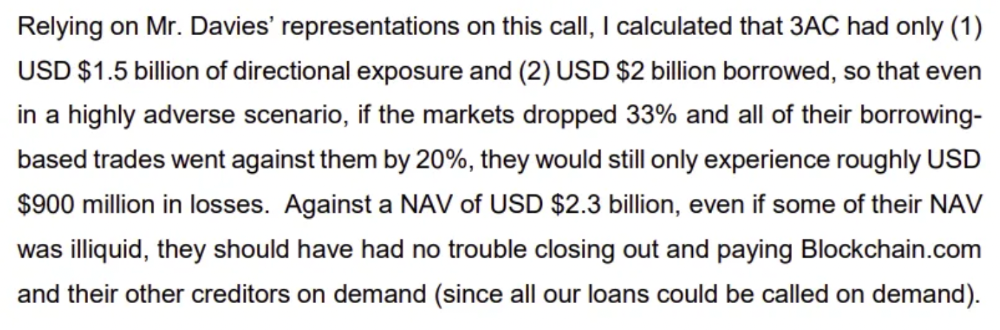

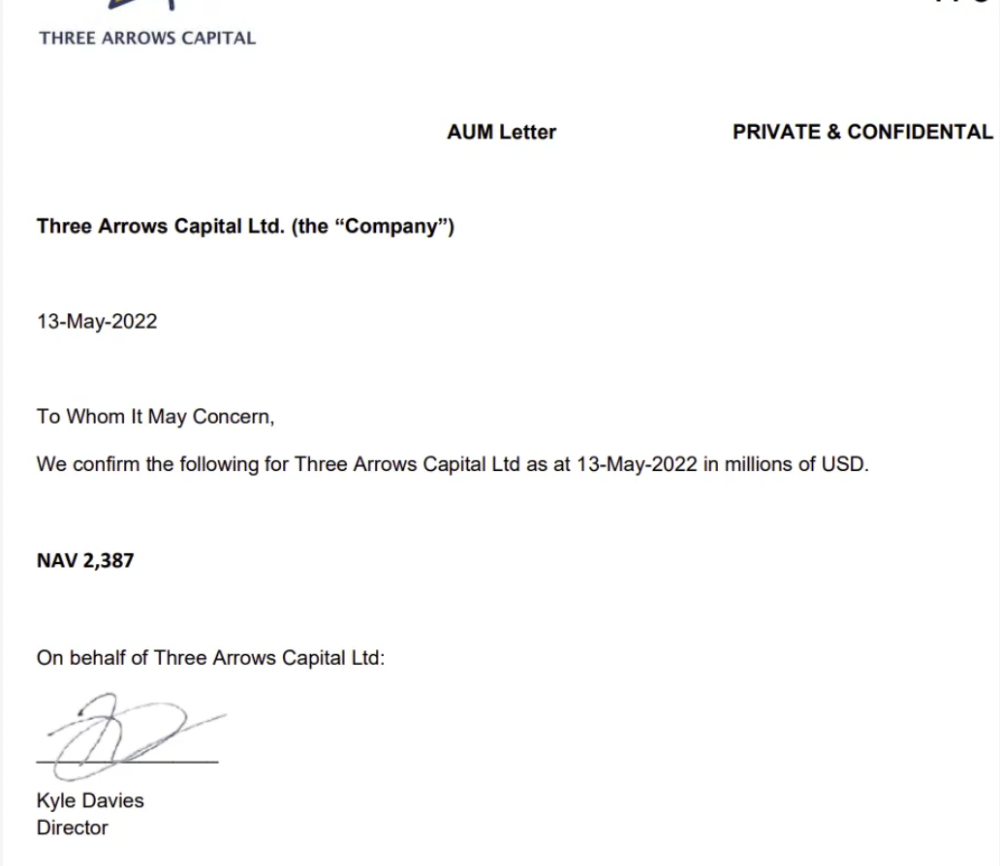

LUNA and UST went to zero quickly (I wrote about the mechanics of the blowup here). On May 13, 3AC co-founder Kyle Davies told Blockchain.com that they had $2.4bn in assets and $2.3bn NAV vs. $2bn in borrowings. As BTC and ETH plunged 33% and 50%, the company became insolvent by mid-2022.

3AC sent $32mm to Tai Ping Shen, a Cayman Islands company owned by Su Zhu and Kelly Kaili Chen, Davies' partner (who knows what is going on here).

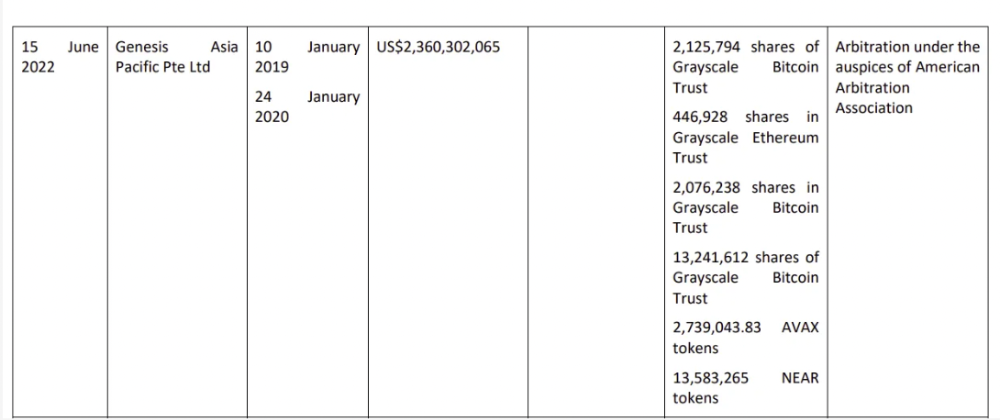

3AC had borrowed over $3.5bn in notional principal, with Genesis ($2.4bn) and Voyager ($650mm) having the most exposure.

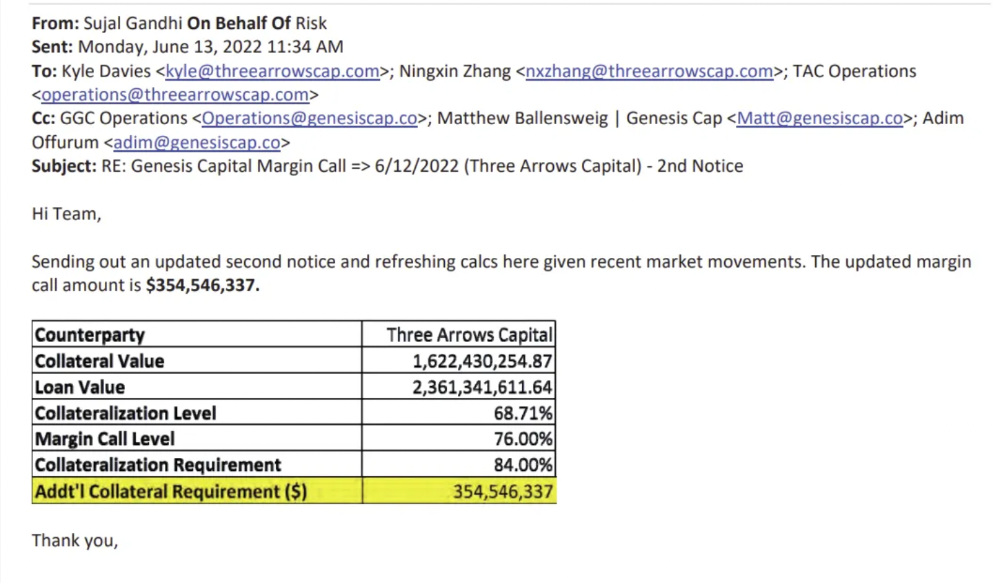

Genesis demanded $355mm in further collateral in June.

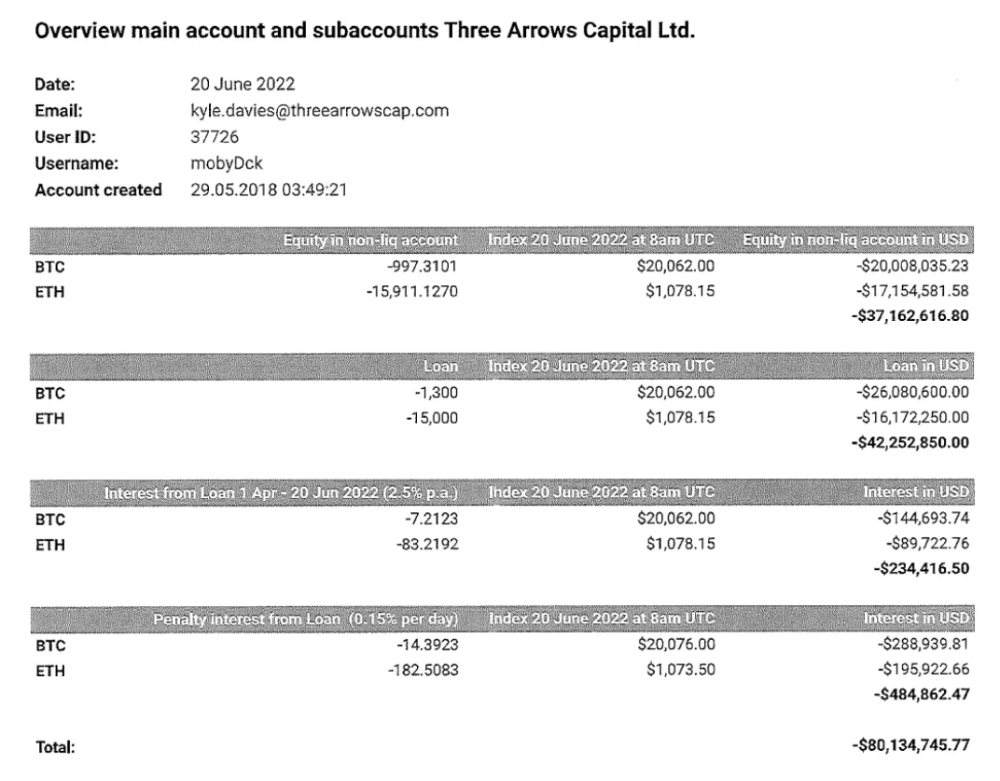

Deribit (another 3AC investment) called for $80 million in mid-June.

Even in mid-June, the firm was trying to borrow more money to stay afloat. They approached Genesis for another $125mm loan (to pay another lender) and Hodlnaut for BTC & ETH loans.

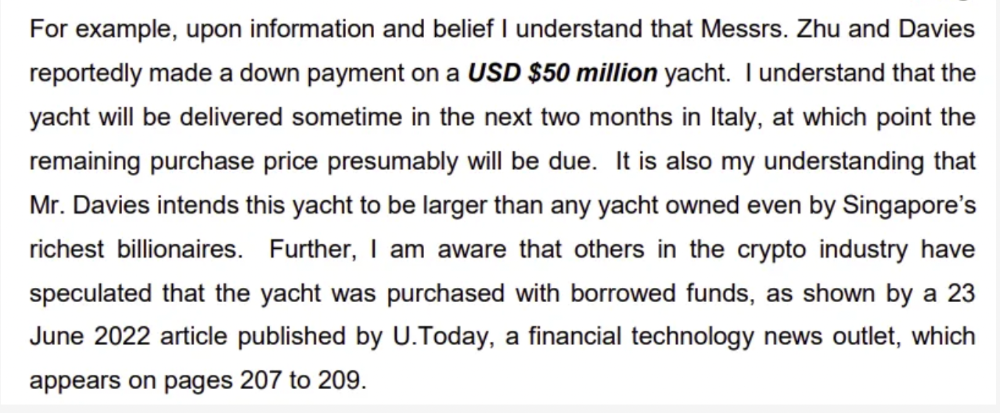

Pretty crazy. 3AC founders used borrowed money to buy a $50 million boat, according to the leak.

Su Zhu (claiming $5mm) and Kelly Kaili Chen (asserting that $65mm was lent to 3AC unsecured) are both listed as creditors.

Celsius:

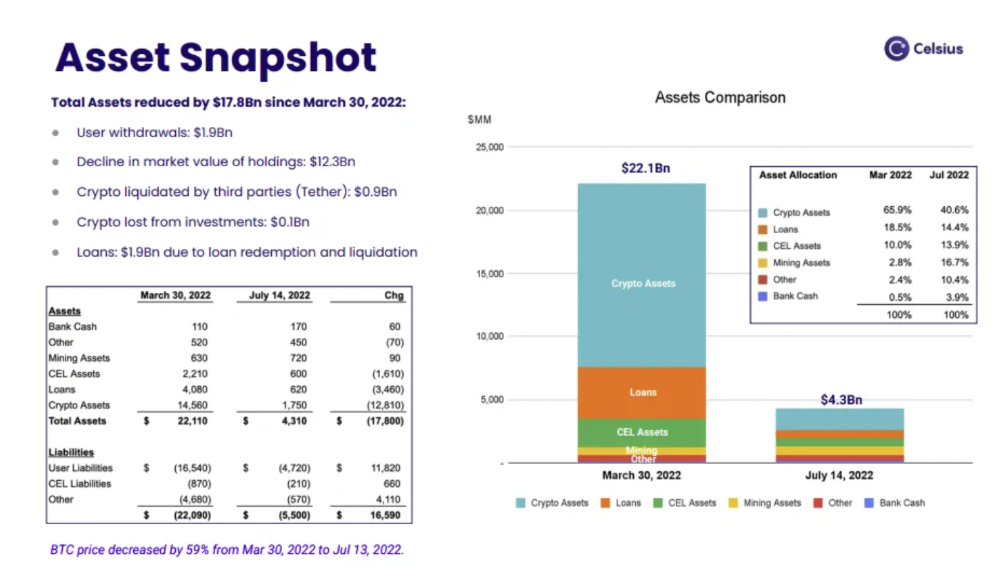

This bankruptcy presentation shows Celsius's balance sheet from March to July 14, 2022. Total assets fell from $22bn to $4bn, and crypto assets plummeted from $14.6bn to $1.8bn (ouch). User liabilities dropped from $16.5bn to $4.72bn.

In my recent post, I examined whether "forced selling" is over, with Celsius's crypto assets being a major overhang. Based on this presentation, it looks like Chapter 11 will give clients the option to accept cash at a discount or remain long crypto. Given that a fresh source of capital is unlikely to enter the Celsius situation, either outcome will likely remain a near-term market risk: cash at a discount will likely come from selling crypto assets, while customers who receive crypto could sell at any time. I'll share any Celsius updates I find.

Conclusion

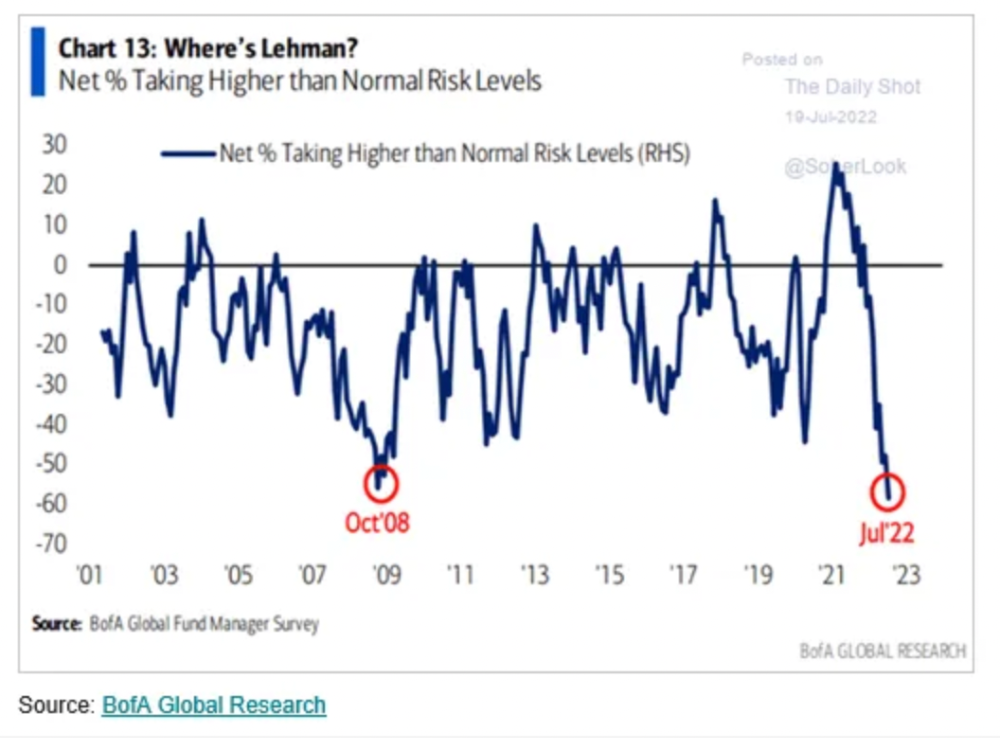

Only Celsius and the Mt. Gox BTC unlock remain as forced-selling catalysts. While everything went through a "relief" pump, with ETH up 75% from the bottom and numerous alts up multiples, there are still macro dangers to equities and risk assets. There's a lot of capital waiting to be deployed in crypto ($153bn in stablecoins), but fund managers are risk-averse (their risk appetite is lower than 2008 levels).

We're hopefully past crypto's "bottom," with peak anxiety and forced selling behind us, but we may chop around.

To see the full article, click here.

Vitalik

4 years ago

An approximate introduction to how zk-SNARKs are possible (part 1)

Perhaps the most powerful cryptographic technology to come out of the last decade is general-purpose succinct zero knowledge proofs, usually called zk-SNARKs ("zero knowledge succinct non-interactive arguments of knowledge"). A zk-SNARK allows you to generate a proof that some computation has some particular output, in such a way that the proof can be verified extremely quickly even if the underlying computation takes a very long time to run. The "ZK" part adds an additional feature: the proof can keep some of the inputs to the computation hidden.

You can make a proof for the statement "I know a secret number such that if you take the word ‘cow', add the number to the end, and SHA256 hash it 100 million times, the output starts with 0x57d00485aa". The verifier can verify the proof far more quickly than it would take for them to run 100 million hashes themselves, and the proof would also not reveal what the secret number is.

In the context of blockchains, this has two very powerful applications:

- Scalability: if a block takes a long time to verify, one person can verify it and generate a proof, and everyone else can just quickly verify the proof instead

- Privacy: you can prove that you have the right to transfer some asset (you received it, and you didn't already transfer it) without revealing the link to which asset you received. This ensures security without unduly leaking information about who is transacting with whom to the public.

But zk-SNARKs are quite complex; indeed, as recently as 2014-17 they were still frequently called "moon math". The good news is that since then, the protocols have become simpler and our understanding of them has become much better. This post will try to explain how zk-SNARKs work, in a way that should be understandable to someone with a medium level of understanding of mathematics.

Why ZK-SNARKs "should" be hard

Let us take the example that we started with: we have a number (we can encode "cow" followed by the secret input as an integer), we take the SHA256 hash of that number, then we do that again another 99,999,999 times, we get the output, and we check what its starting digits are. This is a huge computation.
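For concreteness, here is a sketch of the computation behind the example statement. The byte encoding is an assumption (the post only says "cow" followed by the secret is encoded as an integer): this version hashes the ASCII bytes of "cow" plus the secret's decimal digits, then re-hashes the raw 32-byte digest each round. `iterated_sha256` and `statement_holds` are hypothetical names, and the round count is a parameter because 100 million rounds takes a long time in pure Python.

```python
import hashlib


def iterated_sha256(secret: int, rounds: int) -> bytes:
    """Hash b"cow" + str(secret) once, then re-hash the digest (rounds - 1) more times."""
    # Assumed encoding: ASCII "cow" followed by the secret's decimal digits.
    data = b"cow" + str(secret).encode("ascii")
    for _ in range(rounds):
        data = hashlib.sha256(data).digest()
    return data


def statement_holds(secret: int, rounds: int, prefix_hex: str) -> bool:
    """The claim a zk-SNARK would prove without revealing `secret`."""
    return iterated_sha256(secret, rounds).hex().startswith(prefix_hex)


if __name__ == "__main__":
    # Small round count for illustration; the text uses 100,000,000 rounds.
    digest = iterated_sha256(42, rounds=1000)
    print(digest.hex())
    # Naive verification means redoing every round.
    print(statement_holds(42, 1000, digest.hex()[:10]))
```

Checking the claim this way costs the verifier every single round, which is exactly the work a succinct proof is supposed to avoid.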

A "succinct" proof is one where both the size of the proof and the time required to verify it grow much more slowly than the computation to be verified. If we want a "succinct" proof, we cannot require the verifier to do some work per round of hashing (because then the verification time would be proportional to the computation). Instead, the verifier must somehow check the whole computation without peeking into each individual piece of the computation.

One natural technique is random sampling: how about we just have the verifier peek into the computation in 500 different places, check that those parts are correct, and if all 500 checks pass then assume that the rest of the computation must with high probability be fine, too?

Such a procedure could even be turned into a non-interactive proof using the Fiat-Shamir heuristic: the prover computes a Merkle root of the computation, uses the Merkle root to pseudorandomly choose 500 indices, and provides the 500 corresponding Merkle branches of the data. The key idea is that the prover does not know which branches they will need to reveal until they have already "committed to" the data. If a malicious prover tries to fudge the data after learning which indices are going to be checked, that would change the Merkle root, which would result in a new set of random indices, which would require fudging the data again... trapping the malicious prover in an endless cycle.
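Below is a toy sketch of that spot-checking scheme, assuming the computation is a SHA-256 hash chain whose intermediate values form the leaves of a Merkle tree (with a power-of-two leaf count). Indices are derived Fiat-Shamir style by hashing the root with a counter, and each opening reveals two consecutive steps so the verifier can recheck one hash. All names are hypothetical, and this is the naive scheme the next paragraph shows to be broken, not a zk-SNARK.

```python
import hashlib
from typing import List, Tuple


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def hash_chain(seed: bytes, steps: int) -> List[bytes]:
    """Toy computation trace: trace[0] = seed, trace[j] = SHA256(trace[j-1])."""
    trace = [seed]
    for _ in range(steps):
        trace.append(h(trace[-1]))
    return trace


def build_tree(leaves: List[bytes]) -> List[List[bytes]]:
    """All Merkle levels, leaf hashes first; assumes len(leaves) is a power of two."""
    levels = [[h(leaf) for leaf in leaves]]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        levels.append([h(prev[i] + prev[i + 1]) for i in range(0, len(prev), 2)])
    return levels


def branch(levels: List[List[bytes]], index: int) -> List[bytes]:
    """Sibling hashes on the path from leaf `index` up to the root."""
    path = []
    for level in levels[:-1]:
        path.append(level[index ^ 1])
        index //= 2
    return path


def verify_branch(root: bytes, leaf: bytes, index: int, path: List[bytes]) -> bool:
    node = h(leaf)
    for sibling in path:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


def sample_indices(root: bytes, n: int, k: int) -> List[int]:
    """Fiat-Shamir: derive k step indices in [1, n) from the committed root."""
    return [1 + int.from_bytes(h(root + j.to_bytes(4, "big")), "big") % (n - 1)
            for j in range(k)]


Opening = Tuple[int, bytes, List[bytes], bytes, List[bytes]]


def prove(trace: List[bytes], k: int) -> Tuple[bytes, List[Opening]]:
    levels = build_tree(trace)
    root = levels[-1][0]
    openings = [(i, trace[i - 1], branch(levels, i - 1), trace[i], branch(levels, i))
                for i in sample_indices(root, len(trace), k)]
    return root, openings


def verify(root: bytes, n: int, k: int, openings: List[Opening]) -> bool:
    if [o[0] for o in openings] != sample_indices(root, n, k):
        return False  # prover did not open the indices the root commits to
    return all(
        verify_branch(root, prev, i - 1, prev_path)
        and verify_branch(root, cur, i, cur_path)
        and cur == h(prev)  # spot-check one step of the computation
        for i, prev, prev_path, cur, cur_path in openings
    )
```

For example, `trace = hash_chain(b"cow42", 1023)` gives 1,024 leaves; `root, openings = prove(trace, 16)` followed by `verify(root, len(trace), 16, openings)` accepts. Altering an opened value breaks its Merkle branch, but a wrong step that is never opened goes unnoticed.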

But unfortunately there is a fatal flaw in naively applying random sampling to spot-check a computation in this way: computation is inherently fragile. If a malicious prover flips one bit somewhere in the middle of a computation, they can make it give a completely different result, and a random sampling verifier would almost never find out.

It only takes one deliberately inserted error, which a random check would almost never catch, to make a computation give a completely incorrect result.
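The fragility argument is easy to quantify. Assuming the verifier samples steps uniformly and independently, the chance that k samples hit a single bad step among N steps is 1 - (1 - 1/N)^k. The sketch below (function names hypothetical) evaluates this for the post's numbers: 100 million hashes and 500 checks.

```python
import math


def catch_probability(n_steps: int, n_samples: int, n_bad: int = 1) -> float:
    """P(at least one of n_samples uniform, independent samples lands on a bad step)."""
    p_bad = n_bad / n_steps
    # 1 - (1 - p)^k, written with log1p/expm1 to stay accurate when p is tiny
    return -math.expm1(n_samples * math.log1p(-p_bad))


def samples_needed(n_steps: int, target: float, n_bad: int = 1) -> int:
    """Smallest k whose catch probability reaches `target` (0 < target < 1)."""
    return math.ceil(math.log1p(-target) / math.log1p(-n_bad / n_steps))


if __name__ == "__main__":
    print(catch_probability(100_000_000, 500))  # roughly 5e-06
    print(samples_needed(100_000_000, 0.5))     # tens of millions of checks
```

Even a coin-flip chance of catching one bad step requires checking most of the computation, which defeats the purpose of sampling.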

If tasked with the problem of coming up with a zk-SNARK protocol, many people would make their way to this point and then get stuck and give up. How can a verifier possibly check every single piece of the computation, without looking at each piece of the computation individually? There is a clever solution.

see part 2

You might also like

Rachel Greenberg

3 years ago

6 Causes Your Sales Pitch Is Unintentionally Repulsing Customers

Skip this if you don't want to discover why your lively, no-brainer pitch isn't making $10k a month.

You don't want to be repulsive as an entrepreneur or anyone else. Making friends, influencing people, and converting strangers into customers will be difficult if your words evoke disgust, distrust, or disrespect. You may be one of many entrepreneurs who do this obliviously and involuntarily.

I've had to master selling my skills to recruiters (to land 6-figure jobs on Wall Street), selling companies to buyers in M&A transactions, and selling my own companies' products to strangers-turned-customers. I probably committed every cardinal sin of sales repulsion before realizing it was me or my poor salesmanship strategy.

If you're launching a new business, frustrated by low conversion rates, or just curious if you're repelling customers, read on to identify (and avoid) the 6 fatal errors that can kill any sales pitch.

1. The first indication

So many people fumble before they even speak because they assume their role is to convince the buyer. In other words, they expect to pressure, arm-twist, and combat objections until the buyer converts. In reality, the approach is repulsive, and emotionally aware buyers feel "gross" immediately.

Instead of trying to persuade a customer to buy, ask questions that will lead them to do so on their own. When a customer discovers your product or service on their own, they need less outside persuasion. Why not position your offer in a way that leads customers to sell themselves on it?

2. A flawless performance

Are you memorizing a sales script, tweaking video testimonials, and expunging historical blemishes before hitting "publish" on your new campaign? If so, you may be hurting your conversion rate.

Perfection may be a step too far and cause prospects to mistrust your sincerity. Become a great conversationalist to boost your sales. Seriously. Being charismatic is hard without being genuine and showing a little vulnerability.

People like vulnerability, even if it dents your perfect facade. Show the customer's stuttering testimonial. Open up about your or your company's past mistakes (and how you've since improved). Make your sales pitch a two-way conversation. Let the customer talk about themselves to build rapport. Real people sell, not canned scripts and movie-trailer testimonials.

If marketing or sales calls feel like a performance, you may be doing something wrong or leaving money on the table.

3. Your greatest phobia

Three minutes into prospect calls, I'd start sweating. I was talking 100 miles per hour, covering as many bases as possible to avoid the ones I feared. I knew my offering at the time was inadequate and that my firm had weaknesses I hadn't addressed. So I word-vomited facts, features, and everything else to avoid the customer's concerns.

Did my prospects know I was insecure? Maybe not, but it added an unnecessary and unhelpful layer of paranoia that kept me stressed, rushed, and on edge instead of connecting with the prospect. Skirting around a company, product, or service's flaws or objections is a poor, temporary, lazy (and cowardly) decision.

How can you project confidence and trust if you're afraid? Before you make another sales call, face your shortcomings, weak points, and objections. Your company won't be everyone's cup of tea, but you should have answers to every question or objection. You should be your business's top spokesperson and defender.

4. The unintentional apologies

Have you ever begged for a sale? I'm going to assume not; however, you may be unknowingly emitting apologetic, inferior, insecure energy.

Young founders, first-time entrepreneurs, and those with severe imposter syndrome may put their target customer on a pedestal. This is common when trying to land first customers, for obvious reasons.

Since you're truly new at this, you naturally lack experience.

You don't have the self-confidence boost of hundreds or thousands of closed deals or satisfied client results to remind you that your product or service is worthwhile.

Getting those initial few clients seems like the most difficult task, as if doing so will decide the fate of your company as a whole (it probably won't, and you shouldn't actually place that much emphasis on any one transaction).

Customers can smell fear, insecurity, and anxiety just like they can smell B.S. If you believe your product or service improves clients' lives, selling it should feel like a benevolent act of service, not a sleazy money-grab. If you're a sincere entrepreneur, prospects will believe your proposition; if you're apprehensive, they'll notice.

Approach every sale as if you're fine with or without it. This has improved my salesmanship, marketing skills, and mental health. When you put pressure on yourself to close a sale or convince a difficult prospect "or else" (your company will fail, your rent will be late, your electricity will be cut), you emit desperation and lower the quality of your pitch. There's no point.

5. The endless promises

We've all read a million times how to answer or disprove prospects' arguments and add extra incentives to speed or secure the close. Some objections shouldn't be refuted. What if I told you not to offer certain incentives, bonuses, and promises? What if I told you to walk away from some prospects, even if it means losing your sales goal?

If you market to enough people, make enough sales calls, or grow enough companies, you'll encounter prospects who can't be satisfied. These prospects have endless questions, concerns, and requests for more, more, more that you'll never satisfy. These people are a distraction, a resource drain, and a test of your ability to cut losses before they erode your sanity and profit margin.

To appease or convert these insatiably needy, greedy Nellies into customers, you may agree with or acquiesce to every request and demand — even if you can't follow through. Once you overpromise and answer every hole they poke, their trust in you may wane quickly.

Telling a prospect what you can't do takes courage and integrity. If you're honest, upfront, and willing to admit when a product or service isn't right for the customer, you'll gain respect and positive customer experiences. Sometimes honesty is the most refreshing pitch and the deal-closer.

6. No matter what

Have you ever said, "I'll do anything to close this sale"? If so, you've probably already been disqualified. If a prospective customer haggles over a price, requests a discount, or continues to wear you down after you've made three concessions too many, you have a metal hook in your mouth, not them, and it may not end well. Why?

If you're so willing to cut a deal that you cut prices, comp services, extend payment plans, waive fees, etc., you betray your own confidence that your product or service was worth the stated price. They wonder if anyone is paying those prices, if you've ever had a customer (who wasn't a blood relative), and if you're legitimate or worth your rates.

Once a prospect senses that you'll do whatever it takes to get them to buy, their suspicions rise and they wonder why.

Are you cutting prices because something is wrong with you or your service?

Why are you so desperate for their sale?

Why aren't more customers lining up to pay your prices, and if they aren't, what on earth is this prospect doing here?

That's what a prospect thinks when you reveal your lack of conviction, desperation, and willingness to give up control. Some prospects will exploit it to drain you dry, while others will be too frightened to buy from you even if you paid them.

Walking down a two-way street. Be casual.

If we track each act of repulsion to an uneasiness, fear, misperception, or impulse, it's evident that these sales and marketing disasters were forced communications. Stiff, imbalanced, divisive, combative, bravado-filled, and desperate. They were unnatural and accepted a power struggle between two sparring, suspicious, unequal warriors, rather than a harmonious oneness of two natural, but opposite parties shaking hands.

Sales should be natural, harmonious. Sales should feel good for both parties, not like one party is having their arm twisted.

You may be doing sales wrong if it feels repulsive, icky, or degrading. If you're thinking cringe-worthy thoughts about yourself, your product, service, or sales pitch, imagine what you're projecting to prospects. Don't make it unpleasant, repulsive, or cringeworthy.

Theresa W. Carey

3 years ago

How Payment for Order Flow (PFOF) Works

What is PFOF?

PFOF is a brokerage firm's compensation for directing orders to different parties for trade execution. The brokerage firm receives fractions of a penny per share for directing the order to a market maker.

Each optionable stock could have thousands of contracts, so market makers dominate options trades. Order flow payments average less than $0.50 per option contract.

Order Flow Payments (PFOF) Explained

The proliferation of exchanges and electronic communication networks (ECNs) has complicated equity and options trading. Ironically, Bernard Madoff, the Ponzi schemer, pioneered payment for order flow.

In a December 2000 study on PFOF, the SEC said, "Payment for order flow is a method of transferring trading profits from market making to brokers who route customer orders to specialists for execution."

Given the complexity of trading thousands of stocks on multiple exchanges, market making has grown. Market makers are large firms that specialize in a set of stocks and options, maintaining an inventory of shares and contracts for buyers and sellers. Market makers earn the bid-ask spread. Spreads have narrowed since 2001, when exchanges switched to decimal pricing. A market maker's ability to play both sides of trades is key to profitability.
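As a back-of-the-envelope sketch of why decimalization mattered to market makers: if a market maker buys at the bid and later sells the same shares at the ask, its gross take is the spread times the share count. The quotes, share count, and function name below are hypothetical, and the sketch ignores inventory risk, rebates, and fees.

```python
from decimal import Decimal


def round_trip_spread_capture(bid: Decimal, ask: Decimal, shares: int) -> Decimal:
    """Gross profit from buying `shares` at the bid and selling them at the ask."""
    if ask < bid:
        raise ValueError("ask must be at or above bid")
    return (ask - bid) * shares


# Hypothetical 1,000-share round trip on a $20 stock
eighth_wide = round_trip_spread_capture(Decimal("20"), Decimal("20.125"), 1000)  # 1/8 tick
penny_wide = round_trip_spread_capture(Decimal("20.00"), Decimal("20.01"), 1000)  # one-cent tick
print(eighth_wide, penny_wide)
```

`Decimal` is used instead of floats so that prices like 20.01 are represented exactly.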

Benefits and requirements

A broker receives fees from a third party for order flow, sometimes without a client's knowledge. This invites conflicts of interest and criticism. Regulation NMS from 2005 requires brokers to disclose their policies and financial relationships with market makers.

Your broker must tell you if it's paid to send your orders to specific parties. This must be done at account opening and annually. The firm must disclose whether it participates in payment-for-order-flow and, upon request, every paid order. Brokerage clients can request payment data on specific transactions, but the response takes weeks.

Payment for order flow can save money. Smaller brokerage firms can benefit from routing orders to market makers and getting paid for it. This lets brokerage firms send their orders to another firm to be executed alongside other orders, reducing costs. The market maker or exchange benefits from the additional share volume, so it pays brokerage firms to direct traffic its way.

Retail investors, who lack bargaining power, may benefit from competition to fill their orders. However, arrangements that steer business in one direction invite wrongdoing, which can erode investor confidence in financial markets and their players.

Pay-for-order-flow criticism

PFOF has always been controversial. Several firms offering zero-commission trades in the late 1990s routed orders to untrustworthy market makers. In the final years of fractional pricing, the smallest stock spread was $0.125. Options spreads widened. Traders found that some of their "free" trades cost them a lot because they weren't getting the best price.
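To see how a "free" trade can still cost money, compare the fill price with the NBBO at the moment the order arrived. The helper below is a hypothetical sketch of that comparison using made-up prices: a positive result is dollars lost versus the best quote, and a negative result is price improvement.

```python
from decimal import Decimal


def shortfall_vs_nbbo(side: str, fill: Decimal, bid: Decimal, ask: Decimal, shares: int) -> Decimal:
    """Dollars lost relative to the NBBO (negative means price improvement)."""
    if side == "buy":
        return (fill - ask) * shares  # buyers should pay no more than the best ask
    if side == "sell":
        return (bid - fill) * shares  # sellers should receive no less than the best bid
    raise ValueError("side must be 'buy' or 'sell'")


# Hypothetical 500-share buy filled a sixteenth above a $10.00 best ask
print(shortfall_vs_nbbo("buy", Decimal("10.0625"), Decimal("9.875"), Decimal("10.00"), 500))
```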

The SEC then studied the issue, focusing on options trades, and nearly decided to ban PFOF. The proliferation of options exchanges narrowed spreads because there was more competition for executing orders. Options market makers said their services provided liquidity. In its conclusion, the report said, "While increased multiple-listing produced immediate economic benefits to investors in the form of narrower quotes and effective spreads, these improvements have been muted with the spread of payment for order flow and internalization."

The SEC allowed payment for order flow to continue to prevent exchanges from gaining monopoly power. It was also unclear what would happen to trades if the practice were outlawed. The SEC requires brokers to disclose financial arrangements with market makers, and it has watched closely since then.

Payment for Order Flow in 2020

Reports required under Rule 605 and Rule 606 show execution quality and order flow payment statistics and are posted on a broker's website. Despite being required by the SEC, these reports can be hard to find. The SEC mandated these reports in 2005, but the format and reporting requirements have changed over the years, most recently in 2018.

Brokers and market makers formed a working group with the Financial Information Forum (FIF) to standardize order execution quality reporting. Only one retail brokerage (Fidelity) and one market maker (Two Sigma Securities) remain in the group. FIF notes that the 605/606 reports "do not provide the level of information that allows a retail investor to gauge how well a broker-dealer fills a retail order compared to the NBBO (national best bid or offer) at the time the order was received by the executing broker-dealer."

In the first quarter of 2020, Rule 606 reporting changed to require brokers to report net payments from market makers for S&P 500 and non-S&P 500 equity trades and options trades. Brokers must disclose payment rates per 100 shares by order type (market orders, marketable limit orders, non-marketable limit orders, and other orders).
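The per-100-share figure is a simple normalization: net payment received divided by shares routed, times 100. The sketch below applies it per order type; the function name, dollar amounts, and share counts are hypothetical and not taken from any broker's actual Rule 606 report.

```python
from decimal import Decimal
from typing import Dict, Tuple


def pfof_per_100_shares(net_payment: Decimal, shares_routed: int) -> Decimal:
    """Net payment from market makers, in dollars per 100 shares routed."""
    if shares_routed <= 0:
        raise ValueError("shares_routed must be positive")
    return net_payment / shares_routed * 100


# Hypothetical quarter: order type -> (net payment in dollars, shares routed)
quarter: Dict[str, Tuple[Decimal, int]] = {
    "market": (Decimal("1250000"), 500_000_000),
    "marketable limit": (Decimal("300000"), 200_000_000),
    "non-marketable limit": (Decimal("40000"), 100_000_000),
}
for order_type, (payment, shares) in quarter.items():
    print(f"{order_type}: ${pfof_per_100_shares(payment, shares)} per 100 shares")
```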

Richard Repetto, Managing Director of New York-based Piper Sandler & Co., publishes a report on Rule 606 broker reports. Repetto focused on Charles Schwab, TD Ameritrade, E*TRADE, and Robinhood in Q2 2020. Repetto reported that payment for order flow was higher in the second quarter than the first due to increased trading activity, and that options paid more than equities.

Repetto says PFOF payments rose overall. Schwab has the lowest options rates, while TD Ameritrade and Robinhood have the highest. Robinhood had the highest equity rate. Repetto assumes Robinhood's ability to charge higher PFOF reflects the profitability of its order flow, and that it receives a fixed rate per spread (vs. a fixed rate per share for the other brokers).

Robinhood's PFOF in equities and options grew the most quarter-over-quarter of the four brokers Piper Sandler analyzed, as did their implied volumes. All four brokers saw higher PFOF rates.

TD Ameritrade took the biggest income hit when cutting trading commissions in fall 2019, and this report shows they're trying to make up the shortfall by routing orders for additional PFOF. Robinhood refuses to disclose trading statistics using the same metrics as the rest of the industry, offering only a vague explanation on their website.

Summary

Payment for order flow has become a major source of revenue as brokers offer no-commission equity (stock and ETF) orders. For retail investors, payment for order flow poses a problem because the brokerage may route orders to a market maker for its own benefit, not the investor's.

Infrequent or small-volume traders may not notice their broker's PFOF practices. Frequent traders and those who trade larger quantities should learn about their broker's order routing system to ensure they're not losing out on price improvement due to a broker prioritizing payment for order flow.

This post is a summary. Read full article here

Michael Salim

3 years ago

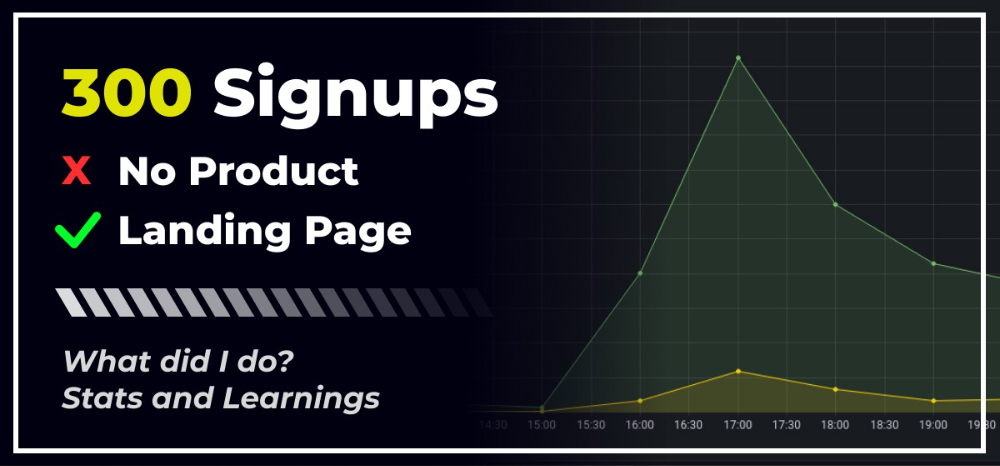

300 Signups, 1 Landing Page, 0 Products

I posted a link on Hacker News and got 300 signups in a week. This post explains what happened.

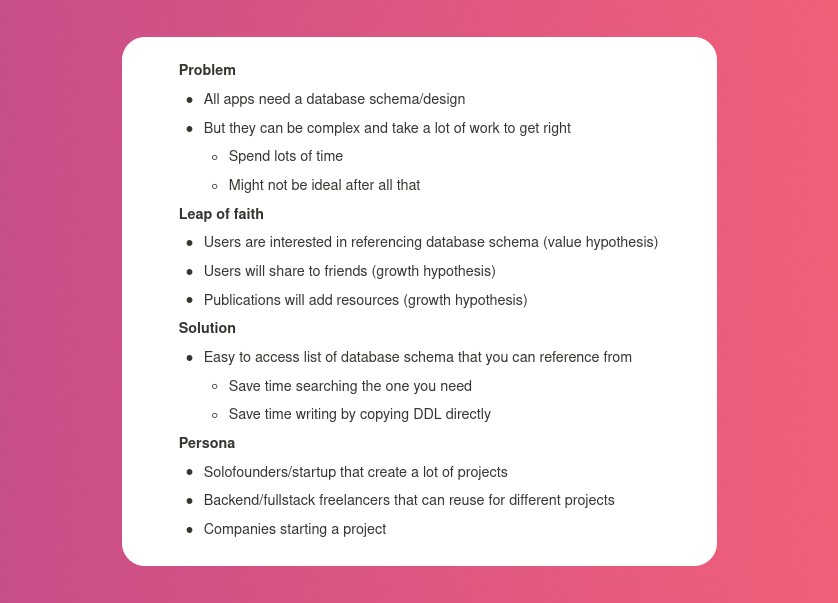

Product Concept

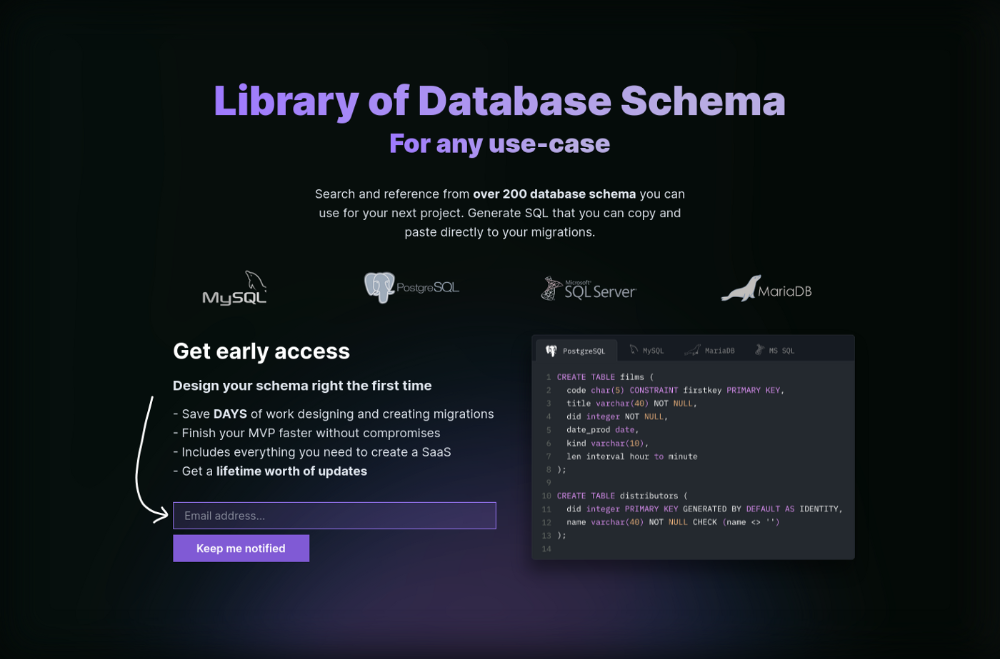

The product is DbSchemaLibrary: a library of database schemas.

I'm not sure where this idea came from, but I moved on it quickly. Build fast, fail fast, test many ideas, and one will be a hit. I figured it would probably fail, but I wanted to try it anyway: I had just finished The Lean Startup and wanted to put it into practice.

Database work bores me. It's important, but I get drowsy working on it, and someone has to do it. I remember one time in particular when I needed examples: something similar to Recall (my other project) that I could copy, or at least use as a reference.

I googled a lot and had many tabs open, but the results were useless. I threw up my hands and designed the database myself.

When the problem resurfaced, I decided to do something about it.

Due Diligence

The Lean Startup emphasizes validated learning: everything a startup does should result in learning. Otherwise, I might build something nobody wants. That's what happened with Recall.

So I wrote a business plan document before writing any code. What am I solving? What is my proposed solution? What is the leap of faith between the problem and the solution? Who would be my target audience?

My note:

In my previous project, I did the opposite!

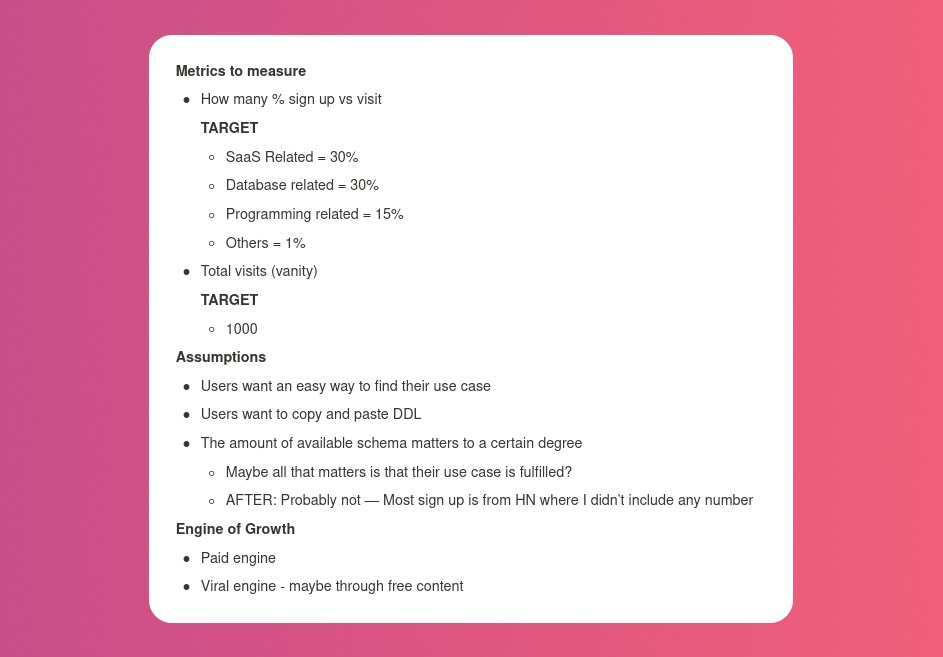

I wrote my expectations after reading the book's advice.

“Failure is a prerequisite to learning. The problem with the notion of shipping a product and then seeing what happens is that you are guaranteed to succeed — at seeing what happens.” — The Lean Startup book

These are my success metrics. If I don't reach them, I'll drop the idea and try another. I didn't know what realistic numbers looked like back then, so these are guesses. But it’s a start!

I then wrote the project's What and Why, which I'll reuse everywhere. Previously, I wrote a different pitch each time, thinking certain words would work better or that the audience might want something unusual.

Occasionally, that works, but I'm not sure it's a good idea: there are no stats behind it, just my opinion at the time of writing. Rewriting every time is time-consuming and sometimes risky. Having one saved copy spared me the duplication.

It also means I can measure the pitch's performance and learn from it.

Last, I identified communities that might demand the product. This became an exercise in creativity.

The MVP

So now it’s time to build.

An MVP lets me test my assumptions, so the business can learn from it. MVP doesn't mean low quality; it means learning from the smallest possible thing.

I like the example of how Dropbox did theirs. They assumed that if the product worked, people would use it. How can this be tested without a finished product? They made a video demonstrating the software's functionality. Who knows how much of that functionality actually existed at the time?

So I tested my biggest assumption: users want schema references. How can I test whether users want to reference other schemas? I'd love this myself, but Recall taught me that just because I want something doesn't mean others do.

I made a landing page that collects emails, with a brief description of the reference library. Anyone who leaves an email presumably wants a reference, which means they're interested in the product; there are few other reasons to sign up.

I skipped the header and footer. No name or logo: DbSchemaLibrary is a name I came up with after the fact, and the logo took 5 minutes. I expected a flop. Recall still has no users after months of work, so what could a 2-day project achieve?

But I didn't compromise on validated learning. How many visitors sign up? To draw a conclusion, I had to track these results.

Posting Time

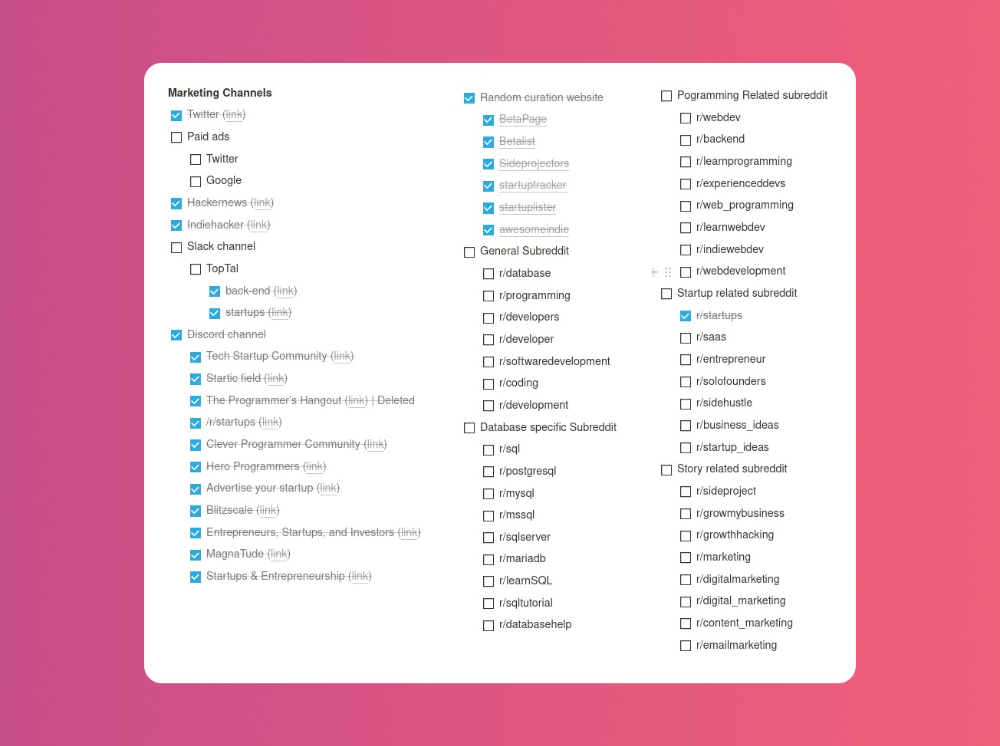

With the page done, it was time to gauge interest. The next morning, I posted on all my channels. I didn't want to be spammy, so this took more time.

I made sure each channel had at least one fan of this product. I also answered people's questions in each channel.

My list stank. Several channels didn't work because the product's target market isn't there, and posting there wasted everyone's time. This taught me to choose marketing channels based on my target persona.

Statistics! What actually happened

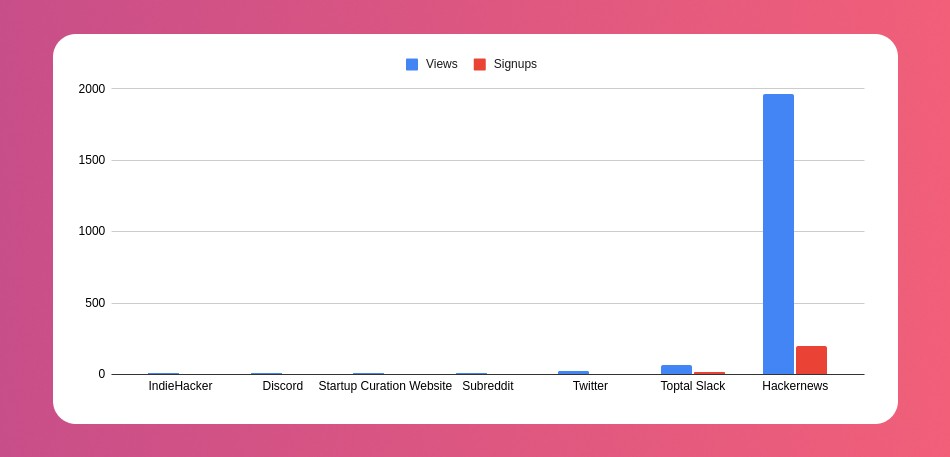

My favorite part! 23 channels received the link.

I stopped posting to Discord despite its high conversion rate, and I dropped some channels because they didn't fit. According to the numbers, some users liked it, but most treated it as spam.

I was skeptical, and indeed only 12 people viewed it.

I didn't expect much attention on a startup subreddit. I'll likely explore Reddit further in the future, but since I already had enough info, I didn't post much. Time for the next round of validated learning.

No comment: the post had few views, so the numbers are low.

Next come the targeted communities.

I'm a Toptal freelancer, and Toptal has a members-only Slack channel. Most people can't use this marketing channel, but if you can, you should! It's not as spectacular as Discord's 27% conversion rate, but I think the users here are better.

I don’t really have a following anywhere so this isn’t something I can leverage.

The best yet: 10% converted. With more data, I expect other channels to reach a 10% conversion rate as well. It's a stable number.

This number required some work. Did you know that people use many different clients to read HN?

Unknowns

Many views and signups were untraceable: 1,136 views and 135 signups came from unknown sources, a conversion rate of about 11.9%. I bet much of that came from Hacker News.

Overall Statistics

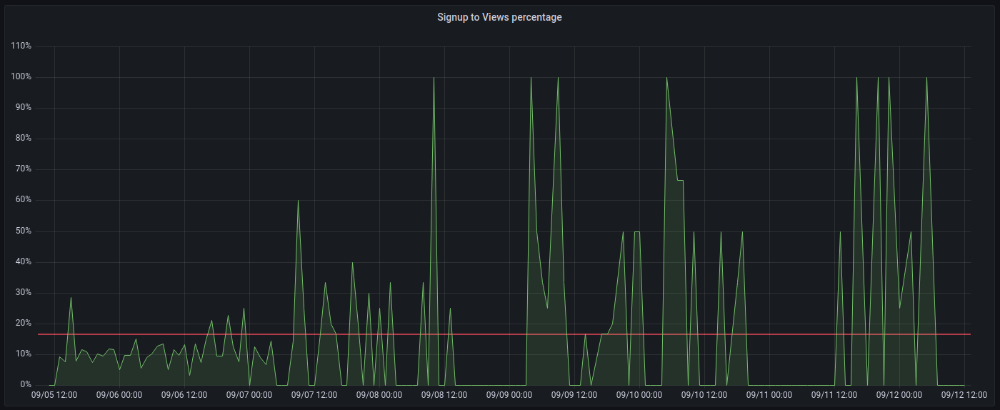

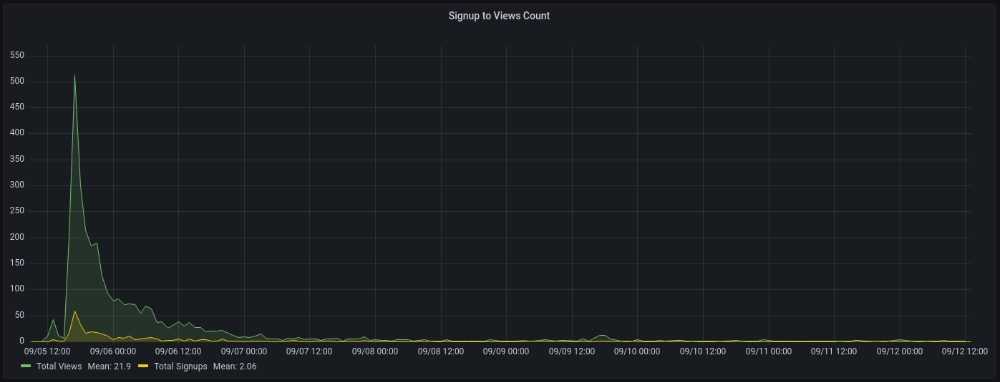

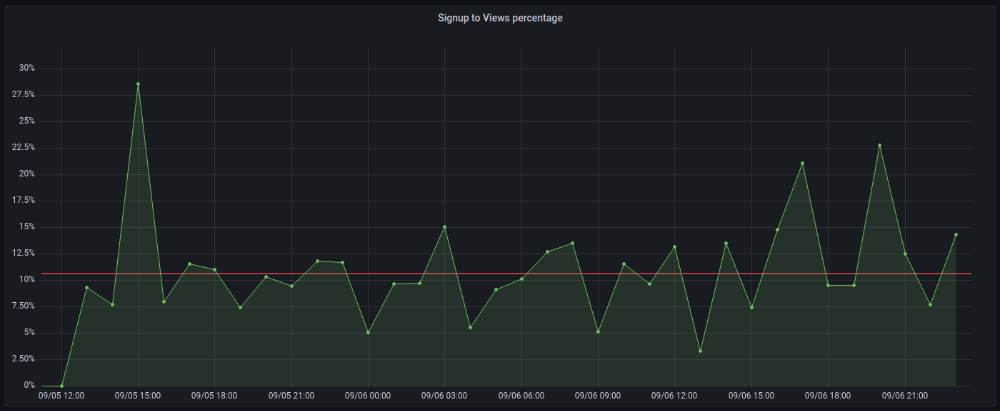

The 7-day signup-to-visit ratio was 17% (hourly data points).

Notably, first-day percentages were lower: initially, the ratio was a little above 10%, which is when the HN post started getting views.

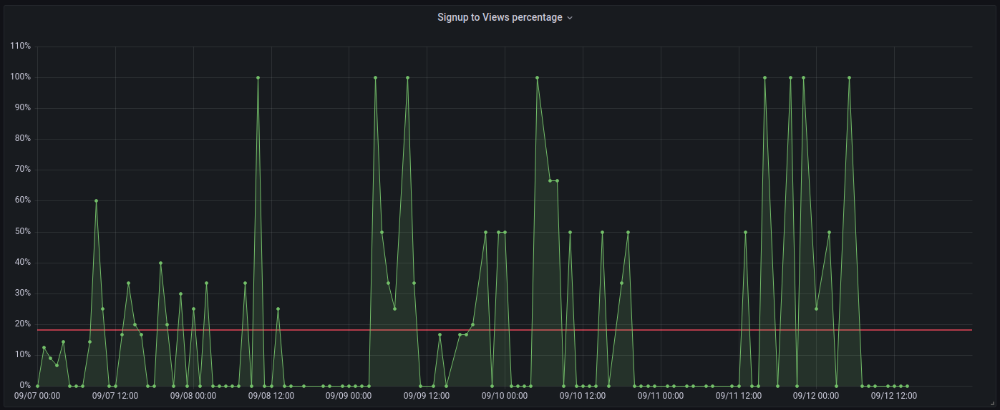

When traffic dropped, the ratio rose to around 20%: the visitors still arriving were more interested in the link. hn.algolia.com sent 2 visitors, which means people were searching for and finding my post.

Interesting discoveries

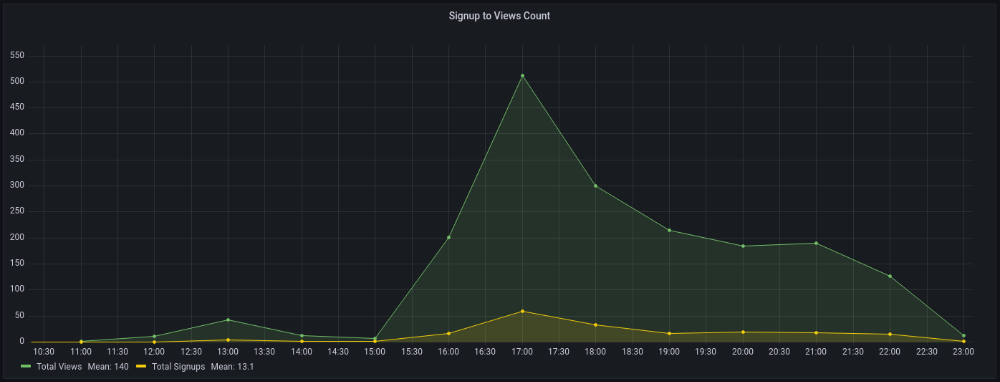

1. The HN post struggled until the US woke up.

I posted at 11am UTC. After an hour, it lost popularity, and it seemed to be over: 7 signups, a 13% conversion rate. Not amazing, but I should have thought ahead.

After 4pm UTC, traffic grew again. 4pm UTC is 9am PDT, when the US wakes up. The 10am PDT hour saw 512 views.

2. The product was highlighted in a newsletter.

While gathering data, I found referrals from Revue, a newsletter platform: someone had posted the link in their newsletter, which brought 37 views and 3 signups.

3. HN numbers are extremely reliable

I don't have a time-lapse graph (yet). The statistics were constant all day.

After 2,717 views: 272 new users, or 10.01%

At 2,856 views: 293 signups, or 10.26%

At 2,965 views: 306 signups, or 10.32%
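These snapshots are just signups divided by views. Here is a minimal sketch of that calculation using the (views, signups) pairs quoted above; `conversion_rate` is a hypothetical helper name.

```python
def conversion_rate(signups: int, views: int) -> float:
    """Fraction of views that turned into signups (0.0 when there are no views)."""
    return signups / views if views else 0.0


# (views, signups) snapshots quoted in the post
snapshots = [(2717, 272), (2856, 293), (2965, 306)]
for views, signups in snapshots:
    print(f"{views} views, {signups} signups: {conversion_rate(signups, views):.2%}")
```

Tracking the ratio rather than the raw totals is what shows the rate holding steady around 10% as traffic grows.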

Learnings

1. My initial estimations were wildly inaccurate

I had written down a 30% conversion rate. Judging from some articles I read, 10% looks like a good number to aim for.

2. Paying attention to what matters rather than vanity metrics

The Lean Startup discourages vanity metrics: feel-good metrics that don't measure growth or traction. Looking at the conversion ratio instead of total visitors made me realize there was something here.

What’s next?

There's a lot of work to do: data aggregation, display, website development, marketing, legal issues. Fun! It's satisfying to solve a problem rather than just investigate its cause.

In the meantime, I’ve already written the first project update in another post. Continue reading it if you’d like to know more about the project itself! Shifting from Quantity to Quality — DbSchemaLibrary