A Guide to VeeFriends and Series 2

VeeFriends is one of the most popular and unique NFT collections. VeeFriends launched around the same time as other PFP NFTs like Bored Ape Yacht Club.

Vaynerchuk (GaryVee) took a unique approach to his large-scale project, which has influenced the NFT ecosystem. GaryVee's VeeFriends is one of the most successful NFT membership use-cases, allowing him to build a community around his creative and business passions.

What is VeeFriends?

GaryVee's NFT collection, VeeFriends, was released on May 11, 2021. VeeFriends [Mini Drops], Book Games, and a forthcoming large-scale "Series 2" collection all stem from the initial drop of 10,255 tokens.

In "Series 1," there are G.O.O. tokens (Gary Originally Owned). GaryVee reserved 1,242 NFTs (over 12% of the supply) for his own collection, so only 9,013 were available at the Series 1 launch.

Each Series 1 token represents one of 268 human traits hand-drawn by Vaynerchuk. Gary Vee's NFTs offer owners incentives.

Who made VeeFriends?

Gary Vaynerchuk, AKA GaryVee, is influential in NFT. Vaynerchuk is the chairman of New York-based communications company VaynerX. Gary Vee, CEO of VaynerMedia, VaynerSports, and bestselling author, is worth $200 million.

GaryVee went from NFT collector to creator, launching VaynerNFT to help celebrities and brands.

Vaynerchuk's influence spans the NFT ecosystem as one of its most prolific voices. He's one of the most influential NFT figures, and his VeeFriends ecosystem keeps growing.

Vaynerchuk, a trend expert, thinks NFTs will be around for the rest of his life and VeeFriends will be a landmark project.

Why use VeeFriends NFTs?

The first VeeFriends collection has sold nearly $160 million via OpenSea. GaryVee insisted that the first 10,255 VeeFriends were just the beginning.

Book Games were announced to the VeeFriends community in August 2021. Mini Drops joined VeeFriends two months later.

Book Games

GaryVee's book "Twelve and a Half: Leveraging the Emotional Ingredients for Business Success" inspired Book Games. Even prior to the announcement Vaynerchuk had mapped out the utility of the book on an NFT scale. Book Games tied his book to the VeeFriends ecosystem and solidified its place in the collection.

GaryVee says Book Games is a layer 2 NFT project with 125,000 burnable tokens. Vaynerchuk's NFT fans were incentivized to buy as many copies of his new book as possible to receive NFT rewards later.

First, a bit about “layer 2.”

Layer 2 blockchain solutions help scale applications by routing transactions away from Ethereum Mainnet (layer 1). These solutions benefit from Mainnet's decentralized security model but increase transaction speed and reduce gas fees.

Polygon (integrated into OpenSea) and Immutable X are popular Ethereum layer 2 solutions. GaryVee chose Immutable X to reduce gas costs (transaction fees). Given the large supply of Book Games tokens, this decision will likely benefit the VeeFriends community, especially if the games run forever.

What's the strategy?

The VeeFriends patriarch announced on Aug. 27, 2021, that for every 12 books ordered during the Book Games promotion, customers would receive one NFT via airdrop. After nearly 100 days, GV sold over a million copies and announced that Book Games would go gamified on Jan. 10, 2022.

Immutable X's trading options make Book Games a "game." Book Games players can trade NFTs for other NFTs, sports cards, VeeCon tickets, and other prizes. Book Games can also whitelist other VeeFirends projects, which we'll cover in Series 2.

VeeFriends Mini Drops

GaryVee launched VeeFriends Mini Drops two months after Book Games, focusing on collaboration, scarcity, and the characters' "cultural longevity."

Spooky Vees, a collection of 31 1/1 Halloween-themed VeeFriends, was released on Halloween. First-come, first-served VeeFriend owners could claim these NFTs.

Mini Drops includes Gift Goat NFTs. By holding the Gift Goat VeeFriends character, collectors will receive 18 exclusive gifts curated by GaryVee and the team. Each gifting experience includes one physical gift and one NFT out of 555, to match the 555 Gift Goat tokens.

Gift Goat holders have gotten NFTs from Danny Cole (Creature World), Isaac "Drift" Wright (Where My Vans Go), Pop Wonder, and more.

GaryVee is poised to release the largest expansion of the VeeFriends and VaynerNFT ecosystem to date with VeeFriends Series 2.

VeeCon 101

By owning VeeFriends NFTs, collectors can join the VeeFriends community and attend VeeCon in 2022. The conference is only open to VeeCon NFT ticket holders (VeeFreinds + possibly more TBA) and will feature Beeple, Steve Aoki, and even Snoop Dogg.

The VeeFreinds floor in 2022 Q1 has remained at 16 ETH ($52,000), making VeeCon unattainable for most NFT enthusiasts. Why would someone spend that much crypto on a Minneapolis "superconference" ticket? Because of Gary Vaynerchuk.

Everything to know about VeeFriends Series 2

Vaynerchuk revealed in April 2022 that the VeeFriends ecosystem will grow by 55,555 NFTs after months of teasing.

With VeeFriends Series 2, each token will cost $995 USD in ETH, allowing NFT enthusiasts to join at a lower cost. The new series will be released on multiple dates in April.

Book Games NFT holders on the Friends List (whitelist) can mint Series 2 NFTs on April 12. Book Games holders have 32,000 NFTs.

VeeFriends Series 1 NFT holders can claim Series 2 NFTs on April 12. This allotment's supply is 10,255, like Series 1's.

On April 25, the public can buy 10,000 Series 2 NFTs. Unminted Friends List NFTs will be sold on this date, so this number may change.

The VeeFriends ecosystem will add 15 new characters (220 tokens each) on April 27. One character will be released per day for 15 days, and the only way to get one is to enter a daily raffle with Book Games tokens.

Series 2 NFTs won't give owners VeeCon access, but they will offer other benefits within the VaynerNFT ecosystem. Book Games and Series 2 will get new token burn mechanics in the upcoming drop.

Visit the VeeFriends blog for the latest collection info.

Where can you buy Gary Vee’s NFTs?

Need a VeeFriend NFT? Gary Vee recommends doing "50 hours of homework" before buying. OpenSea sells VeeFriends NFTs.

More on NFTs & Art

Amelia Winger-Bearskin

3 years ago

Hate NFTs? I must break some awful news to you...

If you think NFTs are awful, check out the art market.

The fervor around NFTs has subsided in recent months due to the crypto market crash and the media's short attention span. They were all anyone could talk about earlier this spring. Last semester, when passions were high and field luminaries were discussing "slurp juices," I asked my students and students from over 20 other universities what they thought of NFTs.

According to many, NFTs were either tasteless pyramid schemes or a new way for artists to make money. NFTs contributed to the climate crisis and harmed the environment, but so did air travel, fast fashion, and smartphones. Some students complained that NFTs were cheap, tasteless, algorithmically generated schlock, but others asked how this was different from other art.

I'm not sure what I expected, but the intensity of students' reactions surprised me. They had strong, emotional opinions about a technology I'd always considered administrative. NFTs address ownership and accounting, like most crypto/blockchain projects.

Art markets can be irrational, arbitrary, and subject to the same scams and schemes as any market. And maybe a few shenanigans that are unique to the art world.

The Fairness Question

Fairness, a deflating moral currency, was the general sentiment (the less of it in circulation, the more ardently we clamor for it.) These students, almost all of whom are artists, complained to the mismatch between the quality of the work in some notable NFT collections and the excessive amounts these items were fetching on the market. They can sketch a Bored Ape or Lazy Lion in their sleep. Why should they buy ramen with school loans while certain swindlers get rich?

I understand students. Art markets are unjust. They can be irrational, arbitrary, and governed by chance and circumstance, like any market. And art-world shenanigans.

Almost every mainstream critique leveled against NFTs applies just as easily to art markets

Over 50% of artworks in circulation are fake, say experts. Sincere art collectors and institutions are upset by the prevalence of fake goods on the market. Not everyone. Wealthy people and companies use art as investments. They can use cultural institutions like museums and galleries to increase the value of inherited art collections. People sometimes buy artworks and use family ties or connections to museums or other cultural taste-makers to hype the work in their collection, driving up the price and allowing them to sell for a profit. Money launderers can disguise capital flows by using market whims, hype, and fluctuating asset prices.

Almost every mainstream critique leveled against NFTs applies just as easily to art markets.

Art has always been this way. Edward Kienholz's 1989 print series satirized art markets. He stamped 395 identical pieces of paper from $1 to $395. Each piece was initially priced as indicated. Kienholz was joking about a strange feature of art markets: once the last print in a series sells for $395, all previous works are worth at least that much. The entire series is valued at its highest auction price. I don't know what a Kienholz print sells for today (inquire with the gallery), but it's more than $395.

I love Lee Lozano's 1969 "Real Money Piece." Lozano put cash in various denominations in a jar in her apartment and gave it to visitors. She wrote, "Offer guests coffee, diet pepsi, bourbon, half-and-half, ice water, grass, and money." "Offer real money as candy."

Lee Lozano kept track of who she gave money to, how much they took, if any, and how they reacted to the offer of free money without explanation. Diverse reactions. Some found it funny, others found it strange, and others didn't care. Lozano rarely says:

Apr 17 Keith Sonnier refused, later screws lid very tightly back on. Apr 27 Kaltenbach takes all the money out of the jar when I offer it, examines all the money & puts it all back in jar. Says he doesn’t need money now. Apr 28 David Parson refused, laughing. May 1 Warren C. Ingersoll refused. He got very upset about my “attitude towards money.” May 4 Keith Sonnier refused, but said he would take money if he needed it which he might in the near future. May 7 Dick Anderson barely glances at the money when I stick it under his nose and says “Oh no thanks, I intend to earn it on my own.” May 8 Billy Bryant Copley didn’t take any but then it was sort of spoiled because I had told him about this piece on the phone & he had time to think about it he said.

Smart Contracts (smart as in fair, not smart as in Blockchain)

Cornell University's Cheryl Finley has done a lot of research on secondary art markets. I first learned about her research when I met her at the University of Florida's Harn Museum, where she spoke about smart contracts (smart as in fair, not smart as in Blockchain) and new protocols that could help artists who are often left out of the economic benefits of their own work, including women and women of color.

Her talk included findings from her ArtNet op-ed with Lauren van Haaften-Schick, Christian Reeder, and Amy Whitaker.

NFTs allow us to think about and hack on formal contractual relationships outside a system of laws that is currently not set up to service our community.

The ArtNet article The Recent Sale of Amy Sherald's ‘Welfare Queen' Symbolizes the Urgent Need for Resale Royalties and Economic Equity for Artists discussed Sherald's 2012 portrait of a regal woman in a purple dress wearing a sparkling crown and elegant set of pearls against a vibrant red background.

Amy Sherald sold "Welfare Queen" to Princeton professor Imani Perry. Sherald agreed to a payment plan to accommodate Perry's budget.

Amy Sherald rose to fame for her 2016 portrait of Michelle Obama and her full-length portrait of Breonna Taylor, one of the most famous works of the past decade.

As is common, Sherald's rising star drove up the price of her earlier works. Perry's "Welfare Queen" sold for $3.9 million in 2021.

Imani Perry's early investment paid off big-time. Amy Sherald, whose work directly increased the painting's value and who was on an artist's shoestring budget when she agreed to sell "Welfare Queen" in 2012, did not see any of the 2021 auction money. Perry and the auction house got that money.

Sherald sold her Breonna Taylor portrait to the Smithsonian and Louisville's Speed Art Museum to fund a $1 million scholarship. This is a great example of what an artist can do for the community if they can amass wealth through their work.

NFTs haven't solved all of the art market's problems — fakes, money laundering, market manipulation — but they didn't create them. Blockchain and NFTs are credited with making these issues more transparent. More ideas emerge daily about what a smart contract should do for artists.

NFTs are a copyright solution. They allow us to hack formal contractual relationships outside a law system that doesn't serve our community.

Amy Sherald shows the good smart contracts can do (as in, well-considered, self-determined contracts, not necessarily blockchain contracts.) Giving back to our community, deciding where and how our work can be sold or displayed, and ensuring artists share in the equity of our work and the economy our labor creates.

Jim Clyde Monge

3 years ago

Can You Sell Images Created by AI?

Some AI-generated artworks sell for enormous sums of money.

But can you sell AI-Generated Artwork?

Simple answer: yes.

However, not all AI services enable allow usage and redistribution of images.

Let's check some of my favorite AI text-to-image generators:

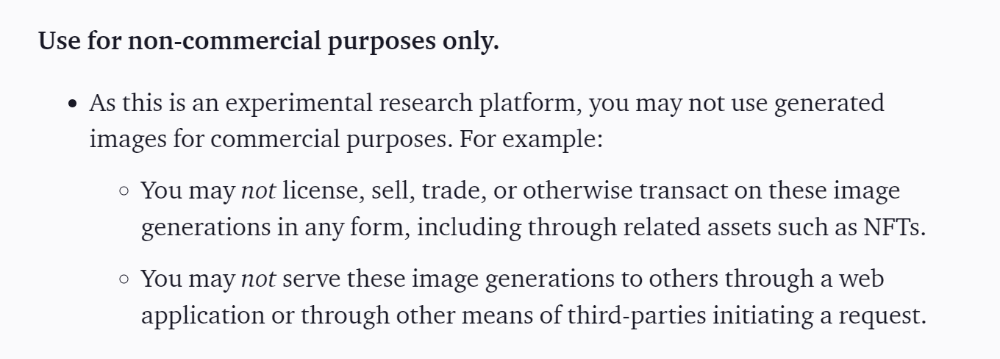

Dall-E2 by OpenAI

The AI art generator Dall-E2 is powerful. Since it’s still in beta, you can join the waitlist here.

OpenAI DOES NOT allow the use and redistribution of any image for commercial purposes.

Here's the policy as of April 6, 2022.

Here are some images from Dall-E2’s webpage to show its art quality.

Several Reddit users reported receiving pricing surveys from OpenAI.

This suggests the company may bring out a subscription-based tier and a commercial license to sell images soon.

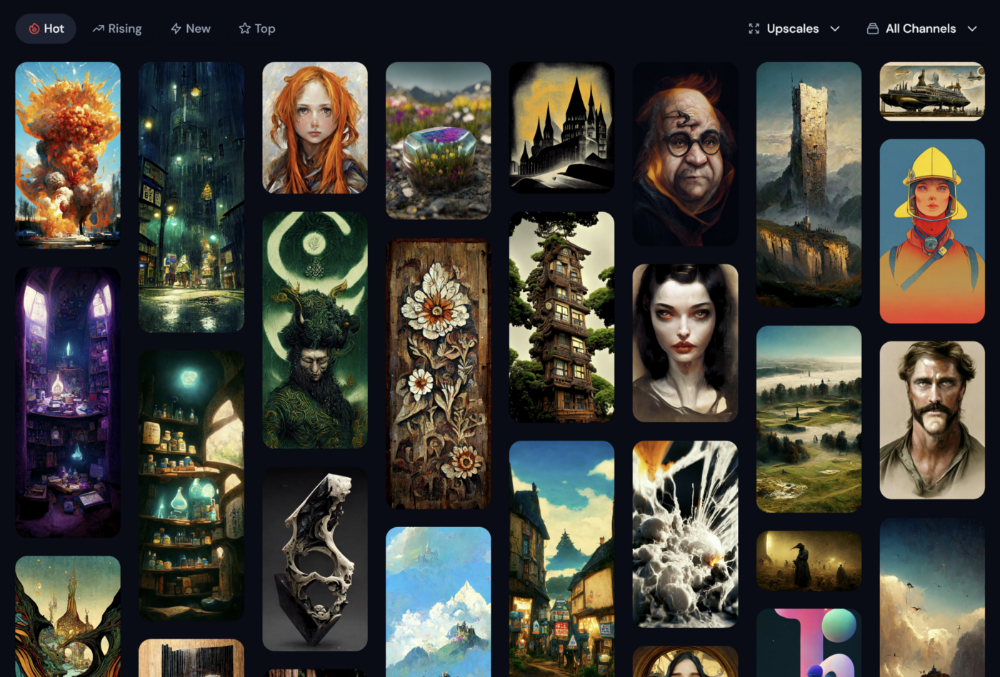

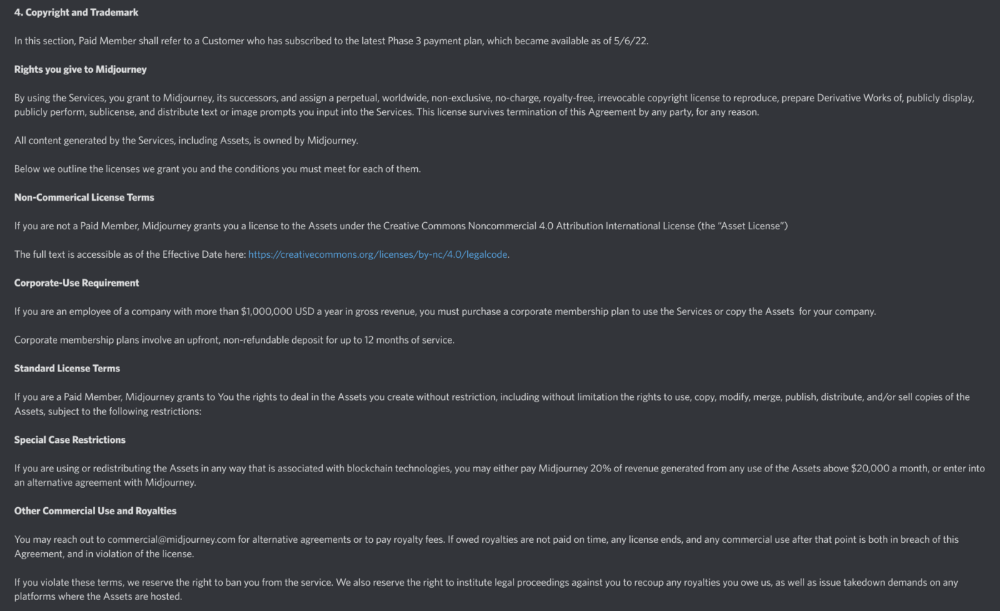

MidJourney

I like Midjourney's art generator. It makes great AI images. Here are some samples:

Standard Licenses are available for $10 per month.

Standard License allows you to use, copy, modify, merge, publish, distribute, and/or sell copies of the images, except for blockchain technologies.

If you utilize or distribute the Assets using blockchain technology, you must pay MidJourney 20% of revenue above $20,000 a month or engage in an alternative agreement.

Here's their copyright and trademark page.

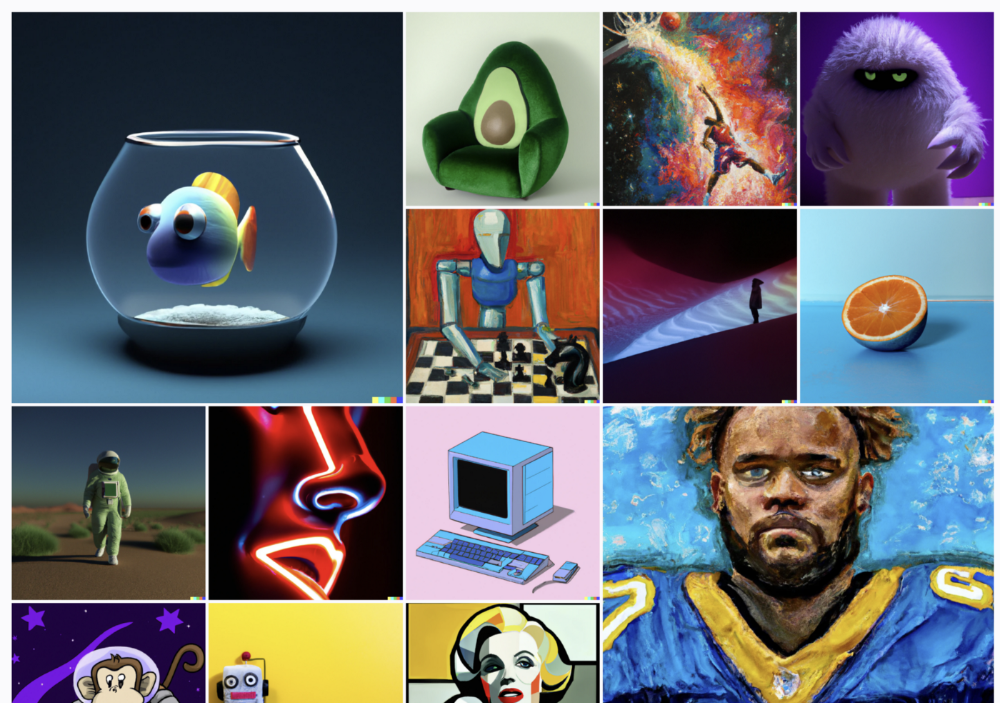

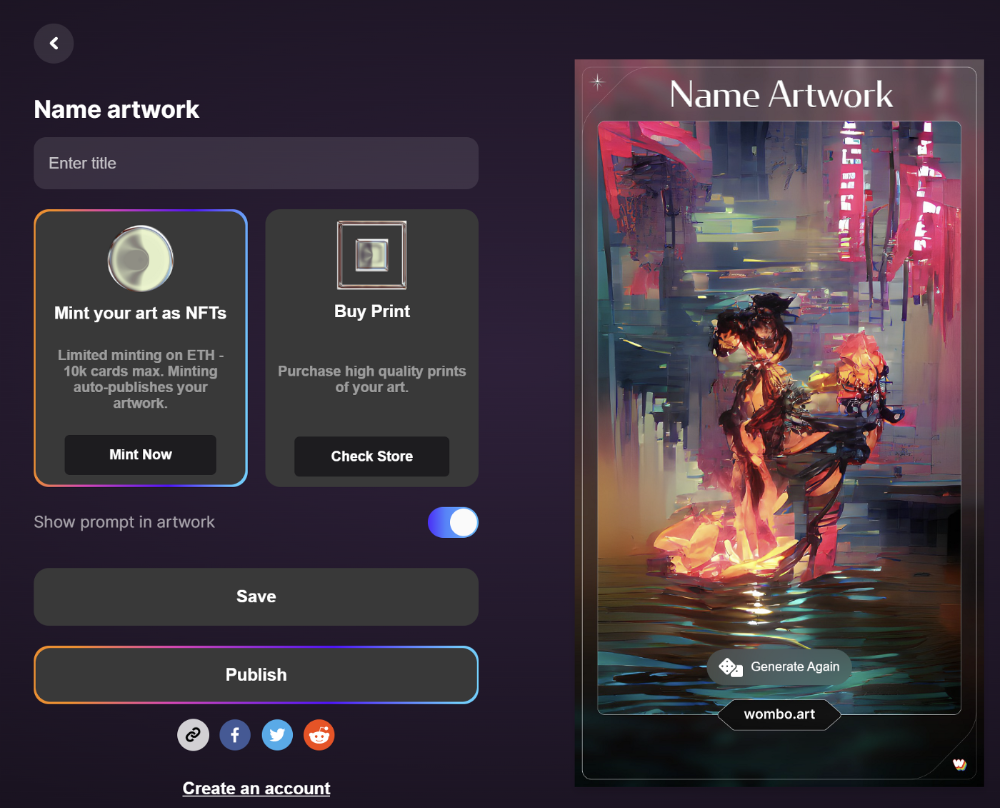

Dream by Wombo

Dream is one of the first public AI art generators.

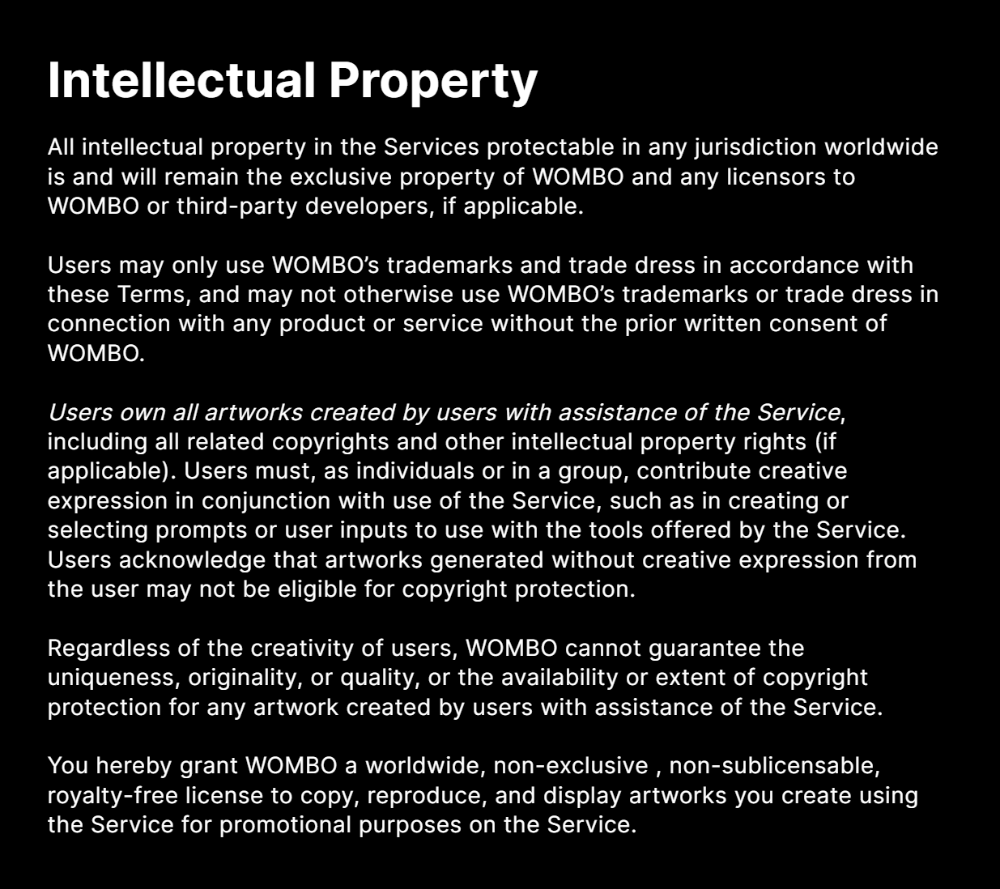

This AI program is free, easy to use, and Wombo gives a royalty-free license to copy or share artworks.

Users own all artworks generated by the tool. Including all related copyrights or intellectual property rights.

Here’s Wombos' intellectual property policy.

Final Reflections

AI is creating a new sort of art that's selling well. It’s becoming popular and valued, despite some skepticism.

Now that you know MidJourney and Wombo let you sell AI-generated art, you need to locate buyers. There are several ways to achieve this, but that’s for another story.

middlemarch.eth

3 years ago

ERC721R: A new ERC721 contract for random minting so people don’t snipe all the rares!

That is, how to snipe all the rares without using ERC721R!

Introduction: Blessed and Lucky

Mphers was the first mfers derivative, and as a Phunks derivative, I wanted one.

I wanted an alien. And there are only 8 in the 6,969 collection. I got one!

In case it wasn't clear from the tweet, I meant that I was lucky to have figured out how to 100% guarantee I'd get an alien without any extra luck.

Read on to find out how I did it, how you can too, and how developers can avoid it!

How to make rare NFTs without luck.

# How to mint rare NFTs without needing luck

The key to minting a rare NFT is knowing the token's id ahead of time.

For example, once I knew my alien was #4002, I simply refreshed the mint page until #3992 was minted, and then mint 10 mphers.

How did I know #4002 was extraterrestrial? Let's go back.

First, go to the mpher contract's Etherscan page and look up the tokenURI of a previously issued token, token #1:

As you can see, mphers creates metadata URIs by combining the token id and an IPFS hash.

This method gives you the collection's provenance in every URI, and while that URI can be changed, it affects everyone and is public.

Consider a token URI without a provenance hash, like https://mphers.art/api?tokenId=1.

As a collector, you couldn't be sure the devs weren't changing #1's metadata at will.

The API allows you to specify “if #4002 has not been minted, do not show any information about it”, whereas IPFS does not allow this.

It's possible to look up the metadata of any token, whether or not it's been minted.

Simply replace the trailing “1” with your desired id.

Mpher #4002

These files contain all the information about the mpher with the specified id. For my alien, we simply search all metadata files for the string “alien mpher.”

Take a look at the 6,969 meta-data files I'm using OpenSea's IPFS gateway, but you could use ipfs.io or something else.

Use curl to download ten files at once. Downloading thousands of files quickly can lead to duplicates or errors. But with a little tweaking, you should be able to get everything (and dupes are fine for our purposes).

Now that you have everything in one place, grep for aliens:

The numbers are the file names that contain “alien mpher” and thus the aliens' ids.

The entire process takes under ten minutes. This technique works on many NFTs currently minting.

In practice, manually minting at the right time to get the alien is difficult, especially when tokens mint quickly. Then write a bot to poll totalSupply() every second and submit the mint transaction at the exact right time.

You could even look for the token you need in the mempool before it is minted, and get your mint into the same block!

However, in my experience, the “big” approach wins 95% of the time—but not 100%.

“Am I being set up all along?”

Is a question you might ask yourself if you're new to this.

It's disheartening to think you had no chance of minting anything that someone else wanted.

But, did you have no opportunity? You had an equal chance as everyone else!

Take me, for instance: I figured this out using open-source tools and free public information. Anyone can do this, and not understanding how a contract works before minting will lead to much worse issues.

The mpher mint was fair.

While a fair game, “snipe the alien” may not have been everyone's cup of tea.

People may have had more fun playing the “mint lottery” where tokens were distributed at random and no one could gain an advantage over someone simply clicking the “mint” button.

How might we proceed?

Minting For Fashion Hats Punks, I wanted to create a random minting experience without sacrificing fairness. In my opinion, a predictable mint beats an unfair one. Above all, participants must be equal.

Sadly, the most common method of creating a random experience—the post-mint “reveal”—is deeply unfair. It works as follows:

- During the mint, token metadata is unavailable. Instead, tokenURI() returns a blank JSON file for each id.

- An IPFS hash is updated once all tokens are minted.

- You can't tell how the contract owner chose which token ids got which metadata, so it appears random.

Because they alone decide who gets what, the person setting the metadata clearly has a huge unfair advantage over the people minting. Unlike the mpher mint, you have no chance of winning here.

But what if it's a well-known, trusted, doxxed dev team? Are reveals okay here?

No! No one should be trusted with such power. Even if someone isn't consciously trying to cheat, they have unconscious biases. They might also make a mistake and not realize it until it's too late, for example.

You should also not trust yourself. Imagine doing a reveal, thinking you did it correctly (nothing is 100%! ), and getting the rarest NFT. Isn't that a tad odd Do you think you deserve it? An NFT developer like myself would hate to be in this situation.

Reveals are bad*

UNLESS they are done without trust, meaning everyone can verify their fairness without relying on the developers (which you should never do).

An on-chain reveal powered by randomness that is verifiably outside of anyone's control is the most common way to achieve a trustless reveal (e.g., through Chainlink).

Tubby Cats did an excellent job on this reveal, and I highly recommend their contract and launch reflections. Their reveal was also cool because it was progressive—you didn't have to wait until the end of the mint to find out.

In his post-launch reflections, @DefiLlama stated that he made the contract as trustless as possible, removing as much trust as possible from the team.

In my opinion, everyone should know the rules of the game and trust that they will not be changed mid-stream, while trust minimization is critical because smart contracts were designed to reduce trust (and it makes it impossible to hack even if the team is compromised). This was a huge mistake because it limited our flexibility and our ability to correct mistakes.

And @DefiLlama is a superstar developer. Imagine how much stress maximizing trustlessness will cause you!

That leaves me with a bad solution that works in 99 percent of cases and is much easier to implement: random token assignments.

Introducing ERC721R: A fully compliant IERC721 implementation that picks token ids at random.

ERC721R implements the opposite of a reveal: we mint token ids randomly and assign metadata deterministically.

This allows us to reveal all metadata prior to minting while reducing snipe chances.

Then import the contract and use this code:

What is ERC721R and how does it work

First, a disclaimer: ERC721R isn't truly random. In this sense, it creates the same “game” as the mpher situation, where minters compete to exploit the mint. However, ERC721R is a much more difficult game.

To game ERC721R, you need to be able to predict a hash value using these inputs:

This is impossible for a normal person because it requires knowledge of the block timestamp of your mint, which you do not have.

To do this, a miner must set the timestamp to a value in the future, and whatever they do is dependent on the previous block's hash, which expires in about ten seconds when the next block is mined.

This pseudo-randomness is “good enough,” but if big money is involved, it will be gamed. Of course, the system it replaces—predictable minting—can be manipulated.

The token id is chosen in a clever implementation of the Fisher–Yates shuffle algorithm that I copied from CryptoPhunksV2.

Consider first the naive solution: (a 10,000 item collection is assumed):

- Make an array with 0–9999.

- To create a token, pick a random item from the array and use that as the token's id.

- Remove that value from the array and shorten it by one so that every index corresponds to an available token id.

This works, but it uses too much gas because changing an array's length and storing a large array of non-zero values is expensive.

How do we avoid them both? What if we started with a cheap 10,000-zero array? Let's assign an id to each index in that array.

Assume we pick index #6500 at random—#6500 is our token id, and we replace the 0 with a 1.

But what if we chose #6500 again? A 1 would indicate #6500 was taken, but then what? We can't just "roll again" because gas will be unpredictable and high, especially later mints.

This allows us to pick a token id 100% of the time without having to keep a separate list. Here's how it works:

- Make a 10,000 0 array.

- Create a 10,000 uint numAvailableTokens.

- Pick a number between 0 and numAvailableTokens. -1

- Think of #6500—look at index #6500. If it's 0, the next token id is #6500. If not, the value at index #6500 is your next token id (weird!)

- Examine the array's last value, numAvailableTokens — 1. If it's 0, move the value at #6500 to the end of the array (#9999 if it's the first token). If the array's last value is not zero, update index #6500 to store it.

- numAvailableTokens is decreased by 1.

- Repeat 3–6 for the next token id.

So there you go! The array stays the same size, but we can choose an available id reliably. The Solidity code is as follows:

Unfortunately, this algorithm uses more gas than the leading sequential mint solution, ERC721A.

This is most noticeable when minting multiple tokens in one transaction—a 10 token mint on ERC721R costs 5x more than on ERC721A. That said, ERC721A has been optimized much further than ERC721R so there is probably room for improvement.

Conclusion

Listed below are your options:

- ERC721A: Minters pay lower gas but must spend time and energy devising and executing a competitive minting strategy or be comfortable with worse minting results.

- ERC721R: Higher gas, but the easy minting strategy of just clicking the button is optimal in all but the most extreme cases. If miners game ERC721R it’s the worst of both worlds: higher gas and a ton of work to compete.

- ERC721A + standard reveal: Low gas, but not verifiably fair. Please do not do this!

- ERC721A + trustless reveal: The best solution if done correctly, highly-challenging for dev, potential for difficult-to-correct errors.

Did I miss something? Comment or tweet me @dumbnamenumbers.

Check out the code on GitHub to learn more! Pull requests are welcome—I'm sure I've missed many gas-saving opportunities.

Thanks!

Read the original post here

You might also like

Asher Umerie

3 years ago

What is Bionic Reading?

Senses help us navigate a complicated world. They shape our worldview - how we hear, smell, feel, and taste. People claim a sixth sense, an intuitive capacity that extends perception.

Our brain is a half-pool of grey and white matter that stores data from our senses. Brains provide us context, so zombies' obsession makes sense.

Bionic reading uses the brain's visual information and context to simplify text comprehension.

Stay with me.

What is Bionic Reading?

Bionic reading is a software application established by Swiss typographic designer Renato Casutt. The term honors the brain (bio) and technology's collaboration to better text comprehension.

The image above shows two similar paragraphs with bionic reading.

Notice anything yet?

This Twitter user did.

I did too...

Image text describes bionic reading-

New method to aid reading by using artificial fixation points. The reader focuses on the highlighted starting letters, and the brain completes the word.

How is Bionic Reading possible?

Do you remember seeing social media posts asking you to stare at a black dot for 30 seconds (or more)? You blink and see an after-image on your wall.

Our brains are skilled at identifying patterns and'seeing' familiar objects, therefore optical illusions are conceivable.

Brain and sight collaborate well. Text comprehension proves it.

Considering evolutionary patterns, humans' understanding skills may be cosmic luck.

Scientists don't know why people can read and write, but they do know what reading does to the brain.

One portion of your brain recognizes words, while another analyzes their meaning. Fixation, saccade, and linguistic transparency/opacity aid.

Let's explain some terms.

-

Fixation is how the eyes move when reading. It's where you look. If the eyes fixate less, a reader can read quicker. [Eye fixation is a physiological process](Eye fixation is a naturally occurring physiological process) impacted by the reader's vocabulary, vision span, and text familiarity.

-

Saccade - Pause and look around. That's a saccade. Rapid eye movements that alter the place of fixation, as reading text or looking around a room. They can happen willingly (when you choose) or instinctively, even when your eyes are fixed.

-

Linguistic transparency and opacity analyze how well a composite word or phrase may be deduced from its constituents.

The Bionic reading website compares these tools.

Text highlights lead the eye. Fixation, saccade, and opacity can transfer visual stimuli to text, changing typeface.

## Final Thoughts on Bionic Reading

I'm excited about how this could influence my long-term assimilation and productivity.

This technology is still in development, with prototypes working on only a few apps. Like any new tech, it will be criticized.

I'll be watching Bionic Reading closely. Comment on it!

DC Palter

3 years ago

Is Venture Capital a Good Fit for Your Startup?

5 VC investment criteria

I reviewed 200 startup business concepts last week. Brainache.

The enterprises sold various goods and services. The concepts were achingly similar: give us money, we'll produce a product, then get more to expand. No different from daily plans and pitches.

Most of those 200 plans sounded plausible. But 10% looked venture-worthy. 90% of startups need alternatives to venture finance.

With the success of VC-backed businesses and the growth of venture funds, a common misperception is that investors would fund any decent company idea. Finding investors that believe in the firm and founders is the key to funding.

Incorrect. Venture capital needs investing in certain enterprises. If your startup doesn't match the model, as most early-stage startups don't, you can revise your business plan or locate another source of capital.

Before spending six months pitching angels and VCs, make sure your startup fits these criteria.

Likely to generate $100 million in sales

First, I check the income predictions in a pitch deck. If it doesn't display $100M, don't bother.

The math doesn't work for venture financing in smaller businesses.

Say a fund invests $1 million in a startup valued at $5 million that is later acquired for $20 million. That's a win everyone should celebrate. Most VCs don't care.

Consider a $100M fund. The fund must reach $360M in 7 years with a 20% return. Only 20-30 investments are possible. 90% of the investments will fail, hence the 23 winners must return $100M-$200M apiece. $15M isn't worth the work.

Angel investors and tiny funds use the same ideas as venture funds, but their smaller scale affects the calculations. If a company can support its growth through exit on less than $2M in angel financing, it must have $25M in revenues before large companies will consider acquiring it.

Aiming for Hypergrowth

A startup's size isn't enough. It must expand fast.

Developing a great business takes time. Complex technology must be constructed and tested, a nationwide expansion must be built, or production procedures must go from lab to pilot to factories. These can be enormous, world-changing corporations, but venture investment is difficult.

The normal 10-year venture fund life. Investments are made during first 3–4 years.. 610 years pass between investment and fund dissolution. Funds need their investments to exit within 5 years, 7 at the most, therefore add a safety margin.

Longer exit times reduce ROI. A 2-fold return in a year is excellent. Loss at 2x in 7 years.

Lastly, VCs must prove success to raise their next capital. The 2nd fund is raised from 1st fund portfolio increases. Third fund is raised using 1st fund's cash return. Fund managers must raise new money quickly to keep their jobs.

Branding or technology that is protected

No big firm will buy a startup at a high price if they can produce a competing product for less. Their development teams, consumer base, and sales and marketing channels are large. Who needs you?

Patents, specialist knowledge, or brand name are the only answers. The acquirer buys this, not the thing.

I've heard of several promising startups. It's not a decent investment if there's no exit strategy.

A company that installs EV charging stations in apartments and shopping areas is an example. It's profitable, repeatable, and big. A terrific company. Not a startup.

This building company's operations aren't secret. No technology to protect, no special information competitors can't figure out, no go-to brand name. Despite the immense possibilities, a large construction company would be better off starting their own.

Most venture businesses build products, not services. Services can be profitable but hard to safeguard.

Probable purchase at high multiple

Once a software business proves its value, acquiring it is easy. Pharma and medtech firms have given up on their own research and instead acquire startups after regulatory permission. Many startups, especially in specialized areas, have this weakness.

That doesn't mean any lucrative $25M-plus business won't be acquired. In many businesses, the venture model requires a high exit premium.

A startup invents a new glue. 3M, BASF, Henkel, and others may buy them. Adding more adhesive to their catalogs won't boost commerce. They won't compete to buy the business. They'll only buy a startup at a profitable price. The acquisition price represents a moderate EBITDA multiple.

The company's $100M revenue presumably yields $10m in profits (assuming they’ve reached profitability at all). A $30M-$50M transaction is likely. Not terrible, but not what venture investors want after investing $25M to create a plant and develop the business.

Private equity buys profitable companies for a moderate profit multiple. It's a good exit for entrepreneurs, but not for investors seeking 10x or more what PE firms pay. If a startup offers private equity as an exit, the conversation is over.

Constructed for purchase

The startup wants a high-multiple exit. Unless the company targets $1B in revenue and does an IPO, exit means acquisition.

If they're constructing the business for acquisition or themselves, founders must decide.

If you want an indefinitely-running business, I applaud you. We need more long-term founders. Most successful organizations are founded around consumer demands, not venture capital's urge to grow fast and exit. Not venture funding.

if you don't match the venture model, what to do

VC funds moonshots. The 10% that succeed are extraordinary. Not every firm is a rocketship, and launching the wrong startup into space, even with money, will explode.

But just because your startup won't make $100M in 5 years doesn't mean it's a bad business. Most successful companies don't follow this model. It's not venture capital-friendly.

Although venture capital gets the most attention due to a few spectacular triumphs (and disasters), it's not the only or even most typical option to fund a firm.

Other ways to support your startup:

Personal and family resources, such as credit cards, second mortgages, and lines of credit

bootstrapping off of sales

government funding and honors

Private equity & project financing

collaborating with a big business

Including a business partner

Before pitching angels and VCs, be sure your startup qualifies. If so, include them in your pitch.

Sam Hickmann

3 years ago

What is headline inflation?

Headline inflation is the raw Consumer price index (CPI) reported monthly by the Bureau of labour statistics (BLS). CPI measures inflation by calculating the cost of a fixed basket of goods. The CPI uses a base year to index the current year's prices.

Explaining Inflation

As it includes all aspects of an economy that experience inflation, headline inflation is not adjusted to remove volatile figures. Headline inflation is often linked to cost-of-living changes, which is useful for consumers.

The headline figure doesn't account for seasonality or volatile food and energy prices, which are removed from the core CPI. Headline inflation is usually annualized, so a monthly headline figure of 4% inflation would equal 4% inflation for the year if repeated for 12 months. Top-line inflation is compared year-over-year.

Inflation's downsides

Inflation erodes future dollar values, can stifle economic growth, and can raise interest rates. Core inflation is often considered a better metric than headline inflation. Investors and economists use headline and core results to set growth forecasts and monetary policy.

Core Inflation

Core inflation removes volatile CPI components that can distort the headline number. Food and energy costs are commonly removed. Environmental shifts that affect crop growth can affect food prices outside of the economy. Political dissent can affect energy costs, such as oil production.

From 1957 to 2018, the U.S. averaged 3.64 percent core inflation. In June 1980, the rate reached 13.60%. May 1957 had 0% inflation. The Fed's core inflation target for 2022 is 3%.

Central bank:

A central bank has privileged control over a nation's or group's money and credit. Modern central banks are responsible for monetary policy and bank regulation. Central banks are anti-competitive and non-market-based. Many central banks are not government agencies and are therefore considered politically independent. Even if a central bank isn't government-owned, its privileges are protected by law. A central bank's legal monopoly status gives it the right to issue banknotes and cash. Private commercial banks can only issue demand deposits.

What are living costs?

The cost of living is the amount needed to cover housing, food, taxes, and healthcare in a certain place and time. Cost of living is used to compare the cost of living between cities and is tied to wages. If expenses are higher in a city like New York, salaries must be higher so people can live there.

What's U.S. bureau of labor statistics?

BLS collects and distributes economic and labor market data about the U.S. Its reports include the CPI and PPI, both important inflation measures.