A Guide to VeeFriends and Series 2

VeeFriends is one of the most popular and unique NFT collections. VeeFriends launched around the same time as other PFP NFTs like Bored Ape Yacht Club.

Vaynerchuk (GaryVee) took a unique approach to his large-scale project, which has influenced the NFT ecosystem. GaryVee's VeeFriends is one of the most successful NFT membership use-cases, allowing him to build a community around his creative and business passions.

What is VeeFriends?

GaryVee's NFT collection, VeeFriends, was released on May 11, 2021. VeeFriends Mini Drops, Book Games, and a forthcoming large-scale "Series 2" collection all stem from the initial drop of 10,255 tokens.

In "Series 1," there are G.O.O. tokens (Gary Originally Owned). GaryVee reserved 1,242 NFTs (over 12% of the supply) for his own collection, so only 9,013 were available at the Series 1 launch.

Each Series 1 token represents one of 268 human traits hand-drawn by Vaynerchuk. Gary Vee's NFTs offer owners incentives.

Who made VeeFriends?

Gary Vaynerchuk, AKA GaryVee, is an influential figure in the NFT space. Vaynerchuk is the chairman of New York-based communications company VaynerX. The CEO of VaynerMedia and VaynerSports and a bestselling author, Gary Vee is reportedly worth $200 million.

GaryVee went from NFT collector to creator, launching VaynerNFT to help celebrities and brands.

As one of the NFT ecosystem's most prolific voices, Vaynerchuk has wide influence across it, and his VeeFriends ecosystem keeps growing.

Vaynerchuk, a trend expert, thinks NFTs will be around for the rest of his life and VeeFriends will be a landmark project.

Why use VeeFriends NFTs?

The first VeeFriends collection has generated nearly $160 million in sales on OpenSea. GaryVee insisted that the first 10,255 VeeFriends were just the beginning.

Book Games were announced to the VeeFriends community in August 2021. Mini Drops joined VeeFriends two months later.

Book Games

GaryVee's book "Twelve and a Half: Leveraging the Emotional Ingredients for Business Success" inspired Book Games. Even prior to the announcement Vaynerchuk had mapped out the utility of the book on an NFT scale. Book Games tied his book to the VeeFriends ecosystem and solidified its place in the collection.

GaryVee says Book Games is a layer 2 NFT project with 125,000 burnable tokens. Vaynerchuk's NFT fans were incentivized to buy as many copies of his new book as possible to receive NFT rewards later.

First, a bit about “layer 2.”

Layer 2 blockchain solutions help scale applications by routing transactions away from Ethereum Mainnet (layer 1). These solutions benefit from Mainnet's decentralized security model but increase transaction speed and reduce gas fees.

Polygon (integrated into OpenSea) and Immutable X are popular Ethereum layer 2 solutions. GaryVee chose Immutable X to reduce gas costs (transaction fees). Given the large supply of Book Games tokens, this decision will likely benefit the VeeFriends community, especially if the games run forever.

What's the strategy?

The VeeFriends patriarch announced on Aug. 27, 2021, that for every 12 books ordered during the Book Games promotion, customers would receive one NFT via airdrop. After nearly 100 days, GaryVee had sold over a million copies and announced that Book Games would become an actual game on Jan. 10, 2022.
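As a rough illustration of the 12-for-1 promotion, the sketch below converts an order size into airdropped NFTs. The function name and the rule that leftover books (fewer than 12) earn nothing are assumptions made for this example, not details confirmed by the promotion.

```python
BOOKS_PER_NFT = 12  # ratio stated in the Book Games announcement


def book_games_nfts(books_ordered: int) -> int:
    """Return how many NFTs an order earns at one NFT per 12 books.

    Assumption: a partial set of fewer than 12 books earns nothing.
    """
    if books_ordered < 0:
        raise ValueError("books_ordered must be non-negative")
    return books_ordered // BOOKS_PER_NFT


for order in (11, 12, 30, 120):
    print(order, "books ->", book_games_nfts(order), "NFT(s)")
```

Under these assumptions an order of 30 books earns two NFTs, with six books left over.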

Immutable X's trading options make Book Games a "game." Book Games players can trade NFTs for other NFTs, sports cards, VeeCon tickets, and other prizes. Book Games tokens can also earn whitelist access to other VeeFriends projects, which we'll cover in the Series 2 section.

VeeFriends Mini Drops

GaryVee launched VeeFriends Mini Drops two months after Book Games, focusing on collaboration, scarcity, and the characters' "cultural longevity."

Spooky Vees, a collection of 31 1/1 Halloween-themed VeeFriends, was released on Halloween. VeeFriends owners could claim these NFTs on a first-come, first-served basis.

Mini Drops includes Gift Goat NFTs. By holding the Gift Goat VeeFriends character, collectors will receive 18 exclusive gifts curated by GaryVee and the team. Each gifting experience includes one physical gift and one NFT out of 555, to match the 555 Gift Goat tokens.

Gift Goat holders have gotten NFTs from Danny Cole (Creature World), Isaac "Drift" Wright (Where My Vans Go), Pop Wonder, and more.

GaryVee is poised to release the largest expansion of the VeeFriends and VaynerNFT ecosystem to date with VeeFriends Series 2.

VeeCon 101

By owning VeeFriends NFTs, collectors can join the VeeFriends community and attend VeeCon in 2022. The conference is only open to VeeCon NFT ticket holders (VeeFriends holders, with possibly more to be announced) and will feature Beeple, Steve Aoki, and even Snoop Dogg.

The VeeFriends floor price in Q1 2022 has remained at 16 ETH ($52,000), making VeeCon unattainable for most NFT enthusiasts. Why would someone spend that much crypto on a Minneapolis "superconference" ticket? Because of Gary Vaynerchuk.

Everything to know about VeeFriends Series 2

After months of teasing, Vaynerchuk revealed in April 2022 that the VeeFriends ecosystem will grow by 55,555 NFTs.

With VeeFriends Series 2, each token will cost $995 USD in ETH, allowing NFT enthusiasts to join at a lower cost. The new series will be released on multiple dates in April.

Book Games NFT holders on the Friends List (whitelist) can mint Series 2 NFTs on April 12. This allotment's supply is 32,000 NFTs.

VeeFriends Series 1 NFT holders can claim Series 2 NFTs on April 12. This allotment's supply is 10,255, like Series 1's.

On April 25, the public can buy 10,000 Series 2 NFTs. Unminted Friends List NFTs will be sold on this date, so this number may change.

The VeeFriends ecosystem will add 15 new characters (220 tokens each) on April 27. One character will be released per day for 15 days, and the only way to get one is to enter a daily raffle with Book Games tokens.
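The allotments listed above can be checked against the announced total of 55,555. The sketch below simply adds them up; the dictionary labels are shorthand for this example, and the public-sale figure is the planned 10,000, which the text notes may change.

```python
# Series 2 allotments as described in the text above.
allotments = {
    "Book Games Friends List mint": 32_000,
    "Series 1 holder claim": 10_255,
    "Public sale (planned)": 10_000,
    "New characters (15 x 220 tokens)": 15 * 220,
}

series_2_total = sum(allotments.values())

for label, supply in allotments.items():
    print(f"{label:<35} {supply:>7,}")
print(f"{'Total':<35} {series_2_total:>7,}")
```

The four allotments add up to exactly 55,555, matching the announced Series 2 expansion.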

Series 2 NFTs won't give owners VeeCon access, but they will offer other benefits within the VaynerNFT ecosystem. Book Games and Series 2 will get new token burn mechanics in the upcoming drop.

Visit the VeeFriends blog for the latest collection info.

Where can you buy Gary Vee’s NFTs?

Want a VeeFriends NFT? Gary Vee recommends doing "50 hours of homework" before buying. VeeFriends NFTs are available on OpenSea.

Sea Launch

4 years ago

A guide to NFT pre-sales and whitelists

Before we dig through NFT whitelists and pre-sales, if you know absolutely nothing about NFTs, check our NFT Glossary.

What are pre-sales and whitelists on NFTs?

An NFT pre-sale, as the name implies, allows community members or early supporters of an NFT project to mint before the public, usually via a whitelist or mint pass.

Collectors can use mint passes to claim NFTs during the public sale. Because a mint pass is redeemed by "burning" an NFT held in a specific crypto wallet, the collector does not have to worry about gas price spikes.

A whitelist is used to approve a crypto wallet address for an NFT pre-sale. Much like an early-access list, it guarantees that a set number of crypto wallets can each mint one or more NFTs.

New NFT projects can do a pre-sale without a whitelist, but whitelists are good practice: they help avoid gas wars and give supporters a fair shot at minting an NFT before it launches on competitive NFT marketplaces like OpenSea, Magic Eden, or CNFT.
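To make the whitelist idea concrete, here is a minimal off-chain sketch of an approved-wallet check with a per-wallet mint cap. The class name, the cap, and the placeholder addresses are all hypothetical; real projects usually enforce these rules in the mint contract itself, which this example does not attempt to model.

```python
class PresaleAllowlist:
    """Tracks which wallets may mint in a pre-sale and how many each has minted."""

    def __init__(self, addresses, max_per_wallet=1):
        # Compare addresses case-insensitively by storing them in lowercase.
        self._allowed = {address.lower() for address in addresses}
        self._max_per_wallet = max_per_wallet
        self._minted = {}

    def can_mint(self, address, quantity=1):
        """True if the wallet is approved and stays within its cap."""
        wallet = address.lower()
        if quantity < 1 or wallet not in self._allowed:
            return False
        return self._minted.get(wallet, 0) + quantity <= self._max_per_wallet

    def record_mint(self, address, quantity=1):
        """Register a mint, refusing wallets that are not eligible."""
        if not self.can_mint(address, quantity):
            raise PermissionError(f"{address} may not mint {quantity} NFT(s)")
        wallet = address.lower()
        self._minted[wallet] = self._minted.get(wallet, 0) + quantity


# Placeholder addresses, not real wallets.
presale = PresaleAllowlist(["0xAbC123", "0xdef456"], max_per_wallet=2)
print(presale.can_mint("0xabc123"))   # approved wallet
presale.record_mint("0xABC123", 2)    # uses up the wallet's cap
print(presale.can_mint("0xabc123"))   # cap reached
print(presale.can_mint("0x999999"))   # never whitelisted
```

The per-wallet cap is the part that matters for fairness: without it, one approved wallet could mint most of the pre-sale supply.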

Should NFT projects do pre-sales or whitelists? 👇

The reasons to do pre-sales or a whitelist for NFT creators:

Time the market and gain traction.

Pre-sales or whitelists can help NFT projects gauge interest early on.

Whitelist spots filling up quickly is usually a sign of a successful launch, though it does not guarantee NFT longevity (more on that later). Also, full whitelists create FOMO and momentum for the public sale among non-whitelisted NFT collectors.

If whitelist signups are low or slow, projects may need to work on their vision, community, or product. Alternatively, the market may be in a bear cycle. Either way, the signal helps NFT projects time the market.

Reward the early NFT Community members.

Pre-sales and whitelists can help NFT creators reward early supporters.

First, by splitting the minting process into two phases, early adopters get a chance to mint one or more NFTs from their collection at a discounted or even free price.

Did you know that BAYC started at 0.08 ETH each? A serum that allowed you to mint a Mutant Ape has become as valuable as the original BAYC.

Second, whitelists encourage early supporters to help build a project's community in exchange for a slot or status. For example, if you invite 10 people to the NFT project's Discord community, you may get a better ranking or even a whitelist spot.

Pre-sale and whitelisting have become popular ways for new projects to grow their communities and secure future buyers.

Prevent gas wars.

Most new NFTs are created on the Ethereum blockchain, which has the highest transaction fees (also known as gas) compared with other chains (Solana, Cardano, Polygon, Binance Smart Chain, etc.).

An NFT public sale turns into a gas war when a large number of NFT collectors (or bots) try to mint an NFT at the same time.

When upcoming NFT projects are hyped and very popular, competing collectors are willing to pay higher gas fees to prioritize their transactions and outbid others.

Pre-sales and whitelisting prevent gas wars by breaking the minting process into smaller batches of members or phased launches.
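The toy model below shows why a crowded public sale becomes a bidding contest and why a smaller whitelisted batch does not. It is a deliberate simplification that ranks pending mints purely by gas bid; the wallet names, bids, and slot count are made up, and Ethereum's actual fee market (base fee plus priority fee) is more involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MintTx:
    wallet: str
    gas_price_gwei: int  # what the collector bids to get included


def include_in_block(pending, slots):
    """Return the transactions that get in when only `slots` of them fit.

    Simplification: the highest gas bids win; ties keep arrival order.
    """
    ranked = sorted(pending, key=lambda tx: tx.gas_price_gwei, reverse=True)
    return ranked[:slots]


# Hypothetical public sale: five wallets compete for two slots.
public_sale = [
    MintTx("alice", 40),
    MintTx("bob", 55),
    MintTx("bot-1", 300),
    MintTx("carol", 45),
    MintTx("bot-2", 280),
]
winners = include_in_block(public_sale, slots=2)
print([tx.wallet for tx in winners])               # the two highest bidders
print(min(tx.gas_price_gwei for tx in winners))    # the bid needed to get in

# Hypothetical pre-sale batch: only two whitelisted wallets, two slots.
presale_batch = [tx for tx in public_sale if tx.wallet in {"alice", "bob"}]
print([tx.wallet for tx in include_in_block(presale_batch, slots=2)])
```

In the public sale the bots' high bids crowd out everyone else, while in the whitelisted batch both collectors get in at their ordinary bids because there is nobody to outbid.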

The reasons to do pre-sales or a whitelist for NFT collectors:

How do I get on an NFT whitelist?

- Popular NFT collections act as a launchpad for other new or hyped NFT collections.

Example: Interfaces NFTs gives out 100 whitelist spots to Deadfellaz NFT holders. Both NFT projects win. Interfaces benefits from Deadfellaz's success and brand equity.

In this case, to get whitelisted, NFT collectors need to hold the specific NFT that is acting as a launchpad.

- An NFT studio or collection that launches a new NFT project and rewards previous NFT holders with whitelist spots or pre-sale access.

In this case, the whitelist is open only to previous NFT holders or community members.

- NFT Alpha Groups are closed, small, tight-knit Discord servers where members share whitelist spots or giveaways from upcoming NFTs.

The benefit of being in an alpha group is getting information about new NFTs first and getting in on pre-sale/whitelist before everyone else.

There are some entry barriers to alpha groups, but if you're active in the NFT community, you'll eventually bump into, be invited to, or form one.

- A whitelist spot is awarded to members of an NFT community who are the most active and engaged.

This participation reward is the most democratic. To get a chance, collectors must work hard and play to their strengths.

Whitelisting participation examples:

- Raffles, games, and contests: NFT community raffles, games, and contests. For example, invite 10 people to a project's Discord community to get a whitelist spot.

- Fan art: It is only natural to reward those who add value and grow the community by whitelisting the best fan art and/or artists.

- Giveaways: Lucky-number crypto wallet giveaways promoted by an NFT community. To grow their communities, NFT projects often give free NFTs to lucky collectors.

- Activate your voice in the NFT Discord community: Use voice channels to get the NFT team's attention and possibly get whitelisted.

The advantage of whitelists or NFT pre-sales.

This quote from Chainalysis's NFT report is the best answer:

“Whitelisting isn’t just some nominal reward — it translates to dramatically better investing results. OpenSea data shows that users who make the whitelist and later sell their newly-minted NFT gain a profit 75.7% of the time, versus just 20.8% for users who do so without being whitelisted. Not only that, but the data suggests it’s nearly impossible to achieve outsized returns on minting purchases without being whitelisted.” Full report here.

Sure, it's not all about cash. However, any NFT collector should feel secure in their investment by owning a piece of a valuable and thriving NFT project. These stats help collectors understand that getting in early on an NFT project (via whitelist or pre-sale) is more likely to yield a better return.

The downsides of pre-sales & whitelists for NFT creators.

Pre-sales and whitelists can cause issues for NFT creators and collectors.

NFT flippers

Flippers are NFT collectors who only want to profit from early minting (pre-sale) or a low mint cost (via whitelist). They go after the discounted price in order to resell the NFT on a secondary market like OpenSea or Solanart.

For example, a 1,000-NFT Solana collection allows 100 people to mint one NFT each at 0.25 SOL. The public sale price for the remaining 900 NFTs is 1 SOL. If an NFT collector sells their discounted NFT for 0.5 SOL, the secondary market floor price falls below the public mint price.

This may deter potential NFT collectors. Furthermore, without a cap in the pre-sale minting phase, flippers can get as many NFTs as possible to sell for a profit, dumping them in secondary markets and driving down the floor price.
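The arithmetic behind the flipping example can be laid out as a short sketch. It uses only the prices from the example above and ignores marketplace fees, royalties, and gas, which is an assumption made to keep the numbers simple.

```python
# Prices from the example above, in SOL.
PRESALE_PRICE_SOL = 0.25
PUBLIC_PRICE_SOL = 1.0
RESALE_PRICE_SOL = 0.5

# What the flipper makes on each discounted NFT (fees ignored).
flip_profit = RESALE_PRICE_SOL - PRESALE_PRICE_SOL
flip_return_pct = 100 * flip_profit / PRESALE_PRICE_SOL

# What the flip does to everyone who minted in the public sale.
floor_below_public_mint = RESALE_PRICE_SOL < PUBLIC_PRICE_SOL
public_minter_paper_loss = PUBLIC_PRICE_SOL - RESALE_PRICE_SOL

print(f"Flipper profit: {flip_profit} SOL ({flip_return_pct:.0f}% return)")
print(f"Floor below public mint price: {floor_below_public_mint}")
print(f"Paper loss per public minter: {public_minter_paper_loss} SOL")
```

The flipper doubles their money while a public minter who paid 1 SOL is suddenly sitting on an NFT listed at half that price, which is why an uncapped pre-sale can scare off later buyers.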

Hijacking NFT sites, communities, and pre-sales phase

Scammers target NFT teams and their communities by creating convincing but fake websites, whitelist links, or Discord channels.

Established and new NFT projects must stay vigilant and make sure their communities always know which links are official, how the whitelist or pre-sale rules work, and how the team will (or will not) contact community members.

To avoid scams around the pre-sale phase, some NFT projects also create one mint contract for the whitelisted crypto wallets and a separate one for the public sale phase.

Scam NFT projects

We've seen a lot of mid-mint or post-launch rug pulls, indicating that some bad NFT projects are trying to scam NFT communities and marketplaces for quick profit. What happened to Magic Eden's launchpad recently will help you understand the scam.

We discussed the benefits and drawbacks of NFT pre-sales and whitelists for both projects and collectors.

Finally, some practical tools and tips for finding new NFTs 👇

Tools & resources to find new NFT on pre-sale or to get on a whitelist:

To never miss an update, an important pre-sale date, or a giveaway, create a TweetDeck or Tweeten Twitter dashboard that tracks hyped NFT project pages, hashtags (#NFTGiveaways, #NFTCommunity), and big NFT influencers.

Search for upcoming NFT launches that have been vetted by the marketplace and try to get whitelisted before the public launch.

Use time-saving discovery platforms like sealaunch.xyz for NFT pre-sales and upcoming launches. How do we help NFT collectors find projects? We list each project's official social media links, description, pre-sale or public sale dates, price, and supply. We're also working with Dune on NFT data analysis to help NFT collectors make better decisions.

Don't invest what you can't afford to lose, because the project may fail or get rug-pulled. Find NFT projects that you want to be a part of and support.

Read original post here

Jim Clyde Monge

3 years ago

Can You Sell Images Created by AI?

Some AI-generated artworks sell for enormous sums of money.

But can you sell AI-Generated Artwork?

Simple answer: yes.

However, not all AI services allow usage and redistribution of images.

Let's check some of my favorite AI text-to-image generators:

Dall-E2 by OpenAI

The AI art generator Dall-E2 is powerful. Since it’s still in beta, you can join the waitlist here.

OpenAI DOES NOT allow the use and redistribution of any image for commercial purposes.

Here's the policy as of April 6, 2022.

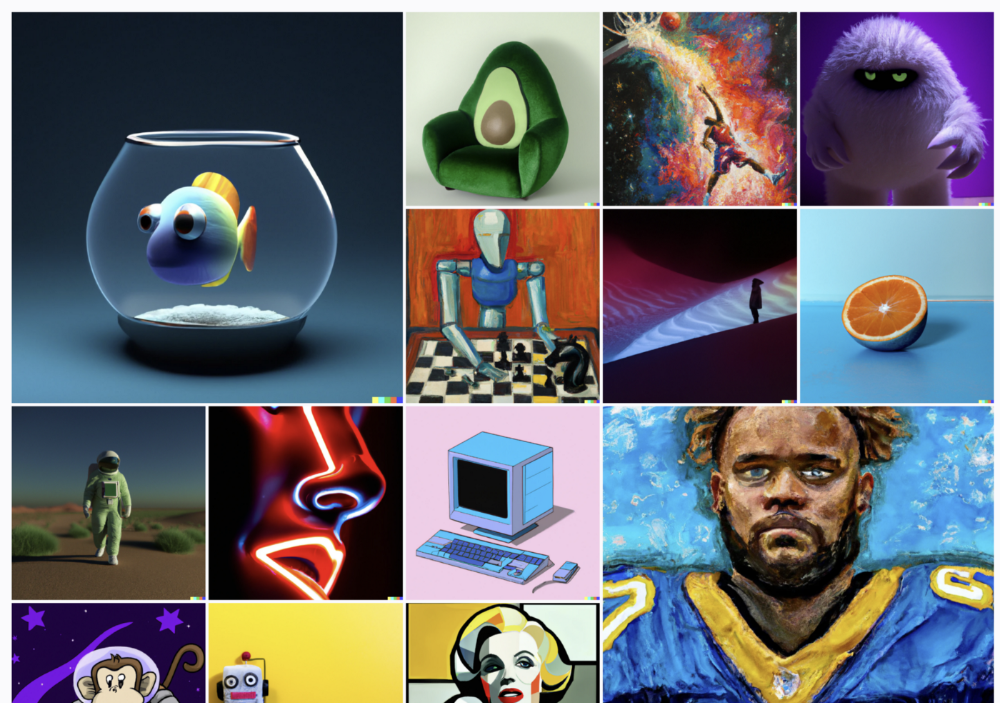

Here are some images from Dall-E2’s webpage to show its art quality.

Several Reddit users reported receiving pricing surveys from OpenAI.

This suggests the company may bring out a subscription-based tier and a commercial license to sell images soon.

MidJourney

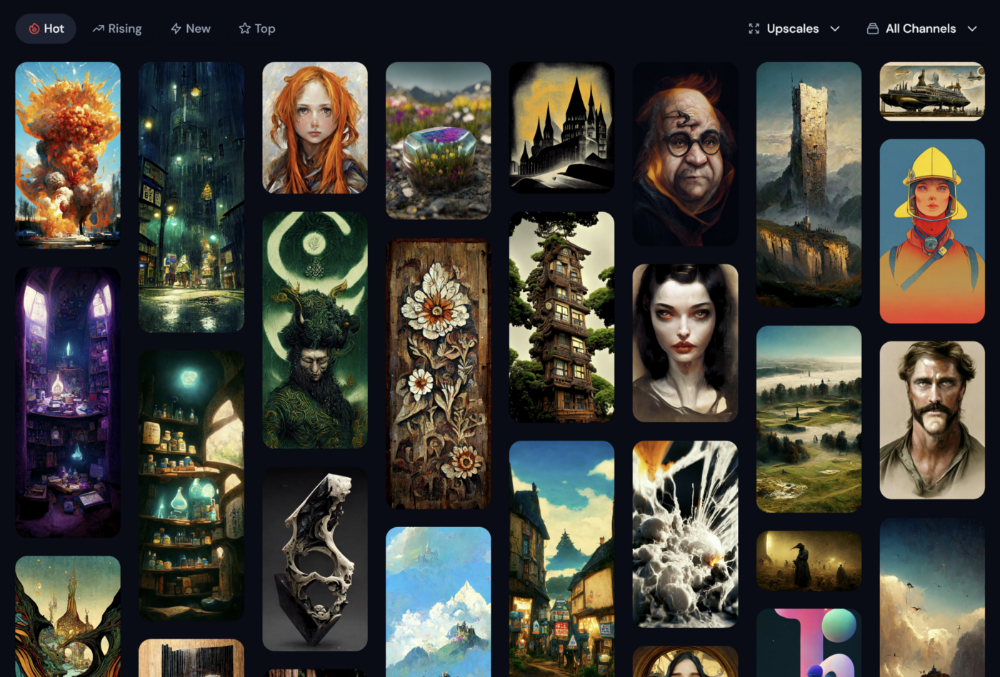

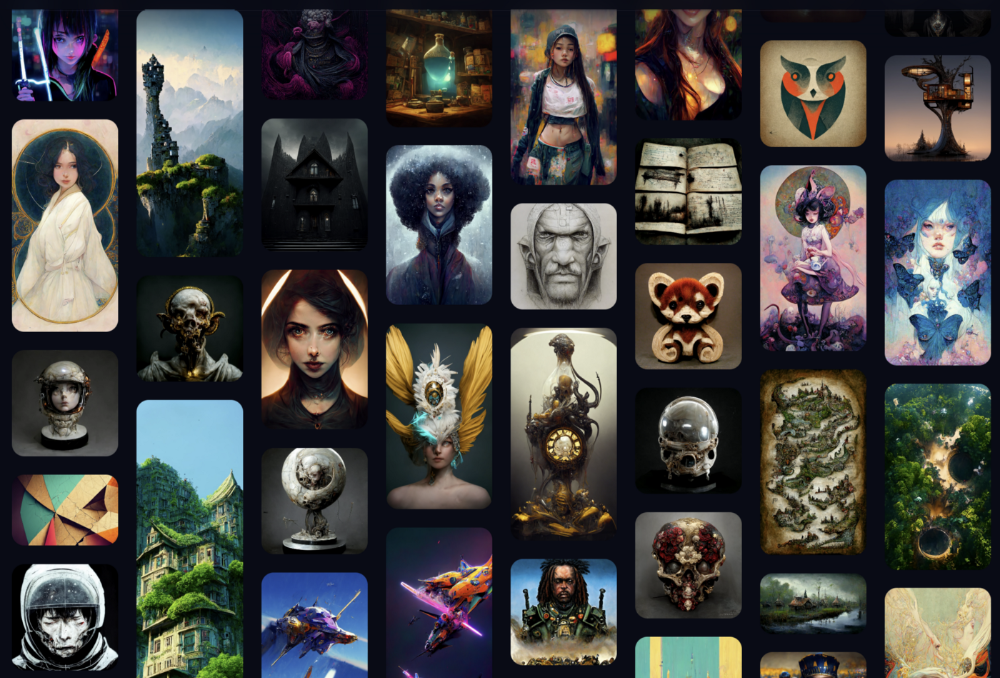

I like Midjourney's art generator. It makes great AI images. Here are some samples:

Standard Licenses are available for $10 per month.

The Standard License allows you to use, copy, modify, merge, publish, distribute, and/or sell copies of the images, except where blockchain technologies are involved.

If you utilize or distribute the Assets using blockchain technology, you must pay MidJourney 20% of revenue above $20,000 a month or engage in an alternative agreement.
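To see what the blockchain clause could mean in practice, here is a small sketch of the monthly payment. It assumes the 20% applies only to the portion of revenue above $20,000; the wording could also be read as 20% of all revenue once the threshold is passed, so treat this as one interpretation and check the license text itself. The function name is hypothetical.

```python
THRESHOLD_USD = 20_000  # monthly revenue threshold quoted above
RATE_PERCENT = 20       # share owed on revenue above the threshold


def blockchain_revenue_share(monthly_revenue_usd: int) -> float:
    """Amount owed for one month, in USD.

    Assumption: only revenue above the threshold is subject to the 20%.
    """
    excess = max(0, monthly_revenue_usd - THRESHOLD_USD)
    return excess * RATE_PERCENT / 100


for revenue in (15_000, 20_000, 50_000):
    print(f"${revenue:,} revenue -> ${blockchain_revenue_share(revenue):,.2f} owed")
```

Under this reading, a month with $50,000 in blockchain-related revenue would owe $6,000, and any month at or below $20,000 would owe nothing.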

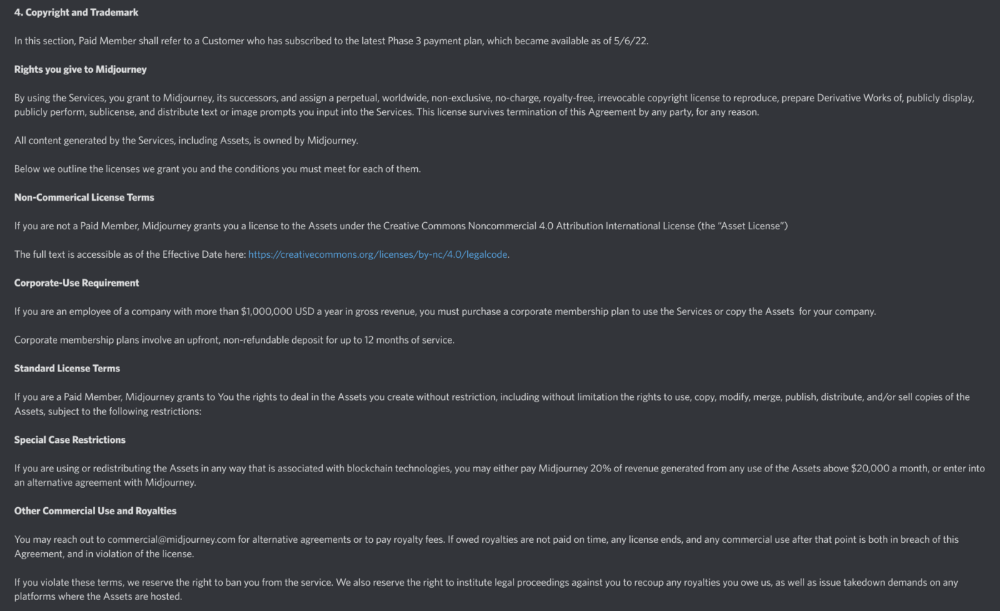

Here's their copyright and trademark page.

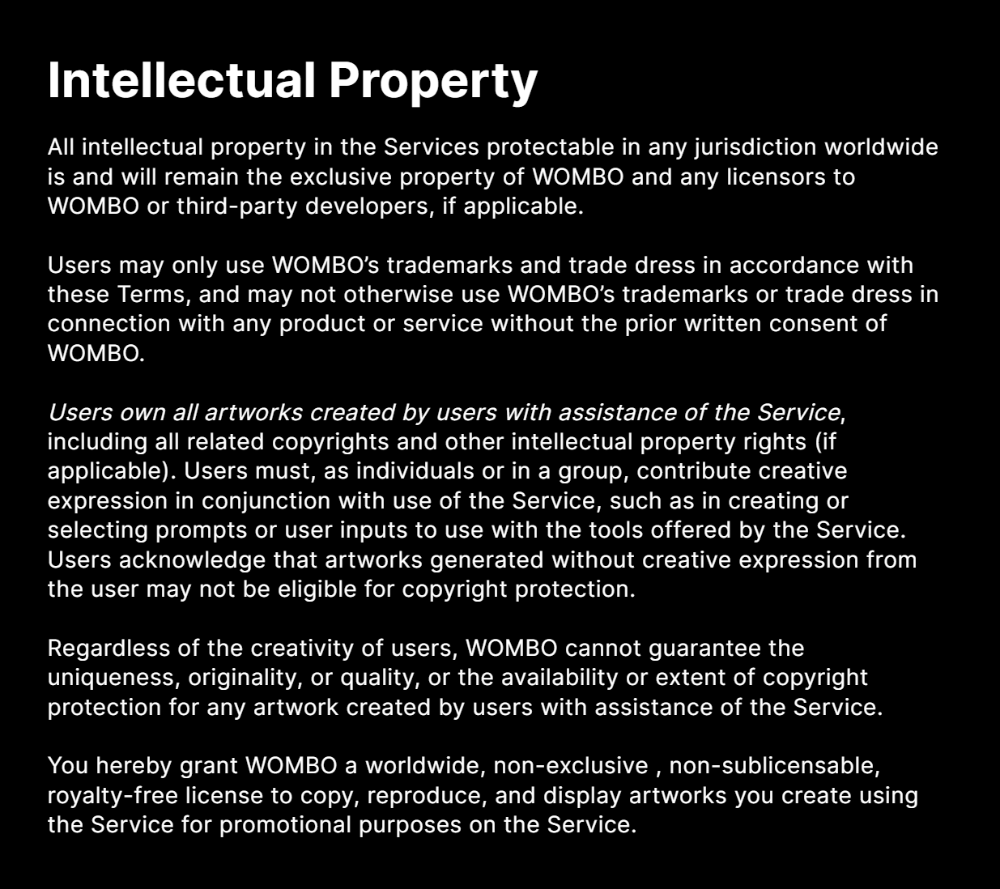

Dream by Wombo

Dream is one of the first public AI art generators.

This AI program is free, easy to use, and Wombo gives a royalty-free license to copy or share artworks.

Users own all artworks generated by the tool, including all related copyrights or intellectual property rights.

Here’s Wombo's intellectual property policy.

Final Reflections

AI is creating a new sort of art that's selling well. It’s becoming popular and valued, despite some skepticism.

Now that you know MidJourney and Wombo let you sell AI-generated art, you need to locate buyers. There are several ways to achieve this, but that’s for another story.

Alex Carter

3 years ago

Metaverse, Web 3, and NFTs are BS

Most crypto is probably too.

The goals of Web 3 and the metaverse are admirable and attractive. Who doesn't want an internet owned by users? Who wouldn't want a digital realm where anything is possible? A better way to collaborate and visit pals.

Companies pursue profits endlessly. Infinite growth and revenue are expected, and if a corporation needs to sacrifice profits to safeguard users, the CEO, board of directors, and any executives will lose to the system of incentives that (1) retains workers with shares and (2) makes a company answerable to all of its shareholders. Only the government can guarantee user protections, but we know how successful that is. This is nothing new, just a problem with modern capitalism and tech platforms that a user-owned internet might remedy. Moxie, the founder of Signal, has a good articulation of some of these current Web 2 tech platform problems (but I forget the timestamp); thoughts on JRE aside, this episode is worth listening to (it’s about a bunch of other stuff too).

Moxie Marlinspike, founder of Signal, on the Joe Rogan Experience podcast.

Source: https://open.spotify.com/episode/2uVHiMqqJxy8iR2YB63aeP?si=4962b5ecb1854288

Web 3 champions are premature. There was so much spectacular growth during Web 2 that the next wave of founders want to make an even bigger impact, while investors old and new want a chance to get a piece of the moonshot action. Worse, crypto enthusiasts believe — and financially need — the fact of its success to be true, whether or not it is.

I’m doubtful that it will play out like current proponents say. Crypto has been the white-hot focus of SV’s best and brightest for a long time yet still struggles to come up with any mainstream use case other than ‘buy, HODL, and believe’: a store of value for your financial goals and wishes. Some kind of metaverse is likely, but will it be decentralized, mostly in VR, or will Meta (previously FB) play a big role? Unlikely.

METAVERSE

The metaverse exists already. Our digital lives span apps, platforms, and games. I can design a 3D house, invite people, use Discord, and hang around in an artificial environment. Millions of gamers do this in Rust, Minecraft, Valheim, and Animal Crossing, among other games. Discord's voice chat and Slack-like servers/channels are the present social anchor, but the interface, integrations, and data portability will improve. Soon you can stream YouTube videos on digital house walls. You can doodle, create art, play Jackbox, and walk through a door to play Apex Legends, Fortnite, etc. Not just gaming. Digital whiteboards and screen sharing enable real-time collaboration. They’ll review code and operate enterprises. Music is played and made. In digital living rooms, they'll watch movies, sports, comedy, and Twitch. They'll tweet, laugh, learn, and shittalk.

The metaverse is the evolution of our digital life at home, the third place. The closest analog would be Discord and the integration of Facebook, Slack, YouTube, etc. into a single, 3D, customizable hangout space.

I'm not certain this experience can be hugely decentralized and smoothly choreographed, managed, and run, or that VR — a luxury, cumbersome, and questionably relevant technology — must be part of it. Eventually, VR will be pragmatic, achievable, and superior to real life in many ways. A total sensory experience like the Matrix or Sword Art Online, where we're physically hooked into the Internet yet in our imaginations we're jumping, flying, and achieving athletic feats we never could in reality; exploring realms far grander than our own (as grand as it is). That VR is different from today's.

Ben Thompson released an episode of Exponent after Facebook changed its name to Meta. Ben was suspicious about many metaverse champion claims, but he made a good analogy between Oculus and the PC. The PC was initially far too pricey for the ordinary family to afford. It began as a business tool. It got so powerful and pervasive that it affected our personal life. Price continues to plummet and so much consumer software was produced that it's impossible to envision life without a home computer (or in our pockets). If Facebook shows product market fit with VR in business, through use cases like remote work and collaboration, maybe VR will become practical in our personal lives at home.

Before PCs, we relied on Blockbuster, the Yellow Pages, cabs to get to the airport, handwritten taxes, landline phones to schedule social events, and other archaic methods. It is impossible for me to conceive what VR, in the form of headsets and hand controllers, stands to give both professional and especially personal digital experiences that is an order of magnitude better than what we have today. Is looking around better than using a mouse to examine a 3D landscape? Do the hand controls make work or gaming 10x or 100x more fun or efficient? Will VR replace scalable Web 2 methods and applications like Web 1 and Web 2 did for analog? I don't know.

My guess is that the metaverse will arrive slowly, initially on displays we presently use, with more app interoperability. I doubt that it will be controlled by the people or by Facebook, a corporation that struggles to properly innovate internally, as practically every large digital company does. Large tech organizations are lousy at hiring product-savvy employees, and if they do, they rarely let them explore new things.

These companies act like business schools when they seek founders' results, with bureaucracy and dependency. Which company launched the last popular consumer software product that wasn't a clone or acquisition? Recent examples are scarce.

Web 3

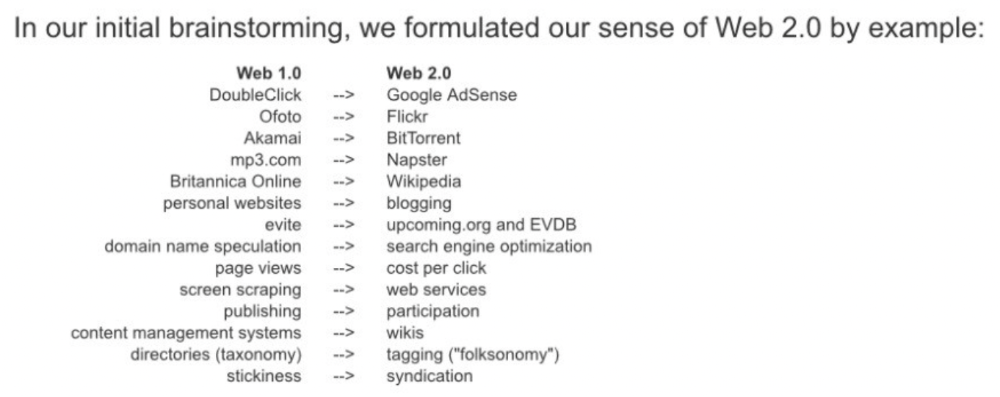

Investors and entrepreneurs of Web 3 firms are declaring victory: 'Web 3 is here!' 'Web 3 is the future!' Yet many profitable Web 2 enterprises already existed when Web 2 was defined. The word was created to explain user behavior shifts, not a personal pipe dream.

Origins of Web 2: http://www.oreilly.com/pub/a/web2/archive/what-is-web-20.html

One of these Web 3 startups may provide the connecting tissue to link all these experiences or become one of the major new digital locations. Even so, successful players will likely use centralized power arrangements, as Web 2 businesses do now. Some Web 2 startups integrated our digital lives. Rockmelt (2010–2013) was a customizable browser with bespoke connectors to every program a user wanted; imagine seeing Facebook, Twitter, Discord, Netflix, YouTube, etc. all in one location. Failure. Who knows what Opera's doing?

Silicon Valley and tech Twitter in general have a history of jumping on dumb bandwagons that go nowhere. Dot-com crash in 2000? The huge deployment of capital into bad ideas and businesses is well-documented. And live video. It was the future until it became a niche sector for gamers. Live audio will play out a similar reality as CEOs with little comprehension of audio and no awareness of lasting new user behavior deceive each other into making more and bigger investments on fool's gold. Twitter trying to buy Clubhouse for $4B, Spotify buying Greenroom, Facebook exploring live audio and 'Tiktok for audio,' and now Amazon developing a live audio platform. This live audio frenzy won't be worth their time or energy. Blind guides blind. Instead of learning from prior failures like Twitter buying Periscope for $100M pre-launch and pre-product market fit, they're betting on unproven and uncompelling experiences.

NFTs

NFTs are also nonsense. Take Loot, a time-limited bag drop of "things" (text on the blockchain) for a game that didn't exist, bought by rich techies too busy to play video games and foolish enough to think they're getting in early on something with a big reward. What gaming studio is incentivized to use these items? Who's encouraged to join? No one cares besides Loot owners who don't have NFTs. Skill, merit, and effort should be rewarded with rare things for gamers. Even if a small minority of gamers can make a living playing, the average game's major appeal has never been to make actual money - that's a profession.

No game stays popular forever, so how is this objective sustainable? Once popularity and usage drop, exclusive crypto or NFTs will fall. And if NFTs are designed to have cross-game appeal, incentives apart, 30 years from now any new game will need millions of pre-existing objects to build around before they start. It doesn’t work.

Many games already feature item economies based on real in-game scarcity, generally for cosmetic things to avoid pay-to-win, which undermines scaled gaming incentives for huge player bases. Counter-Strike, Rust, etc. may be bought and sold on Steam with real money. Since the 1990s, unofficial cross-game marketplaces have sold in-game objects and currencies. NFTs aren't needed. Making a popular, enjoyable, durable game is already difficult.

With NFTs, certain JPEGs on the internet went from useless to selling for $69 million. Why? Crypto, Web 3, early Internet collectibles. NFTs are digital Beanie Babies (unlike NFTs, Beanie Babies were a popular children's toy; their destinies are the same). NFTs are worthless and scarce. They appeal to crypto enthusiasts seeking a practical use case to support their theory and boost their own fortune. They also appeal to SV insiders desperate not to miss the next big thing, not knowing what it will be. NFTs aren't about paying artists and creators who don't get credit for their work.

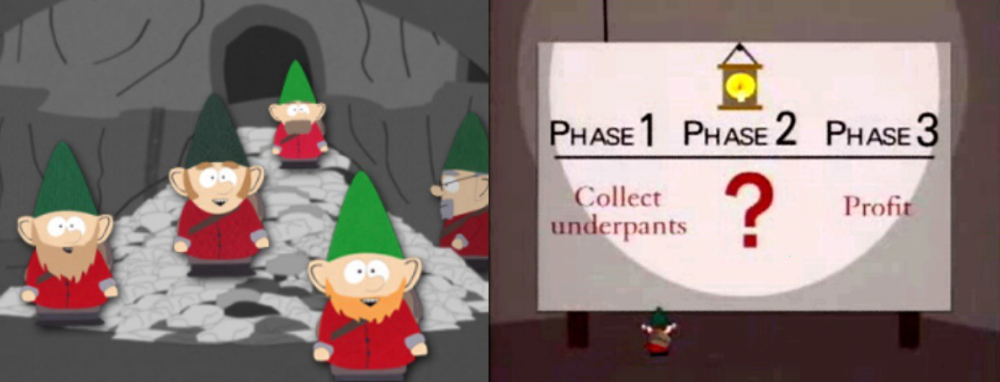

South Park's Underpants Gnomes

NFTs are a benign, foolish plan to earn money on par with South Park's underpants gnomes. At worst, they're the world of hucksterism and poor performers. Or those with money and enormous followings who, like everyone, don't completely grasp cryptocurrencies but are motivated by greed and status and believe Gary Vee's claim that CryptoPunks are the next Facebook. Gary's watertight logic: if NFT prices dip, they're on the same path as the most successful corporation in human history; buy the dip! NFTs aren't businesses or museum-worthy art. They're bs.

Gary Vee compares NFTs to Amazon.com. vm.tiktok.com/TTPdA9TyH2

We grew up collecting: Magic: The Gathering (MTG) cards printed in the 90s are now worth over $30,000. Imagine buying a digital Magic card with no underlying foundation. No one plays the game because it doesn't exist. An NFT is a contextless image someone conned you into buying a certificate for, but anyone may copy, paste, and use. Replace MTG with Pokemon for younger readers.

When Gary Vee strongarms 30 tech billionaires and YouTube influencers into buying CryptoPunks, they'll talk about it on Twitch, YouTube, podcasts, Twitter, etc. That will convince average folks that the product has value. These guys are smart and/or rich, so I'll get in early like them. Crypto is similar. No solid, scaled, mainstream use case exists, and no one knows where it's headed, but since the global crypto financial bubble hasn't burst and many people have made insane fortunes, regular people are putting real money into something that is highly speculative and could be nothing because they want a piece of the action. Who doesn’t want free money? Rich techies and influencers won't be affected; normal folks will.

Imagine removing every $1 invested in Bitcoin instantly. What would happen? How far would Bitcoin fall? Over 90%, maybe even 95%, and Bitcoin would be dead. Bitcoin as an investment is the only scalable widespread use case: it's confidence that a better use case will arise and that being early pays handsomely. It's like pouring a trillion dollars into a company with no business strategy or users and a CEO who makes vague future references.

New tech and efforts may provoke a 'get off my lawn' mentality as you approach 40, but I've always prided myself on having a decent bullshit detector, and it's flying off the handle at this foolishness. If we can accomplish a functional, responsible, equitable, and ethical user-owned internet, I'm for it.

Postscript:

I wanted to summarize my opinions because I've been angry about this for a while but just sporadically tweeted about it. A friend handed me a Dan Olson YouTube video just before publication. He's more knowledgeable, articulate, and convincing about crypto. It's worth seeing:

This post is a summary. See the original one here.

Esteban

3 years ago

The Berkus Startup Valuation Method: What Is It?

What Is That?

Berkus is a pre-revenue valuation method based exclusively on qualitative criteria, like Scorecard.

Few firms match their financial estimates, especially in the early stages, so valuation methodologies like the Berkus method are a good way to establish a valuation when the economic measures are not reliable.

How does it work?

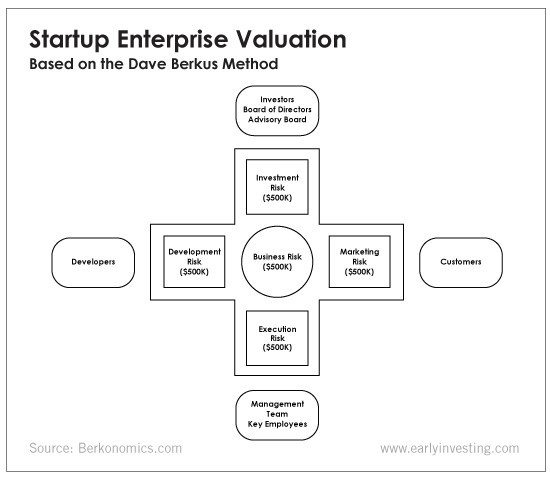

This technique evaluates five key success factors.

Fundamental principle

Technology

Execution

Strategic alliances in its primary market

Production, followed by sales

The Berkus technique values the business idea and four success factors. As seen in the matrix below, each of these dimensions corresponds to a risk to the startup's success.

It assigns $0-$500,000 to each of these five areas. Five areas at up to $500,000 each give a maximum pre-money valuation of $2.5M.

These amounts depend heavily on geography: they use the US as a baseline and differ in every country in Europe.

A set of standards for analyzing each dimension individually

Fundamental principle (or strength of the idea)

Ideas are worthless; execution matters. Most of us can relate to seeing a new business open in our area or a startup get funded and thinking, "I had this concept years ago!" Someone did it.

The concept remains. To assess the idea's viability, we must consider several criteria.

The concept's exclusivity: It is necessary to protect a product or service's concept using patents and copyrights. Additionally, it must be capable of generating large profits.

Planned growth and growth that goes in a specific direction have a lot of potential, therefore incorporating them into a business is really advantageous.

The ability of a concept to grow: A venture's ability to generate scalable revenue is a key factor in its emergence and continuation. A startup needs a scalable idea in order to compete successfully in the market.

The attraction of a business idea to a broad spectrum of people is significantly influenced by the current socio-political climate. Thus, the requirement for the assumption of conformity.

Concept validation: Ideas must go through rigorous testing with a variety of audiences in order to lower risk during the implementation phase.

Technology (Prototype)

This aspect addresses the startup's technological risk. How well does the startup's prototype hold up against cyber threats, GDPR compliance (in Europe), tech stack replication difficulty, etc.?

Execution

Check the management team's efficacy. A potential angel investor must verify the founders' experience and track record with previous ventures. Good leadership is needed to chart a ship's course.

Strategic alliances in its primary market

Existing and new relationships will play a vital role in the development of both B2B and B2C startups. What synergies does the startup already have, and which potential ones could it build?

Production, followed by sales (product rollout)

Startup success depends on its manufacturing and product rollout. It depends on the overall addressable market, the startup's ability to market and sell their product, and their capacity to provide consistent, high-quality support.

Example

We're now founders of EyeCaramba, a machine vision-assisted streaming platform. My imagination always goes to poor puns when naming a startup.

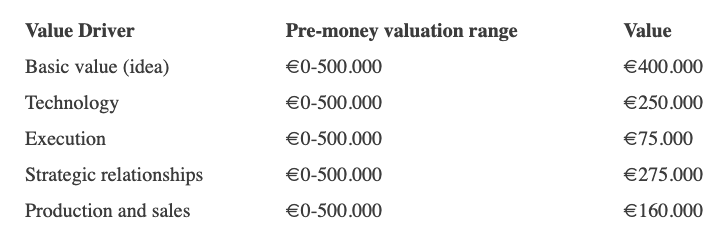

Since we're first-time founders and the Berkus technique depends exclusively on qualitative methods and the evaluator's skill, we ask our angel-investor acquaintance for a pre-money appraisal of EyeCaramba.

Our friend offers us the following table:

Because we're first-time founders, our pal lowered our Execution score. He sees the idea's value and knows that the gaming industry is red-hot, with worse startup ideas getting funded, so he gave the basic value (the idea) the highest score.

EyeCaramba's pre-money valuation is $400,000 + $250,000 + $75,000 + $275,000 + $164,000 = $1,164,000 (about $1.16M). Good.
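The sum above can be checked with a short Python sketch. The mapping of each amount to a factor is an assumption: the friend's table is not reproduced here, so the amounts are assigned in the order the five factors are listed earlier, which is consistent with the high idea score and the low Execution score. The names `BERKUS_CAP` and `berkus_valuation` are illustrative, not part of the method itself.

```python
# Per-factor ceiling described in the text ($0 to $500,000 per factor).
BERKUS_CAP = 500_000


def berkus_valuation(scores: dict[str, int]) -> int:
    """Add up the per-factor amounts after checking each one is within the cap."""
    for factor, amount in scores.items():
        if not 0 <= amount <= BERKUS_CAP:
            raise ValueError(f"{factor}: {amount} is outside 0..{BERKUS_CAP}")
    return sum(scores.values())


# ASSUMPTION: amounts are matched to factors in the order the article lists them.
eyecaramba = {
    "Fundamental principle (idea)": 400_000,
    "Technology": 250_000,
    "Execution": 75_000,
    "Strategic alliances": 275_000,
    "Production and sales": 164_000,
}

total = berkus_valuation(eyecaramba)
max_valuation = BERKUS_CAP * len(eyecaramba)

print(f"EyeCaramba pre-money valuation: ${total:,}")
print(f"Maximum possible under this scheme: ${max_valuation:,}")
```

With five factors capped at $500,000 each, the ceiling works out to the $2.5M mentioned earlier, and EyeCaramba lands a little under half of it.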

Dung Claire Tran

3 years ago

Is the future of brand marketing with virtual influencers?

Digital influencers that mimic humans are on the rise.

Lil Miquela has 3M Instagram followers, 3.6M TikTok followers, and 30K Twitter followers. She has appeared on magazine covers and worked with Prada, Dior, and Calvin Klein. Miquela released Not Mine in 2017 and launched Hard Feelings at Lollapalooza this year. This isn't surprising, given the rise of influencer marketing.

What may be unexpected is that Miquela isn't real. Brud, a Los Angeles startup, created her in 2016.

Lil Miquela is one of many rising virtual influencers in the new era of social media marketing. She acts like a real person and performs the same tasks as sports stars and models.

The emergence of online influencers

Before 2018, computer-generated characters were rare. Since the virtual human industry boomed, they've appeared in marketing efforts worldwide.

In 2020, the WHO partnered up with Atlanta-based virtual influencer Knox Frost (@knoxfrost) to gather contributions for the COVID-19 Solidarity Response Fund.

Lu do Magalu (@magazineluiza) has been the virtual spokeswoman for Magalu since 2009, using social media to promote reviews, product recommendations, unboxing videos, and brand updates. Magalu's 10-year profit was $552M.

In 2020, PUMA partnered with Southeast Asia's first virtual model, Maya (@mayaaa.gram). She joined Singaporean actor Tosh Zhang in the PUMA campaign. Local virtual influencer Ava Lee-Graham (@avagram.ai) partnered with retail firm BHG to promote their in-house labels.

In Japan, Imma (@imma.gram) is the face of Nike, PUMA, Dior, Salvatore Ferragamo SpA, and Valentino. Imma's bubblegum pink bob and ultra-fine fashion landed her on the cover of Grazia magazine.

Lotte Home Shopping created Lucy (@here.me.lucy) in September 2020. She made her TV debut as a Christmas show host in 2021. Since then, she has gained 100K Instagram followers and 13K TikTok followers.

Liu Yexi gained 3 million fans in five days on Douyin, China's TikTok, in 2021. Her two-minute video went viral overnight. She has posted 6 videos and has 8.3 million Douyin followers.

China's virtual human industry was worth $487 million in 2020, up 70% year over year, and is expected to reach $875.9 million in 2021.

Investors worldwide are interested. Imma's creator, Aww Inc., raised $1 million from Coral Capital in September 2020, according to Bloomberg. Superplastic Inc., the Vermont-based startup behind influencers Janky and Guggimon, had raised $16 million by 2020, with Craft Ventures, SV Angels, and Scooter Braun among the investors. Crunchbase shows the company has raised $47 million in total.

The industries they represent, including Augmented and Virtual reality, were worth $14.84 billion in 2020 and are projected to reach $454.73 billion by 2030, a CAGR of 40.7%, according to PR Newswire.
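As a quick sanity check on the quoted growth rate, the compound annual growth rate can be recomputed from the two endpoint figures. This is a minimal sketch; the function name `cagr` is illustrative, and it assumes the ten-year span from 2020 to 2030 stated in the text.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the text, in billions of US dollars.
rate = cagr(start_value=14.84, end_value=454.73, years=2030 - 2020)
print(f"Implied CAGR: {rate:.1%}")
```

The result comes out at roughly 41% per year, in line with the quoted 40.7%; the small gap is likely down to rounding in the published figures.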

Advantages for brands

Forbes suggests brands embrace computer-generated influencers. The reasons include:

Unlimited creative opportunities: Because brands can personalize everything, from a persona's look and activities to the style of its content, virtual influencers can be tailored to a brand's needs and personality.

100% brand control: Brand managers have full control over virtual influencers, so they no longer have to cede control and rely on content creators to work the brand into their own storytelling and style. Because virtual influencers are completely scandal-free, they can keep producing social media content that promotes a brand's identity and ideals.

Long-term cost savings: Because virtual influencers are made of pixels, they can be reused endlessly and never lose their looks. They are always available and can be placed anywhere in the world, or even in space, to fit a brand concept. The cost of creating their content also does not rise in step with their expanding fan base.

Introduction to the metaverse: Statista reports that 75% of American consumers between the ages of 18 and 25 follow at least one virtual influencer. Marketers that back virtual celebrities can therefore reach younger audiences that are more tech-savvy and accustomed to the digital world. As entirely computer-generated 3D personas, virtual influencers can be placed in any digital space, including the metaverse. With younger generations adopting the metaverse quickly, virtual influencers can give brands a smooth transition into this new digital universe, building brand trust and emotional ties.

Better engagement than human influencers: A HypeAuditor study found that virtual influencers have roughly three times the engagement of their human counterparts. The data might not accurately reflect the entire sector, but it still suggests virtual influencers can boost brand engagement.

Concerns about influencers created by computers

Virtual influencers could encourage excessive beauty standards in South Korea, which has a $10.7 billion plastic surgery industry.

A classic Korean beauty has a small face, huge eyes, and pale, immaculate skin. Virtual influencers like Lucy have these traits. According to Lee Eun-hee, a professor at Inha University's Department of Consumer Science, this could make national beauty standards more unrealistic, increasing demand for plastic surgery or cosmetic items.

Elsewhere in the world, the concerns are about selling products to consumers who don't realize the models aren't human, and about the potential for cultural appropriation when creating influencers of other ethnicities, which some call digital blackface.

Meta, Facebook and Instagram's parent corporation, acknowledges this risk.

“Like any disruptive technology, synthetic media has the potential for both good and harm. Issues of representation, cultural appropriation and expressive liberty are already a growing concern,” the company stated in a blog post. “To help brands navigate the ethical quandaries of this emerging medium and avoid potential hazards, (Meta) is working with partners to develop an ethical framework to guide the use of (virtual influencers).”

Despite theoretical controversies, the industry will likely survive. Companies think virtual influencers are the next frontier in the digital world, which includes the metaverse, virtual reality, and digital currency.

In conclusion

Virtual influencers can garner millions of followers online and help marketers reach young audiences. According to a YouGov survey, the real impact of computer-generated influencers is still unknown because people prefer genuine connections. Virtual characters can nonetheless supplement brand marketing methods. As brands become metaverse-ready, the author predicts virtual influencer endorsement will continue to expand.

William Brucee

3 years ago

This person is probably Satoshi Nakamoto.

Who founded bitcoin is the biggest mystery in technology today, not how it works.

On October 31, 2008, Satoshi Nakamoto posted a whitepaper to a cryptography email list. We are still puzzled by the mastermind who changed monetary history.

Journalists and bloggers have tried in vain to uncover bitcoin's creator. Some candidates nominated themselves. None of them has provided proof, so we're still looking for the person behind the mystery.

There is one person I'm confident invented bitcoin. Before I reveal my pick, let's assess Satoshi Nakamoto, or at least what he wants us to know.

Satoshi's P2P Foundation biography says he was born in 1975. Yet he doesn't come across as Japanese. First, he wrote the whitepaper and subsequent posts in flawless English. Second, his sleeping habits are unusual for someone living in Japan.

Stefan Thomas, a Bitcoin Forum member, charted Satoshi's posting timestamps. Satoshi Nakamoto almost never posted between 2 and 8 p.m. Japan time, hours when someone living in Japan would normally be awake. His stated identity may not be real.
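The kind of timestamp analysis described here can be sketched in a few lines of Python. This is only an illustration of the technique, not Thomas's actual method or data: the sample timestamps below are made up, and the helper names `posts_per_jst_hour` and `quiet_hours` are hypothetical.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone

# Japan Standard Time is a fixed UTC+9 offset (Japan has no daylight saving).
JST = timezone(timedelta(hours=9))


def posts_per_jst_hour(utc_timestamps):
    """Return a 24-item list: number of posts in each hour of the day, Japan time."""
    counts = Counter(ts.astimezone(JST).hour for ts in utc_timestamps)
    return [counts.get(hour, 0) for hour in range(24)]


def quiet_hours(hourly_counts):
    """Hours of the day (0-23) in which no posts were made."""
    return [hour for hour, n in enumerate(hourly_counts) if n == 0]


# HYPOTHETICAL sample data, not Satoshi's real posting times.
sample = [
    datetime(2010, 1, 1, hour, 15, tzinfo=timezone.utc)
    for hour in (0, 2, 4, 12, 13, 15, 18, 20, 22, 23)
]

hourly = posts_per_jst_hour(sample)
print("Hours with no posts (Japan time):", quiet_hours(hourly))
```

A long run of empty hours in the afternoon, local time, is the pattern that makes a claimed location look doubtful; shifting the offset shows which time zones would turn that gap into a plausible night's sleep.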

Why would he disguise himself?

There is a legitimate explanation for this

Phil Zimmermann created PGP (Pretty Good Privacy) to give dissidents a private channel of communication. The US government went after this technology once it realized its potential, and both PGP and Zimmermann came under investigation.

PGP only let two people communicate privately. Bitcoin goes further: it makes it possible to send money for free without a bank or other intermediary, removing it from government control.

How much do we know about the person who invented bitcoin?

Now that I've covered my doubts about his persona, here's what we know about Satoshi Nakamoto.

Satoshi Nakamoto first appeared with a whitepaper on metzdowd.com. On Halloween 2008, he presented a nine-page paper on a new peer-to-peer electronic monetary system.

Using the nickname satoshi, he created the bitcointalk forum. He kept developing bitcoin and created bitcoin.org. Satoshi mined the genesis block on January 3, 2009.

Satoshi Nakamoto worked with other programmers in 2010 to change bitcoin's protocol and engaged with the bitcoin community. Then he gave Gavin Andresen the keys and code and transferred the community domains. By the end of 2010, he had largely stepped away from the project.

The bitcoin creator's goodbye came on April 23, 2011. When Mike Hearn asked whether he planned to rejoin the community, Satoshi replied:

“I’ve moved on to other things. It’s in good hands with Gavin and everyone.”

Nakamoto Satoshi

The man who broke the banking system vanished. Why?

Satoshi's wallets held 1,000,000 BTC. In December 2017, when the price peaked, that stash was worth over US$19 billion, which gave Nakamoto the 44th-highest net worth in the world at the time. He has never cashed out a single bitcoin.

This data suggests something happened to bitcoin's creator. I think Hal Finney is Satoshi Nakamoto.

Hal Finney had ALS and died in 2014. I suppose he created the future of money, then he died, leaving us with only rumors about his identity.

Hal Finney, who was he?

Hal Finney graduated from Caltech in 1979. Student peers voted him the smartest. He took a doctoral-level gravitational field theory course as a freshman. Finney's intelligence meets the first requirement for becoming Satoshi Nakamoto.

Fellow students remember Finney carrying an Ayn Rand book. Reading it may have shaped his libertarian views.

His beliefs led him to a small group of freethinking programmers. In the 1990s, he joined the Cypherpunks, a movement that promoted the use of strong cryptography and privacy-enhancing technologies for social and political change. Finney helped these self-proclaimed privacy defenders shape a crypto-anarchist perspective.

Zimmermann knew Finney well.

Hal replied to a Cypherpunk message about Phil Zimmermann and PGP. He contacted Phil and became PGP Corporation's first member, retiring in 2011. Satoshi Nakamoto quit bitcoin in 2011.

Finney improved the new PGP protocol, but he had to do so secretly. He knew about Phil's PGP issues. I understand why he wanted to hide his identity while creating bitcoin.

Why did he pretend to be from Japan?

The persona he chose was spot-on. He lived near scientist Dorian Prentice Satoshi Nakamoto, and Finney could have borrowed Nakamoto's identity to hide his own. Temple City has 36,000 people, so what are the chances they both lived there? A cryptographic genius and a man with the same name as Bitcoin's creator in the same small city: coincidence?

Things went differently, I think.

I think Hal Finney sent himself Satoshi's messages. I know it sounds odd, but it is what you would do if you wanted to conceal your involvement. He faked the messages and transferred the first bitcoins to himself to test the transaction mechanism, which would explain why the coins were never sent back.

Hal Finney created the first reusable proof-of-work system, and proof of work sits at the core of the bitcoin protocol. Finney had been intrigued by digital money since the 1990s. He invented CRypto cASH in 1993.

Legacy

Hal Finney's contributions should not be forgotten. Even if I'm wrong and he's not Satoshi Nakamoto, we shouldn't forget his bitcoin contribution. He helped us achieve a better future.