More on Entrepreneurship/Creators

Jenn Leach

3 years ago

How Much I Got Paid by YouTube for a 68 Million Views Video

My nameless, faceless channel case study

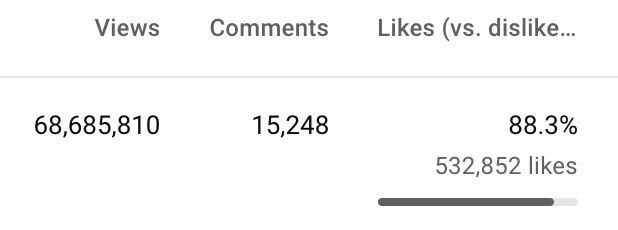

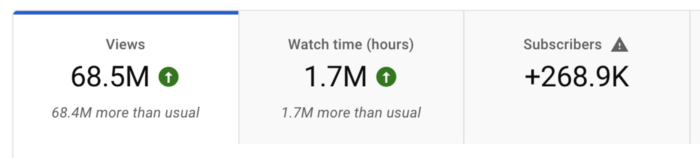

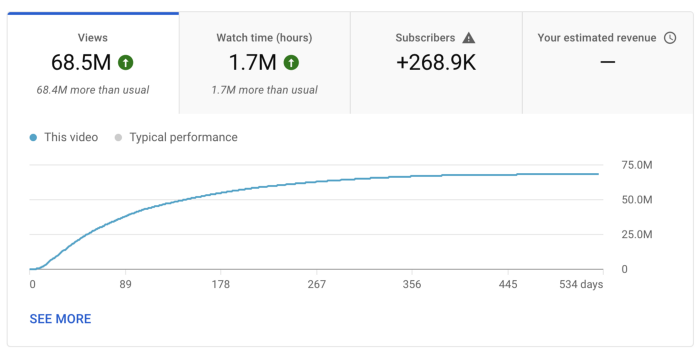

The Numbers

I anonymize this YouTube channel.

It's in a trendy, crowded niche. Sharing it publicly will likely enhance competition.

I'll still share my dashboard numbers:

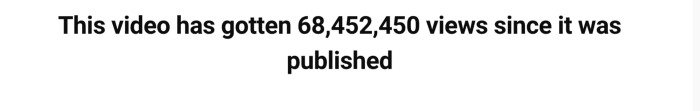

A year ago, the video was released.

What I earned

I'll stop stalling. Here's a screenshot of my YouTube statistics page displaying Adsense profits.

YouTube Adsense made me ZERO dollars.

OMG!

How is this possible?

YouTube Adsense can't monetize my niche. This is typical in faceless niches like TikTok's rain videos. If they were started a while ago, I'm sure certain rain accounts are monetized, but not today.

I actually started a soothing sounds faceless YouTube channel. This was another account of mine.

I looped Pexels films for hours. No background music, just wind, rain, etc.

People could watch these videos to relax or get ready for bed. They're ideal for background noise and relaxation.

They're long-lasting, too. It's easy to make a lot from YouTube Adsense if you insert ads.

Anyway, I tried to monetize it and couldn’t. This was about a year ago. That’s why I doubt new accounts in this genre would be able to get approved for ads.

Back to my faceless channel with 68 million views.

I received nothing from YouTube Adsense, but I made money elsewhere.

Getting paid by the gods of affiliate marketing

Place links in the video and other videos on the channel to get money. Visitors that buy through your affiliate link earn you a commission.

This video earned many clicks on my affiliate links.

I linked to a couple of Amazon products, a YouTube creator tool, my kofi link, and my subscribe link.

Sponsorships

Brands pay you to include ads in your videos.

This video led to many sponsorships.

I've done dozens of sponsorship campaigns that paid $40 to $50 for an end screen to $450 for a preroll ad.

Last word

Overall, I made less than $3,000.

If I had time, I'd be more proactive with sponsorships. You can pitch brand sponsorships. This actually works.

I'd do that if I could rewind time.

I still can, but I think the reaction rate would be higher closer to the viral video's premiere date.

Jim Siwek

3 years ago

In 2022, can a lone developer be able to successfully establish a SaaS product?

In the early 2000s, I began developing SaaS. I helped launch an internet fax service that delivered faxes to email inboxes. Back then, it saved consumers money and made the procedure easier.

Google AdWords was young then. Anyone might establish a new website, spend a few hundred dollars on keywords, and see dozens of new paying clients every day. That's how we launched our new SaaS, and these clients stayed for years. Our early ROI was sky-high.

Changing times

The situation changed dramatically after 15 years. Our paid advertising cost $200-$300 for every new customer. Paid advertising takes three to four years to repay.

Fortunately, we still had tens of thousands of loyal clients. Good organic rankings gave us new business. We needed less sponsored traffic to run a profitable SaaS firm.

Is it still possible?

Since selling our internet fax firm, I've dreamed about starting a SaaS company. One I could construct as a lone developer and progressively grow a dedicated customer base, as I did before in a small team.

It seemed impossible to me. Solo startups couldn't afford paid advertising. SEO was tough. Even the worst SaaS startup ideas attracted VC funding. How could I compete with startups that could hire great talent and didn't need to make money for years (or ever)?

The One and Only Way to Learn

After years of talking myself out of SaaS startup ideas, I decided to develop and launch one. I needed to know if a solitary developer may create a SaaS app in 2022.

Thus, I did. I invented webwriter.ai, an AI-powered writing tool for website content, from hero section headlines to blog posts, this year. I soft-launched an MVP in July.

Considering the Issue

Now that I've developed my own fully capable SaaS app for site builders and developers, I wonder if it's still possible. Can webwriter.ai be successful?

I know webwriter.ai's proposal is viable because Jasper.ai and Grammarly are also AI-powered writing tools. With competition comes validation.

To Win, Differentiate

To compete with well-funded established brands, distinguish to stand out to a portion of the market. So I can speak directly to a target user, unlike larger competition.

I created webwriter.ai to help web builders and designers produce web content rapidly. This may be enough differentiation for now.

Budget-Friendly Promotion

When paid search isn't an option, we get inventive. There are more tools than ever to promote a new website.

Organic Results

on social media (Twitter, Instagram, TikTok, LinkedIn)

Marketing with content that is compelling

Link Creation

Listings in directories

references made in blog articles and on other websites

Forum entries

The Beginning of the Journey

As I've labored to construct my software, I've pondered a new mantra. Not sure where that originated from, but I like it. I'll live by it and teach my kids:

“Do the work.”

Pat Vieljeux

3 years ago

The three-year business plan is obsolete for startups.

If asked, run.

An entrepreneur asked me about her pitch deck. A Platform as a Service (PaaS).

She told me she hadn't done her 5-year forecasts but would soon.

I said, Don't bother. I added "time-wasting."

“I've been asked”, she said.

“Who asked?”

“a VC”

“5-year forecast?”

“Yes”

“Get another VC. If he asks, it's because he doesn't understand your solution or to waste your time.”

Some VCs are lagging. They're still using steam engines.

10-years ago, 5-year forecasts were requested.

Since then, we've adopted a 3-year plan.

But It's outdated.

Max one year.

What has happened?

Revolutionary technology. NO-CODE.

Revolution's consequences?

Product viability tests are shorter. Hugely. SaaS and PaaS.

Let me explain:

Building a minimum viable product (MVP) that works only takes a few months.

1 to 2 months for practical testing.

Your company plan can be validated or rejected in 4 months as a consequence.

After validation, you can ask for VC money. Even while a prototype can generate revenue, you may not require any.

Good VCs won't ask for a 3-year business plan in that instance.

One-year, though.

If you want, establish a three-year plan, but realize that the second year will be different.

You may have changed your business model by then.

A VC isn't interested in a three-year business plan because your solution may change.

Your ability to create revenue will be key.

But also, to pivot.

They will be interested in your value proposition.

They will want to know what differentiates you from other competitors and why people will buy your product over another.

What will interest them is your resilience, your ability to bounce back.

Not to mention your mindset. The fact that you won’t get discouraged at the slightest setback.

The grit you have when facing adversity, as challenges will surely mark your journey.

The authenticity of your approach. They’ll want to know that you’re not just in it for the money, let alone to show off.

The fact that you put your guts into it and that you are passionate about it. Because entrepreneurship is a leap of faith, a leap into the void.

They’ll want to make sure you are prepared for it because it’s not going to be a walk in the park.

They’ll want to know your background and why you got into it.

They’ll also want to know your family history.

And what you’re like in real life.

So a 5-year plan…. You can bet they won’t give a damn. Like their first pair of shoes.

You might also like

Blake Montgomery

3 years ago

Explaining Twitter Files

Elon Musk, Matt Taibbi, the 'Twitter Files,' and Hunter Biden's laptop: what gives?

Explaining Twitter Files

Matt Taibbi released "The Twitter Files," a batch of emails sent by Twitter executives discussing the company's decision to stop an October 2020 New York Post story online.

What's on Twitter? New York Post and Fox News call them "bombshell" documents. Or, as a Post columnist admitted, are they "not the smoking gun"? Onward!

What started this?

The New York Post published an exclusive, potentially explosive story in October 2020: Biden's Secret Emails: Ukrainian executive thanks Hunter Biden for'meeting' veep dad. The story purported to report the contents of a laptop brought to the tabloid by a Delaware computer repair shop owner who said it belonged to President Biden's second son, Hunter Biden. Emails and files on the laptop allegedly showed how Hunter peddled influence with Ukranian businessmen and included a "raunchy 12-minute video" of Hunter smoking crack and having sex.

Twitter banned links to the Post story after it was published, calling it "hacked material." The Post's Twitter account was suspended for multiple days.

Why? Yoel Roth, Twitter's former head of trust and safety, said the company couldn't verify the story, implying they didn't trust the Post.

Twitter's stated purpose rarely includes verifying news stories. This seemed like intentional political interference. This story was hard to verify because the people who claimed to have found the laptop wouldn't give it to other newspapers. (Much of the story, including Hunter's business dealings in Ukraine and China, was later confirmed.)

Roth: "It looked like a hack and leak."

So what are the “Twitter Files?”

Twitter's decision to bury the story became a political scandal, and new CEO Elon Musk promised an explanation. The Twitter Files, named after Facebook leaks.

Musk promised exclusive details of "what really happened" with Hunter Biden late Friday afternoon. The tweet was punctuated with a popcorn emoji.

Explaining Twitter Files

Three hours later, journalist Matt Taibbi tweeted more than three dozen tweets based on internal Twitter documents that revealed "a Frankensteinian tale of a human-built mechanism grown out of its designer's control."

Musk sees this release as a way to shape Twitter's public perception and internal culture in his image. We don't know if the CEO gave Taibbi the documents. Musk hyped the document dump before and during publication, but Taibbi cited "internal sources."

Taibbi shares email screenshots showing Twitter execs discussing the Post story and blocking its distribution. Taibbi says the emails show Twitter's "extraordinary steps" to bury the story.

Twitter communications chief Brandon Borrman has the most damning quote in the Files. Can we say this is policy? The story seemed unbelievable. It seemed like a hack... or not? Could Twitter, which ex-CEO Dick Costolo called "the free speech wing of the free speech party," censor a news story?

Many on the right say the Twitter Files prove the company acted at the behest of Democrats. Both parties had these tools, writes Taibbi. In 2020, both the Trump White House and Biden campaign made requests. He says the system for reporting tweets for deletion is unbalanced because Twitter employees' political donations favor Democrats. Perhaps. These donations may have helped Democrats connect with Twitter staff, but it's also possible they didn't. No emails in Taibbi's cache show these alleged illicit relations or any actions Twitter employees took as a result.

Even Musk's supporters were surprised by the drop. Miranda Devine of the New York Post told Tucker Carlson the documents weren't "the smoking gun we'd hoped for." Sebastian Gorka said on Truth Social, "So far, I'm deeply underwhelmed." DC Democrats collude with Palo Alto Democrats. Whoop!” The Washington Free Beacon's Joe Simonson said the Twitter files are "underwhelming." Twitter was staffed by Democrats who did their bidding. (Why?)

If "The Twitter Files" matter, why?

These emails led Twitter to suppress the Hunter Biden laptop story has real news value. It's rare for a large and valuable company like Twitter to address wrongdoing so thoroughly. Emails resemble FOIA documents. They describe internal drama at a company with government-level power. Katie Notopoulos tweeted, "Any news outlet would've loved this scoop!" It's not a'scandal' as teased."

Twitter's new owner calls it "the de facto public town square," implying public accountability. Like a government agency. Though it's exciting to receive once-hidden documents in response to a FOIA, they may be boring and tell you nothing new. Like Twitter files. We learned how Twitter blocked the Post's story, but not why. Before these documents were released, we knew Twitter had suppressed the story and who was involved.

These people were disciplined and left Twitter. Musk fired Vijaya Gadde, the former CLO who reportedly played a "key role" in the decision. Roth quit over Musk's "dictatorship." Musk arrived after Borrman left. Jack Dorsey, then-CEO, has left. Did those who digitally quarantined the Post's story favor Joe Biden and the Democrats? Republican Party opposition and Trump hatred? New York Post distaste? According to our documents, no. Was there political and press interference? True. We knew.

Taibbi interviewed anonymous ex-Twitter employees about the decision; all expressed shock and outrage. One source said, "Everyone knew this was fucked." Since Taibbi doesn't quote that expletive, we can assume the leaked emails contained few or no sensational quotes. These executives said little to support nefarious claims.

Outlets more invested in the Hunter Biden story than Gizmodo seem vexed by the release and muted headlines. The New York Post, which has never shied away from a blaring headline in its 221-year history, owns the story of Hunter Biden's laptop. Two Friday-night Post alerts about Musk's actions were restrained. Elon Musk will drop Twitter files on NY Post-Hunter Biden laptop censorship today. Elon Musk's Twitter dropped Post censorship details from Biden's laptop. Fox News' Apple News push alert read, "Elon Musk drops Twitter censorship documents."

Bombshell, bombshell, bombshell… what, exactly, is the bombshell? Maybe we've heard this story too much and are missing the big picture. Maybe these documents detail a well-documented decision.

The Post explains why on its website. "Hunter Biden laptop bombshell: Twitter invented reason to censor Post's reporting," its headline says.

Twitter's ad hoc decision to moderate a tabloid's content is not surprising. The social network had done this for years as it battled toxic users—violent white nationalists, virulent transphobes, harassers and bullies of all political stripes, etc. No matter how much Musk crows, the company never had content moderation under control. Buzzfeed's 2016 investigation showed how Twitter has struggled with abusive posters since 2006. Jack Dorsey and his executives improvised, like Musk.

Did the US government interfere with the ex-social VP's media company? That's shocking, a bombshell. Musk said Friday, "Twitter suppressing free speech by itself is not a 1st amendment violation, but acting under government orders with no judicial review is." Indeed! Taibbi believed this. August 2022: "The laptop is secondary." Zeynep Tufecki, a Columbia professor and New York Times columnist, says the FBI is cutting true story distribution. Taibbi retracted the claim Friday night: "I've seen no evidence of government involvement in the laptop story."

What’s the bottom line?

I'm still not sure what's at stake in the Hunter Biden scandal after dozens of New York Post articles, hundreds of hours of Fox News airtime, and thousands of tweets. Briefly: Joe Biden's son left his laptop with a questionable repairman. FBI confiscated it? The repairman made a copy and gave it to Rudy Giuliani's lawyer. The Post got it from Steve Bannon. On that laptop were videos of Hunter Biden smoking crack, cavorting with prostitutes, and emails about introducing his father to a Ukrainian businessman for $50,000 a month. Joe Biden urged Ukraine to fire a prosecutor investigating the company. What? The story seems to be about Biden family business dealings, right?

The discussion has moved past that point anyway. Now, the story is the censorship of it. Adrienne Rich wrote in "Diving Into the Wreck" that she came for "the wreck and not the story of the wreck" No matter how far we go, Hunter Biden's laptop is done. Now, the crash's story matters.

I'm dizzy. Katherine Miller of BuzzFeed wrote, "I know who I believe, and you probably do, too. To believe one is to disbelieve the other, which implicates us in the decision; we're stuck." I'm stuck. Hunter Biden's laptop is a political fabrication. You choose. I've decided.

This could change. Twitter Files drama continues. Taibbi said, "Much more to come." I'm dizzy.

CyberPunkMetalHead

3 years ago

It's all about the ego with Terra 2.0.

UST depegs and LUNA crashes 99.999% in a fraction of the time it takes the Moon to orbit the Earth.

Fat Man, a Terra whistle-blower, promises to expose Do Kwon's dirty secrets and shady deals.

The Terra community has voted to relaunch Terra LUNA on a new blockchain. The Terra 2.0 Pheonix-1 blockchain went live on May 28, 2022, and people were airdropped the new LUNA, now called LUNA, while the old LUNA became LUNA Classic.

Does LUNA deserve another chance? To answer this, or at least start a conversation about the Terra 2.0 chain's advantages and limitations, we must assess its fundamentals, ideology, and long-term vision.

Whatever the result, our analysis must be thorough and ruthless. A failure of this magnitude cannot happen again, so we must magnify every potential breaking point by 10.

Will UST and LUNA holders be compensated in full?

The obvious. First, and arguably most important, is to restore previous UST and LUNA holders' bags.

Terra 2.0 has 1,000,000,000,000 tokens to distribute.

25% of a community pool

Holders of pre-attack LUNA: 35%

10% of aUST holders prior to attack

Holders of LUNA after an attack: 10%

UST holders as of the attack: 20%

Every LUNA and UST holder has been compensated according to the above proposal.

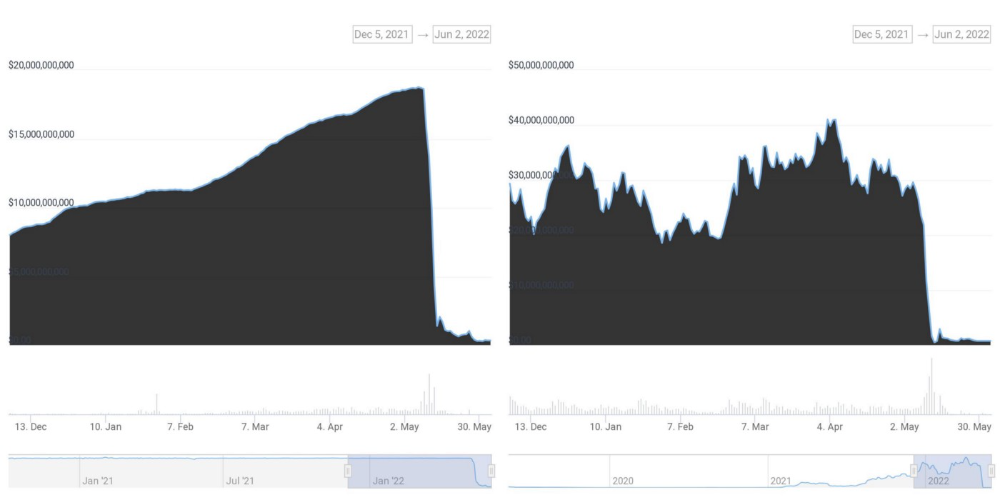

According to self-reported data, the new chain has 210.000.000 tokens and a $1.3bn marketcap. LUNC and UST alone lost $40bn. The new token must fill this gap. Since launch:

LUNA holders collectively own $1b worth of LUNA if we subtract the 25% community pool airdrop from the current market cap and assume airdropped LUNA was never sold.

At the current supply, the chain must grow 40 times to compensate holders. At the current supply, LUNA must reach $240.

LUNA needs a full-on Bull Market to make LUNC and UST holders whole.

Who knows if you'll be whole? From the time you bought to the amount and price, there are too many variables to determine if Terra can cover individual losses.

The above distribution doesn't consider individual cases. Terra didn't solve individual cases. It would have been huge.

What does LUNA offer in terms of value?

UST's marketcap peaked at $18bn, while LUNC's was $41bn. LUNC and UST drove the Terra chain's value.

After it was confirmed (again) that algorithmic stablecoins are bad, Terra 2.0 will no longer support them.

Algorithmic stablecoins contributed greatly to Terra's growth and value proposition. Terra 2.0 has no product without algorithmic stablecoins.

Terra 2.0 has an identity crisis because it has no actual product. It's like Volkswagen faking carbon emission results and then stopping car production.

A project that has already lost the trust of its users and nearly all of its value cannot survive without a clear and in-demand use case.

Do Kwon, how about him?

Oh, the Twitter-caller-poor? Who challenges crypto billionaires to break his LUNA chain? Who dissolved Terra Labs South Korea before depeg? Arrogant guy?

That's not a good image for LUNA, especially when making amends. I think he should step down and let a nicer person be Terra 2.0's frontman.

The verdict

Terra has a terrific community with an arrogant, unlikeable leader. The new LUNA chain must grow 40 times before it can start making up its losses, and even then, not everyone's losses will be covered.

I won't invest in Terra 2.0 or other algorithmic stablecoins in the near future. I won't be near any Do Kwon-related project within 100 miles. My opinion.

Can Terra 2.0 be saved? Comment below.

Boris Müller

3 years ago

Why Do Websites Have the Same Design?

My kids redesigned the internet because it lacks inventiveness.

Internet today is bland. Everything is generic: fonts, layouts, pages, and visual language. Microtypography is messy.

Web design today seems dictated by technical and ideological constraints rather than creativity and ideas. Text and graphics are in containers on every page. All design is assumed.

Ironically, web technologies can design a lot. We can execute most designs. We make shocking, evocative websites. Experimental typography, generating graphics, and interactive experiences are possible.

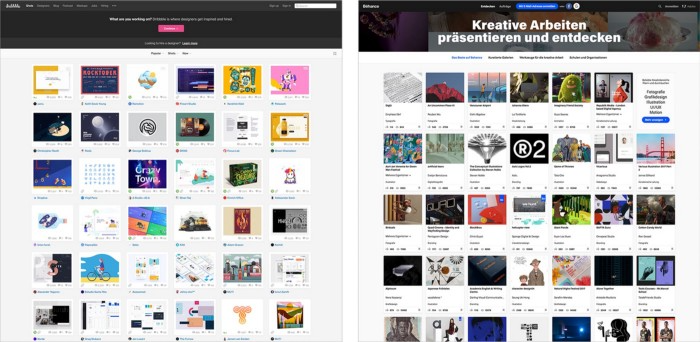

Even designer websites use containers in containers. Dribbble and Behance, the two most popular creative websites, are boring. Lead image.

How did this happen?

Several reasons. WordPress and other blogging platforms use templates. These frameworks build web pages by combining graphics, headlines, body content, and videos. Not designs, templates. These rules combine related data types. These platforms don't let users customize pages beyond the template. You filled the template.

Templates are content-neutral. Thus, the issue.

Form should reflect and shape content, which is a design principle. Separating them produces content containers. Templates have no design value.

One of the fundamental principles of design is a deep and meaningful connection between form and content.

Web design lacks imagination for many reasons. Most are pragmatic and economic. Page design takes time. Large websites lack the resources to create a page from scratch due to the speed of internet news and the frequency of new items. HTML, JavaScript, and CSS continue to challenge web designers. Web design can't match desktop publishing's straightforward operations.

Designers may also be lazy. Mobile-first, generic, framework-driven development tends to ignore web page visual and contextual integrity.

How can we overcome this? How might expressive and avant-garde websites look today?

Rediscovering the past helps design the future.

'90s-era web design

At the University of the Arts Bremen's research and development group, I created my first website 23 years ago. Web design was trendy. Young web. Pages inspired me.

We struggled with HTML in the mid-1990s. Arial, Times, and Verdana were the only web-safe fonts. Anything exciting required table layouts, monospaced fonts, or GIFs. HTML was originally content-driven, thus we had to work against it to create a page.

Experimental typography was booming. Designers challenged the established quo from Jan Tschichold's Die Neue Typographie in the twenties to April Greiman's computer-driven layouts in the eighties. By the mid-1990s, an uncommon confluence of technological and cultural breakthroughs enabled radical graphic design. Irma Boom, David Carson, Paula Scher, Neville Brody, and others showed it.

Early web pages were dull compared to graphic design's aesthetic explosion. The Web Design Museum shows this.

Nobody knew how to conduct browser-based graphic design. Web page design was undefined. No standards. No CMS (nearly), CSS, JS, video, animation.

Now is as good a time as any to challenge the internet’s visual conformity.

In 2018, everything is browser-based. Massive layouts to micro-typography, animation, and video. How do we use these great possibilities? Containerized containers. JavaScript-contaminated mobile-first pages. Visually uniform templates. Web design 23 years later would disappoint my younger self.

Our imagination, not technology, restricts web design. We're too conformist to aesthetics, economics, and expectations.

Crisis generates opportunity. Challenge online visual conformity now. I'm too old and bourgeois to develop a radical, experimental, and cutting-edge website. I can ask my students.

I taught web design at the Potsdam Interface Design Programme in 2017. Each team has to redesign a website. Create expressive, inventive visual experiences on the browser. Create with contemporary web technologies. Avoid usability, readability, and flexibility concerns. Act. Ignore Erwartungskonformität.

The class outcome pleased me. This overview page shows all results. Four diverse projects address the challenge.

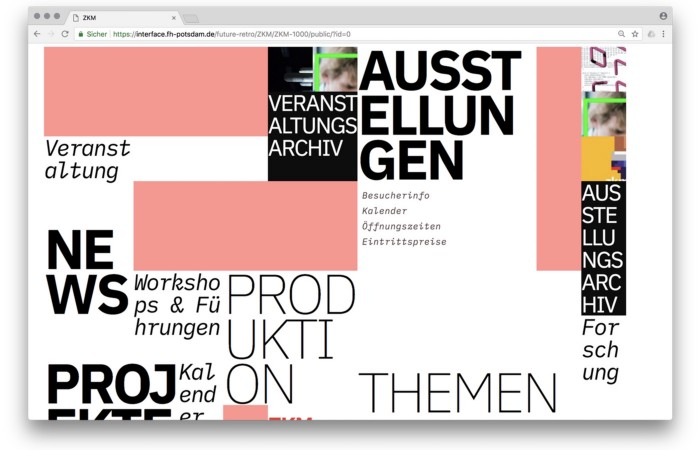

1. ZKM by Frederic Haase and Jonas Köpfer

Frederic and Jonas began their experiments on the ZKM website. The ZKM is Germany's leading media art exhibition location, but its website remains conventional. It's useful but not avant-garde like the shows' art.

Frederic and Jonas designed the ZKM site's concept, aesthetic language, and technical configuration to reflect the museum's progressive approach. A generative design engine generates new layouts for each page load.

ZKM redesign.

2. Streem by Daria Thies, Bela Kurek, and Lucas Vogel

Street art magazine Streem. It promotes new artists and societal topics. Streem includes artwork, painting, photography, design, writing, and journalism. Daria, Bela, and Lucas used these influences to develop a conceptual metropolis. They designed four neighborhoods to reflect magazine sections for their prototype. For a legible city, they use powerful illustrative styles and spatial typography.

Streem makeover.

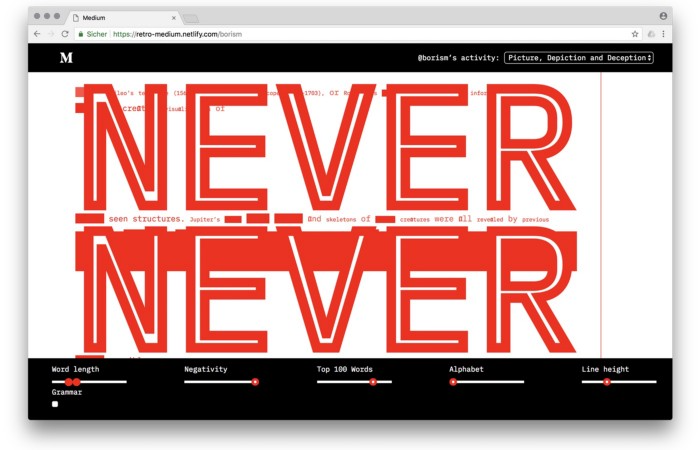

3. Medium by Amelie Kirchmeyer and Fabian Schultz

Amelie and Fabian structured. Instead of developing a form for a tale, they dissolved a web page into semantic, syntactical, and statistical aspects. HTML's flexibility was their goal. They broke Medium posts into experimental typographic space.

Medium revamp.

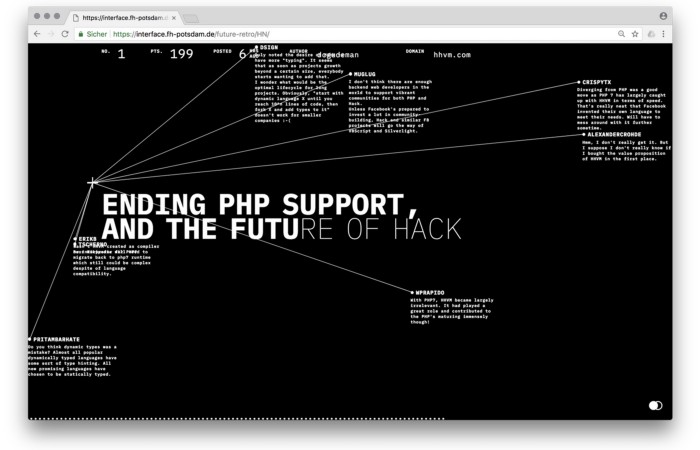

4. Hacker News by Fabian Dinklage and Florian Zia

Florian and Fabian made Hacker News interactive. The social networking site aggregates computer science and IT news. Its voting and debate features are extensive despite its simple style. Fabian and Florian transformed the structure into a typographic timeline and network area. News and comments sequence and connect the visuals. To read Hacker News, they connected their design to the API. Hacker News makeover.

Communication is not legibility, said Carson. Apply this to web design today. Modern websites must be legible, usable, responsive, and accessible. They shouldn't limit its visual palette. Visual and human-centered design are not stereotypes.

I want radical, generative, evocative, insightful, adequate, content-specific, and intelligent site design. I want to rediscover web design experimentation. More surprises please. I hope the web will appear different in 23 years.

Update: this essay has sparked a lively discussion! I wrote a brief response to the debate's most common points: Creativity vs. Usability