More on Current Events

Will Lockett

3 years ago

Russia's nukes may be useless

Russia's nuclear threat may be nullified by physics.

Putin seems nostalgic and wants to relive the Cold War. He has started a deadly war to reclaim the former Soviet republic of Ukraine and is threatening the West with nuclear war. However much NATO wants to support Ukraine's independence, it can't risk starting a global nuclear war that could wipe out humanity. Fortunately, nuclear physics may have rendered Putin's nuclear weapons useless. But how? And how will Ukraine and NATO react?

To understand why Russia's nuclear weapons may be ineffective, we must first know what kind they are.

Russia has the world's largest nuclear arsenal, with 4,447 strategic and 1,912 tactical weapons (all of which are ready to be rolled out quickly). The difference between these two weapons is small, but it affects their use and logistics. Strategic nuclear weapons are ICBMs designed to destroy a city across the globe. Russia's ICBMs have many designs and a yield of 300–800 kilotonnes. 300 kilotonnes can destroy Washington. Tactical nuclear weapons are smaller and can be fired from artillery guns or small truck-mounted missile launchers, giving them a 1,500 km range. Instead of destroying a distant city, they are designed to eliminate specific positions, bases, or military infrastructure. They produce 1–50 kilotonnes.

These two kinds of nuclear weapon use different nuclear reactions. Pure fission bombs are compact enough to fit in a shell or small missile. All early nuclear weapons used this design. It is inefficient for bombs over 50 kilotonnes, so larger bombs are thermonuclear. Thermonuclear weapons use a small fission bomb to compress and heat a hydrogen capsule, which undergoes fusion and releases far more energy than the fission reaction that ignites it, allowing for effective giant bombs.

Here's Russia's issue.

A thermonuclear bomb needs deuterium (hydrogen with one neutron) and tritium (hydrogen with two neutrons). The bomb works because these two isotopes fuse at lower energies than others. There is one problem: tritium is highly radioactive, with a half-life of only about 12.3 years, and must be artificially made.

Tritium is made by irradiating lithium in nuclear reactors and extracting the gas. Tritium is one of the most expensive materials ever made, at $30,000 per gram.

Why does this affect Putin's nukes?

Thermonuclear weapons need tritium. Tritium decays quickly, so they must be regularly refilled at great cost, which Russia may struggle to do.
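
To put a number on "decays quickly", here is a minimal Python sketch of the standard half-life formula, fraction remaining = 0.5^(t / half-life). It assumes a half-life of about 12.3 years, the commonly cited figure for tritium. The function name and the sample intervals are illustrative only and say nothing about how any real warhead is serviced.

```python
# Assumption: commonly cited tritium half-life, in years.
TRITIUM_HALF_LIFE_YEARS = 12.32


def fraction_remaining(years, half_life=TRITIUM_HALF_LIFE_YEARS):
    """Fraction of an initial tritium load left after `years` of decay."""
    return 0.5 ** (years / half_life)


if __name__ == "__main__":
    # Illustrative intervals since the last refill (hypothetical values).
    for years in (1, 5, 10, 25):
        left = fraction_remaining(years)
        print(f"after {years:>2} years: {left:.1%} of the tritium remains")
```

Under that assumption, a little over 5% of the tritium is lost every year and half of it is gone after one half-life, which is why a stockpile cannot simply be filled once and left alone.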

Russia has a smaller economy than New York, yet they are running an invasion, fending off international sanctions, and refining tritium for 4,447 thermonuclear weapons.

The Russian military is underfunded. Because the state can't afford it, Russian troops must buy their own body armor. Arguably, Putin cares more about the Ukraine conflict than maintaining his nuclear deterrent. Putin will likely lose power if he loses the Ukraine war.

It's possible that Putin halted tritium production and refueling to save money for Ukraine. His threats of nuclear attacks and escalating nuclear war may be a bluff.

This doesn't help Ukraine, sadly. Russia's tactical nuclear weapons don't need expensive refueling and could still be used in the invasion. So Ukraine still risks a nuclear attack. The bomb that destroyed Hiroshima was 15 kilotonnes, and Russia's tactical Iskander-K nuclear missile has a 50-kilotonne yield. Even "little" bombs are deadly.

We can't be certain this is happening in Russia. Putin may prioritize tritium. He knows the power of nuclear deterrence. Russia may have enough tritium for this conflict. Stockpiling a material with a short shelf life is unlikely, though.

This means that Russia's most powerful weapons may be nearly useless, even though its smaller ones may still be deadly. If true, this could allow NATO to offer full support to Ukraine and push the Russian tyrant back where he belongs. If instead Putin is withholding funds from his crumbling military to maintain his nuclear deterrent, he may be willing to go down with the ship. Let's hope for the former.

Johnny Harris

4 years ago

The REAL Reason Putin is Invading Ukraine [video with transcript]

Transcript:

[Reporter] The Russian invasion of Ukraine.

Momentum is building for a war between Ukraine and Russia.

[Reporter] Tensions between Russia and the West

are growing rapidly.

[Reporter] President Biden considering deploying

thousands of troops to Eastern Europe.

There are now 100,000 troops

on the Eastern border of Ukraine.

Russia is setting up field hospitals on this border.

Like this is what preparation for war looks like.

A legitimate war.

Ukrainian troops are watching and waiting,

saying they are preparing for a fight.

The U.S. has ordered the families of embassy staff

to leave Ukraine.

Britain has sent all of their nonessential staff home.

And now the U.S. is sending tons of weapons and munitions

to Ukraine's army.

And we're even considering deploying

our own troops to the region.

I mean, this thing is heating up.

Meanwhile, Russia and the West have been in Geneva

and Brussels trying to talk it out,

and sort of getting nowhere.

The message is very clear.

Should Russia take further aggressive actions

against Ukraine the costs will be severe

and the consequences serious.

It's a scary, grim momentum that is unpredictable.

And the chances of miscalculation

and escalation are growing.

I want to explain what's going on here,

but I want to show you that this isn't just

typical geopolitical behavior.

Stuff that can just be explained on the map.

Instead, to understand why 100,000 troops are camped out

on Ukraine's Eastern border, ready for war,

you have to understand Russia

and how it's been cut down over the ages

from the Slavic empire that dominated this whole region

to then the Soviet Union,

which was defeated in the nineties.

And what you really have to understand here

is how that history is transposed

onto the brain of one man.

This guy, Vladimir Putin.

This is a story about regional domination

and struggles between big powers,

but really it's the story about

what Vladimir Putin really wants.

[Reporter] Russian troops moving swiftly

to take control of military bases in Crimea.

[Reporter] Russia has amassed more than 100,000 troops

and a lot of military hardware

at the border with Ukraine.

Let's dive back in.

Okay. Let's get up to speed on what's happening here.

And I'm just going to quickly give you the highlight version

of like the news that's happening,

because I want to get into the juicy part,

which is like why, the roots of all of this.

So let's go.

A few months ago, Russia started sending

more and more troops to this border.

It's this massive border between Ukraine and Russia.

They said they were doing a military exercise,

but the rest of the world was like,

"Yeah, we totally believe you Russia. Pshaw."

This was right before this big meeting

where North American and European countries

were coming together to talk about a lot

of different things, like these countries often do

in these diplomatic summits.

But soon, because of Russia's aggressive behavior

coming in and setting up 100,000 troops

on the border with Ukraine,

the entire summit turned into a whole, "WTF Russia,

what are you doing on the border of Ukraine," meeting.

Before the meeting Putin comes out and says,

"Listen, I have some demands for the West."

And everyone's like, "Okay, Russia, what are your demands?

You know, we have like, COVID-19 right now.

And like, that's like surging.

So like, we don't need your like,

bluster about what your demands are."

And Putin's like, "No, here's my list of demands."

Putin's demands for the summit were this:

number one, that NATO, which is this big military alliance

between U.S., Canada, and Europe stop expanding,

meaning they don't let any new members in, okay.

So, Russia is like, "No more new members to your, like,

cool military club that I don't like.

You can't have any more members."

Number two, that NATO withdraw all of their troops

from anywhere in Eastern Europe.

Basically Putin is saying,

"I can veto any military cooperation

or troops going between countries

that have to do with Eastern Europe,

the place that used to be the Soviet Union."

Okay, and number three, Putin demands that America vow

not to protect its allies in Eastern Europe

with nuclear weapons.

"LOL," said all of the other countries,

"You're literally nuts, Vladimir Putin.

Like these are the most ridiculous demands, ever."

But there he is, Putin, with these demands.

These very, very aggressive demands.

And he sort of is implying that if his demands aren't met,

he's going to invade Ukraine.

I mean, it doesn't work like this.

This is not how international relations work.

You don't just show up and say like,

"I'm not gonna allow other countries to join your alliance

because it makes me feel uncomfortable."

But what I love about this list of demands

from Vladimir Putin for this summit

is that it gives us a clue

on what Vladimir Putin really wants.

What he's after here.

You read them closely and you can grasp his intentions.

But to grasp those intentions

you have to understand what NATO is

and what Russia and Ukraine used to be.

(dramatic music)

Okay, so a while back I made this video

about why Russia is so damn big,

where I explain how modern day Russia started here in Kiev,

which is actually modern day Ukraine.

In other words, modern day Russia, as we know it,

has its original roots in Ukraine.

These places grew up together

and they eventually became a part

of the same mega empire called the Soviet Union.

They were deeply intertwined,

not just in their history and their culture,

but also in their economy and their politics.

So it's after World War II,

it's like the '50s, '60s, '70s, and NATO was formed,

the North Atlantic Treaty Organization.

This was a military alliance between all of these countries,

that was meant to sort of deter the Soviet Union

from expanding and taking over the world.

But as we all know, the Soviet Union,

which was Russia and all of these other countries,

collapsed in 1991.

And all of these Soviet republics,

including Ukraine, became independent,

meaning they were not now a part

of one big block of countries anymore.

But just because the border's all split up,

it doesn't mean that these cultural ties actually broke.

Like for example, the Soviet leader at the time

of the collapse of the Soviet Union, this guy, Gorbachev,

he was the son of a Ukrainian mother and a Russian father.

Like he grew up with his mother singing him

Ukrainian folk songs.

In his mind, Ukraine and Russia were like one thing.

So there was a major reluctance to accept Ukraine

as a separate thing from Russia.

In so many ways, they are one.

There was another Russian at the time

who did not accept this new division.

This young intelligence officer, Vladimir Putin,

who was starting to rise up in the ranks

of post-Soviet Russia.

There's this amazing quote from 2005

where Putin is giving this State of the Union-like address,

where Putin declares the collapse of the Soviet Union,

quote, "The greatest catastrophe of the 20th century.

And as for the Russian people, it became a genuine tragedy.

Tens of millions of fellow citizens and countrymen

found themselves beyond the fringes of Russian territory."

Do you see how he frames this?

The Soviet Union were all one people in his mind.

And after it collapsed, all of these people

who are a part of the motherland were now outside

of the fringes or the boundaries of Russian territory.

First off, fact check.

Greatest catastrophe of the 20th century?

Like, do you remember what else happened

in the 20th century, Vladimir?

(ominous music)

Putin's worry about the collapse of this one people

starts to get way worse when the West, his enemy,

starts showing up to his neighborhood

to all these ex-Soviet countries that are now independent.

The West starts selling their ideology

of democracy and capitalism and inviting them

to join their military alliance called NATO.

And guess what?

These countries are totally buying it.

All these ex-Soviet countries are now joining NATO.

And some of them, the EU.

And Putin is hating this.

He's like not only did the Soviet Union divide

and all of these people are now outside

of the Russia motherland,

but now they're being persuaded by the West

to join their military alliance.

This is terrible news.

Over the years, this continues to happen,

while Putin himself starts to chip away

at Russian institutions, making them weaker and weaker.

He's silencing his rivals

and he's consolidating power in himself.

(triumphant music)

And in the past few years,

he's effectively silenced anyone who can challenge him;

any institution, any court,

or any political rival have all been silenced.

It's been decades since the Soviet Union fell,

but as Putin gains more power,

he still sees the region through the lens

of the old Cold War, Soviet, Slavic empire view.

He sees this region as one big block

that has been torn apart by outside forces.

"The greatest catastrophe of the 20th century."

And the worst situation of all of these,

according to Putin, is Ukraine,

which was like the gem of the Soviet Union.

There was tons of cultural heritage.

Again, Russia sort of started in Ukraine,

not to mention it was a very populous

and industrious, resource-rich place.

And over the years Ukraine has been drifting west.

It hasn't joined NATO yet, but more and more,

it's been electing pro-Western presidents.

It's been flirting with membership in NATO.

It's becoming less and less attached

to the Russian heritage that Putin so adores.

And more than half of Ukrainians say

that they'd be down to join the EU.

64% of them say that it would be cool joining NATO.

But Putin can't handle this. He is in total denial.

Like an ex-boyfriend who can't handle his ex-girlfriend

starting to date someone else,

Putin can't let Ukraine go.

He won't let go.

So for the past decade,

he's been trying to keep the West out

and bring Ukraine back into the motherland of Russia.

This usually takes the form of Putin sending

secret soldiers from Russia into Ukraine

to help the people in Ukraine who want to like separate

from Ukraine and join Russia.

It also takes the form of, oh yeah,

stealing entire parts of Ukraine for Russia.

Russian troops moving swiftly to take control

of military bases in Crimea.

Like in 2014, Putin just did this.

To what America is officially calling

a Russian invasion of Ukraine.

He went down and just snatched this bit of Ukraine

and folded it into Russia.

So you're starting to see what's going on here.

Putin's life's work is to salvage what he calls

the greatest catastrophe of the 20th century,

the division and the separation

of the Soviet republics from Russia.

So let's get to present day. It's 2022.

Putin is at it again.

And honestly, if you really want to understand

the mind of Vladimir Putin and his whole view on this,

you have to read this.

"On the History of Unity of Russians and Ukrainians,"

by Vladimir Putin.

A blog post that kind of sounds

like a ninth grade history essay.

In this essay, Vladimir Putin argues

that Russia and Ukraine are one people.

He calls them essentially the same historical

and spiritual space.

Kind of beautiful writing, honestly.

Anyway, he argues that the division

between the two countries is due to quote,

"a deliberate effort by those forces

that have always sought to undermine our unity."

And that the formula they use, these outside forces,

is a classic one: divide and rule.

And then he launches into this super in-depth,

like 10-page argument, as to every single historical beat

of Ukraine and Russia's history

to make this argument that like,

this is one people and the division is totally because

of outside powers, i.e. the West.

Okay, but listen, there's this moment

at the end of the post,

that actually kind of hit me in a big way.

He says this, "Just have a look at Austria and Germany,

or the U.S. and Canada, how they live next to each other.

Close in ethnic composition, culture,

and in fact, sharing one language,

they remain sovereign states with their own interests,

with their own foreign policy.

But this does not prevent them

from the closest integration or allied relations.

They have very conditional, transparent borders.

And when crossing them citizens feel at home.

They create families, study, work, do business.

Incidentally, so do millions of those born in Ukraine

who now live in Russia.

We see them as our own close people."

I mean, listen, like,

I'm not in support of what Putin is doing,

but like that, it's like a pretty solid like analogy.

If China suddenly showed up and started like

coaxing Canada into being a part of its alliance,

I would be a little bit like, "What's going on here?"

That's what Putin feels.

And so I kind of get what he means there.

There's a deep heritage and connection between these people.

And he's seen that falter and dissolve

and he doesn't like it.

He clearly genuinely feels a brotherhood

and this deep heritage connection

with the people of Ukraine.

Okay, okay, okay, okay. Putin, I get it.

Your essay is compelling there at the end.

You're clearly very smart and well-read.

But this does not justify what you've been up to. Okay?

It doesn't justify sending 100,000 troops to the border

or sending cyber soldiers to sabotage

the Ukrainian government, or annexing territory,

fueling a conflict that has killed

tens of thousands of people in Eastern Ukraine.

No. Okay.

No matter how much affection you feel for Ukrainian heritage

and its connection to Russia, this is not okay.

Again, it's like the boyfriend

who genuinely loves his girlfriend.

They had a great relationship,

but they broke up and she's free to see whomever she wants.

But Putin is not ready to let go.

[Man In Blue Shirt] What the hell's wrong with you?

I love you, Jessica.

What the hell is wrong with you?

Dude, don't fucking touch me.

I love you. Worldstar!

What is wrong with you? Just stop!

Putin has constructed his own reality here.

One in which Ukraine is actually being controlled

by shadowy Western forces

who are holding the people of Ukraine hostage.

And that if he invades, it will be a swift victory

because Ukrainians will accept him with open arms.

The great liberator.

(triumphant music)

Like, this guy's a total romantic.

He's a history buff and a romantic.

And he has a hill to die on here.

And it is liberating the people

who have been taken from the Russian motherland.

Kind of like the abusive boyfriend, who's like,

"She actually really loves me,

but it's her annoying friends

who were planting all these ideas in her head.

That's why she broke up with me."

And it's like, "No, dude, she's over you."

[Man In Blue Shirt] What the hell is wrong with you?

I love you, Jessica.

I mean, maybe this video should be called

Putin is just like your abusive ex-boyfriend.

[Man In Blue Shirt] What the hell is wrong with you?

I love you, Jessica!

Worldstar! What's wrong with you?

Okay. So where does this leave us?

It's 2022, Putin is showing up to these meetings in Europe

to tell them where he stands.

He says, "NATO, you cannot expand anymore. No new members.

And you need to withdraw all your troops

from Eastern Europe, my neighborhood."

He knows these demands will never be accepted

because they're ludicrous.

But what he's doing is showing a false effort to say,

"Well, we tried to negotiate with the West,

but they didn't want to."

Hence giving a little bit more justification

to a Russian invasion.

So will Russia invade? Is there war coming?

Maybe; it's impossible to know

because it's all inside of the head of this guy.

But, if I were to make the best argument

that war is not coming tomorrow,

I would look at a few things.

Number one, war in Ukraine would be incredibly costly

for Vladimir Putin.

Russia has a far superior army to Ukraine's,

but still, Ukraine has a very good army

that is supported by the West

and would give Putin a pretty bad bloody nose

in any invasion.

Controlling territory in Ukraine would be very hard.

Ukraine is a giant country.

They would fight back and it would be very hard

to actually conquer and take over territory.

Another major point here is that if Russia invades Ukraine,

this gives NATO new purpose.

If you remember, NATO was created because of the Cold War,

because the Soviet Union was big and nuclear powered.

Once the Soviet Union fell,

NATO sort of has been looking for a new purpose

over the past couple of decades.

If Russia invades Ukraine,

NATO suddenly has a brand new purpose to unite

and to invest in becoming more powerful than ever.

Putin knows that.

And it would be very bad news for him if that happened.

But most importantly, perhaps the easiest clue

for me to believe that war isn't coming tomorrow

is the Russian propaganda machine

is not preparing the Russian people for an invasion.

In 2014, when Russia was about to invade

and take over Crimea, this part of Ukraine,

there was a barrage of state propaganda

that prepared the Russian people

that this was a justified attack.

So when it happened, it wasn't a surprise

and it felt very normal.

That isn't happening right now in Russia.

At least for now. It may start happening tomorrow.

But for now, I think Putin is showing up to the border,

flexing his muscles and showing the West that he is earnest.

I'm not sure that he's going to invade tomorrow,

but he very well could.

I mean, read the guy's blog post

and you'll realize that he is a romantic about this.

He is incredibly idealistic about the glory days

of the Slavic empires, and he wants to get it back.

So there is dangerous momentum towards war.

And the way war works is even a small little, like, fight,

can turn into the other guy

doing something bigger and crazier.

And then the other person has to respond

with something a little bit bigger.

That's called escalation.

And there's not really a ceiling

to how much that momentum can spin out of control.

That is why it's so scary when two nuclear countries

go to war with each other,

because there's kind of no ceiling.

So yeah, it's dangerous. This is scary.

I'm not sure what happens next here,

but the best we can do is keep an eye on this.

At least for now, we better understand

what Putin really wants out of all of this.

Thanks for watching.

Jared A. Brock

4 years ago

Here is the actual reason why Russia invaded Ukraine

Democracy's demise

Our Ukrainian brothers and sisters are being attacked by a far superior force.

It's the biggest invasion since WWII.

43.3 million peaceful Ukrainians awoke this morning to tanks, mortars, and missiles. Russian forces are already 15 miles from Kyiv.

America and the West will not deploy troops.

They're imposing sanctions, but not on railways, luxury goods, energy, or diamonds. Their dependence on Russian energy exports means they won't even cut Russia off from SWIFT.

Ukraine is desperate enough to hand out guns on the street.

France, Austria, Turkey, and the EU are considering military aid, but Ukraine will fall without America or NATO.

The Russian goal is likely to encircle Kyiv and topple Zelenskyy's government. A proxy power will be reinstated once Russia has total control.

“Western security services believe Putin intends to overthrow the government and install a puppet regime,” says Financial Times foreign affairs commentator Gideon Rachman. “This ‘decapitation’ strategy includes municipalities. Ukrainian officials are being targeted for arrest or death.”

Also, Putin has never lost a war.

Why is Russia attacking Ukraine?

Putin, like a snowflake college student, “feels unsafe.”

Why?

Because Ukraine is full of “Nazi ideas.”

Putin claims he has felt threatened by Ukraine since the country's pro-Putin leader was ousted and replaced by a popular Jewish comedian.

Hee hee

He fears a full-scale enemy on his doorstep if Ukraine joins NATO. But he refuses to see it both ways. NATO has never invaded Russia, but Russia has always stolen land from its neighbors. Can you blame them for joining a mutual defense alliance when a real threat exists?

Nations that feel threatened can join NATO. That doesn't justify an attack by Russia; it only allows them to defend themselves. NATO isn't attacking Moscow.

Russian President Putin's "special operation" aims to de-Nazify the Jewish-led nation.

To keep Crimea and the other two regions he has already stolen, he wants Ukraine undefended by NATO.

(Warlords have fought for control of the strategically important Crimea for over 2,000 years.)

Putin wants to own all of Ukraine.

Why?

The Black Sea is his goal.

Ports bring money and power, and Ukraine pipelines transport Russian energy products.

Putin wants their wheat, too — with 70% crop coverage, Ukraine would be their southern breadbasket, and Russia has no qualms about starving millions of Ukrainians to death to feed its people.

In the end, it's all about greed and power.

Putin wants to own everything Russia has ever owned. This year he turns 70, and he wants to be remembered like his hero Peter the Great.

In order to get it, he's willing to kill thousands of Ukrainians.

Art imitates life

This story began when a Jewish TV comedian portrayed a teacher elected President after ranting about corruption.

Servant of the People, the hit sitcom, is now the leading centrist political party.

Right, President Zelenskyy won the hearts and minds of Ukrainians by imagining a fairer world.

A fair fight is something dictators, corporatists, monopolists, and warlords despise.

Now Zelenskyy and his people will die, allowing one of history's most corrupt leaders to amass even more power.

The poor always lose

Meanwhile, the West will impose economic sanctions on Russia.

China is likely to step in to help Russia — or at least the wealthy.

The poor and working class in Russia will suffer greatly if there is a hard crash or long-term depression.

Putin's friends will continue to drink champagne and eat caviar.

If Russia cuts off oil, gas, and fertilizer supplies to the West, it could cause more inflation and possibly a recession. That would mean more suffering and hardship for the Western poor and working class.

Why? So a billionaire sociopath gets his dirt.

Yes, Russia is simply copying America. Some of us think all war is morally wrong, regardless of who does it.

But let's not kid ourselves right now.

The markets rallied after the biggest invasion in Europe since WWII.

Investors hope Ukraine collapses and Russian oil flows.

Unbridled capitalists value life less.

What we can do about Ukraine

When the Russian army invaded eastern Finland, my wife's grandmother fled as a child. 80 years later, Russia still has Karelia.

Russia invaded Ukraine today to retake two eastern provinces.

History has taught us nothing.

Past mistakes won't fix the future.

Instead, we should try:

- Pray and/or meditate on our actions with our families.

- Stop buying Russian products (vodka, obviously, but also pay more for hydro/solar/geothermal/etc.)

- Stop wasting money on frivolous items and donate it to Ukrainian charities.

Here are 35+ places to donate.

- To protest, gather a few friends, contact the media, and shake signs in front of the Russian embassy.

- Prepare to welcome refugees.

More war won't save the planet or change hearts.

Only love can work.

You might also like

Alex Carter

3 years ago

Metaverse, Web 3, and NFTs are BS

Most crypto is probably too.

The goals of Web 3 and the metaverse are admirable and attractive. Who doesn't want an internet owned by users? Who wouldn't want a digital realm where anything is possible, or a better way to collaborate and visit pals?

Companies pursue profits endlessly. Infinite growth and revenue are expected, and if a corporation needs to sacrifice profits to safeguard users, the CEO, board of directors, and any executives will lose to the system of incentives that (1) retains workers with shares and (2) makes a company answerable to all of its shareholders. Only the government can guarantee user protections, but we know how successful that is. This is nothing new, just a problem with modern capitalism and tech platforms that a user-owned internet might remedy. Moxie, the founder of Signal, has a good articulation of some of these current Web 2 tech platform problems (but I forget the timestamp); thoughts on JRE aside, this episode is worth listening to (it’s about a bunch of other stuff too).

Moxie Marlinspike, founder of Signal, on the Joe Rogan Experience podcast.

Source: https://open.spotify.com/episode/2uVHiMqqJxy8iR2YB63aeP?si=4962b5ecb1854288

Web 3 champions are premature. There was so much spectacular growth during Web 2 that the next wave of founders want to make an even bigger impact, while investors old and new want a chance to get a piece of the moonshot action. Worse, crypto enthusiasts believe, and financially need to believe, that its success is real, whether or not it is.

I’m doubtful that it will play out like current proponents say. Crypto has been the white-hot focus of SV’s best and brightest for a long time yet still struggles to come up with any mainstream use case other than ‘buy, HODL, and believe’: a store of value for your financial goals and wishes. Some kind of metaverse is likely, but will it be decentralized, mostly in VR, or will Meta (previously FB) play a big role? Unlikely.

METAVERSE

The metaverse exists already. Our digital lives span apps, platforms, and games. I can design a 3D house, invite people, use Discord, and hang around in an artificial environment. Millions of gamers do this in Rust, Minecraft, Valheim, and Animal Crossing, among other games. Discord's voice chat and Slack-like servers/channels are the present social anchor, but the interface, integrations, and data portability will improve. Soon you'll be able to stream YouTube videos on the walls of your digital house. You'll doodle, create art, play Jackbox, and walk through a door to play Apex Legends, Fortnite, etc. It's not just gaming. Digital whiteboards and screen sharing enable real-time collaboration. People will review code and run businesses. They'll play and make music. In digital living rooms, they'll watch movies, sports, comedy, and Twitch. They'll tweet, laugh, learn, and shit-talk.

The metaverse is the evolution of our digital life at home, the third place. The closest analog would be Discord and the integration of Facebook, Slack, YouTube, etc. into a single, 3D, customizable hangout space.

I'm not certain this experience can be hugely decentralized and smoothly choreographed, managed, and run, or that VR — a luxury, cumbersome, and questionably relevant technology — must be part of it. Eventually, VR will be pragmatic, achievable, and superior to real life in many ways. A total sensory experience like the Matrix or Sword Art Online, where we're physically hooked into the Internet yet in our imaginations we're jumping, flying, and achieving athletic feats we never could in reality; exploring realms far grander than our own (as grand as it is). That VR is different from today's.

Ben Thompson released an episode of Exponent after Facebook changed its name to Meta. Ben was skeptical of many claims made by metaverse champions, but he made a good analogy between Oculus and the PC. The PC was initially far too pricey for the ordinary family to afford. It began as a business tool. It became so powerful and pervasive that it affected our personal lives. Prices continued to plummet, and so much consumer software was produced that it's impossible to envision life without a computer at home (or in our pockets). If Facebook shows product-market fit with VR in business, through use cases like remote work and collaboration, maybe VR will become practical in our personal lives at home.

Before PCs, we relied on Blockbuster, the Yellow Pages, cabs to get to the airport, handwritten taxes, landline phones to schedule social events, and other archaic methods. I find it impossible to conceive what VR, in the form of headsets and hand controllers, can offer professional and especially personal digital experiences that is an order of magnitude better than what we have today. Is looking around better than using a mouse to examine a 3D landscape? Do hand controllers make work or gaming 10x or 100x more fun or efficient? Will VR replace scalable Web 2 methods and applications the way Web 1 and Web 2 replaced analog ones? I don't know.

My guess is that the metaverse will arrive slowly, initially on displays we presently use, with more app interoperability. I doubt that it will be controlled by the people or by Facebook, a corporation that struggles to properly innovate internally, as practically every large digital company does. Large tech organizations are lousy at hiring product-savvy employees, and if they do, they rarely let them explore new things.

These companies act like business schools when they seek founders' results, with bureaucracy and dependency. Which company launched the last popular consumer software product that wasn't a clone or acquisition? Recent examples are scarce.

Web 3

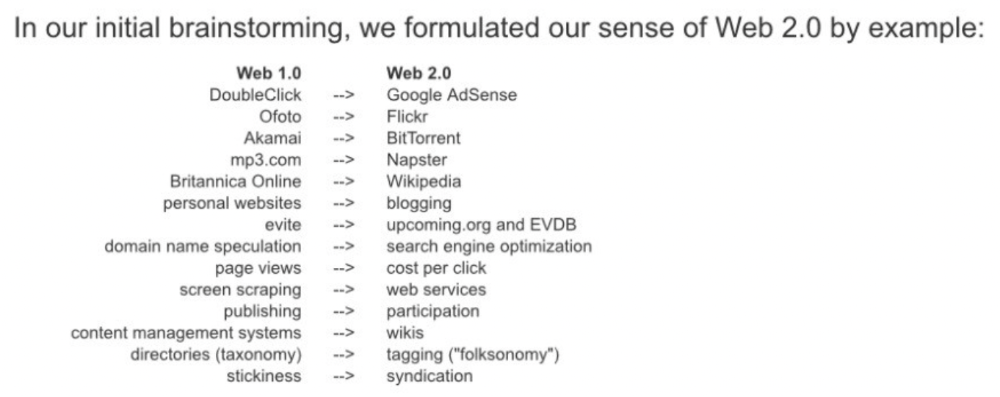

Investors and entrepreneurs of Web 3 firms are declaring victory: 'Web 3 is here! Web 3 is the future!' Many profitable Web 2 enterprises already existed when Web 2 was defined. The term was coined to explain shifts in user behavior, not a personal pipe dream.

Origins of Web 2: http://www.oreilly.com/pub/a/web2/archive/what-is-web-20.html

One of these Web 3 startups may provide the connecting tissue to link all these experiences or become one of the major new digital locations. Even so, successful players will likely use centralized power arrangements, as Web 2 businesses do now. Some Web 2 startups integrated our digital lives. Rockmelt (2010–2013) was a customizable browser with bespoke connectors to every program a user wanted; imagine seeing Facebook, Twitter, Discord, Netflix, YouTube, etc. all in one location. Failure. Who knows what Opera's doing?

Silicon Valley and tech Twitter in general have a history of jumping on dumb bandwagons that go nowhere. Dot-com crash in 2000? The huge deployment of capital into bad ideas and businesses is well-documented. And live video. It was the future until it became a niche sector for gamers. Live audio will play out a similar reality as CEOs with little comprehension of audio and no awareness of lasting new user behavior deceive each other into making more and bigger investments on fool's gold. Twitter trying to buy Clubhouse for $4B, Spotify buying Greenroom, Facebook exploring live audio and 'Tiktok for audio,' and now Amazon developing a live audio platform. This live audio frenzy won't be worth their time or energy. Blind guides blind. Instead of learning from prior failures like Twitter buying Periscope for $100M pre-launch and pre-product market fit, they're betting on unproven and uncompelling experiences.

NFTs

NFTs are also nonsense. Take Loot, a time-limited bag drop of "things" (text on the blockchain) for a game that didn't exist, bought by rich techies too busy to play video games and foolish enough to think they're getting in early on something with a big reward. What gaming studio is incentivized to use these items? Who's encouraged to join? No one cares besides Loot owners who don't have NFTs. Skill, merit, and effort should be rewarded with rare things for gamers. Even if a small minority of gamers can make a living playing, the average game's major appeal has never been to make actual money - that's a profession.

No game stays popular forever, so how is this objective sustainable? Once popularity and usage drop, exclusive crypto or NFTs will fall. And if NFTs are designed to have cross-game appeal, incentives apart, 30 years from now any new game will need millions of pre-existing objects to build around before they start. It doesn’t work.

Many games already feature item economies based on real in-game scarcity, generally for cosmetic things to avoid pay-to-win, which undermines scaled gaming incentives for huge player bases. Counter-Strike, Rust, etc. may be bought and sold on Steam with real money. Since the 1990s, unofficial cross-game marketplaces have sold in-game objects and currencies. NFTs aren't needed. Making a popular, enjoyable, durable game is already difficult.

With NFTs, certain JPEGs on the internet went from useless to selling for $69 million. Why? Crypto, Web 3, early Internet collectibles. NFTs are digital Beanie Babies (unlike NFTs, Beanie Babies were a popular children's toy; their destinies are the same). NFTs are worthless and scarce. They appeal to crypto enthusiasts seeking a practical use case to support their theory and boost their own fortune. They also appeal to SV insiders desperate not to miss the next big thing, not knowing what it will be. NFTs aren't about paying artists and creators who don't get credit for their work.

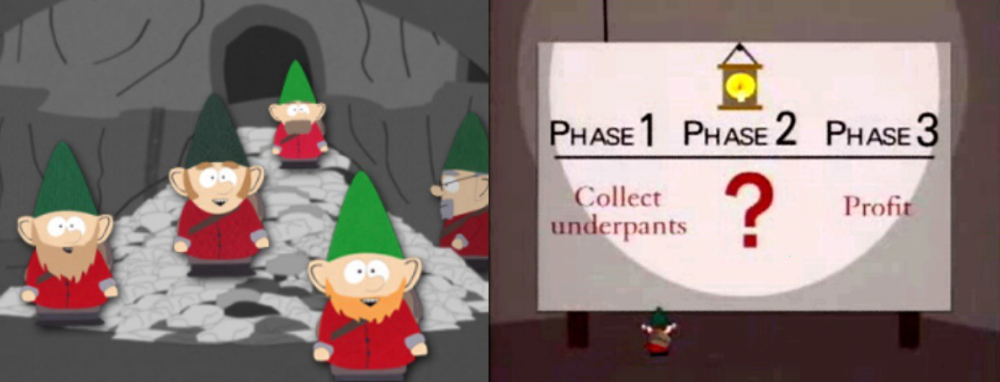

South Park's Underpants Gnomes

NFTs are a benign, foolish plan to earn money on par with South Park's underpants gnomes. At worst, they're the world of hucksterism and poor performers. Or those with money and enormous followings who, like everyone, don't completely grasp cryptocurrencies but are motivated by greed and status and believe Gary Vee's claim that CryptoPunks are the next Facebook. Gary's watertight logic: if NFT prices dip, they're on the same path as the most successful corporation in human history; buy the dip! NFTs aren't businesses or museum-worthy art. They're bs.

Gary Vee compares NFTs to Amazon.com. vm.tiktok.com/TTPdA9TyH2

We grew up collecting: Magic: The Gathering (MTG) cards printed in the 90s are now worth over $30,000. Imagine buying a digital Magic card with no underlying foundation. No one plays the game because it doesn't exist. An NFT is a contextless image someone conned you into buying a certificate for, but anyone may copy, paste, and use. Replace MTG with Pokemon for younger readers.

When Gary Vee strongarms 30 tech billionaires and YouTube influencers into buying CryptoPunks, they'll talk about it on Twitch, YouTube, podcasts, Twitter, etc. That will convince average folks that the product has value. These guys are smart and/or rich, so I'll get in early like them. Crypto is similar. No solid, scaled, mainstream use case exists, and no one knows where it's headed, but since the global crypto financial bubble hasn't burst and many people have made insane fortunes, regular people are putting real money into something that is highly speculative and could be nothing because they want a piece of the action. Who doesn’t want free money? Rich techies and influencers won't be affected; normal folks will.

Imagine removing every $1 invested in Bitcoin instantly. What would happen? How far would Bitcoin fall? Over 90%, maybe even 95%, and Bitcoin would be dead. Bitcoin as an investment is the only scalable widespread use case: it's confidence that a better use case will arise and that being early pays handsomely. It's like pouring a trillion dollars into a company with no business strategy or users and a CEO who makes vague future references.

New tech and efforts may provoke a 'get off my lawn' mentality as you approach 40, but I've always prided myself on having a decent bullshit detector, and it's flying off the handle at this foolishness. If we can accomplish a functional, responsible, equitable, and ethical user-owned internet, I'm for it.

Postscript:

I wanted to summarize my opinions because I've been angry about this for a while but just sporadically tweeted about it. A friend handed me a Dan Olson YouTube video just before publication. He's more knowledgeable, articulate, and convincing about crypto. It's worth seeing:

This post is a summary. See the original one here.

Max Parasol

4 years ago

Are DAOs the future or just a passing fad?

How do you DAO? Can DAOs scale?

DAO: Decentralized Autonomous Organization.

“The whole phrase is a misnomer. They're not decentralized, autonomous, or organizations,” says Monsterplay blockchain consultant David Freuden.

As part of the DAO initiative, Freuden coauthored a 51-page report in May 2020. “We need DAOs,” he says. “‘Shareholder first' is a 1980s/90s concept. Profits became the focus, not products.”

His predictions for DAOs have come true nearly two years later. DAOs had over 1.6 million participants by the end of 2021, up from 13,000 at the start of the year. Wyoming, in the US, and the Marshall Islands moved to recognize DAOs in 2021. Australia may follow that example in 2022.

But what is a DAO?

Members buy (or are rewarded with) governance tokens to vote on how the DAO operates and spends its money. “DeFi spawned DAOs as an investment vehicle. So a DAO is tokenomics,” says Freuden.

DAOs are usually built around a promise or a social cause, but they still want to make money. “If you can't explain why, the DAO will fail,” he says. “A co-op without tokenomics is not a DAO.”

Operating system DAOs, protocol DAOs, investment DAOs, grant DAOs, service DAOs, social DAOs, collector DAOs, and media DAOs are now available.

Freuden liked the idea of people rallying around a good cause. Speculators and builders make up the crypto world, so it needs a DAO for them.

Speculators, builders, or both have mismatched expectations, causing endless but sometimes creative friction.

Organisms that boost output

Launching a DAO with an original product such as a cryptocurrency, an IT protocol or a VC-like investment fund like FlamingoDAO is common. DAOs enable distributed open-source contributions without borders. The goal is vital. Sometimes, after a product is launched, DAOs emerge, leaving the company to eventually transition to a DAO, as Uniswap did.

Doing things together is a DAO. So it's a way to reward a distributed workforce. DAOs are essentially productivity coordination organisms.

“Those who work for the DAO make permissionless contributions and benefit from fragmented employment,” argues Freuden. DAOs are, first and foremost, a new form of cooperation.

DAO? Distributed not decentralized

In decentralized autonomous organizations, words have multiple meanings. DAOs can emphasize one aspect over another. Autonomy is a trade-off for decentralization.

DAOstack CEO Matan Field says a DAO is a distributed governance system. Power is shared. However, there are two ways to understand a DAO's decentralized nature. This clarifies the various DAO definitions.

A decentralized infrastructure allows a DAO to be decentralized. It could be created on a public permissionless blockchain to prevent a takeover.

As opposed to a company run by executives or shareholders, a DAO is distributed. Its leadership does not wield power.

Option two is clearly distributed.

But not all of this is “automated.”

Think quorum, not robot.

DAOs can be autonomous in the sense that smart contracts are self-enforcing and self-executing. So every blockchain transaction is a simplified smart contract.

DAO landscape

The DAO landscape is evolving.

Consider how Ethereum's smart contracts work. They are more like self-executing computer code, which Vitalik Buterin calls “persistent scripts”.

However, a DAO is self-enforcing once its members agree on its rules. As such, a DAO is “automated upon approval by the governance committee.” This distinguishes them from traditional organizations whose rules must be interpreted and applied.

Why a DAO? They move fast

A DAO can quickly adapt to local conditions as a governance mechanism. It's a collaborative decision-making tool.

Like UkraineDAO, created in response to Putin's invasion of Ukraine by Ukrainian expat Alona Shevchenko, Nadya Tolokonnikova, Trippy Labs, and PleasrDAO. The DAO sought to support Ukrainian charities by selling Ukrainian flag NFTs. With a single mission, a DAO can quickly raise funds for a country accepting crypto where banks are distrusted.

This could be a watershed moment for DAOs.

ConstitutionDAO was another clever use case for DAOs for Freuden. In a failed but “beautiful experiment in a single-purpose DAO,” ConstitutionDAO tried to buy a copy of the US Constitution from a Sotheby's auction. In November 2021, ConstitutionDAO raised $47 million from 19,000 people, but a hedge fund manager outbid them.

Contributions were returned or lost if transactional gas fees were too high. The ConstitutionDAO, as a “beautiful experiment,” proved exceptionally fast at organizing and crowdsourcing funds for a specific purpose.

We may soon be applauding UkraineDAO's geopolitical success in support of the DAO concept.

Some of the best use cases for DAOs today, according to Adam Miller, founder of DAOplatform.io and MIDAO Directory Services, involve DAO structures.

That is, a “flat community is vital.” Prototyping by the crowd is a good example. To succeed, members must be enthusiastic about DAOs as an alternative to starting a company. Because DAOs require some hierarchy, he agrees that "distributed is a better acronym."

Miller sees DAOs as a “new way of organizing people and resources.” He started DAOplatform.io, a DAO tooling advisory that is currently transitioning to a DAO due to the “woeful tech options for running a DAO,” which he says mainly comprise just “multisig admin keys and a voting system.” So today he's advising on DAO tech stacks.

Miller identifies three key elements.

“Tokenization is a common method and tool. Second, governance mechanisms connected to the DAO's treasury. Lastly, community.”

How a DAO works...

They can be more than glorified Discord groups if they have a clear mission. This mission is a mix of financial speculation and utopianism. The spectrum is vast.

The founder of Dash left the cryptocurrency project in 2017. It's the story of a prophet without an heir. So creating a global tokenized evangelical missionary community via a DAO made sense.

Evan Duffield, a “libertarian/anarchist” visionary, forked Bitcoin in January 2014 to make it instant and essentially free. He went away for a while, and DASH became a DAO.

200,000 US retailers, including Walmart and Barnes & Noble, now accept Dash as payment. This payment system works like a gift card.

Arden Goldstein, Dash's head of crypto, DAO, and blockchain marketing, claims Dash is the “first successful DAO.” The original DAO on Ethereum, known simply as The DAO, was founded in 2016 and disbanded after a hack, an Ethereum hard fork and much controversy. But what are the success metrics?

Crypto success is measured differently, says Goldstein. To achieve common goals, people must participate or be motivated in a healthy DAO. In a successful DAO, people are motivated to complete tasks and, crucially, the tasks get completed.

“Yes or no, 1 or 0, voting is not a new idea. The challenge is getting people to continue to participate and keep building a community.” A DAO motivates volunteers: Nothing keeps people from building. The DAO “philosophy is old news. You need skin in the game to play.”

MasterNodes must stake 1000 Dash. Those members are rewarded with DASH for marketing (and other tasks). It uses an outsourced team to onboard new users globally.

Joining a DAO is part of the fun of meeting crazy or “very active” people on Discord. No one gets fired (usually). If your work is noticed, you may be offered a full-time job.

DAO community members worldwide are rewarded for brand building. Dash is also a great product for developing countries with high inflation and undemocratic governments. The countries with the most Dash DAO members are Russia, Brazil, Venezuela, India, China, France, Italy, and the Philippines.

Grassroots activism makes this DAO work. A DAO is local. Venezuelans can't access Dash.org, so DAO members help them use a VPN. DAO members are investors, fervent evangelicals, and local product experts.

Every month, proposals and grant applications are voted on via the Dash platform. However, the DAO may decide not to fund you. For example, the DAO once hired a PR firm, but the community complained about the lack of press coverage. This raises a great question: How are real-world contractual obligations met by a DAO?

Does the DASH DAO work?

“I see the DAO defund projects I thought were valuable,” Goldstein says. “Despite working full-time, I must submit a funding proposal.” Still, it is “much faster than other companies I've worked on,” she says.

Dash DAO is a headless beast. Ryan Taylor is the CEO of the company overseeing the DASH Core Group project.

The issue is that “we don't know who has the most tokens [...] because we don't know who our customers are.” As a result, “the loudest voices usually don't have the most MasterNodes and aren't the most invested.”

Goldstein, the only female in the DAO, says she worked hard. “I was proud of the DAO when I made the logo pink for a day and got great support from the men.” This has yet to entice a major influx of female DAO members.

Many obstacles stand in the way of utopian dreams.

Governance problems remain

And what about major token holders behaving badly?

In early February, a heated crypto Twitter debate raged on about inclusion, diversity, and cancel culture in relation to decentralized projects. In this case, the question was how a DAO addresses alleged inappropriate behavior.

In a corporation, misconduct can result in termination. In a DAO, founders usually hold a large number of tokens and the keys to the blockchain (multisignature or otherwise).

Brantly Millegan, the director of operations of Ethereum Name Service (ENS), made disparaging remarks about the LGBTQ community and other controversial topics. The screenshotted comments were made in 2016 and brought to the ENS board's attention in early 2022.

His contract with ENS has expired. But what of his large DAO governance token holdings?

Members of the DAO proposed a motion to remove Millegan from the DAO. His “delegated” votes net 370,000. He was and is the DAO's largest delegate.

What if he had refused to accept the DAO's decision?

Freuden says the answer is not so simple.

“Can a DAO kick someone out who built the project?”

The original mission “should be dissolved” if it no longer exists. “Does a DAO fail and return the money? They must return the money with interest if the marriage fails.”

Before an IPO, VCs might try to remove a problematic CEO.

While DAOs use treasury as a governance mechanism, it is usually controlled (at least initially) by the original project creators. Or, in the case of Uniswap, the venture capital firm a16z has so much voting power that it has delegated it to student-run blockchain organizations.

So, can DAOs really work at scale? How to evolve voting paradigms beyond token holdings?

The whale token holder issue has some solutions. Multiple tokens, such as a utility token on top of a governance token, and quadratic voting to dilute the influence of whales are now common. Other safeguards include multisignature blockchain keys and decision time locks that impose a delay before any automated decision is executed. The structure of each DAO will depend on the assets at stake.
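To make the quadratic voting idea concrete, here is a minimal sketch in Python. It assumes the common textbook rule that casting n votes costs n squared tokens, so a holder's voting power is the integer square root of their holdings. The holder names, balances, and the `tally` helper are hypothetical and do not describe any particular DAO's contract.

```python
import math
from typing import Callable, Dict


def linear_votes(tokens: int) -> int:
    """One token, one vote: the plain token-weighted scheme."""
    return tokens


def quadratic_votes(tokens: int) -> int:
    """Assumed rule: n votes cost n**2 tokens, so power = floor(sqrt(tokens))."""
    return math.isqrt(tokens)


def tally(
    holdings: Dict[str, int],
    choices: Dict[str, str],
    weight: Callable[[int], int],
) -> Dict[str, int]:
    """Sum each holder's weighted votes per option."""
    totals: Dict[str, int] = {}
    for holder, tokens in holdings.items():
        option = choices[holder]
        totals[option] = totals.get(option, 0) + weight(tokens)
    return totals


# Hypothetical DAO: one whale with 10,000 tokens, 15 members with 100 each.
members = {f"member{i}": 100 for i in range(15)}
holdings = {"whale": 10_000, **members}
choices = {"whale": "no", **{name: "yes" for name in members}}

linear = tally(holdings, choices, linear_votes)
quadratic = tally(holdings, choices, quadratic_votes)

print(linear)     # {'no': 10000, 'yes': 1500}
print(quadratic)  # {'no': 100, 'yes': 150}
```

Under plain token voting the whale wins alone. Under the assumed quadratic rule, the 15 small holders outvote the whale. In practice a whale could split tokens across wallets, so quadratic schemes usually need some form of identity check as well.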

In reality, voter turnout is often a bigger issue.

Is DAO governance scalable?

Many DAOs have low participation. Due to a lack of understanding of technology, apathy, or busy lives. “The bigger the DAO, the fewer voters who vote,” says Freuden.

Freuden's report cites Dunbar's Law, named after British anthropologist Robin Dunbar, who argued that people can only maintain about 150 relationships.

"As the DAO grows in size, the individual loses influence because they perceive their voting power as being diminished or insignificant. The Ringelmann Effect and Dunbar's Rule show that as a group grows in size, members become lazier, disenfranchised, and detached."

Freuden says a DAO requires “understanding human relationships.” He believes DAOs work best as investment funds rooted in Cryptoland and small in scale. In just three weeks, SyndicateDAO enabled the creation of 450 new investment group DAOs.

Due to SEC regulations, FlamingoDAO, a famous NFT curation investment DAO, could only have 100 investors. The “LAO” is a member-directed venture capital fund and a US LLC. To comply with US securities law, they only allow 100 members with a 120 ETH minimum staking contribution.

But how did FlamingoDAO make investment decisions? How often did all 70 members vote? Art and NFTs are highly speculative.

So, investment DAOs are thought to work well in a small petri dish environment. This is due to a crypto-native club's pooled capital (maximum 7% per member) and crowdsourced knowledge.

While scalability is a concern, each DAO will operate differently depending on the goal, technology stage, and personalities. Meetups and hackathons are common ways for techies to collaborate on a cause or test an idea. But somebody still organizes the hack.

Holographic consensus voting

But clever people are working on creative solutions to every problem.

Miller of DAOplatform.io cites DXdao as a successful DAO. Decentralized product and service creator DXdao runs the DAO entirely on-chain. “You earn voting rights by contributing to the community.”

DXdao, a DAOstack fork, uses holographic consensus, a voting algorithm invented by DAOstack founder Matan Field. The system lets a random or semi-random subset make group-wide decisions.

DXdao's Luke Keenan explains that, with staking acting as a gatekeeper for voters, “a small predictions market economy emerges around the likely outcome of a proposal as tokens are staked on it. Also, proposals that have been financially boosted have fewer requirements to be successful, increasing system efficiency.” DXdao “makes decisions by removing voting power as an economic incentive.”

Field explains that holographic consensus “does not require a quorum to render a vote valid.”

“Rather, it provides a parallel process. It is a game played (for profit) by ‘predictors' who make predictions about whether or not a vote will be approved by the voters. The voting process is valid even when the voting quorum is low if enough stake is placed on the outcome of the vote.

“In other words, a quorum is not a scalable DAO governance strategy,” Field says.
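The mechanism Field describes can be sketched roughly as follows. This is a simplified illustration, not DAOstack's or DXdao's actual contract logic: it assumes a proposal is "boosted" once predictors stake at least a fixed threshold on it passing, that boosted proposals need only more yes than no votes, and that unboosted proposals need an absolute majority of all voting power. The `Proposal` fields, the `boost_threshold` value, and the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    votes_for: float      # voting power cast in favor
    votes_against: float  # voting power cast against
    stake_for: float      # tokens staked by predictors betting it passes
    stake_against: float  # tokens staked by predictors betting it fails


def is_boosted(p: Proposal, boost_threshold: float) -> bool:
    """Assumed rule: enough stake on 'pass', and more than on 'fail'."""
    return p.stake_for >= boost_threshold and p.stake_for > p.stake_against


def passes(p: Proposal, total_voting_power: float, boost_threshold: float = 1_000.0) -> bool:
    if is_boosted(p, boost_threshold):
        # Boosted: a relative majority of votes cast decides, no quorum needed.
        return p.votes_for > p.votes_against
    # Not boosted: require an absolute majority of all voting power.
    return p.votes_for > total_voting_power / 2


total_power = 1_000_000.0

# Only 4% of voting power turns out, and nobody has staked on the outcome.
quiet = Proposal(votes_for=30_000, votes_against=10_000, stake_for=0, stake_against=0)

# Same votes, but predictors have staked 5,000 tokens on it passing.
boosted = Proposal(votes_for=30_000, votes_against=10_000, stake_for=5_000, stake_against=200)

print(passes(quiet, total_power))    # False
print(passes(boosted, total_power))  # True
```

The point of the sketch is the trade: low turnout is acceptable only when someone has put money behind the prediction that the wider membership would approve.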

“You don't need big votes on everything. If only 5% vote, fine. To move significant value or make significant changes, you need a longer voting period (say 30 days) and a higher quorum,” says Miller.

Clearly, DAOs are maturing. The emphasis is on tools like Orca and processes that delegate power to smaller sub-DAOs, committees, and working groups.

Miller also claims that “studies in psychology show that rewarding people too much for volunteering disincentivizes them.” So, rather than giving out tokens for every activity, you may want to offer symbolic rewards like POAPs or contributor levels.

“Free lunches are less rewarding. Random rewards can boost motivation.”

Culture and motivation

DAOs (and Web3 in general) can give early adopters a sense of ownership. In theory, they encourage early participation and bootstrapping before network effects.

"A double-edged sword," says Goldstein. In the developing world, they may not be fully scalable.

“There must always be a leader,” she says. “People won't volunteer if they don't want to.”

DAO members sometimes feel entitled. “They are not the boss, but they think they should be able to see my calendar or get a daily report,” Goldstein gripes. Say, “I own three MasterNodes and need to know X, Y, and Z.”

In most decentralized projects, strong community leaders are crucial to influencing culture.

Freuden says “the DAO's community builder is the cryptoland influencer.” They must “disseminate the DAO's culture, cause, and rally the troops” in English, not tech.

They must keep members happy.

So the community builder is vital. Building a community around a coin that promises riches is simple, but keeping DAO members motivated is difficult.

It's a human job. But tools like SourceCred or Coordinape that measure contributions and allocate tokens are heavily marketed. Large growth funds/community funds/grant programs are common among DAOs.

The Future?

Onboarding, committed volunteers, and an iconic community builder may be all DAOs need.

It takes a DAO just one day to bring together a passionate (and sometimes obsessive) community. For organizations with a common goal, managing stakeholder expectations is critical.

A DAO's core values are community and cause, not scalable governance. “DAOs will work at scale like gaming communities, but we will have sub-DAOs everywhere like committees,” says Freuden.

So-called holographic consensuses “can handle, in principle, increasing rates of proposals by turning this tension between scale and resilience into an economical cost,” Field writes. Scalability is not guaranteed.

The DAO's key innovation is the fragmented workplace. “Voting is a subset of engagement,” says Freuden. “A DAO should allow for permissionless participation and engagement. DAOs allow for remote work.”

In 20 years, DAOs may be the AI-powered self-organizing concept. That seems far away now. But a new breed of productivity coordination organisms is maturing.

SAHIL SAPRU

3 years ago

How I grew my business to Rs. 5 million in annual recurring revenue

Scaling your startup requires answering customer demands, not growth tricks.

I cofounded Freedo Rentals in 2019. I reached 50 lakh+ ARR in 6 months before quitting owing to the pandemic.

Freedo aimed to solve 2 customer pain points:

Users lacked a reliable last-mile transportation option.

The amount that Auto walas charge for unmetered services

Solution?

Effectively simple.

Build ports at high-demand spots (colleges, residential societies, metros). Electric ride-sharing can meet demand.

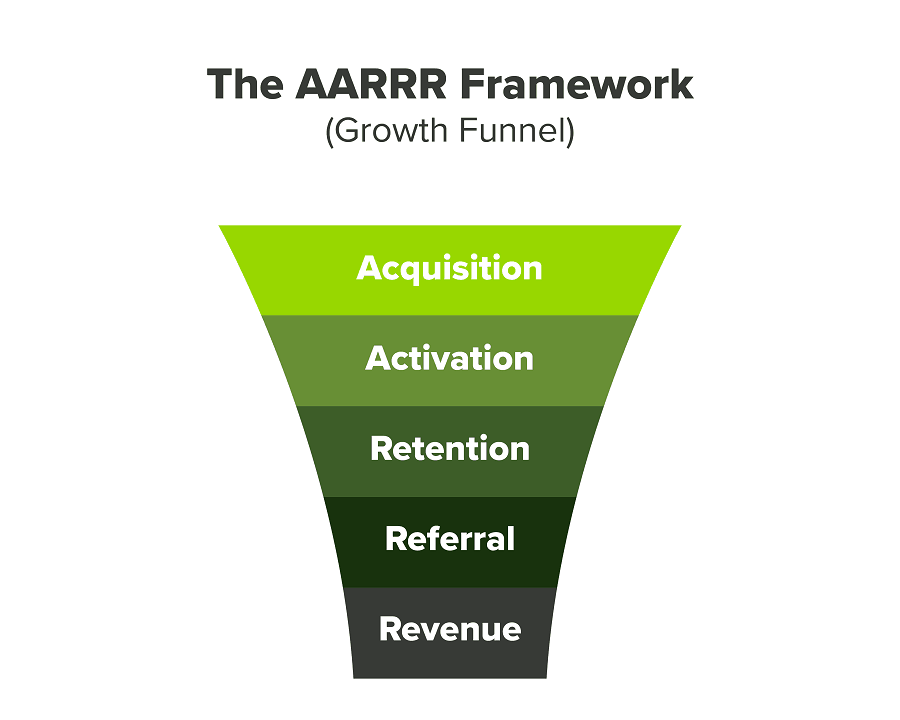

We had many problems scaling. I'll explain using the AARRR model.

Awareness Issue: Brand unfamiliarity and a novel product offering. Nobody knew what Freedo was or what it did.

Awareness Issue: Content and advertisements did a poor job of communicating the task at hand. The advertisements were too cheesy and clashed with the white-collar segment.

Retention Issue: We ran into recurring problems, indicating that the product was not good enough: keyless entry, bill generation, stolen helmets, etc.

Retention/Revenue Issue: Costly compared to established rivals. Shared cars were 1/3 of our cost.

Referral Issue: Missing the opportunity to seize the AHA moment. After the ride, nobody remembered us.

Once you know where you're struggling with AARRR, iterative solutions are usually best.

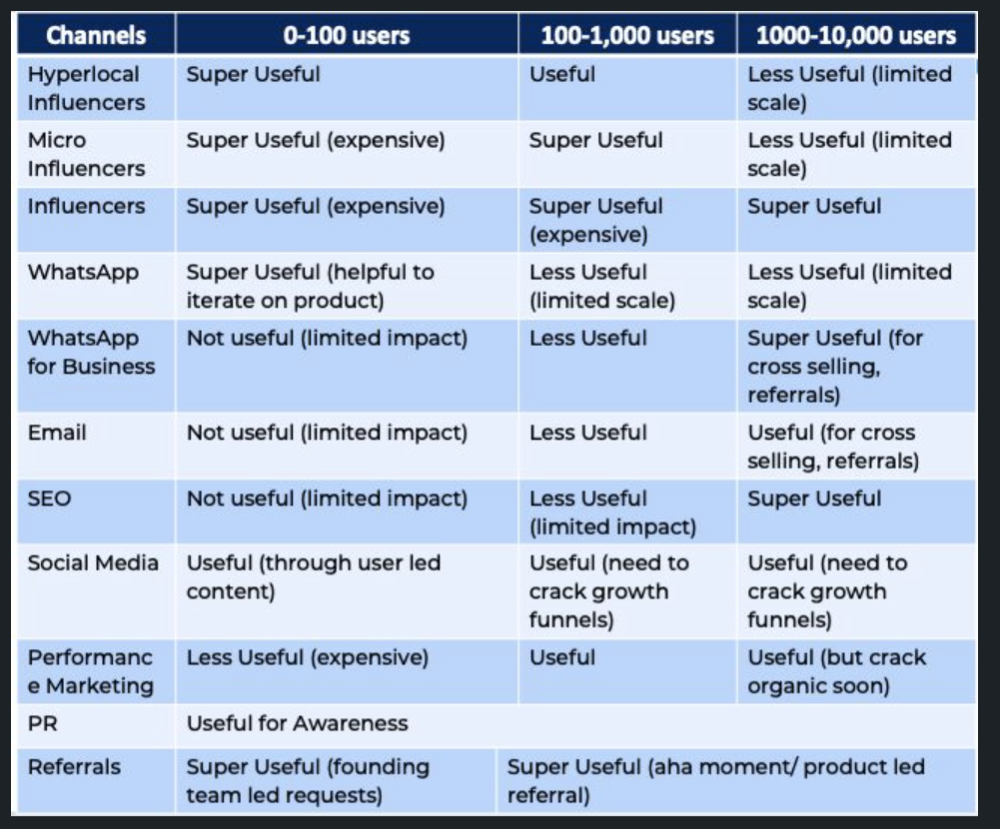

Once they have nailed the AARRR model, most startups use paid channels to scale. This dependence on paid channels increases with scale unless you crack your organic/inbound game.

Over-index growth loops. Growth loops increase inflow and customers as you scale.

When considering growth, ask yourself:

Who is the solution's ICP (Ideal Customer Profile)? (To whom are you selling)

What are the most important messages I should convey to customers? (This is an A/B test.)

Which marketing channels ought I prioritize? (Conduct analysis based on the startup's maturity/stage.)

Choose the important metrics to monitor for your AARRR funnel (not all metrics are equal)

Identify the Flywheel effect's growth loops (inertia matters)

My biggest mistakes:

Not paying attention to customer feedback or satisfaction. It is the main cause of problems with referrals, retention, and acquisition for startups. Beyond your NPS, you should consider second-order consequences.

The tasks at hand should be quite clear.

Here's my scaling equation:

Growth = A x B x C

A = Funnel top (Traffic)

B = Product Value (Solving a real pain point)

C = Aha! (Emotional response)

Freedo's A, B, and C created a unique offering.

Freedo’s ABC:

A — Working or Studying population in NCR

B — Electric Vehicles provide last-mile mobility as a clean and affordable solution

C — One click booking with a no-noise scooter

Final outcome:

We scaled Freedo to Rs. 50 lakh MRR and were growing 60% month on month until the pandemic ended our growth story.
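To put a 60% month-on-month rate in perspective, here is a small compounding sketch. It uses a starting index of 1.0 rather than any real Freedo revenue figure, and the function names are illustrative only.

```python
import math
from typing import List


def project(start: float, monthly_growth: float, months: int) -> List[float]:
    """Value at the end of each month under constant compounding growth."""
    return [start * (1 + monthly_growth) ** m for m in range(months + 1)]


def doubling_time(monthly_growth: float) -> float:
    """Months needed to double at a constant monthly growth rate."""
    return math.log(2) / math.log(1 + monthly_growth)


# Index of 1.0 at month 0, growing 60% per month for 6 months.
path = project(1.0, 0.60, 6)
for month, value in enumerate(path):
    print(f"month {month}: {value:.2f}x")

print(f"doubling time: {doubling_time(0.60):.2f} months")
```

At that rate the index roughly doubles every month and a half and ends six months at about 16.8 times its starting value, which is why even a short interruption to the growth loop is so costly.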

How did we do it?

We tried ambassadors and coupons. WhatsApp was our most successful A/B test.

We drove widespread adoption through college and society WhatsApp groups. We asked users for referrals in community groups.

What worked for us won't work for others. This scale underwent many revisions.

Every firm is different, so you must know your customers. Their needs determine which channel to prioritize and when.

Users desired a safe, time-bound means to get there.

This (not mine) growth framework helped me a lot. You should follow suit.