More on NFTs & Art

Anton Franzen

3 years ago

This is the driving force behind my use of NFTs, which will completely transform the world.

It's not a fuc*ing fad.

It's not about bored monkeys or photos as NFTs; that's just what got pushed up and made a lot of money. The technology underlying those ridiculous NFT photos will one day prove that you own your house and car, and tell you where your banana came from. Are you ready for web3? Soar!

People don't realize that absolutely anything can and will become part of the blockchain and smart contracts, and be better for it. I'll tell you a secret: it is already happening, and it will keep happening.

Why?

Why is something blockchain-based a good idea? Let's talk about cars!

Say a new Tesla is manufactured. When you buy it, the car is bound to an NFT on the blockchain that proves current ownership. The NFT in the smart contract can hold some data about the current owner and about the car's status, such as the number of miles driven and its overall condition, as well as a reference to a digital document bound to the NFT that has more information.

Forty years from now, if you want to buy a used car, you can scan its serial number to view its NFT and see its entire history: every owner, how long they owned it, whether it was damaged, and more. Since the record is on the blockchain, it can't be tampered with.
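Why can't the history be tampered with? A blockchain links each record to the hash of the one before it, so editing an old entry breaks every later link. The sketch below shows that idea with Python's `hashlib`; it is a toy hash chain, not a real blockchain (no network, no consensus), and the `Entry`, `build_chain`, and `verify_chain` names and the sample history are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

@dataclass
class Entry:
    data: dict        # e.g. owner, mileage, damage report
    prev_hash: str    # digest of the previous entry
    digest: str = ""  # digest of this entry

def entry_hash(data: dict, prev_hash: str) -> str:
    # Hash the entry's data together with the previous digest,
    # so every link depends on all earlier entries.
    payload = json.dumps(data, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode()).hexdigest()

def build_chain(records: list[dict]) -> list[Entry]:
    chain: list[Entry] = []
    prev = GENESIS
    for data in records:
        entry = Entry(data=data, prev_hash=prev)
        entry.digest = entry_hash(data, prev)
        chain.append(entry)
        prev = entry.digest
    return chain

def verify_chain(chain: list[Entry]) -> bool:
    # Recompute every digest and check each link points at its predecessor.
    prev = GENESIS
    for entry in chain:
        if entry.prev_hash != prev or entry.digest != entry_hash(entry.data, entry.prev_hash):
            return False
        prev = entry.digest
    return True

history = build_chain([
    {"owner": "alice", "miles": 0, "damage": None},
    {"owner": "bob", "miles": 42_000, "damage": "rear bumper"},
    {"owner": "carol", "miles": 97_500, "damage": None},
])
print(verify_chain(history))      # True
history[1].data["damage"] = None  # try to hide the accident
print(verify_chain(history))      # False
```

In this toy version, someone could edit an entry and then recompute every later digest. On a real blockchain, what prevents that is that many independent nodes hold copies of the chain and must agree on it.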

When you're ready to buy, the owner posts the car for sale, you buy it, and the NFT is sent to your wallet. Five seconds to change owners, 100% safe and verifiable.
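A minimal sketch of the ownership record and the post-for-sale-then-buy flow described above, written as plain Python rather than as a smart contract. Everything in it is hypothetical: `CarToken`, its fields, and the `list_for_sale`/`buy` methods illustrate the idea and are not Tesla's API or any blockchain's; payment is modeled as a dictionary of balances.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class CarToken:
    vin: str                        # serial number a buyer would scan (hypothetical)
    owner: str                      # current owner's wallet address
    miles: int = 0
    document_uri: str = ""          # pointer to an off-chain document with more details
    owners: list[str] = field(default_factory=list)  # every owner, oldest first
    price: Optional[int] = None     # asking price while listed, else None

    def __post_init__(self) -> None:
        self.owners.append(self.owner)

    def list_for_sale(self, seller: str, price: int) -> None:
        # Only the current owner can post the car for sale.
        if seller != self.owner:
            raise PermissionError("only the owner can list the car")
        self.price = price

    def buy(self, buyer: str, balances: dict[str, int]) -> None:
        # All checks run first, so payment and transfer happen together or not at all.
        if self.price is None:
            raise ValueError("car is not for sale")
        if balances.get(buyer, 0) < self.price:
            raise ValueError("insufficient funds")
        balances[buyer] -= self.price
        balances[self.owner] = balances.get(self.owner, 0) + self.price
        self.owner = buyer
        self.owners.append(buyer)
        self.price = None

balances = {"alice": 0, "bob": 30_000}
car = CarToken(vin="TEST-VIN-0001", owner="alice", miles=120_000)
car.list_for_sale("alice", 25_000)
car.buy("bob", balances)
print(car.owner, car.owners, balances)  # bob ['alice', 'bob'] {'alice': 25000, 'bob': 5000}
```

The design point is that the buyer can never pay without receiving the token. On a real chain, that all-or-nothing behavior comes from the transaction itself.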

You could also build insurance logic into the car's contract. If you crashed, your car's smart contract would take money from your insurance contract and deposit it in an insurance company wallet.
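The crash-triggered payout could look roughly like this. It is a sketch under loud assumptions: `InsurancePool`, `pay_premium`, and `report_crash` are hypothetical names, the crash report is trusted as given (a real contract would need a trusted external data feed, or oracle, to confirm it), and money is just integers in a dictionary.

```python
class InsurancePool:
    """Toy insurance contract: members pay premiums, covered crashes trigger payouts."""

    def __init__(self, coverage_limit: int):
        self.coverage_limit = coverage_limit  # maximum payout per claim
        self.balance = 0                      # money currently held by the contract
        self.members: set[str] = set()

    def pay_premium(self, car_id: str, amount: int) -> None:
        self.balance += amount
        self.members.add(car_id)

    def report_crash(self, car_id: str, damage: int,
                     wallets: dict[str, int], payee: str) -> int:
        # Assumes the crash report is genuine (no oracle check in this sketch).
        if car_id not in self.members:
            raise PermissionError("car is not covered")
        payout = min(damage, self.coverage_limit, self.balance)
        self.balance -= payout
        wallets[payee] = wallets.get(payee, 0) + payout
        return payout

pool = InsurancePool(coverage_limit=10_000)
for i in range(100):
    pool.pay_premium(f"car-{i}", 300)  # 100 cars x $300 = $30,000 in the pool

wallets: dict[str, int] = {}
paid = pool.report_crash("car-7", damage=2_500, wallets=wallets, payee="insurer-wallet")
print(paid, pool.balance, wallets)  # 2500 27500 {'insurer-wallet': 2500}
```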

It's limitless. Your funds may be used by investors to provide insurance as they profit from everyone's investments.

Or suppose all car owners in a country deposit a fixed amount of money into an insurance smart contract that promises to take care of things if something happens. It could be as little as $100 to $500 per year. In a country with 10 million people, maybe 3 million would do that, which would put between $300 million and $1.5 billion a year into that smart contract. The insurance company could use it to invest in assets or take a cut: literally endless possibilities.
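The size of that pool is simple multiplication: participants times annual premium. Using the paragraph's own figures (3 million participants paying $100 to $500 a year):

```python
def pool_size(participants: int, annual_premium: int) -> int:
    # Total deposited into the insurance contract per year.
    return participants * annual_premium

for premium in (100, 500):
    print(f"${premium}/year -> ${pool_size(3_000_000, premium):,}")
# $100/year -> $300,000,000
# $500/year -> $1,500,000,000
```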

Instead of $300 per month, you may pay $300 per year to be covered if something goes wrong, and that may include multiple insurances.

What about your grocery store banana, though?

Yes that too.

You can scan a banana to learn its complete history. You'll be able to see where it was cultivated, every middleman in the supply chain, and hopefully the banana's quality, farm, and ingredients used.

If you only want to buy decent local bananas, you can, which gives you transparency and choice. I believe this will become an online marketplace where farmers publish their farms and products for trust and transparency. You might even buy bananas directly from the farmer.

And to finish the article: food safety. If a shipment of bananas contained a toxin, you could easily track down every banana with the same origin and supply chain and uncover the root cause. This would save lives and have a big impact: did you know that roughly 1 in 6 Americans gets sick from contaminated food every year? Traceability like this could lower that number.
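Tracing a bad batch is a walk over the supply-chain records: follow the contaminated banana back to its origin, then find every item that came from that same origin. A minimal sketch, with a hypothetical ledger format (each item mapped to the item it came from) standing in for whatever a real supply-chain ledger would store:

```python
from collections import defaultdict
from typing import Optional

# Each record says "this item came from that parent"; farm batches have no parent.
ledger: dict[str, Optional[str]] = {
    "farm-A/batch-7": None,
    "farm-B/batch-2": None,
    "ship-101": "farm-A/batch-7",
    "ship-102": "farm-B/batch-2",
    "store-9/lot-1": "ship-101",
    "store-4/lot-3": "ship-101",
    "store-9/lot-2": "ship-102",
    "banana-001": "store-9/lot-1",
    "banana-002": "store-4/lot-3",
    "banana-003": "store-9/lot-2",
}

def origin(item: str) -> str:
    # Walk parent links up to the farm batch the item started from.
    while ledger[item] is not None:
        item = ledger[item]
    return item

def descendants(root: str) -> set[str]:
    # Everything downstream of `root`, found by walking child links depth-first.
    children: dict[str, list[str]] = defaultdict(list)
    for item, parent in ledger.items():
        if parent is not None:
            children[parent].append(item)
    found: set[str] = set()
    stack = [root]
    while stack:
        for child in children[stack.pop()]:
            if child not in found:
                found.add(child)
                stack.append(child)
    return found

source = origin("banana-001")
recall = sorted(i for i in descendants(source) if i.startswith("banana"))
print(source, recall)  # farm-A/batch-7 ['banana-001', 'banana-002']
```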

To summarize:

Smart contracts can issue NFTs as proof of ownership and build functionality into them.

Jim Clyde Monge

3 years ago

Can You Sell Images Created by AI?

Some AI-generated artworks sell for enormous sums of money.

But can you sell AI-Generated Artwork?

Simple answer: yes.

However, not all AI services allow the use and redistribution of their images.

Let's check some of my favorite AI text-to-image generators:

Dall-E2 by OpenAI

The AI art generator Dall-E2 is powerful. It's still in beta, so you'll need to join the waitlist to use it.

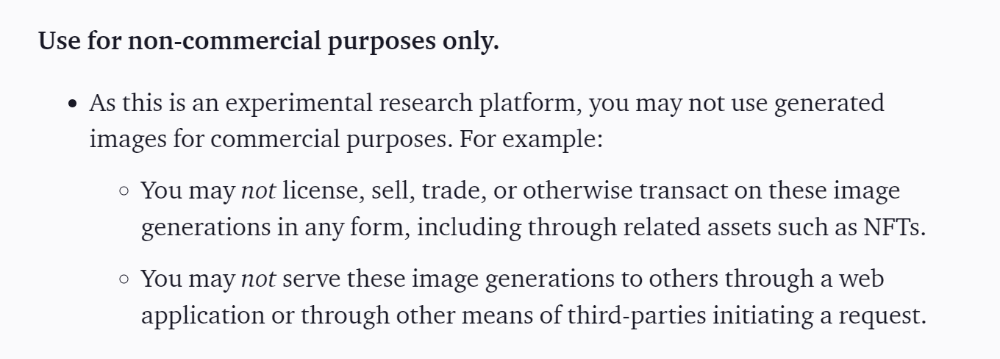

OpenAI DOES NOT allow the use and redistribution of any image for commercial purposes.

Here's the policy as of April 6, 2022.

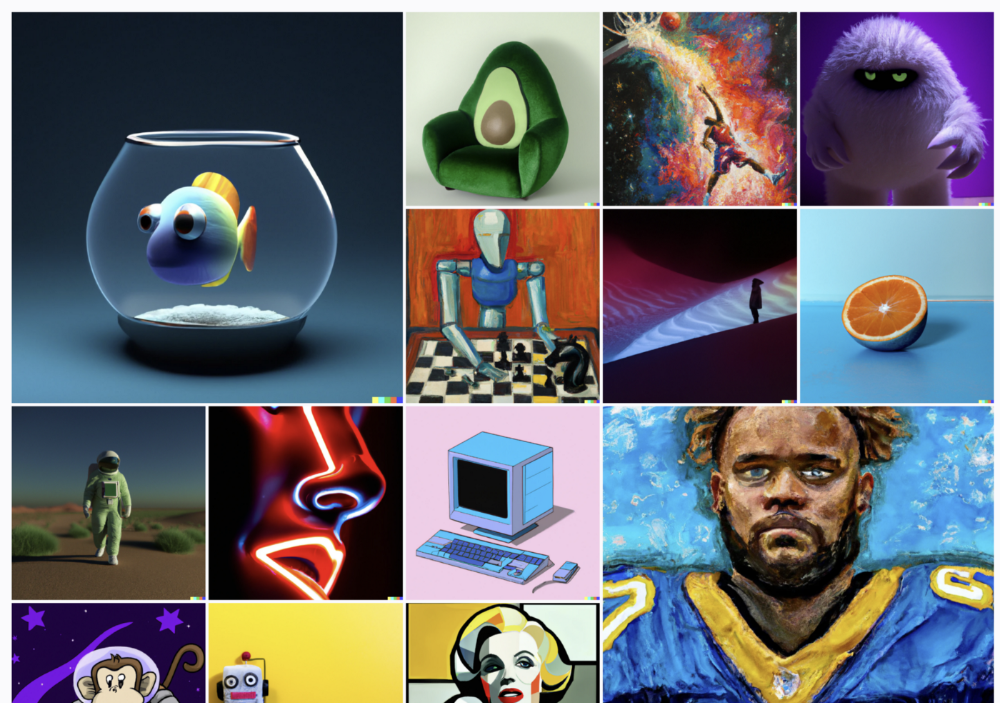

Here are some images from Dall-E2’s webpage to show its art quality.

Several Reddit users reported receiving pricing surveys from OpenAI.

This suggests the company may bring out a subscription-based tier and a commercial license to sell images soon.

MidJourney

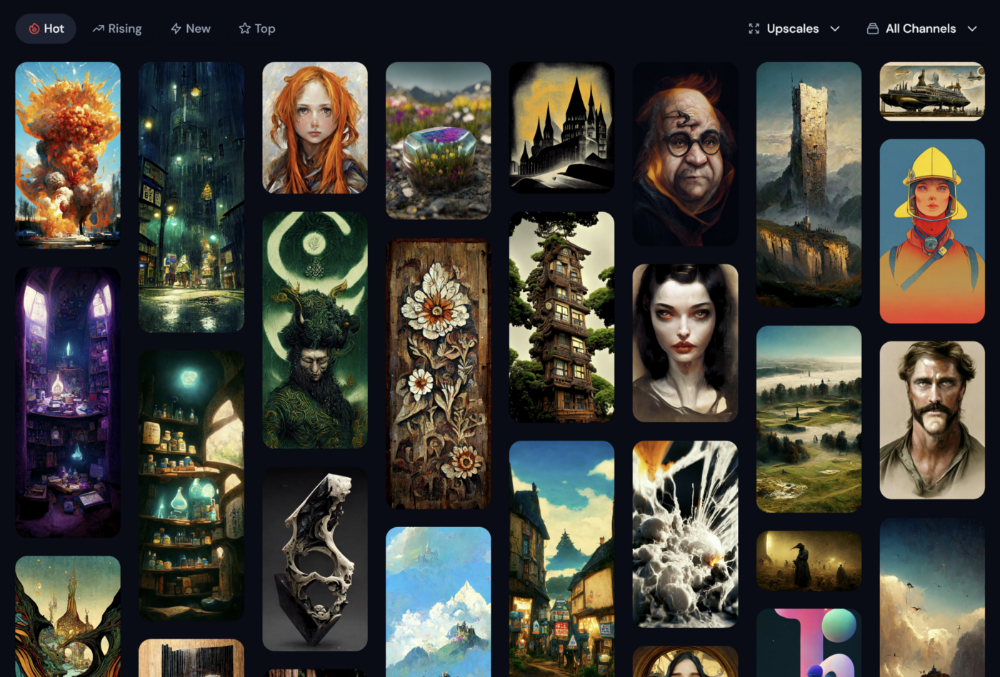

I like Midjourney's art generator. It makes great AI images. Here are some samples:

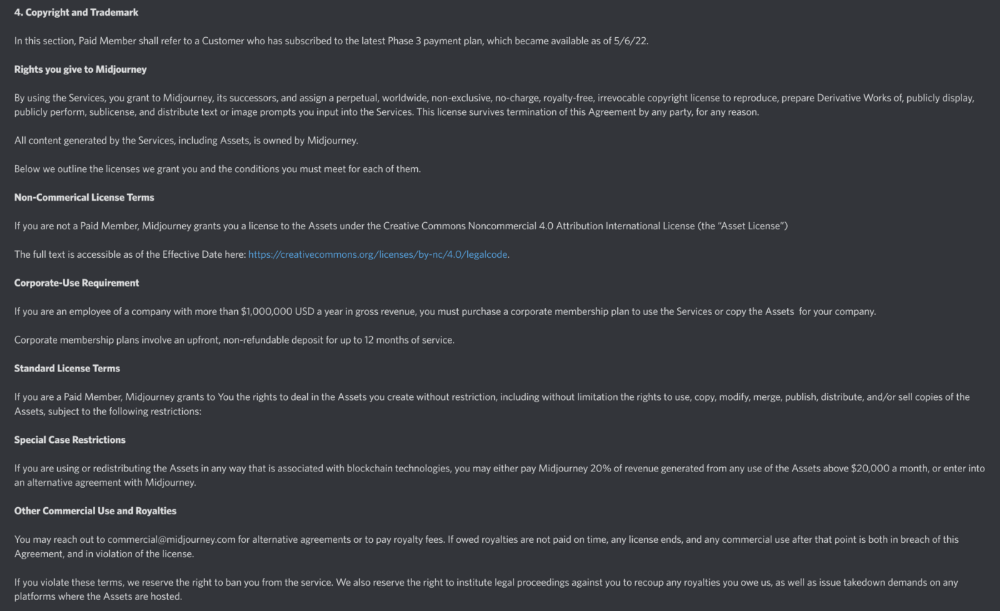

Standard Licenses are available for $10 per month.

Standard License allows you to use, copy, modify, merge, publish, distribute, and/or sell copies of the images, except for blockchain technologies.

If you use or distribute the Assets with blockchain technology, you must pay MidJourney 20% of revenue above $20,000 a month or enter into a separate agreement.
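To make the blockchain clause concrete, the sketch below reads "20% of revenue above $20,000 a month" as applying only to the portion above $20,000. That reading is an assumption: the clause could also mean 20% of all revenue once the threshold is crossed, so check MidJourney's current terms before relying on either. The function name is hypothetical.

```python
def midjourney_blockchain_fee(monthly_revenue: int,
                              threshold: int = 20_000,
                              rate_percent: int = 20) -> float:
    # ASSUMED reading: the fee applies only to revenue above the monthly threshold.
    excess = max(0, monthly_revenue - threshold)
    return excess * rate_percent / 100

print(midjourney_blockchain_fee(15_000))  # 0.0
print(midjourney_blockchain_fee(50_000))  # 6000.0
```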

Here's their copyright and trademark page.

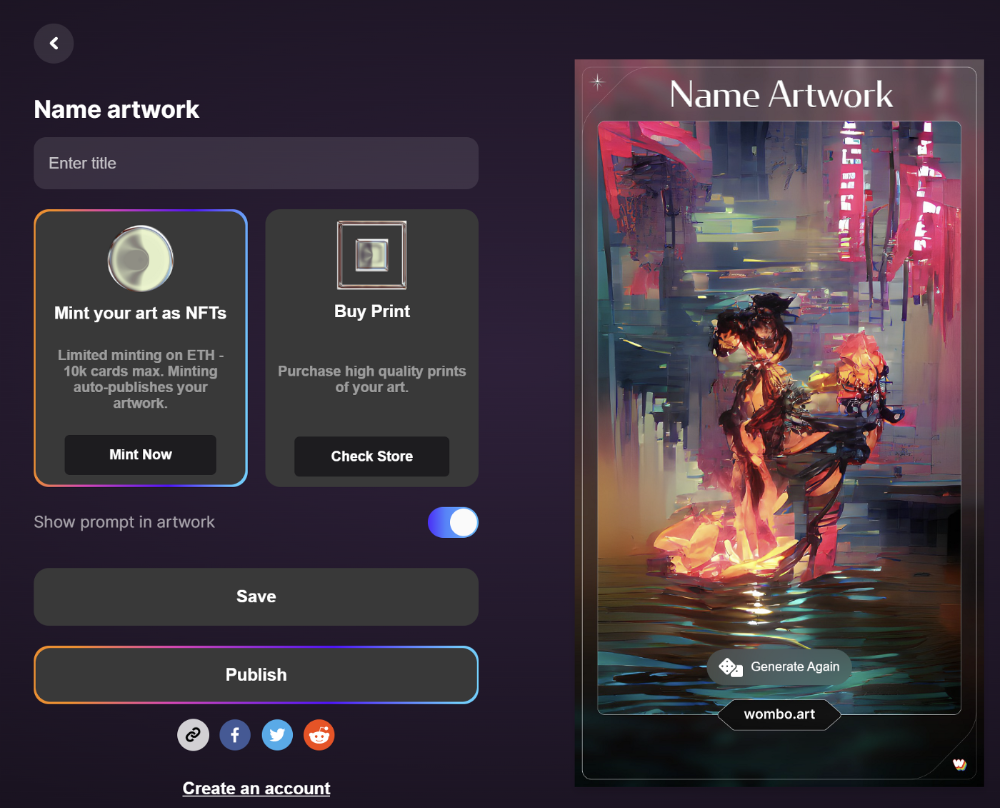

Dream by Wombo

Dream is one of the first public AI art generators.

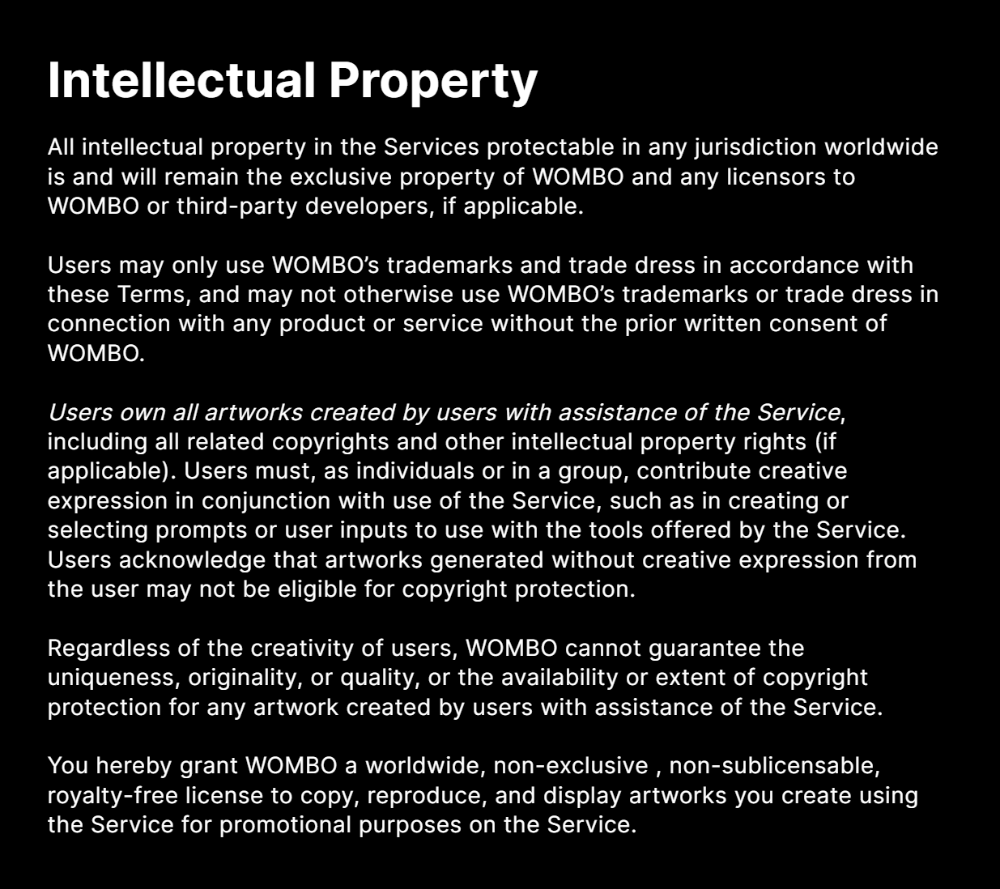

This AI program is free, easy to use, and Wombo gives a royalty-free license to copy or share artworks.

Users own all artworks generated by the tool, including all related copyrights and intellectual property rights.

Here's Wombo's intellectual property policy.

Final Reflections

AI is creating a new sort of art that's selling well. It’s becoming popular and valued, despite some skepticism.

Now that you know MidJourney and Wombo let you sell AI-generated art, you need to locate buyers. There are several ways to achieve this, but that’s for another story.

Dmytro Spilka

3 years ago

Why NFTs Have a Bright Future Away from Collectible Art After Punks and Apes

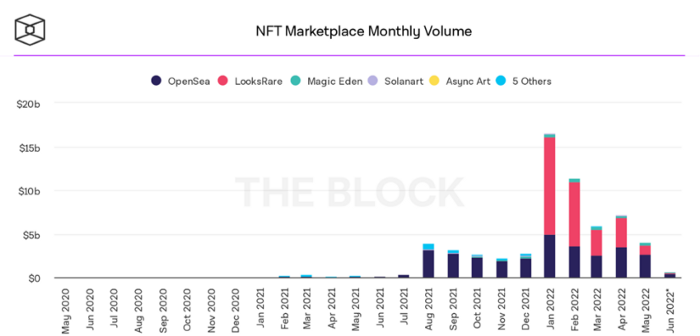

After a crazy second half of 2021 and significant trade volumes into 2022, the market for NFT artworks like Bored Ape Yacht Club, CryptoPunks, and Pudgy Penguins has begun a sharp collapse as market downturns hit token values.

According to DappRadar data, monthly NFT sales have fallen below $1 billion for the first time since June 2021. OpenSea, the world's largest NFT exchange, has seen sales volume decline 75% since May and is trading at levels last seen in July 2021.

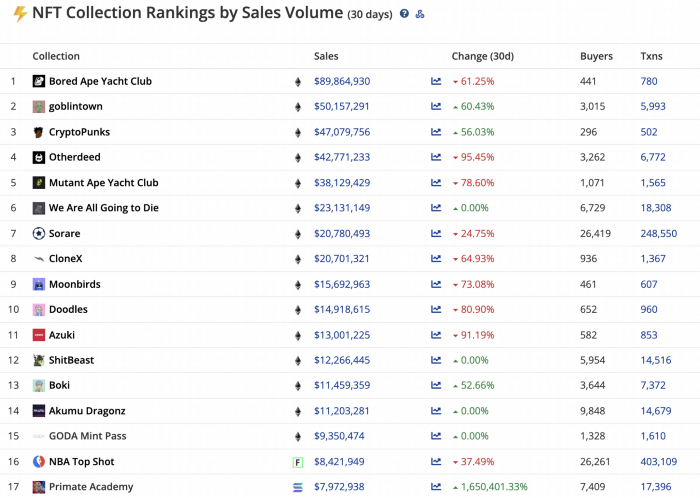

Prices of popular non-fungible tokens have also decreased. Bored Ape Yacht Club (BAYC) has witnessed volume and sales drop 63% and 15%, respectively, in the past month.

Analysis by the news platform BeInCrypto shows the same decline: NFT marketplace volume in May 2022 was $4 billion, a sharp drop from April's $7.18 billion.

OpenSea, a big marketplace, contributed $2.6 billion, while LooksRare, Magic Eden, and Solanart also contributed.

NFT markets are digital platforms for buying and selling tokens, similar to stock trading platforms. Although some of the world's largest exchanges offer NFT wallets, most users store their NFTs on their favorite marketplaces.

In January 2022, overall NFT sales volume was $16.57 billion, with LooksRare contributing $11.1 billion. May 2022's volume was $12.57 billion less than January's, a 75% drop, and June's is expected to be considerably smaller.
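The percentage declines follow directly from the dollar figures quoted above. A quick check in Python, using only the article's numbers (in billions of dollars):

```python
def pct_drop(before: float, after: float) -> float:
    # Percentage decline from `before` to `after`.
    return (before - after) / before * 100

jan, apr, may = 16.57, 7.18, 4.00  # NFT marketplace volume, $ billions

print(f"Jan -> May: ${jan - may:.2f}B lower, {pct_drop(jan, may):.1f}% drop")
print(f"Apr -> May: {pct_drop(apr, may):.1f}% drop")
# Jan -> May: $12.57B lower, 75.9% drop
# Apr -> May: 44.3% drop
```

So the January-to-May decline is about 76%, in line with the rounded 75% figure, and May came in roughly 44% below April.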

A World Based on Utility

Despite declines in NFT trading volumes, not all investors are negative on NFTs. Although there are uncertainties about the sustainability of NFT-based art collections, there are fewer reservations about utility-based tokens and their significance in technology's future.

In June, business CEO Christof Straub said NFTs may help artists monetize unreleased content, resuscitate catalogs, establish deeper fan connections, and make processes more efficient through technology.

We all know NFTs can be more than JPEGs. Straub noted that NFT music rights can offer more equitable rewards to musicians.

Music NFTs are here to stay if they have real value, solve real problems, are trusted and lawful, and have fair and sustainable business models.

NFTs can transform numerous industries, including music. Market opinion is shifting towards tokens with more utility than the social media artworks we're used to seeing.

While the major NFT names remain dominant in terms of volume, new utility-based projects are breaking into the top 20 collections.

Otherdeed, Sorare, and NBA Top Shot are NFT-based games that rank above Bored Ape Yacht Club and Cryptopunks.

In NBA Top Shot, users can trade video NFTs of basketball players. Similar efforts are emerging across the non-fungible landscape.

Sorare shows how NFTs can support a new way of playing fantasy football, where participants buy and swap trading cards to create a 5-player team that wins rewards based on real-life performances.

Sorare raised 579.7 million in one of Europe's largest Series B financing deals in September 2021. Recently, the platform revealed plans to expand into Major League Baseball.

Strong growth indications suggest a promising future for NFTs. The value of art-based collections like BAYC and CryptoPunks may be questioned as markets become diluted by new limited collections, but the potential for NFTs to become intrinsically linked to tangible utility like online gaming, music and art, and even corporate reward schemes shows the industry has a bright future.

You might also like

MartinEdic

3 years ago

Russia Through the Windows: It's Very Bad

And why we must keep arming Ukraine

Russian expatriates write about horrific news from home.

Read this from Nadin Brzezinski. She's not a native English speaker, so there are grammar errors, but her account rings true.

Terrible truth.

There's much more that reveals Russia's grim reality.

No real leadership. Millions of dollars' worth of missing supplies are presumably sold for profit, leaving untrained troops without food or gear. Missile attacks pause because they run out. Fake offers to hold talks are a way of stalling while they scramble for solutions.

Men were mobilized straight off the streets. Millions will be ground up to please a crazed despot. Fear, wrath, and hunger pull apart civilization.

It's the most dystopian story, but Ukraine is worse. Destruction of a society, country, and civilization. Only the invaders' corruption and incompetence save the Ukrainians.

Rochester, NY. My suburb had many Soviet-era Ukrainian refugees. Their kids were my classmates. Fifty years later, many are still my friends. I loved their food and culture. My town has 20,000 Ukrainians.

Grieving but determined. They don't quit. They won't quit. Russians are eternal enemies.

It's the Russian people's willingness to tolerate corruption, abuse, and stupidity from their leaders. They are paying: 65,000 dead, a ruined economy, no freedom of speech. We Americans don't appreciate that freedom as much as we should.

It lets me write/publish.

Russian friends are shocked. Many are here because their parents escaped Russian anti-semitism and authoritarian oppression. A Russian cultural legacy says a strongman's methods are admirable.

A legacy of a slavery history disguised as serfdom. Peasants and Princes.

Read Tolstoy. Then Anna Karenina. The main characters are princes and counts, whose leaders are incompetent idiots with wealth and power.

Peasants who die in their wars due to incompetence are nameless ciphers.

Sound familiar?

Will Lockett

3 years ago

Tesla recently disclosed its greatest secret.

The VP has revealed a secret that should frighten the rest of the EV world.

Tesla led the EV revolution. Elon Musk's invention offers a viable alternative to gas-guzzlers. But Tesla has lost ground in recent years. VW, BMW, Mercedes, and Ford offer EVs with similar ranges, charging speeds, performance, and cost. Tesla's next-generation 4680 battery pack, Roadster, Cybertruck, and Semi were all delayed, and CATL offers better batteries than the 4680. Yet Martin Viecha, Tesla's vice president of investor relations, recently told Business Insider something that startled the EV world and will establish Tesla as the EV king.

Viecha mentioned that Tesla's production costs have dropped 57% since 2017. This isn't due to cheaper batteries or cheaper models like the Model 3. No, it's due to amazing gains in factory efficiency.

Musk wasn't crazy to want a nearly 100% automated production line, and Tesla's strategy of sticking with one model and improving it has paid off. Others change models every several years. This implies they must spend on new R&D, set up factories, and modernize service and parts systems. All of this costs a ton of money and prevents them from refining production to cut expenses.

Meanwhile, Tesla updates its vehicles progressively. Everything from the backseats to the screen has been enhanced in a 2022 Model 3. Tesla can refine, standardize, and cheaply produce every part without changing the production line.

In 2017, it cost Tesla an average of $84,000 to produce a car. In 2022, it'll be $36,000.

Mr. Viecha also claimed that new factories in Shanghai and Berlin will be significantly cheaper to operate once fully operating.

Tesla's hand is now visible: it is selling $36,000 cars for $60,000, a price that barely beats the competition. The Model Y Long Range costs just over $60,000, so Tesla makes $24,000+ on every sale, a 40% profit margin and one of the best in the auto business.
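The article's cost and margin figures can be checked with simple arithmetic. All inputs are the article's numbers; the Model Y price is taken as exactly $60,000 for simplicity, and "profit" here means price minus production cost, before any other overheads.

```python
cost_2017, cost_2022 = 84_000, 36_000  # average production cost per car, USD
price = 60_000                         # assumption: "just over $60,000" rounded down

decline = (cost_2017 - cost_2022) / cost_2017 * 100
profit = price - cost_2022
margin = profit / price * 100

print(f"cost decline since 2017: {decline:.0f}%")  # cost decline since 2017: 57%
print(f"profit per car: ${profit:,}")              # profit per car: $24,000
print(f"margin: {margin:.0f}%")                    # margin: 40%
```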

The VW ID.4 costs about the same but makes no profit. Tesla's rivals face similar challenges: their EVs make little or no profit.

Tesla costs the same as other EVs, but they're in a different league.

But don't forget that the battery pack accounts for 40% of an EV's cost. Tesla may soon fully utilize its 4680 battery pack.

The 4680 battery pack has larger cells and a unique internal design. This means fewer cells are needed for a car, making it cheaper to assemble and produce (per kWh). Energy density and charge speeds increase slightly.

Tesla underestimated the difficulty of making this revolutionary new cell. Each time they try to scale up production, quality drops and rejected cells rise.

Tesla recently installed this battery pack in Model Ys and is scaling production. If they succeed, Tesla battery prices will plummet.

The Model Y's 2170 battery pack costs $11,000. The same size pack with 4680 cells costs $3,400 less. Once production is scaled, it could be $5,500 (50%) less. The 4680 battery pack could reduce Tesla's production costs by 20%.
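Using the article's Model Y pack figures, the per-pack savings work out as follows. This is a plain arithmetic check; the dollar inputs are the article's, not independent data.

```python
PACK_2170 = 11_000  # article's figure for a Model Y 2170 pack, USD

def pack_4680_cost(saving: int) -> int:
    # Cost of the same-size 4680 pack, given the article's quoted saving.
    return PACK_2170 - saving

for label, saving in [("4680 today", 3_400), ("4680 at scale", 5_500)]:
    cost = pack_4680_cost(saving)
    print(f"{label}: ${cost:,} per pack ({saving / PACK_2170:.0%} cheaper)")
# 4680 today: $7,600 per pack (31% cheaper)
# 4680 at scale: $5,500 per pack (50% cheaper)
```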

With these cost savings, Tesla could sell Model Ys for $40,000 while still making a profit. They could offer a $25,000 car.

Even with new battery technology, it seems like other manufacturers will struggle to make EVs profitable.

Teslas cost about the same as competitors, but don't be fooled. Behind the scenes, Tesla is still years ahead, and the 4680 battery pack and new factories will only increase that lead. Musk faces a choice. He could sell Teslas at current prices and make billions while other manufacturers struggle. Or he could massively undercut everyone and crush the competition once and for all. Either way, Tesla and Elon win.

SAHIL SAPRU

3 years ago

How I grew my business to Rs. 50 lakh in annual recurring revenue

Scaling your startup requires answering customer demands, not growth tricks.

I cofounded Freedo Rentals in 2019. We reached 50 lakh+ ARR in 6 months before the pandemic forced us to stop.

Freedo aimed to solve 2 customer pain points:

Users lacked a reliable last-mile transportation option.

Auto walas (auto-rickshaw drivers) charged arbitrary fares for unmetered rides.

Solution?

Effectively simple.

Build ports at high-demand spots (colleges, residential societies, metros). Electric ride-sharing can meet demand.

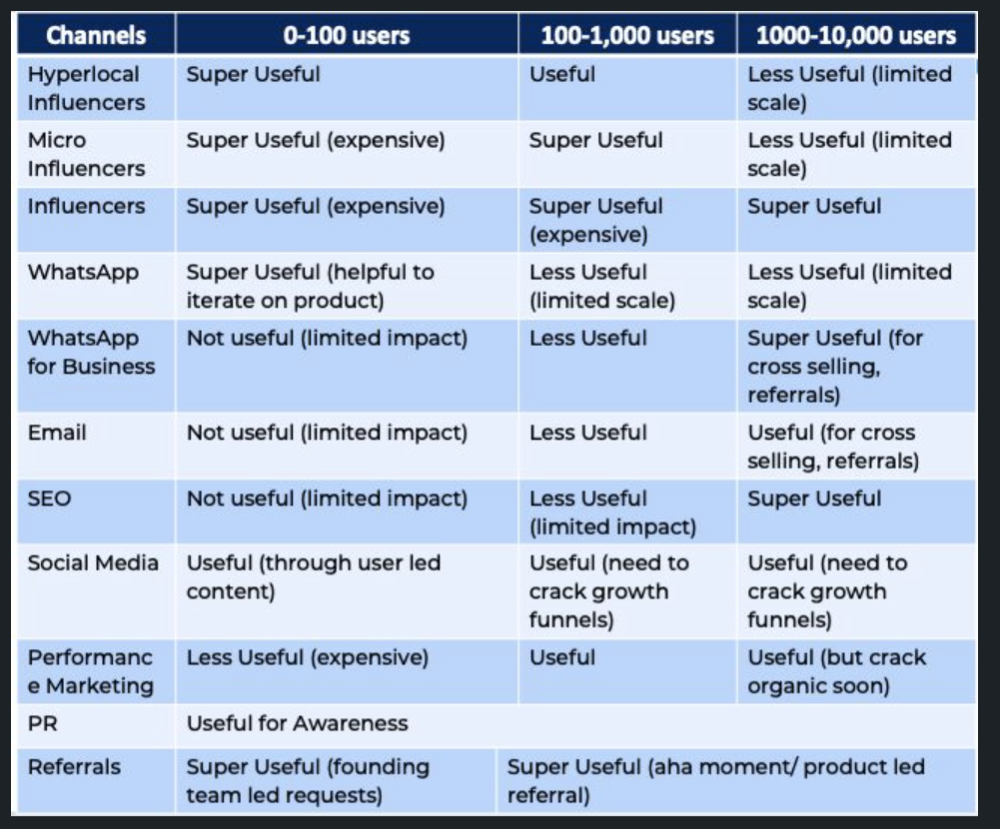

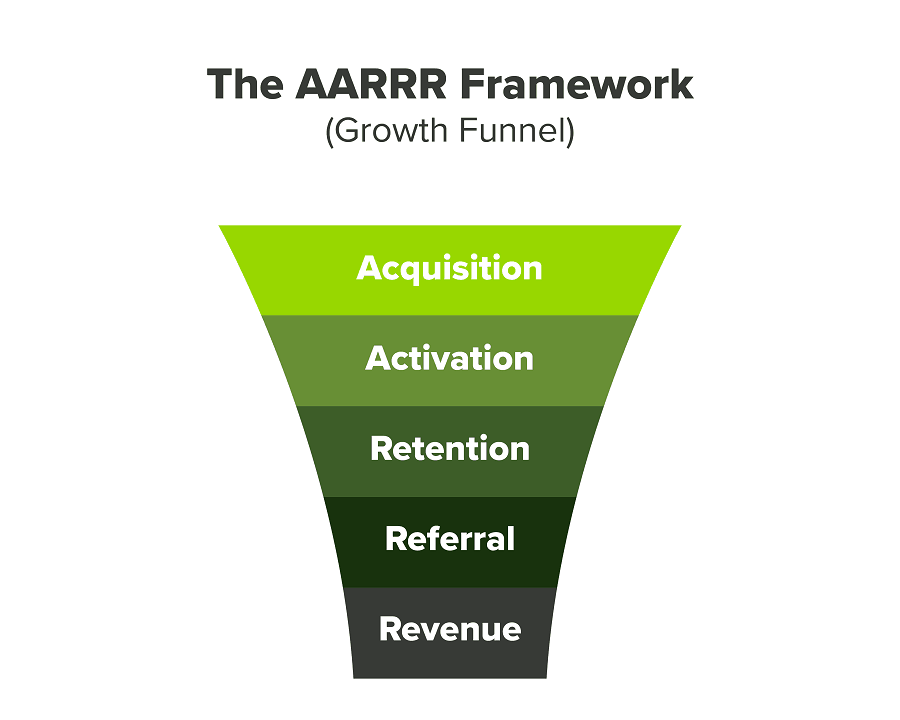

We had many problems scaling. I'll explain using the AARRR model.

Awareness Issue: Brand unfamiliarity and a novel product offering. Nobody knew what Freedo was or what it did.

Awareness Issue (messaging): Our content and ads did a poor job of communicating the task at hand. The ads were too cheesy and clashed with the white-collar segment.

Retention Issue: The product wasn't good enough yet. We had problems with keyless entry, bill generation, stolen helmets, and so on.

Retention/Revenue Issue: We were expensive compared to established rivals. Shared cars cost a third of what we charged.

Referral Issue: We missed the opportunity to capture the aha moment. After the ride, nobody remembered us.

Once you know where you're struggling with AARRR, iterative solutions are usually best.
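One way to find where you are struggling is to compute the stage-to-stage conversion through the AARRR funnel (Acquisition, Activation, Retention, Revenue, Referral) and look for the weakest step. The counts below are made-up placeholders, not Freedo's data, and the stages are ordered here so each count is a subset of the one before; teams order them differently.

```python
# Hypothetical monthly counts per AARRR stage (placeholders, not real data).
funnel = [
    ("Acquisition", 10_000),  # visited the site or installed the app
    ("Activation", 2_500),    # completed a first ride
    ("Retention", 1_000),     # rode again within 30 days
    ("Revenue", 600),         # paying repeat users
    ("Referral", 90),         # paying users who invited someone
]

def step_conversions(stages: list[tuple[str, int]]) -> dict[str, float]:
    # Conversion rate from each stage to the next one.
    return {f"{a}->{b}": n_b / n_a
            for (a, n_a), (b, n_b) in zip(stages, stages[1:])}

rates = step_conversions(funnel)
for step, rate in rates.items():
    print(f"{step}: {rate:.0%}")
weakest = min(rates, key=rates.get)
print("weakest step:", weakest)  # weakest step: Revenue->Referral
```

With these placeholder numbers, referral is where to iterate first, which mirrors the "after the ride, nobody remembered us" problem above.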

Once you have nailed the AARRR model, most startups use paid channels to scale. This dependence on paid channels increases with scale unless you crack your organic/inbound game.

Over-index on growth loops. Growth loops increase inflow and customers as you scale.
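Why growth loops beat paid-only acquisition: in a loop, each month's new customers bring in part of the next month's, so inflow grows with the customer base. A toy simulation with hypothetical numbers (1,000 paid sign-ups a month, each new customer referring 0.3 more the following month):

```python
def simulate(months: int, paid_per_month: int, referrals_per_customer: float) -> int:
    # Total customers after `months`. Each month's new customers each refer
    # `referrals_per_customer` new customers in the following month.
    total, new_last_month = 0, 0
    for _ in range(months):
        new = paid_per_month + round(new_last_month * referrals_per_customer)
        total += new
        new_last_month = new
    return total

print(simulate(12, 1_000, 0.0))  # paid only: 12000
print(simulate(12, 1_000, 0.3))  # paid plus a referral loop
```

With a referral rate below 1, monthly inflow rises to a higher plateau (here about 1,000 / (1 - 0.3), roughly 1,430 a month); above 1, it compounds.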

When considering growth, ask yourself:

Who is the solution's ICP (Ideal Customer Profile)? (To whom are you selling)

What are the most important messages I should convey to customers? (This is an A/B test.)

Which marketing channels should I prioritize? (Conduct analysis based on the startup's maturity/stage.)

Choose the important metrics to monitor for your AARRR funnel (not all metrics are equal)

Identify the Flywheel effect's growth loops (inertia matters)
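The messaging question above is framed as an A/B test. One common way to read such a test is a two-proportion z-test on conversion rates; this is a standard statistical technique, not necessarily what Freedo used. A minimal standard-library sketch with hypothetical visitor and booking counts:

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    # Returns (z, two-sided p-value) for H0: both messages convert at the same rate.
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF via erf: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    phi = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - phi)

# Hypothetical: message A got 120 bookings from 2,000 visitors; message B got 165.
z, p = two_proportion_z_test(120, 2_000, 165, 2_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (conventionally below 0.05) suggests the difference between messages is unlikely to be noise; with small samples, let the test run longer before picking a winner.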

My biggest mistakes:

Not paying attention to customer feedback or satisfaction. This is the main cause of referral, retention, and acquisition problems for startups. Beyond your NPS, you should consider second-order consequences.

The tasks at hand should be quite clear.

Here's my scaling equation:

Growth = A x B x C

A = Funnel top (Traffic)

B = Product Valuation (Solving a real pain point)

C = Aha! (Emotional response)
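Because the equation multiplies the three factors, a weak factor drags down the whole product, and a zero in any one of them means no growth no matter how strong the others are. A toy illustration, with each factor scored on a hypothetical 0 to 1 scale (the scale is an assumption, not part of the original equation):

```python
def growth(traffic: float, product_value: float, aha: float) -> float:
    # Growth = A x B x C, each factor scored 0..1 (assumed scale).
    return traffic * product_value * aha

print(growth(0.9, 0.8, 0.0))           # strong traffic and product, no aha moment: 0.0
print(growth(0.5, 0.5, 0.5))           # balanced: 0.125
print(f"{growth(0.9, 0.9, 0.1):.3f}")  # lopsided: 0.081
```

Note that the lopsided case has a higher sum of scores (1.9 vs 1.5) but lower growth than the balanced one.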

Freedo's A, B, and C created a unique offering.

Freedo’s ABC:

A — Working or Studying population in NCR

B — Electric Vehicles provide last-mile mobility as a clean and affordable solution

C — One-click booking with a noiseless scooter

Final outcome:

We scaled Freedo to Rs. 50 lakh MRR and were growing 60% month on month until the pandemic halted our growth.
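Month-on-month growth compounds, which is why 60% a month is so striking. A quick calculation (the 60% rate is the article's; the horizons are illustrative):

```python
def compound(start: float, monthly_rate: float, months: int) -> float:
    # Value after `months` of growth at `monthly_rate` (0.60 = 60% per month).
    return start * (1 + monthly_rate) ** months

for months in (3, 6, 12):
    print(f"{months} months: {compound(1, 0.60, months):.1f}x")
# 3 months: 4.1x
# 6 months: 16.8x
# 12 months: 281.5x
```

At 60% a month, revenue roughly doubles every month and a half.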

How we did it?

We tried ambassadors and coupons. WhatsApp was our most successful A/B test.

We drove widespread adoption through college and residential-society WhatsApp groups, and asked users for referrals in those community groups.

What worked for us won't necessarily work for others, and our approach went through many revisions.

Every company is different, so you must know your customers. That knowledge determines which channel to prioritize and when.

Users desired a safe, time-bound means to get there.

This (not mine) growth framework helped me a lot. You should follow suit.