More on Marketing

Victoria Kurichenko

3 years ago

My Blog Is in Google's Top 10—Here's How to Compete

"Competition" is beautiful and hateful.

Some people bury their dreams because they are afraid of competition. Others challenge themselves, shaping our world.

Competition is normal.

It spurs innovation and progress.

I wish more people agreed.

As a marketer, content writer, and solopreneur, my readers often ask:

"I want to create a niche website, but I have no ideas. Everything's done"

"Is a website worthwhile?"

I can't count how many times I said, "Yes, it makes sense, and you can succeed in a competitive market."

I encourage and share examples, but it's not enough to overcome competition anxiety.

I launched an SEO writing website for content creators a year ago, knowing it wouldn't beat Ahrefs, Semrush, Backlinko, etc.

Not needed.

Many of my website's pages rank highly on Google.

Everyone can eat the pie.

In a competitive niche, I took a different approach.

Look farther

When chatting with bloggers that want a website, I discovered something fascinating.

They want to launch a website but have no ideas. As a next step, they start listing the interests they believe they should work on, like wellness, lifestyle, investments, etc. I could keep going.

Too many generalists who claim to know everything confuse many.

Generalists aren't trusted.

We want someone to fix our problems immediately.

I don't think broad-spectrum experts are undervalued. People have many demands that go beyond generalists' work. Narrow-niche experts can help.

I've done SEO for three years. I learned from experts and courses. I couldn't find a comprehensive SEO writing resource.

I read tons of articles before realizing that wasn't it. I took courses that covered SEO basics eventually.

I had a demand for learning SEO writing, but there was no solution on the market. My website fills this micro-niche.

Have you ever had trouble online?

Professional courses too general, boring, etc.?

You've bought off-topic books, right?

You're not alone.

Niche ideas!

Big players often disregard new opportunities. Too small. Individual content creators can succeed here.

In a competitive market:

Never choose wide subjects

Think about issues you can relate to and have direct experience with.

Be a consumer to discover both the positive and negative aspects of a good or service.

Merchandise your annoyances.

Consider ways to transform your frustrations into opportunities.

The right niche is half-success. Here is what else I did to hit the Google front page with my website.

An innovative method for choosing subjects

Why publish on social media and websites?

Want likes, shares, followers, or fame?

Some people do it for fun. No judgment.

I bet you want more.

You want to make decent money from blogging.

Writing about random topics, even if they are related to your niche, won’t help you attract an audience from organic search. I'm a marketer and writer.

I worked at companies with dead blogs because they posted for themselves, not readers. They did not follow SEO writing rules; that’s why most of their content flopped.

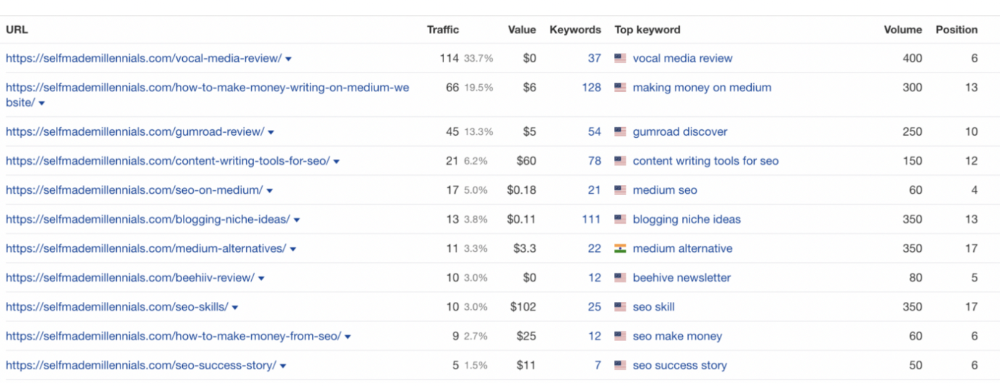

I learned these hard lessons and grew my website from 0 to 3,000+ visitors per month while working on it a few hours a week only. Evidence:

I choose website topics using these criteria:

- Business potential. The information should benefit my audience and generate revenue. There would be no use in having it otherwise.

My topics should help me:

Attract organic search traffic with my "fluff-free" content -> Subscribers > SEO ebook sales.

Simple and effective.

- traffic on search engines. The number of monthly searches reveals how popular my topic is all across the world. If I find that no one is interested in my suggested topic, I don't write a blog article.

- Competition. Every search term is up against rivals. Some are more popular (thus competitive) since more websites target them in organic search. A new website won't score highly for keywords that are too competitive. On the other side, keywords with moderate to light competition can help you rank higher on Google more quickly.

- Search purpose. The "why" underlying users' search requests is revealed. I analyze search intent to understand what users need when they plug various queries in the search bar and what content can perfectly meet their needs.

My specialty website produces money, ranks well, and attracts the target audience because I handpick high-traffic themes.

Following these guidelines, even a new website can stand out.

I wrote a 50-page SEO writing guide where I detailed topic selection and share my front-page Google strategy.

My guide can help you run a successful niche website.

In summary

You're not late to the niche-website party.

The Internet offers many untapped opportunities.

We need new solutions and are willing to listen.

There are unexplored niches in any topic.

Don't fight giants. They have their piece of the pie. They might overlook new opportunities while trying to keep that piece of the pie. You should act now.

Victoria Kurichenko

3 years ago

What Happened After I Posted an AI-Generated Post on My Website

This could cost you.

Content creators may have heard about Google's "Helpful content upgrade."

This change is another Google effort to remove low-quality, repetitive, and AI-generated content.

Why should content creators care?

Because too much content manipulates search results.

My experience includes the following.

Website admins seek high-quality guest posts from me. They send me AI-generated text after I say "yes." My readers are irrelevant. Backlinks are needed.

Companies copy high-ranking content to boost their Google rankings. Unfortunately, it's common.

What does this content offer?

Nothing.

Despite Google's updates and efforts to clean search results, webmasters create manipulative content.

As a marketer, I knew about AI-powered content generation tools. However, I've never tried them.

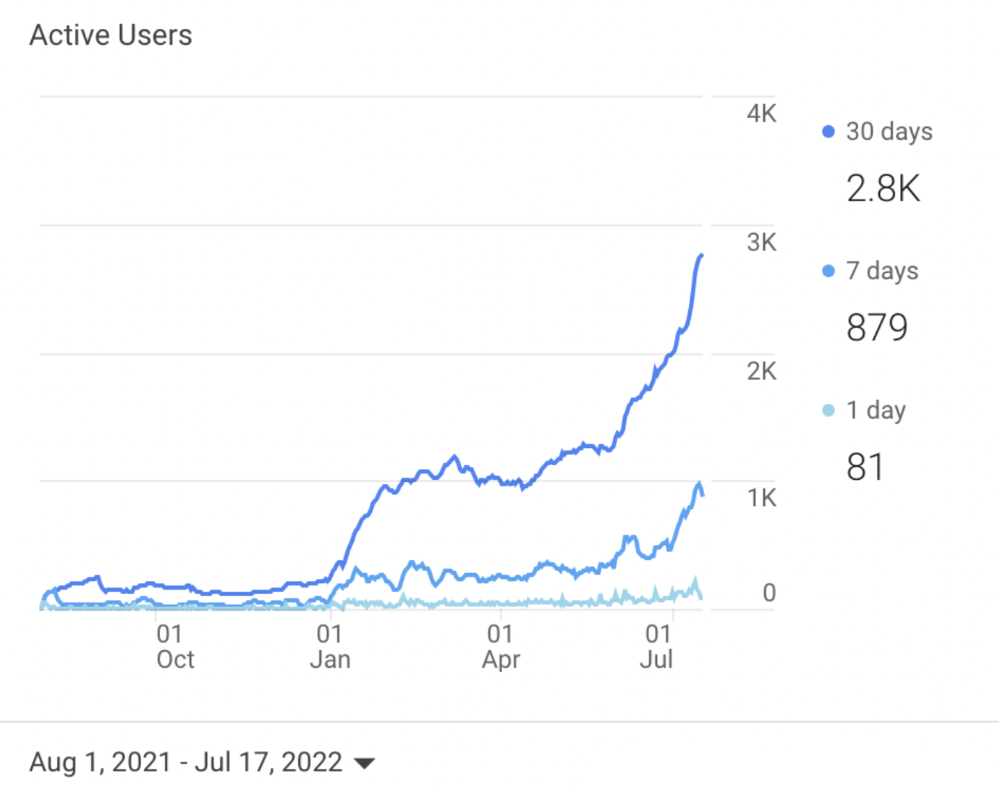

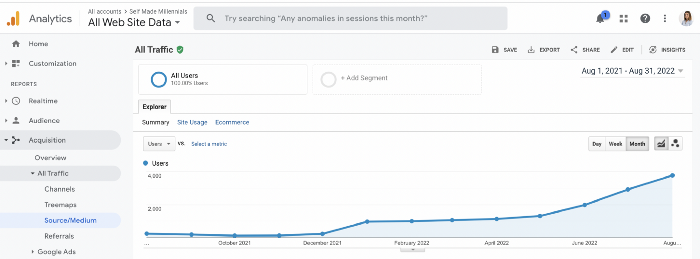

I use old-fashioned content creation methods to grow my website from 0 to 3,000 monthly views in one year.

Last year, I launched a niche website.

I do keyword research, analyze search intent and competitors' content, write an article, proofread it, and then optimize it.

This strategy is time-consuming.

But it yields results!

Here's proof from Google Analytics:

Proven strategies yield promising results.

To validate my assumptions and find new strategies, I run many experiments.

I tested an AI-powered content generator.

I used a tool to write this Google-optimized article about SEO for startups.

I wanted to analyze AI-generated content's Google performance.

Here are the outcomes of my test.

First, quality.

I dislike "meh" content. I expect articles to answer my questions. If not, I've wasted my time.

My essays usually include research, personal anecdotes, and what I accomplished and achieved.

AI-generated articles aren't as good because they lack individuality.

Read my AI-generated article about startup SEO to see what I mean.

It's dry and shallow, IMO.

It seems robotic.

I'd use quotes and personal experience to show how SEO for startups is different.

My article paraphrases top-ranked articles on a certain topic.

It's readable but useless. Similar articles abound online. Why read it?

AI-generated content is low-quality.

Let me show you how this content ranks on Google.

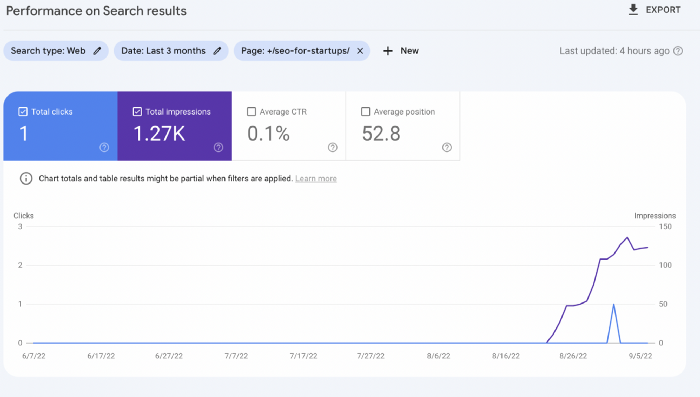

The Google Search Console report shows impressions, clicks, and average position.

Low numbers.

No one opens the 5th Google search result page to read the article. Too far!

You may say the new article will improve.

Marketing-wise, I doubt it.

This article is shorter and less comprehensive than top-ranking pages. It's unlikely to win because of this.

AI-generated content's terrible reality.

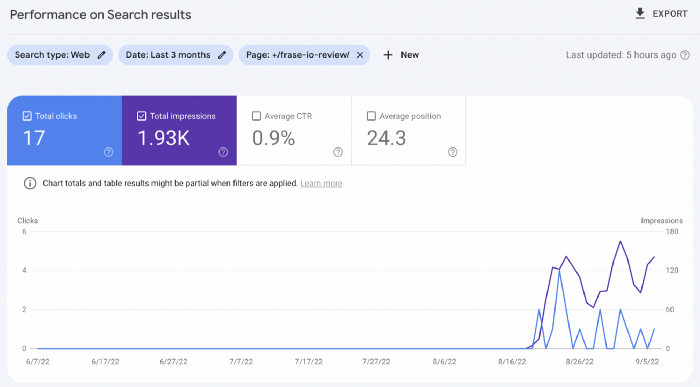

I'll compare how this content I wrote for readers and SEO performs.

Both the AI and my article are fresh, but trends are emerging.

My article's CTR and average position are higher.

I spent a week researching and producing that piece, unlike AI-generated content. My expert perspective and unique consequences make it interesting to read.

Human-made.

In summary

No content generator can duplicate a human's tone, writing style, or creativity. Artificial content is always inferior.

Not "bad," but inferior.

Demand for content production tools will rise despite Google's efforts to eradicate thin content.

Most won't spend hours producing link-building articles. Costly.

As guest and sponsored posts, artificial content will thrive.

Before accepting a new arrangement, content creators and website owners should consider this.

Jano le Roux

3 years ago

Here's What I Learned After 30 Days Analyzing Apple's Microcopy

Move people with tiny words.

Apple fanboy here.

Macs are awesome.

Their iPhones rock.

$19 cloths are great.

$999 stands are amazing.

I love Apple's microcopy even more.

It's like the marketing goddess bit into the Apple logo and blessed the world with microcopy.

I took on a 30-day micro-stalking mission.

Every time I caught myself wasting time on YouTube, I had to visit Apple’s website to learn the secrets of the marketing goddess herself.

We've learned. Golden apples are calling.

Cut the friction

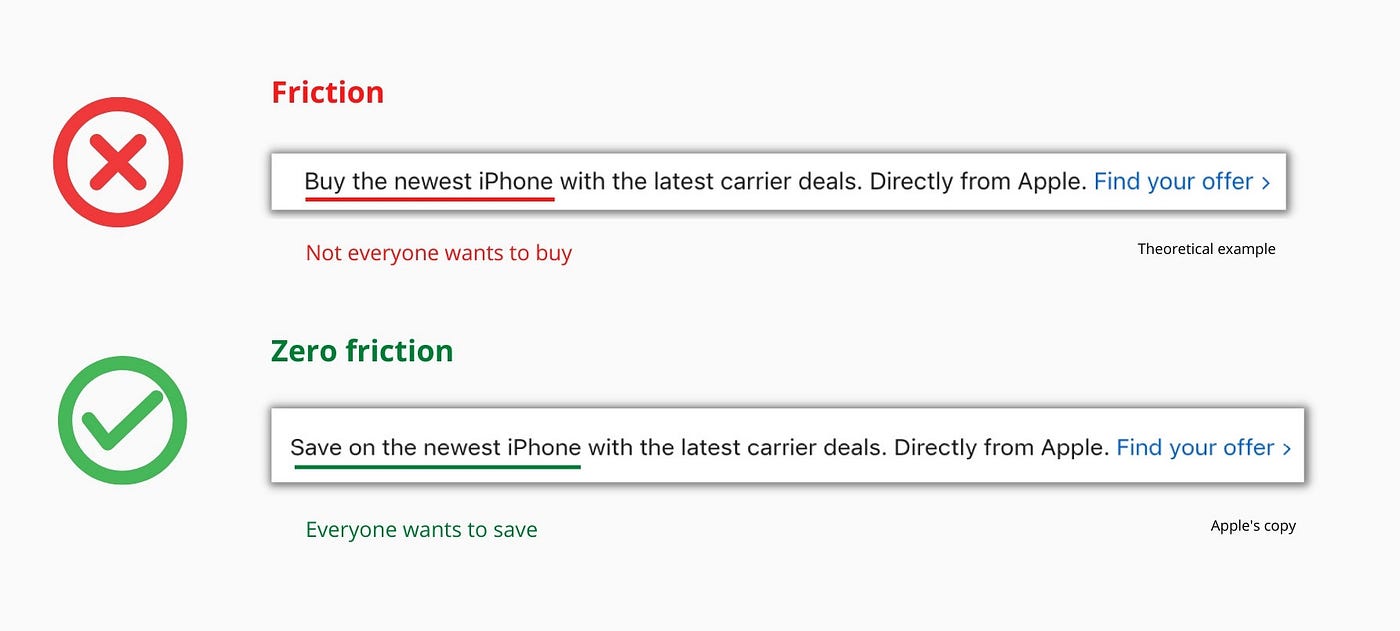

Benefit-first, not commitment-first.

Brands lose customers through friction.

Most brands don't think like customers.

Brands want sales.

Brands want newsletter signups.

Here's their microcopy:

“Buy it now.”

“Sign up for our newsletter.”

Both are difficult. They ask for big commitments.

People are simple creatures. Want pleasure without commitment.

Apple nails this.

So, instead of highlighting the commitment, they highlight the benefit of the commitment.

Saving on the latest iPhone sounds easier than buying it. Everyone saves, but not everyone buys.

A subtle change in framing reduces friction.

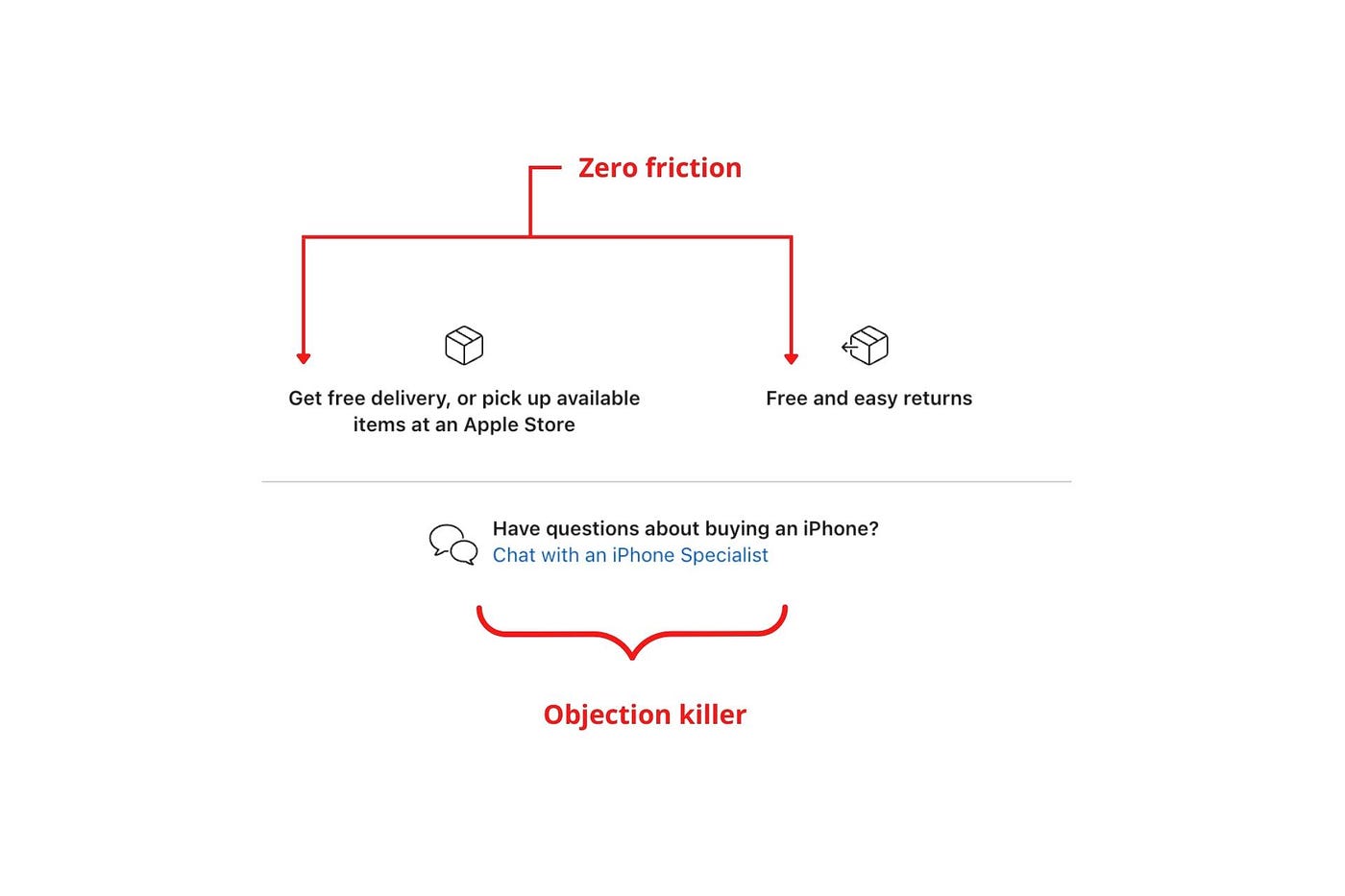

Apple eliminates customer objections to reduce friction.

Less customer friction means simpler processes.

Apple's copy expertly reassures customers about shipping fees and not being home. Apple assures customers that returning faulty products is easy.

Apple knows that talking to a real person is the best way to reduce friction and improve their copy.

Always rhyme

Learn about fine rhyme.

Poets make things beautiful with rhyme.

Copywriters use rhyme to stand out.

Apple’s copywriters have mastered the art of corporate rhyme.

Two techniques are used.

1. Perfect rhyme

Here, rhymes are identical.

2. Imperfect rhyme

Here, rhyming sounds vary.

Apple prioritizes meaning over rhyme.

Apple never forces rhymes that don't fit.

It fits so well that the copy seems accidental.

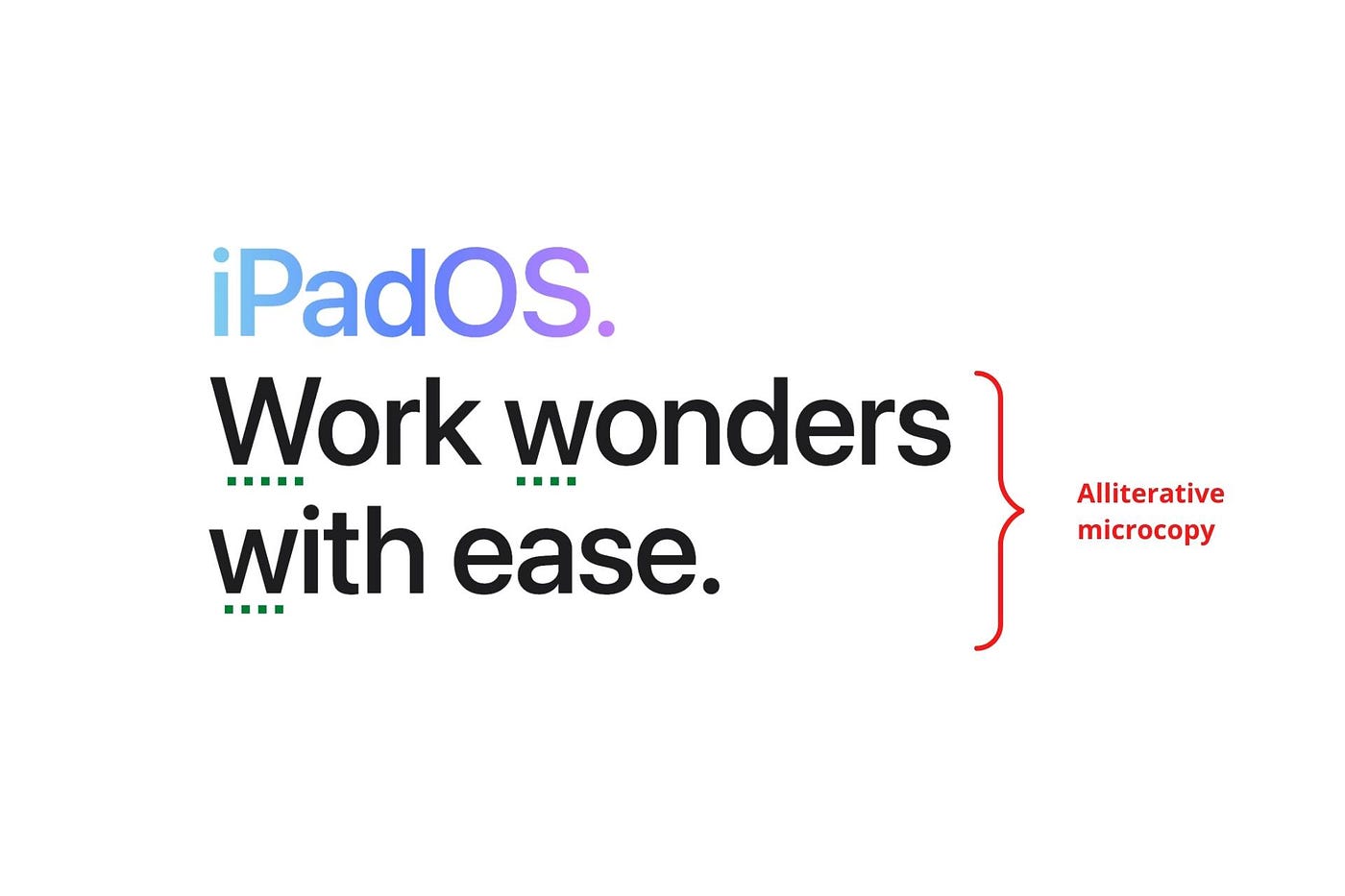

Add alliteration

Alliteration always entertains.

Alliteration repeats initial sounds in nearby words.

Apple's copy uses alliteration like no other brand I've seen to create a rhyming effect or make the text more fun to read.

For example, in the sentence "Sam saw seven swans swimming," the initial "s" sound is repeated five times. This creates a pleasing rhythm.

Microcopy overuse is like pouring ketchup on a Michelin-star meal.

Alliteration creates a memorable phrase in copywriting. It's subtler than rhyme, and most people wouldn't notice; it simply resonates.

I love how Apple uses alliteration and contrast between "wonders" and "ease".

Assonance, or repeating vowels, isn't Apple's thing.

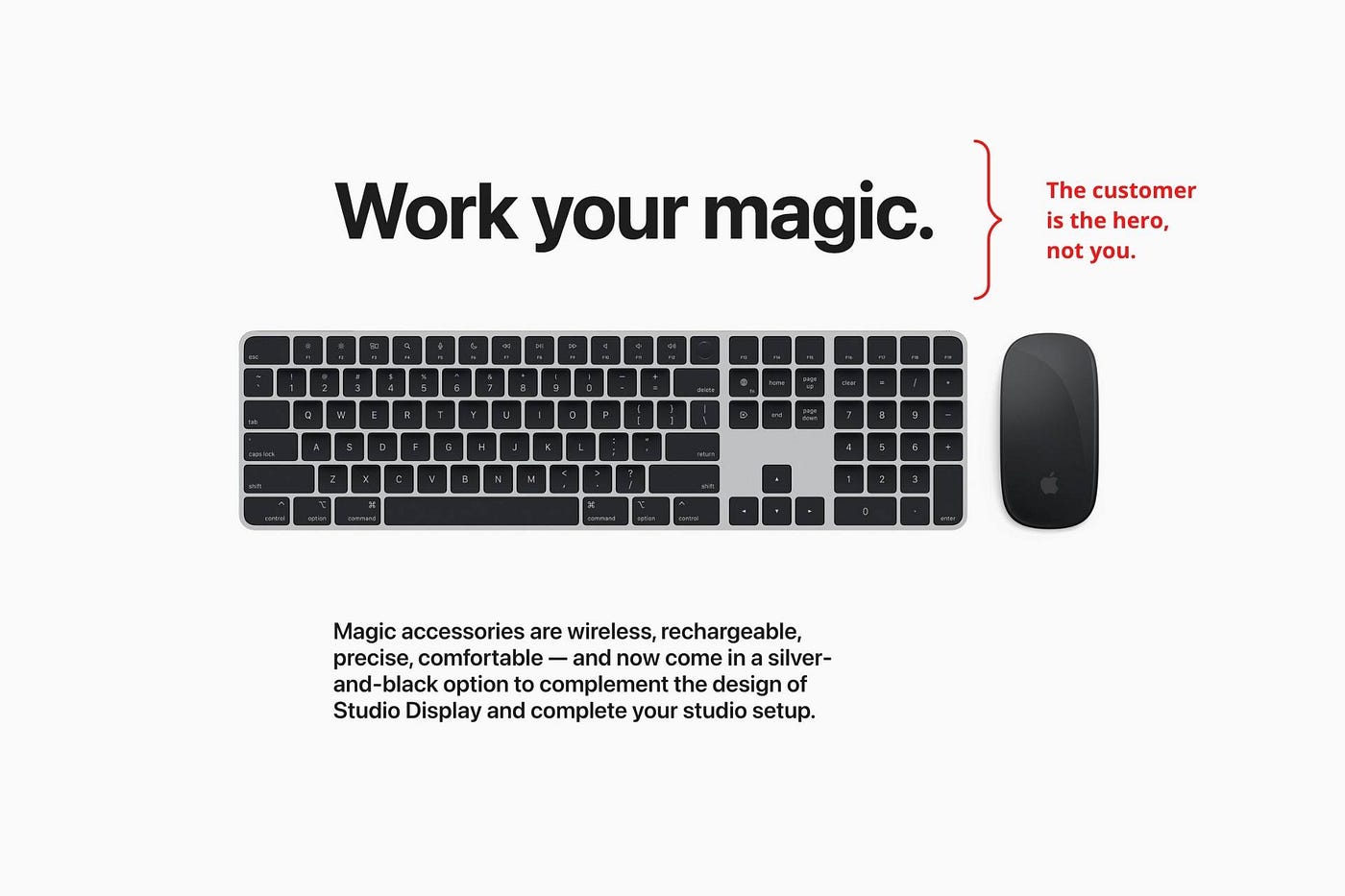

You ≠ Hero, Customer = Hero

Your brand shouldn't be the hero.

Because they'll be using your product or service, your customer should be the hero of your copywriting. With your help, they should feel like they can achieve their goals.

I love how Apple emphasizes what you can do with the machine in this microcopy.

It's divine how they position their tools as sidekicks to help below.

This one takes the cake:

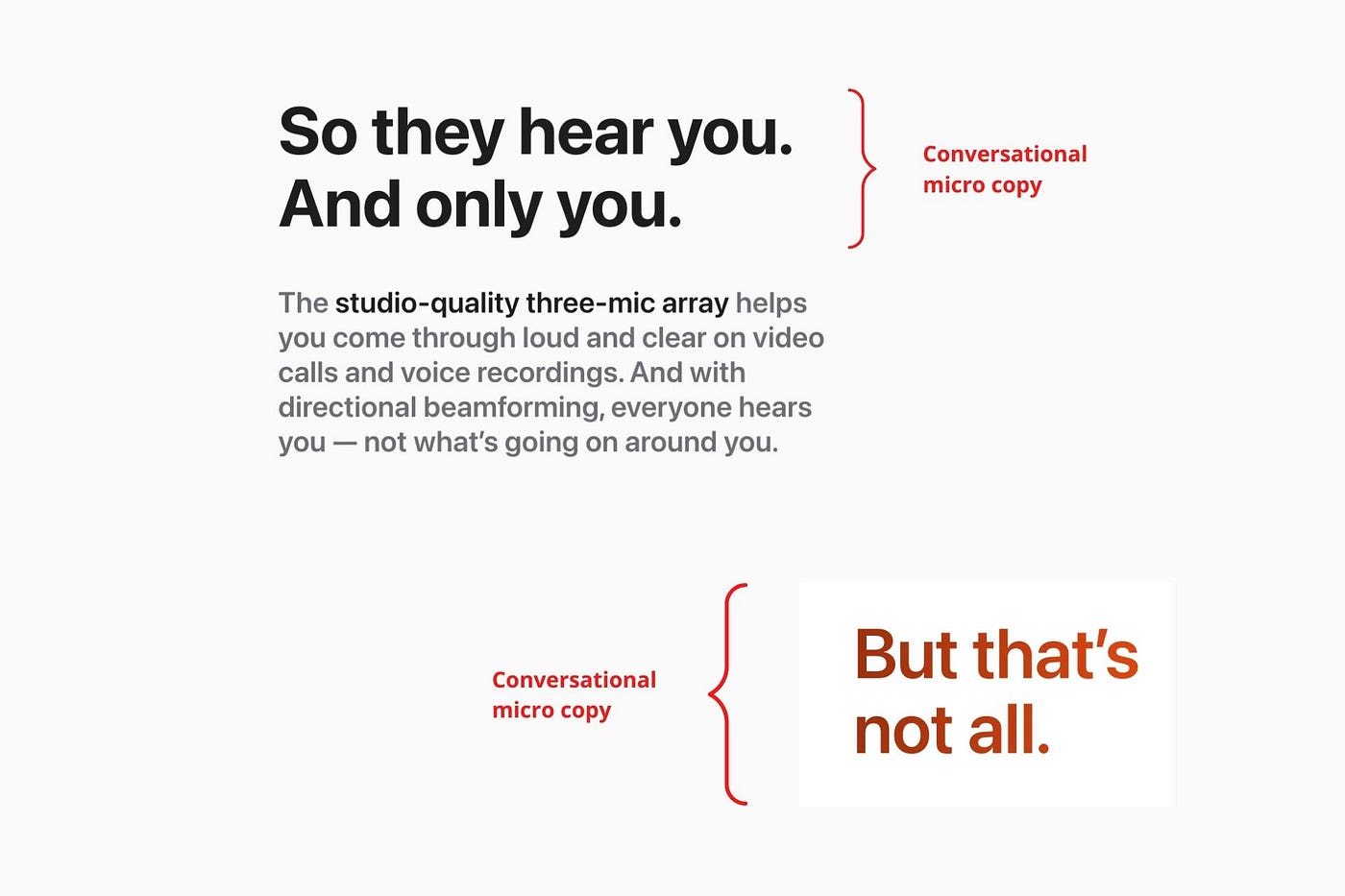

Dialogue-style writing

Conversational copy engages.

Excellent copy Like sharing gum with a friend.

This helps build audience trust.

Apple does this by using natural connecting words like "so" and phrases like "But that's not all."

Snowclone-proof

The mother of all microcopy techniques.

A snowclone uses an existing phrase or sentence to create a new one. The new phrase or sentence uses the same structure but different words.

It’s usually a well know saying like:

To be or not to be.

This becomes a formula:

To _ or not to _.

Copywriters fill in the blanks with cause-related words. Example:

To click or not to click.

Apple turns "survival of the fittest" into "arrival of the fittest."

It's unexpected and surprises the reader.

So this was fun.

But my fun has just begun.

Microcopy is 21st-century poetry.

I came as an Apple fanboy.

I leave as an Apple fanatic.

Now I’m off to find an apple tree.

Cause you know how it goes.

(Apples, trees, etc.)

This post is a summary. Original post available here.

You might also like

INTΞGRITY team

3 years ago

Terms of Service

Effective: August 31, 2022

These Terms of Service ("Terms") govern your access to and use of INTΞGRITY’s (or "we") websites, mobile applications, and other online products and services (collectively, the "Services"). By clicking your assent (e.g. "Continue," "Sign-in," or "Sign-up") or by utilizing our Services, you consent to these Terms, including the mandatory arbitration provision and class action waiver in the Resolving Disputes; Binding Arbitration Section.

Our Privacy Policy describes how we gather and utilize your information, while our Rules detail your duties when utilizing our Services. You agree to be bound by these Terms and our Rules by utilizing our Services. Please refer to our Privacy Statement for details on how we collect, utilize, disclose, and otherwise manage your information.

Please contact us at hello@int3grity.com if you have any queries regarding these Terms or our Services.

Account Details and Responsibilities

You are responsible for your use of the Services and any content you contribute, including compliance with all relevant laws. The Services may host content that is protected by the intellectual property rights of third parties. Please do not copy, post, download, or distribute content without permission.

You must adhere to our Rules when using the Services.

To use any or all of our services, you may need to register for an account. Contribute to the protection of your account. Protect your account's password, and maintain accurate account details. We advise you not to share your password with anyone else.

If you are accepting these Terms and using the Services on behalf of someone else (such as another person or entity), you confirm that you are allowed to do so, and the words "you" or "your" in these Terms refer to that other person or entity.

You must be at least 13 years old to access our services.

If you use the Services to access, collect, or otherwise utilize the personal information of other INTΞGRITY users ("Personal Information"), you agree to comply with all applicable laws. You also undertake not to sell any Personal Information, where "sell" has the meaning ascribed to it by relevant legislation.

For Personal Information you provide to us (as a Newsletter Editor, for example), you represent and warrant that you have lawfully collected the Personal Information and that you or a third party have provided all required notices and obtained all required consents prior to collecting the Personal Information. You further represent and warrant that INTΞGRITY’s use of such Personal Information in accordance with the purposes for which you provided the Personal Information will not violate, misappropriate, or infringe any rights of a third party (including intellectual property rights or privacy rights) or cause us to violate any applicable laws.

The Services' User Content

INTΞGRITY may monitor your conduct and material for compliance with these Terms and our Rules, and reserves the right to remove any content that violates these guidelines.

INTΞGRITY maintains the right to remove or disable content that is accused to violate the intellectual property rights of others, as well as to cancel the accounts of repeat infringers. We respond to notifications of alleged copyright violations if they comply with the law; please report such notices using our Copyright Policy.

Ownership and Rights

You maintain ownership of all content that you submit, upload, or display on or through the Services.

By submitting, posting, or displaying content on or through the Services, unless otherwise agreed in writing, you grant INTΞGRITY a nonexclusive, royalty-free, worldwide, fully paid, and sublicensable license to use, reproduce, modify, adapt, publish, translate, create derivative works from, distribute, publicly perform and display your content and any name, username or likeness provided in connection with your content in all media formats and distribution methods now known or later developed.

INTΞGRITY requires this license because you are the owner of your material, and INTΞGRITY cannot show it across its multiple platforms (mobile, online) without your consent.

This type of license is also required for content distribution throughout our Services. For example, you may publish a piece on INTΞGRITY. It is duplicated as versions on both our website and app, and distributed to many locations on INTΞGRITY, including the homepage and reading lists. A tweak could be that we display a fragment of your work as a preview (rather than the entire post), with attribution. An example of a derivative work might be a list of top authors or quotations on INTΞGRITY that includes chunks of your article, again with full attribution. This license solely applies to our Services and does not grant us permissions outside of our Services.

So long as you comply with these Terms, INTΞGRITY grants you a limited, non-exclusive, personal, and non-transferable license to access and utilize our Services.

Copyright, trademark, and other United States and international laws protect the Services. These Terms do not grant you any right, title, or interest in the Services, the material posted by other users on the Services, or INTΞGRITY’s trademarks, logos, or other brand characteristics.

In addition to the content you submit, post, or display on our Services, we appreciate your feedback, which may include your thoughts, ideas, and suggestions regarding our Services. This input may be used for any reason at our sole discretion and without obligation to you. We may treat your comments as non-confidential.

We reserve the right, at our sole discretion, to discontinue the Services or any of its features. In addition, we reserve the right to impose limits on use and storage, and to remove or restrict the distribution of content on the Services.

Termination

You are allowed to terminate your use of our services at any time. We have the right to stop or cancel your use of the Services with or without notice.

Moving and Processing Information

To enable us to deliver our Services, you accept that we may handle, transfer, and retain information about you in the United States and other countries, where you may not enjoy the same rights and protections as you do under local law.

Indemnification

To the maximum extent permitted by applicable law, you will indemnify, defend, and hold harmless INTΞGRITY, and our officers, directors, agents, partners, and employees (collectively, the "INTΞGRITY Parties"), from and against any losses, liabilities, claims, demands, damages, expenses or costs ("Claims") arising out of or relating to your violation, misappropriation, or infringement of any rights of another (including intellectual property rights or privacy rights). You undertake to promptly notify INTΞGRITY Parties of any third-party Claims, to assist INTΞGRITY Parties in fighting such Claims, and to pay any fees, charges, and expenses connected with defending such Claims (including attorneys' fees). You further agree that, at INTΞGRITY’s sole discretion, the INTΞGRITY Parties will govern the defense or settlement of any third-party Claims.

Disclaimers — Services Provided "As Is"

INTΞGRITY strives to provide you with excellent Services, but there are certain things we cannot guarantee. Utilization of our services is at your own risk. You acknowledge that our Services and any content uploaded or shared by users on the Services are given "as is" and "as available" without explicit or implied warranties of any kind, including warranties of merchantability, fitness for a particular purpose, title, and non-infringement. In addition, INTΞGRITY does not represent or promise that our Services are accurate, comprehensive, dependable, up-to-date, or error-free. No advice or information gained from INTΞGRITY or via the Services shall create any warranty or representation unless expressly set forth in this section. INTΞGRITY may provide information on third-party products, services, activities, or events, or we may permit third parties to make their material and information accessible via our Services (collectively, "Third-Party Content"). We neither control nor endorse any Third-Party Content, nor do we make any claims or warranties about it. Accessing and utilizing Third-Party Content is at your own risk. The disclaimers in this section may not apply to you if they are prohibited in your location.

Limitation of Liability

We do not exclude or limit our obligation to you where it would be unlawful to do so; this includes any liability for the gross negligence, fraud, or willful misconduct of INTΞGRITY or the other INTΞGRITY Parties in providing the Services. In jurisdictions where the foregoing exclusions are not permitted, our liability to you is limited to losses and damages that are reasonably foreseeable as a result of our failure to exercise reasonable care and skill or breach of contract with you. This paragraph does not impact consumer rights that cannot be waived or limited by contract.

In jurisdictions that permit liability exclusions or limits, INTΞGRITY and INTΞGRITY Parties will not be liable for:

(a) Any indirect, consequential, exemplary, incidental, punitive, or extraordinary damages, or any loss of use, data, or profits, based on any legal theory, even if INTΞGRITY or the other INTΞGRITY Parties were advised of the potential of such damages.

(b) Except for the types of liability we cannot limit by law (as described in this section), we limit the total liability of INTΞGRITY and the other INTΞGRITY Parties for any claim arising out of or related to these Terms or our Services, regardless of the form of action, to $100.00 USD.

Arbitration; Resolution of Disputes

We intend to address your concerns without filing a formal lawsuit. Before making a claim against INTΞGRITY, you agree to contact us and attempt to resolve the dispute informally by emailing hello@int3grity.com or by sending certified mail to INTΞGRITY, P.O. JOY, 479 Jessie St, San Francisco, CA 94103. The notice must (a) contain your name, address, email address, and telephone number; (b) identify the nature and grounds of the claim; and (c) detail the relief requested. Our notice to you will be sent to the email address linked with your online account and will contain the information specified in the preceding section. Any party may commence a formal procedure if we are unable to reach a resolution within thirty (30) days of the date of any notice.

Please read the following section carefully because it compels you to arbitrate certain claims and disputes with INTΞGRITY and limits the method in which you can seek redress from us, unless you opt out of arbitration by following the steps provided below. This arbitration provision does not permit class or representative lawsuits or arbitrations. In addition, arbitration prohibits you from filing a lawsuit or having a jury trial.

(a) Absence of Representative Actions You and INTΞGRITY agree that any dispute arising out of or relating to these Terms or our Services is personal to you and INTΞGRITY and will be resolved entirely via individual action, and not by class arbitration, class action, or other representative procedure.

(b) Dispute Arbitration. Except for small claims disputes in which you or INTΞGRITY seeks to bring an individual action in small claims court located in the county where you reside and disputes in which you or INTΞGRITY seeks injunctive or other equitable relief for the alleged infringement or misappropriation of intellectual property, you and INTΞGRITY waive your rights to a jury trial and to have any other dispute arising out of or relating to these Terms or our Services, including claims related to privity of contract, decided by a jury. All Disputes submitted to JAMS shall be decided by confidential, binding arbitration before a single arbitrator. If you are a consumer, you may choose to have the arbitration in your county of residence. A "consumer" is a person who uses the Services for personal, family, or household purposes for the purposes of this provision. You and INTΞGRITY agree that Disputes shall be resolved using the JAMS Streamlined Arbitration Rules and Procedures ("JAMS Rules"). The latest version of the JAMS Rules is accessible on the JAMS website and is incorporated herein by reference. Either you accept and agree that you have read and comprehended the JAMS Rules or you forfeit your right to read the JAMS Rules and any claim that the JAMS Rules are unreasonable or should not apply for any reason.

(c) You and INTΞGRITY agree that these Terms affect interstate commerce and that the enforceability of this provision is subject to the Federal Arbitration Act, 9 U.S.C. 1 et seq. (the "FAA"), to the maximum extent permissible by applicable law. As limited by the FAA, these Terms, and the JAMS Rules, the arbitrator will have sole authority to make all procedural and substantive judgments regarding any Dispute, and to grant any remedy that would otherwise be available in court, including the authority to determine arbitrability. The arbitrator may only conduct an individual arbitration and may not consolidate the claims of more than one party, preside over any sort of class or representative procedure, or preside over any proceeding involving more than one party.

d) The arbitration will permit the discovery or exchange of nonconfidential information pertinent to the Dispute. The arbitrator, INTΞGRITY, and you will maintain the confidentiality of all arbitration proceedings, judgments, and awards, as well as any information gathered, prepared, or presented for the purposes of the arbitration or relating to the Dispute(s) therein. Unless the law specifies otherwise, the arbitrator will have the right to make decisions that protect confidentiality. The duty of confidentiality does not apply where disclosure is required to prepare for or conduct the arbitration hearing on the merits, in connection with a court application for a preliminary remedy, in connection with a judicial challenge to an arbitration award or its enforcement, or where disclosure is otherwise required by law or judicial decision.

e) You and INTΞGRITY agree that for any arbitration you begin, you will pay the filing fee (up to $250 if you are a consumer) and INTΞGRITY will pay the remaining JAMS fees and costs. INTΞGRITY will pay all JAMS fees and costs for any and all arbitrations it initiates. You and INTΞGRITY agree that the state and federal courts of California and the United States located in San Francisco have exclusive jurisdiction over any appeals and the implementation of an arbitration award.

(f) Any Dispute must be filed within one year after the relevant claim arose; otherwise, the Dispute is permanently barred, meaning that neither you nor INTΞGRITY will be able to assert the claim.

(g) You have the right to opt-out of binding arbitration within 30 days of the date you initially accepted the terms of this section by sending an email to hello@int3grity.com. For the opt-out notification to be effective, it must include your full name and address and clearly explain your intent to opt out of binding arbitration. By declining binding arbitration, you consent to the resolution of Disputes in accordance with "Governing Law and Venue" below.

(h) If any portion of this section is found to be unenforceable or unlawful for any reason: (1) the unenforceable or unlawful provision shall be severed from these Terms; (2) the severance of the unenforceable or unlawful provision shall have no effect whatsoever on the remainder of this section or the parties' ability to compel arbitration of any remaining claims on an individual basis pursuant to this section; and (3) to the extent that any claims must therefore proceed on an individual basis, the parties agree to arbitrate those claims on an individual basis. In addition, if it is determined that any portion of this section prohibits an individual claim seeking public injunctive relief, that provision will be null and void to the extent that such relief may be sought outside of arbitration, and the balance of this section will be enforceable.

Statute and Location

These Terms and any dispute that may arise between you and INTΞGRITY are governed by California law, excluding its conflict of law provisions. Any issue between the parties that is not arbitrable or cannot be heard in small claims court will be determined by the state or federal courts of California and the United States, sitting in San Francisco, California.

Some nations have regulations that require agreements to be controlled by the consumer's country's laws. These statutes are not overridden by this paragraph.

Amendments

Periodically, we may make modifications to these Terms. If we make modifications, we will notify you by sending an email to the address connected with your account, providing an in-product message, or amending the date at the top of these Terms. Unless we specify otherwise in our notification, the modified Terms will take effect immediately, and your continued use of our Services after we issue such notice indicates your acceptance of the changes. If you do not accept the updated Terms, you must cease using our services.

Severability

If any section or portion of a provision of these Terms is determined to be unlawful, void, or unenforceable, that provision or part of the provision shall be deemed severable from these Terms and shall not affect the validity and enforceability of the other terms.

Miscellaneous INTΞGRITY’s omission to assert or enforce any right or term of these Terms is not a waiver of such right or provision. These Terms and the terms and policies specified in the Other Terms and Policies that May Apply to You Section constitute the complete agreement between the parties pertaining to the subject matter hereof and supersede all prior agreements, statements, and understandings between the parties. The section headings in these Terms are for convenience only and have no legal or contractual significance. The use of the word "including" shall be taken to mean "including without limitation." Unless otherwise specified, these Terms are intended solely for the benefit of the parties and are not intended to confer third-party beneficiary rights on any other person or entity. You consent to the use of electronic means for our communications and transactions.

Caleb Naysmith

3 years ago Draft

A Myth: Decentralization

It’s simply not conceivable, or at least not credible.

One of the most touted selling points of Crypto has always been this grandiose idea of decentralization. Bitcoin first arose in 2009 after the housing crisis and subsequent crash that came with it. It aimed to solve this supposed issue of centralization. Nobody “owns” Bitcoin in theory, so the idea then goes that it won’t be subject to the same downfalls that led to the 2008 crash or similarly speculative events that led to the 2008 disaster. The issue is the banks, not the human nature associated with the greedy individuals running them.

Subsequent blockchains have attempted to fix many of the issues of Bitcoin by increasing capacity, decreasing the costs and processing times associated with Bitcoin, and expanding what can be done with their blockchains. Since nobody owns Bitcoin, it hasn’t really been able to be expanded on. You have people like Vitalk Buterin, however, that actively work on Ethereum though.

The leap from Bitcoin to Ethereum was a massive leap toward centralization, and the trend has only gotten worse. In fact, crypto has since become almost exclusively centralized in recent years.

Decentralization is only good in theory

It’s a good idea. In fact, it’s a wonderful idea. However, like other utopian societies, individuals misjudge human nature and greed. In a perfect world, decentralization would certainly be a wonderful idea because sure, people may function as their own banks, move payments immediately, remain anonymous, and so on. However, underneath this are a couple issues:

You can already send money instantaneously today.

They are not decentralized.

Decentralization is a bad idea.

Being your own bank is a stupid move.

Let’s break these down. Some are quite simple, but lets have a look.

Sending money right away

One thing with crypto is the idea that you can send payments instantly. This has pretty much been entirely solved in current times. You can transmit significant sums of money instantly for a nominal cost and it’s instantaneously cleared. Venmo was launched in 2009 and has since increased to prominence, and currently is on most people's phones. I can directly send ANY amount of money quickly from my bank to another person's Venmo account.

Comparing that with ETH and Bitcoin, Venmo wins all around. I can send money to someone for free instantly in dollars and the only fee paid is optional depending on when you want it.

Both Bitcoin and Ethereum are subject to demand. If the blockchains have a lot of people trying to process transactions fee’s go up, and the time that it takes to receive your crypto takes longer. When Ethereum gets bad, people have reported spending several thousand of dollars on just 1 transaction.

These transactions take place via “miners” bundling and confirming transactions, then recording them on the blockchain to confirm that the transaction did indeed happen. They charge fees to do this and are also paid in Bitcoin/ETH. When a transaction is confirmed, it's then sent to the other users wallet. This within itself is subject to lots of controversy because each transaction needs to be confirmed 6 times, this takes massive amounts of power, and most of the power is wasted because this is an adversarial system in which the person that mines the transaction gets paid, and everyone else is out of luck. Also, these could theoretically be subject to a “51% attack” in which anyone with over 51% of the mining hash rate could effectively control all of the transactions, and reverse transactions while keeping the BTC resulting in “double spending”.

There are tons of other issues with this, but essentially it means: They rely on these third parties to confirm the transactions. Without people confirming these transactions, Bitcoin stalls completely, and if anyone becomes too dominant they can effectively control bitcoin.

Not to mention, these transactions are in Bitcoin and ETH, not dollars. So, you need to convert them to dollars still, and that's several more transactions, and likely to take several days anyway as the centralized exchange needs to send you the money by traditional methods.

They are not distributed

That takes me to the following point. This isn’t decentralized, at all. Bitcoin is the closest it gets because Satoshi basically closed it to new upgrades, although its still subject to:

Whales

Miners

It’s vital to realize that these are often the same folks. While whales aren’t centralized entities typically, they can considerably effect the price and outcome of Bitcoin. If the largest wallets holding as much as 1 million BTC were to sell, it’d effectively collapse the price perhaps beyond repair. However, Bitcoin can and is pretty much controlled by the miners. Further, Bitcoin is more like an oligarchy than decentralized. It’s been effectively used to make the rich richer, and both the mining and price is impacted by the rich. The overwhelming minority of those actually using it are retail investors. The retail investors are basically never the ones generating money from it either.

As far as ETH and other cryptos go, there is realistically 0 case for them being decentralized. Vitalik could not only kill it but even walking away from it would likely lead to a significant decline. It has tons of issues right now that Vitalik has promised to fix with the eventual Ethereum 2.0., and stepping away from it wouldn’t help.

Most tokens as well are generally tied to some promise of future developments and creators. The same is true for most NFT projects. The reason 99% of crypto and NFT projects fail is because they failed to deliver on various promises or bad dev teams, or poor innovation, or the founders just straight up stole from everyone. I could go more in-depth than this but go find any project and if there is a dev team, company, or person tied to it then it's likely, not decentralized. The success of that project is directly tied to the dev team, and if they wanted to, most hold large wallets and could sell it all off effectively killing the project. Not to mention, any crypto project that doesn’t have a locked contract can 100% be completely rugged and they can run off with all of the money.

Decentralization is undesirable

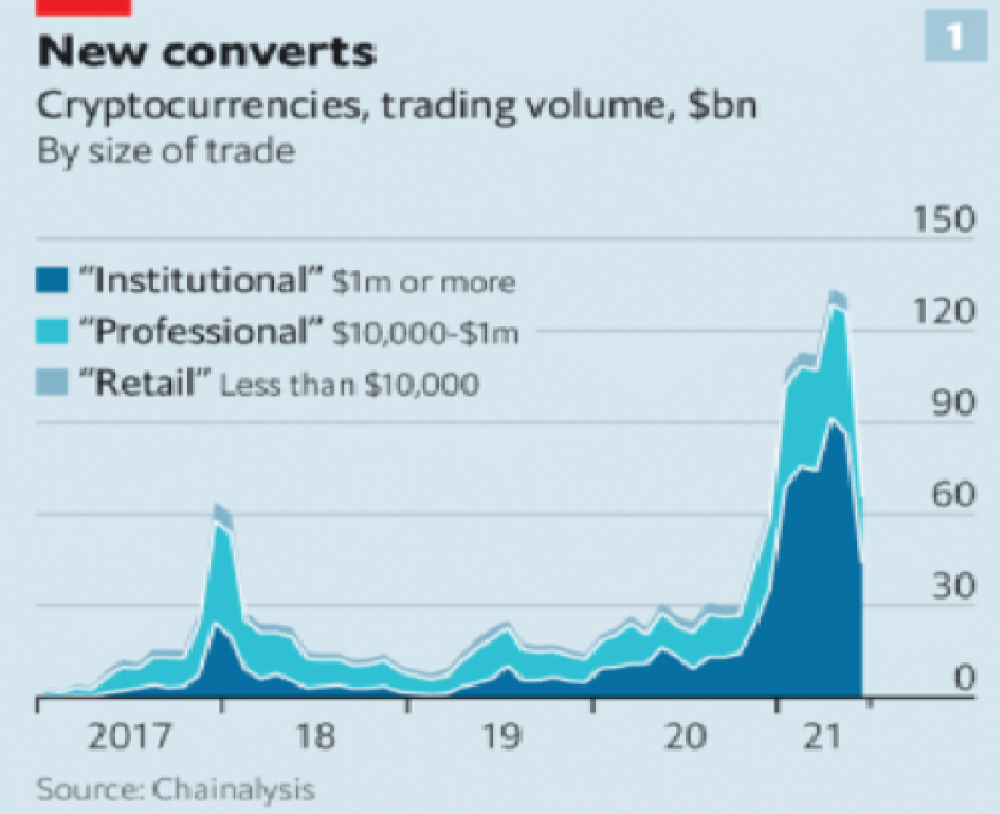

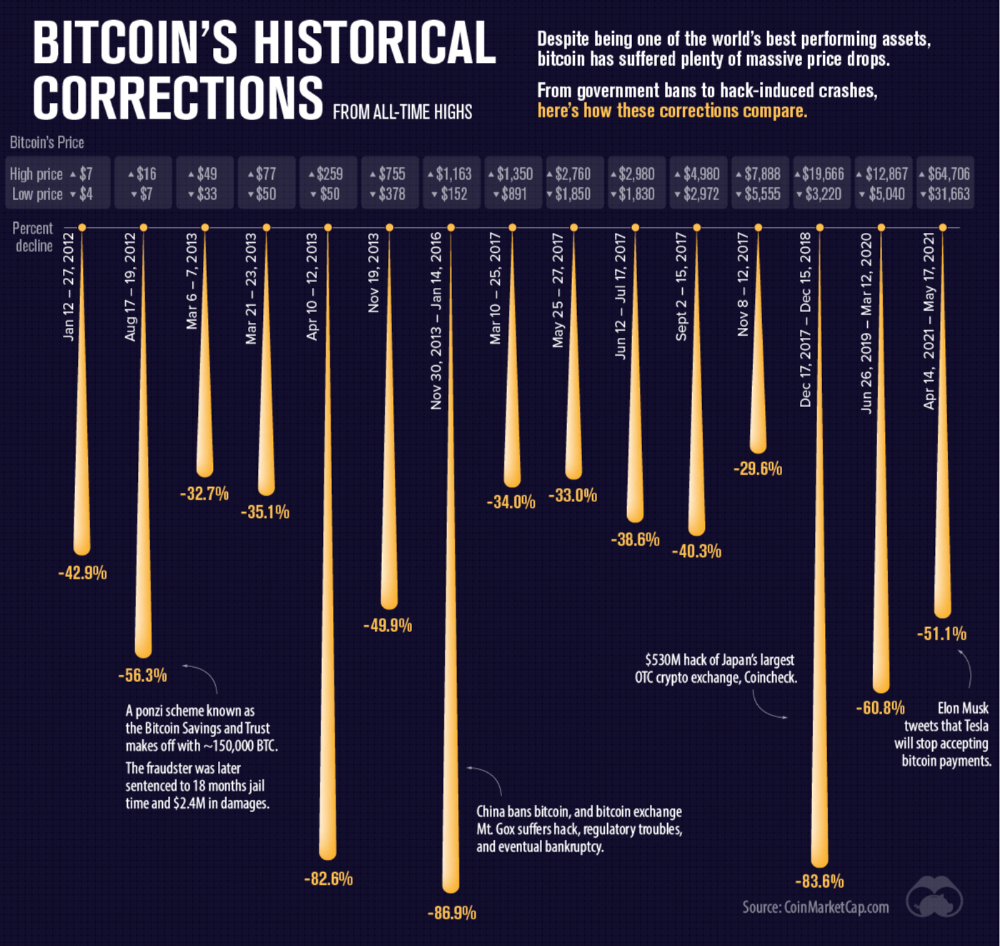

Even if they were decentralized then it would not be a good thing. The graphic above indicates this is effectively a rich person’s unregulated playground… so it’s exactly like… the very issue it tried to solve?

Not to mention, it’s supposedly meant to prevent things like 2008, but is regularly subjected to 50–90% drawdowns in value? Back when Bitcoin was only known in niche parts of the dark web and illegal markets, it would regularly drop as much as 90% and has a long history of massive drawdowns.

The majority of crypto is blatant scams, and ALL of crypto is a “zero” or “negative” sum game in that it relies on the next person buying for people to make money. This is not a good thing. This has yet to solve any issues around what caused the 2008 crisis. Rather, it seemingly amplified all of the bad parts of it actually. Crypto is the ultimate speculative asset and realistically has no valuation metric. People invest in Apple because it has revenue and cash on hand. People invest in crypto purely for speculation. The lack of regulation or accountability means this is amplified to the most extreme degree where anything goes: Fraud, deception, pump and dumps, scams, etc. This results in a pure speculative madhouse where, unsurprisingly, only the rich win. Not only that but the deck is massively stacked in against the everyday investor because you can’t do a pump and dump without money.

At the heart of all of this is still the same issues: greed and human nature. However, in setting out to solve the issues that allowed 2008 to happen, they made something that literally took all of the bad parts of 2008 and then amplified it. 2008, similarly, was due to greed and human nature but was allowed to happen due to lack of oversite, rich people's excessive leverage over the poor, and excessive speculation. Crypto trades SOLELY on human emotion, has 0 oversite, is pure speculation, and the power dynamic is just as bad or worse.

Why should each individual be their own bank?

This is the last one, and it's short and basic. Why do we want people functioning as their own bank? Everything we do relies on another person. Without the internet, and internet providers there is no crypto. We don’t have people functioning as their own home and car manufacturers or internet service providers. Sure, you might specialize in some of these things, but masquerading as your own bank is a horrible idea.

I am not in the banking industry so I don’t know all the issues with banking. Most people aren’t in banking or crypto, so they don’t know the ENDLESS scams associated with it, and they are bound to lose their money eventually.

If you appreciate this article and want to read more from me and authors like me, without any limits, consider buying me a coffee: buymeacoffee.com/calebnaysmith

Ben Carlson

3 years ago

Bear market duration and how to invest during one

Bear markets don't last forever, but that's hard to remember. Jamie Cullen's illustration

A bear market is a 20% decline from peak to trough in stock prices.

The S&P 500 was down 24% from its January highs at its low point this year. Bear market.

The U.S. stock market has had 13 bear markets since WWII (including the current one). Previous 12 bear markets averaged –32.7% losses. From peak to trough, the stock market averaged 12 months. The average time from bottom to peak was 21 months.

In the past seven decades, a bear market roundtrip to breakeven has averaged less than three years.

Long-term averages can vary widely, as with all historical market data. Investors can learn from past market crashes.

Historical bear markets offer lessons.

Bear market duration

A bear market can cost investors money and time. Most of the pain comes from stock market declines, but bear markets can be long.

Here are the longest U.S. stock bear markets since World war 2:

Stock market crashes can make it difficult to break even. After the 2008 financial crisis, the stock market took 4.5 years to recover. After the dotcom bubble burst, it took seven years to break even.

The longer you're underwater in the market, the more suffering you'll experience, according to research. Suffering can lead to selling at the wrong time.

Bear markets require patience because stocks can take a long time to recover.

Stock crash recovery

Bear markets can end quickly. The Corona Crash in early 2020 is an example.

The S&P 500 fell 34% in 23 trading sessions, the fastest bear market from a high in 90 years. The entire crash lasted one month. Stocks broke even six months after bottoming. Stocks rose 100% from those lows in 15 months.

Seven bear markets have lasted two years or less since 1945.

The 2020 recovery was an outlier, but four other bear markets have made investors whole within 18 months.

During a bear market, you don't know if it will end quickly or feel like death by a thousand cuts.

Recessions vs. bear markets

Many people believe the U.S. economy is in or heading for a recession.

I agree. Four-decade high inflation. Since 1945, inflation has exceeded 5% nine times. Each inflationary spike caused a recession. Only slowing economic demand seems to stop price spikes.

This could happen again. Stocks seem to be pricing in a recession.

Recessions almost always cause a bear market, but a bear market doesn't always equal a recession. In 1946, the stock market fell 27% without a recession in sight. Without an economic slowdown, the stock market fell 22% in 1966. Black Monday in 1987 was the most famous stock market crash without a recession. Stocks fell 30% in less than a week. Many believed the stock market signaled a depression. The crash caused no slowdown.

Economic cycles are hard to predict. Even Wall Street makes mistakes.

Bears vs. bulls

Bear markets for U.S. stocks always end. Every stock market crash in U.S. history has been followed by new all-time highs.

How should investors view the recession? Investing risk is subjective.

You don't have as long to wait out a bear market if you're retired or nearing retirement. Diversification and liquidity help investors with limited time or income. Cash and short-term bonds drag down long-term returns but can ensure short-term spending.

Young people with years or decades ahead of them should view this bear market as an opportunity. Stock market crashes are good for net savers in the future. They let you buy cheap stocks with high dividend yields.

You need discipline, patience, and planning to buy stocks when it doesn't feel right.

Bear markets aren't fun because no one likes seeing their portfolio fall. But stock market downturns are a feature, not a bug. If stocks never crashed, they wouldn't offer such great long-term returns.