
Yusuf Ibrahim

4 years ago

How to sell 10,000 NFTs on OpenSea for FREE (Puppeteer/NodeJS)

So you've finished your NFT collection and are ready to sell it, except you can't figure out how to mint it. Maybe you're not sure about smart contracts, or you want to avoid rising gas prices. You've tried and failed with apps like Mini Mouse Macro, and you're not familiar with Selenium/Python. Worry no more: NodeJS and Puppeteer have arrived!

Learn how I automatically posted and listed for sale all 1000 of my AI-generated word NFTs (Nakahana) on OpenSea for FREE!

My NFT project: Nakahana

NOTE: Only NFTs on the Polygon blockchain can be sold for free; Ethereum requires an initiation charge. NFTs can still be bought with (wrapped) ETH.

If you want to go right into the code, here's the GitHub link: https://github.com/Yusu-f/nftuploader

Let's start with the knowledge and tools you'll need.

What you should know

You must be able to write and run simple NodeJS programs. You must also know how to use a Metamask wallet.

Tools needed

- NodeJS – You'll need NodeJS to run the script and NPM to install the dependencies.

- Puppeteer – Use Puppeteer to automate your browser and go to sleep while your computer works.

- Metamask – Create a crypto wallet and sign transactions using Metamask (free). You may learn how to utilize Metamask here.

- Chrome – Puppeteer supports Chrome.

Let's get started now!

Starting Out

Clone the GitHub repo to your local machine. Make sure that NodeJS, Chrome, and Metamask are all installed and working. Navigate to the project folder and run npm install. This installs all dependencies.

Replace the “extension path” variable with the Metamask chrome extension path. Read this tutorial to find the path.

Replace the “arr” variable with an array containing your NFT names and metadata, and set the “collection_name” variable to your collection’s name.

Then run the script with node nftuploader.js.

A new Chrome instance (not Chromium) should open with Metamask in it. Import your Opensea wallet using your Secret Recovery Phrase, or create a new one and link it. The script will be unable to continue after this, but don’t worry, it’s all part of the plan.

Next steps

Open your terminal again and copy the address that starts with “ws”, e.g. “ws://localhost:53634/devtools/browser/c07cb303-c84d-430d-af06-dd599cf2a94f”. Paste it in place of the browserWSEndpoint value in the connect call of the nftuploader.js script.

const browser = await puppeteer.connect({ browserWSEndpoint: "ws://localhost:58533/devtools/browser/d09307b4-7a75-40f6-8dff-07a71bfff9b3", defaultViewport: null });

Rerun node nftuploader.js. A second tab should open in THE SAME chrome instance, navigating to your Opensea collection. Your NFTs should now start uploading one after the other! If any errors occur, the NFTs and errors are logged in an errors.log file.
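If you'd rather not edit the source every time Chrome hands you a new address, a small helper can check the endpoint before connecting. This is only a sketch: the CHROME_WS_ENDPOINT environment variable and both function names are hypothetical and not part of the nftuploader repo. The puppeteer.connect options are the same ones used above.

```javascript
// Hypothetical helper: CHROME_WS_ENDPOINT and these function names are
// assumptions for illustration, not part of the nftuploader repo.
function resolveWsEndpoint(raw) {
  if (!raw) {
    throw new Error("No DevTools endpoint provided");
  }
  const url = new URL(raw.trim());
  if (url.protocol !== "ws:" && url.protocol !== "wss:") {
    throw new Error(`Expected a ws:// address, got "${url.protocol}"`);
  }
  if (!url.pathname.startsWith("/devtools/browser/")) {
    throw new Error("Endpoint does not look like a browser DevTools address");
  }
  return url.href;
}

async function connectToRunningChrome(raw = process.env.CHROME_WS_ENDPOINT) {
  // Loaded lazily so the validation helper works without Puppeteer installed.
  const puppeteer = require("puppeteer");
  return puppeteer.connect({
    browserWSEndpoint: resolveWsEndpoint(raw),
    defaultViewport: null,
  });
}
```

With something like this in place, you would set the variable in your terminal and call connectToRunningChrome() instead of hard-coding the address.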

Error Handling

The errors.log file should show the name of each failed NFT and the error type. The script has also been updated so it can check whether an NFT has already been posted before uploading it: set the “searchBeforeUpload” setting to true.
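To give an idea of how this kind of error handling can be wired up, here is a minimal sketch. It is not the repo's actual code: the function names and the log line layout are assumptions, and upload and alreadyPosted are placeholders for the Puppeteer steps. Only the errors.log file name and the searchBeforeUpload setting come from the script described above.

```javascript
const fs = require("fs");

// Hypothetical helpers: names and log layout are assumptions for illustration.
function formatErrorLine(nftName, error) {
  const type = error && error.name ? error.name : "Error";
  const message = error && error.message ? error.message : String(error);
  return `${new Date().toISOString()}\t${nftName}\t${type}\t${message}\n`;
}

function logUploadError(nftName, error, logPath = "errors.log") {
  // Append so earlier failures are kept across runs.
  fs.appendFileSync(logPath, formatErrorLine(nftName, error));
}

// upload(item) and alreadyPosted(item) stand in for the browser automation
// that creates an item and searches the collection for it.
async function uploadAll(items, options) {
  const { upload, alreadyPosted, searchBeforeUpload = false, logPath = "errors.log" } = options;
  const failed = [];
  for (const item of items) {
    try {
      if (searchBeforeUpload && (await alreadyPosted(item))) {
        continue; // skip items that are already in the collection
      }
      await upload(item);
    } catch (error) {
      // Log the failure and move on to the next NFT instead of stopping.
      logUploadError(item.name, error, logPath);
      failed.push(item.name);
    }
  }
  return failed;
}
```

The returned list of failed names can be fed back into a second run, with searchBeforeUpload enabled to avoid duplicates.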

We're done!

If you liked it, you can buy one of my NFTs! If you have any questions or need a feature added, please let me know.

Thank you to everyone who has read and liked. I never expected it to be so popular.

Ann

3 years ago

These new DeFi protocols are just amazing.

I've never seen this before.

Focus on native crypto development, not price activity or turmoil.

Crypto Twitter (CT) is boring now. Either folks are still angry about FTX or they're distracted by AI. Plus, it's year-end, and people rest for the holidays. 2022 was rough.

So DeFi fans can get inspired by something fresh. Who's building? As I read the Defillama daily roundup, many updates are still on FTX and its contagion.

I've used the same method on their Raises page. Not much happened :(. Maybe my high standards are to blame, or the industry may simply be resting. OK.

The handful I found might last us till the end of the year (unless another big blowup occurs).

Hashflow

An on-chain monitoring account I follow reported a huge transfer of $HFT from Binance to Jump Trading.

I was intrigued. Stacking? So I checked and found out the project was launched through Binance Launchpad, which has introduced many 100x tokens (albeit briefly) in the past, such as GALA and STEPN.

Hashflow appears to be pumpable. Binance launchpad, VC backers, CEX listing immediately. What's the protocol?

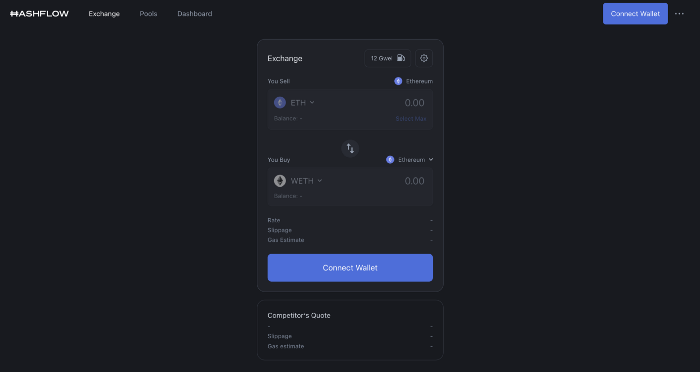

Hashflow is intriguing and timely, I discovered. After the FTX collapse, people looked more at DEXs.

Hashflow is a decentralized exchange that connects traders with professional market makers, according to its Binance launchpad description. Post-FTX, market makers lost a market-making venue with the collapse of the world's third-largest exchange. Could that be why Jump and Wintermute back it?

Why does that matter? Hashflow doesn't use bonding curves like a standard AMM. On AMMs, you pay more for the following trade because the prior trade reduces liquidity (fewer coins in the pool, higher price). With market maker quotes, you get a CEX-like experience: stable prices and no MEV frontrunning.
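To make the AMM side of this concrete, here is a rough sketch of how each trade on a pool pushes up the price of the next one. It assumes a constant-product pool (x * y = k) with no fees, which is a common AMM design but a simplification; it says nothing about how Hashflow itself prices quotes. The quotedAmountOut function simply stands in for a market maker quoting a fixed price.

```javascript
// Constant-product AMM (x * y = k) with no fees: a simplifying assumption.
function ammAmountOut(reserveIn, reserveOut, amountIn) {
  return (reserveOut * amountIn) / (reserveIn + amountIn);
}

// Fixed quote from a market maker: the price does not depend on pool size.
function quotedAmountOut(pricePerToken, amountIn) {
  return amountIn / pricePerToken;
}

// Run several equal-sized buys against the pool and record the average
// price paid per token in each trade.
function simulateAmmTrades(reserveIn, reserveOut, amountIn, trades) {
  const prices = [];
  for (let i = 0; i < trades; i++) {
    const out = ammAmountOut(reserveIn, reserveOut, amountIn);
    prices.push(amountIn / out);
    reserveIn += amountIn; // the pool now holds more of the token paid in
    reserveOut -= out; // and fewer of the token bought
  }
  return prices;
}

const ammPrices = simulateAmmTrades(1000, 1000, 100, 3);
console.log(ammPrices.map((p) => p.toFixed(3)));
console.log(quotedAmountOut(1.0, 100));
```

In this toy pool, each successive buy of the same size pays a higher average price than the one before, while the quoted trade fills at the agreed price regardless of what traded earlier.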

Hashflow is innovative because...

DEXs gained from the FTX crash, but let's be honest: DEXs aren't as good as CEXs. Hashflow will change this.

Hashflow offers MEV protection, which large traders look for in DEXs. You can trade large amounts without front-running and sandwich attacks.

Besides MEV protection, Hashflow offers a user-friendly swapping platform. You can trade across chains smoothly. This is a benefit because DEXs lag CEXs in UX.

Status, timeline:

Wintermute wrote in August that prominent market makers will work on Hashflow. Binance launched a month-long farming session in December. Jump probably participated in this initial sale, which is why we saw a significant transfer after the launch.

Binance began trading the HFT token on November 11 (the day FTX imploded; coincidence?).

Tokens are used for community rewards. Perhaps they'd copy dYdX (airdrop?). Read their docs for their future plans. The tokenomics don't impress me: governance, rewards, and NFTs.

Their stat page details their activity. First came Ethereum, then Arbitrum. For a new protocol in a bear market, they handled a lot of unique users daily.

It’ll be interesting to see their future. Will they thrive, not only against DEXs but among CEXs too?

STFX

I forget how I found STFX. Possibly a Twitter thread concerning Arbitrum applications. STFX was the only new protocol I found interesting.

STFX is a new concept that solves a real problem for traders. I've never seen a protocol like it.

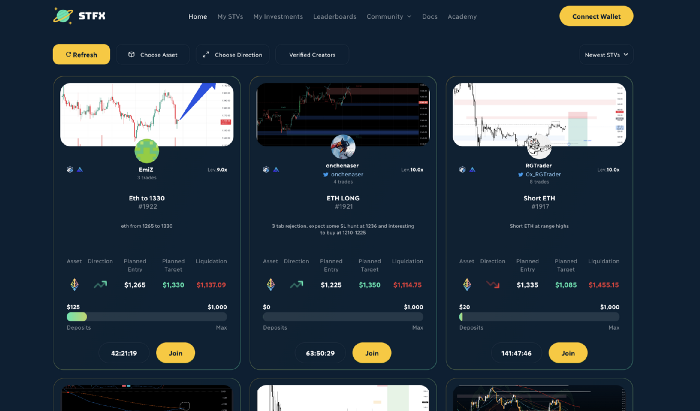

STFX lets you copy trades. You give someone your money to trade for you.

It's a marketplace. Traders are everywhere. As a trader, you post your entry, exit, liquidation point, and trade thesis. There's a verification system for socials such as Twitter. Leaderboards display your trading skill.

This service could be popular. Staying disciplined is the hardest part of trading. Sometimes you take-profit too early or too late, or sell at a loss when an asset dumps, then it soon recovers (often happens in crypto.) It's hard to stick to entry-exit and liquidation plans.

What if you could hire someone to run your trade for a little commission? Set-and-forget.

Handing over your money to trade isn't easy. How can you trust them? How do you know they won't steal your money?

Smart contracts.

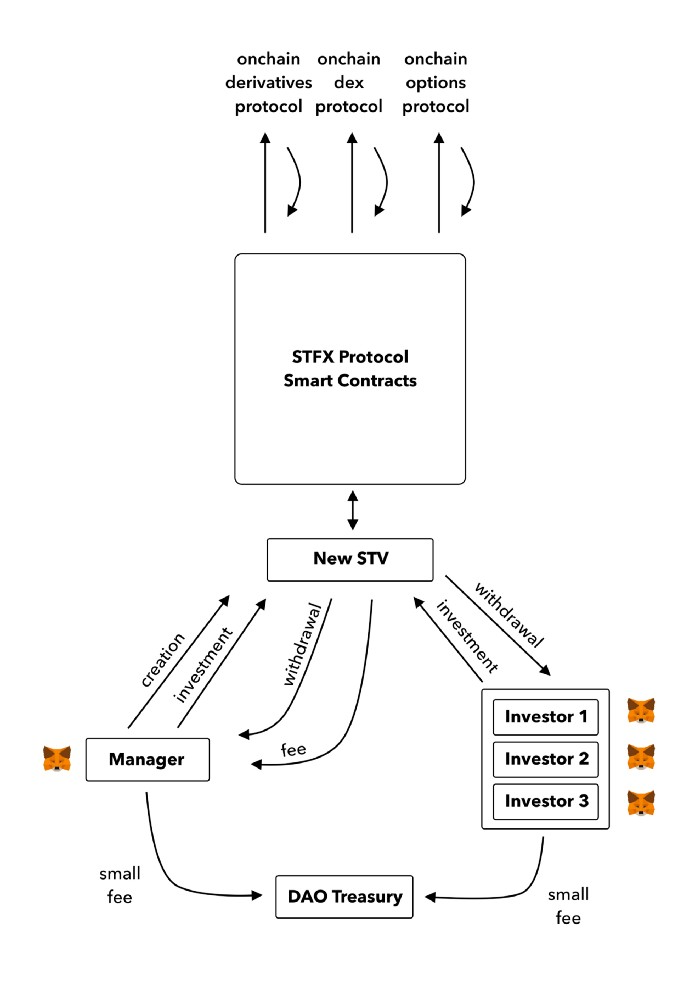

STFX's trader is a vault maker/manager. One trade = one vault. The manager sets long/short, entry, exit, and liquidation point. Anyone who agrees with the trade can join instantly. The smart contract holds the funds during the trade and limits the manager's actions.

Here's STFX's transaction flow.

Managers and the treasury receive fees. It's a sustainable business strategy that benefits everyone.

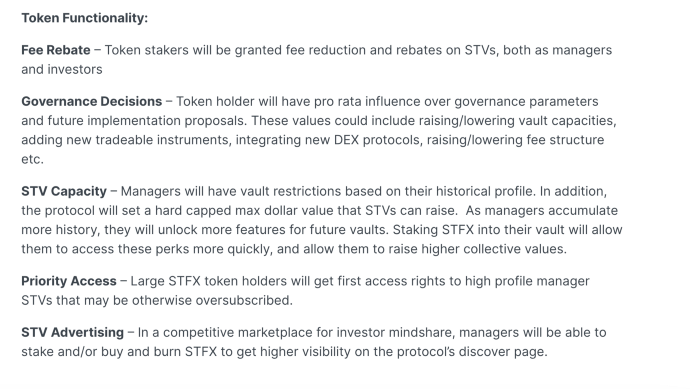

I'm impressed by $STFX's planned use: priority access. Brilliant. Say a popular crypto trader opens a vault here. Many would want to join. Holding STFX tokens gives VIP access over those without tokens.

STFX focuses on short-term trading, which is mind-blowing to me. I agree with their platform's purpose. Crypto price action fosters short-termism. When you trade short term, the turnover could be larger than with long-term holding or trading. 2017 BTC buyers waited 5 years to complete their holdings.

The STFX team simply adapted. Volatility aids trading.

All things about STFX scream Degen. The protocol fully embraces the degen nature of some, if not most, crypto natives.

An enjoyable dApp. Leaderboards are fun for reputation-building. FLEXING COMPETITIONS. You can join for as low as $10. STFX uses Arbitrum, so gas costs are low. The alpha stage completes the degen feeling.

Although they look like they don't take themselves seriously, I sense a strong business plan underneath. There is real demand for the solution STFX offers.

Onchain Wizard

3 years ago

Three Arrows Capital & Celsius Updates

I read the 1k+ pages of 3AC liquidation documents so you don't have to. Also sharing revised Celsius recovery plans.

3AC's liquidation documents:

Someone recently leaked 3AC's liquidation filings from the BVI courts. I'll discuss the timeline and other highlights from the leak.

Three Arrows Capital began trading traditional currencies in emerging markets in 2012. They switched to equities and crypto, then purely crypto in 2018.

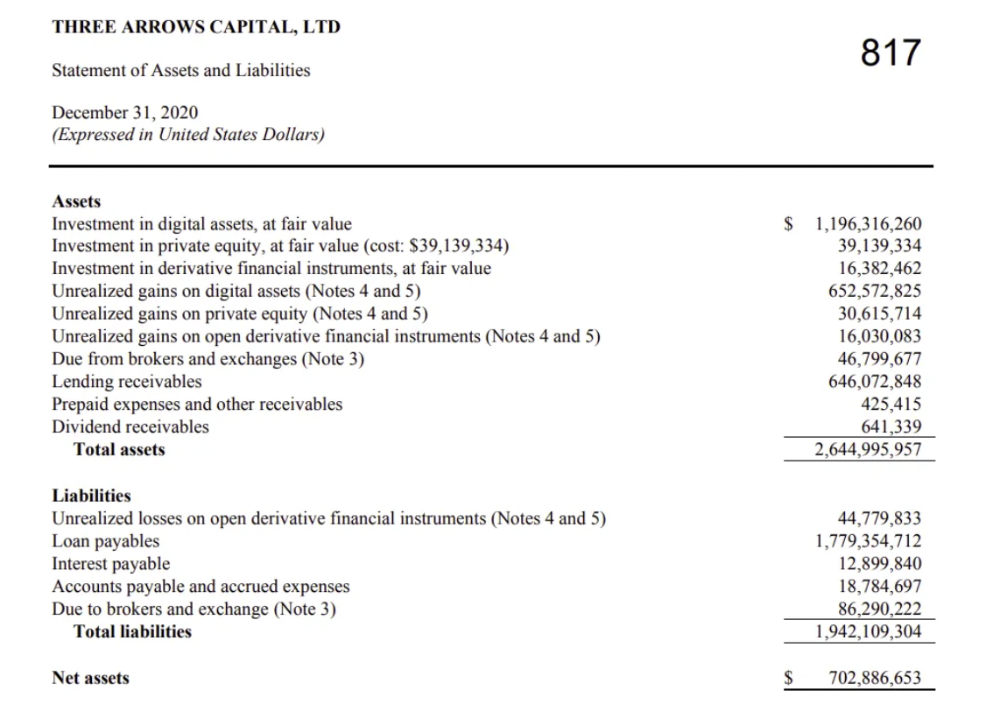

By 2020, the firm had $703mm in net assets and $1.8bn in loans (these guys really like debt).

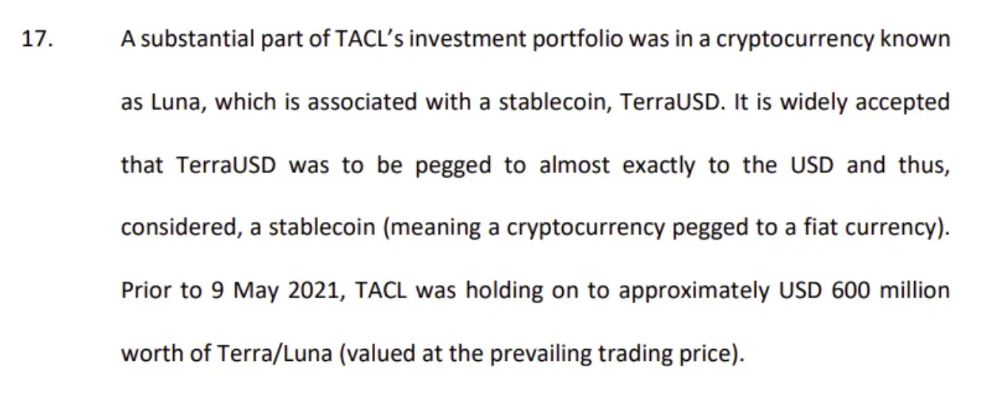

The firm's net assets under management reached $3bn in April 2022, according to the filings. 3AC had $600mm of LUNA/UST exposure before May 9th 2022, which pushed them over the edge.

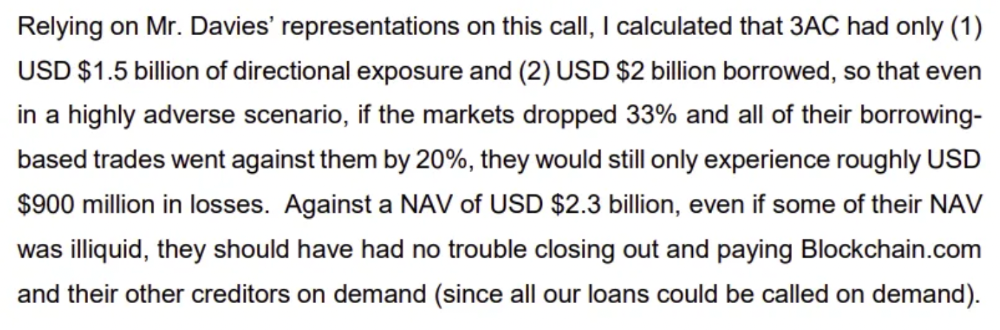

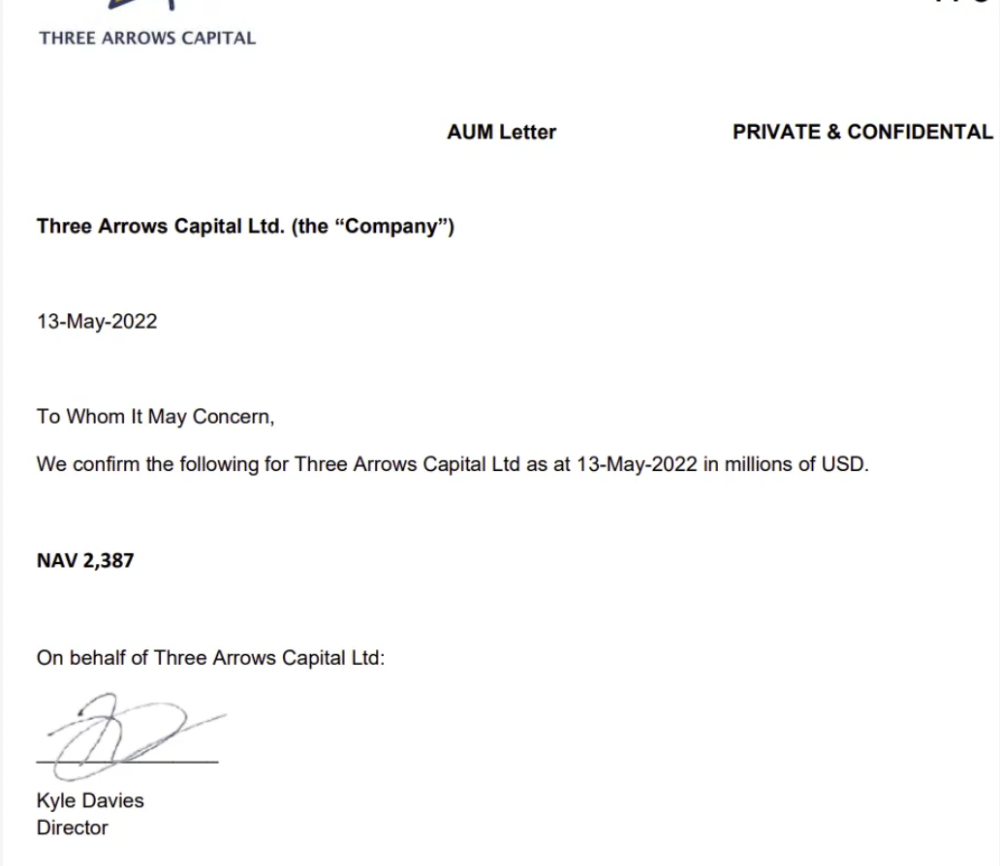

LUNA and UST went to zero quickly (I wrote about the mechanics of the blowup here). Kyle Davies, 3AC co-founder, told Blockchain.com on May 13 that they had $2.4bn in assets and $2.3bn NAV vs. $2bn in borrowings. As BTC and ETH plunged 33% and 50%, the firm became insolvent by mid-2022.

3AC sent $32mm to Tai Ping Shan, a Cayman Islands company owned by Su Zhu and by Davies' partner, Kelly Kaili Chen (who knows what is going on here).

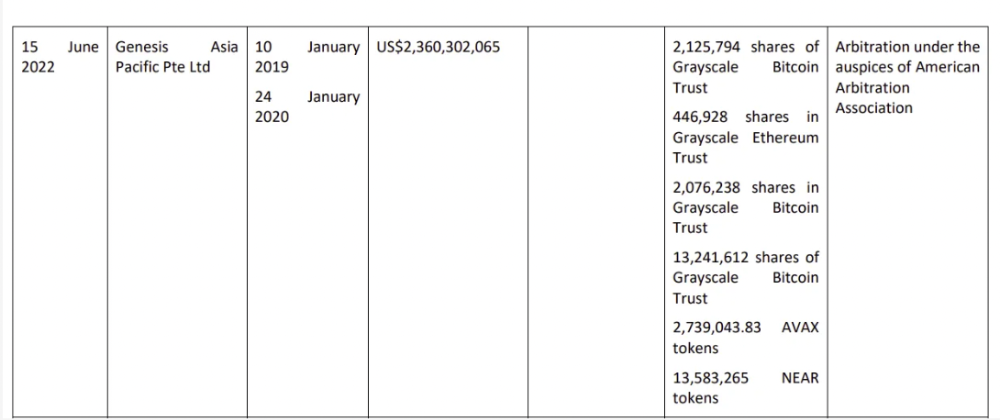

3AC had borrowed over $3.5bn in notional principal, with Genesis ($2.4bn) and Voyager ($650mm) having the most exposure.

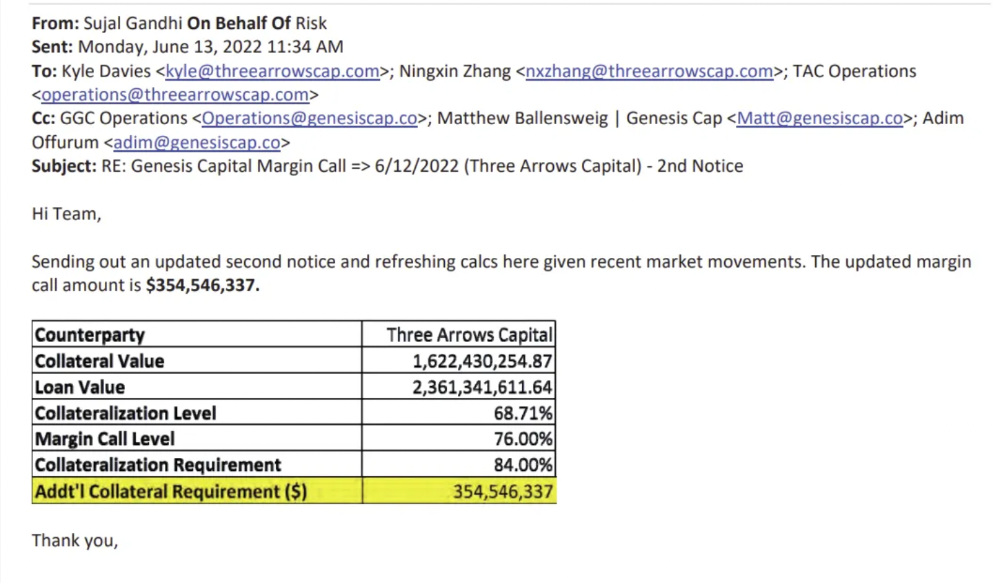

Genesis demanded $355mm in further collateral in June.

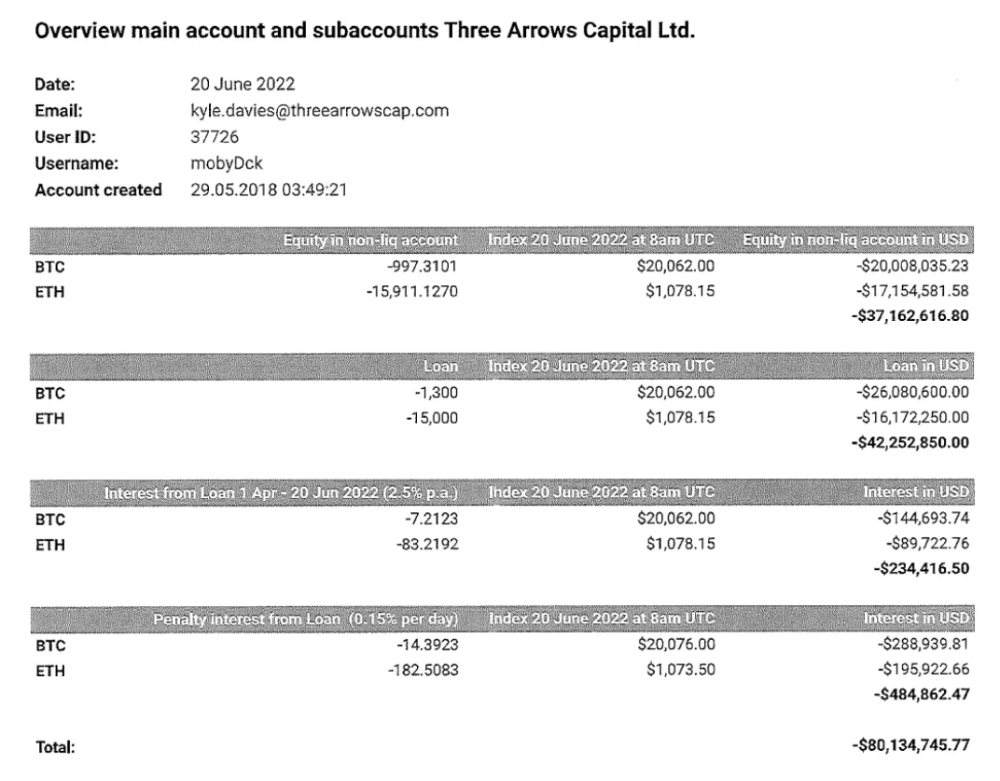

Deribit (another 3AC investment) called for $80 million in mid-June.

Even in mid-June, the firm was trying to borrow more money to stay afloat. They approached Genesis for another $125mm loan (to pay another lender) and Hodlnaut for BTC & ETH loans.

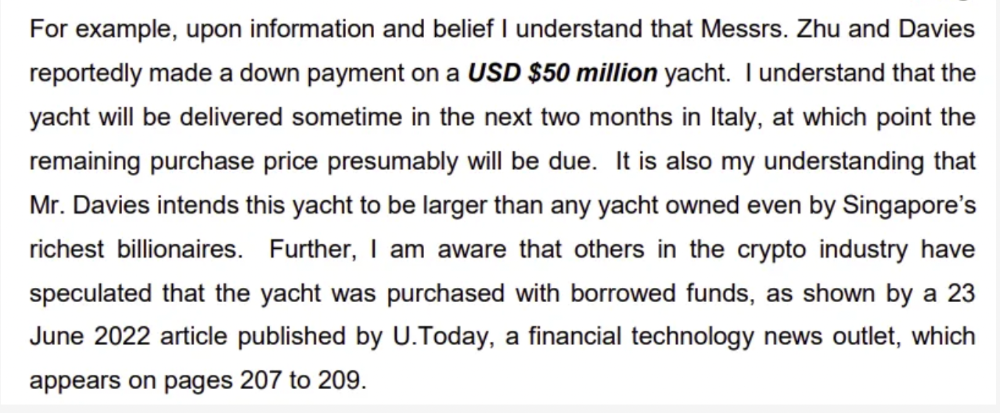

Pretty crazy. 3AC founders used borrowed money to buy a $50 million boat, according to the leak.

Su, claiming $5m, and Chen Kaili Kelly, asserting she loaned $65m unsecured to 3AC, are both listed as creditors.

Celsius:

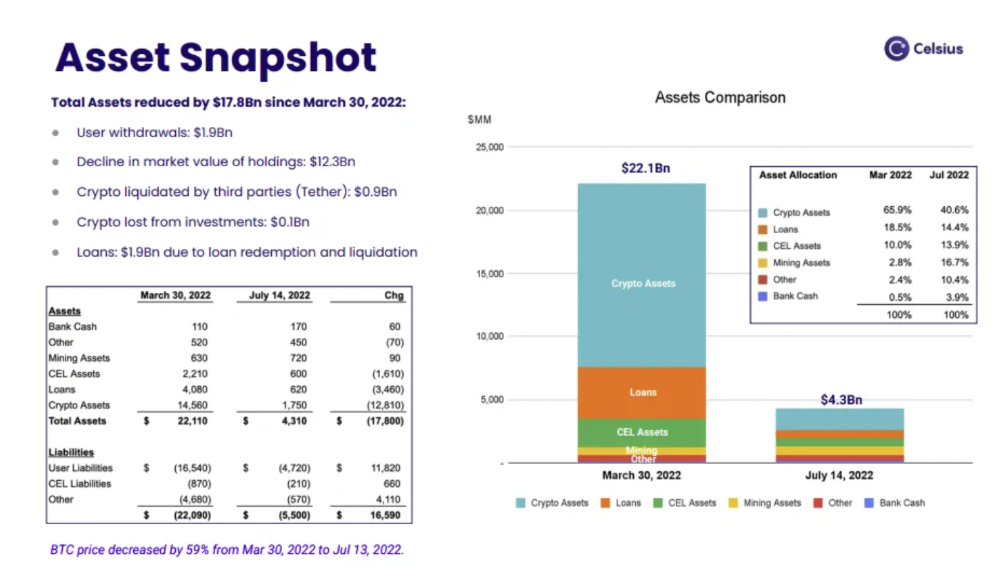

This bankruptcy presentation shows the Celsius breakdown from March to July 14, 2022. Total assets fell from $22bn to $4bn, with crypto assets plummeting from $14.6bn to $1.8bn (ouch). User liabilities dropped from $16.5bn to $4.72bn.

In my recent post, I examined whether "forced selling" is over, with Celsius' crypto assets being a major overhang. From this presentation, it looks like Chapter 11 will give customers the option to accept cash at a discount or remain long crypto. Since a fresh source of money is unlikely to enter the Celsius situation, both options will likely remain a near-term market risk: cash at a discount will likely come from selling crypto assets, while customers who receive crypto could sell at any time. I'll share any Celsius updates I find.

Conclusion

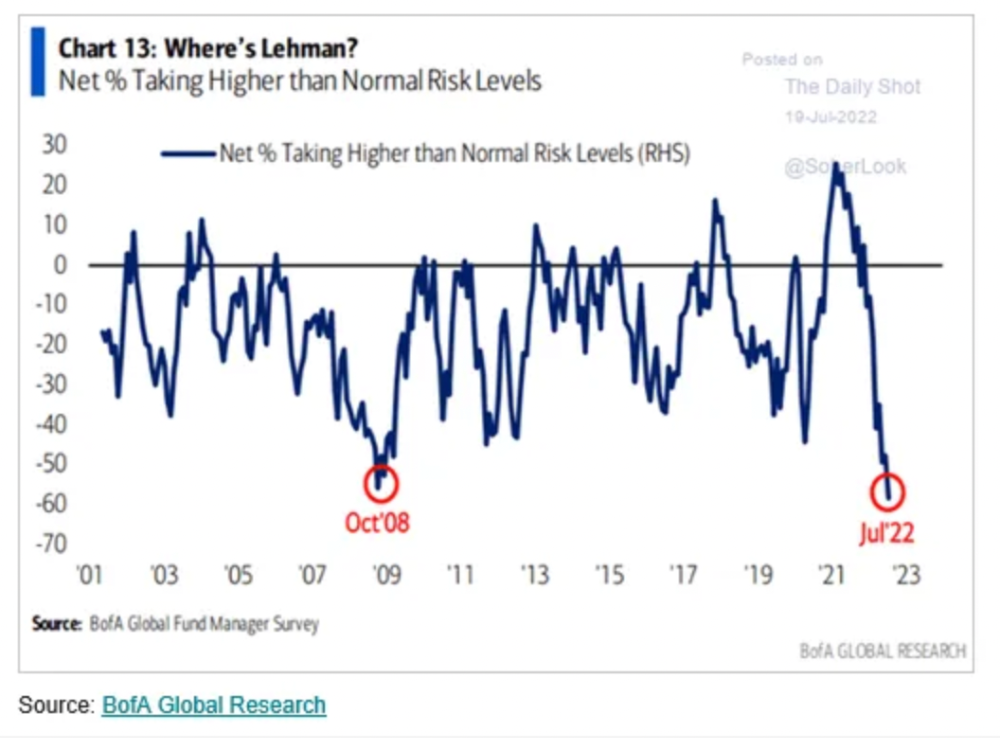

Only Celsius and the Mt Gox BTC unlock remain as forced selling catalysts. While everything went through a "relief" pump, with ETH up 75% from the bottom and numerous alts multiples higher, there are still macro risks to equities and risk assets. There's a lot of capital waiting to be deployed in crypto ($153bn in stables), but fund managers are risk-averse (risk appetite is below 2008 levels).

We're hopefully over crypto's "bottom," with peak anxiety and forced selling behind us, but we may chop around.



Thomas Tcheudjio

3 years ago

If you don't crush these 3 metrics, skip the Series A.

I recently wrote about getting VCs excited about Marketplace start-ups. SaaS founders became envious!

Understanding how people wire tens of millions is the only Series A hack I recommend.

Few people understand the intellectual process behind investing.

VC is risk management.

Series A-focused VCs must cover two risks.

1. Market risk

You need a large market to cross the threshold beyond which you can build defensibility. Series A VCs underwrite market risk.

They must see you have reached product-market fit (PMF) in a large total addressable market (TAM).

2. Execution risk

Execution risk arises when investors evaluate your growth engine's ability to blitzscale.

When investors remove operational uncertainty, they profit.

Series A VCs like businesses with derisked revenue streams. Don't raise unless you have a predictable model, pipeline, and growth.

Please beat these 3 metrics before Series A:

Achieve $1.5m ARR in 12-24 months (Market risk)

Above 100% Net Dollar Retention (Market risk)

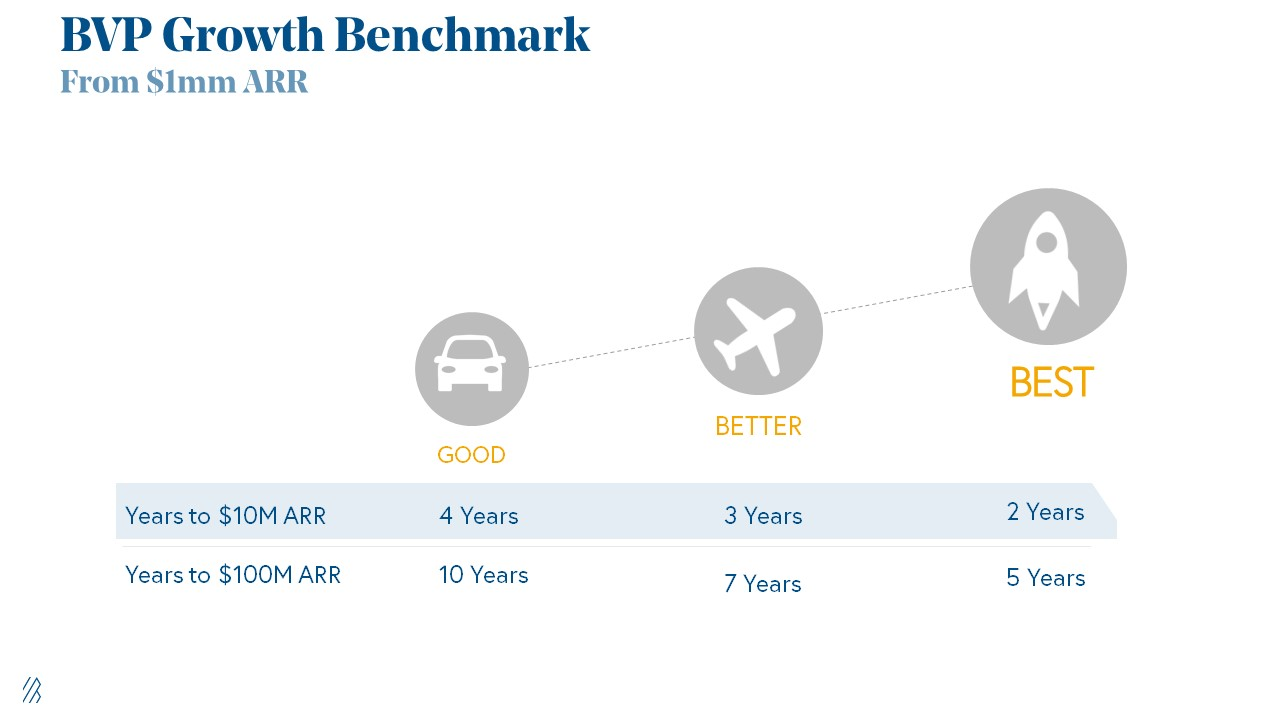

Lead Velocity Rate supporting $10m ARR in 2–4 years (Execution risk)

Hit all 3 and you'll raise $10M in 4 months. With only 2 of the 3, discussions may take 6–7 months.

If you hit none, don't bother raising; focus on becoming a capital-efficient business (a topic for other posts).

Let's examine these 3 metrics for the brave ones.

1. Lead Velocity Rate supporting $10m ARR in 2 to 4 years

I'm starting with the last metric because it's the least discussed. LVR is the most reliable data when evaluating a growth engine, in my opinion.

SaaS allows you to see the future.

Monthly Sales and Sales Pipelines, two predictive KPIs, have poor data quality. Both are lagging indicators, and minor changes can cause huge modeling differences.

Analysts and Associates will trash your forecasts if they're based only on Monthly Sales and Sales Pipeline.

LVR, defined as month-over-month growth in qualified leads, is rock-solid. There's no lag. You can See The Future if you use Qualified Leads and a consistent formula and process to qualify them.

With this metric in hand, scaling your company turns into an execution play on which VCs can take a calculated risk.
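As a quick illustration of the definition above (month-over-month growth in qualified leads), LVR can be computed like this. The function names and sample numbers are made up for the example; what counts as a "qualified" lead is up to your own qualification process.

```javascript
// LVR = (qualified leads this month - qualified leads last month)
//       / qualified leads last month
function leadVelocityRate(lastMonthLeads, thisMonthLeads) {
  if (lastMonthLeads <= 0) {
    throw new RangeError("lastMonthLeads must be greater than zero");
  }
  return (thisMonthLeads - lastMonthLeads) / lastMonthLeads;
}

// Turn a list of monthly qualified-lead counts into a list of monthly LVRs.
function lvrSeries(monthlyQualifiedLeads) {
  return monthlyQualifiedLeads
    .slice(1)
    .map((leads, i) => leadVelocityRate(monthlyQualifiedLeads[i], leads));
}

// Illustrative numbers only: 200 qualified leads last month, 230 this month.
const lvr = leadVelocityRate(200, 230);
console.log(`${(lvr * 100).toFixed(1)}%`);
```

Tracking the series month after month, with the same qualification rules each time, is what makes the number comparable over time.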

2. Above-100% Net Dollar Retention.

Net Dollar Retention is a better-known SaaS health metric than LVR.

Net Dollar Retention measures a SaaS company's ability to retain and upsell customers. Ask what $1 of net new customer spend will be worth in years n+1, n+2, etc.

Depending on the business model, SaaS businesses can increase their share of customers' wallets by increasing users, selling them more products in SaaS-enabled marketplaces, other add-ons, and renewing them at higher price tiers.

If a SaaS company's annualized Net Dollar Retention is less than 75%, there's a problem with the business.
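For readers who want the arithmetic, here is a small sketch using a commonly used formula for Net Dollar Retention: take the recurring revenue of a customer cohort at the start of the period, add expansion, subtract contraction and churn, and divide by the starting figure. The field names and numbers are assumptions for illustration; companies differ in the exact definition they report.

```javascript
// Net Dollar Retention for one cohort over one period.
// Revenue from customers acquired during the period is excluded by design.
function netDollarRetention({ startingArr, expansion, contraction, churn }) {
  if (startingArr <= 0) {
    throw new RangeError("startingArr must be greater than zero");
  }
  return (startingArr + expansion - contraction - churn) / startingArr;
}

// Illustrative cohort: $1m starting ARR, $300k upsell,
// $50k downgrades, $100k lost to churn.
const ndr = netDollarRetention({
  startingArr: 1_000_000,
  expansion: 300_000,
  contraction: 50_000,
  churn: 100_000,
});
console.log(`${(ndr * 100).toFixed(0)}%`);
```

A result above 100% means the existing customer base grows on its own, before any new customer is signed.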

Slack's ARR chart (below) shows how powerful Net Retention is. The layered chart shows how revenue from existing customers grows. Slack's S-1 shows 171% Net Dollar Retention for 2017–2019.

Slack S-1

3. $1.5m ARR in the last 12-24 months.

According to Point Nine, $0.5m-4m in ARR is needed to raise a $5–12m Series A round.

Target at least what you raised in Pre-Seed/Seed. If you've raised $1.5m since launch, don't raise before $1.5m ARR.

Capital efficiency has been back in focus since Covid-19. If you've raised $2m since inception, it's harder to raise with only $1m in ARR.

P9's 2016-2021 SaaS Funding Napkin

In summary, less than 1% of companies VCs meet get funded. These metrics can help you win.

If there’s demand for it, I’ll do one on direct-to-consumer.

Cheers!

Will Leitch

3 years ago

Don't treat Elon Musk like Trump.

He’s not the President. Stop treating him like one.

Elon Musk tweeted from Qatar, where he was watching the World Cup Final with Jared Kushner.

Musk's subsequent Tweets were as normal, basic, and bland as anyone's from a World Cup Final. It's depressing to see the world's richest man looking at his phone during a grand ceremony, but "rich guy goes to rich-guy event" didn't seem important.

Before Musk posted his should-I-step-down-at-Twitter poll, CNN ran a long segment asking if it was hypocritical for him to reveal his real-time location after defending his (very dumb) suspension of several journalists for (supposedly) revealing his assassination coordinates by linking to a site that tracks Musk's private jet. It was hard to ignore CNN's hypocrisy: it covered Musk, as Twitter CEO, the way it covered President Trump. Every Trump story was based on him saying X, then doing Y. Trump would do something horrific, lie about it, then pretend it was fine, then condemn a political rival who did the same thing, be called hypocritical, and so on. It lasted four years. Exhausting.

It made sense because Trump was the President of the United States. The press's main purpose is to relentlessly cover and question the president.

It's strange to have to say this out loud. Twitter isn't America. Elon Musk isn't a president. He maintains a money-losing social media service to harass and mock people he doesn't like. Treating Musk like Trump, as if he should be held accountable like Trump, shows a startling lack of perspective. Some journalists treat Twitter like a country.

The compulsive, desperate way many journalists use the site suggests as much. Twitter isn't the town square, despite popular belief. It's a place for obsessives to meet and converse. Journalists say they're breaking news. Their careers depend on it. They can argue it's a public service. Nope. Twitter is a place lonely people go to talk all day, and that includes journalists, Trump, and Musk. Acting as if it has a greater purpose, as if it's impossible to break news without it, or as if the republic is in peril is ludicrous. Only 23% of Americans are on Twitter, and 25% of those users account for 97% of Tweets. I'd think a large portion of that 25% are journalists (or attention addicts) chatting to other journalists. Their loudness makes Twitter seem more important than it is. It isn't. It's another stupid website. They were there before Twitter; they will be there after Twitter. It’s just a website. We can all get off it if we want. Most of us aren’t even on it in the first place.

Musk is a website owner, not a world leader. He's not as accountable as Trump was. Musk is cable news's main character now that Trump isn't (at least for now). Being the main character of TV news isn't as significant as being president. Elon Musk isn't as important as we all pretend, and Twitter isn't even close. Twitter is a dumb website, Elon Musk is a rich guy going through a midlife crisis, and cable news is lazy because its leaders thought the entire world was on Twitter and are now freaking out that their playground is being disturbed.

I’ve said before that you need to leave Twitter, now. But even if you’re still on it, we need to stop pretending it matters more than it does. It’s a site for lonely attention addicts, from the man who runs it to the journalists who can’t let go of it. It’s not a town square. It’s not a country. It’s not even a successful website. Let’s stop pretending any of it’s real. It’s not.

Bloomberg

4 years ago

Expulsion of ten million Ukrainians

According to recent data from two UN agencies, ten million Ukrainians have been displaced.

The International Organization for Migration (IOM) estimates nearly 6.5 million Ukrainians have been displaced within the country. Most have fled the war zones around Kyiv and eastern Ukraine, including Dnipro, Zaporizhzhia, and Kharkiv. Most of these internally displaced persons (IDPs) have fled to western and central Ukraine.

Since Russia invaded on Feb. 24, 3.6 million people have crossed the border to seek refuge in neighboring countries, according to the latest UN data. While most refugees have fled to Poland and Romania, many have entered Russia.

Internally displaced figures are IOM estimates as of March 19, based on 2,000 telephone interviews with Ukrainians aged 18 and older conducted between March 9-16. The UNHCR compiled the figures for refugees to neighboring countries on March 21 based on official border crossing data and its own estimates. The UNHCR's top-line total is lower than the sum of the country totals because the Romania and Moldova totals include people crossing between the two countries.

Sources: IOM, UNHCR

According to IOM estimates based on telephone interviews with a representative sample of internally displaced Ukrainians, over 53% of those displaced are women, and over 60% of displaced households have children.