
Alex Mathers

24 years ago

400 articles later, nobody bothered to read them.

Writing for readers:

14 years of daily writing.

I post practically everything on social media. For years I wrote hundreds of articles, thousands of tweets, and numerous books for almost no one.

Today, tens of thousands of readers regularly praise my work.

I used to despise writing. Now I'm hooked.

I've learned what readers like and what they don't.

Here are some essential guidelines for writing with impact:

Readers won't understand your work if you can't.

Though obvious, this tripped me up. Share your truths.

Stories engage human brains.

Showing the journey of a person from worm to butterfly inspires the human spirit.

Overthinking hinders powerful writing.

The best ideas come from inner understanding in between thoughts.

Avoid writing to find it. Write.

Setting out to write a masterpiece isn't motivating.

Simplify: write for five minutes. Take small, fun, easy steps.

Good writing requires a willingness to make mistakes.

So write loads of garbage that you can edit into a good piece.

Courageous writing.

A courageous story will move readers. Personal experience is best.

Go where few dare.

Templates, outlines, and boundaries help.

Limitations enhance writing.

Excellent writing is straightforward and readable, removing all the unnecessary fat.

Use five words instead of nine.

Use ordinary words instead of uncommon ones.

Readers desire relatability.

Too much perfection will turn them off.

If you can't think of anything to write, write to solve a problem.

Or read for inspiration. The best authors read.

Every tweet, thread, and novel must have a central idea.

What's its point?

Without a clear point, writing becomes confusing.

Don't direct your reader.

Readers quit reading. Demonstrate, describe, and relate.

Even if no one responds, have fun. If you hate writing it, the reader will too.

SAHIL SAPRU

3 years ago

Growth tactics that grew businesses from 1 to 100

Everyone wants a scalable startup.

Innovation helps launch a startup. The secret to a scalable business is growth trials (from 1 to 100).

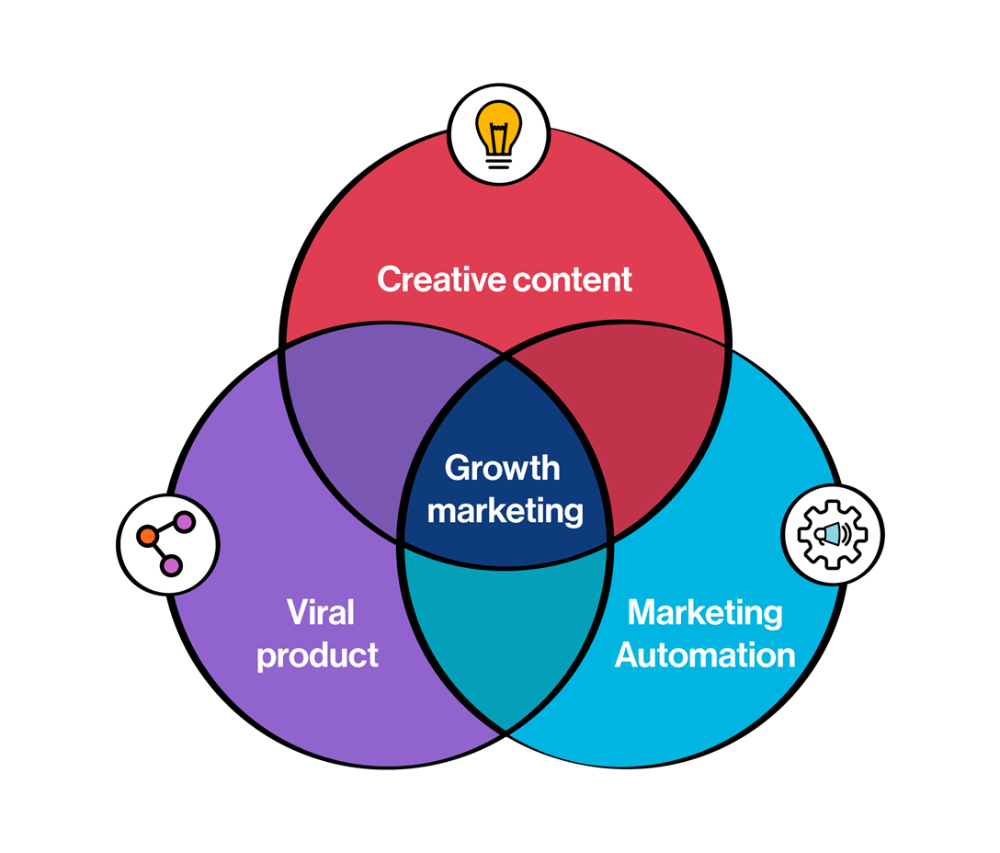

Growth marketing combines marketing and product development for long-term growth.

Today, I'll explain growth hacking strategies popular startups used to scale.

1/ Facebook - A user's social value is proportional to their friends.

Facebook built its user base using content marketing and paid ads. Mark and his investors grew alarmed in 2007 when Facebook's growth stalled at 90 million users.

Mark brought in Chamath Palihapitiya.

The team tested SEO keywords and MAU chasing. Then the growth team introduced “People You May Know.”

This feature reunited long-lost friends and family. Casual users became power users as the retention curve flattened.

Growth Hack Insights: With social network effects, the value of your product or platform increases exponentially when users find people they know or can relate to.

2/ Airbnb - Focus on your value propositions

Airbnb nearly failed in 2009. The company's weekly revenue was $200 and they had less than 2 months of runway.

Enter Paul Graham. The team noticed a pattern in 40 listings. Their website's property photos sucked.

Why?

Because these photos were taken with regular smartphones. Users didn't like the first impression.

Graham suggested traveling to New York to rent a camera, meet with property owners, and replace amateur photos with high-resolution ones.

A week later, the team's weekly revenue doubled to $400, indicating they were on track.

Growth Hack Insights: When selling an “online experience,” ensure that your value proposition is aesthetic enough for users to enjoy being associated with it.

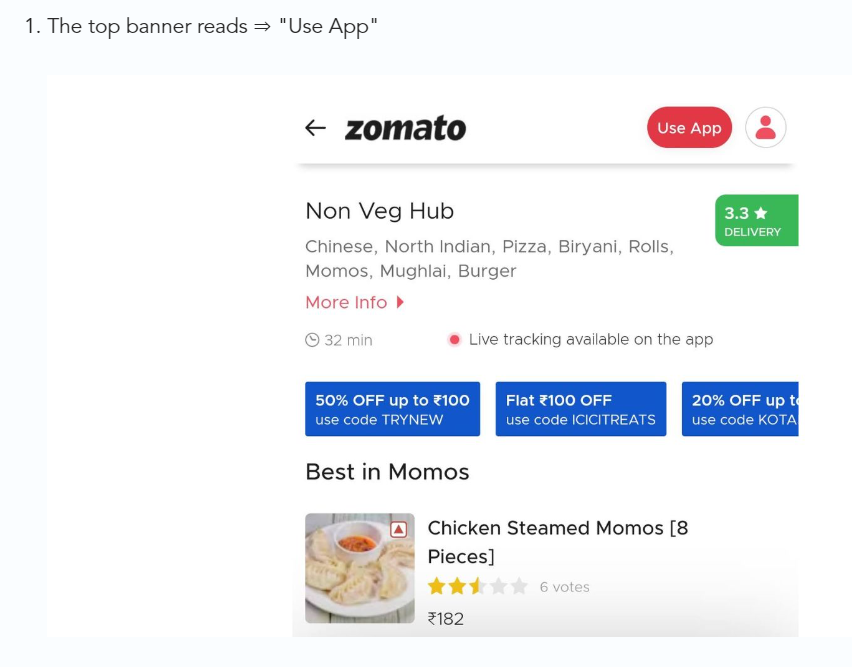

3/ Zomato - A company's smartphone push ensured growth.

Zomato delivers food. User retention was a challenge for the founders. Indian food customers are notorious for switching brands at the drop of a hat.

Zomato wanted users to order food online and repeat orders throughout the week.

Zomato created an attractive website with “near me” keywords for SEO indexing.

Zomato gambled to increase repeat orders. They only allowed mobile app food orders.

Zomato thought mobile apps were stickier. Product innovations in search/discovery/ordering or marketing campaigns like discounts/in-app notifications/nudges can improve user experience.

Zomato went public in 2021 after users kept ordering food online.

Growth Hack Insights: To improve user retention, build platforms that create user stickiness. Your product and marketing teams will do the rest for you.

4/ Hotmail - Signaling helps build premium users.

Ever sent or received an email or tweet with a sign-off like “Sent from iPhone”?

Hotmail did it first! One investor suggested Hotmail add a signature to every email.

Overnight, thousands joined the company. Six months later, the company had 1 million users.

Growth Hack Insights: When serving an existing customer, look for ways to improve their social standing. Signaling helps keep the top 1%.

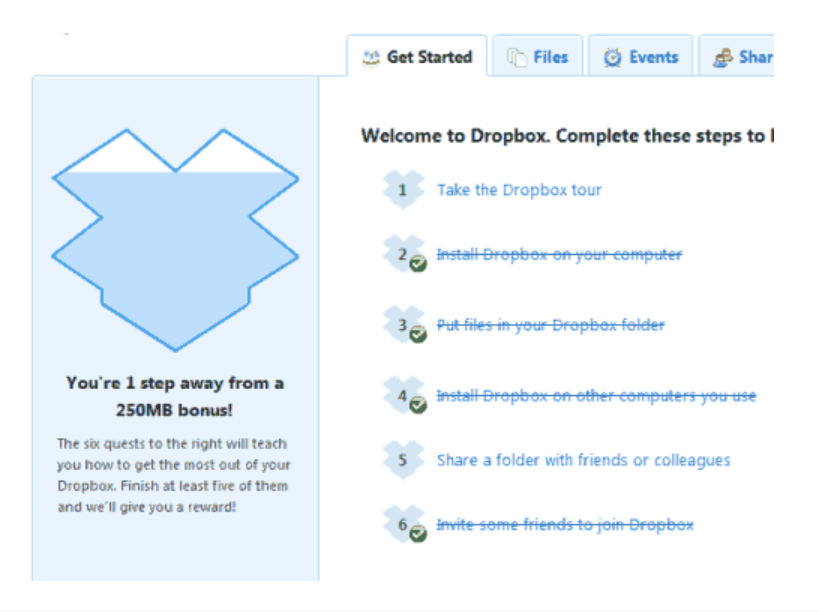

5/ Dropbox - Respect loyal customers

Dropbox is a company that puts people over profits. The company prioritized existing users.

Dropbox rewarded loyal users by offering 250 MB of free storage to anyone who referred a friend. The referral hack helped Dropbox get millions of downloads in its first few months.

Growth Hack Insights: Think of ways to improve the social positioning of your end-user when you are serving an existing customer. Signaling goes a long way in attracting the top 1% to stay.

These experiments weren't hacks. Hundreds of failed experiments and extensive user research stood behind them. Scaling up experiments is difficult.

Contact me if you want to grow your startup's user base.

Sarah Bird

3 years ago

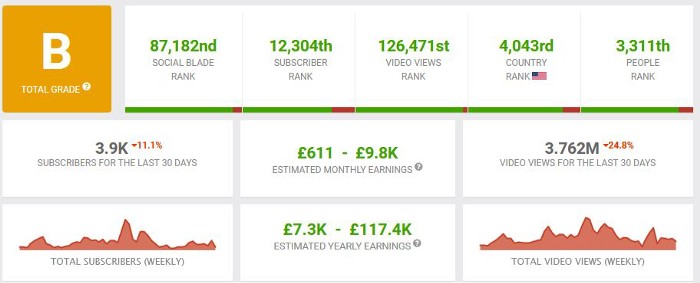

Memes Help This YouTube Channel Earn Over $12k Per Month

Take a look at a YouTube channel making up to $12k or more a month from very simple videos.

And the best part? It's replicable by anyone. Basic videos can be generated for free without design abilities.

Join me as I deconstruct the channel to estimate how much they make, how they do it, and how you can too.

What Do They Do Exactly?

Happy Land posts memes with a simple caption they wrote themselves, so the content is technically new. The videos are slideshows of meme photos set to stock music.

The channel posts 12 times a day.

Each video runs 8-10 minutes and shows each image for 10 seconds, so each video needs 48-60 memes.

Video titles name the meme theme (e.g. times a boyfriend was hilarious, back to school fails, funny restaurant signs).
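The meme count is simple arithmetic: video length in seconds divided by seconds per image. Here is a minimal Python sketch of that calculation. The function name `memes_needed` is only an illustrative label, and the 12-uploads-a-day figure is the posting rate quoted above.

```python
def memes_needed(video_minutes: int, seconds_per_image: int = 10) -> int:
    """Number of images needed to fill a slideshow of the given length."""
    return (video_minutes * 60) // seconds_per_image

UPLOADS_PER_DAY = 12  # posting rate quoted for the channel

per_video = (memes_needed(8), memes_needed(10))
per_day = tuple(n * UPLOADS_PER_DAY for n in per_video)

print(per_video)  # (48, 60)
print(per_day)    # (576, 720)
```

At that pace the channel would need roughly 576-720 memes a day, which suggests that sourcing, not editing, is the real workload.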

Some stats about the channel:

Founded on October 30, 2020

873 videos uploaded

81.8k subscribers

67,244,196 total video views

What Value Are They Adding?

Everyone can find free memes online. This channel collects similar memes into a single video so you don't have to scroll or click for more. It’s right there, you just keep watching and more will come.

By theming it, the audience is prepared for the video's content.

If you want hilarious animal memes or restaurant signs, choose the video and you'll get up to 60 memes without having to look for them. Genius!

How much money do they make?

According to www.socialblade.com, the channel earns $800-$12.8k a month (image shown in my home currency of GBP).

That's a crazy estimate, but it highlights the unbelievable potential of a channel that presents memes.

This channel thrives on quantity, so it has to keep putting out videos to maintain the flow and hold its audience's attention.

How Are the Videos Made?

Straightforward. Memes are added to a presentation without editing (so you could make this in PowerPoint or Keynote).

Each slide should include a unique image and caption. Set 10 seconds per slide.

Add music and post the video.

Finding enough memes for the material and theming is difficult, but if you enjoy memes, this is a fun job.

This case study should have shown you that you don't need expensive software or design expertise to make entertaining videos. Why not try fresh, easy-to-do ideas and see where they lead?


Max Parasol

3 years ago

What the hell is Web3 anyway?

"Web 3.0" is a trendy buzzword with a vague definition. Everyone agrees it has to do with a blockchain-based internet evolution, but what is it?

Yet, the meaning and prospects for Web3 have become hot topics in crypto communities. Big corporations use the term to gain a foothold in the space while avoiding the negative connotations of “crypto.”

But it can't be evaluated without a definition.

Among those criticizing Web3's vagueness is Cobie:

“Despite the dominie's deluge of undistinguished think pieces, nobody really agrees on what Web3 is. Web3 is a scam, the future, tokenizing the world, VC exit liquidity, or just another name for crypto, depending on your tribe.

“Even the crypto community is split on whether Bitcoin is Web3,” he adds.

The phrase was coined by an early crypto thinker, and the community has had years to figure out what it means. Many ideologies and commercial realities have driven reverse engineering.

Web3 is becoming clearer as a concept. It contains ideas. It was probably coined by Ethereum co-founder Gavin Wood in 2014. His definition of Web3 included “trustless transactions” as part of its tech stack. Wood founded the Web3 Foundation and the Polkadot network, a Web3 alternative future.

The 2013 Ethereum white paper had previously allowed devotees to imagine a DAO, for example.

Web3 now covers concepts like decentralized autonomous organizations, sovereign digital identity, censorship-free data storage, and data distributed across multiple servers. They intertwine in discussions about the “Web3” movement and its viability.

These ideas are linked by Cobie's initial Web3 definition. A key component of Web3 should be “ownership of value” for one's own content and data.

Noting that “late-stage capitalism greedcorps that make you buy a fractionalized micropayment NFT on Cardano to operate your electric toothbrush” may build the new web, he notes that “crypto founders are too rich to care anymore.”

Very Important

Many critics of Web3 claim it isn't practical or achievable. Critics like Moxie Marlinspike (creator of sslstrip and Signal/TextSecure) cannot see people ever running their own servers. Early in January, he argued that protocols are more difficult to create than platforms.

While this is true, some projects, like the file storage protocol IPFS, allow users to choose which jurisdictions their data is shared between.

But full decentralization is a difficult problem. Suhaza, replying to Moxie, said:

“People don't want to run servers... Companies are now offering API access to an Ethereum node as a service... Almost all DApps interact with the blockchain using Infura or Alchemy. In fact, when a DApp uses a wallet like MetaMask to interact with the blockchain, MetaMask is just calling Infura!”

So, here are the questions: Web3: Is it a go? Is it truly decentralized?

Web3 history is shaped by Web2 failure.

This is the story of how the Internet was turned upside down...

Then came the vision. Everyone can create content for free. Decentralized open-source believers like Tim Berners-Lee popularized it.

Real-world data trade-offs for content creation and pricing.

A giant Wikipedia page married to a giant Craigslist. No ads, no logins, and a private web carve-up. For free usage, you give up your privacy and data to the algorithmic targeted advertising of Web 2.

Our data is centralized and savaged by giant corporations. Data localization rules and geopolitical walls like China's Great Firewall further fragment the internet.

The decentralized Web3 reflects Berners-Lee's original vision: "No permission is required from a central authority to post anything... there is no central controlling node and thus no single point of failure." Now he runs Solid, a Web3 data storage startup.

So Web3 starts with decentralized servers and data privacy.

Web3 begins with decentralized storage.

Data decentralization is a key feature of the Web3 tech stack. Web2 has closed databases. Large corporations like Facebook, Google, and others go to great lengths to collect, control, and monetize data. We want to change it.

Amazon, Google, Microsoft, Alibaba, and Huawei, according to Gartner, currently control 80% of the global cloud infrastructure market. Web3 wants to change that.

Decentralization enlarges power structures by giving participants a stake in the network. Users own data on open encrypted networks in Web3. This area has many projects.

Projects like Filecoin and IPFS have led the way. Web3 storage providers like Filecoin replicate data across multiple nodes.

But the new tech stack and ideology raise many questions.

Giving users control over their data

According to Ryan Kris, COO of Verida, his “Web3 vision” is “empowering people to control their own data.”

Verida targets SDKs that address issues in the Web3 stack: identity, messaging, personal storage, and data interoperability.

A big app suite? “Yes, but it's a frontier technology,” he says. They are currently building a credentialing system for decentralized health in Bermuda.

By empowering individuals, how will Web3 create a fairer internet? Kris, who has worked in telecoms, finance, cyber security, and blockchain consulting for decades, admits it is difficult:

“The viability of Web3 raises some good business questions,” he adds. “How can users regain control over centralized personal data? How are startups motivated to build products and tools that support this transition? How are existing Web2 companies encouraged to pivot to a Web3 business model to compete with market leaders?”

Kris adds that new technologies have regulatory and practical issues:

"On storage, IPFS is great for redundantly sharing public data, but not designed for securing private personal data. It is not controlled by the users. When data storage in a specific country is not guaranteed, regulatory issues arise."

Each project has varying degrees of decentralization. The diehards say DApps that use centralized storage are no longer “Web3” companies. But fully decentralized technology is hard to build.

Web2.5?

Some argue that we're actually building Web2.5 businesses, which are crypto-native but not fully decentralized. This is vital. For example, the NFT may be on a blockchain, but it is linked to centralized data repositories like OpenSea. A server failure could result in data loss.

However, according to Apollo Capital crypto analyst David Angliss, OpenSea is “not exactly community-led”. Also in 2021, much to the chagrin of crypto enthusiasts, OpenSea tried and failed to list on the Nasdaq.

This is where Web2.5 is defined.

“Web3 isn't a crypto segment. Anything that uses a blockchain for censorship resistance is Web3,” Angliss tells us.

“Web3 gives users control over their data and identity. This is not possible in Web2.”

“Web2 is like feudalism, with walled-off ecosystems ruled by a few. For example, an honest user owned the Instagram account “Meta,” which Facebook rebranded and then had to make up a reason to suspend. Not anymore with Web3. If I buy ‘Ethereum.ens,' Ethereum cannot take it away from me.”

Angliss uses OpenSea as a Web2.5 business example. Being too decentralized, i.e. censorship resistant, can be unprofitable for a large company like OpenSea. For example, OpenSea “enables NFT trading,” but it also stopped the sale of stolen Bored Apes.

Web3 (or Web2.5, depending on the context) has been described as a new way to privatize the internet.

“Being in the crypto ecosystem doesn't make it Web3,” Angliss says. The biggest risk is centralized closed ecosystems rather than a growing Web3.

LooksRare and OpenDAO are two community-led platforms that are more decentralized than OpenSea. LooksRare has even been “vampire attacking” OpenSea, indicating a Web3 competitor to the Web2.5 NFT king could find favor.

The addition of a token gives these new NFT platforms more options for building customer loyalty. For example, OpenSea charges a fee that goes nowhere. Stakers of LOOKS tokens earn 100% of the trading fees charged by LooksRare on every basic sale.
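To make the fee-sharing idea concrete, here is a toy sketch of splitting a pool of trading fees among token stakers in proportion to their stake. The pro-rata rule, the function name `split_fees`, and the sample numbers are all assumptions for illustration. This is not LooksRare's actual contract logic.

```python
from fractions import Fraction

def split_fees(fee_pool: int, stakes: dict[str, int]) -> dict[str, Fraction]:
    """Share fee_pool among stakers pro rata to the tokens each has staked."""
    total = sum(stakes.values())
    if total == 0:
        # Nothing staked: nobody receives a payout.
        return {name: Fraction(0) for name in stakes}
    return {name: Fraction(fee_pool * amount, total) for name, amount in stakes.items()}

# Hypothetical stakers and fee pool, in arbitrary units.
payouts = split_fees(100, {"alice": 3, "bob": 1})
print(payouts["alice"], payouts["bob"])  # 75 25
```

Under this assumed rule the whole pool flows back to participants, which is the contrast the article draws with a platform fee that "goes nowhere."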

Maybe Web3's time has come.

So whose data is it?

Continuing criticisms of Web3 platforms' decentralization may indicate we're too early. Users want to own and store their in-game assets and NFTs on decentralized platforms like the Metaverse and play-to-earn games. Start-ups like Arweave, Sia, and Aleph.im propose an alternative.

To be truly decentralized, Web3 requires new off-chain models that sidestep cloud computing and Web2.5.

“Arweave and Sia emerged as formidable competitors this year,” says the Messari Report. They seek to reduce the risk of an NFT being lost due to a data breach on a centralized server.

Aleph.im, another Web3 cloud competitor, seeks to replace cloud computing with a service network. It is a decentralized computing network that supports multiple blockchains by retrieving and encrypting data.

“The Aleph.im network provides a truly decentralized alternative where it is most needed: storage and computing,” says Johnathan Schemoul, founder of Aleph.im. For reasons of consensus and security, blockchains are not designed for large storage or high-performance computing.

As a result, large data sets are frequently stored off-chain, increasing the risk for centralized databases like OpenSea.

Aleph.im enables users to own digital assets using both blockchains and off-chain decentralized cloud technologies.

“We need to go beyond layer 0 and 1 to build a robust decentralized web. The Aleph.im ecosystem is proving that Web3 can be decentralized, and we intend to keep going.”

Aleph.im raised $10 million in mid-January 2022, and Ubisoft uses its network for NFT storage. This is the first time a big-budget gaming studio has given users this much control.

It also suggests Web3 could work as a B2B model, even if consumers aren't concerned about “decentralization.” Starting with gaming is common.

Can Tokenomics help Web3 adoption?

Web3 consumer adoption is another story. The average user may not be interested in all this decentralization talk. Still, how much do people value privacy over convenience? Can tokenomics solve the privacy vs. convenience dilemma?

Holon Global Investments' Jonathan Hooker tells us that human internet behavior will change. “Do you own Bitcoin?” he asks in his Web3 explanation. How does it feel to own and control your own sovereign wealth? Then:

“What if you could own and control your data like Bitcoin?”

“The business model must find what that person values,” he says. Putting their own health records on centralized systems they don't control?

“How vital are those medical records to that person at a critical time anywhere in the world? Filecoin and IPFS can help.”

Web3 adoption depends on NFT storage competition. A free off-chain storage of NFT metadata and assets was launched by Filecoin in April 2021.

Decentralization and blockchain technology have significant implications for data ownership and compensation for lending, staking, and using data.

Tokenomics can change human behavior, but many people simply sign into Web2 apps using a Facebook API without hesitation. Our data is already owned by Google, Baidu, Tencent, and Facebook (and its parent company Meta). Is it too late to recover?

Maybe. “Data is like fruit, it starts out fresh but ages,” he says. “Big Tech's data on us will expire.”

Web3 founder Kris agrees with Hooker that “value for data is the issue, not privacy.” People accept losing their data privacy, so tokenize it. People readily give up data, so why not pay for it?

“Personalized data offering is valuable in personalization. I will sell my social media data but not my health data.”

Purists and mass consumer adoption struggle with key management.

Others question data tokenomics' optimism. While acknowledging its potential, Box founder Aaron Levie questioned the viability of Web3 models in a Tweet thread:

“Why? Because data almost always works in an app. A product and APIs that moved quickly to build value and trust over time.”

Levie contends that tokenomics may complicate matters. In addition to community governance and tokenomics, Web3 ideals likely add a new negotiation vector.

“These are hard problems about human coordination, not software or blockchains,” he writes. Using a Facebook API is simple. The business model and user interface are crucial.

For example, the crypto faithful have a common misconception about logging into Web3. It goes like this: Web 1 had usernames and passwords. Web 2 uses Google, Facebook, or Twitter APIs, while Web 3 uses your wallet. Pay with Ethereum on MetaMask, for example.

But Levie is correct. Blockchain key management is stressed in this meme. Even seasoned crypto enthusiasts have heart attacks, let alone newbies.

Web3 requires a better user experience, according to Kris, the company's founder. “How does a user recover keys?”

And at this point, no solution is likely to be completely decentralized. So Web3 key management can be improved. “The moment someone loses control of their keys, Web3 ceases to exist.”

That leaves a major issue for Web3 purists. Put this one in the too-hard basket.

Is 2022 the Year of Web3?

Web3 must first solve a number of issues before it can be mainstreamed. It must be better and cheaper than Web2.5, or have other significant advantages.

Web3 aims for scalability without sacrificing decentralization protocols. But decentralization is difficult and centralized services are more convenient.

Ethereum co-founder Vitalik Buterin himself stated recently:

“This is why (centralized) Binance to Binance transactions trump Ethereum payments in some places because they don't have to be verified 12 times.”

“I do think a lot of people care about decentralization, but they're not going to take decentralization if decentralization costs $8 per transaction,” he continued.

“Blockchains need to be affordable for people to use them in mainstream applications... Not for 2014 whales, but for today's users.”

For now, scalability, tokenomics, mainstream adoption, and decentralization believers seem to be holding Web3 hostage.

Much like crypto's past.

But stay tuned.

Looi Qin En

3 years ago

I polled 52 product managers to find out what qualities make a great Product Manager

Great technology opens up a universe of possibilities.

Need to reach a friend? WhatsApp, Telegram, Slack, etc.

Traveling? AirBnB, Expedia, Google Flights, etc.

Money transfer? Use digital banking, e-wallet, or crypto applications.

Products inspire us. How do we become great?

I asked product managers in my network:

What does it take to be a great product manager?

52 product managers from 40+ prominent tech companies in Southeast Asia responded passionately. Many of the PMs I've worked with have built fantastic products, from unicorns (Lazada, Tokopedia, Ovo) to incumbents (Google, PayPal, Experian, WarnerMedia) to growing companies (etaily, Nium, Shipper).

TL;DR:

Soft skills are more important than hard skills. Product managers hardly ever stressed technical expertise, while empathy was mentioned more than ten times. Janani from Xendit captured it well: a great PM must understand that their empathy for their users' emotions must exceed all logic and data.

Constant focus on the user's needs. Many agree that the closer a PM gets to their customer or user, the better the outcome is likely to be. There were almost 30 references to customers and users. Rajesh from Lazada puts it best: the advantage of focusing on customers is that it's impossible to overshoot.

Prioritization is invaluable. It is essential because a PM deals with so many problems every day. My favorite quote on this is from Yee Jie of Rakuten Viki: a good product manager puts out fires; a great product manager lets fires burn and prioritizes from there.

This summary isn't enough to capture what great PMs say it takes. Read on below!

What qualities make a successful product manager?

Quotes are grouped by theme and alphabetized by author.

Embrace your user/customer

Aeriel Dela Paz, Rainmaking Venture Architect, ex-GCash Product Head

Great PMs know what customers need even when they don’t say it directly. It’s about reading between the lines and going through the numbers to address that need.

Anders Nordahl, OrkestraSCS's Product Manager

Understanding the vision of your customer is as important as to get the customer to buy your vision

Angel Mendoza, MetaverseGo's Product Head

Most people think that to be a great product manager, you must have technical know-how. It’s textbook and I do think it is helpful to some extent, but for me the secret sauce is EMPATHY — the ability to see and feel things from someone else’s perspective. You can’t create a solution without deeply understanding the problem.

Senior Product Manager, Tokopedia

Focus on delivering value and helping people (consumer as well as colleague) and everything else will follow

Darren Lau, Deloitte Digital's Head of Customer Experience

Start with the users, and work backwards. Don’t have a solution looking for a problem

Darryl Tan, Grab Product Manager

I would say that a great product manager is able to identify the crucial problems to solve through strong user empathy and synthesis of insights

Diego Perdana, Kitalulus Senior Product Manager

I think to be a great product manager you need to be obsessed with customer problems and most important is solve the right problem with the right solution

Senior Product Manager, AirAsia

Lot of common sense + Customer Obsession. The most important role of a Product manager is to bring clarity of a solution. Your product is good if it solves customer problems. Your product is great if it solves an eco-system problem and disrupts the business in a positive way.

Edward Xie, Mastercard Managing Consultant, ex-Shopee Product Manager

Perfect your product, but be prepared to compromise for right users

AVP Product, Shipper

For me, a great product manager need to be rational enough to find the business opportunities while obsessing the customers.

Janani Gopalakrishnan, Senior Product Manager at a stealth company

While as a good PM it’s important to be data-driven, to be a great PM one needs to understand that their empathy for their users’ emotions must exceed all logic and data. Great PMs also make these product discussions thrive within the team by intently listening to all the members thoughts and influence the team’s skin in the game positively.

Director, Product Management, Indeed

Great product managers put their users first. They discover problems that matter most to their users and inspire their team to find creative solutions.

Grab's Senior Product Manager Lakshay Kalra

Product management is all about finding and solving most important user problems

Quipper's Mega Puji Saraswati

First of all, always remember the value of “user first” to solve what user really needs (the main problem) for guidance to arrange the task priority and develop new ideas. Second, ownership. Treat the product as your “2nd baby”, and the team as your “2nd family”. Third, maintain a good communication, both horizontally and vertically. But on top of those, always remember to have a work — life balance, and know exactly the priority in life :)

Senior Product Manager, Prosa.AI Miswanto Miswanto

A great Product Manager is someone who can be the link between customer needs with the readiness and flexibility of the team. So that it can provide, build, and produce a product that is useful and helps the community to carry out their daily activities. And He/She can improve product quality ongoing basis or continuous to help provide solutions for users or our customer.

Lead Product Manager, Tokopedia, Oriza Wahyu Utami

Be a great listener, be curious and be determined. every great product manager have the ability to listen the pain points and understand the problems, they are always curious on the users feedback, and they also very determined to look for the solutions that benefited users and the business.

99 Group CPO Rajesh Sangati

The advantage of focusing on customers: it’s impossible to overshoot

Ray Jang, founder of Scenius, formerly of ByteDance

The difference between good and great product managers is that great product managers are willing to go the unsexy and unglamorous extra mile by rolling up their sleeves and ironing out all minutiae details of the product such that when the user uses the product, they can’t help but say “This was made for me.”

BCG Digital Ventures' Sid Narayanan

Great product managers ensure that what gets built and shipped is at the intersection of what creates value for the customer and for the business that’s building the product…often times, especially in today’s highly liquid funding environment, the unit economics, aka ensuring that what gets shipped creates value for the business and is sustainable, gets overlooked

Stephanie Brownlee, BCG Digital Ventures Product Manager

There is software in the world that does more harm than good to people and society. Great Product Managers build products that solve problems not create problems

Experiment constantly

Delivery Hero's Abhishek Muralidharan

Embracing your failure is the key to become a great Product Manager

DeliveryHero's Anuraag Burman

Product Managers should be thick skinned to deal with criticism and the stomach to take risk and face failures.

DataSpark Product Head Apurva Lawale

Great product managers enjoy the creative process with their team to deliver intuitive user experiences to benefit users.

Dexter Zhuang, Xendit Product Manager

The key to creating winning products is building what customers want as quickly as you can — testing and learning along the way.

PayPal's Jay Ko

To me, great product managers always remain relentlessly curious. They are empathetic leaders and problem solvers that glean customer insights into building impactful products

Home Credit Philippines' Jedd Flores

Great Product Managers are the best dreamers; they think of what can be possible for the customers, for the company and the positive impact that it will have in the industry that they’re part of

Set priorities, first and foremost

HBO Go Product Manager Akshay Ishwar

Good product managers strive to balance the signal to noise ratio, Great product managers know when to turn the dials for each up exactly

Zuellig Pharma's Guojie Su

Have the courage to say no. Managing egos and request is never easy and rejecting them makes it harder but necessary to deliver the best value for the customers.

Ninja Van's John Prawira

(1) PMs should be able to ruthlessly prioritize. In order to be effective, PMs should anchor their product development process with their north stars (success metrics) and always communicate with a purpose. (2) User-first when validating assumptions. PMs should validate assumptions early and often to manage risk when leading initiatives with a focus on generating the highest impact to solving a particular user pain-point. We can’t expect a product/feature launch to be perfect (there might be bugs or we might not achieve our success metric — which is where iteration comes in), but we should try our best to optimize on user-experience earlier on.

Nium Product Manager Keika Sugiyama

I’d say a great PM holds the ability to balance ruthlessness and empathy at the same time. It’s easier said than done for sure!

ShopBack product manager Li Cai

Great product managers are like great Directors of movies. They do not create great products/movies by themselves. They deliver it by Defining, Prioritising, Energising the team to deliver what customers love.

Quincus' Michael Lim

A great product manager, keeps a pulse on the company’s big picture, identifies key problems, and discerns its rightful prioritization, is able to switch between the macro perspective to micro specifics, and communicates concisely with humility that influences naturally for execution

Mathieu François-Barseghian, SVP, Citi Ventures

“You ship your org chart”. This is the short version of Conway’s Law (1967!): the fundamental socio-technical driver behind innovation successes (Netflix) and failures (your typical bank). The hype behind micro-services is just another reflection of Conway’s Law.

Mastercard's Regional Product Manager Nikhil Moorthy

A great PM should always look to build products that are scalable and viable, and always keep the end consumer journey in mind. Keeping things simple and taking an MVP-based approach helps roll out products faster. One has to test, learn, and then enhance or adapt accordingly; these are key to success.

Rendy Andi, Tokopedia Product Manager

Articulate a clear vision and the path to get there, Create a process that delivers the best results and Be serious about customers.

Senior Product Manager, DANA Indonesia

Own the problem, not the solution — Great PMs are outstanding problem preventers. Great PMs are discerning about which problems to prevent, which problems to solve, and which problems not to solve

Tat Leong Seah, LionsBot International Senior UX Engineer, ex-ViSenze Product Manager

“Prioritize outcomes for your users, not outputs of your system” or more succinctly “be agile in delivering value; not features”

Senior Product Manager, Rakuten Viki

A good product manager puts out fires. A great product manager lets fires burn and prioritizes from there.

Acquire fundamental soft skills.

Oracle NetSuite's Astrid April Dominguez

Personally, I believe that it takes grit, empathy, and an optimistic mindset to become a great PM.

Ovo Lead Product Manager Boy Al Idrus

Contrary to popular belief, being a great product manager has little to do with technical skills. They play a part, but the most important weapons are understanding users' pain points, project management, sympathy in leadership, and business-critical skills. These four aspects would definitely help you become a great product manager.

PwC Product Manager Eric Koh

Product managers need to be courageous to be successful. Courage is required to dive deep and solve big problems at their root, and also to think far ahead and dream big to achieve bold visions for your product.

Ninja Van's Product Director

In my opinion, the two most important ingredients for becoming a successful product manager are: 1. Strong critical thinking 2. Strong passion for the work. As product managers, we typically need to solve very complex problems where the answers are often very ambiguous. The work is tough and at times can be really frustrating. The two ingredients I mentioned will be critical in helping you slowly discover the solution that may become a game changer.

PayPal's Lead Product Manager

A great PM has the eye of a designer, the brain of an engineer, and the tongue of a diplomat.

Product Manager Irene Chan

A great Product Manager is able to think like a CEO of the company. Visionary with Agile Execution in mind

Isabella Yamin, Rakuten Viki Product Manager

There is no one model of being a great product person but what I’ve observed from people I’ve had the privilege working with is an overflowing passion for the user problem, sprinkled with a knack for data and negotiation

Google product manager Jachin Cheng

Great product managers start with abundant intellectual curiosity and grow into a classic T-shape. Horizontally: generalists who range widely, communicate fluidly and collaborate easily cross-functionally, connect unexpected dots, and have the pulse both internally and externally across users, stakeholders, and ecosystem players. Vertically: deep product craftsmanship comes from connecting relentless user obsession with storytelling, business strategy with detailed features and execution, inspiring leadership with risk mitigation, and applying the most relevant tools to solving the right problems.

Jene Lim, Experian's Product Manager

3 Cs and 3 Rs. Critical thinking , Customer empathy, Creativity. Resourcefulness, Resilience, Results orientation.

Nirenj George, Envision Digital's Security Product Manager

A great product manager is someone who can lead, collaborate and influence different stakeholders around the product vision, and should be able to execute the product strategy based on customer insights, as well as take ownership of the product roadmap to create a greater impact on customers.

Grab's Lead Product Manager

Product Management is a multi-dimensional role that looks very different across product teams, so each product manager has different challenges to deal with. What I have found common among great product managers is the ability to create leverage through their efforts to drive outsized impact for their products. This leverage is built by combining data with intuition, building consensus with stakeholders, empowering their teams, and focusing effort on needle-moving work.

NCS Product Manager Umar Masagos

To be a great product manager, one must master both the science and art of Product Management. On one hand, you need to have a strong understanding of the tools, metrics and data you need to drive your product. On the other hand, you need an in-depth understanding of your organization, your target market and target users, which is often the more challenging aspect to master.

M1 product manager Wei Jiao Keong

A great product manager is multi-faceted. First, you need to have the ability to see the bigger picture, yet have a keen eye for detail. Second, you are empathetic and able to deliver products with exceptional user experience while being analytical enough to achieve business outcomes. Lastly, you are highly resourceful and independent yet comfortable working cross-functionally.

Yudha Utomo, ex-Senior Product Manager, Tokopedia

A great Product Manager is essentially an effective note-taker. To achieve the product goals, it is the PM’s job to ensure that objectives have been clearly conveyed, efforts are assessed, and tasks are properly tracked and managed. A PM can do this by having top-notch documentation skills.

Nir Zicherman

3 years ago

The Great Organizational Conundrum

Only two of the following three options can be achieved: consistency, availability, and partition tolerance

Someone told me that growing from 30 to 60 people is the biggest adjustment for a team or business.

I remember thinking, That's random. Each company is unique. But since then I've seen teams of all types confront the same issues during periods of growth. With new enterprises starting every year, we should be better at navigating growing pains.

As a team grows, its processes and systems break down, forcing either a reorganization or a decline in results. Why does this always happen? Why isn't there a perfect scaling model? Why hasn't one been found?

The Three Things Productive Organizations Must Have

Any company should be efficient and productive. Three items are needed:

First, it must ensure that no two team members have conflicting information about the roadmap, strategy, or any input that could affect execution. It requires consistency.

Second, it must ensure that everyone can get the information they need from everyone else quickly, especially as teams become more specialized (an inevitability in a growing organization). It requires availability.

Third, it must ensure that the organization can keep operating efficiently even if one piece of it is unavailable. It requires partition tolerance.

From my experience with the many teams I've been on, invested in, or advised, achieving all three is nearly impossible. A closer look makes it clear why a perfect organizational model cannot exist.

The CAP Theorem: What is it?

Eric Brewer of Berkeley formulated the CAP Theorem, which states that there are three properties a distributed data store would ideally have, but that it can only have two of them at once.

The three properties are consistency, availability, and partition tolerance (meaning that even if part of the system is offline, the remainder continues to work).
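The trade-off can be seen in a few lines of code. What follows is only a minimal sketch, assuming a toy store with two replicas; the names `TwoReplicaStore`, `Unavailable`, and `run_scenario`, and the "CP"/"AP" mode labels, are hypothetical and invented for this illustration. During a partition, the store must either refuse a write (giving up availability) or accept it on one replica only (giving up consistency).

```python
from dataclasses import dataclass


class Unavailable(Exception):
    """The store refuses a request instead of risking inconsistency."""


@dataclass
class TwoReplicaStore:
    mode: str                  # "CP" or "AP" (hypothetical labels for this sketch)
    a: str = "v1"              # value held by replica A
    b: str = "v1"              # value held by replica B
    partitioned: bool = False  # True when A and B cannot reach each other

    def write(self, value: str) -> None:
        """Accept a write at replica A and copy it to B when possible."""
        if self.partitioned and self.mode == "CP":
            # Consistency first: refuse the write, giving up availability.
            raise Unavailable("replica B is unreachable")
        self.a = value
        if not self.partitioned:
            self.b = value
        # In AP mode during a partition, B keeps its old (stale) value.


def run_scenario(mode: str) -> dict:
    """Cut the link between replicas, attempt one write, report what happened."""
    store = TwoReplicaStore(mode=mode)
    store.partitioned = True
    try:
        store.write("v2")
        accepted = True
    except Unavailable:
        accepted = False
    return {"write_accepted": accepted, "replicas_agree": store.a == store.b}
```

Under these assumptions, `run_scenario("CP")` reports a rejected write with the replicas still in agreement, while `run_scenario("AP")` reports an accepted write with replicas that now disagree. Neither mode gets both.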

This notion is usually applied to computer science, but I've realized it's also true for human organizations. In a post-COVID world, many organizations are hiring non-co-located staff as they grow, which makes the CAP Theorem more relevant than ever. Growing teams sometimes think they can find ways around this law, and in doing so doom themselves to a less-than-optimal team dynamic. They should instead accept CAP and maximize productivity within it.

Path 1: Consistency and Availability = No Partition Tolerance

Let's imagine you want your team to always be in sync (i.e., for someone to be the source of truth for the latest information) and to be able to share information with each other. The only way to do that is to divide the team into specialized domains.

Many growing organizations do this, especially after the early stage (say, 30 people), when everyone may wear many hats and be aware of all the moving parts. After a certain point, it's tougher to keep generalists aligned than to divide them into specialized roles.

In a specialized, segmented team, leaders optimize consistency and availability (i.e. every function is up-to-speed on the latest strategy, no one is out of sync, and everyone is able to unblock and inform everyone else).

Partition tolerance suffers. If any component of the organization breaks down (someone goes on vacation, quits, underperforms, or Gmail or Slack goes down), productivity stops. There's no way to give the team consistency, availability, and smooth operation during a hiccup.

Path 2: Partition Tolerance and Availability = No Consistency

Some businesses avoid relying too heavily on any one person or sub-team by maximizing availability and partition tolerance (the organization continues to function as a whole even if particular components fail). Only redundancy can do that. Instead of specializing each member, the team spreads expertise so people can work in parallel. I switched from Path 1 to Path 2 because I realized too much reliance on one person is risky.

What is the cost of redundancy? Inconsistency. The more people can work independently and in parallel, the less any one person can be the source of truth. Without alignment or up-to-date information, people end up executing slightly different strategies, and resources are squandered on the wrong work.

Path 3: Partition Tolerance and Consistency = No Availability

The third, least-used path stresses partition tolerance and consistency (meaning answers are always correct and up-to-date). In this organizational style, what matters most is keeping the system operating and keeping everyone aligned. No one is allowed to read anything without an assurance that it's up-to-date (i.e. there’s no availability).

This path is always short-lived. In my experience, a business that prioritizes correctness and resilience over fast information sharing can get bogged down in heavy processes that hinder productivity. At scale, this is unsustainable.

Accepting CAP

When two puzzle pieces fit, the third won't. I've watched growing teams try to tackle these difficulties, only to find, as their predecessors did, that they can never be entirely solved. Idealized solutions fail in reality, causing wasted effort, confusion, and lower productivity.

As teams grow and change, they should embrace CAP, acknowledge that there is a limit to productivity in a scaling business, and choose the best two-out-of-three path.