
Teronie Donalson

3 years ago

The best financial advice I've ever received and how you can use it.

Taking great financial advice is key to financial success.

A wealthy man told me to INVEST MY MONEY when I was young.

As I entered Starbucks, an older man was leaving. I noticed his watch and expensive-looking shirt, not like the guy in the photo, but one made of fine fabric like vicuna wool, which can only be shorn every two to three years. His Bentley confirmed my suspicions about his wealth.

This guy looked like James Bond, so I asked him how to get rich like him.

"Drug dealer?" he laughed.

Whether he was telling the truth, I'll never know, and I didn't want to be an accessory, but he quickly added, "Kid, invest your money; it will do wonders." He left.

When he told me to invest, he didn't say what. Later, I realized the investment game has so many levels that even if he drew me a blueprint, I wouldn't understand it.

The best advice I received was to invest my earnings. I must decide where to invest.

I'll preface by saying I'm not a financial advisor or Your financial advisor, but I'll share what I've learned from books, links, and sources. The rest is up to you.

Basically:

Invest your Money

Money is money, whether you call it cake, dough, moolah, benjamins, paper, bread, etc.

If you're lucky, you can buy one of the gold shirts in the photo.

Investing your money today means putting it towards anything that could be profitable.

According to the website Investopedia:

“Investing is allocating money to generate income or profit.”

You can invest in a business, real estate, or a skill that will pay off later.

Everyone has different goals and wants at different stages of life, so investing varies.

He was probably a sugar daddy with his Bentley, nice shirt, and Rolex.

In my twenties, I started making "good" money; now, in my forties, with a family and three kids, I'm building a legacy for my grandkids.

“It’s not how much money you make, but how much money you keep, how hard it works for you, and how many generations you keep it for.” — Robert Kiyosaki.

Money isn't evil, but lack of it is.

Financial stress is a major source of problems, according to studies.

Being broke hurts, especially if you want to provide for your family or do things.

“An investment in knowledge pays the best interest.” — Benjamin Franklin.

Investing in knowledge is invaluable. Before investing, do your homework.

You probably didn't learn about investing when you were young, like I didn't. My parents were in survival mode, making investing difficult.

In my 20s, I worked in banking to better understand money.

So, why invest?

Growth requires investment.

Investing puts money to work and can build wealth. Your money may outpace inflation with smart investing. Compounding and the risk-return tradeoff boost investment growth.

Investing your money means you won't have to work forever — unless you want to.

Two common ways to make money are:

-working hard,

and

-interest or capital gains from investments.

Capital gains can help you invest.

“How many millionaires do you know who have become wealthy by investing in savings accounts? I rest my case.” — Robert G. Allen

If you keep your money in a savings account, you'll earn less than 2% interest at best; the bank makes money by loaning it out.

Savings accounts are a safe bet, but the low interest rates limit your gains.

Don't skip it. An emergency fund should be in a savings account, not the market.

Other reasons to invest:

Investing can generate regular income.

If you own rental properties, the tenant's rent will add to your cash flow.

Daily, weekly, or monthly rentals (think Airbnb) generate higher returns year-round.

If you own dividend-paying or appreciating stock, long-term capital gains and qualified dividends are generally taxed at lower rates than earned income in the US.

Time is on your side

“Compound interest is the eighth wonder of the world. He who understands it, earns it; he who doesn’t — pays it.” — Albert Einstein

The earlier you start, the longer compound interest has to work. Money invested in your twenties can compound over decades, while money first invested at 45 has far less time to earn.

If I had taken that man's advice and invested in my twenties, I would have made a decent return by my thirties. (Depending on my investments)
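To make the effect of time concrete, here is a minimal sketch of compound growth on a single lump sum. The 7% annual return, the $10,000 amount, and the retirement age of 65 are hypothetical assumptions chosen for illustration, not figures from this article or a forecast.

```python
def future_value(principal: float, annual_rate: float, years: int) -> float:
    """Value of a lump sum after compounding once per year."""
    return principal * (1 + annual_rate) ** years


# Hypothetical inputs (assumptions, not advice): one $10,000 investment,
# a constant 7% annual return, and cashing out at age 65.
ASSUMED_RATE = 0.07
RETIREMENT_AGE = 65

early = future_value(10_000, ASSUMED_RATE, RETIREMENT_AGE - 25)
late = future_value(10_000, ASSUMED_RATE, RETIREMENT_AGE - 45)

print(f"Invested at 25: ${early:,.0f}")
print(f"Invested at 45: ${late:,.0f}")
print(f"The early investment ends up {early / late:.1f}x larger")
```

Real returns vary from year to year and can be negative, so treat the output as an illustration of the formula rather than a prediction.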

So for those who live a YOLO (you only live once) life, investing can't hurt.

Investing increases your knowledge.

Lessons are clearer when you're invested. Each win boosts confidence and draws attention to losses. Losing money prompts you to investigate.

Before investing, I read many financial books, but I didn't understand them until I invested.

Now what?

What do you invest in? Equities, mutual funds, ETFs, retirement accounts, savings, business, real estate, cryptocurrencies, marijuana, insurance, etc.

The key is to start somewhere. Know you don't know everything. You must care.

“A journey of a thousand miles must begin with a single step.” — Lao Tzu.

Start simple because there's so much information. My first investment book was:

Robert Kiyosaki's "Rich Dad, Poor Dad"

This easy-to-read book made me hungry for more. This book is about the money lessons rich parents teach their children, which poor and middle-class parents neglect. The poor and middle-class work for money, while the rich let their assets work for them, says Kiyosaki.

There is so much to learn, but you gotta start somewhere.

More books:

Wisdom

I don't want to suggest that investing makes everything rosy. Remember three rules:

1. Losing money is possible.

2. Losing money is possible.

3. Losing money is possible.

You can lose money, so be careful.

Read, research, invest.

Golden rules for Investing your money

Never invest money you can't afford to lose.

Financial freedom is possible regardless of income.

"Courage taught me that any sound investment will pay off, no matter how bad a crisis gets." Carlos Slim Helú

"I'll tell you Wall Street's secret to wealth. When others are afraid, you're greedy. You're afraid when others are greedy." Warren Buffett

Buy low, sell high, and have an exit strategy.

Ask experts or wealthy people for advice.

"With a good understanding of history, we can have a clear vision of the future." Carlos Slim Helú

"It's not whether you're right or wrong, but how much money you make when you're right." Soros

"The individual investor should act as an investor, not a speculator." Graham

"It's different this time" is the most dangerous investment phrase. Templeton

Lastly,

Avoid quick-money schemes. Building wealth takes years, not months.

Start small and work your way up.

Thanks for reading!

This post is a summary.

Leon Ho

3 years ago

Digital Brainbuilding (Your Second Brain)

The human brain is amazing. As more scientists examine the brain, we learn how much it can store.

The human brain has 1 billion neurons, according to Scientific American. Each neuron creates 1,000 connections, totaling over a trillion. If each neuron could store one memory, we'd run out of room. [1]

What if you could store and access more info, freeing up brain space for problem-solving and creativity?

Build a second brain (what I refer to as a Digital Brain) to keep up with the growing volume of knowledge. Managing information effectively starts with accepting that you can't recall everything.

Every action requires information. You need the correct information to learn a new skill, complete a project at work, or establish a business. You must manage information properly to advance your profession and improve your life.

Here is how to construct a second brain to organize information and achieve your goals.

What Is a Second Brain?

How often do you forget an article or book's key point? Have you ever wasted hours looking for a saved file?

If so, you're not alone. Information overload affects millions of individuals worldwide. Information overload drains mental resources and causes anxiety.

This is where the second brain comes in.

Building a second brain doesn't involve duplicating the human brain. It means building a system that captures, organizes, retrieves, and archives ideas and thoughts. The second brain improves memory, organization, and recall.

Digital tools are preferable to analog for building a second brain.

Digital tools are portable and accessible. Due to these benefits, we'll focus on digital second-brain building.

Brainware

A Digital Brain is like an external hard drive. It stores, organizes, and retrieves information. This means improving your memory won't be difficult.

Memory has three components in computing:

Recording — storing the information

Organization — archiving it in a logical manner

Recall — retrieving it again when you need it

For example:

Due to rigorous security settings, many websites need you to create complicated passwords with special characters.

You must now memorize (Record), organize (Organize), and input this new password the next time you check in (Recall).

Even in this simple example, there are many pieces to remember. We can't recognize this new password with our usual patterns. If we don't use the password every day, we'll forget it. You'll type the wrong password when you try to remember it.

It's common. Is it because the information is complicated? Nope. Passwords are basically letters, numbers, and symbols.

It happens because our brains aren't meant to memorize these. Digital Brains can do heavy lifting.
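The record, organize, and recall steps described above can be sketched as a toy in-memory store. This is only an illustration: the `DigitalBrain` and `Note` names and their methods are hypothetical, not part of any real note-taking tool, and a real system would persist data and should never keep passwords in plain text.

```python
from dataclasses import dataclass, field


@dataclass
class Note:
    text: str
    tags: set[str] = field(default_factory=set)


class DigitalBrain:
    """Hypothetical toy store illustrating record / organize / recall."""

    def __init__(self) -> None:
        self._notes: list[Note] = []

    def record(self, text: str) -> Note:
        # Recording: capture the information as soon as it appears.
        note = Note(text)
        self._notes.append(note)
        return note

    def organize(self, note: Note, *tags: str) -> None:
        # Organization: attach lowercase tags so related notes group together.
        note.tags.update(tag.lower() for tag in tags)

    def recall(self, query: str) -> list[Note]:
        # Recall: match the query against note text or an exact tag.
        q = query.lower()
        return [n for n in self._notes if q in n.text.lower() or q in n.tags]


brain = DigitalBrain()
note = brain.record("Bank site requires a 12+ character password with a symbol")
brain.organize(note, "Passwords", "Banking")
brain.record("Grocery list: eggs, rice, spinach")

matches = brain.recall("passwords")
print([n.text for n in matches])
```

The point of the sketch is the division of labor: the store does the remembering, so your own attention is only needed at the moment of capture and the moment of lookup.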

Why You Need a Digital Brain

Two brains are better than one. The brain you were born with is limited.

The cerebral cortex has 125 trillion synapses, according to a Stanford study. By one widely cited estimate, the human brain can hold about 2.5 million gigabytes of digital data. [2]

Building a second brain improves learning and memory.

Learn and store information effectively

Faster information recall

Organize information to see connections and patterns

Build a Digital Brain to learn more and reach your goals faster. Building a second brain requires time and work, but you'll have more time for vital undertakings.

Why you need a Digital Brain:

1. Use Brainpower Effectively

Your brain has boundaries, like any organ. This is true while solving a complex question or activity. If you can't focus on a work project, you won't finish it on time.

A second brain reduces distractions. A robust structure helps you handle complicated challenges quickly and stay on track. Without distractions, it's easy to focus on vital activities.

2. Staying Organized

Professional and personal duties must be balanced. With so much to do, it's easy to neglect crucial duties. This is especially true for skill-building. A Digital Brain will keep you organized and stress-free.

Life success requires action. Organized people get things done. Organizing your information will give you time for crucial tasks.

You'll finish projects faster with good materials and methods. As you succeed, you'll gain creative confidence. You can then tackle greater jobs.

3. Creativity Process

Creativity drives today's world. Creativity is mysterious and surprising for millions worldwide. Immersing yourself in others' associations, triggers, thoughts, and ideas can generate inspiration and creativity.

Building a second brain is crucial to establishing your creative process and building habits that will help you reach your goals. Creativity doesn't require perfection or overthinking.

4. Transforming Your Knowledge Into Opportunities

This is the age of entrepreneurship. Today, you can publish online, build an audience, and make money.

Whether it's a business or hobby, you'll have several job alternatives. Knowledge can boost your economy with ideas and insights.

5. Improving Thinking and Uncovering Connections

Modern career success depends on how you think. Instead of overthinking or perfecting, collect the best images, stories, metaphors, anecdotes, and observations.

This will increase your creativity and reveal connections. Increasing your imagination can help you achieve your goals, according to research. [3]

Your ability to recognize trends will help you stay ahead of the pack.

6. Credibility for a New Job or Business

Your main asset is experience-based expertise. Others won't be able to learn without your help. Technology makes knowledge tangible.

This lets you use your time as you choose while helping others. Changing professions or establishing a new business become learning opportunities when you have a Digital Brain.

7. Using Learning Resources

Millions of people use internet learning materials to improve their lives. Online resources abound. These include books, forums, podcasts, articles, and webinars.

These resources are mostly free or inexpensive. Organizing your knowledge can save you time and money. Building a Digital Brain helps you learn faster. You'll make rapid progress by enjoying learning.

How does a second brain feel?

My Digital Brain has helped me organize my work and family life for years.

No need to remember 1001 passwords. I never forget anything on my wife's grocery lists. Never miss a meeting. I can access essential information and papers anytime, anywhere.

Delegating memory to a second brain reduces tension and anxiety because you'll know what to do with every piece of information.

No information will be forgotten, boosting your confidence. Better manage your fears and concerns by writing them down and establishing a strategy. You'll understand the plethora of daily information and have a clear head.

How to Develop Your Digital Brain (Your Second Brain)

It's cheap but requires work.

Digital Brain development requires:

Recording — storing the information

Organization — archiving it in a logical manner

Recall — retrieving it again when you need it

1. Decide what information matters before recording.

To succeed in today's environment, you must manage massive amounts of data. Articles, books, webinars, podcasts, emails, and texts provide value. Remembering everything is impossible and overwhelming.

What information do you need to achieve your goals?

You must consolidate ideas and create a strategy to reach your aims. Your biological brain can imagine and create with a Digital Brain.

2. Use the Right Tool

We usually record information without any preparation - we brainstorm in a word processor, email ourselves a message, or take notes while reading.

This information isn't used. You must store information in a central location.

Different information needs different instruments.

Evernote is a top note-taking program. Audio clips, Slack chats, PDFs, text notes, photos, scanned handwritten pages, emails, and webpages can be added.

Pocket is a great app for saving and organizing content. Images, videos, and text can be sorted, and the design is optimized for the web.

Calendar apps help you manage your time and enhance your productivity by reminding you of your most important tasks. There are plenty to choose from. The best calendar apps are easy to use, have many features, and work across devices. Examples include Google Calendar, Apple Calendar, and Outlook.

To-do list/checklist apps are useful for managing tasks. Ease of use, versatility, price, and cross-platform compatibility are important when picking to-do list apps. Google Keep, Google Tasks, and Apple Notes are good to-do apps.

3. Organize data for easy retrieval

How should you organize collected data?

When you collect and organize data, you'll see connections. An article about networking can help you understand web marketing. Saved business cards can help you find new clients.

Choosing the correct tools helps organize data. Here are some tool selection criteria:

Can the tool sync across devices?

Is it for personal or team use?

Does it have a search function for easy information retrieval?

Does it provide easy data categorization?

Can users create lists or collections?

Does it offer easy idea-information connections?

Does it support mind mapping and visual organization of thoughts?

Conclusion

Building a Digital Brain (second brain) helps us save information, think creatively, and implement ideas. Your second brain is an extension of your biological brain. It keeps you from forgetting, allowing you to tackle bigger creative challenges.

People who love learning often consume information without using it. Every day, they postpone life-improving experiences until they're forgotten. Useful information becomes strength.

Reference

[1] Scientific American: What Is the Memory Capacity of the Human Brain?

[2] Clinical Neurology Specialists: What is the Memory Capacity of a Human Brain?

[3] National Library of Medicine: Imagining Success: Multiple Achievement Goals and the Effectiveness of Imagery

Mia Gradelski

3 years ago

Six Things Best-With-Money People Do Follow

I shouldn't generalize, yet this is true.

Spending is simpler than earning.

Prove me wrong, but with household debt at $145k in 2020 and individual debt at $67k, people don't have their priorities straight.

Where does this debt come from?

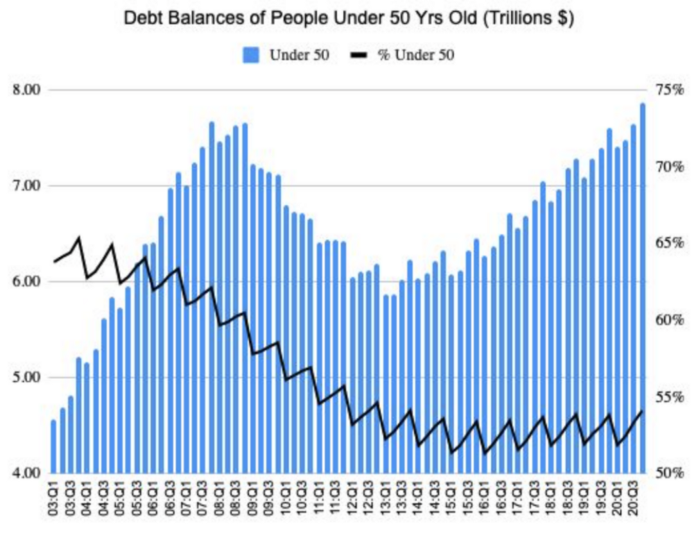

Under-50 Americans owed $7.86 trillion in Q4 2020. That's more than the US's 3-trillion-dollar deficit.

Here’s a breakdown:

🏡 Mortgages/Home Equity Loans = $5.28 trillion (67%)

🎓 Student Loans = $1.20 trillion (15%)

🚗 Auto Loans = $0.80 trillion (10%)

💳 Credit Cards = $0.37 trillion (5%)

🏥 Other/Medical = $0.20 trillion (3%)
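As a quick sanity check, the percentages in the breakdown above can be recomputed from the dollar figures. This is a small sketch that uses only the numbers quoted in this article. The variable names are illustrative.

```python
# Figures in trillions of dollars, copied from the breakdown above.
debt = {
    "Mortgages/Home Equity Loans": 5.28,
    "Student Loans": 1.20,
    "Auto Loans": 0.80,
    "Credit Cards": 0.37,
    "Other/Medical": 0.20,
}
reported_total = 7.86  # total quoted in the text

# Each category's share of the reported total, rounded to a whole percent.
shares = {name: round(100 * amount / reported_total) for name, amount in debt.items()}
category_sum = sum(debt.values())

for name, pct in shares.items():
    print(f"{name}: {pct}%")
print(f"Sum of categories: {category_sum:.2f} trillion (reported: {reported_total})")
```

The categories add up to slightly less than the reported total, which is consistent with each figure having been rounded to two decimals.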


At least the Fed and government can explain themselves with their debt balance which includes:

-Providing stimulus packages 2x for Covid relief

-Stabilizing the economy

-Reducing inflation and unemployment

-Providing for the military, education and farmers

No American should have this much debt.

Don’t get me wrong. Debt isn’t all the same. Yes, it’s a negative number but it carries different purposes which may not be all bad.

Good debt: Use those funds in hopes of them appreciating as an investment in the future

-Student loans

-Business loan

-Mortgage, home equity loan

-Experiences

Paying cash for a home is wasteful. You should do so only if the home is exceptionally rare, 1 in a million on the market, and an incredible bargain, with numerous bidders pushing the price higher.

To impress the vendor, pay cash so they can sell it quickly. Most people can't afford most properties outright. Only 15% of U.S. homebuyers can afford their home. Zillow reports that only 37% of homes are mortgage-free.

People have clearly overreached.

Ignore appearances.

5% down can buy a 10-bedroom mansion.

Not paying in cash isn't necessarily a bad thing, given that property prices have increased by 30% since 2008 and that, throughout the pandemic, we've seen people who work from home relocate to the Midwest, avoiding pricey coastal cities like NYC and San Francisco.

By no means do I think NYC is dead. Nothing will replace this beautiful city that never sleeps, and for people who always wanted to come but never could, now is the perfect time to rent or buy, while everything is below average value. Once social distancing ends, cities will recover. Subways packed like sardines 24/7 prove New York isn't designed for isolation.

When buying a home, pay 20% cash and the balance with a mortgage. A mortgage must be factored in alongside other costs such as maintenance, brokerage fees, property taxes, etc. If you're stuck on why a home isn't right for you, read here. A mortgage must be paid until the end of its term. Whether it's a 10-year or a 30-year fixed mortgage, with the 10-year yield inching towards 1.25%, it's better to refinance in a lower interest rate environment and pay off your debt, since the Fed will eventually raise interest rates, following the 10-year yield, to stabilize the economy. I believe that won't happen until after Covid, when businesses like luxury, air travel, and tourism will get bashed.

Bad debt: I guess the contrary must be true. There is no way to profit from the loan in the future, therefore it is just money down the drain.

-Luxury goods

-Credit card debt

-Fancy junk

-Vacations, weddings, parties, etc.

Credit cards and school loans are the two largest risks to the financial security of those under 50 since banks love to compound interest to affect your credit score and make it tougher to take out more loans, not that you should with that much debt anyhow. With a low credit score and heavy debt, banks take advantage of you because you need aid to pay more for their services. Paying back debt is the challenge for most.

Choose Not Chosen

As a financial literacy advocate and blogger, I prefer not to brag, but I will now. I know what to buy and what to avoid. My parents educated me to live a frugal, minimalist stealth wealth lifestyle by choice, not because we had to.

That's the lesson.

The poorest person who shows off with bling is trying to seem rich.

Rich people know garbage is a bad investment. Investing in education is one of the best long-term investments. With information, you can do anything.

People who are good with money shun some items out of respect and appreciation for what they have.

Less is more.

Instead of keeping up with the Joneses, use what you have. They may look cheerful and stylish in their 20,000-square-foot home, yet they may be as broke as OJ Simpson in his 20-bedroom mansion.

Let's look at what appears good to follow and maintain your wealth.

#1: Quality comes before quantity

Being frugal doesn't entail being cheap and cruel. Rich individuals care about relationships and treating others correctly, not impressing them. You don't have to be rich to be good with money, although most are since they don't live the fantasy lifestyle.

Underspending is appreciating what you have.

Many people believe organic food is the same as washing chemical-laden produce. Hopefully. Organic, vegan, fresh vegetables from upstate may be more expensive in the short term, but they will help you live longer and save you money in the long run.

Consider this. You'll save thousands a month eating McDonald's three times a day instead of fresh seafood, veggies, and organic fruit, but your life will be shortened. If you want to save money and die early, go ahead, but I assume we'd all rather break the world record for longest-living person and spend less along the way. Plus, elderly people get tax breaks, Medicare, pensions, 401(k)s, etc. You're practically living for free, so eating fast food forever is a terrible decision.

With a few longer years, you may make hundreds or millions more in the stock market, spend more time with family, and just live.

Folks, health is wealth.

Consider the future benefit, not simply the cash sign. Cheapness is useless.

Same with stuff. Don't stock your closet with fast-fashion you can't wear for years. Buying inexpensive goods that will fail tomorrow is stupid.

Investing isn't only in stocks. You're living. Consume less.

#2: If you cannot afford it twice, you cannot afford it once

I learned this from my dad in 6th grade. I've been lucky to travel, experience things, go to a great university, and conduct many experiments that others without a stable, decent lifestyle can't afford.

I didn't live this way because of my parents' paycheck or financial knowledge.

Saving and choosing caused it.

I always bring cash when I shop. I ditch Apple Pay and credit cards, since with them I can spend all I want even if my account is overdrawn.

Banks are nasty. When you lose it, they profit.

Cash hinders banks' profits. Carrying a big, hefty wallet with cash is lame and annoying, but it's the best method to only spend what you need. Not for vacation, but for tiny daily expenses.

Physical currency lets you know how much you have for lunch or a taxi.

It's physical, thus losing it prevents debt.

If you can't afford it, it will harm more than help.

#3: You really can purchase happiness with money.

If used correctly, yes.

Happiness and satisfaction differ.

It won't bring you fulfillment because you must work hard on your own to help others, but you can travel and meet individuals you wouldn't otherwise meet.

You can meet your future co-worker or strike a deal while waiting an hour in first class for takeoff, or you can meet renowned people at a networking brunch.

Seen a pattern here?

Your time and money are best spent on connections. Not automobiles or firearms. That’s just stuff. It doesn’t make you a better person.

Be different if you've earned less. Instead of trying to win the lotto or become an NFL star for your first big salary, network online for free.

Be resourceful. Sign up for LinkedIn, post regularly, and leave unengaged posts up because that shows power.

Consistency is beneficial.

I did that for a few months and met amazing people who helped me get jobs. Money doesn't create jobs, it creates opportunities.

Resist social media and scammers that peddle false hopes.

Choose wisely.

#4: Avoid gushing over titles and purchasing trash.

As Insider’s Hillary Hoffower reports, “Showing off wealth is no longer the way to signify having wealth. In the US particularly, the top 1% have been spending less on material goods since 2007.”

I checked my closet. No brand comes to mind. I've never worn a brand's logo and rotate 6 white shirts daily. I have my priorities and don't waste money or effort on clothing that won't fit me in a year.

Unless it's your full-time work, clothing shouldn't be part of our mornings.

Lifestyle of stealth wealth. You're so fulfilled that seeming homeless won't hurt your self-esteem.

That's self-assurance.

Extroverts aren't required.

That's irrelevant.

Showing off won't win you friends.

They'll like your personality.

#5: Time is the most valuable commodity.

Being rich doesn't entail working 24/7 M-F.

They work when they are ready to work.

Waking up at 5 a.m. won't make you a millionaire, but it will inculcate diligence and tenacity in you.

You have a busy day yet want to exercise. You can skip the workout or wake up at 4am instead of 6am to do it.

Emotion-driven lazy bums stay in bed.

Those that are accountable keep their promises because they know breaking one will destroy their week.

Since 7th grade, I've worked out at 5am for myself, not to impress others. It gives me greater energy to contribute to others, especially on weekends and holidays.

It's a habit that I have in my life.

Find something that you take seriously and makes you a better person.

As someone who is close to becoming a millionaire and has encountered them throughout my life, I can share with you a few important differences that have shaped who we are as a society based on the weekends:

-Read

-Sleep

-Best time to work with no distractions

-Eat together

-Take walks and be in nature

-Gratitude

-Major family time

-Plan out weeks

-Go grocery shopping because health = wealth

#6: Perspective is important

Timing the markets will slow down your career. Professors preach scarcity, not abundance. Why should school teach success? They give us bad advice.

If you trust in abundance and luck by attempting and experimenting, growth will come effortlessly. Passion isn't a term that just appears. Mistakes and fresh people help. You can get money. If you don't think it's worth it, you won't.

You don’t have to be wealthy to be good at money, but most are for these reasons. Rich is a mindset, wealth is power. Prioritize your resources. Invest in yourself, knowing the toughest part is starting.

Thanks for reading!


Aaron Dinin, PhD

2 years ago

The Advantages and Disadvantages of Having Investors Sign Your NDA

What risks do startup entrepreneurs assume when pitching?

Last week I signed four NDAs.

Four!

NDA stands for non-disclosure agreement. It's a legal document given to someone receiving confidential information. By signing, the person pledges not to share the information for a certain time. If they do, they may be in breach of contract and face legal action.

Companies use NDAs to protect trade secrets and confidential internal information from being leaked by employees and contractors. That makes sense. If you manage a huge, successful firm, you don't want your employees selling your information to your competitors. To be fair, business NDAs don't always prevent corporate espionage, but they usually make employees and contractors think twice before sharing.

I understand employee and contractor NDAs, but I wasn't asked to sign one. I counsel entrepreneurs, thus the NDAs I signed last week were from startups that wanted my feedback on their concepts.

I’m not a startup investor. I give startup guidance online. Despite that, four entrepreneurs thought their company ideas were so important they wanted me to sign a generically written legal form they probably acquired from a shady, spam-filled legal templates website before we could chat.

Actually, that's not entirely accurate. One company tried to get me to sign their NDA a few days after our conversation. I politely declined, but their persistence inspired me. I considered sending retroactive NDAs to everyone I've ever talked to about one of my startups in case they establish a successful company based on something I said.

Two of the other three NDAs were from nearly identical companies. Good thing I didn't sign an NDA for the first one, else they may have sued me for talking to the second one as though I control the firms people pitch me.

I wasn't talking to the fourth NDA company. Instead, I received an unsolicited email from someone who wanted comments on their fundraising pitch deck but required me to sign an NDA before sending it.

That's right, before I could read a random Internet stranger's unsolicited pitch deck, I had to sign his NDA, potentially limiting my ability to discuss what was in it.

You should understand the problem. Advisors, mentors, investors, etc. talk to hundreds of businesses each year. They cannot control all the companies they deal with, so they cannot risk legal trouble just by talking to someone. If I signed NDAs for all the startups I spoke with, half of the 300+ articles I've written on Medium over the past several years could get me sued into the next century because I've undoubtedly addressed topics in my articles that I discussed with them.

The four NDAs I received last week are part of a recent trend of entrepreneurs sending out NDAs before meetings, despite the practical and legal issues. They act like asking someone to sign away their right to talk about all they see and hear in a day is as straightforward as asking for a glass of water.

Given this influx of NDAs, I wanted to briefly remind entrepreneurs reading this blog about the pros and cons of asking investors (or others in the startup ecosystem) to sign your NDA.

Benefits of having investors sign your NDA include:

None. Zero. Nothing.

Disadvantages of requesting investor NDAs:

You'll come off as an amateur who has no idea what it takes to launch a successful firm.

Investors won't trust you with their money since you appear to be a complete amateur.

Printing NDAs will be a waste of paper because no genuine investor will ever sign one.

I apologize for missing any cons. Please leave your remarks.

Vitalik

4 years ago

An approximate introduction to how zk-SNARKs are possible (part 2)

If tasked with the problem of coming up with a zk-SNARK protocol, many people would make their way to this point and then get stuck and give up. How can a verifier possibly check every single piece of the computation, without looking at each piece of the computation individually? But it turns out that there is a clever solution.

Polynomials

Polynomials are a special class of algebraic expressions of the form:

- x+5

- x^4

- x^3+3x^2+3x+1

- 628x^{271}+318x^{270}+530x^{269}+…+69x+381

i.e. they are a sum of any (finite!) number of terms of the form cx^k

There are many things that are fascinating about polynomials. But here we are going to zoom in on a particular one: polynomials are a single mathematical object that can contain an unbounded amount of information (think of them as a list of integers and this is obvious). The fourth example above contained 816 digits of tau, and one can easily imagine a polynomial that contains far more.

Furthermore, a single equation between polynomials can represent an unbounded number of equations between numbers. For example, consider the equation A(x)+ B(x) = C(x). If this equation is true, then it's also true that:

- A(0)+B(0)=C(0)

- A(1)+B(1)=C(1)

- A(2)+B(2)=C(2)

- A(3)+B(3)=C(3)

And so on for every possible coordinate. You can even construct polynomials to deliberately represent sets of numbers so you can check many equations all at once. For example, suppose that you wanted to check:

- 12+1=13

- 10+8=18

- 15+8=23

- 15+13=28

You can use a procedure called Lagrange interpolation to construct a polynomial A(x) that gives (12,10,15,15) as outputs at some specific set of coordinates (e.g. (0,1,2,3)), a polynomial B(x) that gives the outputs (1,8,8,13) on those same coordinates, and so forth. In fact, here are the polynomials:

- A(x)=-2x^3+\frac{19}{2}x^2-\frac{19}{2}x+12

- B(x)=2x^3-\frac{19}{2}x^2+\frac{29}{2}x+1

- C(x)=5x+13

Checking the equation A(x)+B(x)=C(x) with these polynomials checks all four above equations at the same time.
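The polynomials above can be reproduced with a short Lagrange interpolation routine. This is a sketch under stated assumptions: exact rational arithmetic via Python's `fractions.Fraction`, coefficient lists stored lowest degree first, and helper names (`poly_mul`, `lagrange_interpolate`) chosen only for illustration.

```python
from fractions import Fraction

def poly_mul(a, b):
    """Multiply two polynomials given as coefficient lists (lowest degree first)."""
    out = [Fraction(0)] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            out[i + j] += ai * bj
    return out

def lagrange_interpolate(xs, ys):
    """Coefficients of the unique polynomial of degree < len(xs) through the points."""
    result = [Fraction(0)] * len(xs)
    for i, xi in enumerate(xs):
        # Basis polynomial: equals 1 at xs[i] and 0 at every other coordinate.
        basis, denom = [Fraction(1)], Fraction(1)
        for j, xj in enumerate(xs):
            if j != i:
                basis = poly_mul(basis, [Fraction(-xj), Fraction(1)])
                denom *= xi - xj
        for k, c in enumerate(basis):
            result[k] += c * ys[i] / denom
    return result

xs = [0, 1, 2, 3]
A = lagrange_interpolate(xs, [12, 10, 15, 15])
B = lagrange_interpolate(xs, [1, 8, 8, 13])
C = lagrange_interpolate(xs, [13, 18, 23, 28])

# One identity between coefficient lists covers all four additions at once.
assert [a + b for a, b in zip(A, B)] == C
print(A)  # constant term first: 12, -19/2, 19/2, -2
```

The cubic and quadratic terms of A and B cancel, which is why C ends up as the straight line 5x+13.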

Comparing a polynomial to itself

You can even check relationships between a large number of adjacent evaluations of the same polynomial using a simple polynomial equation. This is slightly more advanced. Suppose that you want to check that, for a given polynomial F, F(x+2)=F(x)+F(x+1) within the integer range {0,1…98} (so if you also check F(0)=F(1)=1, then F(100) would be the 100th Fibonacci number).

As polynomials, F(x+2)-F(x+1)-F(x) would not be exactly zero, as it could give arbitrary answers outside the range x={0,1…98}. But we can do something clever. In general, there is a rule that if a polynomial P is zero across some set S=\{x_1,x_2…x_n\} then it can be expressed as P(x)=Z(x)*H(x), where Z(x)=(x-x_1)*(x-x_2)*…*(x-x_n) and H(x) is also a polynomial. In other words, any polynomial that equals zero across some set is a (polynomial) multiple of the simplest (lowest-degree) polynomial that equals zero across that same set.

Why is this the case? It is a nice corollary of polynomial long division: the factor theorem. We know that, when dividing P(x) by Z(x), we will get a quotient Q(x) and a remainder R(x) which satisfy P(x)=Z(x)*Q(x)+R(x), where the degree of the remainder R(x) is strictly less than that of Z(x). Since Z is zero on all of S and we know that P is zero on all of S, it means that R has to be zero on all of S as well. So we can simply compute R(x) via polynomial interpolation, since it's a polynomial of degree at most n-1 and we know n values (the zeros at S). Interpolating a polynomial with all zeroes gives the zero polynomial, thus R(x)=0 and H(x)=Q(x).
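The division argument can be tried out on a small case. The sketch below assumes a hypothetical set S = {1,2,3}, two hand-picked example polynomials, and illustrative helper names; it only demonstrates the two facts used above: the remainder always agrees with P on S, and it vanishes entirely when P is zero on S.

```python
from fractions import Fraction

def poly_mul(a, b):
    """Multiply two polynomials given as coefficient lists (lowest degree first)."""
    out = [Fraction(0)] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            out[i + j] += ai * bj
    return out

def evaluate(p, x):
    """Evaluate a polynomial at x using Horner's rule."""
    result = Fraction(0)
    for c in reversed(p):
        result = result * x + c
    return result

def poly_divmod(p, z):
    """Long division: return (q, r) with p = z*q + r and deg r < deg z."""
    r = [Fraction(c) for c in p]
    q = [Fraction(0)] * max(len(p) - len(z) + 1, 1)
    for s in range(len(p) - len(z), -1, -1):
        factor = r[s + len(z) - 1] / z[-1]
        q[s] = factor
        for i, zc in enumerate(z):
            r[s + i] -= factor * zc
    return q, r[:len(z) - 1]

S = [1, 2, 3]                      # hypothetical small set
Z = [Fraction(1)]
for s in S:
    Z = poly_mul(Z, [Fraction(-s), Fraction(1)])   # Z(x) = (x-1)(x-2)(x-3)

# An arbitrary polynomial: the remainder matches it on every point of S.
P_any = [5, 2, 0, 0, 1]            # x^4 + 2x + 5
_, R_any = poly_divmod(P_any, Z)
assert all(evaluate(R_any, s) == evaluate(P_any, s) for s in S)

# A polynomial that is zero on S: the remainder collapses to zero.
P_zero = [24, -50, 35, -10, 1]     # x^4 - 10x^3 + 35x^2 - 50x + 24
assert all(evaluate(P_zero, s) == 0 for s in S)
H, R_zero = poly_divmod(P_zero, Z)
print(R_zero)                      # every coefficient is 0
print(H)                           # quotient x - 4, stored as [-4, 1]
```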

Going back to our example, if we have a polynomial F that encodes Fibonacci numbers (so F(x+2)=F(x)+F(x+1) across x=\{0,1…98\}), then I can convince you that F actually satisfies this condition by proving that the polynomial P(x)=F(x+2)-F(x+1)-F(x) is zero over that range, by giving you the quotient:

H(x)=\frac{F(x+2)-F(x+1)-F(x)}{Z(x)}

Where Z(x) = (x-0)*(x-1)*…*(x-98).

You can calculate Z(x) yourself (ideally you would have it precomputed), check the equation, and if the check passes then F(x) satisfies the condition!
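Here is a scaled-down sketch of that check. It assumes a hypothetical range {0,1…8} instead of {0,1…98}, exact rational arithmetic, and illustrative helper names; it also compares full coefficient lists, which is the plain version of "check the equation" and not an optimized verifier.

```python
from fractions import Fraction

N = 8  # hypothetical small range {0..N}; the text uses {0..98}

def poly_mul(a, b):
    """Multiply two polynomials given as coefficient lists (lowest degree first)."""
    out = [Fraction(0)] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            out[i + j] += ai * bj
    return out

def interpolate(xs, ys):
    """Lagrange interpolation; coefficients are lowest degree first."""
    result = [Fraction(0)] * len(xs)
    for i, xi in enumerate(xs):
        basis, denom = [Fraction(1)], Fraction(1)
        for j, xj in enumerate(xs):
            if j != i:
                basis = poly_mul(basis, [Fraction(-xj), Fraction(1)])
                denom *= xi - xj
        for k, c in enumerate(basis):
            result[k] += c * ys[i] / denom
    return result

def shift(p, k):
    """Coefficients of p(x + k), built with Horner's rule."""
    result = [Fraction(p[-1])]
    for c in reversed(p[:-1]):
        result = poly_mul(result, [Fraction(k), Fraction(1)])
        result[0] += c
    return result

def poly_divmod(p, z):
    """Long division: return (q, r) with p = z*q + r and deg r < deg z."""
    r = [Fraction(c) for c in p]
    q = [Fraction(0)] * max(len(p) - len(z) + 1, 1)
    for s in range(len(p) - len(z), -1, -1):
        factor = r[s + len(z) - 1] / z[-1]
        q[s] = factor
        for i, zc in enumerate(z):
            r[s + i] -= factor * zc
    return q, r[:len(z) - 1]

def check_recurrence(values):
    """Interpolate F through values, then test whether Z divides P exactly."""
    F = interpolate(list(range(len(values))), values)
    # P(x) = F(x+2) - F(x+1) - F(x)
    P = [a - b - c for a, b, c in zip(shift(F, 2), shift(F, 1), F)]
    Z = [Fraction(1)]
    for x in range(N + 1):
        Z = poly_mul(Z, [Fraction(-x), Fraction(1)])   # Z(x) = (x-0)(x-1)...(x-N)
    H, R = poly_divmod(P, Z)
    return all(c == 0 for c in R) and poly_mul(Z, H) == P

fib = [1, 1]
while len(fib) < N + 3:
    fib.append(fib[-1] + fib[-2])

tampered = list(fib)
tampered[5] += 1                   # break the recurrence at one step

print(check_recurrence(fib))       # honest trace: Z divides P
print(check_recurrence(tampered))  # broken trace: nonzero remainder
```

A single wrong value leaves a nonzero remainder, so no polynomial H exists that makes the equation hold.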

Now, step back and notice what we did here. We converted a 100-step-long computation into a single equation with polynomials. Of course, proving the N'th Fibonacci number is not an especially useful task, especially since Fibonacci numbers have a closed form. But you can use exactly the same basic technique, just with some extra polynomials and some more complicated equations, to encode arbitrary computations with an arbitrarily large number of steps.

see part 3

Bart Krawczyk

2 years ago

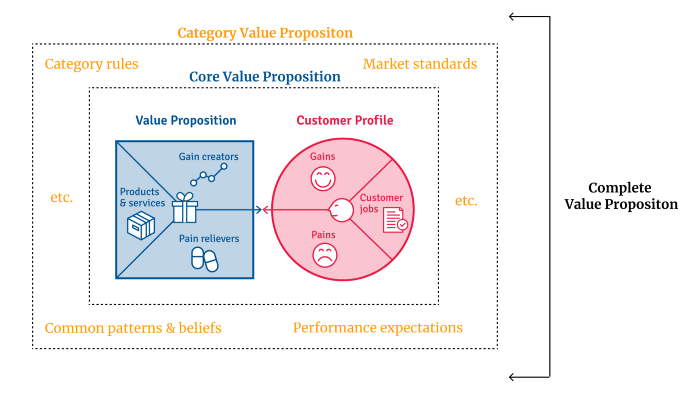

Understanding the different kinds of value proposition will help you build better products.

Fixing problems isn't enough.

Numerous articles and how-to guides on value propositions focus on fixing consumer concerns.

Contrary to popular opinion, addressing customer pain is rarely enough. You also need to win your market category.

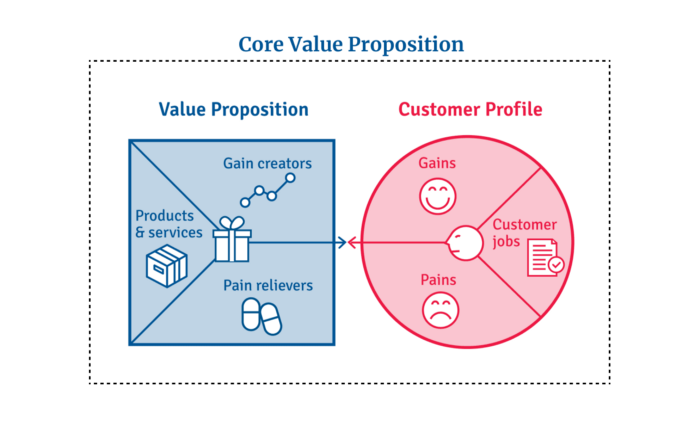

Core Value Statement

Value proposition usually means a product's main value.

It's how your product solves customer problems. It's the core of the product.

Answering these questions creates a relevant core value proposition:

What tasks is your customer trying to complete? (customer jobs)

What frustrations do they run into while doing them? (pains)

What would they like to see improved or changed? (gains)

After that, you create products and services that alleviate those pains and give value to clients.

Value Proposition by Category

Your product belongs to a market category and must follow that category's rules, regardless of its value proposition.

Creating a new market category is challenging. Fitting into customers' product perceptions is usually better than trying to change them.

People who encounter a new product simplify things by slotting it into a market category they already know. Products get labeled.

Your product will likely be associated with a collection of products people already use.

Example: you are building a communication and management app for IT professionals.

If your target customers think of it as advanced email software, they'll compare it to other email tools and expect things like:

comprehensive calendar

spam detectors

adequate storage space

list of contacts

etc.

If your target users view your product as a task management app, things change. You can survive without a contact list, but not status management.

Find out what your customers compare your product to and whether that comparison fits your value proposition. If so, adapt your product plan to dominate that category. If not, try different value propositions and messaging to put the product in the right context.

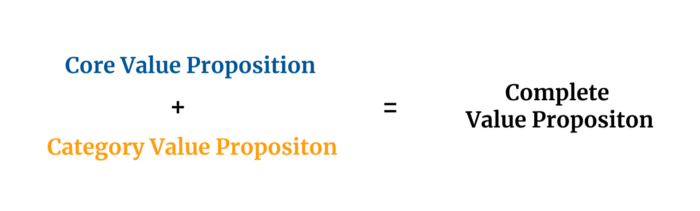

Comprehensive Value Proposition

A comprehensive value proposition is when your solution addresses user problems and wins its market category.

Addressing only the core value proposition may produce a valuable and original product, but it may struggle to cross the chasm into the mainstream market. Meeting expectations is easier than changing perceptions.

Without a unique value proposition, you will drown in the red ocean of competition.

To conclude:

Find out who your target consumer is and what their demands and problems are.

Develop and test a core value proposition that meets these needs.

Speak with your most devoted customers. Learn which alternatives they compare you against and which market category they place you in.

Recognize the requirements and expectations of the market category.

Adapt your product to meet or exceed category standards.

Great products solve client problems and win their category.