More on Leadership

Jason Kottke

3 years ago

Lessons on Leadership from the Dancing Guy

This is arguably the best three-minute demonstration I've ever seen of anything. Derek Sivers turns a shaky video of a lone dancing guy at a music festival into a leadership lesson.

A leader must have the courage to stand alone and appear silly. But what he's doing is so straightforward that it's almost instructive. This is critical. You must be simple to follow!

Now comes the first follower, who plays an important role: he publicly demonstrates how to follow. The leader embraces him as an equal, so it's no longer about the leader — it's about them, plural. He's inviting his friends to join him. It takes courage to be the first follower! You stand out and dare to be mocked. Being a first follower is a style of leadership that is underappreciated. The first follower elevates a lone nut to the position of leader. If the first follower is the spark that starts the fire, the leader is the flint.

This link was sent to me by @ottmark, who noted its resemblance to Kurt Vonnegut's three categories of specialists required for revolution.

The rarest of these specialists, he claims, is an actual genius – a person capable generating seemingly wonderful ideas that are not widely known. "A genius working alone is generally dismissed as a crazy," he claims.

The second type of specialist is much easier to find: a highly intellectual person in good standing in his or her community who understands and admires the genius's new ideas and can attest that the genius is not insane. "A person like him working alone can only crave loudly for changes, but fail to say what their shapes should be," Slazinger argues.

Jeff Veen reduced the three personalities to "the inventor, the investor, and the evangelist" on Twitter.

Alison Randel

3 years ago

Raising the Bar on Your 1:1s

Managers spend much time in 1:1s. Most team members meet with supervisors regularly. 1:1s can help create relationships and tackle tough topics. Few appreciate the 1:1 format's potential. Most of the time, that potential is spent on small talk, surface-level updates, and ranting (Ugh, the marketing team isn’t stepping up the way I want them to).

What if you used that time to have deeper conversations and important insights? What if change was easy?

This post introduces a new 1:1 format to help you dive deeper, faster, and develop genuine relationships without losing impact.

A 1:1 is a chat, you would assume. Why use structure to talk to a coworker? Go! I know how to talk to people. I can write. I've always written. Also, This article was edited by Zoe.

Before you discard something, ask yourself if there's a good reason not to try anything new. Is the 1:1 only a talk, or do you want extra benefits? Try the steps below to discover more.

I. Reflection (5 minutes)

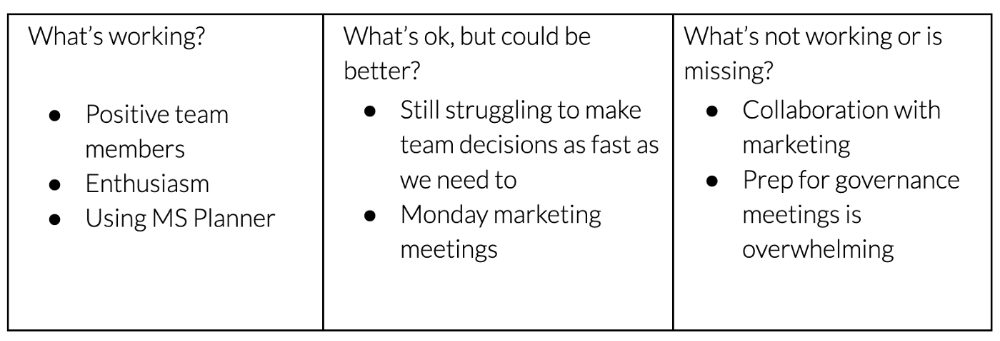

Context-free, broad comments waste time and are useless. Instead, give team members 5 minutes to write these 3 prompts.

What's effective?

What is decent but could be improved?

What is broken or missing?

Why these? They encourage people to be honest about all their experiences. Answering these questions helps people realize something isn't working. These prompts let people consider what's working.

Why take notes? Because you get more in less time. Will you feel awkward sitting quietly while your coworker writes? Probably. Persevere. Multi-task. Take a break from your afternoon meeting marathon. Any awkwardness will pay off.

What happens? After a few minutes of light conversation, create a template like the one given here and have team members fill in their replies. You can pre-share the template (with the caveat that this isn’t meant to take much prep time). Do this with your coworker: Answer the prompts. Everyone can benefit from pondering and obtaining guidance.

This step's output.

Part II: Talk (10-20 minutes)

Most individuals can explain what they see but not what's behind an answer. You don't like a meeting. Why not? Marketing partnership is difficult. What makes working with them difficult? I don't recommend slandering coworkers. Consider how your meetings, decisions, and priorities make work harder. The excellent stuff too. You want to know what's humming so you can reproduce the magic.

First, recognize some facts.

Real power dynamics exist. To encourage individuals to be honest, you must provide a safe environment and extend clear invites. Even then, it may take a few 1:1s for someone to feel secure enough to go there in person. It is part of your responsibility to admit that it is normal.

Curiosity and self-disclosure are crucial. Most leaders have received training to present themselves as the authorities. However, you will both benefit more from the dialogue if you can be open and honest about your personal experience, ask questions out of real curiosity, and acknowledge the pertinent sacrifices you're making as a leader.

Honesty without bias is difficult and important. Due to concern for the feelings of others, people frequently hold back. Or if they do point anything out, they do so in a critical manner. The key is to be open and unapologetic about what you observe while not presuming that your viewpoint is correct and that of the other person is incorrect.

Let's go into some prompts (based on genuine conversations):

“What do you notice across your answers?”

“What about the way you/we/they do X, Y, or Z is working well?”

“ Will you say more about item X in ‘What’s not working?’”

“I’m surprised there isn’t anything about Z. Why is that?”

“All of us tend to play some role in maintaining certain patterns. How might you/we be playing a role in this pattern persisting?”

“How might the way we meet, make decisions, or collaborate play a role in what’s currently happening?”

Consider the preceding example. What about the Monday meeting isn't working? Why? or What about the way we work with marketing makes collaboration harder? Remember to share your honest observations!

Third section: observe patterns (10-15 minutes)

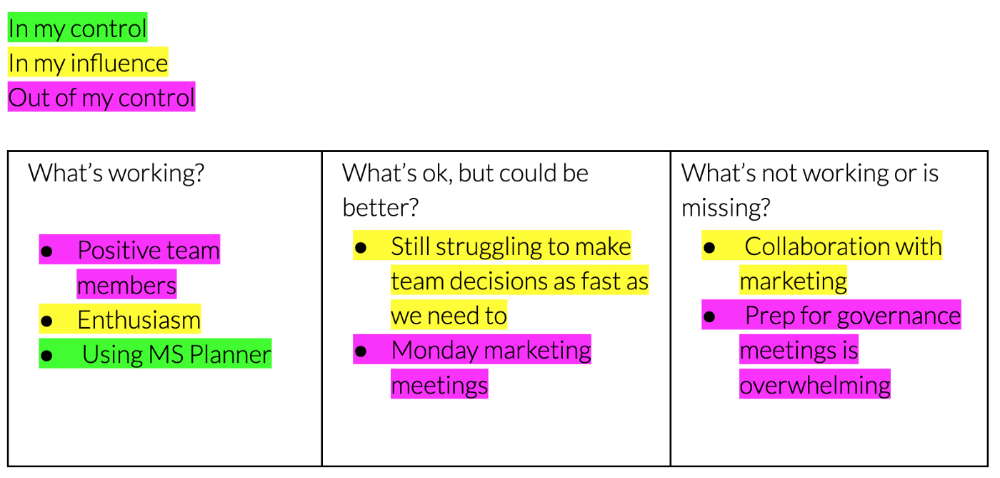

Leaders desire to empower their people but don't know how. We also have many preconceptions about what empowerment means to us and how it works. The next phase in this 1:1 format will assist you and your team member comprehend team power and empowerment. This understanding can help you support and shift your team member's behavior, especially where you disagree.

How to? After discussing the stated responses, ask each team member what they can control, influence, and not control. Mark their replies. You can do the same, adding colors where you disagree.

This step's output.

Next, consider the color constellation. Discuss these questions:

Is one color much more prevalent than the other? Why, if so?

Are the colors for the "what's working," "what's fine," and "what's not working" categories clearly distinct? Why, if so?

Do you have any disagreements? If yes, specifically where does your viewpoint differ? What activities do you object to? (Remember, there is no right or wrong in this. Give explicit details and ask questions with curiosity.)

Example: Based on the colors, you can ask, Is the marketing meeting's quality beyond your control? Were our marketing partners consulted? Are there any parts of team decisions we can control? We can't control people, but have we explored another decision-making method? How can we collaborate and generate governance-related information to reduce work, even if the requirement for prep can't be eliminated?

Consider the top one or two topics for this conversation. No 1:1 can cover everything, and that's OK. Focus on the present.

Part IV: Determine the next step (5 minutes)

Last, examine what this conversation means for you and your team member. It's easy to think we know the next moves when we don't.

Like what? You and your teammate answer these questions.

What does this signify moving ahead for me? What can I do to change this? Make requests, for instance, and see how people respond before thinking they won't be responsive.

What demands do I have on other people or my partners? What should I do first? E.g. Make a suggestion to marketing that we hold a monthly retrospective so we can address problems and exchange input more frequently. Include it on the meeting's agenda for next Monday.

Close the 1:1 by sharing what you noticed about the chat. Observations? Learn anything?

Yourself, you, and the 1:1

As a leader, you either reinforce or disrupt habits. Try this template if you desire greater ownership, empowerment, or creativity. Consider how you affect surrounding dynamics. How can you expect others to try something new in high-stakes scenarios, like meetings with cross-functional partners or senior stakeholders, if you won't? How can you expect deep thought and relationship if you don't encourage it in 1:1s? What pattern could this new format disrupt or reinforce?

Fight reluctance. First attempts won't be ideal, and that's OK. You'll only learn by trying.

KonstantinDr

3 years ago

Early Adopters And the Fifth Reason WHY

Product management wizardry.

Early adopters buy a product even if it hasn't hit the market or has flaws.

Who are the early adopters?

Early adopters try a new technology or product first. Early adopters are interested in trying or buying new technologies and products before others. They're risk-tolerant and can provide initial cash flow and product reviews. They help a company's new product or technology gain social proof.

Early adopters are most common in the technology industry, but they're in every industry. They don't follow the crowd. They seek innovation and report product flaws before mass production. If the product works well, the first users become loyal customers, and colleagues value their opinion.

What to do with early adopters?

They can be used to collect feedback and initial product promotion, first sales, and product value validation.

How to find early followers?

Start with your immediate environment and target audience. Communicate with them to see if they're interested in your value proposition.

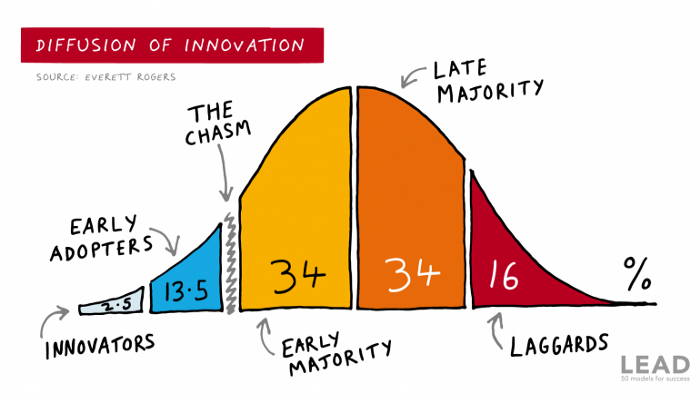

1) Innovators (2.5% of the population) are risk-takers seeking novelty. These people are the first to buy new and trendy items and drive social innovation. However, these people are usually elite;

Early adopters (13.5%) are inclined to accept innovations but are more cautious than innovators; they start using novelties when innovators or famous people do;

3) The early majority (34%) is conservative; they start using new products when many people have mastered them. When the early majority accepted the innovation, it became ingrained in people's minds.

4) Attracting 34% of the population later means the novelty has become a mass-market product. Innovators are using newer products;

5) Laggards (16%) are the most conservative, usually elderly people who use the same products.

Stages of new information acceptance

1. The information is strange and rejected by most. Accepted only by innovators;

2. When early adopters join, more people believe it's not so bad; when a critical mass is reached, the novelty becomes fashionable and most people use it.

3. Fascination with a novelty peaks, then declines; the majority and laggards start using it later; novelty becomes obsolete; innovators master something new.

Problems with early implementation

Early adopter sales have disadvantages.

Higher risk of defects

Selling to first-time users increases the risk of defects. Early adopters are often influential, so this can affect the brand's and its products' long-term perception.

Not what was expected

First-time buyers may be disappointed by the product. Marketing messages can mislead consumers, and if the first users believe the company misrepresented the product, this will affect future sales.

Compatibility issues

Some technological advances cause compatibility issues. Consumers may be disappointed if new technology is incompatible with their electronics.

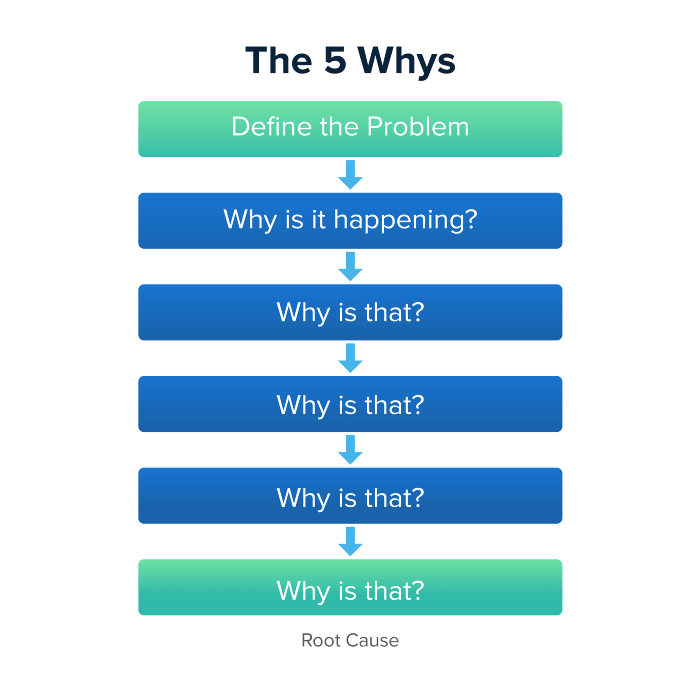

Method 5 WHY

Let's talk about 5 why, a good tool for finding project problems' root causes. This method is also known as the five why rule, method, or questions.

The 5 why technique came from Toyota's lean manufacturing and helps quickly determine a problem's root cause.

On one, two, and three, you simply do this:

We identify and frame the issue for which a solution is sought.

We frequently ponder this question. The first 2-3 responses are frequently very dull, making you want to give up on this pointless exercise. However, after that, things get interesting. And occasionally it's so fascinating that you question whether you really needed to know.

We consider the final response, ponder it, and choose a course of action.

Always do the 5 whys with the customer or team to have a reasonable discussion and better understand what's happening.

And the “five whys” is a wonderful and simplest tool for introspection. With the accumulated practice, it is used almost automatically in any situation like “I can’t force myself to work, the mood is bad in the morning” or “why did I decide that I have no life without this food processor for 20,000 rubles, which will take half of my rather big kitchen.”

An illustration of the five whys

A simple, but real example from my work practice that I think is very indicative, given the participants' low IT skills. Anonymized, of course.

Users spend too long looking for tender documents.

Why? Because they must search through many company tender documents.

Why? Because the system can't filter department-specific bids.

Why? Because our contract management system requirements didn't include a department-tender link. That's it, right? We'll add a filter and be happy. but still…

why? Because we based the system's requirements on regulations for working with paper tender documents (when they still had envelopes and autopsies), not electronic ones, and there was no search mechanism.

Why? We didn't consider how our work would change when switching from paper to electronic tenders when drafting the requirements.

Now I know what to do in the future. We add a filter, enter department data, and teach users to use it. This is tactical, but strategically we review the same forgotten requirements to make all the necessary changes in a package, plus we include it in the checklist for the acceptance of final requirements for the future.

Errors when using 5 why

Five whys seems simple, but it can be misused.

Popular ones:

The accusation of everyone and everything is then introduced. After all, the 5 why method focuses on identifying the underlying causes rather than criticizing others. As a result, at the third step, it is not a good idea to conclude that the system is ineffective because users are stupid and that we can therefore do nothing about it.

to fight with all my might so that the outcome would be exactly 5 reasons, neither more nor less. 5 questions is a typical number (it sounds nice, yes), but there could be 3 or 7 in actuality.

Do not capture in-between responses. It is difficult to overestimate the power of the written or printed word, so the result is so-so when the focus is lost. That's it, I suppose. Simple, quick, and brilliant, like other project management tools.

Conclusion

Today we analyzed important study elements:

Early adopters and 5 WHY We've analyzed cases and live examples of how these methods help with product research and growth point identification. Next, consider the HADI cycle.

You might also like

Sukhad Anand

3 years ago

How Do Discord's Trillions Of Messages Get Indexed?

They depend heavily on open source..

Discord users send billions of messages daily. Users wish to search these messages. How do we index these to search by message keywords?

Let’s find out.

Discord utilizes Elasticsearch. Elasticsearch is a free, open search engine for textual, numerical, geographical, structured, and unstructured data. Apache Lucene powers Elasticsearch.

How does elastic search store data? It stores it as numerous key-value pairs in JSON documents.

How does elastic search index? Elastic search's index is inverted. An inverted index lists every unique word in every page and where it appears.

4. Elasticsearch indexes documents and generates an inverted index to make data searchable in near real-time. The index API adds or updates JSON documents in a given index.

Let's examine how discord uses Elastic Search. Elasticsearch prefers bulk indexing. Discord couldn't index real-time messages. You can't search posted messages. You want outdated messages.

6. Let's check what bulk indexing requires.

1. A temporary queue for incoming communications.

2. Indexer workers that index messages into elastic search.

Discord's queue is Celery. The queue is open-source. Elastic search won't run on a single server. It's clustered. Where should a message go? Where?

8. A shard allocator decides where to put the message. Nevertheless. Shattered? A shard combines elastic search and index on. So, these two form a shard which is used as a unit by discord. The elastic search itself has some shards. But this is different, so don’t get confused.

Now, the final part is service discovery — to discover the elastic search clusters and the hosts within that cluster. This, they do with the help of etcd another open source tool.

A great thing to notice here is that discord relies heavily on open source systems and their base implementations which is very different from a lot of other products.

Aaron Dinin, PhD

3 years ago

I put my faith in a billionaire, and he destroyed my business.

How did his money blind me?

Like most fledgling entrepreneurs, I wanted a mentor. I met as many nearby folks with "entrepreneur" in their LinkedIn biographies for coffee.

These meetings taught me a lot, and I'd suggest them to any new creator. Attention! Meeting with many experienced entrepreneurs means getting contradictory advice. One entrepreneur will tell you to do X, then the next one you talk to may tell you to do Y, which are sometimes opposites. You'll have to chose which suggestion to take after the chats.

I experienced this. Same afternoon, I had two coffee meetings with experienced entrepreneurs. The first meeting was with a billionaire entrepreneur who took his company public.

I met him in a swanky hotel lobby and ordered a drink I didn't pay for. As a fledgling entrepreneur, money was scarce.

During the meeting, I demoed the software I'd built, he liked it, and we spent the hour discussing what features would make it a success. By the end of the meeting, he requested I include a killer feature we both agreed would attract buyers. The feature was complex and would require some time. The billionaire I was sipping coffee with in a beautiful hotel lobby insisted people would love it, and that got me enthusiastic.

The second meeting was with a young entrepreneur who had recently raised a small amount of investment and looked as eager to pitch me as I was to pitch him. I forgot his name. I mostly recall meeting him in a filthy coffee shop in a bad section of town and buying his pricey cappuccino. Water for me.

After his pitch, I demoed my app. When I was done, he barely noticed. He questioned my customer acquisition plan. Who was my client? What did they offer? What was my plan? Etc. No decent answers.

After our meeting, he insisted I spend more time learning my market and selling. He ignored my questions about features. Don't worry about features, he said. Customers will request features. First, find them.

Putting your faith in results over relevance

Problems plagued my afternoon. I met with two entrepreneurs who gave me differing advice about how to proceed, and I had to decide which to pursue. I couldn't decide.

Ultimately, I followed the advice of the billionaire.

Obviously.

Who wouldn’t? That was the guy who clearly knew more.

A few months later, I constructed the feature the billionaire said people would line up for.

The new feature was unpopular. I couldn't even get the billionaire to answer an email showing him what I'd done. He disappeared.

Within a few months, I shut down the company, wasting all the time and effort I'd invested into constructing the killer feature the billionaire said I required.

Would follow the struggling entrepreneur's advice have saved my company? It would have saved me time in retrospect. Potential consumers would have told me they didn't want what I was producing, and I could have shut down the company sooner or built something they did want. Both outcomes would have been better.

Now I know, but not then. I favored achievement above relevance.

Success vs. relevance

The millionaire gave me advice on building a large, successful public firm. A successful public firm is different from a startup. Priorities change in the last phase of business building, which few entrepreneurs reach. He gave wonderful advice to founders trying to double their stock values in two years, but it wasn't beneficial for me.

The other failing entrepreneur had relevant, recent experience. He'd recently been in my shoes. We still had lots of problems. He may not have achieved huge success, but he had valuable advice on how to pass the closest hurdle.

The money blinded me at the moment. Not alone So much of company success is defined by money valuations, fundraising, exits, etc., so entrepreneurs easily fall into this trap. Money chatter obscures the value of knowledge.

Don't base startup advice on a person's income. Focus on what and when the person has learned. Relevance to you and your goals is more important than a person's accomplishments when considering advice.

Hudson Rennie

3 years ago

My Work at a $1.2 Billion Startup That Failed

Sometimes doing everything correctly isn't enough.

In 2020, I could fix my life.

After failing to start a business, I owed $40,000 and had no work.

A $1.2 billion startup on the cusp of going public pulled me up.

Ironically, it was getting ready for an epic fall — with the world watching.

Life sometimes helps. Without a base, even the strongest fall. A corporation that did everything right failed 3 months after going public.

First-row view.

Apple is the creator of Adore.

Out of respect, I've altered the company and employees' names in this account, despite their failure.

Although being a publicly traded company, it may become obvious.

We’ll call it “Adore” — a revolutionary concept in retail shopping.

Two Apple execs established Adore in 2014 with a focus on people-first purchasing.

Jon and Tim:

The concept for the stylish Apple retail locations you see today was developed by retail expert Jon Swanson, who collaborated closely with Steve Jobs.

Tim Cruiter is a graphic designer who produced the recognizable bouncing lamp video that appears at the start of every Pixar film.

The dynamic duo realized their vision.

“What if you could combine the convenience of online shopping with the confidence of the conventional brick-and-mortar store experience.”

Adore's mobile store concept combined traditional retail with online shopping.

Adore brought joy to 70+ cities and 4 countries over 7 years, including the US, Canada, and the UK.

Being employed on the ground floor, with world dominance and IPO on the horizon, was exciting.

I started as an Adore Expert.

I delivered cell phones, helped consumers set them up, and sold add-ons.

As the company grew, I became a Virtual Learning Facilitator and trained new employees across North America using Zoom.

In this capacity, I gained corporate insider knowledge. I worked with the creative team and Jon and Tim.

It's where I saw company foundation fissures. Despite appearances, investors were concerned.

The business strategy was ground-breaking.

Even after seeing my employee stocks fall from a home down payment to $0 (when Adore filed for bankruptcy), it's hard to pinpoint what went wrong.

Solid business model, well-executed.

Jon and Tim's chase for public funding ended in glory.

Here’s the business model in a nutshell:

Buying cell phones is cumbersome. You have two choices:

Online purchase: not knowing what plan you require or how to operate your device.

Enter a store, which can be troublesome and stressful.

Apple, AT&T, and Rogers offered Adore as a free delivery add-on. Customers could:

Have their phone delivered by UPS or Canada Post in 1-2 weeks.

Alternately, arrange for a person to visit them the same day (or sometimes even the same hour) to assist them set up their phone and demonstrate how to use it (transferring contacts, switching the SIM card, etc.).

Each Adore Expert brought a van with extra devices and accessories to customers.

Happy customers.

Here’s how Adore and its partners made money:

Adores partners appreciated sending Experts to consumers' homes since they improved customer satisfaction, average sale, and gadget returns.

**Telecom enterprises have low customer satisfaction. The average NPS is 30/100. Adore's global NPS was 80.

Adore made money by:

a set cost for each delivery

commission on sold warranties and extras

Consumer product applications seemed infinite.

A proprietary scheduling system (“The Adore App”), allowed for same-day, even same-hour deliveries.

It differentiates Adore.

They treated staff generously by:

Options on stock

health advantages

sales enticements

high rates per hour

Four-day workweeks were set by experts.

Being hired early felt like joining Uber, Netflix, or Tesla. We hoped the company's stocks would rise.

Exciting times.

I smiled as I greeted more than 1,000 new staff.

I spent a decade in retail before joining Adore. I needed a change.

After a leap of faith, I needed a lifeline. So, I applied for retail sales jobs in the spring of 2019.

The universe typically offers you what you want after you accept what you need. I needed a job to settle my debt and reach $0 again.

And the universe listened.

After being hired as an Adore Expert, I became a Virtual Learning Facilitator. Enough said.

After weeks of economic damage from the pandemic.

This employment let me work from home during the pandemic. It taught me excellent business skills.

I was active in brainstorming, onboarding new personnel, and expanding communication as we grew.

This job gave me vital skills and a regular paycheck during the pandemic.

It wasn’t until January of 2022 that I left on my own accord to try to work for myself again — this time, it’s going much better.

Adore was perfect. We valued:

Connection

Discovery

Empathy

Everything we did centered on compassion, and we held frequent Justice Calls to discuss diversity and work culture.

The last day of onboarding typically ended in tears as employees felt like they'd found a home, as I had.

Like all nice things, the wonderful vibes ended.

First indication of distress

My first day at the workplace was great.

Fun, intuitive, and they wanted creative individuals, not salesman.

While sales were important, the company's vision was more important.

“To deliver joy through life-changing mobile retail experiences.”

Thorough, forward-thinking training. We had a module on intuition. It gave us role ownership.

We were flown cross-country for training, gave feedback, and felt like we made a difference. Multiple contacts responded immediately and enthusiastically.

The atmosphere was genuine.

Making money was secondary, though. Incredible service was a priority.

Jon and Tim answered new hires' questions during Zoom calls during onboarding. CEOs seldom meet new hires this way, but they seemed to enjoy it.

All appeared well.

But in late 2021, things started changing.

Adore's leadership changed after its IPO. From basic values to sales maximization. We lost communication and were forced to fend for ourselves.

Removed the training wheels.

It got tougher to gain instructions from those above me, and new employees told me their roles weren't as advertised.

External money-focused managers were hired.

Instead of creative types, we hired salespeople.

With a new focus on numbers, Adore's uniqueness began to crumble.

Via Zoom, hundreds of workers were let go.

So.

Early in 2022, mass Zoom firings were trending. A CEO firing 900 workers over Zoom went viral.

Adore was special to me, but it became a headline.

30 June 2022, Vice Motherboard published Watch as Adore's CEO Fires Hundreds.

It described a leaked video of Jon Swanson laying off all staff in Canada and the UK.

They called it a “notice of redundancy”.

The corporation couldn't pay its employees.

I loved Adore's underlying ideals, among other things. We called clients Adorers and sold solutions, not add-ons.

But, like anything, a company is only as strong as its weakest link. And obviously, the people-first focus wasn’t making enough money.

There were signs. The expansion was presumably a race against time and money.

Adore finally declared bankruptcy.

Adore declared bankruptcy 3 months after going public. It happened in waves, like any large-scale fall.

Initial key players to leave were

Then, communication deteriorated.

Lastly, the corporate culture disintegrated.

6 months after leaving Adore, I received a letter in the mail from a Law firm — it was about my stocks.

Adore filed Chapter 11. I had to sue to collect my worthless investments.

I hoped those stocks will be valuable someday. Nope. Nope.

Sad, I sighed.

$1.2 billion firm gone.

I left the workplace 3 months before starting a writing business. Despite being mediocre, I'm doing fine.

I got up as Adore fell.

Finally, can we scale kindness?

I trust my gut. Changes at Adore made me leave before it sank.

Adores' unceremonious slide from a top startup to bankruptcy is astonishing to me.

The company did everything perfectly, in my opinion.

first to market,

provided excellent service

paid their staff handsomely.

was responsible and attentive to criticism

The company wasn't led by an egotistical eccentric. The crew had centuries of cumulative space experience.

I'm optimistic about the future of work culture, but is compassion scalable?