How to make >$800 million in crypto attacking the once 3rd-largest stablecoin, Soros style

Everyone is talking about the $UST attack right now, including Janet Yellen. But no one is talking about how much money the attacker made (or how brilliant it was). Let's dig in.

Our story starts in late March, when the Luna Foundation Guard (or LFG) starts buying BTC to help back $UST. LFG started accumulating BTC on 3/22, and by March 26th had a $1bn+ BTC position. This is leg #1 of what made this trade (or attack) brilliant.

The second leg came with the Frax 4pool announcement for $UST on April 1st. This let the attacker execute the strategy in a capital-efficient way: liquidity in Curve's 3pool would be lower ahead of the 4pool launch, and once it was thin, the attack was on.

We don't know when the attacker borrowed 100k BTC to start the position, other than that it was presumably sold into Kwon's buying (still speculation). LFG bought 15k BTC between March 27th and April 11th, so let's just take the average price between these dates ($42k).

That gives a ~$4.2bn short position (100k BTC at $42k). Over the same period, the attacker builds a $1bn OTC position in $UST. The stage is now set to create a run on the bank and get paid on the BTC short. In anticipation of the 4pool, LFG initially removes $150mm from 3pool liquidity.

The liquidity was pulled on 5/8, and then the attacker used $350mm of UST to drain Curve liquidity (while LFG pulled another $100mm of liquidity).

But this only starts the de-pegging (down to 0.972 at the lows). LFG begins selling $BTC to defend the peg, causing downward pressure on BTC while the run on $UST was just getting started.

With the Curve liquidity drained, the attacker used the remainder of their $1bn OTC $UST position ($650mm or so) to start offloading on Binance. As withdrawals from Anchor turned from concern into panic, a real de-peg followed as people fled for the exits.

So LFG is selling $BTC to restore the peg while the attacker is selling $UST on Binance. Eventually the chain gets congested and the CEXs suspend withdrawals of $UST, fueling the bank run panic. $UST de-pegs to 60c at the bottom, while $BTC bleeds out.

The crypto community panics as they wonder how much $BTC will be sold to keep the peg. There are liquidations across the board, and LUNA pukes because of its redemption mechanism (the attacker very well could have shorted LUNA as well). BTC fell 25% from $42k on 4/11 to $31.3k.

So how much did our attacker make? Obviously, there are no details on where they covered, but if they were able to cover (buy back) the entire position at ~$32k, they made $952mm on the short.

On the $350mm of $UST dumped into Curve, I don't think they took much of a loss; let's assume 3%, or about $11mm. And let's assume all the Binance dumps were done at 80c; that's another $125mm cost of doing business. That leaves a grand total profit of about $815mm (before borrow costs).
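The back-of-the-envelope math above can be checked with a short script. This is a minimal sketch, and `estimateAttackPnl` is a hypothetical helper. Two inputs are assumptions derived from the article rather than stated in it. The $32,480 cover price is the level implied by a $952mm profit on 100k BTC shorted at $42k. The $625mm Binance amount is what a $125mm cost implies at 80c; the full "$650mm or so" would cost about $130mm. An exact 3% Curve loss is $10.5mm rather than the rounded $11mm.

```javascript
// Back-of-the-envelope P&L for the hypothesized UST attack.
// All inputs are the article's assumptions, not observed trade data.
// Dollar amounts ending in M are in millions of USD.
function estimateAttackPnl({
  btcShorted,       // BTC borrowed and sold short
  entryPrice,       // assumed average short entry, USD per BTC
  coverPrice,       // assumed buy-back price, USD per BTC
  curveDumpedM,     // UST sold into Curve, $mm
  curveLossPct,     // assumed loss on the Curve dumps, percent
  binanceDumpedM,   // UST sold on Binance, $mm
  binanceFillCents, // assumed average fill on Binance, cents per UST
}) {
  // Short profit: (entry - cover) per BTC, converted to $mm.
  const shortPnlM = (btcShorted * (entryPrice - coverPrice)) / 1e6;
  // Cost of selling UST below its $1 peg.
  const curveCostM = (curveDumpedM * curveLossPct) / 100;
  const binanceCostM = (binanceDumpedM * (100 - binanceFillCents)) / 100;
  return {
    shortPnlM,
    curveCostM,
    binanceCostM,
    netM: shortPnlM - curveCostM - binanceCostM, // before borrow costs
  };
}

const result = estimateAttackPnl({
  btcShorted: 100_000,
  entryPrice: 42_000,
  coverPrice: 32_480, // implied by the $952mm figure
  curveDumpedM: 350,
  curveLossPct: 3,
  binanceDumpedM: 625, // implied by the $125mm cost at 80c
  binanceFillCents: 80,
});
console.log(result);
```

With these inputs the net comes out at about $816mm, within rounding of the article's ~$815mm. Changing `coverPrice` or `binanceFillCents` shows how sensitive the estimate is to the speculative parts of the story.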

BTC was the perfect playground for the trade, as the liquidity was there to pull it off. The kicker was having LFG involved in BTC, and foreseeing that it would sell to keep the peg (and keep LUNA from dying).

Lastly, low liquidity in the 3pool ahead of the 4pool launch allowed the attacker to drain it with only $350mm, causing the broader panic in both BTC and $UST. Any shorts on LUNA would've added a lot of P&L here as well, with LUNA down 65% since 5/7.

And for the reply guys, yes I know a lot of this involves some speculation & assumptions. But a lot of money was made here either way, and I thought it would be cool to dive into how they did it.

More on Web3 & Crypto

Ashraful Islam

4 years ago

Clean API Call With React Hooks

Photo by Juanjo Jaramillo on Unsplash

Calling APIs is the most common thing to do in any modern web application. When talking to an API, most of the time we need to do a lot of repetitive things, like getting data from an API call and handling the success or error case.

When making tens or hundreds of API calls, we always have to do those tedious tasks. We can handle them efficiently by putting a higher level of abstraction over those bare-bones API calls, whereas in some small applications we sometimes don’t even care.

The problem comes when we start adding new features on top of existing ones without handling the API calls in an efficient and reusable manner. In that case, we end up with a lot of repetitive API-handling code across the whole application.

In React, there are different approaches for calling an API. Nowadays, we mostly use React hooks. With hooks, it’s possible to handle API calls in a clean and consistent way throughout the application, regardless of its size. So let’s see how we can make a clean and reusable API calling layer using React hooks for a simple web application.

I’m using a code sandbox for this blog which you can get here.

import "./styles.css";
import React, { useEffect, useState } from "react";
import axios from "axios";

export default function App() {
  const [posts, setPosts] = useState(null);
  const [error, setError] = useState("");
  const [loading, setLoading] = useState(false);

  useEffect(() => {
    handlePosts();
  }, []);

  const handlePosts = async () => {
    setLoading(true);
    try {
      const result = await axios.get(
        "https://jsonplaceholder.typicode.com/posts"
      );
      setPosts(result.data);
    } catch (err) {
      setError(err.message || "Unexpected Error!");
    } finally {
      setLoading(false);
    }
  };

  return (
    <div className="App">
      <div>
        <h1>Posts</h1>
        {loading && <p>Posts are loading!</p>}
        {error && <p>{error}</p>}
        <ul>
          {posts?.map((post) => (
            <li key={post.id}>{post.title}</li>
          ))}
        </ul>
      </div>
    </div>
  );
}

I know the example above isn’t the best code but at least it’s working and it’s valid code. I will try to improve that later. For now, we can just focus on the bare minimum things for calling an API.

Here, we fetch posts data from JSONPlaceholder. These are the most common steps we follow when calling an API: requesting the data and handling the loading, success, and error cases.

What would it look like if we called another API from the same component? Let’s see.


Now it’s going insane! To call two simple APIs, we’ve duplicated a lot of code. From a top-level view, the component does nothing but make two GET requests and handle the success and error cases. For each request, it maintains three pieces of state, and that number will keep growing as we add more calls.

Let’s refactor to make the code more reusable with fewer repetitions.

Step 1: Create a Hook for the Redundant API Request Code

Most of the repetition so far is about requesting data, handling the async logic, and handling the error, success, and loading states. How about encapsulating those things inside a hook?

The only unique thing we do inside handleComments and handlePosts is call a different endpoint. Everything else is pretty much the same. So we can create a hook that handles the redundant work for us, and from outside we’ll tell it which API to call.


Here, the hook’s request function is identical to what we were doing in handlePosts and handleComments. The only difference is that it calls an async function, apiFunc, which we provide as a parameter to the hook. This apiFunc is the only part that differs between API calls.
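A framework-agnostic sketch of that bookkeeping may make the idea concrete. It assumes nothing about React: `createApiRequest`, `getState`, and the `onChange` callback are hypothetical names, and in the real hook the state would live in `useState` rather than in a plain object. Like `handlePosts`, it only expects `apiFunc` to resolve to an object with a `data` property, as axios responses do.

```javascript
// Framework-agnostic sketch of what a useApi-style hook centralizes.
// Hypothetical API: in React, `state` would be useState values and
// onChange would be replaced by re-renders.
function createApiRequest(apiFunc, onChange = () => {}) {
  const state = { data: null, error: "", loading: false };

  // Merge a partial update into state and notify any listener.
  const update = (patch) => {
    Object.assign(state, patch);
    onChange({ ...state });
  };

  // Same steps as handlePosts/handleComments; only apiFunc varies.
  const request = async (...args) => {
    update({ loading: true });
    try {
      const result = await apiFunc(...args);
      update({ data: result.data });
    } catch (err) {
      update({ error: err.message || "Unexpected Error!" });
    } finally {
      update({ loading: false });
    }
  };

  return { request, getState: () => ({ ...state }) };
}

// Usage with stubbed API functions instead of real HTTP calls.
const posts = createApiRequest(() =>
  Promise.resolve({ data: [{ id: 1, title: "Hello" }] })
);
const postsDone = posts.request();
// Code before the first await runs synchronously, so loading is already true.
const loadingRightAway = posts.getState().loading;

const broken = createApiRequest(() => Promise.reject(new Error("boom")));
const brokenDone = broken.request();
```

Each caller supplies only the function that differs; the loading, success, and error handling is written once.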

With the hook in place, let’s update the App component like this:


Isn’t the current code much cleaner, without the repeated and duplicated API-handling logic?

Let’s continue from the current code. We can make the App component more elegant. Right now it knows a lot of details about the underlying library used for the API calls, and it shouldn’t. So, here’s the next step…

Step 2: One Component Should Take Just One Responsibility

Our App component knows too much about the API calling mechanism. Its only responsibility should be to request the data; it shouldn’t care how the data is requested under the hood.

We will extract the API client code from the App component. We will also group all the API request code by API resource. Here is our API client (client.js):

import axios from "axios";

const apiClient = axios.create({
  // Later read this URL from an environment variable
  baseURL: "https://jsonplaceholder.typicode.com"
});

export default apiClient;

All API calls for the comments resource go in the following file (api/comments.js):

import client from "./client";

const getComments = () => client.get("/comments");

export default {
  getComments
};

All API calls for the posts resource go in the following file (api/posts.js):

import client from "./client";

const getPosts = () => client.get("/posts");

export default {
  getPosts
};

Finally, the App component looks like the following:

import "./styles.css";
import React, { useEffect } from "react";
import commentsApi from "./api/comments";
import postsApi from "./api/posts";
import useApi from "./hooks/useApi";

export default function App() {
  const getPostsApi = useApi(postsApi.getPosts);
  const getCommentsApi = useApi(commentsApi.getComments);

  useEffect(() => {
    getPostsApi.request();
    getCommentsApi.request();
  }, []);

  return (
    <div className="App">
      {/* Post List */}
      <div>
        <h1>Posts</h1>
        {getPostsApi.loading && <p>Posts are loading!</p>}
        {getPostsApi.error && <p>{getPostsApi.error}</p>}
        <ul>
          {getPostsApi.data?.map((post) => (
            <li key={post.id}>{post.title}</li>
          ))}
        </ul>
      </div>
      {/* Comment List */}
      <div>
        <h1>Comments</h1>
        {getCommentsApi.loading && <p>Comments are loading!</p>}
        {getCommentsApi.error && <p>{getCommentsApi.error}</p>}
        <ul>
          {getCommentsApi.data?.map((comment) => (
            <li key={comment.id}>{comment.name}</li>
          ))}
        </ul>
      </div>
    </div>
  );
}

Now the App component doesn’t know anything about how the APIs get called. If tomorrow we want to switch the API calling library from axios to fetch or anything else, the App component code will not be affected. We only need to change client.js. This is the beauty of abstraction.
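To illustrate that claim, here is a minimal sketch of a fetch-based replacement for client.js. `createFetchClient` and its `fetchImpl` parameter are hypothetical; the parameter exists only so the client can run against a stub instead of the network. The design choice that matters is that `get` resolves to `{ data }`, the same shape an axios response has, so `postsApi.getPosts` and the code that reads `result.data` would not need to change.

```javascript
// Hypothetical drop-in for client.js built on fetch instead of axios.
// get() resolves to { data } like axios does, so callers reading
// result.data keep working. fetchImpl defaults to the global fetch
// (available in browsers and Node 18+).
function createFetchClient(baseURL, fetchImpl = globalThis.fetch) {
  return {
    async get(path) {
      const response = await fetchImpl(baseURL + path);
      // axios rejects on non-2xx statuses by default; fetch does not,
      // so check explicitly to keep the same error behavior.
      if (!response.ok) {
        throw new Error(`Request failed with status ${response.status}`);
      }
      return { data: await response.json() };
    },
  };
}

// Usage with a stubbed fetch, so no network call is made.
const fakeFetch = async (url) => ({
  ok: true,
  status: 200,
  json: async () => [{ id: 1, title: `from ${url}` }],
});
const client = createFetchClient("https://jsonplaceholder.typicode.com", fakeFetch);
const getPosts = () => client.get("/posts"); // same shape as api/posts.js
const postsPromise = getPosts();

// A stub that answers 404 shows the error path.
const failingClient = createFetchClient("https://example.test", async () => ({
  ok: false,
  status: 404,
  json: async () => null,
}));
const failedPromise = failingClient
  .get("/missing")
  .then(() => "resolved", (err) => err.message);
```

Only the client module changes; the per-resource files keep calling `client.get(...)` exactly as before.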

Apart from the abstraction of API calls, the App component isn’t the right place to show the lists of posts and comments. It’s a high-level component, and it shouldn’t handle such low-level rendering details.

So we should move these display-related pieces into lower-level components. I placed them directly in the App component only for demonstration purposes, so as not to get distracted by component composition.

Final Thoughts

The React library gives us the flexibility to use any third-party library the application needs. Because it doesn’t impose a predefined architecture, different teams and developers have adopted different approaches to building applications with React. None of them is inherently good or bad; we choose our development practices based on our needs and preferences. One thing that holds regardless of those choices is the need to write clean, maintainable code.

Dylan Smyth

4 years ago

10 Ways to Make Money Online in 2022

As a tech-savvy person (and software engineer) or just a casual technology user, I'm sure you've asked yourself this countless times: How do I make money online, and how do I make money with my PC or Mac?

You're in luck! Today, I will list the ten easiest ways to make money online in 2022.

1. Using the gig economy

There are many websites on the internet that allow you to earn extra money using skills and equipment that you already own.

I'm referring to the gig economy. It's a great way to earn a steady side income from the comfort of your own home. Some sites offer premium subscriptions that increase sales and unlock features like bidding on more proposals.

Some of these are:

- Freelancer

- Upwork

- Fiverr (⭐ my personal favorite)

- TaskRabbit

2. Mineprize

MINEPRIZE is a great way to make money online. What's more, you don't need to do anything! You earn money by lending your idle CPU power to MINEPRIZE.

To register with MINEPRIZE, all you need is an email address and a password. Let MINEPRIZE use your resources, and watch the money roll in! You can earn up to $100 per month by letting your computer calculate. That's insane.

3. Writing

“O Romeo, Romeo, why art thou Romeo?” Okay, I admit that not all writing is Shakespearean. To be a copywriter, you'll need to be fluent in English. Thankfully, we don't have to use typewriters anymore.

Writing is a skill that can earn you a lot of money (claps for the rhyme).

Here are a few ways you can make money typing on your fancy keyboard:

- Self-publish a book

- Write scripts for video creators

- Write for social media

- Book-checking

- Content marketing help

What a list within a list!

4. Coding

Yes, kids. You've probably coded before if you understand this:

print("hello world");

Computational thinking (or coding) is one of the most lucrative ways to earn extra money, or even as a main source of income.

Of course, there are hardcore coders (like me) who write everything line by line, in binary digits... okay, that last part is a bit exaggerated.

But you can also make money by writing websites or apps or creating low code or no code platforms.


Some low-code platforms:

- AppSheet: spreadsheets to apps; no-code app creation and task automation for your team (www.appsheet.com)

- Zoho Creator: a low-code platform and business app creator (www.zoho.com)

- TrueSource: sell your data with no code needed; upload data, configure your product, and earn in minutes (www.truesource.io)

Cool, huh?

5. Content Creation

If we use the internet correctly, we can gain unfathomable wealth and extra money. But this one is a bit more difficult. Unlike some of the other items on this list, it takes a lot of time up front.

I'm referring to sites like YouTube and Medium. They're a great way to earn money both passively and actively. With the likes of Jake and Logan Paul, PewDiePie (a.k.a. Felix Kjellberg), and others, it's never too late to become a millionaire on YouTube. YouTubers are always rising to the top with great content.

6. NFTs and Cryptocurrency

It is now possible to amass large sums of money by buying and selling digital assets on NFT marketplaces and cryptocurrency exchanges. Binance's Initial Game Offering rewards early investors who produce the best results.

One awesome game sold a piece of its land for US$7.2 million! It's Axie Infinity. It's free and available on Google Play and the App Store.

7. Affiliate Marketing

Affiliate marketing is a form of advertising where businesses pay others (like bloggers) to promote their goods and services. Here's an example. I write a blog (like this one) and post an affiliate link to an item I recommend buying — say, a camera — and if you buy the camera, I get a commission!

These programs pay well:

- Elementor

- AWeber

- Sendinblue

- ConvertKit

- Leadpages

- GetResponse

- SEMRush

- Fiverr

- Pabbly

8. Start a blog

Now, if you're a writer or just really passionate about something or a niche, blogging could potentially monetize that passion!

Create a blog about anything you can think of. It's okay to start right here on Medium, as I did.

9. Dropshipping

Dropshipping is ridiculously easy to set up, but some people find it difficult to maintain.

Luckily, Shopify has made setting up an online store a breeze. Drop-shipping from Alibaba and DHGate is quite common. You've got a winner if you can find a local distributor willing to let you drop ship their product!

10. Set up an Online Course

If you have a skill and can articulate it, online education is for you.

Skillshare, Pluralsight, and Coursera have all made inroads in recent years, upskilling people with courses that YOU can create and earn from.

That's it for today! Please share if you liked this post. If not, well —

Jeff John Roberts

3 years ago

Jack Dorsey and Jay-Z Launch 'Bitcoin Academy' in Brooklyn rapper's home

The new Bitcoin Academy will teach Jay-Z's Marcy Houses neighbors "What is Cryptocurrency."

Jay-Z grew up in Brooklyn's Marcy Houses. The rapper and Block CEO Jack Dorsey are giving back to his hometown by creating the Bitcoin Academy.

The Bitcoin Academy will offer online and in-person classes, including "What is Money?" and "What is Blockchain?"

The program will provide participants with a mobile hotspot and a small amount of Bitcoin for hands-on learning.

Students will receive dinner and two evenings of instruction until early September. The Shawn Carter Foundation will help with on-the-ground instruction.

Jay-Z and Dorsey announced the program Thursday morning. It will begin at Marcy Houses but may be expanded.

Crypto Blockchain Plug and Black Bitcoin Billionaire, which has received a grant from Block, will teach the classes.

Jay-Z, Dorsey reunite

Jay-Z and Dorsey have previously worked together to promote a Bitcoin and crypto-based future.

In 2021, Dorsey's Block (then Square) acquired the rapper's streaming music service Tidal, which they propose using for NFT distribution.

Dorsey and Jay-Z launched an endowment in 2021 to fund Bitcoin development in Africa and India.

Dorsey is funding the new Bitcoin Academy out of his own pocket (as is Jay-Z), but he's also pushed crypto-related charitable endeavors at Block, including a $5 million fund backed by corporate Bitcoin interest.

This post is a summary. Read full article here

You might also like

Alex Mathers

3 years ago

400 articles later, nobody bothered to read them.

Writing for readers:

14 years of daily writing.

I post practically everything on social media. I authored hundreds of articles, thousands of tweets, and numerous volumes to almost no one.

Tens of thousands of readers regularly praise me.

I despised writing. I'm stuck now.

I've learned what readers like and what doesn't.

Here are some essential guidelines for writing with impact:

Readers won't understand your work if you can't.

Though obvious, this tripped me up. Share your truths.

Stories engage human brains.

Showing the journey of a person from worm to butterfly inspires the human spirit.

Overthinking hinders powerful writing.

The best ideas come from inner understanding in between thoughts.

Avoid writing to find it. Write.

Writing a masterpiece isn't motivating.

Simplify: write for five minutes at a time, in easy, entertaining, step-by-step chunks.

Good writing requires a willingness to make mistakes.

So write loads of garbage that you can edit into a good piece.

Courageous writing.

A courageous story will move readers. Personal experience is best.

Go where few dare.

Templates, outlines, and boundaries help.

Limitations enhance writing.

Excellent writing is straightforward and readable, removing all the unnecessary fat.

Use five words instead of nine.

Use ordinary words instead of uncommon ones.

Readers desire relatability.

Too much perfection will turn them off.

Write to solve an issue if you can't think of anything to write.

Or read for inspiration. The best authors read.

Every tweet, thread, and novel must have a central idea.

What's its point?

Without one, writing gets confusing.

Don't direct your reader.

Readers quit reading. Demonstrate, describe, and relate.

Even if no one responds, have fun. If you hate writing it, the reader will too.

Aniket

3 years ago

Yahoo could have purchased Google for $1 billion

Let's see this once-dominant IT corporation crumble.

What's the capital of Kazakhstan? If you don't know the answer, you can probably find it by Googling. Google Search returned results for Nur-Sultan in 0.66 seconds.

Google is the best search engine I've ever used. Did you know another search engine ruled the Internet? I'm sure you guessed Yahoo!

Google's friendly UI and wide selection of services make it my top choice. Let's explore Yahoo's decline.

Yahoo!

YAHOO stands for Yet Another Hierarchically Organized Oracle. Jerry Yang and David Filo established Yahoo.

Yahoo is primarily a search engine and email provider. It offers News and an advertising platform. It was a popular website in 1995 that let people search the Internet directly. Yahoo began offering free email in 1997 by acquiring RocketMail.

According to one study, Yahoo used Google's search engine technology from 2000 until 2004, when it switched to its own.

Yahoo! rejected buying Google for $1 billion

Larry Page and Sergey Brin, Google's founders, approached Yahoo in 1998 to sell Google for $1 billion so they could focus on their studies. Yahoo denied the offer, thinking it was overvalued at the time.

Yahoo realized its error and offered Google $3 billion in 2002, but Google demanded $5 billion since it was more valuable. Yahoo thought $5 billion was overpriced for the existing market.

In 2022, Google is worth $1.56 Trillion.

What happened to Yahoo!

Yahoo refused to buy Google, and Google's valuation rose, making a purchase unfeasible.

Yahoo started losing users when Google launched Gmail. Google's UI was far cleaner than Yahoo's.

Yahoo offered $1 billion to buy Facebook in July 2006, but Zuckerberg and the board sought $1.1 billion. Yahoo rejected, and Facebook's valuation rose, making it difficult to buy.

Yahoo was losing users daily while Google and Facebook gained many. Google and Facebook's popularity soared. Yahoo lost value daily.

Microsoft offered $45 billion to buy Yahoo in February 2008, but Yahoo declined. Microsoft increased its bid to $47 billion after Yahoo said it was too low, but Yahoo rejected it. Then Microsoft rejected Yahoo’s 10% bid increase in May 2008.

In 2015, Verizon bought Yahoo for $4.5 billion, and Apollo Global Management bought 90% of Yahoo's shares for $5 billion in May 2021. Verizon kept 10%.

Yahoo's opportunity to acquire Google and Facebook could have been a turning moment. It declined Microsoft's $45 billion deal in 2008 and was sold to Verizon for $4.5 billion in 2015. Poor decisions and lack of vision caused its downfall. Yahoo's aim wasn't obvious and it didn't stick to a single domain.

Hence, a corporation needs a clear vision and a leader who can see its future.

Liked this article? Join my tech and programming newsletter here.

Caspar Mahoney

3 years ago

Changing Your Mindset From a Project to a Product

What does a product mindset look like, and how does it differ from a project mindset?

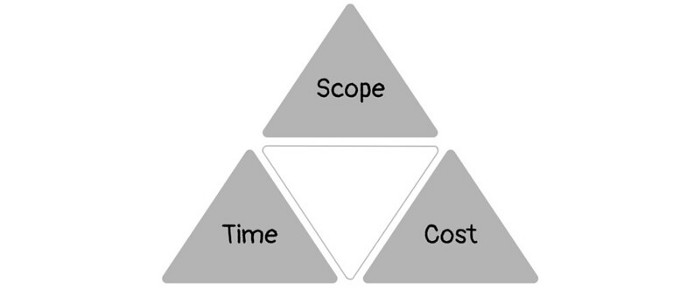

The 1950s spawned the Iron Triangle. Project people everywhere know it and live by it. In stakeholder meetings, it is used to stretch the timeframe, request additional money, or reduce scope.

Quality was added to this triangle as things matured.

Quality was intended to be transformative, but none of these principles addressed why we conduct projects.

Value and benefits are key.

Product value is quantified by ROI, revenue, profit, savings, or other metrics. For me, every project or product delivery is about value.

Most project managers, especially those schooled 5-10 years or more ago (thousands working in huge corporations worldwide), understand the world in terms of the iron triangle. What does that imply? They worry about:

a) having enough time to get the thing done.

b) having enough resources (budget) to get the thing done.

c) having enough scope to fit within (a) and (b). Note: they never seem to have too little scope; I have never seen it happen, although theoretically it could.

Boom—iron triangle.

To make the triangle work, project managers use formal governance (Steering) to move those levers. Short on time? Increase money, reduce scope, or both. Lacking funds? Increase time, reduce scope, or both.

In current product development, shifting each item considerably may not yield value/benefit.

It can even be harmful. This approach will fail because it deprioritizes Value/Benefit by focusing the major stakeholders (Steering participants) and delivery team(s) on Time, Scope, and Budget constraints.

Pre-agile, this problem was severe, and IT projects failed wildly.

Value, or benefit, is central to the product method. Product managers spend most of their time planning value-delivery paths.

Product people consider risk, schedules, scope, and budget, but value comes first. Let me illustrate.

Imagine managing internal products in an enterprise. Your core customer team needs a quick text record of a chat to fix a problem. They want the feature added to a product you're building because they think it's the best place for it.

With a project mindset, I might say:

“OK, I have budget, as this is an existing project due to run for a year. This is a new requirement to add to the features we’re already building. I think I can keep the deadline and include this scope, as it sounds related to the feature set we’re building to give the desired result.”

This thinking revolves around Scope, Time, and Budget.

Since the request meets those criteria, a project manager will likely approve it. If they have a backlog, they may add it and start speccing it out on the assumption that it will be built.

Instead, think like a product person:

What problem does this feature idea solve? Is that problem relevant to the product I am building? Can that problem be solved quicker or better via another route? Is it the most valuable problem to solve now? Is the problem space aligned with our current or future strategy, or do I need to alter or update the strategy?

A product mindset allows you to focus on timing, resource/cost, feasibility, feature detail, and so on after answering the aforementioned questions.

The above oversimplifies things; two further differences matter.

Leadership in discovery

Project managers are facilitators of ideas. This is as far as they normally go in the ‘idea’ space.

Business Requirements collection in classic project delivery requires extensive upfront documentation.

Agile project delivery analyzes requirements iteratively.

However, the project manager is a facilitator/planner first and foremost, therefore topic knowledge is not expected.

I mean business domain, not technical domain (to confuse matters, it is true that in some instances, it can be both technical and business domains that are important for a single individual to master).

Product managers are domain experts. If they are new or still in training, they become one.

They lead discovery.

Product Manager-led discovery is much more than requirements gathering.

Requirements gathering involves a Business Analyst interviewing people and documenting their requests.

The project manager calculates what fits and what doesn't using their Iron Triangle (presumably in their head) and reports back to Steering.

What would a project manager do if this requirements-gathering exercise failed to identify the requirements, or if they were bewildered by the project's requirements and scope?

They would tell Steering they need a Business SME or Business Lead assigned, or more of that person's time.

Product discovery requires the Product Manager's subject knowledge and a new mindset.

How should a Product Manager handle confusing requirements?

Product Managers handle these challenges with their talents and tools. They use their own knowledge to fill in ambiguity, but they have the discipline to validate those assumptions.

To define the problem, they may perform qualitative or quantitative primary research.

They might discuss with UX and Engineering on a whiteboard and test assumptions or hypotheses.

Do Product Managers escalate confusing requirements to Steering/Senior leaders? No; they fix them themselves.

Product managers raise unclear strategy and outcomes to senior stakeholders. Open talks, soft skills, and data help them do this. They rarely raise requirements since they have their own means of handling them without top stakeholder participation.

Discovery is greenfield, exploratory, research-based, and needs higher-order stakeholder management, user research, and UX expertise.

Product Managers don't merely aid discovery; they lead it. They will not leave customer/user engagement to a Business Analyst, although a business analyst could help administratively. In fact, many product organizations discourage business analysts, relying instead on PM, UX, and engineer involvement with end users.

The Product Manager must drive user interaction, research, ideation, and problem analysis, therefore a Product professional must be skilled and confident.

Creating vs. receiving and having an entrepreneurial attitude

Product novices and project managers focus on details rather than the big picture. Project managers prefer spreadsheets to strategy whiteboards and vision statements.

These folks ask their manager or senior stakeholders, "What should we do?"

They then elaborate (in Jira, in XLS, in Confluence or whatever).

They want that plan populated fast because it reduces uncertainty about what's going on and who's supposed to do what.

Skilled Product Managers don't just ask folks, "Should we?"

They say, "I suggest this," or at the very least, "Senior stakeholders, here are some options." After asking and researching, they determine what value this product adds, what problems it solves, and what behavior it changes.

Therefore, to move into Product, you need to broaden your view and have courage in your ability to discover ideas, find insightful pieces of information, and collate them to form a valuable plan of action. You are constantly defining RoI and building Business Cases, so much so that you no longer create documents called Business Cases, it is simply ingrained in your work through metrics, intelligence, and insights.

Product Management is not a free lunch.

No one hands you a plate.

You must prepare both the plates and the food yourself.

In conclusion, Product Managers must make at least three mentality shifts:

You put value first in all things. Time, money, and scope are not as important as knowing what is valuable.

You have faith in your domain knowledge and the ability to direct the search. You facilitate, but you don't just facilitate; you wouldn't want to limit your domain expertise in that manner.

You develop concepts, strategies, and vision. You are not a waiter or an inbox where other people post suggestions; you don't merely ask folks for their opinions and record them. Instead, you excel at giving physical form to things that aren't clearly spoken or written down.