
Katherine Kornei

3 years ago

NASA's InSight lander has recorded the largest quake ever detected on Mars.

The magnitude 5 quake released 10 times as much energy as the previous record holder.

Any Martians who happen to be reading this should quickly learn how to duck and cover.

NASA's Jet Propulsion Laboratory in Pasadena, California, reported that on May 4, Mars was shaken by a quake of roughly magnitude 5, the largest marsquake detected to date. The shaking lasted more than six hours and released more than 10 times as much energy as the previous record holder.

The event was recorded by NASA's InSight lander, which has been probing the Martian interior since it touched down in 2018 (SN: 11/26/18). The quake's epicenter was probably near Cerberus Fossae, more than 1,000 kilometers from the lander.

Cerberus Fossae is known for its fractured surface and periodic rockfalls, so it makes sense that the ground is moving there, says geophysicist Philippe Lognonné, lead investigator of the Seismic Experiment for Interior Structure, InSight's seismometer. "This is an old crater from a volcanic eruption."

Just as earthquakes reveal Earth's interior structure, marsquakes can be used to probe what lies beneath the Martian surface (SN: 7/22/21). And according to Lognonné, who works at the Institut de Physique du Globe in Paris, there is a lot to learn from this large quake. Because the signal quality is so high, he says, researchers will be able to focus on the details.

Tomas Pueyo

2 years ago

Soon, a Starship Will Transform Humanity

SpaceX's Starship launched last week.

Four minutes in:

SpaceX will succeed. When it does, its sheer size will matter.

Its payload will revolutionize space economics.

Civilization will shift.

We don't yet understand how this will affect space and culture on Earth. Let's unpack it.

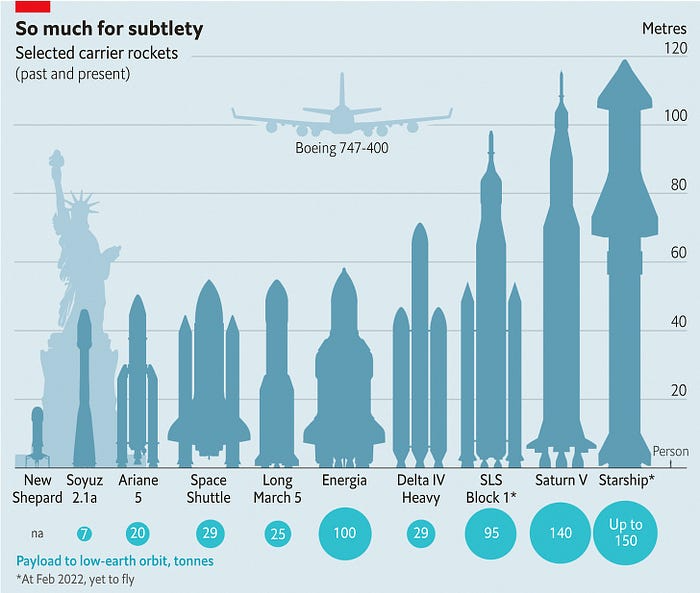

1. The Cost of Space Transportation Has Decreased Exponentially

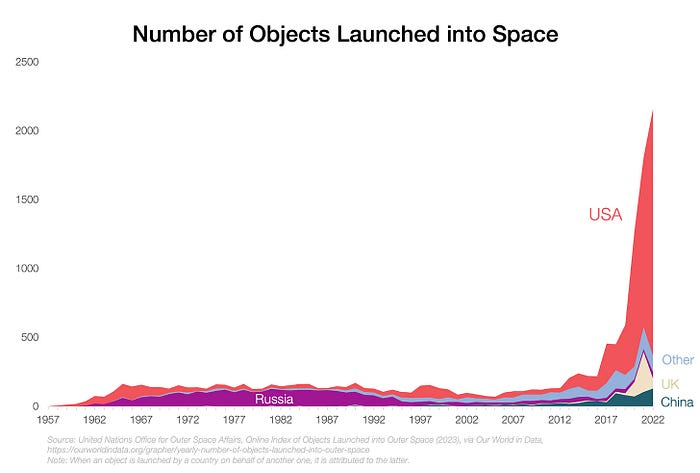

Space launches have increased dramatically in recent years.

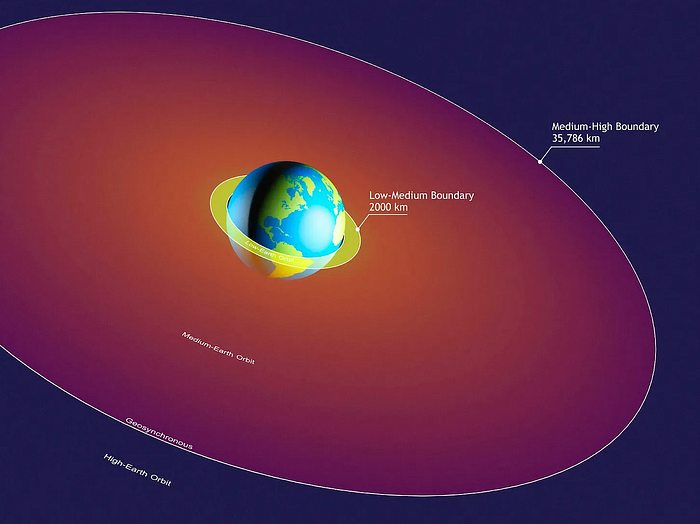

Most payloads go to low Earth orbit (LEO), the green area below:

SpaceX's reusable rockets can carry these payloads to LEO, and each rocket can be reused to launch dozens of them into space.

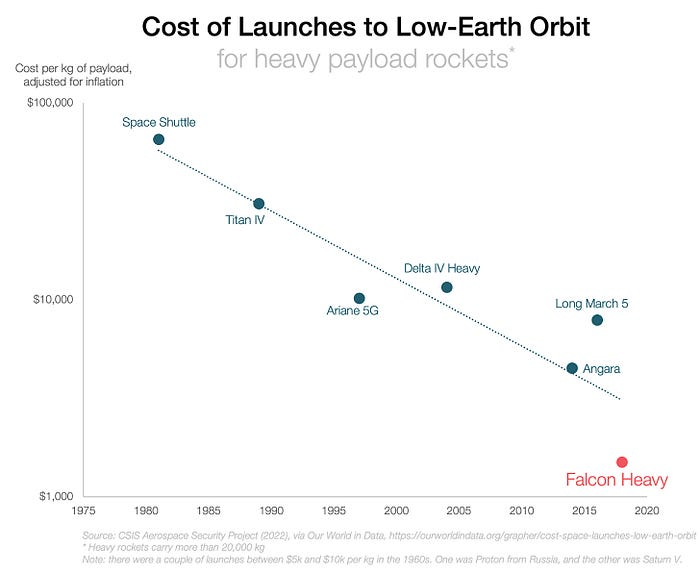

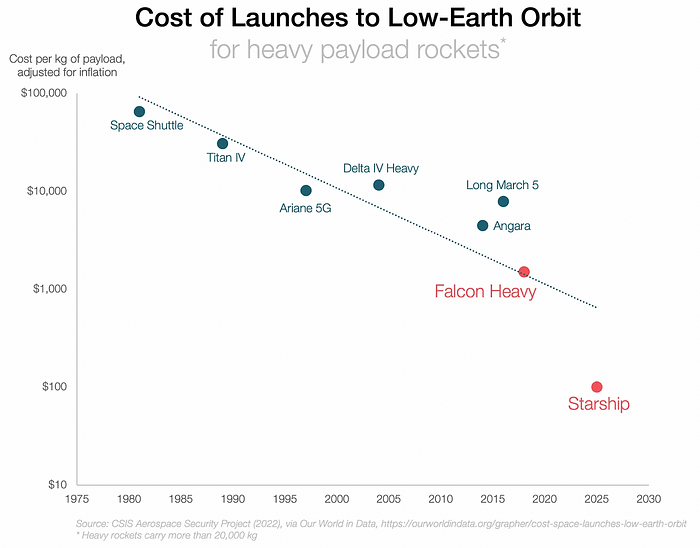

With all these launches, we're not just sending more objects to space; we're sending more volume and mass. That's because, since the 1980s, launching a kilogram of payload to LEO has become far cheaper:

In the 1980s, sending one kilogram to LEO on a large rocket cost over $75,000, so carrying one astronaut cost nearly $5M! Falcon Heavy's price of $1,500/kg is 50 times lower. That's the power of SpaceX's larger, reusable rockets.

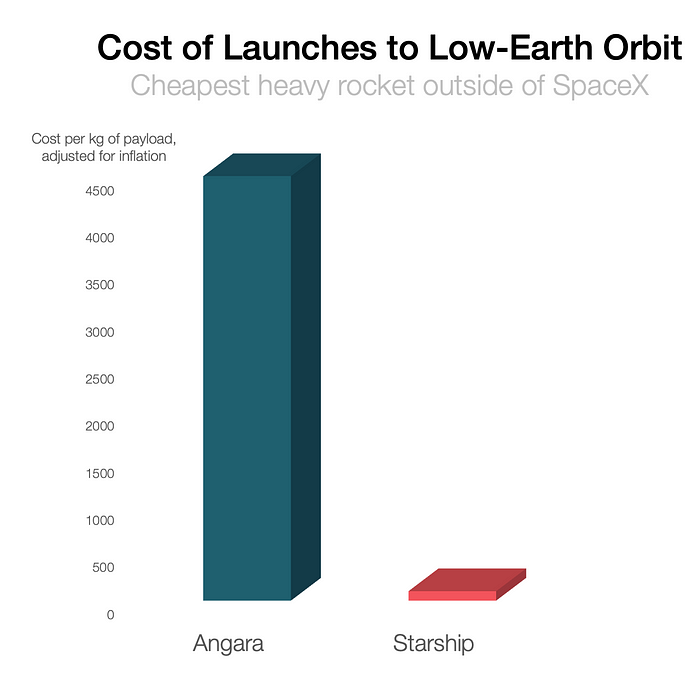

SpaceX's Starship will continue this trend. It can carry over 100 tons to LEO, about 50% more than the current Falcon Heavy, and it is designed for thousands of launches per year. Elon Musk predicts that Falcon Heavy's $1,500/kg cost will plummet to $100/kg in 23 years.
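To put these prices in perspective, here is a small Python sketch that computes the reduction factors and what a full 100-ton payload would cost at each price. The per-kilogram figures are the ones quoted above; treating "100 tons" as 100 metric tons (100,000 kg) is our assumption, and the Starship figure is Musk's projection, not an observed price.

```python
# Launch cost per kilogram to LEO, in US dollars, as quoted in the text.
COST_PER_KG_USD = {
    "1980s large rocket": 75_000,
    "Falcon Heavy": 1_500,
    "Starship (Musk's projection)": 100,
}


def reduction_factor(old_cost: float, new_cost: float) -> float:
    """How many times cheaper the new cost per kilogram is than the old one."""
    return old_cost / new_cost


def payload_cost_usd(cost_per_kg: float, metric_tons: float) -> float:
    """Total launch cost for a payload, assuming 1 metric ton = 1,000 kg."""
    return cost_per_kg * metric_tons * 1_000


if __name__ == "__main__":
    old = COST_PER_KG_USD["1980s large rocket"]
    heavy = COST_PER_KG_USD["Falcon Heavy"]
    starship = COST_PER_KG_USD["Starship (Musk's projection)"]
    print(f"1980s -> Falcon Heavy: {reduction_factor(old, heavy):.0f}x cheaper")
    print(f"Falcon Heavy -> Starship: {reduction_factor(heavy, starship):.0f}x cheaper")
    # What a full 100-ton payload would cost at each quoted price.
    for name, price in COST_PER_KG_USD.items():
        print(f"100 t at {name} prices: ${payload_cost_usd(price, 100):,.0f}")
```

With these inputs, the 1980s-to-Falcon-Heavy drop comes out to 50x, matching the text, and Musk's projected price would be a further 15x cut, putting a 100-ton payload at $10 million instead of $150 million.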

In context:

People underestimate this.

2. The Benefits of Affordable Transportation

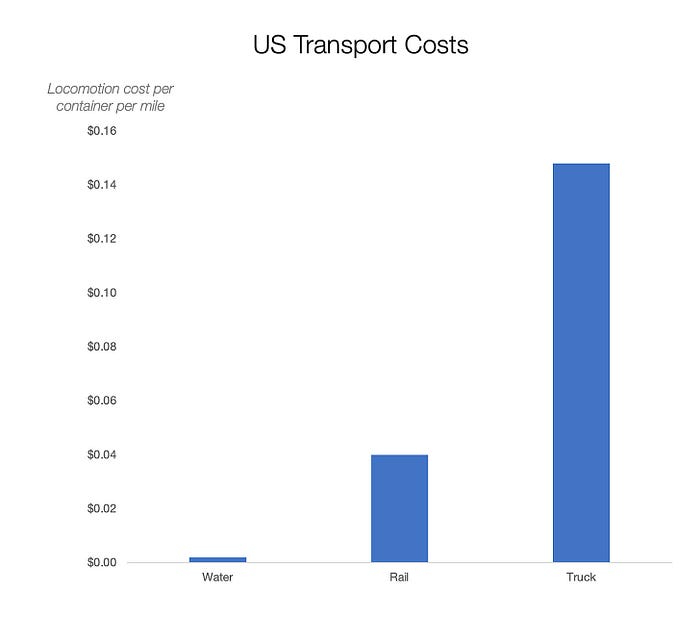

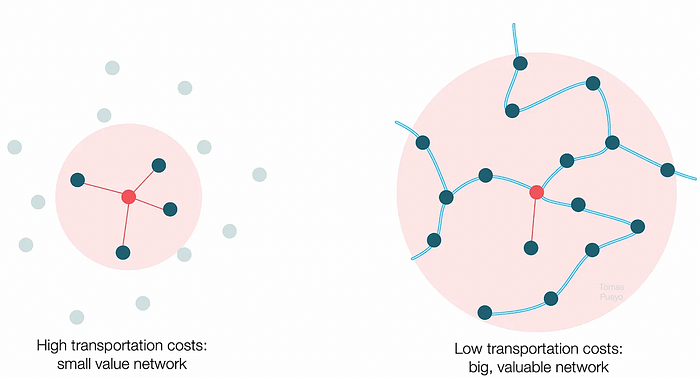

Compare Earth's transportation costs:

It's no coincidence that the US and Northern Europe, the wealthiest regions, also have the most navigable inland waterways.

Why does that matter? Because sea transportation is cheaper than land transportation, and inland waterways are even better than the sea: weather is less of an issue, currents can be controlled, and a river serves two banks, while coastal shipping serves only one shore.

Rivers are so valuable that in France, for example, population density follows the river systems. Cheap transportation brought people and money to rivers, especially to their confluences.

Why did people cluster around rivers?

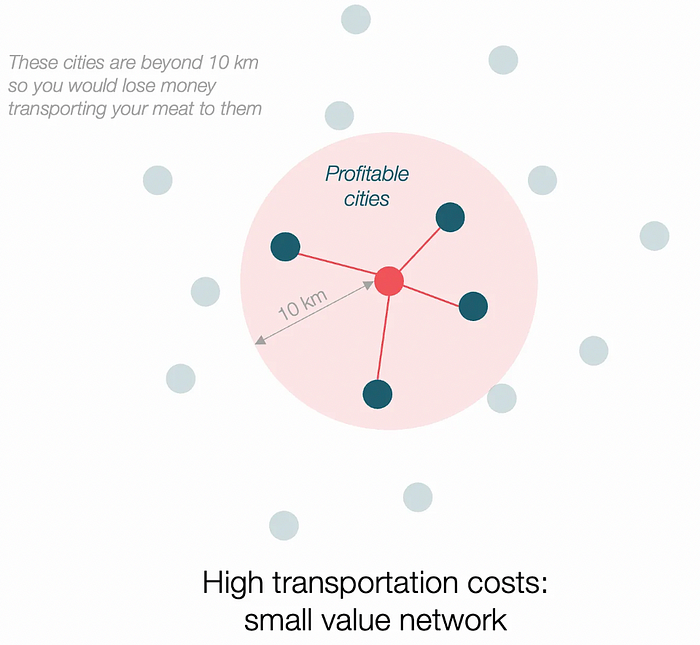

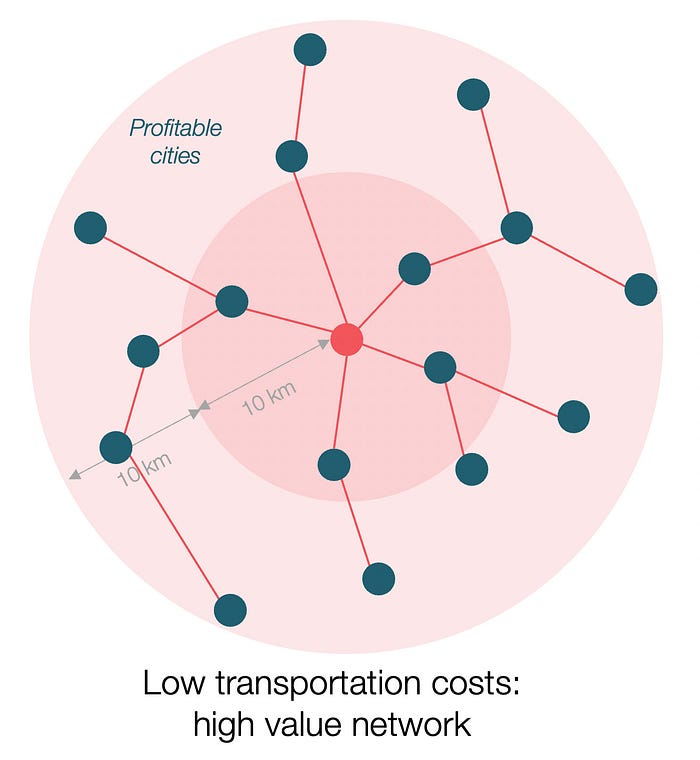

Imagine you sell meat for $10 per kilogram, and transporting one kilogram one kilometer costs $1. Your margin shrinks by $1 for every kilometer, so you can only ship within 10 kilometers. Say that leaves you only four cities to trade with:

What happens if your transportation cost is halved, to $0.50 per kilogram per kilometer? You now earn higher margins with every city you already traded with. More importantly, you can reach markets up to 20 km away.

And doubling the distance quadruples the area! You can now trade with sixteen cities instead of four. Metcalfe's law states that a network's value grows with the square of its number of nodes, and now sixteen cities can connect to yours, each with sixteen connections of its own. They all get richer and can afford more meat.

Rivers lower transportation costs, connecting many cities that can then trade more, get wealthier, and buy more.

The right network is worth at least an order of magnitude more than the left! The cheaper the transport, the more trade at a lower cost, the more income generated, the more that wealth can be reinvested in better canals, bridges, and roads, and the wealth grows even more.
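The meat example can be checked with a few lines of Python. This is a toy model under the text's own simplifications: the reachable radius is where transport cost eats the whole sale price, cities are spread evenly so their count grows with the area of that circle (calibrated so a 10 km radius reaches four cities), and network value follows Metcalfe's law. The function names are illustrative, not from any library.

```python
def max_reach_km(price_per_kg: float, cost_per_kg_km: float) -> float:
    """Distance at which transport cost consumes the entire sale price."""
    return price_per_kg / cost_per_kg_km


def reachable_cities(reach_km: float, base_reach_km: float = 10.0,
                     base_cities: int = 4) -> float:
    """Assumes evenly spread cities, so the count scales with area (radius squared)."""
    return base_cities * (reach_km / base_reach_km) ** 2


def metcalfe_value(nodes: float) -> float:
    """Metcalfe's law: network value grows with the square of the node count."""
    return nodes ** 2


if __name__ == "__main__":
    for cost in (1.0, 0.5):  # $ per kg per km, before and after halving
        reach = max_reach_km(10.0, cost)
        cities = reachable_cities(reach)
        print(f"${cost:.2f}/kg/km -> reach {reach:.0f} km, {cities:.0f} cities, "
              f"network value {metcalfe_value(cities):.0f}")
```

Halving the transport cost doubles the reach, quadruples the number of cities, and multiplies the Metcalfe value by 16, which is where "at least an order of magnitude" comes from.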

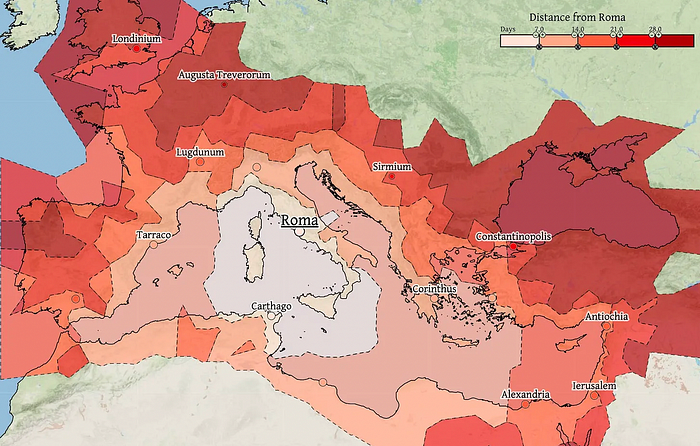

This pattern holds throughout history. Rome was built around cheap Mediterranean shipping and was preoccupied with cutting overland transportation costs with its famous roads. The limits of communication constrained its empire.

The Egyptians lived around the Nile, the Vikings around the North Sea, early Japan around the Seto Inland Sea, and China started canals in the 5th century BC.

Transportation costs shaped empires. Starship is about to slash the cost of reaching a new world. What becomes possible?

3. Change Organizations, Change Companies, Change the World

Starship is a conveyor belt to LEO. A new world of opportunity opens up as transportation prices drop 100x in a decade.

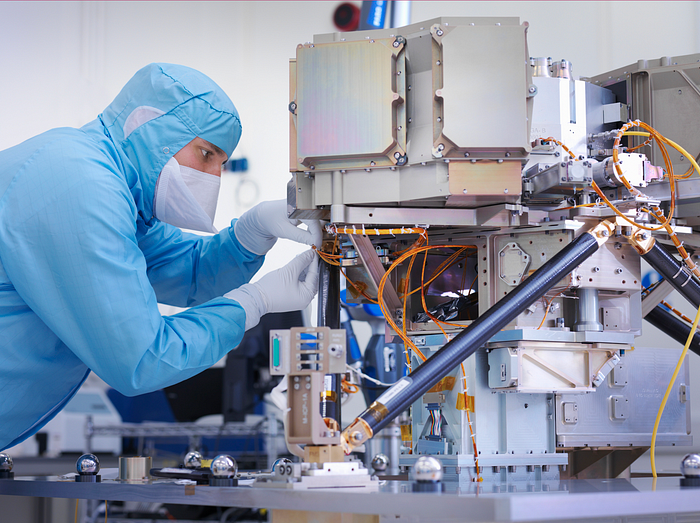

Satellite engineers have spent decades shedding milligrams. Weight influenced every decision: pricing structure, volumes to be sent, material selection, power sources, thermal protection, and guidance, navigation, and control software. Weight was everything in the mission. To pack as much science as possible into every millimeter, NASA missions had to be miniaturized. Engineers were indoctrinated against mass.

Not anymore.

With Starship, no space mission, robotic or crewed, is constrained by mass.

Starship obliterates the mass constraint and every last vestige of cultural baggage it has gouged into the minds of spacecraft designers. A dollar spent on mass optimization no longer buys a dollar saved on launch cost. It buys nothing. It is time to raise the scope of our ambition and think much bigger. — Casey Handmer, Starship is still not understood

In this light, sending a Tesla Roadster into space makes more sense.

It was more than a PR stunt. It told the industry: You used to care about every microgram? No more. My rockets are big enough to send a Tesla without noticing. Industry watchers should have paid attention.

Most didn’t. Artemis is a global mission to send astronauts to the Moon and build a base, and it uses disposable Space Launch System rockets. Instead of sending two or three dinky 10-ton crew habitats over the next decade, Starship might deliver 100x as much cargo and create a base for 1,000 astronauts in a year or two. So why isn't that the plan? Because Artemis remains in a pre-Starship paradigm where each kilogram costs a million dollars and we must aggressively descope our objectives.

With 100x lower costs and 100x higher transportation volumes, space agencies can deliver 100x more payload to space for the same budget. How can the space economy absorb this new supply?

Before Starship, NASA had to supply purpose-built heavy equipment for Moon base construction. After Starship, Caterpillar and Deere may be able to space-qualify their existing products with few alterations. Instead of waiting decades for NASA engineers to catch up, we could send people to build a space outpost with John Deere equipment in a few years.

History is littered with the wreckage of former industrial titans that underestimated the impact of new technology and overestimated their ability to adapt: Blockbuster, Motorola, Kodak, Nokia, RIM, Xerox, Yahoo, IBM, Atari, Sears, Hitachi, Polaroid, Toshiba, HP, Palm, Sony, PanAm, Sega, Netscape, Compaq, GM… — Casey Handmer, Starship is still not understood

In each case, everyone saw the change coming, but senior management failed to realize that adapting would mean moving beyond their established business practices. If incumbents don't adapt, others will.

4. The Starship Possibilities

Start with Starlink.

SpaceX invented affordable cargo space and was the first to grasp its implications. The question was how to use all this inexpensive cargo capacity that nobody yet knew how to use.

Satellite communications seemed like the best way to capitalize on it, so SpaceX tried. Its Starlink network provides fast, dependable internet worldwide, because beaming information down from space is often cheaper than laying cable. It's already profitable.

Starlink is just one use for all this cheap cargo space. There are many more, and the longer other firms ignore the opportunity, the more of it SpaceX will capture.

What are these opportunities?

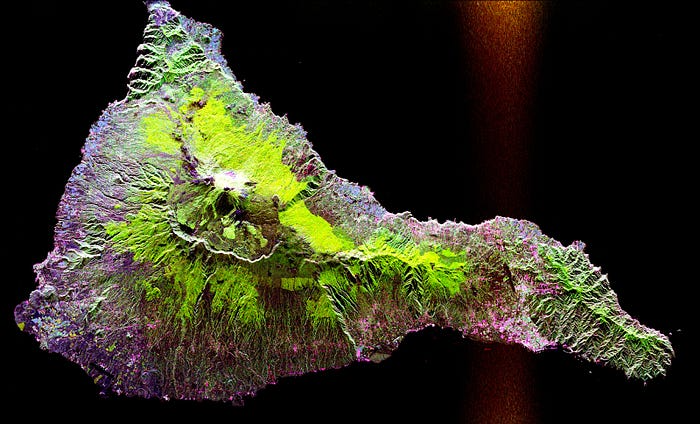

Today's satellite imagery is often outdated and lacks detail, and it could improve greatly. Synthetic aperture radar, for example, can take beautiful shots like this:

Have you ever used Google Maps and thought, "I want to see this in more detail"? What if you could view Earth live? What if we could livestream an infrared image of Earth?

We could launch hundreds of satellites with such mind-blowing visual precision of the Earth that we would dramatically improve the accuracy of our meteorological models; our agriculture; where crime is happening; where poachers are operating in the savannah; climate change; and who is moving military personnel where. Is that useful?

What if we could see Earth in real time? How would that affect businesses? How would it change society?

Sam Warain

3 years ago

Sam Altman, CEO of OpenAI, foresees the next trillion-dollar AI company

“I think if I had time to do something else, I would be so excited to go after this company right now.”

Sam Altman, CEO of OpenAI, recently discussed AI's present and future.

OpenAI matters. It is building the kind of world we used to see only in cyberpunk and sci-fi.

They use the most advanced algorithms and data sets.

GPT-3... sound familiar? OpenAI's model powers most of today's copywriting software, such as Peppertype, Jasper AI, and Rytr. If you've used any of them, you've probably been shocked by the quality.

OpenAI isn't only GPT-3. It also created DALL-E 2 and Whisper (a speech recognition model released last week).

What will it do next? What's the next great opportunity?

Sam Altman, CEO of OpenAI, recently gave a talk about the next trillion-dollar AI opportunity.

What is OpenAI?

First, a quick primer on OpenAI. If you already know it, skip ahead.

OpenAI is one of the most prominent AI research organizations. Sam Altman, Elon Musk, Greg Brockman, and Ilya Sutskever, among others, founded OpenAI in December 2015.

Since its founding, OpenAI's stated mission has been to make AI benefit all of humanity.

They have scary-good algorithms.

Their GPT-3 natural language processing program is excellent.

The pace of progress is astounding. The full GPT-2 came out in November 2019, and GPT-3 followed in May 2020.

Massive compute and larger datasets improved the technique in under a year. The New York Times reported that GPT-3 could write like a human.

The same goes for DALL-E. DALL-E 2 was announced in April 2022, and a few months later an AI-generated image won a Colorado art contest.

OpenAI's algorithms are challenging jobs we thought required human creativity.

So what does Sam Altman think?

The Present Situation and AI's Limitations

In the interview, Sam says we're still only seeing the tip of the iceberg.

So I think so far, we’ve been in the realm where you can do an incredible copywriting business or you can do an education service or whatever. But I don’t think we’ve yet seen the people go after the trillion dollar take on Google.

He points out that today's AI can't generate net new human knowledge. It can be trained on and synthesize vast amounts of knowledge, but it largely reproduces human work.

“It’s not going to cure cancer. It’s not going to add to the sum total of human scientific knowledge.”

But the key word is yet.

And that is what I think will turn out to be wrong that most surprises the current experts in the field.

This reinforces his point that massive innovations are yet to come.

But where?

The Next $1 Trillion AI Company

Sam predicts a bio or genomic breakthrough.

There’s been some promising work in genomics, but stuff on a bench top hasn’t really impacted it. I think that’s going to change. And I think this is one of these areas where there will be these new $100 billion to $1 trillion companies started, and those areas are rare.

Areas that depend on human trials are best avoided, since those trials take a long time. Biomaterials or simulators are better starting points.

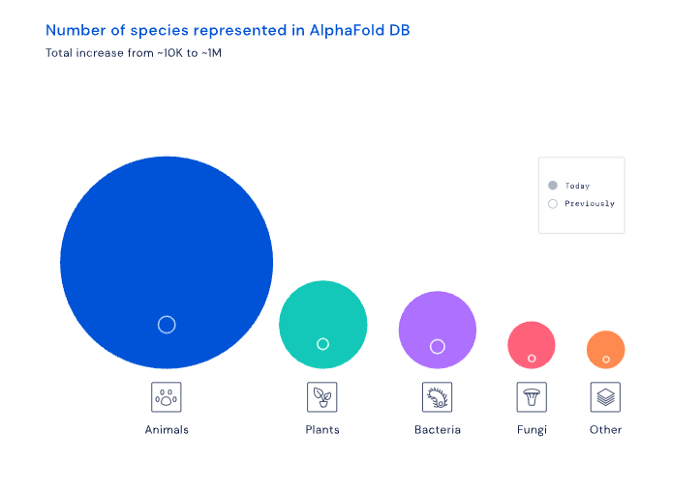

AI may already have produced such a breakthrough. DeepMind, an OpenAI competitor, has developed AlphaFold to predict the 3D structures of proteins.

It could change how we understand proteins and their functions. By revealing protein structures, AlphaFold could provide fresh insight into how proteins work and how diseases originate, which could lead to treatments for Alzheimer's and cancer. It could also speed up drug development by showing how proteins interact with medicines.

DeepMind has released 200 million predicted protein structures for scientists to download, supporting research into areas such as sustainability, food insecurity, and neglected diseases.

Having worked in AI for 4+ years, I'm amazed at the progress. We're past the hype cycle, as the slump of hyped AI companies like C3 AI shows, and have entered a productive phase.

We'll see innovative enterprises that could replace Google and other trillion-dollar companies.

What happens after AI adoption is scary and unpredictable. How will AGI (artificial general intelligence), which OpenAI defines as "highly autonomous systems that outperform humans at most economically valuable work," affect us?

My guess is that the things that we’ll have to figure out are how we think about fairly distributing wealth, access to AGI systems, which will be the commodity of the realm, and governance, how we collectively decide what they can do, what they don’t do, things like that. And I think figuring out the answer to those questions is going to just be huge. — Sam Altman CEO


Liz Martin

3 years ago

What Motivated Amazon to Spend $1 Billion on The Rings of Power?

Amazon's The Rings of Power is the most expensive TV series ever made, and it's merely a down payment on Amazon's grand goal.


Amazon bought the TV rights to J.R.R. Tolkien's fantasy novels for $250 million in 2017. The agreement allows Amazon to create a Tolkien series for Prime Video.

The company spent years developing and producing a Lord of the Rings prequel. The Rings of Power premiered on September 2, 2022.

It drew 25 million viewers worldwide in its first 24 hours, Prime Video's biggest premiere ever.

An Exorbitant Budget

The first season cost $750 million to $1 billion, making it the most expensive TV show ever.

Jeff Bezos has spent years looking for the next Game of Thrones, a critically and commercially successful original series. Rings of Power could help.

Why would Amazon bet $1 billion on one series?

It's Not Just About the Streaming War

It's easy to assume Amazon just wants to win the streaming war. Since 2018, it has been battling Hulu, Netflix, HBO, Apple, Disney, and NBC, each competing for your money and attention and for the industry's talent. But Amazon's investment goes beyond rivalry.

Subscriptions Are the Bait

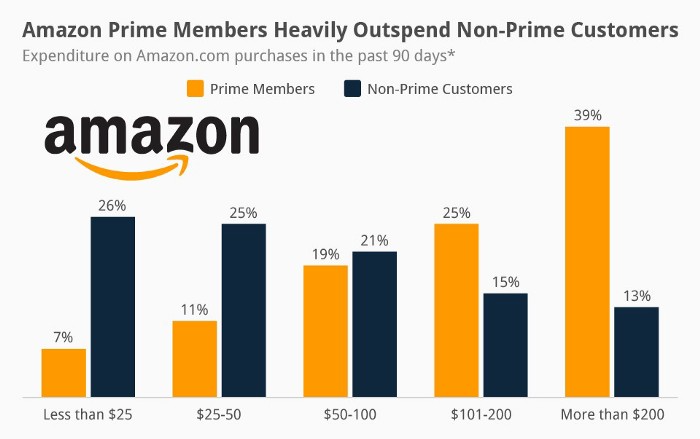

Amazon runs subscription services such as Audible, Amazon Music, and Prime Video, but its core business is retail: its online stores contribute over 50% of company revenue, while subscription services contribute 6.8%. Even so, the company's master plan depends on these subscriptions.

Streaming video on Prime increases membership renewals, and free-trial users are more likely to become paying members. Members buy twice as much as non-members.

Amazon Studios doesn't produce original programming to profit from Prime Video subscriptions directly. Its goal is to attract and retain customers.

Amazon can also track what you watch and buy, and its algorithm recommends products and services accordingly. According to McKinsey, the result is that you'll use more Amazon products, shop more at Amazon stores, and watch more Amazon entertainment.

In 2015, the firm launched the first season of The Man in the High Castle, a dystopian alternate history TV series depicting a world ruled by Nazi Germany and Japan after World War II.

The $72 million production won two Emmys and attracted 1.15 million new Prime subscribers worldwide.

When asked about his Hollywood investment, Bezos said, "A Golden Globe helps us sell more shoes."

Selling more footwear

Amazon secured a deal with DirecTV to air Thursday Night Football in restaurants and bars, and Prime Video became the first streaming service with exclusive NFL games.

This isn't just about Thursday Night Football, says media analyst Rich Greenfield. It sells T-shirts. It might sell a ticket. Amazon does more than stream games.

The Rings of Power isn't merely a production showcase, either. It also sells Tolkien's fantasy novels, such as The Lord of the Rings, The Hobbit, and The Silmarillion.

This tiny commitment keeps you in Amazon's ecosystem.

The woman

3 years ago

Why Google's Hiring Process is Brilliant for Top Tech Talent

You can get a high-paying tech job without a degree or experience.

Most organizations follow this hiring rule: you chat with HR, interview with your future boss and other senior managers, and they make the final hiring choice.

If you've ever applied for a job, you know how arduous it can be. Getting a fresh photo taken and filling in a glossy resume template can wear you out. Applying to Google can be a different experience.

According to a Universum report, Google is one of the world's most coveted employers. It's not only the search giant's name and reputation that attract candidates, but also its role requirements, or lack thereof.

Candidates no longer need a beautiful resume, cover letter, Ivy League laurels, or years of direct experience. The company requires no degree or experience.

Elon Musk started the trend. He used the "two-hands test" to uncover talented non-graduates and eliminated the requirement for experience.

Google is deconstructing traditional hiring with programs like the Google Project Management Certificate, a free, online, self-paced professional credential course.

Google's approach to hiring is interesting. After completing its certificate course, applicants can pursue project management roles. Instead of academic degrees and experience, the company evaluates their coursework.

In return, Google finds the best project managers and technical staff. It uses three strategies to find top talent.

Chase down the innovators

Google removes restrictions such as education and experience to find the polar bear amid the snowfall. Its free project management course makes project management roles accessible to everyone.

Many jobs don't require a degree. Overlooking candidates without one can make it harder to find people who could add value to a company.

The same goes for prior experience. A candidate's lack of experience can even benefit an employer, especially on creative teams or at companies that want to innovate.

The same is true when a company wants to operate differently from its competitors. Candidates with no experience can offer fresh perspectives. Fast Company reports that people with no sales experience outperformed those with 10 to 15 years of experience.

Give the aptitude test first priority.

Google wants the best candidates, but attracting more applications wouldn't help if it couldn't screen them for fit. Its well-organized online training program doubles as a portfolio.

Google learns a lot about an applicant through completed assignments. It reveals their ability, leadership style, communication capability, etc. The course mimics the job to assess candidates' suitability.

Basic screening questions can provide information for comparing candidates. Businesses of any size can use screening questions and test projects to evaluate prospective employees.

Effective training for employees

Businesses must train employees regardless of how they hire. Formal education and prior experience don't guarantee success. Closing your employees' professional knowledge gaps is key to their productivity and happiness, and top-notch training can do that. Learning and development are key to employee engagement, says Bob Nelson, author of 1,001 Ways to Engage Employees.

Not every company can offer an online certification program like Google's. But you can still improve your recruiting process by emphasizing aptitude over experience and degrees. And instead of hiring new staff and letting them work the way their former employer trained them, train them to work the way you want.

If you want to know more about Google’s recruiting process, we recommend you watch the movie “The Internship.”

Mark Schaefer

3 years ago

20 Fun Uses for ChatGPT

Our RISE community is stoked about ChatGPT, which has countless uses.

It's early days. Companies are still figuring out the legal and ethical implications of AI's content revolution, but using AI for everyday tasks is cool.

So I challenged my RISE friends: let's have fun and share some non-obvious uses.

Onward!

1. Tweet

Jim MacLeod requested a design-themed Twitter thread from ChatGPT.

2. Novelize

Giuseppe Fratoni writes novels.

"This is amazing! I asked about my passions: writing, mystery novels, and jazz.

"Develop a mystery novel plot in which a disillusioned homicide detective hunts a serial killer who targets jazz musicians who play Autumn Leaves."

Here's what I got:

Detective James Thompson is a seasoned veteran of the Homicide department, but lately he’s been feeling burnt out and disillusioned. His latest case, the murder of a young jazz pianist who was found brutally killed in his home, only serves to deepen his sense of despair … (much more provided)

3. Question-and-answer sessions

Bliss recommends:

Say you have a guest speaker and want to take audience questions.

Pre-generate questions with ChatGPT: "And here's a question from our AI!"

For example, the conference was about water scarcity in the American West, and the speaker was a water management expert. My question for the AI was...

Which desalination-related questions should I ask an expert?

ChatGPT said...

How is desalination currently being used around the world, and in what types of locations is it most effective?

What are the potential advantages and disadvantages of using desalination to provide fresh water?

What are some of the key challenges and limitations of desalination technology, and how are they being addressed?

4. Game-making

Richard, a board game expert, suggests using ChatGPT to design games. Some people have also started using it to help solve crossword clues.

5. Matchmaking

Tanya Thompson thinks it can help with dating. ChatGPT:

Here are some flirty first-message ideas for dating apps:

I noticed you from afar. I had to say hi and see if we clicked.

Your profile picture is gorgeous. I'm obsessed with your [Feature] and want to know you better.

I don't usually message first, but I had to. You're [Attractive Quality] and I can't wait to talk.

Hello. Your profile intimidates me. I'm starstruck by your [Attractiveness]. (many more followed)

5. Solitude/anxiety

ChatGPT excels at one-on-one conversations.

PepperBrooks: "I'm sad and lonely."

ChatGPT: I’m sorry to hear that you’re feeling sad and lonely. It’s natural to feel down sometimes, but it’s important to remember that you’re not alone. There are many people who care about you and want to help you. It can be helpful to talk to someone about your feelings, whether it’s a close friend, family member, or mental health professional.

Sometimes, just expressing your thoughts and emotions to someone else can help to alleviate your sadness and loneliness. It’s also important to take care of yourself and do things that make you happy, such as spending time with loved ones, pursuing a hobby, or exercising. These things can help to improve your mood and make you feel more connected to others. Remember that you are strong and capable, and that you can get through this difficult time.

Daniel Nestle asked ChatGPT to generate journal prompts to help him deal with anxiety.

Write down the things that are causing you anxiety at the moment.

Describe a situation in which you felt anxious and how you coped with it.

Reflect on how your thoughts and beliefs contribute to your anxiety.

Write a letter to your anxiety, expressing your feelings and concerns.

Imagine yourself in a calm and peaceful place, and describe it in detail.

(many more items followed)

6. Names

Daniel continued:

It's also good for naming ideas.

Me: Name this drink. Japanese gin, unsweetened tonic, lime, Szechuan pepper, grapefruit.

ChatGPT: How about “Sakura Sunrise?” The name incorporates the Japanese gin and the citrus flavors of grapefruit and lime, and the word “sunrise” suggests a fresh and invigorating start to the day. Alternatively, you could go with something like “Peppered Grapefruit Gin Fizz” to emphasize the unique flavor combination of Szechuan pepper and grapefruit.

7. Translation on the go

Monks recommends: ChatGPT is a perfect mobile translation tool. Say you need help in Italy.

ME: Translate to Italian

My car is running out of gas. Can you tell me where the nearest gas station is?

ChatGPT: “La mia auto sta per esaurire il carburante, mi puoi dire dove si trova la stazione di servizio più vicina?”

8. Fitness

Monks added:

ME: In 3 months, I want to run 5km. Plan out how I can do this.

ChatGPT: Here's a 3-month plan to run 5km.

Start by walking for 15–20 minutes each day to build up your endurance and get your body used to regular exercise.

After a week of walking, begin incorporating short jogs into your routine. Start by jogging for 1–2 minutes at a time, with walking breaks in between. Gradually increase the length of your jogs and reduce the amount of time you spend walking.

Once you can jog for 10 minutes without stopping, start increasing the distance of your runs. Aim to run 1km without stopping and then gradually increase the distance over time.

In addition to your regular runs, incorporate strength training and stretching into your routine to improve your overall fitness and reduce the risk of injury.

(more details followed)

9. Integrations and coding

10. Teacher lesson plans

Spencer Crandall used it to save time:

This morning, I wondered who has the hardest job. My answer: teachers.

So many students, so little time. Could ChatGPT handle lesson planning? I tried it and got this:

Lesson Title: The History and Impact of Artificial Intelligence

Objective: Students will understand the history and development of AI and its potential positive and negative impacts on society.

Materials: AI timeline; examples of AI technologies and applications

Overview:

Introduce the topic of artificial intelligence, and explain that it refers to the development of computer systems and algorithms that can simulate human intelligence and behavior.

Show the timeline of significant events in the history of AI, and discuss the key milestones and developments in the field.

Show examples of AI technologies and applications, and explain how they are used in various industries and fields.

Discuss the potential positive and negative impacts of AI on society, including its potential to improve productivity, efficiency, and safety, as well as its potential to create new challenges and concerns, such as job displacement and ethical issues.

(many more details followed)

11. Find podcast guests

Joeri Billast suggested:

Who should be on my Web3 podcast?

ChatGPT: Web3 marketing experts, influencers, and thought leaders make great podcast guests. These include Web3 marketers, content creators, business owners, entrepreneurs, and technologists. You could invite Web3 startups and organizations to your podcast.

Please give examples.

ChatGPT: Ideal Web3 marketing podcast guests include:

Tim Berners-Lee, inventor of the World Wide Web

Chris Dixon, general partner at Andreessen Horowitz

Vitalik Buterin, founder of Ethereum

Joe Lubin, founder of ConsenSys

Susan Fowler, author of Whistleblower

(many other suggestions)

12. Comments and reviews on social media

Lisa Monk thinks ChatGPT can help retail staff with social media.

She asked ChatGPT to reply to reviews of a restaurant:

POSITIVE CUSTOMER REVIEW: I absolutely love this place. We eat out a lot however this place has topped it all. Perfect meals, amazing staff and the timing for it all was great! Special thanks to Sophie B (you rock) From your guests all the way from the Gold Coast, THANK YOU

ChatGPT REPLY: Thank you so much for your kind words and for taking the time to write a review. We are delighted to hear that you had such a great experience at our restaurant. We are thrilled that you enjoyed your meal and the service provided by Sophie. It’s always a pleasure to serve guests visiting from the Gold Coast and we are grateful for your support. We look forward to welcoming you back again soon. Thank you again for your positive review.

CUSTOMER REVIEW: Most average food, there is a nice view, But that’s about it

ChatGPT: Thank you for your review. We apologize if your dining experience was not up to your expectations. We strive to provide top-quality food and service to all of our customers, and we will take your feedback into consideration as we continue to improve. Thank you for letting us know about your experience.

13. Crime-fighting

Zack Seipert said, "I researched some famous unsolved murders, and ChatGPT laid out the crime, suspects, clues, and more." I bet experienced detectives could solve some of these cold cases.

14. Reduce bias

Joanne Taylor struggles to remove biases from her writing. She asked ChatGPT for ideas.

I asked ChatGPT to check a text for racial bias, and it did well.

15. Furnishings

I designed a small, unique table using ChatGPT and MidJourney. I edited ChatGPT's idea for clarity and brevity before entering it in MidJourney.

Results:

16. Serialize a novel

Scott Scowcoft led ChatGPT through iterations of a story text to create serialized novel frames. You can view it here.

17. Interview preparation

I was a guest on a sales podcast. Sales is related to marketing, but it's not my forte, so I asked ChatGPT to help me prepare for the interview. Its response:

Here are some interview questions about the future of sales and how to answer them.

How will technology affect sales in the future?

Technology will impact sales in the coming years. Automation and AI could be used to handle routine tasks, and data analysis and CRM could become more important. E-commerce and online sales channels are also reshaping the sales landscape.

How will the salesperson's role change?

Future sales roles may change in response to changing customer needs and expectations. Salespeople must be knowledgeable and consultative to help customers navigate complex products and services. Increasing importance of data analysis and CRM tools will require salespeople to understand and use technology effectively.

Future sales professionals will need what skills?

Future salespeople will need many skills. Sales professionals must have strong communication and interpersonal skills, as well as product and service knowledge and the ability to use data analysis and CRM tools effectively. Adapting to changing customer needs and staying current on industry trends will also be important for sales success.

18. Gift ideas

I need Christmas gift ideas for my wife. I asked:

Suggest a gift idea under $200 for a middle-aged woman who loves The Eagles.

A middle-aged woman who loves The Eagles might like a vinyl record of "Hotel California" or "The Long Run." Many music fans enjoy the unique sound and experience of vinyl records.

Pair the record with a high-quality record player for a complete gift. The vinyl record and record player should fit within your $200 budget for an Eagles fan.

19. Explaining complex concepts

I entered: Explain quantum physics to me as if I were 10 years old.

Quantum physics is a branch of science that studies how tiny particles, like atoms and subatomic particles, behave. These particles are so small that they don’t always follow the same rules as the things we can see and touch every day.

For example, sometimes they can be in two places at the same time, or they can be friends with each other even if they are far apart. This might sound strange, but it’s what scientists have learned from studying these tiny particles. Quantum physics helps us understand how the world works at a very small scale, and it can also help us make new technologies, like computers that are much faster than the ones we have today.

20. AP-style editing

Many blogs and news outlets follow AP style. I asked ChatGPT to "edit using AP Style" and it did a great job.

And then... "Write a catchy headline for a blog post about 16 ChatGPT uses"

Here are some possible blog post titles for 20 creative ChatGPT uses:

“20 Fun and Creative Ways to Use ChatGPT: From Games to Quizzes and More”

“The Ultimate Guide to Using ChatGPT for Entertainment and Creativity”

“20 Reasons to Use ChatGPT: How it Can Enhance Your Gaming, Storytelling, and More”

“20 Entertaining Uses for ChatGPT You Never Knew Were Possible”

“Unleash Your Imagination with ChatGPT: 16 Creative and Fun Ways to Use it”

In a previous post, I said ChatGPT will change marketing careers forever. I had never made a statement like that in my life, not even in the early days of the internet, when people were cautious.

This technology was just released two weeks ago, and over a million people are already using it. This is the fastest technology adoption in history.

Today's post offers inventive and entertaining ideas, but it's just the beginning. ChatGPT can also write code, music, and papers.