
Nick Babich

3 years ago

Is ChatGPT Capable of Generating a Complete Mobile App?

TL;DR: It'll be harder than you think.

Mobile app development is a complex area of product design. Creating a mobile app takes broad expertise: you must write code in Swift or Java and account for how people interact with mobile devices.

When ChatGPT was released, many were amazed by its capabilities and wondered if it could replace designers and developers. This article will use ChatGPT to answer a specific query.

Can ChatGPT build an entire iOS app?

This post will use ChatGPT to construct an iOS meditation app. Video of the article is available.

App concepts for meditation

After deciding on an app, think about the user experience. What should the app offer?

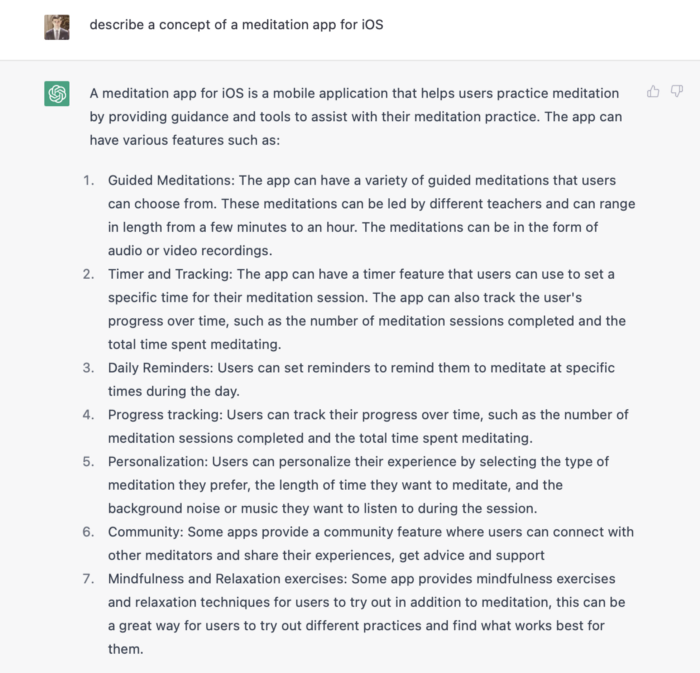

Let's ask ChatGPT for the answer.

ChatGPT described a solid meditation app with various exercises. We can use this list to plan the product design. Our first product iteration will have only a few features: a simple, one-screen app that lets users set the session duration and play music during meditation.

Structure of information

Information architecture underpins product design. Our app's navigation should be founded on strong information architecture, so we need to identify the app's screens first.

ChatGPT can define our future app's information architecture, since it already knows what the app is about.

ChatGPT proposes the structure of a more complex product than our first iteration. Keep this information architecture in mind when adding features to future versions of the product.

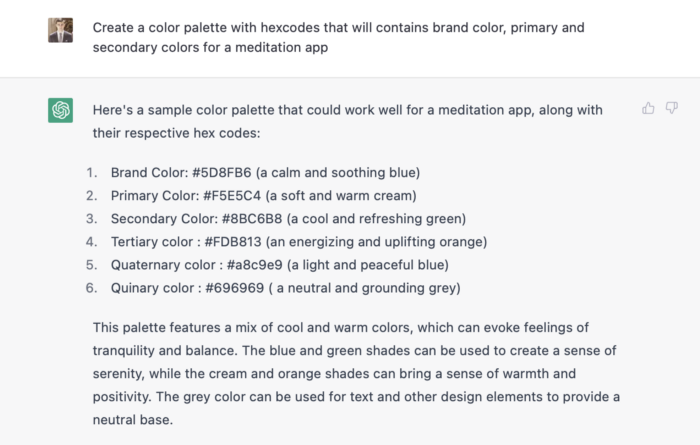

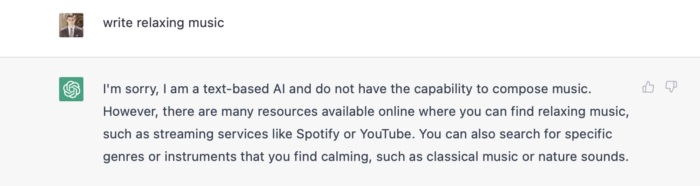

Color palette

Color matters in a meditation app. Because colors affect how we perceive things, we want a relaxing palette. ChatGPT can suggest colors for the product.

See the hues in person:

Neutral colors dominate the color scheme. Playing with color opacity makes this scheme useful.

Ambiance music

Meditation involves music. Well-chosen music calms the user.

Let ChatGPT make music for us.

ChatGPT can only generate text. Instead, it directs us to Spotify or YouTube to search for this kind of music and makes specific recommendations.

Fonts

Fonts can impress app users. Round fonts are easier on the eyes and make a meditation app look friendlier.

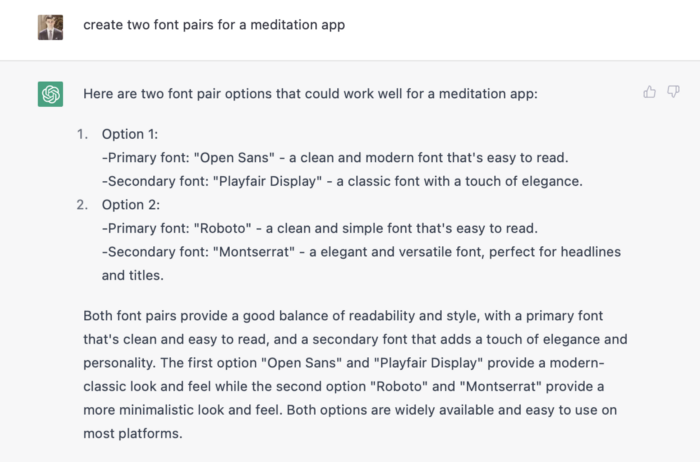

ChatGPT can suggest app typefaces. I compare two font pairs when making a product. I'll ask ChatGPT for two font pairs.

See the fonts in action:

Despite ChatGPT's convincing arguments for its font pairings, the output is unattractive. The first combination (Open Sans + Playfair Display) doesn't seem to work well for a meditation app.

Content

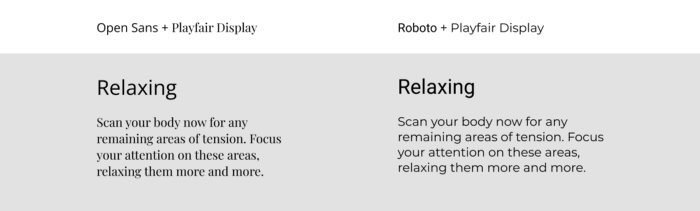

Meditation requires a script. Finding the right words and reading them calmly and soothingly helps listeners relax and focus on each region of their body, which enhances the exercise's effect.

ChatGPT's offerings:

ChatGPT outputs code instead of a spoken script. The word "script" in my prompt may have caused this.

Timer

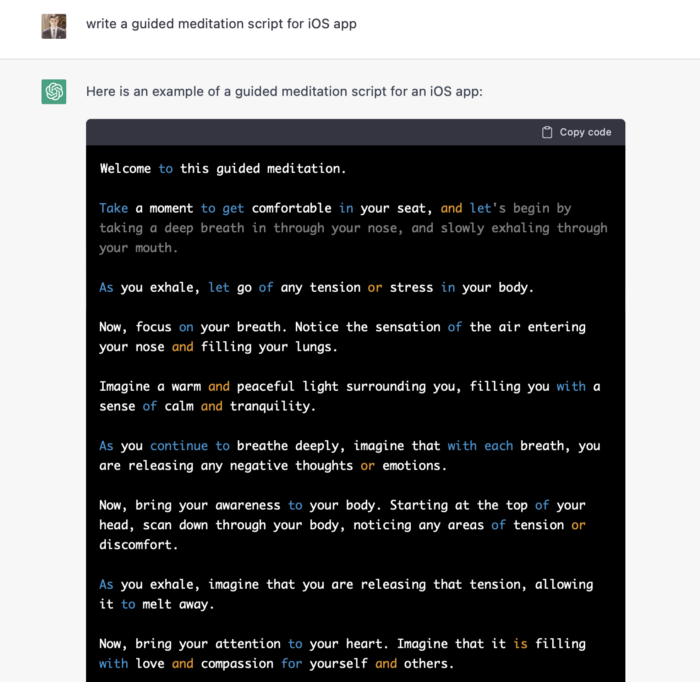

With fonts, colors, and content in place, we can build the functional pieces. The timer is our first one: the meditation session will be timed.

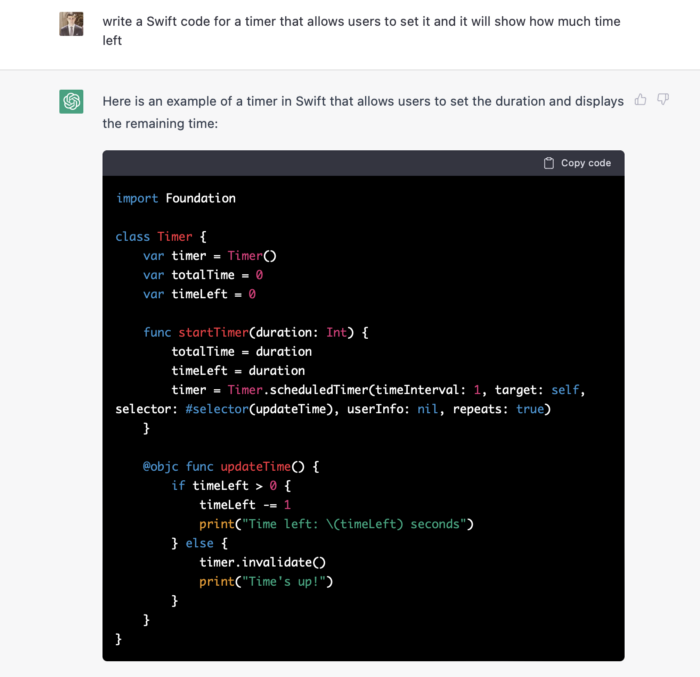

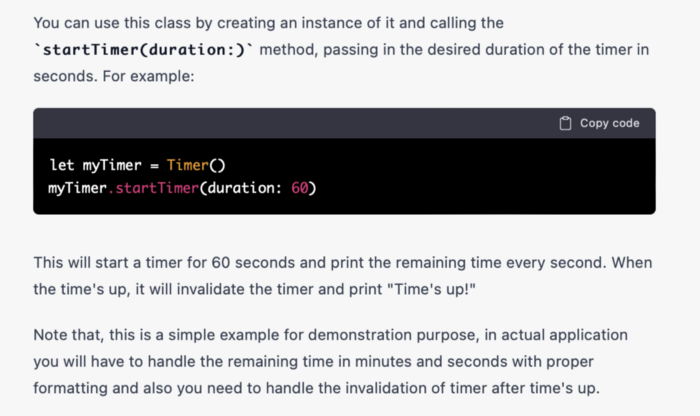

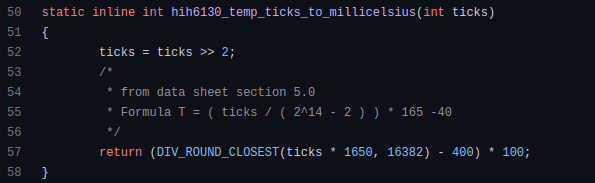

Let ChatGPT write Swift timer code (since we're building an iOS app, we need to write it in Swift).

ChatGPT supplied a timer class, initializer, and usage guidelines.

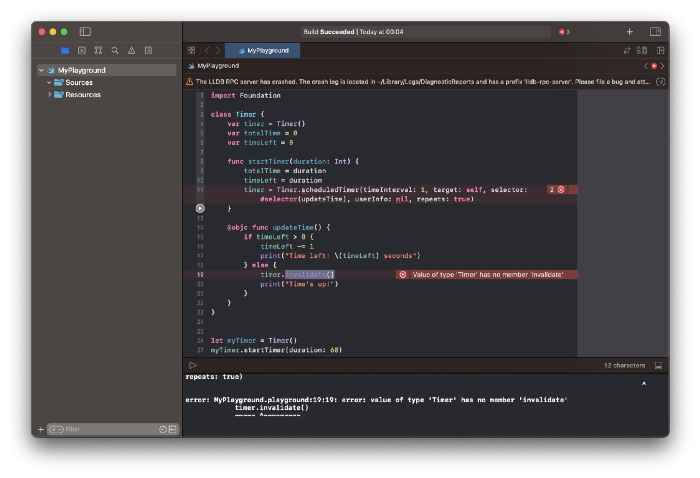

To test this code, we need a playground in Apple's Xcode. Xcode reports errors after we paste the code into the playground.

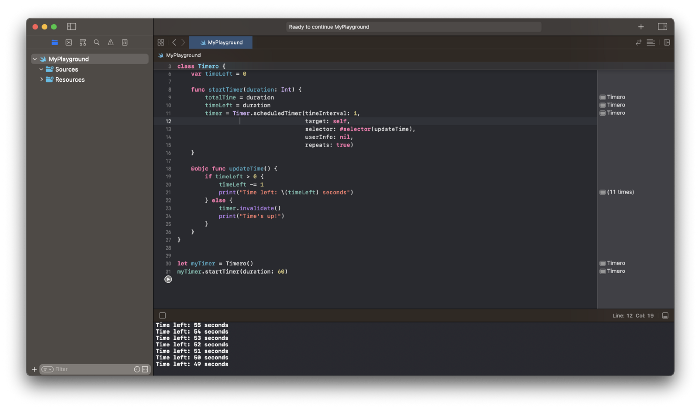

Fixing them is simple: just rename the class from Timer to something else. Xcode shows errors because it thinks we are accessing properties of the class we've created rather than the system class Timer; this happens because both classes share the name Timer. I renamed our class to Timero and ran the code. After this quick patch, ChatGPT's code works.

Can ChatGPT produce a complete app?

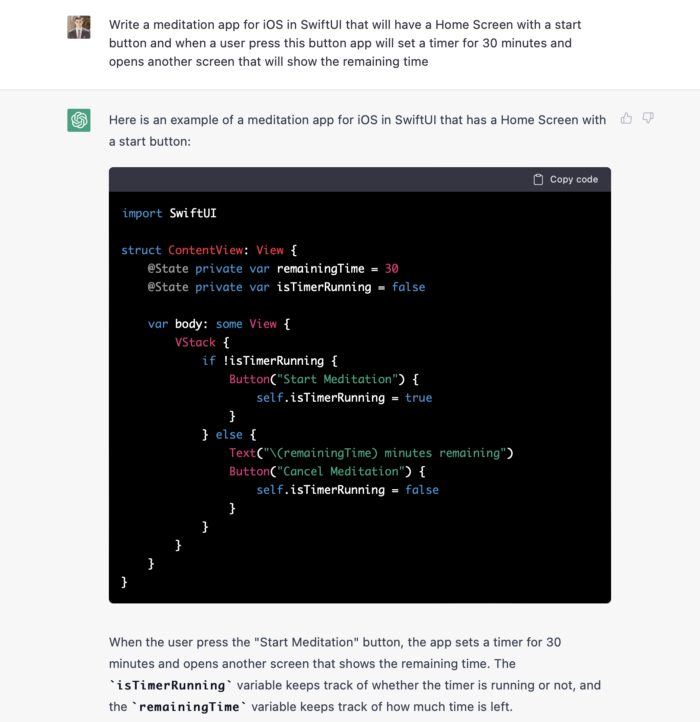

Since ChatGPT can help us construct app components, we may question if it can write a full app in one go.

Question ChatGPT:

ChatGPT supplied basic code and instructions. It's unclear if ChatGPT purposely limits output or if my prompt wasn't good enough, but the tool cannot produce an entire app from a single prompt.

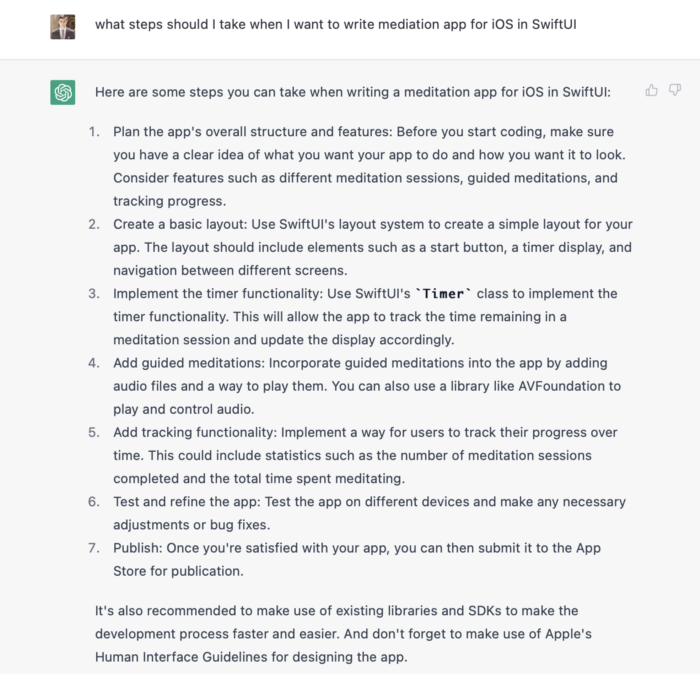

However, we can ask ChatGPT for thorough instructions on building a Swift app.

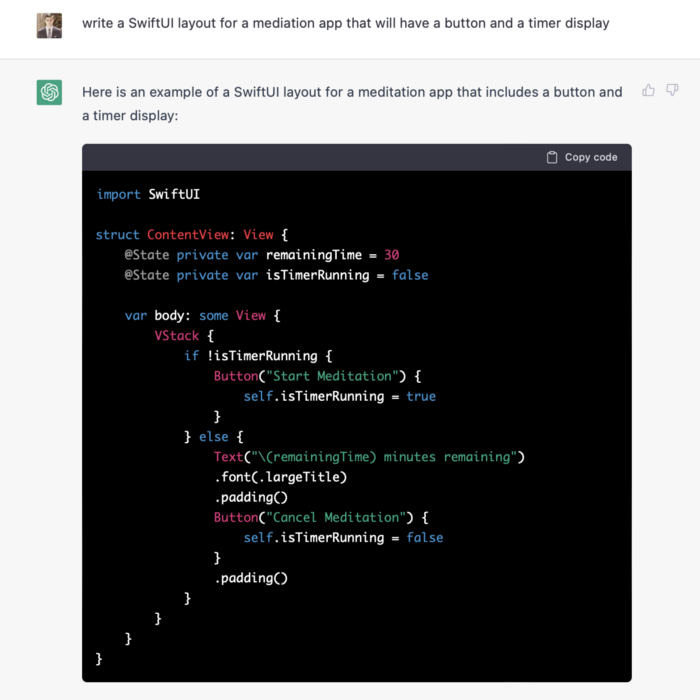

We can ask ChatGPT for step-by-step instructions now that we know what to do. Request a basic app layout from ChatGPT.

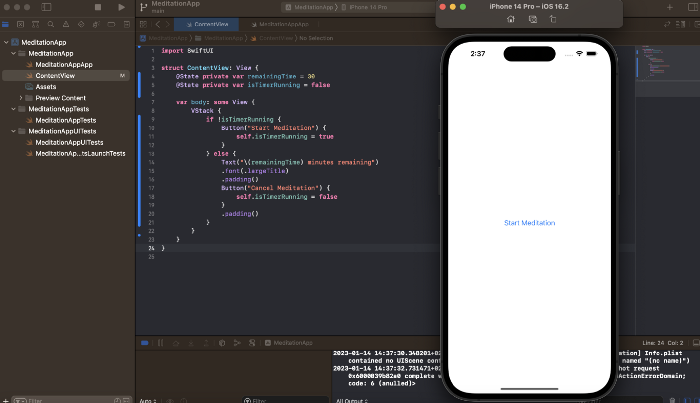

Copying this code to an Xcode project generates a functioning layout.

Takeaways

ChatGPT may provide step-by-step instructions on how to develop an app for a specific system, and individual steps can be utilized as prompts to ChatGPT. ChatGPT cannot generate the source code for the full program in one go.

The output that ChatGPT produces needs to be examined by a human. The majority of the time, you will need to polish or adjust ChatGPT's output, whether you develop a color scheme or a layout for the iOS app.

ChatGPT is unable to produce media material. Although ChatGPT cannot be used to produce images or sounds, it can help you build prompts for tools like Midjourney or DALL-E 2 so that they can generate the appropriate images for you.

Shalitha Suranga

3 years ago

The Top 5 Mathematical Concepts Every Programmer Needs to Know

Using math to write efficient code in any language

Programmers design, build, test, and maintain software. Use cases and personal preferences determine the programming languages we use throughout development. Mobile app developers often use JavaScript or Dart. Some programmers build performance-first software in C/C++.

A typical source file includes language-specific syntax, calls to pre-implemented functions, mathematical operators, and control statements. Some mathematical principles help us improve our programming and problem-solving skills.

We all use basic mathematical concepts like formulas and relational operators (aka comparison operators) in our daily programming. Beyond this basic mathematical syntax, there are discrete math topics worth knowing. This article explains key math topics programmers must know. Master these ideas to produce clean and efficient code.

Expressions in mathematics and built-in mathematical functions

At one extreme, source code can consist purely of a mathematical algorithm; at the other, purely of calls to prebuilt API functions. Most code we write falls between these two ends. If you write code to fetch JSON data from a RESTful service, you'll call an HTTP client and won't do any math. If you write a function to compute a circle's area, you do the math there.

When your source code gets more mathematical, you'll need to use mathematical functions. Every programming language has a math module and syntactical operators. Good programmers always consider code readability, so we should learn to write readable mathematical expressions.

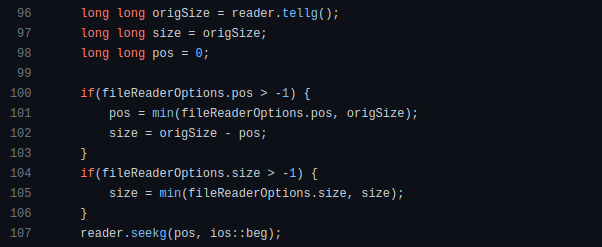

Linux utilizes clear math expressions.

Built-in max and min functions can replace verbose if statements.
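As a minimal sketch of that idea, the two functions below limit a value to a range, first with explicit if statements and then with Python's built-in `min` and `max`. The function names `clamp_verbose` and `clamp` are hypothetical and chosen only for illustration.

```python
def clamp_verbose(value, low, high):
    """Limit value to the range [low, high] using explicit if statements."""
    if value < low:
        return low
    if value > high:
        return high
    return value


def clamp(value, low, high):
    """Same behavior as clamp_verbose, expressed with built-in min and max."""
    return max(low, min(value, high))


print(clamp(120, 0, 100))  # 100
print(clamp(-5, 0, 100))   # 0
print(clamp(42, 0, 100))   # 42
```

Both versions behave the same whenever `low <= high`; the second simply states the intent in one expression.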

How can we compute the number of pages needed to display known data? In such instances, the ceil function is often utilized.

import math as m

results = 102

items_per_page = 10

pages = m.ceil(results / items_per_page)

print(pages)

Learn to write clear, concise math expressions.

Combinatorics in Algorithm Design

Combinatorics is the theory of counting, selecting, and arranging numbers or objects. First, consider these programming-related questions. How secure is a four-digit PIN, and how many possible PINs exist? What if the PIN has a fixed prefix? How do you list all pairs of decimal digits?

These are combinatorics questions. Software engineering work often requires counting items. Combinatorics counts elements without enumerating them one by one or resorting to other verbose approaches, so it lets us offer minimal, efficient solutions to real-world problems. Combinatorics also helps us write reliable decision tests without missing edge cases. For example, write a program that checks whether three inputs can form a triangle. This is a question I commonly ask in software engineering interviews.
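The questions above can be sketched in a few lines of Python. This is one possible answer under stated assumptions: PINs use only the digits 0-9, and a "triangle" means a non-degenerate one. The helper names `count_pins` and `is_triangle` are hypothetical.

```python
from itertools import product


def count_pins(length=4, prefix=""):
    """Count digit-only PINs of the given length that start with a fixed prefix.

    Each free position has 10 choices, so the count is 10 ** free_positions.
    """
    free_positions = length - len(prefix)
    if free_positions < 0:
        raise ValueError("prefix is longer than the PIN")
    return 10 ** free_positions


def is_triangle(a, b, c):
    """Return True if three side lengths can form a non-degenerate triangle."""
    if min(a, b, c) <= 0:      # edge case: zero or negative sides
        return False
    x, y, z = sorted((a, b, c))
    return x + y > z           # the two shorter sides must exceed the longest


# Cross-check the formula by enumerating every four-digit PIN once.
enumerated = sum(1 for _ in product("0123456789", repeat=4))

print(count_pins())             # 10000
print(count_pins(prefix="12"))  # 100
print(enumerated)               # 10000
print(is_triangle(3, 4, 5))     # True
print(is_triangle(1, 2, 3))     # False
```

Counting with a formula avoids the enumeration entirely, and sorting the sides reduces the triangle test to a single comparison.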

Graph theory is a subfield of combinatorics. Graph theory is used in computerized road maps and social media apps.

Understanding Logarithms and Geometry

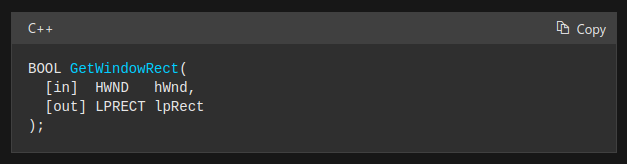

Geometry studies shapes, angles, and sizes. Cartesian geometry involves representing geometric objects in multidimensional planes. Geometry is useful for programming. Cartesian geometry is useful for vector graphics, game development, and low-level computer graphics. We can simply work with 2D and 3D arrays as plane axes.

GetWindowRect, a function in the Windows GUI SDK, retrieves a geometric object: the window's bounding rectangle.

High-level GUI SDKs and libraries use geometric notions like coordinates, dimensions, and forms, therefore knowing geometry speeds up work with computer graphics APIs.

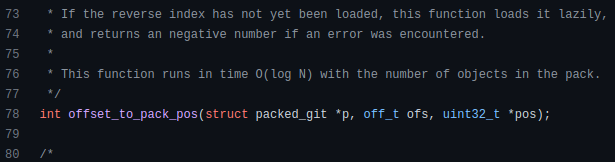

What is the inverse function of exponentiation? The logarithm. Logarithms help programmers find efficient algorithms and solve calculations. Writing efficient code often involves finding algorithms with logarithmic time complexity. Programmers prefer binary search (O(log n)) over linear search (O(n)) for sorted data. The Git source mentions O(log n):

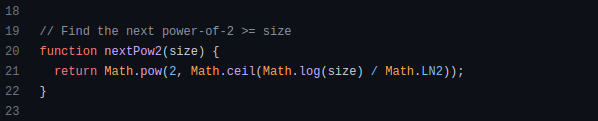

Logarithms also help with everyday programming math. Meta's Watchman uses a logarithmic utility function to find the next power of two.
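To make both uses of logarithms concrete, here is a rough Python sketch. It is not the Git or Watchman code: `binary_search` and `next_power_of_two` are hypothetical helpers written for illustration. The second one uses `int.bit_length()`, an exact integer stand-in for a rounded-up base-2 logarithm.

```python
import math


def binary_search(sorted_items, target):
    """Return (index, steps); index is -1 when target is absent."""
    low, high = 0, len(sorted_items) - 1
    steps = 0
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if sorted_items[mid] == target:
            return mid, steps
        if sorted_items[mid] < target:
            low = mid + 1      # discard the lower half
        else:
            high = mid - 1     # discard the upper half
    return -1, steps


def next_power_of_two(n):
    """Smallest power of two >= n, for a positive integer n."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    # (n - 1).bit_length() equals ceil(log2(n)) for n >= 1, without floats.
    return 1 << (n - 1).bit_length()


data = list(range(1_000_000))
index, steps = binary_search(data, 765_432)
worst_case = math.floor(math.log2(len(data))) + 1

print(index)                    # 765432
print(steps <= worst_case)      # True: at most 20 halvings for a million items
print(next_power_of_two(1000))  # 1024
print(next_power_of_two(1024))  # 1024
```

Each loop iteration halves the remaining range, which is where the logarithmic bound on the number of steps comes from.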

Employing Mathematical Data Structures

Programmers must know data structures to develop clean, efficient code. Stacks, queues, and hash maps are computer science basics. Sets and graphs are data structures that come from discrete mathematics. Most programming languages include a set structure that holds distinct entries. In most programming languages, graphs can be represented using adjacency lists or objects.

Using sets as deduped lists is powerful because set implementations allow iterators. Instead of a list (or array), store WebSocket connections in a set.
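A short Python sketch of both structures follows. The connection IDs and the small graph are made-up sample data, and `shortest_path_length` is a hypothetical helper that runs a breadth-first search over an adjacency list.

```python
from collections import deque

# A set keeps one copy of each entry, so duplicates never accumulate.
connections = set()
for conn_id in ["ws-1", "ws-2", "ws-1", "ws-3"]:
    connections.add(conn_id)      # adding a duplicate is a no-op
connections.discard("ws-2")       # remove a closed connection by value

# A graph stored as an adjacency list: each node maps to its neighbors.
graph = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "D"],
    "D": ["B", "C", "E"],
    "E": ["D"],
}


def shortest_path_length(graph, start, goal):
    """Breadth-first search: edges on a shortest path, or -1 if unreachable."""
    visited = {start}
    queue = deque([(start, 0)])
    while queue:
        node, distance = queue.popleft()
        if node == goal:
            return distance
        for neighbor in graph[node]:
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, distance + 1))
    return -1


print(sorted(connections))                    # ['ws-1', 'ws-3']
print(shortest_path_length(graph, "A", "E"))  # 3
```

The search itself relies on a set (`visited`) to avoid revisiting nodes, so the two structures often appear together.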

Many interviewers ask graph theory questions, yet many working software engineers don't practice algorithms. Graph theory problems have become a staple of interviews at IT companies.

Recognizing Applications of Recursion

A function in programming isolates a piece of logic with input(s) and output(s). Programming functions may have originated from the mathematical theory of functions. Programming and math functions are different but similar: both accept input and return a value.

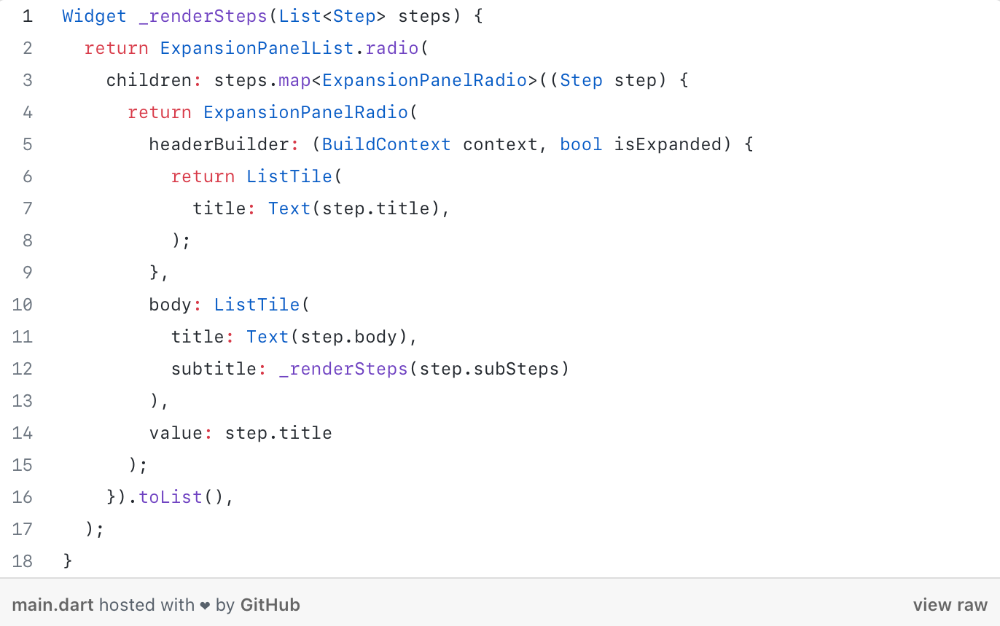

Recursion involves calling a function from within its own body. For example, a function that computes the Fibonacci sequence calls itself in its implementation. Recursion solves divide-and-conquer problems in software engineering and avoids code repetition. I recently built the following recursive Dart code to render a Flutter multi-depth expanding list UI:

Recursion is not the natural linear way to solve problems, hence thinking recursively is difficult. Everything becomes clear when a mathematical function definition includes a base case and recursive call.
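The base-case-plus-recursive-call pattern can be sketched in Python as follows. This is not the author's Dart code: `fib` is the textbook recursive Fibonacci definition, and `render_tree` is a hypothetical stand-in that turns a multi-depth list into indented text lines.

```python
def fib(n):
    """n-th Fibonacci number (fib(0) = 0, fib(1) = 1), written recursively."""
    if n < 2:                        # base case stops the recursion
        return n
    return fib(n - 1) + fib(n - 2)   # recursive calls on smaller inputs


def render_tree(items, depth=0):
    """Turn a nested list of (label, children) pairs into indented lines.

    An empty children list is the base case: the loop body never runs.
    """
    lines = []
    for label, children in items:
        lines.append("  " * depth + label)
        lines.extend(render_tree(children, depth + 1))  # go one level deeper
    return lines


menu = [
    ("Fruits", [("Citrus", [("Lemon", [])]), ("Berries", [])]),
    ("Vegetables", []),
]

print(fib(10))  # 55
print("\n".join(render_tree(menu)))
```

The plain recursive `fib` recomputes the same values many times, so it is meant to show the shape of the definition rather than an efficient implementation.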

Conclusion

Every codebase uses arithmetic operators, relational operators, and expressions. To build mathematical expressions, we typically use log, ceil, floor, min, max, etc. Combinatorics, geometry, data structures, and recursion help implement algorithms. Unless you work in a math-heavy domain (e.g., a game engine), you may not use calculus, limits, and other complex math in daily programming. The principles covered here, however, are fundamental for daily programming activities.

Master the above math fundamentals to build clean, efficient code.

Duane Michael

3 years ago

Don't Fall Behind: 7 Subjects You Must Understand to Keep Up with Technology

As technology develops, you should stay up to date

You don't want to fall behind, do you? This post covers 7 tech-related things you should know.

You'll learn how to operate your computer (and other electronic devices) like an expert and how to leverage the Internet and social media to create your brand and business. Read on to stay relevant in today's tech-driven environment.

You must learn how to code.

Coding is the language of the future. It's how we and computers talk. Learn to code to stay ahead.

Try Codecademy or Code School. There are also numerous free courses on platforms like Coursera or Udacity, but they take a long time and aren't necessarily self-paced, so it can be challenging to find the time.

Artificial intelligence (AI) will transform all jobs.

Our skill sets must adapt along with technology. AI is a must-know topic: advances in machine learning mean AI will transform every job.

Here are seven AI subjects you must know.

What is artificial intelligence?

How does artificial intelligence work?

What are some examples of AI applications?

How can I use artificial intelligence in my day-to-day life?

What jobs have a high chance of being replaced by artificial intelligence and how can I prepare for this?

Can machines replace humans? What would happen if they did?

How can we manage the social impact of artificial intelligence and automation on human society and individual people?

Blockchain Is Changing the Future

Few of us know how Bitcoin and blockchain technology function or what impact they will have on our lives. Blockchain offers safe, transparent, tamper-proof transactions.

It may alter everything from business to voting. Seven must-know blockchain topics:

Describe blockchain.

How does the blockchain function?

What advantages does blockchain offer?

What possible uses for blockchain are there?

What are the dangers of blockchain technology?

What are my options for using blockchain technology?

What does blockchain technology's future hold?

Cryptocurrencies are here to stay

Cryptocurrencies employ cryptography to safeguard transactions and manage unit creation. Decentralized cryptocurrencies aren't controlled by governments or financial institutions.

Bitcoin, the first cryptocurrency, was launched in 2009. Cryptocurrencies can be bought and sold on decentralized exchanges.

Bitcoin is here to stay.

Bitcoin isn't a fad, despite what some say. Its popularity has grown steadily since 2009, and it is worth learning about now.

With other cryptocurrencies emerging, many people are wondering if Bitcoin still has a bright future. Curiosity is natural. Millions of individuals hope their Bitcoin investments will pay off since they're popular now.

Thankfully, they will. Bitcoin is still running strong a decade after its birth. Here's why.

The Internet of Things (IoT) is no longer just a trendy term.

IoT consists of internet-connected physical items. These items can share data. IoT is young but developing fast.

20 billion IoT-connected devices are expected by 2023. So much data! All IT teams must keep up with quickly expanding technologies. Four must-know IoT topics:

Recognize the fundamentals: First things first! Before diving into more technical lingo, you should have a basic understanding of what an IoT system is. Before exploring how something works, it's crucial to understand what you're working with.

Recognize Security: Security does not stand still, even as technology advances at a dizzying pace. As IT professionals, it is our duty to be aware of the ways in which our systems are susceptible to intrusion and to ensure that the necessary precautions are taken to protect them.

Be able to discuss cloud computing: The cloud has changed considerably over the past several years, and the way it is used keeps changing too. Knowing what kind of cloud computing your firm or clients use will enable you to make appropriate recommendations.

Discuss Bring Your Own Device (BYOD) and Mobile Device Management (MDM): BYOD and MDM policies can lower expenses while boosting productivity among employees who use these services responsibly, which is a major factor in their continued growth in popularity.

IoT Security is key

As more gadgets connect, they must be secure. IoT security includes securing devices and encrypting data. Seven IoT security must-knows:

Fundamental security concepts

Authorization and identification

Cryptography

Digital certificates

Digital signatures

Private key encryption

Public key encryption

Final Thoughts

With so much going on in the world, it can be hard to keep up with technology. We've produced a list of seven tech must-knows.


Adam Frank

3 years ago

Humanity is not even a Type 1 civilization. What might a Type 3 be capable of?

The Kardashev scale grades civilizations from Type 1 to Type 3 based on energy harvesting.

How do technologically proficient civilizations evolve across timescales measured in the tens of thousands or even millions of years? This is a question that preoccupies me as a researcher in the search for “technosignatures” from other civilizations on other worlds. Since it is already established that longer-lived civilizations are the ones we are most likely to detect, knowing something about their prospective evolutionary trajectories could be translated into improved search tactics. But even more than knowing what to look for, what I really want to know is what happens to a society after so long a time. What are they capable of? What do they become?

This was the question Russian SETI pioneer Nikolai Kardashev asked himself back in 1964. His answer was the now-famous “Kardashev Scale.” Kardashev was the first, although not the last, scientist to try to define the processes (or stages) of the evolution of civilizations. Today, I want to launch a series on this question. It is crucial to technosignature studies (on which our NASA team is hard at work), and it is also important for understanding what might lie ahead for humanity if we manage to get through the bottlenecks we face now.

The Kardashev scale

Kardashev’s question can be expressed another way. What milestones in a civilization’s advancement up the ladder of technical complexity will be universal? The main notion here is that all (or at least most) civilizations will pass through some kind of definable stages as they progress, and some of these steps might be mirrored in how we could identify them. But, while Kardashev’s major focus was identifying signals from exo-civilizations, his scale gave us a clear way to think about their evolution.

The classification scheme Kardashev employed was not based on social systems or ethics, because these are things we can probably never predict about alien cultures. Instead, it was built on energy, which is something near and dear to the heart of everybody trained in physics. Energy use might offer the basis for universal stages of civilizational progress because you cannot do the work of building a civilization without consuming energy. So, Kardashev looked at what energy sources were accessible to civilizations as they evolved technologically and used those to build his scale.

From Kardashev’s perspective, there are three primary levels or “types” of advancement in terms of harvesting energy through which a civilization should progress.

Type 1: Civilizations that can capture all the energy resources of their native planet constitute the first stage. This would imply capturing all the light energy that falls on a world from its host star. This makes sense, given that solar energy will be the largest source available on most planets where life could form. For example, Earth absorbs hundreds of atomic bombs’ worth of energy from the Sun every second. That is a rather formidable energy source, and a Type 1 species would have all this power at its disposal for civilization building.

Type 2: These civilizations can harvest the entire energy output of their home star. Physicist Freeman Dyson famously anticipated Kardashev’s thinking on this when he imagined an advanced civilization erecting a large sphere around its star. This “Dyson Sphere” would be a machine the size of an entire solar system for gathering stellar photons and their energy.

Type 3: These super-civilizations could use all the energy produced by all the stars in their home galaxy. A normal galaxy has a few hundred billion stars, so that is a whole lot of energy. One way this may be done is if the civilization covered every star in their galaxy with Dyson spheres, but there could also be more inventive approaches.

Implications of the Kardashev scale

Climbing from Type 1 upward, we travel from the imaginable to the god-like. For example, it is not hard to envisage utilizing lots of big satellites in space to gather solar energy and then beaming that energy down to Earth via microwaves. That would get us to a Type 1 civilization. But creating a Dyson sphere would require chewing up whole planets. How long until we obtain that level of power? How would we have to change to get there? And once we get to Type 3 civilizations, we are virtually thinking about gods with the potential to engineer the entire cosmos.

For me, this is part of the point of the Kardashev scale. Its application for thinking about identifying technosignatures is crucial, but even more powerful is its capacity to help us shape our imaginations. The mind can go blank staring across hundreds or thousands of millennia, and so we need tools and guides to focus our attention. That may be the only way to see what life might become, and what we might become, once it arises and begins to push beyond the boundaries of space, time, and potential.

This is a summary. Read the full article here.

Sea Launch

3 years ago

📖 Guide to NFT terms: an NFT glossary.

NFT lingo can be overwhelming. As the NFT market matures and expands so does its own jargon, slang, colloquialisms or acronyms.

The goal of this ever-growing NFT glossary is to unpack key NFT terms to help you better understand the NFT market, or at least not feel like a total n00b in a conversation about NFTs on Reddit, Discord or Twitter.

#

1:1 Art

Art where each piece is one of a kind (1 of 1), unlike 10K projects, PFP or Generative Art collections, which cap the number of NFTs released at anywhere from a few hundred to 10K.

1/1 of X

Contrary to 1:1 Art, 1/1 of X means each NFT is unique, but part of a large and cohesive collection. E.g: Fidenzas by Tyler Hobbs or Crypto Punks (each Punk is 1/1 of 10,000).

10K Project

A type of NFT collection that consists of approximately 10,000 NFTs (but not strictly).

A

AB

ArtBlocks, the most important platform for generative art currently.

AFAIK

As Far As I Know.

Airdrop

Distribution of an NFT token directly into a crypto wallet for free. Can be used as a marketing campaign or as a scam by airdropping fake tokens to empty someone’s wallet.

Alpha

The first or very primitive release of a project. Also an investment term that tracks how much a certain investment outperforms the market. E.g: Alpha of 1.0 = 1% improvement or Alpha of 20.0 = 20% improvement.

Altcoin

Any other crypto that is not Bitcoin. Bitcoin Maximalists can also refer to them as shitcoins.

AMA

Ask Me Anything. NFT creators or artists do sessions where anyone can ask questions about the NFT project, team, vision, etc. Usually hosted on Discord, but also on Reddit or even Youtube.

Ape

Someone can be aping, ape in or have aped on an NFT, meaning they are taking a large position relative to their own portfolio size. Some argue that aping can mean following the hype, acting out of FOMO or buying without due diligence. Not related directly to the Bored Ape Yacht Club.

ATH

All-Time High. When an NFT project or token reaches the highest price to date.

Avatar project

An NFT collection that consists of avatars that people can use as their profile picture (see PFP) in social media to show they are part of an NFT community like Crypto Punks.

Axie Infinity

ETH blockchain-based game where players battle and trade Axies (digital pets). The main ERC-20 tokens used are Axie Infinity Shards (AXS) and Smooth Love Potions (formerly Small Love Potion) (SLP).

Axie Infinity Shards

AXS is an Ethereum token that powers the Axie Infinity game.

B

Bag Holder

Someone who holds their position in a crypto or keeps an NFT until it's worthless.

BAYC

Bored Ape Yacht Club. A very successful PFP 1/1 of 10,000 individual ape characters collection. People use BAYC as a Twitter profile picture to brag about being part of this NFT community.

Bearish

Borrowed finance slang meaning someone is doubtful about the current market and that it will crash.

Bear Market

When the Crypto or NFT market is going down in value.

Bitcoin (BTC)

First and original cryptocurrency as outlined in a whitepaper by the anonymous creator(s) Satoshi Nakamoto.

Bitcoin Maximalist

Believer that Bitcoin is the only cryptocurrency needed. All other cryptocurrencies are altcoins or shitcoins.

Blockchain

Distributed, decentralized, immutable database that is the basis of trust in Web 3.0 technology.

Bluechip

When an NFT project has a long track record of success and its value is sustained over time, therefore considered a solid investment.

BTD

Buy The Dip. A bear market can be an opportunity for crypto investors to buy a crypto or NFT at a lower price.

Bullish

Borrowed finance slang meaning someone is optimistic that a market will increase in value aka moon.

Bull market

When the Crypto or NFT market is going up and up in value.

Burn

Common crypto strategy to destroy or delete tokens from the circulation supply intentionally and permanently in order to limit supply and increase the value.

Buying on secondary

Whenever you don’t mint an NFT directly from the project, you can always buy it in secondary NFT marketplaces like OpenSea. Most NFT sales are secondary market sales.

C

Cappin or Capping

Slang for lying or faking. Opposed to no cap which means “no lie”.

Coinbase

Nasdaq listed US cryptocurrency exchange. Coinbase Wallet is one of Coinbase’s products where users can use a Chrome extension or app hot wallet to store crypto and NFTs.

Cold wallet

Otherwise called hardware wallet or cold storage. It’s a physical device to store your cryptocurrencies and/or NFTs offline. They are not connected to the Internet so are at less risk of being compromised.

Collection

A set of NFTs under a common theme as part of a NFT drop or an auction sale in marketplaces like OpenSea or Rarible.

Collectible

A collectible is an NFT that is a part of a wider NFT collection, usually part of a 10k project, PFP project or NFT Game.

Collector

Someone who buys NFTs to build an NFT collection, be part of a NFT community or for speculative purposes to make a profit.

Cope

The opposite of FOMO. When someone doesn’t buy an NFT because one is still dealing with a previous mistake of not FOMOing at a fraction of the price. So choosing to stay out.

Consensus mechanism

Method of authenticating and validating a transaction on a blockchain without the need to trust or rely on a central authority. Examples of consensus mechanisms are Proof of Work (PoW) or Proof of Stake (PoS).

Cozomo de’ Medici

Twitter alias used by Snoop Dogg for crypto and NFT chat.

Creator

An NFT creator is a person that creates the asset for the NFT idea, vision and in many cases the art (e.g. a jpeg, audio file, video file).

Crowdsale

Where a crowdsale is the sale of a token that will be used in the business, an Initial Coin Offering (ICO) is the sale of a token that’s linked to the value of the business. Buying an ICO token is akin to buying stock in the company because it entitles you to a share of the earnings and profits. Also, some tokens give you voting rights similar to holding stock in the business. The US Securities and Exchange Commission recently ruled that ICOs, but not crowdsales, will be treated as the sale of a security. This basically means that all ICOs must be registered like IPOs and offered only to accredited investors. This dramatically increases the costs and limits the pool of potential buyers.

Crypto Bags/Bags

Refers to how much cryptocurrencies someone holds, as in their bag of coins.

Cryptocurrency

The native coin of a blockchain (or protocol coin), secured by cryptography to be exchanged within a peer-to-peer economic system. E.g: Bitcoin (BTC) for the Bitcoin blockchain, Ether (ETH) for the Ethereum blockchain, etc.

Crypto community

The community of a specific crypto or NFT project. NFT communities use Twitter and Discord as their primary social media to hang out.

Crypto exchange

Where someone can buy, sell or trade cryptocurrencies and tokens.

Cryptography

The foundation of blockchain technology. The use of mathematical theory and computer science to encrypt or decrypt information.

CryptoKitties

One of the first and most popular NFT based blockchain games. In 2017, the NFT project almost broke the Ethereum blockchain and increased the gas prices dramatically.

CryptoPunk

Currently one of the most valuable blue chip NFT projects. It was created by Larva Labs. Crypto Punk holders flex their NFT as their profile picture on Twitter.

CT

Crypto Twitter, the crypto-community on Twitter.

Cypherpunks

Movement in the 1980s, advocating for the use of strong cryptography and privacy-enhancing technologies as a route to social and political change. The movement contributed to and shaped blockchain tech as we know it today.

D

DAO

Stands for Decentralized Autonomous Organization. When a NFT project is structured like a DAO, it grants all the NFT holders voting rights, control over future actions and the NFT’s project direction and vision. Many NFT projects are also organized as DAO to be a community-driven project.

Dapp

Mobile or web based decentralized application that interacts on a blockchain via smart contracts. E.g: Dapp is the frontend and the smart contract is the backend.

DCA

Acronym for Dollar Cost Averaging. An investment strategy to reduce the impact of crypto market volatility. E.g: buying into a crypto asset on a regular monthly basis rather than a big one time purchase.

Ded

Abbreviation for dead like "I sold my Punk for 90 ETH. I am ded."

DeFi

Short for Decentralized Finance. Blockchain alternative for traditional finance, where intermediaries like banks or brokerages are replaced by smart contracts to offer financial services like trading, lending, earning interest, insure, etc.

Degen

Short for degenerate, a gambler who buys into unaudited or unknown NFT or DeFi projects, without proper research hoping to chase high profits.

Delist

No longer offer an NFT for sale on a secondary market like OpenSea. NFT marketplaces can delist an NFT that infringes their rules. Or NFT owners can choose to delist their NFTs (as long as they have sufficient funds for the gas fees) during price surges, to avoid their NFT being bought for less than it could later sell for.

Derivative

Projects derived from the original project that reinforces the value and importance of the original NFT. E.g: "alternative" punks.

Dev

A skilled professional who can build NFT projects using smart contracts and blockchain technology.

Dex

Decentralised Exchange that allows for peer-to-peer trustless transactions that don’t rely on a centralized authority to take place. E.g: Uniswap, PancakeSwap, dYdX, Curve Finance, SushiSwap, 1inch, etc.

Diamond Hands

Someone who believes and holds a cryptocurrency or NFT regardless of the crypto or NFT market fluctuations.

Discord

Chat app heavily used by crypto and NFT communities for knowledge sharing and shilling.

DLT

Acronym for Distributed Ledger Technology. It’s a protocol that allows the secure functioning of a decentralized database, through cryptography. This technological infrastructure scraps the need for a central authority to keep in check manipulation or exploitation of the network.

Dog coin

It’s a memecoin based on the Japanese dog breed, Shiba Inu, first popularised by Dogecoin. Other notable coins are Shiba Inu or Floki Inu. These dog coins are frequently subjected to pump and dumps and are extremely volatile. The original dog coin DOGE was created as a joke in 2013. Elon Musk is one of Dogecoin's most famous supporters.

Doxxed/Doxed

When the identity of an NFT team member, dev or creator is public, known or verifiable. In the NFT market, a doxed NFT team is usually a sign of confidence and transparency, reassuring NFT collectors that they will not be scammed by an anonymous creator.

Drop

The release of an NFT (single or collection) into the NFT market.

DYOR

Acronym for Do Your Own Research. A common expression used in the crypto or NFT community to disclaim responsibility for the financial/strategy advice someone is providing, and to avoid being called out by others in the NFT or crypto community.

E

EIP-1559

Refers to Ethereum Improvement Proposal 1559, the headline change of the London hard fork (August 2021). It reworked Ethereum’s fee market: every transaction pays a protocol-set base fee that is burned, plus an optional priority fee (tip) paid to the block producer, which makes gas fees more predictable. It did not change the consensus mechanism; the shift from proof-of-work (PoW) to proof-of-stake (PoS) came later, with the Merge.
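
As a rough illustration of the fee rule EIP-1559 introduced, the sketch below computes what a single transaction pays. The function name and the sample numbers are made up for this example; only the rule itself (a burned base fee plus a tip capped by the sender's maximum fee) comes from the proposal. All prices are in gwei per unit of gas.

```python
def eip1559_fee_gwei(gas_used, base_fee, max_priority_fee, max_fee):
    """Return (total_fee, burned, tip) in gwei for one transaction.

    Hypothetical helper: base_fee is set by the protocol per block,
    max_priority_fee and max_fee are chosen by the sender.
    """
    if max_fee < base_fee:
        raise ValueError("max_fee is below the base fee; the transaction cannot be included")
    # The tip is capped so that base fee + tip never exceeds max_fee.
    tip_per_gas = min(max_priority_fee, max_fee - base_fee)
    burned = gas_used * base_fee   # destroyed, paid to nobody
    tip = gas_used * tip_per_gas   # paid to the block producer
    return burned + tip, burned, tip


# A plain ETH transfer uses 21,000 gas; the prices here are illustrative.
total, burned, tip = eip1559_fee_gwei(21_000, base_fee=30, max_priority_fee=2, max_fee=40)
print(total, burned, tip)
```

With a 30 gwei base fee and a 2 gwei tip, most of the fee is burned rather than paid out, which is the main behavioural change the proposal made.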

ERC-1155

Stands for Ethereum Request for Comment-1155. A multi-token standard that can represent any number of fungible (ERC-20) and non-fungible tokens (ERC-721).

ERC-20

Ethereum Request for Comment-20 is a standard defining a fungible token like a cryptocurrency.

ERC-721

Ethereum Request for Comment-721 is a standard defining a non-fungible token (NFT).
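
The difference between the two standards is easiest to see in the data each one tracks. The sketch below is a plain-Python analogy, not contract code: the dictionaries, account names and function names are all hypothetical. A fungible (ERC-20 style) ledger only stores balances, while a non-fungible (ERC-721 style) ledger stores one owner per token id.

```python
# Fungible ledger (ERC-20 style): units are interchangeable, only balances matter.
balances = {"alice": 5, "bob": 0}


def transfer_fungible(sender, receiver, amount):
    if balances.get(sender, 0) < amount:
        raise ValueError("insufficient balance")
    balances[sender] -= amount
    balances[receiver] = balances.get(receiver, 0) + amount


# Non-fungible ledger (ERC-721 style): every token id has exactly one owner.
owner_of = {1: "alice", 2: "alice"}


def transfer_nft(sender, receiver, token_id):
    if owner_of.get(token_id) != sender:
        raise ValueError("sender does not own this token")
    owner_of[token_id] = receiver


transfer_fungible("alice", "bob", 2)   # any 2 units will do
transfer_nft("alice", "bob", 1)        # specifically token #1
print(balances, owner_of)
```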

ETH

Aka Ether, the currency symbol for the native cryptocurrency of the Ethereum blockchain.

ETH2.0

Former name for the set of upgrades that moved Ethereum from the proof-of-work consensus mechanism (PoW) to a proof-of-stake system (PoS), completed with the Merge in September 2022, with the aim of improving the network’s security and scalability. Not to be confused with the London Fork (EIP-1559), which changed how gas fees work.

Ether

Or ETH, the native cryptocurrency of the Ethereum blockchain.

Ethereum

Network protocol that allows users to create and run smart contracts over a decentralized network.

F

FCFS

Acronym for First Come First Served. A commonly used strategy in an NFT collection drop when demand surpasses supply.

Few

Short for "few understand". Similar to the irony behind the "probably nothing" expression. For example, person X bought into a popular NFT because they understand its long term value.

Fiat Currencies or Money

National government-issued currencies like the US Dollar (USD), Euro (EUR) or British Pound (GBP) that are not backed by a commodity like silver or gold. Fiat means an authoritative or arbitrary order, like a government decree.

Flex

Slang for showing off. In the crypto community, it’s a Lamborghini or a gold Rolex. In the NFT world, it’s a CryptoPunk or BAYC PFP on Twitter.

Flip

Quickly buying and selling crypto or NFTs to make a profit.

Flippening

Colloquial expression coined in 2017 for when Ethereum’s market capitalisation surpasses Bitcoin’s.

Floor Price

It means the lowest asking price for an NFT collection or subset of a collection on a secondary market like OpenSea.

Floor Sweep

When an NFT collector or investor buys all the lowest-priced listings of a collection on a secondary NFT marketplace.
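
Both terms reduce to simple operations on a collection's active listings. The sketch below assumes listings are available as a mapping from token id to asking price in ETH; the data and function names are invented for illustration.

```python
def floor_price(listings):
    """Lowest asking price among active listings (token_id -> price in ETH)."""
    return min(listings.values())


def sweep_floor(listings, count):
    """Return the `count` cheapest (token_id, price) pairs, cheapest first."""
    return sorted(listings.items(), key=lambda item: item[1])[:count]


# Hypothetical listings for one collection.
listings = {101: 1.8, 102: 1.2, 103: 2.5, 104: 1.2}

print(floor_price(listings))     # the floor
print(sweep_floor(listings, 2))  # what a buyer sweeping two NFTs would take
```

After a sweep, the floor price is recomputed from the remaining listings, which is why sweeps tend to push the floor up.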

FOMO

Acronym for Fear Of Missing Out. Buying a crypto or NFT out of fear of missing out on the next big thing.

FOMO-in

Buying a crypto or NFT out of FOMO, regardless of whether it’s at the top of the market.

Fractionalize

Turning one NFT, like a CryptoPunk, into X number of fungible ERC-20 tokens, each proving fractional ownership of that Punk. This i) allows collective ownership of an NFT, ii) makes an expensive NFT affordable for the common NFT collector and iii) adds more liquidity to a very illiquid NFT market.
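
To make the arithmetic concrete, here is a small sketch of how ownership shares follow from fraction-token holdings. The holder names, the 10,000-token supply and the function name are assumptions chosen for the example.

```python
from fractions import Fraction


def ownership_shares(holdings):
    """Map each holder to their exact share of the fractionalized NFT."""
    total = sum(holdings.values())
    return {holder: Fraction(amount, total) for holder, amount in holdings.items()}


# Hypothetical: one NFT split into 10,000 fraction tokens.
holdings = {"alice": 6_000, "bob": 3_000, "carol": 1_000}
shares = ownership_shares(holdings)

for holder, share in shares.items():
    print(holder, share, f"{float(share):.0%}")
```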

FR

Abbreviation for For Real?

Fren

Means Friend and what people in the NFT community call each other in an endearing and positive way.

Foundation

An exclusive, by invitation only, NFT marketplace that specializes in NFT art.

Fungible

Means X can be traded for another X and still hold the same value. E.g: My dollars = your dollars. My 1 ether = your 1 ether. My casino chip = your casino chip. On Ethereum, fungible tokens are defined by the ERC-20 standard.

FUD

Acronym for Fear Uncertainty Doubt. It can be a) when someone spreads negative and sometimes false news to discredit a certain crypto or NFT project. Or b) the overall negative feeling regarding the future of the NFT/Crypto project or market, especially when going through a bear market.

Fudder

Someone who has FUD or engages in FUD about a NFT project.

Fudding your own bags

When an NFT collector or crypto investor speaks negatively about an NFT or crypto project he/she has invested in or has a stake in. Usually negative comments about the team or vision.

G

G

Means Gangster. A term of endearment used amongst the NFT Community.

Gas/Gas fees/Gas prices

The fee charged to complete a transaction on a blockchain. Gas prices vary tremendously depending on the blockchain, the consensus mechanism used to validate transactions and the number of transactions being made at a specific time.

Gas war

When a lot of NFT collectors (or bots) try to mint an NFT at once, resulting in a gas price surge.

Generative art

Artwork that is algorithmically created by code with unique traits and rarity.

Genesis drop

It refers to the first NFT drop a creator makes on an NFT auction platform.

GG

Interjection for Good Game.

GM

Interjection for Good Morning.

GMI

Acronym for Going to Make It. Opposite of NGMI (NOT Going to Make It).

GOAT

Acronym for Greatest Of All Time.

GTD

Acronym for Going To Dust. When a token or NFT project turns out to be a bad investment.

GTFO

Get The F*ck Out, as in “gtfo with that fud dude” if someone is talking bull.

GWEI

One billionth of an Ether (ETH) also known as a Shannon / Nanoether / Nano — unit of account used to price Ethereum gas transactions.
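
A quick sketch of the unit conversion, since gas prices are quoted in gwei but wallets show balances in ETH. The helper names are made up; the ratio (1 ETH = 1,000,000,000 gwei) is the definition above. `Decimal` is used to avoid floating-point rounding on money amounts.

```python
from decimal import Decimal

GWEI_PER_ETH = 10**9  # one gwei is one billionth of an ether


def gwei_to_eth(gwei):
    """Convert an amount in gwei to ETH as an exact Decimal."""
    return Decimal(gwei) / GWEI_PER_ETH


def eth_to_gwei(eth):
    """Convert an ETH amount (int, str or Decimal) to whole gwei."""
    return int(Decimal(str(eth)) * GWEI_PER_ETH)


print(gwei_to_eth(672_000))  # a fee of 672,000 gwei expressed in ETH
print(eth_to_gwei("0.5"))
```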

H

HEN (Hic Et Nunc)

A popular NFT art marketplace built on the Tezos blockchain. A big marketplace for inexpensive NFTs, but its UI/website is not very user-friendly.

HODL

Misspelling of HOLD coined in a 2013 Bitcointalk forum post. Later read as the backronym “Hold On for Dear Life”, meaning hold your coin or NFT until the end, whether it moons or goes to dust.

Hot wallet

Wallets connected to the Internet. They are less secure than cold wallets because they’re more susceptible to hacks.

Hype

Term used to show excitement or anticipation about an upcoming crypto project or NFT.

I

ICO

Acronym for Initial Coin Offering. It’s the crypto equivalent of a stock IPO (Initial Public Offering) but with far less scrutiny or regulation (leading to a lot of scams). ICOs are a popular way for crypto projects to raise funds.

IDO

Acronym for Initial Dex Offering. To put it simply it means to launch NFTs or tokens via a decentralized liquidity exchange. It’s a common fundraising method used by upcoming crypto or NFT projects. Many consider IDOs a far better fundraising alternative to ICOs.

IDK

Acronym for I Don’t Know.

IDEK

Acronym for I Don’t Even Know.

Imma

Short for I’m going to.

IRL

Acronym for In Real Life. Refers to the physical world outside of the online/virtual world of crypto, NFTs, gaming or social media.

IPFS

Acronym for Interplanetary File System. A peer-to-peer file storage system using hashes to recall and preserve the integrity of the file, commonly used to store NFTs outside of the blockchain.
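
The idea of addressing a file by its hash can be sketched in a few lines. This is a deliberately simplified, in-memory model: real IPFS uses its own content identifier (CID) format and splits files into blocks across many peers, none of which is reproduced here. The class and method names are hypothetical.

```python
import hashlib


class ContentStore:
    """Toy content-addressed store: the key of a file is the hash of its bytes."""

    def __init__(self):
        self._blobs = {}

    def put(self, data: bytes) -> str:
        key = hashlib.sha256(data).hexdigest()
        self._blobs[key] = data
        return key

    def get(self, key: str) -> bytes:
        data = self._blobs[key]
        # Integrity check: the content must still hash to the key it was stored under.
        if hashlib.sha256(data).hexdigest() != key:
            raise ValueError("content does not match its hash")
        return data


store = ContentStore()
key = store.put(b"artwork bytes")
print(key)
print(store.get(key))
```

Because the address is derived from the content, an NFT that points at such an address cannot have its artwork silently swapped: different bytes would produce a different address.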

It’s Money Laundering

Someone can use this expression to suggest that NFT prices aren’t real and that actually people are using NFTs to launder money, without providing much proof or explanation on how it works.

IYKYK

Stands for If You Know, You Know. Similar to the expression "few", used when someone buys into a popular crypto or NFT project, partly because of FOMO but also because they believe in its long term value.

J

JPEG/JPG

File format typically used to encode NFT art. Some people also use Jpeg to mock people buying NFTs as in “All that money for a jpeg”.

K

KMS

Short for Kill MySelf.

L

Larva Labs/ LL

NFT creators behind popular NFT projects like CryptoPunks, Meebits and Autoglyphs.

Laser eyes

Bitcoin meme signalling support for BTC and/or the belief that it will break the $100k per coin valuation.

LFG

Acronym for Let’s F*cking Go! A common rallying call used in the crypto or NFT community to lead people into buying an NFT or a crypto.

Liquidity

Term that means a token or NFT has a high trading volume in the crypto/NFT market, so it’s easily sold and resold. The NFT market is usually illiquid compared with the general crypto market because of the non-fungible nature of NFTs (there are fewer buyers for any given NFT).

LMFAO

Stands for Laughing My F*cking Ass Off.

Looks Rare

Ironic expression commonly used in the NFT Community. Rarity is a driver of an NFT’s value.

London Hard Fork

The Ethereum upgrade of August 2021 that included EIP-1559. Its major change was to the fee market: a base fee that is burned plus a priority fee, making gas fees more predictable. The shift from the PoW to the PoS consensus mechanism was a separate, later upgrade.

Long run

Means someone is committed to the NFT market or an NFT project in the long term.

M

Maximalist

Typically refers to Bitcoin Maximalists: people who believe that only Bitcoin is a secure and resilient blockchain. For Maximalists, all other cryptocurrencies are shitcoins and therefore a waste of time, development and money.

McDonald's

Common and ironic expression amongst the crypto community. It means that McDonald’s is always a valid backup plan or career in case all cryptocurrencies crash and disappear.

Meatspace

Synonymous with IRL - In Real Life.

Memecoin

Cryptocurrency like Dogecoin that is based on an internet joke or meme.

Metamask

Popular crypto hot wallet platform to store crypto and NFTs.

Metaverse

Term was coined by writer Neal Stephenson in the 1992 dystopian novel “Snow Crash”. It’s an immersive and digital place where people interact via their avatars. Big tech players like Meta (formerly known as Facebook) and other independent players have been designing their own version of a metaverse. NFTs can have utility for users like buying, trading, winning, accessing, experiencing or interacting with things inside a metaverse.

Mfer

Short for “mother fker”.

Miners

A person or company that mines one or more cryptocurrencies, like Bitcoin or (before its move to proof of stake) Ethereum. Proof-of-work blockchains need computing power for their consensus mechanism. Miners provide that computing power and receive coins/tokens in return as payment.

Mining

Mining is the process by which new tokens enter circulation, as for example on the Bitcoin blockchain. Mining also ensures the validity of new transactions on a blockchain that uses the PoW consensus mechanism. Those who mine are therefore rewarded for ensuring the validity of the blockchain.

Mint/Minting

Minting an NFT is the act of publishing your unique instance to a specific blockchain like Ethereum or Tezos. In simpler terms, a creator is adding a one-of-a-kind token (NFT) into circulation on a specific blockchain.

Once the NFT is minted (aka created), NFT collectors can i) direct mint, purchasing the NFT by paying the specified amount directly into the project’s wallet, or ii) buy it via an intermediary like an NFT marketplace (e.g: OpenSea, Foundation, Rarible, etc.). Later, the NFT owner can choose to resell the NFT. Most NFT creators set up a royalty that is paid every time their NFT is resold.

Minting interval

How often an NFT creator can mint or create tokens.

MOAR

A misspelling that means “more”.

Moon/Mooning

When a coin (e.g. ETH), or token, like an NFT goes exponential in price and the price graph sees a vertical climb. Crypto or NFT users then use the expression that “X token is going to the moon!”.

Moon boys

Slang for crypto or NFT holders who are looking to pump the price dramatically - taking a token to the moon - for short term gains and with no real long term vision or commitment.

N

Never trust, always verify

Treat everyone or every project like something potentially malicious.

New coiner

Crypto slang for someone new to the cryptocurrency space. Usually newcomers can be more susceptible to FUD or scammers.

NFA

Acronym for Not Financial Advice.

NFT

Acronym for Non-Fungible Token. A unique token that is not interchangeable with any other and that can be created, bought, sold, resold and viewed in different dapps. The ERC-721 smart contract standard (Ethereum blockchain) is the most popular amongst NFTs.

NFT Marketplace / NFT Auction platform

Platforms where people can sell and buy NFTs, either via an auction or pay the seller’s price. The largest NFT marketplace is OpenSea. But there are other popular NFT marketplace examples like Foundation, SuperRare, Nifty Gateway, Rarible, Hic et Nunc (HeN), etc.

NFT Whale

A NFT collector or investor who buys a large amount of NFTs.

NGMI

Acronym for Not Going to Make It. For example, something said to someone who has paper hands.

NMP

Acronym for Not My Problem.

Nocoiner

Someone who simply doesn’t hold cryptocurrencies, mistrusts the crypto market or believes that crypto is either a scam or a Ponzi scheme.

Noob/N00b/Newbie

Slang for someone new or inexperienced in cryptocurrency or NFTs. These people are more susceptible to scams, to being drawn into pump and dumps or to getting rekt on bad coins.

Normie/Normy

Similar expression for a nocoiner.

NSFW

Acronym for Not Safe For Work (also Not Suitable For Work). Refers to online content inappropriate for viewing in public or at work. It began mostly as a tag for sexual content, nudity or violence, but it has evolved to cover a number of other topics that might be delicate or trigger viewers.

Nuclear NFTs

An NFT or collectible with more than 1,000 owners. For the NFT to be sold or resold, every co-owner must give their permission beforehand. Otherwise, the NFT transaction can’t be made.

O

OG

Acronym for Original Gangster, popularized by 90s Hip Hop culture. It means the first, the original or the person who has been around since the very start and earned respect in the community. In NFT terms, CryptoPunks are the OG of NFTs.

On-chain vs Off-chain

An on-chain NFT is when the artwork (like a jpeg, video or music file) is stored directly into the blockchain making it more secure and less susceptible to being stolen. But, note that most blockchains can only store small amounts of data.

Off-chain NFTs are those whose high-quality image, music or video file is not stored on the blockchain. Instead, the file is stored with an external party: either a) a centralized server, which is highly vulnerable to being shut down or exploited, or b) the InterPlanetary File System (IPFS), which is also external but a more robust way of locating data because it uses a distributed, decentralized system.

OpenSea

By far the largest NFT marketplace in the world, currently.

P

Paper Hands

A crypto or NFT holder who is easily swayed by negative market sentiment or FUD and does not hold their crypto or NFT for long. The expression describes someone who sells as soon as NFTs enter a bear market.

PFP

Stands for Profile Picture. Twitter users who hold popular NFTs like a CryptoPunk or BAYC use their punk or ape avatar as their profile picture.

POAP NFT

Stands for Proof of Attendance Protocol. These types of NFTs are awarded to attendees of events, regardless if they’re physical or virtual, as proof you attended.

PoS

Stands for Proof of Stake. A consensus mechanism used by blockchains like Tezos, Cardano or (since the Merge) Ethereum to achieve agreement, trust and security in every transaction and keep the integrity of the blockchain intact. PoS mechanisms are considered more environmentally friendly than PoW as they use less energy and produce lower emissions.

PoW

Stands for Proof of Work. A consensus mechanism used by blockchains like Bitcoin to achieve agreement, trust and security and keep the transactional integrity of the blockchain intact. The PoW mechanism requires a lot of computational power, so it uses more energy and produces higher CO2 emissions than the PoS mechanism.
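
Why PoW is computationally expensive becomes clear from a toy version of the puzzle miners solve. The sketch below searches for a nonce whose SHA-256 hash starts with a given number of zero hex digits. It is a simplification: Bitcoin, for instance, hashes a structured block header and compares against a numeric target. The function names and the difficulty value are assumptions for illustration.

```python
import hashlib


def block_hash(block_data: str, nonce: int) -> str:
    return hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()


def mine(block_data: str, difficulty: int):
    """Brute-force a nonce so the hash starts with `difficulty` zero hex digits."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = block_hash(block_data, nonce)
        if digest.startswith(prefix):
            return nonce, digest
        nonce += 1


def verify(block_data: str, nonce: int, difficulty: int) -> bool:
    """Checking a solution takes one hash, however long the search took."""
    return block_hash(block_data, nonce).startswith("0" * difficulty)


nonce, digest = mine("alice pays bob 1 BTC", difficulty=3)
print(nonce, digest)
print(verify("alice pays bob 1 BTC", nonce, 3))
```

Each extra zero digit multiplies the expected search effort by 16, while verification stays a single hash. That asymmetry is what makes the work hard to fake and cheap to check.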

Private Key

It can be similar to a password. It’s a secret number that allows users to access their cold or hot wallet funds, prove ownership of a certain address and sign transactions on the blockchain.

It’s not advisable to share a private key with anyone as it makes a person vulnerable to theft. If someone loses or forgets their private key, they can use a recovery phrase to restore access to a crypto or NFT wallet.

Pre-mine

A term used in crypto to refer to the act of creating a set amount of tokens before their public launch. It can also be known as a Genesis Sale and is usually associated with Initial Coin Offerings (ICOs) in order to compensate founders, developers or early investors.

Probably nothing

It’s an ironic expression used by NFT enthusiasts to refer to an important or soon to be big news, project or person in the NFT space. Meaning when someone says probably nothing it actually means that it is probably something.

Protocol Coin

Stands for the native coin of a blockchain. As in Ether for the Ethereum blockchain or BTC on the Bitcoin blockchain.

Pump & Dump

The term pump means when a person or a group of people buy or convince others to buy large quantities of a crypto or an NFT with the single goal to drive the price to a peak. When the price peaks, these people sell their position high and for a hefty profit, therefore dumping the price and leaving other slower investors or newbies rekt or at a loss.

R

Rarity

Rarity in NFT terms refers to how rare an NFT is. The rarity can be defined by the number of traits, scarcity or properties of an NFT.

Reaching

Slang for an exaggeration over something to make it sound worse than what it actually is or to take a point/scenario too far.

Recovery phrase

A phrase, typically 12 or 24 words, that acts as a backup for your crypto private keys. A person can recover all of a crypto wallet’s private keys from the recovery phrase. It is not advisable to share the recovery phrase with anyone.

Rekt

Slang for wrecked. When a crypto or NFT project goes wrong or drops sharply in value. Or, more broadly, when something goes wrong, like a person being priced out by a gas surge or an NFT floor price going down.

Right Click Save As

An ironic expression used by people who don’t understand the value or potential unlocked by NFTs. They joke that they can easily get a digital artwork via Right Click Save As, mocking the NFT space and its hype.

Roadmap

The strategy outlined by an NFT project. A way to explain to the NFT community or a potential NFT investor, the different stages, value and the long term vision of the NFT project.

Royalties

NFT creators can set up their NFT so each time their NFT is resold, the creator gets paid a percentage of the sale price.
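
A resale split can be sketched as simple percentage arithmetic. The 5% royalty and 2.5% marketplace fee below are illustrative values, not figures from any particular marketplace, and the function name is made up.

```python
from decimal import Decimal


def split_resale(sale_price, royalty_pct, marketplace_fee_pct=Decimal("0")):
    """Split a resale price between the creator, the marketplace and the seller."""
    royalty = sale_price * royalty_pct / 100
    fee = sale_price * marketplace_fee_pct / 100
    return {
        "creator": royalty,
        "marketplace": fee,
        "seller": sale_price - royalty - fee,
    }


# Hypothetical resale at 2 ETH with a 5% royalty and a 2.5% marketplace fee.
payout = split_resale(Decimal("2"), royalty_pct=Decimal("5"), marketplace_fee_pct=Decimal("2.5"))
print(payout)
```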

RN

Acronym for Right Now.

Rug Pull/Rugged

Slang for a scam when the founders, team or developers suddenly leave a crypto project and run away with all the investors’ funds leaving them with nothing.

S

Satoshi Nakamoto

The anonymous creator of the Bitcoin whitepaper and whose identity has never been verified.

Scammer

Someone actively trying to steal other people’s crypto or NFTs.

Secondary

Secondary refers to secondary NFT marketplaces, where NFT collectors or investors can resell NFTs after they’ve been minted. The price of an NFT or NFT collection is determined by those who list them.

Seed phrase

Another name for recovery phrase: the phrase, typically 12 or 24 words, that allows you to recover all of a crypto wallet’s private keys and regain control of the wallet. It is not advisable to share the seed phrase with anyone.

Seems legit

When an NFT project or a person in the NFT community looks promising and the real deal, meaning seems legitimate. Depending on the context can also be used ironically.

Seems rare

An ironic expression or dismissive comment used by the NFT community. For example, it can be used sarcastically when someone asks for feedback on an NFT they own or created.

Ser

Slang for sir and a polite way of addressing others in an NFT community.

Shill

Expression when someone wants to promote or get exposure to an NFT they own or created.

Shill Thread

It’s a common Twitter strategy to gain traction by encouraging NFT creators to share a link to their NFT project in the hopes of getting bought or noticed by the NFT Community and potential buyers.

Simp/Simping

An NFT holder or creator who comes off as trying too hard to impress an NFT whale or investor.

Sh*tposter

A person who mostly posts meme content on Twitter for fun.

SLP

Acronym for Smooth Love Potion. It’s a token players can earn as a reward in the NFT game Axie Infinity.

Smart Contract

A self-executing contract where the terms of the agreement between buyer and seller are written directly into code and carried out without third party or human intervention. Ethereum is a blockchain built to execute smart contracts, unlike Bitcoin, whose scripting capabilities are far more limited.

SMFH

Acronym for Shaking My F*cking Head. Common reply to a person showing unbelievable idiocy.

Sock Puppet

Scam account used to lure noob investors into fake investment services.

Snag

It means to buy an NFT quickly and for a very low price. Can also be known as sniping.

Sotheby’s

Very famous auction house that has recently auctioned Beeple’s NFTs or Bored Ape Yacht Club and Crypto Punks’ NFT collections.

Stake

Crypto term for locking up a certain amount of crypto tokens for a set period of time to earn interest. In the NFT space, a lot of projects and services are popping up that allow NFT holders to earn interest for holding a certain NFT.

Szn

Stands for season referring to crypto or NFT market cycles.

T

TINA

Acronym for There Is No Alternative. Example: someone asks “why are you investing in BTC?”, to which the reply is “TINA”.

TINA RIF

Acronym for There Is No Alternative Resistance Is Futile.

This is the way

A commendation for positive behavior by someone in the NFT Community.

Tokenomics

Referring to the economics of cryptocurrencies, DeFi or NFT projects.

V

Valhalla

Ironic use of the Viking “heaven”. It means someone’s NFT collection is either going to be a profitable blue chip project, so they can ascend to Valhalla, or is going to tank, and that person will have to work at a McDonald’s.

Vibe

Term used to express a positive emotional state.

Volatile/Volatility

Term used to describe rapid market fluctuations, when crypto or NFT prices go up and down quickly in a short period.

W

WAGMI

Acronym for We Are Going to Make It. Rally cry to build momentum for a crypto or NFT project and lead even more people into buying, shilling or supporting a specific project.

Wallet

A wallet can be hot or cold, but both are a place where someone can store their cryptocurrency and tokens. Hot wallets, like MetaMask, Trust Wallet or Phantom, are always connected to the Internet. Cold wallets, by contrast, are hardware wallets that store crypto or NFTs offline, like a Ledger Nano.

Weak Hands

Synonymous with Paper Hands. Someone who immediately sells their crypto or NFT because of a bear market, FUD or any other negative sentiment.

Web 1.0

Refers to the beginning of the Web. A period from around 1990 to 2005, also known as the read-only web.

Web 2.0

Refers to an iteration of Web 1.0. From 2005 to the present moment, where social media platforms like Facebook, Instagram, TikTok, Google, Twitter, etc reshaped the web, therefore becoming the read-write web.

Web 3.0

A term coined by Ethereum co-founder Gavin Wood for an idea of what the future of the web could look like. Most people’s data, info or content would no longer be centralized in the Web 2.0 giants (Big Tech) but decentralized, mostly thanks to blockchain technology. Web 3.0 could be known as the read-write-trust web.

Wen

As in When.

Wen Moon

Popular expression from crypto Twitter not so much in the NFT space. Refers to the still distant future when a token will moon.

Whitepaper

Document released by a crypto or NFT project that lays out the technical information behind the concept, the vision, the roadmap and the plans to grow the project.

Whale

Someone who owns a large position in one or many cryptos or NFTs.

Y

Yodo

Acronym for You Only Die Once. The opposite of Yolo.

Yolo

Acronym for You Only Live Once. A person can use this when they just realized they bought a shitcoin or crap NFT and they’re getting rekt.

Original post

Ben

3 years ago

The Real Value of Carbon Credit (Climate Coin Investment)

Disclaimer : This is not financial advice for any investment.

TL;DR

You might not have realized it, but as we move toward net zero carbon emissions, the globe is already at war.

Following COP26, held under the Paris Agreement framework, 64% of nations have already declared net zero, and the issue of carbon reduction has become so important for businesses that it affects their ability to survive. Furthermore, the time when carbon emission standards will be defined and controlled on an individual basis is drawing closer.

Since 2017, the market for carbon credits has experienced extraordinary expansion as a result of widespread talks about carbon credits. The carbon credit market is predicted to expand much more once net zero is implemented and carbon emission rules inevitably tighten.

Hello! Ben here from Nonce Classic. Nonce Classic has recently confirmed the tremendous growth potential of the carbon credit market in the midst of a major trend towards the global goal of net zero (carbon emissions caused by humans − carbon reduction by humans = 0). Moreover, we believe that the questions and issues the carbon credit market has suffered from over the last 30 to 40 years could be answered through crypto technology, and that is why we have added a carbon credit crypto project to the Nonce Classic portfolio.

Many teams have tried to solve environmental problems through crypto, but very few have measurable experience working in the carbon credit scene. We therefore put our efforts into finding projects that were not created for the sake of issuing tokens but that pragmatically use crypto technology to combat climate change by solving problems of the current carbon credit market. In that process, we came to hear of Climate Coin, a veritable carbon credit crypto project, and we at Nonce Classic, as an accelerator, have begun contributing to its growth and invested in its tokens.

Starting with this article, we plan to publish a series of articles explaining why the carbon credit market is bullish, why we invested in Climate Coin, and what kind of project Climate Coin is specifically. In this first article, let us understand the carbon credit market and look into its growth potential! Let’s begin :)

The Unavoidable Entry of the Net Zero Era

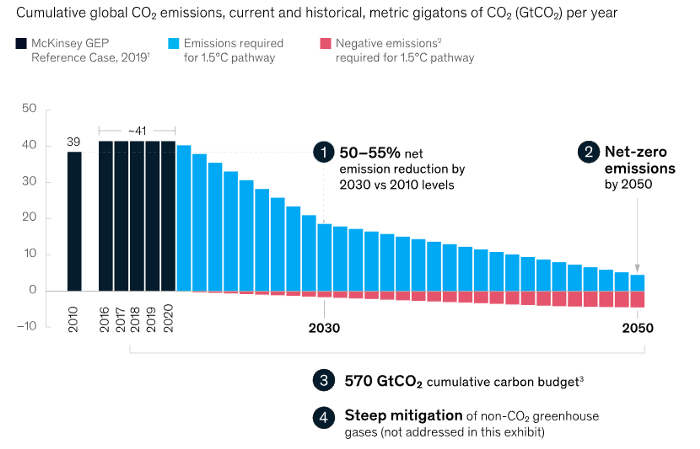

Net zero means that human carbon emissions are balanced by carbon reduction efforts. A non-environmentalist may find it hard to accept that net zero is attainable by 2050, but global cooperation to save the earth is happening faster than we imagine.

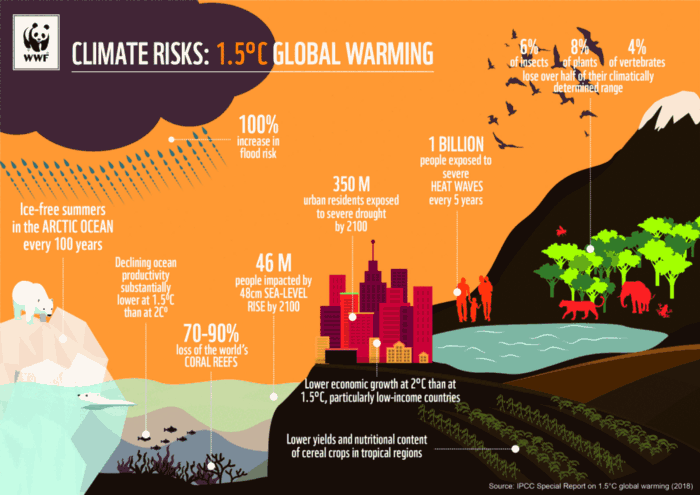

At COP26, the UN climate conference held under the Paris Agreement framework that opened in Glasgow, UK on Oct. 31, 2021, nations pledged to reduce worldwide yearly greenhouse gas emissions by more than 50% by 2030 and attain net zero by 2050. Governments throughout the world have pledged net zero at the national level and are holding each other accountable by submitting Nationally Determined Contributions (NDCs) every five years to assess implementation. 127 of 198 nations (about 64%) have declared net zero.

Each country's plans to keep warming within 1.5 degrees have led to carbon reduction obligations for companies. In places with the strictest environmental regulations, like the EU, companies can face bankruptcy when the cost of buying carbon credits to cover their emissions exceeds their operating profits. In this day and age, minimizing carbon emissions and securing carbon credits are crucial.

Recent SEC actions on climate change may increase companies' concerns about reducing emissions. On March 21, 2022, the SEC proposed a rule that would require all companies listed on the U.S. stock market to disclose their annual greenhouse gas emissions and climate change impact. The SEC prepared the proposed regulation through in-depth analysis and stakeholder input over the preceding year. Three out of four SEC members agreed that it should pass without major changes. If the regulation passes, it will affect not only US companies, but also countless companies around the world, directly or indirectly.

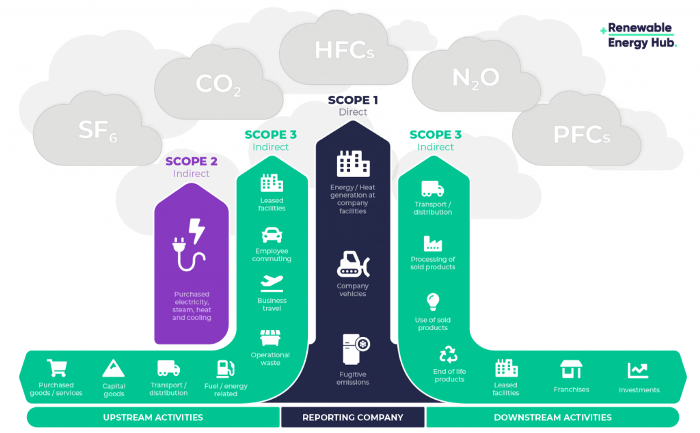

Even companies not listed on the U.S. stock market will be affected and, in most cases, required to disclose emissions. Companies listed on the U.S. stock market with significant greenhouse gas emissions or specific targets are subject to the stricter Scope 3 standard and its disclosure obligations, which extends scrutiny to all related companies. Greenhouse gas emissions are reported in three scopes. Scope 1 covers direct carbon emissions from a company's own facilities and vehicles. Scope 2 covers emissions from the energy it purchases. Scope 3 covers all other indirect emissions across the company's value chain.
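
The three scopes add up to a company's reported footprint, and the share that sits in Scope 3 shows why that scope pulls suppliers and customers into the disclosure. The sketch below is only an illustration: the class, its field names and the tonnage figures are invented, not taken from any disclosure standard.

```python
from dataclasses import dataclass


@dataclass
class EmissionsReport:
    """Annual emissions in tonnes of CO2-equivalent (hypothetical structure)."""
    scope1: float  # direct emissions from own facilities and vehicles
    scope2: float  # emissions from purchased energy
    scope3: float  # other indirect emissions across the value chain

    def total(self) -> float:
        return self.scope1 + self.scope2 + self.scope3

    def scope3_share(self) -> float:
        """Fraction of the footprint that lies outside the company's own operations."""
        return self.scope3 / self.total()


# Illustrative figures only.
report = EmissionsReport(scope1=1_200, scope2=800, scope3=6_000)
print(report.total(), report.scope3_share())
```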

The SEC's proposed carbon emission disclosure mandate and regulations are one example of how carbon credit policies can cross borders and affect all parties. As such incidents will continue throughout the implementation of net zero, even companies that are not immediately obligated to disclose their carbon emissions must be prepared to respond to changes in carbon emission laws and policies.

Carbon reduction obligations will soon become individual. Individual consumption has increased dramatically with improved quality of life and convenience, despite national and corporate efforts to reduce carbon emissions. Since consumption is directly related to carbon emissions, increasing consumption increases carbon emissions. Countries around the world have agreed that to achieve net zero, carbon emissions must be reduced on an individual level. Solutions to individual carbon reduction are being actively discussed and studied under the term Personal Carbon Trading (PCT).

PCT is a system that allows individuals to trade carbon emission quotas in the form of carbon credits. Individuals who emit more carbon than their allotment can buy carbon credits from those who emit less. European cities with well-established carbon credit markets are preparing for net zero by conducting early carbon reduction prototype projects. The era of checking product labels for carbon footprints, choosing low-emissions transportation, and worrying about hot shower emissions is closer than we think.

The Market for Carbon Credits Is Expanding at a Fearsome Pace

Compliance and voluntary carbon markets make up the carbon credit market.

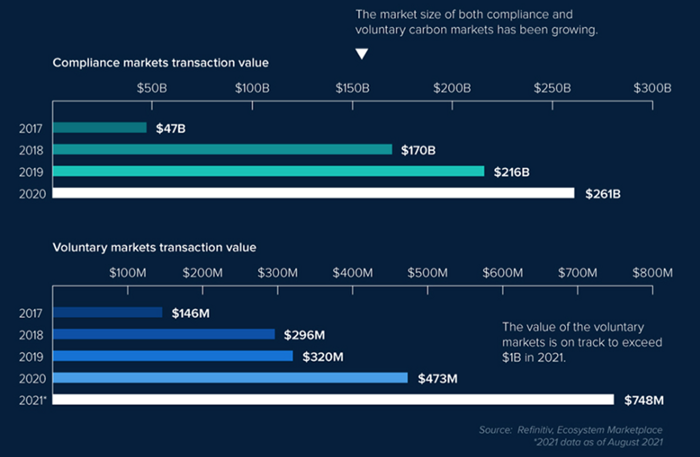

A Compliance Market enforces carbon emission allowances for its participants. Companies in industries that have historically emitted a lot of carbon are included in the mandatory carbon market, and each is allocated carbon credits by its government every year. If a company's emissions are below its assigned cap, it can sell its surplus carbon credits to other companies whose emissions exceed their caps (Cap and Trade). The number of free emission permits provided to companies each year is designed to decline, so companies' demand for carbon credits will increase. The compliance market's yearly trading volume exceeded $261B in 2020, five times its 2017 level.
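
The cap-and-trade mechanism can be sketched as bookkeeping over caps and actual emissions. The company names, caps, emissions and credit price below are all made up, and the settlement assumes every surplus credit is sold and every shortfall is bought at one uniform price, which a real market would not guarantee.

```python
def credit_positions(caps, emissions):
    """Surplus (+) or shortfall (-) of credits per company; one credit covers one tonne."""
    return {company: caps[company] - emissions[company] for company in caps}


def settle(positions, price_per_credit):
    """Cash flow per company if all positions are cleared at a single price."""
    return {company: position * price_per_credit for company, position in positions.items()}


# Hypothetical caps and emissions in tonnes of CO2.
caps = {"A": 100, "B": 100}
emissions = {"A": 80, "B": 115}

positions = credit_positions(caps, emissions)
print(positions)              # A has credits to sell, B must buy
print(settle(positions, 50))  # positive = revenue, negative = cost
```

Lowering the caps each year shrinks the surpluses and widens the shortfalls, which is how declining free allocations translate into rising demand for credits.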

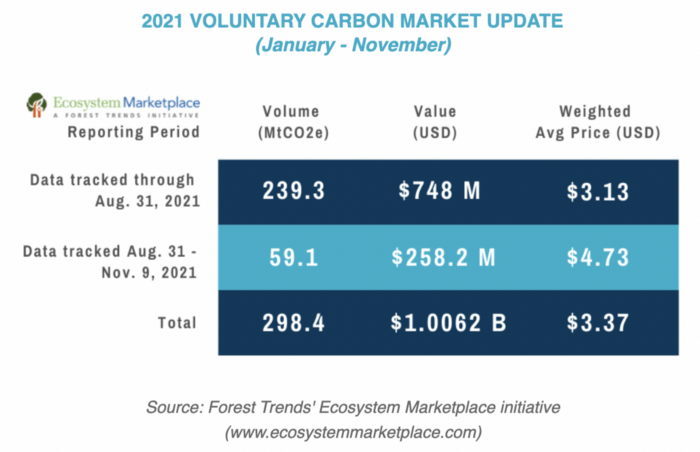

In the Voluntary Market, carbon reduction is voluntary, and carbon credits are bought for an organization's own reasons or to build market participants' eco-friendly reputations. Even outside the compliance market, a corporation is often effectively obliged to offset its carbon emissions by acquiring voluntary carbon credits. When a company seeks government or corporate investment, it may be denied because it is not net zero. If a significant shareholder declares net zero, the companies below it must follow. As the world moves toward ESG management, becoming an eco-friendly company is no longer a strategic choice to gain a competitive edge, but an important precaution to avoid falling behind. Due to this eco-friendly trend, the annual market volume of voluntary emission credits approached $1B by November 2021. The voluntary credit market is anticipated to reach $5B to $50B by 2030 (TSVCM 2021 Report).

In conclusion

This article analyzed how net zero, a target promised by countries around the world to combat climate change, has brought governmental, corporate, and individual changes. We discussed how these shifts will become more obvious as we approach net zero, and how the carbon credit market will grow rapidly in response. In the following piece, we will analyze the hurdles impeding the carbon credit market's growth, how the project we invested in tries to tackle these issues, and why we chose Climate Coin. Wait! As Jim Skea, co-chair of IPCC Working Group III, said:

“It’s now or never, if we want to limit global warming to 1.5°C” — Jim Skea

Join nonceClassic’s community:

Telegram: https://t.me/non_stock

Youtube: https://www.youtube.com/channel/UCqeaLwkZbEfsX35xhnLU2VA

Twitter: @nonceclassic

Mail us : general@nonceclassic.org