More on Society & Culture

Enrique Dans

3 years ago

What happens when those without morals enter the economic world?

I apologize if this sounds basic, but throughout my career, I've always been clear that a company's activities are shaped by its founder(s)' morality.

I consider Palantir, co-founded by PayPal co-founder Peter Thiel, evil. He got $5 billion tax-free by hacking a statute meant to help middle-class savers. That may appear clever, but I think it demonstrates a shocking lack of solidarity with society. As a result of this and other things he has said and done, I early on dismissed Peter Thiel as someone who could contribute anything positive to society, and events soon proved me right: we are talking about someone who clearly considers himself above everyone else and who does not hesitate to set up a company, Palantir, to exploit the data of the little people and sell it to the highest bidder, whoever that is and whatever the consequences.

The German courts have confirmed my warnings concerning Palantir. The problem is that politicians love its surveillance tools because they think knowing more about their constituents gives them power. These tools are ideal for dictatorships that want to snoop on their populace. Silicon Valley's triumphalist rhetoric has thus seduced governments at many levels into collecting massive volumes of data to hold forever.

It is a dangerous company, and there are many more. My analysis of the moral principles that a company's management reveals changed my opinion of Facebook, now Meta, and anyone with a modicum of interest can work out when that happened, a discovery that leaves you dumbfounded. TikTok was easy because its lack of morality was revealed early, when I saw the videos it encouraged minors to post and the repercussions of sharing them through its content recommendation algorithm. When you see something like this, nothing can convince you that the firm can change its morals and become good. Nothing. You know the company is awful and will fail. Whatever it says, announces, or changes, its morality is like a fingerprint: unchangeable.

Some of you who read me frequently make "it's Facebook's turn today" jokes when I write about these firms, and that's fine: they're my moral standards, those of an elderly professor with thirty-five years of experience studying corporations and discussing their cases in class, but you don't have to share them. Since I'm writing this and don't have to submit to any editorial review, that's what it is: when you continuously read a person, you have to assume that they have moral standards and that sometimes you'll agree with them and sometimes you won't. Morality accepts hierarchies, nuances, and even obsessions. I know not everyone shares my opinions, but at least I can voice them. One day, one of those firms may sue me (as record companies did some years ago).

Palantir is incredibly harmful, and its operations should be limited. Like Meta and TikTok, its business strategy is shaped by its founders' immorality. Such an approach can never be beneficial.

Charlie Brown

3 years ago

What Happens When You Sell Your House, Never Buy Again, and Reverse the American Dream

Homeownership isn't the only life pattern.

Want to irritate people?

My party trick is to say I used to own a house but no longer do.

I no longer wish to own a home, not because I lost it or because I'm moving.

It was a long-term plan. It was more deliberate than buying a home. Many people think I should be committed for this.

Poppycock.

Anyone who told me that owning a house (or striving to do so) is a must is wrong.

Because, URGH.

One pattern for life is to own a home, but there are millions of others.

You can afford to buy a home? Go, buddy.

You think you need 1,000 square feet (or more)? You think it's non-negotiable in life?

Nope.

It's insane that society forces everyone to own real estate, regardless of income, wants, requirements, or situation. As if this trade brings happiness, stability, and contentment.

Take it from someone who thought this for years: drywall isn't happy. Living your way brings contentment.

For some, that's in real estate. It may also be renting a small apartment in a city that makes your soul sing but where you can't afford the down payment or mortgage payments.

Living or traveling abroad is difficult when your life savings are connected to something that eats your money the moment you sign.

#vanlife, which seems like torment to me, makes some people feel alive.

I've seen co-living, one vacation rental after another, living with family, and more, and they all work.

Insisting that home ownership is the only path in life is foolish and reduces alternative options.

How little we question homeownership is a disgrace.

No one challenges a homebuyer's motives. We congratulate them, then that's it.

When you offload one, you must answer every question, as if you had a screw loose.

Why do you want to sell?

Do you have any concerns about leaving the market?

Why would you want to renounce what everyone strives for?

Why would you want to abandon a beautiful place like that?

Why would you mismanage your cash in such a way?

But surely it's only temporary? RIGHT??

Wrong questions. It's buying a property that should prompt the inquiries.

The typical American has $4,500 saved up. When something goes wrong with the house (not if, it’s never if), can you actually afford the repairs?

Are you certain that you can examine a home in less than 15 minutes before committing to buying it outright and promising to pay more than twice the asking price on a 30-year 7% mortgage?
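The "more than twice the asking price" claim is easy to check with the standard fixed-rate amortization formula. This is only a rough sketch: it assumes you borrow the full price (no down payment), pay monthly, and ignore taxes, insurance, and fees. The function name is made up for illustration.

```python
def total_paid_multiple(annual_rate: float, years: int) -> float:
    """Total of all payments divided by the amount borrowed (fixed-rate loan)."""
    r = annual_rate / 12                          # monthly interest rate
    n = years * 12                                # number of monthly payments
    payment_per_dollar = r / (1 - (1 + r) ** -n)  # monthly payment per $1 borrowed
    return payment_per_dollar * n                 # everything paid over the loan's life

multiple = total_paid_multiple(0.07, 30)
print(f"Total paid is about {multiple:.1f}x the amount borrowed")
```

Under those assumptions, a 30-year loan at 7% costs roughly 2.4 times what you borrowed, so the figure in the question holds up.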

Are you certain you're ready to leave behind friends, family, and the services you depend on in order to acquire something?

Have you thought about the fact that moving to a suburb, which more than half of Americans do, means you will be dependent on a car for the rest of your life?

Plus:

Are you sure you want to prioritize home ownership over debt, employment, travel, raising kids, and daily routines?

Homeownership entails that. This ex-homeowner says it will rule your life from the time you put the key in the door.

This isn't questioned. We don't question enough. The holy home-ownership grail was set long ago, and we don't challenge it.

Many people start asking questions only after signing the deeds. 70% of homeowners have at least one regret about buying a property, including the expense.

Exactly. Tragic.

Homes are different from houses

We've been fooled into thinking home ownership will make us happy.

That may be true for some. Not for everyone.

Bricks and mortar kept me from living the version of my life that made me most comfortable, happy, and steady.

I'm spending the next month in a modest apartment in southern Spain. Even though it's late November, today will be 68 degrees. My spouse and I will soon meet his visiting parents. We'll visit a Sherry store. We'll eat, nap, walk, and drink Sherry. Writing. Jerez means flamenco.

That's my home. This is such a privilege. Living a fulfilling life brings me the contentment that buying a home never did.

I'm happy and comfortable knowing I can make almost all of my days good. Rejecting home ownership is partly to thank.

I'm broke like most folks. I had to choose between home ownership and comfort. As I said, I didn't find them together.

Feeling at home trumps owning brick-and-mortar every day.

The following is the reality of what it's like to turn the American Dream around.

Leaving the housing market.

Sometimes I wish I owned a home.

I miss having my own yard and bed. I miss my kitchen, cookbooks, and pizza oven.

But I rarely do.

Someone else's life plan pushed home ownership on me. I'm grateful I figured it out at 35. Many take much longer, and some never understand homeownership stinks (for them).

It's confusing. People will think you're dumb or suicidal.

If you read what I write, you'll know. You'll realize that all you've done is choose to live intentionally. Find a home beyond four walls and a picket fence.

The things I miss? As I said, they're not home. If they were, a pizza oven, a good mattress, and a well-stocked kitchen would bring happiness.

No.

If you can afford a house and desire one, more power to you.

There are other ways to find home, calm, happiness, and fun.

To find them, look deeper than your home's foundation.

Max Chafkin

4 years ago

Elon Musk Bets $44 Billion on Free Speech's Future

Musk’s purchase of Twitter has sealed his bond with the American right—whether the platform’s left-leaning employees and users like it or not.

Elon Musk's pursuit of Twitter Inc. began earlier this month as a joke. It started slowly, then spiraled out of control, culminating on April 25 with the world's richest man agreeing to spend $44 billion on one of the most politically significant technology companies ever. There have been bigger financial acquisitions, but Twitter's significance has always outpaced its balance sheet. This is a unique Silicon Valley deal.

To recap: Musk announced in early April that he had bought a stake in Twitter, citing the company's alleged suppression of free speech. His complaints were vague, relying heavily on the dog whistles of the ultra-right. A week later, he announced he'd buy the company for $54.20 per share, four days after initially pledging to join Twitter's board. Twitter's directors noticed the 420 reference as well, and responded with a “shareholder rights” plan (i.e., a poison pill) that included a 420 joke.

Musk - Patrick Pleul/Getty Images

No one knew if the bid was genuine. Musk's Twitter plans seemed implausible or insincere. In a tweet, he referred to automated accounts that use his name to promote cryptocurrency. He enraged his prospective employees by suggesting that Twitter's San Francisco headquarters be turned into a homeless shelter, renaming the company Titter, and expressing solidarity with his growing conservative fan base. “The woke mind virus is making Netflix unwatchable,” he tweeted on April 19.

But Musk got funding, and after a frantic weekend of negotiations, Twitter said yes. Unlike the buyers in most buyouts, Musk will personally fund the deal, putting up as much as $21 billion in cash and borrowing another $12.5 billion against his Tesla stock.

Chart: Free Speech and Partisanship (percentage of respondents who agree with each statement)

The deal is expected to replatform accounts that were banned by Twitter for harassing others, spreading misinformation, or inciting violence, such as former President Donald Trump's account. As a result, Musk is at odds with his own left-leaning employees, users, and advertisers, who would prefer more content moderation rather than less.

Dorsey - Joe Raedle/Getty Images

Previously, the company's leadership had similar issues. Co-founder Jack Dorsey stepped down last year amid concerns about slowing growth and product development, as well as his dual role as CEO of payments processor Block Inc. Compared to Musk, a father of seven who already runs four companies (including Tesla and SpaceX), Dorsey is laser-focused.

Musk's motivation to buy Twitter may be political. Spending $44 billion on “free speech” affirms the American far right. Right-wing activists have promoted a series of upstart Twitter competitors (Parler, Gettr, and Trump's own effort, Truth Social) since Trump was banned from major social media platforms for encouraging rioters at the US Capitol on Jan. 6, 2021. But Musk can give them a social network with lax content moderation and a real user base. After the deal was announced, Trump said he wouldn't return to Twitter, but he wouldn't be the first to do so.

Trump - Eli Hiller/Bloomberg

Conservative activists and lawmakers are already ecstatic. “A great day for free speech in America,” said Missouri Republican Josh Hawley. The day the deal was announced, Tucker Carlson opened his nightly Fox show with a 10-minute laudatory monologue. “The single biggest political development since Donald Trump's election in 2016,” he gushed over Musk.

But Musk's supporters and detractors misunderstand how much his business interests influence his political ideology. During George W. Bush's presidency, he marketed Tesla's cars as carbon-saving machines that were faster and cooler than gas-powered luxury cars. During Barack Obama's presidency, Musk gained a huge following among wealthy environmentalists, who reserved hundreds of thousands of Tesla sedans years before they were made. In the Trump era, Musk advocated for a carbon tax, but he also fought local officials (and his own workers) over Covid rules that slowed the reopening of his Bay Area factory.

Teslas at the Las Vegas Convention Center Loop Central Station in April 2021. The Las Vegas Convention Center Loop was Musk's first commercial project. Ethan Miller/Getty Images

Musk's rightward shift matched the rise of the nationalist-populist right and the desire to serve a growing EV market. In 2019, he unveiled the Cybertruck, a Tesla pickup, and in 2020, he announced plans to manufacture it at a new plant outside Austin. In 2021, he decided to move Tesla's headquarters there, calling California the "land of over-regulation." After Ford and General Motors beat him to the electric truck market, Musk reframed Tesla as a company for pickup-driving dudes.

Similarly, his purchase of Twitter will be entwined with his other business interests. Tesla has a factory in China and is friendly with Beijing. This could be seen as a conflict of interest when Musk's Twitter decides how to treat Chinese-backed disinformation, as Amazon.com Inc. founder Jeff Bezos noted.

Musk has focused on Twitter's product and social impact, but the company's biggest challenges are financial: it must either increase cash flow or cut costs to comfortably service his new debt. Even if Musk can't do that, he can still benefit from the deal. He has recently used the increased attention to promote other business interests: Boring has hyperloops and Neuralink has brain implants on the way, Musk tweeted. And don't forget Tesla's long-promised robotaxis!

Musk may be comfortable saying he has no expectation of profit because it benefits his other businesses. At the TED conference on April 14, Musk insisted that his interest in Twitter was solely charitable. “I don't care about money.”

The rockets and weed jokes make it easy to see Musk as unique—and his crazy buyout will undoubtedly add to that narrative. However, he is a megabillionaire who is risking a small amount of money (approximately 13% of his net worth) to gain potentially enormous influence. Musk makes everything seem new, but this is a rehash of an old media story.

You might also like

Chris

3 years ago

What the World's Most Intelligent Investor Recently Said About Crypto

Cryptoshit. This thing is crazy to buy.

Charlie Munger is revered and powerful in finance.

Munger, vice chairman of Berkshire Hathaway, is noted for his wit, no-nonsense attitude to investment, and ability to spot promising firms and markets.

Munger's crypto views have upset some despite his reputation as a straight shooter.

“There’s only one correct answer for intelligent people, just totally avoid all the people that are promoting it.” — Charlie Munger

The Munger Interview on CNBC (4 min 48 sec)

This Monday, CNBC co-anchor Rebecca Quick interviewed Munger and brought up his 2007 statement, "I'm not allowed to have an opinion on this subject until I can present the arguments against my viewpoint better than the folks who are supporting it."

Great investing and life advice!

If you can't explain the opposing reasons, you're not informed enough to have an opinion.

In today's world, it's important to grasp both sides of a debate before supporting one.

Rebecca inquired:

Does your Wall Street Journal article on banning cryptocurrency apply? If so, would you like to present the counterarguments?

Munger's reply:

I don't see any viable counterarguments. I think my opponents are idiots, hence there is no sensible argument against my position.

Consider his words.

Do you believe Munger has studied both sides?

He said, "I think my opponents are idiots, hence there is no sensible argument against my position."

This is worrisome, especially from a guy who once encouraged studying both sides before forming an opinion.

Munger said:

National currencies have benefitted humanity more than almost anything else.

Hang on, I think we located the perpetrator.

Munger thinks crypto is meant to replace national currencies.

False.

I doubt he has studied cryptocurrencies, because the name is deceptive.

He seems to have read a headline and taken crypto for a dollar destroyer.

Cryptocurrencies are speculative investments.

Like Tesla, Amazon, Apple, Google, Microsoft, etc.

Crypto won't replace dollars.

In the interview with CNBC, Munger continued:

“I’m not proud of my country for allowing this crap, what I call the cryptoshit. It’s worthless, it’s no good, it’s crazy, it’ll do nothing but harm, it’s anti-social to allow it.” — Charlie Munger

Not entirely inaccurate.

Every day, new cryptocurrencies are created solely to pump and dump on regular investors.

Let's get into Munger's crypto aversion.

Bitcoin is rat poison.

Munger famously dubbed Bitcoin rat poison and a speculative bubble that would implode.

He was partially right.

The bubble did burst. Since 2021, the market has fallen.

Scam currencies and NFTs are being eliminated, which I like.

Whoa.

Why does Munger doubt crypto?

Munger thinks cryptocurrencies have no intrinsic value.

He worries about crypto fraud and money laundering.

Both are valid issues.

Yet lumping all crypto together is intellectually dishonest.

Ethereum, Bitcoin, Solana, Chainlink, Flow, and Dogecoin have different purposes and values (not saying they’re all good investments).

Fraudsters who hurt innocents will be punished.

Therefore, complaining is useless.

Why not stop it? Repair rather than complain.

Regrettably, individuals today don't offer solutions.

Munger's Blind Spots

As with everyone, Munger's bitcoin views may be shaped by his biases and experiences.

OK.

But Munger has always advocated classic value investing and may be wary of investing in an asset outside his expertise.

Munger's banking and insurance investments may influence his bitcoin views.

Could a coworker or acquaintance have told him crypto is bad and goes against traditional finance?

Right?

Takeaways

Do you respect Charlie Munger?

Yes and no, like any investor or individual.

To understand Munger's bitcoin beliefs, you must be critical.

Munger is a successful investor, but his views about bitcoin should be considered alongside other viewpoints.

Munger’s success as an investor has made him an influencer in the space.

Influence gives power.

He controls people's thoughts.

Munger's OK. I'll always listen to him.

I'll just do so cautiously.

Jano le Roux

3 years ago

Apple Quietly Introduces A Revolutionary Savings Account That Kills Banks

Would you abandon your bank for Apple?

Banks are struggling.

Not because of inflation.

Not because of the economic downturn.

Not because of the conflict in Ukraine.

But because they’re underestimating Apple.

Slowly but surely, Apple is looking more like a bank.

A new savings account as easy as Apple

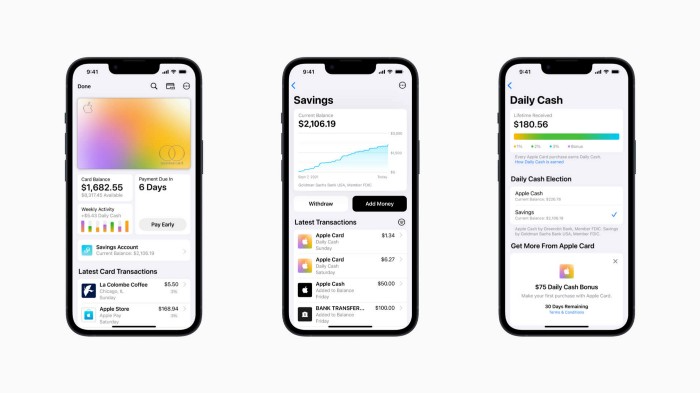

Apple has a new savings account.

Apple says Apple Card users may set up and manage savings straight in Wallet.

No fees

Competitively high yields

No minimum balance

No minimum deposits

Most consumer-facing banks will have to match Apple's offer or suffer disruption.

Users may set it up from their iPhones without traveling to a bank or filling out paperwork.

It’s built into the iPhone in your pocket.

So no more waiting for slow approval processes.

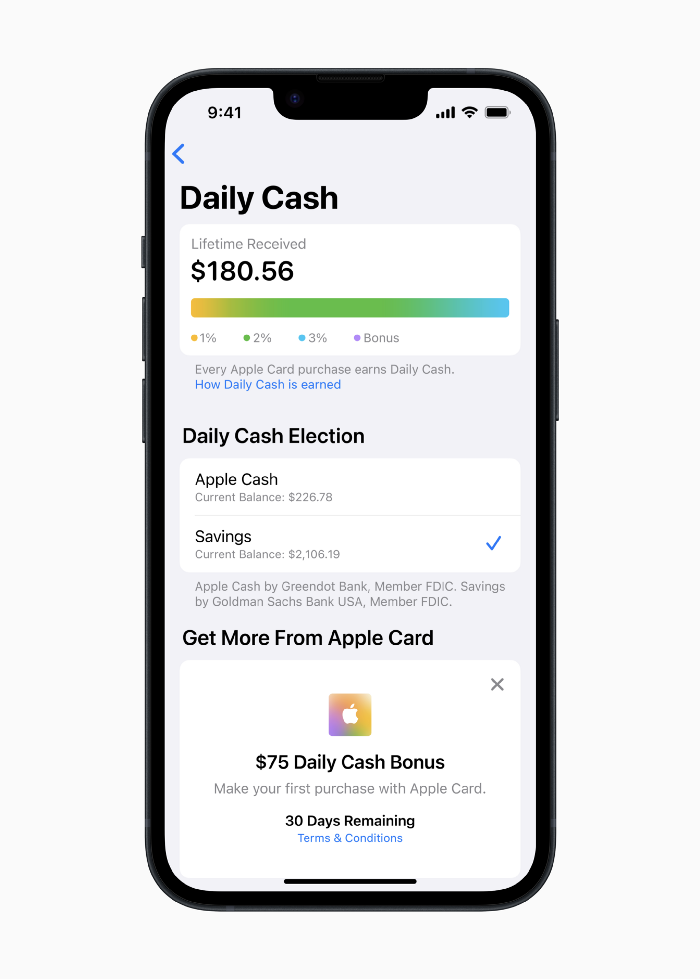

Once the savings account is set up, Apple will automatically transfer all future Daily Cash into it. Users can instead have this cash added to an Apple Cash card in the Wallet app, and they can change where Daily Cash is paid at any time.

Apple Pay and Apple Wallet VP Jennifer Bailey:

Savings enables Apple Card users to grow their Daily Cash rewards over time, while also saving for the future.

Bailey says Savings adds value to Apple Card's Daily Cash benefit and offers another easy-to-use tool to help people lead healthier financial lives.

Users can transfer money into Savings from a linked bank account or Apple Cash, and they can withdraw funds to a linked bank account or Apple Cash card with no fees.

Once set up, Apple Card customers can track their earnings via Wallet's Savings dashboard. This dashboard shows their account balance and interest.

This product targets younger people as the easiest way to start a savings account on the iPhone.

Why would a Gen Z account holder travel to the bank if their iPhone could be their bank?

Using this concept, Apple will transform the way we think about banking by 2030.

Two other nightmares keep bankers awake at night

Apple revealed two new features in early 2022 that banks and payment gateways hated.

Tap to Pay on iPhone

Apple Pay Later

They startled the industry.

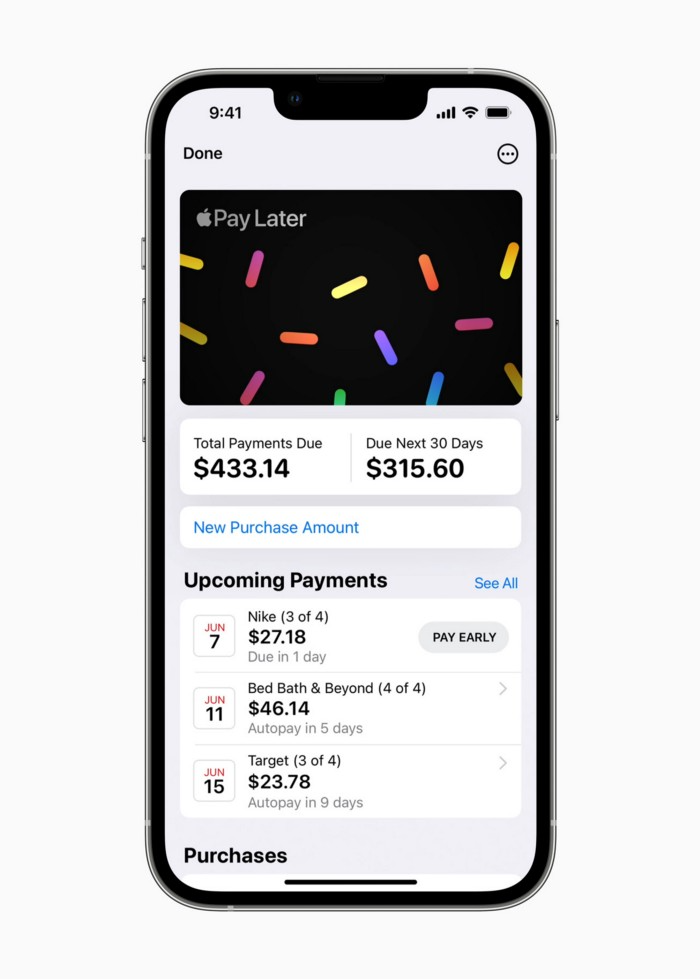

Tap To Pay converts iPhones into mobile POS card readers. Apple Pay Later is pushing the BNPL business in a consumer-friendly direction, hopefully ending dodgy lending practices.

Tap to Pay on iPhone

iPhone POS

Millions of US merchants, from tiny shops to huge establishments, will be able to accept Apple Pay, contactless credit and debit cards, and other digital wallets with a tap.

No hardware or payment terminal is needed.

Revolutionary!

Stripe has already launched this feature.

Tap to Pay on iPhone will provide companies with a secure, private, and quick option to take contactless payments and unleash new checkout experiences, said Bailey.

Apple's solution is ingenious. Brilliant!

Bailey says that payment platforms, app developers, and payment networks are making it easier than ever for businesses of all sizes to accept contactless payments and thrive.

I admire that Apple is offering this up to third-party services instead of closing off other functionalities.

Slow POS terminals, farewell.

Apple Pay Later

Pay Apple later.

Apple Pay Later lets US consumers split Apple Pay purchases into four equal payments over six weeks with no interest or fees.
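To make the split concrete, here is a rough sketch of what such a schedule could look like. It is not Apple's implementation: the function name is made up, and it assumes the first payment falls on the purchase date with the rest every two weeks, and that any leftover cents go onto the earliest payments.

```python
from datetime import date, timedelta

def pay_later_schedule(total_cents: int, purchase_day: date) -> list[tuple[date, int]]:
    """Split a purchase into four payments spread over six weeks (illustrative only)."""
    base, remainder = divmod(total_cents, 4)
    schedule = []
    for i in range(4):
        # Assumption: spread leftover cents over the earliest payments
        amount = base + (1 if i < remainder else 0)
        # Assumption: one payment at purchase, then one every two weeks
        due = purchase_day + timedelta(weeks=2 * i)
        schedule.append((due, amount))
    return schedule

schedule = pay_later_schedule(10_000, date(2023, 1, 1))  # a $100.00 purchase
for due, cents in schedule:
    print(due.isoformat(), f"${cents / 100:.2f}")
```

With no interest or fees, the four amounts always add up to exactly the purchase price.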

The Apple ecosystem integration makes this BNPL scheme unique. Nonstick. No dumb forms.

Frictionless.

Just double-tap the button.

Apple Pay Later was designed with users' financial well-being in mind. Apple makes it easy to use, track, and pay back Apple Pay Later from Wallet.

Users can sign up for Apple Pay Later in Wallet or when checking out with Apple Pay. It can be used online or in any app that takes Apple Pay, and it runs on the Mastercard network.

Apple Pay Order Tracking helps consumers access detailed receipts and order tracking in Wallet for Apple Pay purchases at participating stores.

Bad BNPL suppliers, goodbye.

Apple will catch most bankers off guard, playing mini golf in their high-rise offices.

The big problem:

Banks still think about features and big numbers just like other smartphone makers did not too long ago.

Apple thinks about effortlessness, seamlessness, and frictionlessness that just work through integrated hardware and software.

Let me know what you think Apple’s next power moves in the banking industry could be.

Jayden Levitt

3 years ago

Starbucks' NFT Project recently defeated its rivals.

The same way Amazon killed bookstores. You just can’t see it yet.

Schultz globalized coffee. Before Starbucks, coffee sucked.

All accounts say 1970s coffee was awful.

Starbucks had three stores selling ground Indonesian coffee in the 1980s.

What a show!

A year after joining the company at 29, Schultz traveled to Italy for R&D.

He noticed the coffee shops' sense of theater and community and realized Starbucks was in the wrong business.

Integrating coffee and destination created a sense of community in the store.

Brilliant!

He told Starbucks' founders about his experience.

They disapproved.

For two years.

Like any good entrepreneur, Schultz left and opened an Italian-style coffee shop chain.

Starbucks ran into financial trouble, so the founders offered to sell to Schultz.

Schultz bought Starbucks in 1987 for $3.8 million, including six stores and a payment plan.

Starbucks is worth $100.79 billion, per Google Finance.

That is roughly 26,500 times Schultz's initial investment.
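The multiple is simple to verify from the two figures quoted above. This is just back-of-the-envelope arithmetic, and the variable names are mine.

```python
purchase_price = 3.8e6    # 1987 purchase price quoted above, in dollars
market_value = 100.79e9   # valuation quoted above, in dollars

multiple = market_value / purchase_price
print(f"{multiple:,.0f}x")  # prints "26,524x"
```

It ignores inflation and everything invested since 1987, so treat it as a headline number rather than a return calculation.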

Under Schultz and his early vision, Starbucks is releasing its own NFT platform.

This year, Starbucks Odyssey launches. The new digital experience combines a Loyalty Rewards program with NFT.

The platform, built on the Polygon sidechain, doesn't require a crypto wallet. Customers can earn and buy digital assets to unlock incentives and experiences.

They've removed all friction, making it more immersive and convenient than a coffee shop.

Brilliant!

NFTs are the access coupon to their digital community, but they don't highlight the technology.

They prioritize consumer experience by adding non-technical users to Web3. Their collectables are called journey stamps, not NFTs.

No mention of bundled gas fees.

Brady Brewer, Starbucks' CMO, said:

“It happens to be built on blockchain and web3 technologies, but the customer — to be honest — may very well not even know that what they’re doing is interacting with blockchain technology. It’s just the enabler.”

Rewards members will log into a web app using their loyalty program credentials to access Starbucks Odyssey. They won't know about blockchain transactions.

Starbucks has just dealt its rivals a devastating blow.

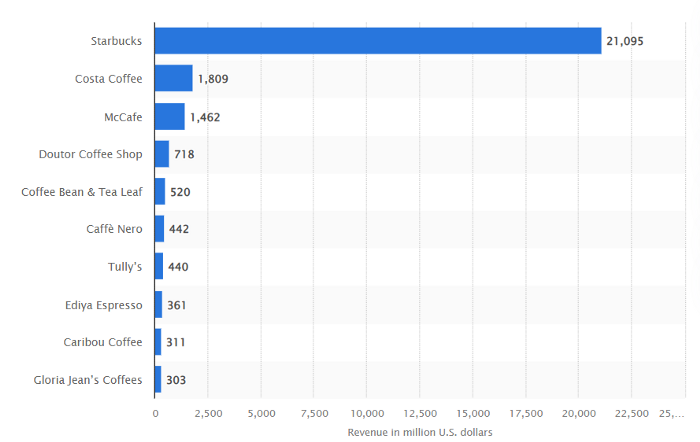

It generates more than ten times the revenue of its closest competitor Costa Coffee.

The coffee giant is booming.

Starbucks is ahead of its competitors. No wonder.

They have an innovative, adaptable leadership team.

Starbucks' DNA challenges the narrative, especially when others reject their ideas.

I’m off for a cappuccino.