
Sam Hickmann

3 years ago

Improving collaboration with the Six Thinking Hats

Six Thinking Hats was written by Dr. Edward de Bono. "Six Thinking Hats" and parallel thinking allow groups to plan thinking processes in a detailed and cohesive way, improving collaboration.

Fundamental ideas

In order to develop strategies for thinking about specific issues, the method assumes that the human brain thinks in a number of distinct ways that can be deliberately challenged. De Bono identifies six distinct directions in which the brain can be challenged. In each direction, the brain brings certain aspects of an issue into conscious thought (e.g. gut instinct, pessimistic judgement, neutral facts). Some people may find wearing the hats unnatural, uncomfortable, or even counterproductive.

A compelling example is sensitivity to "mismatch" stimuli. This is a valuable survival instinct because, in the natural world, something out of the ordinary may be dangerous. De Bono identifies this mode as the root of negative judgment and critical thinking.

Colored hats represent each direction. Putting on a colored hat symbolizes changing direction, either literally or metaphorically. De Bono first used this metaphor in his 1971 book "Lateral Thinking for Management" to describe a brainstorming framework. These metaphors allow a more complete and elaborate separation of thinking modes. Together, the six thinking hats help surface both the problems and the solutions associated with an idea.

Similarly, his CoRT Thinking Programme introduced "The Five Stages of Thinking" method in 1973.

| HAT | OVERVIEW | TECHNIQUE |
|---|---|---|
| BLUE | "The Big Picture" & Managing | CAF (Consider All Factors); FIP (First Important Priorities) |
| WHITE | "Facts & Information" | Information |
| RED | "Feelings & Emotions" | Emotions and Ego |
| BLACK | "Negative" | PMI (Plus, Minus, Interesting); Evaluation |
| YELLOW | "Positive" | PMI |
| GREEN | "New Ideas" | Concept Challenge; Yes, No, Po |

Strategies and programs

After identifying the six thinking modes, programs can be created. These are groups of hats that encompass and structure the thinking process. Several of these are included in the materials for franchised six hats training, but they must often be adapted. Programs are often "emergent," meaning the group plans the first few hats and the facilitator decides what to do next.

The group agrees on how to think, then thinks, then evaluates the results and decides what to do next. Individuals or groups can use sequences (and indeed hats). Each hat is typically used for 2 minutes at a time, although an extended white hat session is common at the start of a process to get everyone on the same page. The red hat is recommended for a very short period, about 30 seconds, to capture a visceral gut reaction; in practice it often takes the form of dot-voting.

| ACTIVITY | HAT SEQUENCE |
|---|---|
| Initial Ideas | Blue, White, Green, Blue |
| Choosing between alternatives | Blue, White, (Green), Yellow, Black, Red, Blue |
| Identifying Solutions | Blue, White, Black, Green, Blue |
| Quick Feedback | Blue, Black, Green, Blue |
| Strategic Planning | Blue, Yellow, Black, White, Blue, Green, Blue |
| Process Improvement | Blue, White, White (Other People's Views), Yellow, Black, Green, Red, Blue |
| Solving Problems | Blue, White, Green, Red, Yellow, Black, Green, Blue |
| Performance Review | Blue, Red, White, Yellow, Black, Green, Blue |
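The timing rules of thumb described above lend themselves to a quick back-of-the-envelope estimate of how long a program from the table might take. The sketch below assumes about 2 minutes per hat and about 30 seconds for the red hat, as stated earlier; it does not model the extended opening white hat session, and the function and constant names are hypothetical, not part of de Bono's training materials.

```python
from typing import Sequence

# Assumed time budgets, taken from the rules of thumb in the text.
DEFAULT_MINUTES = 2.0          # most hats: about 2 minutes at a time
HAT_MINUTES = {"red": 0.5}     # red hat: about 30 seconds for a gut reaction
HATS = {"blue", "white", "red", "black", "yellow", "green"}

# Two sequences copied from the table above.
SEQUENCES = {
    "Quick Feedback": ["Blue", "Black", "Green", "Blue"],
    "Performance Review": ["Blue", "Red", "White", "Yellow", "Black", "Green", "Blue"],
}


def session_minutes(sequence: Sequence[str]) -> float:
    """Estimate a session's length by summing the time budget of each hat."""
    total = 0.0
    for hat in sequence:
        key = hat.lower()
        if key not in HATS:
            raise ValueError(f"unknown hat: {hat!r}")
        total += HAT_MINUTES.get(key, DEFAULT_MINUTES)
    return total


if __name__ == "__main__":
    for name, seq in SEQUENCES.items():
        print(f"{name}: {' -> '.join(seq)} = {session_minutes(seq)} min")
```

Under these assumptions, Quick Feedback comes to 8 minutes and Performance Review to 12.5 minutes. Since programs are often emergent, a facilitator would treat such numbers as a starting budget rather than a fixed schedule.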

Use

Speedo's swimsuit designers reportedly used the six thinking hats: "They used the "Six Thinking Hats" method to brainstorm, with a green hat for creative ideas and a black one for feasibility."

Typically, a project begins with an extensive white hat research phase. After that, each hat is used for a few minutes at a time, except the red hat, which is limited to 30 seconds to ensure an instinctive gut reaction rather than a considered judgement. This is in keeping with Malcolm Gladwell's theory of "blink" thinking, which values rapid, instinctive judgments.

De Bono believed that the key to a successful Six Thinking Hats session was focusing the discussion on a particular approach. A meeting may be called to review and solve a problem. The Six Thinking Hats method can be used in sequence to explore the problem, develop a set of solutions, and choose a solution through critical examination.

Everyone may don the Blue hat to discuss the meeting's goals and objectives. The discussion may then shift to Red hat thinking to gather opinions and reactions to the problem. This phase may also be used to determine constraints, such as who will be affected by the problem and/or solutions. Next, the discussion may shift to the (Yellow then) Green hat to generate ideas and possible solutions. The discussion may then move back and forth between White hat thinking, to develop information, and Black hat thinking, to develop criticisms of the solution set.

Because everyone is focused on one approach at a time, the group is more collaborative than if one person is reacting emotionally (Red hat), another is trying to be objective (White hat), and another is critical of the points which emerge from the discussion (Black hat). The hats help people approach problems from different angles and highlight problem-solving flaws.

Christian Soschner

3 years ago

Steve Jobs' Secrets Revealed

From 1984 until 2011, he presented Apple's products using the same template.

What is a founder CEO's most crucial skill?

Presentation, communication, and sales

As a Business Angel Investor, I saw many pitch presentations and met with investors one-on-one to promote my companies.

There is a common misconception that “investors have to invest,” so there is no need to care about the presentation.

That's false. Nobody has to invest. Many investors believe it is up to entrepreneurs to convince them to invest in their business.

Sometimes, as in 2018–2022, too much money enters the market, and everyone makes good money.

Do you remember the Buy Now, Pay Later movement? It was an amazing narrative with no return potential: only buyers who couldn't get financing elsewhere shopped with these companies.

Even so, Klarna's failing business model commanded high valuations.

Investors become more cautious when the economy falters. In 2022 we are seeing rising inflation, rising interest rates, wars, and civil unrest. It's as if the four horsemen of the apocalypse have arrived.

Storytelling is important in rough economies.

When investors draw back, how can entrepreneurs stand out?

In Q2/2022, every study I read said the same thing:

Investors are pulling back.

Deals are down from previous quarters in almost every IT sector.

What do founders need to do?

Differentiate yourself.

Storytelling talents help.

The Steve Jobs Way

Every time I watch a Steve Jobs presentation, I'm enthralled.

I'm a techie. Everything technical interests me. But I skim most presentations.

What's Steve Jobs's secret?

Steve Jobs co-founded Apple in 1976 and made it a profitable hardware and software company in the 1980s. But Macintosh products couldn't beat IBM's, and this failure got him pushed out in 1985.

Before rejoining Apple in 1997, Steve Jobs founded NeXT and took over the Lucasfilm graphics group that became Pixar.

From then on, Apple grew into America's most valuable company.

Steve Jobs understood people's needs. He said:

“People don’t know what they want until you show it to them. That’s why I never rely on market research. Our task is to read things that are not yet on the page.”

In his opinion, people talk about problems. A lot. Entrepreneurs must learn what the population's pressing problems are and create a solution.

Steve Jobs showed people what they needed before they realized it.

I'll explain:

Present a Big Vision

Steve Jobs starts every presentation by describing his long-term goals for Apple.

The 1984 Macintosh presentation set up a David vs. Goliath story: a George Orwell-style dystopia, fitting for the year 1984, in which IBM computers were the villain.

Apple, like the Jedi, would save the world.

Why do customers and investors like Big Vision?

People want a wider perspective, I think. Humans love improving the planet.

Apple users often cite emotional reasons for buying the brand.

Revolutionizing several industries with breakthrough inventions

Establish Authority

In 2022, everyone knows Apple. Anywhere in the world, it's hard to find people who would confuse Apple with an apple.

But for much of Steve Jobs's tenure, which ended in 2011, Apple wasn't as famous as it is today.

Most entrepreneurs lack experience. They may be pitching their company or products to people who have never heard of it.

Steve Jobs presented the company's past accomplishments to overcome this resistance.

In his presentation of the first iPhone, he talked about the Apple Macintosh, which altered the computing sector, and the iPod, which changed the music industry.

People who have never heard of Apple feel like they're seeing a winner. It raises expectations that the new product will be game-changing and must-have.

The Big Reveal

A pitch or product presentation always has something new.

Steve Jobs doesn't just demonstrate the product. I don't think he ever skipped the most important part of a company presentation.

He consistently discusses current market solutions, their flaws, and what a better solution for consumers would look like.

A solution that doesn't exist yet.

It's a multi-faceted play:

It compares the new product to something familiar, which makes the novelty, and the product itself, more relatable.

It describes a desirable solution.

It's funny. In his iPhone presentation, he showed an iPod with a retro rotary phone dial.

Then he reveals the new product. At its 1984 launch, the Macintosh even presented itself.

Show the benefits

He outlines what Apple is doing differently after demonstrating the product.

How do you distinguish yourself from others? With the big breakthrough presentation.

You could list every benefit on a few hundred slides.

But everyone would fall asleep. Have you ever sat through a presentation like that?

When the brain is overloaded with information, the limbic system switches to other tasks, like planning lunch.

What should a speaker do? There's a classic proverb:

“Tell me and I forget, teach me and I may remember, involve me and I learn” (often misattributed to Benjamin Franklin).

Steve Jobs showcased the product live.

Again, using ordinary scenarios to highlight the product's benefits makes it relatable.

The 2010 iPad Presentation uses this technique.

Invite the Team and Let Them Run the Presentation

CEOs spend most of their time outside the organization, so many companies choose to have only one presenter.

That sends the wrong message to investors. Product presentations should always include the whole team.

Let me explain why.

Companies seeking investment often have shaky business strategies, no product-market fit, or no robust corporate structure.

Investors are essentially betting on the team's ability to implement ideas and make a profit.

Involving the team early helps investors understand what drives the company. It's worth the travel costs.

But why for product presentations?

Presenters of varied ages, genders, social backgrounds, and skillsets are relatable. CEOs want relatable products.

Some customers may not believe a white man's message. A black woman's message may be more accepted.

Make the story relatable when you have the best product that solves people's concerns.

Best example: the 1984 Macintosh presentation, with a panel of the development team.

What is the biggest mistake people make in companies that fail?

Saving money on the corporate and product presentation.

When you have shortlisted five investors, bring your team to all five partner meetings.

Rehearse the presentation till it's natural. Let the team speak.

Successful presentations require structure, rehearsal, and a team. Steve Jobs nailed it.

The woman

3 years ago

Why Google's Hiring Process is Brilliant for Top Tech Talent

Without a degree and experience, you can get a high-paying tech job.

Most organizations follow this hiring rule: you chat with HR, interview with your future boss and other senior managers, and they make the final hiring choice.

If you've ever applied for a job, you know how arduous it can be. A newly snapped photo and a glossy resume template can wear you out. Applying to Google can change this experience.

According to a Universum report, Google is one of the world's most coveted employers. It's not simply the search giant's name and reputation that attract candidates, but its role requirements, or lack thereof.

Candidates no longer need a beautiful resume, cover letter, Ivy League laurels, or years of direct experience. The company requires no degree or experience.

Elon Musk started this trend. He used the "two-hands test" to find talented people without degrees, and he dropped the requirement for experience.

Google is deconstructing traditional hiring with programs like the Google Project Management Certificate, a free, online, self-paced professional credential course.

Google's approach is interesting. After completing its certificate course, applicants can apply for project management roles. Instead of looking at academic degrees and experience, the company assesses their coursework.

In return, Google finds the best project managers and technical staff. It uses three strategies to find top talent.

Chase down the innovators

Google eliminates restrictions like education, experience, and others to find the polar bear amid the snowfall. Google's free project management education makes project manager responsibilities accessible to everyone.

Many jobs don't require a degree. Overlooking individuals without a degree can make it difficult to locate a candidate who can provide value to a firm.

The same rule applies to firsthand experience. A lack of prior experience can even benefit an employer, especially creative teams or businesses that prefer to innovate.

The same goes for companies that want to operate differently from the competition: candidates with no experience can offer fresh perspectives. Fast Company reports that people with no sales experience outperformed those with 10 to 15 years of experience.

Give the aptitude test first priority.

Google wants the best candidates, and it can only afford to attract more applications because it can screen them for fit. Its well-organized online training program doubles as a portfolio.

Google learns a lot about an applicant through completed assignments. It reveals their ability, leadership style, communication capability, etc. The course mimics the job to assess candidates' suitability.

Basic screening questions can provide information for comparing candidates. Businesses of any size can use screening questions and test projects to evaluate prospective employees.

Effective training for employees

Businesses must train employees regardless of how they hire them. Formal education and prior experience don't guarantee success. Closing your employees' professional knowledge gaps is key to their productivity and happiness, and top-notch training can do that. Learning and development are key to employee engagement, says Bob Nelson, author of 1,001 Ways to Engage Employees.

Google's online certification program isn't available everywhere. Improving the recruiting process means emphasizing aptitude over experience and a degree. Instead of employing new personnel and having them work the way their former firm trained them, train them how you want them to function.

If you want to know more about Google’s recruiting process, we recommend you watch the movie “The Internship.”


CNET

4 years ago

How a $300K Bored Ape Yacht Club NFT was accidentally sold for $3K

The Bored Ape Yacht Club is one of the most prestigious NFT collections in the world. It's a collection of 10,000 NFTs, each depicting an ape with different traits and visual attributes, and its star-studded owners include Jimmy Fallon, Steph Curry and Post Malone. Right now the price of entry is 52 ether, or $210,000.

Which is why it's so painful to see that someone accidentally sold their Bored Ape NFT for $3,066.

Unusual trades are often a sign of funny business, as in the case of the person who spent $530 million to buy an NFT from themselves. In Saturday's case, the cause was a simple, devastating "fat-finger error." That's when people make a trade online for the wrong thing, or for the wrong amount. Here the owner, real name Max or username maxnaut, meant to list his Bored Ape for 75 ether, or around $300,000. Instead he accidentally listed it for 0.75, one hundredth of the intended price.

It was bought instantaneously. The buyer paid an extra $34,000 to speed up the transaction, ensuring no one could snap it up before them. The Bored Ape was then promptly listed for $248,000. The transaction appears to have been done by a bot, which can be coded to immediately buy NFTs listed below a certain price on behalf of their owners in order to take advantage of these exact situations.
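The bot's rule can be sketched in a few lines. The example below is a hypothetical illustration, not code from any real marketplace or bot: it flags any listing priced below an assumed fraction (here 50%) of the collection's floor price. The same check, run on the seller's side before a listing goes live, would have caught a 0.75 ether price against the 52 ether entry price quoted above. Real sniping bots also pay high gas fees to get their transaction included first, which this sketch does not model.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Listing:
    token_id: int      # hypothetical token identifier
    price_eth: float   # asking price in ether


def is_suspiciously_cheap(price_eth: float, floor_eth: float,
                          max_fraction: float = 0.5) -> bool:
    """Return True if a price is below `max_fraction` of the floor price."""
    if floor_eth <= 0:
        raise ValueError("floor price must be positive")
    return price_eth < max_fraction * floor_eth


def snipe_candidates(listings: List[Listing], floor_eth: float) -> List[int]:
    """Buyer side: token IDs a bot following this rule would try to buy."""
    return [item.token_id for item in listings
            if is_suspiciously_cheap(item.price_eth, floor_eth)]


if __name__ == "__main__":
    floor = 52.0                  # entry price quoted in the article
    intended, actual = 75.0, 0.75
    print("intended/actual ratio:", intended / actual)
    # Seller side: a pre-listing sanity check would have flagged the typo.
    print("flag intended price?", is_suspiciously_cheap(intended, floor))
    print("flag actual price?", is_suspiciously_cheap(actual, floor))
```

The threshold is the whole design choice here: set it too high and legitimate discounts get flagged, set it too low and a hundredfold typo like this one still slips through.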

"How'd it happen? A lapse of concentration I guess," Max told me. "I list a lot of items every day and just wasn't paying attention properly. I instantly saw the error as my finger clicked the mouse but a bot sent a transaction with over 8 eth [$34,000] of gas fees so it was instantly sniped before I could click cancel, and just like that, $250k was gone."

"And here within the beauty of the Blockchain you can see that it is both honest and unforgiving," he added.

Fat finger trades happen sporadically in traditional finance -- like the Japanese trader who almost bought 57% of Toyota's stock in 2014 -- but most financial institutions will stop those transactions if alerted quickly enough. Since cryptocurrency and NFTs are designed to be decentralized, you essentially have to rely on the goodwill of the buyer to reverse the transaction.

Fat finger errors in cryptocurrency trades have made many a headline over the past few years. Back in 2019, the company behind Tether, a cryptocurrency pegged to the US dollar, nearly doubled its own coin supply when it accidentally created $5 billion-worth of new coins. In March, BlockFi meant to send 700 Gemini Dollars to a set of customers, worth roughly $1 each, but mistakenly sent out millions of dollars worth of bitcoin instead. Last month a company erroneously paid a $24 million fee on a $100,000 transaction.

Similar incidents are increasingly being seen in NFTs, now that many collections have accumulated in market value over the past year. Last month someone tried selling a CryptoPunk NFT for $19 million, but accidentally listed it for $19,000 instead. Back in August, someone fat finger listed their Bored Ape for $26,000, an error that someone else immediately capitalized on. The original owner offered $50,000 to the buyer to return the Bored Ape -- but instead the opportunistic buyer sold it for the then-market price of $150,000.

"The industry is so new, bad things are going to happen whether it's your fault or the tech," Max said. "Once you no longer have control of the outcome, forget and move on."

The Bored Ape Yacht Club launched back in April 2021, with 10,000 NFTs being sold for 0.08 ether each -- about $190 at the time. While NFTs are often associated with individual digital art pieces, collections like the Bored Ape Yacht Club, which allow owners to flaunt their NFTs by using them as profile pictures on social media, are becoming increasingly prevalent. The Bored Ape Yacht Club has since become the second-biggest NFT collection in the world, behind only CryptoPunks, which launched in 2017 and is considered the "original" NFT collection.

Alex Mathers

3 years ago

12 practices of the calmest people I know

Calmness is a vital life skill.

It aids communication. It boosts creativity and performance.

I've studied calm people's habits for years. Commonalities:

Have learned to laugh at themselves.

Those who have something to protect can’t help but make it a very serious business, which drains the energy out of the room.

They are fixated on positive pursuits like making cool things, building a strong physique, and having fun with others rather than on depressing influences like the news and gossip.

Every day, spend at least 20 minutes moving, whether it's walking, yoga, or lifting weights.

Discover ways to take pleasure in life's challenges.

Since perspective is malleable, they change their view.

Set your own needs first.

Stressed people neglect themselves and wonder why they struggle.

Prioritize self-care.

Don't ruin your life to please others.

Make something.

Calm people create more than they react.

They love creating beautiful things—paintings, children, relationships, and projects.

Don't hold your breath.

If you're stressed or angry, you may be surprised how much time you spend holding your breath and tightening your belly.

Release, breathe, and relax to find calm.

Have stopped rushing.

Rushing is disadvantageous.

Calm people handle life better.

Are attuned to their personal dietary needs.

They avoid junk food and eat foods that keep them healthy, happy, and calm.

Don’t take anything personally.

Stressed people try to control everything.

Aren't self-absorbed.

Calm people put others and their work first.

Keep their surroundings neat.

Maintaining an uplifting and clutter-free environment daily calms the mind.

Minimise negative people.

Calm people are ruthless with their boundaries and avoid negative and drama-prone people.

Matt Ward

3 years ago

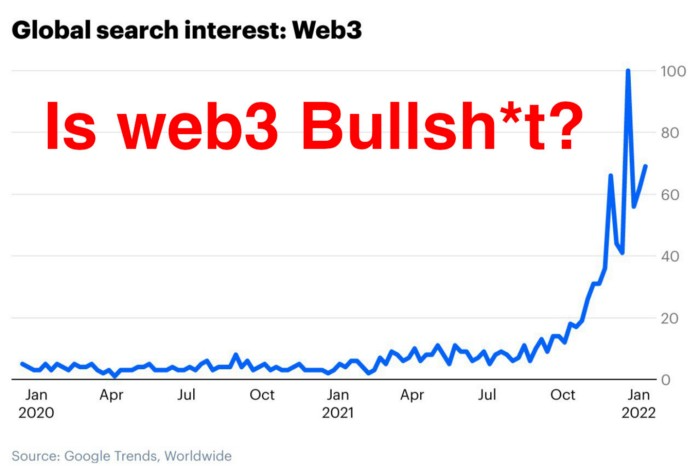

Is Web3 nonsense?

Crypto and blockchain have rebranded as web3. They probably thought it sounded better and didn't want the baggage of scam ICOs, STOs, and skirted securities laws.

It was like Facebook becoming Meta. Crypto's biggest players wanted to change public (and regulator) perception away from pump-and-dump schemes.

After the 2018 ICO gold rush, it's understandable. Project after project raised millions (or billions) and never shipped a meaningful product.

Like many crazes, charlatans took the money and ran.

Despite its grifter past, web3 is THE hot topic today as more founders, venture firms, and larger institutions look to build the future decentralized internet.

Supposedly.

How often have you heard: This will change the world, fix the internet, and give people power?

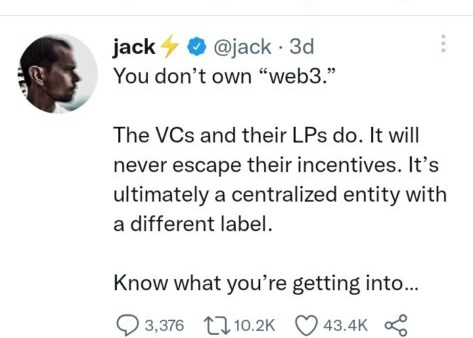

Why are most of web3's biggest proponents (and beneficiaries) the same rich, powerful players who built and invested in the modern internet? It's like they want to remake and own the internet.

Something seems off about that.

Why are insiders getting preferential presale terms before the public, allowing early investors and proponents to flip dirt-cheap tokens and advisor shares almost immediately after the public sale?

It's a good gig: guaranteed markups with no risk and no progress required.

If it sounds like insider trading, it is, at least practically. This is clear when people talk about blockchain/web3 launches and tokens.

Fast money, quick flips, and guaranteed markups/returns are common.

Incentives-wise, it's hard to blame them. Who can blame someone for following the rules to win? Is it their fault or regulators' for not leveling the playing field?

It's similar to oil companies polluting for profit, Instagram depressing you into buying a new dress, or pharma pushing an unnecessary pill.

All of that is fair game, at least until we change the playbook, because people (and corporations) change for pain or love. Who doesn't love money?

Belief based on financial gain

As Upton Sinclair put it:

“It is difficult to get a man to understand something when his salary depends upon his not understanding it.”

Does the same apply to Bitcoin, blockchain, and web3?

Most blockchain and web3 proponents are true believers, not cynical capitalists. They believe blockchain's inherent transparency and permissionless trust allow humanity to evolve beyond our reptilian ways and build a better decentralized and democratic world.

They highlight issues with the modern internet and monopoly players like Google, Facebook, and Apple. Decentralization fixes everything.

If we could give power back to the people and get governments/corporations/individuals out of the way, we'd fix everything.

Supply chain and child labor issues in China? Blockchain solves them.

Need to reduce emissions to meet the Paris climate goals? Create a carbon token.

Online hatred and polarization? Build a web3 replacement for Twitter and Facebook.

Web3 must just be the answer for everything… your “perfect” silver bullet.

Nothing fits everyone. Blockchain has pros and cons like everything else.

Blockchain's viral, Ponzi-like nature has an MLM (multi-level marketing) feel. If you bought Taylor Swift's NFT, your investment is tied to her popularity.

It probably makes you promote Swift more. Play her music loudly.

Here's another example:

Imagine if Jehovah’s Witnesses (or evangelical preachers…) got paid for every single person they converted to their cause.

It becomes a self-fulfilling prophecy as their faith and wealth grow.

Which breeds extremism: maximalists. Ultra-Orthodox Jews are an example.

Bitcoin and blockchain are causes, religions. It's a money-making movement and ideal.

We're good at convincing ourselves of things we want to believe, hence filter bubbles.

I ignore anything that doesn't fit my worldview and seek out like-minded people, which algorithms amplify.

Then what?

Is web3 merely a new scam?

No, never!

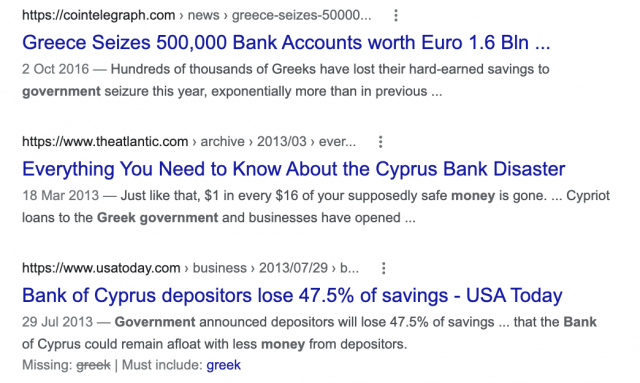

Blockchain has many crucial uses.

Sending money home or abroad without bank fees;

Fleeing a war-torn country and converting savings to Bitcoin;

Preventing Twitter from silencing dissidents.

Permissionless, trustless databases could benefit society and humanity. There are, however, many limitations.

Lost password?

What if you're cheated?

What if Trump/Putin/your favorite dictator incites a coup d'état?

What-ifs abound. Decentralization's openness brings good and bad.

No gatekeepers or firefighters to rescue you.

ISIS's fundraising is also frictionless.

Community-owned apps with bad interfaces and service.

Trade-offs rule.

So what compromises does web3 make?

What are your trade-offs? Decentralization has many strengths and flaws, like Bitcoin's wasteful proof-of-work or Ethereum's political/wealth-based proof-of-stake.

To ensure the survival and veracity of the network/blockchain and to safeguard its nodes, extreme measures have been designed and put in place to prevent hostile takeovers aimed at altering the blockchain, e.g., adding money to your own wallet (account).

These protective measures require significant resources and pose challenges: reduced speed and throughput, high gas fees (the cost to submit/write a transaction to the blockchain), and longer development times, not to mention forked blockchains (oops, web3 projects).
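To see why such protective measures are so resource-hungry, consider a toy version of proof-of-work. This sketch is a simplification, not Bitcoin's actual algorithm (Bitcoin double-hashes a binary block header and compares the result to a numeric target): it searches for a nonce such that the SHA-256 hash of some block data plus the nonce starts with a given number of zero hex digits. Each hex digit is zero with probability 1/16, so finding a valid nonce takes on average 16^difficulty attempts, while checking one takes a single hash. The function names and the sample block data are illustrative.

```python
import hashlib
from typing import Tuple


def block_hash(data: str, nonce: int) -> str:
    """SHA-256 hex digest of the block data concatenated with a nonce."""
    return hashlib.sha256(f"{data}{nonce}".encode("utf-8")).hexdigest()


def mine(data: str, difficulty: int) -> Tuple[int, str]:
    """Brute-force the first nonce whose hash starts with `difficulty` zeros.

    Expected work grows as 16 ** difficulty hash attempts.
    """
    target_prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = block_hash(data, nonce)
        if digest.startswith(target_prefix):
            return nonce, digest
        nonce += 1


def verify(data: str, nonce: int, difficulty: int) -> bool:
    """Checking a claimed solution costs just one hash."""
    return block_hash(data, nonce).startswith("0" * difficulty)


if __name__ == "__main__":
    for difficulty in range(1, 5):
        nonce, digest = mine("alice pays bob 1 coin", difficulty)
        print(f"difficulty {difficulty}: nonce={nonce} hash={digest[:16]}...")
```

Raising the difficulty by one multiplies the expected work by 16. That asymmetry, expensive to produce and cheap to verify, is what makes rewriting history costly for an attacker, and it is also where the speed and energy costs come from.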

For protecting dissidents or surviving rogue regimes, this makes sense. You need safety, privacy, and calm.

But in everyday first-world life?

What if you assumed EVERYONE you saw was out to rob/attack you? You'd never travel, trust anyone, accomplish much, or live fully. The economy would collapse.

It's like an ant colony where half the ants do nothing but wait to be attacked.

Waste of time and money.

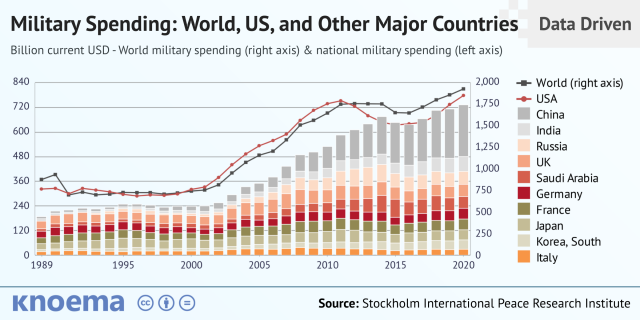

11% of the US budget goes to the military. Imagine what we could do with the $766B+ we spend on what-ifs annually.

Is so much hypothetical security needed?

Blockchain and web3 are similar.

Does your app need permissionless decentralization? Does your scooter-sharing company really need a proof-of-stake system and 1000s of nodes to avoid Russian hackers? Why?

Worst-case scenario? It's not life or death, unless you overstate the what-ifs. Web3 proponents find improbable scenarios to justify decentralization and tokenization.

Do I need a token to prove ownership of my painting? Unless I'm a master thief, I probably bought it, even if I lost the receipt.

I do, however, love Web 3.

Enough Web3 bashing for now. Understand? Decentralization isn't perfect, but it has huge potential when applied to the right problems.

I see many of the right problems as disrupting big tech's ruthless monopolies. I wrote several years ago about how tokenized blockchains could be used to break big tech's stranglehold on platforms, marketplaces, and social media.

Tokenomics schemes can be used for good and are powerful. Here’s how.

Before the ICO boom, I made a series of predictions about blockchain/crypto's future. It's still true.

Here's where I was then and where I see web3 going:

My 11 Big & Bold Predictions for Blockchain

1. While some governments repress cryptocurrency, others will start to embrace it.

2. Blockchain will fundamentally alter voting and governance, resulting in a more open election process.

3. Money freedom will lead to a more geographically open world where people will be more able to leave when there is unrest.

4. Blockchain will make record keeping significantly easier, eliminating the need for a significant portion of government workers whose sole responsibility is paperwork.

5. Smart contracts are overrated.

6. Tokens will replace company stocks.

7. Blockchain increases real estate's liquidity, value, and volatility.

8. Healthcare may be most affected.

9. Crypto could end privacy and lead to Minority Report.

10. New companies with network effects will displace incumbents.

11. Soon, people will wear rings or bracelets with crypto cash.

Some have already happened, while others are still possible.

Time will tell if they happen.

And finally:

What will web3 be?

Who will be in charge?

Closing remarks

Hope you enjoyed this web3 dive. There's much more to say, but that's for another day.

We're writing history as we go.

Tech regulation, mergers, the Bitcoin surge: how will history remember us?

What about web3 and blockchain?

Is this a revolution or a tulip craze?

Remember, actions speak louder than words (share them in the comments).

Your turn.