
M.G. Siegler

3 years ago

Apple: Showing Ads on Your iPhone

This report from Mark Gurman has stuck with me:

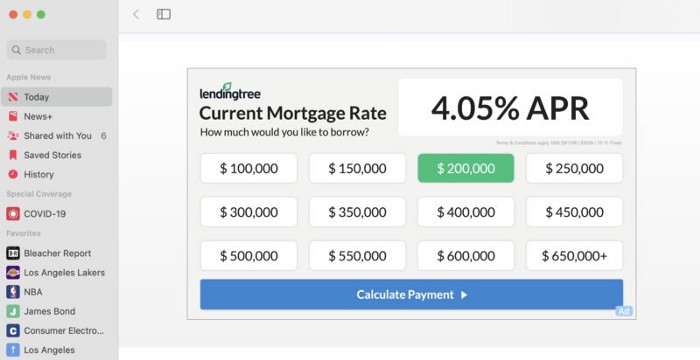

In the News and Stocks apps, the display ads are no different than what you might get on an ad-supported website. In the App Store, the ads are for actual apps, which are probably more useful for Apple users than mortgage rates. Some people may resent Apple putting ads in the News and Stocks apps. After all, the iPhone is supposed to be a premium device. Let’s say you shelled out $1,000 or more to buy one, do you want to feel like Apple is squeezing more money out of you just to use its standard features? Now, a portion of ad revenue from the News app’s Today tab goes to publishers, but it’s not clear how much. Apple also lets publishers advertise within their stories and keep the vast majority of that money. Surprisingly, Today ads also appear if you subscribe to News+ for $10 per month (though it’s a smaller number).

I use Apple News often. It's a good general news catch-up tool, like Twitter without the BS. The customized notifications are helpful. It's fast and lovely. Except for the ads. I have Apple One, which includes News+, and while I understand why the magazines still carry brand ads, it's ridiculous to me that Apple lets web publishers inject awful ads into this experience. And the ads themselves are junky.

Publishers presumably want this and may even have requested it. Apple could still keep Apple News ad-free for the much smaller percentage of paying users and simply hand publishers their cut. (The same goes for Stocks, where the ads are even sillier.)

Paid app placement in the App Store is a wonderful approach for developers to find new users (though far too many of those ads are trying to trick users, in my opinion).

Apple is also planning to increase ads in its Maps app. This sounds like Google Maps, and I don't like it. I never find these relevant, and they clutter up the user experience. Apple Maps now has a UI advantage (though not a data/search one, which matters more).

Apple is nickel-and-diming its customers. We spend thousands on their products and on premium services like Apple One. We all know why: revenue must keep growing, and new businesses are needed to keep scaling. This will eventually backfire.

Victoria Kurichenko

3 years ago

What Happened After I Posted an AI-Generated Post on My Website

This could cost you.

Content creators may have heard about Google's "helpful content update."

This change is another Google effort to remove low-quality, repetitive, and AI-generated content.

Why should content creators care?

Because too much content is created just to manipulate search results.

My experience includes the following.

Website admins ask me to publish their "high-quality" guest posts. After I say "yes," they send me AI-generated text. They don't care about my readers; they just want backlinks.

Companies copy high-ranking content to boost their Google rankings. Unfortunately, it's common.

What does this content offer?

Nothing.

Despite Google's updates and efforts to clean search results, webmasters create manipulative content.

As a marketer, I knew about AI-powered content generation tools. However, I had never tried them.

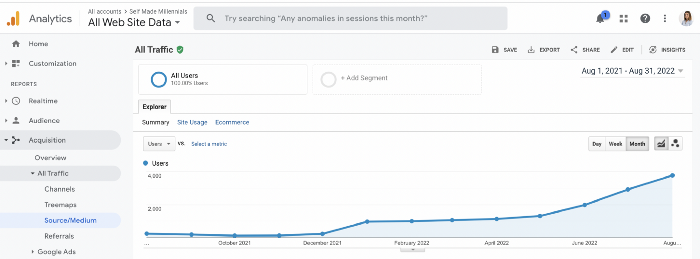

I used old-fashioned content creation methods to grow my website from 0 to 3,000 monthly views in one year.

Last year, I launched a niche website.

I do keyword research, analyze search intent and competitors' content, write an article, proofread it, and then optimize it.

This strategy is time-consuming.

But it yields results!

Here's proof from Google Analytics:

Proven strategies yield promising results.

To validate my assumptions and find new strategies, I run many experiments.

I tested an AI-powered content generator.

I used a tool to write this Google-optimized article about SEO for startups.

I wanted to analyze AI-generated content's Google performance.

Here are the outcomes of my test.

First, quality.

I dislike "meh" content. I expect articles to answer my questions. If not, I've wasted my time.

My essays usually include research, personal anecdotes, and the results I achieved.

AI-generated articles aren't as good because they lack individuality.

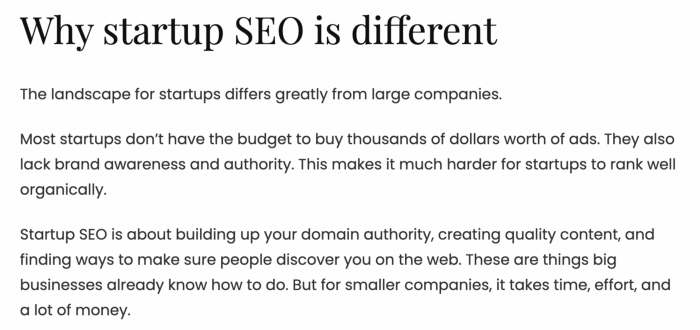

Read my AI-generated article about startup SEO to see what I mean.

It's dry and shallow, IMO.

It seems robotic.

I'd use quotes and personal experience to show how SEO for startups is different.

The AI-generated article paraphrases top-ranked articles on the topic.

It's readable but useless. Similar articles abound online. Why read it?

AI-generated content is low-quality.

Let me show you how this content ranks on Google.

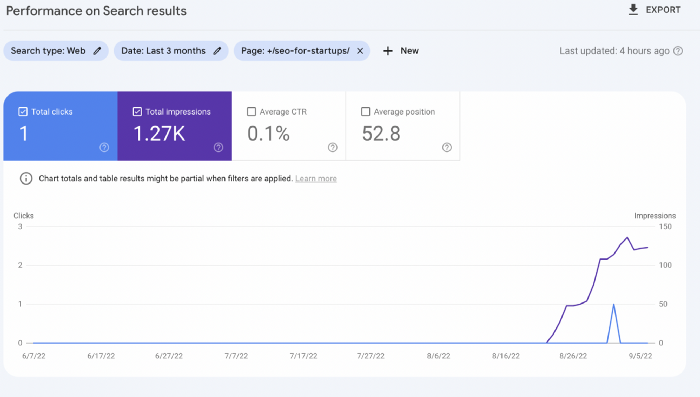

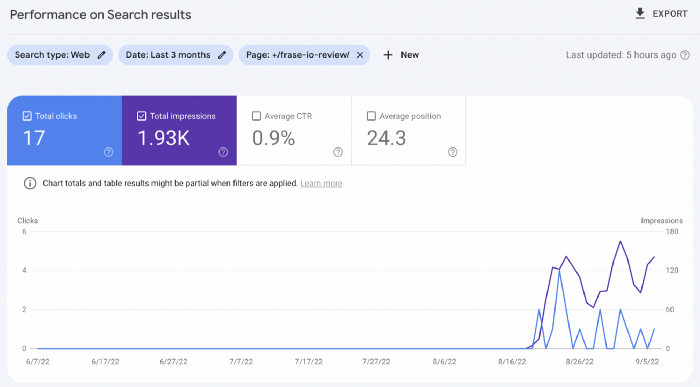

The Google Search Console report shows impressions, clicks, and average position.

Low numbers.

No one opens the 5th Google search result page to read the article. Too far!
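In Search Console, CTR is simply clicks divided by impressions, and an average position can be translated into a rough results page if you assume ten organic results per page. The sketch below illustrates both calculations. The helper names `ctr` and `results_page` are hypothetical, every number in it is made up for illustration (not the author's report data), and the ten-results-per-page layout is an assumption, since Google often uses continuous scrolling today.

```python
import math

def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate: clicks divided by impressions (0.0 if there were no impressions)."""
    if impressions == 0:
        return 0.0
    return clicks / impressions

def results_page(avg_position: float, results_per_page: int = 10) -> int:
    """Approximate results page for an average position, assuming a fixed number of results per page."""
    return math.ceil(avg_position / results_per_page)

# Hypothetical figures for illustration only; not taken from the author's report.
articles = {
    "AI-generated": {"clicks": 2, "impressions": 400, "avg_position": 45.3},
    "Human-written": {"clicks": 30, "impressions": 1200, "avg_position": 12.8},
}

for name, stats in articles.items():
    rate = ctr(stats["clicks"], stats["impressions"])
    page = results_page(stats["avg_position"])
    print(f"{name}: CTR {rate:.1%}, average position {stats['avg_position']} (about page {page})")
```

An average position in the 40s maps to roughly page 5 under this assumption, which is why so few searchers ever see such a result.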

You might argue that the article is new and its rankings will improve.

As a marketer, I doubt it.

This article is shorter and less comprehensive than top-ranking pages. It's unlikely to win because of this.

That's the harsh reality of AI-generated content.

Now let's compare how an article I wrote for both readers and SEO performs.

Both articles are fresh, but trends are emerging.

My article's CTR and average position are higher.

Unlike the AI-generated content, that piece took me a week of research and writing. My expert perspective and unique insights make it interesting to read.

Human-made.

In summary

No content generator can duplicate a human's tone, writing style, or creativity. Artificial content is always inferior.

Not "bad," but inferior.

Demand for content production tools will rise despite Google's efforts to eradicate thin content.

Most people won't spend hours writing link-building articles. It's too costly.

As guest and sponsored posts, artificial content will thrive.

Before accepting a new arrangement, content creators and website owners should consider this.

Guillaume Dumortier

3 years ago

Mastering the Art of Rhetoric: A Guide to Rhetorical Devices in Successful Headlines and Titles

Unleash the power of persuasion and captivate your audience with compelling headlines.

As the old adage goes, "You never get a second chance to make a first impression."

In the world of content creation and social ads, headlines and titles play a critical role in making that first impression.

A well-crafted headline can make the difference between an article being read or ignored, a video being clicked on or bypassed, or a product being purchased or passed over.

To make an impact with your headlines, mastering the art of rhetoric is essential. In this post, we'll explore various rhetorical devices and techniques that can help you create headlines that captivate your audience and drive engagement.

tl;dr: Headline Magician will help you craft the ultimate headlines powered by rhetorical devices

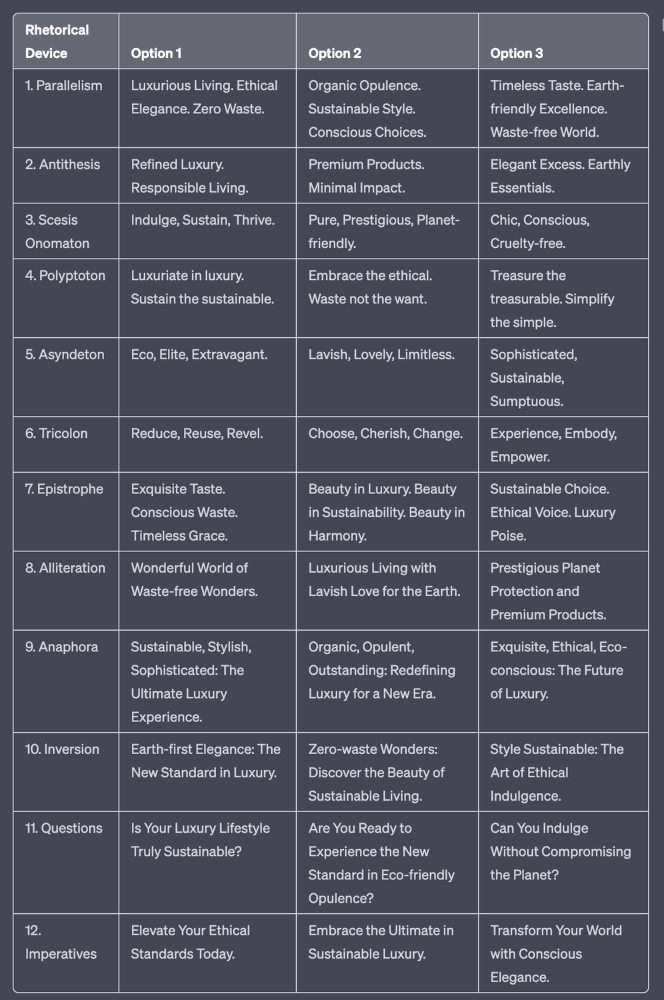

Example with a high-end luxury organic zero-waste skincare brand

✍️ The Power of Alliteration

Alliteration is the repetition of the same consonant sound at the beginning of words in close proximity. This rhetorical device lends itself well to headlines, as it creates a memorable, rhythmic quality that can catch a reader's attention.

By using alliteration, you can make your headlines more engaging and easier to remember.

Examples:

"Crafting Compelling Content: A Comprehensive Course"

"Mastering the Art of Memorable Marketing"

🔁 The Appeal of Anaphora

Anaphora is the repetition of a word or phrase at the beginning of successive clauses. This rhetorical device emphasizes a particular idea or theme, making it more memorable and persuasive.

In headlines, anaphora can be used to create a sense of unity and coherence, which can draw readers in and pique their interest.

Examples:

"Create, Curate, Captivate: Your Guide to Social Media Success"

"Innovation, Inspiration, and Insight: The Future of AI"

🔄 The Intrigue of Inversion

Inversion is a rhetorical device where the normal order of words is reversed, often to create an emphasis or achieve a specific effect.

In headlines, inversion can generate curiosity and surprise, compelling readers to explore further.

Examples:

"Beneath the Surface: A Deep Dive into Ocean Conservation"

"Beyond the Stars: The Quest for Extraterrestrial Life"

⚖️ The Persuasive Power of Parallelism

Parallelism is a rhetorical device that involves using similar grammatical structures or patterns to create a sense of balance and symmetry.

In headlines, parallelism can make your message more memorable and impactful, as it creates a pleasing rhythm and flow that can resonate with readers.

Examples:

"Eat Well, Live Well, Be Well: The Ultimate Guide to Wellness"

"Learn, Lead, and Launch: A Blueprint for Entrepreneurial Success"

⏭️ The Emphasis of Ellipsis

Ellipsis is the omission of words, typically indicated by three periods (...), which suggests that there is more to the story.

In headlines, ellipses can create a sense of mystery and intrigue, enticing readers to click and discover what lies behind the headline.

Examples:

"The Secret to Success... Revealed"

"Unlocking the Power of Your Mind... A Step-by-Step Guide"

🎭 The Drama of Hyperbole

Hyperbole is a rhetorical device that involves exaggeration for emphasis or effect.

In headlines, hyperbole can grab the reader's attention by making bold, provocative claims that stand out from the competition. Be cautious with hyperbole, however, as overuse or excessive exaggeration can damage your credibility.

Examples:

"The Ultimate Guide to Mastering Any Skill in Record Time"

"Discover the Revolutionary Technique That Will Transform Your Life"

❓The Curiosity of Questions

Posing questions in your headlines can be an effective way to pique the reader's curiosity and encourage engagement.

Questions compel the reader to seek answers, making them more likely to click on your content. Additionally, questions can create a sense of connection between the content creator and the audience, fostering a sense of dialogue and discussion.

Examples:

"Are You Making These Common Mistakes in Your Marketing Strategy?"

"What's the Secret to Unlocking Your Creative Potential?"

💥 The Impact of Imperatives

Imperatives are commands or instructions that urge the reader to take action. By using imperatives in your headlines, you can create a sense of urgency and importance, making your content more compelling and actionable.

Examples:

"Master Your Time Management Skills Today"

"Transform Your Business with These Innovative Strategies"

💢 The Emotion of Exclamations

Exclamations are powerful rhetorical devices that can evoke strong emotions and convey a sense of excitement or urgency.

Including exclamations in your headlines can make them more attention-grabbing and shareable, increasing the chances of your content being read and circulated.

Examples:

"Unlock Your True Potential: Find Your Passion and Thrive!"

"Experience the Adventure of a Lifetime: Travel the World on a Budget!"

🎀 The Effectiveness of Euphemisms

Euphemisms are polite or indirect expressions used in place of harsher, more direct language.

In headlines, euphemisms can make your message more appealing and relatable, helping to soften potentially controversial or sensitive topics.

Examples:

"Navigating the Challenges of Modern Parenting"

"Redefining Success in a Fast-Paced World"

⚡Antithesis: The Power of Opposites

Antithesis involves placing two opposite words side-by-side, emphasizing their contrasts. This device can create a sense of tension and intrigue in headlines.

Examples:

"Once a day. Every day"

"Soft on skin. Kill germs"

"Mega power. Mini size."

To utilize antithesis, identify two opposing concepts related to your content and present them in a balanced manner.

🎨 Scesis Onomaton: The Art of Verbless Copy

Scesis onomaton is a rhetorical device that involves writing verbless copy, which quickens the pace and adds emphasis.

Example:

"7 days. 7 dollars. Full access."

To use scesis onomaton, remove verbs and focus on the essential elements of your headline.

🌟 Polyptoton: The Charm of Shared Roots

Polyptoton is the repeated use of words that share the same root, weaving related words into memorable phrases.

Examples:

"Real bread isn't made in factories. It's baked in bakeries"

"Lose your knack for losing things."

To employ polyptoton, identify words with shared roots that are relevant to your content.

✨ Asyndeton: The Elegance of Omission

Asyndeton involves the intentional omission of conjunctions, adding crispness, conviction, and elegance to your headlines.

Examples:

"You, Me, Sushi?"

"All the latte art, none of the environmental impact."

To use asyndeton, eliminate conjunctions and focus on the core message of your headline.

🔮 Tricolon: The Magic of Threes

Tricolon is a rhetorical device that uses the power of three, creating memorable and impactful headlines.

Examples:

"Show it, say it, send it"

"Eat Well, Live Well, Be Well."

To use tricolon, craft a headline with three key elements that emphasize your content's main message.

🔔 Epistrophe: The Chime of Repetition

Epistrophe involves the repetition of words or phrases at the end of successive clauses, adding a chime to your headlines.

Examples:

"Catch it. Bin it. Kill it."

"Joint friendly. Climate friendly. Family friendly."

To employ epistrophe, repeat a key phrase or word at the end of each clause.


Christiaan Hetzner

3 years ago

Mystery of the $1 billion 'meme stock' that went to $400 billion in days

Who is AMTD Digital?

An unknown Hong Kong corporation joined the global megacaps worth over $500 billion on Tuesday.

The American Depository Share (ADS) with the ticker code HKD gapped at the open, soaring 25% over the previous closing price as trading began, before hitting an intraday high of $2,555.

At its peak, its market cap was almost $450 billion, more than Facebook parent Meta or Alibaba.

Yahoo Finance reported a daily volume of 350,500 shares, the lowest since the ADS began trading and much below the average of 1.2 million.

Despite losing a fifth of its value on Wednesday, it's still worth more than Toyota, Nike, McDonald's, or Walt Disney.

The company sold 16 million shares at $7.80 each in mid-July, giving it a $1 billion market valuation.

Why the boom?

That market cap seems unjustified.

According to SEC reports, its income-generating assets barely topped $400 million in March. Fortune's emails and calls went unanswered.

The website discloses little about the company's business model. Its one-minute business presentation video uses a Star Wars–like design to sell the company as a "one-stop digital solutions platform in Asia."

The prospectus filed with the SEC offers more detail.

AMTD Digital sells a kind of club membership for its "SpiderNet Ecosystems Solutions," which connects enterprises. This made up the bulk of its $25 million in annual revenue as of April 2021.

Pretax profits have exceeded revenue over the past three years thanks to fair value accounting gains on stakes in Appier, DayDayCook, WeDoctor, and five Asian fintechs.

AMTD Group, the company's parent, specializes in investment banking, hotel services, luxury education, and media and entertainment. AMTD IDEA, a $14 billion subsidiary, is also traded on the NYSE.

“Significant volatility”

Why AMTD Digital chose to list in the U.S. is unclear, since its share offering prospectus warned investors that it could be delisted under SEC rules.

Beijing's red tape prevents the Public Company Accounting Oversight Board (PCAOB), created by the Sarbanes-Oxley Act, from inspecting its Chinese auditor.

This frustrates investors in Chinese stocks. If the U.S. and China can't reach a deal, 261 Chinese companies worth $1.3 trillion could be delisted.

Calvin Choi left UBS to become AMTD Group's CEO.

His capitalist background and status as a Young Global Leader with the World Economic Forum don't stop him from praising China's Communist party or celebrating the "glory and dream of the Great Rejuvenation of the Chinese nation" a century after its creation.

Despite having an executive vice chairman with a record of battling corruption and ties to Carrie Lam, Beijing's previous proconsul in Hong Kong, Choi is apparently being targeted for a two-year industry ban by the city's securities regulator after an investor accused Choi of malfeasance.

"Some CMIG-funded initiatives produced money, but he didn't give us the proceeds," a corporate official told China's Caixin in October 2020. "We don't know if he misappropriated or lost some money."

A seismic anomaly

In fundamental analysis, where companies are valued based on future cash flows, AMTD Digital's mind-boggling market cap is a statistical aberration that should occur once every hundred years.

AMTD Digital doesn't know why it's so valuable. In a thank-you letter to new shareholders, it said it was confused by the stock's performance.

"Since its IPO, the company has seen significant ADS price volatility and active trading volume," it said Tuesday. "To our knowledge, there have been no important circumstances, events, or other matters since the IPO date."

Permabears awoke after the jump. Jim Chanos asked if "we're all going to ignore the $400 billion meme stock in the room," while Nate Anderson called AMTD Group "sketchy."

It happened the same day SEC Chair Gary Gensler marked the 20th anniversary of the Sarbanes-Oxley Act, which aimed to restore trust in America's financial markets after the Enron and WorldCom accounting fraud scandals.

The run-up revived unpleasant memories of Robinhood's decision to limit retail investors' ability to buy GameStop, a move many saw as protecting hedge funds that had bet against the meme stock.

"Why wasn't HKD's buy button removed? Because retail wasn't behind it?" tweeted Gensler on Tuesday. "Real stock fraud. You're worthless."

Isaiah McCall

3 years ago

There is a new global currency emerging, but it is not bitcoin.

America should avoid BRICS

Vladimir Putin has watched videos of Muammar Gaddafi's CIA-backed demise.

Gaddafi...

Thief.

Did you know Gaddafi wanted a gold-backed dinar for Africa? Because he considered our global financial system a Ponzi scheme, he wanted to stop trading oil in US dollars.

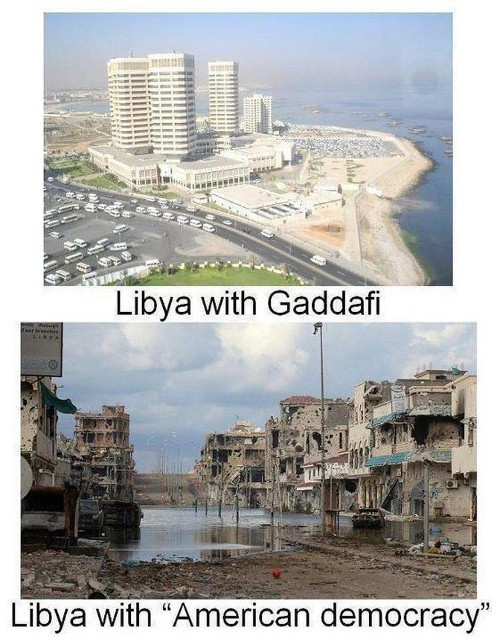

Also, Gaddafi's Libya had Africa's highest standard of living before it was "freed." Pictured:

Vladimir Putin is a nasty guy, but he had his reasons, such as resisting US imperialism and NATO's backing of Ukraine. Nobody tells you that. Sure.

The US dollar's corruption after 2008, its debasement through quantitative easing, and its loss of value are key factors. BRICS will replace the dollar.

BRICS isn't about bricks.

It's an economic bloc.

Brazil, Russia, India, China, and South Africa have cooperated for 14 years to fight U.S. hegemony with a new international currency: BRICS.

BRICS used to be mostly comical. Now Saudi Arabia, the second-largest oil hegemon, wants to join.

So what?

The New World Currency is BRICS

Russia was kicked out of G8 for its aggressiveness in Crimea in 2014.

It's now G7.

No biggie, said Putin. He said, and I quote, “Bon appétit.”

He was prepared. China, India, and Brazil lead the New World Order.

Together, they account for 40% of the world's population and, according to the IMF, could produce 50% of the world's GDP by 2030.

Here’s what Marcos Prado Troyjo, president of the BRICS New Development Bank, had to say earlier this year about no longer needing the US dollar: “We have implemented the mechanism of mutual settlements in rubles and rupees, and there is no need for our countries to use the dollar in mutual settlements. And today a similar mechanism of mutual settlements in rubles and yuan is being developed by China.”

Ick. That's the kind of nasty that has D.C. and NYC warmongers licking their chops for WW3.

Here's a lovely picture of BRICS to relax you:

If Saudi Arabia joins BRICS, as Crown Prince Mohammed bin Salman has signaled it wants to, a majority of the Middle East will have joined forces to construct a new world order not based on the US currency.

I'm not sure of the new acronym.

SBRICSS? CIRBSS? CRIBSS?

The Reason America Is Harvesting What It Sowed

BRICS began 14 years ago.

14 years ago, what occurred? Concentrate. It involved CDOs, bad subprime mortgages, and Wall Street quants crunching numbers.

2008 recession

When two nations trade, they usually do so in US dollars, not euros or gold.

So what happened in 2008, during an avoidable crisis caused by US banks' cupidity and ignorance?

Everyone WORLDWIDE felt the pain.

Mostly due to corporate America's avarice.

This should have been a warning that China and Russia had had enough of our BS. Like when France sent a battleship to America after Nixon scrapped the gold standard. The US was warned to shape up or be dethroned (or at least someone would try).

Nixon course-corrected in 1971. Kinda. He invented the PetroDollar.

Another BS system that unfairly favors America and may have pushed Russia, China, and Saudi Arabia toward BRICS.

The PetroDollar forces oil-exporting nations to trade in US dollars and invest in US Treasury bonds. Brilliant. Genius evil.

Our misdeeds are:

The USA uses the global reserve currency as a weapon in conflicts that are none of its concern.

Targeted nations abandon the dollar, and rightfully so, as do nations that depend on them for trade in vital resources.

The dollar's position as the world's reserve currency is in jeopardy, which could have disastrous economic effects.

Although we have sown our own doom, we act astonished. According to the Bible, whoever sows to please his sinful nature will reap destruction from that nature, whereas whoever sows to please the Spirit will reap eternal life from the Spirit.

Americans, even our leaders, lack caution and the capacity for delayed gratification. When our unsustainable systems fail, we double down. The 2008 bank bailouts were myopic, puerile, and another nail in the coffin of America's hegemony.

America has screwed everyone.

We're unpopular.

The BRICS's future

It's happened before.

Saddam Hussein started selling oil in euros in 2000, and the US invaded Iraq in 2003. The media has devalued the word conspiracy. The Iraq conspiracy.

There were no WMDs, but NYT journalists like Judy Miller drove Americans into a warmongering frenzy because Saddam would ruin the PetroDollar. Does anyone recall that this war spawned ISIS?

I think America has done good for the world, but you can make a convincing case that we're many people's villain.

Learn more in Confessions of an Economic Hitman, The Devil's Chessboard, or Tyranny of the Federal Reserve. Or ignore it. That's easier.

We, America, should extend an olive branch, ask for forgiveness, and learn from our faults, as the Tao Te Ching advises. Unlikely. Our population is apathetic and stupid, and our government is corrupt.

Argentina, Iran, Egypt, and Turkey have also indicated interest in joining BRICS. Members are also considering a gold-backed currency, which could become a new world reserve currency.

You should pay attention.

Thanks for reading!

Will Lockett

3 years ago

The Unlocking Of The Ultimate Clean Energy

The company seeking 24/7 ultra-powerful solar electricity.

We're rushing to adopt low-carbon energy to prevent a self-made doomsday. We're using solar, wind, and wave energy. These low-carbon sources aren't perfect. They consume large areas of land, causing habitat loss. They don't produce power reliably, necessitating large grid-level batteries, which are an environmental nightmare. We can and must do better. Longi, one of the world's top solar panel producers, is developing a new low-carbon energy source: space-based solar power. But how does it work? Why is it so environmentally harmonious? And how can Longi unlock it?

Space-based solar makes sense. Satellites above Medium Earth Orbit (MEO) enjoy 24/7 daylight. Outer space has no atmosphere or ozone layer to block the Sun's high-energy UV radiation. Solar panels can create more energy in space than on Earth due to these two factors. Solar panels in orbit can create 40 times more power than those on Earth, according to estimates.

How can we utilize this immense power? Launch a geostationary satellite with solar panels, then beam power to Earth. Such a technology could be our most eco-friendly energy source. (Better than fusion power!) How?

Solar panels create more energy in space, as I've said. Most of solar power's carbon footprint comes from manufacturing the panels and the grid batteries. This means a space-solar farm's carbon footprint (it doesn't need a battery because it's a constant power source) might be over 40 times smaller than a terrestrial one's. Combine that with carbon-neutral launch vehicles like Starship, and you have a low-carbon power source. Solar power already has one of the lowest emissions per kWh, at 6 g/kWh, so space-based solar could approach net-zero emissions.
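The "40 times smaller" claim is easy to check against the 6 g/kWh figure. This is a back-of-the-envelope sketch under the article's own assumption that emissions come mostly from manufacturing, so the same footprint is spread over 40 times more energy; launch emissions, transmission losses, and ground terminals are deliberately ignored, and the function name is hypothetical.

```python
# Figures quoted in the article.
TERRESTRIAL_SOLAR_G_PER_KWH = 6.0  # lifecycle emissions of ground solar, g CO2 per kWh
SPACE_OUTPUT_MULTIPLIER = 40.0     # estimated extra output of a panel in orbit

def space_solar_intensity(terrestrial_g_per_kwh: float, output_multiplier: float) -> float:
    """Rough g CO2 per kWh for an orbital panel.

    Assumes the footprint is dominated by manufacturing, so the same emissions are
    spread over `output_multiplier` times more energy. Launch, transmission losses,
    and ground terminals are ignored.
    """
    if output_multiplier <= 0:
        raise ValueError("output_multiplier must be positive")
    return terrestrial_g_per_kwh / output_multiplier

intensity = space_solar_intensity(TERRESTRIAL_SOLAR_G_PER_KWH, SPACE_OUTPUT_MULTIPLIER)
print(f"Space-based solar: about {intensity:.2f} g CO2/kWh")
```

Under these assumptions the result is about 0.15 g CO2/kWh, which is what "approaching net zero" means here; any real launch or transmission overhead would push the figure up.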

Space solar is versatile because it doesn't require enormous infrastructure. A space-solar farm could power New York and Dallas with the same efficiency, without cables. The satellite will transmit power to a nearby terminal. This allows an energy system to evolve and adapt as the society it powers changes. Building and maintaining infrastructure can be carbon-intensive, thus less infrastructure means less emissions.

Space-based solar doesn't destroy habitats, either. Solar and wind power can be engineered to reduce habitat loss, but they still harm ecosystems, which must be restored. Space solar requires almost no land, therefore it's easier on Mother Nature.

Space solar power could be the ultimate energy source. So why haven’t we done it yet?

Well, for two reasons: the cost of launch and the efficiency of wireless energy transmission.

Advances in rocket construction and reusable rocket technology have lowered orbital launch costs. In the early 2000s, the Space Shuttle cost $60,000 per kg launched into LEO, but a SpaceX Falcon 9 costs only $3,205 per kg. That's a 95% drop! Yet even at these low prices, launching a space-based solar farm is commercially questionable.
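The 95% figure follows directly from the two per-kilogram prices. A minimal sketch of the arithmetic, using only the numbers quoted above (the helper name is hypothetical):

```python
def percent_drop(old: float, new: float) -> float:
    """Percentage decrease from `old` to `new`."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (old - new) / old * 100

# Launch costs per kg to LEO, as quoted in the article.
SHUTTLE_USD_PER_KG = 60_000
FALCON9_USD_PER_KG = 3_205

drop = percent_drop(SHUTTLE_USD_PER_KG, FALCON9_USD_PER_KG)
ratio = SHUTTLE_USD_PER_KG / FALCON9_USD_PER_KG
print(f"Cost per kg fell by {drop:.1f}% (about {ratio:.1f}x cheaper)")
```

The exact drop is about 94.7%, which rounds to the 95% in the text; put another way, a kilogram to LEO is roughly 19 times cheaper.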

Energy transmission efficiency is the other half of the commercial-viability problem. Space-based solar farms must be in geostationary orbit, 22,300 miles above Earth's surface, to get 24/7 daylight. That's a long way to transmit energy wirelessly, and most laser and microwave systems are below 20% efficient.
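To see why transmission efficiency matters so much, multiply the article's 40x collection advantage by the fraction of energy the wireless link actually delivers. This rough sketch assumes every other loss (conversion at the ground terminal, pointing errors) is zero; 20% is the typical ceiling cited above, 98% is the figure the article later gives for Xidian University's system, and 50% is just a hypothetical midpoint. The function name is also hypothetical.

```python
def delivered_multiplier(collection_multiplier: float, link_efficiency: float) -> float:
    """Energy reaching the ground from one orbital panel, relative to one ground panel.

    `collection_multiplier`: how much more an orbital panel collects (the article's estimate is 40).
    `link_efficiency`: fraction of that energy the wireless link delivers (0 < value <= 1).
    Other losses (conversion at the ground terminal, pointing errors) are ignored.
    """
    if not 0 < link_efficiency <= 1:
        raise ValueError("link_efficiency must be in (0, 1]")
    return collection_multiplier * link_efficiency

for efficiency in (0.20, 0.50, 0.98):
    advantage = delivered_multiplier(40, efficiency)
    print(f"Link efficiency {efficiency:.0%}: about {advantage:.1f}x a ground panel")
```

At 20% efficiency, the 40x advantage shrinks to about 8x before launch costs are even counted, which is why the efficiency of the link decides whether space solar pays off.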

Space-based solar power is uneconomical due to low efficiency and high deployment costs.

Longi wants to create this ultimate power. But how?

They'll send solar panels into space to develop space-based solar power that can be beamed to Earth. This mission will help them design solar panels tough enough for space while remaining efficient.

Longi is a Chinese company, and China's space program and universities are developing space-based solar power and seeking commercial partners. Xidian University has built a 98%-efficient microwave-based wireless energy transmission system for space-based solar power. The Long March 5B is China's super-cheap (but not carbon-offset) launch vehicle.

Longi fills the gap. They have the commercial know-how and ability to build solar satellites and terrestrial terminals at scale. Universities and the Chinese government have transmission technology and low-cost launch vehicles to launch this technology.

It may take a decade to develop and refine this energy solution. This could spark a clean energy revolution. Once operational, Longi and the Chinese government could offer the world a flexible, environmentally friendly, rapidly deployable energy source.

Should the world adopt this technology and let China control its energy? I'm not very political, so you decide. This seems to be the beginning of tapping into this planet-saving energy source. Forget fusion reactors. Carbon-neutral energy is coming soon.