Apple AR/VR heaset

Apple is said to have opted for a standalone AR/VR headset over a more powerful tethered model.

It has had a tumultuous history.

Apple's alleged mixed reality headset appears to be the worst-kept secret in tech, and a fresh story from The Information is jam-packed with details regarding the device's rocky development.

Apple's decision to use a separate headgear is one of the most notable aspects of the story. Apple had yet to determine whether to pursue a more powerful VR headset that would be linked with a base station or a standalone headset. According to The Information, Apple officials chose the standalone product over the version with the base station, which had a processor that later arrived as the M1 Ultra. In 2020, Bloomberg published similar information.

That decision appears to have had a long-term impact on the headset's development. "The device's many processors had already been in development for several years by the time the choice was taken, making it impossible to go back to the drawing board and construct, say, a single chip to handle all the headset's responsibilities," The Information stated. "Other difficulties, such as putting 14 cameras on the headset, have given hardware and algorithm engineers stress."

Jony Ive remained to consult on the project's design even after his official departure from Apple, according to the story. Ive "prefers" a wearable battery, such as that offered by Magic Leap. Other prototypes, according to The Information, placed the battery in the headset's headband, and it's unknown which will be used in the final design.

The headset was purportedly shown to Apple's board of directors last week, indicating that a public unveiling is imminent. However, it is possible that it will not be introduced until later this year, and it may not hit shop shelves until 2023, so we may have to wait a bit to try it.

For further down the line, Apple is working on a pair of AR spectacles that appear like Ray-Ban wayfarer sunglasses, but according to The Information, they're "still several years away from release." (I'm interested to see how they compare to Meta and Ray-Bans' true wayfarer-style glasses.)

More on Technology

Nitin Sharma

3 years ago

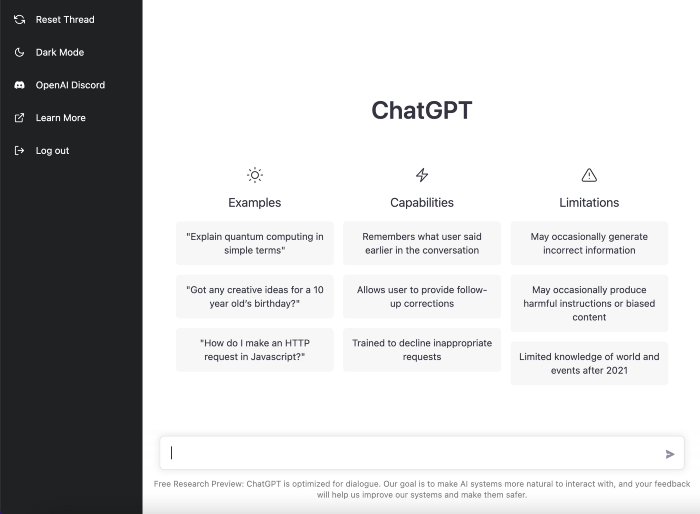

The result of my side-hustle blog post with ChatGPT is remarkable.

It's free now.

Most content writers—including me—write about side hustles because they do well.

I wrote several articles on a profitable programming side hustle.

Later, I learnt about AI content-generating platforms like Jasper.ai and Copy.ai, which I thought were terrible because they required subscriptions and had bad content. Even I dislike automatic online posting.

Since ChatGPT is becoming more popular, I tried it. It's free now.

Note: I wasn’t compensated to write this piece by this tool. In other words, I don’t receive any payment from their founders. There is no affiliate link, and right now it is free to use. Also, ChatGPT can do a lot more than what I ever did.

Begin.

OpenAI account registration requires visiting the website (if you don’t have one). Just write anything into the chatbot and get a response.

First, I wrote about the top 10 2023 side businesses.

This came in seconds.

2023 is shaping up to be a year of growth and opportunity for entrepreneurs and side hustlers. With the rise of remote work and the growing gig economy, there are many income streams to consider launching in the coming year. Here are the top 10 income streams to launch in 2023:

Virtual Assistant — The demand for virtual assistants is on the rise as more businesses move online. As a virtual assistant, you can offer administrative, technical, or creative services to clients remotely.

Online Tutoring — If you have expertise in a particular subject, consider becoming an online tutor. There are many platforms that connect tutors with students looking for help with their studies.

Freelance Writing — If you have a knack for writing, consider becoming a freelance writer. You can offer your services to businesses, bloggers, and individuals looking for content for their websites or social media platforms.

Graphic Design — If you have a talent for design, consider starting a graphic design side hustle. You can create logos, banners, and other visual content for businesses and individuals.

Social Media Management — Many businesses are looking for help with managing their social media accounts. As a social media manager, you can help businesses create and execute social media strategies to reach their target audience.

Dropshipping — Dropshipping is a business model where you sell products without actually holding any inventory. You can partner with suppliers and ship the products directly to the customer on behalf of the business.

Pet Sitting — If you love animals, consider starting a pet-sitting side hustle. You can offer your services to pet owners who need someone to take care of their pets while they are away.

Home Cleaning — With busy schedules, many people are looking for help with cleaning their homes. As a home cleaning service provider, you can offer your services to help keep homes tidy and organized.

Event Planning — If you have a talent for organization and planning, consider starting an event planning side hustle. You can offer your services to help plan and execute events for businesses and individuals.

Online Teaching — With the rise of online education, there is a growing demand for online teachers. If you have expertise in a particular subject, consider becoming an online teacher and offer your services to students looking for personalized instruction.

In conclusion, 2023 is shaping up to be a great year for launching new income streams. Whether you are looking to start a virtual assistant business, become an online tutor, or offer home cleaning services, there are many opportunities to turn your skills and expertise into a profitable side hustle.

Better than most online side hustle posts, right?

Write about the top 10 programming side hustles to start in 2023.

I got this.

If you are a programmer looking to start a side hustle, 2023 is the perfect time to launch your own business. With the growing demand for software and app development, there are many opportunities for programmers to turn their skills into a profitable side hustle. Here are the top 10 programming side hustles to start in 2023:

App Development — With the rise of smartphones and tablets, there is a growing demand for app development. You can offer your services to businesses and individuals looking to create custom apps for their needs.

Website Development — Many businesses and individuals are looking for help with creating and maintaining their websites. As a website developer, you can offer your services to help design, build, and manage websites for your clients.

Software Development — If you have expertise in a particular programming language, consider starting a software development side hustle. You can offer your services to businesses and individuals looking to create custom software solutions for their needs.

Game Development — The gaming industry is thriving, and there is a growing demand for game development. If you have experience in game development, you can offer your services to businesses and individuals looking to create custom games.

Data Analysis — With the rise of big data, there is a growing demand for data analysts. As a data analyst, you can offer your services to businesses and individuals looking to make sense of their data and gain insights from it.

Machine Learning — Machine learning is a rapidly growing field, and there is a growing demand for machine learning experts. If you have experience in machine learning, you can offer your services to businesses and individuals looking to develop custom machine learning solutions.

Cloud Computing — With the rise of cloud computing, there is a growing demand for cloud experts. If you have experience in cloud computing, you can offer your services to businesses and individuals looking to migrate their applications and data to the cloud.

Blockchain Development — Blockchain technology is gaining popularity, and there is a growing demand for blockchain developers. If you have experience in blockchain development, you can offer your services to businesses and individuals looking to develop custom blockchain solutions.

Artificial Intelligence — Artificial intelligence is a rapidly growing field, and there is a growing demand for AI experts. If you have experience in artificial intelligence, you can offer your services to businesses and individuals looking to develop custom AI solutions.

Virtual Reality — Virtual reality is an exciting and emerging technology, and there is a growing demand for VR developers. If you have experience in VR development, you can offer your services to businesses and individuals looking to create custom VR experiences.

In conclusion, 2023 is a great time to start a programming side hustle. Whether you are interested in app development, website development, or machine learning, there are many opportunities for programmers to turn their skills into a profitable business.

These two blogs show that AI can simplify blog authoring.

I asked some tough programming questions, and most were suitable.

The content may occasionally not be what we want, but it will almost always be very helpful to you.

Enjoy.

Gareth Willey

3 years ago

I've had these five apps on my phone for a long time.

TOP APPS

Who survives spring cleaning?

Relax. Notion is off-limits. This topic is popular.

(I wrote about it 2 years ago, before everyone else did.) So).

These apps are probably new to you. I hope you find a new phone app after reading this.

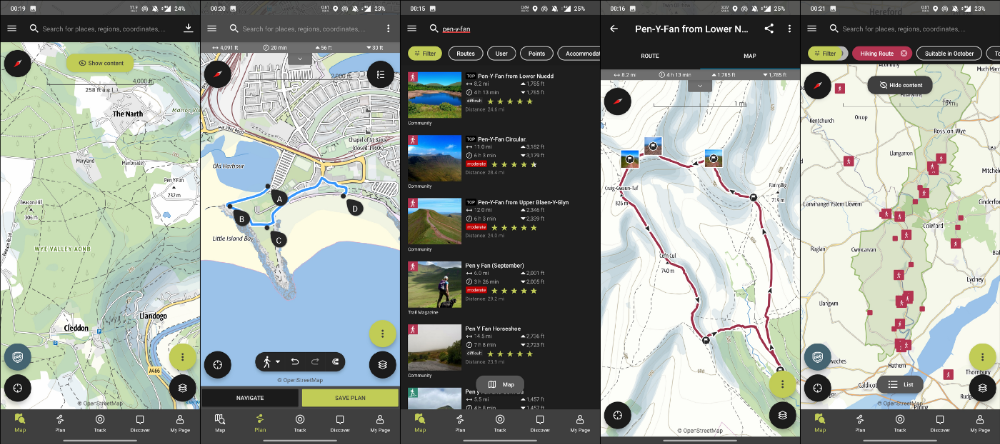

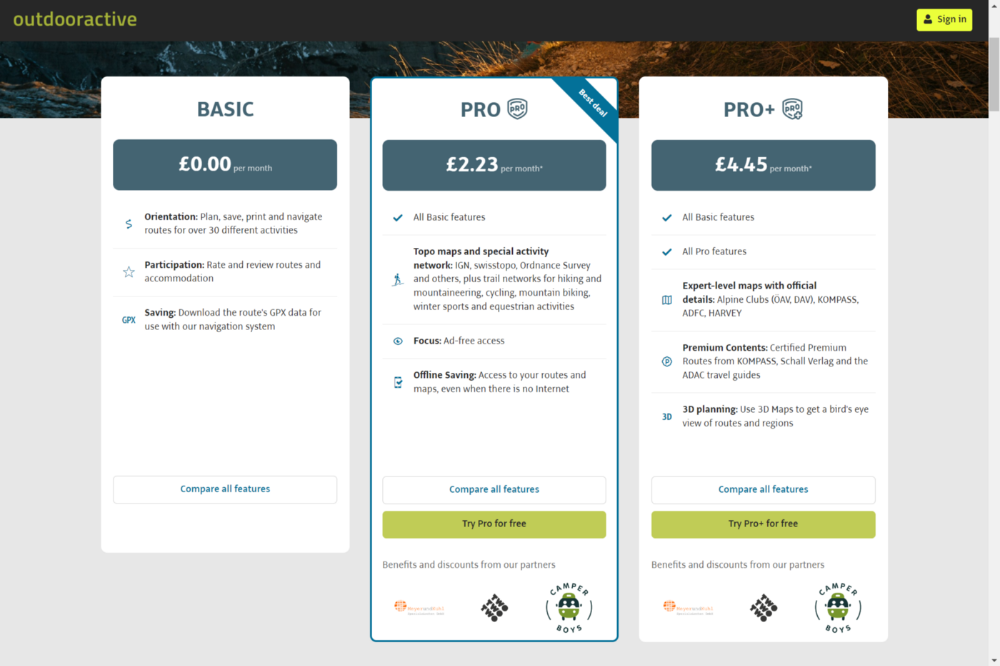

Outdooractive

ViewRanger is Google Maps for outdoor enthusiasts.

This app has been so important to me as a freedom-loving long-distance walker and hiker.

This app shows nearby trails and right-of-ways on top of an Open Street Map.

Helpful detail and data. Any route's distance,

You can download and follow tons of routes planned by app users.

This has helped me find new routes and places a fellow explorer has tried.

Free with non-intrusive ads. Years passed before I subscribed. Pro costs £2.23/month.

This app is for outdoor lovers.

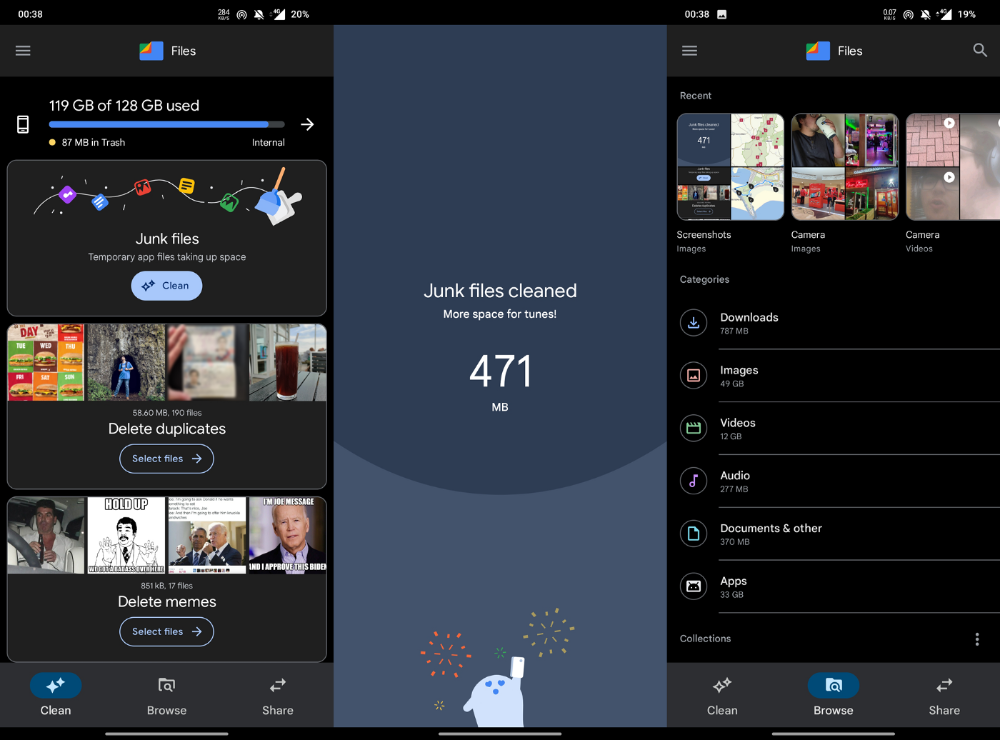

Google Files

New phones come with bloatware. These rushed apps are frustrating.

We must replace these apps. 2017 was Google's year.

Files is a file manager. It's quick, innovative, and clean. They've given people what they want.

It's easy to organize files, clear space, and clear cache.

I recommend Gallery by Google as a gallery app alternative. It's quick and easy.

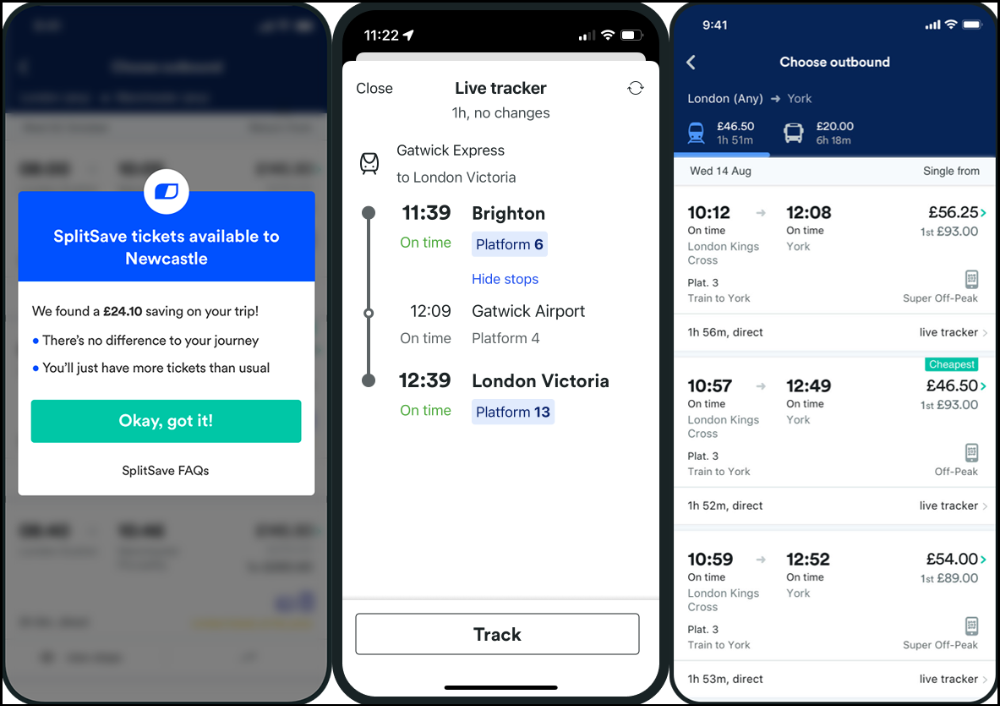

Trainline

App for trains, buses, and coaches.

I've used this app for years. It did the basics well when I first used it.

Since then, it's improved. It's constantly adding features to make traveling easier and less stressful.

Split-ticketing helps me save hundreds a year on train fares. This app is only available in the UK and Europe.

This service doesn't link to a third-party site. Their app handles everything.

Not all train and coach companies use this app. All the big names are there, though.

Here's more on the app.

Battlefield: Mobile

Play Store has 478,000 games. Few can turn my phone into a console.

Call of Duty Mobile and Asphalt 8/9 are examples.

Asphalt's loot boxes and ads make it unplayable. Call of Duty opens with a few ads. Close them to play without hassle.

This game uses all your phone's features to provide a high-quality, seamless experience. If my internet connection is good, I never experience lag or glitches.

The gameplay is energizing and intense, just like on consoles. Sometimes I'm too involved. I've thrown my phone in anger. I'm totally absorbed.

Customizability is my favorite. Since phones have limited screen space, we should only have the buttons we need, placed conveniently.

Size, opacity, and position are modifiable. Adjust audio, graphics, and textures. It's customizable.

This game has been on my phone for three years. It began well and has gotten better. When I think the creators can't do more, they do.

If you play, read my tips for winning a Battle Royale.

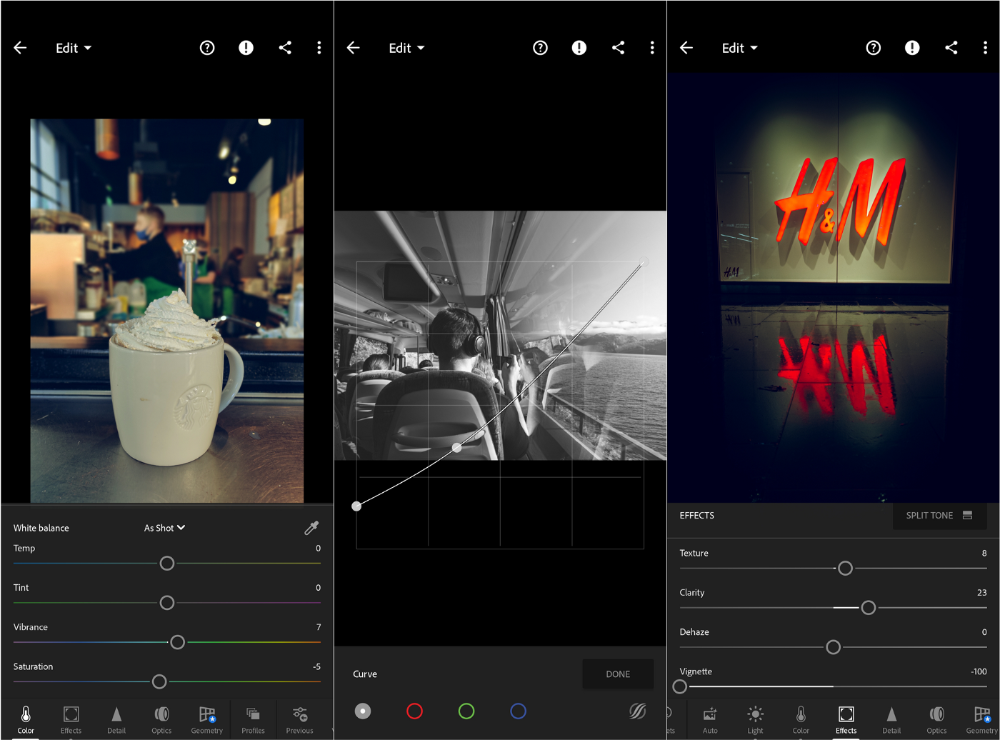

Lightroom

As a photographer, I believe your best camera is on you. The phone.

2017 was a big year for this app. I've tried many photo-editing apps since then. This always wins.

The app is dull. I've never seen better photo editing on a phone.

Adjusting settings and sliders doesn't damage or compress photos. It's detailed.

This is important for phone photos, which are lower quality than professional ones.

Some tools are behind a £4.49/month paywall. Adobe must charge a subscription fee instead of selling licenses. (I'm still bitter about Creative Cloud's price)

Snapseed is my pick. Lightroom is where I do basic editing before moving to Snapseed. Snapseed review:

These apps are great. They cover basic and complex editing needs while traveling.

Final Reflections

I hope you downloaded one of these. Share your favorite apps. These apps are scarce.

Waleed Rikab, PhD

3 years ago

The Enablement of Fraud and Misinformation by Generative AI What You Should Understand

Recent investigations have shown that generative AI can boost hackers and misinformation spreaders.

Since its inception in late November 2022, OpenAI's ChatGPT has entertained and assisted many online users in writing, coding, task automation, and linguistic translation. Given this versatility, it is maybe unsurprising but nonetheless regrettable that fraudsters and mis-, dis-, and malinformation (MDM) spreaders are also considering ChatGPT and related AI models to streamline and improve their operations.

Malign actors may benefit from ChatGPT, according to a WithSecure research. ChatGPT promises to elevate unlawful operations across many attack channels. ChatGPT can automate spear phishing attacks that deceive corporate victims into reading emails from trusted parties. Malware, extortion, and illicit fund transfers can result from such access.

ChatGPT's ability to simulate a desired writing style makes spear phishing emails look more genuine, especially for international actors who don't speak English (or other languages like Spanish and French).

This technique could let Russian, North Korean, and Iranian state-backed hackers conduct more convincing social engineering and election intervention in the US. ChatGPT can also create several campaigns and various phony online personas to promote them, making such attacks successful through volume or variation. Additionally, image-generating AI algorithms and other developing techniques can help these efforts deceive potential victims.

Hackers are discussing using ChatGPT to install malware and steal data, according to a Check Point research. Though ChatGPT's scripts are well-known in the cyber security business, they can assist amateur actors with little technical understanding into the field and possibly develop their hacking and social engineering skills through repeated use.

Additionally, ChatGPT's hacking suggestions may change. As a writer recently indicated, ChatGPT's ability to blend textual and code-based writing might be a game-changer, allowing the injection of innocent content that would subsequently turn out to be a malicious script into targeted systems. These new AI-powered writing- and code-generation abilities allow for unique cyber attacks, regardless of viability.

OpenAI fears ChatGPT usage. OpenAI, Georgetown University's Center for Security and Emerging Technology, and Stanford's Internet Observatory wrote a paper on how AI language models could enhance nation state-backed influence operations. As a last resort, the authors consider polluting the internet with radioactive or misleading data to ensure that AI language models produce outputs that other language models can identify as AI-generated. However, the authors of this paper seem unaware that their "solution" might cause much worse MDM difficulties.

Literally False News

The public argument about ChatGPTs content-generation has focused on originality, bias, and academic honesty, but broader global issues are at stake. ChatGPT can influence public opinion, troll individuals, and interfere in local and national elections by creating and automating enormous amounts of social media material for specified audiences.

ChatGPT's capacity to generate textual and code output is crucial. ChatGPT can write Python scripts for social media bots and give diverse content for repeated posts. The tool's sophistication makes it irrelevant to one's language skills, especially English, when writing MDM propaganda.

I ordered ChatGPT to write a news piece in the style of big US publications declaring that Ukraine is on the verge of defeat in its fight against Russia due to corruption, desertion, and exhaustion in its army. I also gave it a fake reporter's byline and an unidentified NATO source's remark. The outcome appears convincing:

Worse, terrible performers can modify this piece to make it more credible. They can edit the general's name or add facts about current wars. Furthermore, such actors can create many versions of this report in different forms and distribute them separately, boosting its impact.

In this example, ChatGPT produced a news story regarding (fictional) greater moviegoer fatality rates:

Editing this example makes it more plausible. Dr. Jane Smith, the putative author of the medical report, might be replaced with a real-life medical person or a real victim of this supposed medical hazard.

Can deceptive texts be found? Detecting AI text is behind AI advancements. Minor AI-generated text alterations can upset these technologies.

Some OpenAI individuals have proposed covert methods to watermark AI-generated literature to prevent its abuse. AI models would create information that appears normal to humans but would follow a cryptographic formula that would warn other machines that it was AI-made. However, security experts are cautious since manually altering the content interrupts machine and human detection of AI-generated material.

How to Prepare

Cyber security and IT workers can research and use generative AI models to fight spear fishing and extortion. Governments may also launch MDM-defence projects.

In election cycles and global crises, regular people may be the most vulnerable to AI-produced deceit. Until regulation or subsequent technical advances, individuals must recognize exposure to AI-generated fraud, dating scams, other MDM activities.

A three-step verification method of new material in suspicious emails or social media posts can help identify AI content and manipulation. This three-step approach asks about the information's distribution platform (is it reliable? ), author (is the reader familiar with them? ), and plausibility given one's prior knowledge of the topic.

Consider a report by a trusted journalist that makes shocking statements in their typical manner. AI-powered fake news may be released on an unexpected platform, such as a newly created Facebook profile. However, if it links to a known media source, it is more likely to be real.

Though hard and subjective, this verification method may be the only barrier against manipulation for now.

AI language models:

How to Recognize an AI-Generated Article ChatGPT, the popular AI-powered chatbot, can and likely does generate medium.com-style articles.

AI-Generated Text Detectors Fail. Do This. Online tools claim to detect ChatGPT output. Even with superior programming, I tested some of these tools. pub

Why Original Writers Matter Despite AI Language Models Creative writers may never be threatened by AI language models.

You might also like

Vishal Chawla

4 years ago

5 Bored Apes borrowed to claim $1.1 million in APE tokens

Takeaway

Unknown user took advantage of the ApeCoin airdrop to earn $1.1 million.

He used a flash loan to borrow five BAYC NFTs, claim the airdrop, and repay the NFTs.

Yuga Labs, the creators of BAYC, airdropped ApeCoin (APE) to anyone who owns one of their NFTs yesterday.

For the Bored Ape Yacht Club and Mutant Ape Yacht Club collections, the team allocated 150 million tokens, or 15% of the total ApeCoin supply, worth over $800 million. Each BAYC holder received 10,094 tokens worth $80,000 to $200,000.

But someone managed to claim the airdrop using NFTs they didn't own. They used the airdrop's specific features to carry it out. And it worked, earning them $1.1 million in ApeCoin.

The trick was that the ApeCoin airdrop wasn't based on who owned which Bored Ape at a given time. Instead, anyone with a Bored Ape at the time of the airdrop could claim it. So if you gave someone your Bored Ape and you hadn't claimed your tokens, they could claim them.

The person only needed to get hold of some Bored Apes that hadn't had their tokens claimed to claim the airdrop. They could be returned immediately.

So, what happened?

The person found a vault with five Bored Ape NFTs that hadn't been used to claim the airdrop.

A vault tokenizes an NFT or a group of NFTs. You put a bunch of NFTs in a vault and make a token. This token can then be staked for rewards or sold (representing part of the value of the collection of NFTs). Anyone with enough tokens can exchange them for NFTs.

This vault uses the NFTX protocol. In total, it contained five Bored Apes: #7594, #8214, #9915, #8167, and #4755. Nobody had claimed the airdrop because the NFTs were locked up in the vault and not controlled by anyone.

The person wanted to unlock the NFTs to claim the airdrop but didn't want to buy them outright s o they used a flash loan, a common tool for large DeFi hacks. Flash loans are a low-cost way to borrow large amounts of crypto that are repaid in the same transaction and block (meaning that the funds are never at risk of not being repaid).

With a flash loan of under $300,000 they bought a Bored Ape on NFT marketplace OpenSea. A large amount of the vault's token was then purchased, allowing them to redeem the five NFTs. The NFTs were used to claim the airdrop, before being returned, the tokens sold back, and the loan repaid.

During this process, they claimed 60,564 ApeCoin airdrops. They then sold them on Uniswap for 399 ETH ($1.1 million). Then they returned the Bored Ape NFT used as collateral to the same NFTX vault.

Attack or arbitrage?

However, security firm BlockSecTeam disagreed with many social media commentators. A flaw in the airdrop-claiming mechanism was exploited, it said.

According to BlockSecTeam's analysis, the user took advantage of a "vulnerability" in the airdrop.

"We suspect a hack due to a flaw in the airdrop mechanism. The attacker exploited this vulnerability to profit from the airdrop claim" said BlockSecTeam.

For example, the airdrop could have taken into account how long a person owned the NFT before claiming the reward.

Because Yuga Labs didn't take a snapshot, anyone could buy the NFT in real time and claim it. This is probably why BAYC sales exploded so soon after the airdrop announcement.

Ashraful Islam

4 years ago

Clean API Call With React Hooks

| Photo by Juanjo Jaramillo on Unsplash |

Calling APIs is the most common thing to do in any modern web application. When it comes to talking with an API then most of the time we need to do a lot of repetitive things like getting data from an API call, handling the success or error case, and so on.

When calling tens of hundreds of API calls we always have to do those tedious tasks. We can handle those things efficiently by putting a higher level of abstraction over those barebone API calls, whereas in some small applications, sometimes we don’t even care.

The problem comes when we start adding new features on top of the existing features without handling the API calls in an efficient and reusable manner. In that case for all of those API calls related repetitions, we end up with a lot of repetitive code across the whole application.

In React, we have different approaches for calling an API. Nowadays mostly we use React hooks. With React hooks, it’s possible to handle API calls in a very clean and consistent way throughout the application in spite of whatever the application size is. So let’s see how we can make a clean and reusable API calling layer using React hooks for a simple web application.

I’m using a code sandbox for this blog which you can get here.

import "./styles.css";

import React, { useEffect, useState } from "react";

import axios from "axios";

export default function App() {

const [posts, setPosts] = useState(null);

const [error, setError] = useState("");

const [loading, setLoading] = useState(false);

useEffect(() => {

handlePosts();

}, []);

const handlePosts = async () => {

setLoading(true);

try {

const result = await axios.get(

"https://jsonplaceholder.typicode.com/posts"

);

setPosts(result.data);

} catch (err) {

setError(err.message || "Unexpected Error!");

} finally {

setLoading(false);

}

};

return (

<div className="App">

<div>

<h1>Posts</h1>

{loading && <p>Posts are loading!</p>}

{error && <p>{error}</p>}

<ul>

{posts?.map((post) => (

<li key={post.id}>{post.title}</li>

))}

</ul>

</div>

</div>

);

}

I know the example above isn’t the best code but at least it’s working and it’s valid code. I will try to improve that later. For now, we can just focus on the bare minimum things for calling an API.

Here, you can try to get posts data from JsonPlaceholer. Those are the most common steps we follow for calling an API like requesting data, handling loading, success, and error cases.

If we try to call another API from the same component then how that would gonna look? Let’s see.

500: Internal Server Error

Now it’s going insane! For calling two simple APIs we’ve done a lot of duplication. On a top-level view, the component is doing nothing but just making two GET requests and handling the success and error cases. For each request, it’s maintaining three states which will periodically increase later if we’ve more calls.

Let’s refactor to make the code more reusable with fewer repetitions.

Step 1: Create a Hook for the Redundant API Request Codes

Most of the repetitions we have done so far are about requesting data, handing the async things, handling errors, success, and loading states. How about encapsulating those things inside a hook?

The only unique things we are doing inside handleComments and handlePosts are calling different endpoints. The rest of the things are pretty much the same. So we can create a hook that will handle the redundant works for us and from outside we’ll let it know which API to call.

500: Internal Server Error

Here, this request function is identical to what we were doing on the handlePosts and handleComments. The only difference is, it’s calling an async function apiFunc which we will provide as a parameter with this hook. This apiFunc is the only independent thing among any of the API calls we need.

With hooks in action, let’s change our old codes in App component, like this:

500: Internal Server Error

How about the current code? Isn’t it beautiful without any repetitions and duplicate API call handling things?

Let’s continue our journey from the current code. We can make App component more elegant. Now it knows a lot of details about the underlying library for the API call. It shouldn’t know that. So, here’s the next step…

Step 2: One Component Should Take Just One Responsibility

Our App component knows too much about the API calling mechanism. Its responsibility should just request the data. How the data will be requested under the hood, it shouldn’t care about that.

We will extract the API client-related codes from the App component. Also, we will group all the API request-related codes based on the API resource. Now, this is our API client:

import axios from "axios";

const apiClient = axios.create({

// Later read this URL from an environment variable

baseURL: "https://jsonplaceholder.typicode.com"

});

export default apiClient;

All API calls for comments resource will be in the following file:

import client from "./client";

const getComments = () => client.get("/comments");

export default {

getComments

};

All API calls for posts resource are placed in the following file:

import client from "./client";

const getPosts = () => client.get("/posts");

export default {

getPosts

};

Finally, the App component looks like the following:

import "./styles.css";

import React, { useEffect } from "react";

import commentsApi from "./api/comments";

import postsApi from "./api/posts";

import useApi from "./hooks/useApi";

export default function App() {

const getPostsApi = useApi(postsApi.getPosts);

const getCommentsApi = useApi(commentsApi.getComments);

useEffect(() => {

getPostsApi.request();

getCommentsApi.request();

}, []);

return (

<div className="App">

{/* Post List */}

<div>

<h1>Posts</h1>

{getPostsApi.loading && <p>Posts are loading!</p>}

{getPostsApi.error && <p>{getPostsApi.error}</p>}

<ul>

{getPostsApi.data?.map((post) => (

<li key={post.id}>{post.title}</li>

))}

</ul>

</div>

{/* Comment List */}

<div>

<h1>Comments</h1>

{getCommentsApi.loading && <p>Comments are loading!</p>}

{getCommentsApi.error && <p>{getCommentsApi.error}</p>}

<ul>

{getCommentsApi.data?.map((comment) => (

<li key={comment.id}>{comment.name}</li>

))}

</ul>

</div>

</div>

);

}

Now it doesn’t know anything about how the APIs get called. Tomorrow if we want to change the API calling library from axios to fetch or anything else, our App component code will not get affected. We can just change the codes form client.js This is the beauty of abstraction.

Apart from the abstraction of API calls, Appcomponent isn’t right the place to show the list of the posts and comments. It’s a high-level component. It shouldn’t handle such low-level data interpolation things.

So we should move this data display-related things to another low-level component. Here I placed those directly in the App component just for the demonstration purpose and not to distract with component composition-related things.

Final Thoughts

The React library gives the flexibility for using any kind of third-party library based on the application’s needs. As it doesn’t have any predefined architecture so different teams/developers adopted different approaches to developing applications with React. There’s nothing good or bad. We choose the development practice based on our needs/choices. One thing that is there beyond any choices is writing clean and maintainable codes.

Michael Salim

3 years ago

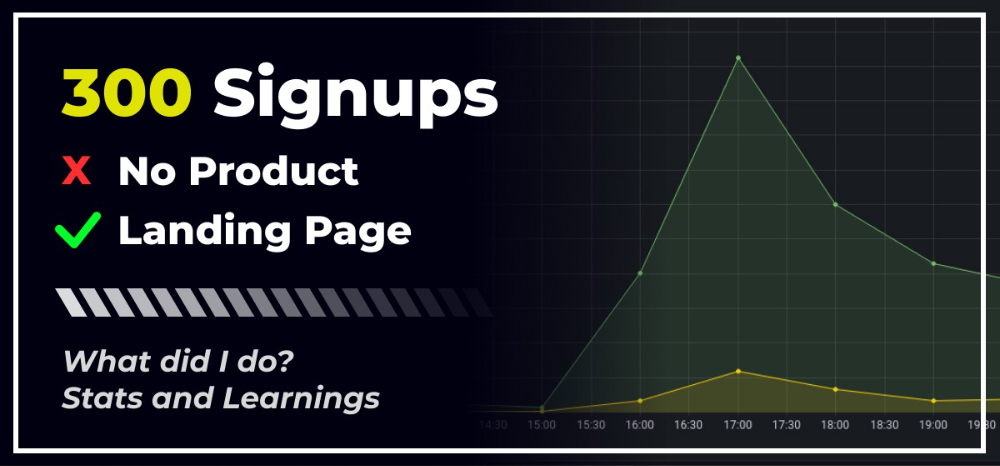

300 Signups, 1 Landing Page, 0 Products

I placed a link on HackerNews and got 300 signups in a week. This post explains what happened.

Product Concept

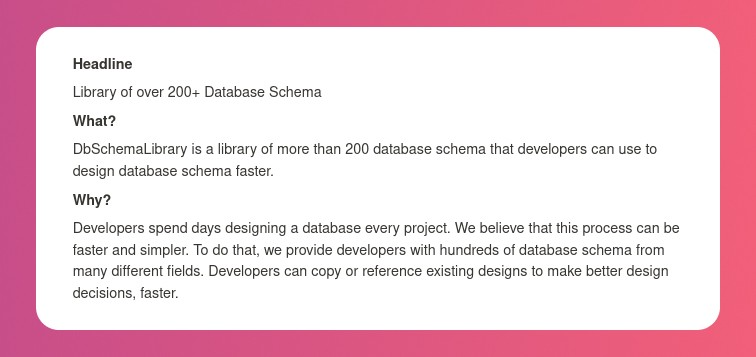

The product is DbSchemaLibrary. A library of Database Schema.

I'm not sure where this idea originated from. Very fast. Build fast, fail fast, test many ideas, and one will be a hit. I tried it. Let's try it anyway, even though it'll probably fail. I finished The Lean Startup book and wanted to use it.

Database job bores me. Important! I get drowsy working on it. Someone must do it. I remember this happening once. I needed examples at the time. Something similar to Recall (my other project) that I can copy — or at least use as a reference.

Frequently googled. Many tabs open. The results were useless. I raised my hand and agreed to construct the database myself.

It resurfaced. I decided to do something.

Due Diligence

Lean Startup emphasizes validated learning. Everything the startup does should result in learning. I may build something nobody wants otherwise. That's what happened to Recall.

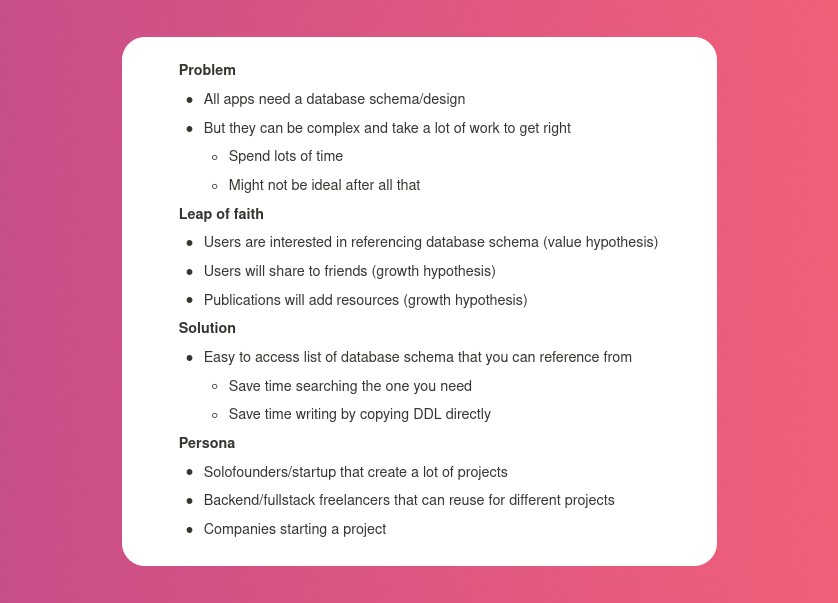

So, I wrote a business plan document. This happens before I code. What am I solving? What is my proposed solution? What is the leap of faith between the problem and solution? Who would be my target audience?

My note:

In my previous project, I did the opposite!

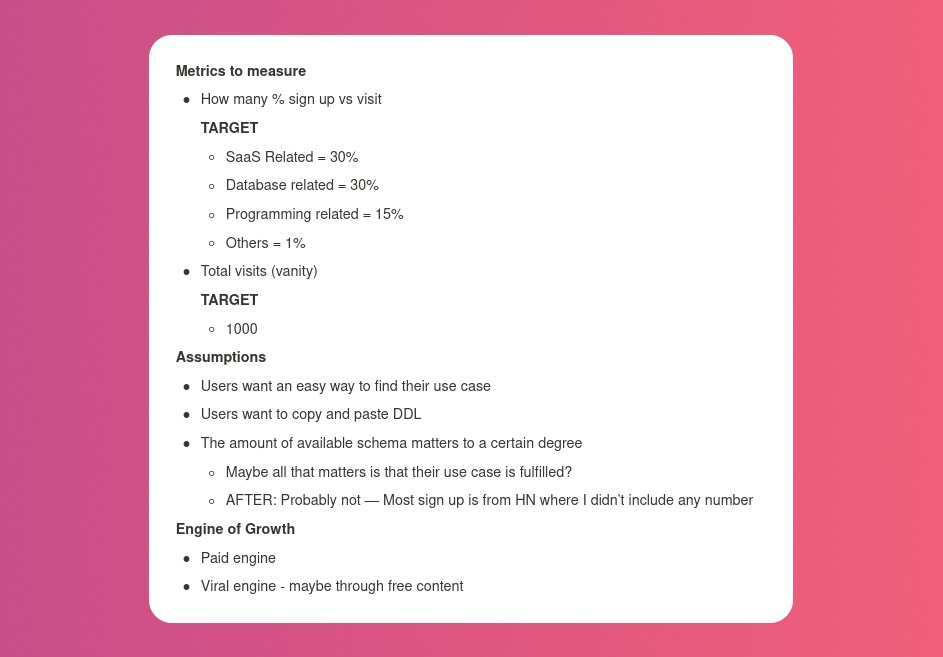

I wrote my expectations after reading the book's advice.

“Failure is a prerequisite to learning. The problem with the notion of shipping a product and then seeing what happens is that you are guaranteed to succeed — at seeing what happens.” — The Lean Startup book

These are successful metrics. If I don't reach them, I'll drop the idea and try another. I didn't understand numbers then. Below are guesses. But it’s a start!

I then wrote the project's What and Why. I'll use this everywhere. Before, I wrote a different pitch each time. I thought certain words would be better. I felt the audience might want something unusual.

Occasionally, this works. I'm unsure if it's a good idea. No stats, just my writing-time opinion. Writing every time is time-consuming and sometimes hazardous. Having a copy saved me duplication.

I can measure and learn from performance.

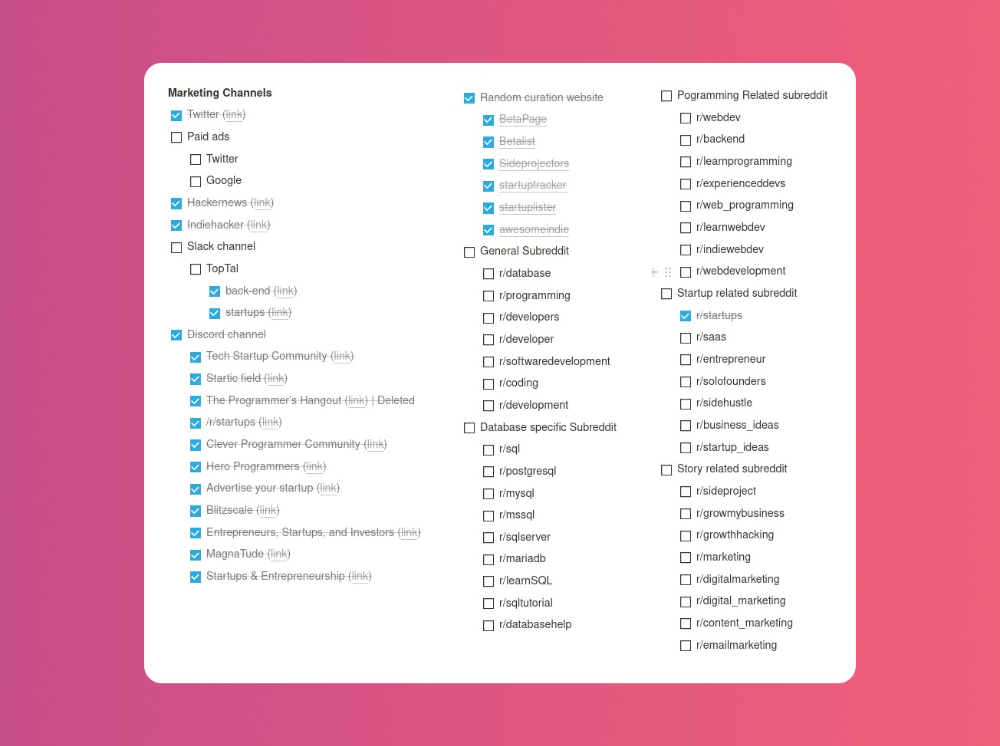

Last, I identified communities that might demand the product. This became an exercise in creativity.

The MVP

So now it’s time to build.

A MVP can test my assumptions. Business may learn from it. Not low-quality. We should learn from the tiniest thing.

I like the example of how Dropbox did theirs. They assumed that if the product works, people will utilize it. How can this be tested without a quality product? They made a movie demonstrating the software's functionality. Who knows how much functionality existed?

So I tested my biggest assumption. Users want schema references. How can I test if users want to reference another schema? I'd love this. Recall taught me that wanting something doesn't mean others do.

I made an email-collection landing page. Describe it briefly. Reference library. Each email sender wants a reference. They're interested in the product. Few other reasons exist.

Header and footer were skipped. No name or logo. DbSchemaLibrary is a name I thought of after the fact. 5-minute logo. I expected a flop. Recall has no users after months of labor. What could happen to a 2-day project?

I didn't compromise learning validation. How many visitors sign up? To draw a conclusion, I must track these results.

Posting Time

Now that the job is done, gauge interest. The next morning, I posted on all my channels. I didn't want to be spammy, therefore it required more time.

I made sure each channel had at least one fan of this product. I also answer people's inquiries in the channel.

My list stinks. Several channels wouldn't work. The product's target market isn't there. Posting there would waste our time. This taught me to create marketing channels depending on my persona.

Statistics! What actually happened

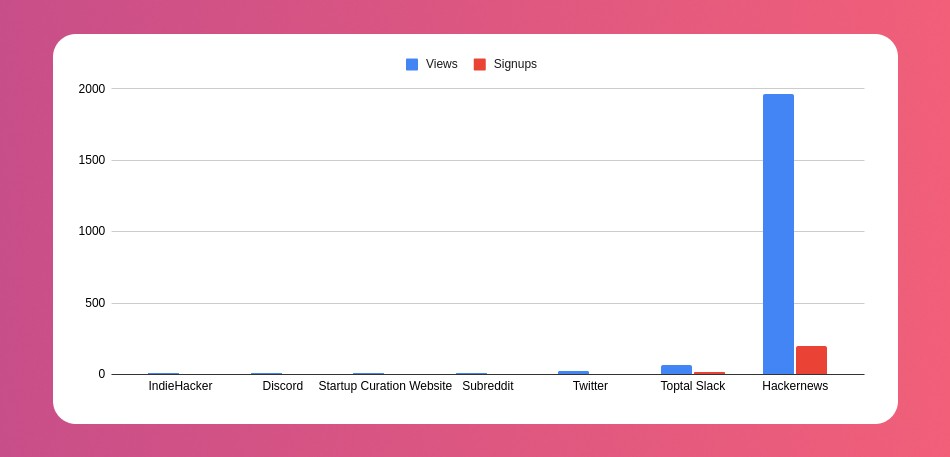

My favorite part! 23 channels received the link.

I stopped posting to Discord despite its high conversion rate. I eliminated some channels because they didn't fit. According to the numbers, some users like it. Most users think it's spam.

I was skeptical. And 12 people viewed it.

I didn't expect much attention on a startup subreddit. I'll likely examine Reddit further in the future. As I have enough info, I didn't post much. Time for the next validated learning

No comment. The post had few views, therefore the numbers are low.

The targeted people come next.

I'm a Toptal freelancer. There's a member-only Slack channel. Most people can't use this marketing channel, but you should! It's not as spectacular as discord's 27% conversion rate. But I think the users here are better.

I don’t really have a following anywhere so this isn’t something I can leverage.

The best yet. 10% is converted. With more data, I expect to attain a 10% conversion rate from other channels. Stable number.

This number required some work. Did you know that people use many different clients to read HN?

Unknowns

Untrackable views and signups abound. 1136 views and 135 signups are untraceable. It's 11%. I bet much of that came from Hackernews.

Overall Statistics

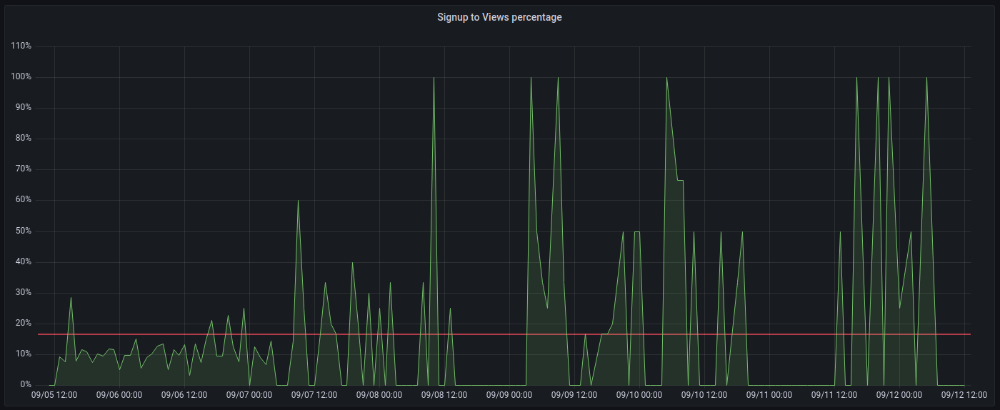

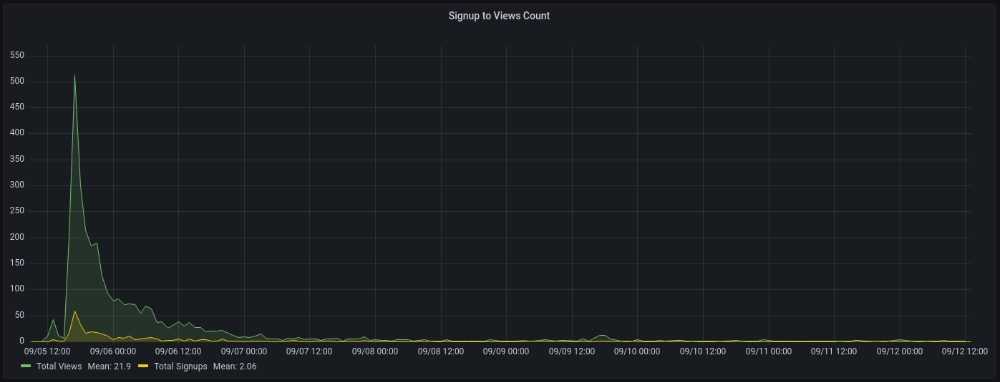

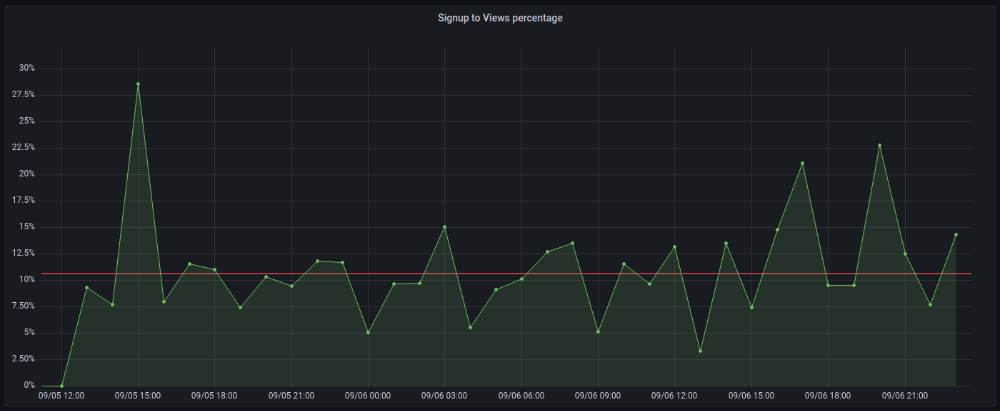

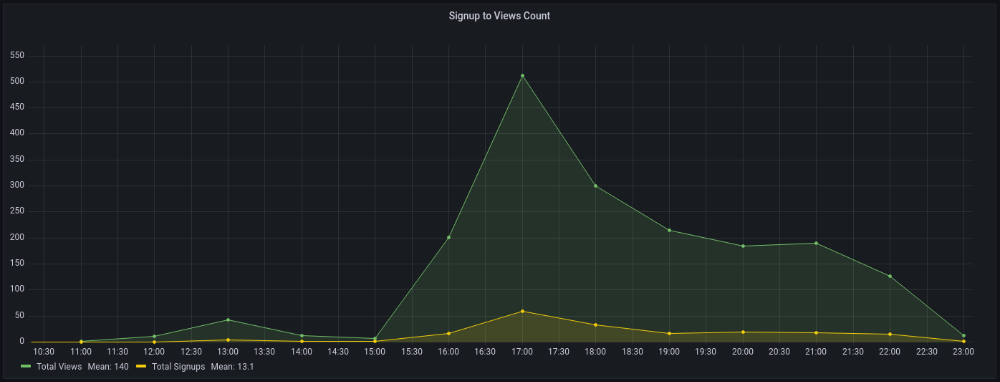

The 7-day signup-to-visit ratio was 17%. (Hourly data points)

First-day percentages were lower, which is noteworthy. Initially, it was little above 10%. The HN post started getting views then.

When traffic drops, the number reaches just around 20%. More individuals are interested in the connection. hn.algolia.com sent 2 visitors. This means people are searching and finding my post.

Interesting discoveries

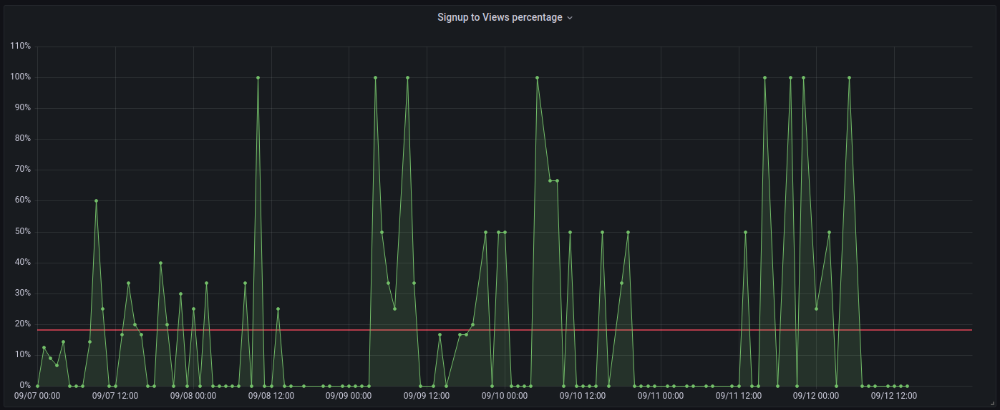

1. HN post struggled till the US woke up.

11am UTC. After an hour, it lost popularity. It seemed over. 7 signups converted 13%. Not amazing, but I would've thought ahead.

After 4pm UTC, traffic grew again. 4pm UTC is 9am PDT. US awakened. 10am PDT saw 512 views.

2. The product was highlighted in a newsletter.

I found Revue references when gathering data. Newsletter platform. Someone posted the newsletter link. 37 views and 3 registrations.

3. HN numbers are extremely reliable

I don't have a time-lapse graph (yet). The statistics were constant all day.

2717 views later 272 new users, or 10.1%

With 293 signups at 2856 views, 10.25%

At 306 signups at 2965 views, 10.32%

Learnings

1. My initial estimations were wildly inaccurate

I wrote 30% conversion. Reading some articles, looks like 10% is a good number to aim for.

2. Paying attention to what matters rather than vain metrics

The Lean Startup discourages vanity metrics. Feel-good metrics that don't measure growth or traction. Considering the proportion instead of the total visitors made me realize there was something here.

What’s next?

There are lots of work to do. Data aggregation, display, website development, marketing, legal issues. Fun! It's satisfying to solve an issue rather than investigate its cause.

In the meantime, I’ve already written the first project update in another post. Continue reading it if you’d like to know more about the project itself! Shifting from Quantity to Quality — DbSchemaLibrary