More on Entrepreneurship/Creators

The woman

3 years ago

Because he worked on his side projects during working hours, my junior was fired and sued.

Many developers do it, but I don't approve.

Aren't many programmers part-time? Many work full-time but also freelance. If the job agreement allows it, I see no problem.

Tech companies' policies vary. I have a friend at Google in Germany. According to his contract, he couldn't take on outside work, and Google owns any code he writes while employed.

I was shocked. Later, I found that different Google regions have different policies.

A corporation can normally establish any agreement before hiring you. They're negotiable. When there's no agreement, state law may apply. In court, law isn't so simple.

I won't delve into legal details. Instead, let’s talk about the incident.

How he was discovered

In one month, he missed two deadlines. His boss was frustrated because the assignment wasn't difficult enough to justify missing it twice. When a team can't finish work on time, everyone on it gets a poor rating.

He annoyed the whole team. One team member (anonymous) told the project manager he worked on side projects during office hours. He may have missed deadlines because of this.

The project manager was furious. He needed evidence. The manager caught him within a week. The manager told higher-ups immediately.

The company wanted to set an example

Management could terminate him and settle the problem. But the company wanted to set an example for those developers who breached the regulation.

Dismissal alone wasn't enough for them. Every organization invests heavily in hiring developers. If developers leave or are fired after a few months, the company loses that investment.

The developer had spent 10 months there. The employer fired him and demanded ten months' pay, threatening to sue him otherwise.

It was illegal and unethical. The youngster paid the fine and left the company quietly to protect his career.

Right or wrong?

Is the developer's behavior acceptable? Let's discuss developer malpractice.

Can developers work on other projects during office hours? Even if they're bored, generally not. Check the employment contract or state law.

If there's no such clause in the contract, check country or state law. You can never justify breaking the law. Most employers own their employees' work hours unless it's a contract position.

If the company agrees, it's fine.

I also oppose companies that force developers to work overtime without pay.

Most states and countries have laws that help companies and workers. Law supports employers in this case. If any of the following are true, the company/employer owns the IP under California law.

using the business's resources

any equipment, including a laptop used for business.

company's mobile device.

offices of the company.

business time as well. This is crucial, because it is what happened in my junior's case.

Using company resources is risky, because your company may then own the product's IP. If you have seen the TV show Silicon Valley, you have seen a similar situation there, right?

Conclusion

Simple rule. I avoid big side projects. I work on my laptop on weekends for side projects. I'm safe. But I also know that my company might not be happy with that.

As an employee, I suppose I can make money on the side. I won't promote it, but I'll respect my employer's time, resources, and tasks. I also sometimes work extra hours to meet my company's deadlines.

Owolabi Judah

3 years ago

How much did YouTube pay for 10 million views?

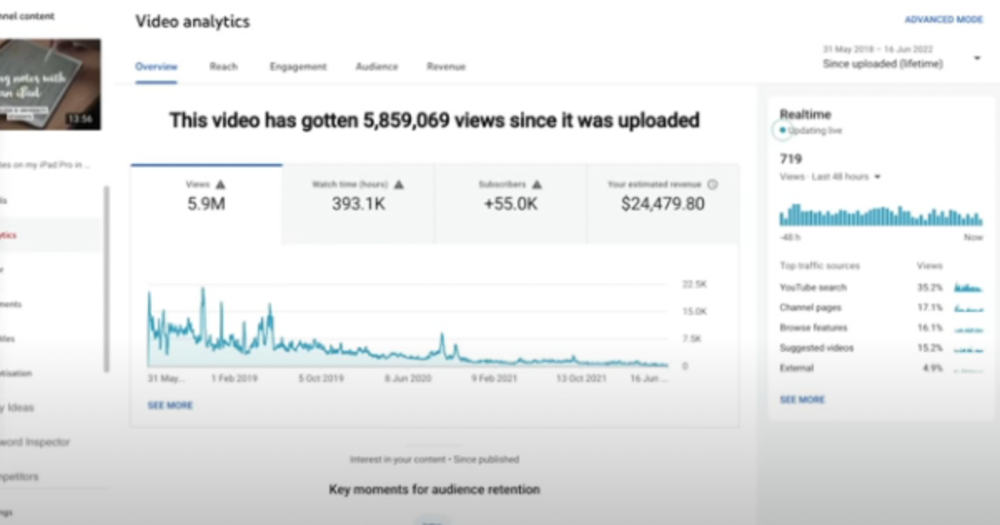

Ali's $1,054,053.74 YouTube AdSense haul.

Ali Abdaal is a YouTuber, entrepreneur, and former doctor. He began filming productivity and financial videos in 2017. He has 3 million YouTube subscribers and has crossed $1 million in AdSense revenue. Crazy, no?

Ali shares the revenue of his top 5 YouTube videos, things he's learned that you can apply to your side hustle, and how many views it takes to make a living from YouTube.

First, "The Long Game."

All good things take time to bear fruit. Compounding improves everything. Long-term work yields better returns. Ali made his first dollar after nine months and 85 videos.

Second, "One piece of content can transform your life, but you never know which one."

Had he abandoned YouTube at 84 videos without making any money, he wouldn't have filmed the 85th video that altered everything.

Third, "Your choice of industry can be a multiplier."

The industry or niche you target as a business owner or side hustler can have a major impact on how much money you make.

Here are the top 5 videos.

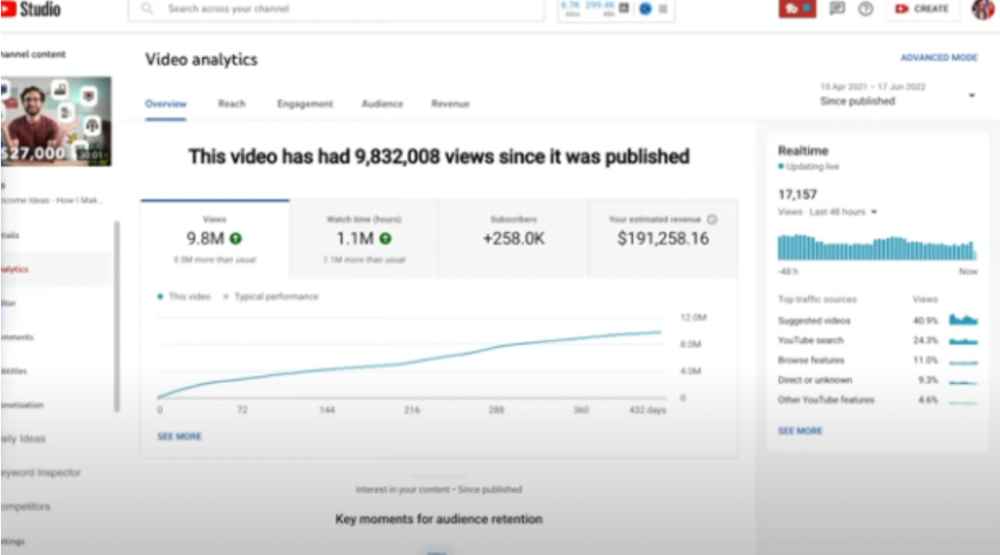

1) 9.8m views: $191,258.16 for 9 passive income ideas

Ali made 2 points.

We should consider YouTube videos digital assets. They're investments, which make us money. His investments are yielding passive income.

Investing extra time and effort in your videos can pay off.

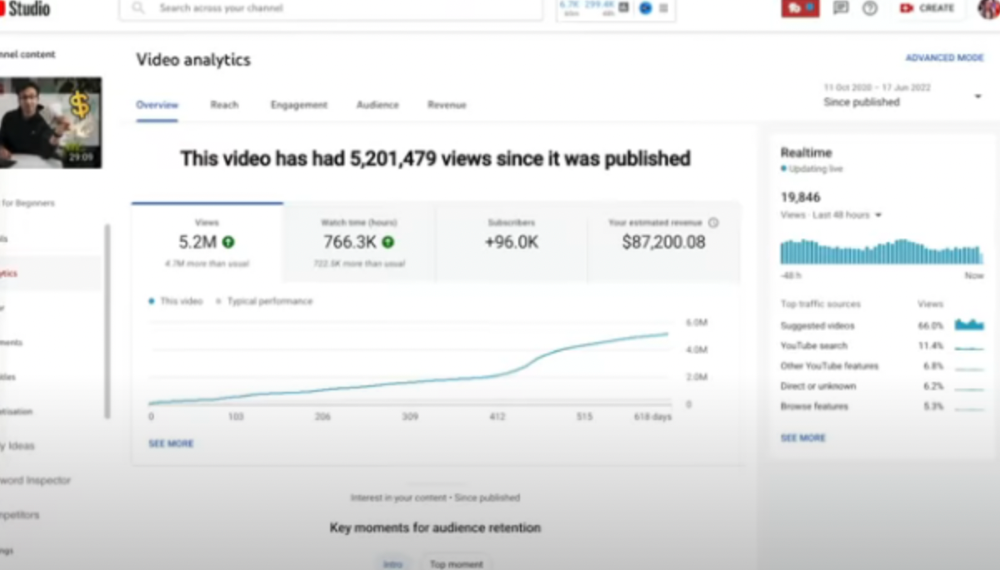

2) How to Invest for Beginners — 5.2m Views: $87,200.08.

This video did poorly in the first several weeks after it was published; it was his tenth poorest performer. Don't worry about things you can't control. This applies to life, not just YouTube videos.

He said we constantly have anxieties, fears, and concerns about things outside our control, but if we can find the line between what we can and can't control, life is easier and more pleasurable.

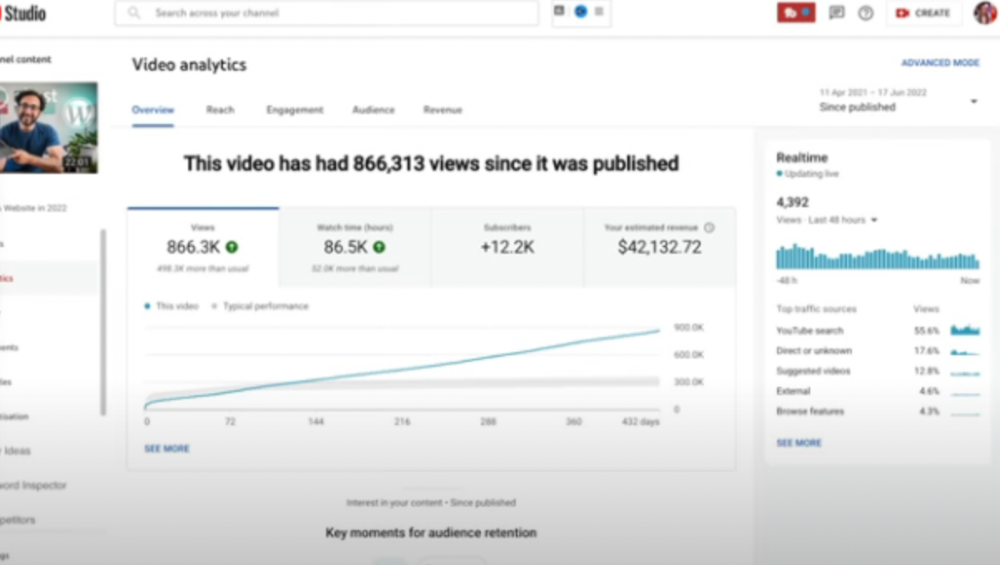

3) How to Build a Website in 2022— 866.3k views: $42,132.72.

The RPM was $48.86 per thousand views, making it his highest-earning video per view. Squarespace, Wix, and other website builders compete against one another to place ads on it, so ad rates go up.

Because it was beyond his niche, Ali almost didn't make the video. He made the video because he wanted to help at least one person.

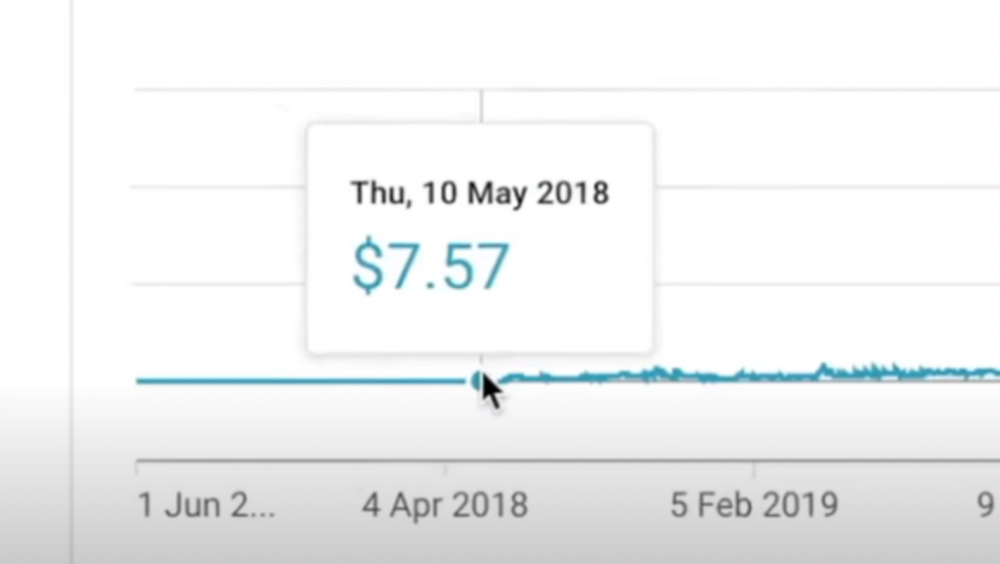

4) How I take notes on my iPad in medical school — 5.9m views: $24,479.80

This was his 85th video. It's the video that affected Ali's YouTube channel and his life the most. Its success wasn't certain.

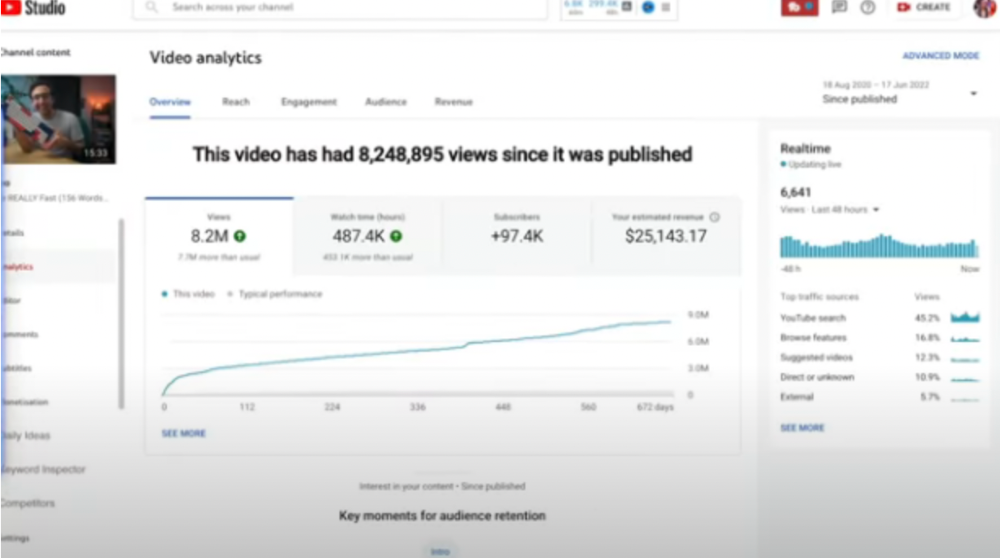

5) How I Type Fast 156 Words Per Minute — 8.2M views: $25,143.17

Ali didn't know this video would perform well; he made it because he can type fast and has been practicing for 10 years. So he made a video with his best advice.
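The five videos above can be compared by RPM (revenue per thousand views). What follows is a minimal sketch, assuming the revenue figures and the rounded view counts quoted in the list; the `rpm` helper and the `videos` dictionary are illustrative names, not anything from Ali's video. Because the view counts are rounded, the computed values are approximate and need not match the RPM that AdSense reports.

```python
# Revenue (USD) and rounded view counts as quoted in the list above.
videos = {
    "9 passive income ideas": (191_258.16, 9_800_000),
    "How to Invest for Beginners": (87_200.08, 5_200_000),
    "How to Build a Website in 2022": (42_132.72, 866_300),
    "How I take notes on my iPad in medical school": (24_479.80, 5_900_000),
    "How I Type Fast 156 Words Per Minute": (25_143.17, 8_200_000),
}


def rpm(revenue: float, views: int) -> float:
    """Revenue per 1,000 views (hypothetical helper for this comparison)."""
    return revenue / views * 1000


# Rank the videos from highest to lowest revenue per thousand views.
ranked = sorted(videos, key=lambda title: rpm(*videos[title]), reverse=True)
for title in ranked:
    print(f"{title}: ${rpm(*videos[title]):.2f} per 1,000 views")
```

Sorting by RPM rather than by total revenue makes the niche effect visible: the website video has far fewer views than the others but earns the most per view.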

How many views to different wealth levels?

It depends on geography, niche, and other monetization sources. To keep things simple, he considers only AdSense revenue.

How many views to generate money?

To generate money on YouTube, you need 1,000 subscribers and 4,000 hours of watch time. How much work do you need to make pocket money?

Ali's first 1,000 subscribers took 52 videos and 6 months. The typical channel with 1,000 subscribers contains 152 videos, according to TubeBuddy. It's time-consuming.

After monetizing, you'll need 15,000 views/month to make $5-$10/day.

How many views to go part-time?

Say you make $35,000/year at your day job. If you work 5 days/week, each weekday is worth $7,000/year. If you want to drop from 5 days to 4 days/week, you need to make an extra $7,000/year from YouTube, or roughly $600/month.
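The arithmetic can be checked with a short sketch. The function name and its parameters are hypothetical, and it assumes salary is spread evenly across working days, which is a simplification.

```python
def monthly_side_income_needed(
    annual_salary: float, days_worked: int = 5, days_dropped: int = 1
) -> float:
    """Monthly income needed to replace the weekdays you stop working.

    Assumes every working day contributes an equal share of the salary.
    """
    salary_per_weekday = annual_salary / days_worked  # yearly value of one weekday
    return salary_per_weekday * days_dropped / 12


needed = monthly_side_income_needed(35_000)
print(f"${needed:.2f}/month")
```

With the $35,000 example, one weekday is worth $7,000/year, which comes to a little under $600/month.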

What's the quit-your-job budget?

Silicon Valley Girl is in a highly successful niche targeting tech-focused folks in the West. When her channel had 500k views/month, she made roughly $3,000/month or $47,000/year, enough to quit a job.

Marina has another channel in Russia with 1.5m subscribers, which has a lower RPM because fewer corporations advertise there than in the West. 2.3 million views/month is $4,000/month or $50,000/year, enough to quit a job.

Marina is an intriguing example because she has three YouTube channels with the same skills, but one is 16x more profitable due to the niche she chose.

In Ali's case, he had made 100+ videos by the time his channel was producing enough money to quit his job, roughly $4,000/month.

How many views make you rich?

It depends on how you define rich. Ali felt prosperous with over $100,000/year and 3–5m views/month.

Conclusion

YouTubers and artists don't treat their work like a company, which is a mistake. Businesses have been attempting to figure this out for decades, if not centuries.

We can learn from the business world how to monetize YouTube, Instagram, and TikTok and turn them into sustainable enterprises where we can hire people and delegate tasks.

This post is a summary. Read the full article here

Nick Nolan

3 years ago

In five years, starting a business won't be hip.

People are slowly recognizing entrepreneurship's downside.

Growing up, entrepreneurship wasn't common. My high school class of 2012 had no entrepreneurs.

Businesses were different.

They had staff and a lengthy history of achievement.

I never wanted a business. It felt unattainable. My friends didn't care.

Weird.

People desired degrees to attain good jobs at big companies.

When I graduated high school, people would:

Attend college (9 out of 10)

Earn minimum wage working in a restaurant or retail establishment (7%)

Or join the military (3%)

Later, entrepreneurship became a thing.

2014-ish

I was in the military and most of my high school friends were in college, so I didn't hear anything.

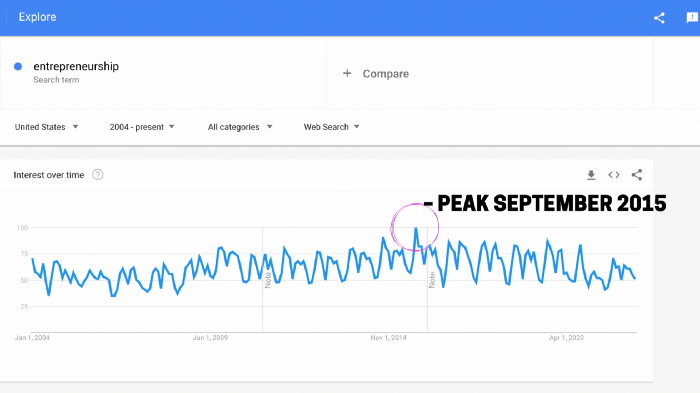

Entrepreneurship soared in 2015, according to Google Trends.

Then more individuals were interested. Entrepreneurship went from unusual to cool.

In 2015, it was easier than ever to build a website, run Facebook advertisements, and achieve organic social media reach.

There were several online business tools.

You didn't need to spend years or money figuring it out. Most entry barriers were gone.

Everyone wanted a side gig to escape the 9-5.

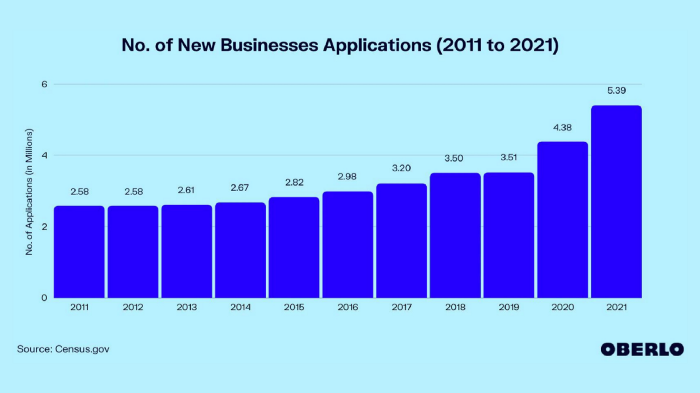

Small-business applications have increased over the previous 10 years.

The 2011-2014 trend continued.

2015 added 150,000 applications, 2016 added 200,000, and 2017 added 300,000.

The graph makes it look small, but that's a considerable annual increase with no signs of stopping.

By 2021, new business applications had doubled.

Entrepreneurship will return to its early 2010s level.

I think we'll go backward in 5 years.

Entrepreneurship is half as popular as it was in 2015.

In the late 2020s and 30s, entrepreneurship will again be obscure.

Entrepreneurship's decade-long splendor is fading. People will stop trying to escape the 9-5 and will launch fewer companies.

That’s not a bad thing.

I think people have a rose-colored vision of entrepreneurship. It's fashionable. People feel that they're missing out if they're not entrepreneurial.

Reality is showing up.

People say on social media, "I knew starting a business would be hard, but not this hard."

More negative posts on entrepreneurship:

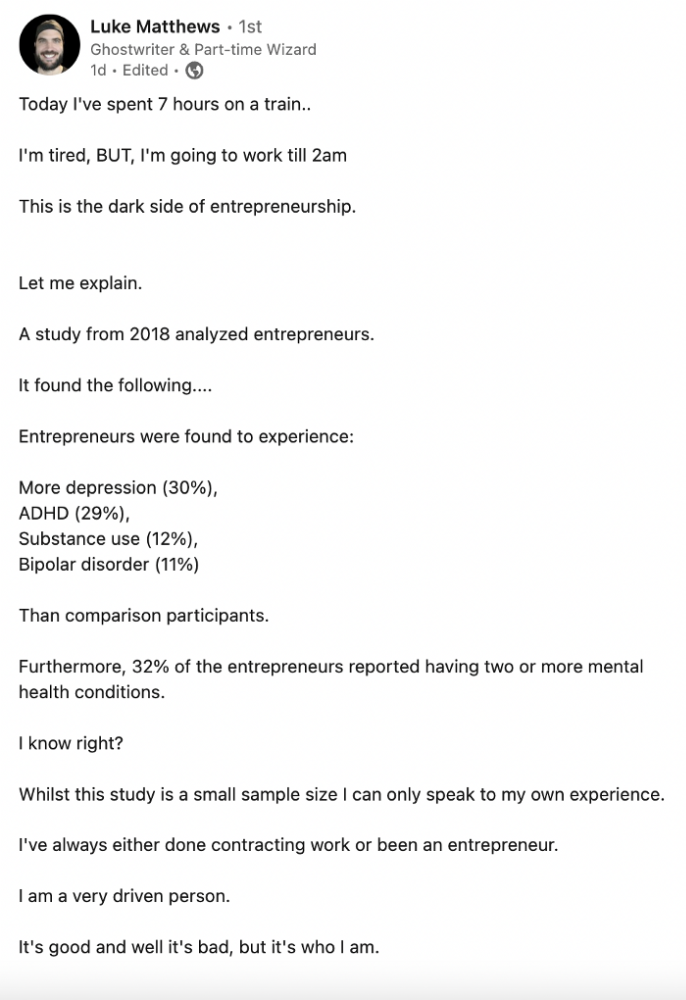

Luke adds:

Is being an entrepreneur ‘healthy’? I don’t really think so. Many like Gary V, are not role models for a well-balanced life. Despite what feel-good LinkedIn tells you the odds are against you as an entrepreneur. You have to work your face off. It’s a tough but rewarding lifestyle. So maybe let’s stop glorifying it because it takes a lot of (bleepin) work to survive a pandemic, mental health battles, and a competitive market.

Entrepreneurship is no longer a pipe dream.

It’s hard.

I went full-time in March 2020. I was done by April 2021. I had a good-paying job with perks lined up.

When that fell through (on my start date), I had to continue my entrepreneurial path. I needed money by May 1 to pay rent.

Entrepreneurship isn't as great as many think.

Entrepreneurship is a serious business.

If you have a 9-5, the grass isn't greener here. Most people aren't telling the whole story when they post on social media or quote successful entrepreneurs.

People prefer sharing their victories to sharing their defeats.

Is this a bad thing?

I don’t think so.

Over the previous decade, entrepreneurship went from impossible to the finest thing ever.

It peaked in 2020-21 and is returning to reality.

Startups aren't for everyone.

If you like your job, don't quit.

Quitting your job to become an entrepreneur won't impress people.

It's irrelevant.

You're doomed.

And you'll probably make less money.

If you hate your job, quit. Change jobs and bosses. Changing jobs could get you higher pay or better perks.

When you go solo, your paycheck and perks vanish. Did I mention you'll fail, sleep less, and stress more?

Nobody will stop you from pursuing entrepreneurship. You'll face several challenges.

Possibly.

Entrepreneurship may be romanticized for years.

Based on what I see from entrepreneurs on social media and trends, entrepreneurship is challenging and few will succeed.

You might also like

Enrique Dans

3 years ago

You may not know about The Merge, yet it could change society

Ethereum is the second-largest cryptocurrency. The Merge, a mid-September event that will convert Ethereum's consensus process from proof-of-work to proof-of-stake if all goes according to plan, will be a game changer.

Why is Ethereum ditching proof-of-work? Because it can. We're talking about a fully functioning, open-source ecosystem with a capacity for evolution that other cryptocurrencies lack, a change that would allow it to scale up its performance from 15 transactions per second to 100,000 as its blockchain is used for more and more things. It would reduce its energy consumption by 99.95%. Vitalik Buterin, the system's founder, would play a less active role due to decentralization, and miners, who validated transactions through proof of work, would be far less important.

Why has this conversion taken so long and been so cautious? Because it involves modifying a core process while it's running to boost its performance. It requires running the new mechanism in test chains on an ever-increasing scale, assessing participant reactions, and checking for issues or restrictions. The last big test was in early June and was successful. All that's left is to converge the mechanism with the Ethereum blockchain to conclude the switch.

What's stopping Bitcoin, the leader in market capitalization and the cryptocurrency that began blockchain's appeal, from doing the same? Satoshi Nakamoto, whoever he or she is, departed from public life long ago, therefore there's no community leadership. Changing it takes a level of consensus that is impossible to achieve without strong leadership, which is why Bitcoin's evolution has been sluggish and conservative, with few modifications.

Secondly, The Merge bears on the balance between the consensus mechanism (proof-of-work or proof-of-stake) and the system's decentralization or centralization. Proof-of-work prevents double-spending by requiring validators to invest in hardware. The system works, but it requires a lot of electricity and, as it scales up, tends to re-centralize as validators acquire more hardware and the entire network activity gets concentrated in a few nodes. Larger operations save more money, which increases profitability and market share. This evolution runs counter to the concept of decentralization, and some anticipate that any system that uses proof of work as a consensus mechanism will evolve towards centralization, with fewer large firms able to invest in efficient network nodes.

Yet radical Bitcoin enthusiasts make the opposite argument. In proof-of-stake, transaction validators put their funds at stake to attest that transactions are valid. The algorithm chooses who validates each transaction, giving more chances to nodes that put more coins at stake, which could open the door to centralization and government control.
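To see why stake size matters, here is a minimal sketch of stake-weighted random selection. It is a simplified illustration, not Ethereum's actual validator-selection algorithm; the node names, stake amounts, and the `pick_validator` function are all invented for the example.

```python
import random
from collections import Counter


def pick_validator(stakes: dict[str, float], rng: random.Random) -> str:
    """Pick one validator with probability proportional to its stake."""
    names = list(stakes)
    weights = [stakes[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


# Hypothetical stakes: node_c holds ten times as many coins as node_a.
stakes = {"node_a": 32.0, "node_b": 64.0, "node_c": 320.0}
rng = random.Random(0)  # fixed seed so the run is repeatable

counts = Counter(pick_validator(stakes, rng) for _ in range(10_000))
print(counts.most_common())
```

Over many rounds, the node with the largest stake is chosen most often, which is the concentration effect the argument above worries about.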

In both cases, we're talking about long-term changes, but Bitcoin's proof-of-work has been evolving longer and seems to confirm those fears, while proof-of-stake is only employed in coins with a minuscule volume compared to Ethereum and has no predictive value.

As of mid-September, we will have two significant cryptocurrencies, each with a different consensus mechanism and equally different characteristics: one is intrinsically conservative and used only for economic transactions, while the other has been evolving in open source mode, and can be used for other types of assets, smart contracts, or decentralized finance systems. Some even see it as the foundation of Web3.

Many things could change before September 15, but The Merge is likely to be a turning point. We'll have to follow this closely.

Joseph Mavericks

3 years ago

5 books my CEO read to make $30M

Offices without books are like bodies without souls.

After 10 years, my CEO sold his company for $30 million. I've shared many of his lessons on Medium. You could ask him anything at his always-open office. He also said we could use his office for meetings while he was away. When I used his office for work, I was always struck by how many books he had.

Books are useful in almost every aspect of learning. Building a business, improving family relationships, learning a new language, a new skill... Books teach, guide, and structure. Whether fiction or nonfiction, books inspire, give ideas, and develop critical thinking skills.

My CEO prefers non-fiction and attends a Friday book club. This article discusses 5 books I found in his office that impacted my life/business. My CEO sold his company for $30 million, but I've built a steady business through blogging and video making.

I recall events and lessons I learned from my CEO and how they relate to each book, and I explain how I applied the book's lessons to my business and life.

Note: This post has no affiliate links.

1. The One Thing — Gary Keller

Gary Keller, a real estate agent, wanted more customers. So he and his team brainstormed ways to get more customers. They decided to write a bestseller about work and productivity. The more people who saw the book, the more customers they'd get.

Gary Keller focused for months on writing the best book on productivity, work, and efficiency, drawing on his business experience. Keller's business grew after the book's release.

The author summarizes the book in one question.

"What's the one thing that will make everything else easier or unnecessary?"

When I started my blog and business alongside my 9–5, I quickly identified my one thing: writing. My business relied on it, so it had to be great. Without writing, there was no content, traffic, or business.

My CEO focused on funding when he started his business. Even in his final years, he spent a lot of time on the phone with investors, either to get more money or to explain what he was doing with it. My CEO's top concern was money, and the other super important factors were handled by separate teams.

Product tech and design

Incredible customer support team

Excellent promotion team

Profitable sales team

My CEO didn't always focus on one thing and ignore the rest. He was on all of those teams when I started my job. He'd start his day in tech, have lunch with marketing, and then work in sales. He was in his office on the phone at night.

He eventually realized his errors. Investors told him he couldn't do everything for the company. If needed, he had to change internally. He learned to let go, mind his own business, and focus for the next four years. Then he sold for $30 million.

The bigger your project/company/idea, the more you'll need to delegate to stay laser-focused. I started something new every few months for 10 years before realizing this. So much to do makes it easy to avoid progress. Once you identify the most important aspect of your project and enlist others' help, you'll be successful.

2. Eat That Frog — Brian Tracy

This quote from the author sums up the book's essence:

Mark Twain said that if you eat a live frog in the morning, it's probably the worst thing that will happen to you all day. Your "frog" is the biggest, most important task you're most likely to procrastinate on.

"Frog" and "One Thing" are both about focusing on what's most important. Eat That Frog recommends doing the most important task first thing in the morning.

I shared my CEO's calendar in an article 10 months ago. Like this:

CEO's average week (some information crossed out for confidentiality)

Notice anything about 8am-8:45am? Almost every day is the same (except Friday). My CEO started his day with a management check-in for 2 reasons:

Checking in with all managers is cognitively demanding, and my CEO is a morning person.

In a young startup where everyone is busy, the morning management check-in was crucial. After 10 am, you couldn't gather all managers.

When I started my blog, writing was my passion. I'm a morning person, so I woke up at 6 am and started writing by 6:30 am every day for a year. This allowed me to publish 3 articles a week for 52 weeks to build my blog and audience. After 2 years, I'm not stopping.

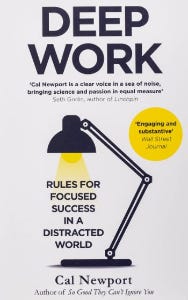

3. Deep Work — Cal Newport

Deep work is focusing on a cognitively demanding task without distractions (like a morning management meeting). It helps you master complex information quickly and produce better results faster. In a competitive world 10 or 20 years ago, focus wasn't a huge advantage. Smartphones, emails, and social media made focus a rare, valuable skill.

Most people can't focus anymore. Screens light up, notifications buzz, emails arrive, Instagram feeds... Many people don't realize they're interrupted because it's become part of their normal workflow.

Cal Newport mentions Bill Gates' "Think Weeks" in Deep Work.

Microsoft CEO Bill Gates would isolate himself (often in a lakeside cottage) twice a year to read and think big thoughts.

Inside Bill's Brain on Netflix shows Gates's lakeside cottage. I've always wanted a lakeside cabin to work in. My CEO bought a lakehouse after selling his company, but now he's retired.

As a company grows, you can focus less on it. In a previous section, I said investors told my CEO to get back to basics and stop micromanaging. My CEO's commitment and ability to get work done helped save the company. His deep work and new frameworks helped us survive the corona crisis (more on this later).

The ability to deep work will be a huge competitive advantage in the next century. Those who learn to work deeply will likely be successful while everyone else is glued to their screens, Bluetooth-synced to their watches, and playing Candy Crush on their tablets.

4. The 7 Habits of Highly Effective People — Stephen R. Covey

It took me a while to start reading this book because it seemed like another shallow self-help bible. I kept finding this book when researching self-improvement. I tried it because it was everywhere.

Stephen Covey taught me 2 years ago to have a personal mission statement.

A 7 Habits mission statement describes the life you want to lead, the character traits you want to embody, and the impact you want to have on others. shortform.com

I've had many lunches with my CEO and talked about Vipassana meditation and Sunday forest runs, but I've never seen his mission statement. I'm sure his family is important, though. In the above calendar screenshot, you can see he always included family events (in green) so we could all see those time slots. We couldn't book him then. Although he never spent as much time with his family as he wanted, he always made sure to be on time for his kid's birthday rather than a conference call.

My CEO emphasized his company's mission. Your mission statement should answer 3 questions.

What does your company do?

How does it do it?

Why does your company do it?

As a graphic designer, I had to create mission-statement posters. My CEO hung posters in each office.

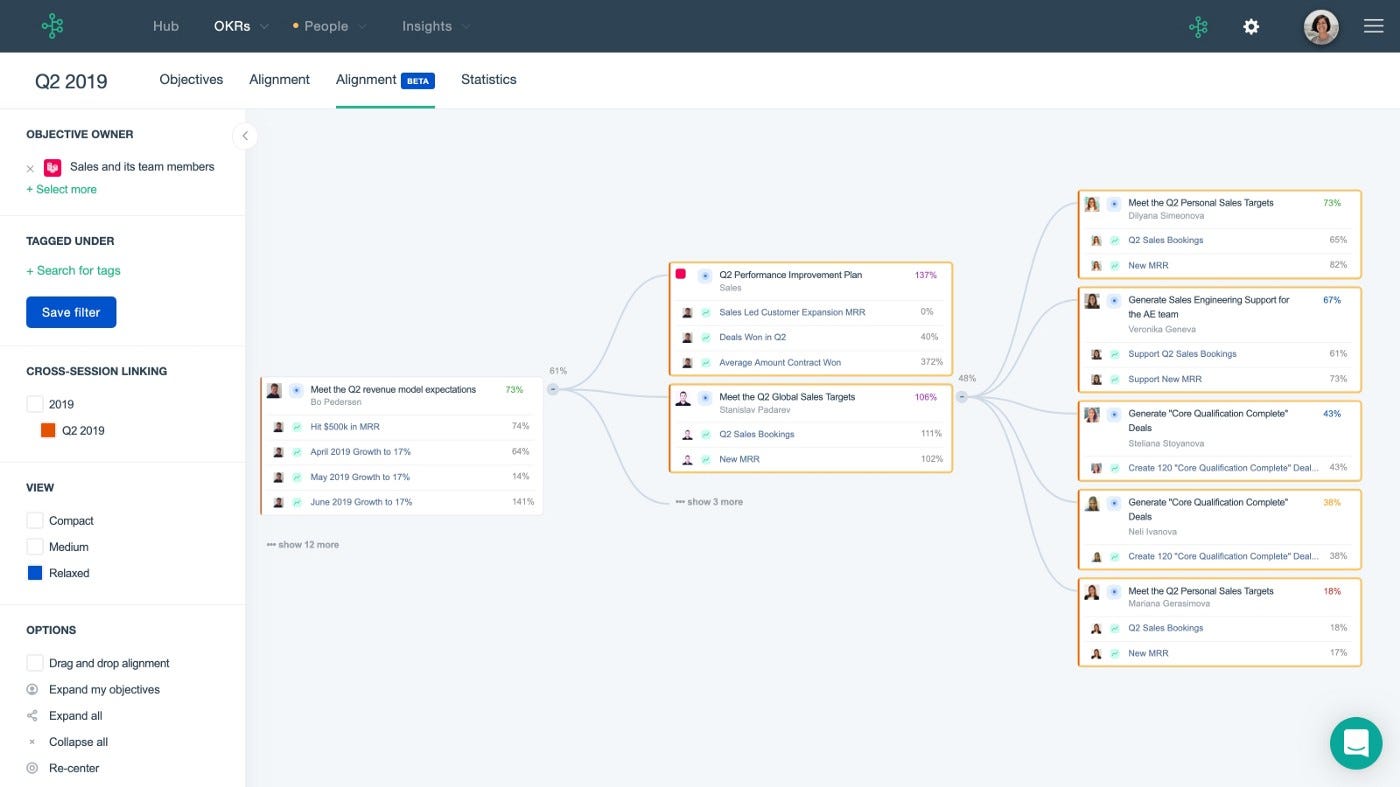

5. Measure What Matters — John Doerr

This book is about Andrew Grove's OKR strategy, developed in 1968. When John Doerr became one of Google's early investors and joined its board, he introduced it to Larry Page and Sergey Brin. Google still uses OKR.

Objectives and Key Results

Objective: It explains your goals and desired outcome. When one goal is reached, another replaces it. OKR objectives aren't technical, measured, or numerical. They must be clear.

Key Result: Unlike the Objective, it should be precise, technical, and measurable. It shows whether the Objective is being worked on. Key Results are time-bound, usually quarterly or yearly.
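The two definitions above can be sketched as a small data structure. This is one possible way to model them, not how the book or Gtmhub represents OKRs; the class names, fields, and sample values are all invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class KeyResult:
    """A precise, measurable result with a numeric target."""
    description: str
    target: float
    current: float = 0.0

    def progress(self) -> float:
        """Fraction of the target reached, capped at 1.0."""
        if self.target <= 0:
            return 0.0
        return min(self.current / self.target, 1.0)


@dataclass
class Objective:
    """A clear, non-numeric goal, tracked through its key results."""
    statement: str
    period: str  # e.g. a quarter or a year
    key_results: list[KeyResult] = field(default_factory=list)

    def progress(self) -> float:
        """Average progress across all key results."""
        if not self.key_results:
            return 0.0
        return sum(kr.progress() for kr in self.key_results) / len(self.key_results)


# Hypothetical example values.
okr = Objective(
    statement="Make onboarding effortless for new customers",
    period="Q1",
    key_results=[
        KeyResult("Publish onboarding guides", target=10, current=5),
        KeyResult("Run customer interviews", target=20, current=20),
    ],
)
print(f"{okr.statement}: {okr.progress():.0%} complete")
```

Keeping the numbers on the key results and the plain-language statement on the objective mirrors the split described above.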

Our company almost sank several times. Sales goals were missed, management failed, and bad decisions were made. On a Monday, our CEO announced we'd implement OKR to revamp our processes.

This was a year before the pandemic, and I'm certain we wouldn't have sold millions or survived without this change. This book impacted the company the most, not just management but all levels. Organization and transparency improved. We reached realistic goals. Happy investors. We used the online tool Gtmhub to implement OKR across the organization.

My CEO's company went from near bankruptcy to being acquired for $30 million in 2 years after implementing OKR.

I hope you enjoyed this booklist. Here's a recap of the 5 books and the lessons I learned from each.

The 7 Habits of Highly Effective People — Stephen R. Covey

Have a mission statement that outlines your goals, character traits, and impact on others.

Deep Work — Cal Newport

Focus is a rare skill; master it. Deep workers will succeed in our hyper-connected, distracted world.

The One Thing — Gary Keller

What can you do that will make everything else easier or unnecessary? Once you've identified it, focus on it.

Eat That Frog — Brian Tracy

Identify your most important task the night before and do it first thing in the morning. You'll have a lighter day.

Measure What Matters — John Doerr

On a timeline, divide each long-term goal into chunks. Divide those chunks into daily tasks (your goals). Time-bound results are quarterly or yearly. Objectives aren't measured or numbered.

Thanks for reading. Enjoy the ride!

Jano le Roux

3 years ago

Quit worrying about Twitter: Elon moves quickly before refining

Elon's rides start rough, but then...

Elon Musk has never been so hated.

They don’t get Elon.

He began with PayPal in this manner.

He began with SpaceX in a similar manner.

He began with Tesla in this manner.

Disruptive.

Elon had rocky starts. His creativity requires it. Just like writing a first draft.

His fastest way to find the way is to avoid it.

PayPal's pricey launch

PayPal was a 1999 business flop.

They were considered insane.

Elon and his co-founders had big plans for PayPal. They adopted the popular philosophy of the time, exchanging short-term profit for growth, and pulled off a miracle just before the bubble burst.

PayPal was created as a dollar alternative. Original PayPal software allowed PalmPilot money transfers. Unfortunately, there weren't enough PalmPilot users.

Since everyone had email, the company switched to sending payments by email. Costs rose faster than sales.

The startup wanted to reach a million subscribers by paying people $10 to sign up and $10 for each referral. Elon thought the price was fair because PayPal made money by charging transaction fees. They needed to make money quickly.

A Wall Street Journal article valuing PayPal at $500 million attracted investors. The dot-com bubble burst soon after they rushed to get financing.

Musk and his partners sold PayPal to eBay for $1.5 billion in 2002. Musk's most successful company was PayPal.

SpaceX's start-up error

Elon and his friends tried to buy a reconditioned ICBM in Russia in 2002.

He planned to invest much of his wealth in a stunt to promote NASA and space travel.

Many called Elon crazy.

The goal was to buy a cheap Russian rocket to launch mice or plants to Mars and return them. He thought the stunt would revive global interest in space. After a bad meeting in Moscow, Elon decided to build his own rockets to undercut launch contracts.

Then SpaceX was founded.

Elon’s plan was harder than expected.

Explosions followed explosions.

Millions lost on cargo.

Millions lost on the rockets.

Investors thought Elon was crazy, but he wasn't.

SpaceX became NASA's biggest competitor. NASA hired SpaceX to handle many of its missions.

Tesla's shaky beginning

Tesla began shakily.

Clients detested their roadster.

They continued to miss deadlines.

Lotus would handle the car while Tesla focused on the EV component, easing Tesla's entry. The business experienced elegance creep. Modifying specific parts kept the car from getting worse.

Cost overruns, delays, and other factors changed the Elise-like car's appearance. Only 7% of the Tesla Roadster's parts matched its Lotus twin.

Tesla was about to die.

Elon saved the mess as CEO.

He fired 25% of the workforce to reduce costs.

Elon Musk transformed Tesla into the world's most valuable automaker by running it like a startup.

Tesla hasn't spent a dime on advertising. They let the media do the talking by investing in innovation.

Elon sheds. Elon tries. Elon learns. Elon refines.

Twitter doesn't worry me.

The media is shocked. I’m not.

This is just Elon being Elon.

Elon makes lean.

Elon tries new things.

Elon listens to feedback.

Elon refines.

Besides, Twitter will always be Twitter.