More on Entrepreneurship

Tim Denning

4 months ago

Elon Musk’s Rich Life Is a Nightmare

I'm sure you haven't read about Elon's other side.

Elon divorced badly.

Nobody's surprised.

Imagine you're a parent. Someone isn't home year-round. What's next?

That’s what happened to YOLO Elon.

He can do anything. He can intervene in wars, shoot his mouth off, bang anyone he wants, avoid tax, make cool tech, buy anything his ego desires, and live anywhere exotic.

Few know his billionaire backstory. I'll tell you so you don't worship his lifestyle. It’s a cult.

Only his career succeeds. His life is a nightmare otherwise.

Psychopaths' schedule

Elon has said he works 120-hour weeks.

As he told the reporter about his job, he choked up, which was unusual for him.

His crazy workload and lack of sleep forced him to scold innocent Wall Street analysts. Later, he apologized.

In the same interview, he admits he hadn't taken more than a week off since 2001, when he was bedridden with malaria. Elon stays home after a near-death experience.

He's rarely outside.

Elon says he sometimes works 3 or 4 days straight.

He admits his crazy work schedule has cost him time with his kids and friends.

Elon's a slave

Elon's birthday description made him emotional.

Elon worked his entire birthday.

"No friends, nothing," he said, stuttering.

His brother's wedding in Catalonia was 48 hours after his birthday. That meant flying there from Tesla's factory prison.

He arrived two hours before the big moment, barely enough time to eat and change, let alone see his brother.

Elon had to leave after the bouquet was tossed to a crowd of billionaire lovers. He missed his brother's first dance with his wife.

Shocking.

He went straight to Tesla's prison.

The looming health crisis

Elon was asked if overworking affected his health.

Not great. Friends are worried.

Now you know why Elon tweets dumb things. Working so hard has probably caused him mental health issues.

Mental illness removed my reality filter. You do stupid things because you're tired.

Astronauts pelted Elon

Elon's overwork isn't the first time his life has made him emotional.

When asked about Neil Armstrong and Gene Cernan criticizing his SpaceX missions, he got emotional. Elon's heroes.

They're why he started the company, and they mocked his work. In another interview, we see how Elon’s business obsession has knifed him in the heart.

Once you have a company, you must feed, nurse, and care for it, even if it destroys you.

"Yep," Elon says, tearing up.

In the same interview, he's asked how Tesla survived the 2008 recession. Elon stopped the interview because he was crying. When Tesla and SpaceX filed for bankruptcy in 2008, he nearly had a nervous breakdown. He called them his "children."

All the time, he's risking everything.

Jack Raines explains best:

Too much money makes you a slave to your net worth.

Elon's emotions are admirable. It's one of the few times he seems human, not like an alien Cyborg.

Stop idealizing Elon's lifestyle

Building a side business that becomes a billion-dollar unicorn startup is a nightmare.

"Billionaire" means financially wealthy but otherwise broke. A rich life includes more than business and money.

This post is a summary. Read full article here

Carter Kilmann

2 months ago

I finally achieved a $100K freelance income. Here's what I wish I knew.

We love round numbers, don't we? $100,000 is a frequent freelancing milestone. You feel like six figures means you're doing something properly.

You've most likely already conquered initial freelancing challenges like finding clients, setting fair pricing, coping with criticism, getting through dry spells, managing funds, etc.

You think I must be doing well. Last month, my freelance income topped $100,000.

That may not sound impressive considering I've been freelancing for 2.75 years, but I made 30% of that in the previous four months, which is crazy.

Here are the things I wish I'd known during the early days of self-employment that would have helped me hit $100,000 faster.

1. The Volatility of Freelancing Will Stabilize.

Freelancing is risky. No surprise.

Here's an example.

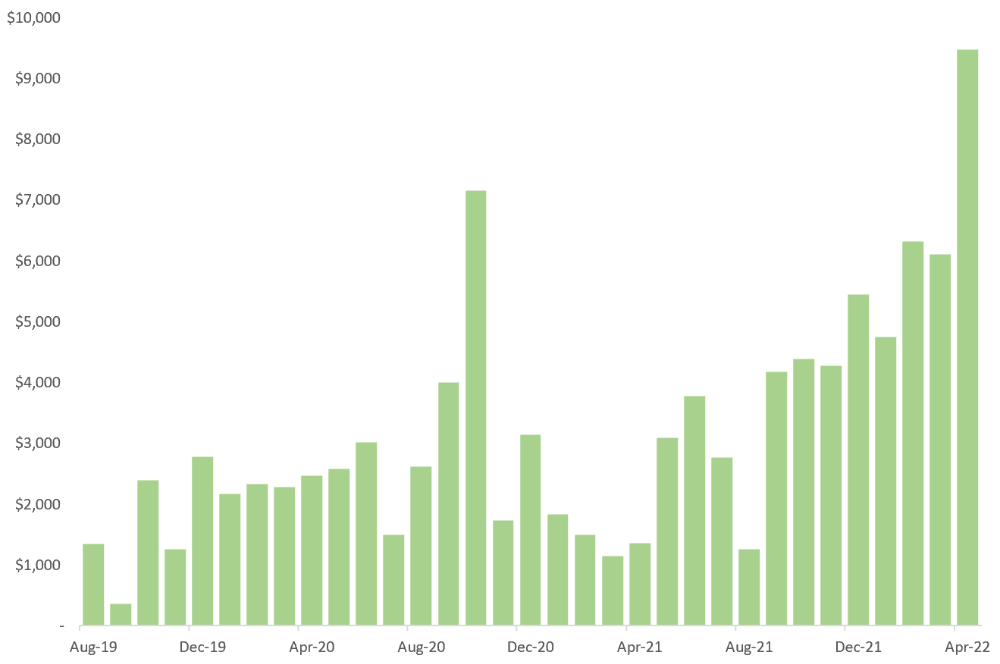

October 2020 was my best month, earning $7,150. Between $4,004 in September and $1,730 in November. Unsteady.

Freelancing is regrettably like that. Moving clients. Content requirements change. Allocating so much time to personal pursuits wasn't smart, but yet.

Stabilizing income takes time. Consider my rolling three-month average income since I started freelancing. My three-month average monthly income. In February, this metric topped $5,000. Now, it's in the mid-$7,000s, but it took a while to get there.

Finding freelance gigs that provide high pay, high volume, and recurring revenue is difficult. But it's not impossible.

TLDR: Don't expect a steady income increase at first. Be patient.

2. You Have More Value Than You Realize.

Writing is difficult. Assembling words, communicating a message, and provoking action are a puzzle.

People are willing to pay you for it because they can't do what you do or don't have enough time.

Keeping that in mind can have huge commercial repercussions.

When talking to clients, don't tiptoe. You can ignore ridiculous deadlines. You don't have to take unmanageable work.

You solve an issue, so make sure you get rightly paid.

TLDR: Frame services as problem-solutions. This will let you charge more and set boundaries.

3. Increase Your Prices.

I studied hard before freelancing. I read articles and watched videos about writing businesses.

I didn't want to work for pennies. Despite this clarity, I had no real strategy to raise my rates.

I then luckily stumbled into higher-paying work. We discussed fees and hours with a friend who launched a consulting business. It's subjective and speculative because value isn't standardized. One company may laugh at your charges. If your solution helps them create a solid ROI, another client may pay $200 per hour.

When he told me he charged his first client $125 per hour, I thought, Why not?

A new-ish client wanted to discuss a huge forthcoming project, so I raised my rates. They knew my worth, so they didn't blink when I handed them my new number.

TLDR: Increase rates periodically (e.g., every 6 or 12 months). Writing skill develops with practice. You'll gain value over time.

4. Remember Your Limits.

If you can squeeze additional time into a day, let me know. I can't manipulate time yet.

We all have time and economic limits. You could theoretically keep boosting rates, but your prospect pool diminishes. Outsourcing and establishing extra revenue sources might boost monthly revenues.

I've devoted a lot of time to side projects (hopefully extra cash sources), but I've only just started outsourcing. I wish I'd tried this earlier.

If you can discover good freelancers, you can grow your firm without sacrificing time.

TLDR: Expand your writing network immediately. You'll meet freelancers who understand your daily grind and locate reference sources.

5. Every Action You Take Involves an Investment. Be Certain to Select Correctly.

Investing in stocks or crypto requires paying money, right?

In business, time is your currency (and maybe money too). Your daily habits define your future. If you spend time collecting software customers and compiling content in the space, you'll end up with both. So be sure.

I only spend around 50% of my time on client work, therefore it's taken me nearly three years to earn $100,000. I spend the remainder of my time on personal projects including a freelance book, an investment newsletter, and this blog.

Why? I don't want to rely on client work forever. So, I'm working on projects that could pay off later and help me live a more fulfilling life.

TLDR: Consider the long-term impact of your time commitments, and don't overextend. You can only make so many "investments" in a given time.

6. LinkedIn Is an Endless Mine of Gold. Use It.

Why didn't I use LinkedIn earlier?

I designed a LinkedIn inbound lead strategy that generates 12 leads a month and a few high-quality offers. As a result, I've turned down good gigs. Wish I'd begun earlier.

If you want to create a freelance business, prioritize LinkedIn. Too many freelancers ignore this site, missing out on high-paying clients. Build your profile, post often, and interact.

TLDR: Study LinkedIn's top creators. Once you understand their audiences, start posting and participating daily.

For 99% of People, Freelancing is Not a Get-Rich-Quick Scheme.

Here's a list of things I wish I'd known when I started freelancing.

Although it is erratic, freelancing eventually becomes stable.

You deserve respect and discretion over how you conduct business because you have solved an issue.

Increase your charges rather than undervaluing yourself. If necessary, add a reminder to your calendar. Your worth grows with time.

In order to grow your firm, outsource jobs. After that, you can work on the things that are most important to you.

Take into account how your present time commitments may affect the future. It will assist in putting things into perspective and determining whether what you are doing is indeed worthwhile.

Participate on LinkedIn. You'll get better jobs as a result.

If I could give my old self (and other freelancers) one bit of advice, it's this:

Despite appearances, you're making progress.

Each job. Tweets. Newsletters. Progress. It's simpler to see retroactively than in the moment.

Consistent, intentional work pays off. No good comes from doing nothing. You must set goals, divide them into time-based targets, and then optimize your calendar.

Then you'll understand you're doing well.

Want to learn more? I’ll teach you.

Stephen Moore

2 months ago

Adam Neumanns is working to create the future of living in a classic example of a guy failing upward.

The comeback tour continues…

First, he founded a $47 billion co-working company (sorry, a “tech company”).

He established WeLive to disrupt apartment life.

Then he created WeGrow, a school that tossed aside the usual curriculum to feed children's souls and release their potential.

He raised the world’s consciousness.

Then he blew it all up (without raising the world’s consciousness). (He bought a wave pool.)

Adam Neumann's WeWork business burned investors' money. The founder sailed off with unimaginable riches, leaving long-time employees with worthless stocks and the company bleeding money. His track record, which includes a failing baby clothing company, should have stopped investors cold.

Once the dust settled, folks went on. We forgot about the Neumanns! We forgot about the private jets, company retreats, many houses, and WeWork's crippling. In that moment, the prodigal son of entrepreneurship returned, choosing the blockchain as his industry. His homecoming tour began with Flowcarbon, which sold Goddess Nature Tokens to lessen companies' carbon footprints.

Did it work?

Of course not.

Despite receiving $70 million from Andreessen Horowitz's a16z, the project has been halted just two months after its announcement.

This triumph should lower his grade.

Neumann seems to have moved on and has another revolutionary idea for the future of living. Flow (not Flowcarbon) aims to help people live in flow and will launch in 2023. It's the classic Neumann pitch: lofty goals, yogababble, and charisma to attract investors.

It's a winning formula for one investment fund. a16z has backed the project with its largest single check, $350 million. It has a splash page and 3,000 rental units, but is valued at over $1 billion. The blog post praised Neumann for reimagining the office and leading a paradigm-shifting global company.

Flow's mission is to solve the nation's housing crisis. How? Idk. It involves offering community-centric services in apartment properties to the same remote workforce he once wooed with free beer and a pingpong table. Revolutionary! It seems the goal is to apply WeWork's goals of transforming physical spaces and building community to apartments to solve many of today's housing problems.

The elevator pitch probably sounded great.

At least a16z knows it's a near-impossible task, calling it a seismic shift. Marc Andreessen opposes affordable housing in his wealthy Silicon Valley town. As details of the project emerge, more investors will likely throw ethics and morals out the window to go with the flow, throwing money at a man known for burning through it while building toxic companies, hoping he can bank another fantasy valuation before it all crashes.

Insanity is repeating the same action and expecting a different result. Everyone on the Neumann hype train needs to sober up.

Like WeWork, this venture Won’tWork.

Like before, it'll cause a shitstorm.

You might also like

Sneaker News

5 months ago

This Month Will See The Release Of Travis Scott x Nike Footwear

Following the catastrophes at Astroworld, Travis Scott was swiftly vilified by both media outlets and fans alike, and the names who had previously supported him were quickly abandoned. Nike, on the other hand, remained silent, only delaying the release of La Flame's planned collaborations, such as the Air Max 1 and Air Trainer 1, indefinitely. While some may believe it is too soon for the artist to return to the spotlight, the Swoosh has other ideas, as Nice Kicks reveals that these exact sneakers will be released in May.

Both the Travis Scott x Nike Air Max 1 and the Travis Scott x Nike Air Trainer 1 are set to come in two colorways this month. Tinker Hatfield's renowned runner will meet La Flame's "Baroque Brown" and "Saturn Gold" make-ups, which have been altered with backwards Swooshes and outdoors-themed webbing. The high-top trainer is being customized with Hatfield's "Wheat" and "Grey Haze" palettes, both of which include zippers across the heel, co-branded patches, and other details.

See below for a closer look at the four footwear. TravisScott.com is expected to release the shoes on May 20th, according to Nice Kicks. Following that, on May 27th, Nike SNKRS will release the shoe.

Travis Scott x Nike Air Max 1 "Baroque Brown"

Release Date: 2022

Color: Baroque Brown/Lemon Drop/Wheat/Chile Red

Mens: $160

Style Code: DO9392-200

Pre-School: $85

Style Code: DN4169-200

Infant & Toddler: $70

Style Code: DN4170-200

Travis Scott x Nike Air Max 1 "Saturn Gold"

Release Date: 2022

Color: N/A

Mens: $160

Style Code: DO9392-700

Travis Scott x Nike Air Trainer 1 "Wheat"

Restock Date: May 27th, 2022 (Friday)

Original Release Date: May 20th, 2022 (Friday)

Color: N/A

Mens: $140

Style Code: DR7515-200

Travis Scott x Nike Air Trainer 1 "Grey Haze"

Restock Date: May 27th, 2022 (Friday)

Original Release Date: May 20th, 2022 (Friday)

Color: N/A

Mens: $140

Style Code: DR7515-001

Bloomberg

7 months ago

Expulsion of ten million Ukrainians

According to recent data from two UN agencies, ten million Ukrainians have been displaced.

The International Organization for Migration (IOM) estimates nearly 6.5 million Ukrainians have relocated. Most have fled the war zones around Kyiv and eastern Ukraine, including Dnipro, Zhaporizhzhia, and Kharkiv. Most IDPs have fled to western and central Ukraine.

Since Russia invaded on Feb. 24, 3.6 million people have crossed the border to seek refuge in neighboring countries, according to the latest UN data. While most refugees have fled to Poland and Romania, many have entered Russia.

Internally displaced figures are IOM estimates as of March 19, based on 2,000 telephone interviews with Ukrainians aged 18 and older conducted between March 9-16. The UNHCR compiled the figures for refugees to neighboring countries on March 21 based on official border crossing data and its own estimates. The UNHCR's top-line total is lower than the country totals because Romania and Moldova totals include people crossing between the two countries.

Sources: IOM, UNHCR

According to IOM estimates based on telephone interviews with a representative sample of internally displaced Ukrainians, over 53% of those displaced are women, and over 60% of displaced households have children.

Solomon Ayanlakin

1 month ago

Metrics for product management and being a good leader

Never design a product without explicit metrics and tracking tools.

Imagine driving cross-country without a dashboard. How do you know your school zone speed? Low gas? Without a dashboard, you can't monitor your car. You can't improve what you don't measure, as Peter Drucker said. Product managers must constantly enhance their understanding of their users, how they use their product, and how to improve it for optimum value. Customers will only pay if they consistently acquire value from your product.

I’m Solomon Ayanlakin. I’m a product manager at CredPal, a financial business that offers credit cards and Buy Now Pay Later services. Before falling into product management (like most PMs lol), I self-trained as a data analyst, using Alex the Analyst's YouTube playlists and DannyMas' virtual data internship. This article aims to help product managers, owners, and CXOs understand product metrics, give a methodology for creating them, and execute product experiments to enhance them.

☝🏽Introduction

Product metrics assist companies track product performance from the user's perspective. Metrics help firms decide what to construct (feature priority), how to build it, and the outcome's success or failure. To give the best value to new and existing users, track product metrics.

Why should a product manager monitor metrics?

to assist your users in having a "aha" moment

To inform you of which features are frequently used by users and which are not

To assess the effectiveness of a product feature

To aid in enhancing client onboarding and retention

To assist you in identifying areas throughout the user journey where customers are satisfied or dissatisfied

to determine the percentage of returning users and determine the reasons for their return

📈 What Metrics Ought a Product Manager to Monitor?

What indicators should a product manager watch to monitor product health? The metrics to follow change based on the industry, business stage (early, growth, late), consumer needs, and company goals. A startup should focus more on conversion, activation, and active user engagement than revenue growth and retention. The company hasn't found product-market fit or discovered what features drive customer value.

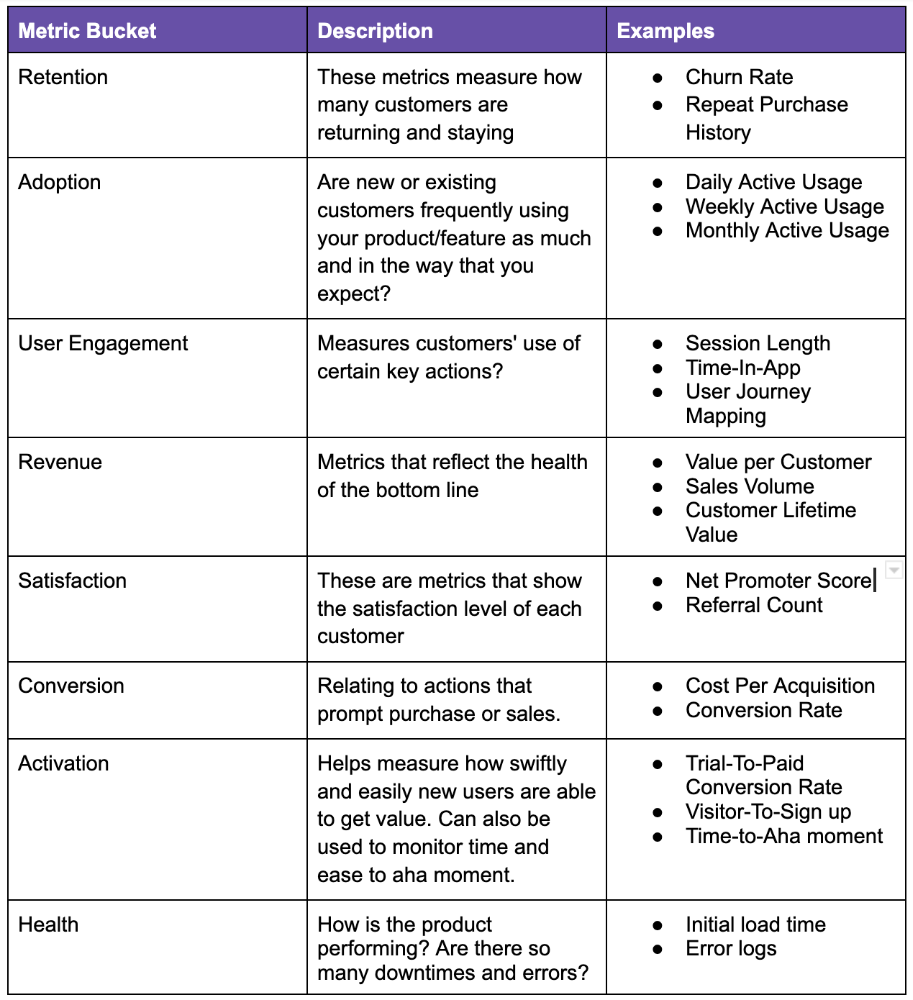

Depending on your use case, company goals, or business stage, here are some important product metric buckets:

All measurements shouldn't be used simultaneously. It depends on your business goals and what value means for your users, then selecting what metrics to track to see if they get it.

Some KPIs are more beneficial to track, independent of industry or customer type. To prevent recording vanity metrics, product managers must clearly specify the types of metrics they should track. Here's how to segment metrics:

The North Star Metric, also known as the Focus Metric, is the indicator and aid in keeping track of the top value you provide to users.

Primary/Level 1 Metrics: These metrics should either add to the north star metric or be used to determine whether it is moving in the appropriate direction. They are metrics that support the north star metric.

These measures serve as leading indications for your north star and Level 2 metrics. You ought to have been aware of certain problems with your L2 measurements prior to the North star metric modifications.

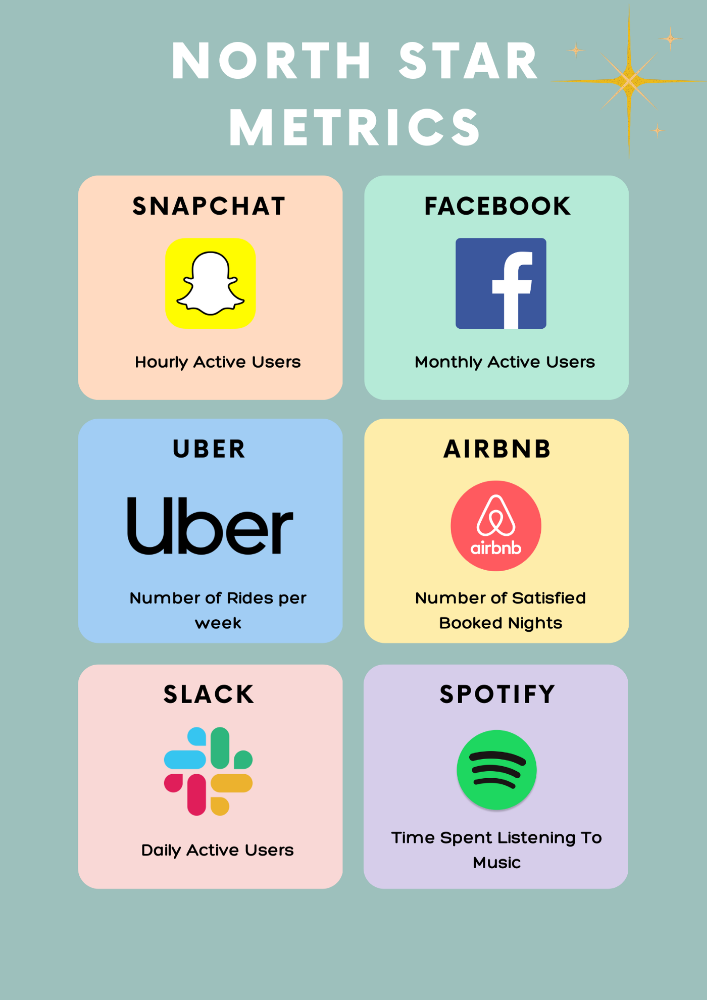

North Star Metric

This is the key metric. A good north star metric measures customer value. It emphasizes your product's longevity. Many organizations fail to grow because they confuse north star measures with other indicators. A good focus metric should touch all company teams and be tracked forever. If a company gives its customers outstanding value, growth and success are inevitable. How do we measure this value?

A north star metric has these benefits:

Customer Obsession: It promotes a culture of customer value throughout the entire organization.

Consensus: Everyone can quickly understand where the business is at and can promptly make improvements, according to consensus.

Growth: It provides a tool to measure the company's long-term success. Do you think your company will last for a long time?

How can I pick a reliable North Star Metric?

Some fear a single metric. Ensure product leaders can objectively determine a north star metric. Your company's focus metric should meet certain conditions. Here are a few:

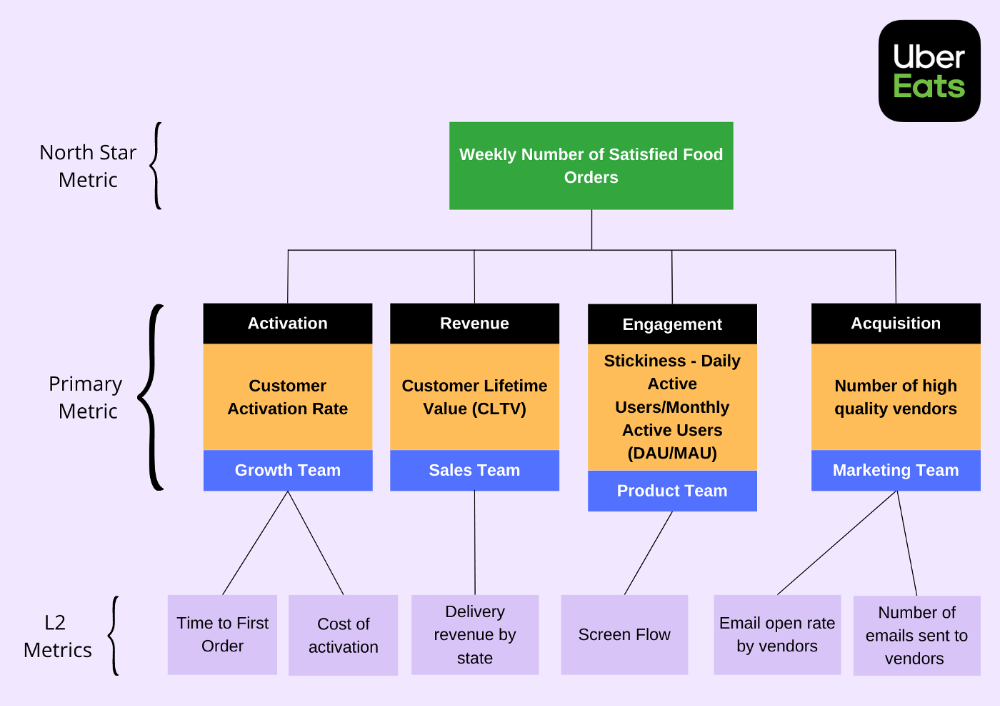

A good focus metric should reflect value and, as such, should be closely related to the point at which customers obtain the desired value from your product. For instance, the quick delivery to your home is a value proposition of UberEats. The value received from a delivery would be a suitable focal metric to use. While counting orders is alluring, the quantity of successfully completed positive review orders would make a superior north star statistic. This is due to the fact that a client who placed an order but received a defective or erratic delivery is not benefiting from Uber Eats. By tracking core value gain, which is the number of purchases that resulted in satisfied customers, we are able to track not only the total number of orders placed during a specific time period but also the core value proposition.

Focus metrics need to be quantifiable; they shouldn't only be feelings or states; they need to be actionable. A smart place to start is by counting how many times an activity has been completed.

A great focus metric is one that can be measured within predetermined time limits; otherwise, you are not measuring at all. The company can improve that measure more quickly by having time-bound focus metrics. Measuring and accounting for progress over set time periods is the only method to determine whether or not you are moving in the right path. You can then evaluate your metrics for today and yesterday. It's generally not a good idea to use a year as a time frame. Ideally, depending on the nature of your organization and the measure you are focusing on, you want to take into account on a daily, weekly, or monthly basis.

Everyone in the firm has the potential to affect it: A short glance at the well-known AAARRR funnel, also known as the Pirate Metrics, reveals that various teams inside the organization have an impact on the funnel. Ideally, the NSM should be impacted if changes are made to one portion of the funnel. Consider how the growth team in your firm is enhancing customer retention. This would have a good effect on the north star indicator because at this stage, a repeat client is probably being satisfied on a regular basis. Additionally, if the opposite were true and a client churned, it would have a negative effect on the focus metric.

It ought to be connected to the business's long-term success: The direction of sustainability would be indicated by a good north star metric. A company's lifeblood is product demand and revenue, so it's critical that your NSM points in the direction of sustainability. If UberEats can effectively increase the monthly total of happy client orders, it will remain in operation indefinitely.

Many product teams make the mistake of focusing on revenue. When the bottom line is emphasized, a company's goal moves from giving value to extracting money from customers. A happy consumer will stay and pay for your service. Customer lifetime value always exceeds initial daily, monthly, or weekly revenue.

Great North Star Metrics Examples

🥇 Basic/L1 Metrics:

The NSM is broad and focuses on providing value for users, while the primary metric is product/feature focused and utilized to drive the focus metric or signal its health. The primary statistic is team-specific, whereas the north star metric is company-wide. For UberEats' NSM, the marketing team may measure the amount of quality food vendors who sign up using email marketing. With quality vendors, more orders will be satisfied. Shorter feedback loops and unambiguous team assignments make L1 metrics more actionable and significant in the immediate term.

🥈 Supporting L2 metrics:

These are supporting metrics to the L1 and focus metrics. Location, demographics, or features are examples of L1 metrics. UberEats' supporting metrics might be the number of sales emails sent to food vendors, the number of opens, and the click-through rate. Secondary metrics are low-level and evident, and they relate into primary and north star measurements. UberEats needs a high email open rate to attract high-quality food vendors. L2 is a leading sign for L1.

Where can I find product metrics?

How can I measure in-app usage and activity now that I know what metrics to track? Enter product analytics. Product analytics tools evaluate and improve product management parameters that indicate a product's health from a user's perspective.

Various analytics tools on the market supply product insight. From page views and user flows through A/B testing, in-app walkthroughs, and surveys. Depending on your use case and necessity, you may combine tools to see how users engage with your product. Gainsight, MixPanel, Amplitude, Google Analytics, FullStory, Heap, and Pendo are product tools.

This article isn't sponsored and doesn't market product analytics tools. When choosing an analytics tool, consider the following:

Tools for tracking your Focus, L1, and L2 measurements

Pricing

Adaptations to include external data sources and other products

Usability and the interface

Scalability

Security

An investment in the appropriate tool pays off. To choose the correct metrics to track, you must first understand your business need and what value means to your users. Metrics and analytics are crucial for any tech product's growth. It shows how your business is doing and how to best serve users.