
Alex Mathers

3 years ago

400 articles later, nobody bothered to read them.

Writing for readers:

14 years of daily writing.

I post practically everything on social media. I authored hundreds of articles, thousands of tweets, and numerous volumes to almost no one.

Now tens of thousands of readers regularly praise my work.

I despised writing. I'm stuck now.

I've learned what readers like and what they don't.

Here are some essential guidelines for writing with impact:

Readers won't understand your work if you can't.

Though obvious, this slipped me up. Share your truths.

Stories engage human brains.

Showing the journey of a person from worm to butterfly inspires the human spirit.

Overthinking hinders powerful writing.

The best ideas come from inner understanding in between thoughts.

Avoid writing to find it. Write.

Writing a masterpiece isn't motivating.

Write for five minutes to simplify. Step-by-step, entertaining, easy steps.

Good writing requires a willingness to make mistakes.

So write loads of garbage that you can edit into a good piece.

Courageous writing.

A courageous story will move readers. Personal experience is best.

Go where few dare.

Templates, outlines, and boundaries help.

Limitations enhance writing.

Excellent writing is straightforward and readable, removing all the unnecessary fat.

Use five words instead of nine.

Use ordinary words instead of uncommon ones.

Readers desire relatability.

Too much perfection will turn them off.

Write to solve an issue if you can't think of anything to write.

Alternatively, read for inspiration. The best authors read.

Every tweet, thread, and novel must have a central idea.

What's its point?

Lacking one can make writing confusing.

Don't direct your reader.

Readers quit reading. Demonstrate, describe, and relate.

Even if no one responds, have fun. If you hate writing it, the reader will too.

Ben Chino

3 years ago

100-day SaaS buildout.

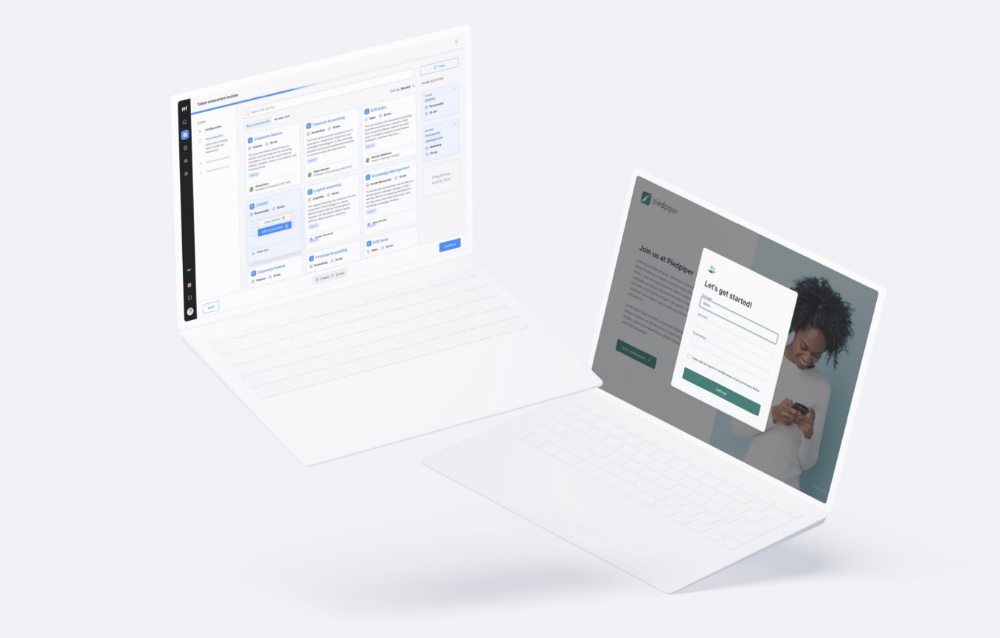

We're opening up Maki through a series of Medium posts. We'll describe what Maki is building and how. We'll explain how we built a SaaS in 100 days. This isn't a step-by-step guide to starting a business, but a product philosophy to help you build quickly.

Focus on end-users.

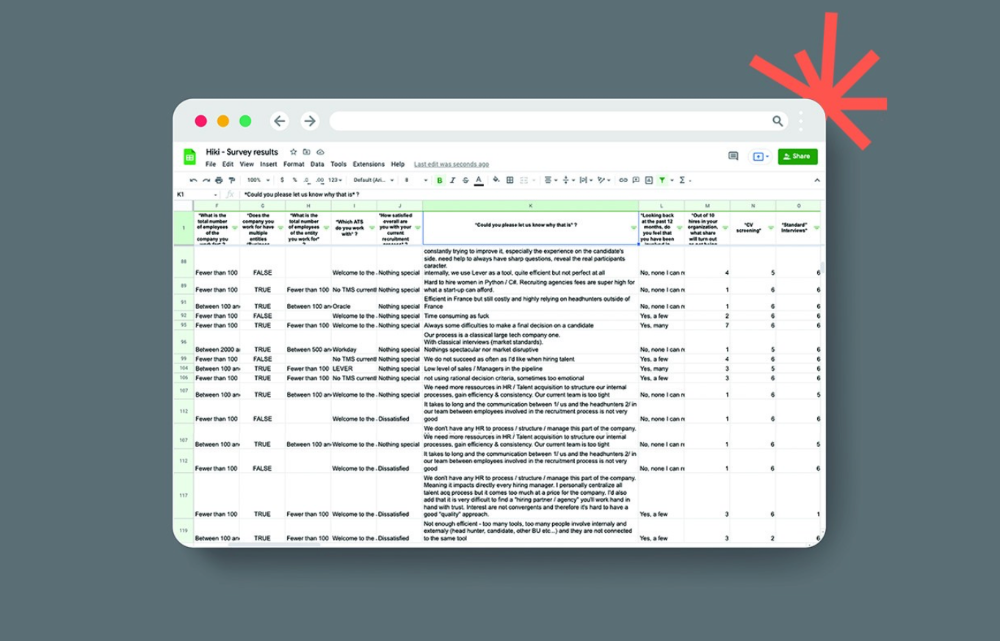

This may seem obvious, but it's important to talk to users first. When we started thinking about Maki, we interviewed 100 HR directors from SMBs, Next40 scale-ups, and major enterprises to understand their concerns. We initially thought about the future of employment, but most of their worries centered on recruitment. They told us: "We don't have a clear recruiting process, it's time-consuming, we recruit clones, we don't support diversity," and so on. As hiring managers ourselves, we couldn't help but agree.

Co-create your product with your end-users.

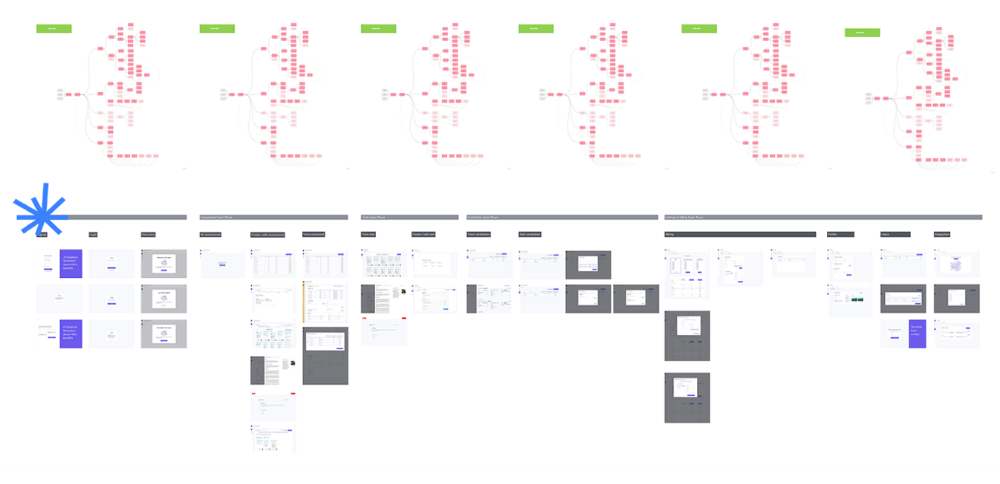

We went to the drawing board and read as many books as possible. When we started getting a sense for a solution, we surveyed 100 more operational HR specialists to validate the idea and get a feel for our potential answer. This confirmed our direction: help companies hire more objectively and efficiently.

Back to the drawing board, we designed our first flows and screens. We organized sessions with certain survey respondents to show them our early work and get comments. We got great input that helped us build Maki, and we met some consumers. Obsess about users and execute alongside them.

Don’t shoot for the moon, yet. Make pragmatic choices first.

Once we were convinced, we began building. To launch a SaaS in 100 days, we needed an operating principle that allowed us to accelerate while still providing a reliable, secure, scalable experience. We focused on adding value and outsourced everything else. Example:

Concentrate on adding value. Reuse existing bricks.

When determining which technology to use, we looked at our strengths and at the future to see what would last. We chose Node.js for the backend and React for the frontend, both with TypeScript. We thought this stack would scale well since it would attract more talent and the mature surrounding ecosystem would help us go quicker.

We looked for ways to bootstrap services while laying strong foundations that might support millions of users. We built our backend services on NestJS so we could extend into microservices later. Hasura, a GraphQL API engine, automatically exposes Postgres data through a GraphQL layer. MUI's ready-to-use components powered our design system. We used well-maintained open-source projects to speed up certain tasks.

We outsourced important components of our platform (Auth0 for authentication, Stripe for billing, SendGrid for notifications) because, let's face it, we couldn't do better. We chose to host our complete infrastructure (SQL, Cloud Run, logs, monitoring) on GCP so we wouldn't have to juggle numerous providers.

Focus on your business, use existing bricks for the rest. For the curious, we'll shortly publish articles detailing each stage.

Most importantly, empower people and step back.

We couldn't have done this without the incredible people who have supported us from the start. Since Powership is one of our key values, we provided our staff the power to make autonomous decisions from day one. Because we believe our firm is its people, we hired smart builders and let them build.

Nicolas left Spendesk to create scalable interfaces using react-router, react-query, and MUI. JD joined Swile and chose Hasura as our GraphQL engine. Jérôme chose NestJS to build our backend services. Since then, Justin, Ben, Anas, Yann, Benoit, and others have followed suit.

If you consider your team a collective brain, you should let them make decisions instead of telling them what to do. You'll make mistakes, but you'll go faster and learn faster overall.

Invest in great talent and develop a strong culture from the start. That's how you build a SaaS in 100 days.

MAJESTY AliNICOLE WOW!

3 years ago

YouTube's faceless videos are growing in popularity, but this is nothing new.

I've always bucked social media norms, and YouTube is no exception. When everyone else zagged toward traditional video, I zigged. Audio, picture personality animation, thought movies, and slideshow videos are the most popular and profitable.

YouTube's business is shifting. While most video experts swear by the idea that YouTube success is all about making personal and professional Face-Share-Videos, those who use YouTube for business know things are different.

In this article, I will share concepts from my mini master class Figures to Followers: Prioritizing Purposeful Profits Over Popularity on YouTube to Create the Win-Win for You, Your Audience & More and my forthcoming publication The WOWTUBE-PRENEUR FACTOR EVOLUTION: The Basics of Powerfully & Profitably Positioning Yourself as a Video Communications Authority to Broadcast Your WOW Effect as a Video Entrepreneur.

I've researched the psychology, anthropology, and anatomy of significant social media platforms as an entrepreneur and social media marketing expert. While building my YouTube empire, I've paid particular attention to what works for short, mid, and long-term success, whether it's a niche-focused, lifestyle, or multi-interest channel.

Most new, semi-new, and seasoned YouTubers feel vlog-style or live-on-camera videos are popular. Faceless, animated, music-text-based, and slideshow videos do well for businesses.

Buyer-consumers and content-consumers think very differently when absorbing content. Profitability and popularity are closely related, but most people become popular through traditional means without becoming profitable.

In my experience, Faceless videos are more profitable, although it depends on the channel's style. Several professionals now teach in their courses that non-traditional videos are making the difference in their business success and popularity.

Face-Share-Personal-Touch videos make audiences feel like they know the personality, but they're not profitable.

Most spend hours creating articles, videos, and thumbnails that look good. That's how most YouTubers found success in the past, but not anymore.

Looking the part and performing a typical role in videos doesn't convert well, especially for newbie channels.

Working with video marketers and YouTubers for years, I've noticed that most struggle to be consistent with content publishing since they exclusively use formats that need extensive development. Camera and green screen set ups, shooting/filming, and editing for post productions require their time, making it less appealing to post consistently, especially if they're doing all the work themselves.

Because they won't make simple format videos or audio videos with an overlay image, they overcomplicate the procedure (even with YouTube Shorts), and they leave their channels for weeks or months. Again, they believe YouTube only allows specific types of videos. Even though this procedure isn't working, they plan to keep at it.

I teach that a successful YouTube channel needs multiple video formats to suit viewer needs. Face-Share-Personal-Touch and Faceless videos are both useful.

How people engage with YouTube content has changed over the years, and the average customer is no longer interested in an all-video channel.

Face-Share-Personal-Touch videos are great for:

Google Live

Online training

Giving listeners a different way to access a podcast that is broadcast on sites like Anchor, BlogTalkRadio, Spreaker, Google, Apple Store, and others. Many people enjoy recording themselves on camera while performing the internet radio, Facebook, or Instagram Live versions of their podcasts.

Video blog updates

And more

Faceless videos are popular for business and benefit both entrepreneurs and audiences.

For the business owner/entrepreneur…

Less production time results in time and dollar savings.

It enables the business owner to demonstrate diversity in content development.

For the Audience…

The channel offers a variety of appealing content options.

Viewers aren't stuck with the same monotonous, overly repetitive format.

Below are a couple of videos from the channel of YouTube guru Make Money Matt, which has over 347K subscribers.

Enjoy

24 Best Niches to Make Money on YouTube Without Showing Your Face

Make Money on YouTube Without Making Videos (Free Course)

In conclusion, you have everything it takes to build your own YouTube brand and empire. Learn the rules, then adapt them to succeed.

Please reread this and the other suggested articles for optimal benefit.

I hope this helped. How has this article helped you? Follow me for more articles like this and more multi-mission expressions.


Theresa W. Carey

3 years ago

How Payment for Order Flow (PFOF) Works

What is PFOF?

PFOF is a brokerage firm's compensation for directing orders to different parties for trade execution. The brokerage firm receives fractions of a penny per share for directing the order to a market maker.

Each optionable stock could have thousands of contracts, so market makers dominate options trades. Order flow payments average less than $0.50 per option contract.
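To put those fractions of a penny in perspective, here is a minimal Python sketch of the arithmetic. The per-share and per-contract rates are hypothetical illustrations chosen to fit the ranges described above, not figures from any broker's report, and the function names are invented for this example.

```python
def equity_pfof(shares: int, rate_per_share: float) -> float:
    """Payment a broker receives for routing an equity order."""
    return shares * rate_per_share


def options_pfof(contracts: int, rate_per_contract: float) -> float:
    """Payment a broker receives for routing an options order."""
    return contracts * rate_per_contract


# Hypothetical rates: a fraction of a penny per share,
# and less than $0.50 per option contract.
equity_payment = equity_pfof(shares=500, rate_per_share=0.002)
options_payment = options_pfof(contracts=10, rate_per_contract=0.40)

print(f"Equity order payment:  ${equity_payment:.2f}")
print(f"Options order payment: ${options_payment:.2f}")
```

Each payment is small, which is why the practice only becomes a meaningful revenue source across a very large number of orders.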

Order Flow Payments (PFOF) Explained

The proliferation of exchanges and electronic communication networks (ECNs) has complicated equity and options trading. Ironically, Bernard Madoff, the Ponzi schemer, pioneered pay-for-order-flow.

In a December 2000 study on PFOF, the SEC said, "Payment for order flow is a method of transferring trading profits from market making to brokers who route customer orders to specialists for execution."

Given the complexity of trading thousands of stocks on multiple exchanges, market making has grown. Market makers are large firms that specialize in a set of stocks and options, maintaining an inventory of shares and contracts for buyers and sellers. Market makers are paid the bid-ask spread. Spreads have narrowed since 2001, when exchanges switched to decimals. A market maker's ability to play both sides of trades is key to profitability.

Benefits, requirements

A broker receives fees from a third party for order flow, sometimes without a client's knowledge. This invites conflicts of interest and criticism. Regulation NMS from 2005 requires brokers to disclose their policies and financial relationships with market makers.

Your broker must tell you if it's paid to send your orders to specific parties. This must be done at account opening and annually. The firm must disclose whether it participates in payment-for-order-flow and, upon request, every paid order. Brokerage clients can request payment data on specific transactions, but the response takes weeks.

Order flow payments save money. Smaller brokerage firms can benefit from routing orders through market makers and getting paid. This allows brokerage firms to send their orders to another firm to be executed with other orders, reducing costs. The market maker or exchange benefits from additional share volume, so it pays brokerage firms to direct traffic.

Retail investors, who lack bargaining power, may benefit from order-filling competition. Arrangements to steer the business in one direction invite wrongdoing, which can erode investor confidence in financial markets and their players.

Pay-for-order-flow criticism

It has always been controversial. Several firms offering zero-commission trades in the late 1990s routed orders to untrustworthy market makers. During the end of fractional pricing, the smallest stock spread was $0.125. Options spreads widened. Traders found that some of their "free" trades cost them a lot because they weren't getting the best price.
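The hidden cost of a "free" trade can be sketched with simple arithmetic. In this minimal Python example, the prices, the order size, and the $10 commission used for comparison are all hypothetical; only the $0.125 minimum spread comes from the text above.

```python
def execution_shortfall(shares: int, fill_price: float, best_price: float) -> float:
    """Extra amount a buyer pays when filled above the best available price."""
    return shares * (fill_price - best_price)


TICK = 0.125  # smallest spread under fractional pricing (one eighth of a dollar)

best_ask = 20.00                 # hypothetical best available price
fill_price = best_ask + TICK     # order filled one tick worse
hidden_cost = execution_shortfall(shares=1000, fill_price=fill_price, best_price=best_ask)

hypothetical_commission = 10.00  # assumed fee at a commission-charging broker

print(f"Hidden cost of the zero-commission trade: ${hidden_cost:.2f}")
print(f"Commission avoided: ${hypothetical_commission:.2f}")
```

Under these assumptions, a fill that is a single tick worse on 1,000 shares costs far more than the commission the trader avoided.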

The SEC then studied the issue, focusing on options trades, and nearly decided to ban PFOF. The proliferation of options exchanges narrowed spreads because there was more competition for executing orders. Options market makers said their services provided liquidity. In its conclusion, the report said, "While increased multiple-listing produced immediate economic benefits to investors in the form of narrower quotes and effective spreads, these improvements have been muted with the spread of payment for order flow and internalization."

The SEC allowed payment for order flow to continue to prevent exchanges from gaining monopoly power. It was also unclear what would happen to trades if the practice were outlawed. The SEC requires brokers to disclose financial arrangements with market makers. Since then, the SEC has watched closely.

2020 Order Flow Payment

Rule 605 and Rule 606 reports, published on a broker's website, show execution quality and order flow payment statistics. Despite being required by the SEC, these reports can be hard to find. The SEC mandated these reports in 2005, but the format and reporting requirements have changed over the years, most recently in 2018.

Brokers and market makers formed a working group with the Financial Information Forum (FIF) to standardize order execution quality reporting. Only one retail brokerage (Fidelity) and one market maker (Two Sigma Securities) remain in the group. FIF notes that the 605/606 reports "do not provide the level of information that allows a retail investor to gauge how well a broker-dealer fills a retail order compared to the NBBO (national best bid or offer) at the time the order was received by the executing broker-dealer."

In the first quarter of 2020, Rule 606 reporting changed to require brokers to report net payments from market makers for S&P 500 and non-S&P 500 equity trades and options trades. Brokers must disclose payment rates per 100 shares by order type (market orders, marketable limit orders, non-marketable limit orders, and other orders).
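A rate quoted per 100 shares translates into a net payment as follows. This is a rough Python sketch: the four order types come from the paragraph above, but every rate and share count is a made-up illustration, not data from any actual Rule 606 report.

```python
# Hypothetical payment rates, in dollars per 100 shares, by order type.
RATES_PER_100_SHARES = {
    "market": 0.20,
    "marketable_limit": 0.20,
    "non_marketable_limit": 0.30,
    "other": 0.15,
}


def net_payment(shares_by_type: dict, rates: dict) -> float:
    """Total payment for shares routed, given rates quoted per 100 shares."""
    return sum(
        shares / 100 * rates[order_type]
        for order_type, shares in shares_by_type.items()
    )


# Hypothetical quarter of routed volume.
routed = {"market": 10_000, "non_marketable_limit": 5_000}
total = net_payment(routed, RATES_PER_100_SHARES)

print(f"Net payment received: ${total:.2f}")
```

Breaking the figure out by order type, as the rule requires, shows which kinds of orders generate the most payment for a broker.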

Richard Repetto, Managing Director of New York-based Piper Sandler & Co., publishes a report on Rule 606 broker reports. Repetto focused on Charles Schwab, TD Ameritrade, E-TRADE, and Robinhood in Q2 2020. Repetto reported that payment for order flow was higher in the second quarter than the first due to increased trading activity, and that options paid more than equities.

Repetto says PFOF contributions rose overall. Schwab has the lowest options rates, while TD Ameritrade and Robinhood have the highest. Robinhood had the highest equity rate. Repetto assumes Robinhood's ability to charge higher PFOF reflects their order flow profitability and that they receive a fixed rate per spread (vs. a fixed rate per share by the other brokers).

Robinhood's PFOF in equities and options grew the most quarter-over-quarter of the four brokers Piper Sandler analyzed, as did their implied volumes. All four brokers saw higher PFOF rates.

TD Ameritrade took the biggest income hit when cutting trading commissions in fall 2019, and this report shows they're trying to make up the shortfall by routing orders for additional PFOF. Robinhood refuses to disclose trading statistics using the same metrics as the rest of the industry, offering only a vague explanation on their website.

Summary

Payment for order flow has become a major source of revenue as brokers offer no-commission equity (stock and ETF) orders. For retail investors, payment for order flow poses a problem because the brokerage may route orders to a market maker for its own benefit, not the investor's.

Infrequent or small-volume traders may not notice their broker's PFOF practices. Frequent traders and those who trade larger quantities should learn about their broker's order routing system to ensure they're not losing out on price improvement due to a broker prioritizing payment for order flow.

This post is a summary. Read full article here

Eric Esposito

3 years ago

$100M in NFT TV shows from Fox

Fox executives will invest $100 million in NFT-based TV shows. Fox brought in "Rick and Morty" co-creator Dan Harmon to create "Krapopolis."

Fox's Blockchain Creative Labs (BCL) will develop these NFT TV shows with Bento Box Entertainment. BCL markets Fox's WWE "Moonsault" NFT.

Fox said it would use the $100 million to build a "creative community" and "brand ecosystem." The media giant mentioned using these funds for NFT "benefits."

"Krapopolis" will be a Greek-themed animated comedy, per Rarity Sniper. Initial reports said NFT buyers could collaborate on "character development" and get exclusive perks.

Fox Entertainment may drop "Krapopolis" NFTs on Ethereum, according to new reports. Fox says it will soon release more details on its NFT plans for "Krapopolis."

Media Giants Favor "NFT Storytelling"

"Krapopolis" is one of the largest "NFT storytelling" experiments due to Dan Harmon's popularity and Fox Entertainment's reach. Many celebrities have begun exploring Web3 for TV shows.

Mila Kunis' animated sitcom "The Gimmicks" lets fans direct the show. Any "Gimmick" NFT holder could contribute to episode plots.

"The Gimmicks" lets NFT holders write fan fiction about their avatars. If show producers like what they read, their NFT may appear in an episode.

Rob McElhenney recently launched "Adimverse," a Web3 writers' community. Anyone with an "Adimverse" NFT can collaborate on creative projects and share royalties.

Many blue-chip NFTs are appearing in movies and TV shows. Coinbase will release Bored Ape Yacht Club shorts at NFT.NYC. Reese Witherspoon is working on a World of Women NFT series.

PFP NFT collections have Hollywood media partners. Guy Oseary, Madonna's manager, represents the World of Women and Bored Ape Yacht Club collections. The Doodles signed with Billboard's Julian Holguin and the Cool Cats with CAA.

Web3 and NFTs are changing how many filmmakers tell stories.

Sam Bourgi

4 years ago

DAOs are legal entities in Marshall Islands.

The Pacific island state recognizes decentralized autonomous organizations.

The Republic of the Marshall Islands has recognized decentralized autonomous organizations (DAOs) as legal entities, giving collectively owned and managed blockchain projects global recognition.

The Marshall Islands amended its Non-Profit Entities Act 2021, which now recognizes DAOs: blockchain-based entities governed by self-organizing communities. The amendment made it possible to incorporate Admiralty LLC, the island country's first DAO. MIDAO Directory Services Inc., a domestic organization established to assist DAOs in the Marshall Islands, assisted in the incorporation.

The new law currently allows any DAO to register and operate in the Marshall Islands.

“This is a unique moment to lead,” said Bobby Muller, former Marshall Islands chief secretary and co-founder of MIDAO. He believes DAOs will help create “more efficient and less hierarchical” organizations.

The Marshall Islands hopes to become a global hub for DAO registration, domicile, use cases, and mass adoption. Muller added:

"This includes low-cost incorporation, a supportive government with internationally recognized courts, and a technologically open environment."

According to the World Bank, the Marshall Islands is an independent island state in the Pacific Ocean near the Equator. The island state has been actively exploring use cases for digital assets since at least 2018, including a blockchain-based cryptocurrency that would be legal tender alongside the US dollar.

In February 2018, the Marshall Islands approved the creation of a new cryptocurrency, Sovereign (SOV). As expected, the IMF has criticized the plan, citing concerns that a digital sovereign currency would jeopardize the state's financial stability. The IMF has also criticized El Salvador, the first country to recognize Bitcoin (BTC) as legal tender.

Marshall Islands senator David Paul said the DAO legislation does not pose the same issues as a government-backed cryptocurrency. “A sovereign digital currency is financial and raises concerns about money laundering,” he said. The DAO law is more about giving DAOs legal recognition so they can make their case to regulators, investors, and consumers.