More on Leadership

Sean Bloomfield

3 years ago

How Jeff Bezos wins meetings over

We've all been there: You make a suggestion to your team at a meeting, and most people appear on board, but a small minority aren't. How can we achieve collective buy-in when we need to move forward but don't know how to deal with some team members' perceived intransigence?

Steps:

1. Investigate the divergent opinions: Begin by sincerely attempting to comprehend the viewpoint of your disagreeing coworkers. Maybe it makes sense to switch horses in the middle of the race. Have you completely overlooked a blind spot, such as a political concern that could arise as an unexpected result of proceeding? This is crucial to ensure that the person or people feel heard as well as to advance the goals of the team. Sometimes all individuals need is a little affirmation before they fully accept your point of view.

If, after studying and evaluating, you see the need to align with the divergent position, do it. It says a lot about you as a leader to be someone who always lets the best idea win, regardless of where it came from.

If, after investigation and assessment, you determine that you must adhere to the original strategy, we go to Step 2.

2. Disagree and Commit: Jeff Bezos, CEO of Amazon, has had this experience, and Julie Zhuo describes how he handles it in her book The Making of a Manager.

It's OK to disagree when the team is moving in the right direction, but it's not OK to accidentally or purposefully damage the team's efforts because you disagree. Let the team know your opinion, but then help them achieve company goals even if you disagree. Who knows? You could be wrong in today's ever-changing environment.

So next time you have a team member who seems to be dissenting and you've tried the previous tactics, you may ask the individual in the meeting: "I understand you, but I don't want us to leave without you on board. I need your permission to commit to this approach. Would you give us your commitment?"

Julie Zhuo

3 years ago

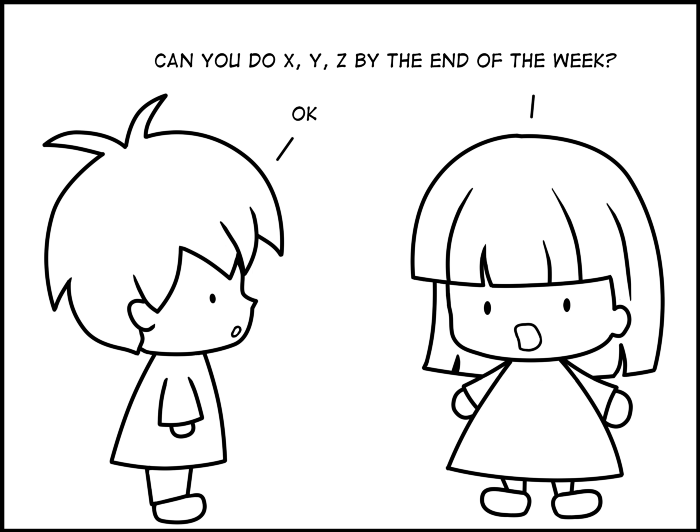

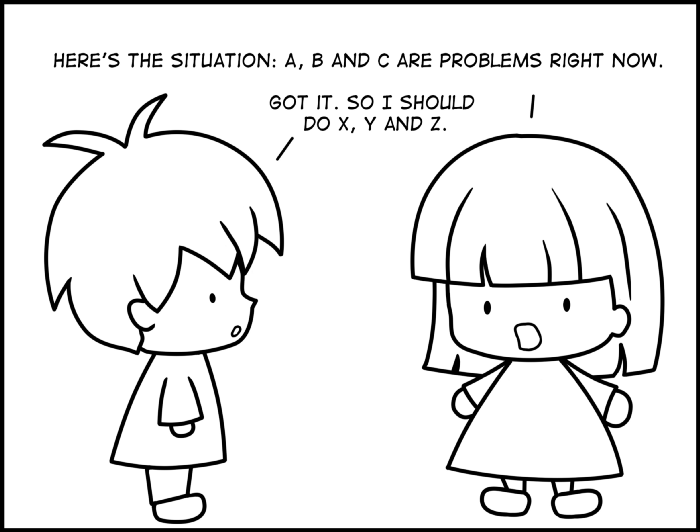

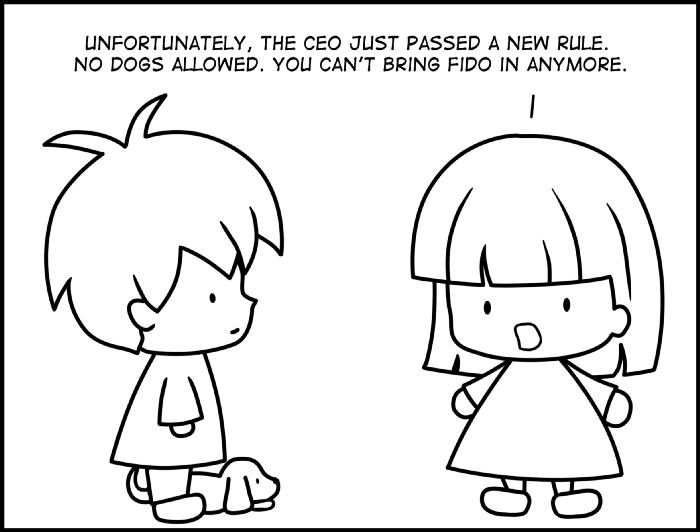

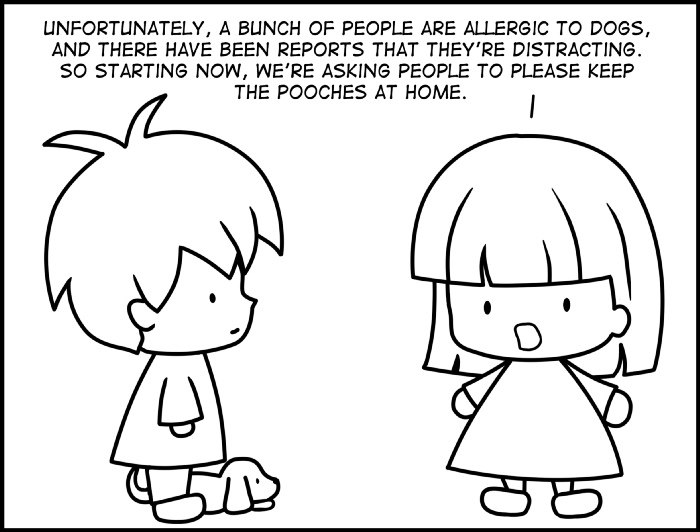

Comparing poor and excellent managers

10-sketch explanation

Choosing Tasks

Bringing News

Carrying Out 1:1s

Providing Critique

Managing Turbulence

Hunter Walk

3 years ago

Is it bad of me to want our portfolio companies to generate greater returns for outside investors than they did for us as venture capitalists?

Wishing for Lasting Companies, Not Penny Stocks or Goodwill Write-Downs

Get me a NASCAR-style company-logoed cremation urn (notice to the executor of my will, there's gonna be a lot of weird requests). I believe in working on projects that would be on your tombstone. As the Homebrew logo is tattooed on my shoulder, expanding the portfolio to my posthumous commemoration is easy. But this isn't an IRR victory lap; it's a hope that the firms we worked for would last beyond my lifetime.

![a little boy planting a dollar bill in the ground and pouring a watering can out on it, digital art [DALL-E]](https://storage.googleapis.com/int3grity/posts/V62hkReDx56S/images/vMwzqrYeXaYnUIMXAdTY9)

Venture investors too often take credit for or distance themselves from startups based on circumstances. Successful companies prompt stories of crucial introductions, strategy conversations, and other value added. Defeats? Even when our tenure involved Board service, or the company had systematic ethical violations, the line is "I'm just a small investor, so there's not much I can do." Since I'm guilty too, I'm tossing stones from within the glass house (although we try to own our decisions through the lifecycle).

Post-exit company trajectories are usually unconfounded. You're off the cap table, no longer a shareholder (or a diminishing one as you sell off/distribute), and eventually off the Board. You can cheer for the team or forget about it, but you've set the company free and gone back to portfolio work.

As I look at the downward track of most SPACs and other tarnished IPOs from the last few years, I wonder how I would feel if those were my legacy. Is my job done? Yes. When investing in a business, the odds are against it surviving, let alone thriving and being able to find sunlight. SPAC sponsors, institutional buyers, retail investors: free trade in an open market is their right. Risking and losing capital is the system working! But

We were lead or co-lead investors in our first three funds, but as additional VCs joined the company, we were pushed down the cap table. Voting your shares rarely matters; supporting the firm when they need it does. Being valuable, consistent, and helping the company improve builds trust with the founders.

I hope every startup we sponsor becomes a successful public company before, during, and after we benefit. That's my view of American capitalism. Well, having a stock ticker comes with a lot of garbage, and I support all types of regulatory simplification (in addition to being a personal investor in the Long-Term Stock Exchange). Yet being owned by a large group of investors and making actual gains for them is great. So is seeing someone you met when they were just starting out become a public company CEO without losing their voice, leadership, or beliefs.

I'm just thinking about what we can do from the start to realize value from our investments and build companies with bright futures. Maybe seed venture financing shouldn't impact those outcomes, but I'm not comfortable giving up that obligation.

You might also like

umair haque

3 years ago

The reasons why our civilization is deteriorating

The Industrial Revolution's Curse: Why One Age's Power Prevents the Next Ones

A surprising fact. Recently, we learned that Big Oil's 1970s climate change projections were disturbingly accurate. Of course, we now know that it worked tirelessly to deny climate change, polluting our societies to this day. That's a small example of the Industrial Revolution's curse.

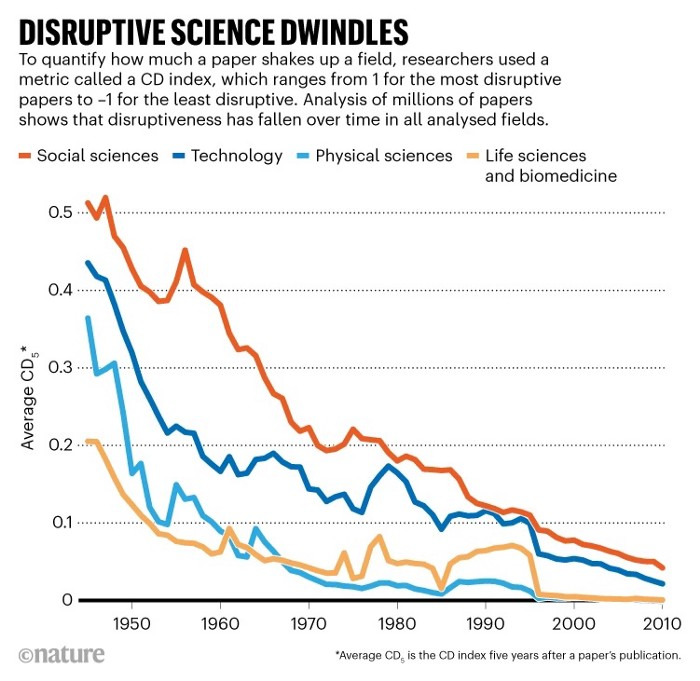

Let me rephrase this nuanced and possibly weird thought. The chart above? Disruptive science is declining. The kind that produces major discoveries, new paradigms, and shattering prejudices.

Not alone. Our civilisation reached a turning point suddenly. Progress stopped and reversed for the first time in centuries.

The Industrial Revolution's Big Bang started it all. At least some humans had riches for the first time, if not all, and with that wealth came many things. Longer, healthier lives since now health may be publicly and privately invested in. For the first time in history, wealthy civilizations could invest their gains in pure research, a good that would have sounded frivolous to cultures struggling to squeeze out the next crop, which required every shoulder to the till.

So. Don't misunderstand what I mean by the Industrial Revolution's curse. I'm not saying industry didn't bring progress. On the contrary. I'm claiming that the Big Bang of Progress is slowing, plateauing, and ultimately reversing. All social indicators show that. From progress itself to disruptive, breakthrough research, everything is slowing down.

It's troubling. Because progress slows and plateaus, pre-modern social problems like fascism, extremism, and fundamentalism return. People crave nostalgic utopias when they lose faith in modernity. That strongman may shield me from this hazardous life. If I accept my place in a blood-and-soil hierarchy, I have a stable, secure position and someone to punch and detest. It's no coincidence that as our civilization hits a plateau of progress, there is a tsunami pulling the world backwards, with people viscerally, openly longing for everything from theocracy to fascism to fundamentalism, an authoritarian strongman to soothe their fears and tell them what to do, whether in Britain, heartland America, India, China, and beyond.

However, one aspect remains unknown. Technology. Let me clarify.

How do most people picture tech? Say that without thinking. Most people think of social media or AI. Well, small correlation engines called artificial neurons are a far cry from biological intelligence, which functions in far more obscure and intricate ways, down to the subatomic level. But let's try it.

Today, tech means AI. But. Do you foresee it?

Consider why civilisation is plateauing and regressing. Because we can no longer provide the most basic necessities at the same rate. On our track, clean air, water, food, energy, medicine, and healthcare will become inaccessible to huge numbers within a decade or three. There isn't enough, which is why prices for food, medicine, and energy keep rising, with only occasional relief.

Why our civilizations are encountering what economists like me term a budget constraint—a hard wall of what we can supply—should be evident. Global warming and extinction. Megafires, megadroughts, megafloods, and failed crops. On a civilizational scale, good luck supplying the fundamentals that way. Industrial food production cannot feed a planet warming past two degrees. Crop failures, droughts, floods. Another example: glaciers melt, rivers dry up, and the planet's fresh water supply contracts like a heart attack.

Now. Let's talk tech again. Mostly AI, maybe phone apps. The unsettling reality is that current technology cannot save humanity. Not much.

AI can do things that have become cliches to titillate the masses. It may talk to you and act like a person. It can generate art, which means reproduce it, but nonetheless, AI art! Despite doubts, it promises to self-drive cars. Unimportant.

We need different technology now. AI won't grow crops in ash-covered fields, cleanse water, halt glaciers from melting, or stop the clear-cutting of the planet's few remaining forests. It's not useless, but on a civilizational scale, it's much less beneficial than its proponents claim. By the time it matures, AI can help deliver therapy, keep old people company, and even drive cars more efficiently. None of it can save our culture.

Expand that scenario. AI's most likely use? Replacing call-center workers. Support. It may help doctors diagnose, surgeons orient, or engineers create more fuel-efficient motors. This is civilizationally marginal.

Non-disruptive. Do you see the connection with the paper that indicated disruptive science is declining? AI exemplifies that. It's called disruptive, yet it's a textbook incremental technology. Oh, cool, I can communicate with a bot instead of a poor human in an underdeveloped country and have the same or more trouble being understood. This bot is making more people unemployed. I can now view a million AI artworks.

AI illustrates our civilization's trap. We're told its innovative technologies will change our lives. But as you can see, it's incremental, delivering small benefits at most, and certainly not enough to balance, let alone solve, the broader problem of steadily dropping living standards as our society hits a wall in its ability to supply itself with the fundamentals.

Contrast AI with disruptive innovations we need. What do we need to avoid a post-Roman Dark Age and preserve our civilization in the coming decades? We must be able to post-industrially produce all our basic needs. We need post-industrial solutions for clean water, electricity, cement, glass, steel, manufacture for garments and shoes, starting with the fossil fuel-intensive plastic, cotton, and nylon they're made of, and even food.

Consider. We have no post-industrial food system. What happens when crop failures, already dangerously accelerating, reach a critical point? Our civilization is vulnerable. Think of ancient civilizations that couldn't survive the drying up of their water sources, the failure of their primary fields, which they assumed the gods would preserve forever, or an earthquake or sickness that killed most of their animals. Bang. Lost. They failed. They splintered, fragmented, and abandoned vast capitals and cities, and suddenly, in history's sight, poof, they were gone.

We're getting close. Decline equals civilizational peril.

We believe dumb notions about AI being disruptive when it's incremental. Most of us don't realize our civilization's risk because we believe these falsehoods. Everyone should know that we cannot create anything at civilizational scale without fossil fuels. Most of us don't know it, so we don't realize that the breakthrough technologies and systems we need don't manipulate information anymore. Instead, they are biotechnologies, largely but not only genetic ones, that can generate food without fossil fuels.

We need another Industrial Revolution. AI, apps, bots, and whatnot won't matter unless you think you can eat and drink them while the world dies and fascists, lunatics, and zealots take democracy's strongholds. That's dramatic, but only because it's already happening. Maybe AI can entertain you in that bunker while society collapses, with smart jokes or a million Mondrian-like artworks. But if civilization is to survive, AI is not what will create the new Industrial Revolution.

The revolution has begun, but only in small ways. Leaders in post-industrial fundamental systems are emerging worldwide. The Netherlands is leading in post-industrial agriculture. That's amazing because it's a tiny country performing well. Right? It's discovering how this kind of agriculture can function at large scale, not just for you and me, aging hippies, cultivating lettuce in our backyards.

Iceland is leading in bioplastics, which, if done well, will be a major advance. Of course, microplastics are drowning the oceans. What should we do, since we can't live without plastic? We need algae-based bioplastics for green plastic.

That's all still young. Any of the above may not function on a civilizational scale. Bioplastics use algae, which can cause problems if overused. None of the aforementioned indicates the next Industrial Revolution is here. On the contrary. It's coming slowly.

We have three decades until everything fails. Before life ends. Curtain down. No more fields, rivers, or weather. Freshwater and life stocks have plummeted. Again, we've peaked and declined in our ability to live at today's relatively rich standards. Game over—no more. On a dying planet, producing the fundamentals for a civilisation that left it too late to construct post-industrial systems becomes next to impossible, with output dropping faster and quicker each year, quarter, and day.

Too slow. That's because it's not really happening. Most people think AI when I say tech. I get a politicized response if I say Green New Deal or Clean Industrial Revolution. Half the individuals I talk to have been politicized into believing that climate change isn't real and that any breakthrough technical progress isn't required, desirable, possible, or genuine. They'll suffer.

The Industrial Revolution curse. Every revolution creates new authorities, which ossify and refuse to relinquish their privileges. For fifty years, Big Oil has denied climate change, even though their scientists predicted it. We also have a software industry and its venture capital power centers that are happy for the average person to think tech means chatbots, not being able to produce basics for a civilization without destroying the planet, and billionaires who buy comms platforms for the same eye-watering amount of money it would take to save life on Earth.

The entire world's vested interests are against the next industrial revolution, which is understandable since they were established from fossil money. From finance to energy to corporate profits to entertainment, power in our world is the result of the last industrial revolution, which means it has no motivation or purpose to give up fossil money, as we are witnessing more brutally out in the open.

Thus, the Industrial Revolution's curse—fossil power—rules our globe. Big Agriculture, Big Pharma, Wall St., Silicon Valley, and many others—including politics, which they buy and sell—are basically fossil power, and they have no interest in generating or letting the next industrial revolution happen. That's why tiny enterprises like those creating bioplastics in Iceland or nations savvy enough to shun fossil power, like the Netherlands, which has a precarious relationship with nature, do it. However, fossil power dominates politics, economics, food, clothes, energy, and medicine, and it has no motivation to change.

Or to allow disruptive innovations again. As they occur, its position becomes increasingly vulnerable. If you were fossil power, would you allow another industrial revolution to destroy your privilege and wealth?

You might, since power and money haven't corrupted you. However, fossil power prevents us from building, creating, and growing what we need to survive as a society. I mean the entire economic, financial, and political power structure from the last industrial revolution, not simply Big Oil. My friends, fossil power's chokehold over our society is likely to continue suffocating the advances that could have spared our civilization from a decline that's now here and spiraling closer to oblivion.

joyce shen

4 years ago

Framework to Evaluate Metaverse and Web3

Everywhere we turn, there's a new metaverse or Web3 debut. Microsoft recently announced a $68.7 BILLION cash purchase of Activision.

Like AI in 2013, blockchain in 2014, and NFTs in 2021, this year's growth feels like it belongs to the metaverse and Web3. We are all bombarded with information, conflicting signals, and a sensation of FOMO.

How can we evaluate the metaverse and Web3 in a noisy, new world? My framework for evaluating upcoming technologies and themes is shown below. I hope you will also find it helpful.

Understand the “pipes” in a new space.

Whatever people say, Metaverse and Web3 will have to coexist with the current Internet. Companies who host, move, and store data over the Internet have a lot of intriguing use cases in Metaverse and Web3, whether in infrastructure, data analytics, or compliance. Hence the following point.

Understand the apps layer and their infrastructure.

Gaming, crypto exchanges, and NFT marketplaces would not exist today if not for technology that enables rapid app creation. Yes, according to Chainalysis and other research, 30–40% of Ethereum is self-hosted, with the rest hosted by large cloud providers. For Microsoft to acquire Activision makes strategic sense. It's not only about the games, but also the infrastructure that supports them.

Follow the money

Understanding how money and wealth flow in a complex and dynamic environment helps build clarity. Unless you are exceedingly wealthy, you have limited ability to significantly engage in the Web3 economy today. Few can just buy 10 ETH and spend it in one day. You must comprehend who benefits from the process, and how that 10 ETH circulates now and possibly tomorrow. Major holders and players control supply and liquidity in any market. Today, most Web3 apps are designed to increase capital inflow so existing significant holders can utilize it to create a nascent Web3 economy. When you see a new Metaverse or Web3 application, remember how money flows.

What is the use case?

What does the app do? If there is no clear use case with clear makers and consumers solving a real problem, then the euphoria soon fades, and the only stakeholders who remain enthused are those who have too much to lose.

Time is a major competitor that is often overlooked.

We're only busier, but each day is still 24 hours. Using new apps may mean that time is lost doing other things. The user must be eager to learn. Metaverse and Web3 vs. our time? I don't think we know the answer yet (at least for working adults whose cost of time is higher).

People and organizations need security and transparency.

For new technologies or apps to be widely used, they must be safe, transparent, and trustworthy. What does secure Metaverse and Web3 mean? This is an intriguing subject for both the business and public sectors. Cloud adoption grew in part due to improved security and data protection regulations.

The framework above can help analyze and understand new technologies and emerging technological topics, unless you are a significant investment fund with the financial ability to gamble on numerous initiatives and essentially form your own “index fund”.

I write on VC, startups, and leadership.

More on https://www.linkedin.com/in/joycejshen/ and https://joyceshen.substack.com/

This writing is my own opinion and does not represent investment advice.

Scott Galloway

3 years ago

Don't underestimate the foolish

ZERO GRACE/ZERO MALICE

Big companies and wealthy people make stupid mistakes too.

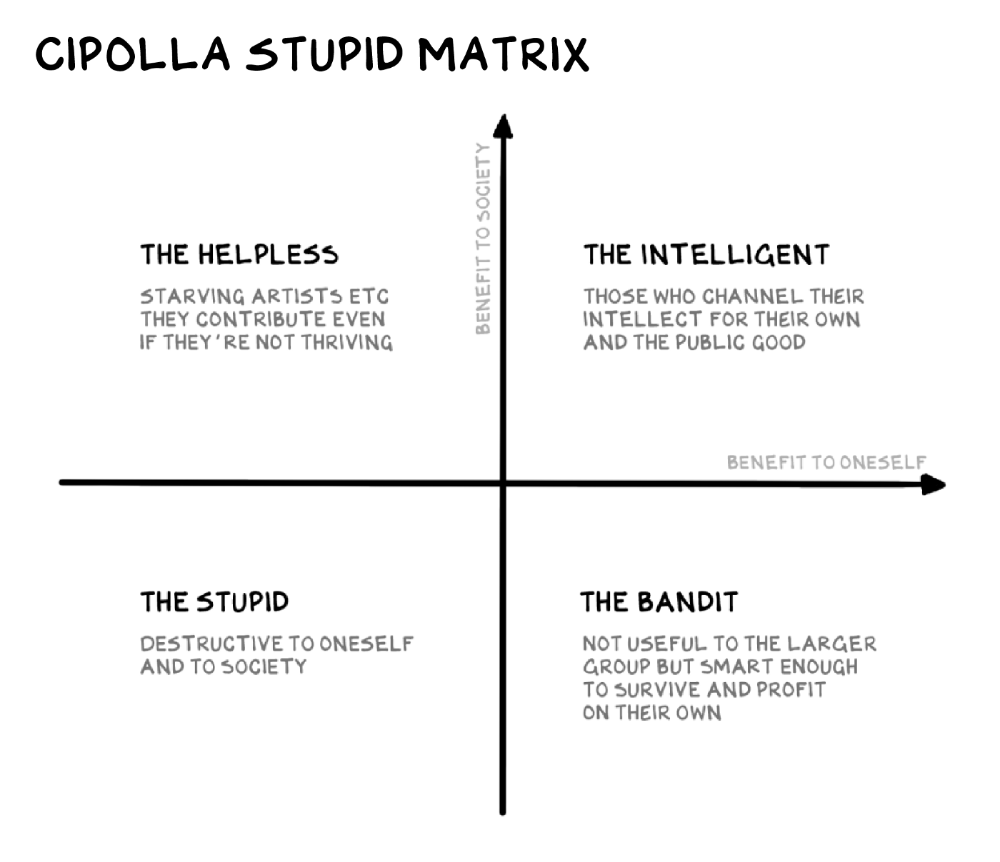

Your ancestors kept snakes and drank bad water. You (probably) don't because you've learnt from their failures via instinct+, the ultimate life-lessons streaming network in your head. Instincts foretell the future. If you approach a lion, it'll eat you. Our society's nuanced/complex decisions have surpassed instinct. Human growth depends on how we handle these issues. 80% of people believe they are above-average drivers, yet few believe they make the kinds of mistakes that make them dangerous. Stupidity hurts others like death. Carlo Cipolla's Basic Laws of Human Stupidity:

Everyone underestimates the prevalence of idiots in our society.

Any other trait a person may have has no bearing on how likely they are to be stupid.

A dumb individual is one who harms someone without benefiting themselves and may even lose money in the process.

Non-dumb people frequently underestimate how destructively powerful stupid people can be.

The most dangerous kind of person is a moron.

Professor Cipolla defines stupid as bad for you and others. We underestimate the corporate world's and seemingly successful people's ability to make bad judgments that harm themselves and others. Success is an intoxication that makes you risk-aggressive and blurs your peripheral vision.

Stupid companies and decisions:

Big Dumber

Big-company bad ideas have more bulk and inertia. The world's most valuable company recently showed its board a VR headset. Jony Ive couldn't destroy Apple's terrible idea in 2015. Mr. Ive said that VR cut users off from the outer world, made them seem outdated, and lacked practical uses. Ive's design team doubted users would wear headsets for lengthy periods.

VR has cost tens of billions of dollars over a decade to prove nobody wants it. The next great SaaS startup will likely come from Florence, not Redmond or San Jose.

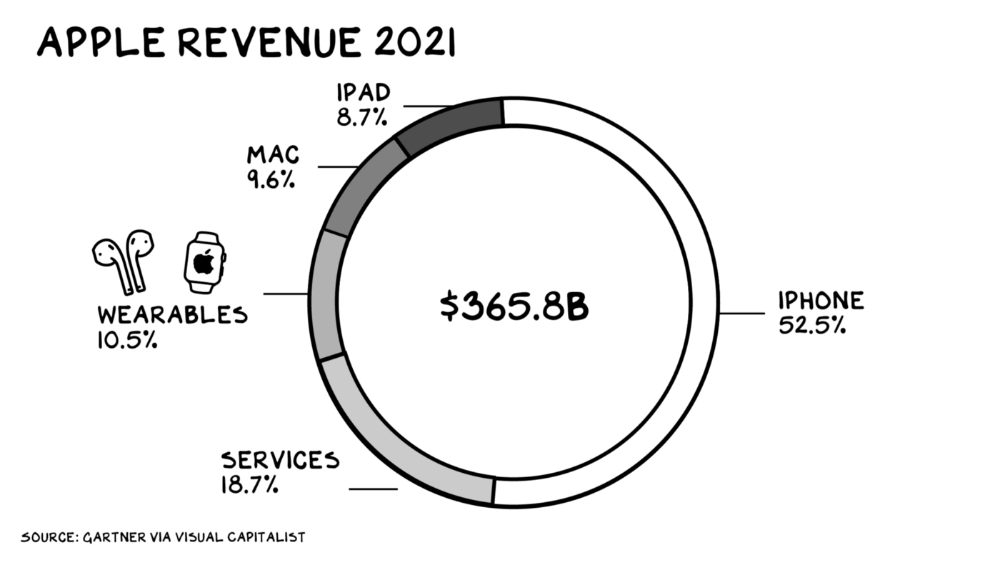

Apple Watch and AirPods have made the Cupertino company the world's largest jewelry maker. In 2021, 10.5% of Apple's revenue, or $38 billion, came from wearables (seven times the revenue of Tiffany & Co.). Jewelry makes you more appealing and useful. AirPods and Apple Watch do both.

Headsets make you less beautiful and useful and promote isolation, loneliness, and unhappiness among American teenagers. My sons pretend they can't hear or see me when on their phones. VR headsets lack charisma.

Coinbase disclosed a plan to generate division and tension within its workplace weeks after Apple was pitched $2,000 smokes. The crypto-trading platform is piloting a program that rates staff after every interaction. If a coworker says anything you don't like, you should tell them how to improve. Everyone gets a 110-point scorecard. Coworkers should evaluate a person's rating while deciding whether to listen to them. It's ridiculous.

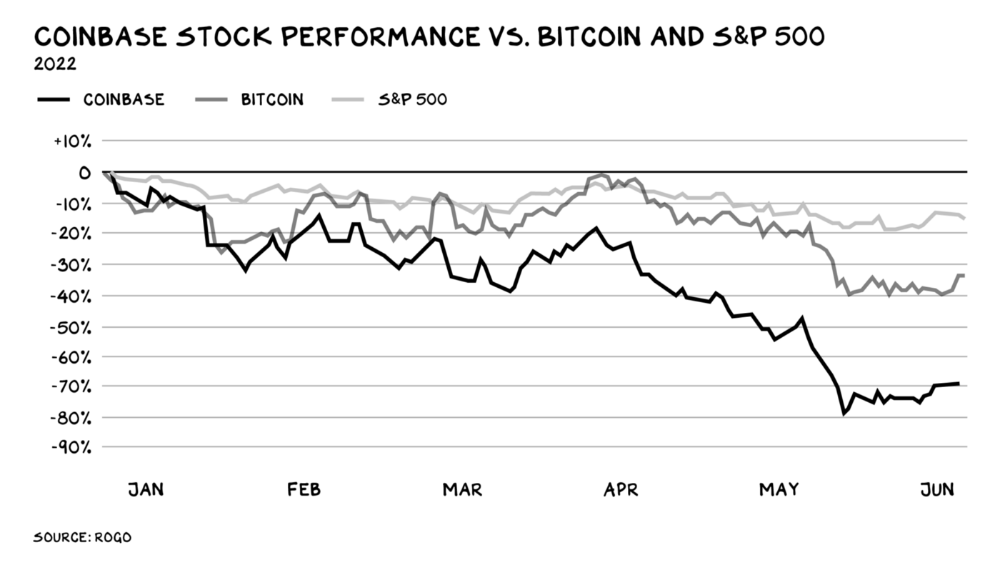

Organizations leverage our superpower of cooperation. This encourages non-cooperation, period. Bridgewater's founder Ray Dalio designed the approach to promote extreme transparency. Dalio has 223 billion reasons his managerial style works. There's reason to suppose only a small group of people, largely traders, will endure a granular scorecard. Bridgewater has 20% first-year turnover. Employees cry in bathrooms, and sex scandals are settled by ignoring individuals with poor believability levels. Coinbase might take solace that the stock is 80% below its initial offering price.

Poor Stupid

Fools' ledgers are valuable. More valuable are lists of foolish rich individuals.

Robinhood built an $8 billion corporation on financial ignorance. The firm's median account value is $240, and its stock has dropped 75% since last summer. Investors, customers, and society lose. Stupid. Luna published a comparable list on the blockchain, grew to $41 billion in market cap, then plummeted.

A podcast presenter is recruiting dentists and small-business owners to invest in Elon Musk's Twitter takeover. Investors pay a 7% fee and 10% of the upside for the chance to buy Twitter at a 35% premium to the current price. The proposal legitimizes CNBC's Trade Like Chuck advertising (Chuck made $4,600 into $460,000 in two years). This is stupid because it adds to the Twitter deal's desperation. Mr. Musk made an impression when he urged his lawyers to develop a legal rip-cord (There are bots on the platform!) to abandon the share purchase arrangement (for less than they are being marketed by the podcaster). Rolls-Royce may pay for this list of the dumb affluent because it includes potential Cullinan buyers.

Worst company? Flowcarbon, founded by WeWork founder Adam Neumann, operates at the convergence of carbon and crypto to democratize access to offsets and safeguard the earth's natural carbon sinks. Can I get an ayahuasca Big Gulp?

Neumann raised $70 million with their yogababble drink. More than half of the consideration came from selling GNT. Goddess Nature Token. I hope the company gets an S-1. Or I'll start a decentralized AI Meta Renewable NFTs company. My Community Based Ebitda coin will fund the company. Possible.

Stupidity inside oneself

This weekend, I was in NYC with my boys. My 14-year-old disappeared. He's realized I'm not cool and is mad I let the charade continue. When out with his dad, he likes to stroll home alone and depart before me. Friends told me he'll return, but I was surprised by how fast the eye roll came.

Not so with my 11-year-old. We went to The Edge, a Hudson Yards observation platform where you can see the city from 100 storeys up for $38. This is hell's seventh ring. Leaning into your boys' interests is key to engaging them (dad tip). Neither loves Crossfit, WW2 history, or antitrust law.

We take selfies on the Thrilling Glass Floor he spots. "Dad, there's a bar! Coke?" I nod, he rushes to the bar, stops, runs back for money, and sprints back. Sitting on stone seats, drinking Atlanta Champagne, he turns to me and asks, "Isn't this amazing?" I'll never reach paradise.

Later that night, the lads are asleep and I've had two Zacapas and Cokes. I SMS some friends about my day and how I feel about sons/fatherhood/etc. How I did. They responded and approached. The next morning, I'm sober, have distance from my son, and feel ashamed by my texts. Less likely to impulsively share my emotions with others. Stupid again.