More on Entrepreneurship/Creators

Raad Ahmed

2 years ago

How We Just Raised $6M At An $80M Valuation From 100+ Investors Using A Link (Without Pitching)

Lawtrades nearly failed three years ago.

We couldn't raise Series A or enthusiasm from VCs.

We raised $6M (at an $80M valuation) from 100 customers and investors using a link and no pitching.

Step-by-step:

We refocused our business first.

Lawtrades raised $3.7M while Atrium raised $75M. By comparison, we seemed unimportant.

We had to close the company or try something new.

As I've written previously, a pivot saved us. Our initial focus on SMBs attracted many unprofitable customers. SMBs needed one-off legal services, meaning low fees and high turnover.

Tech startups were different. Their General Counsels (GCs) needed near-daily support, resulting in higher fees and lower churn than SMBs.

We stopped unprofitable customers and focused on power users. To avoid dilution, we borrowed against receivables. We scaled our revenue 10x, from $70k/mo to $700k/mo.

Then, we reconsidered fundraising (and did it differently)

This time was different. Lawtrades was cash flow positive for most of last year, so we could dictate our own terms. VCs were still wary of legaltech after Atrium's shutdown (though they were thinking about the space).

We neither wanted to rely on VCs nor dilute more than 10% equity. So we didn't compete for in-person pitch meetings.

Instead, we used an AngelList Roll-Up Vehicle (RUV). Up to 250 accredited investors can invest in a single RUV. First, we emailed customers the RUV. Why? Because I wanted to help the platform's users.

Imagine if Uber or Airbnb let all drivers or Superhosts invest in an RUV. Humans make the platform, theirs and ours. Giving people a chance to invest increases their loyalty.

We expanded after initial interest.

We created a Journey link, containing everything that would normally go in an investor pitch:

- Slides

- Trailer (from me)

- Testimonials

- Product demo

- Financials

We could also link to our AngelList RUV and send the pitch to an unlimited number of people. Instead of 1:1, we had 1:10,000 pitches-to-investors.

We posted Journey's link in RUV Alliance Discord. 600 accredited investors noticed it immediately. Within days, we raised $250,000 from customers-turned-investors.

Stonks, which live-streamed our pitch to thousands of viewers, was interested in our grassroots enthusiasm. We got $1.4M from people I've never met.

These updates on Pump generated more interest. Facebook, Uber, Netflix, and Robinhood executives all wanted to invest. Sahil Lavingia, who had rejected us, gave us $100k.

We closed the round with public support.

Without a single pitch meeting, we'd raised $2.3M. It was a result of natural enthusiasm: taking care of the people who made us who we are, letting them move first, and leveraging their enthusiasm with VCs, who were interested.

We used network effects to raise $3.7M from a founder-turned-VC, bringing the total to $6M at an $80M valuation (which, by the way, I set myself).
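The round's numbers can be sanity-checked with a little arithmetic. The sketch below is illustrative only: the post does not say whether the $80M valuation is pre-money or post-money, so both readings are computed, and the function names are made up for this example.

```python
# Rough dilution check for the round described above.
# Assumption: the post does not say whether $80M is pre- or post-money,
# so both interpretations are shown. Function names are illustrative.

def dilution_post_money(raised: float, post_money: float) -> float:
    """Fraction of the company sold if the valuation already includes the new cash."""
    return raised / post_money


def dilution_pre_money(raised: float, pre_money: float) -> float:
    """Fraction of the company sold if the valuation excludes the new cash."""
    return raised / (pre_money + raised)


crowd_round = 2.3e6   # raised without a single pitch meeting
vc_round = 3.7e6      # raised from the founder-turned-VC
total_raised = crowd_round + vc_round
valuation = 80e6

print(f"Total raised: ${total_raised / 1e6:.1f}M")
print(f"Dilution if post-money: {dilution_post_money(total_raised, valuation):.2%}")
print(f"Dilution if pre-money:  {dilution_pre_money(total_raised, valuation):.2%}")
```

Under either reading, the round stays below the 10% dilution ceiling we set for ourselves.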

What flipping the fundraising script allowed us to do:

We started with private investors instead of 2–3 VCs to show VCs what we were worth. This gave Lawtrades the ability to:

- Without meetings, share our vision. Many people saw our Journey link. I ended up taking meetings with people who planned to contribute $50k+, but still, the ratio of views-to-meetings was outrageously good for us.

- Leverage ourselves. Instead of us selling ourselves to VCs, they did. Some people with large checks or late arrivals were turned away.

- Maintain voting power. No board seats were lost.

- Utilize viral network effects. People-powered.

- Preemptively halt churn by turning our users into owners. People are more loyal and respectful to things they own. Our users make us who we are — no matter how good our tech is, we need human beings to use it. They deserve to be owners.

I don't blame founders for being hesitant about this approach. Pump and RUVs are new and scary. But it won’t be that way for long. Our approach redistributed some of the power that normally lies entirely with VCs, putting it into our hands and our network’s hands.

This is the future — another way power is shifting from centralized to decentralized.

Jared Heyman

1 year ago

The survival and demise of Y Combinator startups

I've written a lot about Y Combinator's success, but as any startup founder or investor knows, many startups fail.

Rebel Fund invests in the top 5-10% of new Y Combinator startups each year, so we focus on identifying and supporting the most promising technology startups in our ecosystem. Given the power law dynamic and asymmetric risk/return profile of venture capital, we worry more about our successes than our failures. Since the latter still count, this essay will focus on the proportion of YC startups that fail.

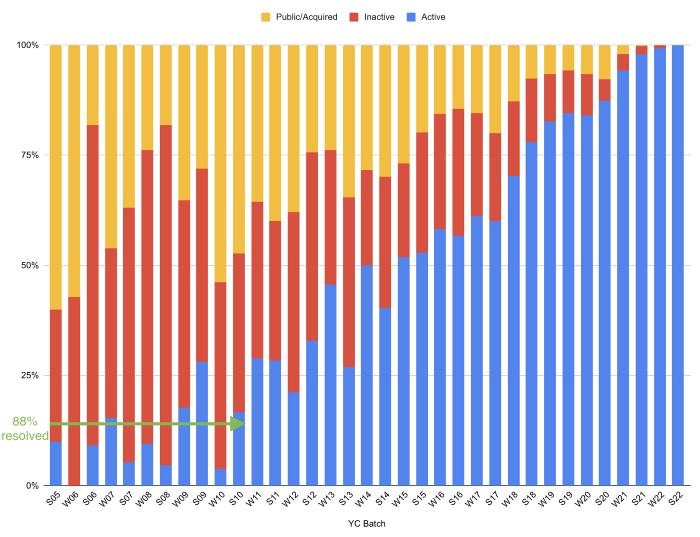

The first figure shows the percentage of active, inactive, and public/acquired YC startups by batch since YC's launch in 2005.

As more startups reach a resolution, the blue bars (active) decrease significantly. By 12 years, 88% of startups have closed or exited. On average, only about 7% of startups reach resolution each year.

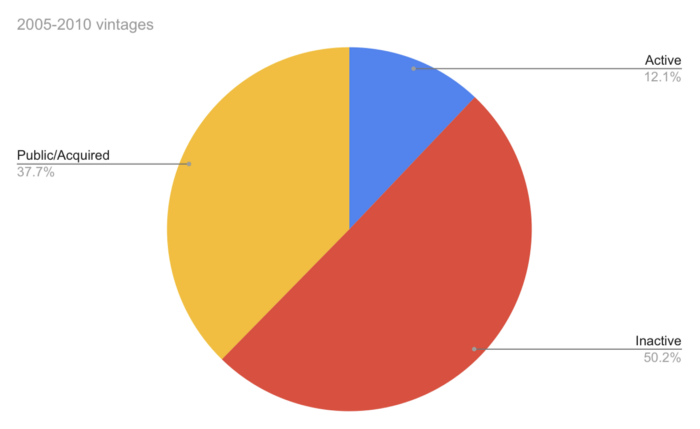

YC startups by status after 12 years:

Half the startups have failed, over one-third have exited, and the rest are still operating.

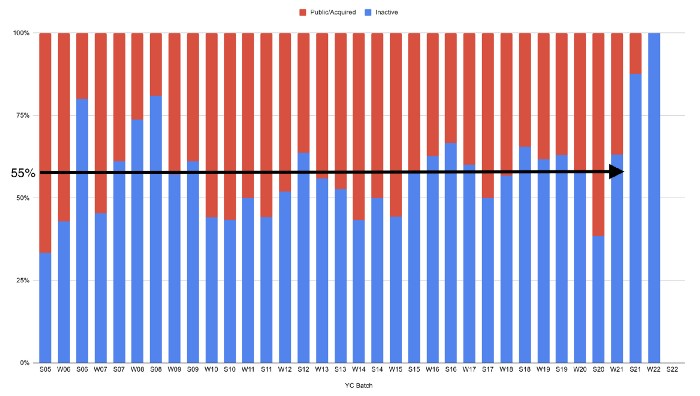

In venture investing, it's said that failed investments show up before successful ones. This is true for YC startups, but only in their early years.

Here, we present only the resolved companies from the first chart. Some companies fail soon after establishment, but after a few years, the inactive vs. public/acquired ratio stabilizes around 55:45. After a few years, a YC firm is roughly as likely to exit as to fail, which is better than I imagined.
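The figures quoted in this essay fit together, which a quick back-of-the-envelope check can show. This is only a sketch: it uses the rounded numbers from the text rather than the underlying batch data, and it assumes the stabilized 55:45 ratio also holds at the 12-year mark.

```python
# Back-of-the-envelope check of the figures quoted in this essay.
# Inputs are the rounded numbers from the text, not the underlying data.
# Assumption: the stabilized 55:45 ratio applies at the 12-year mark.

resolved_share = 0.88   # closed or exited within 12 years
years = 12
inactive_ratio = 0.55   # inactive share among resolved companies
exited_ratio = 0.45     # public/acquired share among resolved companies

avg_resolved_per_year = resolved_share / years
failed_share = resolved_share * inactive_ratio
exited_share = resolved_share * exited_ratio
active_share = 1 - resolved_share

print(f"Average resolved per year: {avg_resolved_per_year:.1%}")
print(f"Failed after 12 years:     {failed_share:.0%}")
print(f"Exited after 12 years:     {exited_share:.0%}")
print(f"Still active:              {active_share:.0%}")
```

The result lines up with the summary above: roughly half failed, over one-third exited, and the rest still operating.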

I prepared this post because Rebel investors regularly question me about YC startup failure rates and how long it takes for them to exit or shut down.

Early-stage venture investors can overlook it because 100x investments matter more than 0x investments.

YC founders can ignore it because it shouldn't matter if many of their peers succeed or fail ;)

Nick Nolan

1 year ago

In five years, starting a business won't be hip.

People are slowly recognizing entrepreneurship's downside.

Growing up, entrepreneurship wasn't common. My high school class of 2012 had no entrepreneurs.

Businesses were different.

They had staff and a lengthy history of achievement.

I never wanted a business. It felt unattainable. My friends didn't care.

Weird.

People desired degrees to attain good jobs at big companies.

When we graduated high school:

- 9 out of 10 people went to college

- 7% earned minimum wage working in a restaurant or retail establishment

- 3% joined the military

Later, entrepreneurship became a thing.

2014-ish

I was in the military and most of my high school friends were in college, so I didn't hear anything.

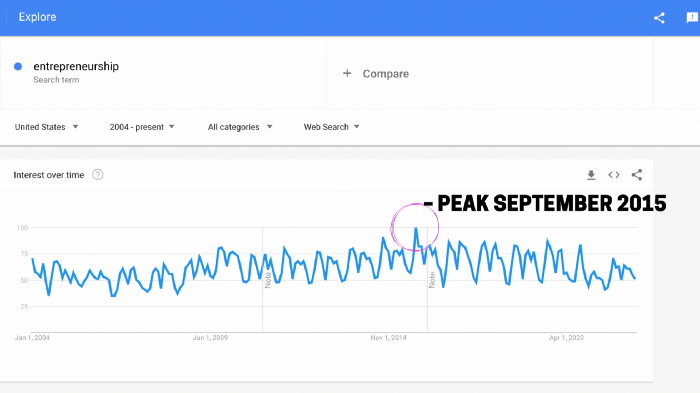

Entrepreneurship soared in 2015, according to Google Trends.

Then more individuals were interested. Entrepreneurship went from unusual to cool.

In 2015, it was easier than ever to build a website, run Facebook advertisements, and achieve organic social media reach.

There were several online business tools.

You didn't need to spend years or money figuring it out. Most entry barriers were gone.

Everyone wanted a side gig to escape the 9-5.

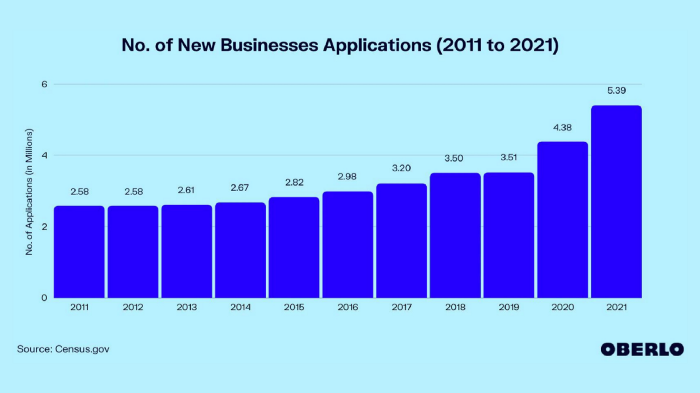

New business applications have increased over the previous 10 years.

2011-2014 trend continues.

2015 adds 150,000 applications. 2016 adds 200,000. Plus 300,000 in 2017.

The graph makes it look small, but that's a considerable annual increase with no signs of stopping.

By 2021, new business applications had doubled.

Entrepreneurship will return to its early 2010s level.

I think we'll go backward in 5 years.

Entrepreneurship is half as popular as it was in 2015.

In the late 2020s and 30s, entrepreneurship will again be obscure.

Entrepreneurship's decade-long splendor is fading. People will cease escaping 9-5 and launch fewer companies.

That’s not a bad thing.

I think people have a rose-colored vision of entrepreneurship. It's fashionable. People feel that they're missing out if they're not entrepreneurial.

Reality is showing up.

People say on social media, "I knew starting a business would be hard, but not this hard."

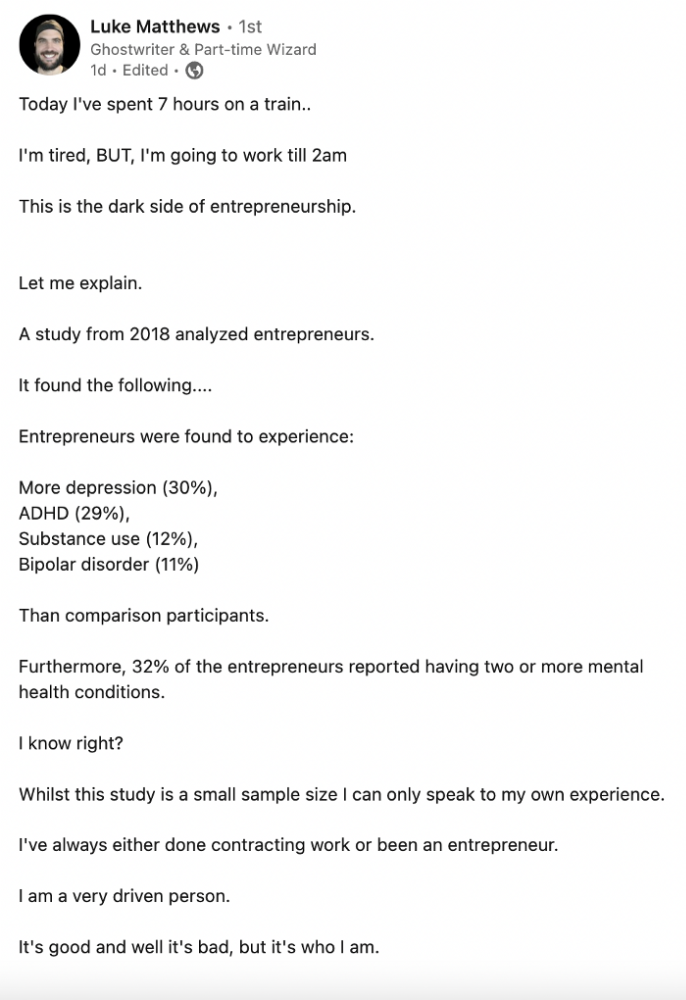

More negative posts on entrepreneurship:

Luke adds:

Is being an entrepreneur ‘healthy’? I don’t really think so. Many like Gary V, are not role models for a well-balanced life. Despite what feel-good LinkedIn tells you the odds are against you as an entrepreneur. You have to work your face off. It’s a tough but rewarding lifestyle. So maybe let’s stop glorifying it because it takes a lot of (bleepin) work to survive a pandemic, mental health battles, and a competitive market.

Entrepreneurship is no longer a pipe dream.

It’s hard.

I went full-time in March 2020. I was done by April 2021. I had lined up a good-paying job with perks.

When that fell through (on my start date), I had to continue my entrepreneurial path. I needed money by May 1 to pay rent.

Entrepreneurship isn't as great as many think.

Entrepreneurship is a serious business.

If you have a 9-5, the grass isn't greener here. Most people aren't telling the whole story when they post on social media or quote successful entrepreneurs.

People prefer to share their victories rather than their defeats.

Is this a bad thing?

I don’t think so.

Over the previous decade, entrepreneurship went from impossible to the finest thing ever.

It peaked in 2020-21 and is returning to reality.

Startups aren't for everyone.

If you like your job, don't quit.

Entrepreneurship won't amaze people if you quit your job.

It's irrelevant.

You're doomed.

And you'll probably make less money.

If you hate your job, quit. Change jobs and bosses. Changing jobs could net you higher pay or better perks.

When you go solo, your paycheck and perks vanish. Did I mention you'll fail, sleep less, and stress more?

Nobody will stop you from pursuing entrepreneurship. You'll face several challenges.

Possibly.

Entrepreneurship may be romanticized for years.

Based on what I see from entrepreneurs on social media and trends, entrepreneurship is challenging and few will succeed.


Ellane W

1 year ago

The Last To-Do List Template I'll Ever Need, Years in the Making

The holy grail of plain text task management is finally within reach

Plain text task management? Are you serious?? Dedicated task managers exist for a reason, you know. Sheesh.

—Oh, I know. Believe me, I know! But hear me out.

I've managed projects and tasks in plain text for more than four years. Since reorganizing my to-do list, plain text task management is within reach.

Data completely yours? One billion percent. Beef it up with coding? Be my guest.

Enter: The List

The answer? A list. That’s it!

Write down tasks. Obsidian, Notenik, Drafts, or iA Writer are good plain text note-taking apps.

List too long? Of course, it is! A large list tells you what to do. Feel the itch and friction. Then fix it.

But I want to be able to distinguish between work and personal life! Make two lists.

However, I need to know what should be completed first. Put those items at the top.

However, some things keep coming up, and I need to be reminded of them! Put those in your calendar and make an alarm for them.

But since individual X hasn't completed task Y, I can't proceed with this. Create a Waiting section on your list by dividing it.

But I must know what I'm supposed to be doing right now! Read your list(s). Check your calendar. Think critically.

Before I begin a new one, I remind myself that "Listory Never Repeats."

There’s no such thing as too many lists if all are needed. There is such a thing as too many lists if you make them before they’re needed. Before they complain that their previous room was small or too crowded or needed a new light.

A list that feels too long has a voice; it’s telling you what to do next.

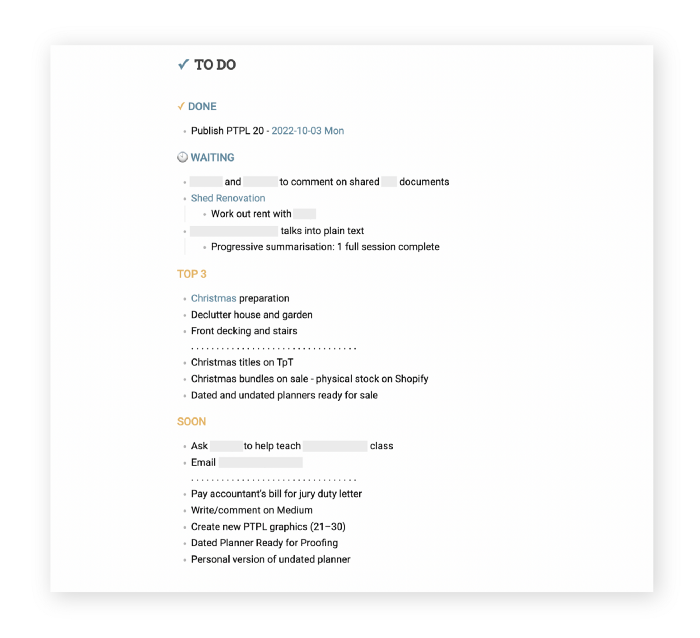

I use one Master List. It's a control panel that tells me what to focus on short-term. If something doesn't need semi-immediate attention, it goes on my Backlog list.

Todd Lewandowski's DWTS (Done, Waiting, Top 3, Soon) performance deserves praise. His DWTS to-do list structure has transformed my plain-text task management. I didn't realize it was upside down.

This is my take on it:

D = Done

Move finished items here. If they pile up, clear them out every week or month. I have a Done Archive folder.

W = Waiting

Things seething in the background, awaiting action. Stir them occasionally so they don't burn.

T = Top 3

Three priorities. Personal comes first, then work. There will always be a top 3 (no more than 5) in every category. Projects, not chores, usually.

S = Soon

This part is action-oriented. It's for anything you can accomplish to finish one of the Top 3. This collection includes thoughts and project lists. The sole requirement is that they should be short-term goals.

Some of you have probably concluded this isn't for you. Please read Todd's piece before throwing out the baby. Often. You shouldn't miss a newborn.

As much as Dancing With The Stars helps me recall this method, I may try switching their order. TSWD; Drilling Tunnel Seismic? Serenity After Task?

Master List Showcase

My Master List lives alone in its own file, but sometimes appears in other places. It's included in my Weekly List template. Here's a (soon-to-be-updated) demo vault of my Obsidian planning setup to download for free.

Here's the code behind my weekly screenshot:

## [[Master List - 2022|✓]] TO DO

![[Master List - 2022]]

FYI, I use the Minimal Theme in Obsidian, with a few tweaks.

You may note I'm utilizing a checkmark as a link. For me, that's easier than locating the proper spot to click on the embed.

Blue headings for Done and Waiting are links. Done links to the Done Archive page and Waiting to a general waiting page.

Read my full article here.

Michael Salim

1 year ago

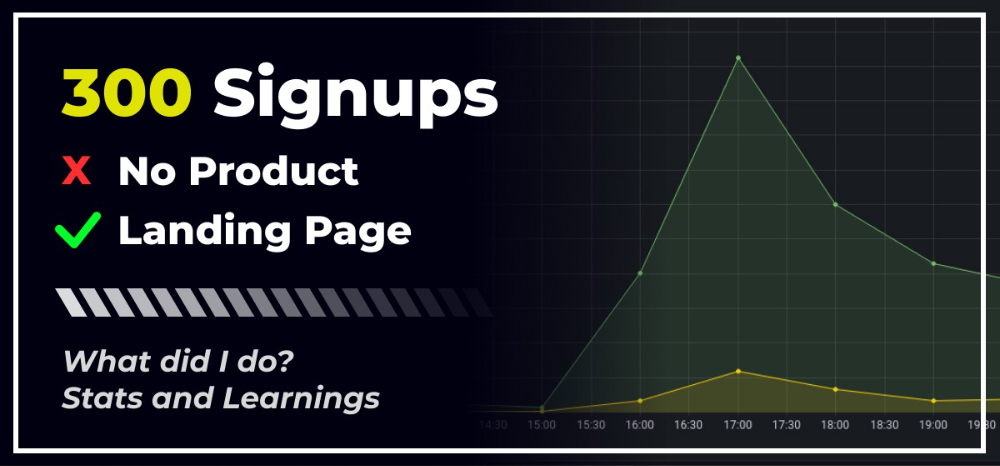

300 Signups, 1 Landing Page, 0 Products

I placed a link on HackerNews and got 300 signups in a week. This post explains what happened.

Product Concept

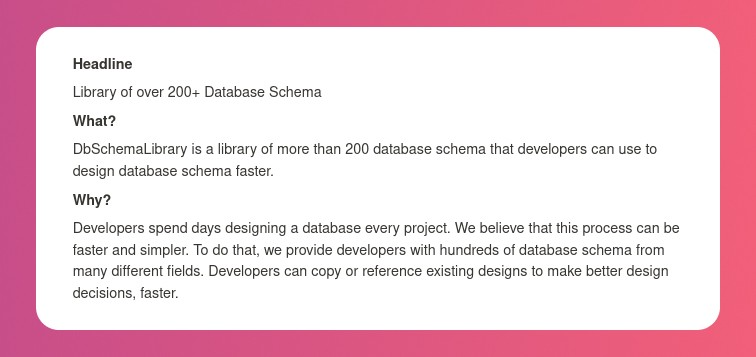

The product is DbSchemaLibrary. A library of Database Schema.

I'm not sure where this idea came from. It arrived very fast. The mantra is to build fast, fail fast, and test many ideas until one is a hit, so I tried it: let's build it anyway, even though it'll probably fail. I had just finished The Lean Startup and wanted to apply it.

Database work bores me. It's important, but I get drowsy working on it. Someone must do it. I remember this happening once. I needed examples at the time. Something similar to Recall (my other project) that I can copy, or at least use as a reference.

Frequently googled. Many tabs open. The results were useless. I raised my hand and agreed to construct the database myself.

It resurfaced. I decided to do something.

Due Diligence

Lean Startup emphasizes validated learning. Everything the startup does should result in learning. I may build something nobody wants otherwise. That's what happened to Recall.

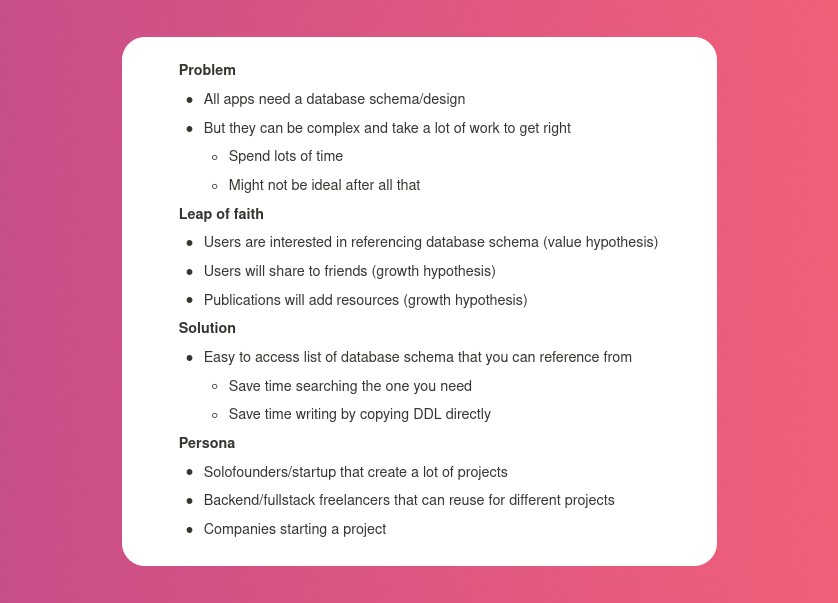

So, I wrote a business plan document. This happens before I code. What am I solving? What is my proposed solution? What is the leap of faith between the problem and solution? Who would be my target audience?

My note:

In my previous project, I did the opposite!

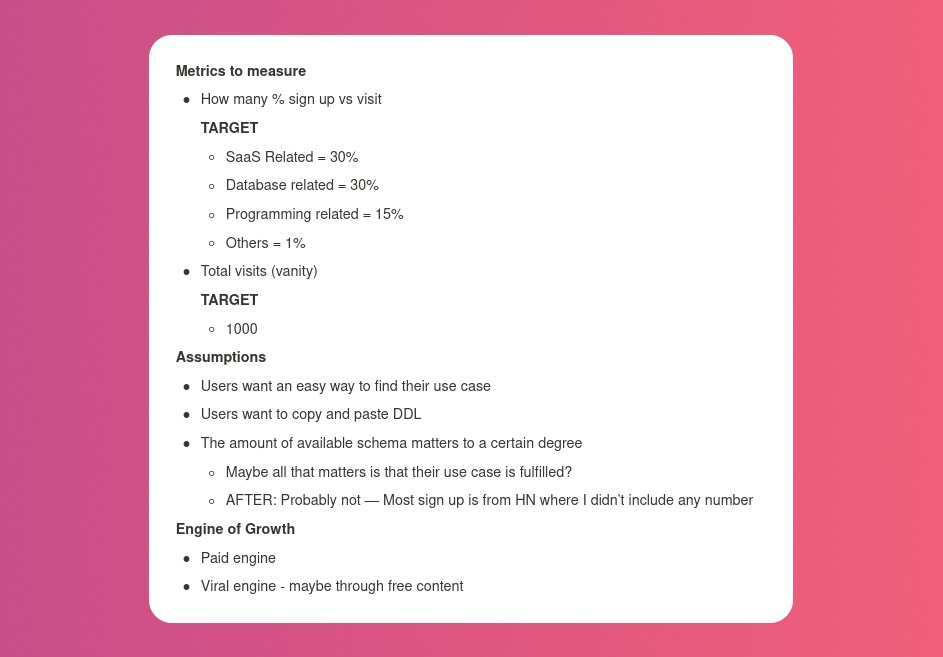

I wrote my expectations after reading the book's advice.

“Failure is a prerequisite to learning. The problem with the notion of shipping a product and then seeing what happens is that you are guaranteed to succeed — at seeing what happens.” — The Lean Startup book

These are successful metrics. If I don't reach them, I'll drop the idea and try another. I didn't understand numbers then. Below are guesses. But it’s a start!

I then wrote the project's What and Why. I'll use this everywhere. Before, I wrote a different pitch each time. I thought certain words would be better. I felt the audience might want something unusual.

Occasionally, this works. I'm unsure if it's a good idea. No stats, just my writing-time opinion. Writing every time is time-consuming and sometimes hazardous. Having a copy saved me duplication.

I can measure and learn from performance.

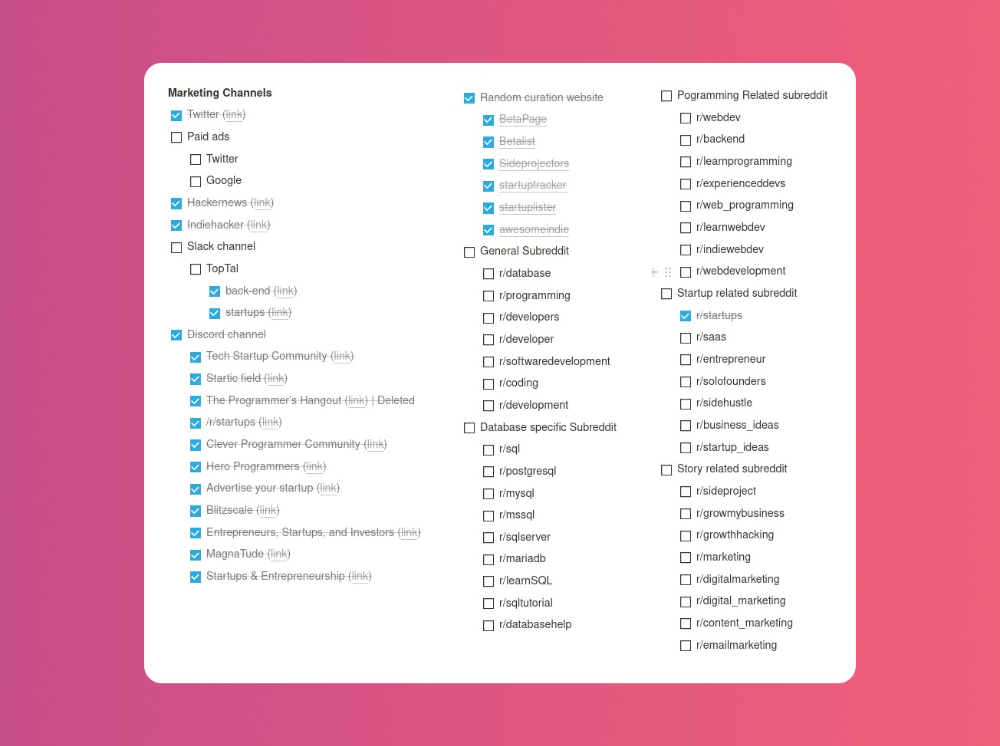

Last, I identified communities that might demand the product. This became an exercise in creativity.

The MVP

So now it’s time to build.

An MVP can test my assumptions. The business can learn from it. It doesn't mean low-quality. We should learn from the tiniest thing.

I like the example of how Dropbox did theirs. They assumed that if the product works, people will use it. How can this be tested without a quality product? They made a video demonstrating the software's functionality. Who knows how much functionality existed?

So I tested my biggest assumption. Users want schema references. How can I test if users want to reference another schema? I'd love this. Recall taught me that wanting something doesn't mean others do.

I made an email-collection landing page with a brief description: a reference library. Anyone who submits their email wants a reference, so they're interested in the product. Few other reasons exist.

Header and footer were skipped. No name or logo. DbSchemaLibrary is a name I thought of after the fact. 5-minute logo. I expected a flop. Recall has no users after months of labor. What could happen to a 2-day project?

I didn't compromise learning validation. How many visitors sign up? To draw a conclusion, I must track these results.

Posting Time

Now that the job is done, it's time to gauge interest. The next morning, I posted on all my channels. I didn't want to be spammy, so it took more time.

I made sure each channel had at least one potential fan of this product. I also answered people's questions in the channel.

My list stinks. Several channels wouldn't work. The product's target market isn't there. Posting there would waste our time. This taught me to choose marketing channels based on my target persona.

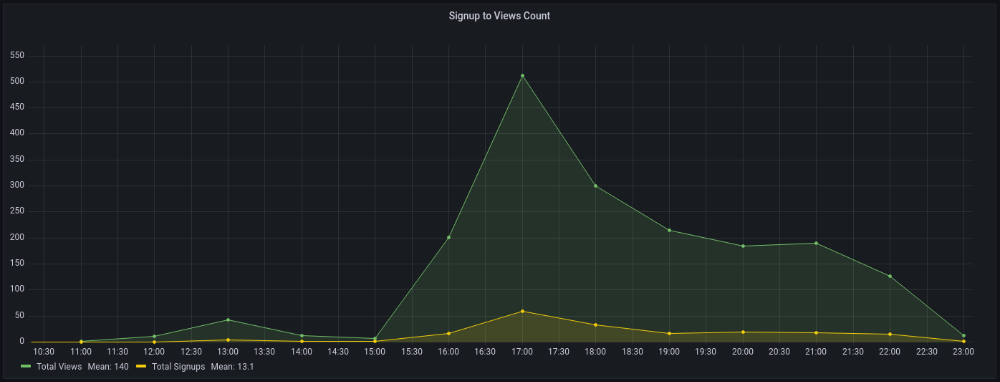

Statistics! What actually happened

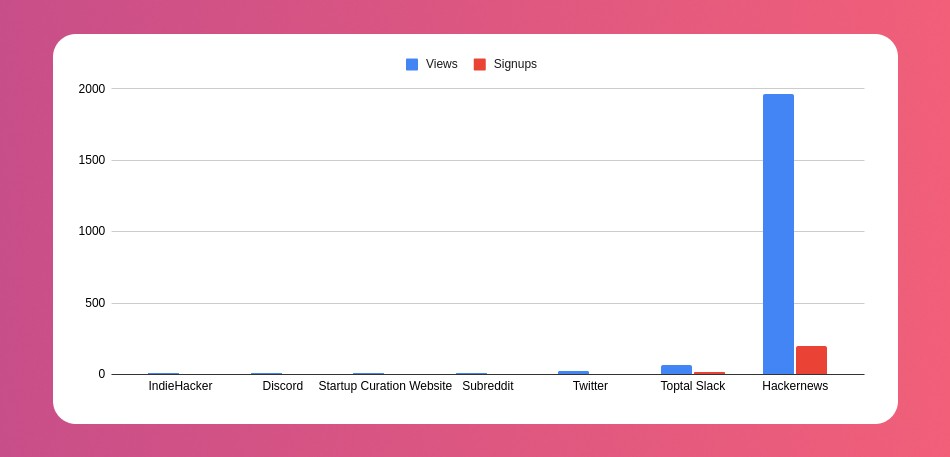

My favorite part! 23 channels received the link.

I stopped posting to Discord despite its high conversion rate. I eliminated some channels because they didn't fit. According to the numbers, some users like it. Most users think it's spam.

I was skeptical. And 12 people viewed it.

I didn't expect much attention on a startup subreddit. I'll likely examine Reddit further in the future. As I have enough info, I didn't post much. Time for the next validated learning

No comment. The post had few views, therefore the numbers are low.

The targeted people come next.

I'm a Toptal freelancer. There's a member-only Slack channel. Most people can't use this marketing channel, but if you have access to one like it, you should! It's not as spectacular as Discord's 27% conversion rate. But I think the users here are better.

I don’t really have a following anywhere so this isn’t something I can leverage.

The best yet. 10% is converted. With more data, I expect to attain a 10% conversion rate from other channels. Stable number.

This number required some work. Did you know that people use many different clients to read HN?

Unknowns

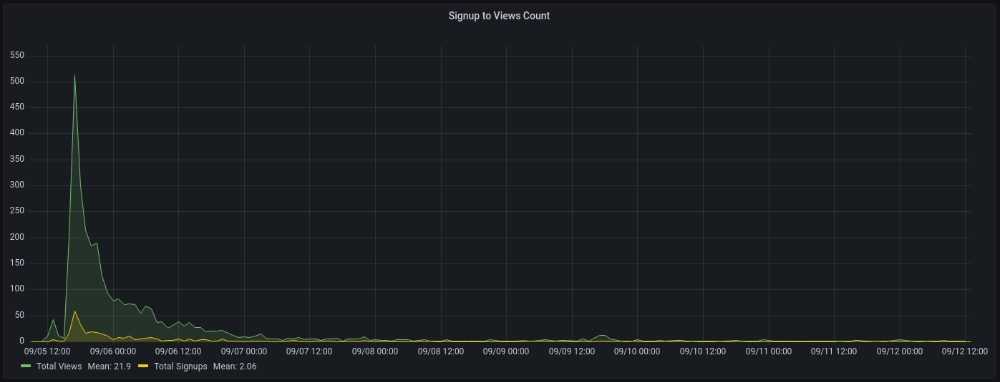

Untrackable views and signups abound. 1136 views and 135 signups are untraceable. It's 11%. I bet much of that came from Hackernews.

Overall Statistics

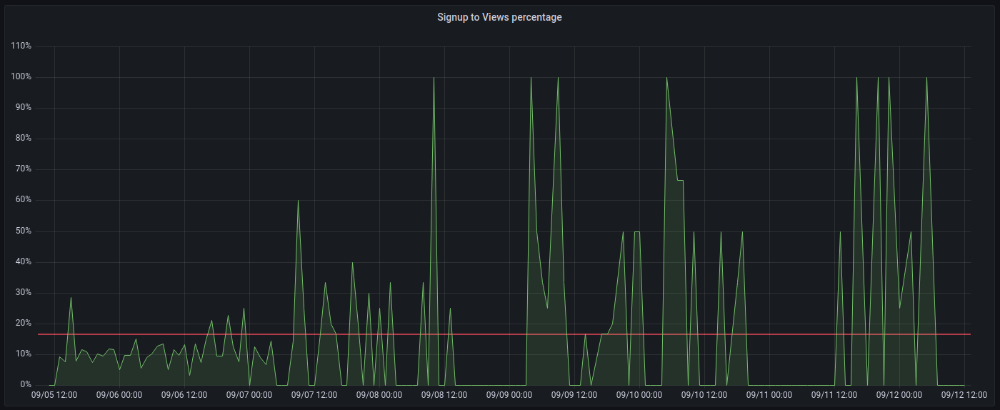

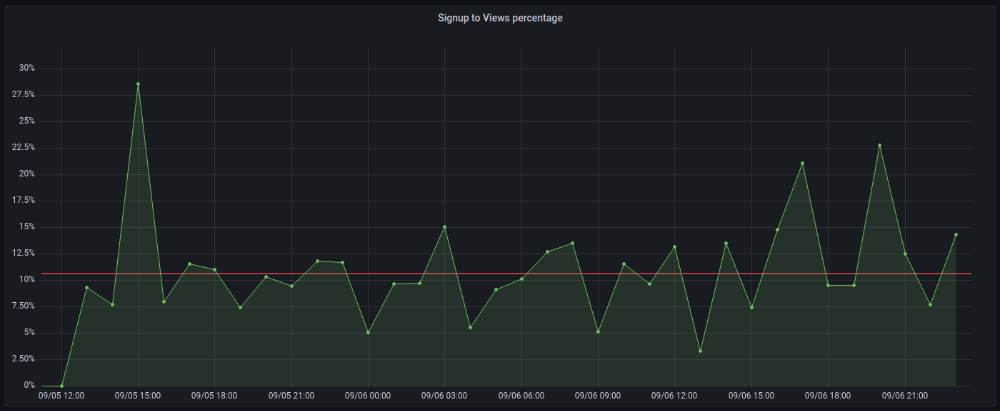

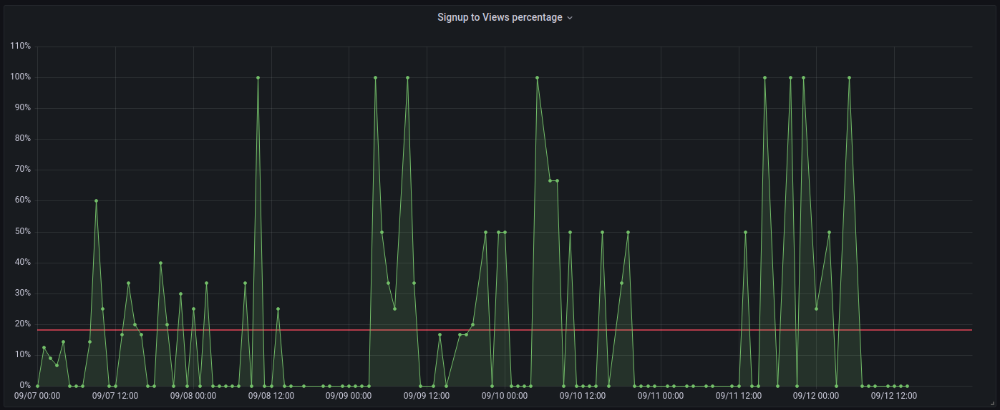

The 7-day signup-to-visit ratio was 17%. (Hourly data points)

First-day percentages were lower, which is noteworthy. Initially, it was a little above 10%. The HN post started getting views then.

When traffic drops, the ratio rises to just around 20%. The visitors who still arrive are more interested in the link. hn.algolia.com sent 2 visitors. This means people are searching and finding my post.

Interesting discoveries

1. HN post struggled till the US woke up.

11am UTC. After an hour, it lost popularity. It seemed over. 7 signups converted 13%. Not amazing, but I would've thought ahead.

After 4pm UTC, traffic grew again. 4pm UTC is 9am PDT. US awakened. 10am PDT saw 512 views.

2. The product was highlighted in a newsletter.

I found Revue references when gathering data. Newsletter platform. Someone posted the newsletter link. 37 views and 3 registrations.

3. HN numbers are extremely reliable

I don't have a time-lapse graph (yet). The statistics were constant all day.

At 272 signups and 2717 views, 10.01%

At 293 signups and 2856 views, 10.26%

At 306 signups at 2965 views, 10.32%
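To make the consistency claim concrete, here is a minimal sketch that recomputes the conversion rate at each snapshot. The (views, signups) pairs come straight from the figures above; the helper name is just something I made up for illustration.

```python
# Recompute conversion rates from the (views, signups) pairs quoted in the post.
# The helper name is illustrative; the numbers come straight from the text.

def conversion_rate(signups: int, views: int) -> float:
    """Signups as a percentage of views."""
    if views <= 0:
        raise ValueError("views must be positive")
    return 100 * signups / views


hn_snapshots = [(2717, 272), (2856, 293), (2965, 306)]  # (views, signups)

for views, signups in hn_snapshots:
    rate = conversion_rate(signups, views)
    print(f"{signups} signups / {views} views = {rate:.2f}%")

rates = [conversion_rate(signups, views) for views, signups in hn_snapshots]
spread = max(rates) - min(rates)
print(f"Spread across snapshots: {spread:.2f} percentage points")
```

The three snapshots land within about a third of a percentage point of each other, which is why I call the HN number stable.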

Learnings

1. My initial estimations were wildly inaccurate

I wrote 30% conversion. Reading some articles, looks like 10% is a good number to aim for.

2. Paying attention to what matters rather than vanity metrics

The Lean Startup discourages vanity metrics. Feel-good metrics that don't measure growth or traction. Considering the proportion instead of the total visitors made me realize there was something here.

What’s next?

There is a lot of work to do. Data aggregation, display, website development, marketing, legal issues. Fun! It's satisfying to solve an issue rather than investigate its cause.

In the meantime, I’ve already written the first project update in another post. Continue reading it if you’d like to know more about the project itself! Shifting from Quantity to Quality — DbSchemaLibrary

Mark Schaefer

1 year ago

20 Fun Uses for ChatGPT

Our RISE community is stoked on ChatGPT. ChatGPT has countless uses.

It's early days. Companies are figuring out the legal and ethical implications of AI's content revolution. Using AI for everyday tasks is cool.

So I challenged RISE friends... Let's have fun and share non-obvious uses.

Onward!

1. Tweet

Jim MacLeod requested a design-themed Twitter thread from ChatGPT.

2. Novelize

Giuseppe Fratoni writes novels.

"This is amazing!" Impotent. I asked about writing, mystery novels, and jazz.

"Develop a mystery novel plot in which a disillusioned homicide detective hunts a serial killer who targets jazz musicians who play Autumn Leaves."

"I have:

Detective James Thompson is a seasoned veteran of the Homicide department, but lately he’s been feeling burnt out and disillusioned. His latest case, the murder of a young jazz pianist who was found brutally killed in his home, only serves to deepen his sense of despair … (much more provided)

3. Question-and-answer sessions

Bliss recommends:

You have a guest speaker and want to ask audience questions.

Pre-generate questions with ChatGPT. "And here's a question from our AI?"

The conference is about Western water scarcity. Water management expert. My AI question was...

Which desalination-related questions should I ask an expert?

ChatGPT said...

How is desalination currently being used around the world, and in what types of locations is it most effective?

What are the potential advantages and disadvantages of using desalination to provide fresh water?

What are some of the key challenges and limitations of desalination technology, and how are they being addressed?

4. Game-making

Richard's a board game expert. He suggests using ChatGPT to plan games. Note: Some people have started getting help solving crossword puzzle clues.

5. Matchmaking

Tanya Thompson thinks it helps dating. ChatGPT:

Here are some flirty first-message ideas for dating apps:

I noticed you from afar. I had to say hi and see if we clicked.

Your profile picture is gorgeous. I'm obsessed with your [Feature] and want to know you better.

I don't usually message first, but I had to. You're [Attractive Quality] and I can't wait to talk.

Hello. Your profile intimidates me. I'm starstruck by your [Attractiveness]. (Many instances)

5. Solitude/anxiety

ChatGPT excels at one-on-one conversations.

PepperBrooks: "I'm sad and lonely."

ChatGPT: I’m sorry to hear that you’re feeling sad and lonely. It’s natural to feel down sometimes, but it’s important to remember that you’re not alone. There are many people who care about you and want to help you. It can be helpful to talk to someone about your feelings, whether it’s a close friend, family member, or mental health professional.

Sometimes, just expressing your thoughts and emotions to someone else can help to alleviate your sadness and loneliness. It’s also important to take care of yourself and do things that make you happy, such as spending time with loved ones, pursuing a hobby, or exercising. These things can help to improve your mood and make you feel more connected to others. Remember that you are strong and capable, and that you can get through this difficult time.

Daniel Nestle asked ChatGPT to generate journal prompts to help him deal with anxiety.

Write down the things that are causing you anxiety at the moment.

Describe a situation in which you felt anxious and how you coped with it.

Reflect on how your thoughts and beliefs contribute to your anxiety.

Write a letter to your anxiety, expressing your feelings and concerns

Imagine yourself in a calm and peaceful place, and describe it in detail.

(many more items followed)

6. Names

Continued Daniel:

Also, naming ideas.

Me: Name this drink. Japanese gin, unsweetened tonic, lime, Szechuan pepper, grapefruit.

ChatGPT: How about “Sakura Sunrise?” The name incorporates the Japanese gin and the citrus flavors of grapefruit and lime, and the word “sunrise” suggests a fresh and invigorating start to the day. Alternatively, you could go with something like “Peppered Grapefruit Gin Fizz” to emphasize the unique flavor combination of Szechuan pepper and grapefruit.

7. Translation on the go

Monks recommends: ChatGPT is a perfect mobile translation tool. Say you need help in Italy.

ME: Translate to Italian

Where is the nearest gas station?

ChatGPT: “La mia auto sta per esaurire il carburante, mi puoi dire dove si trova la stazione di servizio più vicina?”

8. Fitness

Monks added:

ME: In 3 months, I want to run 5km. Plan out how I can do this.

ChatGPT: Here's a 3-month plan to run 5km.

Start by walking for 15–20 minutes each day to build up your endurance and get your body used to regular exercise.

After a week of walking, begin incorporating short jogs into your routine. Start by jogging for 1–2 minutes at a time, with walking breaks in between. Gradually increase the length of your jogs and reduce the amount of time you spend walking.

Once you can jog for 10 minutes without stopping, start increasing the distance of your runs. Aim to run 1km without stopping and then gradually increase the distance over time.

In addition to your regular runs, incorporate strength training and stretching into your routine to improve your overall fitness and reduce the risk of injury.

(more details continued on)

9. Integrations and coding

10. Teacher lesson plans

Spencer Crandall saved time.

This morning, I wondered who has the hardest job. Teachers.

Many students, little time. ChatGPT could handle lesson planning. I tried it and got this.

Lesson Title: The History and Impact of Artificial Intelligence

Students will understand the history and development of AI and the potential positive and negative impacts on society.

AI timeline AI technologies and applications

Overview:

Introduce the topic of artificial intelligence, and explain that it refers to the development of computer systems and algorithms that can simulate human intelligence and behavior.

Show the timeline of significant events in the history of AI, and discuss the key milestones and developments in the field.

Show examples of AI technologies and applications, and explain how they are used in various industries and fields.

Discuss the potential positive and negative impacts of AI on society, including its potential to improve productivity, efficiency, and safety, as well as its potential to create new challenges and concerns, such as job displacement and ethical issues.

(many more details followed)

11. Find podcast guests.

Joeri Billast suggested:

Who should be on my Web3 podcast?

ChatGPT: Web3 marketing experts, influencers, and thought leaders make great podcast guests. These include Web3 marketers, content creators, business owners, entrepreneurs, and technologists. You could invite Web3 startups and organizations to your podcast.

Please give examples.

ChatGPT: Ideal Web3 marketing podcast guests include:

Tim Berners-Lee, inventor of the World Wide Web

Chris Dixon, general partner at Andreessen Horowitz

Vitalik Buterin, founder of Ethereum

Joe Lubin, founder of ConsenSys

Susan Fowler, author of Whistleblower

(many other suggestions)

12. Comments and reviews on social media

Lisa Monk thinks ChatGPT can help retail staff with social media.

She asked ChatGPT to "review this restaurant"

POSITIVE CUSTOMER REVIEW: I absolutely love this place. We eat out a lot however this place has topped it all. Perfect meals, amazing staff and the timing for it all was great! Special thanks to Sophie B (you rock) From your guests all the way from the Gold Coast, THANK YOU

ChatGPT REPLY: Thank you so much for your kind words and for taking the time to write a review. We are delighted to hear that you had such a great experience at our restaurant. We are thrilled that you enjoyed your meal and the service provided by Sophie. It’s always a pleasure to serve guests visiting from the Gold Coast and we are grateful for your support. We look forward to welcoming you back again soon. Thank you again for your positive review.

CUSTOMER REVIEW: Most average food, there is a nice view, But that’s about it

ChatGPT: Thank you for your review. We apologize if your dining experience was not up to your expectations. We strive to provide top-quality food and service to all of our customers, and we will take your feedback into consideration as we continue to improve. Thank you for letting us know about your experience.

13. Crime-fighting

Zack Seipert said, "I researched some famous unsolved murders, and ChatGPT laid out the crime, suspects, clues, and more." I bet experienced detectives could solve some of these cold cases.

14. Reduce bias

Joanne Taylor struggles to remove biases from her writing. She asked ChatGPT for ideas.

I asked ChatGPT to check a text for racial bias, and it did well.

15. Furnishings

I designed a small, unique table using ChatGPT and MidJourney. I edited ChatGPT's idea for clarity and brevity before entering it in MidJourney.

Results:

16. Serialize a novel

Scott Scowcoft led ChatGPT through iterations of a story text to create serialized novel frames. You can view this here.

17. Interview preparation

I was on a sales podcast. Although this is marketing-related, it's not my forte. I asked ChatGPT for help with a sales interview. Response:

Here are some interview questions about the future of sales and how to answer them.

How will technology affect sales in the future?

Technology will impact sales in the coming years. Automation and AI could be used to handle routine tasks, and data analysis and CRM could become more important. E-commerce and online sales channels are also reshaping the sales landscape.

How will the salesperson's role change?

Future sales roles may change in response to changing customer needs and expectations. Salespeople must be knowledgeable and consultative to help customers navigate complex products and services. Increasing importance of data analysis and CRM tools will require salespeople to understand and use technology effectively.

Future sales professionals will need what skills?

Future salespeople will need many skills. Sales professionals must have strong communication and interpersonal skills, as well as product and service knowledge and the ability to use data analysis and CRM tools effectively. Adapting to changing customer needs and staying current on industry trends will also be important for sales success.

18. Gift ideas

I need Christmas gift ideas for my wife. I asked:

Suggest a gift idea under $200 for a middle-aged woman who loves The Eagles.

A middle-aged woman who loves The Eagles might like a vinyl record of "Hotel California" or "The Long Run." Many music fans enjoy the unique sound and experience of vinyl records.

Pair the record with a high-quality record player for a complete gift. The vinyl record and record player should fit within your $200 budget for an Eagles fan.

19. Explaining complex concepts

I entered: Explain quantum physics to me as if I were 10 years old.

Quantum physics is a branch of science that studies how tiny particles, like atoms and subatomic particles, behave. These particles are so small that they don’t always follow the same rules as the things we can see and touch every day.

For example, sometimes they can be in two places at the same time, or they can be friends with each other even if they are far apart. This might sound strange, but it’s what scientists have learned from studying these tiny particles. Quantum physics helps us understand how the world works at a very small scale, and it can also help us make new technologies, like computers that are much faster than the ones we have today.

20. AP-style editing

Blogs, journals, and books follow AP Style. I asked ChatGPT to "edit using AP Style" and it did a great job.

And then... "Write a catchy headline for a blog post about 16 ChatGPT uses"

Here are some possible blog post titles for 20 creative ChatGPT uses:

“20 Fun and Creative Ways to Use ChatGPT: From Games to Quizzes and More”

“The Ultimate Guide to Using ChatGPT for Entertainment and Creativity”

“20 Reasons to Use ChatGPT: How it Can Enhance Your Gaming, Storytelling, and More”

“20 Entertaining Uses for ChatGPT You Never Knew Were Possible”

“Unleash Your Imagination with ChatGPT: 16 Creative and Fun Ways to Use it”

In a previous post, I said ChatGPT will change marketing careers forever. I have never made a statement like that in my life. Even in the early days of the internet, people were cautious.

This technology was just released two weeks ago, and over a million people are already using it. This is the fastest technology adoption in history.

Today's post offers inventive and entertaining ideas, but it's just the beginning. ChatGPT writes code, music, and papers.