Simone Basso

3 years ago

How I set up my teams to be successful

After 10 years of working in scale-ups, I've embraced a few concepts for scaling Tech and Product teams.

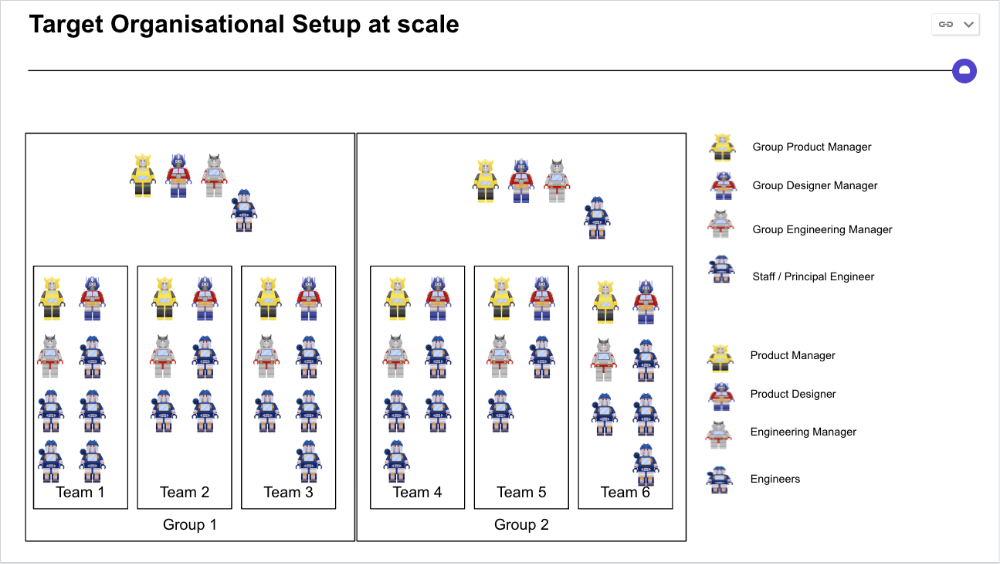

First, cross-functionalize teams. Product Managers represent the business, Product Designers the consumer, and Engineers build.

I organize teams of 5-10 individuals, following AWS's two-pizza team guideline, with a Product Trio guiding each.

If more individuals are needed to reach a goal, I group teams under a Product Trio.

With Engineering being the biggest group, Staff/Principal Engineers often support the Trio on cross-team technical decisions.

Product Managers, Engineering Managers, or Engineers in the team may manage projects (depending on the project or aim), but the trio is collectively responsible for the team's output and outcome.

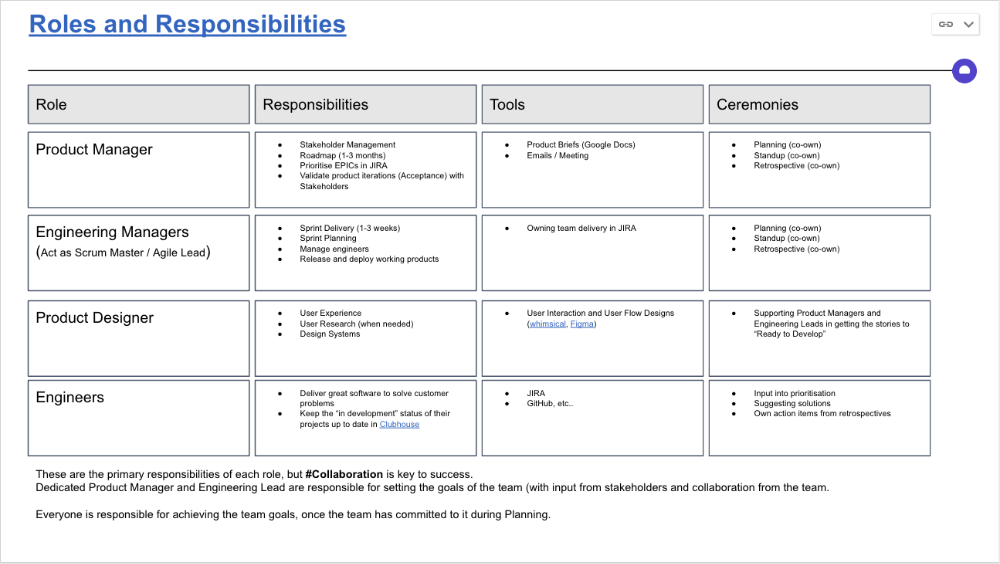

Once the Product Trio model is created, roles, duties, team ceremonies, and cooperation models must be clarified.

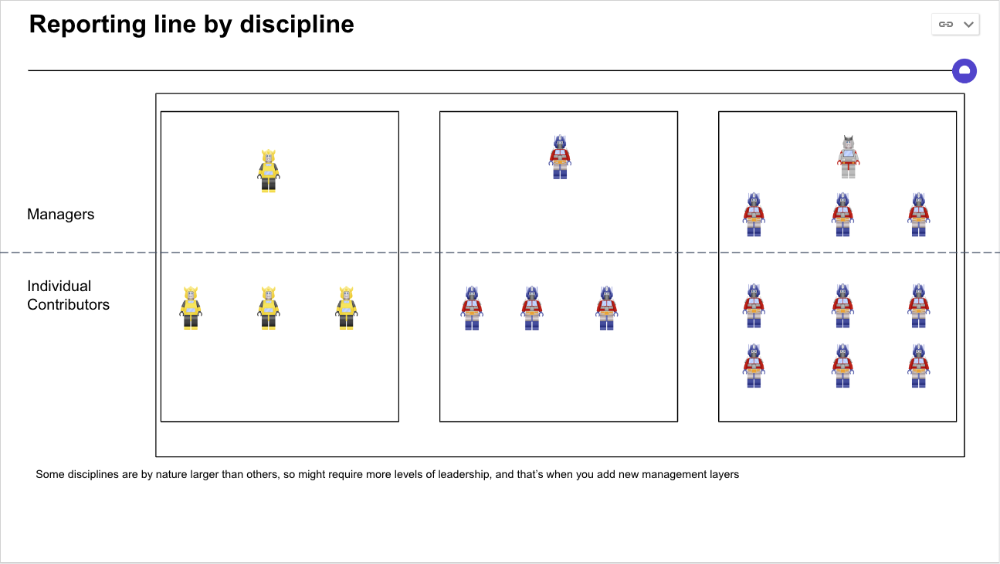

Keep reporting lines by discipline. Line managers are accountable for each individual's advancement, so it's crucial that they know the work in detail.

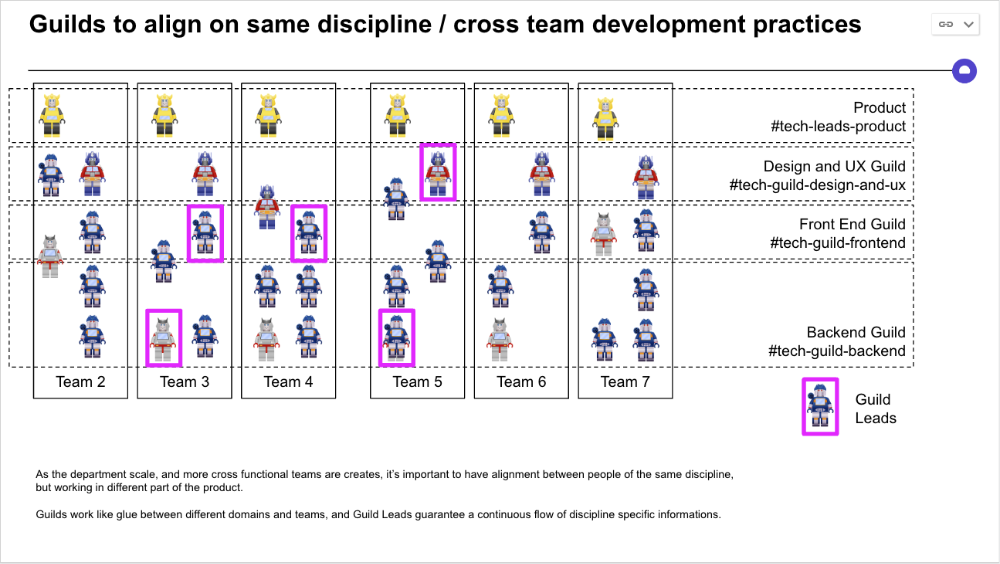

Cross-team collaboration becomes more important after 3 teams (15-30 people). Teams can easily diverge in how they write code, run ceremonies, and build products.

It becomes critical to establish cross-team groups, organized by discipline and skills, that share and agree on working practices.

I've taken a lot of my inspiration from the “Spotify Guild” model.

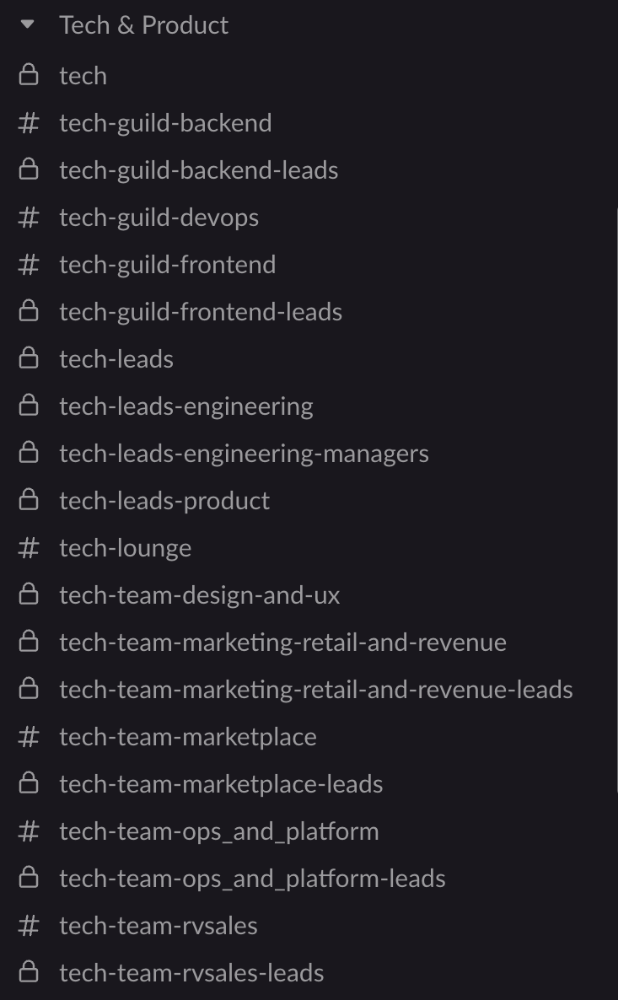

Last, establish a taxonomy for communication channels.

In Slack, I create one channel per team and one per guild (and one for me to have discussions with the team leads).

These are just some of the basic principles I follow to organize teams.

A book I particularly like about team types and how they interact with each other is Team Topologies (https://teamtopologies.com/).

Aaron Dinin, PhD

3 years ago

There Are Two Types of Entrepreneurs in the World

Make sure you are aware of your type!

Know why it's important.

The entrepreneur I was meeting with said, “I should be doing crypto, or maybe AI? Aren't those the hot spots? I should look there for a startup idea.”

I shook my head. Yes, they're exciting, but that doesn't mean they're best for you and your business.

“There are different types of entrepreneurs?” he asked.

I said "obviously." Two types, actually. Knowing what type of entrepreneur you are helps you build the right startup.

The two types of businesspeople

The best way for me to describe the two types of entrepreneurs is to start by telling you exactly the kinds of entrepreneurial opportunities I never get excited about: future opportunities.

In the early 1990s, my older brother showed me the World Wide Web and urged me to use it. Unimpressed, I returned to my Super Nintendo.

My roommate tried to get me to join Facebook as a senior in college. I remember thinking, This is dumb. Who'll use it?

In 2011, my best friend tried to convince me to buy bitcoin and I laughed.

Heck, a couple of years ago I had to buy a new car, and I never even considered buying something that didn’t require fossilized dinosaur bones.

I'm no visionary. I don't anticipate the future. I focus on the present.

This tendency makes me a problem-solving entrepreneur. I identify entrepreneurial opportunities by spotting flaws and/or inefficiencies in the world and devising solutions.

There are other ways to find business opportunities. Visionary entrepreneurs also exist. I don't mean visionary in the hyperbolic sense that implies world-changing impact. I mean visionary as an entrepreneur who identifies future technological shifts that will change how people work and live and create new markets.

Problem-solving and visionary entrepreneurs are equally good. But the two approaches to building companies are very different. Knowing the type of entrepreneur you are will help you build a startup that fits your worldview.

What is the distinction?

Let's use some simple hypotheticals to compare problem-solving and visionary entrepreneurship.

Imagine a city office building without nearby restaurants. Those office workers love to eat. Sometimes they'd rather eat out than pack a lunch. As an entrepreneur, you can solve the lack of nearby restaurants. You'd open a restaurant near that office, say a pizza parlor, and get customers because you solved the lack of nearby restaurants. Problem-solving entrepreneurship.

Imagine a new office building in a developing area with no residents or workers. In this scenario, a large office building is coming. The workers will need to eat then. As a visionary entrepreneur, you're excited about the new market and decide to open a pizzeria near the construction to meet demand.

Both possibilities involve the same product. You opened a pizzeria. How you launched that pizza restaurant and what will affect its success are different.

Why is the distinction important?

Let's say you opened a pizzeria near an office. You'll probably get customers. Because people are nearby and demand isn't being met, someone from a nearby building will stop in within the first few days of your pizzeria's grand opening. This makes solving the problem relatively risk-free. You'll get customers unless you're a fool.

But you're not guaranteed success, because the market you're targeting existed before you entered it. This means people in that market have already worked around the lack of nearby restaurants. Those office workers are used to bringing their own lunches. Why should your restaurant change their habits? Even when they eat out, they're used to traveling far. They've likely developed pizza preferences.

To be successful with your problem-solving startup, you must convince consumers to change their behavior, which is difficult.

Compare that with opening a pizza restaurant near a construction site. Once the building opens, workers won't have many preferences or standardized food-getting practices. Your pizza restaurant can become the incumbent quickly. You'll be the first restaurant in the area, so you'll gain a devoted following that makes your food a routine.

Great, right? It's easier than changing people's behavior. But the benefit comes with a risk: you're betting on a future that may not arrive. What if the builders run out of money? What if no one moves in? What if the building's occupants are the National Association of Pizza Haters? Then you've opened a pizza restaurant next to pizza haters.

Which kind of businessperson are you?

This isn't to say one type of entrepreneur is better than another. Each type of entrepreneurship requires different skills.

As my simple examples show, a problem-solving entrepreneur must operate in markets with established behaviors and habits. To be successful, you must be able to teach a market a new way of doing things.

Conversely, the challenge of being a visionary entrepreneur is that you have to be good at predicting the future and getting in front of that future before other people.

Both are difficult in different ways. So, smart entrepreneurs don't just chase opportunities. Smart entrepreneurs pursue opportunities that match their skill sets.

Benjamin Lin

3 years ago

I sold my side project for $20,000: 6 lessons I learned

How I monetized and sold an abandoned side project for $20,000

The Origin Story

I've always wanted to be an entrepreneur but never succeeded. I often had business ideas, made a landing page, and told my buddies. Never got customers.

In April 2021, I decided to try again with a new strategy. I noticed that I had trouble acquiring an initial set of customers, so I wanted to start by acquiring a product that had a small user base that I could grow.

I found a SaaS marketplace called MicroAcquire.com where you could buy and sell SaaS products. I liked Shareit.video, an online Loom-like screen recorder.

Shareit.video didn't generate revenue, but 50 people visited daily to record screencasts.

Purchasing a Failed Side Project

I eventually bought Shareit.video for $12,000 from its owner.

$12,000 was probably too much for a website without revenue or registered users.

I thought time was most important. I could have recreated the website, but it would take months. $12,000 would give me an organized code base and a working product with a few users to monetize.

I considered buying a screen recording website and trying to grow it versus buying a new car or investing in crypto with the $12K.

Buying the website would make me a real entrepreneur, which I wanted more than anything.

Putting down so much money would force me to commit to the project and prevent me from quitting too soon.

A Year of Development

I rebranded the website to be called RecordJoy and worked on it with my cousin for about a year. Within a year, we made $5000 and had 3000 users.

We spent $3500 on ads, hosting, and software to run the business.

AppSumo promoted our $120 Life Time Deal in exchange for 30% of the revenue.

We put RecordJoy on maintenance mode after 6 months because we couldn't find a scalable user acquisition channel.

We improved SEO and redesigned our landing page, but nothing worked.

Despite not being able to grow RecordJoy any further, I had already learned so much from working on the project so I was fine with putting it on maintenance mode. RecordJoy still made $500 a month, which was great lunch money.

Getting Taken Over

One of our customers emailed me with some feature requests and I replied that we weren’t going to add any more features in the near future. They asked if we'd sell.

We got on a call with the customer and I asked if he would be interested in buying RecordJoy for $15k. The customer wanted to pay around $8k but would consider it.

Since we were negotiating with one buyer, we put RecordJoy on MicroAcquire to see if there were other offers.

We quickly received 10+ offers. We got $18.5k. There was also about $1000 in AppSumo that we could not withdraw, so we agreed to transfer that over for $600 since about 40% of our sales on AppSumo usually end up being refunded.

Lessons Learned

First, create an acquisition channel

We couldn't discover a scalable acquisition route for RecordJoy. If I had to start another project, I'd develop a robust acquisition channel first. It might be LinkedIn, Medium, or YouTube.

Purchase Power of the Buyer Affects Acquisition Price

Some of the buyers we spoke to were individuals looking to buy side projects, while others were companies looking to launch a new product category. Individual buyers had smaller budgets than organizations.

Customers of AppSumo vary.

AppSumo customers value lifetime deals and low prices, which may not be a good way to build a business with recurring revenue. Designed for AppSumo users, your product may not connect with other users.

Try to increase acquisition trust

Acquisitions often fail. The buyer can get cold feet, cease communicating, or run away with your stuff. Trust between buyer and seller helps ensure a smooth asset exchange. Our first acquisition meeting was unpleasant and the price negotiation was tight. In later meetings, we spent the first few minutes trying to get to know the buyer’s motivations and background before jumping into the negotiation, which helped build trust.

Operating expenses can reduce your earnings.

Monitor operating costs. We were really happy when we withdrew the $5000 we made from AppSumo and Stripe until we realized that we had spent $3500 in operating fees. Spend money on software and consultants to help you understand what to build.
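As a rough sketch of the arithmetic behind this lesson, the figures quoted in this post can be tallied as below. It assumes the $18.5k offer was the one accepted and that the $5000 is revenue before the $3500 of operating costs; the constant and function names are made up for illustration.

```python
# Figures quoted in the post, in whole US dollars.
PURCHASE_PRICE = 12_000    # paid for Shareit.video
REVENUE = 5_000            # withdrawn from AppSumo and Stripe
OPERATING_COSTS = 3_500    # ads, hosting, and software
SALE_PRICE = 18_500        # assumption: the $18.5k offer was the one accepted
APPSUMO_BALANCE = 1_000    # balance that could not be withdrawn
REFUND_PERCENT = 40        # share of AppSumo sales that usually gets refunded


def expected_appsumo_value(balance: int, refund_percent: int) -> int:
    """Value of the locked AppSumo balance after expected refunds."""
    return balance * (100 - refund_percent) // 100


def net_gain() -> int:
    """Total cash in minus total cash out over the life of the project."""
    cash_in = REVENUE + SALE_PRICE + expected_appsumo_value(APPSUMO_BALANCE, REFUND_PERCENT)
    cash_out = PURCHASE_PRICE + OPERATING_COSTS
    return cash_in - cash_out


if __name__ == "__main__":
    print("Operating profit:", REVENUE - OPERATING_COSTS)
    print("AppSumo transfer:", expected_appsumo_value(APPSUMO_BALANCE, REFUND_PERCENT))
    print("Net gain:", net_gain())
```

Under those assumptions, operating costs consume most of the revenue, and nearly all of the gain comes from the sale itself.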

Don't overspend on advertising

We invested $1500 in Google Ads but made little money. For a side project, it’s better to focus on organic traffic from SEO rather than paid ads unless you know your ads are going to have a positive ROI.

Arthur Hayes

3 years ago

Contagion

(The author's opinions should not be used to make investment decisions or as a recommendation to invest.)

The pandemic and social media pseudoscience have made us all epidemiologists, for better or worse. Flattening the curve, social distancing, lockdowns—remember? Some of you may remember R0 (R naught), the number of healthy humans the average COVID-infected person infects. Thankfully, the world has moved on from Greater China's nightmare. Politicians have refocused their talent for misdirection on getting their constituents invested in the war for Russian Reunification or Russian Aggression, depending on your side of the iron curtain.

Humanity battles two fronts. A war against an invisible virus (I know your Commander in Chief might have told you COVID is over, but viruses don't follow election cycles and their economic impacts linger long after the last rapid-test clinic has closed); and an undeclared World War between US/NATO and Eurasia/Russia/China. The fiscal and monetary authorities' current policies aim to mitigate these two conflicts' economic effects.

Since all politicians are short-sighted, they usually print money to solve most problems. Printing money is the easiest and fastest way to solve most problems because it can be done immediately without much discussion. The alternative—long-term restructuring of our global economy—would hurt stakeholders and require an honest discussion about our civilization's state. Both of those requirements are non-starters for our short-sighted political friends, so whether your government practices capitalism, communism, socialism, or fascism, they all turn to printing money-ism to solve all problems.

Free money stimulates demand, so people buy crap. Overbuying shit raises prices. Inflation. Every nation has food, energy, or goods inflation. The once-docile plebes demand action when the latter two subsets of inflation rise rapidly. They will be heard at the polls or in the streets. What would you do to feed your crying hungry child?

During the pandemic, the major global central banks (the Fed, PBOC, BOJ, ECB, and BOE) printed money to aid their governments. They then worried about inflation and promised to remove fiat liquidity and tighten monetary conditions.

Imagine Nate Diaz's round-house kick to the face. The financial markets probably felt that way when the US and a few others withdrew fiat wampum. Sovereign debt markets suffered a near-record bond market rout.

The undeclared WW3 is intensifying, with recent gas pipeline attacks. The global economy is already struggling, and credit withdrawal will worsen the situation. The next pandemic, the Yield Curve Control (YCC) virus, is spreading as major central banks backtrack on inflation promises. All central banks eventually fail.

Here's a scorecard.

BOE: Recently reverted to Quantitative Easing (QE) in order to save its financial system.

BOJ: Continuing YCC to save its banking system and enable affordable government borrowing.

ECB: Printing money to buy weak EU member bonds, but will soon start Quantitative Tightening (QT).

PBOC: Restarting the money printer to give banks liquidity to support the falling residential property market.

Fed: Raising rates and shrinking its balance sheet through QT.

80% of the world's biggest central banks are printing money again. Only the Fed has remained steadfast in the face of a financial market bloodbath, determined to end the inflation for which it is at least partially responsible—the culmination of decades of bad economic policies and a world war.

YCC printing is the worst for fiat currency and society because it requires central banks to fix prices in a multi-trillion-dollar bond market. YCC central banks promise to infinitely expand their balance sheets to keep a certain interest rate metric below an unnatural ceiling. The market always wins, crushing humanity with inflation.

BOJ's YCC policy is longest-standing. The BOE joined them, and my essay this week argues that the ECB will follow. The ECB joining YCC would make 60% of major central banks follow this terrible policy. Since the PBOC is part of the Chinese financial system, the number could be 80%. The Chinese will lend any amount to meet their economic activity goals.

The BOE committed to a 13-week, GBP 65bn bond price-fixing operation. However, the BOE's YCC may return. If you lose to the market, you're stuck. Since the BOE has announced that it will buy your Gilts at inflated prices, why would you not sell them all? Market participants taking advantage of this policy will only push the bank further into the hole it dug for itself, so I expect the BOE to re-up this program, and I count it among the YCC banks.

In a few trading days, the BOE went from a bank determined to slay inflation by raising interest rates and QT to buying an unlimited amount of UK Gilts. I expect the ECB to be dragged kicking and screaming into a similar policy. Spoiler alert: big daddy Fed will eventually die from the YCC virus.

Threadneedle St, London EC2R 8AH, UK

Before we discuss the BOE's recent missteps: a chatroom member called the British royal family the Kardashians with Crowns, which made me laugh. The attention the royals get saddens me. If the public were as interested in energy and economic policies as they are in how the late Queen treated Meghan, Duchess of Sussex, UK politicians might not have been able to get away with energy and economic fairy tales.

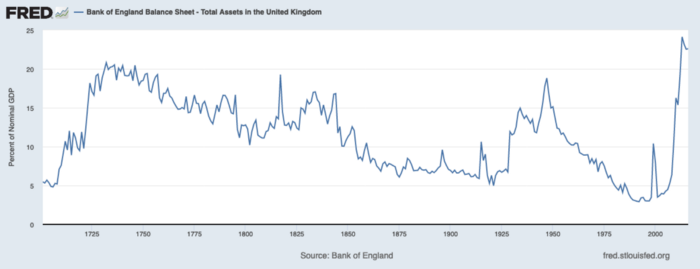

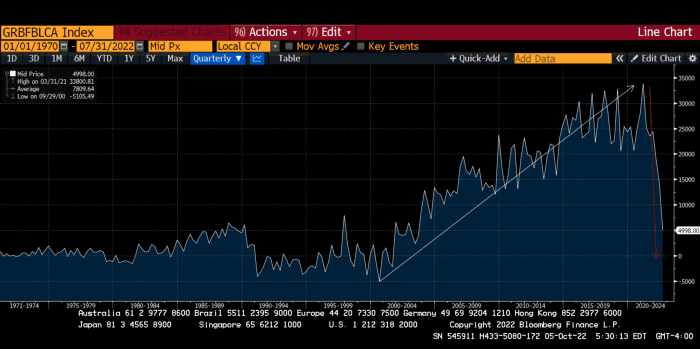

The BOE printed money to recover from COVID, as all good central banks do. For historical context, this chart shows the BOE's total assets as a percentage of GDP since its founding in the 18th century.

The UK has had a rough three centuries. Pandemics, empire wars, civil wars, world wars. Even so, the BOE's recent money printing was its most aggressive ever!

BOE Total Assets as % of GDP (white) vs. UK CPI

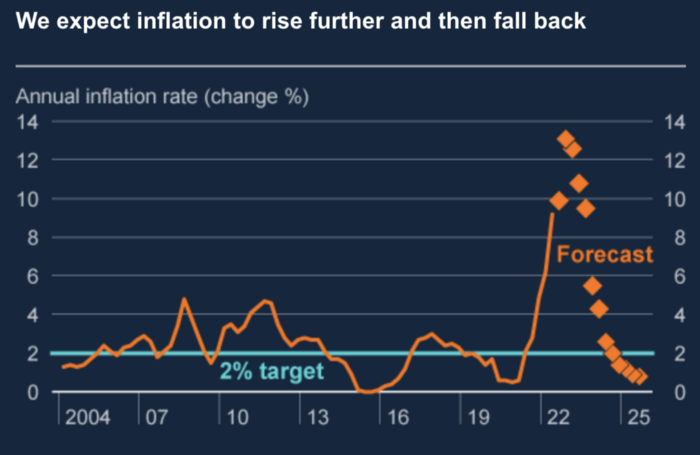

Now, inflation responded slowly to the bank's most aggressive monetary loosening. King Charles wishes the gold line above showed his popularity, but it shows his subjects' suffering.

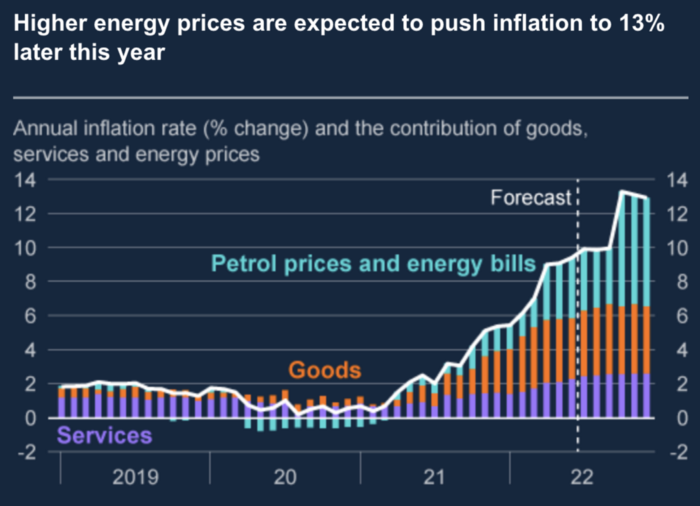

The BOE recognized early that its money printing caused runaway inflation. In its August 2022 report, the bank predicted that inflation would reach 13% by year end before aggressively tapering in 2023 and 2024.

Aug 2022 BOE Monetary Policy Report

The BOE was the first major central bank to reduce its balance sheet and raise its policy rate to help.

The BOE first raised rates in December 2021. Back then, JayPow wasn't even considering raising rates.

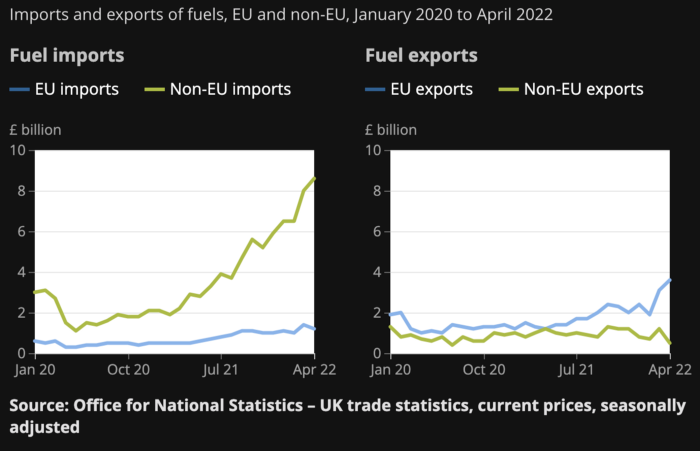

UK policymakers, like most developed nations, believe in energy fairy tales. Namely, that the developed world, which grew in lockstep with hydrocarbon use, could switch to wind and solar by 2050. The UK's energy import bill has grown while coal, North Sea oil, and possibly stranded shale oil have been ignored.

WW3 is an economic war that is balkanizing energy markets, which will continue to inflate. A nation that imports energy and has printed the most money in its history cannot avoid inflation.

The chart above shows that energy inflation is a major cause of plebe pain.

The UK is hit by a double whammy: the BOE must remove credit to reduce demand, and energy prices must rise due to WW3 inflation. That's not economic growth.

Boris Johnson was knocked out by his country's poor economic performance, not his lockdown at 10 Downing St. Prime Minister Truss and her merry band of fools arrived with the tried-and-true government remedy: goodies for everyone.

She released a budget full of economic stimulants. She cut corporate and individual taxes for the rich. She plans to give poor people vouchers for higher energy bills. Woohoo! Margaret Thatcher's new pants suit.

My buddy Jim Bianco said the problem with Truss's budget is that it works. It will boost activity at a time when inflation is over 10%. Truss's budget didn't include austerity measures like tax increases or spending cuts, which the bond market wanted. The bond market protested.

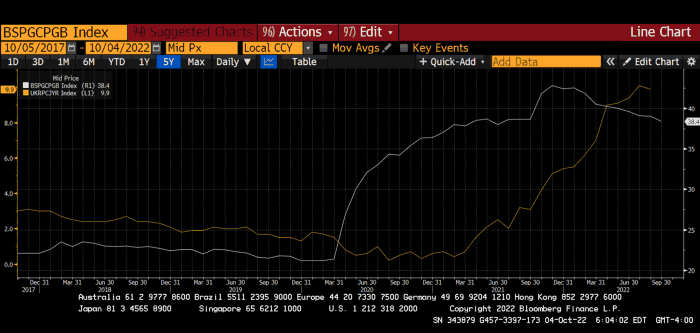

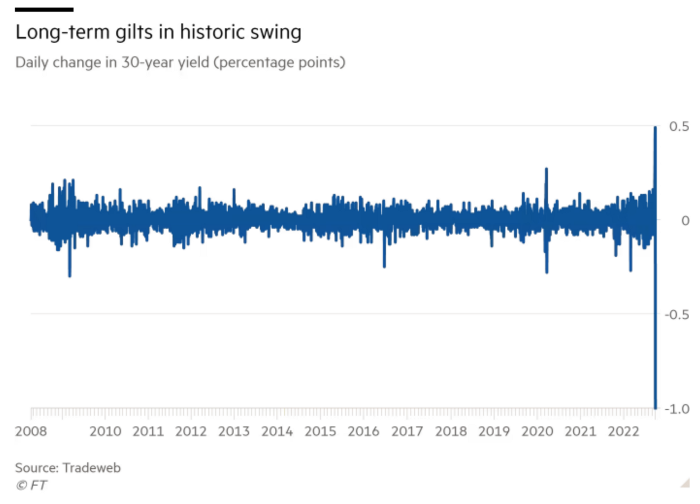

30-year Gilt yield chart. Yields spiked the most ever after Truss announced her budget, as shown. The Gilt market is the longest-running bond market in the world.

The Gilt market showed the pole who's boss with Cardi B.

Before this, the BOE was super-committed to fighting inflation. To their credit, they raised short-term rates and shrank their balance sheet. However, rapid yield rises threatened to destroy the entire highly leveraged UK financial system overnight, forcing them to change course.

Accounting gimmicks allowed by regulators for pension funds posed a systemic threat to the UK banking system. UK pension funds could use interest rate market levered derivatives to match liabilities. When rates rise, short rate derivatives require more margin. The pension funds spent all their money trying to pick stonks and whatever else their sell side banker could stuff them with, so the historic rate spike would have bankrupted them overnight. The FT describes BOE-supervised chicanery well.

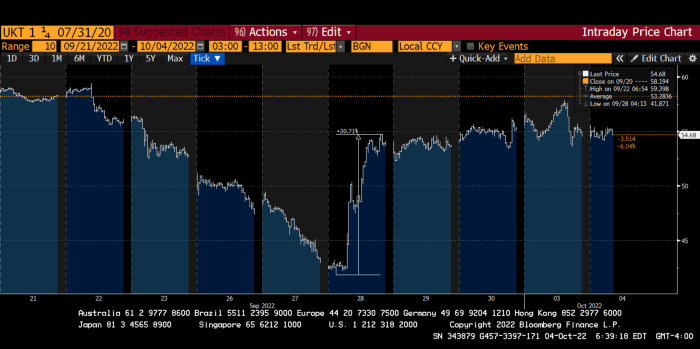

To avoid a financial apocalypse, the BOE in one morning abandoned all their hard work and started buying unlimited long-dated Gilts to drive yields down.

Another reminder to never fight a central bank. The 30-year Gilt is shown above. After the BOE restarted the money printer on September 28, this bond rose 30%. Thirty-fucking-percent! Developed market sovereign bonds rarely move that much in a day. You're invested in His Majesty's government obligations, not a Chinese property developer's offshore USD bond.

The political need to give people goodies to help them fight the terrible economy ran into a financial reality. The central bank protected the UK financial system from asset-price deflation because, like all modern economies, it is debt-based and highly levered. As bad as it is, inflation is not their top priority. The BOE example demonstrated that. To save the financial system, they abandoned almost a year of prudent monetary policy in a few hours. They also started the endgame.

Let's play Central Bankers Say the Darndest Things before we go to the continent (and sorry if you live on a continent other than Europe, but you're not culturally relevant).

Pre-meltdown BOE output:

FT, October 17, 2021: On Sunday, the Bank of England governor warned that it must act to curb inflationary pressure, ignoring financial market moves that have priced in the first interest rate increase before the end of the year.

Governor Andrew Bailey, speech on July 19, 2022: Our 2% inflation target is unwavering. We'll do our job.

MPC monetary policy announcement, August 4, 2022: According to its mandate, the MPC will sustainably return inflation to 2% in the medium term.

Catherine Mann, MPC member, speech on September 5, 2022: Fast and forceful monetary tightening, possibly followed by a hold or reversal, is better than gradualism because it promotes inflation expectations' role in bringing inflation back to 2% over the medium term.

When their financial system nearly collapsed in one trading session, they said:

The Bank of England's Financial Policy Committee warned on 28 September that gilt market dysfunction threatened UK financial stability. It advised action and supported the Bank's urgent gilt market purchases for financial stability.

Apparently the market works when prices go up but is dysfunctional when they go down. Is my crypto portfolio dysfunctional enough to get a BOE bailout?

Next, the EU and ECB. The ECB is also fighting inflation, but it will also succumb to the YCC virus for the same reasons as the BOE.

Frankfurt am Main, ECB Tower, Sonnemannstraße 20, 60314

Only France and Germany matter economically in the EU. Modern European history has focused on keeping Germany and Russia apart. German manufacturing and cheap Russian goods could change geopolitics.

France created the EU to keep Germany down, and the Germans only cooperated because of WWII guilt. France's interests are shared by the US, which lurks in the shadows to prevent a Germany-Russia alliance. A weak EU benefits US politics. Avoid unification of Eurasia. (I paraphrased daddy Felix because I thought quoting a large part of his most recent missive would get me spanked.)

As with everything, understanding Germany's energy policy is the best way to understand why the German economy is fundamentally fucked and why that spells doom for the EU. Germany, the EU's main economic engine, is being crippled by high energy prices, threatening a depression. This economic downturn threatens the union. The ECB may have to abandon plans to shrink its balance sheet and switch to YCC to save the EU's unholy political union.

France did the smart thing and went all in on nuclear energy, which is rare in geopolitics. 70% of electricity is nuclear-powered. Their manufacturing base can survive Russian gas cuts. Germany cannot.

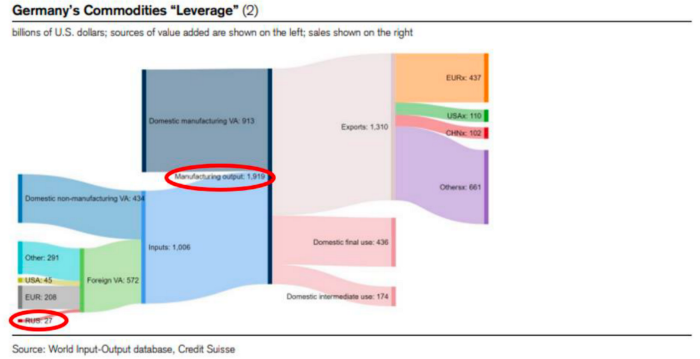

My boy Zoltan made this great graphic showing how screwed Germany is as cheap Russian gas leaves the industrial economy.

$27 billion of Russian gas powers almost $2 trillion of German economic output, a 75x energy leverage. The German public was duped into believing the same energy fairy tales as their politicians, and they overwhelmingly allowed the Green party to dismantle any efforts to build a nuclear energy ecosystem over the past several decades. Germany, unlike France, must import expensive American and Qatari LNG via supertankers due to Nordstream I and II pipeline sabotage.

American gas exports to Europe are touted by the media. Gas is cheap because America isn't the Western world's swing producer. If gas prices rose domestically in America, the plebes would demand the end of exports to avoid paying more to heat their homes.

German goods would cost much more in this scenario. German producer prices rose 46% YoY in August. The German current account is rapidly approaching zero and will soon be negative.

German PPI Change YoY

German Current Account

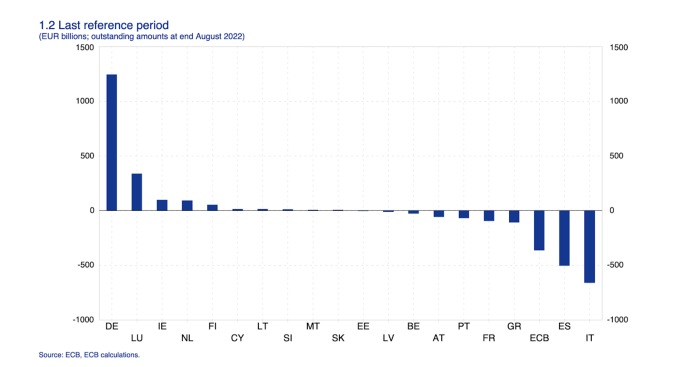

The reason this matters is a curious construction called TARGET2. Let’s hear from the horse’s mouth what exactly this beast is:

TARGET2 is the real-time gross settlement (RTGS) system owned and operated by the Eurosystem. Central banks and commercial banks can submit payment orders in euro to TARGET2, where they are processed and settled in central bank money, i.e. money held in an account with a central bank.

Source: ECB

Let me explain this in plain English for those unfamiliar with economic dogma.

This chart shows intra-EU credits and debits, known as TARGET2 balances. Germany, Europe's powerhouse, is owed money. Greeks buy G-wagons with IOUs. The G-wagon pickup truck is badass.

If all EU countries had their own fiat currencies, the Deutsche Mark would be stronger than the Italian Lira, according to the chart above. If Europe had to buy goods from non-EU countries, the Euro would be much weaker. In other federal-provincial-state political systems, credits and debits between smaller political units smooth out imbalances. Being both a financial and a fiscal union allows this. The EU is only a financial union, so the centre cannot force the periphery to settle their imbalances.

Greece has never had to buy Fords or Kias instead of BMWs, but what if Germany had to shut down its auto manufacturing plants due to energy shortages?

Italians have done well buying ammonia from Germany rather than China, but what if BASF had to close its Ludwigshafen facility due to a lack of affordable natural gas?

I think you're seeing the issue.

Instead of Germany, EU countries would owe foreign producers like America, China, South Korea, Japan, etc. Since these countries aren't tied into an uneconomic union for politics, they'll demand hard fiat currency like USD instead of Euros, which have become toilet paper (or toilet plastic).

Keynesian economists have a simple solution for politicians who can't afford market prices. Government debt can maintain production. The debt covers the difference between what a business can afford and the international energy market price.

Germans are monetary policy conservative because of the Weimar Republic's hyperinflation. The Bundesbank is the only thing preventing ECB profligacy. Germany must print its way out without cheap energy. Like other nations, they will issue more bonds for fiscal transfers.

More Bunds mean lower prices. Without German monetary discipline, the Euro would have become a trash currency like any other emerging market that imports energy and food and has uncompetitive labor.

Bunds price all EU country bonds. The ECB's money printing is designed to keep the spread of weak EU member bonds vs. Bunds low. Everyone falls with Bunds.

Like the UK, German politicians seeking re-election will likely cause a Bunds selloff. Bond investors will understandably reject their promises of goodies for industry and individuals to offset the lack of cheap Russian gas. Long-dated Bunds will be smoked like UK Gilts. The ECB will face a wave of ultra-levered financial players who will go bankrupt if they mark to market their fixed income derivatives books at higher Bund yields.

Some treats for the people: Germany will spend 200B to help consumers and businesses cope with energy prices, including promoting renewable energy.

That, ladies and germs, is why the ECB will immediately abandon QT, move to a stop-gap QE program to normalize the Bund and every other EU bond market, and eventually graduate to YCC as the market vomits bonds of all stripes into Christine Lagarde's loving hands. She probably has soft hands.

The 30-year Bund market has noticed Germany's economic collapse. 2021 yields skyrocketed.

30-year Bund Yield

ECB Says the Darndest Things:

Because inflation is too high and likely to stay above our target for a long time, we took today's decision and expect to raise interest rates further. (Christine Lagarde, ECB Press Conference, Sept 8)

The Governing Council will adjust all of its instruments to stabilize inflation at 2% over the medium term. (ECB Monetary Decision, July 21)

Everyone struggles with high inflation. The Governing Council will ensure medium-term inflation returns to two percent. (ECB Press Conference, June 9)

I'm excited to read the after. Like the BOE, the ECB may abandon their plans to shrink their balance sheet and resume QE due to debt market dysfunction.

Eighty Percent

I like my YCC like my dark chocolate: over 80%. ;)

Can 80% of the world's major central banks' QE and/or YCC overcome Sir Powell's toughness on fungible risky asset prices?

Gold and crypto are fungible global risky assets. Satoshis and gold bars are the same in New York, London, Frankfurt, Tokyo, and Shanghai.

As more Euros, Yen, Renminbi, and Pounds are printed, people will move their savings into Dollars or other stores of value. As the Fed raises rates and reduces its balance sheet, the USD will strengthen. Gold/EUR and BTC/JPY may also attract buyers.

Gold and crypto markets are much smaller than the trillions in fiat money that will be printed, so they will appreciate in non-USD currencies. But we trade the global, or USD, price, so these flows matter only through arbitrage. Arbitrage occurs when BTC/EUR rises faster than EUR/USD. Here is how it works:

A USD-based investor notices that BTC is expensive in EUR terms.

This investor borrows USD, then sells the USD to buy BTC.

After that, they sell BTC and buy EUR.

Then they choose to sell EUR and buy USD.

The investor receives their profit after repaying the USD loan.

This triangular FX arbitrage will align the global/USD BTC price with the elevated EUR, JPY, CNY, and GBP prices.
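The round trip described above can be sketched in a few lines of Python. This is a minimal illustration, not a trading tool: it ignores fees, loan interest, and slippage, and the prices in the usage example are hypothetical numbers chosen only to make the arithmetic easy to follow.

```python
def triangular_arbitrage_profit(usd_borrowed: float,
                                btc_usd: float,
                                btc_eur: float,
                                eur_usd: float) -> float:
    """USD profit from the loop USD -> BTC -> EUR -> USD.

    btc_usd: price of 1 BTC in USD
    btc_eur: price of 1 BTC in EUR
    eur_usd: price of 1 EUR in USD
    Fees, loan interest, and slippage are ignored (simplifying assumption).
    """
    btc = usd_borrowed / btc_usd     # sell the borrowed USD, buy BTC
    eur = btc * btc_eur              # sell BTC, buy EUR
    usd = eur * eur_usd              # sell EUR, buy USD
    return usd - usd_borrowed        # what is left after repaying the loan


if __name__ == "__main__":
    # Hypothetical prices: BTC is "expensive" in EUR terms because
    # 21,000 EUR * 0.98 USD/EUR is more than the 20,000 USD price.
    profit = triangular_arbitrage_profit(100_000, 20_000, 21_000, 0.98)
    print(f"Profit: {profit:,.2f} USD")
```

The loop only pays while the BTC price implied by the EUR leg (`btc_eur * eur_usd`) is above `btc_usd`. As traders buy BTC with USD to exploit the gap, the USD price gets pushed up toward the implied price and the profit shrinks to zero.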

Even if the Fed continues QT, which I doubt they can do past early 2023, small stores of value like gold and Bitcoin may rise as non-Fed central banks get serious about printing money.

“Arthur, this is just more copium,” you might retort.

Patience. This takes time. Economic and political forcing functions take time. The BOE example shows that bond markets will reject politicians' policies to appease voters. Decades of bad energy policy have no immediate fix. Money printing is the only politically viable option. Bond yields will rise as bond markets see more stimulative budgets, and the over-leveraged fiat debt-based financial system will collapse quickly, followed by a monetary bailout.

America has enough food, fuel, and people. China, Europe, Japan, and the UK suffer. America can be autonomous. Thus, the Fed can prioritize domestic political inflation concerns over supplying the world (and most of its allies) with dollars. A steady flow of dollars allows other nations to print their currencies and buy energy in USD. If the strongest player wins, everyone else loses.

I'm making a GDP-weighted index of these five central banks' money printing. When ready, I'll share its rate of change. This will show when the 80%'s money printing exceeds the Fed's tightening.
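A rough sketch of what such an index could look like in Python is below. Nothing here is the actual index: the bank list beyond the ECB and BOE is assumed from the currencies mentioned above, and every GDP figure and balance-sheet change is a placeholder, not real data.

```python
def gdp_weighted_change(balance_sheet_change_pct, gdp):
    """GDP-weighted average of each central bank's balance-sheet change (in %)."""
    total_gdp = sum(gdp.values())
    return sum(balance_sheet_change_pct[bank] * gdp[bank] for bank in gdp) / total_gdp


# Placeholder inputs (NOT real data): GDP in arbitrary units,
# balance-sheet change in % over some period.
gdp = {"Fed": 25, "ECB": 14, "BOJ": 5, "PBOC": 18, "BOE": 3}
change = {"Fed": -2.0, "ECB": 1.0, "BOJ": 3.0, "PBOC": 2.0, "BOE": 1.0}

index = gdp_weighted_change(change, gdp)
# A positive reading means the other four banks' printing outweighs the Fed's tightening.
print(index > 0)  # True
```

The rate of change of this number over time, rather than its level, is what the index would track.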

Cory Doctorow

3 years ago

The current inflation is unique.

New Stiglitz just dropped.

Here's the inflation story everyone believes (warning: it's false): America gave the poor too much money during the recession, and now the economy is awash with free money, which made them so rich they're refusing to work, meaning the economy isn't making anything. Prices are soaring due to increased cash and missing labor.

Lawrence Summers says there's only one answer. We must impoverish the poor: raise interest rates, cause a recession, and eliminate millions of jobs, until the poor are stripped of their undeserved fortunes and return to work.

https://pluralistic.net/2021/11/20/quiet-part-out-loud/#profiteering

This is nonsense. Countries around the world suffered inflation during and after lockdowns, whether or not they gave out humanitarian money to keep people from starvation. America has slightly greater inflation than other OECD countries, but it's not due to big relief packages.

The Causes of and Responses to Today's Inflation, a Roosevelt Institute report by Nobel-winning economist Joseph Stiglitz and macroeconomist Ira Regmi, debunks this bogus inflation story and offers a more credible explanation for inflation.

https://rooseveltinstitute.org/wp-content/uploads/2022/12/RI_CausesofandResponsestoTodaysInflation_Report_202212.pdf

Sharp interest rate hikes exacerbate the slump and increase inflation, the authors argue. They compare monetary policy inflation cures to medieval bloodletting, where doctors repeated the same treatment until the patient recovered (for which they received credit) or died (which was more likely).

Let's discuss bloodletting. Inflation hawks warn of the wage price spiral, when inflation rises and powerful workers bargain for higher pay, driving up expenses, prices, and wages. This is the fairy-tale narrative of the 1970s, and it's true except that OPEC's embargo drove up oil prices, which produced inflation. Oh well.

Let's be generous to seventies-haunted inflation hawks and say we're worried about a wage-price spiral. Fantastic news: there isn't one. Real wages are 2.3% lower than they were in Oct 2021, a gap that peaked at 4.8% in June.

Why did America's powerful workers take a paycut rather than demand inflation-based pay? Weak unions, globalization, economic developments.

Workers don't expect inflation to rise, so they're not requesting inflationary hikes. Inflationary expectations have remained moderate, consistent with our data interpretation.

https://www.newyorkfed.org/microeconomics/sce#/

Nor are workers on a spending spree. Working people see surplus savings as wealth and spend it gradually over their lives, despite rising demand. People may have saved money by staying in during the lockdown, but they don't eat out every night to make up for it. Instead, they keep those savings as precautionary balances. This is why the economy is lagging.

What people do spend from their pandemic savings goes largely to traded goods (basically, imports) rather than non-traded goods. Imports don't multiply like domestic purchases. If you buy a loaf of bread from the corner baker for $1, they spend it at the tavern across the street, and the tavern keeper spends it again nearby, that dollar generates $3 in economic activity. A dollar spent on foreign goods leaves the country, and any multiplier effect happens there, not in the US.

Wages are only marginally higher. The Employment Cost Index (ECI) is up 1.6% from 2019. Almost all gains went to the lowest-paid 25% of Americans. Contrary to the inflation worry about too much savings, these workers don't make enough to save, even post-pandemic.

Recreation and transit spending are at or below pre-pandemic levels. Food and hotel spending is higher (which doesn’t mean we’re buying more food than we were in 2019, just that it costs more).

What causes inflation if not greedy workers, free money, and high demand? The most expensive domestic goods produce the biggest revenues for their manufacturers. They charge you more without paying their workers or suppliers more.

The largest price-gougers are funneling their earnings to rich people who store it offshore through stock buybacks and dividends. A $1 billion stock buyback doesn't buy $1 billion in bread.

Five factors influence US inflation today:

I. Price rises for energy and food;

II. Shifts in consumer tastes;

III. Supply interruptions (mainly autos);

IV. Increased rents (due to telecommuting);

V. Monopoly (AKA price-gouging).

None can be remedied by raising interest rates or laying off workers.

Russia's invasion of Ukraine, omicron, and China's Zero Covid policy all disrupted the flow of food, energy, and production inputs. Prices went up because we made less.

After Russia invaded Ukraine, oil prices spiked, and sanctions made it worse. But that was February. By October, oil prices had returned to pre-pandemic, 2015 levels, thanks to global economic adjustments that include a shift to renewables. Every new renewable installation reduces oil consumption and affects oil prices.

High food prices have a simple solution. The US and EU have bribed farmers not to produce for 50 years. If the war continues, this program may end, and food prices may decline.

Demand changes. We want different things than in 2019, not more. During the lockdown, people substituted goods. Half of the US toilet-paper supply in 2019 was on commercial-sized rolls, which are made in different mills, from different stock, than household toilet paper.

Lockdown pushed toilet paper demand to residential rolls, causing shortages (the TP hoarding story was just another pandemic urban legend). Because supermarkets don't have accounts with commercial paper distributors, ordering from the languishing commercial stock was difficult. Substituting Kleenex and paper towels spread the shortages further.

All of that drove up costs in numerous product categories, and there were many more cases like it. These increases are transient, caused by supply chain inefficiencies that are resolving.

Demand for frontline staff saw a one-time repricing of pay, which is being recouped as we speak.

Illnesses. Brittle, hollowed-out global supply chains aggravated this. The constant pursuit of cheap labor and minimal regulation by the monopolies that dominate most sectors means things are manufactured in far-flung locations. Financialization means any surplus capital assets were sold off years ago, leaving firms with little production slack. After the pandemic, several of these systems took years to restart.

Automobiles are to blame. Financialization and monopolization consolidated microchip and auto production in Taiwan and China. When the lockdowns came, the global automakers cancelled their chip orders, and when they placed fresh orders, they were at the back of the line.

That drove up car prices, which is why the US has slightly higher inflation than other wealthy countries: its economy is car-centric. Automobile prices account for 9% of the US CPI, compared with 3.6% in France.
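The weights matter because a category's contribution to headline inflation is roughly its CPI weight times its price change. Here is a small Python sketch of that arithmetic; the 10% rise in car prices is a hypothetical figure picked for illustration, and only the 9% and 3.6% weights come from the text.

```python
def cpi_contribution(weight_pct, price_rise_pct):
    """Percentage points added to headline CPI by one category."""
    return weight_pct * price_rise_pct / 100


car_price_rise = 10.0  # hypothetical: the same 10% rise in car prices in both countries

us = cpi_contribution(9.0, car_price_rise)      # US: cars are 9% of the CPI
france = cpi_contribution(3.6, car_price_rise)  # France: cars are 3.6% of the CPI

print(f"US: +{us:.2f} pp, France: +{france:.2f} pp")
```

The same car-price shock adds two and a half times as much to the US headline number as to the French one.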

Rent shocks and telecommuting. After the pandemic, many professionals moved to exurbs, small towns, and the countryside to work from home. As commercial properties were vacated, planning restrictions made it impractical to adapt them for residential use. Addressing these restrictions will cut rents more than raising interest rates, which halts housing construction.

Statistical mirages cause some rent inflation. The CPI estimates what homeowners would pay to rent their properties. When rents rise in your neighborhood, the CPI believes you're spending more on rent even if you have a 30-year fixed-rate mortgage.

Market dominance. Almost every area of the US economy is dominated by monopolies, whose CEOs disclose on investor calls that they use inflation scares to jack up prices and make record profits.

https://pluralistic.net/2022/02/02/its-the-economy-stupid/#overinflated

Long-term profit margins are rising. Markups averaged 26% from 1960 to 1980; in 2021, they averaged 72%. Market concentration (i.e. monopolization) explains 81% of the markup increases. Profit margins reached a 70-year high in 2022. These elements interact. Monopolies thin out their sectors, making them brittle and sensitive to shocks.

If we're worried about a shrinking workforce, there are more humanitarian and sensible solutions than causing a recession and mass unemployment. Instead, we may boost US production capacity by easing workers' entry into the workforce.

https://pluralistic.net/2022/06/01/factories-to-condos-pipeline/#stuff-not-money

US female workforce participation ranks towards the bottom of developed countries. Many women can't afford to work due to America's lack of daycare, low earnings, and bad working conditions in female-dominated fields. If America doesn't have enough workers, childcare subsidies and minimum wages can help.

By contrast, driving the country into recession with interest-rate hikes will reduce employment, and the last recruited (women, minorities) are the first fired and the last to be rehired. Forcing America into recession won't enhance its capacity to create what its people want; it will degrade it permanently.

Nothing the Fed does can stop price hikes from international markets, lack of supply chain investment, COVID-19 disruptions, climate change, the Ukraine war, or market power. It can make them worse, though. When supply problems generate inflation, raising interest rates decreases the investments that can remedy shortages.

Increasing interest rates won't cut rents since landlords pass on the expenses and high rates restrict investment in new dwellings where tenants could escape the costs.

Fixing the supply fixes supply-side inflation. Increase renewables investment (as the Inflation Reduction Act does). Monopolies can be busted (as the IRA does). Reshore key goods (as the CHIPS Act does). Better pay and child care attract employees.

Windfall taxes can claw back price-gouging corporations' monopoly earnings.

https://pluralistic.net/2022/03/15/sanctions-financing/#soak-the-rich

In 2008, we ruled out fiscal solutions (bailouts for debtors) and turned to monetary policy (bank bailouts). This preserved the economy but increased inequality and eroded public trust.

Monetary policy won't help. Even monetary policy enthusiasts recognize an 18-month lag between action and result. That suggests monetary tightening is unnecessary. Like the medieval bloodletter, central bankers whose interest rate hikes don't work swiftly may do more of the same, bringing the economy to its knees.

Interest rates do need to rise. Zero-percent interest fueled foolish speculation and financialization, and increasing rates will stop this. But hiking rates hard enough to dampen inflation means destroying the economy.

Then what? All recent evidence points to inflation decreasing on its own, as the authors argue. Supply-side difficulties are finally being overcome, the evidence shows. Energy and food prices are showing considerable mean reversion, which is disinflationary.

The authors don't recommend doing nothing. Best case scenario, they argue, is that the Fed won't keep raising interest rates until morale improves.

Al Anany

2 years ago

Because of this covert investment that Bezos made, Amazon became what it is today.

He kept it under wraps for years until he legally couldn’t.

His shirt is incomplete. I can’t stop thinking about this…

Actually, ignore the article. Look at it. JUST LOOK at it… It’s quite disturbing, isn’t it?

Ughh…

Me: “Hey, what up?”

Friend: “All good, watching Lord of the Rings on Amazon Prime Video.”

Me: “Oh, do you know how Amazon grew and became famous?”

Friend: “Geek alert…Can I just watch in peace?”

Me: “But… Bezos?”

Friend: “Let it go, just let it go…”

At least I can put the question to you, the reader, and start answering right away without anyone's consent.

Reader, how did Amazon succeed? You'll say, “Of course, it was an internet bookstore, then it sold everything.”

Mistaken. That took them from zero to one. How did they get from one to a thousand? AWS-some. Understand? It's geeky and lame. If not, I'll explain my geekiness.

Over an extended period of time, Amazon was not profitable.

Business basics. You want customers if you own a bakery, right?

Well, 100 clients per day order $5 cheesecakes (because cheesecakes are awesome.)

$5 × 100 customers × 30 days = $15,000 in monthly revenue. You're proud of your work.

Now you have to pay the barista (unless ChatGPT is doing it haha? Nope..)

The barista is asking for $5,000 a month.

Each cheesecake costs $2.50 from the cheesecake maker ($2.50 × 100 × 30 = $7,500).

The monthly cost of running your bakery, including power, is about $5,000.

Assume no extra charges. Your operating costs are $17,500.

And your revenue is just $15,000? You have revenue but no profit. You might make money selling coffee with your cheesecake next month.
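If you'd rather see the bakery math in one place, here is a quick Python sketch. It only restates the numbers above; the variable names are mine.

```python
customers_per_day = 100
days = 30
cheesecake_price = 5.00
cheesecake_cost = 2.50

# Revenue: every customer buys one cheesecake a day.
revenue = cheesecake_price * customers_per_day * days   # 15,000

# Costs: barista salary, cheesecakes, and running the bakery (power included).
barista = 5_000
cheesecakes = cheesecake_cost * customers_per_day * days  # 7,500
running_costs = 5_000
costs = barista + cheesecakes + running_costs             # 17,500

profit = revenue - costs
print(f"revenue={revenue:,.0f} costs={costs:,.0f} profit={profit:,.0f}")
# revenue=15,000 costs=17,500 profit=-2,500
```

So the bakery loses $2,500 a month even with a full shop.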

Is losing money bad? Yes: you're going broke, and it looks bad on your financial statements.

It's almost a business ultimatum. Most startups fail. Amazon took nine years to turn a profit.

I'm reading Amazon Unbound: Jeff Bezos and the Invention of a Global Empire to comprehend how a company reaches a $1 trillion market cap.

Many things made Amazon big. The book claims that Bezos and Amazon kept a specific product secret for a long period.

Clouds above the bald head.

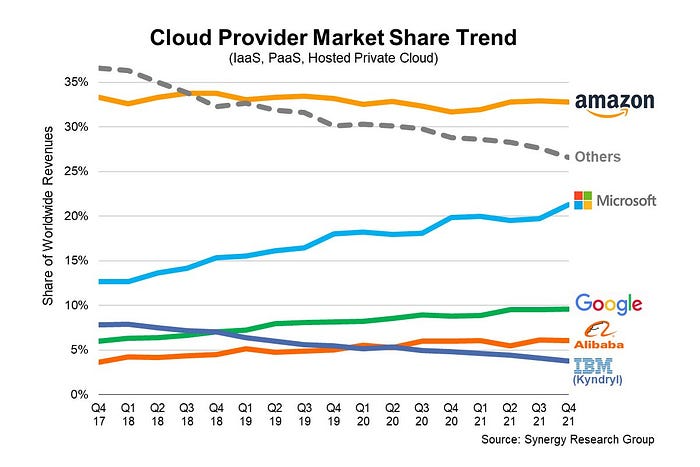

In 2006, Bezos started a cloud computing initiative. They believed many firms like Snapchat would pay for reliable servers.

In 2006, cloud computing was not what it is today. I'll simplify. 2006 had no iPhone.

Bezos invested in Amazon Web Services (AWS) without disclosing its revenue. That's permitted up to a point.

Had the numbers been public, Google and Microsoft would have realized Amazon was investing heavily in this market and worried.

Bezos anticipated high demand for this product. Microsoft built its cloud in 2010, and Google in 2008.

If you managed Google or Microsoft, you wouldn't know how much Amazon makes from its cloud computing service. You'd assume it wasn't much: Amazon is an internet store, so surely they'll focus on that.

All but Bezos were wrong.

Time to come clean now.

They revealed AWS revenue in 2015. Two things were apparent:

Bezos made the proper decision to bet on the cloud and keep it a secret.

In this race, Amazon is in the lead.

They continued. Let me list some AWS users today.

Netflix

Airbnb

Twitch

And more. Amazon was unprofitable for nine years, remember? Look at this article's main graph.

AWS accounted for 74% of Amazon's profit in 2021. This 74% might not exist if they hadn't invested in AWS.

Take this home with you.

Amazon predated AWS. Yet AWS helped the giant reach $1 trillion. Was that down to Bezos' secrecy? Perhaps. We won't know until a time machine is invented (they might host the time machine software on AWS, though).

Without AWS, Amazon would have been profitable but unimpressive. They may have invested in anything else that would have returned more (like crypto? No? Ok.)

Bezos has business flaws. For all his success, his failures include:

introducing the Fire Phone and suffering a $170 million loss.

Amazon's failure in China. In 2011, Amazon had about a 15% market share in China. By 2019, that had fallen to about 1%.

not offering a higher price to persuade the creator of Netflix to sell the company to him. He offered a rather reasonable $15 million in his proposal. But what if he had offered $30 million instead (Amazon had over $100 million in revenue at the time)? He might have owned Netflix, which has a $156 billion market valuation (and saved billions rather than invest in Amazon Prime Video).

Some he could control. Some were uncontrollable. Nonetheless, every action he made in the foregoing circumstances led him to invest in AWS.