5 Bored Apes borrowed to claim $1.1 million in APE tokens

Takeaway

An unknown user took advantage of the ApeCoin airdrop to earn $1.1 million.

They used a flash loan to borrow five BAYC NFTs, claim the airdrop, and return the NFTs.

Yesterday, Yuga Labs, the creators of BAYC, airdropped ApeCoin (APE) to anyone who owns one of their NFTs.

For the Bored Ape Yacht Club and Mutant Ape Yacht Club collections, the team allocated 150 million tokens, or 15% of the total ApeCoin supply, worth over $800 million. Each BAYC NFT entitled its holder to 10,094 tokens worth $80,000 to $200,000.

But someone managed to claim the airdrop using NFTs they didn't own. They did it by exploiting the way the airdrop's claim rules were set up. And it worked, earning them $1.1 million in ApeCoin.

The trick was that the ApeCoin airdrop wasn't based on a record of who owned which Bored Ape at a fixed point in time. Instead, anyone holding a Bored Ape at the moment of claiming could claim its tokens. So if you gave someone your Bored Ape and you hadn't claimed your tokens, they could claim them.

The person only needed to get hold of some Bored Apes that hadn't had their tokens claimed to claim the airdrop. They could be returned immediately.
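The claim rule described above can be sketched as a toy model. This is not Yuga Labs' contract: the class and method names are hypothetical, and the only figure taken from the article is the 10,094-token allocation per Bored Ape. It shows why temporary possession is enough when eligibility is checked only at claim time.

```python
class ClaimByCurrentHolder:
    """Toy model: each NFT can be claimed once, by whoever holds it right now."""

    TOKENS_PER_NFT = 10_094  # per-BAYC allocation quoted in the article

    def __init__(self, owners):
        self.owners = dict(owners)  # nft_id -> current holder
        self.claimed = set()        # nft_ids whose airdrop is already claimed
        self.balances = {}          # holder -> claimed tokens

    def transfer(self, nft_id, new_owner):
        self.owners[nft_id] = new_owner

    def claim(self, caller, nft_id):
        # Eligibility is checked against the current holder only.
        if self.owners.get(nft_id) != caller:
            raise PermissionError("caller does not hold this NFT right now")
        if nft_id in self.claimed:
            raise ValueError("airdrop already claimed for this NFT")
        self.claimed.add(nft_id)
        self.balances[caller] = self.balances.get(caller, 0) + self.TOKENS_PER_NFT
        return self.TOKENS_PER_NFT


airdrop = ClaimByCurrentHolder({7594: "vault"})
airdrop.transfer(7594, "borrower")  # borrower takes temporary possession
airdrop.claim("borrower", 7594)     # the claim succeeds for the temporary holder
airdrop.transfer(7594, "vault")     # the NFT goes straight back
```

After these three steps the NFT is back where it started, but its airdrop is spent and the tokens sit with the borrower.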

So, what happened?

The person found a vault with five Bored Ape NFTs that hadn't been used to claim the airdrop.

A vault tokenizes an NFT or a group of NFTs. You put a bunch of NFTs in a vault and make a token. This token can then be staked for rewards or sold (representing part of the value of the collection of NFTs). Anyone with enough tokens can exchange them for NFTs.

This vault uses the NFTX protocol. In total, it contained five Bored Apes: #7594, #8214, #9915, #8167, and #4755. Nobody had claimed the airdrop because the NFTs were locked up in the vault and not controlled by anyone.

The person wanted to unlock the NFTs to claim the airdrop but didn't want to buy them outright, so they used a flash loan, a common tool for large DeFi hacks. Flash loans are a low-cost way to borrow large amounts of crypto that are repaid in the same transaction and block (meaning that the lender is never at risk of not being repaid).

With a flash loan of under $300,000 they bought a Bored Ape on NFT marketplace OpenSea. They then bought a large amount of the vault's token, which allowed them to redeem the five NFTs. They used the NFTs to claim the airdrop, then returned them to the vault, sold the vault tokens back, and repaid the loan.

During this process, they claimed 60,564 ApeCoin tokens. They then sold them on Uniswap for 399 ETH ($1.1 million). Then they returned the Bored Ape NFT used as collateral to the same NFTX vault.
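The figures above can be cross-checked with a little arithmetic. This sketch assumes the Bored Ape bought on OpenSea was used to claim alongside the five vault Apes, which is the reading that matches the 60,564 total. The implied prices are rough, since $1.1 million is a rounded figure.

```python
TOKENS_PER_BAYC = 10_094  # allocation per Bored Ape, from the article
vault_apes = 5            # NFTs redeemed from the NFTX vault
purchased_apes = 1        # assumption: the Ape bought on OpenSea also claimed

total_claimed = TOKENS_PER_BAYC * (vault_apes + purchased_apes)
print(total_claimed)  # 60564

eth_received = 399
usd_received = 1_100_000  # rounded figure from the article

# Rough prices implied by the sale; approximate because of the rounding above.
implied_eth_price = usd_received / eth_received
implied_ape_price = usd_received / total_claimed
print(round(implied_eth_price), round(implied_ape_price, 2))
```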

Attack or arbitrage?

Many social media commentators saw the move as simple arbitrage, but security firm BlockSecTeam disagreed. A flaw in the airdrop-claiming mechanism was exploited, it said.

According to BlockSecTeam's analysis, the user took advantage of a "vulnerability" in the airdrop.

"We suspect a hack due to a flaw in the airdrop mechanism. The attacker exploited this vulnerability to profit from the airdrop claim," said BlockSecTeam.

For example, the airdrop could have taken into account how long a person owned the NFT before claiming the reward.

Because Yuga Labs didn't take a snapshot, anyone could buy the NFT in real time and claim it. This is probably why BAYC sales exploded so soon after the airdrop announcement.
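The two mitigations mentioned here, a snapshot and a minimum holding period, can be sketched as simple eligibility checks. The function names, block numbers and threshold are hypothetical and only illustrate the idea; they are not taken from any real contract.

```python
def eligible_by_snapshot(snapshot_owners, caller, nft_id):
    """Eligible only if the caller held the NFT when the snapshot was taken."""
    return snapshot_owners.get(nft_id) == caller


def eligible_by_holding_period(acquired_block, claim_block, min_blocks_held):
    """Eligible only if the NFT has been held for at least min_blocks_held blocks."""
    return claim_block - acquired_block >= min_blocks_held


# Hypothetical snapshot taken before the airdrop announcement.
snapshot = {7594: "vault"}

# A flash-loan borrower is not in the snapshot...
flash_borrower_ok = eligible_by_snapshot(snapshot, "borrower", 7594)

# ...and acquires and claims in the same block, so the holding period is zero.
same_block_ok = eligible_by_holding_period(
    acquired_block=100, claim_block=100, min_blocks_held=1
)
long_term_ok = eligible_by_holding_period(
    acquired_block=100, claim_block=5_000, min_blocks_held=1
)
```

Because a flash loan has to be repaid in the same transaction, either check would reject the borrower while still letting long-term holders claim.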

More on NFTs & Art

Jennifer Tieu

4 years ago

Why I Love Azuki

Azuki Banner (www.azuki.com)

Disclaimer: This is my personal viewpoint. I'm not on the Azuki team. Please keep in mind that I am merely a fan, community member, and holder. Please do your own research and pardon my grammar. Thanks!

Azuki has changed my view of NFTs.

When I first entered the NFT world, I had no idea what to expect. I liked the idea. So I invested in some projects, fought for whitelists, and discovered some cool NFT projects (shout-out to CATC). I lost more money than I earned at one point, but I hadn't invested excessively (only put in what you can afford to lose). Despite my losses, I kept looking. I almost waited for the “ah-ha” moment. An NFT project that changed my perspective on NFTs. What makes an NFT project more than a work of art?

Answer: Azuki.

The Art

The Azuki art drew me in as an anime fan. It looked like something out of an anime, and I'd never seen anything like it before in NFTs.

The project was still new. The first two animated teasers were released with little fanfare, but I was impressed with their quality. You can find them on Instagram or in their earlier Tweets.

The teasers hinted that this project could be big and that the team could deliver. It was amazing to see Shao cut the Azuki posters with her katana. Especially at the end when she sheaths her sword and the music cues. Then the live action video of the young boy arranging the Azuki posters seemed movie-like. I felt like I was entering the Azuki story, brand, and dope theme.

The team did not disappoint with the Azuki NFTs. The level of detail in the art is stunning. There were Azukis of all genders, skin and hair types, and more. These 10,000 Azukis have so much representation that almost anyone can find something that resonates. Rather than me rambling on, I suggest you visit the Azuki gallery.

The Team

If the art is meant to draw you in and be the project's face, the team makes it more. The NFT would be a JPEG without a good team leader. Not that community isn't important, but no community would rally around a bad team.

Because I've been rugged before, I'm very focused on the team when considering a project. While many project teams are anonymous, I try to find ones that are doxxed (public) or at least appear to be established. Most of the Azuki team is anonymous, but Steamboy is public. He is (or was) Overwatch's character art director and co-creator of Azuki. I felt reassured and could trust the project after seeing someone from a major game series on the team.

Then I tried to learn as much as I could about the team. Following everyone on Twitter, reading their tweets, and listening to recorded AMAs. I was impressed by the team's professionalism and dedication to their vision for Azuki, led by ZZZAGABOND.

I believe the phrase “actions speak louder than words” applies to Azuki. I can think of a few examples of what the Azuki team has done, but my favorite is ERC721A.

With ERC721A, Azuki has created a new algorithm that allows minting multiple NFTs for essentially the same cost as minting one NFT.

I was ecstatic when the dev team announced it. This fascinates me as a self-taught developer. Azuki released a product that saves people money, improves the NFT space, and is open source. It showed their love for Azuki and the NFT community.

The Community

Community, community, community. It's almost a chant in the NFT space now. A community, like a team, can make or break a project. We are the project's consumers, shareholders, core, and lifeblood. The team builds the house, and we fill it. We stay for the community.

When I first entered the Azuki Discord, I was surprised by the calm atmosphere. There was no news about the project. No release date, no whitelisting requirements. No grinding or spamming either. People just wanted to hang out, get to know each other, and talk. It was nice. So the team could pick genuine people for their mintlist (aka whitelist).

But nothing fundamental has changed since the release. It has remained an authentic, fun, and helpful community. I'm constantly logging into Discord to chat with others or follow conversations. I see the community's openness to newcomers. Everyone respects each other (barring a few bad apples) and the variety of people passing through is fascinating. This human connection and interaction is what I enjoy about this place. Being a part of a group that supports a cause.

Finally, I want to thank the amazing Azuki mod team and the kissaten channel for their contributions.

The Brand

So, what sets Azuki apart from other projects? They are shaping a brand or identity. The Azuki website, I believe, best captures their vision. (This is me gushing over the site.)

If you go to the website, turn on the dope playlist in the bottom left. The playlist features a mix of Asian and non-Asian hip-hop and rap artists, with some lo-fi thrown in. The songs on the playlist change, but I think you get the vibe Azuki embodies just by turning on the music.

The Garden is our next stop where we are introduced to Azuki.

A brand.

We're creating a new brand together.

A metaverse brand. By the people.

A collection of 10,000 avatars that grant Garden membership. It starts with exclusive streetwear collabs, NFT drops, live events, and more. Azuki allows for a new media genre that the world has yet to discover. Let's build together an Azuki, your metaverse identity.

The Garden is a magical internet corner where art, community, and culture collide. The boundaries between the physical and digital worlds are blurring.

Try a Red Bean.

The text begins with Azuki's intention in the space. It's a community-made metaverse brand. Then it goes into more detail about Azuki's plans. Initiation of a story or journey. "Would you like to take the red bean and jump down the rabbit hole with us?" I love the Matrix red pill or blue pill play they used. (Azuki in Japanese means red bean.)

Morpheus, the rebel leader, offers Neo the choice of a red or blue pill in The Matrix. “You take the blue pill, the story ends, you wake up in your bed and believe whatever you want to believe. You take the red pill, you stay in Wonderland, and I show you how deep the rabbit hole goes.” Aware that the red pill will free him from the enslaving control of the machine-generated dream world and allow him to escape into the real world, Neo takes it. However, living the “truth of reality” is harsher and more difficult.

It's intriguing and draws you in. What happens if I take the red bean? Where am I going? I think they did well in piquing a newcomer's interest.

Not convinced by the Garden? Read the Manifesto. It reinforces Azuki's role.

Here comes a new wave…

And surfing here is different.

Breaking down barriers.

Building open communities.

Creating magic internet money with our friends.

To those who don’t get it, we tell them: gm.

They’ll come around eventually.

Here’s to the ones with the courage to jump down a peculiar rabbit hole.

One that pulls you away from a world that’s created by many and owned by few…

To a world that’s created by more and owned by all.

From The Garden come the human beans that sprout into your family.

We rise together.

We build together.

We grow together.

Ready to take the red bean?

Not to mention the Mindmap, which sets Azuki apart from other projects and their overused Roadmaps. I like how the team recognizes that the NFT space is not linear. So many of us are still trying to figure it out. It is Azuki's vision to adapt to changing environments while maintaining their values. I admire their commitment to long-term growth.

Conclusion

To be honest, I have no idea what the future holds. Azuki is still new and could fail. But I'm a long-term Azuki fan. I don't care about quick gains. The future looks bright for Azuki. I believe in the team's output. I love being an Azuki.

Thank you! IKUZO!


Sea Launch

3 years ago

📖 Guide to NFT terms: an NFT glossary.

NFT lingo can be overwhelming. As the NFT market matures and expands, so does its own jargon, slang, colloquialisms and acronyms.

The goal of this ever-growing NFT glossary is to unpack key NFT terms to help you better understand the NFT market or at least not feel like a total n00b in a conversation about NFTs on Reddit, Discord or Twitter.

#

1:1 Art

Art where each piece is one of a kind (1 of 1). By contrast, 10K projects, PFP or Generative Art collections release a capped number of NFTs that can range from a few hundred to 10K.

1/1 of X

Contrary to 1:1 Art, 1/1 of X means each NFT is unique, but part of a large and cohesive collection. E.g: Fidenzas by Tyler Hobbs or Crypto Punks (each Punk is 1/1 of 10,000).

10K Project

A type of NFT collection that consists of approximately 10,000 NFTs (but not strictly).

A

AB

ArtBlocks, the most important platform for generative art currently.

AFAIK

As Far As I Know.

Airdrop

Distribution of an NFT or token directly into a crypto wallet for free. Can be used as a marketing campaign or as a scam by airdropping fake tokens to empty someone’s wallet.

Alpha

The first or very primitive release of a project. Also an investment term that tracks how much a certain investment outperforms the market. E.g: Alpha of 1.0 = 1% improvement or Alpha of 20.0 = 20% improvement.

Altcoin

Any other crypto that is not Bitcoin. Bitcoin Maximalists can also refer to them as shitcoins.

AMA

Ask Me Anything. NFT creators or artists do sessions where anyone can ask questions about the NFT project, team, vision, etc. Usually hosted on Discord, but also on Reddit or even Youtube.

Ape

Someone can be aping, ape in or have aped into an NFT, meaning they are taking a large position relative to their own portfolio size. Some argue that aping can also mean following the hype, buying out of FOMO or without due diligence. Not directly related to the Bored Ape Yacht Club.

ATH

All-Time High. When an NFT project or token reaches the highest price to date.

Avatar project

An NFT collection that consists of avatars that people can use as their profile picture (see PFP) in social media to show they are part of an NFT community like Crypto Punks.

Axie Infinity

ETH blockchain-based game where players battle and trade Axies (digital pets). The main ERC-20 tokens used are Axie Infinity Shards (AXS) and Smooth Love Potions (formerly Small Love Potion) (SLP).

Axie Infinity Shards

AXS is an Eth token that powers the Axie Infinity game.

B

Bag Holder

Someone who holds their position in a crypto or keeps an NFT until it's worthless.

BAYC

Bored Ape Yacht Club. A very successful PFP 1/1 of 10,000 individual ape characters collection. People use BAYC as a Twitter profile picture to brag about being part of this NFT community.

Bearish

Borrowed finance slang meaning someone is doubtful about the current market and expects it to crash.

Bear Market

When the Crypto or NFT market is going down in value.

Bitcoin (BTC)

First and original cryptocurrency as outlined in a whitepaper by the anonymous creator(s) Satoshi Nakamoto.

Bitcoin Maximalist

Believer that Bitcoin is the only cryptocurrency needed. All other cryptocurrencies are altcoins or shitcoins.

Blockchain

Distributed, decentralized, immutable database that is the basis of trust in Web 3.0 technology.

Bluechip

When an NFT project has a long track record of success and its value is sustained over time, therefore considered a solid investment.

BTD

Buy The Dip. A bear market can be an opportunity for crypto investors to buy a crypto or NFT at a lower price.

Bullish

Borrowed finance slang meaning someone is optimistic that a market will increase in value aka moon.

Bull market

When the Crypto or NFT market is going up and up in value.

Burn

Common crypto strategy to destroy or delete tokens from the circulating supply intentionally and permanently in order to limit supply and increase the value.

Buying on secondary

Whenever you don’t mint an NFT directly from the project, you can always buy it in secondary NFT marketplaces like OpenSea. Most NFT sales are secondary market sales.

C

Cappin or Capping

Slang for lying or faking. Opposed to no cap which means “no lie”.

Coinbase

Nasdaq listed US cryptocurrency exchange. Coinbase Wallet is one of Coinbase’s products where users can use a Chrome extension or app hot wallet to store crypto and NFTs.

Cold wallet

Otherwise called hardware wallet or cold storage. It’s a physical device to store your cryptocurrencies and/or NFTs offline. They are not connected to the Internet so are at less risk of being compromised.

Collection

A set of NFTs under a common theme as part of an NFT drop or an auction sale in marketplaces like OpenSea or Rarible.

Collectible

A collectible is an NFT that is a part of a wider NFT collection, usually part of a 10k project, PFP project or NFT Game.

Collector

Someone who buys NFTs to build an NFT collection, be part of an NFT community or for speculative purposes to make a profit.

Cope

The opposite of FOMO. When someone doesn’t buy an NFT because they are still dealing with the earlier mistake of not FOMOing in at a fraction of the price, and so chooses to stay out.

Consensus mechanism

Method of authenticating and validating a transaction on a blockchain without the need to trust or rely on a central authority. Examples of consensus mechanisms are Proof of Work (PoW) or Proof of Stake (PoS).

Cozomo de’ Medici

Twitter alias used by Snoop Dogg for crypto and NFT chat.

Creator

An NFT creator is a person that creates the asset for the NFT idea, vision and in many cases the art (e.g. a jpeg, audio file, video file).

Crowdsale

Where a crowdsale is the sale of a token that will be used in the business, an Initial Coin Offering (ICO) is the sale of a token that’s linked to the value of the business. Buying an ICO token is akin to buying stock in the company because it entitles you to a share of the earnings and profits. Also, some tokens give you voting rights similar to holding stock in the business. The US Securities and Exchange Commission recently ruled that ICOs, but not crowdsales, will be treated as the sale of a security. This basically means that all ICOs must be registered like IPOs and offered only to accredited investors. This dramatically increases the costs and limits the pool of potential buyers.

Crypto Bags/Bags

Refers to how much cryptocurrency someone holds, as in their bag of coins.

Cryptocurrency

The native coin of a blockchain (or protocol coin), secured by cryptography to be exchanged within a peer-to-peer economic system. E.g: Bitcoin (BTC) for the Bitcoin blockchain, Ether (ETH) for the Ethereum blockchain, etc.

Crypto community

The community of a specific crypto or NFT project. NFT communities use Twitter and Discord as their primary social media to hang out.

Crypto exchange

Where someone can buy, sell or trade cryptocurrencies and tokens.

Cryptography

The foundation of blockchain technology. The use of mathematical theory and computer science to encrypt or decrypt information.

CryptoKitties

One of the first and most popular NFT based blockchain games. In 2017, the NFT project almost broke the Ethereum blockchain and increased the gas prices dramatically.

CryptoPunk

Currently one of the most valuable blue chip NFT projects. It was created by Larva Labs. Crypto Punk holders flex their NFT as their profile picture on Twitter.

CT

Crypto Twitter, the crypto-community on Twitter.

Cypherpunks

Movement in the 1980s, advocating for the use of strong cryptography and privacy-enhancing technologies as a route to social and political change. The movement contributed to and shaped blockchain tech as we know it today.

D

DAO

Stands for Decentralized Autonomous Organization. When an NFT project is structured like a DAO, it grants all the NFT holders voting rights and control over future actions and the NFT project’s direction and vision. Many NFT projects are also organized as DAOs to be community-driven projects.

Dapp

Mobile or web based decentralized application that interacts on a blockchain via smart contracts. E.g: Dapp is the frontend and the smart contract is the backend.

DCA

Acronym for Dollar Cost Averaging. An investment strategy to reduce the impact of crypto market volatility. E.g: buying into a crypto asset on a regular monthly basis rather than a big one time purchase.
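As a rough illustration of the DCA entry above, the sketch below spends the same amount at each of three made-up monthly prices and compares the result with a single purchase at the first price. The prices and budget are hypothetical, and a different price path could just as easily favour the one-time purchase.

```python
def dollar_cost_average(budget_per_purchase, prices):
    """Spend the same amount at each price; return (units bought, average cost per unit)."""
    units = sum(budget_per_purchase / price for price in prices)
    total_spent = budget_per_purchase * len(prices)
    return units, total_spent / units


monthly_prices = [50.0, 25.0, 100.0]  # hypothetical prices, one per month
units_bought, average_cost = dollar_cost_average(100.0, monthly_prices)

# Spending the whole 300 at the first month's price instead.
lump_sum_units = 300.0 / monthly_prices[0]

print(units_bought, round(average_cost, 2), lump_sum_units)
```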

Ded

Abbreviation for dead like "I sold my Punk for 90 ETH. I am ded."

DeFi

Short for Decentralized Finance. Blockchain alternative for traditional finance, where intermediaries like banks or brokerages are replaced by smart contracts to offer financial services like trading, lending, earning interest, insurance, etc.

Degen

Short for degenerate, a gambler who buys into unaudited or unknown NFT or DeFi projects, without proper research hoping to chase high profits.

Delist

No longer offer an NFT for sale on a secondary market like OpenSea. NFT marketplaces can delist an NFT that infringes their rules. Or NFT owners can choose to delist their NFTs (as long as they have sufficient funds for the gas fees) when prices surge, to avoid their NFT being bought at the old price and to relist it at a higher one.

Derivative

Projects derived from the original project that reinforce the value and importance of the original NFT. E.g: "alternative" punks.

Dev

A skilled professional who can build NFT projects using smart contracts and blockchain technology.

Dex

Decentralised Exchange that allows for peer-to-peer trustless transactions that don’t rely on a centralized authority to take place. E.g: Uniswap, PancakeSwap, dYdX, Curve Finance, SushiSwap, 1inch, etc.

Diamond Hands

Someone who believes and holds a cryptocurrency or NFT regardless of the crypto or NFT market fluctuations.

Discord

Chat app heavily used by crypto and NFT communities for knowledge sharing and shilling.

DLT

Acronym for Distributed Ledger Technology. It’s a protocol that allows the secure functioning of a decentralized database, through cryptography. This technological infrastructure scraps the need for a central authority to keep in check manipulation or exploitation of the network.

Dog coin

It’s a memecoin based on the Japanese dog breed, Shiba Inu, first popularised by Dogecoin. Other notable coins are Shiba Inu or Floki Inu. These dog coins are frequently subjected to pump and dumps and are extremely volatile. The original dog coin DOGE was created as a joke in 2013. Elon Musk is one of Dogecoin's most famous supporters.

Doxxed/Doxed

When the identity of an NFT team member, dev or creator is public, known or verifiable. In the NFT market, when an NFT team is doxed it’s usually a sign of confidence and transparency for NFT collectors, reassuring them that they will not be scammed by an anonymous creator.

Drop

The release of an NFT (single or collection) into the NFT market.

DYOR

Acronym for Do Your Own Research. A common expression used in the crypto or NFT community to disclaim responsibility for the financial/strategy advice someone is providing the community and to avoid being called out by others in the NFT or crypto community.

E

EIP-1559

Refers to Ethereum Improvement Proposal 1559, which shipped as part of the London Fork. It’s an upgrade to the Ethereum protocol code that changed how gas fees work: each transaction pays a base fee, set by the protocol according to network demand and burned, plus an optional tip to prioritise the transaction. It did not change the consensus mechanism; the shift from proof-of-work (PoW) to proof-of-stake (PoS) is a separate upgrade.

ERC-1155

Stands for Ethereum Request for Comment-1155. A multi-token standard that can represent any number of fungible (ERC-20) and non-fungible tokens (ERC-721).

ERC-20

Ethereum Request for Comment-20 is a standard defining a fungible token like a cryptocurrency.

ERC-721

Ethereum Request for Comment-721 is a standard defining a non-fungible token (NFT).

ETH

Aka Ether, the currency symbol for the native cryptocurrency of the Ethereum blockchain.

ETH2.0

Umbrella name for the series of upgrades to the Ethereum network meant to improve the network’s security and scalability. The most dramatic change is the shift from the proof-of-work consensus mechanism (PoW) to a proof-of-stake system (PoS). Not to be confused with the London Fork (EIP-1559), which changed how gas fees work.

Ether

Or ETH, the native cryptocurrency of the Ethereum blockchain.

Ethereum

Network protocol that allows users to create and run smart contracts over a decentralized network.

F

FCFS

Acronym for First Come First Served. Commonly used strategy in an NFT collection drop when the demand surpasses the supply.

Few

Short for "few understand". Similar to the irony behind the "probably nothing" expression. E.g: X person bought into a popular NFT because they understand its long term value.

Fiat Currencies or Money

National government-issued currencies like the US Dollar (USD), Euro (EUR) or Great British Pound (GBP) that are not backed by a commodity like silver or gold. FIAT means an authoritative or arbitrary order like a government decree.

Flex

Slang for showing off. In the crypto community, it’s a Lamborghini or a gold Rolex. In the NFT world, it’s a CryptoPunk or BAYC PFP on Twitter.

Flip

Quickly buying and selling crypto or NFTs to make a profit.

Flippening

Colloquial expression coined in 2017 for when Ethereum’s market capitalisation surpasses Bitcoin’s.

Floor Price

It means the lowest asking price for an NFT collection or subset of a collection on a secondary market like OpenSea.

Floor Sweep

Refers to when an NFT collector or investor buys all the lowest listed NFTs on a secondary NFT marketplace.

FOMO

Acronym for Fear Of Missing Out. Buying a crypto or NFT out of fear of missing out on the next big thing.

FOMO-in

Buying a crypto or NFT out of FOMO, regardless of whether it's at the top of the market.

Fractionalize

Turning one NFT like a CryptoPunk into X number of fractional ERC-20 tokens that prove ownership of that Punk. This i) allows for collective ownership of an NFT, ii) makes an expensive NFT affordable for the common NFT collector and iii) adds more liquidity to a very illiquid NFT market.

FR

Abbreviation for For Real?

Fren

Means Friend and what people in the NFT community call each other in an endearing and positive way.

Foundation

An exclusive, by invitation only, NFT marketplace that specializes in NFT art.

Fungible

Means X can be traded for another X and still hold the same value. E.g: My dollars = your dollars. My 1 ether = your 1 ether. My casino chip = your casino chip. On Ethereum, fungible tokens are defined by the ERC-20 standard.

FUD

Acronym for Fear Uncertainty Doubt. It can be a) when someone spreads negative and sometimes false news to discredit a certain crypto or NFT project. Or b) the overall negative feeling regarding the future of the NFT/Crypto project or market, especially when going through a bear market.

Fudder

Someone who has FUD or engages in FUD about an NFT project.

Fudding your own bags

When an NFT collector or crypto investor speaks negatively about an NFT or crypto project he/she has invested in or has a stake in. Usually negative comments about the team or vision.

G

G

Means Gangster. A term of endearment used amongst the NFT Community.

Gas/Gas fees/Gas prices

The fee charged to complete a transaction in a blockchain. These gas prices vary tremendously between the blockchains, the consensus mechanism used to validate transactions or the number of transactions being made at a specific time.

Gas war

When a lot of NFT collectors (or bots) are trying to mint an NFT at once, resulting in a gas price surge.

Generative art

Artwork that is algorithmically created by code with unique traits and rarity.

Genesis drop

It refers to the first NFT drop a creator makes on an NFT auction platform.

GG

Interjection for Good Game.

GM

Interjection for Good Morning.

GMI

Acronym for Going to Make It. Opposite of NGMI (NOT Going to Make It).

GOAT

Acronym for Greatest Of All Time.

GTD

Acronym for Going To Dust. When a token or NFT project turns out to be a bad investment.

GTFO

Get The F*ck Out, as in “gtfo with that fud dude” if someone is talking bull.

GWEI

One billionth of an Ether (ETH) also known as a Shannon / Nanoether / Nano — unit of account used to price Ethereum gas transactions.
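To make the unit concrete, here is a small conversion sketch. The 21,000 gas figure is the cost of a plain ETH transfer; the 50 gwei gas price is a made-up example, and the function names are only illustrative.

```python
from decimal import Decimal

WEI_PER_GWEI = 10**9   # 1 gwei = 1,000,000,000 wei
WEI_PER_ETH = 10**18   # 1 ETH = 10^18 wei, so 1 gwei = one billionth of an ETH


def gwei_to_eth(gwei):
    """Convert a whole number of gwei to ETH without floating-point rounding."""
    return Decimal(gwei * WEI_PER_GWEI) / Decimal(WEI_PER_ETH)


def transaction_fee_eth(gas_used, gas_price_gwei):
    """Fee = gas units used x price per gas unit (quoted in gwei), expressed in ETH."""
    return gwei_to_eth(gas_used * gas_price_gwei)


# A plain ETH transfer uses 21,000 gas; 50 gwei is a hypothetical gas price.
fee = transaction_fee_eth(gas_used=21_000, gas_price_gwei=50)
print(fee)  # 0.00105
```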

H

HEN (Hic Et Nunc)

A popular NFT art marketplace built on the Tezos blockchain. Big NFT marketplace for inexpensive NFTs but not a very user-friendly UI/website.

HODL

Misspelling of HOLD coined in a 2013 Bitcointalk forum post. Later read as “Hold On for Dear Life”, meaning hold your coin or NFT until the end, whether it moons or goes to dust.

Hot wallet

Wallets connected to the Internet, less secure than cold wallets because they’re more susceptible to hacks.

Hype

Term used to show excitement or anticipation about an upcoming crypto project or NFT.

I

ICO

Acronym for Initial Coin Offering. It’s the crypto equivalent of a stock’s IPO (Initial Public Offering) but with far less scrutiny or regulation (leading to a lot of scams). ICOs are a popular way for crypto projects to raise funds.

IDO

Acronym for Initial Dex Offering. To put it simply it means to launch NFTs or tokens via a decentralized liquidity exchange. It’s a common fundraising method used by upcoming crypto or NFT projects. Many consider IDOs a far better fundraising alternative to ICOs.

IDK

Acronym for I Don’t Know.

IDEK

Acronym for I Don’t Even Know.

Imma

Short for I’m going to.

IRL

Acronym for In Real Life. Refers to the physical world outside of the online/virtual world of crypto, NFTs, gaming or social media.

IPFS

Acronym for Interplanetary File System. A peer-to-peer file storage system using hashes to recall and preserve the integrity of the file, commonly used to store NFTs outside of the blockchain.

It’s Money Laundering

Someone can use this expression to suggest that NFT prices aren’t real and that actually people are using NFTs to launder money, without providing much proof or explanation on how it works.

IYKYK

Stands for If You Know, You Know. Similar to the expression "few", used when someone buys into a popular crypto or NFT project, slightly because of FOMO but also because they believe in its long term value.

J

JPEG/JPG

File format typically used to encode NFT art. Some people also use Jpeg to mock people buying NFTs as in “All that money for a jpeg”.

K

KMS

Short for Kill MySelf.

L

Larva Labs/ LL

NFT creators behind popular NFT projects like CryptoPunks, Meebits or Autoglyphs.

Laser eyes

Bitcoin meme signalling support for BTC and/or the belief that it will break the $100k per coin valuation.

LFG

Acronym for Let’s F*cking Go! A common rallying call used in the crypto or NFT community to lead people into buying an NFT or a crypto.

Liquidity

Term that means that a token or NFT has a high volume of activity in the crypto/NFT market. It’s easily sold and resold. But the NFT market is usually illiquid when compared to the general crypto market, due to the non-fungible nature of NFTs (there are fewer buyers for any given NFT).

LMFAO

Stands for Laughing My F*cking Ass Off.

Looks Rare

Ironic expression commonly used in the NFT Community. Rarity is a driver of an NFT’s value.

London Hard Fork

The Ethereum code upgrade that included EIP-1559. Its major change is to how gas fees work: a base fee set by the protocol is burned with every transaction. It did not shift the consensus mechanism from PoW to PoS.

Long run

Means someone is committed to the NFT market or an NFT project in the long term.

M

Maximalist

Typically refers to Bitcoin Maximalists. People who believe that Bitcoin is the most secure and resilient blockchain. For Maximalists, all other cryptocurrencies are shitcoins and therefore a waste of time, development and money.

McDonald's

Common and ironic expression amongst the crypto community. It means that McDonald’s is always a valid backup plan or career in case all cryptocurrencies crash and disappear.

Meatspace

Synonymous with IRL - In Real Life.

Memecoin

Cryptocurrency like Dogecoin that is based on an internet joke or meme.

Metamask

Popular crypto hot wallet platform to store crypto and NFTs.

Metaverse

Term was coined by writer Neal Stephenson in the 1992 dystopian novel “Snow Crash”. It’s an immersive and digital place where people interact via their avatars. Big tech players like Meta (formerly known as Facebook) and other independent players have been designing their own version of a metaverse. NFTs can have utility for users like buying, trading, winning, accessing, experiencing or interacting with things inside a metaverse.

Mfer

Short for “mother fker”.

Miners

Single person or company that mines one or more cryptocurrencies like Bitcoin or Ethereum. Both blockchains need computing power for their Proof of Work consensus mechanism. Miners provide the computing power and receive coins/tokens in return as payment.

Mining

Mining is the process by which new tokens enter circulation, for example on the Bitcoin blockchain. Mining also validates new transactions on a blockchain that uses the PoW consensus mechanism, so those who mine are rewarded for ensuring the validity of the blockchain.

Mint/Minting

Minting an NFT is the act of publishing your unique instance to a specific blockchain like Ethereum or Tezos. In simpler terms, a creator is adding a one-of-a-kind token (NFT) into circulation on a specific blockchain.

Once the NFT is minted - aka created - NFT collectors can i) direct mint, i.e. purchase the NFT by paying the specified amount directly into the project’s wallet, or ii) buy it via an intermediary like an NFT marketplace (e.g. OpenSea, Foundation, Rarible, etc.). Later, the NFT owner can choose to resell the NFT. Most NFT creators set up a royalty that is paid every time their NFT is resold.

Minting interval

How often an NFT creator can mint or create tokens.

MOAR

A misspelling that means “more”.

Moon/Mooning

When a coin (e.g. ETH) or token, like an NFT, goes exponential in price and the price graph sees a vertical climb. Crypto or NFT users then say that “X token is going to the moon!”.

Moon boys

Slang for crypto or NFT holders who are looking to pump the price dramatically - taking a token to the moon - for short term gains and with no real long term vision or commitment.

N

Never trust, always verify

Treat everyone or every project like something potentially malicious.

New coiner

Crypto slang for someone new to the cryptocurrency space. Usually newcomers can be more susceptible to FUD or scammers.

NFA

Acronym for Not Financial Advice.

NFT

Acronym for Non-Fungible Token. The type of token that can be created, bought, sold, resold and viewed in different dapps. The ERC-721 smart contract standard (Ethereum blockchain) is the most popular amongst NFTs.

NFT Marketplace / NFT Auction platform

Platforms where people can sell and buy NFTs, either via an auction or pay the seller’s price. The largest NFT marketplace is OpenSea. But there are other popular NFT marketplace examples like Foundation, SuperRare, Nifty Gateway, Rarible, Hic et Nunc (HeN), etc.

NFT Whale

An NFT collector or investor who buys a large amount of NFTs.

NGMI

Acronym for Not Going to Make It. For example, something said to someone who has paper hands.

NMP

Acronym for Not My Problem.

Nocoiner

Someone who simply doesn’t hold cryptocurrencies, mistrusts the crypto market or believes that crypto is either a scam or a Ponzi scheme.

Noob/N00b/Newbie

Slang for someone new or not experienced in cryptocurrency or NFTs. These people are more susceptible to scams, being drawn into pump and dumps or getting rekt on bad coins.

Normie/Normy

Similar expression for a nocoiner.

NSFW

Acronym for Not Safe For Work (also expanded as Not Suitable For Work). Refers to online content inappropriate for viewing in public or at work. It began mostly as a tag for sexual content, nudity, or violence, but it has evolved to cover a range of other topics that might be delicate or trigger viewers.

Nuclear NFTs

An NFT or collectible with more than 1,000 owners. For the NFT to be sold or resold, every co-owner must give their permission beforehand. Otherwise, the NFT transaction can’t be made.

O

OG

Acronym for Original Gangster, popularized by 90s Hip Hop culture. It means the first, the original or the person who has been around since the very start and earned respect in the community. In NFT terms, CryptoPunks are the OG of NFTs.

On-chain vs Off-chain

An on-chain NFT is when the artwork (like a jpeg, video or music file) is stored directly into the blockchain making it more secure and less susceptible to being stolen. But, note that most blockchains can only store small amounts of data.

An off-chain NFT means that the high quality image, music or video file is not stored on the blockchain. Instead, the NFT data is stored with an external party like a) a centralized server, which is highly vulnerable to being shut down or exploited, or b) the InterPlanetary File System (IPFS), also external but a more secure way of finding data because it uses a distributed, decentralized system.
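To make the difference concrete, here is a minimal sketch of content addressing, the idea behind systems such as IPFS: the identifier is derived from the file's bytes, so altered data no longer matches. It assumes a bare SHA-256 digest as the identifier. Real IPFS identifiers use a more elaborate encoding, and `content_id` and `verify` are hypothetical helpers, not part of any IPFS library.

```python
import hashlib


def content_id(data: bytes) -> str:
    """Return a hex SHA-256 digest, used here as a stand-in content identifier."""
    return hashlib.sha256(data).hexdigest()


def verify(data: bytes, expected_id: str) -> bool:
    """Check that fetched bytes match the identifier recorded for the NFT."""
    return content_id(data) == expected_id


# Hypothetical artwork bytes; a real NFT would reference an actual media file.
artwork = b"pretend these are the bytes of a jpeg"
token_pointer = content_id(artwork)  # what the NFT metadata would point to

print(verify(artwork, token_pointer))                 # unchanged bytes
print(verify(artwork + b" tampered", token_pointer))  # altered bytes
```

A plain server URL offers no such check: whoever controls the server can swap the file without the link changing.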

OpenSea

By far the largest NFT marketplace in the world, currently.

P

Paper Hands

A crypto or NFT holder who is susceptible to negative market sentiment or FUD and does not hold their crypto or NFT for long. Used to describe someone who sells as soon as NFTs enter a bear market.

PFP

Stands for Profile Picture (sometimes expanded as Picture For Proof). Twitter users who hold popular NFTs like CryptoPunks or BAYC use their punk or ape avatar as their profile picture.

POAP NFT

Stands for Proof of Attendance Protocol. These types of NFTs are awarded to attendees of events, regardless of whether they’re physical or virtual, as proof you attended.

PoS

Stands for Proof of Stake. A consensus mechanism used by blockchains like Tezos, Cardano and, since its September 2022 Merge, Ethereum to achieve agreement, trust and security in every transaction and keep the integrity of the blockchain intact. PoS mechanisms are considered more environmentally friendly than PoW as they use less energy and produce lower emissions.

PoW

Stands for Proof of Work. A consensus mechanism used by blockchains like Bitcoin to achieve agreement, trust and security and keep the transactional integrity of the blockchain intact. PoW requires a lot of computational power, and therefore uses more energy and produces higher CO2 emissions than PoS.

Private Key

It can be similar to a password. It’s a secret number that allows users to access their cold or hot wallet funds, prove ownership of a certain address and sign transactions on the blockchain.

It’s not advisable to share a private key with anyone as it makes a person vulnerable to theft. If someone loses or forgets their private key, they can use a recovery phrase to restore access to a crypto or NFT wallet.

Pre-mine

A term used in crypto to refer to the act of creating a set amount of tokens before their public launch. It can also be known as a Genesis Sale and is usually associated with Initial Coin Offerings (ICOs) in order to compensate founders, developers or early investors.

Probably nothing

It’s an ironic expression used by NFT enthusiasts to refer to an important or soon to be big news, project or person in the NFT space. Meaning when someone says probably nothing it actually means that it is probably something.

Protocol Coin

Stands for the native coin of a blockchain. As in Ether for the Ethereum blockchain or BTC on the Bitcoin blockchain.

Pump & Dump

The term pump means when a person or a group of people buy or convince others to buy large quantities of a crypto or an NFT with the single goal to drive the price to a peak. When the price peaks, these people sell their position high and for a hefty profit, therefore dumping the price and leaving other slower investors or newbies rekt or at a loss.

R

Rarity

Rarity in NFT terms refers to how rare an NFT is. The rarity can be defined by the number of traits, scarcity or properties of an NFT.

Reaching

Slang for an exaggeration over something to make it sound worse than what it actually is or to take a point/scenario too far.

Recovery phrase

A phrase of typically 12 or 24 words that acts as a backup for your crypto private keys. A person can recover all of a crypto wallet’s private keys from the recovery phrase. It is not advisable to share the recovery phrase with anyone.

Rekt

Slang for wrecked. When a crypto or NFT project goes wrong or drops sharply in value. Or more broadly, when something goes wrong, like a person being priced out by a gas surge or an NFT floor price going down.

Right Click Save As

An ironic expression used by people who don’t understand the value or potential unlocked by NFTs. They joke that they can easily get a digital artwork with Right Click, Save As, mocking the NFT space and its hype.

Roadmap

The strategy outlined by an NFT project. A way to explain to the NFT community or a potential NFT investor, the different stages, value and the long term vision of the NFT project.

Royalties

NFT creators can set up their NFT so each time their NFT is resold, the creator gets paid a percentage of the sale price.
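As a rough illustration of the arithmetic, the sketch below splits a resale price between creator and seller. It assumes the royalty is expressed in basis points (1% = 100 bps) and ignores marketplace fees. `split_sale` is a hypothetical helper, not any marketplace's API.

```python
def split_sale(sale_price_wei: int, royalty_bps: int) -> tuple:
    """Split a resale price into (creator_royalty, seller_proceeds), both in wei."""
    if not 0 <= royalty_bps <= 10_000:
        raise ValueError("royalty_bps must be between 0 and 10000")
    # Integer math avoids floating-point rounding on large wei amounts.
    royalty = sale_price_wei * royalty_bps // 10_000
    return royalty, sale_price_wei - royalty


# Hypothetical resale: 2 ETH with a 5% creator royalty.
ONE_ETH = 10**18
royalty, proceeds = split_sale(2 * ONE_ETH, 500)
print(royalty / ONE_ETH, proceeds / ONE_ETH)
```

In this example the creator would receive 0.1 ETH and the seller 1.9 ETH.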

RN

Acronym for Right Now.

Rug Pull/Rugged

Slang for a scam when the founders, team or developers suddenly leave a crypto project and run away with all the investors’ funds leaving them with nothing.

S

Satoshi Nakamoto

The pseudonymous author of the Bitcoin whitepaper, whose identity has never been verified.

Scammer

Someone actively trying to steal other people’s crypto or NFTs.

Secondary

Secondary refers to secondary NFT marketplaces, where NFT collectors or investors can resell NFTs after they’ve been minted. The price of an NFT or NFT collection is determined by those who list them.

Seed phrase

Another name for the recovery phrase: the 12-word phrase that allows you to recover all of a crypto wallet’s private keys and regain control of the wallet. It is not advisable to share the seed phrase with anyone.

Seems legit

When an NFT project or a person in the NFT community looks promising and the real deal, meaning seems legitimate. Depending on the context can also be used ironically.

Seems rare

An ironic expression or dismissive comment used by the NFT community. For example, it can be used sarcastically when someone asks for feedback on an NFT they own or created.

Ser

Slang for sir and a polite way of addressing others in an NFT community.

Shill

Expression when someone wants to promote or get exposure to an NFT they own or created.

Shill Thread

It’s a common Twitter strategy to gain traction by encouraging NFT creators to share a link to their NFT project in the hopes of getting bought or noticed by the NFT Community and potential buyers.

Simp/Simping

An NFT holder or creator who comes off as trying too hard to impress an NFT whale or investor.

Sh*tposter

A person who mostly posts meme content on Twitter for fun.

SLP

Acronym for Smooth Love Potion. It’s a token players can earn as a reward in the NFT game Axie Infinity.

Smart Contract

A self-executing contract where the terms of the agreement between buyer and seller are written directly into the code, without third party or human intervention. Ethereum is a blockchain designed to execute smart contracts, in contrast to Bitcoin, whose scripting language is far more limited.

SMFH

Acronym for Shaking My F*cking Head. Common reply to a person showing unbelievable idiocy.

Sock Puppet

Scam account used to lure noob investors into fake investment services.

Snag

It means to buy an NFT quickly and for a very low price. Can also be known as sniping.

Sotheby’s

Very famous auction house that has recently auctioned Beeple’s NFTs or Bored Ape Yacht Club and Crypto Punks’ NFT collections.

Stake

Crypto term for locking up a certain amount of crypto tokens for a set period of time to earn interest. In the NFT space, a lot of projects and services are popping up that allow NFT holders to earn interest for holding a certain NFT.

Szn

Stands for season referring to crypto or NFT market cycles.

T

TINA

Acronym for There Is No Alternative. Example: someone asks “why are you investing in BTC?”, to which the reply is “TINA”.

TINA RIF

Acronym for There Is No Alternative Resistance Is Futile.

This is the way

A commendation for positive behavior by someone in the NFT Community.

Tokenomics

Referring to the economics of cryptocurrencies, DeFi or NFT projects.

V

Valhalla

Ironic use of the Viking “heaven”. It means someone’s NFT collection is either going to be a profitable, blue chip project, in which case they can ascend to Valhalla, or it is going to tank and that person will have to work at a McDonald’s.

Vibe

Term used to express a positive emotional state.

Volatile/Volatility

Term used to describe rapid market fluctuations, where crypto or NFT prices go up and down quickly in a short period.

W

WAGMI

Acronym for We Are Going to Make It. Rally cry to build momentum for a crypto or NFT project and lead even more people into buying, shilling or supporting a specific project.

Wallet

There can be a hot or cold wallet, but both are a place where someone can store their cryptocurrency and tokens. Hot wallets, like MetaMask, Trust Wallet or Phantom, are always connected to the Internet. Cold wallets, by contrast, are hardware wallets that store crypto or NFTs offline, like a Ledger Nano.

Weak Hands

Synonymous with Paper Hands. Someone who immediately sells their crypto or NFT because of a bear market, FUD or any other negative sentiment.

Web 1.0

Refers to the beginning of the Web. A period from around 1990 to 2005, also known as the read-only web.

Web 2.0

Refers to an iteration of Web 1.0. From 2005 to the present moment, where social media platforms like Facebook, Instagram, TikTok, Google, Twitter, etc reshaped the web, therefore becoming the read-write web.

Web 3.0

A term coined by Ethereum co-founder Gavin Wood and it’s an idea of what the future of the web could look like. Most peoples’ data, info or content would no longer be centralized in Web 2.0 giants - the Big Tech - but decentralized, mostly thanks to blockchain technology. Web 3.0 could be known as read-write-trust web.

Wen

As in When.

Wen Moon

Popular expression from crypto Twitter not so much in the NFT space. Refers to the still distant future when a token will moon.

Whitepaper

Document released by a crypto or NFT project that lays out the technical information behind the concept, vision, roadmap and plans to grow the project.

Whale

Someone who owns a large position on a specific or many cryptos or NFTs.

Y

Yodo

Acronym for You Only Die Once. The opposite of Yolo.

Yolo

Acronym for You Only Live Once. A person can use this when they just realized they bought a shitcoin or crap NFT and they’re getting rekt.


Jayden Levitt

3 years ago

How to Explain NFTs to Your Grandmother, in Simple Terms

In simple terms, you probably don’t.

But try. Grandma didn't grow up with Facebook, but she eventually joined.

Perhaps the fear of being isolated outweighed the discomfort of learning the technology.

Grandmas are Facebook likers, sharers, and commenters.

There’s no stopping her.

Not even NFTs. Web3 is currently very complex.

It's difficult to explain what NFTs are, how they work, and why we might use them.

Three explanations.

1. Everything will be ours to own, both physically and digitally.

Why own something you can't touch? What's the point?

Blockchain technology proves digital ownership.

Untouchables need ownership proof. What?

Digital assets reduce friction, save time, and are better for the environment than physical goods.

Many valuable things are intangible. Feeling like your favorite brands. You'll pay obscene prices for clothing that costs pennies.

2. NFTs Are Contracts. Agreements Have Value.

Blockchain technology will replace all contracts and intermediaries.

Every insurance contract, deed, marriage certificate, work contract, plane ticket, concert ticket, or sports event is likely an NFT.

We all have public wallets, like Grandma's Facebook page.

3. Your NFT Purchases Will Be Visible To Everyone.

Everyone can see your public wallet. What you buy says more about you than what you post online.

NFTs issued double as marketing collateral when seen on social media.

While I doubt Grandma knows who Snoop Dogg is, imagine him or another famous person holding your NFT in his public wallet and the attention that could bring to you, your company, or brand.

This Technical Section Is For You

The NFT is a contract; its founders can add value through access, events, tuition, and possibly royalties.

Imagine Elon Musk releasing an NFT to his network. Or yearly business consultations for three years.

Christ-alive.

It's worth millions.

These determine their value.

No unsuspecting schmuck willing to buy your hot potato at zero. That's the trend, though.

Overpriced NFTs for low-effort projects created a bubble that has burst.

During a market bubble, you can make money by buying overvalued assets and selling them later for a profit, according to the Greater Fool Theory.

People are struggling. Some are ruined by collateralized loans and the gold rush.

Finances are ruined.

It's uncomfortable.

The same happened in 2018, during the ICO crash or in 1999/2000 when the dot com bubble burst. But the underlying technology hasn’t gone away.


Owolabi Judah

3 years ago

How much did YouTube pay for 10 million views?

Ali's $1,054,053.74 YouTube Adsense haul.

Ali Abdaal is a YouTuber, entrepreneur, and former doctor. He began filming productivity and financial videos in 2017. He has 3 million YouTube subscribers and has crossed $1 million in AdSense revenue. Crazy, no?

Ali will share the revenue of his top 5 YouTube videos, things he's learned that you can apply to your side hustle, and how many views it takes to make a livelihood off YouTube.

First, "The Long Game."

All good things take time to bear fruit. Compounding improves everything. Long-term work yields better returns. Ali made his first dollar after nine months and 85 videos.

Second, "One piece of content can transform your life, but you never know which one."

Had he abandoned YouTube at 84 videos without making any money, he wouldn't have filmed the 85th video that altered everything.

Third Lesson: Your Industry Choice Can Multiply.

The industry or niche you target as a business owner or side hustler can have a major impact on how much money you make.

Here are the top 5 videos.

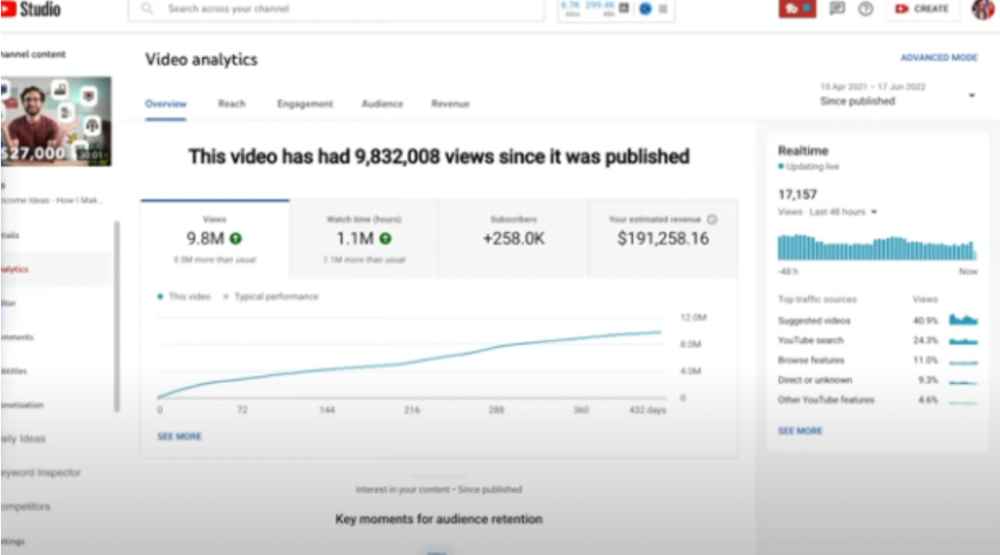

1) 9.8m views: $191,258.16 for 9 passive income ideas

Ali made 2 points.

We should consider YouTube videos digital assets. They're investments, which make us money. His investments are yielding passive income.

Investing extra time and effort in your videos can pay off.

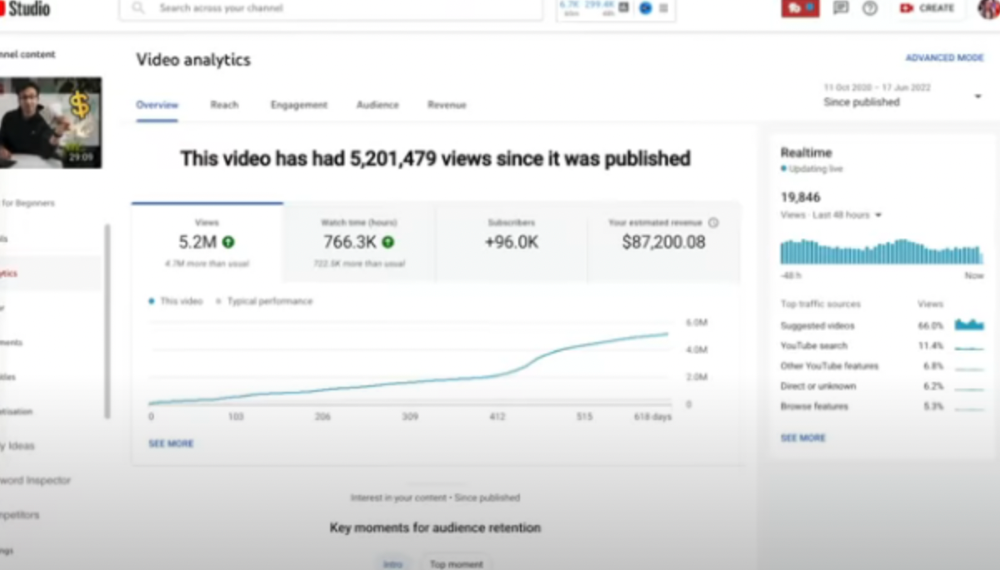

2) How to Invest for Beginners — 5.2m Views: $87,200.08.

This video did poorly in the first several weeks after it was published; it was his tenth poorest performer. Don't worry about things you can't control. This applies to life, not just YouTube videos.

He stated we constantly have anxieties, fears, and concerns about things outside our control, but if we can find that line, life is easier and more pleasurable.

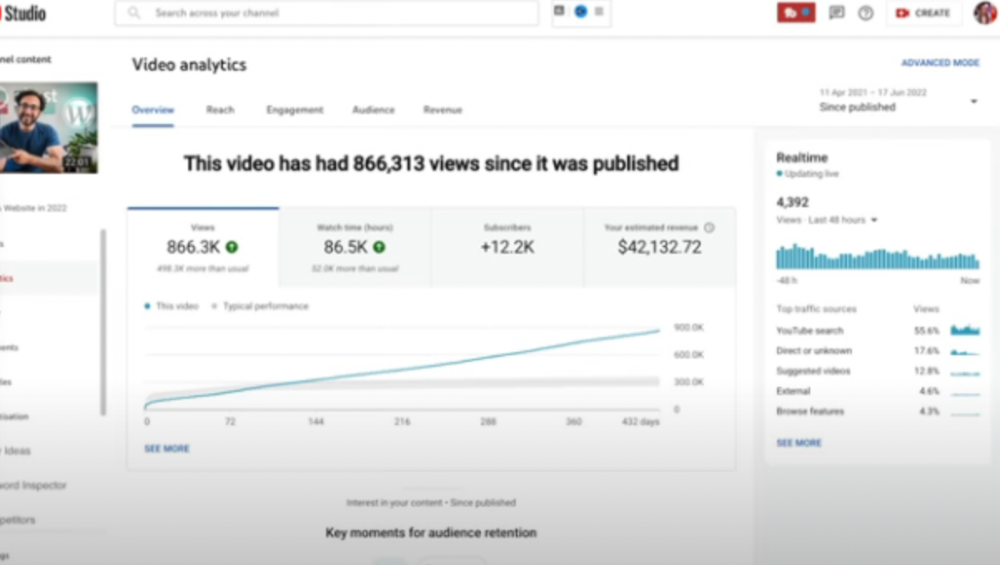

3) How to Build a Website in 2022— 866.3k views: $42,132.72.

The RPM was $48.86 per thousand views, making it his highest-earning video. Squarespace, Wix, and other website builders are trying to put ads on it and competing against one other, so ad rates go up.

Because it was beyond his niche, Ali almost didn't make the video. He made the video because he wanted to help at least one person.

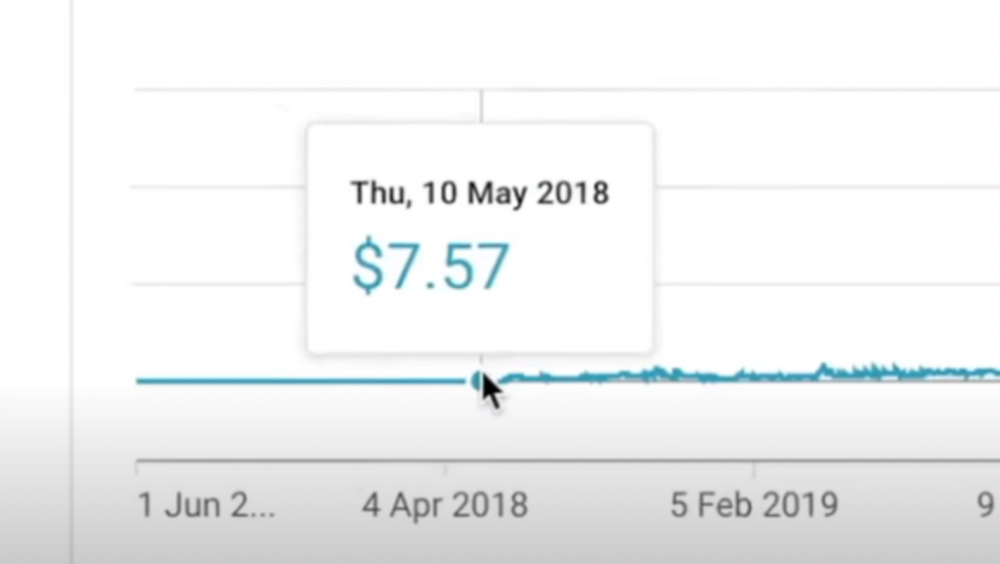

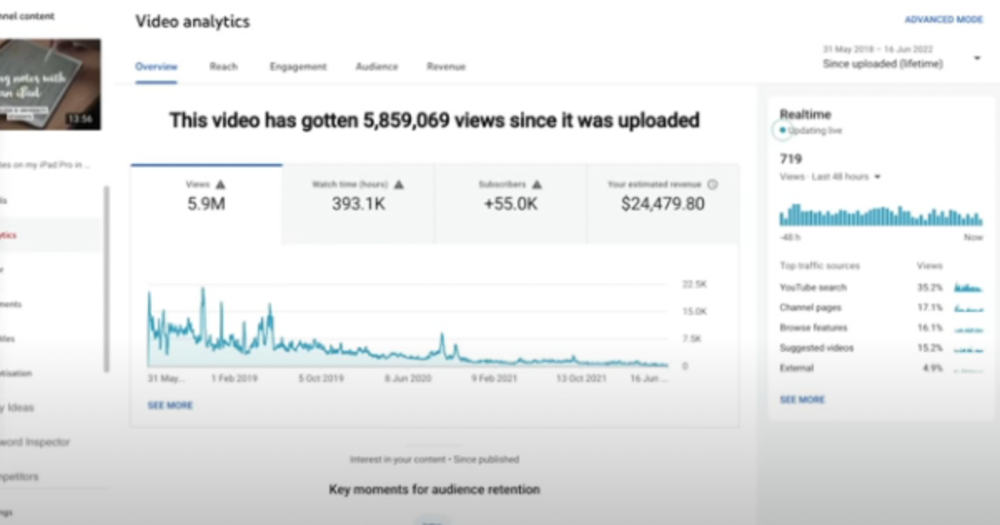

4) How I take notes on my iPad in medical school — 5.9m views: $24,479.80

This was his 85th video. It's the video that affected Ali's YouTube channel and his life the most. The video's success wasn't certain.

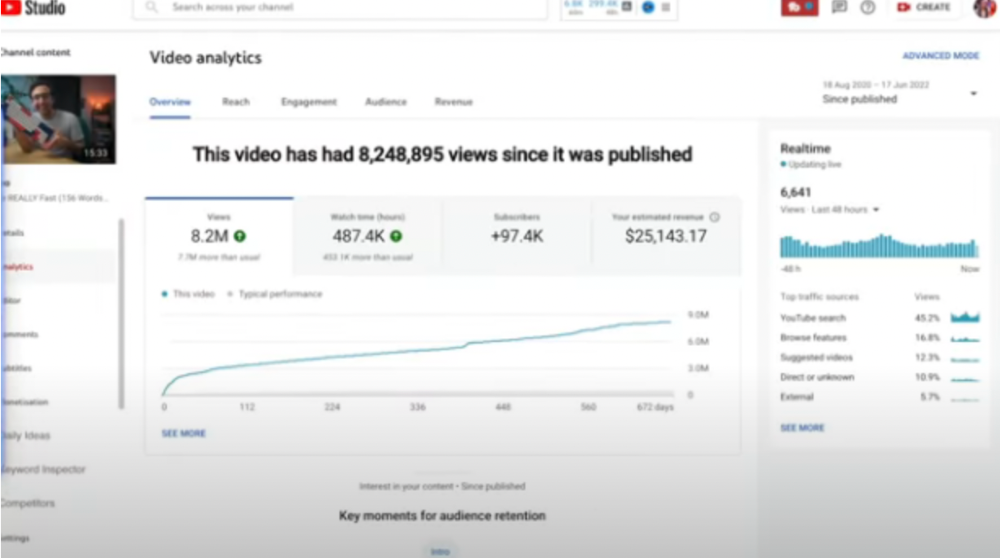

5) How I Type Fast 156 Words Per Minute — 8.2M views: $25,143.17

Ali didn't know this video would perform well; he made it because he can type fast and has been practicing for 10 years. So he made a video with his best advice.
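The per-video figures above can be compared using RPM (revenue per 1,000 views), the metric quoted for the website video. The sketch below recomputes it from the view counts and revenue in the headings. Because those view counts are rounded, the results are approximate, and `rpm` is a hypothetical helper.

```python
# (title, views, AdSense revenue in USD) as quoted above; view counts are rounded.
videos = [
    ("9 passive income ideas", 9_800_000, 191_258.16),
    ("How to Invest for Beginners", 5_200_000, 87_200.08),
    ("How to Build a Website in 2022", 866_300, 42_132.72),
    ("How I take notes on my iPad in medical school", 5_900_000, 24_479.80),
    ("How I Type Fast 156 Words Per Minute", 8_200_000, 25_143.17),
]


def rpm(views: int, revenue: float) -> float:
    """Revenue per 1,000 views."""
    return revenue / views * 1000


# Sort from highest to lowest RPM to show how much the niche matters.
for title, views, revenue in sorted(videos, key=lambda v: rpm(v[1], v[2]), reverse=True):
    print(f"${rpm(views, revenue):6.2f} per 1,000 views  {title}")
```

With these rounded inputs the website video comes out at about $48.6 per 1,000 views, close to the $48.86 quoted, while the typing video earns only about $3 per 1,000 views despite nearly ten times the audience.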

How many views to different wealth levels?

It depends on geography, niche, and other monetization sources. To keep things simple, he would solely utilize AdSense.

How many views to generate money?

To generate money on YouTube, you need 1,000 subscribers and 4,000 hours of watch time. How much work do you need to make pocket money?

Ali's first 1,000 subscribers took 52 videos and 6 months. The typical channel with 1,000 subscribers contains 152 videos, according to Tubebuddy. It's time-consuming.

After monetizing, you'll need 15,000 views/month to make $5-$10/day.

How many views to go part-time?

Say you make $35,000/year at your day job. If you work 5 days/week, each weekday is worth $7,000/year. If you want to drop from 5 days to 4 days/week, you need to make an extra $7,000/year from YouTube, or roughly $600/month.
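The same calculation can be reused for other salaries. This is a minimal sketch that assumes pay is spread evenly across working days. `extra_income_needed` is a hypothetical helper.

```python
def extra_income_needed(salary_per_year: float, days_now: int, days_target: int) -> tuple:
    """Return (yearly, monthly) side income needed to cut the working week."""
    if not 0 < days_target <= days_now:
        raise ValueError("days_target must be between 1 and days_now")
    value_per_weekday = salary_per_year / days_now
    yearly = value_per_weekday * (days_now - days_target)
    return yearly, yearly / 12


# The example from the text: $35,000/year, going from 5 days to 4.
yearly, monthly = extra_income_needed(35_000, days_now=5, days_target=4)
print(f"${yearly:,.0f}/year, about ${monthly:,.0f}/month")
```

For the example in the text this gives $7,000/year, or about $583/month, which the article rounds to $600.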

What's the quit-your-job budget?

Silicon Valley Girl is in a highly successful niche targeting tech-focused folks in the west. When her channel had 500k views/month, she made roughly $3,000/month or $47,000/year, enough to quit your job.

Marina has another 1.5m subscriber channel in Russia, which has a lower RPM because fewer corporations advertise there than in the west. 2.3 million views/month is $4,000/month or $50,000/year, enough to quit your job.

Marina is an intriguing example because she has three YouTube channels with the same skills, but one is 16x more profitable due to the niche she chose.

In Ali's case, he made 100+ videos when his channel was producing enough money to quit his job, roughly $4,000/month.

How many views make you rich?

Depending on how you define rich. Ali felt prosperous with over $100,000/year and 3–5m views/month.

Conclusion

YouTubers and artists don't treat their work like a company, which is a mistake. Businesses have been attempting to figure this out for decades, if not centuries.

We can learn from the business world how to monetize YouTube, Instagram, and TikTok and make them into sustainable enterprises where we can hire people and delegate tasks.

Bonus

Watch Ali's video explaining all this:

This post is a summary. Read the full article here

Darius Foroux

3 years ago

My financial life was changed by a single, straightforward mental model.

Prioritize big-ticket purchases

I've made several spending blunders. I get sick thinking about how much money I spent.

My financial mental model was poor back then.

Stoicism and mindfulness keep me from attaching to those feelings. It still hurts.

Until four or five years ago, I bought a new winter jacket every year.

Ten years ago, I spent twice as much. Now that I have a fantastic, warm winter parka, I don't even consider acquiring another one. No more spending. I'm not looking for jackets either.

Saving time and money by spending well is my thinking paradigm.

The philosophy is expressed in most languages. In the Netherlands, the saying goes that cheap is expensive. This applies beyond shopping.

In this essay, I will offer three examples of how this mental paradigm transformed my financial life.

Publishing books

In 2015, I presented and positioned my first book poorly.

I called the book Huge Life Success and made a funny Canva cover in 30 minutes.

That looks nothing like my present books. No logo or style. The book felt amateurish.

The book started bothering me a few weeks after publication. The advice was good, but it didn't appear professional. I studied the book business extensively.

I created a style for all my designs. Branding. Win Your Inner Wars was reissued a year later.

Title, cover, and description changed. Rearranging the chapters improved readability.

Seven years later, the book sells hundreds of copies a month. That taught me a lot.

Rushing to finish a project is enticing. Send it and move forward.

Avoid rushing everything. Relax. Develop your projects. Perform well. Perform the job well.

My first book was underfunded and underworked. The result was a bad book. I then invested time and money in writing the greatest book I could.

That book still sells.

Traveling

I hate travel. Airports, flights, trains, and lines irritate me.

But, I enjoy traveling to beautiful areas.

I do it strangely. I make up travel rules. I never go to airports in summer. I hate being near airports on holidays. Unworthy.

No vacation packages for me. Those airline packages with a flight, shuttle, and hotel. I've had enough.

I try to avoid crowds and popular spots. July Paris? Nuts and bolts, please. Christmas in NYC? No, please keep me sane.

I fly business class behind. I accept upgrades upon check-in. I prefer driving. I drove from the Netherlands to southern Spain.

Thankfully, no lines. What if travel costs more? Thus? I enjoy it from the start. I start traveling then.

I rarely travel since I'm so difficult. One great excursion beats several average ones.

Personal effectiveness

New apps, tools, and strategies intrigue most productivity professionals.

No.

I researched years ago. I spent years investigating productivity in university.

I bought books, courses, applications, and tools. It was expensive and time-consuming.

I'm finished. Productivity no longer costs me time or money. OK. I worked on it once and now follow my strategy.

I avoid new programs and systems. My stuff works. Why change winners?

Spending wisely saves time and money.

Spending wisely means spending once. Many people ignore productivity. It's understudied. No classes.

Some assume reading a few articles or a book is enough. Productivity is personal. You need a personal system.

Time invested is one-time. You can trust your system for life once you find it.

Concentrate on the expensive choices.

Life's short. Saving money quickly is enticing.

Spend less on groceries today. True. That won't fix your finances.

Adopt a lifestyle that makes you affluent over time. Consider major choices.

Are they causing long-term poverty? Are you richer?

Leasing cars comes to mind. The automobile costs a fortune today. The premium could accomplish a million nice things.

Focusing on important decisions makes life easier. Consider your future. You want to improve next year.

Max Parasol

4 years ago

What the hell is Web3 anyway?

"Web 3.0" is a trendy buzzword with a vague definition. Everyone agrees it has to do with a blockchain-based internet evolution, but what is it?

Yet, the meaning and prospects for Web3 have become hot topics in crypto communities. Big corporations use the term to gain a foothold in the space while avoiding the negative connotations of “crypto.”

But it can't be evaluated without a definition.

Among those criticizing Web3's vagueness is Cobie:

“Despite the dominie's deluge of undistinguished think pieces, nobody really agrees on what Web3 is. Web3 is a scam, the future, tokenizing the world, VC exit liquidity, or just another name for crypto, depending on your tribe.

“Even the crypto community is split on whether Bitcoin is Web3,” he adds.

The phrase was coined by an early crypto thinker, and the community has had years to figure out what it means. Many ideologies and commercial realities have driven reverse engineering.

Web3 is becoming clearer as a concept. It contains ideas. It was probably coined by Ethereum co-founder Gavin Wood in 2014. His definition of Web3 included “trustless transactions” as part of its tech stack. Wood founded the Web3 Foundation and the Polkadot network, a Web3 alternative future.

The 2013 Ethereum white paper had previously allowed devotees to imagine a DAO, for example.

Web3 now has concepts like decentralized autonomous organizations, sovereign digital identity, censorship-free data storage, and data divided by multiple servers. They intertwine discussions about the “Web3” movement and its viability.

These ideas are linked by Cobie's initial Web3 definition. A key component of Web3 should be “ownership of value” for one's own content and data.

Noting that “late-stage capitalism greedcorps that make you buy a fractionalized micropayment NFT on Cardano to operate your electric toothbrush” may build the new web, he notes that “crypto founders are too rich to care anymore.”

Very Important

Many critics of Web3 claim it isn't practical or achievable. Web3 critics like Moxie Marlinspike (creator of sslstrip and Signal/TextSecure) can never see people running their own servers. Early in January, he argued that protocols are more difficult to create than platforms.

While this is true, some projects, like the file storage protocol IPFS, allow users to choose which jurisdictions their data is shared between.

But full decentralization is a difficult problem. Suhaza, replying to Moxie, said:

“People don't want to run servers... Companies are now offering API access to an Ethereum node as a service... Almost all DApps interact with the blockchain using Infura or Alchemy. In fact, when a DApp uses a wallet like MetaMask to interact with the blockchain, MetaMask is just calling Infura!”

So, here are the questions: Web3: Is it a go? Is it truly decentralized?

Web3 history is shaped by Web2 failure.

This is the story of how the Internet was turned upside down...

Then came the vision. Everyone can create content for free. Decentralized open-source believers like Tim Berners-Lee popularized it.

Real-world data trade-offs for content creation and pricing.

A giant Wikipedia page married to a giant Craigslist. No ads, no logins, and a private web carve-up. For free usage, you give up your privacy and data to the algorithmic targeted advertising of Web 2.

Our data is centralized and savaged by giant corporations. Data localization rules and geopolitical walls like China's Great Firewall further fragment the internet.

The decentralized Web3 reflects Berners-Lee's original vision: "No permission is required from a central authority to post anything... there is no central controlling node and thus no single point of failure." Now he runs Solid, a Web3 data storage startup.

So Web3 starts with decentralized servers and data privacy.

Web3 begins with decentralized storage.

Data decentralization is a key feature of the Web3 tech stack. Web2 has closed databases. Large corporations like Facebook, Google, and others go to great lengths to collect, control, and monetize data. We want to change it.

Amazon, Google, Microsoft, Alibaba, and Huawei, according to Gartner, currently control 80% of the global cloud infrastructure market. Web3 wants to change that.

Decentralization enlarges power structures by giving participants a stake in the network. Users own data on open encrypted networks in Web3. This area has many projects.

Protocols like Filecoin and IPFS have led the way. In Web3 storage providers like Filecoin, data is replicated across multiple nodes.

But the new tech stack and ideology raise many questions.

Giving users control over their data

According to Ryan Kris, COO of Verida, his “Web3 vision” is “empowering people to control their own data.”

Verida targets SDKs that address issues in the Web3 stack: identity, messaging, personal storage, and data interoperability.

A big app suite? “Yes, but it's a frontier technology,” he says. They are currently building a credentialing system for decentralized health in Bermuda.

By empowering individuals, how will Web3 create a fairer internet? Kris, who has worked in telecoms, finance, cyber security, and blockchain consulting for decades, admits it is difficult:

“The viability of Web3 raises some good business questions,” he adds. “How can users regain control over centralized personal data? How are startups motivated to build products and tools that support this transition? How are existing Web2 companies encouraged to pivot to a Web3 business model to compete with market leaders?”

Kris adds that new technologies have regulatory and practical issues:

"On storage, IPFS is great for redundantly sharing public data, but not designed for securing private personal data. It is not controlled by the users. When data storage in a specific country is not guaranteed, regulatory issues arise."

Each project has varying degrees of decentralization. The diehards say DApps that use centralized storage are no longer “Web3” companies. But fully decentralized technology is hard to build.

Web2.5?

Some argue that we're actually building Web2.5 businesses, which are crypto-native but not fully decentralized. This is vital. For example, the NFT may be on a blockchain, but it is linked to centralized data repositories like OpenSea. A server failure could result in data loss.

However, according to Apollo Capital crypto analyst David Angliss, OpenSea is “not exactly community-led”. Also in 2021, much to the chagrin of crypto enthusiasts, OpenSea tried and failed to list on the Nasdaq.

This is where Web2.5 is defined.

“Web3 isn't a crypto segment. Anything that uses a blockchain for censorship resistance is Web3,” Angliss tells us.

“Web3 gives users control over their data and identity. This is not possible in Web2.”

“Web2 is like feudalism, with walled-off ecosystems ruled by a few. For example, an honest user owned the Instagram account ‘Meta,’ and after Facebook rebranded, it had to make up a reason to suspend that account. Not anymore with Web3. If I buy ‘Ethereum.ens,’ Ethereum cannot take it away from me.”

Angliss uses OpenSea as a Web2.5 business example. Being too decentralized, i.e. censorship resistant, can be unprofitable for a large company like OpenSea. For example, OpenSea “enables NFT trading,” but it also stopped the sale of stolen Bored Apes.

Web3 (or Web2.5, depending on the context) has been described as a new way to privatize the internet.

“Being in the crypto ecosystem doesn't make it Web3,” Angliss says. The biggest risk is centralized closed ecosystems rather than a growing Web3.

LooksRare and OpenDAO are two community-led platforms that are more decentralized than OpenSea. LooksRare has even been “vampire attacking” OpenSea, indicating a Web3 competitor to the Web2.5 NFT king could find favor.

The addition of a token gives these new NFT platforms more options for building customer loyalty. For example, OpenSea charges a fee that is not shared with its users, whereas stakers of LOOKS tokens earn 100% of the trading fees charged by LooksRare on every basic sale.
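To see what passing 100% of trading fees to stakers could mean in practice, here is a small sketch of a pro-rata split. It assumes fees are divided in proportion to each staker's share of the staked tokens, which is an assumption for illustration rather than a description of LooksRare's actual contracts. The function name, holder names, and amounts are all hypothetical.

```python
from fractions import Fraction


def distribute_fees(fees_collected: int, stakes: dict[str, int]) -> dict[str, Fraction]:
    """Split the collected fees among stakers in proportion to their stake."""
    total_staked = sum(stakes.values())
    if total_staked == 0:
        # Nobody is staking, so nobody receives anything.
        return {holder: Fraction(0) for holder in stakes}
    return {
        holder: Fraction(fees_collected) * Fraction(amount, total_staked)
        for holder, amount in stakes.items()
    }


# Hypothetical stakers and amounts.
stakes = {"alice": 600, "bob": 300, "carol": 100}
payouts = distribute_fees(50, stakes)
for holder, payout in payouts.items():
    print(holder, float(payout))
```

Exact fractions are used so the payouts always add up to the fees collected, with nothing lost to rounding.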

Maybe Web3's time has come.

So whose data is it?

Continuing criticisms of Web3 platforms' decentralization may indicate we're too early. Users want to own and store their in-game assets and NFTs on decentralized platforms like the Metaverse and play-to-earn games. Start-ups like Arweave, Sia, and Aleph.im propose an alternative.

To be truly decentralized, Web3 requires new off-chain models that sidestep cloud computing and Web2.5.

“Arweave and Sia emerged as formidable competitors this year,” says the Messari Report. They seek to reduce the risk of an NFT being lost due to a data breach on a centralized server.

Aleph.im, another Web3 cloud competitor, seeks to replace cloud computing with a service network. It is a decentralized computing network that supports multiple blockchains by retrieving and encrypting data.

“The Aleph.im network provides a truly decentralized alternative where it is most needed: storage and computing,” says Johnathan Schemoul, founder of Aleph.im. For reasons of consensus and security, blockchains are not designed for large storage or high-performance computing.

As a result, large data sets are frequently stored off-chain, increasing the risk for centralized databases like OpenSea.

Aleph.im enables users to own digital assets using both blockchains and off-chain decentralized cloud technologies.

“We need to go beyond layer 0 and 1 to build a robust decentralized web. The Aleph.im ecosystem is proving that Web3 can be decentralized, and we intend to keep going.”

Aleph.im raised $10 million in mid-January 2022, and Ubisoft uses its network for NFT storage. This is the first time a big-budget gaming studio has given users this much control.

It also suggests Web3 could work as a B2B model, even if consumers aren't concerned about “decentralization.” Starting with gaming is common.

Can Tokenomics help Web3 adoption?

Web3 consumer adoption is another story. The average user may not be interested in all this decentralization talk. Still, how much do people value privacy over convenience? Can tokenomics solve the privacy vs. convenience dilemma?

Holon Global Investments' Jonathan Hooker tells us that human internet behavior will change. “Do you own Bitcoin?” he asks in his Web3 explanation. How does it feel to own and control your own sovereign wealth? Then:

“What if you could own and control your data like Bitcoin?”

“The business model must find what that person values,” he says. Putting their own health records on centralized systems they don't control?

“How vital are those medical records to that person at a critical time anywhere in the world? Filecoin and IPFS can help.”

Web3 adoption depends on NFT storage competition. Filecoin launched a free off-chain storage service for NFT metadata and assets in April 2021.

Decentralization and blockchain technology have significant implications for data ownership and compensation for lending, staking, and using data.

Tokenomics can change human behavior, but many people simply sign into Web2 apps using a Facebook API without hesitation. Our data is already owned by Google, Baidu, Tencent, and Facebook (and its parent company Meta). Is it too late to recover?

Maybe. “Data is like fruit, it starts out fresh but ages,” Hooker says. “Big Tech's data on us will expire.”

Verida's Kris agrees with Hooker that “value for data is the issue, not privacy.” People accept losing their data privacy, so tokenize it. People readily give up data, so why not pay them for it?

“A personalized data offering is valuable for personalization. I will sell my social media data but not my health data.”

Purists and mass consumer adoption struggle with key management.

Others question data tokenomics' optimism. While acknowledging its potential, Box founder Aaron Levie questioned the viability of Web3 models in a Tweet thread:

“Why? Because data almost always works in an app. A product and APIs that moved quickly to build value and trust over time.”

Levie contends that tokenomics may complicate matters. In addition to community governance and tokenomics, Web3 ideals likely add a new negotiation vector.

“These are hard problems about human coordination, not software or blockchains,” Levie says. Using a Facebook API is simple. The business model and user interface are crucial.

For example, the crypto faithful have a common misconception about logging into Web3. It goes like this: Web 1 had usernames and passwords. Web 2 uses Google, Facebook, or Twitter APIs, while Web 3 uses your wallet. Pay with Ethereum on MetaMask, for example.

But Levie is correct. This meme glosses over how stressful blockchain key management is. It gives even seasoned crypto enthusiasts heart attacks, let alone newbies.

Web3 requires a better user experience, according to Verida's Kris. “How does a user recover keys?”

And at this point, no solution is likely to be completely decentralized. So Web3 key management can be improved. “The moment someone loses control of their keys, Web3 ceases to exist.”

That leaves a major issue for Web3 purists. Put this one in the too-hard basket.

Is 2022 the Year of Web3?

Web3 must first solve a number of issues before it can be mainstreamed. It must be better and cheaper than Web2.5, or have other significant advantages.

Web3 aims for scalability without sacrificing decentralization protocols. But decentralization is difficult and centralized services are more convenient.

Ethereum co-founder Vitalik Buterin himself stated recently:

“This is why (centralized) Binance to Binance transactions trump Ethereum payments in some places because they don't have to be verified 12 times.”

“I do think a lot of people care about decentralization, but they're not going to take decentralization if decentralization costs $8 per transaction,” he continued.
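For a sense of where a figure like $8 per transaction can come from, the sketch below multiplies the gas a transaction uses by the gas price and by the price of ETH. The 21,000 gas figure is the standard cost of a plain ETH transfer; the gas price of 100 gwei and the ETH price of $3,800 are illustrative assumptions, not figures from the article, and the function name is hypothetical.

```python
GWEI_PER_ETH = 10**9  # 1 ETH = 1,000,000,000 gwei


def transfer_fee_usd(gas_used: int, gas_price_gwei: float, eth_price_usd: float) -> float:
    """Estimate a transaction fee in dollars from gas used, gas price, and ETH price."""
    fee_eth = gas_used * gas_price_gwei / GWEI_PER_ETH
    return fee_eth * eth_price_usd


# Plain ETH transfer (21,000 gas) at an assumed 100 gwei and an assumed $3,800 per ETH.
fee = transfer_fee_usd(gas_used=21_000, gas_price_gwei=100, eth_price_usd=3_800)
print(f"Estimated fee: ${fee:.2f}")
```

Under these assumptions the fee works out to roughly $8, and it scales linearly with both network congestion (gas price) and the ETH price.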

“Blockchains need to be affordable for people to use them in mainstream applications... Not for 2014 whales, but for today's users.”

For now, scalability, tokenomics, mainstream adoption, and decentralization believers seem to be holding Web3 hostage.

Much like crypto's past.

But stay tuned.