More on Web3 & Crypto

joyce shen

4 years ago

Framework to Evaluate Metaverse and Web3

Everywhere we turn, there's a new metaverse or Web3 debut. Microsoft recently announced a $68.7 BILLION cash purchase of Activision.

Like AI in 2013 and blockchain in 2014, NFT growth in 2021 feels like this year's metaverse and Web3 growth. We are all bombarded with information, conflicting signals, and a sensation of FOMO.

How can we evaluate the metaverse and Web3 in a noisy, new world? My framework for evaluating upcoming technologies and themes is shown below. I hope you will also find them helpful.

Understand the “pipes” in a new space.

Whatever people say, Metaverse and Web3 will have to coexist with the current Internet. Companies who host, move, and store data over the Internet have a lot of intriguing use cases in Metaverse and Web3, whether in infrastructure, data analytics, or compliance. Hence the following point.

## Understand the apps layer and their infrastructure.

Gaming, crypto exchanges, and NFT marketplaces would not exist today if not for technology that enables rapid app creation. Yes, according to Chainalysis and other research, 30–40% of Ethereum is self-hosted, with the rest hosted by large cloud providers. For Microsoft to acquire Activision makes strategic sense. It's not only about the games, but also the infrastructure that supports them.

Follow the money

Understanding how money and wealth flow in a complex and dynamic environment helps build clarity. Unless you are exceedingly wealthy, you have limited ability to significantly engage in the Web3 economy today. Few can just buy 10 ETH and spend it in one day. You must comprehend who benefits from the process, and how that 10 ETH circulates now and possibly tomorrow. Major holders and players control supply and liquidity in any market. Today, most Web3 apps are designed to increase capital inflow so existing significant holders can utilize it to create a nascent Web3 economy. When you see a new Metaverse or Web3 application, remember how money flows.

What is the use case?

What does the app do? If there is no clear use case with clear makers and consumers solving a real problem, then the euphoria soon fades, and the only stakeholders who remain enthused are those who have too much to lose.

Time is a major competition that is often overlooked.

We're only busier, but each day is still 24 hours. Using new apps may mean that time is lost doing other things. The user must be eager to learn. Metaverse and Web3 vs. our time? I don't think we know the answer yet (at least for working adults whose cost of time is higher).

I don't think we know the answer yet (at least for working adults whose cost of time is higher).

People and organizations need security and transparency.

For new technologies or apps to be widely used, they must be safe, transparent, and trustworthy. What does secure Metaverse and Web3 mean? This is an intriguing subject for both the business and public sectors. Cloud adoption grew in part due to improved security and data protection regulations.

The following frameworks can help analyze and understand new technologies and emerging technological topics, unless you are a significant investment fund with the financial ability to gamble on numerous initiatives and essentially form your own “index fund”.

I write on VC, startups, and leadership.

More on https://www.linkedin.com/in/joycejshen/ and https://joyceshen.substack.com/

This writing is my own opinion and does not represent investment advice.

Jeff John Roberts

3 years ago

Jack Dorsey and Jay-Z Launch 'Bitcoin Academy' in Brooklyn rapper's home

The new Bitcoin Academy will teach Jay-Marcy Z's Houses neighbors "What is Cryptocurrency."

Jay-Z grew up in Brooklyn's Marcy Houses. The rapper and Block CEO Jack Dorsey are giving back to his hometown by creating the Bitcoin Academy.

The Bitcoin Academy will offer online and in-person classes, including "What is Money?" and "What is Blockchain?"

The program will provide participants with a mobile hotspot and a small amount of Bitcoin for hands-on learning.

Students will receive dinner and two evenings of instruction until early September. The Shawn Carter Foundation will help with on-the-ground instruction.

Jay-Z and Dorsey announced the program Thursday morning. It will begin at Marcy Houses but may be expanded.

Crypto Blockchain Plug and Black Bitcoin Billionaire, which has received a grant from Block, will teach the classes.

Jay-Z, Dorsey reunite

Jay-Z and Dorsey have previously worked together to promote a Bitcoin and crypto-based future.

In 2021, Dorsey's Block (then Square) acquired the rapper's streaming music service Tidal, which they propose using for NFT distribution.

Dorsey and Jay-Z launched an endowment in 2021 to fund Bitcoin development in Africa and India.

Dorsey is funding the new Bitcoin Academy out of his own pocket (as is Jay-Z), but he's also pushed crypto-related charitable endeavors at Block, including a $5 million fund backed by corporate Bitcoin interest.

This post is a summary. Read full article here

Jeff Scallop

3 years ago

The Age of Decentralized Capitalism and DeFi

DeCap is DeFi's killer app.

“Software is eating the world.” Marc Andreesen, venture capitalist

DeFi. Imagine a blockchain-based alternative financial system that offers the same products and services as traditional finance, but with more variety, faster, more secure, lower cost, and simpler access.

Decentralised finance (DeFi) is a marketplace without gatekeepers or central authority managing the flow of money, where customers engage directly with smart contracts running on a blockchain.

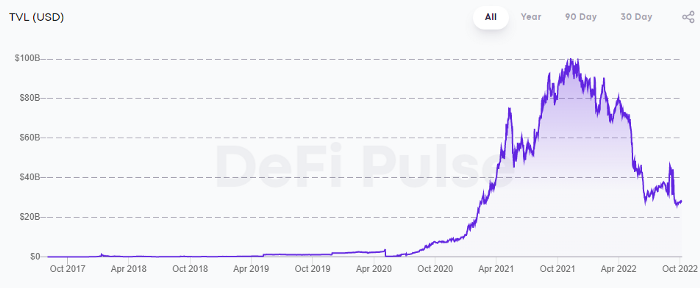

DeFi grew exponentially in 2020/21, with Total Value Locked (an inadequate estimate for market size) topping at $100 billion. After that, it crashed.

The accumulation of funds by individuals with high discretionary income during the epidemic, the novelty of crypto trading, and the high yields given (5% APY for stablecoins on established platforms to 100%+ for risky assets) are among the primary elements explaining this exponential increase.

No longer your older brothers DeFi

Since transactions are anonymous, borrowers had to overcollateralize DeFi 1.0. To borrow $100 in stablecoins, you must deposit $150 in ETH. DeFi 1.0's business strategy raises two problems.

Why does DeFi offer interest rates that are higher than those of the conventional financial system?;

Why would somebody put down more cash than they intended to borrow?

Maxed out on their own resources, investors took loans to acquire more crypto; the demand for those loans raised DeFi yields, which kept crypto prices increasing; as crypto prices rose, investors made a return on their positions, allowing them to deposit more money and borrow more crypto.

This is a bull market game. DeFi 1.0's overcollateralization speculation is dead. Cryptocrash sank it.

The “speculation by overcollateralisation” world of DeFi 1.0 is dead

At a JP Morgan digital assets conference, institutional investors were more interested in DeFi than crypto or fintech. To me, that shows DeFi 2.0's institutional future.

DeFi 2.0 protocols must handle KYC/AML, tax compliance, market abuse, and cybersecurity problems to be institutional-ready.

Stablecoins gaining market share under benign regulation and more CBDCs coming online in the next couple of years could help DeFi 2.0 separate from crypto volatility.

DeFi 2.0 will have a better footing to finally decouple from crypto volatility

Then we can transition from speculation through overcollateralization to DeFi's genuine comparative advantages: cheaper transaction costs, near-instant settlement, more efficient price discovery, faster time-to-market for financial innovation, and a superior audit trail.

Akin to Amazon for financial goods

Amazon decimated brick-and-mortar shops by offering millions of things online, warehouses by keeping just-in-time inventory, and back-offices by automating invoicing and payments. Software devoured retail. DeFi will eat banking with software.

DeFi is the Amazon for financial items that will replace fintech. Even the most advanced internet brokers offer only 100 currency pairings and limited bonds, equities, and ETFs.

Old banks settlement systems and inefficient, hard-to-upgrade outdated software harm them. For advanced gamers, it's like driving an F1 vehicle on dirt.

It is like driving a F1 car on a dirt road, for the most sophisticated players

Central bankers throughout the world know how expensive and difficult it is to handle cross-border payments using the US dollar as the reserve currency, which is vulnerable to the economic cycle and geopolitical tensions.

Decentralization is the only method to deliver 24h global financial markets. DeFi 2.0 lets you buy and sell startup shares like Google or Tesla. VC funds will trade like mutual funds. Or create a bundle coverage for your car, house, and NFTs. Defi 2.0 consumes banking and creates Global Wall Street.

Defi 2.0 is how software eats banking and delivers the global Wall Street

Decentralized Capitalism is Emerging

90% of markets are digital. 10% is hardest to digitalize. That's money creation, ID, and asset tokenization.

90% of financial markets are already digital. The only problem is that the 10% left is the hardest to digitalize

Debt helped Athens construct a powerful navy that secured trade routes. Bonds financed the Renaissance's wars and supply chains. Equity fueled industrial growth. FX drove globalization's payments system. DeFi's plans:

If the 20th century was a conflict between governments and markets over economic drivers, the 21st century will be between centralized and decentralized corporate structures.

Offices vs. telecommuting. China vs. onshoring/friendshoring. Oil & gas vs. diverse energy matrix. National vs. multilateral policymaking. DAOs vs. corporations Fiat vs. crypto. TradFi vs.

An age where the network effects of the sharing economy will overtake the gains of scale of the monopolistic competition economy

This is the dawn of Decentralized Capitalism (or DeCap), an age where the network effects of the sharing economy will reach a tipping point and surpass the scale gains of the monopolistic competition economy, further eliminating inefficiencies and creating a more robust economy through better data and automation. DeFi 2.0 enables this.

DeFi needs to pay the piper now.

DeCap won't be Web3.0's Shangri-La, though. That's too much for an ailing Atlas. When push comes to shove, DeFi folks want to survive and fight another day for the revolution. If feasible, make a tidy profit.

Decentralization wasn't meant to circumvent regulation. It circumvents censorship. On-ramp, off-ramp measures (control DeFi's entry and exit points, not what happens in between) sound like a good compromise for DeFi 2.0.

The sooner authorities realize that DeFi regulation is made ex-ante by writing code and constructing smart contracts with rules, the faster DeFi 2.0 will become the more efficient and safe financial marketplace.

More crucially, we must boost system liquidity. DeFi's financial stability risks are downplayed. DeFi must improve its liquidity management if it's to become mainstream, just as banks rely on capital constraints.

This reveals the complex and, frankly, inadequate governance arrangements for DeFi protocols. They redistribute control from tokenholders to developers, which is bad governance regardless of the economic model.

But crypto can only ride the existing banking system for so long before forming its own economy. DeFi will upgrade web2.0's financial rails till then.

You might also like

Leah

3 years ago

The Burnout Recovery Secrets Nobody Is Talking About

What works and what’s just more toxic positivity

Just keep at it; you’ll get it.

I closed the Zoom call and immediately dropped my head. Open tabs included material on inspiration, burnout, and recovery.

I searched everywhere for ways to avoid burnout.

It wasn't that I needed to keep going, change my routine, employ 8D audio playlists, or come up with fresh ideas. I had several ideas and a schedule. I knew what to do.

I wasn't interested. I kept reading, changing my self-care and mental health routines, and writing even though it was tiring.

Since burnout became a psychiatric illness in 2019, thousands have shared their experiences. It's spreading rapidly among writers.

What is the actual key to recovering from burnout?

Every A-list burnout story emphasizes prevention. Other lists provide repackaged self-care tips. More discuss mental health.

It's like the mid-2000s, when pink quotes about bubble baths saturated social media.

The self-care mania cost us all. Self-care is crucial, but utilizing it to address everything didn't work then or now.

How can you recover from burnout?

Time

Are extended breaks actually good for you? Most people need a break every 62 days or so to avoid burnout.

Real-life burnout victims all took breaks. Perhaps not a long hiatus, but breaks nonetheless.

Burnout is slow and gradual. It takes little bits of your motivation and passion at a time. Sometimes it’s so slow that you barely notice or blame it on other things like stress and poor sleep.

Burnout doesn't come overnight; neither will recovery.

I don’t care what anyone else says the cure for burnout is. It has to be time because time is what gave us all burnout in the first place.

Hasan AboulHasan

3 years ago

High attachment products can help you earn money automatically.

Affiliate marketing is a popular online moneymaker. You promote others' products and get commissions. Affiliate marketing requires constant product promotion.

Affiliate marketing can be profitable even without much promotion. Yes, this is Autopilot Money.

How to Pick an Affiliate Program to Generate Income Autonomously

Autopilot moneymaking requires a recurring affiliate marketing program.

Finding the best product and testing it takes a lot of time and effort.

Here are three ways to choose the best service or product to promote:

Find a good attachment-rate product or service.

When choosing a product, ask if you can easily switch to another service. Attachment rate is how much people like a product.

Higher attachment rates mean better Autopilot products.

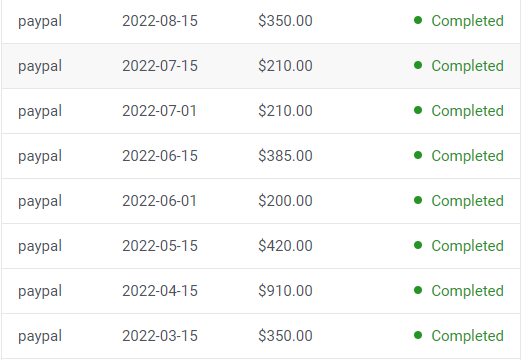

Consider promoting GetResponse. It's a 33% recurring commission email marketing tool. This means you get 33% of the customer's plan as long as he pays.

GetResponse has a high attachment rate because it's hard to leave and start over with another tool.

2. Pick a good or service with a lot of affiliate assets.

Check if a program has affiliate assets or creatives before joining.

Images and banners to promote the product in your business.

They save time; I look for promotional creatives. Creatives or affiliate assets are website banners or images. This reduces design time.

3. Select a service or item that consumers already adore.

New products are hard to sell. Choosing a trusted company's popular product or service is helpful.

As a beginner, let people buy a product they already love.

Online entrepreneurs and digital marketers love Systeme.io. It offers tools for creating pages, email marketing, funnels, and more. This product guarantees a high ROI.

Make the product known!

Affiliate marketers struggle to get traffic. Using affiliate marketing to make money is easier than you think if you have a solid marketing strategy.

Your plan should include:

1- Publish affiliate-related blog posts and SEO-optimize them

2- Sending new visitors product-related emails

3- Create a product resource page.

4-Review products

5-Make YouTube videos with links in the description.

6- Answering FAQs about your products and services on your blog and Quora.

7- Create an eCourse on how to use this product.

8- Adding Affiliate Banners to Your Website.

With these tips, you can promote your products and make money on autopilot.

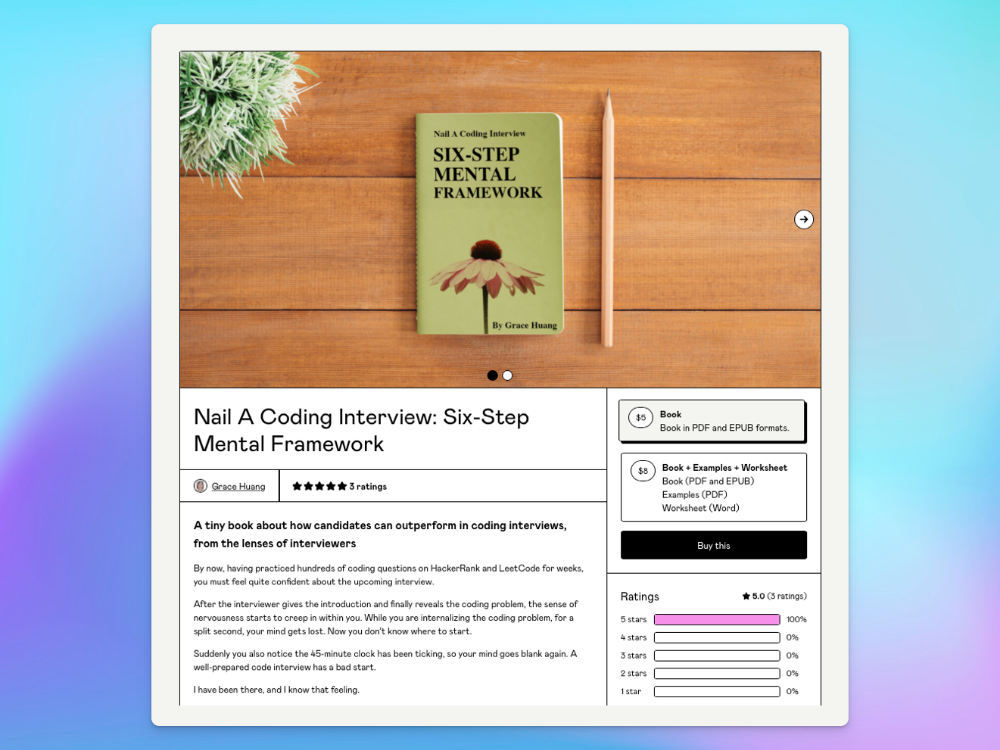

Grace Huang

3 years ago

I sold 100 copies of my book when I had anticipated selling none.

After a decade in large tech, I know how software engineers were interviewed. I've seen outstanding engineers fail interviews because their responses were too vague.

So I wrote Nail A Coding Interview: Six-Step Mental Framework. Give candidates a mental framework for coding questions; help organizations better prepare candidates so they can calibrate traits.

Recently, I sold more than 100 books, something I never expected.

In this essay, I'll describe my publication journey, which included self-doubt and little triumphs. I hope this helps if you want to publish.

It was originally a Medium post.

How did I know to develop a coding interview book? Years ago, I posted on Medium.

Six steps to ace a coding interview Inhale. blog.devgenius.io

This story got a lot of attention and still gets a lot of daily traffic. It indicates this domain's value.

Converted the Medium article into an ebook

The Medium post contains strong bullet points, but it is missing the “flesh”. How to use these strategies in coding interviews, for example. I filled in the blanks and made a book.

I made the book cover for free. It's tidy.

Shared the article with my close friends on my social network WeChat.

I shared the book on Wechat's Friend Circle (朋友圈) after publishing it on Gumroad. Many friends enjoyed my post. It definitely triggered endorphins.

In Friend Circle, I presented a 100% off voucher. No one downloaded the book. Endorphins made my heart sink.

Several days later, my Apple Watch received a Gumroad notification. A friend downloaded it. I majored in finance, he subsequently said. My brother-in-law can get it? He downloaded it to cheer me up.

I liked him, but was disappointed that he didn't read it.

The Tipping Point: Reddit's Free Giving

I trusted the book. It's based on years of interviewing. I felt it might help job-hunting college students. If nobody wants it, it can still have value.

I posted the book's link on /r/leetcode. I told them to DM me for a free promo code.

Momentum shifted everything. Gumroad notifications kept coming when I was out with family. Following orders.

As promised, I sent DMs a promo code. Some consumers ordered without asking for a promo code. Some readers finished the book and posted reviews.

My book was finally on track.

A 5-Star Review, plus More

A reader afterwards DMed me and inquired if I had another book on system design interviewing. I said that was a good idea, but I didn't have one. If you write one, I'll be your first reader.

Later, I asked for a book review. Yes, but how? That's when I learned readers' reviews weren't easy. I built up an email pipeline to solicit customer reviews. Since then, I've gained credibility through ratings.

Learnings

I wouldn't have gotten 100 if I gave up when none of my pals downloaded. Here are some lessons.

Your friends are your allies, but they are not your clients.

Be present where your clients are

Request ratings and testimonials

gain credibility gradually

I did it, so can you. Follow me on Twitter @imgracehuang for my publishing and entrepreneurship adventure.