More on Society & Culture

Will Leitch

3 years ago

Don't treat Elon Musk like Trump.

He’s not the President. Stop treating him like one.

Elon Musk tweeted from Qatar, where he was watching the World Cup Final with Jared Kushner.

Musk's subsequent Tweets were as normal, basic, and bland as anyone's from a World Cup Final: It's depressing to see the world's richest man looking at his phone during a grand ceremony. Rich guy goes to rich guy event didn't seem important.

Before Musk posted his should-I-step-down-at-Twitter poll, CNN ran a long segment asking if it was hypocritical for him to reveal his real-time location after defending his (very dumb) suspension of several journalists for (supposedly) revealing his assassination coordinates by linking to a site that tracks Musks private jet. It was hard to ignore CNN's hypocrisy: It covered Musk as Twitter CEO like President Trump. EVERY TRUMP STORY WAS BASED ON HIM SAYING X, THEN DOING Y. Trump would do something horrific, lie about it, then pretend it was fine, then condemn a political rival who did the same thing, be called hypocritical, and so on. It lasted four years. Exhausting.

It made sense because Trump was the President of the United States. The press's main purpose is to relentlessly cover and question the president.

It's strange to say this out. Twitter isn't America. Elon Musk isn't a president. He maintains a money-losing social media service to harass and mock people he doesn't like. Treating Musk like Trump, as if he should be held accountable like Trump, shows a startling lack of perspective. Some journalists treat Twitter like a country.

The compulsive, desperate way many journalists utilize the site suggests as much. Twitter isn't the town square, despite popular belief. It's a place for obsessives to meet and converse. Journalists say they're breaking news. Their careers depend on it. They can argue it's a public service. Nope. It's a place lonely people go to speak all day. Twitter. So do journalists, Trump, and Musk. Acting as if it has a greater purpose, as if it's impossible to break news without it, or as if the republic is in peril is ludicrous. Only 23% of Americans are on Twitter, while 25% account for 97% of Tweets. I'd think a large portion of that 25% are journalists (or attention addicts) chatting to other journalists. Their loudness makes Twitter seem more important than it is. Nope. It's another stupid website. They were there before Twitter; they will be there after Twitter. It’s just a website. We can all get off it if we want. Most of us aren’t even on it in the first place.

Musk is a website-owner. No world leader. He's not as accountable as Trump was. Musk is cable news's primary character now that Trump isn't (at least for now). Becoming a TV news anchor isn't as significant as being president. Elon Musk isn't as important as we all pretend, and Twitter isn't even close. Twitter is a dumb website, Elon Musk is a rich guy going through a midlife crisis, and cable news is lazy because its leaders thought the entire world was on Twitter and are now freaking out that their playground is being disturbed.

I’ve said before that you need to leave Twitter, now. But even if you’re still on it, we need to stop pretending it matters more than it does. It’s a site for lonely attention addicts, from the man who runs it to the journalists who can’t let go of it. It’s not a town square. It’s not a country. It’s not even a successful website. Let’s stop pretending any of it’s real. It’s not.

Tim Smedley

3 years ago

When Investment in New Energy Surpassed That in Fossil Fuels (Forever)

A worldwide energy crisis might have hampered renewable energy and clean tech investment. Nope.

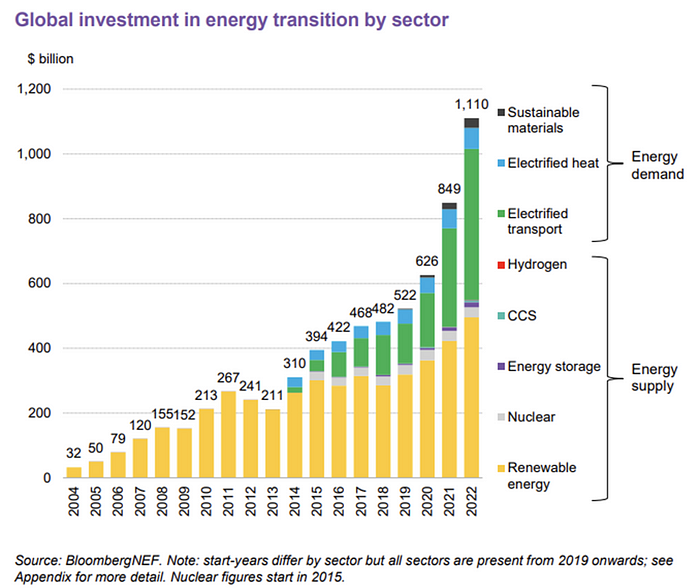

BNEF's 2023 Energy Transition Investment Trends study surprised and encouraged. Global energy transition investment reached $1 trillion for the first time ($1.11t), up 31% from 2021. From 2013, the clean energy transition has come and cannot be reversed.

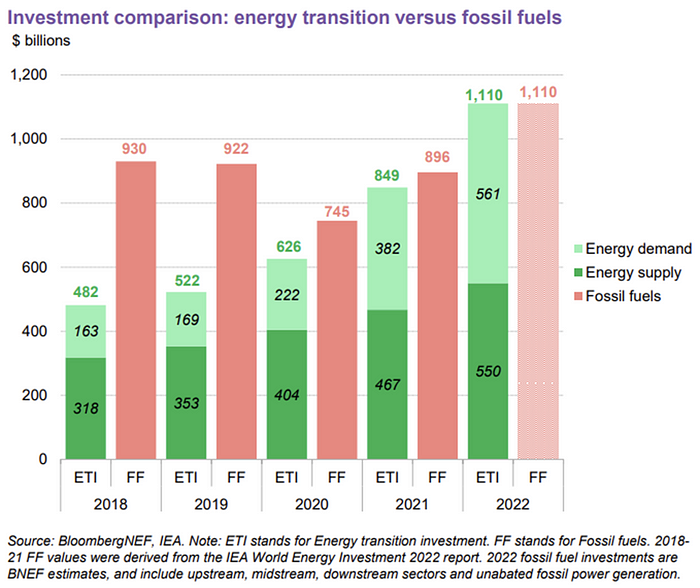

BNEF Head of Global Analysis Albert Cheung said our findings ended the energy crisis's influence on renewable energy deployment. Energy transition investment has reached a record as countries and corporations implement transition strategies. Clean energy investments will soon surpass fossil fuel investments.

The table below indicates the tripping point, which means the energy shift is occuring today.

BNEF calls money invested on clean technology including electric vehicles, heat pumps, hydrogen, and carbon capture energy transition investment. In 2022, electrified heat received $64b and energy storage $15.7b.

Nonetheless, $495b in renewables (up 17%) and $466b in electrified transport (up 54%) account for most of the investment. Hydrogen and carbon capture are tiny despite the fanfare. Hydrogen received the least funding in 2022 at $1.1 billion (0.1%).

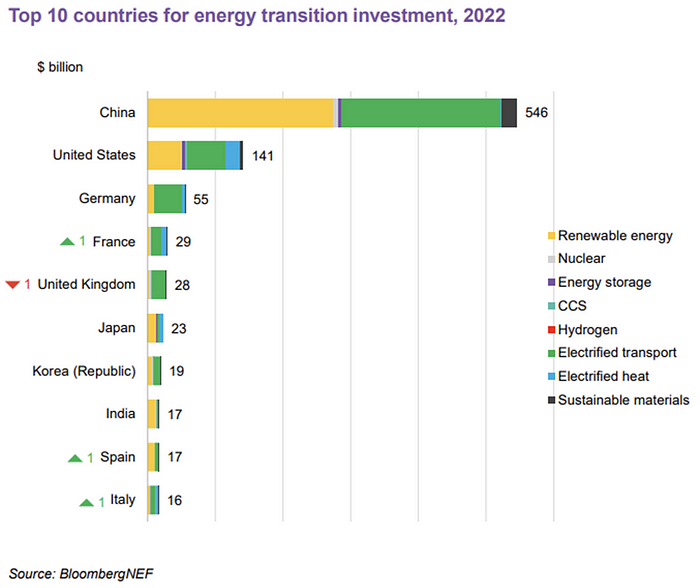

China dominates investment. China spends $546 billion on energy transition, half the global amount. Second, the US total of $141 billion in 2022 was up 11% from 2021. With $180 billion, the EU is unofficially second. China invested 91% in battery technologies.

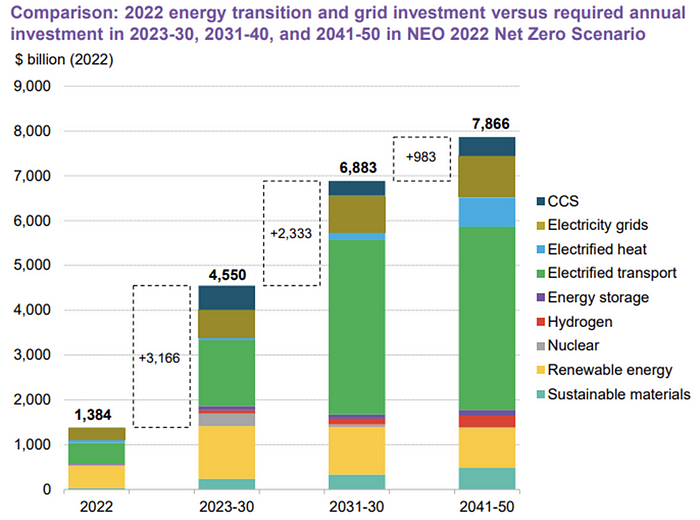

The 2022 transition tipping point is encouraging, but the BNEF research shows how far we must go to get Net Zero. Energy transition investment must average $4.55 trillion between 2023 and 2030—three times the amount spent in 2022—to reach global Net Zero. Investment must be seven times today's record to reach Net Zero by 2050.

BNEF 2023 Energy Transition Investment Trends.

As shown in the graph above, BNEF experts have been using their crystal balls to determine where that investment should go. CCS and hydrogen are still modest components of the picture. Interestingly, they see nuclear almost fading. Active transport advocates like me may have something to say about the massive $4b in electrified transport. If we focus on walkable 15-minute cities, we may need fewer electric automobiles. Though we need more electric trains and buses.

Albert Cheung of BNEF emphasizes the challenge. This week's figures promise short-term job creation and medium-term energy security, but more investment is needed to reach net zero in the long run.

I expect the BNEF Energy Transition Investment Trends report to show clean tech investment outpacing fossil fuels investment every year. Finally saying that is amazing. It's insufficient. The planet must maintain its electric (not gas) pedal. In response to the research, Christina Karapataki, VC at Breakthrough Energy Ventures, a clean tech investment firm, tweeted: Clean energy investment needs to average more than 3x this level, for the remainder of this decade, to get on track for BNEFs Net Zero Scenario. Go!

Isaiah McCall

3 years ago

Is TikTok slowly destroying a new generation?

It's kids' digital crack

TikTok is a destructive social media platform.

The interface shortens attention spans and dopamine receptors.

TikTok shares more data than other apps.

Seeing an endless stream of dancing teens on my glowing box makes me feel like a Blade Runner extra.

TikTok did in one year what MTV, Hollywood, and Warner Music tried to do in 20 years. TikTok has psychotized the two-thirds of society Aldous Huxley said were hypnotizable.

Millions of people, mostly kids, are addicted to learning a new dance, lip-sync, or prank, and those who best dramatize this collective improvisation get likes, comments, and shares.

TikTok is a great app. So what?

The Commercial Magnifying Glass TikTok made me realize my generation's time was up and the teenage Zoomers were the target.

I told my 14-year-old sister, "Enjoy your time under the commercial magnifying glass."

TikTok sells your every move, gesture, and thought. Data is the new oil. If you tell someone, they'll say, "Yeah, they collect data, but who cares? I have nothing to hide."

It's a George Orwell novel's beginning. Look up Big Brother Award winners to see if TikTok won.

TikTok shares your data more than any other social media app, and where it goes is unclear. TikTok uses third-party trackers to monitor your activity after you leave the app.

Consumers can't see what data is shared or how it will be used. — Genius URL

32.5 percent of Tiktok's users are 10 to 19 and 29.5% are 20 to 29.

TikTok is the greatest digital marketing opportunity in history, and they'll use it to sell you things, track you, and control your thoughts. Any of its users will tell you, "I don't care, I just want to be famous."

TikTok manufactures mental illness

TikTok's effect on dopamine and the brain is absurd. Dopamine controls the brain's pleasure and reward centers. It's like a switch that tells your brain "this feels good, repeat."

Dr. Julie Albright, a digital culture and communication sociologist, said TikTok users are "carried away by dopamine." It's hypnotic, you'll keep watching."

TikTok constantly releases dopamine. A guy on TikTok recently said he didn't like books because they were slow and boring.

The US didn't ban Tiktok.

Biden and Trump agree on bad things. Both agree that TikTok threatens national security and children's mental health.

The Chinese Communist Party owns and operates TikTok, but that's not its only problem.

There’s borderline child porn on TikTok

It's unsafe for children and violated COPPA.

It's also Chinese spyware. I'm not a Trump supporter, but I was glad he wanted TikTok regulated and disappointed when he failed.

Full-on internet censorship is rare outside of China, so banning it may be excessive. US should regulate TikTok more.

We must reject a low-quality present for a high-quality future.

TikTok vs YouTube

People got mad when I wrote about YouTube's death.

They didn't like when I said TikTok was YouTube's first real challenger.

Indeed. TikTok is the fastest-growing social network. In three years, the Chinese social media app TikTok has gained over 1 billion active users. In the first quarter of 2020, it had the most downloads of any app in a single quarter.

TikTok is the perfect social media app in many ways. It's brief and direct.

Can you believe they had a YouTube vs TikTok boxing match? We are doomed as a species.

YouTube hosts my favorite videos. That’s why I use it. That’s why you use it. New users expect more. They want something quicker, more addictive.

TikTok's impact on other social media platforms frustrates me. YouTube copied TikTok to compete.

It's all about short, addictive content.

I'll admit I'm probably wrong about TikTok. My friend says his feed is full of videos about food, cute animals, book recommendations, and hot lesbians.

Whatever.

TikTok makes us bad

TikTok is the opposite of what the Ancient Greeks believed about wisdom.

It encourages people to be fake. It's like a never-ending costume party where everyone competes.

It does not mean that Gen Z is doomed.

They could be the saviors of the world for all I know.

TikTok feels like a step towards Mike Judge's "Idiocracy," where the average person is a pleasure-seeking moron.

You might also like

Maddie Wang

3 years ago

Easiest and fastest way to test your startup idea!

Here's the fastest way to validate company concepts.

I squandered a year after dropping out of Stanford designing a product nobody wanted.

But today, I’m at 100k!

Differences:

I was designing a consumer product when I dropped out.

I coded MVP, got 1k users, and got YC interview.

Nice, huh?

WRONG!

Still coding and getting users 12 months later

WOULD PEOPLE PAY FOR IT? was the riskiest assumption I hadn't tested.

When asked why I didn't verify payment, I said,

Not-ready products. Now, nobody cares. The website needs work. Include this. Increase usage…

I feared people would say no.

After 1 year of pushing it off, my team told me they were really worried about the Business Model. Then I asked my audience if they'd buy my product.

So?

No, overwhelmingly.

I felt like I wasted a year building a product no one would buy.

Founders Cafe was the opposite.

Before building anything, I requested payment.

40 founders were interviewed.

Then we emailed Stanford, YC, and other top founders, asking them to join our community.

BOOM! 10/12 paid!

Without building anything, in 1 day I validated my startup's riskiest assumption. NOT 1 year.

Asking people to pay is one of the scariest things.

I understand.

I asked Stanford queer women to pay before joining my gay sorority.

I was afraid I'd turn them off or no one would pay.

Gay women, like those founders, were in such excruciating pain that they were willing to pay me upfront to help.

You can ask for payment (before you build) to see if people have the burning pain. Then they'll pay!

Examples from Founders Cafe members:

😮 Using a fake landing page, a college dropout tested a product. Paying! He built it and made $3m!

😮 YC solo founder faked a Powerpoint demo. 5 Enterprise paid LOIs. $1.5m raised, built, and in YC!

😮 A Harvard founder can convert Figma to React. 1 day, 10 customers. Built a tool to automate Figma -> React after manually fulfilling requests. 1m+

Bad example:

😭 Stanford Dropout Spends 1 Year Building Product Without Payment Validation

Some people build for a year and then get paying customers.

What I'm sharing is my experience and what Founders Cafe members have told me about validating startup ideas.

Don't waste a year like I did.

After my first startup failed, I planned to re-enroll at Stanford/work at Facebook.

After people paid, I quit for good.

I've hit $100k!

Hope this inspires you to request upfront payment! It'll change your life

Startup Journal

3 years ago

The Top 14 Software Business Ideas That Are Sure To Succeed in 2023

Software can change any company.

Software is becoming essential. Everyone should consider how software affects their lives and others'.

Software on your phone, tablet, or computer offers many new options. We're experts in enough ways now.

Software Business Ideas will be popular by 2023.

ERP Programs

ERP software meets rising demand.

ERP solutions automate and monitor tasks that large organizations, businesses, and even schools would struggle to do manually.

ERP software could reach $49 billion by 2024.

CRM Program

CRM software is a must-have for any customer-focused business.

Having an open mind about your business services and products allows you to change platforms.

Another company may only want your CRM service.

Medical software

Healthcare facilities need reliable, easy-to-use software.

EHRs, MDDBs, E-Prescribing, and more are software options.

The global medical software market could reach $11 billion by 2025, and mobile medical apps may follow.

Presentation Software in the Cloud

SaaS presentation tools are great.

They're easy to use, comprehensive, and full of traditional Software features.

In today's cloud-based world, these solutions make life easier for people. We don't know about you, but we like it.

Software for Project Management

People began working remotely without signs or warnings before the 2020 COVID-19 pandemic.

Many organizations found it difficult to track projects and set deadlines.

With PMP software tools, teams can manage remote units and collaborate effectively.

App for Blockchain-Based Invoicing

This advanced billing and invoicing solution is for businesses and freelancers.

These blockchain-based apps can calculate taxes, manage debts, and manage transactions.

Intelligent contracts help blockchain track transactions more efficiently. It speeds up and improves invoice generation.

Software for Business Communications

Internal business messaging is tricky.

Top business software tools for communication can share files, collaborate on documents, host video conferences, and more.

Payroll Automation System

Software development also includes developing an automated payroll system.

These software systems reduce manual tasks for timely employee payments.

These tools help enterprise clients calculate total wages quickly, simplify tax calculations, improve record-keeping, and support better financial planning.

System for Detecting Data Leaks

Both businesses and individuals value data highly. Yahoo's data breach is dangerous because of this.

This area of software development can help people protect their data.

You can design an advanced data loss prevention system.

AI-based Retail System

AI-powered shopping systems are popular. The systems analyze customers' search and purchase patterns and store history and are equipped with a keyword database.

These systems offer many customers pre-loaded products.

AI-based shopping algorithms also help users make purchases.

Software for Detecting Plagiarism

Software can help ensure your projects are original and not plagiarized.

These tools detect plagiarized content that Google, media, and educational institutions don't like.

Software for Converting Audio to Text

Machine Learning converts speech to text automatically.

These programs can quickly transcribe cloud-based files.

Software for daily horoscopes

Daily and monthly horoscopes will continue to be popular.

Software platforms that can predict forecasts, calculate birth charts, and other astrology resources are good business ideas.

E-learning Programs

Traditional study methods are losing popularity as virtual schools proliferate and physical space shrinks.

Khan Academy online courses are the best way to keep learning.

Online education portals can boost your learning. If you want to start a tech startup, consider creating an e-learning program.

Conclusion

Software is booming. There's never been a better time to start a software development business, with so many people using computers and smartphones. This article lists eight business ideas for 2023. Consider these ideas if you're just starting out or looking to expand.

Bart Krawczyk

3 years ago

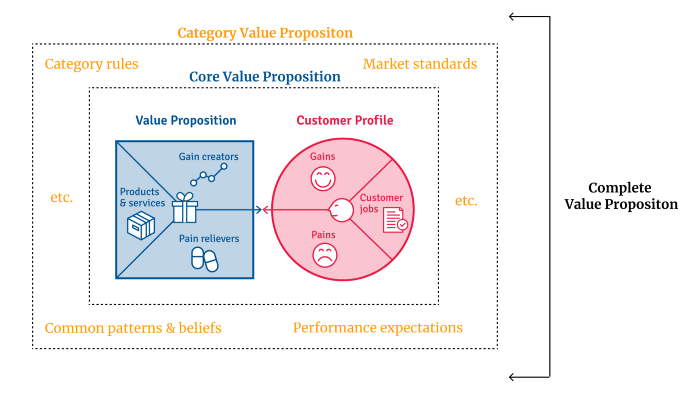

Understanding several Value Proposition kinds will help you create better goods.

Fixing problems isn't enough.

Numerous articles and how-to guides on value propositions focus on fixing consumer concerns.

Contrary to popular opinion, addressing customer pain rarely suffices. Win your market category too.

Core Value Statement

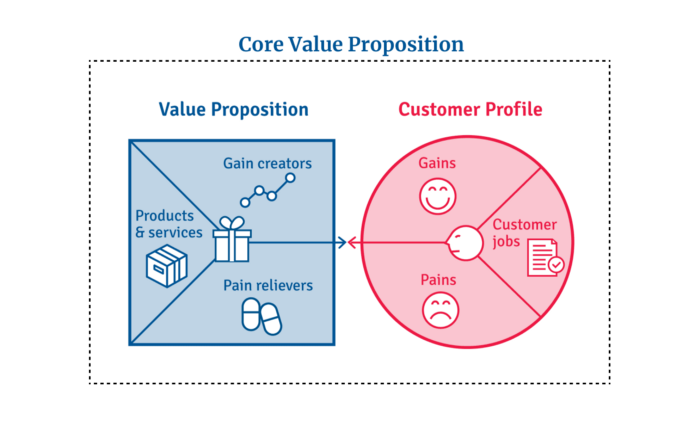

Value proposition usually means a product's main value.

Its how your product solves client problems. The product's core.

Answering these questions creates a relevant core value proposition:

What tasks is your customer trying to complete? (Jobs for clients)

How much discomfort do they feel while they perform this? (pains)

What would they like to see improved or changed? (gains)

After that, you create products and services that alleviate those pains and give value to clients.

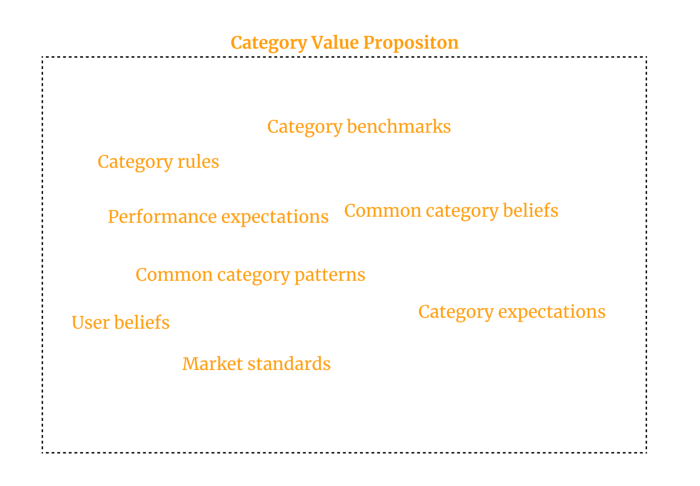

Value Proposition by Category

Your product belongs to a market category and must follow its regulations, regardless of its value proposition.

Creating a new market category is challenging. Fitting into customers' product perceptions is usually better than trying to change them.

New product users simplify market categories. Products are labeled.

Your product will likely be associated with a collection of products people already use.

Example: IT experts will use your communication and management app.

If your target clients think it's an advanced mail software, they'll compare it to others and expect things like:

comprehensive calendar

spam detectors

adequate storage space

list of contacts

etc.

If your target users view your product as a task management app, things change. You can survive without a contact list, but not status management.

Find out what your customers compare your product to and if it fits your value offer. If so, adapt your product plan to dominate this market. If not, try different value propositions and messaging to put the product in the right context.

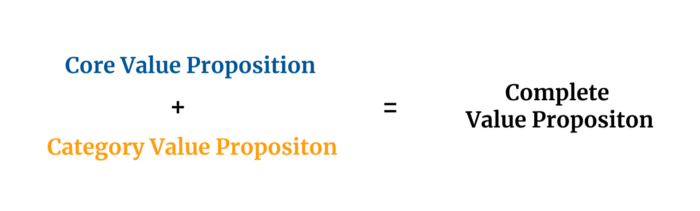

Finished Value Proposition

A comprehensive value proposition is when your solution addresses user problems and wins its market category.

Addressing simply the primary value proposition may produce a valuable and original product, but it may struggle to cross the chasm into the mainstream market. Meeting expectations is easier than changing views.

Without a unique value proposition, you will drown in the red sea of competition.

To conclude:

Find out who your target consumer is and what their demands and problems are.

To meet these needs, develop and test a primary value proposition.

Speak with your most devoted customers. Recognize the alternatives they use to compare you against and the market segment they place you in.

Recognize the requirements and expectations of the market category.

To meet or surpass category standards, modify your goods.

Great products solve client problems and win their category.