More on Entrepreneurship/Creators

Jerry Keszka

3 years ago

10 Crazy Useful Free Websites No One Told You About But You Needed

The internet is a massive information resource. With so much out there, it's easy to overlook useful websites. Here are ten essential websites you may not have known about.

1. Companies.tools

Companies.tools shows what successful startups use. The website offers a curated selection of design, research, coding, support, and feedback resources. Whether you need the latest app development platform or the best customer feedback tool, Companies.tools has it.

2. Excel Formula Bot

Excel Formula Bot can help if you forget a formula. Formula Bot uses AI to convert text instructions into Excel formulas, so you don't have to remember them.

Just tell the Bot what to do, and it will do it. Excel Formula Bot can calculate sales tax and vacation days. When you're stuck, let the Bot help.

3. TypeLit

TypeLit helps you improve your typing abilities while reading great literature.

TypeLit.io lets you type any book or dozens of preset classics. TypeLit provides real-time feedback on accuracy and speed.

Goals and progress can be tracked. Why not improve your typing and learn great literature with TypeLit?

4. CalmCalendar

Finding a meeting time that works for everyone is difficult. Personal and business calendars might be difficult to coordinate.

Synchronize your two calendars to save time and avoid problems. You may avoid searching through many calendars for conflicts and keep your personal information secret. Having one source of truth for personal and work occasions will help you never miss another appointment.

https://calmcalendar.com/

5. myNoise

myNoise makes the outside world quieter. myNoise is the right noise for a noisy office or busy street.

If you can't locate the right noise, make it. MyNoise unlocks the world. Shut out distractions. Thank your ears.

6. Synthesia

Professional videos require directors, filmmakers, editors, and animators. Now, thanks to AI, you can generate high-quality videos without video editing experience.

AI avatars are the key. You can design a personalized avatar using web-based software like synthesia.io. Its avatars can lip-sync in over 60 languages, so you can make videos for a worldwide audience. There's an AI avatar for every video goal.

Not free. Amazing service, though.

7. Cleanup.pictures

Have you shot a wonderful photo just to notice something in the background? You may have a beautiful headshot but wish to erase an imperfection.

Cleanup.pictures removes unwanted objects from photos. Select an object, and its algorithms will erase it.

Cleanup.pictures can help you obtain the ideal shot every time. Next time you take images, let Cleanup.pictures fix any flaws.

8. PDF24 Tools

Editing a PDF can be a pain. Most of us don't know Adobe Acrobat's functionalities. Why buy something you'll rarely use? Better options exist.

PDF24 is an online PDF editor that's free and subscription-free. Rotate, merge, split, compress, and convert PDFs in your browser. PDF24 makes document signing easy.

Upload your document, sign it (or generate a digital signature), and download it. It's easy and free. PDF24 is a free alternative to pricey PDF editing software.

9. Class Central

Finding online classes is now much easier. Class Central lists classes from Harvard, Stanford, Coursera, Udemy, Google, Amazon, and others in one spot.

Whether you want to acquire a new skill or increase your knowledge, you'll find something. New courses bring variety.

10. Rome2rio

Foreign travel offers countless transport alternatives. How do you get from A to B? It’s easy!

Rome2rio will show you the best method to get there, including which mode of transport is ideal.

Plane

Car

Train

Bus

Ferry

Driving

Shared bikes

Walking

Do you know any free, useful websites?

Bastian Hasslinger

3 years ago

Before 2021, most startups had excessive valuations. It is currently causing issues.

Higher startup valuations are often favorable for all parties. High valuations show a business's potential. New customers and talent are attracted. They earn respect.

Everyone benefits if a company's valuation rises.

Founders and investors have always been incentivized to overestimate a company's value.

Post-money valuations were inflated by 2021 market expectations and the valuation model's mechanisms.

Founders must understand both levers to handle a normalizing market.

2021, the year of miracles

2021 must've seemed miraculous to entrepreneurs, employees, and VCs. Valuations rose, and funding resumed after the initial caution of the Covid-19 pandemic.

In 2021, VC investments increased from $335B to $643B. There were 518 new unicorns worldwide, up from 134 in 2020, and 951 US IPOs, up from 431.

Things can change quickly, as 2020-21 showed.

Rising interest rates, geopolitical developments, and normalizing technology conditions have driven down share prices and tech company market caps in 2022. Zoom, the poster child of early lockdown success, is down 37% since January 1.

Once-inflated valuations can become a problem in a normalizing market, especially for founders, employees, and early investors.

The reason why startups are always overvalued

To see why inflated valuations are a problem, consider one of its causes.

Private company values only fluctuate following a new investment round, unlike publicly-traded corporations. The startup's new value is calculated simply:

(Latest round share price) x (total number of company shares)

This is the industry standard Post-Money Valuation model.

Let's illustrate how it works with an example. If a VC invests $10M for 1M shares (at $10/share), and the company has 10M shares after the round, its Post-Money Valuation is $100M ($10/share x 10M shares).
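The arithmetic in this example can be checked with a few lines of Python. This is only a sketch of the formula as stated above; the function and variable names are made up for illustration.

```python
def post_money_valuation(price_per_share: float, total_shares: int) -> float:
    """Post-Money Valuation = latest round share price x total number of shares."""
    return price_per_share * total_shares


# Numbers from the example above.
investment = 10_000_000                 # the VC invests $10M...
new_shares = 1_000_000                  # ...for 1M newly issued shares
total_shares_after_round = 10_000_000   # shares outstanding after the round

price_per_share = investment / new_shares
valuation = post_money_valuation(price_per_share, total_shares_after_round)
investor_stake = new_shares / total_shares_after_round

print(f"Price per share: ${price_per_share:,.2f}")
print(f"Post-Money Valuation: ${valuation:,.0f}")
print(f"New investor's stake: {investor_stake:.0%}")
```

Note that the formula prices every one of the 10M shares at the $10 the newest investor paid, regardless of share class.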

This approach might seem like the most natural way to assess a business, but the model often unintentionally overstates the underlying value of the company even if the share price paid by the investor is fair. All shares aren't equal.

New investors in a company will always try to minimize their downside risk, or the amount they lose if things go wrong. They will negotiate better terms for their shares and, in return, are willing to pay a premium.

How the value of a struggling SpaceX increased

SpaceX's 2008 Series D is an example. Despite the financial crisis and unsuccessful rocket launches, the company's Post-Money Valuation was 36% higher after the investment round. Why?

Series D SpaceX shares were protected. In case of liquidation, Series D investors were guaranteed a 2x return before other shareholders.

Due to downside protection, investors were willing to pay a higher price for this new share class.

The Post-Money Valuation model overpriced SpaceX because it viewed all the shares as equal (they weren't).

Why entrepreneurs, workers, and early investors stand to lose the most

Post-Money Valuation is an effective and sufficient method for assessing a startup's valuation, despite not taking share class disparities into consideration.

In a robust market, where the firm valuation will certainly expand with the next fundraising round or exit, the inflated value is of little significance.

Fairness endures. If a company exits at a higher valuation, each stakeholder will receive a proportional distribution (e.g., a 5% stake in a $100M company yields $5M).

SpaceX's inherent overvaluation was never a problem. Had it been sold for less than its Post-Money Valuation, some shareholders, including founders, staff, and early investors, would have seen the value of their stakes drop.
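To see how downside protection shifts money between share classes, here is a rough Python sketch of a two-class payout. All figures are hypothetical and are not SpaceX's actual terms: it assumes one protected investor who paid $10M for 1M shares with a 2x liquidation preference, 9M ordinary shares held by everyone else, and that the protected investor simply takes whichever is larger, the preference or a pro-rata share.

```python
def distribute_exit(exit_value, invested, multiple, protected_shares, ordinary_shares):
    """Split exit proceeds between a protected share class and ordinary shares.

    Simplifying assumption: the protected investor takes the larger of
    (a) the guaranteed multiple of the amount invested, capped at the exit value, or
    (b) a plain pro-rata share of the proceeds.
    """
    total_shares = protected_shares + ordinary_shares
    pro_rata = exit_value * protected_shares / total_shares
    preference = min(exit_value, invested * multiple)
    protected_payout = max(pro_rata, preference)
    ordinary_payout = exit_value - protected_payout
    return protected_payout, ordinary_payout


# Hypothetical round: $10M for 1M shares, 10M shares in total,
# so the Post-Money Valuation is $100M.
for exit_value in (300_000_000, 50_000_000, 15_000_000):
    protected, ordinary = distribute_exit(
        exit_value,
        invested=10_000_000,
        multiple=2,
        protected_shares=1_000_000,
        ordinary_shares=9_000_000,
    )
    print(f"Exit ${exit_value:,}: protected ${protected:,.0f}, ordinary ${ordinary:,.0f}")
```

Under these assumptions, an exit above the valuation is split proportionally (10% and 90%). In a $50M exit, the ordinary shareholders own 90% of the shares but receive only 60% of the proceeds, and in a $15M exit they receive nothing.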

The unforgiving world of 2022

In 2022, founders, employees, and investors who benefited from inflated values will face below-valuation exits and down-rounds.

For them, 2021 will be a curse, not a blessing.

Some tech giants are worried. Klarna's valuation fell from $45B (Oct 21) to $30B (Jun 22), Canva's from $40B to $27B, and Gopuff's from $17B to $8.3B.

Companies such as Shazam and Blue Apron had to exit or IPO at a lower price. Premium share classes are protected, while others receive less. The same goes for bankruptcies.

Those who continue at lower valuations will lose reputation and talent. When their value declines by half, generous employee stock options become less enticing, and their ability to return anything is questioned.

What can we infer about the present situation?

Techniques that boost your company's value or hold off a normalizing market are fiction.

The current situation is a painful reminder for entrepreneurs and a crucial lesson for future firms.

The devastating market fall of the previous six months has taught us one thing:

Keep in mind that any valuation is speculative. A startup's Post-Money Valuation is a highly simplified approximation of its true value, particularly in the early phases when it lacks significant income or a cutting-edge product. It is merely a projection of the future and a hypothetical measure. Until it is realized through an exit, a valuation is nothing more than a number on paper.

Assume the value of your company is lower than it was in the past. Your previous valuation might not be accurate now due to substantial changes in the startup financing markets. There is little reason to think that your company's value will remain the same given the 50%+ decline in many newly listed IT companies. Recognize how the market situation is changing and use caution.

Recognize the importance of the stake you hold. Each share class has its own value. Know which share class you own and how additional contractual provisions affect the market value of your security. Metrick and Yasuda (Yale & UC) and Gornall and Strebulaev (Stanford) have provided frameworks for understanding the terms that affect investors' cash-flow rights upon exit. With them, you will be able to evaluate your firm more accurately and determine the worth of each share class.

Be wary of approving excessively protective share terms.

Consider the trade-offs when negotiating subsequent rounds. Accepting punitive contractual terms might at first seem like a smart way to uphold your inflated valuation, but you should proceed with caution. Such provisions ALWAYS result in misaligned shareholders, with common shareholders (such as you and your staff) at the bottom of the list.

Maddie Wang

3 years ago

Easiest and fastest way to test your startup idea!

Here's the fastest way to validate company concepts.

I squandered a year after dropping out of Stanford designing a product nobody wanted.

But today, I’m at 100k!

Differences:

I was designing a consumer product when I dropped out.

I coded an MVP, got 1k users, and got a YC interview.

Nice, huh?

WRONG!

Twelve months later, I was still coding and chasing users.

WOULD PEOPLE PAY FOR IT? was the riskiest assumption I hadn't tested.

When asked why I hadn't tested whether people would pay, I said:

The product isn't ready. Nobody cares right now. The website needs work. We need to add this. We need more usage…

I feared people would say no.

After 1 year of pushing it off, my team told me they were really worried about the Business Model. Then I asked my audience if they'd buy my product.

So?

No, overwhelmingly.

I felt like I wasted a year building a product no one would buy.

Founders Cafe was the opposite.

Before building anything, I requested payment.

40 founders were interviewed.

Then we emailed Stanford, YC, and other top founders, asking them to join our community.

BOOM! 10/12 paid!

Without building anything, in 1 day I validated my startup's riskiest assumption. NOT 1 year.

Asking people to pay is one of the scariest things.

I understand.

I asked Stanford queer women to pay before joining my gay sorority.

I was afraid I'd turn them off or no one would pay.

Gay women, like those founders, were in such excruciating pain that they were willing to pay me upfront to help.

You can ask for payment (before you build) to see if people have the burning pain. Then they'll pay!

Examples from Founders Cafe members:

😮 Using a fake landing page, a college dropout tested a product. Paying! He built it and made $3m!

😮 YC solo founder faked a Powerpoint demo. 5 Enterprise paid LOIs. $1.5m raised, built, and in YC!

😮 A Harvard founder offered to convert Figma to React. 10 customers in 1 day. Built a tool to automate Figma -> React after manually fulfilling requests. 1m+

Bad example:

😭 Stanford Dropout Spends 1 Year Building Product Without Payment Validation

Some people build for a year and then get paying customers.

What I'm sharing is my experience and what Founders Cafe members have told me about validating startup ideas.

Don't waste a year like I did.

After my first startup failed, I planned to re-enroll at Stanford/work at Facebook.

After people paid, I quit for good.

I've hit $100k!

Hope this inspires you to request upfront payment! It'll change your life

You might also like

nft now

3 years ago

Instagram NFTs Are Here… How does this affect artists?

Instagram (IG) is officially joining the NFT space. With the debut of new in-app NFT features, influential creators can interact with blockchain tech on the social media platform.

Meta unveiled intentions for an Instagram NFT marketplace in March, but these latest capabilities focus more on content sharing than commerce. And why shouldn’t they? IG's entry into the NFT market is overdue, given that Twitter and Discord are NFT hotspots.

The NFT marketplace/Web3 social media race has continued to expand, with the expected Coinbase NFT Beta now live and blazing a trail through the NFT ecosystem.

IG's focus is on visual art. It's unlike any NFT marketplace or platform. IG NFTs and artists: what's the deal? Let’s take a look.

What are Instagram’s NFT features anyways?

Not everyone has access to Instagram's new features yet. For now, 16 artists, NFT creators, and collectors can post NFTs on IG by connecting third-party digital wallets (like Rainbow or MetaMask) in-app. IG doesn't charge to publish or share digital collectibles.

NFTs displayed on the app have a "shimmer" visual effect. NFT posts also have a "digital collectible" badge that lists metadata such as the creator and/or owner, the platform it was created on, a brief description, and a blockchain identifier.

Meta's social media NFTs have launched on Instagram, but the company is also preparing to roll out digital collectibles on Facebook, with more on the way for IG. Currently, only Ethereum and Polygon are supported, but Flow and Solana will be added soon.

How will artists use these new features?

Artists are publishing NFTs they created or own on IG by linking third-party digital wallets. These features have no NFT trading built in, but are meant to let creators share NFTs with their IG audiences.

Creators, like IG-native aerial/street photographer Natalie Amrossi (@misshattan), are discovering novel uses for IG NFTs.

Amrossi chose to not only upload her own NFTs but also encourage other artists in the field. "That's the beauty of connecting your wallet and sharing NFTs. It's not just what you make, but also what you collect."

Amrossi has been producing and posting Instagram art for years. With IG's NFT features, she can understand Instagram's importance in supporting artists.

Web2 offered Amrossi the tools to become an artist and make a living. "Before 'influencer' existed, I was just making art. Instagram helped me reach so many individuals and brands, giving me a living."

Even artists without millions of viewers are encouraged to share NFTs on IG. Wilson, a relatively new name in the NFT space, seems to have already gone above and beyond the scope of these new IG features. By releasing "Losing My Mind" via IG NFT posts, she has worked around the lack of IG NFT commerce by using her network to market her multi-piece collection.

"Losing My Mind" is a long-running photo series. Wilson was preparing to release it as NFTs before IG approached her, so it was a perfect match.

Wilson says the series is about Black feminine figures and media depiction. Respectable effort, given POC artists have been underrepresented in NFT so far.

“Over the past year, I've had mental health concerns that made my emotions so severe it was impossible to function in daily life, therefore that prompted this photo series. Every Wednesday and Friday for three weeks, I'll release a new Meta photo for sale.”

Wilson hopes these new IG capabilities will help develop a connection between the NFT community and other internet subcultures that thrive on Instagram.

“NFTs can look scary as an outsider, but seeing them on your daily IG feed makes it less foreign,” adds Wilson. “I think Instagram might become a hub for NFT aficionados, making them more accessible to artists and collectors.”

What does it all mean for the NFT space?

Meta's NFT and metaverse activities will continue to impact Instagram's NFT ecosystem. Many think it will be for the better, as NFT scams on IG are another problem hurting the NFT industry.

IG's new NFT features seem similar to Twitter's PFP NFT verifications, but Instagram's tools should help cut down on scams as users can now verify the creation and ownership of whole NFT collections included in IG posts.

Given the number of visual artists and NFT creators on IG, it might become another hub for NFT fans, as Wilson noted. If this happens, it raises questions about Instagram success. Will artists be incentivized to distribute NFTs? Or will those with a large fanbase dominate?

Elise Swopes (@swopes) believes these new features should benefit smaller artists. Swopes was one of the first profiles added to Instagram's original suggested user list in 2012.

Swopes says she wants IG to be a magnet for discovery and understands the value of NFT artists and creators.

"I'd love to see IG become a focus of discovery for everyone, not just the Beeples and Apes and PFPs. That's terrific for them, but [IG NFT features] are more about using new technology to promote emerging artists," Swopes added.

“Especially music artists. It's everywhere. Dancers, writers, painters, sculptors, musicians. My element isn't just for digital artists; it can be anything. I'm delighted to witness people's creativity."

Swopes, Wilson, and Amrossi all believe IG's new features can help smaller artists. It remains to be seen how these new features will affect the NFT ecosystem once unlocked for the rest of the IG NFT community, but we will likely see more social media NFT integrations in the months and years ahead.


Ivona Hirschi

3 years ago

7 LinkedIn Tips That Will Help in Audience Growth

In 8 months, I doubled my audience with them.

LinkedIn's buzz isn't over.

People dream of social proof every day. They want clients, interesting jobs, and field recognition.

LinkedIn coaches will benefit greatly. Sell learning? Probably. Can you use it?

Consistency has been key in my eight months of studying LinkedIn, but I'll also share seven of my tips. They took me from 700 to 4,500 followers.

1. Communication, communication, communication

LinkedIn is a social network. I like to think of it as a cafe. Here, you can share your thoughts, meet friends, and discuss life and work.

Do not treat LinkedIn as if it were a board for your post-its.

More socializing improves relationships. It's about people, like any network.

Consider interactions. Three main areas:

Respond to comments left on your posts.

Comment on other people's posts.

Start and maintain conversations through direct messages.

Engage people. You spend too much time on Facebook if you only read your wall. Keeping in touch and having meaningful conversations helps build your network.

Every day, start a new conversation to make new friends.

2. Stick with those you admire

Interact thoughtfully.

Choose your contacts. "Build your tribe," as the saying goes. Network respectfully.

I only had past colleagues, family, and friends in my network at the start of this year. Not business-friendly. Since then, I've sought out people I admire or can learn from.

Finding a few will help you, as they connect you to their networks. Friendships can lead to clients.

Don't underestimate network power. Cafe-style. Meet people at each table. But avoid people who sell SEO, web redesign, VAs, mysterious job opportunities, etc.

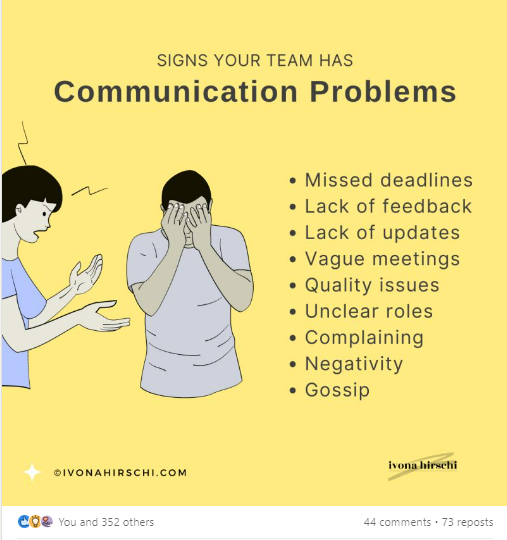

3. Share eye-catching infographics

Daily infographics flood LinkedIn. Visuals are popular. Use Canva's free templates if you can't draw them.

Last week's:

It's a fun way to visualize your topic.

You can repost and comment on infographics. Involve your network. I prefer making my own because I build my brand around certain designs.

My friend posted infographics consistently for four months and grew his network to 30,000.

If you start by reposting, credit the authors. Otherwise, you are stealing someone's work.

4. Invite some friends over.

LinkedIn alone can be lonely. Having a few friends who support your work daily will boost your growth.

I was lucky to be invited to a group of networkers. We share knowledge and advice.

Having a few regulars who can discuss your posts is helpful. It's artificial, but it works and engages others.

Consider who you'd support if they were in your shoes.

You can pay for an engagement group, but you risk supporting unrelated people with rubbish posts.

Help each other out.

5. Don't let your feed or algorithm divert you.

LinkedIn's algorithm is magical.

Which time is best? How fast do you need to comment? Which days are best?

Algorithms are overemphasized. Consider the user. No need to worry about the best time.

Remember to spend time on LinkedIn actively. Not passively. That is what Facebook is for.

Surely someone will find a recipe for LinkedIn eventually. Don't try to beat the algorithm yet. Consider your audience.

6. The more personal, the better

Personalization isn't limited to selfies. Share your successes and failures.

The more personality you show, the better.

People relate to others, not theories or quotes. Why should they follow you if everyone posts the same content?

Consider your friends. What's their appeal?

Because they show their work and identity. It's simple. Medium and LinkedIn are your platforms. Find out what works.

You can copy others' hooks and structures. You decide how simple to make it, though.

7. Have fun with various post structures.

I like writing, infographics, videos, and carousels. Because you can:

Repurpose your content!

Out of one blog post I make:

Newsletter

Infographics (positive and negative points of view)

Carousel

Personal stories

Listicle

Create less, but with more variety. Since LinkedIn posts last 24 hours, you can rotate the same topics for weeks without anyone noticing.

Effective!

The final LI snippet to think about

LinkedIn is about consistency. Some say 15 minutes. If you're serious about networking, spend more time there.

The good news is that it is worth it. The bad news is that it takes time.

James White

3 years ago

Ray Dalio suggests reading these three books in 2022.

An inspiring reading list

I'm no billionaire or hedge-fund manager. My bank account doesn't have millions. Ray Dalio's love of reading motivates me to think differently.

Here are some books recommended by Ray Dalio. Each influenced me. Hope they'll help you.

Sapiens by Yuval Noah Harari

Page Count: 512

Rating on Goodreads: 4.39

My favorite nonfiction book.

Sapiens explores human evolution. It explains how Homo sapiens developed from hunter-gatherers into a dominant species. Amazing!

Sapiens will teach you about human history. Yuval Noah Harari has a follow-up book on human evolution.

My favorite book quotes are:

The tendency for luxuries to turn into necessities and give rise to new obligations is one of history's few unbreakable laws.

Happiness is not dependent on material wealth, physical health, or even community. Instead, it depends on how closely subjective expectations and objective circumstances align.

The romantic comparison between today's industry, which obliterates the environment, and our forefathers, who coexisted well with nature, is unfounded. Homo sapiens held the record among all organisms for eradicating the most plant and animal species even before the Industrial Revolution. The unfortunate distinction of being the most lethal species in the history of life belongs to us.

The Power Of Habit by Charles Duhigg

Page Count: 375

Rating on Goodreads: 4.13

Great book: The Power Of Habit. It illustrates why habits are everything. The book explains how healthier habits can improve your life, career, and society.

The Power of Habit rocks. It's a great book on productivity. Its suggestions helped me build healthier behaviors (and drop bad ones).

Read ASAP!

My favorite book quotes are:

Change may not occur quickly or without difficulty. However, almost any behavior may be changed with enough time and effort.

People who exercise begin to eat better and produce more at work. They smoke less and are more patient with friends and family. They claim to feel less anxious and use their credit cards less frequently. A fundamental habit that sparks broad change is exercise.

Habits are strong but also delicate. They may develop independently of our awareness or may be purposefully created. They frequently happen without our consent, but they can be altered by changing their constituent pieces. They have a much greater influence on how we live than we realize; in fact, they are so powerful that they cause our brains to adhere to them above all else, including common sense.

Tribe Of Mentors by Tim Ferriss

Page Count: 561

Rating on Goodreads: 4.06

Unusual book structure. It's worth reading if you want to learn from successful people.

The book is Q&A-style. Tim questions everyone. Each chapter features a different person's life-changing advice. In the book, Pressfield, Willink, Grylls, and Ravikant are interviewed.

Amazing!

My favorite book quotes are:

According to one's courage, life can either get smaller or bigger.

Don't engage in actions that you are aware are immoral. The reputation you have with yourself is all that constitutes self-esteem. Always be aware.

People mistakenly believe that focusing means accepting the task at hand. However, that is in no way what it represents. It entails rejecting the numerous other worthwhile suggestions that exist. You must choose wisely. Actually, I'm just as proud of the things we haven't accomplished as I am of what I have. Saying no to 1,000 things is what innovation is.