Elon Musk’s Rich Life Is a Nightmare

I'm sure you haven't read about Elon's other side.

Elon divorced badly.

Nobody's surprised.

Imagine you're a parent. Someone isn't home year-round. What's next?

That’s what happened to YOLO Elon.

He can do anything. He can intervene in wars, shoot his mouth off, bang anyone he wants, avoid tax, make cool tech, buy anything his ego desires, and live anywhere exotic.

Few know his billionaire backstory. I'll tell you so you don't worship his lifestyle. It’s a cult.

Only his career succeeds. His life is a nightmare otherwise.

Psychopaths' schedule

Elon has said he works 120-hour weeks.

As he told the reporter about his job, he choked up, which was unusual for him.

His crazy workload and lack of sleep forced him to scold innocent Wall Street analysts. Later, he apologized.

In the same interview, he admits he hadn't taken more than a week off since 2001, when he was bedridden with malaria. Elon stays home after a near-death experience.

He's rarely outside.

Elon says he sometimes works 3 or 4 days straight.

He admits his crazy work schedule has cost him time with his kids and friends.

Elon's a slave

Elon's birthday description made him emotional.

Elon worked his entire birthday.

"No friends, nothing," he said, stuttering.

His brother's wedding in Catalonia was 48 hours after his birthday. That meant flying there from Tesla's factory prison.

He arrived two hours before the big moment, barely enough time to eat and change, let alone see his brother.

Elon had to leave after the bouquet was tossed to a crowd of billionaire lovers. He missed his brother's first dance with his wife.

Shocking.

He went straight to Tesla's prison.

The looming health crisis

Elon was asked if overworking affected his health.

Not great. Friends are worried.

Now you know why Elon tweets dumb things. Working so hard has probably caused him mental health issues.

Mental illness removed my reality filter. You do stupid things because you're tired.

Astronauts pelted Elon

Elon's overwork isn't the first time his life has made him emotional.

When asked about Neil Armstrong and Gene Cernan criticizing his SpaceX missions, he got emotional. Elon's heroes.

They're why he started the company, and they mocked his work. In another interview, we see how Elon’s business obsession has knifed him in the heart.

Once you have a company, you must feed, nurse, and care for it, even if it destroys you.

"Yep," Elon says, tearing up.

In the same interview, he's asked how Tesla survived the 2008 recession. Elon stopped the interview because he was crying. When Tesla and SpaceX filed for bankruptcy in 2008, he nearly had a nervous breakdown. He called them his "children."

All the time, he's risking everything.

Jack Raines explains best:

Too much money makes you a slave to your net worth.

Elon's emotions are admirable. It's one of the few times he seems human, not like an alien Cyborg.

Stop idealizing Elon's lifestyle

Building a side business that becomes a billion-dollar unicorn startup is a nightmare.

"Billionaire" means financially wealthy but otherwise broke. A rich life includes more than business and money.

This post is a summary. Read full article here

More on Entrepreneurship/Creators

Ben Chino

3 years ago

100-day SaaS buildout.

We're opening up Maki through a series of Medium posts. We'll describe what Maki is building and how. We'll explain how we built a SaaS in 100 days. This isn't a step-by-step guide to starting a business, but a product philosophy to help you build quickly.

Focus on end-users.

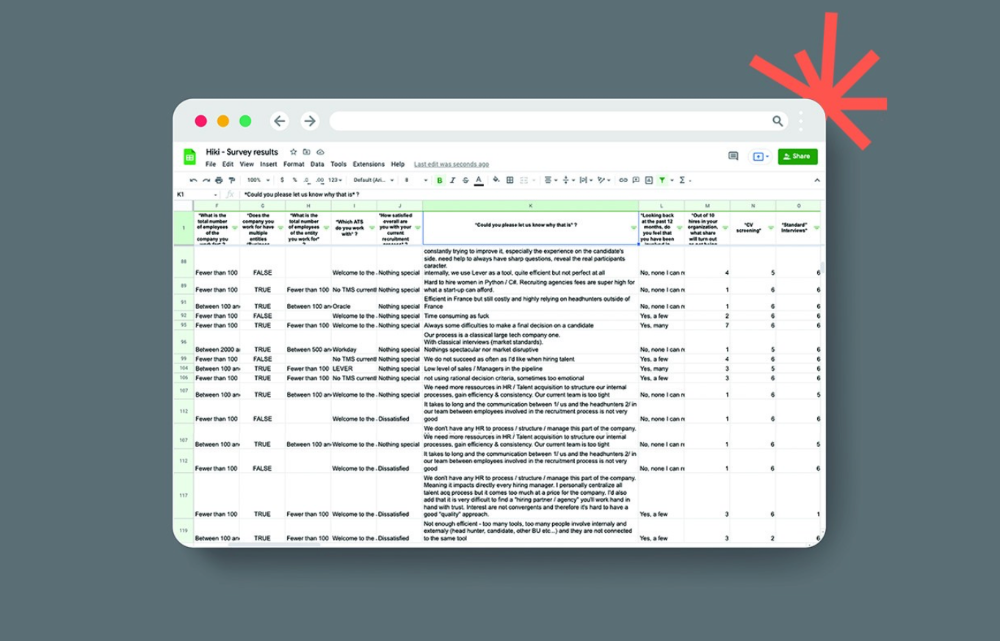

This may seem obvious, but it's important to talk to users first. When we started thinking about Maki, we interviewed 100 HR directors from SMBs, Next40 scale-ups, and major Enterprises to understand their concerns. We initially thought about the future of employment, but most of their worries centered on Recruitment. We don't have a clear recruiting process, it's time-consuming, we recruit clones, we don't support diversity, etc. And as hiring managers, we couldn't help but agree.

Co-create your product with your end-users.

We went to the drawing board, read as many books as possible (here, here, and here), and when we started getting a sense for a solution, we questioned 100 more operational HR specialists to corroborate the idea and get a feel for our potential answer. This confirmed our direction to help hire more objectively and efficiently.

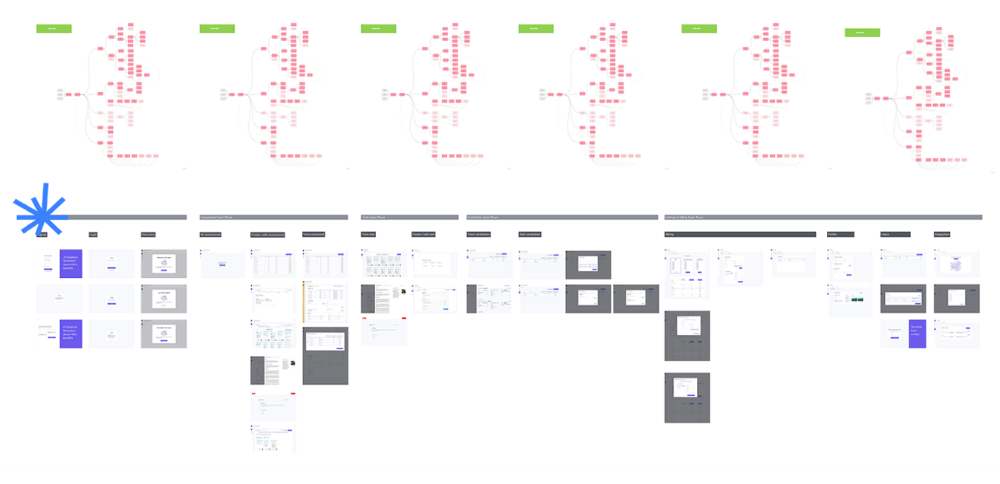

Back to the drawing board, we designed our first flows and screens. We organized sessions with certain survey respondents to show them our early work and get comments. We got great input that helped us build Maki, and we met some consumers. Obsess about users and execute alongside them.

Don’t shoot for the moon, yet. Make pragmatic choices first.

Once we were convinced, we began building. To launch a SaaS in 100 days, we needed an operating principle that allowed us to accelerate while still providing a reliable, secure, scalable experience. We focused on adding value and outsourced everything else. Example:

Concentrate on adding value. Reuse existing bricks.

When determining which technology to use, we looked at our strengths and the future to see what would last. Node.js for backend, React for frontend, both with typescript. We thought this technique would scale well since it would attract more talent and the surrounding mature ecosystem would help us go quicker.

We explored for ways to bootstrap services while setting down strong foundations that might support millions of users. We built our backend services on NestJS so we could extend into microservices later. Hasura, a GraphQL APIs engine, automates Postgres data exposing through a graphQL layer. MUI's ready-to-use components powered our design-system. We used well-maintained open-source projects to speed up certain tasks.

We outsourced important components of our platform (Auth0 for authentication, Stripe for billing, SendGrid for notifications) because, let's face it, we couldn't do better. We choose to host our complete infrastructure (SQL, Cloud run, Logs, Monitoring) on GCP to simplify our work between numerous providers.

Focus on your business, use existing bricks for the rest. For the curious, we'll shortly publish articles detailing each stage.

Most importantly, empower people and step back.

We couldn't have done this without the incredible people who have supported us from the start. Since Powership is one of our key values, we provided our staff the power to make autonomous decisions from day one. Because we believe our firm is its people, we hired smart builders and let them build.

Nicolas left Spendesk to create scalable interfaces using react-router, react-queries, and MUI. JD joined Swile and chose Hasura as our GraphQL engine. Jérôme chose NestJS to build our backend services. Since then, Justin, Ben, Anas, Yann, Benoit, and others have followed suit.

If you consider your team a collective brain, you should let them make decisions instead of directing them what to do. You'll make mistakes, but you'll go faster and learn faster overall.

Invest in great talent and develop a strong culture from the start. Here's how to establish a SaaS in 100 days.

Woo

3 years ago

How To Launch A Business Without Any Risk

> Say Hello To The Lean-Hedge Model

People think starting a business requires significant debt and investment. Like Shark Tank, you need a world-changing idea. I'm not saying to avoid investors or brilliant ideas.

Investing is essential to build a genuinely profitable company. Think Apple or Starbucks.

Entrepreneurship is risky because many people go bankrupt from debt. As starters, we shouldn't do it. Instead, use lean-hedge.

Simply defined, you construct a cash-flow business to hedge against long-term investment-heavy business expenses.

What the “fx!$rench-toast” is the lean-hedge model?

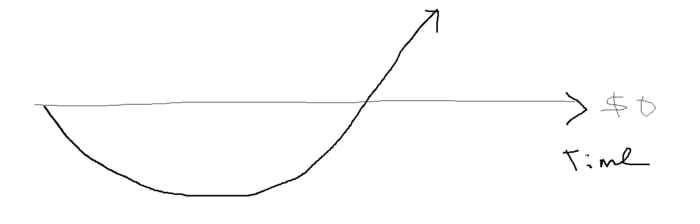

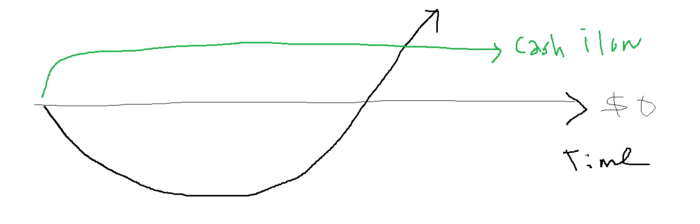

When you start a business, your money should move down, down, down, then up when it becomes profitable.

Many people don't survive the business's initial losses and debt. What if, we created a cash-flow business BEFORE we started our Starbucks to hedge against its initial expenses?

Lean-hedge has two sections. Start a cash-flow business. A cash-flow business takes minimal investment and usually involves sweat and time.

Let’s take a look at some examples:

A Translation company

Personal portfolio website (you make a site then you do cold e-mail marketing)

FREELANCE (UpWork, Fiverr).

Educational business.

Infomarketing. (You design a knowledge-based product. You sell the info).

Online fitness/diet/health coaching ($50-$300/month, calls, training plan)

Amazon e-book publishing. (Medium writers do this)

YouTube, cash-flow channel

A web development agency (I'm a dev, but if you're not, a graphic design agency, etc.) (Sell your time.)

Digital Marketing

Online paralegal (A million lawyers work in the U.S).

Some dropshipping (Organic Tik Tok dropshipping, where you create content to drive traffic to your shopify store instead of spend money on ads).

(Disclaimer: My first two cash-flow enterprises, which were language teaching, failed terribly. My translation firm is now booming because B2B e-mail marketing is easy.)

Crossover occurs. Your long-term business starts earning more money than your cash flow business.

My cash-flow business (freelancing, translation) makes $7k+/month.

I’ve decided to start a slightly more investment-heavy digital marketing agency

Here are the anticipated business's time- and money-intensive investments:

($$$) Top Front-End designer's Figma/UI-UX design (in negotiation)

(Time): A little copywriting (I will do this myself)

($$) Creating an animated webpage with HTML (in negotiation)

Backend Development (Duration) (I'll carry out this myself using Laravel.)

Logo Design ($$)

Logo Intro Video for $

Video Intro (I’ll edit this myself with Premiere Pro)

etc.

Then evaluate product, place, price, and promotion. Consider promotion and pricing.

The lean-hedge model's point is:

Don't gamble. Avoid debt. First create a cash-flow project, then grow it steadily.

Check read my previous posts on “Nightmare Mode” (which teaches you how to make work as interesting as video games) and Why most people can't escape a 9-5 to learn how to develop a cash-flow business.

Alex Mathers

2 years ago

How to Produce Enough for People to Not Neglect You

Internet's fantastic, right?

We've never had a better way to share our creativity.

I can now draw on my iPad and tweet or Instagram it to thousands. I may get some likes.

With such a great, free tool, you're not alone.

Millions more bright-eyed artists are sharing their work online.

The issue is getting innovative work noticed, not sharing it.

In a world where creators want attention, attention is valuable.

We build for attention.

Attention helps us establish a following, make money, get notoriety, and make a difference.

Most of us require attention to stay sane while creating wonderful things.

I know how hard it is to work hard and receive little views.

How do we receive more attention, more often, in a sea of talent?

Advertising and celebrity endorsements are options. These may work temporarily.

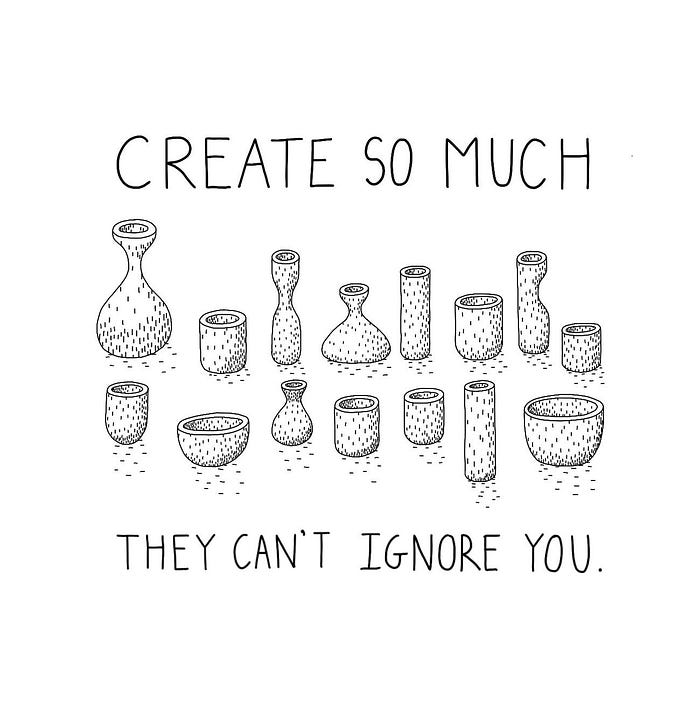

To attract true, organic, and long-term attention, you must create in high quality, high volume, and consistency.

Adapting Steve Martin's Be so amazing, they can't ignore you (with a mention to Dan Norris in his great book Create or Hate for the reminder)

Create a lot.

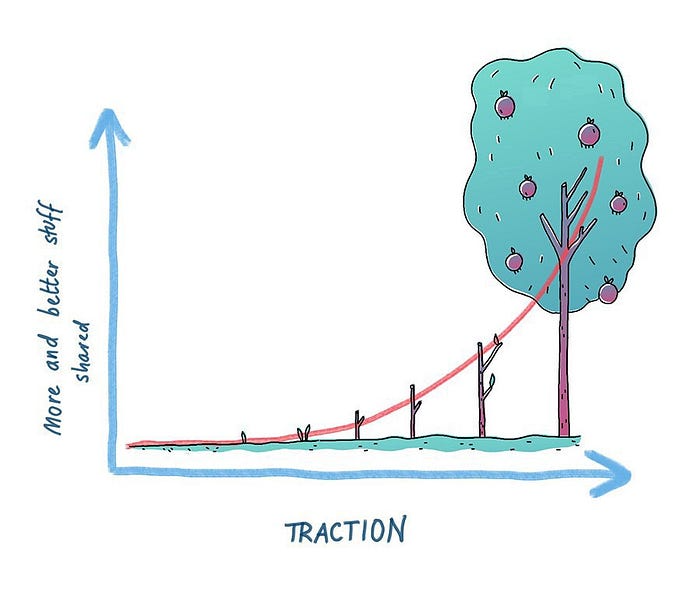

Eventually, your effort will gain traction.

Traction shows your work's influence.

Traction is when your product sells more. Traction is exponential user growth. Your work is shared more.

No matter how good your work is, it will always have minimal impact on the world.

Your work can eventually dent or puncture. Daily, people work to dent.

To achieve this tipping point, you must consistently produce exceptional work.

Expect traction after hundreds of outputs.

Dilbert creator Scott Adams says repetition persuades. If you don't stop, you can persuade practically anyone with anything.

Volume lends believability. So make more.

I worked as an illustrator for at least a year and a half without any recognition. After 150 illustrations on iStockphoto, my work started selling.

With 350 illustrations on iStock, I started getting decent client commissions.

Producing often will improve your craft and draw attention.

It's the only way to succeed. More creation means better results and greater attention.

Austin Kleon says you can improve your skill in relative anonymity before you become famous. Before obtaining traction, generate a lot and become excellent.

Most artists, even excellent ones, don't create consistently enough to get traction.

It may hurt. For makers who don't love and flow with their work, it's extremely difficult.

Your work must bring you to life.

To generate so much that others can't ignore you, decide what you'll accomplish every day (or most days).

Commit and be patient.

Prepare for zero-traction.

Anticipating this will help you persevere and create.

My online guru Grant Cardone says: Anything worth doing is worth doing every day.

Do.

You might also like

Al Anany

2 years ago

Because of this covert investment that Bezos made, Amazon became what it is today.

He kept it under wraps for years until he legally couldn’t.

His shirt is incomplete. I can’t stop thinking about this…

Actually, ignore the article. Look at it. JUST LOOK at it… It’s quite disturbing, isn’t it?

Ughh…

Me: “Hey, what up?” Friend: “All good, watching lord of the rings on amazon prime video.” Me: “Oh, do you know how Amazon grew and became famous?” Friend: “Geek alert…Can I just watch in peace?” Me: “But… Bezos?” Friend: “Let it go, just let it go…”

I can question you, the reader, and start answering instantly without his consent. This far.

Reader, how did Amazon succeed? You'll say, Of course, it was an internet bookstore, then it sold everything.

Mistaken. They moved from zero to one because of this. How did they get from one to thousand? AWS-some. Understand? It's geeky and lame. If not, I'll explain my geekiness.

Over an extended period of time, Amazon was not profitable.

Business basics. You want customers if you own a bakery, right?

Well, 100 clients per day order $5 cheesecakes (because cheesecakes are awesome.)

$5 x 100 consumers x 30 days Equals $15,000 monthly revenue. You proudly work here.

Now you have to pay the barista (unless ChatGPT is doing it haha? Nope..)

The barista is requesting $5000 a month.

Each cheesecake costs the cheesecake maker $2.5 ($2.5 × 100 x 30 = $7500).

The monthly cost of running your bakery, including power, is about $5000.

Assume no extra charges. Your operating costs are $17,500.

Just $15,000? You have income but no profit. You might make money selling coffee with your cheesecake next month.

Is losing money bad? You're broke. Losing money. It's bad for financial statements.

It's almost a business ultimatum. Most startups fail. Amazon took nine years.

I'm reading Amazon Unbound: Jeff Bezos and the Creation of a Global Empire to comprehend how a company has a $1 trillion market cap.

Many things made Amazon big. The book claims that Bezos and Amazon kept a specific product secret for a long period.

Clouds above the bald head.

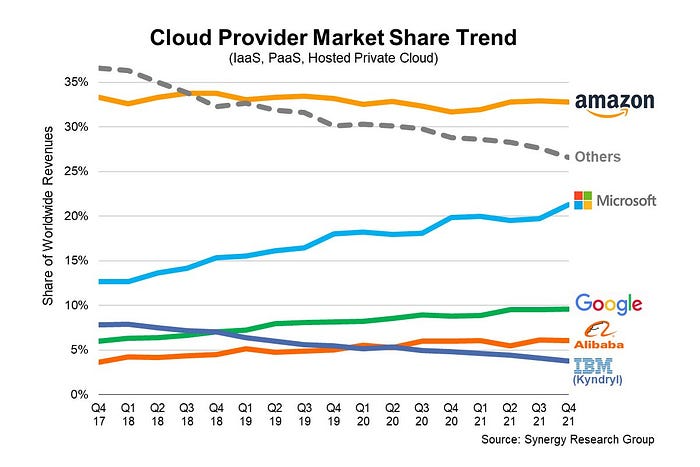

In 2006, Bezos started a cloud computing initiative. They believed many firms like Snapchat would pay for reliable servers.

In 2006, cloud computing was not what it is today. I'll simplify. 2006 had no iPhone.

Bezos invested in Amazon Web Services (AWS) without disclosing its revenue. That's permitted till a certain degree.

Google and Microsoft would realize Amazon is heavily investing in this market and worry.

Bezos anticipated high demand for this product. Microsoft built its cloud in 2010, and Google in 2008.

If you managed Google or Microsoft, you wouldn't know how much Amazon makes from their cloud computing service. It's enough. Yet, Amazon is an internet store, so they'll focus on that.

All but Bezos were wrong.

Time to come clean now.

They revealed AWS revenue in 2015. Two things were apparent:

Bezos made the proper decision to bet on the cloud and keep it a secret.

In this race, Amazon is in the lead.

They continued. Let me list some AWS users today.

Netflix

Airbnb

Twitch

More. Amazon was unprofitable for nine years, remember? This article's main graph.

AWS accounted for 74% of Amazon's profit in 2021. This 74% might not exist if they hadn't invested in AWS.

Bring this with you home.

Amazon predated AWS. Yet, it helped the giant reach $1 trillion. Bezos' secrecy? Perhaps, until a time machine is invented (they might host the time machine software on AWS, though.)

Without AWS, Amazon would have been profitable but unimpressive. They may have invested in anything else that would have returned more (like crypto? No? Ok.)

Bezos has business flaws. His success. His failures include:

introducing the Fire Phone and suffering a $170 million loss.

Amazon's failure in China In 2011, Amazon had a about 15% market share in China. 2019 saw a decrease of about 1%.

not offering a higher price to persuade the creator of Netflix to sell the company to him. He offered a rather reasonable $15 million in his proposal. But what if he had offered $30 million instead (Amazon had over $100 million in revenue at the time)? He might have owned Netflix, which has a $156 billion market valuation (and saved billions rather than invest in Amazon Prime Video).

Some he could control. Some were uncontrollable. Nonetheless, every action he made in the foregoing circumstances led him to invest in AWS.

Merve Yılmaz

3 years ago

Dopamine detox

This post is for you if you can't read or study for 5 minutes.

If you clicked this post, you may be experiencing problems focusing on tasks. A few minutes of reading may tire you. Easily distracted? Using social media and video games for hours without being sidetracked may impair your dopamine system.

When we achieve a goal, the brain secretes dopamine. It might be as simple as drinking water or as crucial as college admission. Situations vary. Various events require different amounts.

Dopamine is released when we start learning but declines over time. Social media algorithms provide new material continually, making us happy. Social media use slows down the system. We can't continue without an award. We return to social media and dopamine rewards.

Mice were given a button that released dopamine into their brains to study the hormone. The mice lost their hunger, thirst, and libido and kept pressing the button. Think this is like someone who spends all day gaming or on Instagram?

When we cause our brain to release so much dopamine, the brain tries to balance it in 2 ways:

1- Decreases dopamine production

2- Dopamine cannot reach its target.

Too many quick joys aren't enough. We'll want more joys. Drugs and alcohol are similar. Initially, a beer will get you drunk. After a while, 3-4 beers will get you drunk.

Social media is continually changing. Updates to these platforms keep us interested. When social media conditions us, we can't read a book.

Same here. I used to complete a book in a day and work longer without distraction. Now I'm addicted to Instagram. Daily, I spend 2 hours on social media. This must change. My life needs improvement. So I started the 50-day challenge.

I've compiled three dopamine-related methods.

Recommendations:

Day-long dopamine detox

First, take a day off from all your favorite things. Social media, gaming, music, junk food, fast food, smoking, alcohol, friends. Take a break.

Hanging out with friends or listening to music may seem pointless. Our minds are polluted. One day away from our pleasures can refresh us.

2. One-week dopamine detox by selecting

Choose one or more things to avoid. Social media, gaming, music, junk food, fast food, smoking, alcohol, friends. Try a week without Instagram or Twitter. I use this occasionally.

One week all together

One solid detox week. It's the hardest program. First or second options are best for dopamine detox. Time will help you.

You can walk, read, or pray during a dopamine detox. Many options exist. If you want to succeed, you must avoid instant gratification. Success after hard work is priceless.

Yogita Khatri

3 years ago

Moonbirds NFT sells for $1 million in first week

On Saturday, Moonbird #2642, one of the collection's rarest NFTs, sold for a record 350 ETH (over $1 million) on OpenSea.

The Sandbox, a blockchain-based gaming company based in Hong Kong, bought the piece. The seller, "oscuranft" on OpenSea, made around $600,000 after buying the NFT for 100 ETH a week ago.

Owl avatars

Moonbirds is a 10,000 owl NFT collection. It is one of the quickest collections to achieve bluechip status. Proof, a media startup founded by renowned VC Kevin Rose, launched Moonbirds on April 16.

Rose is currently a partner at True Ventures, a technology-focused VC firm. He was a Google Ventures general partner and has 1.5 million Twitter followers.

Rose has an NFT podcast on Proof. It follows Proof Collective, a group of 1,000 NFT collectors and artists, including Beeple, who hold a Proof Collective NFT and receive special benefits.

These include early access to the Proof podcast and in-person events.

According to the Moonbirds website, they are "the official Proof PFP" (picture for proof).

Moonbirds NFTs sold nearly $360 million in just over a week, according to The Block Research and Dune Analytics. Its top ten sales range from $397,000 to $1 million.

In the current market, Moonbirds are worth 33.3 ETH. Each NFT is 2.5 ETH. Holders have gained over 12 times in just over a week.

Why was it so popular?

The Block Research's NFT analyst, Thomas Bialek, attributes Moonbirds' rapid rise to Rose's backing, the success of his previous Proof Collective project, and collectors' preference for proven NFT projects.

Proof Collective NFT holders have made huge gains. These NFTs were sold in a Dutch auction last December for 5 ETH each. According to OpenSea, the current floor price is 109 ETH.

According to The Block Research, citing Dune Analytics, Proof Collective NFTs have sold over $39 million to date.

Rose has bigger plans for Moonbirds. Moonbirds is introducing "nesting," a non-custodial way for holders to stake NFTs and earn rewards.

Holders of NFTs can earn different levels of status based on how long they keep their NFTs locked up.

"As you achieve different nest status levels, we can offer you different benefits," he said. "We'll have in-person meetups and events, as well as some crazy airdrops planned."

Rose went on to say that Proof is just the start of "a multi-decade journey to build a new media company."