
DC Palter

3 years ago

Is Venture Capital a Good Fit for Your Startup?

5 VC investment criteria

I reviewed 200 startup business concepts last week. Brainache.

The startups sold a wide range of goods and services, but the plans were achingly similar: give us money, we'll build a product, then raise more to expand. They were no different from the plans and pitches I see every day.

Most of those 200 plans sounded plausible, but only about 10% looked venture-worthy. The other 90% need alternatives to venture finance.

With the success of VC-backed businesses and the growth of venture funds, a common misperception is that investors would fund any decent company idea. Finding investors that believe in the firm and founders is the key to funding.

Incorrect. Venture capital has to invest in a particular kind of enterprise. If your startup doesn't match the model, as most early-stage startups don't, you can revise your business plan or find another source of capital.

Before spending six months pitching angels and VCs, make sure your startup fits these criteria.

Likely to generate $100 million in sales

First, I check the revenue projections in a pitch deck. If they don't reach $100M, don't bother.

The math doesn't work for venture financing in smaller businesses.

Say a fund invests $1 million in a startup valued at $5 million that is later acquired for $20 million. That's a win everyone should celebrate. Most VCs don't care.

Consider a $100M fund. To deliver a 20% annual return, the fund must grow to about $360M in 7 years. It can make only 20-30 investments. About 90% of those investments will fail, so the 2-3 winners must return $100M-$200M apiece. $15M isn't worth the work.
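The fund math can be checked with a few lines of Python. This is only a rough sketch: the figures come from the paragraph above, while the function names, the annual compounding, and the even split across winners are simplifying assumptions.

```python
# Sketch of the fund math. Figures are from the article; the function
# names and the even split across winners are illustrative assumptions.

def required_fund_value(fund_size_m: float, annual_return: float, years: int) -> float:
    """Value (in $M) the fund must reach, assuming annual compounding."""
    return fund_size_m * (1 + annual_return) ** years


def required_return_per_winner(target_m: float, investments: int, failure_rate: float) -> float:
    """Split the target evenly across the investments that do not fail."""
    winners = round(investments * (1 - failure_rate))
    return target_m / winners


target = required_fund_value(100, 0.20, 7)
print(f"Fund target: ${target:.0f}M")

for n in (20, 30):
    per_winner = required_return_per_winner(target, n, 0.90)
    print(f"{n} investments: each winner must return about ${per_winner:.0f}M")
```

With 20 investments the two winners each need to return close to $180M, and with 30 investments the three winners each need about $120M, which is why a small exit barely registers.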

Angel investors and tiny funds use the same ideas as venture funds, but their smaller scale affects the calculations. If a company can support its growth through exit on less than $2M in angel financing, it must have $25M in revenues before large companies will consider acquiring it.

Aiming for Hypergrowth

A startup's size isn't enough. It must expand fast.

Developing a great business takes time. Complex technology must be constructed and tested, a nationwide expansion must be built, or production procedures must go from lab to pilot to factories. These can be enormous, world-changing corporations, but venture investment is difficult.

The normal venture fund life is 10 years. Investments are made during the first 3–4 years, so 6–10 years pass between investment and fund dissolution. Add a safety margin, and funds need their investments to exit within 5 years, 7 at the most.

Longer exit times reduce ROI. A 2x return in one year is excellent. A 2x return in 7 years counts as a loss.
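To see why the same 2x multiple can be a win or a loss, it helps to convert it to an annualized rate. A minimal sketch, assuming annual compounding and using the 20% fund return from the earlier example as the bar to clear (the function and constant names are illustrative):

```python
# Annualized return implied by an exit multiple, assuming annual compounding.
# TARGET_RETURN reuses the 20% figure from the fund example; names are illustrative.

TARGET_RETURN = 0.20


def annualized_return(multiple: float, years: float) -> float:
    """Compound annual growth rate for a given return multiple."""
    return multiple ** (1 / years) - 1


for years in (1, 7):
    rate = annualized_return(2.0, years)
    verdict = "beats" if rate >= TARGET_RETURN else "misses"
    print(f"2x in {years} year(s): {rate:.1%} per year, {verdict} the 20% target")
```

A 2x exit after one year is a 100% annual return, while the same 2x after seven years works out to a little over 10% a year, well short of what the fund needs.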

Lastly, VCs must prove success to raise their next fund. The second fund is raised on the gains in the first fund's portfolio. The third fund is raised on the first fund's cash returns. Fund managers must raise new money quickly to keep their jobs.

Branding or technology that is protected

No big firm will buy a startup at a high price if they can produce a competing product for less. Their development teams, consumer base, and sales and marketing channels are large. Who needs you?

Patents, specialist knowledge, or brand name are the only answers. The acquirer buys this, not the thing.

I've heard of several promising startups. It's not a decent investment if there's no exit strategy.

A company that installs EV charging stations in apartments and shopping areas is an example. It's profitable, repeatable, and big. A terrific company. Not a startup.

This building company's operations aren't secret. No technology to protect, no special information competitors can't figure out, no go-to brand name. Despite the immense possibilities, a large construction company would be better off starting their own.

Most venture businesses build products, not services. Services can be profitable but hard to safeguard.

Probable purchase at high multiple

Once a software business proves its value, acquiring it is easy. Pharma and medtech firms have given up on their own research and instead acquire startups after regulatory permission. Many startups, especially in specialized areas, have this weakness.

That doesn't mean any lucrative $25M-plus business won't be acquired. In many businesses, the venture model requires a high exit premium.

A startup invents a new glue. 3M, BASF, Henkel, and others may buy them. Adding more adhesive to their catalogs won't boost commerce. They won't compete to buy the business. They'll only buy a startup at a profitable price. The acquisition price represents a moderate EBITDA multiple.

The company's $100M in revenue presumably yields $10M in profits (assuming they’ve reached profitability at all). A $30M-$50M transaction is likely. That's not terrible, but it's not what venture investors want after investing $25M to build a plant and develop the business.
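The glue example reduces to two ratios. The sketch below uses the article's figures; treating the investors as owning the whole company is a deliberately generous assumption that gives an upper bound on their return, and the function names are illustrative.

```python
# Ratios behind the glue example. Figures are from the article; assuming the
# investors own 100% of the company gives a best-case bound on their return.

PROFIT_M = 10      # annual profit on $100M of revenue
INVESTED_M = 25    # venture money put into the plant and the business


def profit_multiple(price_m: float, profit_m: float = PROFIT_M) -> float:
    """Acquisition price as a multiple of annual profit."""
    return price_m / profit_m


def best_case_investor_multiple(price_m: float, invested_m: float = INVESTED_M) -> float:
    """Return on invested capital if investors received the entire price."""
    return price_m / invested_m


for price_m in (30, 50):
    print(
        f"${price_m}M exit: {profit_multiple(price_m):.0f}x profit, "
        f"at most {best_case_investor_multiple(price_m):.1f}x for investors"
    )
```

Even in this best case the investors get between 1.2x and 2x their money, nowhere near the multiples a venture fund needs from its winners.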

Private equity buys profitable companies for a moderate profit multiple. It's a good exit for entrepreneurs, but not for investors seeking 10x or more what PE firms pay. If a startup offers private equity as an exit, the conversation is over.

Constructed for purchase

The startup wants a high-multiple exit. Unless the company targets $1B in revenue and does an IPO, exit means acquisition.

Founders must decide whether they're building the business for acquisition or for themselves.

If you want to build a business that runs indefinitely, I applaud you. We need more long-term founders. Most successful organizations are founded around consumer demands, not venture capital's urge to grow fast and exit. They just aren't a fit for venture funding.

What to do if you don't match the venture model

VC funds moonshots. The 10% that succeed are extraordinary. Not every firm is a rocketship, and launching the wrong startup into space, even with money, will explode.

But just because your startup won't make $100M in 5 years doesn't mean it's a bad business. Most successful companies don't follow this model. It's not venture capital-friendly.

Although venture capital gets the most attention due to a few spectacular triumphs (and disasters), it's not the only or even most typical option to fund a firm.

Other ways to support your startup:

Personal and family resources, such as credit cards, second mortgages, and lines of credit

Bootstrapping off of sales

Government funding and honors

Private equity & project financing

Collaborating with a big business

Including a business partner

Before pitching angels and VCs, be sure your startup qualifies. If it does, show how it meets these criteria in your pitch.

Tim Denning

3 years ago

Bills are paid by your 9 to 5. 6 through 12 help you build money.

40 years pass. After 14 years of retirement, you die. Am I the only one who sees the problem?

I’m the Jedi master of escaping the rat race.

Not to impress. I know this works since I've tried it. Quitting a job to make money online is worse than Kim Kardashian's internet-burning advice.

Let me help you rethink the move from a career to online income to f*ck you money.

To understand why a job is a joke, do some life math.

Without a solid why, nothing makes sense.

The retirement age is 65. Our processed food consumption could shorten our 79-year average lifespan.

You spend 40 years working.

After 14 years of retirement, you die.

Am I alone in seeing the problem?

Life is too short to work a job forever, especially since most people hate theirs. After-hours skills are vital.

F*ck you money equals unrestricted power.

F*ck you money is the answer.

Jack Raines said it first. He says we can do anything with the money. Jack, a young rebel straight out of college, can travel and try new foods.

F*ck you money signifies not checking your bank account before buying.

“F*ck you” money is pure, unadulterated freedom with no strings attached.

Jack claims you're rich when you rarely think about money.

Avoid confusion.

This doesn't imply you can buy a Lamborghini. It indicates your costs, income, lifestyle, and bank account are balanced.

Jack established an online portfolio while working for UPS in Atlanta, Georgia. So he gained boundless power.

The portion that many erroneously believe

Yes, you need internet abilities to make money, but they're not different from 9-5 talents.

Sahil Lavingia, Gumroad's creator, explains.

A job is a way to get paid to learn.

Mistreat your boss 9-5. Drain his skills. Defuse him. Love and leave him (eventually).

Find another employment if yours is hazardous. Pick an easy job. Make sure nothing sneaks into your 6-12 time slot.

The dumb game that makes you a sheep

A 9-5 job requires many job interviews throughout life.

You email your résumé to employers and apply for jobs through advertisements. This game makes you a sheep.

You're competing globally. Work-from-home makes the competition tougher. If you're not the cheapest, employers won't hire you.

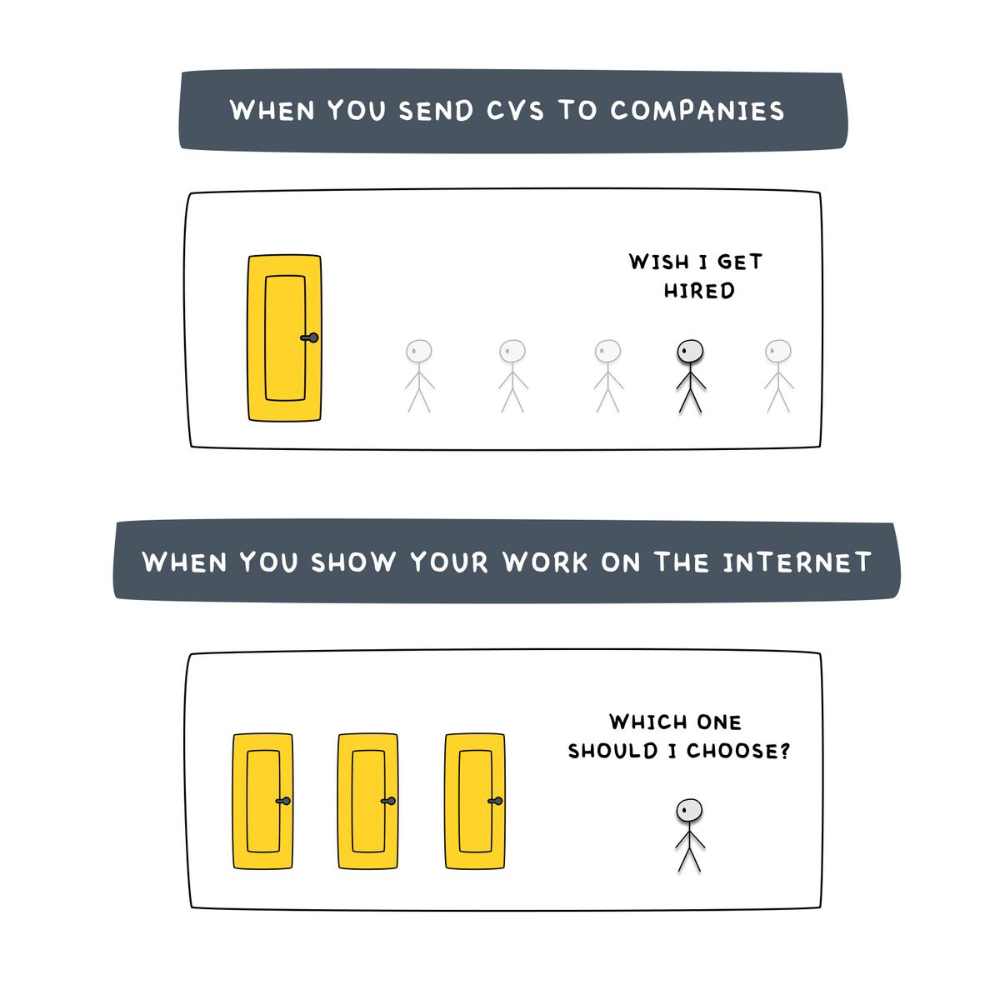

After-hours online talents (say, 6 pm-12 am) change the game. This graphic explains it better:

Online talents boost after-hours opportunities.

You go from wanting to be picked to picking yourself. More chances equal more money. Your f*ck you fund gets the extra cash.

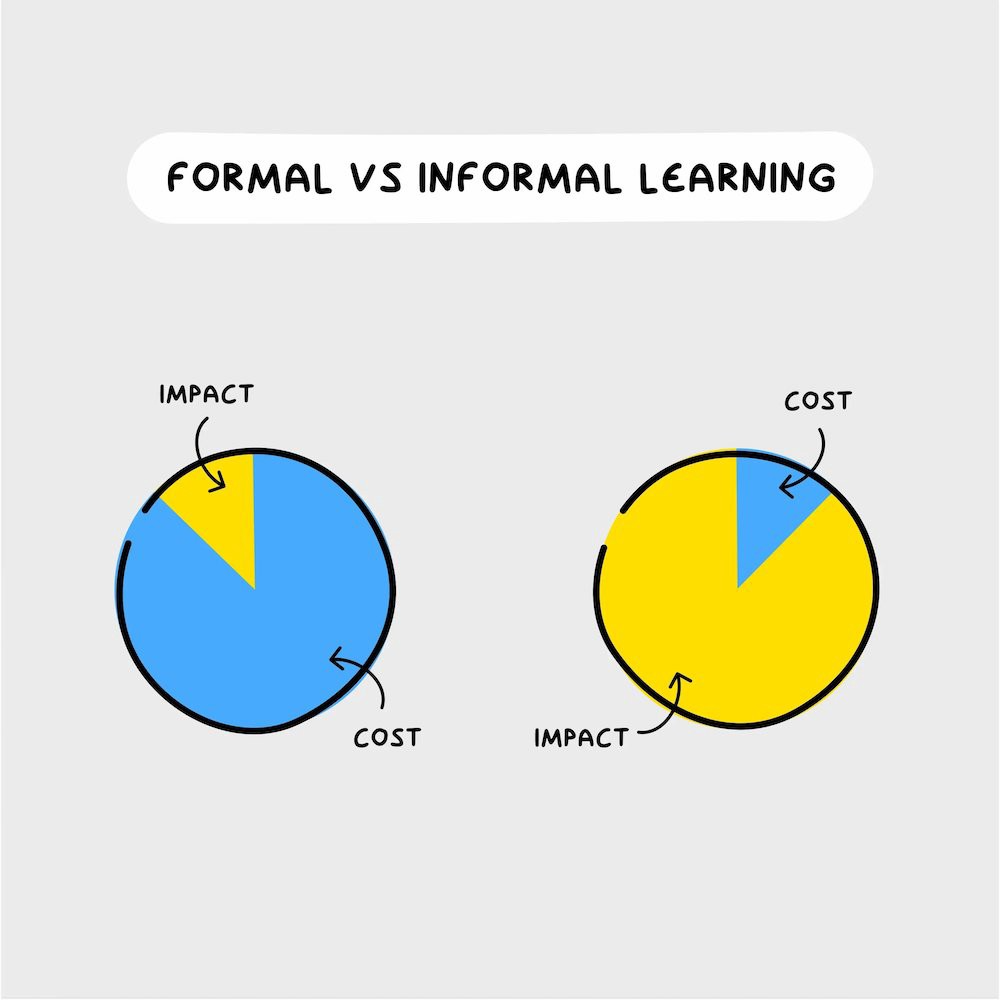

A novel method of learning is essential.

College costs six figures and takes a lifetime to repay.

Informal learning is distinct. 6-12pm:

Observe the carefully controlled Twitter newsfeed.

Make use of Teachable and Gumroad's online courses.

Watch instructional YouTube videos

Look through the top Substack newsletters.

Informal learning is more effective because it's not obvious. It's fun to follow your curiosity and hobbies.

The majority of people lack one attitude. It's simple to learn.

One big impediment stands in the way of f*ck you money and time independence. So often.

Too many people spend their 6-12 hours planning. Dreaming. Thinking big. Strategizing. They fill their calendar with meetings.

This is after-hours masturb*tion.

Sahil Bloom reminded me that a bias towards action will determine if this approach works for you.

The key isn't knowing what to do from 6-12. Trust yourself and develop abilities as you go. It's about building the parachute after you jump.

Sounds risky. We've eliminated the risk by finishing this process after hours while you work 9-5.

With no risk, you can have an I-don't-care attitude and still be successful.

When you choose to move forward, this occurs.

Once you try 9-5/6-12, you'll tell someone.

It's bad.

Few of us hang out with problem-solvers.

It's how much of society operates. So they make reasons so they can feel better about not giving you money.

Matthew Kobach told me chasing f*ck you money is easier with like-minded folks.

Without f*ck you money friends, loneliness will take over and you'll think you've messed up when you just need to keep going.

Steal this easy guideline

Let's act. No more fluffing and caressing.

1. Learn

If you detest your 9-5 talents or don't think they'll work online, get new ones. If you're skilled enough, continue.

Easlo recommends these skills:

Figma designer

Canva designer

Bubble creator

Photoshop editor

Zapier automation consultant

Webflow designer

Adobe video editor

Twitter ghostwriter

Idea consultant

Blender Studio artist

2. Develop the ability

Every night from 6-12, apply the skill.

Practicing ghostwriting? Write someone's tweets for free. Do someone's website copy to learn copywriting. Get a website to the top of Google for a keyword to understand SEO.

Free practice is crucial. Your 9-5 pays the money, so work for free.

3. Take off stealthily like a badass

Another mistake. Sell to few. Don't be the best. Don't claim expertise.

Sell your new expertise to others behind you.

Two ways:

With a digital product

By providing a service

Point 1 also includes digital service examples. Digital products include eBooks, communities, courses, ad-supported podcasts, and templates. It's easy. Your 9-5 job involves one of these.

Take ideas from work.

Why? They'll steal your time for profit.

4. Iterate while feeling awful

First-time launches always fail. You'll feel terrible. Okay. Remember your 9-5?

Find improvements. Ask free and paying consumers what worked.

Multiple relaunches, each 1% better.

5. Discover more

Never stop learning. Improve your skill. Add a relevant skill. Learn copywriting if you write online.

After-hours students earn the most.

6. Continue

Repetition is key.

7. Make this one small change.

Consistently. The 6-12 momentum won't make you rich in 30 days; that's success p*rn.

Consistency helps wage slaves become f*ck you money. Most people can't switch between the two.

Putting everything together

It's easy. You're probably already doing some.

This formula explains why, how, and what to do. It's a 5th-grade-friendly blueprint. Good.

Reduce financial risk with your 9-to-5. Replace Netflix with 6-12 money-making talents.

Life is short; do whatever you want. Today.

Raad Ahmed

3 years ago

How We Just Raised $6M At An $80M Valuation From 100+ Investors Using A Link (Without Pitching)

Lawtrades nearly failed three years ago.

We couldn't raise Series A or enthusiasm from VCs.

We raised $6M (at an $80M valuation) from 100+ customers and investors using a link and no pitching.

Step-by-step:

We refocused our business first.

Lawtrades raised $3.7M while Atrium raised $75M. By comparison, we seemed unimportant.

We had to close the company or try something new.

As I've written previously, a pivot saved us. Our initial focus on SMBs attracted many unprofitable customers. SMBs needed one-off legal services, meaning low fees and high turnover.

Tech startups were different. Their General Counsels (GCs) needed near-daily support, resulting in higher fees and lower churn than SMBs.

We stopped unprofitable customers and focused on power users. To avoid dilution, we borrowed against receivables. We scaled our revenue 10x, from $70k/mo to $700k/mo.

Then, we reconsidered fundraising (and did it differently)

This time was different. Lawtrades was cash flow positive for most of last year, so we could dictate our own terms. VCs were still wary of legaltech after Atrium's shutdown (though they were thinking about the space).

We neither wanted to rely on VCs nor dilute more than 10% equity. So we didn't compete for in-person pitch meetings.

We used an AngelList Roll-Up Vehicle (RUV). Up to 250 accredited investors can invest in a single RUV. First, we emailed customers the RUV. Why? Because I wanted to help the platform's users.

Imagine if Uber or Airbnb let all drivers or Superhosts invest in an RUV. Humans make the platform, theirs and ours. Giving people a chance to invest increases their loyalty.

We expanded after initial interest.

We created a Journey link, containing everything that would normally go in an investor pitch:

- Slides

- Trailer (from me)

- Testimonials

- Product demo

- Financials

We could also link to our AngelList RUV and send the pitch to an unlimited number of people. Instead of 1:1, we had 1:10,000 pitches-to-investors.

We posted Journey's link in RUV Alliance Discord. 600 accredited investors noticed it immediately. Within days, we raised $250,000 from customers-turned-investors.

Stonks, which live-streamed our pitch to thousands of viewers, was interested in our grassroots enthusiasm. We got $1.4M from people I've never met.

These updates on Pump generated more interest. Facebook, Uber, Netflix, and Robinhood executives all wanted to invest. Sahil Lavingia, who had rejected us, gave us $100k.

We closed the round with public support.

Without a single pitch meeting, we'd raised $2.3M. It was a result of natural enthusiasm: taking care of the people who made us who we are, letting them move first, and leveraging their enthusiasm with VCs, who were interested.

We used network effects to raise $3.7M from a founder-turned-VC, bringing the total to $6M at an $80M valuation (which, by the way, I set myself).
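The round's numbers can be checked against the goal of diluting no more than 10%. This is a back-of-the-envelope sketch: the post does not say whether the $80M valuation is pre-money or post-money, so both readings are computed, and the function name is illustrative.

```python
# Dilution check for the round. The article gives $2.3M + $3.7M at an $80M
# valuation but not whether that is pre- or post-money, so both are shown.

def dilution(raised_m: float, valuation_m: float, post_money: bool = True) -> float:
    """Fraction of the company sold in the round."""
    post_money_valuation = valuation_m if post_money else valuation_m + raised_m
    return raised_m / post_money_valuation


community_m = 2.3   # raised without pitch meetings
vc_m = 3.7          # raised from the founder-turned-VC
total_m = community_m + vc_m

print(f"Total raised: ${total_m:.1f}M")
print(f"Dilution if $80M is post-money: {dilution(total_m, 80):.2%}")
print(f"Dilution if $80M is pre-money:  {dilution(total_m, 80, post_money=False):.2%}")
```

Under either reading the round sells roughly 7% to 7.5% of the company, which fits the 10% ceiling mentioned earlier.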

What flipping the fundraising script allowed us to do:

We started with private investors instead of 2–3 VCs to show VCs what we were worth. This gave Lawtrades the ability to:

- Without meetings, share our vision. Many people saw our Journey link. I ended up taking meetings with people who planned to contribute $50k+, but still, the ratio of views-to-meetings was outrageously good for us.

- Leverage ourselves. Instead of us selling ourselves to VCs, they did. Some people with large checks or late arrivals were turned away.

- Maintain voting power. No board seats were lost.

- Utilize viral network effects. People-powered.

- Preemptively halt churn by turning our users into owners. People are more loyal and respectful to things they own. Our users make us who we are — no matter how good our tech is, we need human beings to use it. They deserve to be owners.

I don't blame founders for being hesitant about this approach. Pump and RUVs are new and scary. But it won’t be that way for long. Our approach redistributed some of the power that normally lies entirely with VCs, putting it into our hands and our network’s hands.

This is the future — another way power is shifting from centralized to decentralized.


Joanna Henderson

3 years ago

An Average Day in the Life of a 25-Year-Old - A Rich Man's At-Home Unemployed Girlfriend

And morning water bottle struggles.

Welcome to my TikTok, where I share my stay-at-home life! I'll show you my usual day from morning to night.

I rise early to prepare my guy iced coffee. I make matcha, my favorite drink. I also fill our water bottles, which takes time and effort, so I record and describe the procedure. As you see me perform the unthinkable by putting a water bottle in a soda machine, you'll see my magnificent but unowned condo. My lover has everything, including:

In the living room, a sizable velvet alabaster divan. I was unable to use the words white or sofa in place of alabaster or a divan since they are insufficiently elegant and do not adequately convey how opulent the item is. The price tag on the divan was another huge feature; I'm sure my lover wouldn't purchase any furniture for less than $20k because it would be beneath him.

A plush Swiss coffee-colored Tabriz carpet. Once more, white is a color associated with the underclass; for us, the wealthy, it's alabaster or swiss coffee. Sorry, my boyfriend is wealthy; I'm truly in the same situation. And yet, I’m the one who's freeloading off of him, not you haha!

Soft translucent powder is the hue of the vinyl wallcoverings. I merely made up the name of that hue, but I have to maintain the online character I've established. There is no room for adopting language typical of peasant people; I must reiterate that I am wealthy while they are not.

I rest after filling our water bottles. I'm really fatigued from chores. My boyfriend is skeptical about hiring a housekeeper and cook. Does he assume I'm a servant or maid? I can't be overly demanding or throw a tantrum since he may replace me with a younger version. Leonardo Di Caprio's fault!

After the break, I bring my lover a water bottle. He's off to work with my best wishes. After cleaning the shower, I text my BF saying I broke a nail. He charged $675 for a crystal-topped shellac manicure. Lucky me!

After this morning's crazy chores, especially the water bottle one, I'm famished. I dress quickly and go to the neighborhood organic-vegan-gluten-free-sugar-free-plasma-free-GMO-free-HBO-free breakfast place. Most folks can't afford $17.99 for a caffeine-free-mushroom-plus-mud-and-electrolytes morning beverage. It goes nicely with my matcha. Eggs Benedict cost $68. English muffins are off-limits. I can't make myself obese. My partner said he'd swap me for a 19-year-old Eastern European if I keep eating bacon.

I leave no tip since tipping is too much pressure and math for me, so I go shopping.

My shopping adventures have gotten monotonous. I own 47 designer bags and 114 bag covers, and the Birkins need their own luggage. My babies! I've never caught my BF with a baby. I have sleeping medications and a turkey baster. Tatiana is much younger and thinner than me, so I can't lose him to her. The goal is to become a stay-at-home wife shortly. A turkey baster is essential.

After spending $955 on La Mer lotions and getting a crystal manicure, I nap. Before my boyfriend's return, I can nap for 5 hours.

I wake up around 4 pm, and it’s time to prepare for dinner. Yes, I said “prepare for dinner,” not “prepare dinner.” I have crystals on my nails! Do you really think I would cook? No way.

My boyfriend's arrival still requires much work. I clean the kitchen and get cutlery and napkins. I order UberEats while my BF is 30-45 minutes away.

Wagyu steaks with Matsutake mushroom soup today. I pick desserts for my lover but not myself. Eastern European threat?

When my BF gets home from work, we eat. I don't believe in tipping UberEats drivers. If he wants to appreciate life's finer things, he should locate a rich woman.

After eating, we plan our getaway. I requested Aruba's fanciest hotel for winter and expect a butler. We're bickering over who gets the butler. We may need two.

Day's end, I'm exhausted. Stay-at-home girlfriends put in a lot of time and work. Work and duties are never-ending.

Before bed, I shower and use a liquid gold mask in my 27-step makeup procedure. It's a French luxury brand, not La Mer.

Here's my day.

Note: I like satire and absurd trends. Stay-at-home-girlfriend TikTok videos have become popular recently.

I don't shame or support such agreements; I'm just an observer. Thanks for reading.

Steffan Morris Hernandez

3 years ago

10 types of cognitive bias to watch out for in UX research & design

10 biases in 10 visuals

Cognitive biases are crucial for UX research, design, and daily life. Our biases distort reality.

After learning about biases at my UX Research bootcamp, I studied Erika Hall's Just Enough Research and used the Nielsen Norman Group's wealth of information. 10 images show my findings.

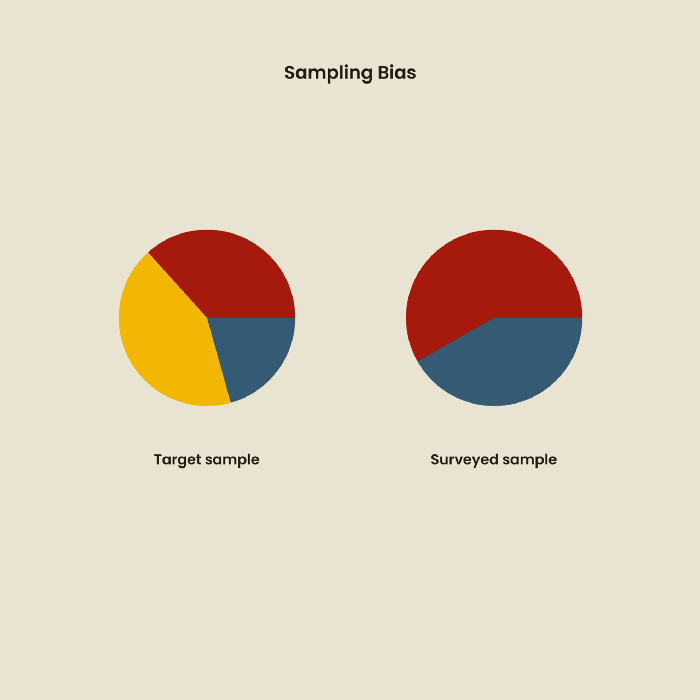

1. Bias in sampling

Selecting the wrong members of the target population causes sampling bias. For example, you are building an app to help people with food intolerances log their meals and are targeting adult males (ages 20-30), adult females (ages 20-30), and teenage males and females (ages 15-19) with food intolerances. However, a sample of only adult males and teenage females is biased and unrepresentative.

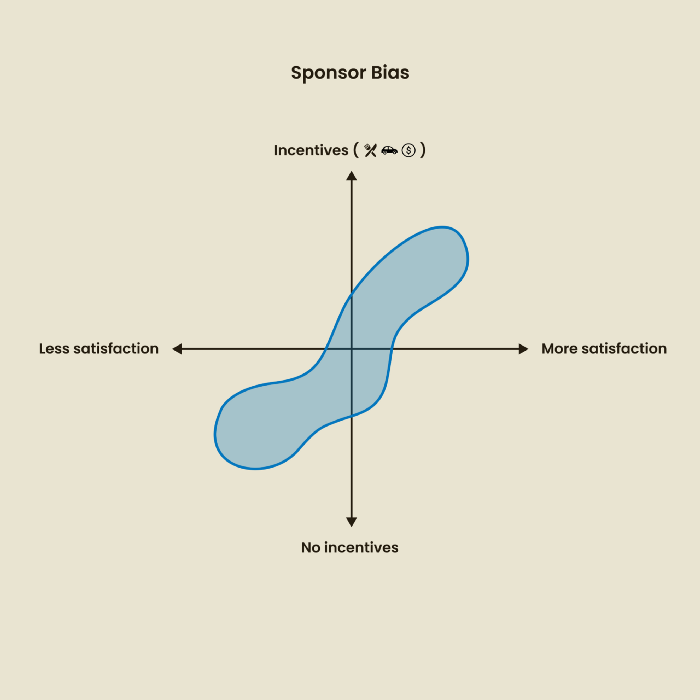

2. Sponsor Bias

Sponsor bias occurs when a study's findings favor an organization's goals. Beware if X organization promises to drive you to their HQ, compensate you for your time, provide food, beverages, discounts, and warmth. Participants may endeavor to be neutral, but incentives and prizes may bias their evaluations and responses in favor of X organization.

In Just Enough Research, Erika Hall suggests describing the company's aims without naming it.

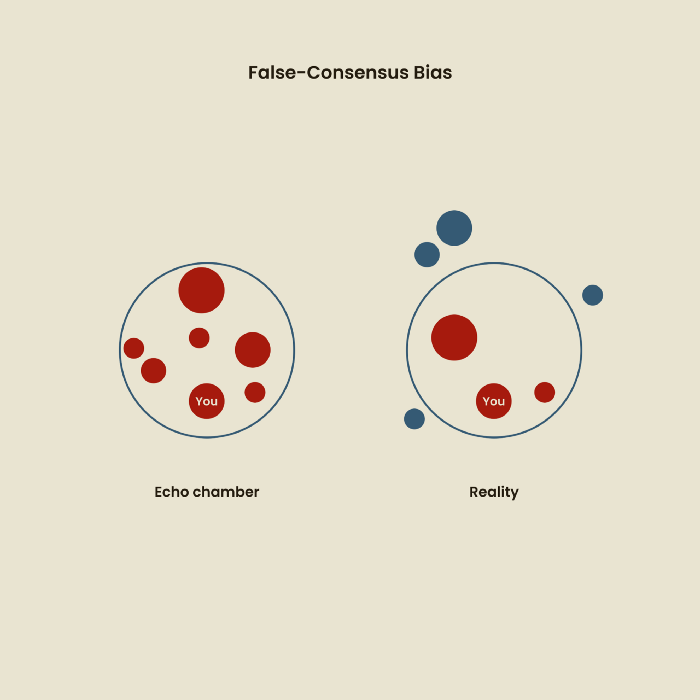

3. False-Consensus Bias

False-consensus bias is when a person thinks others think and act the same way. For instance, if a start-up designs an app without researching end users' needs, it could fail since end users may have different wants. https://www.nngroup.com/videos/false-consensus-effect/

Working directly with the end user and employing several research methods to improve validity helps lessen this bias. When analyzing data, triangulation can boost credibility.

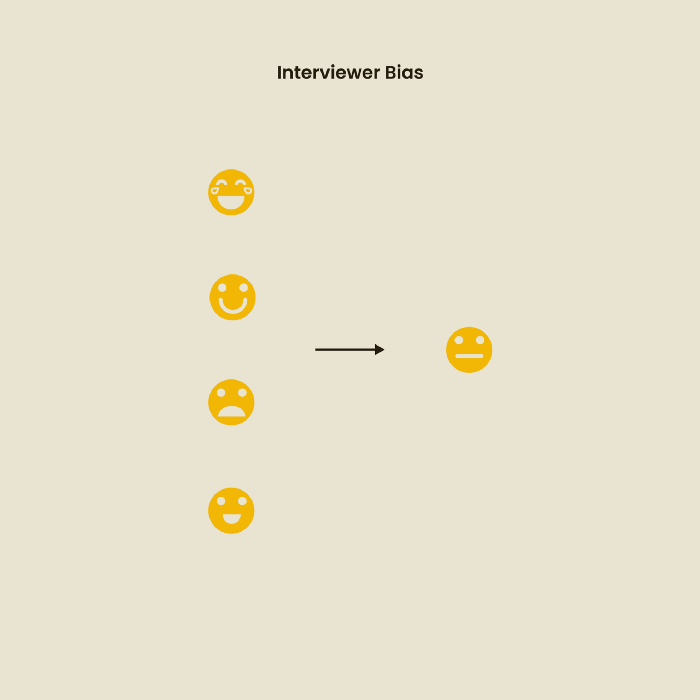

4. Interviewer Bias

I struggled with this bias during my UX research bootcamp interviews. Interviewing neutrally takes practice and patience. Avoid leading questions that structure the story since the interviewee must interpret them. Nodding or smiling throughout the interview may subconsciously influence the interviewee's responses.

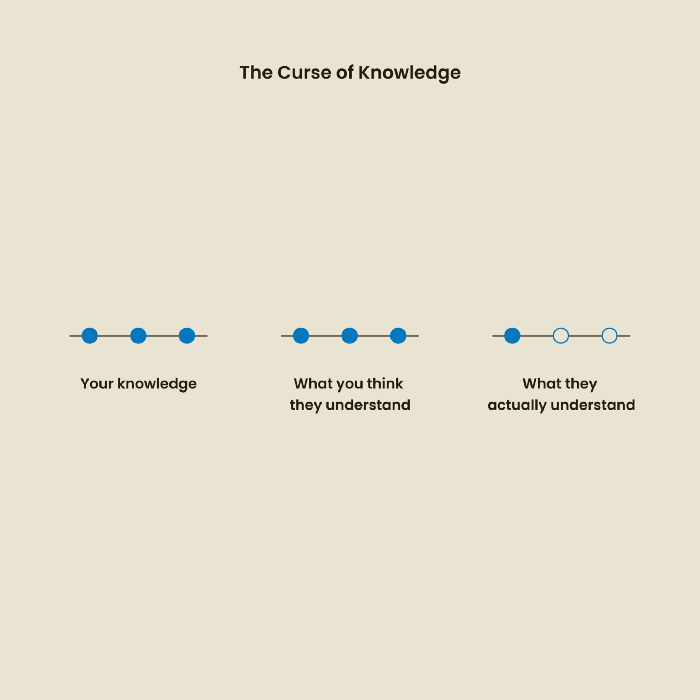

5. The Curse of Knowledge

The curse of knowledge occurs when someone expects others to understand a subject as well as they do. UX research interviews and surveys should guard against this bias because technical language might confuse participants and harm the research. Interviewing participants as though you are new to the topic may help them expand on their replies without being influenced by the researcher's knowledge.

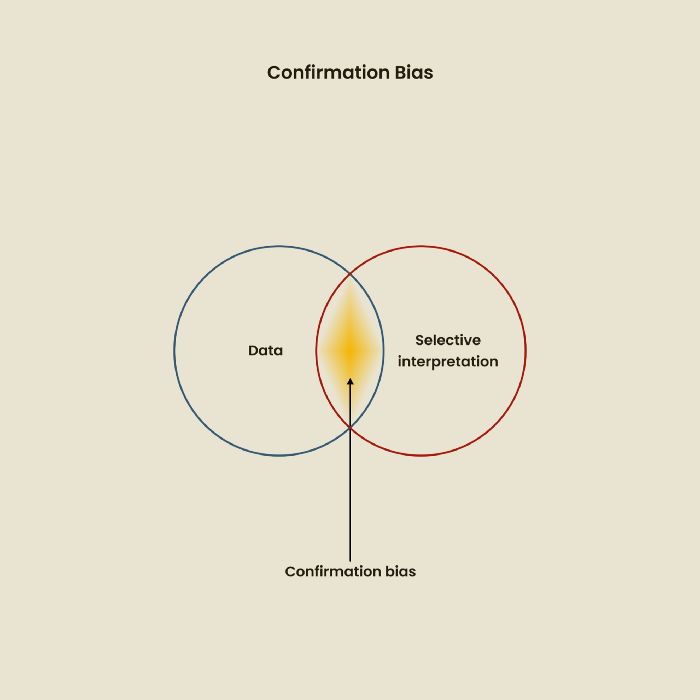

6. Confirmation Bias

This is the most prevalent bias. People highlight evidence that supports their ideas and ignore data that doesn't. The echo chamber of social media creates polarization by promoting similar perspectives.

A researcher with confirmation bias may dismiss data that contradicts their research goals. Thus, the research or product may not serve end users.

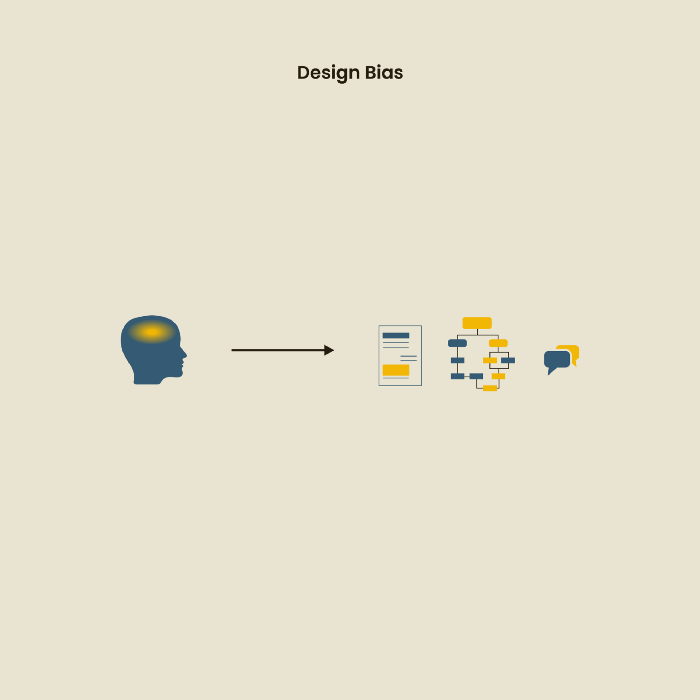

7. Design Bias

UX Research design bias pertains to study construction and execution. Design bias occurs when data is excluded or magnified based on human aims, assumptions, and preferences.

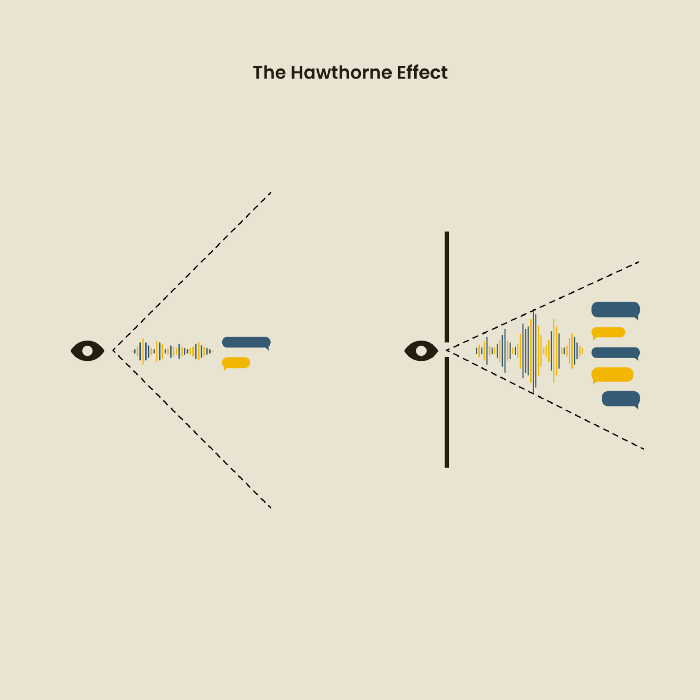

8. The Hawthorne Effect

Remember when you behaved differently while the teacher wasn't looking? When you behaved differently without your parents watching? A UX research study's Hawthorne Effect occurs when people modify their behavior because you're watching. To escape judgment, participants may act and speak differently.

To avoid this, researchers should blend into the background and urge subjects to act alone.

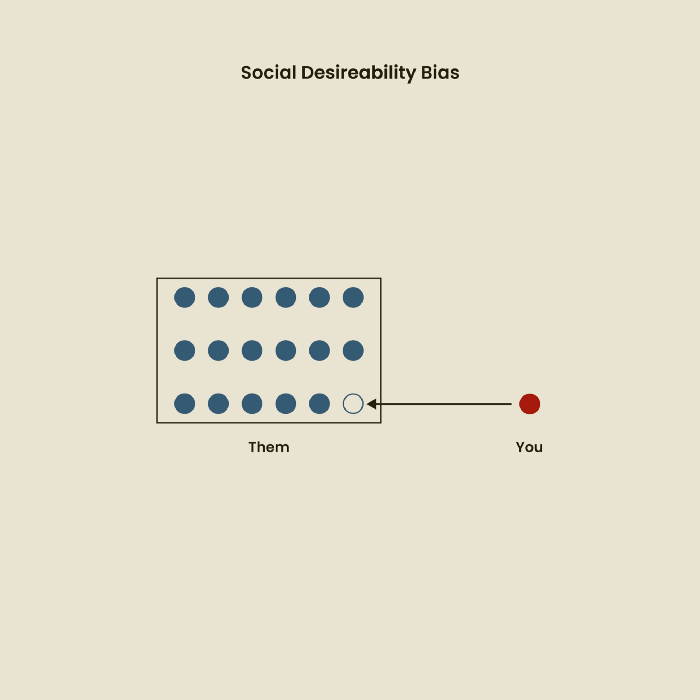

9. Social Desirability Bias

People want to belong to escape rejection and hatred. Research interviewees may mislead or slant their answers to avoid embarrassment. Researchers should encourage honesty and confidentiality in studies to address this. Observational research may reduce bias better than interviews because participants behave more organically.

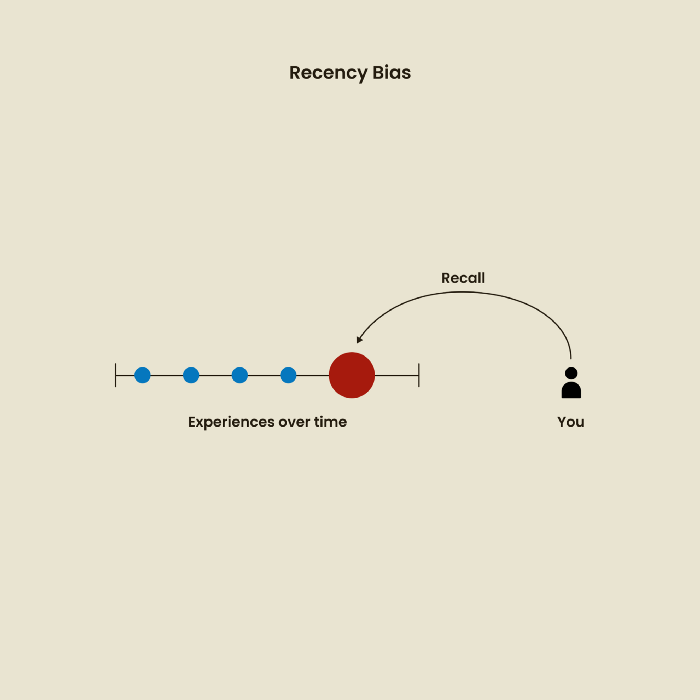

10. Recency Bias

Humans tend to give more weight to recent experiences. Consider school. Say you failed a recent exam but did well in the previous 7 exams. You may vividly recall that last terrible result and overlook the earlier successes.

If a UX researcher bases their conclusions on the most recent findings instead of all the data and results, recency bias might occur.

I hope you liked learning about UX design, research, and real-world biases.

Jano le Roux

3 years ago

Apple Quietly Introduces A Revolutionary Savings Account That Kills Banks

Would you abandon your bank for Apple?

Banks are struggling.

Not as a result of inflation.

Not due to the economic downturn.

Not due to the conflict in Ukraine.

But because they’re underestimating Apple.

Slowly but surely, Apple is looking more like a bank.

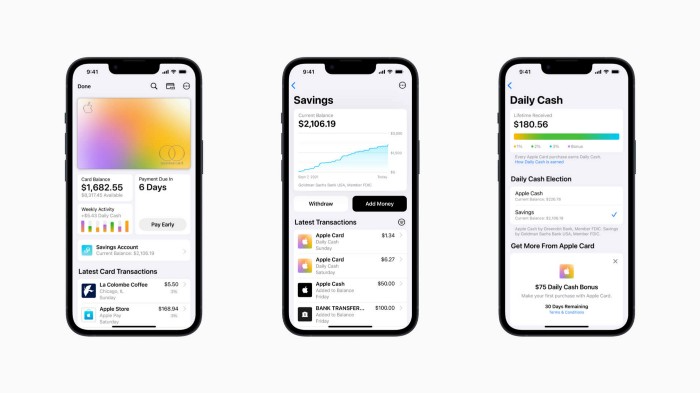

An easy new savings account like Apple

Apple has a new savings account.

Apple says Apple Card users may set up and manage savings straight in Wallet.

No fees

Colorfully high yields

No minimum balance

No minimum deposits

Most consumer-facing banks will have to match Apple's offer or suffer disruption.

Users may set it up from their iPhones without traveling to a bank or filling out paperwork.

It’s built into the iPhone in your pocket.

So no more waiting for slow approval processes.

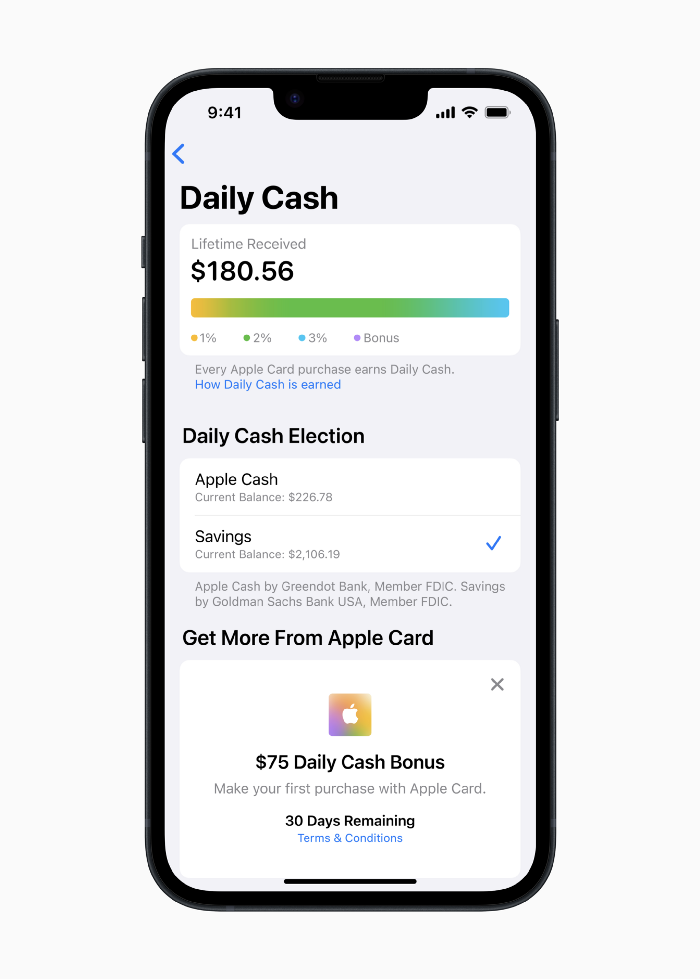

Once the savings account is set up, Apple will automatically transfer all future Daily Cash into it. Users may instead have this cash added to an Apple Cash card in their Apple Wallet app, and they can change where Daily Cash is deposited at any time.

Apple Pay and Apple Wallet VP Jennifer Bailey:

Savings enables Apple Card users to grow their Daily Cash rewards over time, while also saving for the future.

Bailey says Savings adds value to Apple Card's Daily Cash benefit and offers another easy-to-use tool to help people lead healthier financial lives.

Users can transfer money from a linked bank account or Apple Cash to a Savings account. They can also withdraw funds to a connected bank account or Apple Cash card without fees.

Once set up, Apple Card customers can track their earnings via Wallet's Savings dashboard. This dashboard shows their account balance and interest.

This product targets younger people as the easiest way to start a savings account on the iPhone.

Why would a Gen Z account holder travel to the bank if their iPhone could be their bank?

Using this concept, Apple will transform the way we think about banking by 2030.

Two other nightmares keep bankers awake at night

Apple revealed two new features in early 2022 that banks and payment gateways hated.

Tap to Pay on iPhone

Apple Pay Later

They startled the industry.

Tap To Pay converts iPhones into mobile POS card readers. Apple Pay Later is pushing the BNPL business in a consumer-friendly direction, hopefully ending dodgy lending practices.

Tap to Pay on iPhone

iPhone POS

Millions of US merchants, from tiny shops to huge establishments, will be able to accept Apple Pay, contactless credit and debit cards, and other digital wallets with a tap.

No hardware or payment terminal is needed.

Revolutionary!

Stripe has previously launched this feature.

Tap to Pay on iPhone will provide companies with a secure, private, and quick option to take contactless payments and unleash new checkout experiences, said Bailey.

Apple's solution is ingenious. Brilliant!

Bailey says that payment platforms, app developers, and payment networks are making it easier than ever for businesses of all sizes to accept contactless payments and thrive.

I admire that Apple is offering this up to third-party services instead of closing off other functionalities.

Slow POS terminals, farewell.

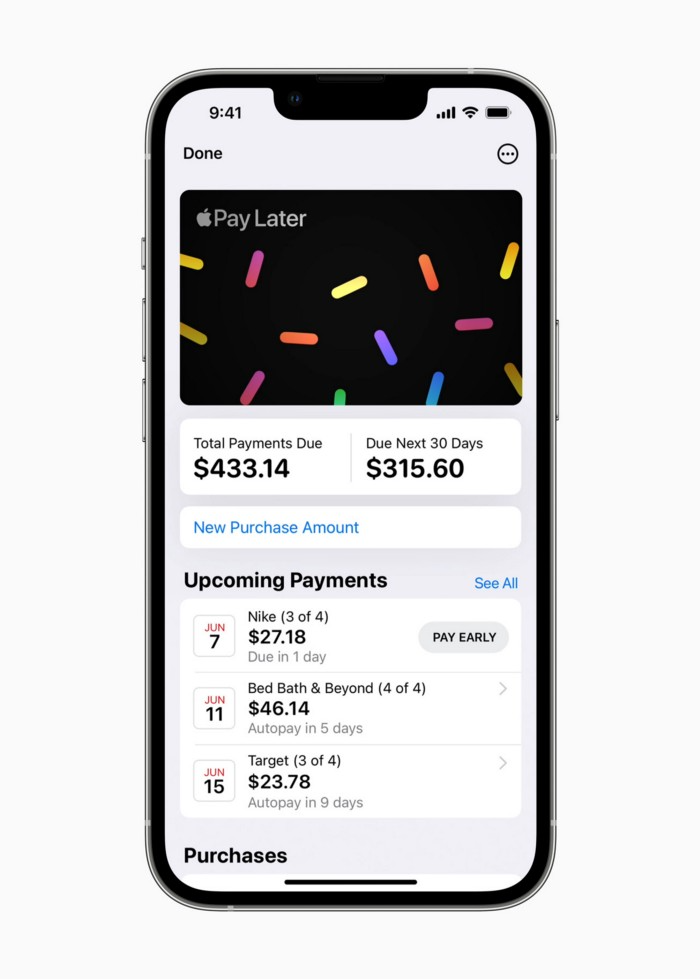

Apple Pay Later

Pay Apple later.

Apple Pay Later lets US consumers split Apple Pay purchases into four equal payments over six weeks with no interest or fees.
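As an illustration of what "four equal payments over six weeks" looks like, here is a small sketch of a repayment schedule. It is not Apple's implementation. It assumes the first payment is due at purchase, the rest follow at even two-week intervals, and any leftover cents go to the earliest payments; the function name is hypothetical.

```python
# Hypothetical repayment schedule for a four-payment, six-week plan.
# Assumptions (not from Apple): first payment at purchase, even spacing,
# leftover cents added to the earliest payments.
from datetime import date, timedelta


def pay_later_schedule(total_cents: int, purchase_date: date,
                       installments: int = 4, span_weeks: int = 6):
    """Return a list of (due_date, amount_in_cents) tuples."""
    interval = timedelta(weeks=span_weeks) / (installments - 1)
    base, remainder = divmod(total_cents, installments)
    schedule = []
    for i in range(installments):
        amount = base + (1 if i < remainder else 0)
        schedule.append((purchase_date + interval * i, amount))
    return schedule


for due, cents in pay_later_schedule(10_000, date(2023, 1, 1)):
    print(f"{due.isoformat()}: ${cents / 100:.2f}")
```

A $100 purchase becomes four $25 payments two weeks apart, with no interest or fees added to the total.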

The Apple ecosystem integration makes this BNPL scheme unique. Nonstick. No dumb forms.

Frictionless.

Just double-tap the button.

Apple Pay Later was designed with users' financial well-being in mind. Apple makes it easy to use, track, and pay back Apple Pay Later from Wallet.

Apple Pay Later can be signed up in Wallet or when using Apple Pay. Apple Pay Later can be used online or in an app that takes Apple Pay and leverages the Mastercard network.

Apple Pay Order Tracking helps consumers access detailed receipts and order tracking in Wallet for Apple Pay purchases at participating stores.

Bad BNPL suppliers, goodbye.

Most bankers will be caught in Apple's eye playing mini golf in high-rise offices.

The big problem:

Banks still think about features and big numbers just like other smartphone makers did not too long ago.

Apple thinks about effortlessness, seamlessness, and frictionlessness that just work through integrated hardware and software.

Let me know what you think Apple’s next power moves in the banking industry could be.