More on Marketing

Dung Claire Tran

3 years ago

Is the future of brand marketing with virtual influencers?

Digital influencers that mimic humans are on the rise.

Lil Miquela has 3M Instagram followers, 3.6M TikTok followers, and 30K Twitter followers. She has been featured by Prada, Dior, and Calvin Klein. Miquela released Not Mine in 2017 and launched Hard Feelings at Lollapalooza this year. This isn't surprising, given the rise of influencer marketing.

What may be unexpected: Miquela isn't real. Brud, a Los Angeles startup, created her in 2016.

Lil Miquela is one of many rising virtual influencers in the new era of social media marketing. She acts like a real person and performs the same tasks as sports stars and models.

The emergence of virtual influencers

Before 2018, computer-generated characters were rare. Since the virtual human industry boomed, they've appeared in marketing efforts worldwide.

In 2020, the WHO partnered up with Atlanta-based virtual influencer Knox Frost (@knoxfrost) to gather contributions for the COVID-19 Solidarity Response Fund.

Lu do Magalu (@magazineluiza) has been the virtual spokeswoman for Magalu since 2009, using social media to promote reviews, product recommendations, unboxing videos, and brand updates. Magalu's 10-year profit was $552M.

In 2020, PUMA partnered with Southeast Asia's first virtual model, Maya (@mayaaa.gram). She joined Singaporean actor Tosh Zhang in the PUMA campaign. Local virtual influencer Ava Lee-Graham (@avagram.ai) partnered with retail firm BHG to promote their in-house labels.

In Japan, Imma (@imma.gram) is the face of Nike, PUMA, Dior, Salvatore Ferragamo SpA, and Valentino. Imma's bubblegum pink bob and ultra-fine fashion landed her on the cover of Grazia magazine.

Lotte Home Shopping created Lucy (@here.me.lucy) in September 2020. She made her TV debut as a Christmas show host in 2021. Since then, she has gained 100K Instagram followers and 13K TikTok followers.

Liu Yexi gained 3 million fans in five days on Douyin, China's TikTok, in 2021. Her two-minute video went viral overnight. She's posted 6 videos and has 8.3 million Douyin followers.

China's virtual human industry was worth $487 million in 2020, up 70% year over year, and is expected to reach $875.9 million in 2021.

Investors worldwide are interested. Imma's creator, Aww Inc., raised $1 million from Coral Capital in September 2020, according to Bloomberg. Superplastic Inc., the Vermont-based startup behind influencers Janky and Guggimon, had raised $16 million by 2020. Craft Ventures, SV Angels, and Scooter Braun invested. Crunchbase shows the company has raised $47 million.

The industries they represent, including augmented and virtual reality, were worth $14.84 billion in 2020 and are projected to reach $454.73 billion by 2030, a CAGR of 40.7%, according to PR Newswire.

Advantages for brands

Forbes suggests brands embrace computer-generated influencers. The reasons include:

Unlimited creative opportunities: Because brands can personalize everything, from a character's look and activities to the style of their content, virtual influencers can be tailored to a brand's needs and personality.

100% brand control: Brand managers have full control over virtual influencers, so they no longer have to rely on content creators to work the brand into their own storytelling and style. Because virtual influencers are completely scandal-free, they can consistently produce social media content that promotes a brand's identity and ideals.

Long-term cost savings: Because virtual influencers are made of pixels, they can be reused endlessly and never lose their looks. They can appear anywhere in the world, or even in space, to fit a brand concept, and they are always available. The cost of creating their content also does not rise in step with their growing fan base.

Introduction to the metaverse: Statista reports that 75% of American consumers between the ages of 18 and 25 follow at least one virtual influencer. Marketers who back virtual celebrities can therefore reach younger audiences that are more tech-savvy and accustomed to the digital world. Because they are entirely computer-generated 3D personas, virtual influencers can be placed in any digital space, including the metaverse. As younger generations rapidly adopt the metaverse, virtual influencers can give brands a smooth transition into this new digital universe, building brand trust and emotional ties.

Better engagement than human influencers: A HypeAuditor study found that virtual influencers have roughly three times the engagement of their human counterparts. The data may not reflect the entire sector, but it suggests virtual influencers can boost brand engagement.

Concerns about influencers created by computers

Virtual influencers could encourage excessive beauty standards in South Korea, which has a $10.7 billion plastic surgery industry.

A classic Korean beauty has a small face, huge eyes, and pale, immaculate skin. Virtual influencers like Lucy have these traits. According to Lee Eun-hee, a professor at Inha University's Department of Consumer Science, this could make national beauty standards more unrealistic, increasing demand for plastic surgery or cosmetic items.

Elsewhere, critics raise concerns about selling products to consumers who don't realize the models aren't human, and about the potential for cultural appropriation when creating influencers of other ethnicities, which some call digital blackface.

Meta, Facebook and Instagram's parent corporation, acknowledges this risk.

“Like any disruptive technology, synthetic media has the potential for both good and harm. Issues of representation, cultural appropriation and expressive liberty are already a growing concern,” the company stated in a blog post. “To help brands navigate the ethical quandaries of this emerging medium and avoid potential hazards, (Meta) is working with partners to develop an ethical framework to guide the use of (virtual influencers).”

Despite theoretical controversies, the industry will likely survive. Companies think virtual influencers are the next frontier in the digital world, which includes the metaverse, virtual reality, and digital currency.

In conclusion

Virtual influencers may garner millions of followers online and help marketers reach young audiences. According to a YouGov survey, the real impact of computer-generated influencers is still unknown because people prefer genuine connections. Virtual characters can supplement brand marketing strategies. When brands are metaverse-ready, the author predicts virtual influencer endorsement will continue to expand.

Jon Brosio

3 years ago

You can learn more about marketing from these 8 copywriting frameworks than from a college education.

Email, landing pages, and digital content

Today's most significant skill:

Copywriting.

Unfortunately, most people don't know how to write successful copy because they weren't taught in school.

I've been obsessed with copywriting for two years. I've read 15 books, completed 3 courses, and studied the internet's best digital entrepreneurs.

Here are 8 copywriting frameworks that teach more than a four-year degree.

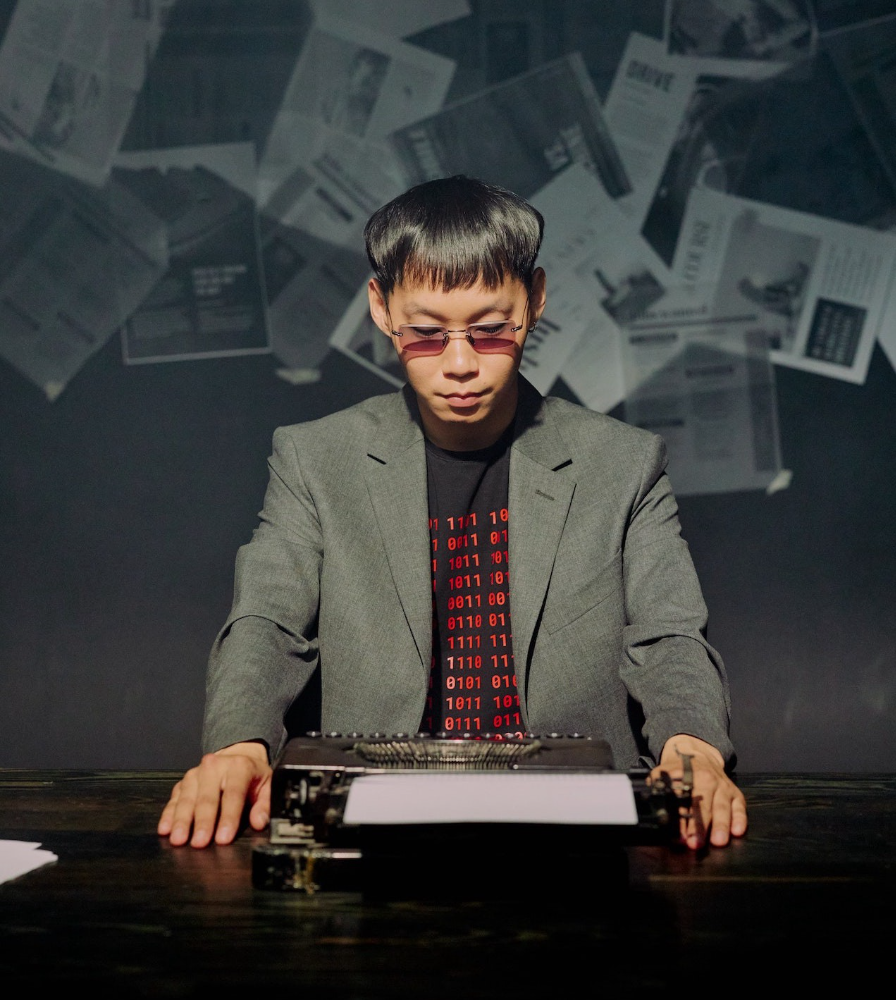

1. Feature — Advantage — Benefit (F.A.B)

This is the most basic copywriting foundation. Email marketing, landing page copy, and digital video ads can use it.

F.A.B says:

How it works (feature)

Why that is helpful (advantage)

What's at stake (benefit)

The Hustle uses this framework on their landing page to convince people to sign up.

2. P.A.S.T.O.R

This framework is for longer-form copywriting. PASTOR uses stories to engage with prospects. It explains why people should buy this offer.

PASTOR means:

Problem

Amplify

Story

Testimonial

Offer

Response

Dan Koe's landing page is a great example. It shows PASTOR frame-by-frame.

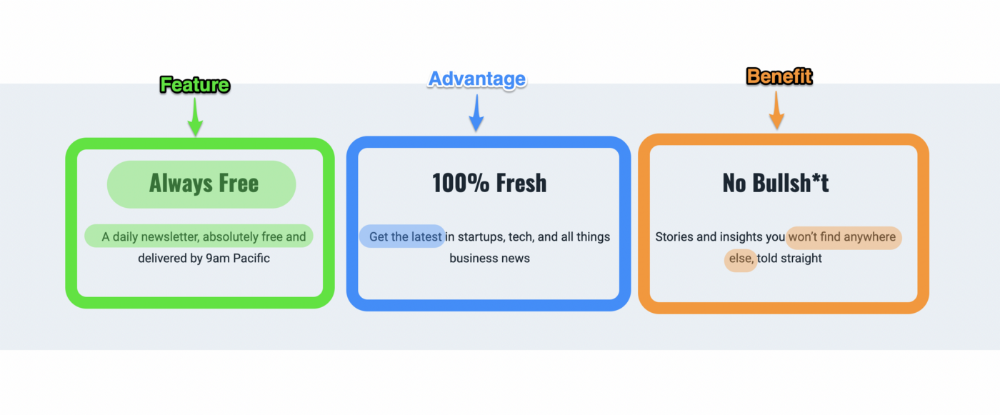

3. Before — After — Bridge

Before-after-bridge is a copywriting framework that draws attention and shows value quickly.

This framework highlights:

where you are

where you want to be

how to get there

Works great for: Email threads/landing pages

Zain Kahn utilizes this framework to write viral threads.

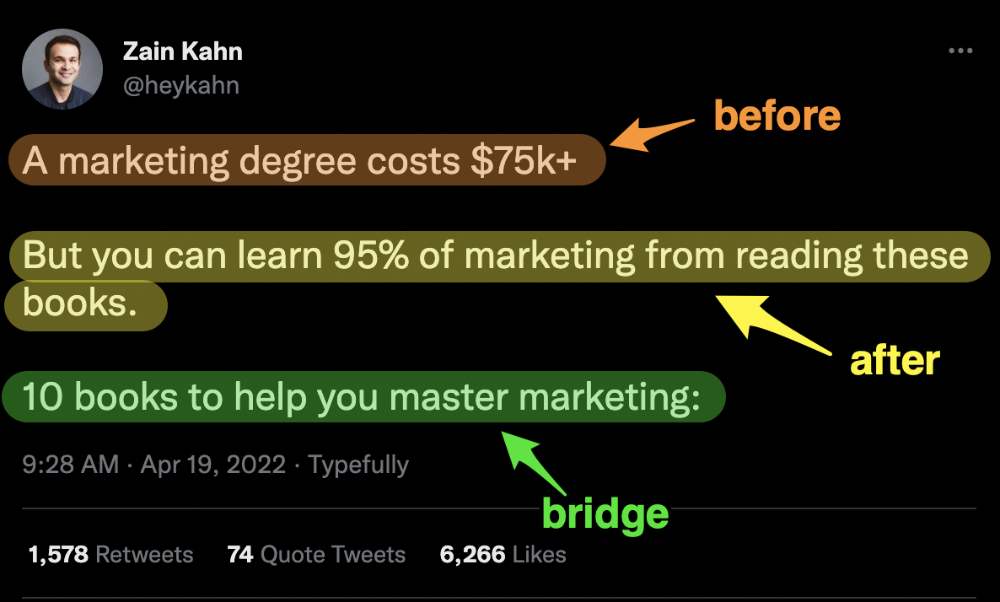

4. Q.U.E.S.T

QUEST is about empathetic writing. You know your prospects' issues, obstacles, and headaches, which lets you speak to them directly.

QUEST:

Qualifies

Understands

Educates

Stimulates

Transitions

Tom Hirst's landing page uses the QUEST framework.

5. The 4P’s model

The 4P’s approach pushes your prospect to action. It educates and persuades quickly.

4Ps:

The problem the visitor is dealing with

The promise that will help them

The proof the promise works

A push towards action

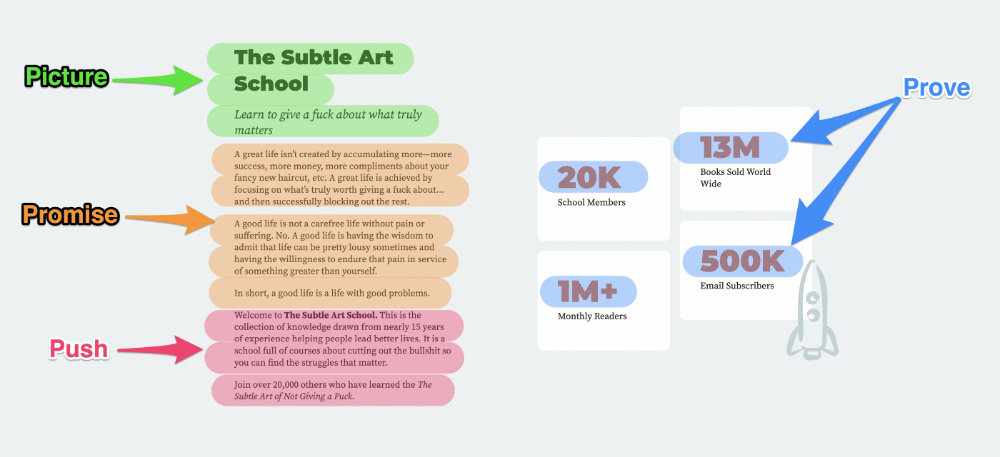

Mark Manson is a bestselling author, digital creator, and pop-philosopher. He's also a great copywriter, and his membership offer uses the 4P’s framework.

6. Problem — Agitate — Solution (P.A.S)

Up-and-coming marketers should understand problem-agitate-solution copywriting. Once you understand one structure, others are easier. It drives passion and presents a clear solution.

PAS outlines:

The issue the visitor is having

How emotion intensifies that issue

An answer to that issue (the offer)

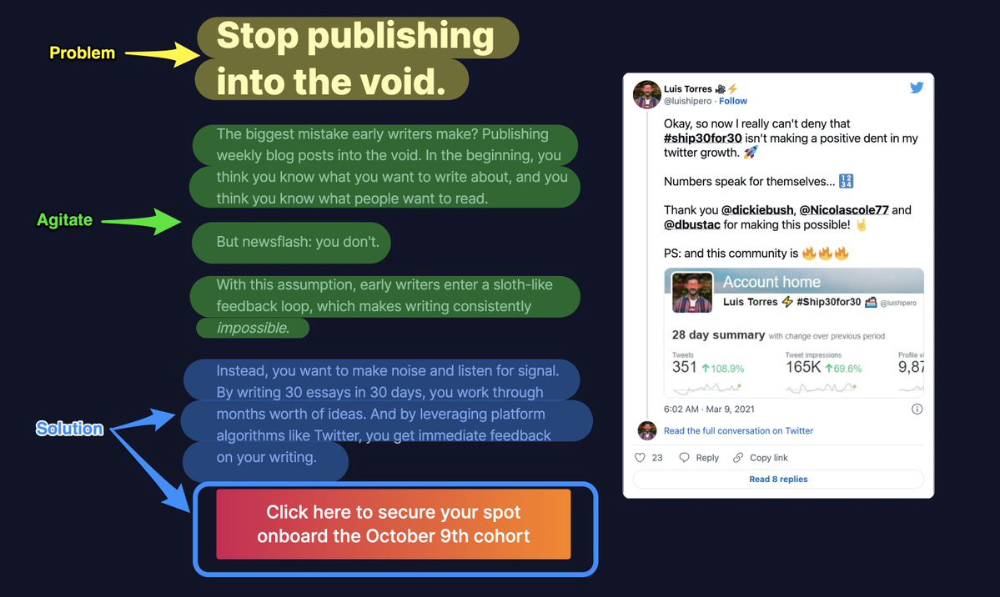

The customer's story loops. Nicolas Cole and Dickie Bush use PAS to promote Ship 30 for 30.

7. Star — Story — Solution (S.S.S)

PASTOR + PAS = star-story-solution. Like PASTOR, it employs stories to persuade.

S.S.S. is effective storytelling:

Star: (Person had a problem)

Story: (until they had a breakthrough)

Solution: (That created a transformation)

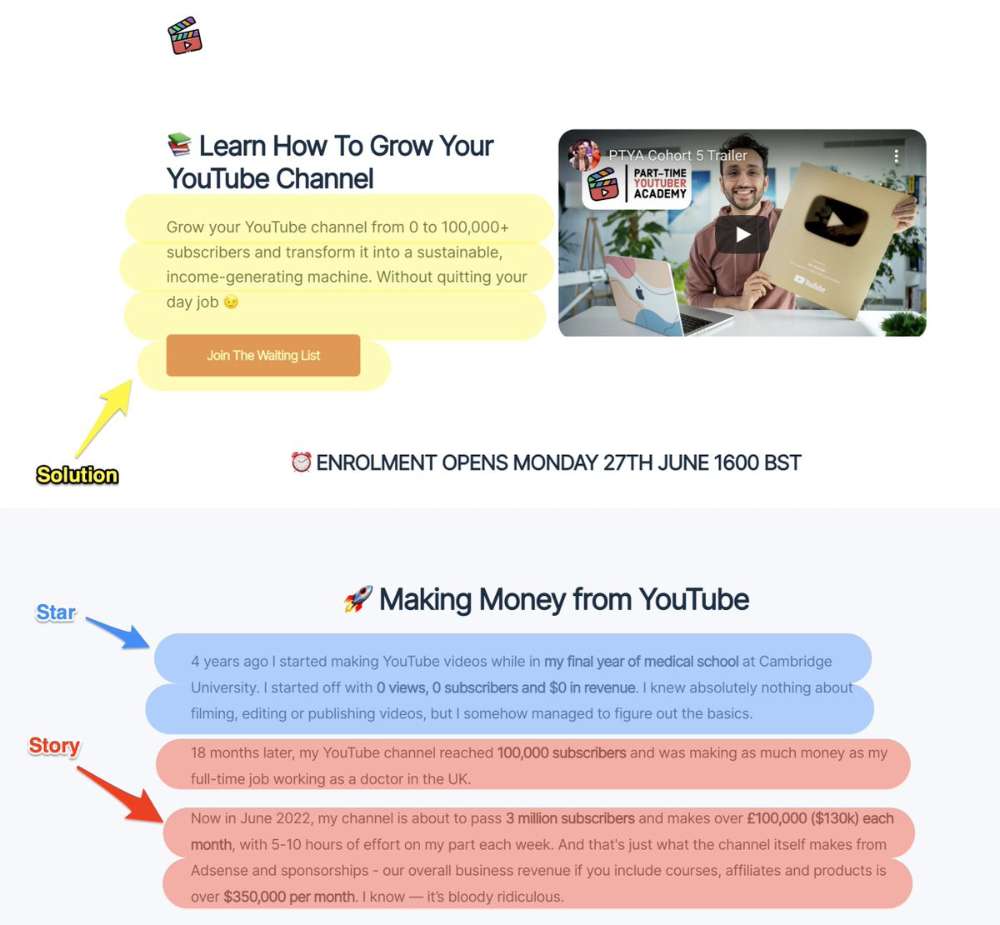

Ali Abdaal is a YouTuber with great S.S.S copy.

8. Attention — Interest — Desire — Action

AIDA is another classic. This copywriting framework is great for fast-paced environments (think all digital content on LinkedIn, Twitter, Medium, etc.).

It works with:

Landing pages

Thread writing

Email

It's a good structure since it's concise, attention-grabbing, and action-oriented.

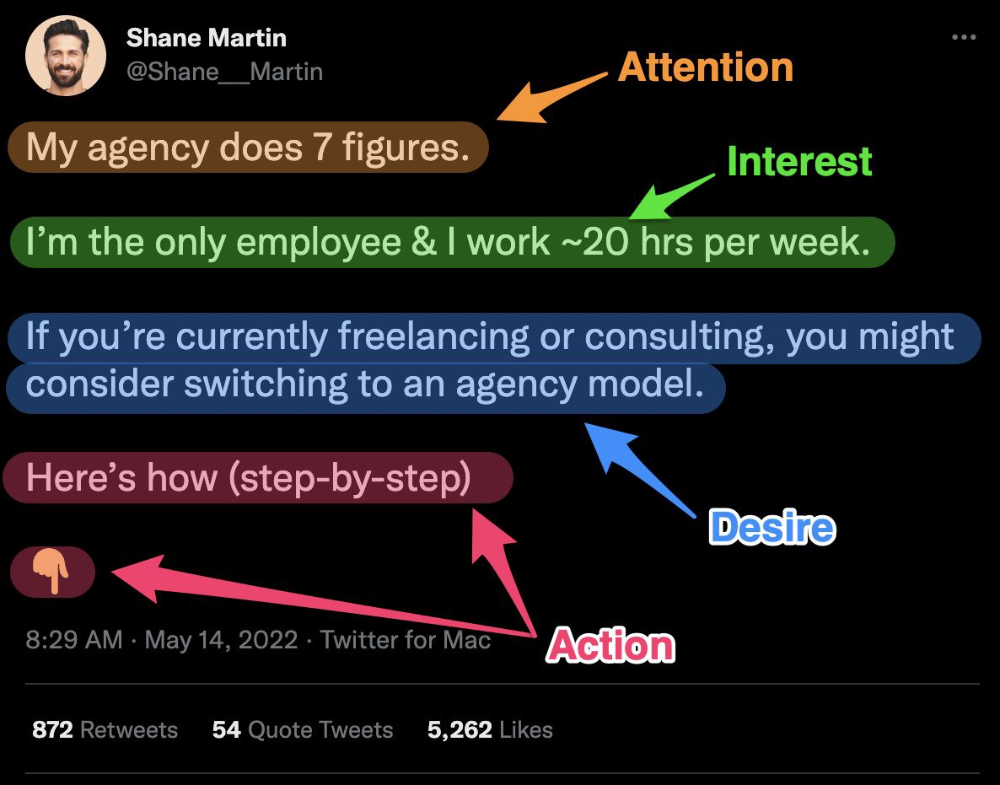

Shane Martin, a Twitter creator, uses this approach to create viral content.

TL;DR

8 copywriting frameworks that teach marketing better than a four-year degree

Feature-advantage-benefit

Before-after-bridge

Star-story-solution

P.A.S.T.O.R

Q.U.E.S.T

A.I.D.A

P.A.S

4P’s

M.G. Siegler

3 years ago

Apple: Showing Ads on Your iPhone

This report from Mark Gurman has stuck with me:

In the News and Stocks apps, the display ads are no different than what you might get on an ad-supported website. In the App Store, the ads are for actual apps, which are probably more useful for Apple users than mortgage rates. Some people may resent Apple putting ads in the News and Stocks apps. After all, the iPhone is supposed to be a premium device. Let’s say you shelled out $1,000 or more to buy one, do you want to feel like Apple is squeezing more money out of you just to use its standard features? Now, a portion of ad revenue from the News app’s Today tab goes to publishers, but it’s not clear how much. Apple also lets publishers advertise within their stories and keep the vast majority of that money. Surprisingly, Today ads also appear if you subscribe to News+ for $10 per month (though it’s a smaller number).

I use Apple News often. It's a good general news catch-up tool, like Twitter without the BS. Customized notifications are helpful. Fast and lovely. Except for advertisements. I have Apple One, which includes News+, and while I understand why the magazines still have brand ads, it's ridiculous to me that Apple enables web publishers to introduce awful ads into this experience. Apple's junky commercials are ridiculous.

We know publishers want this and probably requested it. But Apple could simply say: let's keep Apple News ad-free for the much smaller percentage of paying users, and here's your portion. (Same with Stocks, which is even sillier.)

Paid app placement in the App Store is a wonderful approach for developers to find new users (though far too many of those ads are trying to trick users, in my opinion).

Apple is also planning to increase ads in its Maps app. This sounds like Google Maps, and I don't like it. I never find these relevant, and they clutter up the user experience. Apple Maps now has a UI advantage (though not a data/search one, which matters more).

Apple is nickel-and-diming its customers. We spend thousands on its products and premium services like Apple One. We all know why: revenue must rise, and at Apple's scale that requires new lines of business. This will eventually backfire.

You might also like

Mircea Iosif

3 years ago

How To Start An Online Business That Will Be Profitable Without Investing A Lot Of Time

Don't know how to start an online business? Here's a guide. By following these recommendations, you can build a lucrative and profitable online business.

What Are Online Businesses Used For?

Most online businesses are websites that the owner creates and manages. You can use one to sell goods and services online.

To establish an internet business, you must locate a host and set up accounts with numerous companies. Once your accounts are set up, you may start publishing content and selling products or services.

How to Make Money from Your Online Business

Advertising and marketing are the best ways to make money online. You must develop strategies to contact new customers and generate leads. Make sure your website is search engine optimized so people can find you online.

Top 5 Online Business Tips for Startups:

1. Know your target audience's needs.

2. Make your website as appealing as possible.

3. Generate leads and sales with marketing.

4. Track your progress and learn from your mistakes to improve.

5. Be prepared to expand into new markets or regions.

How to Launch a Successful Online Business Without Putting in a Lot of Work

To start a profitable online business, begin with a solid business model. By using these tips, you can start your online business without paying much.

First, develop a user-friendly website. You can use an internet marketing platform or create your own website. Once your website is live, optimize it for search engines and add relevant content.

Second, sell online. This can be done through ads or direct sales to website visitors. Finally, use social media to advertise your internet business. By accomplishing these things, you'll draw visitors to your website and make money.

When launching a business, invest long-term. This involves knowing your goals and how you'll pay for them. Volatility can have several effects on your business. If you offer things online, you may need to examine if the market is ready for them.

Invest wisely

Investing all your money in one endeavor can lead to too much risk and little ROI. Diversify your investments to take advantage of all available opportunities. That way, your investments are less exposed to unexpected price swings and better protected against economic upheavals.

Financial news updates

When launching or running a thriving online business, financial news is crucial. By knowing current trends and upcoming developments, you can keep your business lucrative.

Keeping up with financial news can also help you avoid potential traps that could harm your bottom line. If you don't know about new legislation that could affect your industry, potential customers may choose another store when they learn about your business's problems.

Volatility ahead

You should expect volatility in the financial sector. Without a plan for coping with volatility, you could run into difficulty. If your organization relies on client input, you may not be able to exploit customer behavior shifts.

Your company could go bankrupt if you don't understand how fickle the stock market can be. By preparing for volatility, you can ensure your organization survives difficult times and market crashes.

Conclusion

Many internet businesses can be profitable. Start quickly with a few straightforward steps. Diversify your investments, follow financial news, and be prepared for volatility to develop a successful business.

Thanks for reading!

JEFF JOHN ROBERTS

3 years ago

What just happened in cryptocurrency? A plain-English Q&A about Binance's FTX takedown.

Crypto people have witnessed a lot. They've seen big hacks, mind-boggling swindles, and amazing successes. They've never seen a day like Tuesday, when the world's largest crypto exchange took down its closest competitor.

Here's a primer on Binance and FTX's lunacy and why it matters if you're new to crypto.

What happened?

CZ, a shrewd Chinese-Canadian billionaire, runs Binance. FTX, a newcomer, has challenged Binance in recent years. SBF (Sam Bankman-Fried)—a young American with wild hair—founded FTX (initials are a thing in crypto).

Last weekend, CZ complained about SBF's lobbying and then used Binance's market power to attack his competitor.

How did CZ do that?

CZ invested in SBF's new cryptocurrency exchange when they were friends. When he no longer wanted the stake, CZ sold it back in exchange for FTT, a token issued by FTX. FTX clients use those tokens to get trading discounts, although they are less liquid than Bitcoin.

SBF made a mistake by giving CZ far too many FTT tokens, which gave him leverage over FTX. It's like Pepsi handing Coca-Cola a lot of stock it could sell at any time. CZ got upset with SBF and flooded the market with FTT tokens.

SBF owns a trading fund that holds many FTT tokens, so this was catastrophic. SBF tried to defend FTT's value by selling other assets to buy up the FTT tokens flooding the market, but it didn't work, and as FTT's value plummeted, his liabilities exceeded his assets. By Tuesday, his companies were insolvent, so he agreed to sell them to his competitor.

Crazy. Why would CZ do that?

CZ likely did this to crush a rising competitor. It was also personal. In recent months, regulators have been tough on the crypto business, and Binance and FTX have been trying to stay on their good side. CZ believed SBF was turning U.S. authorities against him by saying CZ was linked to China, so CZ retaliated.

“We supported previously, but we won't pretend to make love after divorce. We're neutral. But we won't assist people that push against other industry players behind their backs,” CZ stated in a fateful tweet on Sunday. He crushed his rival's company two days later.

So does Binance now own FTX?

No. Not yet. CZ has only stated that Binance signed a "letter of intent" to acquire FTX. CZ and SBF say Binance will protect FTX customers' funds.

Who’s to blame?

You could blame CZ for using his control over FTX to destroy it. SBF is also being criticized for not disclosing the full overlap between FTX and his trading company, which controlled plenty of FTT. If he had been upfront, someone might have warned FTX about this vulnerability earlier, preventing this mess.

Others have alleged that SBF used customer funds to plug holes in his companies' balance sheets. That happened at multiple crypto startups that collapsed this spring, which is unfortunate. These are allegations, not proof.

Why does this matter? Isn't this common in crypto?

Crypto is notorious for shady executives and antics. But FTX is the second-largest crypto business, and SBF was widely regarded as the industry's golden boy who would help it get on authorities' good side. Until now.

Does this affect cryptocurrency prices?

Short-term, it's bad. Prices fell on suspicions that FTX was in peril, then rallied when Binance rescued it, only to fall again later on Tuesday.

These events have hurt FTT and Solana, a token closely associated with SBF. It appears that a huge token selloff is affecting the rest of the market. Bitcoin fell 10% and Ethereum 15%, which is bad but not catastrophic for the two largest coins by market cap.

Mangu Solutions

3 years ago

Growing a New App to $15K/mo in 6 Months [SaaS Case Study]

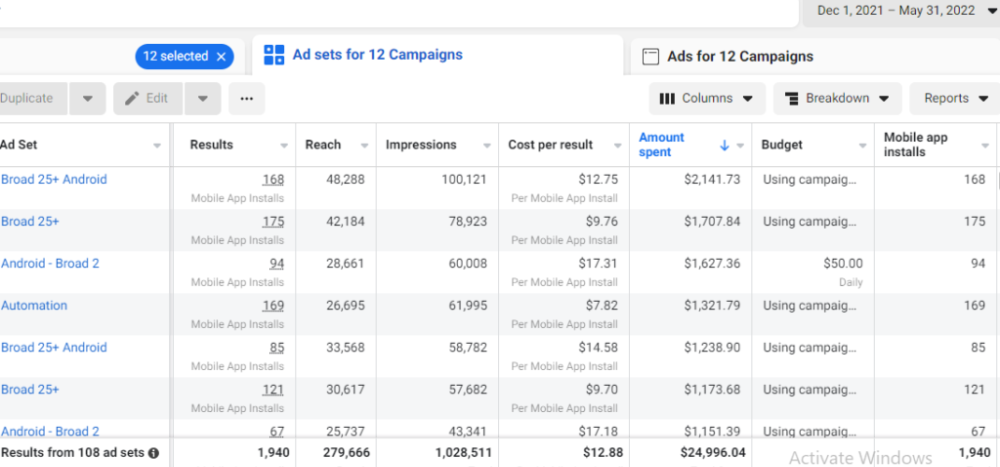

Discover How We Used Facebook Ads to Grow a New Mobile App from $0 to $15K MRR in Just 6 Months and Our Strategy to Hit $100K a Month.

Our client introduced a mobile app for Poshmark resellers in December and wanted as many of them as possible to try it and subscribe to the monthly plan.

An Error We Committed

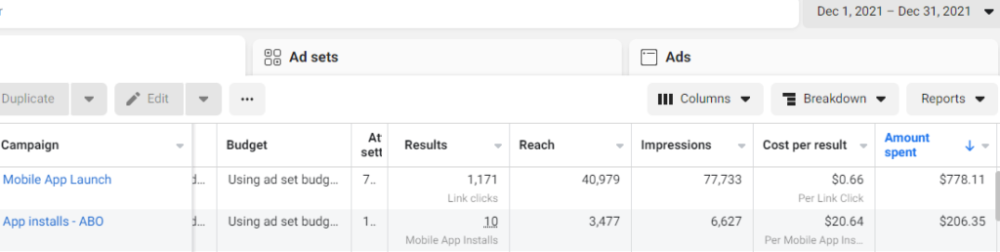

We initiated a Facebook ad campaign with an "awareness" objective, not "installs." The ads sent users to a landing page that linked to the iPhone App Store and Android Play Store. Smart, right?

We got some installs, but we couldn't tell how many came from the ad versus organic/other channels because the objective we chose only reported landing page clicks, not app installs.

We didn't know which interest groups/audiences had the best cost per install (CPI) to optimize and scale our budget.

After spending $700 without adequate data (installs and trials report), we stopped the campaign and worked with our client's app developer to set up app events tracking.

This allowed us to create an installs campaign and track installs, trials, and purchases (in some cases).

Finding a Successful Audience

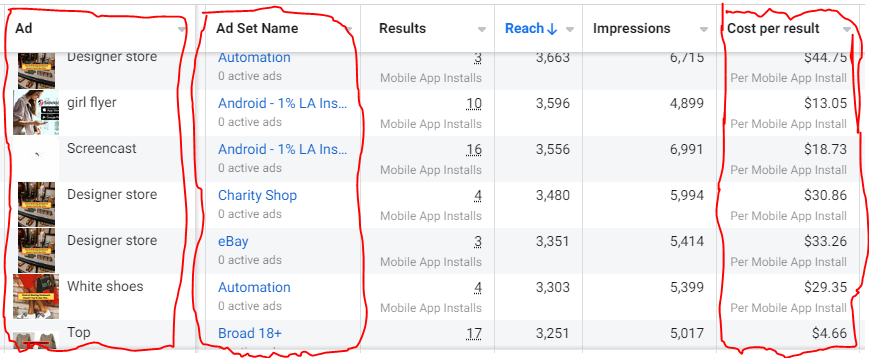

Once we knew what ad sets brought in what installs at what cost, we began optimizing and testing other interest groups and audiences, growing the profitable low CPI ones and eliminating the high CPI ones.

We did all our audience testing using an ABO campaign (Ad Set Budget Optimization), spending $10 to $30 on each ad set for three days and optimizing afterward. All ad sets with a CPI under $30 were moved to a CBO campaign (Campaign Budget Optimization).

We let Facebook's AI decide how much to spend on each ad set, usually the one most likely to convert at the lowest cost.

If the CBO campaign maintains a nice CPI, we keep increasing the budget by $50 every few days or duplicating it sometimes in order to double the budget. This is how we've scaled to $400/day profitably.
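As a rough sketch, the triage step described above could be expressed in Python like this. The ad set names and figures are hypothetical, and reading the $30 cutoff as a CPI threshold is an assumption on our part, not something the campaign data spells out.

```python
from dataclasses import dataclass


@dataclass
class AdSet:
    name: str      # hypothetical label, not a real ad set from the campaign
    spend: float   # dollars spent during the test window
    installs: int  # installs attributed to this ad set

    @property
    def cpi(self) -> float:
        # Cost per install; an ad set with no installs is treated as infinitely expensive.
        return self.spend / self.installs if self.installs else float("inf")


def triage(ad_sets, max_cpi=30.0):
    """Split tested ad sets into ones to move to CBO and ones to pause.

    max_cpi is an assumed cutoff in dollars.
    """
    winners = [a for a in ad_sets if a.cpi < max_cpi]
    losers = [a for a in ad_sets if a.cpi >= max_cpi]
    return winners, losers


# Illustrative test results only.
tested = [
    AdSet("interest_group_a", spend=29.13, installs=3),
    AdSet("lookalike_b", spend=48.00, installs=1),
    AdSet("interest_group_c", spend=20.00, installs=0),
]

winners, losers = triage(tested)
for ad_set in winners:
    print(f"move to CBO: {ad_set.name} (CPI ${ad_set.cpi:.2f})")
for ad_set in losers:
    print(f"pause: {ad_set.name}")
```

In practice the cutoff would depend on how much a paying subscriber is worth, so treat the $30 default as a placeholder.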

Finding Successful Creatives

Per campaign, we tested 2-6 images/videos. Same ad copy and CTA. There was no clear winner because some images did better with some interest groups.

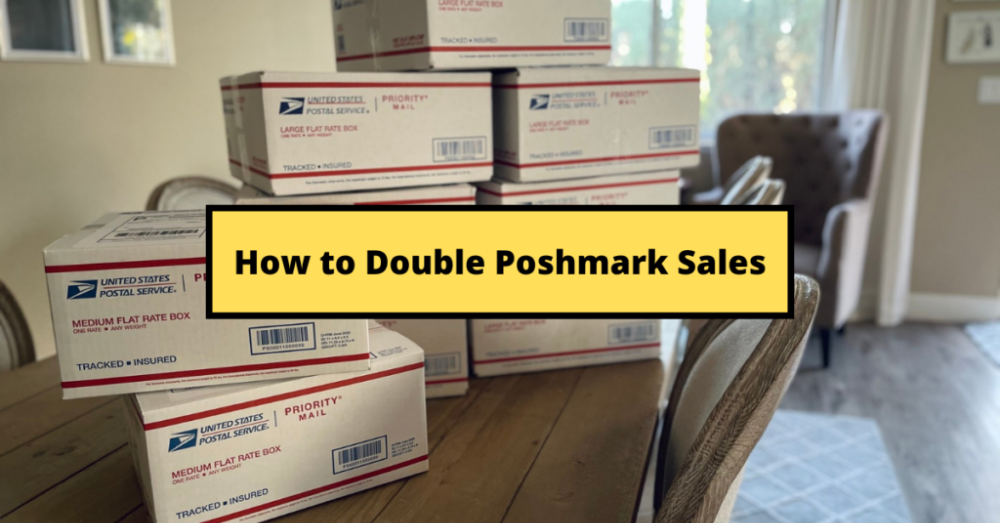

The image above with mail packages, for example, got us a cheap CPI of $9.71 from our Goodwill Stores interest group but a high $48 CPI from our lookalike audience. Once we had statistically significant data, we turned off the high-cost ad.

New marketers who are just discovering A/B testing may assume it's black and white, with a winner and a loser. However, Facebook ads' machine learning and reporting have become so sophisticated that it's hard to call a creative a flat-out loser; it is more often a 'bad fit' for some audiences and perfect for others.

You can see how each creative performs across age groups and optimize.

How Many Installs Did It Take Us to Earn $15K Per Month?

After six months and $25K in ad spend, we got 1,940 app installs, 681 free trials, and 522 $30 monthly subscriptions. 522 * $30 gives us $15,660 in monthly recurring revenue (MRR).
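For readers who want to check the funnel math, here is a minimal Python sketch using only the figures quoted above. The variable names are ours, and it assumes all installs and subscriptions came from the $25K spend, which may overstate what the ads alone produced.

```python
# Figures quoted in the case study.
ad_spend = 25_000       # dollars
installs = 1_940
trials = 681
subscribers = 522
price_per_month = 30    # dollars

# Monthly recurring revenue: 522 * 30 = 15,660.
mrr = subscribers * price_per_month

# Funnel ratios (assuming every install is attributable to the ads).
cost_per_install = ad_spend / installs
install_to_trial = trials / installs
trial_to_paid = subscribers / trials
install_to_paid = subscribers / installs
cost_per_subscriber = ad_spend / subscribers

print(f"MRR: ${mrr:,}")
print(f"Cost per install: ${cost_per_install:.2f}")
print(f"Install to trial: {install_to_trial:.1%}")
print(f"Trial to paid: {trial_to_paid:.1%}")
print(f"Install to paid: {install_to_paid:.1%}")
print(f"Cost per subscriber: ${cost_per_subscriber:.2f}")
```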

Next, what? $100K per month

The conversation above is with the app's owner. We got on a 30-minute call where I shared how I plan to get the app to be making $100K a month like I’ve done for other businesses.

Reverse Engineering $100K

Formula:

For $100K/month, we need 3,334 people to pay $30/month. 522 people pay that. We need 2,812 more paid users.

522 paid users from 1,940 installs is a 27% conversion rate. To hit $100K/month, we need 10,415 more installs (2,812 / 0.27). Assuming...

With a $400 daily ad spend, we average 40 installs per day. This means that if everything stays the same, it would take us 260 days (around 9 months) to get to $100K a month (MRR).
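The reverse-engineering steps above can be reproduced with a short Python sketch. It uses only the numbers from this section; the variable names are ours, and it assumes the conversion rate, install pace, and subscriber base stay constant (no churn), which is a simplification.

```python
import math

# Inputs taken from the case study.
target_mrr = 100_000     # dollars per month
price_per_month = 30     # dollars
current_paid = 522
installs_so_far = 1_940
installs_per_day = 40    # at roughly $400/day in ad spend

# Paid users needed in total, rounded up to a whole person.
paid_needed = math.ceil(target_mrr / price_per_month)

# Paid users still missing.
more_paid = paid_needed - current_paid

# Install-to-paid conversion, rounded to two decimals as in the text.
conversion = round(current_paid / installs_so_far, 2)

# Extra installs required at that conversion rate, rounded up.
more_installs = math.ceil(more_paid / conversion)

# Days needed at the current install pace.
days = more_installs / installs_per_day

print(f"Paid users needed: {paid_needed}")
print(f"More paid users: {more_paid}")
print(f"Conversion rate: {conversion:.0%}")
print(f"More installs: {more_installs}")
print(f"Days at current pace: {days:.0f}")
```

Any churn among existing subscribers would push the timeline out, so the result is best read as a lower bound.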

Conclusion

You must market your product to reach your income objective (without waiting forever). Paid ads are the way to go if you hate knocking on doors or irritating friends and family (who aren't scalable anyway).

You must also test and optimize different angles, audiences, interest groups, and creatives.